YouTube Search and Suggested Video Ranking Factors in 2026

Introduction: The Metamorphosis from Search Engine to Multimodal Answer Engine

The architecture of digital video discovery has undergone a profound metamorphosis. By 2026, YouTube has transcended its historical identity as merely the world's second-largest search engine to become the foundational, multimodal data repository powering the global artificial intelligence infrastructure 123. With over 200 billion daily views generated by YouTube Shorts, $60 billion in annual platform revenue, and the integration of highly sophisticated generative AI across the platform, the underlying algorithmic mechanics have been completely re-engineered 4. The legacy frameworks of Search Engine Optimization (SEO) - which historically relied on metadata manipulation, keyword density, and raw click-through rates - have been deprecated. In their place, a new ecosystem governed by Generative Engine Optimization (GEO), audience satisfaction modeling, and machine-readable content structuring has emerged 155.

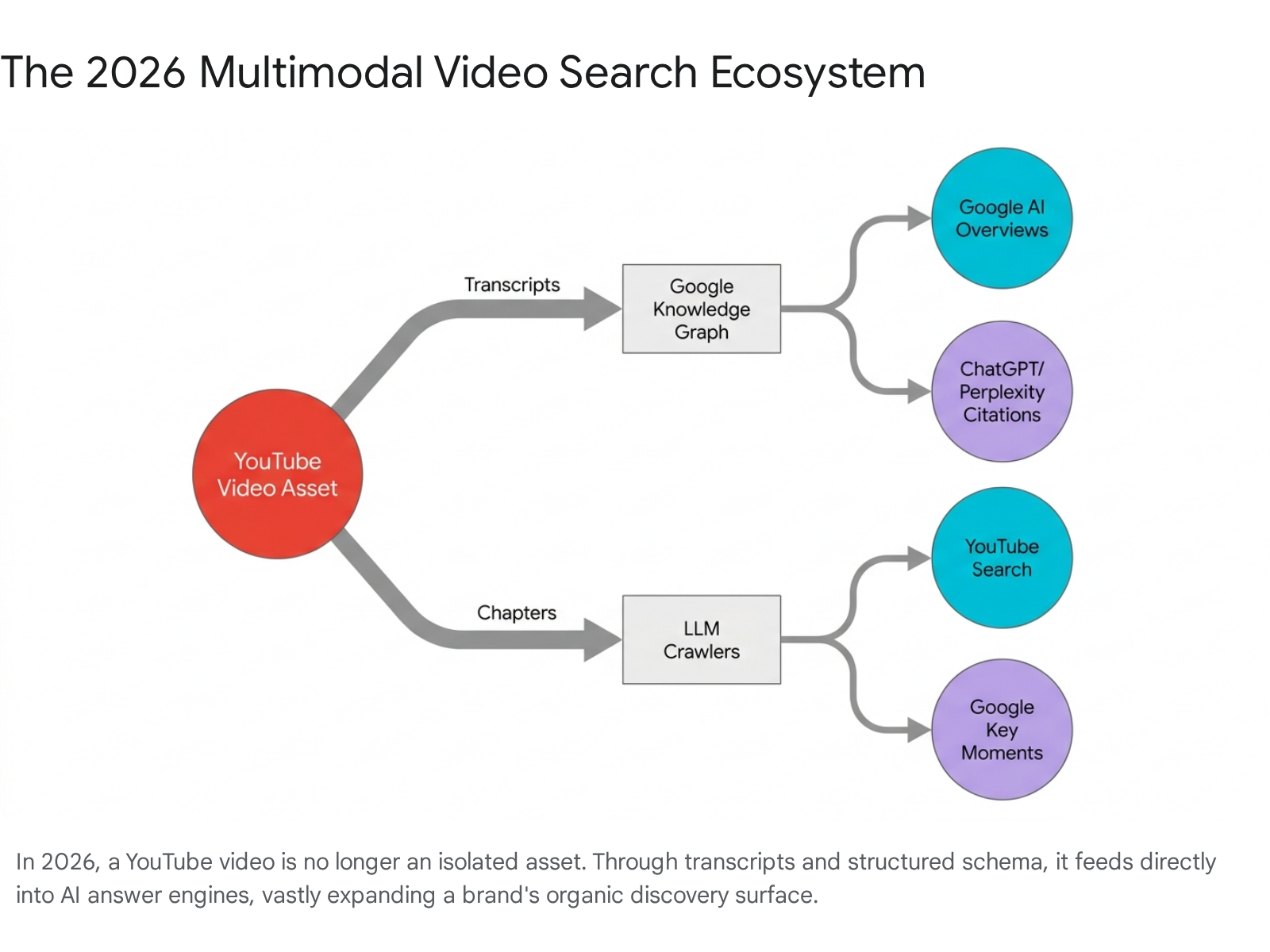

In 2026, algorithmic visibility demands a paradigm known within the industry as "Search Everywhere Optimization." This framework acknowledges that video discovery no longer occurs solely within the native YouTube application. Rather, YouTube content is actively scraped, summarized, and cited by Google's AI Overviews, OpenAI's ChatGPT, Perplexity, and Apple's AI integrations 237. Furthermore, YouTube has introduced its own conversational search interface, colloquially known as "Ask YouTube," which replaces traditional blue links with synthesized multimodal itineraries and text-video hybrids 6. To achieve sustained visibility in this environment, video content must be structured to appeal simultaneously to human behavioral psychology and sophisticated Large Language Models (LLMs) 39. This exhaustive analysis dissects the specific mechanisms driving YouTube rankings in 2026, contrasting the independent algorithms governing Search, Suggested Video, and Shorts, while tracing the longitudinal shifts from legacy metadata practices to the contemporary focus on AI-driven content understanding.

Longitudinal Shifts in Ranking Factors: 2018 - 2020 versus 2024 - 2026

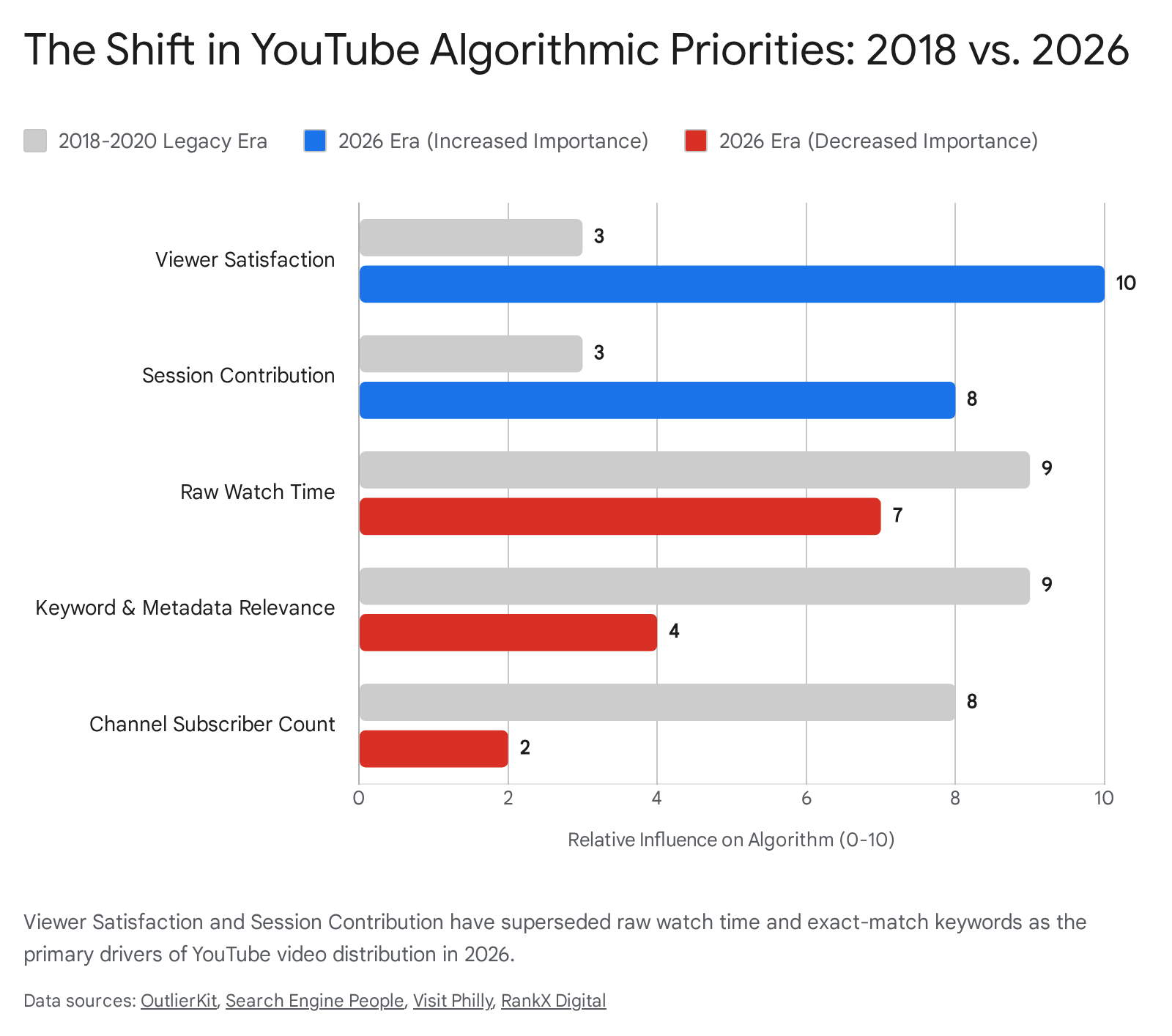

To properly contextualize the 2026 YouTube algorithm, it is critical to map the longitudinal shifts in ranking signal weights. Between 2018 and 2020, YouTube's recommendation engine functioned primarily as a traditional text-matching search system, relying heavily on text-based metadata to comprehend video content 51011. During this legacy era, exact keyword matches in titles, keyword-dense descriptions, manual tagging, and raw view counts were the primary levers for algorithmic distribution. Following a 2015 Google patent, the algorithm used raw "watch time" as its dominant ranking signal, favoring excessively long videos purely on the basis of total minutes accumulated, often at the expense of pacing and narrative efficiency 1011.

However, official communications from YouTube's Creator Insider, corroborated by extensive enterprise data from Search Engine Land and Backlinko, confirm that the 2024 - 2026 algorithmic architecture has shifted entirely away from raw watch time 457. The platform now relies on a composite metric known as the Viewer Satisfaction Score. This shift accelerated following the mid-2025 AI editing controversies and the subsequent rollout of strict AI content labeling protocols, which forced the algorithm to become far more sophisticated in detecting authentic human engagement 4. The modern algorithm assesses whether a viewer actually derived value from the time spent on the platform, mitigating the historical advantage of clickbait that drove high initial clicks but generated poor user experiences 8915.

The structural evolution of specific algorithmic ranking signals from the legacy era to the projected 2026 model illustrates a clear deprecation of manual inputs in favor of behavioral and semantic evaluations.

| Ranking Signal | 2018 - 2020 Weight & Mechanism | 2024 - 2026 Weight & Mechanism | Trajectory & Rationale |
|---|---|---|---|
| Viewer Satisfaction Score | Low. Tracked primarily via simple binary "Likes/Dislikes." | Very High. The dominant algorithmic signal. Aggregates post-watch surveys, sentiment analysis of comments, and return-viewer loyalty behaviors. | Increased. Raw watch time proved insufficient for long-term retention; the algorithm now requires qualitative proof of positive user sentiment to prevent platform churn 4. |
| Average View Duration (AVD) & Retention | High. Focused almost exclusively on raw minutes watched (Watch Time). | High. Evaluated as "Retention Depth" relative to query intent. Deep retention on a 6-minute video vastly outperforms poor retention on a 20-minute video. | Evolved. The system actively rewards efficient intent satisfaction over artificial video lengthening, shifting the focus to narrative pacing 1017. |
| Session Contribution / Session Depth | Moderate. Tracked whether a user happened to watch another video. | Medium-High. Heavily dictates Suggested Video placement. Videos that initiate prolonged viewing sessions across the platform are rewarded exponentially. | Increased. YouTube prioritizes overall platform retention; content acting as a gateway to broader, multi-video consumption is prioritized for organic distribution 4. |
| Title / Description Keyword Density | High. Exact keyword matches were considered essential for indexing. | Low. Keyword stuffing is actively penalized. Titles must reflect human intent; descriptions provide context for LLMs. Only 6% of top-ranking videos use exact title matches. | Decreased. AI-driven semantic understanding negates the need for exact text strings. Natural language processing interprets topical authority contextually 518. |
| Click-Through Rate (CTR) | Very High. Evaluated primarily in the first 48 hours to determine viral potential. | High (Threshold Filter). Evaluated continuously over a 7-day window. CTR acts as a gateway; high CTR without corresponding satisfaction leads to active suppression. | Evolved. CTR is measured contextually against specific micro-niches rather than a platform-wide average, preventing generic clickbait from dominating niche searches 1719. |
| Manual Tags | High. Critical for categorization, topic clustering, and resolving misspellings. | Negligible. Used almost exclusively to correct common spelling errors of brand names or highly technical terms. | Decreased. Replaced entirely by Visual Entity Recognition and audio transcription algorithms that natively scan the video file 112122. |

The Demise of Keyword Density and the Rise of Audience Satisfaction

The empirical data from industry reports indicates that keyword density is an obsolete ranking factor in 2026. Analysis by Backlinko and Search Engine Land covering over 1.6 million indexed videos found no consistent correlation between high keyword density in descriptions and top rankings 1224. Only 6% of top-ranking videos use exact keyword matches in their titles 18. Algorithmic evaluation has pivoted from string matching to semantic understanding, making "keyword stuffing" a liability that can trigger spam filters rather than an optimization tactic.

Instead, the algorithm relies on "information gain." Modern search evaluations reward content that brings novel data, unique perspectives, or comprehensive topical coverage that competing videos lack 24. This qualitative assessment is quantified through the aforementioned Viewer Satisfaction Score. This score is aggregated from several nuanced behavioral data points. First, YouTube frequently interrupts the user experience with direct post-watch surveys, asking viewers to rate their enjoyment of a specific video on a sliding scale 8913. Second, the algorithm monitors negative sentiment signals, such as the frequency with which users click "Not Interested" or "Don't Recommend Channel," which actively suppresses future distribution 913. Finally, the algorithm heavily weights "return viewer" metrics; if a video successfully compels a viewer to return to the creator's channel days or weeks later, the system reads this as the ultimate proof of long-term content satisfaction 4810.
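YouTube does not publish how the Viewer Satisfaction Score is computed. As a purely illustrative sketch, assuming the three signal families above are normalized to the range 0 to 1 and blended with fixed weights (the `SatisfactionSignals` fields and the weights are hypothetical), combining surveys, negative feedback, and return visits into one number might look like this:

```python
from dataclasses import dataclass


@dataclass
class SatisfactionSignals:
    """Hypothetical per-video inputs, each normalized to [0, 1]."""
    survey_mean: float             # mean post-watch survey rating
    negative_feedback_rate: float  # share of impressions ending in "Not Interested" / "Don't Recommend Channel"
    return_viewer_rate: float      # share of viewers who come back to the channel later


# Illustrative weights only; YouTube's real formula is not public.
WEIGHTS = {"survey": 0.5, "negative": 0.3, "returning": 0.2}


def satisfaction_score(s: SatisfactionSignals) -> float:
    """Blend the three signal families into a single 0..1 score (hypothetical formula)."""
    for value in (s.survey_mean, s.negative_feedback_rate, s.return_viewer_rate):
        if not 0.0 <= value <= 1.0:
            raise ValueError("all signals must be normalized to [0, 1]")
    return (
        WEIGHTS["survey"] * s.survey_mean
        + WEIGHTS["negative"] * (1.0 - s.negative_feedback_rate)  # fewer negatives raise the score
        + WEIGHTS["returning"] * s.return_viewer_rate
    )


satisfying = SatisfactionSignals(survey_mean=0.9, negative_feedback_rate=0.01, return_viewer_rate=0.4)
clickbait = SatisfactionSignals(survey_mean=0.4, negative_feedback_rate=0.15, return_viewer_rate=0.05)
print(f"{satisfaction_score(satisfying):.3f} vs {satisfaction_score(clickbait):.3f}")
```

With these made-up weights the satisfying profile scores about 0.83 and the clickbait profile about 0.47; the point is the shape of the blend, not the numbers.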

AI-Driven Content Understanding Overriding Manual Tagging

In previous iterations of the platform, content creators acted as manual translators for the algorithm. Because legacy systems could not natively "watch" or interpret a video file, creators used titles, descriptions, and up to 500 characters of manual tags to explain the visual data. By 2026, the integration of Google's Gemini multimodal reasoning models into the YouTube backend has entirely inverted this dynamic 2627. The AI no longer relies on the creator's metadata to understand the video; it natively interprets the audio, visual, and contextual layers with high accuracy 212614.

Visual Entity Recognition and Scene Detection

YouTube's algorithmic infrastructure actively scans individual video frames to recognize objects, facial expressions, actions, and on-screen text 14. This visual entity recognition is cross-referenced in real-time with Google's Knowledge Graph. For example, if a creator titles a video "Advanced Python Programming 2026" but the visual entity recognition detects prolonged scenes of unrelated video game footage, the algorithm immediately identifies the semantic discrepancy and suppresses the content, regardless of how perfectly the metadata is formatted 1429.

Consequently, the visual integrity of the thumbnail and on-screen graphics has gained immense importance. Misleading thumbnails are easily detected, and the AI now actively prioritizes thumbnails that genuinely reflect the visual content of the video 14. On-screen graphics, supplementary b-roll, and text overlays act as direct, machine-readable SEO signals in 2026, serving as primary inputs for the algorithmic indexing of the asset 2.

Audio Transcription and Natural Language Processing

Simultaneously, the algorithm transcribes and analyzes every spoken word, effectively converting the audio track into an invisible, highly detailed metadata file that supersedes any written description 31114. Semantic intent and topical authority are derived directly from the presenter's vocabulary. If a video targets a query regarding complex financial strategies, the algorithm expects to hear related entities spoken naturally throughout the content 1129.

This reality makes accurate captions not merely an accessibility feature, but a paramount SEO necessity. While YouTube provides auto-generated captions, their 2026 iteration still struggles with niche industry jargon, brand names, and complex technical phrasing. Uploading a pristine, manually verified transcript ensures that technical terms and nuanced concepts are correctly indexed, significantly expanding the surface area for both traditional search and AI-driven discovery 23.
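To show what a manually verified transcript looks like in machine-readable form, the sketch below renders corrected caption segments as a WebVTT file, one of the caption formats YouTube Studio accepts for upload. The segment list and the `to_webvtt` helper are hypothetical; real timestamps and text would come from your own verified edit.

```python
def format_timestamp(seconds: float) -> str:
    """Render a time offset in seconds as a WebVTT timestamp (HH:MM:SS.mmm)."""
    total_ms = round(seconds * 1000)
    hours, rem = divmod(total_ms, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    secs, ms = divmod(rem, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}.{ms:03d}"


def to_webvtt(segments) -> str:
    """Build a WebVTT document from (start_s, end_s, text) tuples."""
    lines = ["WEBVTT", ""]
    for start, end, text in segments:
        if end <= start:
            raise ValueError(f"cue must end after it starts: {start} -> {end}")
        lines.append(f"{format_timestamp(start)} --> {format_timestamp(end)}")
        lines.append(text.strip())
        lines.append("")  # a blank line terminates each cue
    return "\n".join(lines)


# Human-verified segments: brand names and jargon spelled exactly as intended.
verified = [
    (0.0, 4.2, "Today we cut Amazon S3 costs with lifecycle rules."),
    (4.2, 9.75, "First, move infrequently accessed objects to S3 Glacier."),
]
print(to_webvtt(verified))
```

Writing the output to a `.vtt` file and uploading it in YouTube Studio replaces the auto-generated track with the corrected terminology.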

The Liability of Automatic Chapters versus Manual Timestamps

A critical manifestation of AI's expanding role is the platform's aggressive deployment of auto-generated video chapters. While positioned as a feature to improve user navigation, empirical data from late 2025 reveals that relying on YouTube's auto-chapters is actively detrimental to modern SEO efforts. Auto-chapters demonstrate a timing accuracy of only 40% to 60%, frequently generating generic, non-descriptive titles that dilute the video's topical relevance 3031.

Conversely, manually inputted timestamps increase average watch time by 15% to 25% 30. While it seems counterintuitive to encourage skipping, allowing viewers to navigate directly to their specific point of interest prevents them from bouncing off the video entirely, thereby preserving the critical retention depth metric 32. Furthermore, manual chapters are a critical gateway for "SeekToAction" structured data. Google Search algorithms prioritize manually labeled chapters over auto-inferred ones, indexing them as clickable "Key Moments" directly within the Search Engine Results Pages (SERPs) 3215. Content creators who manually timestamp their videos effectively secure multiple distinct search ranking opportunities within a single asset, satisfying both human pacing preferences and machine-indexing requirements.
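Manual chapters are entered as timestamps in the video description. YouTube's published chapter rules require the first timestamp to be 0:00, at least three timestamps in ascending order, and chapters at least 10 seconds long. The sketch below checks a description against those rules before upload; the parsing pattern and helper names are our own, not a YouTube API.

```python
import re

# One chapter per line: "M:SS Title", "MM:SS Title" or "H:MM:SS Title".
CHAPTER_LINE = re.compile(r"^(?:(\d+):)?(\d{1,2}):(\d{2})\s+(.+)$")


def parse_chapters(description: str) -> list[tuple[int, str]]:
    """Extract (start_seconds, title) pairs from lines that begin with a timestamp."""
    chapters = []
    for line in description.splitlines():
        match = CHAPTER_LINE.match(line.strip())
        if match:
            hours, minutes, seconds, title = match.groups()
            start = int(hours or 0) * 3600 + int(minutes) * 60 + int(seconds)
            chapters.append((start, title.strip()))
    return chapters


def validate_chapters(chapters: list[tuple[int, str]], video_length_s: int) -> list[str]:
    """Return rule violations; an empty list means the chapters should be accepted."""
    problems = []
    if len(chapters) < 3:
        problems.append("need at least three timestamps")
    if chapters and chapters[0][0] != 0:
        problems.append("first timestamp must be 0:00")
    # Each chapter ends where the next begins; the last one ends with the video.
    ends = [start for start, _ in chapters[1:]] + [video_length_s]
    for (start, title), end in zip(chapters, ends):
        if end <= start:
            problems.append(f"'{title}' is not in ascending order")
        elif end - start < 10:
            problems.append(f"'{title}' is shorter than 10 seconds")
    return problems


description = """Links and resources below.
0:00 Intro
1:05 Why storage costs grow
4:30 Lifecycle rules
9:12 Recap"""

print(validate_chapters(parse_chapters(description), video_length_s=600))
```

Descriptive chapter titles ("Lifecycle rules" rather than "Part 3") matter as much as valid timing, since those titles are what surface as Key Moments.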

Search Everywhere Optimization and Multimodal Discovery

The definition of video SEO has expanded dramatically due to the integration of YouTube data into global LLMs. By the close of 2026, traditional query volumes on standard search engines are projected to drop by 25%, as users increasingly migrate to AI-driven answer engines 37. YouTube has cemented its position as the premier, machine-readable video repository, cited up to 200 times more frequently than alternative platforms like TikTok or Instagram across ChatGPT, Google, and Perplexity 1.

The Mechanics of AI Overviews and LLM Citations

When a user inputs a query into Google's AI Overviews or an independent LLM, the system synthesizes an answer and exposes underlying citations. The objective of SEO has therefore shifted from merely ranking in a vertical list of blue links to earning inclusion as a trusted citation within an AI's synthesized response 2. Most answer engines do not "watch" the video at query time; they rely primarily on the structured text data surrounding it, such as titles, descriptions, transcripts, and chapters 121.

To optimize for this multimodal search paradigm, brands must adopt "Search Everywhere Optimization" 73435. This involves treating a single YouTube video not as a final product, but as a chaptered data resource. The optimization workflow in 2026 requires several interconnected layers. First, titles must be formulated as explicit user queries rather than branded corporate statements; a title reading "How to Reduce Cloud Storage Costs" aligns closely with natural-language prompts, whereas "Acme Q3 Cloud Update" is unlikely to match any prompt an LLM receives 2. Second, machine-readable transcripts must be deployed to feed the LLM's semantic understanding of the spoken content 23. Third, the video must be embedded in a long-form blog post on the brand's primary domain, wrapped in precise Video and FAQ schema markup. This creates a self-reinforcing loop: the embedded video increases dwell time on the website (a positive Google SEO signal), while the web traffic feeds external view velocity back to YouTube (a positive YouTube ranking signal) 3637.
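For the third layer, a minimal sketch of the page-side markup follows. It builds a schema.org `VideoObject` using the `SeekToAction` pattern Google documents for key moments, where `{seek_to_second_number}` is a literal placeholder that Google substitutes with a second offset. `VIDEO_ID`, the title, description, date, and duration are placeholders, and the FAQ schema is omitted for brevity; Google's current video structured-data guidelines decide whether it is honored for a given page.

```python
import json


def video_jsonld(name, description, video_id, upload_date, duration_iso):
    """Build VideoObject JSON-LD for an embedded YouTube video (field values are placeholders)."""
    watch_url = f"https://www.youtube.com/watch?v={video_id}"
    return {
        "@context": "https://schema.org",
        "@type": "VideoObject",
        "name": name,
        "description": description,
        "thumbnailUrl": [f"https://i.ytimg.com/vi/{video_id}/hqdefault.jpg"],
        "uploadDate": upload_date,
        "duration": duration_iso,  # ISO 8601 duration, e.g. PT9M58S
        "embedUrl": f"https://www.youtube.com/embed/{video_id}",
        # Tells Google how to deep-link to a timestamp; the braces are literal.
        "potentialAction": {
            "@type": "SeekToAction",
            "target": f"{watch_url}&t={{seek_to_second_number}}",
            "startOffset-input": "required name=seek_to_second_number",
        },
    }


data = video_jsonld(
    name="How to Reduce Cloud Storage Costs",
    description="Lifecycle rules, storage tiers, and cleanup jobs that cut storage spend.",
    video_id="VIDEO_ID",
    upload_date="2026-01-15T08:00:00+00:00",
    duration_iso="PT9M58S",
)
print(f'<script type="application/ld+json">\n{json.dumps(data, indent=2)}\n</script>')
```

The printed `<script>` tag goes into the blog post's HTML alongside the embedded player, so the page and the video describe the same asset.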

YouTube itself is aggressively testing conversational search features. The "Ask YouTube" interface allows users to prompt the platform for complex itineraries or multi-step instructions, generating a mixed-media output of Shorts, long-form videos, and textual steps 6. Furthermore, enterprise tools like MagicLinks' AI Shelf now score creator content specifically for "AI readiness," predicting its likelihood of being cited by external platforms 1. Videos lacking clean metadata, manual chapters, and clear visual entity signals are routinely bypassed by these multimodal answer engines, forfeiting a growing share of organic traffic.

Algorithmic Bifurcation: Search versus Suggested/Browse

A fundamental error made by many digital marketers is optimizing a channel for a singular "YouTube Algorithm." The reality, detailed exhaustively in Creator Insider updates and major platform overhauls, is that YouTube operates multiple, independent recommendation engines, specifically bifurcated between the "Search" environment and the "Suggested/Browse" environment 438. These systems evaluate completely different sets of signals based on the viewer's psychological state and intent.

The Search Algorithm: Intent Validation and Retention Depth

The Search algorithm caters to active intent. When a user queries a term, they are actively seeking a specific answer, tutorial, or review. In this environment, the algorithm operates as a filtering system built on three distinct layers: Query Intent Matching, Performance Validation, and Satisfaction Confirmation 17.

First, Search relies on titles, transcripts, and visual entities to match the video to the semantic intent of the query 1712. Second, CTR serves as a strict threshold filter: if the CTR falls below the historical baseline for that specific micro-niche, the video is suppressed. Crossing the CTR threshold does not guarantee ranking, however; it merely advances the video to the validation phase 17. Finally, Search rankings heavily prioritize Retention Depth over Retention Percentage. Retention Depth measures how much of the watch time actually satisfies the query, not how many raw minutes accumulate. For example, a 60% retention rate on a 6-minute video (3.6 minutes watched) is heavily favored over a 40% retention rate on a 20-minute video (8 minutes watched) if the 6-minute video completely resolves the user's intent without superfluous filler, even though the longer video accrues more raw minutes 17. A video that acts as a "last long click" (where the user finds their answer and does not return to the search bar to query the same topic) is awarded a dominant search ranking 1736. The Search environment thus behaves more like traditional Google Search, rewarding structural clarity and immediate value 8.
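To make the three layers and the retention arithmetic concrete, here is a toy ranking sketch. It is not YouTube's ranker: the `Candidate` fields, the 0.5 intent cutoff, the niche CTR baseline, and the ordering rule (queries resolved first, then minutes watched) are all assumptions chosen to mirror the description above.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    title: str
    length_min: float
    retention: float      # average fraction of the video watched (0..1)
    ctr: float            # click-through rate on this query
    intent_match: float   # hypothetical 0..1 semantic match score
    resolved_query: bool  # True if viewers did not re-search the topic ("last long click")


def minutes_watched(c: Candidate) -> float:
    return c.length_min * c.retention


def passes_filters(c: Candidate, niche_ctr_baseline: float, min_intent: float = 0.5) -> bool:
    # Layer 1: query intent matching. Layer 2: CTR threshold against the micro-niche baseline.
    return c.intent_match >= min_intent and c.ctr >= niche_ctr_baseline


def rank(candidates: list[Candidate], niche_ctr_baseline: float) -> list[Candidate]:
    eligible = [c for c in candidates if passes_filters(c, niche_ctr_baseline)]
    # Layer 3: satisfaction confirmation. Resolving the query outranks raw minutes;
    # minutes watched only break ties among equally satisfying videos.
    return sorted(eligible, key=lambda c: (c.resolved_query, minutes_watched(c)), reverse=True)


short = Candidate("Fix slow Wi-Fi in 6 minutes", 6, 0.60, 0.07, 0.9, True)
padded = Candidate("Everything about home networking", 20, 0.40, 0.06, 0.8, False)
bait = Candidate("You won't BELIEVE this router", 8, 0.30, 0.03, 0.6, False)

for c in rank([padded, bait, short], niche_ctr_baseline=0.05):
    print(c.title, round(minutes_watched(c), 1))
```

The clickbait candidate never reaches layer 3 because its CTR sits below the niche baseline, and the 6-minute video (3.6 minutes watched) outranks the 20-minute one (8 minutes watched) because it resolves the query.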

The Suggested and Browse Algorithm: Session Depth and Micro-Niches

In stark contrast, the Suggested (sidebar and below-video mobile placements) and Browse (homepage) algorithms cater to passive intent. The user is leaning back, browsing for entertainment or prolonged education. This system is responsible for the vast majority of all views on the platform and relies heavily on affinity scoring and pattern recognition 813.

The primary metric governing the Suggested algorithm in 2026 is Session Contribution, also referred to as Session Depth 4. The system measures what the viewer does after watching a specific video. If a video reliably prompts the viewer to watch a second or third video (preferably from the same creator through end-screens or playlists), it is deemed a powerful session initiator and receives massive Suggested distribution 410. Conversely, if a video consistently ends a user's session, causing them to close the YouTube application, its impressions in the Suggested sidebar are severely throttled 410.
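YouTube does not expose Session Contribution directly, but the idea can be approximated from your own session logs: for each video, how often did the viewer keep watching afterwards, and how often was the next video from the same creator? The log format (ordered lists of video IDs) and the `creator_of` mapping below are assumptions for illustration.

```python
from collections import defaultdict


def continuation_rates(sessions, creator_of):
    """Return {video: (continued_rate, same_creator_rate)} from ordered watch sessions."""
    views = defaultdict(int)
    next_any = defaultdict(int)
    next_same = defaultdict(int)
    for session in sessions:
        # Pair each video with the one watched after it (None if the session ended).
        for current, following in zip(session, session[1:] + [None]):
            views[current] += 1
            if following is not None:
                next_any[current] += 1
                if creator_of[following] == creator_of[current]:
                    next_same[current] += 1
    return {v: (next_any[v] / views[v], next_same[v] / views[v]) for v in views}


sessions = [["a", "b", "c"], ["a", "d"], ["b"], ["c", "a"]]
creator_of = {"a": "us", "b": "us", "c": "other", "d": "other"}
for video, (any_rate, same_rate) in sorted(continuation_rates(sessions, creator_of).items()):
    print(video, f"continued {any_rate:.0%}, same creator {same_rate:.0%}")
```

A video with a high continuation rate is acting as a session initiator; a high same-creator rate suggests end screens and playlists are doing their job.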

Furthermore, a massive update in February 2026 overhauled the Browse feed, shifting it from broad categorical targeting to deep micro-niche personalization 4. The algorithm now clusters videos based on highly specific watch-history patterns, differentiating, for example, between a generic "fitness" viewer and a specific "kettlebell mobility" viewer. Consequently, highly generic content that attempts to appeal to broad demographics is rapidly filtered out. Hyper-niche, highly aligned content, however, benefits from explosive discoverability within these tight, algorithmic audience clusters 413.

The Symbiosis of YouTube Shorts and Long-Form Channel Authority

The strategic tension between short-form vertical video and traditional long-form content reached a critical inflection point entering 2026. Initially, creators feared that publishing YouTube Shorts would cannibalize their long-form audience, depressing click-through rates and diluting channel authority by attracting low-attention subscribers 39. However, official platform updates and enterprise data indicate that the relationship has evolved into a highly lucrative, empirically supported symbiosis.

Algorithmic Decoupling

In late 2025, YouTube decisively updated its infrastructure to fully decouple the Shorts recommendation engine from the long-form recommendation engine 4. The YouTube Creator Academy now treats Shorts and long-form as entirely separate growth vectors. Performance metrics acquired within the Shorts feed - whether exceptionally viral or entirely stagnant - no longer exert a direct penalty or artificial boost on the algorithmic distribution of the creator's long-form library 4. The Shorts algorithm evaluates content fundamentally differently, prioritizing initial swipe-through rates, the Viewed vs. Swiped Away (VVSA) ratio (requiring a minimum 70% view rate for strong distribution), and loop counts 8940.
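The VVSA ratio is simple arithmetic on Shorts-feed impressions. A minimal sketch, taking the 70% figure cited above as the threshold (the function and constant names are illustrative):

```python
STRONG_DISTRIBUTION_THRESHOLD = 0.70  # minimum "viewed" share cited in the text


def viewed_vs_swiped(viewed: int, swiped_away: int) -> float:
    """Share of Shorts-feed impressions where the viewer watched instead of swiping away."""
    total = viewed + swiped_away
    if total == 0:
        raise ValueError("no feed impressions recorded")
    return viewed / total


for viewed, swiped in [(7_400, 2_600), (6_500, 3_500)]:
    ratio = viewed_vs_swiped(viewed, swiped)
    verdict = "meets" if ratio >= STRONG_DISTRIBUTION_THRESHOLD else "misses"
    print(f"{ratio:.0%} viewed: {verdict} the 70% bar")
```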

The Hybrid Funnel Strategy and Monetization Dynamics

Despite the algorithmic separation, the dominant strategy in 2026 combines both formats into a cohesive funnel. Empirical data reveals that hybrid channels (those publishing both Shorts and long-form content consistently) grow 41% faster than channels relying on a single format 404142. Furthermore, creators employing this dual approach report an average revenue increase of 40% to 60%, alongside roughly threefold faster subscriber growth 43.

The mechanics of this advantage are rooted in audience behavior and funnel economics. YouTube Shorts acts as a massive top-of-funnel discovery engine. With over 200 billion daily views, Shorts content is heavily surfaced to non-subscribers; 74% of all Shorts views originate from users completely unfamiliar with the creator 440. Shorts boast an engagement rate of 5.91% (higher than TikTok or Instagram Reels), making them exceptional vehicles for driving brand awareness and rapid subscriber acquisition at a low production cost 404142.

However, subscribers acquired exclusively through short-form content generate notoriously low revenue. In 2026, Shorts Revenue Per Mille (RPM) ranges between $0.05 and $0.07, requiring millions of views to generate meaningful income. Conversely, long-form videos command RPMs ranging from $1.61 to over $29.00 due to multiple mid-roll ad placements and deeper viewer investment 414244. Therefore, the definitive 2026 strategy utilizes Shorts as high-velocity teasers to capture attention, subsequently funneling that audience into long-form videos to cultivate topical authority, trust, and substantial monetization 394145. YouTube facilitates this directly via the "Related Video" feature, which permits creators to hard-link a relevant long-form video directly onto a Short, seamlessly bridging the gap between fleeting attention and deep-session engagement 39.
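Because RPM is revenue per 1,000 views, the gap is easy to quantify: at the cited rates, one million Shorts views earns roughly $50 to $70, while one million long-form views at the low end of the long-form range ($1.61) earns about $1,610. A small helper (illustrative names) makes the comparison explicit, including the views needed to reach a revenue target:

```python
import math


def revenue_usd(views: int, rpm: float) -> float:
    """Estimated creator revenue: RPM is revenue per 1,000 views."""
    return views / 1000 * rpm


def views_needed(target_usd: float, rpm: float) -> int:
    """Views required to reach a revenue target at a given RPM (rounded up)."""
    return math.ceil(target_usd * 1000 / rpm)


for label, rpm in [("Shorts (low)", 0.05), ("Shorts (high)", 0.07), ("Long-form (low)", 1.61)]:
    print(f"{label}: ${revenue_usd(1_000_000, rpm):,.0f} per 1M views, "
          f"{views_needed(1_000, rpm):,} views for $1,000")
```

Reaching $1,000 takes about 20 million Shorts views at $0.05 RPM versus roughly 621,000 long-form views at $1.61, which is the arithmetic behind using Shorts for discovery and long-form for income.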

Regional SEO and Global Audience Expansion

As YouTube's global footprint expands (over 80% of views now originate outside the United States, and India has become the platform's largest market, followed by the U.S.), optimizing for regional SEO has transitioned from an optional tactic to a core growth strategy 16. The most disruptive feature in this domain is the wide release of Multi-Language Audio (MLA) tracks, amplified by AI-driven dubbing capabilities 4849.

The Strategic Implementation of Multi-Language Audio

MLA allows creators to upload multiple dubbed language tracks to a single video file, automatically serving viewers their native language based on geographic and account settings 4950. This eliminates the need to run separate, fragmented channels for different countries. Instead, it consolidates global views, watch time, and engagement onto a single asset, compounding the video's aggregate ranking signals 50. Early adopters of the feature saw watch time from viewers outside the video's primary language grow to more than 25% of their total channel watch time. A prominent case study involving "Vania Mania Kids" demonstrated that adding Portuguese, Spanish, German, and Polish audio tracks to an existing channel resulted in a 45% increase in views, capturing 5 million additional views from previously unengaged demographics 4849.

Localized Thumbnails and Algorithmic Credibility

However, executing MLA effectively requires navigating regional algorithmic sensitivities. Because YouTube aggregates performance metrics across all audio tracks, a poorly performing secondary language track can dilute the video's overall CTR and Retention, inadvertently confusing the recommendation algorithm and suppressing the asset in its native market 48. This phenomenon, known as "track-to-audience mismatch," occurs when the algorithm fails to recognize the video as relevant in the new region because the visual cues do not align with the audio track 48.
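The dilution effect can be illustrated with simple arithmetic. Assuming, for illustration, that the aggregate CTR is an impression-weighted blend across audio tracks (the text says metrics are aggregated but not how), a weak secondary track drags down the figure the recommendation system sees for the whole asset. All counts below are made up.

```python
def blended_ctr(tracks: dict[str, tuple[int, int]]) -> float:
    """Impression-weighted CTR across tracks: {track: (impressions, clicks)}."""
    impressions = sum(imp for imp, _ in tracks.values())
    clicks = sum(clk for _, clk in tracks.values())
    return clicks / impressions if impressions else 0.0


original_only = {"en": (100_000, 6_000)}  # 6.0% CTR in the native market
with_weak_dub = {"en": (100_000, 6_000), "es": (50_000, 1_000)}  # Spanish track at 2.0% CTR

print(f"{blended_ctr(original_only):.1%} -> {blended_ctr(with_weak_dub):.1%}")
```

Under this assumption, the asset's CTR falls from 6.0% to about 4.7% even though the English-language audience behaves exactly as before.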

To counteract this, regional SEO in 2026 dictates that audio translation must be paired with visual localization. A Spanish-dubbed audio track will severely underperform if the on-screen text and thumbnail remain in English, causing an immediate mismatch in viewer expectations and a severe drop in retention 48. Consequently, YouTube has piloted multi-language thumbnails, allowing creators to dynamically serve region-specific visual packaging alongside the dubbed audio 4849. Aligning the translated audio track with localized metadata and thumbnails ensures high intent matching, solidifying the video's "algorithmic credibility" in previously untapped international markets and generating strong signals for the localized recommendation engine 4851.

2026 Enterprise SEO Tools Evaluation: VidIQ vs. TubeBuddy

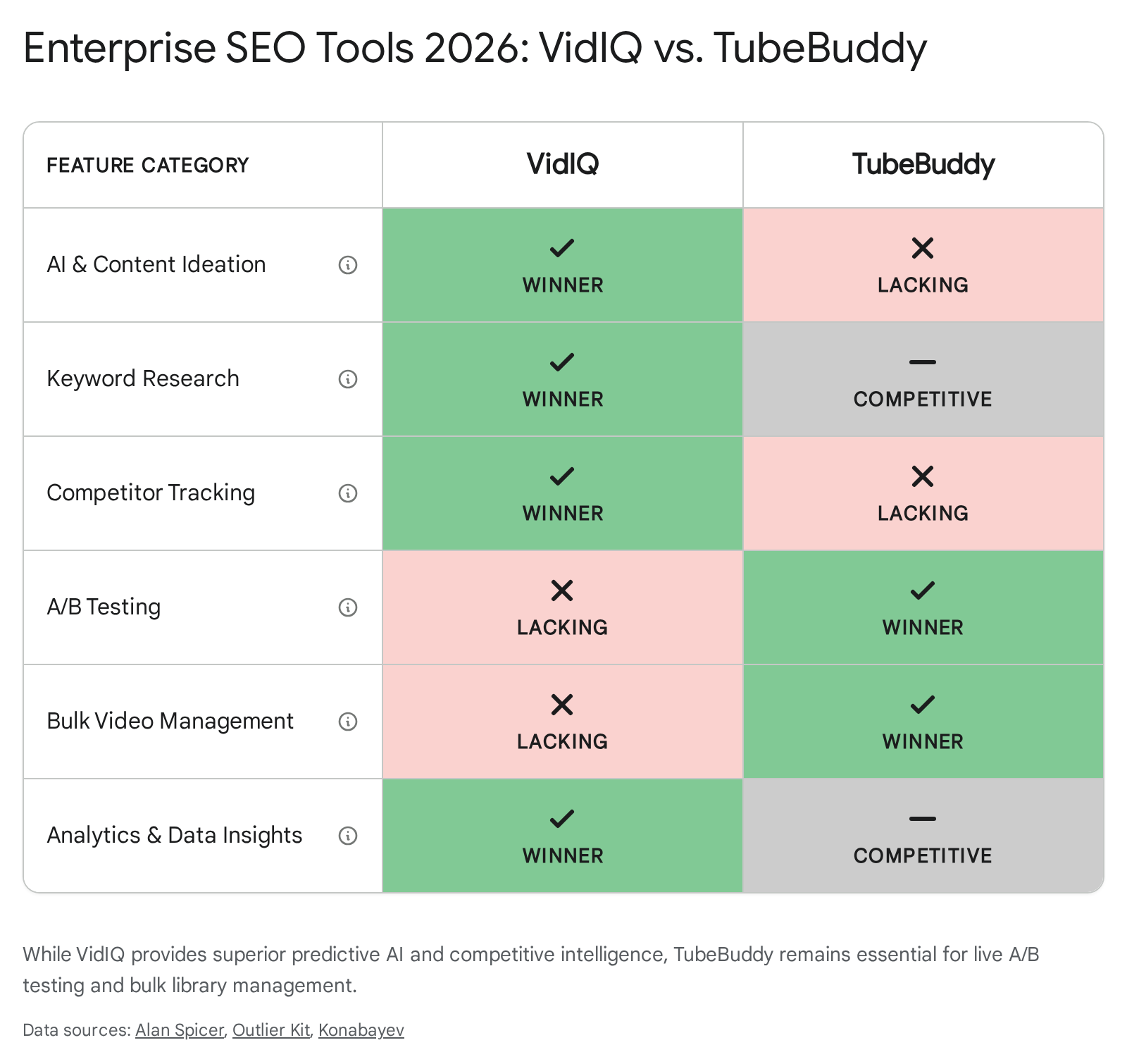

Executing a modern, data-driven YouTube strategy requires robust enterprise software. By 2026, the two dominant platforms, VidIQ and TubeBuddy, have diverged significantly in their core competencies, catering to completely different elements of the optimization workflow 525354.

The table below contrasts the distinct advantages of each platform based on 2026 updates:

| Feature Category | VidIQ (2026 Capabilities) | TubeBuddy (2026 Capabilities) | Strategic Application |
|---|---|---|---|
| Pre-Production Research & AI Ideation | Dominant. Features "AI Daily Ideas" tailored to channel history and deep search volume predictions. | Lacking. Focuses on basic tag exploration with estimated search volumes. | VidIQ is essential for planning content schedules, predicting viral trends, and finding low-competition, high-volume topics 525354. |
| Real-Time Competitor Tracking | Dominant. Offers "Views Per Hour" (VPH) tracking to spot viral velocity on competitor channels instantly. | Lacking. Does not offer real-time velocity tracking. | VidIQ allows creators to capitalize on micro-trends the moment they begin to surge in a specific niche 5354. |
| Live A/B Testing (Thumbnails & Titles) | Lacking. Does not natively offer A/B testing functionalities. | Dominant. Offers rigorous, statistically sound A/B testing for both thumbnails and titles on live videos. | TubeBuddy is unparalleled for post-publish optimization, allowing creators to definitively improve CTR by testing variations against real audiences 525354. |
| Bulk Library Management | Competitive, but limited compared to TubeBuddy. | Dominant. Offers extensive tools for retroactively updating metadata, descriptions, and end-screens across thousands of videos. | TubeBuddy is critical for established channels that need to update outbound links, affiliate codes, or SEO tags across expansive historical libraries 5354. |
| Value for Creators | $19/month (Boost plan) provides the best AI features. $49/month required for full competitor tracking. | The Legend Plan at $9/month is highly cost-effective, providing full A/B testing and bulk processing. | VidIQ is an investment in strategic growth and ideation; TubeBuddy offers massive ROI on operational efficiency and CTR optimization 5253. |

Industry consensus dictates that creators heavily focused on conceptualizing high-performing topics leverage VidIQ's intelligence, while operations teams obsessively iterating on CTR and packaging rely on TubeBuddy's highly economical Legend plan 5253.
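The vendors' exact statistics are not described here, but "statistically sound" CTR testing generally reduces to comparing two click-through proportions. As a sketch of that general technique (a standard two-proportion z-test, not TubeBuddy's documented method), with hypothetical impression and click counts:

```python
import math


def two_proportion_z_test(clicks_a: int, impressions_a: int,
                          clicks_b: int, impressions_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the CTR difference between variants A and B."""
    ctr_a = clicks_a / impressions_a
    ctr_b = clicks_b / impressions_b
    pooled = (clicks_a + clicks_b) / (impressions_a + impressions_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / impressions_a + 1 / impressions_b))
    if se == 0:
        raise ValueError("pooled CTR of 0 or 1 gives no variance to test")
    z = (ctr_b - ctr_a) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided p = 2 * (1 - Phi(|z|))
    return z, p_value


# Thumbnail A: 4.0% CTR; thumbnail B: 4.8% CTR, each on 10,000 impressions.
z, p = two_proportion_z_test(400, 10_000, 480, 10_000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

Here z is about 2.76 and p falls below 0.01, so the 0.8-point CTR lift is unlikely to be noise; with only a few hundred impressions per variant the same lift would not be significant, which is why tests need enough traffic before a winner is declared.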

Conclusion: Strategic Imperatives for 2026

The operational playbook for YouTube SEO in 2026 has been entirely rewritten by artificial intelligence, multimodal search integration, and shifting viewer behaviors. Manipulative metadata, keyword density, and raw watch-time metrics have been completely superseded by intent matching, satisfaction modeling, and machine-readable structuring.

To secure and compound organic visibility, digital strategists must adopt the following imperatives:

1. Prioritize Satisfaction Over Algorithmic Manipulation: Ensure content delivers on the precise intent of the query to maximize Retention Depth and positive user sentiment. A technically unoptimized video that deeply satisfies viewers and earns high survey scores will rapidly outrank a perfectly tagged video that induces a bounce or negative feedback.
2. Embrace Search Everywhere Optimization and GEO: Treat YouTube as a multimodal data source rather than an isolated video platform. Use manual chapters to capture Google Key Moments, provide accurate human-verified transcripts to feed LLMs, and apply video schema markup across platforms to earn citations in AI-generated answers.
3. Deploy the Hybrid Format Funnel: Leverage the algorithmic decoupling by using YouTube Shorts as a high-velocity discovery engine to capture non-subscribers. Deliberately funnel that top-of-funnel awareness into comprehensive long-form videos using "Related Video" links to build topical authority and high-RPM monetization.
4. Globalize via Holistic Localization: Expand total addressable markets by implementing Multi-Language Audio tracks, taking care to pair dubbed audio with regionally localized thumbnails to preserve algorithmic credibility and prevent track-to-audience mismatch.

By aligning content architecture with the platform's sophisticated AI analysis and its unwavering focus on behavioral satisfaction, creators and enterprise brands can build a durable visibility advantage across the evolving multimodal search landscape of 2026.