YouTube Recommendation and Search Algorithms in 2026

The YouTube algorithm in 2026 is a decoupled network of distinct machine learning models rather than a single monolithic engine. Serving an ecosystem that generates over $60 billion in annual revenue and processes 200 billion daily short-form views, the platform's discovery mechanics have shifted from optimizing for raw watch time to prioritizing long-term viewer satisfaction and semantic intent 1. This structural shift dictates how content is parsed, indexed, and surfaced to global audiences.

The algorithmic infrastructure is designed to resolve a fundamental computational challenge: filtering billions of available videos in milliseconds to present a personalized slate of content that maximizes platform retention and viewer trust 2. The sections below examine the distinct models driving candidate generation, ranking, the Browse feed, native Search, and the independent Shorts ecosystem. They then consider how these technical systems interact with independent algorithmic audits and mandatory European Union transparency regulations.

Evolution of the Algorithmic Architecture

To understand the current iteration of the YouTube algorithm, it is necessary to trace the platform's architectural evolution. In its earliest phases, YouTube functioned primarily as a search repository reliant on keyword matching and crude click-through rates 3. This model was highly susceptible to clickbait, leading to a subsequent era where raw watch time became the dominant optimization target 3.

A watershed moment in the platform's engineering occurred with the implementation of the two-stage deep neural network architecture for recommendation, which separated the process into "candidate generation" and "ranking" phases 4. The candidate generation network processed user activity history to retrieve a small subset of videos from the massive corpus, providing broad personalization via collaborative filtering 4. The ranking network then assigned a score to each candidate using a rich feature set, sorting the videos for the final user interface 4.
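The two-stage split described above can be illustrated with a toy pipeline. This is a minimal sketch, not YouTube's implementation: the production system uses learned deep networks, whereas this stand-in uses a dot product for retrieval and a hand-set linear scorer for ranking. The function names, feature names, and weights are all hypothetical.

```python
from typing import Dict, List, Tuple

Vector = List[float]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def generate_candidates(user_vec: Vector, corpus: Dict[str, Vector], k: int) -> List[str]:
    """Stage 1: cheap retrieval of the k videos most similar to the user vector."""
    ranked = sorted(corpus, key=lambda vid: dot(user_vec, corpus[vid]), reverse=True)
    return ranked[:k]


def rank_candidates(
    candidates: List[str],
    features: Dict[str, Dict[str, float]],
    weights: Dict[str, float],
) -> List[Tuple[str, float]]:
    """Stage 2: score only the shortlisted videos with a richer feature set."""
    scored = []
    for vid in candidates:
        score = sum(w * features[vid].get(name, 0.0) for name, w in weights.items())
        scored.append((vid, score))
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Hypothetical toy data: two-dimensional embeddings and two ranking features.
corpus = {"a": [1.0, 0.0], "b": [0.8, 0.2], "c": [0.0, 1.0]}
features = {
    "a": {"freshness": 0.1, "predicted_watch": 0.4},
    "b": {"freshness": 0.9, "predicted_watch": 0.6},
}
weights = {"freshness": 0.3, "predicted_watch": 0.7}

shortlist = generate_candidates([1.0, 0.1], corpus, k=2)
final_order = [vid for vid, _ in rank_candidates(shortlist, features, weights)]
```

The point of the split is cost: the expensive scorer only ever sees the shortlist, never the full corpus.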

However, optimizing strictly for watch time created unintended secondary effects. While it maximized immediate engagement, it did not necessarily correlate with user satisfaction, and in some cases, it incentivized the platform to surface polarizing or increasingly extreme content to maintain session duration 5. To address these structural flaws and align with evolving user expectations, YouTube initiated a comprehensive algorithmic overhaul beginning in 2024 and culminating in the 2026 "satisfaction-weighted discovery" models 6. The contemporary architecture incorporates multi-objective optimization, balancing engagement vectors with explicit satisfaction signals, diversity requirements, and stringent safety controls 27.

Viewer Satisfaction and Core Evaluation Metrics

In 2026, viewer satisfaction signals systematically outweigh pure engagement metrics like Click-Through Rate (CTR) and Average View Duration (AVD) across the platform's primary recommendation surfaces 16. The algorithmic framework attempts to answer a specific predictive question: what content will a viewer find most satisfying at a given moment, such that they develop long-term trust and habitual reliance on the platform 12.

To operationalize this abstract concept of satisfaction, YouTube's engineering teams have developed several composite metrics that blend vast quantities of behavioral data with qualitative feedback mechanisms. These signals inform the ranking deep neural networks (DNNs) that finalize the content slate presented to individual users.

The Five Primary Satisfaction Signals

The current algorithmic evaluation relies on a framework of five primary signals to rank and distribute content, moving away from isolated vanity metrics toward holistic session analysis.

The most heavily weighted signal is the Watch Satisfaction Score (WSS). This is an internal composite metric derived from direct post-view survey prompts, watch retention quality, and positive sentiment modeling 36. YouTube intermittently presents viewers with surveys asking them to rate their enjoyment of a recently watched video; these responses act as the ground-truth standard for the algorithm 3. When survey data is unavailable, the system infers satisfaction by analyzing the ratio of likes to views, the semantic sentiment of the comment section, and the stability of the viewer's retention curve 6. The WSS is currently the single strongest determinant of a video's long-term algorithmic viability 1.
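A rough sketch of the survey-first, inference-as-fallback logic follows. The actual WSS formula is not public; the 1-to-5 survey scale, the 10% like-ratio cap, and the equal weighting of the three inferred signals are assumptions made purely for illustration.

```python
from typing import Optional


def watch_satisfaction(
    survey_mean: Optional[float],
    likes: int,
    views: int,
    comment_sentiment: float,
    retention_stability: float,
) -> float:
    """Return a satisfaction estimate in [0, 1].

    survey_mean: mean survey rating on an assumed 1-5 scale, or None if no surveys.
    comment_sentiment: assumed to lie in [-1, 1].
    retention_stability: assumed to lie in [0, 1].
    """
    if survey_mean is not None:
        # Survey responses are treated as ground truth when available.
        return (survey_mean - 1.0) / 4.0

    like_ratio = likes / views if views else 0.0
    like_signal = min(like_ratio / 0.10, 1.0)  # hypothetical cap at a 10% like ratio
    sentiment_signal = (comment_sentiment + 1.0) / 2.0  # map [-1, 1] onto [0, 1]
    return (like_signal + sentiment_signal + retention_stability) / 3.0
```

For example, a video with no survey data, 50 likes on 1,000 views, neutral comments, and a retention stability of 0.8 would score 0.6 under these assumed weights.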

Working in tandem with WSS is the Quality Click Ratio (QCR). This derived metric estimates the ratio of satisfied viewers to total clicks by comparing Click-Through Rate against Average View Duration 36. While CTR remains critical for initial discovery, a high CTR coupled with a steep early drop-off in retention results in a low QCR 36. The algorithm interprets a low QCR as an indicator of deceptive packaging or unfulfilled intent, colloquially known as clickbait 3. Consequently, the system imposes heavy suppressive weights on content with poor Quality Click Ratios, quickly halting its distribution in broader recommendation feeds 3. Success in 2026 requires aligning the promise made by the thumbnail and title with an immediate payoff in the video's opening seconds 8.
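One way to picture the ratio of satisfied viewers to total clicks is shown below. This is only a sketch: defining a "satisfied" click as one that watches at least half the video, and the two clickbait thresholds, are hypothetical choices rather than documented values.

```python
from typing import List


def quality_click_ratio(watch_fractions: List[float], satisfied_at: float = 0.5) -> float:
    """Share of clicks whose viewer watched at least `satisfied_at` of the video."""
    if not watch_fractions:
        return 0.0
    satisfied = sum(1 for fraction in watch_fractions if fraction >= satisfied_at)
    return satisfied / len(watch_fractions)


def looks_like_clickbait(
    ctr: float, qcr: float, ctr_floor: float = 0.08, qcr_ceiling: float = 0.3
) -> bool:
    """Flag the pattern the text describes: many clicks, few satisfied viewers."""
    return ctr >= ctr_floor and qcr <= qcr_ceiling


# Four clicks, three of which abandon the video almost immediately.
qcr = quality_click_ratio([0.05, 0.10, 0.90, 0.08])
flagged = looks_like_clickbait(ctr=0.12, qcr=qcr)
```

Because the denominator is clicks rather than impressions, a thumbnail that attracts more clicks only helps if those extra viewers stay.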

Beyond individual video performance, the algorithm evaluates broader behavioral patterns using the Session Continuity Rate (SCR), also referred to as Session Depth. This metric tracks whether a video acts as a catalyst for continued platform usage or serves as an exit point 16. Videos that prompt viewers to watch subsequent content - whether from the same creator or from unrelated channels - contribute positively to platform satisfaction and are heavily rewarded, particularly in Suggested video placements 16. Conversely, videos that routinely prompt users to close the application or navigate away from YouTube receive diminished algorithmic distribution, as they disrupt the platform's overarching retention goals 1.

The evaluation of content pacing and engagement is formalized through the Retention Delta (RD). Instead of evaluating raw watch time in a vacuum, the algorithm calculates the difference between a specific video's retention curve and the historical median for that specific category or channel 6. A positive Retention Delta indicates that the content outperforms its direct competition in holding audience attention, signaling to the ranking engine that the video should be prioritized in Browse and Suggested feeds 6.
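The comparison against a category median can be sketched as a pointwise difference between curves. This assumes every retention curve is sampled at the same checkpoints and summarizes the gap as a simple mean, which is an illustrative choice rather than a documented formula.

```python
from statistics import median
from typing import Sequence


def retention_delta(
    video_curve: Sequence[float], peer_curves: Sequence[Sequence[float]]
) -> float:
    """Mean gap between a video's retention and the peer median at each checkpoint."""
    gaps = []
    for i, value in enumerate(video_curve):
        baseline = median(curve[i] for curve in peer_curves)
        gaps.append(value - baseline)
    return sum(gaps) / len(gaps)


# Retention at three checkpoints (start, midpoint, end) for a video and three peers.
video = [1.0, 0.8, 0.6]
peers = [[1.0, 0.7, 0.5], [1.0, 0.6, 0.4], [1.0, 0.9, 0.3]]
delta = retention_delta(video, peers)  # positive: the video holds attention better
```

Here the peer medians are 1.0, 0.7, and 0.4, so the video's gaps are 0, 0.1, and 0.2, giving a mean delta of about 0.1.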

Finally, the Viewer Loyalty Index (VLI) measures the propensity of users to return to a specific channel within a 7-to-30-day window following initial exposure 6. A high VLI indicates that the content successfully builds reliable audience habits and fosters trust 6. The algorithm relies on "returning viewer satisfaction" as a proxy for content quality, rewarding creators who utilize consistent formats, logical playlist integrations, and cohesive narrative structures that encourage episodic consumption 6.
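A simple way to compute a return rate of this kind is sketched below. It reads the "7-to-30-day window" as a return visit between 7 and 30 days after first exposure; that reading, and the function and parameter names, are assumptions.

```python
from datetime import date
from typing import Dict, List


def viewer_loyalty_index(
    first_seen: Dict[str, date],
    later_views: Dict[str, List[date]],
    min_days: int = 7,
    max_days: int = 30,
) -> float:
    """Fraction of first-time viewers who came back within the assumed window."""
    if not first_seen:
        return 0.0
    returned = 0
    for viewer, start in first_seen.items():
        gaps = ((visit - start).days for visit in later_views.get(viewer, []))
        if any(min_days <= gap <= max_days for gap in gaps):
            returned += 1
    return returned / len(first_seen)


start = date(2026, 1, 1)
first_seen = {"u1": start, "u2": start, "u3": start, "u4": start}
later_views = {
    "u1": [date(2026, 1, 10)],  # 9 days later: counts
    "u2": [date(2026, 1, 3)],   # 2 days later: too early for this window
    "u3": [date(2026, 2, 15)],  # 45 days later: too late
}
vli = viewer_loyalty_index(first_seen, later_views)
```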

Metric Weighting and Optimization Shifts

The recalibration of these signals has materially altered the mathematical weighting of ranking factors compared to previous iterations of the platform. Creators and digital strategists must adapt to a landscape where optimization strategies focused solely on generating clicks are actively penalized by the system.

| Metric Classification | Estimated 2023 Algorithmic Weight | Estimated 2026 Algorithmic Weight | Primary Optimization Variable |
|---|---|---|---|
| Viewer Satisfaction (Surveys & Inferred) | 15% | 35% (Very High) | Direct viewer sentiment, pacing, payoff |
| Average View Duration (AVD) | 35% | 25% (High) | First 30-second hook, pattern interrupts |
| Click-Through Rate (CTR) | 35% | 20% (High) | Title/thumbnail alignment, outcome promise |
| Return Sessions (VLI / Loyalty) | 10% | 15% (Medium-High) | Consistent formats, playlist integrations |
| User Feedback Negatives ("Not Interested") | 5% | 5% (Sharp Penalty) | Relevancy targeting, avoiding clickbait |

Data reflecting the shifts in recommendation model weighting from 2023 to the current 2026 baseline, highlighting the dominance of qualitative satisfaction tracking over raw engagement accumulation 16.
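To see what the reweighting in the table implies, the estimated weights can be applied to two hypothetical videos. This is a worked illustration only: the weights are the table's estimates, treating them as a linear blend is an assumption, and subtracting the negative-feedback term is one possible reading of "Sharp Penalty".

```python
from typing import Dict

WEIGHTS_2023 = {
    "satisfaction": 0.15, "avd": 0.35, "ctr": 0.35,
    "return_sessions": 0.10, "negative_feedback": 0.05,
}
WEIGHTS_2026 = {
    "satisfaction": 0.35, "avd": 0.25, "ctr": 0.20,
    "return_sessions": 0.15, "negative_feedback": 0.05,
}


def blended_score(signals: Dict[str, float], weights: Dict[str, float]) -> float:
    """Linear blend of signals in [0, 1]; negative feedback subtracts (assumption)."""
    score = 0.0
    for name, weight in weights.items():
        sign = -1.0 if name == "negative_feedback" else 1.0
        score += sign * weight * signals[name]
    return score


# Hypothetical videos: one with a strong thumbnail but weak payoff, one the reverse.
clickbait = {"satisfaction": 0.3, "avd": 0.4, "ctr": 0.9,
             "return_sessions": 0.2, "negative_feedback": 0.5}
satisfying = {"satisfaction": 0.9, "avd": 0.6, "ctr": 0.4,
              "return_sessions": 0.7, "negative_feedback": 0.05}

for label, video in (("clickbait", clickbait), ("satisfying", satisfying)):
    print(label, round(blended_score(video, WEIGHTS_2023), 4),
          round(blended_score(video, WEIGHTS_2026), 4))
```

Under these assumptions the click-heavy video drops from roughly 0.495 to 0.39 between the two weightings, while the satisfying video rises from roughly 0.5525 to 0.6475.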

Implicit vs. Explicit Data Processing

The transition toward satisfaction-based optimization has required YouTube to expand its reliance on implicit behavioral signals rather than explicit user actions. While explicit signals such as "Likes" and "Subscribes" are straightforward indicators of approval, they are subject to bias and represent only a fraction of total user interaction 2. In 2026, the real-time event logging pipeline prioritizes implicit signals that are significantly more difficult to manipulate 2.

The system tracks micro-behaviors such as dwell time over a thumbnail, scroll speed, and specific navigation within the video player 2. For instance, if a user slows their scrolling pace while passing a thumbnail but ultimately does not click, the system logs this hesitation as a feature indicating latent interest 2. Similarly, if a viewer rewinds a specific segment of a tutorial video, the algorithm interprets this not as a loss of attention, but as a strong signal of deep engagement and value extraction 2. These implicit feedback loops are fed continuously into the deep neural network rankers, allowing the algorithm to construct highly accurate predictions regarding expected watch time and satisfaction probability 2.
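The kind of feature extraction described here might look like the following sketch. The event names, the 1.5-second dwell threshold, and the output feature names are all hypothetical; the real logging schema is not public.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Event:
    kind: str          # hypothetical kinds: "thumbnail_dwell", "click", "rewind"
    video_id: str
    seconds: float = 0.0


def implicit_features(
    events: List[Event], dwell_threshold: float = 1.5
) -> Dict[str, Dict[str, float]]:
    """Turn a raw event log into per-video implicit-interest features."""
    clicked = {e.video_id for e in events if e.kind == "click"}
    features: Dict[str, Dict[str, float]] = {}
    for e in events:
        row = features.setdefault(
            e.video_id, {"latent_interest": 0.0, "rewind_seconds": 0.0}
        )
        if e.kind == "thumbnail_dwell":
            # Hesitation over a thumbnail without a click is logged as latent interest.
            if e.seconds >= dwell_threshold and e.video_id not in clicked:
                row["latent_interest"] = 1.0
        elif e.kind == "rewind":
            # Rewinding is counted as engagement, not as lost attention.
            row["rewind_seconds"] += e.seconds
    return features
```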

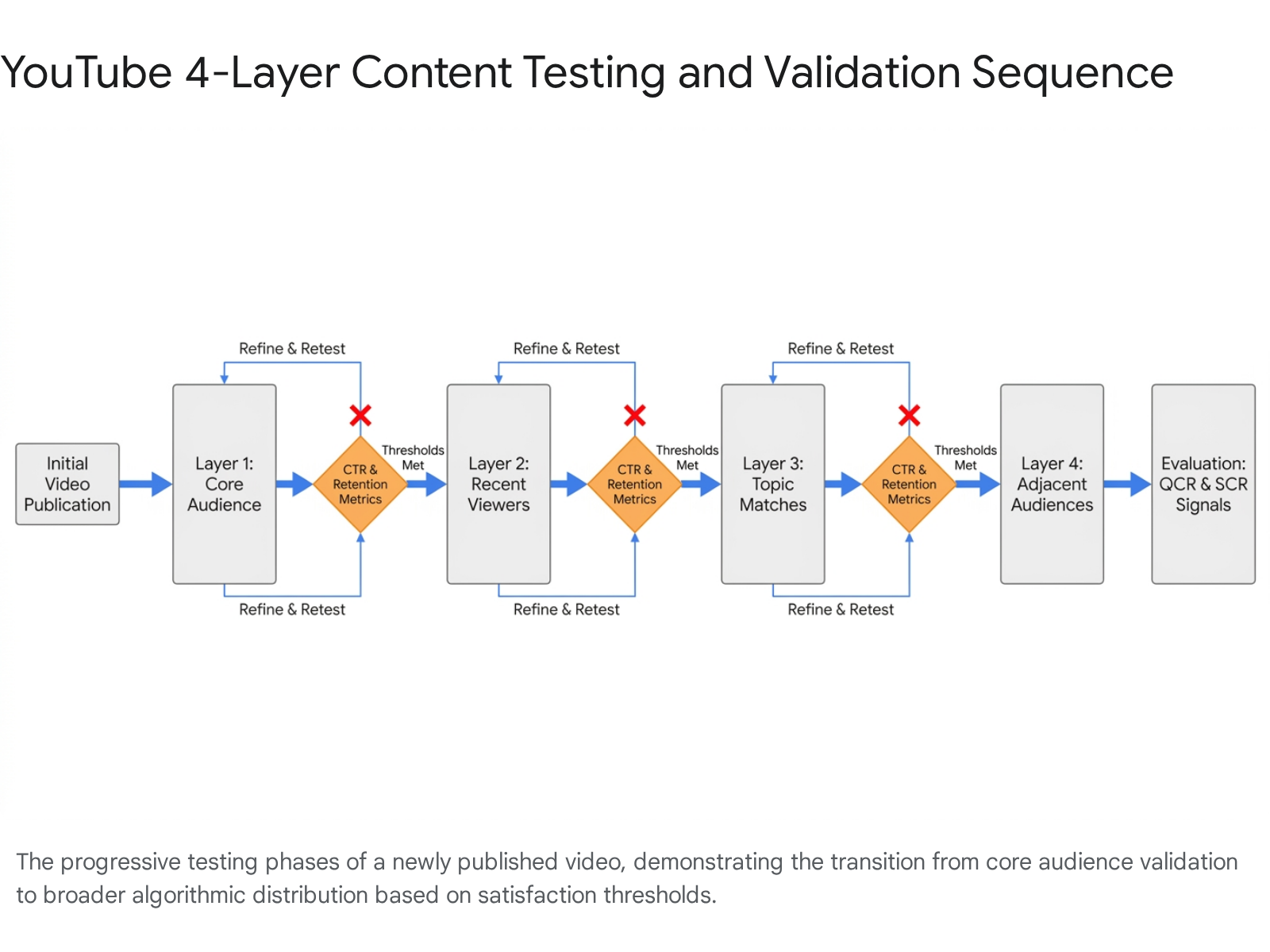

The Four-Layer Testing and Validation System

When a new video is published, the recommendation system does not immediately assess its ultimate viability across the entire platform. Instead, it deploys a progressive, multi-layered testing framework designed to establish baseline performance metrics before authorizing expanded reach 9. This sequential validation protocol prevents low-quality, deceptive, or hyper-niche content from saturating broad discovery surfaces and degrading the general user experience.

The architecture of this testing system is conceptually analogous to expanding concentric circles, where a video must achieve predetermined algorithmic thresholds at each stage to unlock the subsequent tier of distribution.

Layer 1: Core Audience Validation

Upon upload, the system first surfaces the video to a narrowly defined "Core Audience" consisting of subscribers who regularly engage with the creator's content and viewers who have activated push notifications 9. During this initial phase, the algorithm heavily scrutinizes the Quality Click Ratio and Average View Duration. The system expects higher-than-average engagement metrics from this cohort due to their pre-existing affinity for the creator. If the CTR is strong but the resulting retention drops precipitously, the system identifies a fundamental mismatch between the video's packaging and its substantive content 610. A failure to satisfy the core audience generally halts the testing process, restricting the video to organic search and direct channel navigation.

Layer 2: Recent Viewer Expansion

If the video successfully satisfies the core audience baseline, it is systematically presented to "Recent Viewers" - users who have interacted with the channel's content recently but may not be actively subscribed 9. This layer tests the content's ability to re-engage casual viewers and convert passive interest into active consumption. The algorithm monitors whether these users watch the video to completion and whether their interaction positively influences the channel's overall Viewer Loyalty Index.

Layer 3: Topic and Semantic Matches

Videos that exhibit strong performance across the first two layers are evaluated for broader "Topic Matches." At this stage, the algorithm surfaces the video on the Home feeds of viewers who regularly consume content in the same specific niche, but who have no prior exposure to the specific creator 9. The clarity of the video's metadata and the semantic consistency of its content are crucial here, as the system relies on vector embeddings to match the video with the appropriate audience clusters 11. In 2026, YouTube has accelerated this phase for smaller, emerging channels. If early signals from Layers 1 and 2 are exceptionally strong, the system will bypass traditional data collection delays, testing the video with broader topic-matched audiences within days rather than the weeks required in previous iterations of the algorithm 9.

Layer 4: Adjacent Audience Virality

The final layer of the testing framework involves presenting the content to "Adjacent Audiences" - viewers whose historical watch patterns indicate an interest in tangentially related topics 9. This is the computational mechanism through which videos achieve viral scale. The algorithm relies on complex inference models to determine that users with overlapping behavioral clusters will exhibit similar Watch Satisfaction Scores 9. Content that succeeds at this layer transitions from being a niche success to a high-authority piece of media, frequently featured on the top level of the Home page and in broad recommendations 12.
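The four layers behave like a sequence of gates, which the following sketch makes explicit. The layer names follow the text; the single per-layer performance score and the threshold values are hypothetical simplifications of whatever combination of metrics the real system checks.

```python
from typing import Callable, Dict, List

LAYERS = ["core_audience", "recent_viewers", "topic_matches", "adjacent_audiences"]


def run_layered_test(
    measure: Callable[[str], float], thresholds: Dict[str, float]
) -> List[str]:
    """Return the layers a video was shown to, stopping after the first failed gate."""
    reached = []
    for layer in LAYERS:
        reached.append(layer)
        if measure(layer) < thresholds[layer]:
            break  # distribution stops expanding at this layer
    return reached


# Hypothetical thresholds: the core audience is held to a higher bar.
thresholds = {"core_audience": 0.7, "recent_viewers": 0.5,
              "topic_matches": 0.5, "adjacent_audiences": 0.5}
observed = {"core_audience": 0.8, "recent_viewers": 0.6,
            "topic_matches": 0.3, "adjacent_audiences": 0.9}

reached = run_layered_test(observed.__getitem__, thresholds)
```

In this example the video passes the first two gates and stalls at topic matches, so it never reaches adjacent audiences even though it would have performed well there.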

Surface-Specific Recommendation Mechanics

A common misconception among digital strategists is that YouTube operates on a unified algorithmic protocol. In reality, the platform deploys distinct recommendation engines tailored to specific discovery surfaces 113. The algorithms driving the Browse feed, Suggested videos, and native Search evaluate content using divergent operational logic, prioritizing different user intents and contextual signals 13.

The Browse Feed and Micro-Niche Clustering

The Browse surface, predominantly encompassing the YouTube homepage, is designed to capture user attention when they arrive at the platform without a specific search intent. The overarching goal of the Browse algorithm is to predict latent desires based on historical behavior.

Previously, the Browse feed grouped content into broad, generalized categorical buckets such as "Technology," "Gaming," or "Cooking" 1. By 2026, the Browse engine has transitioned to highly granular user-clustering based on detailed watch history patterns 114. The system utilizes advanced machine learning to identify and map micro-niches within an individual's viewing habits 1. For instance, rather than categorizing a video broadly under "marketing," the AI clusters content specifically around "email marketing automation for online coaches" and surfaces it only to viewers whose behavioral vectors precisely match that exact profile 14.

For content creators, this necessitates profound strategic shifts. Channels that consistently produce content within a tightly defined semantic cluster benefit from high algorithmic predictability 13. The system can efficiently route their videos to the correct Home feeds because the audience profile is clearly delineated. Conversely, channels that pivot abruptly between unrelated topics disrupt the algorithm's ability to maintain a stable audience cluster. This lack of clarity reduces the efficiency of the recommendation system, resulting in suppressed Home feed impressions, as the algorithm attempts to mitigate the risk of serving irrelevant content to a generalized audience 1314.
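The effect of topical consistency can be illustrated by measuring how tightly a channel's videos cluster in embedding space. This is a sketch under the assumption that each video has a topic embedding; mean cosine similarity to the channel centroid is one possible coherence measure, not a metric the platform is known to use.

```python
import math
from typing import List, Sequence


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def channel_coherence(video_embeddings: List[Sequence[float]]) -> float:
    """Mean cosine similarity between each video and the channel's centroid."""
    count = len(video_embeddings)
    dims = len(video_embeddings[0])
    centroid = [sum(v[i] for v in video_embeddings) / count for i in range(dims)]
    return sum(cosine(v, centroid) for v in video_embeddings) / count


# Toy 2-D embeddings: a tightly focused channel versus one that pivots between topics.
focused = [[1.0, 0.0], [0.9, 0.1], [0.95, 0.05]]
scattered = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
```

The focused channel scores close to 1.0 while the scattered one scores about 0.33, which mirrors the text's point that abrupt topic pivots leave the system without a stable audience cluster to target.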

Suggested Videos and Session Depth Optimization

Suggested videos - appearing in the right-hand sidebar on desktop interfaces or in the continuous below-video feed on mobile devices - remain one of the most critical traffic sources for the platform 1. The algorithm responsible for selecting these videos operates under a fundamentally different mandate than the Browse feed: its primary objective is to maintain and extend the current viewing session.

In 2026, the Suggested video algorithm relies heavily on three core variables: the topical adjacency to the currently playing video, the individual viewer's historical co-visitation graph, and the candidate video's historical Session Continuity Rate 1. The mathematical weighting of session contribution has increased substantially over recent years 1. Videos that consistently act as bridges, leading viewers to click and consume another video, are granted outsized visibility in the Suggested feed 1. Conversely, videos that historically result in users closing the application or navigating away from YouTube are actively demoted from this surface, regardless of their individual Average View Duration or Click-Through Rate 1. This mechanism ensures that the platform maximizes total session engagement by chaining together highly compatible content.
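Two of the inputs named above, the co-visitation graph and the Session Continuity Rate, can be derived from ordered session logs. The sketch below assumes each session is a list of video IDs in viewing order and that a video "continues" the session whenever it is not the last item; both are simplifications.

```python
from collections import Counter
from itertools import combinations
from typing import List, Tuple


def session_continuity_rate(sessions: List[List[str]], video_id: str) -> float:
    """Fraction of a video's views that were followed by another video."""
    views = continued = 0
    for session in sessions:
        for position, vid in enumerate(session):
            if vid == video_id:
                views += 1
                if position < len(session) - 1:
                    continued += 1
    return continued / views if views else 0.0


def co_visitation(sessions: List[List[str]]) -> Counter:
    """Count how often each unordered pair of videos appears in the same session."""
    pairs: Counter = Counter()
    for session in sessions:
        for pair in combinations(sorted(set(session)), 2):
            pairs[pair] += 1
    return pairs


sessions = [["a", "b", "c"], ["a", "b"], ["d", "a"], ["b"]]
scr_a = session_continuity_rate(sessions, "a")  # followed in 2 of 3 views
graph = co_visitation(sessions)                 # ("a", "b") co-occurs twice
```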

Search Infrastructure and Multimodal Retrieval

YouTube Search has evolved far beyond a traditional metadata-matching system. It has transitioned into an intent-based, multimodal retrieval engine capable of understanding the semantic reality of video content 1115. Legacy optimization strategies that relied on keyword stuffing in titles, tags, and descriptions have been rendered largely obsolete by natural language processing and advanced machine learning models 16.

The 2026 Search algorithm evaluates how well videos perform for real users post-search, applying a strict performance filter based on the average watch time and satisfaction scores derived specifically from search queries 11. If a video ranks highly for a specific keyword but viewers consistently abandon the video after clicking, the algorithm quickly downranks the content for that query, regardless of how perfectly the metadata matches the search terms 11.
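The performance filter can be pictured as a re-ranking step in which post-search satisfaction scales down a strong metadata match. This is a deliberately simple sketch; the multiplicative combination and the default value for unseen videos are assumptions.

```python
from typing import Dict, List, Tuple


def rerank_for_query(
    results: List[Tuple[str, float]],
    post_search_satisfaction: Dict[str, float],
    default_satisfaction: float = 0.5,
) -> List[str]:
    """Order results by metadata match scaled by per-query satisfaction (both in [0, 1])."""
    def score(item: Tuple[str, float]) -> float:
        video_id, metadata_match = item
        return metadata_match * post_search_satisfaction.get(video_id, default_satisfaction)

    return [video_id for video_id, _ in sorted(results, key=score, reverse=True)]


results = [("keyword_stuffed", 0.95), ("helpful", 0.70)]
satisfaction = {"keyword_stuffed": 0.2, "helpful": 0.8}
order = rerank_for_query(results, satisfaction)
```

The video with the near-perfect keyword match ends up second because searchers who clicked it rarely stayed.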

The Gemini Embedding 2 Integration

A transformative structural shift in YouTube's Search and indexing infrastructure occurred with the deployment of Gemini Embedding 2 in April 2026 1617. This architecture represents a natively multimodal embedding model capable of mapping text, images, video, audio, and documents into a single, unified continuous vector space 1618.

Prior to the integration of unified models, building reliable cross-modal search was a significant technical challenge 17. Video management tools and search engines treated video files as "metadata deserts," relying entirely on external text descriptions because they had no inherent understanding of the visual and auditory content within the file 17.

Through the capabilities of Gemini 3.1 Pro and the Gemini API, YouTube's backend systems can now automatically process up to 120-second continuous chunks of video, segmenting them into coherent scenes with semantic labels (e.g., "Action," "Comedic Beat") and generating natural language descriptions for visual elements 1718. This allows the Search algorithm to perform deep semantic retrieval based on the actual visual and auditory reality of the video 17. When a user queries a highly specific scenario, the algorithm can retrieve exact timestamps from videos that visually depict the query, even if the creator never mentioned the specific keywords in their title or transcript 17.

Furthermore, the model addresses the massive storage costs and search latency associated with indexing billions of high-dimensional vectors. Because the model is trained with Matryoshka representation learning, the most critical semantic information is nested in the earliest dimensions of the vector 16. This allows YouTube to request the default 3,072 dimensions for maximum precision or dynamically truncate the vectors to 1,536 or 768 dimensions to optimize for search speed at global scale without sacrificing significant retrieval accuracy 16.
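Truncating a Matryoshka-style embedding amounts to keeping a prefix of the vector. The sketch below does not call any embedding API; it operates on plain lists. Re-normalizing the prefix is the usual practice because a truncated vector is generally no longer unit length, and the storage estimate assumes 4-byte floats, which is an assumption about the index format.

```python
import math
from typing import List, Sequence


def truncate_embedding(vector: Sequence[float], dims: int) -> List[float]:
    """Keep the first `dims` values and rescale the result to unit length."""
    if not 0 < dims <= len(vector):
        raise ValueError("dims must be between 1 and the vector length")
    head = list(vector[:dims])
    norm = math.sqrt(sum(x * x for x in head))
    return [x / norm for x in head] if norm > 0 else head


def index_bytes(num_vectors: int, dims: int, bytes_per_value: int = 4) -> int:
    """Raw storage for an index, assuming fixed-width values and no compression."""
    return num_vectors * dims * bytes_per_value


# A toy 4-dimensional vector truncated to its first 2 dimensions.
short = truncate_embedding([3.0, 4.0, 12.0, 1.0], dims=2)

# Storage per million vectors at the dimensionalities mentioned in the text.
full = index_bytes(1_000_000, 3072)   # 12,288,000,000 bytes
small = index_bytes(1_000_000, 768)   # 3,072,000,000 bytes
```

Dropping from 3,072 to 768 dimensions cuts raw vector storage to a quarter under these assumptions, which is the trade-off the paragraph describes.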

The Shorts Ecosystem and Format Hybridization

The algorithmic infrastructure powering YouTube Shorts operates entirely independently from the long-form recommendation systems 113. Living primarily inside a swipe-driven vertical feed, the Shorts algorithm functions similarly to dedicated short-form competitors like TikTok, evaluating content in real-time based almost exclusively on immediate behavioral signals rather than historical channel authority, subscriber counts, or long-term loyalty metrics 1319.

Distinct Algorithmic Processing for Short-Form Content

When a Short is published, it enters a highly volatile "Test Audience Phase" during its first hour of availability 19. The algorithm pushes the video to a sample audience and strictly evaluates two primary engagement metrics:

- Swipe-Away Rate vs. View Rate: The algorithm measures the percentage of users who stop scrolling to watch the Short versus those who instantly swipe past it. This metric functions as the short-form equivalent of Click-Through Rate and is the primary gatekeeper for further distribution 921.

- Completion and Replay Rates: The system tracks the percentage of the video watched, and crucially, how many users allow the video to loop multiple times. High replay rates are interpreted by the algorithm as the strongest possible satisfaction signal for the vertical format 1013.

Because the Shorts feed prioritizes these rapid behavioral signals over historical channel data, a brand-new account with zero subscribers can achieve viral scale within hours if the content perfectly aligns with immediate viewer intent and prevents the swipe-away action 19. Conversely, established creators with millions of subscribers can experience severe algorithmic suppression in the Shorts feed if their short-form content fails to match the aggressive pacing and immediate hook-structure required by the vertical format 19. The algorithm evaluates the content strictly on its isolated performance.
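The two gatekeeping metrics can be computed from per-impression watch times, as in this sketch. The 1-second cutoff separating a "view" from a swipe-away, and treating any watch time longer than the video as a replay, are hypothetical definitions.

```python
from typing import Dict, Sequence


def shorts_metrics(
    watch_seconds: Sequence[float], duration: float, view_threshold: float = 1.0
) -> Dict[str, float]:
    """Summarize a Short's test-audience performance from raw watch times."""
    total = len(watch_seconds)
    viewed = [w for w in watch_seconds if w >= view_threshold]
    if not viewed:
        return {"view_rate": 0.0, "swipe_away_rate": 1.0 if total else 0.0,
                "completion": 0.0, "replay_rate": 0.0}
    view_rate = len(viewed) / total
    # Completion is capped at 100% per viewer; loops are counted separately.
    completion = sum(min(w, duration) / duration for w in viewed) / len(viewed)
    replay_rate = sum(1 for w in viewed if w > duration) / len(viewed)
    return {"view_rate": view_rate, "swipe_away_rate": 1.0 - view_rate,
            "completion": completion, "replay_rate": replay_rate}


# Five impressions of a 10-second Short: two instant swipes, three real views.
metrics = shorts_metrics([0.3, 0.5, 10.0, 25.0, 5.0], duration=10.0)
```

Nothing in this calculation refers to subscriber counts or channel history, which is why a new account and an established one are judged on the same footing in the text's description.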

Hybrid Creator Strategies and Audience Conversion

Despite the technical and operational separation of the two algorithms, YouTube in 2026 explicitly favors and rewards creators who deploy a hybrid content strategy 22. Internal data indicates that channels consistently utilizing both Shorts and long-form content grow their subscriber base three times faster than single-format channels, and hybrid creators observe a 40-60% increase in total monetization 22.

This systemic symbiosis occurs because Shorts act as the platform's primary top-of-funnel discovery engine. Approximately 74% of all Shorts views originate from non-subscribers, providing unparalleled reach into new audience segments 20. Viewers who discover and engage with a creator via the Shorts feed are subsequently seeded with the creator's long-form content on their Browse feed 1022.

However, format-aware filtering dictates the boundaries of this cross-pollination. If a viewer predominantly consumes short-form media and routinely ignores long-form videos, the algorithm will cease suggesting long-form content to them entirely, isolating them within the Shorts ecosystem 11. Therefore, creators who rapidly built massive audiences exclusively through Shorts often struggle to convert those specific viewers to their long-form videos, as the audiences represent distinct behavioral cohorts with divergent consumption preferences 21.

| Algorithmic Metric | Shorts Feed Delivery | Long-Form Delivery (Browse & Search) |
|---|---|---|
| Primary Discovery Source | Native Swipe Feed (74% non-subscribers) | Browse (Home), Suggested, and Native Search |
| Average Engagement Rate | 5.91% | ~3.87% - 4.5% |
| Key Retention Target | 73%+ (High emphasis on loop completion) | 50%+ Average View Duration |
| Monetization Impact (RPM) | Low ($0.01 - $0.07 per 1,000 views) | High (Varies heavily by niche and demographics) |
| Algorithmic Testing Velocity | Rapid (First 1-2 hours heavily determine trajectory) | Measured (Layered expansion over 24-48 hours) |

Comparison of algorithmic metrics, audience behaviors, and distribution mechanics across YouTube's distinct content formats in 2026 19202522.

Creator Tools and Artificial Intelligence Analytics

To assist creators in navigating the increasing complexity of these recommendation systems, YouTube introduced advanced generative AI tools directly into the creator workflow. The most significant development is "Ask Studio AI," an integrated conversational assistant located within the native YouTube Studio dashboard 123.

Operating through a natural language interface, Ask Studio AI utilizes large language models to analyze a channel's proprietary dataset 24. Prior to this integration, creators were required to manually interpret complex data matrices, parsing through Quality Click Ratios, retention drop-offs, and audience demographic shifts. Ask Studio automates this analytical burden, allowing creators to query the system in plain text (e.g., "Why did my last video underperform?") and receive data-driven, strategic recommendations 25.

The system evaluates the channel's performance across the algorithm's various testing layers and surfaces actionable insights 24. Crucially, YouTube maintains a strict, privacy-centric boundary for this tool; Ask Studio trains and operates exclusively on the individual creator's isolated data silo 24. It does not compare channels against specific competitors or utilize external datasets, ensuring that competitive analytics and proprietary Watch Satisfaction Score data are not inadvertently leaked or homogenized across the platform 24.

Algorithmic Audits and Systemic Vulnerabilities

The opacity, complexity, and sheer scale of YouTube's recommendation systems have subjected the platform to rigorous external auditing. The historical pursuit of engagement, session depth, and watch time carried the inherent risk of prioritizing polarizing, extreme, or deceptive content - a phenomenon studied extensively by independent researchers and digital rights organizations 526.

The Mozilla Foundation Investigations

The Mozilla Foundation's RegretsReporter project represents the largest crowdsourced experimental audit of the YouTube algorithm ever conducted 2627. Analyzing data generated by tens of thousands of global participants, the research sought to quantify the prevalence and distribution mechanisms of harmful content.

The findings determined that 71% of videos reported by users as "regrettable" or harmful were proactively surfaced by YouTube's recommendation algorithm, rather than being actively searched for by the user 2628. Furthermore, videos recommended by the algorithm were 40% more likely to be flagged as regrettable than those found via organic search, highlighting a systemic flaw where the optimization for engagement inadvertently amplified boundary-pushing material 26.

The audit exposed severe inefficiencies in algorithmic enforcement:

- Policy Violations and Velocity: An estimated 12.2% of reported videos were found to explicitly violate YouTube's own Community Guidelines 26. In instances where these recommended videos were eventually identified and removed by the platform, they had already accumulated a collective 160 million views 28. At the time of reporting, these videos were averaging 5,794 views per day prior to deletion - a velocity 70% higher than standard, compliant content 2829.

- User Control Failures: A secondary Mozilla study involving 22,722 data donors revealed that YouTube's native feedback mechanisms (such as the "Dislike" or "Don't Recommend Channel" buttons) were largely ineffective at altering the algorithm's behavior. Users frequently experienced "algorithmic rabbit holes" where explicitly requesting the cessation of a specific topic (e.g., firearm content or cryptocurrency scams) resulted in the algorithm continuing to suggest adjacent or identical material shortly thereafter, indicating that behavioral watch patterns overrode explicit user controls 27.

- Geographic and Linguistic Disparities: The rate of algorithmic harm was unevenly distributed across the global user base. The incidence of regrettable recommendations was 60% higher in countries where English is not the primary language (such as Brazil, Germany, and France) 2930. Furthermore, non-English content featured a disproportionately high rate of pandemic-related misinformation, constituting 36% of flagged content compared to only 14% within the English-speaking ecosystem 29.

Some contemporary institutional studies present a more nuanced view of the algorithm's trajectory. A 2026 report by the Institute for Strategic Dialogue (ISD) focused on young male users found that the algorithm primarily reinforces established user interests - such as gaming or traditional masculine hobbies - without necessarily pushing users toward extremism unprompted 31. The findings suggest that without active engagement signals from the user, the algorithm does not significantly alter the overall recommendation mix to introduce harmful ideological content 31. Nevertheless, the empirical data from crowdsourced audits underscores the ongoing tension between optimizing for Session Continuity and the inevitable surfacing of controversial media 5.

Regulatory Compliance and the Digital Services Act

In direct response to the societal implications of algorithmic content curation and the vulnerabilities exposed by independent audits, robust regulatory frameworks have been enacted. Operating under the European Union's Digital Services Act (DSA), YouTube is formally classified as a Very Large Online Platform (VLOP) 3632. This designation subjects the platform's algorithms to unprecedented legal scrutiny and mandatory transparency protocols.

The DSA mandates that all VLOPs submit granular, machine-readable transparency reports biannually, replacing the previously fragmented and incompatible corporate disclosures 3632. The first harmonized reporting cycle utilizing the new standardized CSV templates concluded in February 2026, covering enforcement data from the latter half of 2025 32. These regulations compel YouTube and its parent company to disclose exact quantitative figures regarding content moderation, the precision of automated systems, and the allocation of human resources across all EU member states 3233.

Algorithmic Enforcement and Moderation Disparities

The February 2026 DSA transparency disclosures revealed the near-total reliance of major platforms on algorithmic enforcement. Across the broader industry, manual human review cannot scale to the volume of content generated. Data from the reporting period indicated the removal of over 112 million pieces of policy-violating content by major VLOPs, with automated algorithmic systems responsible for 93.8% of these takedowns 34. The platforms reported a precision rate of 97.6% for these automated decisions 34.
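The scale implied by these percentages is easier to see as absolute numbers. The short calculation below uses only the figures quoted above; because the text says "over 112 million", the results are lower bounds. It also assumes "precision" means the share of automated removals that were correct, which is an interpretation rather than a stated definition.

```python
TOTAL_REMOVALS = 112_000_000   # "over 112 million" in the text, so a lower bound
AUTOMATED_PER_MILLE = 938      # 93.8% of takedowns were automated
PRECISION_PER_MILLE = 976      # 97.6% reported precision for automated decisions


def automated_removals(total: int, automated_per_mille: int) -> int:
    """Number of removals attributed to automated systems (integer arithmetic)."""
    return total * automated_per_mille // 1000


def implied_incorrect(automated: int, precision_per_mille: int) -> int:
    """Automated removals that would be wrong if precision applied uniformly."""
    return automated * (1000 - precision_per_mille) // 1000


automated = automated_removals(TOTAL_REMOVALS, AUTOMATED_PER_MILLE)
human = TOTAL_REMOVALS - automated
incorrect = implied_incorrect(automated, PRECISION_PER_MILLE)

print(f"automated: {automated:,}")            # automated: 105,056,000
print(f"human-reviewed: {human:,}")           # human-reviewed: 6,944,000
print(f"implied incorrect: {incorrect:,}")    # implied incorrect: 2,521,344
```

Even at 97.6% precision, the volume involved would imply roughly 2.5 million mistaken automated removals in a single reporting period.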

| DSA Mandatory Reporting Requirements for VLOPs (2026) | Description of Regulatory Mandate |
|---|---|
| Biannual Reporting Frequency | Comprehensive reports covering Jan-Jun due in August; Jul-Dec due in February. |
| Standardized Machine-Readable Format | Utilization of uniform CSV templates to ensure cross-platform data comparability. |
| Primary Risk Categorization | Moderation data sorted into 15 mandatory categories (e.g., cyber violence, protection of minors, scams). |
| Automation and Precision Metrics | Explicit disclosure of content removed via automated algorithms versus human review, alongside error rates. |
| Appeals Tracking and Resolution | Quantitative data on internal complaint mechanisms, user appeals, and original decision reversal rates. |
| Human Resource Allocation | Disclosure of in-house versus third-party content moderators, segmented by specific linguistic expertise. |

Core transparency mandates imposed on YouTube by the European Commission under the Digital Services Act, reflecting the shift toward regulated algorithmic accountability 323334.

A notable finding from the recent DSA analyses is the extraordinarily low rate of user appeals. Despite the massive volume of automated content takedowns, appeals account for well under one percent of moderation decisions, suggesting that users either lack awareness of the mechanisms or rarely contest the algorithm's enforcement actions 34.

Furthermore, the mandated reports corroborated the concerns raised by the Mozilla audits regarding uneven language coverage. The data explicitly demonstrated that human moderation resources and the most sophisticated NLP models remain heavily concentrated in major languages (English, French, German, and Spanish) 34. In smaller linguistic markets, moderators frequently handle multiple languages simultaneously, leaving these populations increasingly reliant on less-refined automated systems and more vulnerable to the spread of localized misinformation or harmful content 34.

Academic Convergence and Future Trajectories

The industrial practices utilized by YouTube closely mirror broader theoretical advancements documented in academic computer science. Analysis of the proceedings from the ACM Recommender Systems (RecSys) conferences in 2025 and 2026 highlights the ongoing convergence of industry-scale applications and foundational research 735.

Multi-Objective Optimization Models

A dominant theme in contemporary RecSys research is the challenge of multi-objective optimization 236. Historical models optimized for a single proxy, such as predicted rating or click-through rate. Modern theoretical frameworks focus on mathematically balancing competing objectives (such as immediate engagement, long-term retention, revenue generation, and content diversity) within a single, cohesive ranking engine 7.
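One simple way to picture this balancing act is weighted scalarization, in which per-objective predictions are blended into a single ranking score. The sketch below is illustrative only: the objective names, the weights, and the `rank_candidates` helper are assumptions made for the example, not a description of YouTube's production ranker.

```python
def rank_candidates(candidates, weights):
    """Sort candidates by a weighted sum of their per-objective scores.

    candidates: list of dicts with an "id" and a "scores" dict (values in [0, 1]).
    weights: dict mapping objective name -> non-negative weight (hypothetical values).
    """
    def blended(candidate):
        return sum(w * candidate["scores"][name] for name, w in weights.items())

    return sorted(candidates, key=blended, reverse=True)


candidates = [
    {"id": "clickbait", "scores": {"engagement": 0.9, "retention": 0.2, "diversity": 0.1}},
    {"id": "tutorial", "scores": {"engagement": 0.6, "retention": 0.8, "diversity": 0.5}},
]

single_proxy = {"engagement": 1.0, "retention": 0.0, "diversity": 0.0}
balanced = {"engagement": 0.4, "retention": 0.4, "diversity": 0.2}

print([c["id"] for c in rank_candidates(candidates, single_proxy)])
print([c["id"] for c in rank_candidates(candidates, balanced)])
```

With the single-proxy weights the high-click item ranks first; with the balanced weights the order flips. The choice of weights therefore carries much of the policy content of such a system.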

Industrial platforms like YouTube must reconcile these conflicting goals continuously, mitigating the algorithmic "filter bubble" effect while maintaining user interest 3637. Academic researchers are advancing solutions such as Diversity-Driven Random Walks (D-RDW), lightweight algorithms designed to inject customizable target distributions of diverse content into standard recommendation queues without sharply reducing engagement metrics 35. Furthermore, the integration of "engagement-aware personalized loss" functions allows platforms to align instantaneous intent signals (like a click) with long-term user happiness across multiple sessions 3839.
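The idea of steering a recommendation queue toward a target distribution can be illustrated with a much simpler technique than D-RDW. The sketch below is a greedy re-ranker, not a random walk and not the published algorithm: at each slot it picks the item whose category is furthest below its target share, breaking ties by relevance score. The category labels, target shares, and function name are hypothetical.

```python
from collections import Counter


def rerank_to_target(items, target, k):
    """Greedily build a slate of k items whose category mix tracks `target`.

    items: list of (item_id, category, relevance_score) tuples.
    target: dict mapping category -> desired share of the slate (sums to 1).
    """
    remaining = list(items)
    chosen, counts = [], Counter()
    while remaining and len(chosen) < k:
        slot = len(chosen) + 1  # slate size after this pick

        def priority(item):
            _, category, score = item
            # How far the category sits below its target share, then relevance.
            deficit = target.get(category, 0.0) - counts[category] / slot
            return (deficit, score)

        best = max(remaining, key=priority)
        chosen.append(best)
        counts[best[1]] += 1
        remaining.remove(best)
    return chosen


items = [
    ("a", "news", 0.9), ("b", "news", 0.8), ("c", "news", 0.7),
    ("d", "music", 0.4), ("e", "music", 0.3),
]
slate = rerank_to_target(items, {"news": 0.5, "music": 0.5}, k=4)
print([item_id for item_id, _, _ in slate])
```

Ranking purely by relevance would fill three of the four slots with news items; the re-ranker instead alternates categories to approach the 50/50 target while still preferring the highest-scoring item within each category.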

Large Language Models in Taste Profiling

The integration of Large Language Models (LLMs) into recommendation architectures represents a major paradigm shift, moving systems away from purely numerical matrix factorization toward semantic understanding 7. Academic papers from RecSys 2025 demonstrate that LLMs are increasingly utilized to generate natural language user taste profiles from historical consumption data 35.

This approach provides a highly interpretable alternative to opaque collaborative filtering representations, allowing the algorithm to understand subtle semantic nuances in user behavior 35. Furthermore, LLMs are being deployed to mitigate the "cold start" problem for new content and new users. By generating augmented user histories and synthesizing thematic clusters based on text embeddings (such as video transcripts or titles), algorithms can facilitate highly accurate initial audience matching before extensive behavioral data is collected 3539.
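A toy version of this cold-start matching can be sketched as follows. It assumes that each user already has a natural-language taste profile and that a new video has only text (a title or transcript). A bag-of-words vector stands in for a learned text embedding here, which a real system would replace with a proper embedding model; the profile texts and function names are invented for illustration.

```python
import math
from collections import Counter


def embed(text):
    """Stand-in for a learned text embedding: lowercase token counts."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a if token in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def match_audiences(video_text, taste_profiles, top_n=2):
    """Return up to top_n user ids whose taste profile best matches a new video."""
    video_vec = embed(video_text)
    scored = [(cosine(video_vec, embed(profile)), user)
              for user, profile in taste_profiles.items()]
    scored.sort(reverse=True)
    return [user for score, user in scored[:top_n] if score > 0]


profiles = {
    "u1": "enjoys sourdough baking and bread recipes",
    "u2": "watches chess openings and endgame analysis",
    "u3": "home baking tutorials",
}
print(match_audiences("sourdough bread baking for beginners", profiles))
```

No watch history for the new video is needed: the match is driven entirely by text similarity, which is the property that makes this family of approaches attractive before behavioral data exists.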

Conformal Risk Control Mechanisms

Addressing the systemic vulnerabilities exposed by regulatory bodies and independent audits, researchers are advancing techniques like Conformal Risk Control to systematically mitigate unwanted or harmful recommendations 37.

These mathematical frameworks provide formal statistical guarantees on the behavior of the algorithm. Rather than relying on heuristic moderation rules, Conformal Risk Control calibrates the system on held-out data so that the expected rate at which it surfaces policy-violating or misaligned content remains below a predefined threshold 38. The implementation of these advanced statistical controls represents a natural future trajectory for massive platforms, aligning technical development with the increasingly rigorous transparency and safety mandates of global digital services legislation 38.
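The calibration step at the heart of this approach can be sketched in a few lines. The example assumes a hypothetical setup: each calibration slate is a list of items carrying a classifier "harm score" and a ground-truth violation label, items scoring above a threshold t are filtered out, and the loss of a slate is the fraction of its items that are violating yet still shown. Following the standard conformal risk control recipe for a loss bounded by 1, the least strict threshold is chosen such that (total calibration loss + 1) / (n + 1) stays at or below the target level alpha. The guarantee holds in expectation and relies on calibration and future slates being exchangeable.

```python
def slate_loss(slate, threshold):
    """Fraction of a slate's items that are violating yet pass the filter."""
    shown_bad = sum(1 for score, is_violating in slate
                    if is_violating and score <= threshold)
    return shown_bad / len(slate)


def calibrate_threshold(calibration_slates, alpha, grid):
    """Pick the largest harm-score threshold whose adjusted risk is <= alpha.

    calibration_slates: list of slates, each a list of (harm_score, is_violating).
    grid: candidate thresholds. Returns None if no candidate is safe enough.
    """
    n = len(calibration_slates)
    best = None
    for threshold in sorted(grid):
        total_loss = sum(slate_loss(s, threshold) for s in calibration_slates)
        # Conformal adjustment: add the worst-case loss (1) of one unseen slate.
        if (total_loss + 1.0) / (n + 1) <= alpha:
            best = threshold
        else:
            break  # loss only grows as the threshold loosens
    return best


# 19 toy slates, each with one benign item and one violating item.
calibration = (
    [[(0.1, False), (0.9, True)]] * 17
    + [[(0.1, False), (0.6, True)]]
    + [[(0.1, False), (0.3, True)]]
)
print(calibrate_threshold(calibration, alpha=0.08, grid=[0.2, 0.4, 0.7, 0.95]))
```

Note that with only 19 calibration slates the adjustment term 1 / (n + 1) is already 0.05, so very small risk targets cannot be certified; tighter guarantees require more calibration data.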

Conclusion

In 2026, the YouTube algorithm is fundamentally characterized by its transition from an engagement-maximization engine to a highly nuanced satisfaction-prediction system. By elevating qualitative metrics such as the Watch Satisfaction Score, Quality Click Ratio, and Session Continuity Rate over traditional watch time, the platform seeks to cultivate sustainable viewing habits and foster long-term user trust.

The underlying technological infrastructure has shifted decisively toward multimodal, semantic understanding. Heavily driven by advanced models like Gemini Embedding 2, the system parses video content scene-by-scene, reducing reliance on easily manipulated text metadata. Simultaneously, the distinct computational logic governing the Browse feed, native Search, and the swipe-driven Shorts ecosystem requires content creators to adopt hybridized, format-aware strategies to maintain visibility. While the efficiency of these generative and retrieval systems is unprecedented, the absolute reliance on automated curation continues to attract significant regulatory scrutiny. This dynamic ensures that the platform will continue to evolve, balancing the pursuit of personalized discovery with the rigorous demands of algorithmic transparency and multi-objective safety controls.