Symbolic and connectionist AI debate and neuro-symbolic models

The pursuit of artificial general intelligence has historically been fractured by a profound methodological and philosophical schism regarding the fundamental architecture of machine cognition. This divide separates the connectionist paradigm, which models intelligence through the emergent, statistical properties of distributed neural networks, from the symbolic paradigm, which relies on the explicit, rule-based representation of human knowledge and formal mathematical logic. For over six decades, the pendulum of academic consensus has swung dramatically between these two approaches, driven by hardware limitations, theoretical breakthroughs, and shifting funding landscapes.

In recent years, the staggering empirical success of deep learning and large language models has heavily favored the connectionist approach, sidelining traditional symbolic logic. However, persistent limitations regarding out-of-distribution generalization, logical reliability, and architectural interpretability have catalyzed a resurgence of interest in explicit reasoning systems. Neuro-symbolic artificial intelligence has emerged as the proposed resolution to this dichotomy. By attempting to synthesize the high-dimensional pattern-recognition capabilities of neural networks with the rigorous, deductive reasoning of symbolic logic, neuro-symbolic systems aim to transcend the limitations of both parent paradigms. This report provides an exhaustive analysis of the connectionist versus symbolic debate, the mechanical integration of hybrid cognitive systems, and the regulatory, mathematical, and architectural forces driving the adoption of neuro-symbolic frameworks.

The Connectionist Paradigm

The current dominance of deep learning is philosophically and technically underpinned by an observation that computer scientist Richard Sutton formalized as "The Bitter Lesson" 12. Sutton articulated that the history of artificial intelligence research demonstrates a consistent, inescapable pattern: general methods that leverage vast computational power and scale arbitrarily - specifically, statistical search and deep learning - consistently outperform systems that depend on handcrafted human knowledge 345.

The Scale Hypothesis and Computation

The core argument of the connectionist triumph is that human intuition regarding how cognition functions is often a direct impediment to machine learning. Early artificial intelligence systems, particularly expert systems from the symbolic tradition, required researchers to explicitly encode rules, heuristics, and domain-specific semantic networks 6. While these systems performed exceptionally well in narrow, closed-loop environments like chess or specific medical diagnostics, they proved entirely brittle when deployed in the noisy, continuous, and unpredictable environments of the physical world.

Sutton's thesis posits that leveraging massive datasets to allow a computational model to discover its own internal representations is invariably more effective than forcing a machine to adopt human-defined concepts 467. The deep learning revolution, precipitated by advancements in backpropagation algorithms, the proliferation of specialized hardware like Graphics Processing Units, and the exponential growth of internet-scale datasets, validated this perspective. Neural networks, particularly those utilizing the Transformer architecture, treat intelligence as an emergent property of statistical pattern matching across vast, continuous parameter spaces 2.

The "Bitter Lesson" has subsequently evolved into the contemporary "Scale Hypothesis," which serves as the prevailing ethos driving the development of frontier artificial intelligence models. The scale hypothesis suggests that as models ingest exponentially more data and compute, novel emergent capabilities - including rudimentary forms of reasoning and logical deduction - naturally arise without the need for distinct, hard-coded logical subsystems 3579. Proponents of scaling argue that because large language models, operating purely as auto-regressive next-token predictors, can successfully pass professional examinations, generate complex executable code, and simulate logical deductions using homogeneous, undifferentiated artificial neurons, explicit symbolic programming is obsolete 110.

The Deficiencies of Pure Connectionism

Despite these successes, the assumption that scaling alone will yield artificial general intelligence remains heavily contested across the academic community. Critics argue that while the connectionist approach undeniably dominates visual perception and linguistic fluency, its application to complex, multi-step logical deduction operates as a sophisticated approximation rather than a genuine cognitive process 78910.

Pure neural networks operate as statistical approximators; they do not possess a grounded model of the physical world, nor do they natively understand objective truth 10. Because they map inputs to outputs entirely via continuous vector spaces, they are inherently prone to generating highly fluent but factually incorrect information, a phenomenon widely documented as hallucination 911. Furthermore, when confronted with out-of-distribution tasks that require strict compositional generalization - the ability to combine known concepts in novel ways under rigid constraints - pure neural networks frequently fail unpredictably, exposing the brittleness of intelligence derived solely from data interpolation 11512.

Cognitive Critiques of Connectionism

To articulate the precise architectural deficits of current neural networks, researchers frequently draw upon dual-process theories of human cognition. Cognitive scientists and artificial intelligence researchers, most prominently Gary Marcus and Yoshua Bengio, argue that pure connectionist architectures possess inherent architectural ceilings that prevent them from achieving robust generalization 12371017. These critics assert that deep learning models remain fundamentally constrained by the manifold of their training distributions and lack the structural mechanisms required for systematic reasoning 1813.

Dual-Process Theory in Machine Cognition

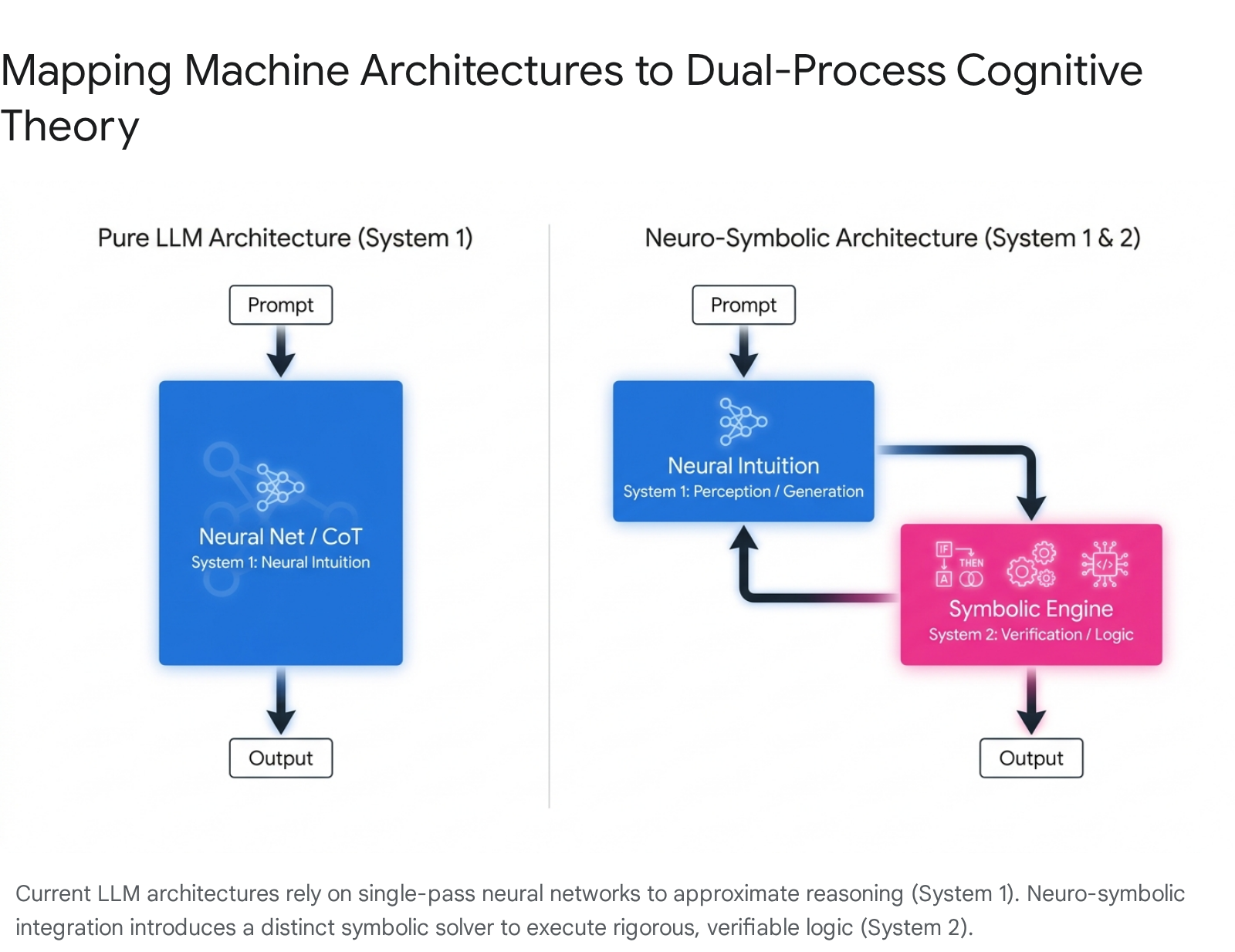

The critique of pure connectionism relies heavily on Daniel Kahneman's dual-process theory, which separates cognitive architecture into System 1 and System 2 subsystems 7914.

System 1 represents thinking that is fast, automatic, associative, and reactive. In the domain of machine learning, this corresponds precisely to the feed-forward inference pass of a deep neural network, which instantly maps a stimulus, such as an image matrix or a text prompt, to a highly probable response vector based on learned feature distributions 2714. System 2, conversely, represents thinking that is slower, effortful, conscious, and intensely logical. System 2 thinking involves explicit planning, hypothesis generation and testing, maintaining discrete variables in working memory, and executing precise algorithmic rules 3479.

Yoshua Bengio and his contemporaries argue that current deep learning models excel exclusively at System 1 tasks. They maintain that a true System 2 architecture requires an inference machine capable of generating solutions that align with a discrete, verifiable world model, rather than simply predicting the statistically likeliest continuation of a pattern 714.

| Cognitive Process | Human Cognition (Dual-Process Theory) | Artificial Intelligence Equivalent | Primary Limitations |
|---|---|---|---|
| System 1 | Fast, intuitive, associative, reactive, unconscious | Pure Neural Networks, Large Language Models (Feed-forward pass) | Prone to bias, hallucinations, inability to handle out-of-distribution logical constraints 79. |
| System 2 | Slow, deliberative, logical, algorithmic, verifiable | Symbolic Logic Engines, Theorem Provers, Knowledge Graphs | Brittle in noisy environments, requires explicit manual programming, struggles with raw perception 7814. |

Limitations of Chain of Thought Reasoning

Advocates for pure neural networks frequently point to prompt engineering techniques like Chain-of-Thought (CoT) as evidence that large language models can natively execute System 2 reasoning. The Chain-of-Thought methodology forces the model to generate intermediate deductive steps sequentially before arriving at a final answer, a process that has been shown to significantly improve model performance on arithmetic, common sense reasoning, and multi-step logical benchmarks 815.

However, critical analysis reveals a profound architectural distinction between Chain-of-Thought generation and true formal logic execution 9. Chain-of-Thought is implemented purely at the level of decoding; the artificial intelligence does not possess a genuine reflective state, a persistent memory of the problem's constraints, or an internal verification mechanism 9. The model's "reasoning steps" are simply a continuation of auto-regressive text generation, rendering the intermediate logic just as prone to statistical leaps and factual hallucinations as the final answer 89. Because there is no external ground truth or symbolic engine validating the logic at each individual step, Chain-of-Thought acts as a learned behavioral emulation of human reasoning rather than rigorous mathematical deduction 912.
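
The missing per-step validation can be illustrated with a small sketch. It assumes, purely for illustration, that each reasoning step has already been reduced to a line of the form `a op b = c`; the step format, the `check_steps` helper, and the sample chain are hypothetical and not taken from any cited system.

```python
import re
from fractions import Fraction

# Hypothetical step format: "<int> <op> <int> = <int>"
STEP = re.compile(r"^\s*(-?\d+)\s*([+\-*/])\s*(-?\d+)\s*=\s*(-?\d+)\s*$")

OPS = {
    "+": lambda a, b: a + b,
    "-": lambda a, b: a - b,
    "*": lambda a, b: a * b,
    "/": lambda a, b: a / b,
}


def check_steps(steps):
    """Return the index of the first malformed or incorrect step, or None."""
    for i, step in enumerate(steps):
        match = STEP.match(step)
        if match is None:
            return i  # the step is not in a checkable form
        a, op, b, claimed = match.groups()
        a, b, claimed = Fraction(a), Fraction(b), Fraction(claimed)
        if op == "/" and b == 0:
            return i
        if OPS[op](a, b) != claimed:
            return i  # fluent, but arithmetically wrong
    return None


# A chain whose last step contains a plausible-looking slip (83 - 9 is 74).
chain = ["17 * 4 = 68", "68 + 15 = 83", "83 - 9 = 73"]
first_bad = check_steps(chain)
```

A plain decoder would emit all three lines with equal fluency; only the external checker flags the third step. Real reasoning traces are rarely this easy to formalize, which is part of the difficulty the critique points to.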

Architectural Frameworks for Integration

Neuro-symbolic artificial intelligence seeks a synergistic synthesis of both computing paradigms. The objective is to utilize neural networks for their unparalleled ability to learn from raw, unstructured data streams and handle ambient uncertainty, while simultaneously leveraging symbolic components for structured reasoning, declarative knowledge representation, and system interpretability 6151222.

The field is generally categorized into distinct architectural paradigms based on the depth and mechanics of the integration between the neural components and the symbolic components.

| Integration Paradigm | Architectural Strategy | Primary Advantages | Primary Disadvantages |
|---|---|---|---|
| Hybrid Integration | Couples existing neural networks with external symbolic solvers (e.g., Python interpreters, Prolog, proof assistants). The systems communicate sequentially, typically via natural language or direct API calls. | Highly modular and model-agnostic. Allows the use of state-of-the-art frozen language models. Outputs from the symbolic engine are inherently interpretable 815. | Vulnerable to parsing and "translation errors" where the neural model fails to convert natural language into precise formal syntax. Generally incurs high computational latency 1516. |
| Integrative Systems | Modifies the core neural network architecture natively, embedding logical constraints, equations, or symbolic graphs directly into the continuous embedding space and the optimization gradient flow. | Offers low latency and is end-to-end differentiable. Unifies statistical learning and rule-based reasoning at a profound mathematical level 81617. | Extremely complex to engineer. Current implementations are often highly domain-specific and struggle to scale to the massive parameter counts of general-purpose models 816. |

The hybrid approach currently dominates practical applications because it bypasses the need to reinvent foundational architectures. It allows researchers to leverage the massive pre-trained knowledge bases of models like GPT-4 while delegating strict reasoning tasks, mathematics, and code execution to deterministic, rule-based execution environments 82218. However, integrative systems represent the vanguard of academic research, attempting to solve a profound mathematical problem: reconciling continuous neural gradients, derived through calculus, with discrete symbolic logic based on boolean algebra.
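
A minimal sketch of the hybrid pattern follows, under loudly stated assumptions: `fake_translate` is a hypothetical stand-in for a frozen language model (it returns canned strings, not real model output), and the "symbolic solver" is a small arithmetic evaluator built on Python's standard `ast` module. The point is the division of labor and the handling of translation errors, not the specific solver.

```python
import ast
import operator

# Whitelisted operators for the deterministic evaluator.
_ALLOWED = {
    ast.Add: operator.add, ast.Sub: operator.sub,
    ast.Mult: operator.mul, ast.Div: operator.truediv,
    ast.Pow: operator.pow, ast.USub: operator.neg,
}


def solve(expr):
    """Deterministically evaluate arithmetic; raise ValueError otherwise."""
    def ev(node):
        if (isinstance(node, ast.Constant)
                and isinstance(node.value, (int, float))
                and not isinstance(node.value, bool)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _ALLOWED:
            return _ALLOWED[type(node.op)](ev(node.left), ev(node.right))
        if isinstance(node, ast.UnaryOp) and type(node.op) in _ALLOWED:
            return _ALLOWED[type(node.op)](ev(node.operand))
        raise ValueError("unsupported syntax")

    try:
        tree = ast.parse(expr, mode="eval")
    except SyntaxError as exc:
        raise ValueError("translation error") from exc
    return ev(tree.body)


def fake_translate(question):
    """Hypothetical stand-in for a language model emitting formal syntax."""
    canned = {
        "What is twelve squared minus four?": "12**2 - 4",
        "What is half of ninety?": "90 / 2 +",  # deliberately malformed
    }
    return canned[question]


def answer(question):
    """Neural translation followed by symbolic execution."""
    try:
        return solve(fake_translate(question))
    except ValueError:
        return None  # the caller could re-prompt or fall back


good = answer("What is twelve squared minus four?")
bad = answer("What is half of ninety?")
```

The second question shows the failure mode listed in the table: the solver itself is reliable, but it can only execute what the neural component manages to express in valid formal syntax.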

Integrative Methodologies and Real Logic

To achieve end-to-end differentiability in a system that simultaneously reasons and learns, researchers have pioneered highly specialized computational formalisms. Logic Tensor Networks provide a robust framework that grounds first-order logic directly into neural computational graphs 171920. Traditional formal logic relies on strict binary truth values, which cannot be mathematically differentiated, rendering standard neural network backpropagation impossible. Logic Tensor Networks bypass this structural limitation by utilizing "Real Logic" - a many-valued fuzzy logic framework where truth values exist on a continuous mathematical interval of zero to one 172021.

Within a Logic Tensor Network, logical symbols are assigned specific geometric interpretations in a continuous multi-dimensional tensor space. Constants representing individuals or entities are grounded as learnable vectors or embeddings in real space. Predicates, representing properties or relations between entities, are grounded as neural networks performing regression or classification tasks, outputting a value between zero and one that is interpreted as a degree of truth rather than a calibrated probability 1920. Logical connectives are implemented with differentiable fuzzy logic operators: conjunctions use t-norms, disjunctions use the dual t-conorms, and implications use corresponding fuzzy implication operators.
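
To make the fuzzy connectives concrete, the sketch below uses one commonly cited operator family: the product t-norm, the probabilistic sum, the Reichenbach implication, and a generalized-mean aggregator for the universal quantifier. This particular choice, the function names, and the `Bird`/`Flies` truth degrees are assumptions for illustration; other operator families are possible.

```python
def t_and(a, b):
    """Product t-norm: fuzzy conjunction."""
    return a * b


def t_or(a, b):
    """Probabilistic sum: the t-conorm dual to the product t-norm."""
    return a + b - a * b


def t_not(a):
    """Standard fuzzy negation."""
    return 1.0 - a


def implies(a, b):
    """Reichenbach implication: 1 - a + a*b."""
    return 1.0 - a + a * b


def forall(values, p=2):
    """Smooth 'for all': one minus the generalized mean of the errors."""
    errors = [(1.0 - v) ** p for v in values]
    return 1.0 - (sum(errors) / len(errors)) ** (1.0 / p)


# Hypothetical truth degrees produced by two predicate networks.
bird = [0.9, 0.8, 0.1]
flies = [0.95, 0.2, 0.5]

# Degree to which "for all x: Bird(x) -> Flies(x)" holds on this batch.
rule_sat = forall([implies(b, f) for b, f in zip(bird, flies)])
```

On crisp inputs (exactly 0 or 1) each operator reduces to its Boolean counterpart, while on intermediate values it stays smooth, which is what allows gradients to pass through a logical formula.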

By converting explicit logical rules into differentiable objective functions, Logic Tensor Networks force the underlying neural network to optimize its internal weights not just to reduce standard classification loss, but to strictly satisfy high-level symbolic constraints supplied by human experts 19. This ensures that the neural network learns representations that natively obey the laws of physics, specific regulatory constraints, or known taxonomic relationships, without requiring the exhaustive scaling data prescribed by the connectionist paradigm.
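
The idea of training against a rule rather than a label can be sketched in a few lines. Everything here is a toy assumption: two learnable logits stand in for predicate networks, the knowledge base holds one fact `Smokes(a)` and one rule `Smokes(a) -> Cancer(a)`, and gradients are taken by finite differences instead of the automatic differentiation a real framework would use.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def satisfaction(params):
    """Mean truth degree of the knowledge base under the current parameters."""
    smokes = sigmoid(params["smokes_a"])
    cancer = sigmoid(params["cancer_a"])
    fact = smokes                          # Smokes(a)
    rule = 1.0 - smokes + smokes * cancer  # Smokes(a) -> Cancer(a), Reichenbach
    return (fact + rule) / 2.0


def loss(params):
    """Differentiable objective: one minus knowledge-base satisfaction."""
    return 1.0 - satisfaction(params)


def grad(params, eps=1e-5):
    """Central finite differences (a stand-in for backpropagation)."""
    g = {}
    for key in params:
        up, down = dict(params), dict(params)
        up[key] += eps
        down[key] -= eps
        g[key] = (loss(up) - loss(down)) / (2 * eps)
    return g


params = {"smokes_a": 0.0, "cancer_a": 0.0}  # both start at truth degree 0.5
for _ in range(300):
    g = grad(params)
    for key in params:
        params[key] -= 2.0 * g[key]  # plain gradient descent, learning rate 2.0

cancer_truth = sigmoid(params["cancer_a"])
```

`Cancer(a)` is never labeled in this sketch; its truth degree rises only because the asserted fact and the rule jointly require it, which is the mechanism by which expert-supplied constraints can substitute for some training data.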

Probabilistic Logic Programming

Another prominent integrative approach is DeepProbLog, which approaches neuro-symbolic synthesis from a probabilistic logic programming perspective 2230. Extending the existing ProbLog language, DeepProbLog incorporates "neural predicates" - probabilistic facts whose likelihoods are parameterized entirely by a neural network's continuous output 302324.

The primary mathematical breakthrough of DeepProbLog is its utilization of Algebraic Model Counting and Sentential Decision Diagrams 232526. Because exact probabilistic inference over complex logic programs is computationally intractable in general, DeepProbLog compiles logic programs into deterministic decomposable negation normal form (d-DNNF), of which Sentential Decision Diagrams are a special case. This compilation allows for highly efficient weighted model counting. By mapping the logical inference process directly to a gradient semiring, DeepProbLog calculates the exact mathematical gradient of a logical query's success probability with respect to the neural network's weights 2325. Consequently, standard gradient-descent optimizers can update the neural network's perceptual abilities based purely on the outcome of a complex, multi-step logical proof 2324.
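
The gradient semiring can be sketched with pairs of the form (probability, gradient), where addition sums both components and multiplication applies the product rule. The example below is a simplified assumption, not the DeepProbLog implementation: it enumerates the mutually exclusive proofs of a digit-addition query directly instead of compiling a circuit, uses a two-digit alphabet, and treats the classifier outputs as the differentiation variables.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Grad:
    """Element of the gradient semiring: a value and its partial derivatives."""
    value: float
    grad: tuple

    def __add__(self, other):
        return Grad(self.value + other.value,
                    tuple(a + b for a, b in zip(self.grad, other.grad)))

    def __mul__(self, other):
        # Product rule: d(uv) = u dv + v du
        return Grad(self.value * other.value,
                    tuple(self.value * b + other.value * a
                          for a, b in zip(self.grad, other.grad)))


def leaf(p, index, n):
    """A neural predicate output: derivative 1 w.r.t. itself, 0 elsewhere."""
    g = [0.0] * n
    g[index] = 1.0
    return Grad(p, tuple(g))


def prob_sum_equals(target, p_img1, p_img2):
    """P(digit(img1) + digit(img2) == target) with gradients attached."""
    n = len(p_img1) + len(p_img2)
    total = Grad(0.0, (0.0,) * n)
    for d1, p1 in enumerate(p_img1):
        for d2, p2 in enumerate(p_img2):
            if d1 + d2 == target:  # each (d1, d2) pair is a disjoint proof
                total = total + leaf(p1, d1, n) * leaf(p2, len(p_img1) + d2, n)
    return total


# Hypothetical classifier outputs over the digits {0, 1} for two images.
query = prob_sum_equals(1, [0.8, 0.2], [0.3, 0.7])
```

Here `query.value` is 0.8 * 0.7 + 0.2 * 0.3 = 0.62, and `query.grad` holds the derivative with respect to each of the four classifier outputs. In a full system those derivatives would be chained back into the network weights, and compilation to a circuit is what keeps proofs from being double counted when they overlap.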

The AlphaGeometry System

Perhaps the most prominent validation of the neuro-symbolic architecture is DeepMind's AlphaGeometry system, along with its successor, AlphaGeometry 2. The original system approached the performance of an average gold medalist on International Mathematical Olympiad geometry problems, and its successor is reported to have surpassed that level, in a domain notorious for requiring a seamless synthesis of creative spatial intuition and uncompromising logical rigor 2736372829.

The Dual-Engine Inference Loop

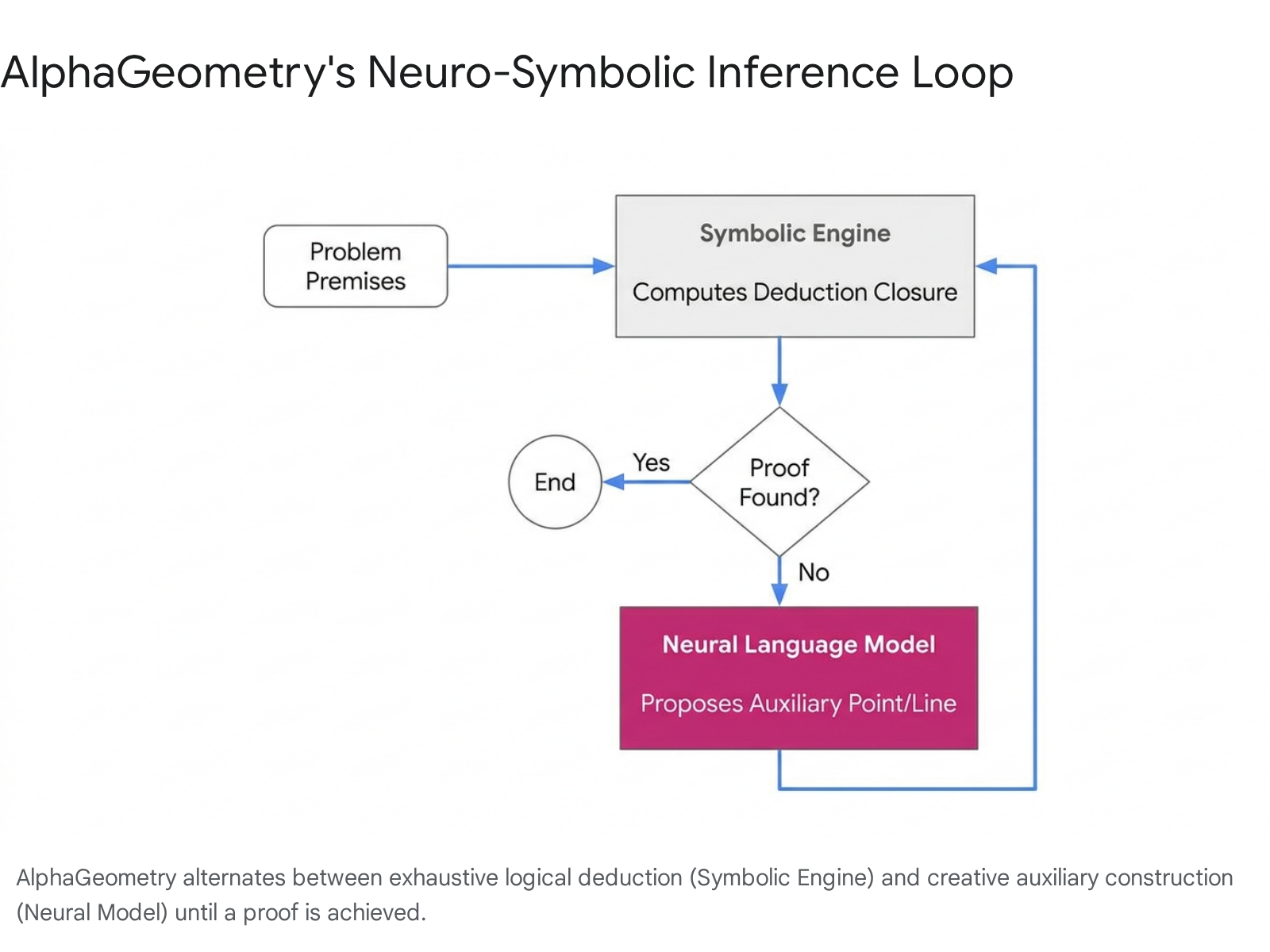

AlphaGeometry's architecture is a direct, operational realization of the System 1 and System 2 cognitive theory, utilizing a meticulously designed alternating loop between a generative neural language model and a deterministic symbolic deduction engine 372930.

The symbolic engine, termed Deductive Database and Arithmetic Reasoning (DDAR), represents the rigorous, slow-thinking component of the system 293132. It operates strictly on a fixed set of explicit geometric rules formulated mathematically as Horn clauses. Given a set of problem premises, the DDAR engine computes the complete "deduction closure" by exhaustively generating every single possible provable fact until it either solves the overarching problem or completely runs out of valid mathematical derivations 313233.
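
Computing a deduction closure over Horn clauses is, at its core, forward chaining to a fixed point. The sketch below is a propositional simplification: facts are plain strings, and the rule set is a hypothetical toy, whereas the actual engine works over geometric predicates with variables plus algebraic reasoning.

```python
def deduction_closure(premises, rules):
    """Apply Horn rules (body, head) until no new fact can be derived."""
    known = set(premises)
    changed = True
    while changed:
        changed = False
        for body, head in rules:
            if head not in known and body <= known:
                known.add(head)
                changed = True
    return known


# Hypothetical toy rules; each body is a set of facts that must all hold.
RULES = [
    (frozenset({"angle(ABC)=angle(ACB)", "angle(ABC)=60"}), "angle(ACB)=60"),
    (frozenset({"isosceles(A,B,C)"}), "angle(ABC)=angle(ACB)"),
    (frozenset({"AB=AC"}), "isosceles(A,B,C)"),
    (frozenset({"AB=BC", "AB=AC"}), "equilateral(A,B,C)"),
]

closure = deduction_closure({"AB=AC", "angle(ABC)=60"}, RULES)
```

The loop stops either when the goal has been derived or, as in the case of `equilateral(A,B,C)` here, when a needed premise is simply absent and nothing further follows. That second outcome is the point at which a purely symbolic engine gets stuck.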

When the symbolic engine exhausts its deterministic options without finding the requisite solution, the neural language model activates. Acting as the fast, intuitive cognitive engine, the language model analyzes the current state of the proof and proposes an "auxiliary construction" 2829. An auxiliary construction is a new geometric entity - such as an intersecting point, a bisecting line, or a tangent circle - that is not explicitly mentioned in the original problem statement but is intuitively essential to unlocking further logical proofs 2829.

This newly proposed construct is fed directly back into the symbolic engine, radically expanding the mathematical search space. The DDAR engine then restarts its exhaustive logical deduction based on this new entity. This generate-check-refine feedback loop continues iterating until the mathematical theorem is definitively proven 372930.
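
The alternating loop can be sketched as follows. The proposer here is a hypothetical stub that returns one canned construction; in the real system that role is played by a trained language model. Treating a construction as just another fact is also a simplification made for brevity.

```python
def closure(facts, rules):
    """Forward-chain Horn rules (body, head) to a fixed point."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for body, head in rules:
            if head not in known and body <= known:
                known.add(head)
                changed = True
    return known


def prove(premises, goal, rules, propose, max_rounds=4):
    """Alternate symbolic deduction with proposed auxiliary constructions."""
    added = []
    facts = closure(premises, rules)
    for _ in range(max_rounds):
        if goal in facts:
            return True, added
        construction = propose(facts, goal, added)
        if construction is None:  # the proposer has nothing left to offer
            return False, added
        added.append(construction)
        facts = closure(facts | {construction}, rules)
    return goal in facts, added


# Hypothetical toy rules for an isosceles triangle ABC with AB = AC.
RULES = [
    (frozenset({"AB=AC", "midpoint(M,B,C)"}), "congruent(ABM,ACM)"),
    (frozenset({"congruent(ABM,ACM)"}), "angle(B)=angle(C)"),
]


def stub_proposer(facts, goal, already_added):
    """Stand-in for the language model: suggests the midpoint of BC once."""
    candidate = "midpoint(M,B,C)"
    return None if candidate in already_added else candidate


proved, constructions = prove({"AB=AC"}, "angle(B)=angle(C)", RULES, stub_proposer)
```

Without the midpoint the closure of `{"AB=AC"}` is just the premise itself; one proposed construction is enough for the deterministic engine to finish the proof.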

Synthetic Data Generation and Traceback Algorithms

A historical bottleneck for applying symbolic artificial intelligence to advanced mathematics is the severe lack of human-annotated proof data required to effectively train deep neural models 2831. AlphaGeometry entirely bypassed this fundamental limitation by generating 100 million synthetic theorem-proof pairs strictly without any human demonstration or data contamination 3033.

The system achieved this milestone by algorithmically generating about one billion random geometric diagrams and utilizing the DDAR symbolic engine to deduce every statement derivable from its rules about those random shapes 3133. Once a deduction closure was established, researchers employed an optimized traceback algorithm 28313233. Starting from a final deduced fact in the diagram, the traceback algorithm works backward through the dependency subgraph to identify the minimal set of premises and auxiliary constructions necessary to prove that specific fact 283032.

This algorithm cleanly extracts flawless synthetic triples containing the premises, the conclusion, and the minimal proof path. This vast synthetic corpus allows the language model to be fine-tuned purely on verified, minimal logic paths 2830. By isolating the neural model's responsibility strictly to the creative generation of auxiliary constructs, AlphaGeometry emphatically demonstrates that neuro-symbolic systems can bypass the theoretical scaling limits of pure connectionist models when operating in formal, structured domains.
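
The traceback idea can be sketched by recording, for each derived fact, the rule body that first produced it, and then walking those records backward from a chosen conclusion. This is a simplified assumption: it returns the premises used by one recorded derivation, which need not be the globally minimal set the actual algorithm targets, and the facts and rules are hypothetical placeholders.

```python
def closure_with_parents(premises, rules):
    """Forward-chain, remembering which facts each derived fact came from."""
    parents = {p: () for p in premises}  # premises have no parents
    changed = True
    while changed:
        changed = False
        for body, head in rules:
            if head not in parents and all(b in parents for b in body):
                parents[head] = tuple(body)
                changed = True
    return parents


def traceback(goal, parents):
    """Walk the dependency graph backward from goal to its premises."""
    needed, steps, seen = set(), [], set()

    def visit(fact):
        if fact in seen:
            return
        seen.add(fact)
        if not parents[fact]:
            needed.add(fact)  # a leaf: one of the original premises
            return
        for parent in parents[fact]:
            visit(parent)
        steps.append((parents[fact], fact))  # emitted in dependency order

    visit(goal)
    return needed, steps


# Hypothetical random "diagram": four premises, two unrelated derivation chains.
RULES = [
    (("p1", "p2"), "a"),
    (("a",), "b"),
    (("p3",), "c"),
    (("p4", "c"), "d"),
]
parents = closure_with_parents({"p1", "p2", "p3", "p4"}, RULES)
needed_premises, proof = traceback("b", parents)
```

The result is a (premises, conclusion, proof) triple for the fact `b` that omits `p3`, `p4`, `c`, and `d`, mirroring how irrelevant parts of a random diagram are discarded before a training example is emitted.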

Regulatory Frameworks and Verifiable Systems

While the debate between connectionist and symbolic approaches is deeply rooted in cognitive philosophy and computational architecture, the momentum behind neuro-symbolic research is currently being heavily accelerated by international law and regulatory frameworks. As artificial intelligence systems are increasingly deployed in high-stakes environments - such as autonomous driving, algorithmic criminal justice, medical diagnostics, and financial risk assessment - the opaque nature of pure neural networks has transitioned from a theoretical limitation to a critical legal liability 3435.

The European Union Artificial Intelligence Act

The European Union's Artificial Intelligence Act, which formally entered into force in 2024, establishes strict, harmonized rules for the deployment of machine learning. Under the provisions of the Act, applications classified as "High-Risk AI Systems" are subject to intense regulatory scrutiny regarding data governance, risk management, and, crucially, human oversight and algorithmic transparency 364849.

A central tension in the current technological landscape arises from the legal mandate that artificial intelligence-supported decisions must allow human users to interpret outputs appropriately and actively prevent undue reliance on automated conclusions 4849. In contemporary legal scholarship, this requirement is frequently analyzed through the lens of the "right to explanation" - a regulatory demand stipulating that any automated decision significantly affecting fundamental human rights or physical safety must be inherently decipherable and auditable 3649.

Pure connectionist models struggle to satisfy this regulatory requirement on their own. Because a deep neural network distributes its learned knowledge across billions of continuous weight parameters, it is widely characterized as an impenetrable "black box" 3448. Even when enterprise developers employ post-hoc explainability tools, the underlying system cannot definitively prove the causal logic that led to a specific output; it can only provide statistical approximations indicating which input features were most influential 37.

Self-Attesting Intelligence Frameworks

To bridge the widening gap between neural capability and legal compliance, prominent research institutions like Fraunhofer IAIS and various European governance bodies are urgently prioritizing the development of explicitly Verifiable AI 343538394041. Within this context, neuro-symbolic integration is viewed not merely as a cognitive upgrade, but as an essential compliance mechanism.

By architecturally decoupling the statistical perception layer from the ultimate decision-making layer, neuro-symbolic systems offer a viable pathway to what researchers term "Self-Attesting Intelligence" 41. In these specialized frameworks, the symbolic engine acts as a "Declarative Knowledge Limiter" or a rigid constraints engine 41. Because the final automated decision is rendered through explicit formal logic - for example, automatically checking a neural network's output against a codified legal ontology or a strict safety rule - the system's architecture becomes intrinsic evidence of its own legal compliance 3741.
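
A constraints engine of this kind can be sketched as a gate that sits between a neural score and the final decision. The rule identifiers, the loan-style fields, and the threshold below are all hypothetical; the sketch only illustrates how a decision record can cite explicit rules instead of network weights.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class Rule:
    rule_id: str
    holds: Callable[[Dict], bool]


# Hypothetical codified constraints; real ones would come from a legal ontology.
RULES = [
    Rule("R1-applicant-is-adult", lambda case: case["age"] >= 18),
    Rule("R2-debt-ratio-at-most-0.4", lambda case: case["debt_ratio"] <= 0.4),
]


def decide(neural_score, case, threshold=0.5):
    """Let symbolic rules veto the neural recommendation and log why."""
    violated = [rule.rule_id for rule in RULES if not rule.holds(case)]
    if violated:
        decision = "reject"  # the rules override the neural score
    else:
        decision = "approve" if neural_score >= threshold else "reject"
    return {
        "decision": decision,
        "neural_score": neural_score,
        "violated_rules": violated,  # the auditable part of the record
    }


ok = decide(0.9, {"age": 30, "debt_ratio": 0.2})
vetoed = decide(0.9, {"age": 17, "debt_ratio": 0.6})
```

In the vetoed case an auditor can read the outcome directly from `violated_rules` without inspecting the model that produced the 0.9 score. Producing a cryptographic attestation of such a record is a separate step that this sketch does not attempt.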

This neuro-symbolic decoupling allows for the generation of unforgeable "Certificates of Compliance," mathematically proving via Zero-Knowledge Proofs that a system operated strictly within its mandated constraints, without requiring a third-party auditor to directly interpret the neural network's proprietary internal weights 3741. In sectors like industrial automation and healthcare, this enables artificial intelligence systems to be formally certified under existing safety integrity levels, a feat fundamentally impossible for isolated connectionist models 343755. This paradigm shift, often categorized as AI Integrity rather than mere AI Safety, mandates that the reasoning process itself - not just the final output - be protected from bias and maintained in a verifiable manner 56.

Embodied Artificial Intelligence

The realization that scaling laws may begin to yield diminishing returns for robust logical reasoning has shifted strategic priorities within global research hubs. Notably, major Chinese research institutions - including the Beijing Institute for General Artificial Intelligence, Tsinghua University, and the Chinese Academy of Sciences - are heavily investing in neuro-symbolic architectures, focusing their efforts specifically on embodied agents and physical robotics 2257425960.

Structural Execution in Robotics

Institutions advancing this research are explicitly pivoting away from the resource-intensive "massive data, single task" approach championed by standard language model developers in the West 42. Instead, their strategic focus centers on a "small data, big task" philosophy, emphasizing efficient cognitive architecture, the reinforcement of physical rules, and grounded common-sense reasoning 42.

A primary focus area is the development of Neuro-Symbolic Reinforcement Learning frameworks designed for embodied agents operating in complex physical spaces 2261. Traditional language-model-driven robotics suffer heavily from a "reality gap" - they probabilistically hallucinate physical affordances or fail to maintain strict spatial constraints, leading to erratic or unsafe robotic actions in unpredictable environments 22.

Recent architectural frameworks from the Chinese Academy of Sciences attempt to definitively resolve this reality gap by utilizing vision foundation models solely as perception modules to extract structural state representations, such as specific object coordinates 61. These mathematically structured states are subsequently fed directly into a symbolic policy engine. By distilling chaotic visual data into discrete symbolic states, the embodied agent can execute multi-step physical planning that guarantees logical consistency, physical safety, and operational transparency, doing so without requiring massive amounts of real-world trial-and-error training data 226143.
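
The perception-to-planning pipeline can be sketched with a toy block-stacking task. The detections dictionary stands in for the output of a vision model, and the slot discretization, state encoding, and breadth-first planner are illustrative assumptions, not the cited framework.

```python
from collections import deque


def symbolize(detections, slots=3, slot_width=1.0):
    """Map noisy (x, z) coordinates to discrete stacks, bottom block first."""
    stacks = [[] for _ in range(slots)]
    for name, (x, z) in detections.items():
        slot = min(slots - 1, max(0, int(x // slot_width)))
        stacks[slot].append((z, name))
    return tuple(tuple(name for _, name in sorted(s)) for s in stacks)


def plan(start, goal):
    """Breadth-first search over 'move the top block from slot i to slot j'."""
    frontier = deque([(start, [])])
    seen = {start}
    while frontier:
        state, path = frontier.popleft()
        if state == goal:
            return path
        for i, src in enumerate(state):
            if not src:
                continue  # nothing to pick up from an empty slot
            for j in range(len(state)):
                if i == j:
                    continue
                nxt = list(state)
                nxt[i] = src[:-1]
                nxt[j] = state[j] + (src[-1],)
                nxt = tuple(nxt)
                if nxt not in seen:
                    seen.add(nxt)
                    frontier.append((nxt, path + [(src[-1], i, j)]))
    return None  # goal unreachable


# Hypothetical detections: block name -> (x position, height above table).
detections = {"A": (0.48, 0.0), "B": (0.52, 0.1), "C": (1.6, 0.0)}
start = symbolize(detections)
moves = plan(start, ((), ("C",), ("B", "A")))
```

Every state the planner visits is a valid stacking by construction, so constraints such as "only the top block can be moved" hold throughout the plan instead of being learned statistically. Each step is also a discrete triple (block, from slot, to slot) that can be logged or explained directly.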

Furthermore, because the core behavioral policies are fundamentally symbolic, they can be easily translated by an overlying language model back into standard natural language. This allows the system to generate explicit, causally accurate explanations for every action a physical robot takes, drastically reducing the cognitive load required for human oversight and fulfilling the requirements for verifiable safety in physical deployment 6061.

Conclusion

The decades-long debate between symbolic and connectionist artificial intelligence is no longer characterized by a zero-sum struggle for absolute dominance, but by an urgent, pragmatic search for synthesis. Richard Sutton's "Bitter Lesson" argued persuasively, and subsequent results have largely borne out, that human-engineered heuristics cannot compete with massive computational scaling and general learning algorithms in tasks related to perception, natural language generation, and high-dimensional pattern recognition. However, the subsequent ideological assumption - that continuously scaling these connectionist models will automatically yield robust, interpretable, and flawless logical reasoning - has encountered severe empirical and regulatory friction.

Pure neural networks remain probabilistic engines inherently vulnerable to hallucinations, out-of-distribution failures, and catastrophic logical missteps. In specialized domains where the cost of failure is high, the scaling hypothesis simply offers insufficient safety guarantees. Neuro-symbolic artificial intelligence does not refute the connectionist victory; rather, it structurally contextualizes it. By mapping deep learning architectures to intuitive, rapid processing and delegating rigid algorithmic deduction to deterministic symbolic engines, researchers have forged a highly resilient resolution.

Technologies ranging from AlphaGeometry to differentiable logic tensor networks demonstrate that the profound mathematical barriers to unifying these disparate systems are surmountable. Furthermore, the accelerating regulatory pressures imposed by frameworks like the European Union Artificial Intelligence Act dictate that the future of enterprise and high-risk technology will not be measured solely by fluency or benchmark scores, but by strict verifiability, transparency, and logical integrity. The path to robust, general-purpose, and legally compliant artificial intelligence requires the extraordinary pattern-recognition engine of the neural network to be fundamentally anchored by the rigorous, undeniable structure of the mathematical symbol.