State of search engine optimization in 2026

Macroeconomic Search Market Dynamics

The search engine optimization (SEO) industry in 2026 presents a paradox: perceived technological obsolescence on one hand and measurable, record-breaking financial growth on the other. Despite widespread technological disruption, the global SEO services market by some estimates crossed the $100 billion threshold for the first time in 2026; estimates of its total valuation range from $83.98 billion to $108.28 billion, depending on the inclusion of internal enterprise tooling and adjacent consulting revenues 1. The broader market, which encompasses diagnostic and analytics software platforms such as Semrush, Ahrefs, and Moz, continues to expand at a robust compound annual growth rate (CAGR) of approximately 9.6%, with industry projections suggesting a total valuation of $122 billion by 2028 12.
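As a rough sanity check on these figures, the compounding arithmetic can be sketched in a few lines of Python. This is only an illustration: it assumes the 9.6% CAGR applies to the 2026 valuation range quoted above and that the projection window is two years (2026 to 2028). Neither assumption is stated explicitly in the underlying estimates, and the function names are hypothetical.

```python
def project_value(base_billion: float, cagr: float, years: int) -> float:
    """Compound a starting market value forward at a constant annual growth rate."""
    return base_billion * (1.0 + cagr) ** years


def implied_base(target_billion: float, cagr: float, years: int) -> float:
    """Back out the starting value that compounds to a given target."""
    return target_billion / (1.0 + cagr) ** years


CAGR = 0.096   # growth rate quoted in the text
YEARS = 2      # assumed window: 2026 -> 2028

low_2028 = project_value(83.98, CAGR, YEARS)     # low 2026 estimate
high_2028 = project_value(108.28, CAGR, YEARS)   # high 2026 estimate
base_for_122 = implied_base(122.0, CAGR, YEARS)  # 2026 base implied by the 2028 projection

print(f"2028 range from 2026 estimates: ${low_2028:.1f}B to ${high_2028:.1f}B")
print(f"2026 base implied by $122B in 2028: ${base_for_122:.1f}B")
```

Under these assumptions, the $122 billion projection corresponds to a 2026 base of a little over $100 billion, which sits inside the quoted valuation range.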

This sustained financial expansion occurs alongside significant operational volatility within service delivery models. SEO agencies are experiencing record client churn rates nearing 38% annually, coupled with a 37% year-over-year drop in traditional SEO job listings observed in early 2024, followed by a frantic restructuring of agency service models to prioritize artificial intelligence integration 1. The discrepancy between traditional job market contractions and overall revenue growth indicates that the discipline is not dying, but rather undergoing a rigorous structural consolidation. Investment capital continues to flow into search optimization because it remains highly cost-effective, delivering a return on investment (ROI) that averages 5.3 times higher than paid search acquisition 2.

The fundamental catalyst for this industry-wide restructuring is the transition from decentralized hyperlink browsing to centralized, AI-synthesized answer engines. Technology research firm Gartner previously predicted that by 2026, traditional search engine volume would decline by 25% as users migrated toward conversational virtual agents and large language models (LLMs) 235. Current market data partially validates this trajectory, though the reality of user behavior is more hybridized. While Google retains an overwhelming 89.87% to 90.02% share of the traditional global search market, the conversational search market has fractured dramatically 45. ChatGPT dominates the dedicated artificial intelligence chatbot sector with 60.7% of all AI search traffic (and 68% of pure chatbot traffic), followed by Google Gemini at 15.0% and Microsoft Copilot at 13.2% 5.

Because modern users frequently alternate between traditional keyword platforms and conversational interfaces during a single research session, overall digital discovery volume has actually increased 5. However, the distribution of outbound clicks has changed permanently. Nearly 60% of searches conducted on traditional search engines now yield zero clicks, as users consume the necessary information directly from the search engine results page (SERP) without navigating to external publisher websites 26.

Impact of Generative Artificial Intelligence on Click-Through Rates

The most disruptive structural change to the digital discovery landscape is the widespread global deployment of Google's AI Overviews (AIOs). Functioning as a retrieval-augmented generation (RAG) system layered atop the traditional search index, AIOs provide multi-source, conversational summaries directly above organic search results. By early 2026, these generative summaries reached over 2 billion monthly users globally 26.

The deployment of AIOs is highly calibrated to user intent rather than uniformly applied. According to comprehensive data from late 2025 and early 2026, AI Overviews appear on approximately 30% to 50% of all desktop search queries in the United States 97. Mobile prevalence experienced a staggering year-over-year increase of nearly 475%, consistent with mobile-first consumption habits, while United Kingdom desktop presence surged by 536% over the same period 7.

The likelihood of an AI Overview appearing correlates strongly with the informational density required to satisfy the query. Informational searches designed to gather knowledge trigger AI Overviews at rates between 80% and 88%, depending on the specific industry vertical 211. Question-format queries see an 85.9% trigger rate, and comparative queries trigger AIOs 95.4% of the time 89. Conversely, commercial queries indicating intent to research a purchase trigger AIOs in only about 8% to 8.69% of cases, while high-intent transactional and navigational searches trigger AIOs rarely, typically between 1.43% and 5% of the time 289.
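To make the intent breakdown concrete, the sketch below estimates what share of a keyword portfolio is likely to show an AI Overview. The trigger-rate ranges are the ones quoted above (with transactional and navigational queries grouped together); the portfolio mix and all names are hypothetical, and the result is a back-of-the-envelope estimate, not a forecast.

```python
# (low, high) AI Overview trigger rates by query intent, taken from the text.
TRIGGER_RATES = {
    "informational": (0.80, 0.88),
    "commercial": (0.08, 0.0869),
    "transactional": (0.0143, 0.05),  # transactional and navigational combined
}


def aio_exposure(keyword_counts: dict[str, int]) -> tuple[float, float]:
    """Return the (low, high) share of keywords expected to show an AI Overview."""
    total = sum(keyword_counts.values())
    if total == 0:
        raise ValueError("keyword_counts must contain at least one keyword")
    low = sum(n * TRIGGER_RATES[intent][0] for intent, n in keyword_counts.items())
    high = sum(n * TRIGGER_RATES[intent][1] for intent, n in keyword_counts.items())
    return low / total, high / total


# Hypothetical portfolio of 1,000 tracked keywords.
portfolio = {"informational": 600, "commercial": 300, "transactional": 100}
low, high = aio_exposure(portfolio)
print(f"Expected AIO exposure: {low:.1%} to {high:.1%}")
```

A portfolio weighted toward informational content is therefore far more exposed to AIO-driven click loss than one weighted toward transactional pages.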

Interestingly, algorithmic optimization has resulted in shorter generative responses. Data from late 2025 revealed a 70% reduction in AIO character count, dropping from an average of 5,300 characters to roughly 1,600 characters 710. This suggests search algorithms are refining their synthesis to prevent excessive cognitive load, prioritizing concise answer formats that further disincentivize scrolling to traditional organic links.

Click-Through Rate Compression and Recovery Dynamics

The presence of generative summaries has fundamentally altered the organic click-through rate (CTR) curve. Historically, achieving Position 1 in organic search guaranteed a CTR between 28% and 40%, with the top three positions commanding nearly 69% of all clicks 11. By early 2026, Position 1 organic CTR for traditional results dropped by roughly 32% year-over-year, hovering near 12.4% 1011.

When an AI Overview is present, the CTR compression is exceptionally severe. Wide-scale industry studies tracking millions of queries reported that organic CTR plummeted by 61% for queries where AIOs appeared; the averages quoted, a fall from 1.76% to 0.61%, correspond to a relative decline closer to 65% 161218. Panel-based analysis corroborates this, showing that only 8% of users click a traditional link when an AI summary is present, compared to 15% on non-summarized SERPs, and roughly 26% of searches with AI summaries end without any further user action whatsoever 68.

However, the collapse in CTR shows signs of algorithmic stabilization. Data from the first quarter of 2026 indicates a slight rebound. Organic CTR on AIO-present queries recovered from a floor of 1.3% in December 2025 to 2.4% by February 2026 89. Furthermore, organic CTR for queries without an AI Overview increased from 2.8% to 3.8% in the same period, suggesting that when search engines refrain from synthesizing an answer, user motivation to click external links remains robust 89.
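Because CTR figures are themselves percentages, it helps to separate changes in percentage points from relative changes. The following sketch applies both calculations to the recovery figures quoted above; the helper names are illustrative only.

```python
def relative_change(before: float, after: float) -> float:
    """Relative change from `before` to `after`, as a fraction of `before`."""
    if before == 0:
        raise ValueError("before must be non-zero")
    return (after - before) / before


def point_change(before: float, after: float) -> float:
    """Absolute change, in percentage points when inputs are percentages."""
    return after - before


# CTR values (in percent) for December 2025 and February 2026, from the text.
scenarios = {
    "AIO present": (1.3, 2.4),
    "No AIO": (2.8, 3.8),
}

for label, (before, after) in scenarios.items():
    pts = point_change(before, after)
    rel = relative_change(before, after)
    print(f"{label}: {pts:+.1f} pts, {rel:+.1%} relative")
```

Both segments gained about one percentage point, but the relative recovery is much larger on AIO-present queries because they started from a lower base.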

The Economics of Generative Citation

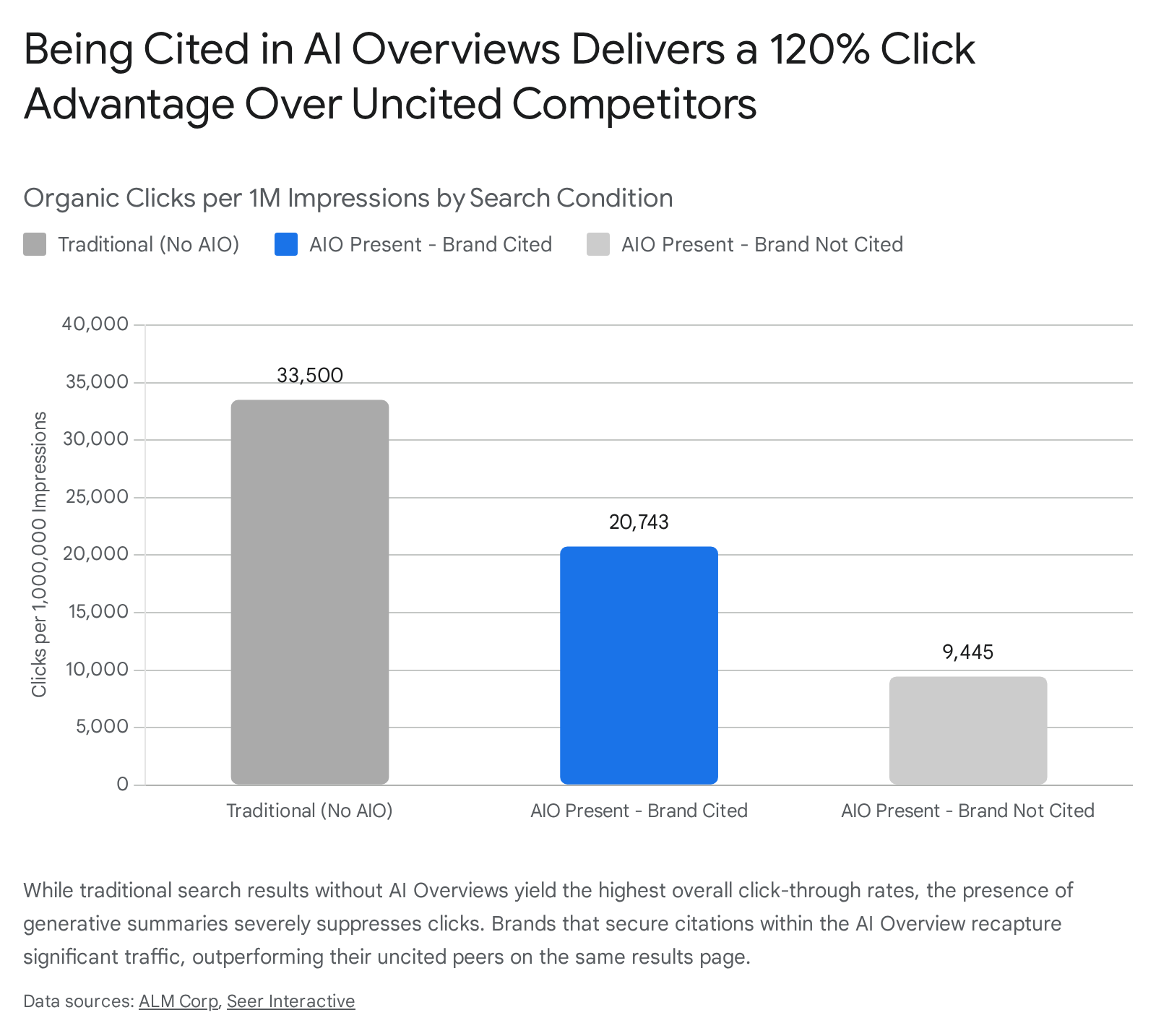

In the 2026 search landscape, visibility within the generative summary itself has become the primary commercial battleground. Over 99% of AI Overviews draw their sources from the top 10 traditional web results, indicating that traditional ranking remains the prerequisite for generative inclusion 7. When a brand is cited within an AI Overview, the economic and traffic outcomes differ vastly from traditional ranking metrics.

While total traffic volume is lower than historical benchmarks, being cited as a source inside an AIO increases organic clicks by 120% per impression compared to ranking organically on the same page but remaining uncited 89.

Furthermore, visitors arriving via AI referrals exhibit substantially higher intent and engagement. Retail site analytics show that AI-referred visitors boast a 27% lower bounce rate, spend 38% more time on-page, and view 12% more pages than traditional organic traffic 619. From a conversion perspective, AI search visitors convert at rates up to 4.4 times higher than standard organic traffic, although the gap narrows in specific sectors: in B2B SaaS, conversion rates from AI chatbots (6.69%) are virtually identical to those of highly qualified traditional organic search traffic (6.71%) 6181920.

Shifting Click Curves and Ranking Positions

The structural introduction of AIOs has warped the traditional distribution of clicks across the search engine results page. When an AI-generated answer appears at the top of the viewport, the traditional Position 1 organic result is pushed below the fold.

Consequently, a counterintuitive phenomenon has emerged in click distribution metrics. While Position 1 delivers 32% fewer clicks than it did previously, positions 6 through 10 are experiencing a 30% increase in click volume 11. As users scroll past the AI Overview to verify or expand upon the synthesized information, they are interacting with lower-ranking results at higher rates than historical benchmarks predicted 11.

The most severe visibility losses occur when multiple rich search features overlap. Keywords that trigger both an AI Overview and a traditional Featured Snippet experience the largest drop in organic CTR, with an average decrease of 37.04% 13. The combined presence of these two extraction features crowds out the entire page above the fold, leaving virtually no visual space for standard organic results to capture user attention 13.

| Search Feature Configuration | Impact on Organic Click-Through Rate | Primary User Behavior Observation |
|---|---|---|
| Traditional SERP (No AIO) | Baseline CTR (e.g., 3.82% average) | Users click top 3 organic positions reliably. |
| AIO Present (Brand Cited) | Moderate suppression (retains 61% of baseline) | Users click the citation link within the generative summary. |
| AIO Present (Brand Uncited) | Severe suppression (retains 28% of baseline) | Users consume the summary and abandon the search or scroll past Position 1. |
| AIO + Featured Snippet Overlap | Maximum suppression (37.04% average CTR drop) | Above-the-fold space is entirely monopolized by zero-click extraction features. |

(Data aggregated from performance studies detailing SERP feature overlap and CTR compression in early 2026 91113.)
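The cited and uncited rows of the table above can be turned into a rough click estimate. The sketch below is illustrative: it takes the example baseline and the retention shares from the table, while the impression count is hypothetical. It shows how the two retention figures relate to the roughly 120% per-impression uplift reported for cited brands.

```python
BASELINE_CTR = 0.0382      # example baseline CTR from the table
RETAINED_CITED = 0.61      # share of baseline CTR retained when cited in the AIO
RETAINED_UNCITED = 0.28    # share of baseline CTR retained when uncited


def expected_clicks(impressions: int, retained: float,
                    baseline: float = BASELINE_CTR) -> float:
    """Expected organic clicks given impressions and the share of baseline CTR retained."""
    return impressions * baseline * retained


impressions = 100_000  # hypothetical monthly impressions on AIO-present queries
cited = expected_clicks(impressions, RETAINED_CITED)
uncited = expected_clicks(impressions, RETAINED_UNCITED)
uplift = cited / uncited - 1.0  # relative advantage of being cited

print(f"Cited: {cited:.0f} clicks, uncited: {uncited:.0f} clicks")
print(f"Citation uplift: {uplift:.0%}")
```

The ratio of the two retention shares implies an uplift of about 118%, broadly in line with the 120% figure, which suggests the table and the per-impression statistic describe the same effect.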

Transition to Generative Engine Optimization

Because generative AI models summarize information rather than providing a directory of hyperlinks, the operational practice of SEO has evolved from keyword density manipulation to Generative Engine Optimization (GEO) and Answer Engine Optimization (AEO) 141524.

Traditional SEO relied heavily on ranking a specific URL for a target exact-match keyword. GEO, conversely, focuses on making a brand's data highly extractable, fact-dense, and semantically structured so that machine learning models prioritize it as a definitive source 1425. AI models ingest and process data in discrete algorithmic chunks; they favor content that provides direct, concise answers immediately, typically within the opening paragraphs. Research indicates that fact-dense, structured opening paragraphs are cited up to 67% more frequently by generative models than answers buried deep within unstructured, long-form prose 14.

The Rising Importance of Entity Authority

Search engines now evaluate brands as digital entities rather than mere collections of domain-level keywords. Experience, Expertise, Authoritativeness, and Trustworthiness (E-E-A-T) remain the core quality framework, but the vectors for proving these traits have shifted dramatically 2627.

AI systems require external validation to confirm entity authority. Therefore, unlinked brand mentions, discussions on verified forums, and citations in trusted trade publications function as the modern equivalent of backlinks 1416. If a large language model encounters a brand consistently associated with a specific topic across multiple authoritative off-site ecosystems, it assigns a high probability weight to that brand's expertise, dramatically increasing the likelihood of citation in an AI Overview. Furthermore, branded search volume has quietly become one of the strongest protective signals against AI invisibility; when human users actively search for a brand by name, AI models interpret this as definitive proof of market relevance and credibility 26.

To support this entity recognition, semantic clarity is prioritized over traditional link metrics. Content must explicitly identify the entity (e.g., specific people, places, and attributes) and update frequently, as AI systems exhibit a strong recency bias. Content older than three months sees a sharp drop in AI citations, necessitating quarterly content refreshes for critical pages 16.
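One common way to state an entity explicitly is schema.org structured data embedded as JSON-LD. The sketch below is a minimal illustration rather than a prescribed method: the brand, URLs, and function names are hypothetical, and the 90-day threshold is only an approximation of the three-month window mentioned above.

```python
import json
from datetime import date


def organization_jsonld(name: str, url: str, same_as: list[str]) -> dict:
    """Describe a brand as a schema.org Organization with off-site profile links."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": same_as,  # profiles that help disambiguate the entity
    }


def article_jsonld(headline: str, publisher: dict, modified: date) -> dict:
    """Describe a page as a schema.org Article with an explicit modification date."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "dateModified": modified.isoformat(),
        "publisher": {"@type": "Organization",
                      "name": publisher["name"], "url": publisher["url"]},
    }


def needs_refresh(modified: date, today: date, max_age_days: int = 90) -> bool:
    """Flag content older than the assumed ~three-month freshness window."""
    return (today - modified).days > max_age_days


# Hypothetical brand and page.
org = organization_jsonld("Example Co", "https://example.com",
                          ["https://www.linkedin.com/company/example-co"])
page = article_jsonld("Pricing guide", org, date(2026, 1, 1))
print(json.dumps(page, indent=2))
print(needs_refresh(date(2026, 1, 1), today=date(2026, 5, 1)))
```

The serialized object would typically be placed in a `<script type="application/ld+json">` element in the page's HTML, and the freshness check could feed a quarterly refresh queue.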

Technical Infrastructure for Answer Engines

If content strategy and entity authority form the conceptual layer of modern SEO, technical SEO forms the absolute precondition for participation in the generative landscape. AI bots are often technically inferior to traditional search crawlers like Googlebot 17. While Googlebot operates a robust Chromium-based renderer capable of executing complex JavaScript, intelligently retrying failed fetches, and following redirects, most AI training and retrieval crawlers operate with strict latency limits and rudimentary HTML parsing capabilities 17.

If a website relies heavily on client-side rendering (CSR), dynamic JavaScript hydration, or places core content behind interactive accordions and user-triggered events, that content is functionally invisible to large language models 161718. Technical SEO in 2026 mandates Server-Side Rendering (SSR), Incremental Static Regeneration (ISR), or pre-rendering services to ensure AI crawlers receive fully structured HTML upon the initial server request 1718. Additionally, server response time remains critical alongside Core Web Vitals such as Interaction to Next Paint (INP): an excessive Time to First Byte (over 500 ms) will frequently cause AI crawlers to abandon the fetch request entirely, resulting in immediate exclusion from the AI Overview 1718.
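A simple way to approximate what a non-rendering crawler sees is to inspect the raw HTML returned by the server and ignore anything inside script or style elements. The sketch below uses only the Python standard library; the class name, function name, and sample pages are hypothetical, and real crawlers may parse HTML differently.

```python
from html.parser import HTMLParser


class VisibleTextExtractor(HTMLParser):
    """Collect text that appears outside <script> and <style> elements."""

    SKIPPED = {"script", "style"}

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def missing_phrases(raw_html: str, phrases: list[str]) -> list[str]:
    """Return the phrases that are absent from the HTML's non-script text."""
    parser = VisibleTextExtractor()
    parser.feed(raw_html)
    parser.close()
    text = " ".join(parser.chunks).lower()
    return [p for p in phrases if p.lower() not in text]


# Hypothetical server responses for the same page.
ssr_html = "<html><body><h1>Pricing</h1><p>Plans start at $10 per month.</p></body></html>"
csr_html = ('<html><body><div id="root"></div>'
            '<script>render("Plans start at $10 per month.")</script></body></html>')

phrases = ["Plans start at $10"]
print(missing_phrases(ssr_html, phrases))  # server-rendered page
print(missing_phrases(csr_html, phrases))  # client-rendered shell
```

In the client-rendered case the key phrase exists only inside a script, so a crawler that does not execute JavaScript would not find it in the page text.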

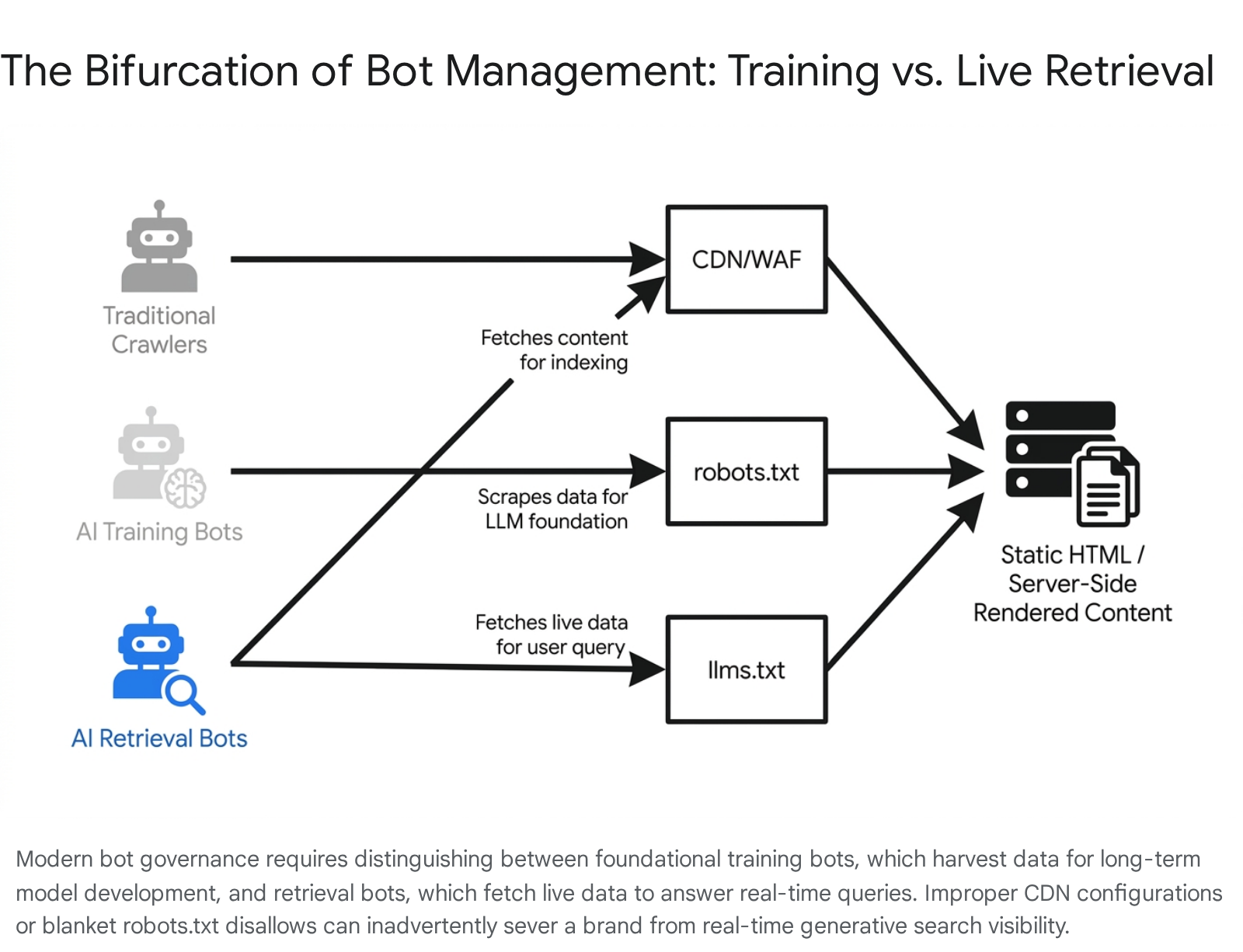

The Bifurcation of Bot Management

In 2026, network administration and SEO converge at the server level, requiring brands to manage a bifurcated bot landscape via the robots.txt file and CDN configurations:

| Crawler Category | Primary Function | Business Impact of Blocking | Examples |
|---|---|---|---|
| Traditional Bots | Indexing for classic search engine results. | Complete removal from standard search and AI Overviews. | Googlebot, Bingbot |
| Training Bots | Scraping data to build foundational LLMs. | Prevents proprietary data commoditization, but limits long-term semantic footprint. | GPTBot, ClaudeBot, CCBot |
| Retrieval Bots | Fetching live data for real-time RAG answers. | Immediate removal from live generative AI answers and citations. | OAI-SearchBot, PerplexityBot |

(Taxonomy of automated crawlers impacting search visibility in 2026 1718.)

Many organizations inadvertently sabotage their generative search visibility through overly aggressive Content Delivery Network (CDN) configurations. Default security settings in platforms like Cloudflare or AWS WAF frequently issue 403 Forbidden errors to recognized AI user agents, rendering the site silently invisible to LLMs despite excellent on-page optimization efforts 1718. Aligning marketing objectives with cybersecurity protocols is now a foundational requirement for search visibility.
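The bifurcated policy described in this section can be prototyped and checked before deployment with Python's standard `urllib.robotparser` module. The robots.txt below is one possible policy (block training bots, allow retrieval and traditional bots), shown purely as an illustration rather than a recommendation; the domain is a placeholder.

```python
from urllib.robotparser import RobotFileParser

# Illustrative policy: training crawlers blocked, retrieval and classic crawlers allowed.
ROBOTS_TXT = """\
User-agent: GPTBot
User-agent: ClaudeBot
User-agent: CCBot
Disallow: /

User-agent: OAI-SearchBot
User-agent: PerplexityBot
Allow: /

User-agent: *
Allow: /
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt content from a string instead of fetching it over HTTP."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_parser(ROBOTS_TXT)
url = "https://example.com/pricing"  # placeholder URL

for agent in ["Googlebot", "GPTBot", "ClaudeBot", "OAI-SearchBot", "PerplexityBot"]:
    verdict = "allowed" if parser.can_fetch(agent, url) else "blocked"
    print(f"{agent}: {verdict}")
```

Note that this only validates the robots.txt rules themselves. A CDN or WAF rule that returns 403 Forbidden to these user agents would still block them regardless of what robots.txt permits, so server logs or a test request with the relevant user agent remain necessary.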

Implementation of the Language Model Specification File

To facilitate efficient data extraction by LLMs constrained by computational token limits, the SEO industry has seen the introduction of the llms.txt standard. Proposed in September 2024 by Jeremy Howard at Answer.AI, llms.txt is a plain-text markdown file hosted at the root directory of a domain 1920.

Unlike the legacy robots.txt file, which operates as an exclusion protocol dictating what crawlers may not access, llms.txt serves a curation and recommendation function. It provides LLMs with a clean, token-efficient map of a website's most authoritative content, free of navigation elements, advertisements, JavaScript, and superfluous chrome 192021. The official specification utilizes standard markdown formatting, incorporating an H1 for the site name, optional blockquote summaries, and H2 sections containing bulleted links to priority content 20. By offering an immediate directory of definitive answers, websites reduce the computational friction for AI agents, functionally telling the model, "if you want a definitive answer about this topic, fetch this exact page" 19.
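The structure described above (an H1, an optional blockquote summary, and H2 sections of bulleted links) is simple enough to generate programmatically. The following sketch is a minimal, hypothetical generator; the site name, URLs, and function signature are assumptions made for illustration and are not part of the specification.

```python
def build_llms_txt(site_name: str,
                   sections: dict[str, list[tuple[str, str, str]]],
                   summary: str = "") -> str:
    """Render an llms.txt document: H1 title, optional summary, H2 link sections.

    Each link is a (title, url, note) tuple; an empty note is omitted.
    """
    lines = [f"# {site_name}", ""]
    if summary:
        lines += [f"> {summary}", ""]
    for heading, links in sections.items():
        lines += [f"## {heading}", ""]
        for title, url, note in links:
            suffix = f": {note}" if note else ""
            lines.append(f"- [{title}]({url}){suffix}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Hypothetical site content.
llms_txt = build_llms_txt(
    "Example Co",
    {
        "Docs": [
            ("Pricing", "https://example.com/pricing.md", "Plan tiers and limits"),
            ("API reference", "https://example.com/api.md", ""),
        ],
    },
    summary="Example Co provides invoicing software for small businesses.",
)
print(llms_txt)
```

The resulting text would be served at the domain root as `/llms.txt`, and the link list can be regenerated whenever priority pages change.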

Despite significant industry discourse surrounding its potential as the missing link between generative AI and traditional websites, the adoption of llms.txt remains in the early stages. Mid-2026 data analyzing approximately 300,000 domains reveals an overall adoption rate of just 10.13%, largely concentrated among AI-native SaaS companies, technical documentation portals, and highly specialized B2B enterprises 2021. Medium- and low-traffic sites paradoxically show higher adoption rates than legacy authoritative domains, which have been slower to allocate development resources to an unratified standard 21. Because the proposal has not been ratified by a standards body such as the IETF and no AI provider is obliged to honor it, its primary utility currently lies in establishing a competitive, token-efficient advantage for retrieval bots before widespread saturation occurs 1934.

Evolution of Business-to-Business Purchasing and Agentic AI

The transition toward AI-mediated discovery has profound implications for digital marketing accountability, particularly within the business-to-business (B2B) sector. Forrester Research highlights a growing intimacy between consumers and generative tools, noting that 62% of global users now engage with GenAI at least weekly, viewing it as an emotionally supportive, non-judgmental "second brain" 22.

This intimacy translates directly into commercial behavior. Gartner predicts that by 2028, 60% of brands will utilize agentic AI to facilitate streamlined, one-to-one interactions, and 90% of B2B buying will be intermediated by autonomous AI agents 223. These AI agents will independently gather information, compare software vendors, negotiate quotes, and route over $15 trillion in corporate B2B spend without direct human intervention at every stage 237.

This structural shift threatens the foundational accountability model of digital marketing. For decades, marketing departments have relied on engagement metrics - website visits, form fills, and time-on-page - to prove commercial value. As B2B buyers shift their research processes into the zero-click environments of answer engines, these traditional engagement metrics are drying up. Marketing leaders report web traffic and demand volume declines of 20% to 30%, even when overall business revenue remains stable 38.

The critical risk in 2026 is misinterpreting this decline in vanity metrics as a failure of marketing efficacy. The actions that businesses need most in the generative era - building buyer preference and gaining citation visibility within agentic AI research protocols - rarely register in legacy analytics platforms. Organizations that drastically cut SEO and content budgets due to falling traffic risk total exclusion from the AI models that will increasingly dictate B2B procurement 38.

Antitrust Litigation and Search Market Fragmentation

The technological shift toward AI is heavily influenced by significant legal and regulatory developments reshaping the balance of power in search. In September 2025, U.S. District Judge Amit Mehta issued a landmark remedies ruling in the federal antitrust case against Google, following his August 2024 finding that Google illegally maintained its search monopoly through exclusive default agreements with device manufacturers such as Apple and Samsung 3940.

While the court rejected structural measures such as forcing the sale of the Chrome browser, it imposed significant behavioral remedies aimed at fostering competition. Crucially, the ruling prohibited exclusive default agreements and mandated that Google open portions of its search index and click-and-query interaction data to qualified competitors via syndication licenses 39402442.

For decades, the immense financial and computational cost of crawling the entire web constituted an insurmountable barrier to entry for rival search engines. By forcing Google to share its high-quality index data - including crawl dates, spam scores, and click patterns, though keeping proprietary ranking signals confidential - the ruling has accelerated the capabilities of competitors like Bing, DuckDuckGo, and emerging LLM platforms 402442. Consequently, the search landscape is becoming fundamentally multi-polar. SEO in 2026 is no longer a monolithic, single-platform endeavor; brands must monitor and optimize for an interconnected ecosystem of alternative search engines and AI chatbots relying on newly democratized index data 2425.

Copyright Law and Training Data Provenance

Simultaneously, the industry is navigating a labyrinth of copyright and web scraping litigation that directly impacts how search engines train their AI models. More than 80 major lawsuits, involving entities like The New York Times, Reddit, YouTube creators, and authors, have been filed against AI developers including OpenAI, Meta, Anthropic, and Snap 262728.

Many recent class-action lawsuits focus on the circumvention of Technological Protection Measures (TPMs), alleging violations of Section 1201(a) of the Digital Millennium Copyright Act (DMCA) for unauthorized scraping of videos and text 27. In response to this influx of litigation, federal judges have begun establishing critical legal distinctions between the input phase (the sourcing of training data) and the output phase (the generated response provided to the user) 29.

While courts have generally favored AI companies by classifying the ingestion of lawfully acquired, publicly available data as "transformative" fair use, they are drawing strict compliance lines against models trained on pirated material or shadow libraries 282930. Furthermore, courts are imposing a high burden of proof on plaintiffs regarding outputs, requiring them to demonstrate concrete economic harm and prove that a specific AI output is substantially similar to their copyrighted work 29.

For digital marketers and SEO professionals, the implication of this ongoing litigation is profound. AI companies are increasingly incentivized to engage in formal data licensing agreements or rely on highly structured, explicitly permissioned data to insulate themselves from liability. Content that is technically inaccessible, blocked by restrictive paywalls, or lacking clear provenance will simply be omitted from the next generation of generative models 2930.

Search Decentralization and Social Platform Validation

As the traditional SERP becomes increasingly congested with generative answers and sponsored features, consumer discovery behavior has migrated laterally toward social validation networks and specialized video platforms. Traditional search optimization must now encompass these distributed ecosystems to maintain comprehensive visibility.

Reddit's Ascension as an Intent Validation Engine

Reddit has definitively transitioned from a decentralized message board into one of the most powerful research engines on the internet. As of early 2026, the platform boasts over 1.1 billion monthly active users and 97 million daily active users worldwide 49. Driven by shifting consumer preferences and a highly lucrative $60 million annual data-sharing partnership with Google, Reddit's organic search traffic surged 97% in 2024 and an additional 45% in 2025, propelling it to the sixth most visited website globally by organic search traffic 4950.

This explosive growth is deeply intertwined with AI visibility. Because Reddit provides a vast repository of authentic human discourse, Google prominently features Reddit threads in 42% of all searches possessing informational or commercial intent 49. Furthermore, Google's AI Overviews cite Reddit in 28% of cases where user-generated content is utilized as a source, and the platform sits among the top five most-cited domains across ChatGPT and Perplexity 495051.

For consumers, particularly Millennials and Gen Z, Reddit provides an antidote to the perceived sterility of AI-generated content and heavily optimized affiliate marketing. Users routinely append "reddit" to search queries - a behavior that increased 142% between 2024 and 2025 - to access authentic, human-validated experiences 49. Consequently, 74% of Reddit users report that the platform directly influences their purchasing decisions 5051. Optimizing for Reddit requires a complete departure from traditional brand broadcasting; success necessitates participating authentically in niche communities, generating organic upvotes, and fostering transparent discussions that external AI crawlers can index as high-value E-E-A-T signals 5051.

Short-Form Video Search Dynamics

Among younger demographics, the definition of a "search engine" has fundamentally expanded to include visual and short-form video platforms. Generation Z spends an average of 4.5 hours per day on social media, with TikTok commanding an 83% daily active user rate within that cohort 52. When seeking experiential information - such as restaurant atmospheres, travel itineraries, or product demonstrations - visual proof is increasingly paramount.

Early alarmist marketing narratives suggested that Gen Z had entirely abandoned Google for TikTok. Comprehensive 2026 data presents a more stabilized, nuanced reality. While 65% of Gen Z users report utilizing TikTok as a search engine, the percentage who state they prefer TikTok exclusively over Google dropped by 50%, falling from 8% in 2024 to 4% in 2026 313255. Only 25% of Gen Z found TikTok highly effective for pure information retrieval, acknowledging its limitations for factual, non-visual queries 3155.

The data strongly indicates multi-platform search behavior rather than complete platform substitution. A modern user might utilize ChatGPT for coding assistance, Google for a local business address, and TikTok to evaluate the ambiance of a café. Consequently, video SEO - optimizing captions, utilizing text overlays, and integrating localized keywords within short-form video content - has become a mandatory component of modern search visibility, rather than a total replacement for traditional text indexing 5556.

Development of Regional Artificial Intelligence Search Ecosystems

The shift toward AI-integrated search is a global phenomenon, with regional technology giants pioneering deeply integrated architectures that diverge from Western models. In the Asian market, search engines are evolving rapidly into execution-based agents seamlessly integrated into existing super-apps.

In China, the search landscape is dominated by Baidu's ERNIE Bot, Alibaba's Quark, Tencent's Yuanbao, and ByteDance's Doubao. Together, these AI engines serve over 900 million monthly active users 57. Rather than forcing users to download standalone AI applications, Baidu embedded the ERNIE model directly into its primary search app, which serves 700 million users, blurring the line between a traditional keyword query and an autonomous agent prompt 33. While Doubao recently surpassed Baidu in consumer metrics with 260 million monthly active users due to superior multimodal capabilities, Baidu ERNIE (220 million active users) remains the enterprise and government standard, driving significant API revenue growth 5733.

Similarly, in South Korea, Naver - the dominant domestic search portal - launched "AI Tab," a conversational agent deeply embedded in its proprietary ecosystem. Unlike Google's early iterations of generative search, Naver's AI Tab acts as a comprehensive execution-based agent. It leverages highly localized, domain-specific data from Naver Place reviews, Naver Shopping, and community blogs to answer complex logistical queries. Crucially, the interface allows users to execute product purchases and make local reservations directly within the conversational window, bypassing the need to navigate to external websites 3435.

| Regional AI Search Engine | Parent Company | Estimated Monthly Active Users | Key Differentiator |
|---|---|---|---|
| Doubao | ByteDance | 260 Million | Strongest multimodal and video search integration. |
| ERNIE Bot | Baidu | 220 Million | Deep ecosystem integration; default for Chinese enterprise/government. |
| Quark | Alibaba | 180 Million | Optimized for long-context documents and academic queries. |
| AI Tab | Naver | Beta Deployment | Direct commercial execution (purchases/reservations) within chat UI. |

(Market share and capability breakdown of leading Asian AI search ecosystems in 2026 573435.)

These regional models underscore the broader trajectory of global search optimization: moving away from mere traffic generation and toward direct commercial execution within the search engine's proprietary interface.

Conclusion on the Viability of Search Optimization Investment

The core question facing marketing executives in 2026 is whether traditional search optimization remains a viable investment against the backdrop of zero-click generative answers, declining organic CTRs, and the rise of autonomous AI purchasing agents. The unequivocal conclusion drawn from current market data, antitrust developments, and technological infrastructure shifts is that SEO is not obsolete; rather, its fundamental role has expanded and migrated deeper into the digital infrastructure.

The mechanics of visibility have changed permanently. Traffic volume is no longer the sole, or even the primary, metric of success. The new imperative is establishing entity authority, ensuring technical machine readability, and securing citations within the generative summaries that increasingly act as the internet's gatekeepers. Traditional SEO hygiene - encompassing server-side rendering, structured data implementation, entity disambiguation, and authoritative off-site validation - produces the foundational dataset that feeds generative AI. Without a technically accessible and semantically authoritative web presence, a brand will not be ingested into training models, nor will it be fetched during real-time retrieval queries.

Organizations that abandon search optimization in the face of declining traditional traffic metrics risk total exclusion from the AI models that will dictate future consumer discovery and B2B procurement. In 2026, investing in search optimization is no longer just about winning clicks; it is the mandatory cost of ensuring that when autonomous systems synthesize reality, a brand is positioned as the definitive, credible truth.