Sociological and psychological impacts of AI surveillance

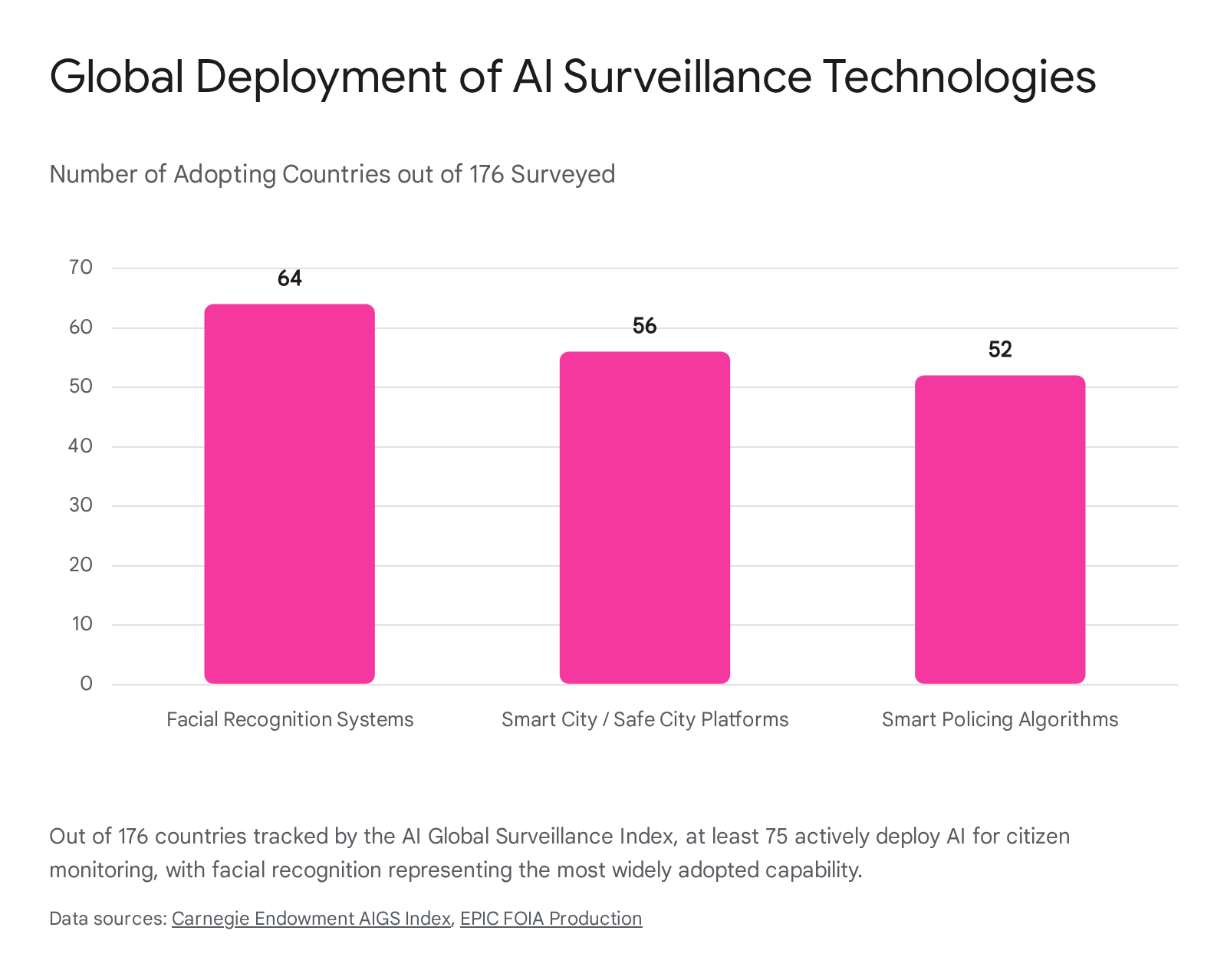

The proliferation of artificial intelligence (AI) has transformed surveillance from a targeted tool of state and corporate security into a pervasive, ambient infrastructure. As advanced algorithms, computer vision, and machine learning models are integrated into urban environments, digital platforms, and workplaces, the mechanisms of observation have become automated, predictive, and continuously active. This structural shift fundamentally alters the psychological experience of being watched, disrupting traditional paradigms of privacy, interpersonal trust, and identity construction. Globally, at least 75 out of 176 countries are actively deploying AI technologies for surveillance purposes, encompassing smart city platforms, facial recognition systems, and predictive policing 12.

As these technologies mature, they generate complex sociotechnical dilemmas. The deployment of AI surveillance is not merely a technical upgrade; it represents a profound rewiring of the social contract between institutions and individuals. This research report examines the sociological and psychological impacts of AI surveillance, analyzing the erosion of trust in the workplace, the chilling effects on civil society, the geopolitical contest over surveillance exports to the Global South, and the highly fragmented legal frameworks attempting to govern these technologies.

Psychological Frameworks of Algorithmic Observation

The psychological burden of surveillance has historically been understood through the lens of human observation. The introduction of autonomous algorithmic systems alters this dynamic, replacing human context and empathy with opaque data extraction and automated evaluation. This shift requires a reevaluation of established privacy paradigms and psychological theories.

Reevaluating the "Nothing to Hide" Argument

The expansion of AI surveillance is frequently justified by the rhetorical trope, "If you have nothing to hide, you have nothing to fear." This reductionist premise suggests that privacy is only necessary for concealing illicit or immoral behavior. However, contemporary sociological research dismantles this argument, demonstrating that privacy is not merely the concealment of transgressions, but the foundational mechanism for identity construction and personal autonomy 3456.

As Edward Snowden observed in debates surrounding mass data collection, arguing that one does not care about privacy because one has nothing to hide is no different from claiming one does not care about free speech because one has nothing to say 46. Psychologists and sociologists assert that individuals require the ability to control the flow of personal information to engage in "impression management" and selective withholding 57. Without spatial, decisional, and relational control over personal data, individuals are defined from without rather than from within, stripping them of the agency required to formulate a preferred self 7. Legal theorist Daniel Solove has proposed a taxonomy that conceptualizes privacy as a plurality of distinct yet related problems, emphasizing that sweeping surveillance disrupts the fundamental boundaries required for self-development; the approach is sometimes criticized for its breadth but has been foundational in privacy law 8.

Furthermore, digital identity in an era of pervasive algorithmic tracking creates a "genealogical paradox." Individuals seek rootedness and connection in digital spaces, yet the platforms they use are designed to extract their behavioral data, turning the seeker of identity into the final product of prosumer capitalism 9. Drawing on Zygmunt Bauman's concept of liquid identity, researchers argue that when AI systems continuously aggregate and interpret an individual's data exhaust, the subject loses control over their historical narrative, resulting in a fragmented self that is perpetually subjected to algorithmic judgment 9. As a form of resistance, creators increasingly engage in "AI passing": the strategic effort to humanize and conceal AI-assisted content in order to maintain an aura of authenticity and avoid the stigma of automated generation 10.

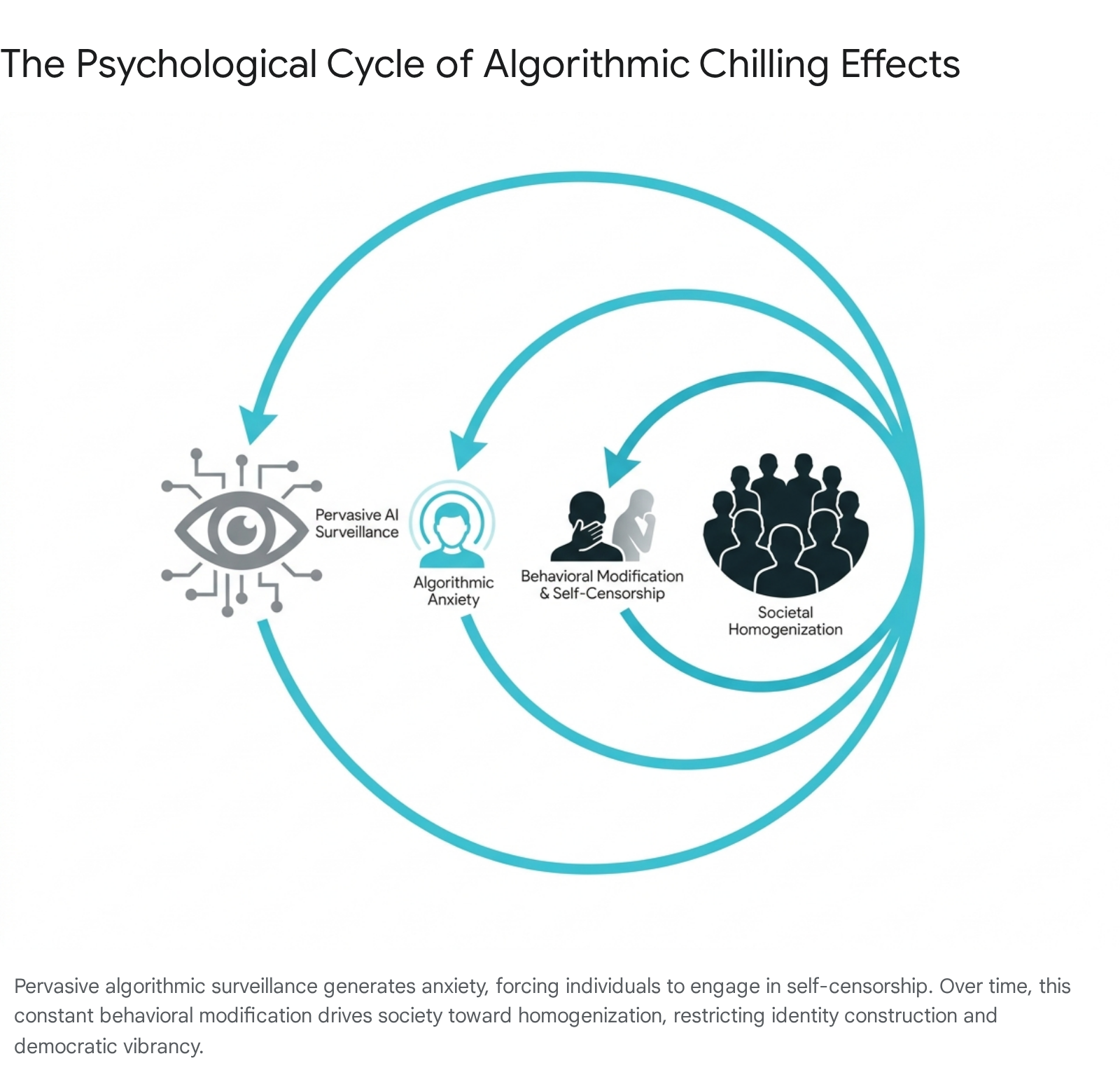

Algorithmic Anxiety and the Chilling Effect

The psychological response to inescapable AI observation has been termed "algorithmic anxiety" - a pervasive apprehension stemming from the accelerated development, opacity, and ubiquity of AI technologies 11. This anxiety is compounded by the knowledge that AI systems not only record behavior but actively synthesize information to predict future actions and categorize individuals 6.

One of the most profound consequences of algorithmic anxiety is the "chilling effect." This phenomenon occurs when individuals consciously or subconsciously alter their behavior, beliefs, or associations due to the fear of being observed and subsequently penalized by algorithmic systems 121314. Chilling effects manifest directly through self-censorship and indirectly through an unwillingness to engage with marginalized groups or controversial ideas, thus blocking the free exchange of discourse 14.

The long-term impact of surveillance-induced chilling effects is a gradual homogenization of society. As individuals modify their behavior to align with the perceived mainstream expectations of the algorithm, the space for personal and political development shrinks 13. Evaluated through the lens of Aristotelian naturalism and the capabilities approach, this structural conformity devastates human flourishing, eroding the vibrancy democratic societies need in order to evolve 13. Human rights frameworks frequently struggle to conceptualize this cumulative, long-term harm, because it is difficult to quantify thoughts that were never expressed or associations that were never formed 1314.

The Paradox of Diagnostic Algorithms

While AI is a primary driver of algorithmic anxiety, the technology is simultaneously being deployed in clinical settings to diagnose and monitor anxiety disorders. A systematic review of 119 peer-reviewed studies published between 2019 and 2024 revealed that machine learning and deep learning algorithms demonstrate higher accuracy in detecting anxiety disorders from physiological data, self-reports, and social network behavior than traditional diagnostic tests 1516. This creates a paradoxical technological environment: the same underlying data-extraction architectures that induce stress and burnout in public and professional spheres are being optimized to measure and treat the resulting psychological damage 111516.

Workplace Surveillance and the Erosion of Trust

The digital transformation of the workplace, accelerated by the normalization of remote and hybrid work models, has fundamentally altered the relationship between employers and employees. Management has increasingly turned to AI-powered surveillance tools to monitor productivity, evaluate performance, and predict employee behavior, resulting in an environment characterized by a severe trust deficit.

The Scale of Occupational Monitoring

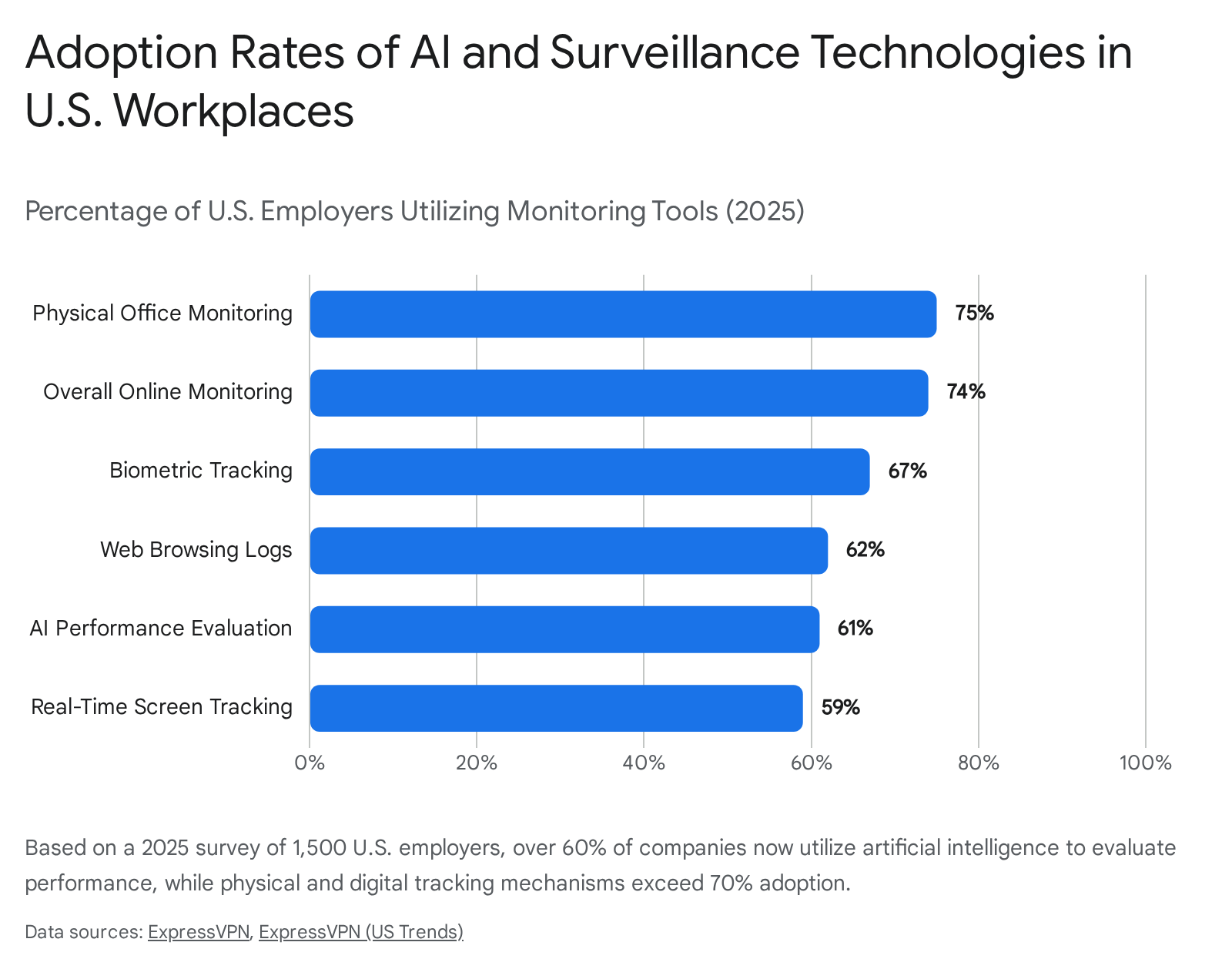

Workplace surveillance has transitioned from localized, physical oversight to continuous, granular digital tracking. A comprehensive 2025 survey conducted by ExpressVPN encompassing 1,500 U.S. employers and 1,500 U.S. employees - alongside corresponding data for the United Kingdom - revealed the staggering scale of modern occupational monitoring 171819. By 2025, the global workforce analytics software market reached a valuation of $2.37 billion, growing roughly 14% annually 20.

In the United States, 74% of employers utilize online monitoring tools, while 75% monitor employees within physical offices using methods such as video surveillance (69%) and biometric access controls (58%) 1719.

Furthermore, 61% of U.S. companies employ AI to evaluate employee performance through automated productivity metrics 1719. Online monitoring is even more widespread in the United Kingdom, where 85% of employers admit to monitoring their staff's online activity 18, although UK rates for specific techniques such as real-time screen tracking and browser logging are lower than in the U.S. This surveillance captures a vast array of telemetry, including active work hours, web browsing histories, inbound and outbound communications, real-time screen tracking, and in some cases, keystroke logging and GPS tracking 1718.

| Surveillance Metric | United States (2025) | United Kingdom (2025) |
|---|---|---|
| Employers using online monitoring tools | 74% | 85% |
| Real-time screen tracking | 59% | 27% |
| Logging web browsing history | 62% | 36% |
| Use of biometric tracking / access controls | 67% | 92% (in-office belief) |
| AI integration for performance evaluation | 61% | N/A |
| Employees feeling stress/anxiety due to monitoring | 45% (in highly monitored settings) | 46% |

Comparison of employer surveillance adoption and employee sentiment based on 2025 quantitative workplace surveys 171819.

The rationales provided by employers heavily emphasize productivity and operational control. In the UK, 51% of employers admit they do not trust employees to work without direct supervision, and 56% feel remote work results in a lack of operational control 18. In line with these attitudes, 91% of UK employers believe online monitoring effectively tracks performance, despite widespread employee dissent regarding its ethical deployment 18.

Erosion of Autonomy and Counterproductive Behaviors

The widespread implementation of these systems has taken a measurable toll on worker well-being. Research demonstrates that workers generally react negatively to on-the-job surveillance, but algorithmic monitoring induces significantly higher levels of dissatisfaction and resistance than human oversight 2021.

A pair of studies from Cornell University found that when participants believed they were being monitored and evaluated by AI, they perceived a greater loss of autonomy, generated fewer creative ideas, and performed worse overall than those monitored by a human research assistant 21. The problem lies in the perceived lack of context: employees fear that AI evaluates behavior directly from raw data, without the ability to account for human nuance, fatigue, or qualitative effort 2021.

A 2026 working paper by the International Labour Organization (ILO) corroborated these findings on a global scale. The ILO warned that intrusive surveillance, shrinking autonomy, and opaque data practices are creating a new generation of psychosocial risks 22. As AI systems increasingly determine task allocation, performance evaluation, and promotions, the resulting loss of job control directly correlates with heightened stress, burnout, and emotional exhaustion 2223. Furthermore, the lack of interpersonal communication resulting from heavy reliance on AI tools isolates workers, depleting their emotional resources and exacerbating feelings of loneliness 24.

In response to the pervasive feeling of being watched, employees engage in widespread resistance and "productivity theater." The awareness of constant monitoring does not reliably increase genuine productivity; instead, it diverts cognitive energy toward gaming the surveillance infrastructure 1720. In the U.S., 32% of employees feel pressured to work faster due to monitoring, and 24% take fewer breaks to avoid appearing idle 1719. To artificially inflate their digital footprint, 16% of U.S. workers keep unnecessary applications open, 15% schedule emails to send at delayed intervals, and 12% use physical or software-based "mouse jigglers" to simulate activity 1719. In the UK, 14% of employees engage in "coffee badging": attending the physical office briefly solely to register their presence on biometric or keycard systems 18.

These behavioral adaptations can be understood through Conservation of Resources (COR) theory. When employees' emotional and cognitive resources are depleted by the stress of algorithmic surveillance, they resort to counterproductive work behaviors that protect their remaining energy and simulate compliance, rather than engaging in deep, meaningful labor 24. The ultimate form of resistance is attrition: in the U.S., one in six employees is actively considering quitting their job entirely due to invasive monitoring practices, and 24% would be willing to take a pay cut to escape surveillance 17.
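The mouse-jiggler tactic works because many activity metrics infer "presence" from the timing of input events rather than from the work itself. The Python sketch below shows a hypothetical, deliberately naive metric. It is not the algorithm of any product covered by the surveys. It assumes a fixed five-minute idle cutoff and counts each interval between input events as active time, up to that cutoff.

```python
# Hypothetical idle-detection metric of the kind a naive monitoring tool might use.
# Assumption: the session starts at t = 0 and timestamps are in seconds.
IDLE_THRESHOLD_S = 300.0  # assumed 5-minute idle cutoff


def active_seconds(event_times: list[float], session_end: float) -> float:
    """Total 'active' time implied by a list of input-event timestamps.

    Each event is credited with the time until the next event (or the end of
    the session), capped at IDLE_THRESHOLD_S; anything beyond the cap is idle.
    """
    if not event_times:
        return 0.0
    times = sorted(event_times)
    active = 0.0
    for current, nxt in zip(times, times[1:] + [session_end]):
        active += min(nxt - current, IDLE_THRESHOLD_S)
    return active


# A worker types for two minutes, then reads a printed report for the rest of the hour.
reader = [0.0, 60.0, 120.0]
# A "mouse jiggler" emits a synthetic movement every 60 seconds.
jiggler = [float(t) for t in range(0, 3600, 60)]

print(active_seconds(reader, 3600.0))   # 420.0
print(active_seconds(jiggler, 3600.0))  # 3600.0
```

In this sketch, the employee doing substantive but offline work registers 420 active seconds out of 3,600. The jiggler registers the full hour. This illustrates how input-based telemetry can reward simulated activity over actual labor.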

Predictive Analytics: The Corporate Rationalization

While employee sentiment skews heavily negative, the enterprise software industry argues that AI performance tools are indispensable for organizational health and equity. Platforms offering AI-driven people analytics, such as Workday People Analytics, Qualtrics EmployeeXM, Windmill, and Leapsome, focus heavily on "predictive retention" and dynamic goal optimization 262526. By continuously harvesting data from collaboration tools (like Slack and GitHub), engagement surveys, and attendance patterns, these systems are reported to identify "flight risks" (employees likely to resign) up to 60 days in advance 252627.
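Vendors do not publish their retention models. The following Python sketch therefore only illustrates the general shape a "flight risk" score might take under stated assumptions: a logistic model over three hypothetical behavioral signals, namely a drop in collaboration-tool activity, an engagement-survey score, and recent absences. The feature names, weights, bias, and 0.5 alert threshold are all invented for illustration. A real system would fit its weights to historical attrition data.

```python
import math
from dataclasses import dataclass


@dataclass
class EmployeeSignals:
    # Hypothetical inputs loosely mirroring the data sources named above.
    collab_activity_change: float  # change vs. personal baseline, e.g. -0.5 = 50% drop
    survey_engagement: float       # latest engagement-survey score scaled to 0..1
    absences_last_60d: int         # unplanned absences in the last 60 days


# Illustrative, hand-picked weights (assumption, not fitted to any data).
BIAS = 0.5
W_COLLAB = -2.0      # falling activity raises the score
W_ENGAGEMENT = -3.0  # higher engagement lowers the score
W_ABSENCES = 0.3     # each absence raises the score


def flight_risk(s: EmployeeSignals) -> float:
    """Logistic score in (0, 1), read as an estimated probability of resigning."""
    z = (BIAS
         + W_COLLAB * s.collab_activity_change
         + W_ENGAGEMENT * s.survey_engagement
         + W_ABSENCES * s.absences_last_60d)
    return 1.0 / (1.0 + math.exp(-z))


def flag_flight_risks(people: dict[str, EmployeeSignals], threshold: float = 0.5) -> list[str]:
    """IDs whose score meets the (assumed) alerting threshold, sorted for stable output."""
    return sorted(pid for pid, s in people.items() if flight_risk(s) >= threshold)


staff = {
    "emp-001": EmployeeSignals(collab_activity_change=0.0, survey_engagement=0.8, absences_last_60d=0),
    "emp-002": EmployeeSignals(collab_activity_change=-0.5, survey_engagement=0.3, absences_last_60d=4),
}
print(flag_flight_risks(staff))  # ['emp-002']
```

Every input here is a behavioral proxy. In this sketch, a 50% drop in collaboration activity raises the score identically whether it reflects disengagement, parental leave, or a shift to offline work. That is the same loss of context employees object to in algorithmic evaluation.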

Proponents argue that AI actually reduces human bias in performance evaluations by anchoring reviews to objective, data-driven outputs rather than subjective managerial recollections 252627. In 2025, 70% of organizations reported using AI for real-time performance tracking, with some providers reporting a 33% reduction in promotion bias 2526. The Cornell researchers noted a crucial caveat regarding employee acceptance: when algorithmic surveillance is explicitly framed as developmental and supportive, rather than punitive and automated, workers exhibit significantly less resistance 2021. However, establishing this trust requires ironclad data governance and transparent communication, which remain exceedingly rare in practice, leading to persistent friction 2728.

Public Surveillance and Civil Society Impact

Beyond the workplace, AI surveillance is fundamentally altering the geography of public spaces. From retail environments to municipal infrastructure, the deployment of computer vision and behavioral analytics is reshaping how citizens interact with their physical surroundings, often prioritizing institutional security over individual privacy.

AI in Retail and Loss Prevention

In Western economies, public AI surveillance is driven predominantly by corporate loss prevention and municipal policing rather than overt state social engineering. By 2025, the retail sector faced mounting losses from organized retail crime (ORC) and inventory shrinkage, which cost U.S. businesses over $100 billion annually 29. Traditional security models, such as static cameras, locked displays, and human security guards, proved insufficient and financially unsustainable 29.

Consequently, retailers have aggressively deployed AI-powered computer vision systems capable of real-time anomaly detection. Platforms developed by companies like Auror and Indyme do not merely record video; they autonomously flag suspicious behaviors, detect scan avoidance at self-checkouts, spot product switching, and use voice-event reporting to auto-populate incident logs 3031. Retailers increasingly view AI not as a futuristic add-on but as a core requirement for business survival, shifting security from a reactive posture to predictive intelligence 2931.
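Scan-avoidance and product-switching checks can be sketched as a reconciliation between two lists. One list holds the items a camera detects crossing the self-checkout. The other holds the barcodes the point-of-sale system records. This Python sketch assumes both systems already emit product identifiers (SKUs). The function names and the flagging rule are hypothetical and do not describe Auror's or Indyme's actual products. Real systems must also cope with detection errors, which a simple multiset comparison ignores.

```python
from collections import Counter


def checkout_discrepancies(seen_skus: list[str], scanned_skus: list[str]) -> dict[str, Counter]:
    """Reconcile camera detections with point-of-sale scans for one transaction.

    'unscanned'    = items the camera saw but the register never recorded
                     (possible scan avoidance).
    'unseen_scans' = scans with no matching detection; combined with an
                     unscanned item, this pattern suggests a cheaper barcode
                     was scanned in place of the real product (switching).
    """
    seen, scanned = Counter(seen_skus), Counter(scanned_skus)
    # Counter subtraction keeps only positive counts.
    return {"unscanned": seen - scanned, "unseen_scans": scanned - seen}


def should_flag(discrepancies: dict[str, Counter]) -> bool:
    """Hypothetical rule: flag the transaction if any seen item went unscanned."""
    return sum(discrepancies["unscanned"].values()) > 0


# The camera sees steak, wine, and bananas; the customer scans bananas twice and wine once.
result = checkout_discrepancies(["steak", "wine", "bananas"], ["bananas", "bananas", "wine"])
print(result["unscanned"])     # Counter({'steak': 1})
print(result["unseen_scans"])  # Counter({'bananas': 1})
print(should_flag(result))     # True
```

A missed or spurious detection produces the same signature as genuine theft. In this sketch, a flag therefore marks a discrepancy, not proof of wrongdoing.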

State Surveillance Myths and Realities

Conversely, state-driven public surveillance is heavily scrutinized, often through the lens of China's Social Credit System (SCS). In Western sociological discourse, the SCS is frequently characterized as an Orwellian, unified AI panopticon that autonomously punishes citizens for minor infractions - akin to dystopian fiction. However, scholarly analysis from 2024 and 2025 reveals a far more complex and fragmented reality 35.

Far from a unified algorithmic monolith, the SCS remains a conglomeration of 43 independently operated local pilot systems 35. Contrary to the myth of a high-tech AI apparatus, many of these local systems rely heavily on manual data input, which introduces human bias and error rather than machine omniscience 35. Furthermore, the system is fundamentally reward-centric; local initiatives focus heavily on "redlists" to reward desirable behavior, rather than "blacklists" designed for mass punishment 35. The primary sociological driver of the SCS is an attempt to mend a profound "crisis of trust" within society, triggered by decades of government corruption and severe food safety scandals, rather than to serve as a primary tool for repressing political dissidents 35.

Nevertheless, legitimate human rights concerns persist globally regarding public biometric tracking. Facial recognition technology (FRT) used by law enforcement remains deeply flawed. Extensive testing by the National Institute of Standards and Technology (NIST) demonstrates that many FRT algorithms exhibit persistent racial and gender biases, with significantly higher false positive match rates for Asian and African American individuals, as well as for women 3233. The American Civil Liberties Union (ACLU) has warned that using these systems exacerbates racism in policing outcomes and violates Fourth Amendment privacy rights, leading to wrongful arrests based on false matches 33. In the UK, an independent legal review by the Ada Lovelace Institute in 2024 concluded that police deployment of Live Facial Recognition (LFR) and emerging affect recognition (emotion tracking) exists in a regulatory vacuum and rests on uncertain legal grounds that often fail to meet human rights standards 3435.
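NIST's demographic testing, reported in NIST IR 8280 (Face Recognition Vendor Test Part 3: Demographic Effects), measures the false match rate among other metrics. This rate is the share of comparisons between images of two different people that an algorithm scores at or above its match threshold. The Python sketch below computes that rate per group from invented toy scores. The group labels, scores, and 0.5 threshold are assumptions. They are chosen only to show how a single global threshold can yield very different error rates for groups whose score distributions differ.

```python
from collections import defaultdict
from collections.abc import Iterable


def false_match_rates(impostor_scores: Iterable[tuple[str, float]], threshold: float) -> dict[str, float]:
    """False match rate per demographic group.

    Every (group, similarity) pair is an *impostor* comparison, i.e. two images
    of different people, so any score at or above the threshold is a false match.
    """
    totals: dict[str, int] = defaultdict(int)
    false_matches: dict[str, int] = defaultdict(int)
    for group, score in impostor_scores:
        totals[group] += 1
        if score >= threshold:
            false_matches[group] += 1
    return {group: false_matches[group] / totals[group] for group in totals}


# Toy impostor scores (invented): group_b's scores sit higher on average.
scores = [
    ("group_a", 0.41), ("group_a", 0.52), ("group_a", 0.38), ("group_a", 0.47),
    ("group_b", 0.63), ("group_b", 0.71), ("group_b", 0.44), ("group_b", 0.58),
]
rates = false_match_rates(scores, threshold=0.5)
print(rates)                                # {'group_a': 0.25, 'group_b': 0.75}
print(rates["group_b"] / rates["group_a"])  # 3.0
```

In this toy example, one shared threshold triples the false match rate for group_b relative to group_a. That is the kind of demographic differential that turns into a higher risk of wrongful identification in policing.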

Geopolitical Proliferation and the Global South

The export of AI surveillance technology has become a central theater of geopolitical competition. The ability to supply a nation's digital infrastructure provides the exporting country with profound economic leverage, diplomatic soft power, and access to vast data repositories. This dynamic is rapidly reshaping governance in the Global South.

Export Trends and the Digital Divide

The Carnegie Endowment for International Peace's AI Global Surveillance (AIGS) Index tracks the unprecedented proliferation of these technologies. The data reveals that AI surveillance is spreading at a significantly faster rate than previously understood 1. At least 75 countries - representing 43% of the assessed nations globally - are deploying AI-powered surveillance, ranging from closed autocracies to advanced liberal democracies 136. The most dominant applications are facial recognition systems (used by 64 countries), smart city platforms (56 countries), and smart policing algorithms (52 countries) 1.

The geopolitical contest between the United States and China fundamentally shapes this deployment. Washington has largely pursued a "strategy of control," utilizing export controls to restrict foreign access to advanced AI chips and attempting to consolidate frontier model weights among a small tier of trusted allies to prevent adversaries from free-riding on innovation 373839.

Conversely, Beijing pursues a "strategy of diffusion" 39. By championing open-source AI communities, subsidizing the development of efficient models (like DeepSeek, which rapidly gained 125 million users globally), and attaching fewer ideological strings to its technology, China effectively courts emerging economies 4041. Through initiatives like the Digital Silk Road, China provides comprehensive infrastructure packages to the Global South, bundling fiber optic cables, data centers, and surveillance cameras 4243. While U.S. companies like Microsoft and Google are also investing in the region (for example, a $1 billion data center project in Kenya), China remains the primary supplier of surveillance hardware to the developing world 4142.

Digital Authoritarianism and Civil Society Risks

The influx of foreign AI surveillance into the Global South presents severe risks to civil society. A 2026 report co-authored by the African Digital Rights Network highlighted that 11 African governments have spent at least $2 billion on Chinese-built mass surveillance systems 4445. Nations such as Nigeria ($470 million for 10,000 smart cameras), Egypt, and Uganda have installed thousands of biometric tracking devices, often financed through loans from Chinese banks 4445.

While these governments justify the expenditures as necessary for national security and urban modernization, researchers note a distinct lack of evidence that these systems effectively reduce crime 4445. Instead, the deployment occurs in regulatory vacuums. Without robust data protection laws or independent judicial oversight, these AI systems are utilized to monitor human rights activists, track political opponents, and suppress protests, disproportionately affecting marginalized groups 4445.

This dynamic fosters "digital authoritarianism" and perpetuates a post-colonial dependency wherein countries in the Global South become mere deployment sites and data-harvesting grounds for foreign powers, without reaping the economic or technological benefits of AI 464748. More broadly, a comprehensive 2025 study by KPMG and the University of Melbourne covering 48,000 respondents across 47 countries found that public trust in AI remains precarious worldwide: only 46% of people expressed a willingness to trust AI systems, highlighting a universal tension between the technology's benefits and its invasive risks 495051.

International Regulatory Divergence

As the societal risks of AI surveillance become undeniable, global regulatory bodies are attempting to impose legal constraints. However, the international landscape is characterized by deep fragmentation, creating a complex web of compliance requirements for technology developers. The European Union, the United States, and China have adopted fundamentally different regulatory philosophies.

| Regulatory Regime | Core Philosophy | Enforcement Scope | Key AI Surveillance Restrictions |
|---|---|---|---|
| European Union (AI Act) | Comprehensive, horizontal, risk-based classification 5657. | Extraterritorial (applies to systems placed on the EU market or whose outputs are used in the EU) 56. | Bans real-time remote biometric identification in public spaces for law enforcement (narrow exceptions) and emotion recognition in workplaces/schools 5259. |
| United States (Federal/State) | Fragmented, market-driven, sector-specific 5660. | Territorial, mostly state-level enforcement (e.g., California, Illinois) 5660. | No federal ban. State laws (e.g., BIPA) require strict consent for biometric data; BIPA also provides a private right of action 535463. |
| China (Deep Synthesis & AI Measures) | State-controlled, content-focused, rapid iteration 5657. | Extraterritorial (applies to systems accessible by Chinese users) 5759. | Mandatory labeling of AI content; security assessments ensuring alignment with state ideology 565955. |

The European Union's Preemptive Architecture

The EU AI Act (Regulation 2024/1689), which entered into force in August 2024 with prohibitions taking effect in early 2025, represents the world's first comprehensive legal framework for AI 565752. The EU utilizes a risk-tiered system, explicitly prioritizing the protection of fundamental human rights over unfettered innovation 5965.

Crucially, the Act categorizes specific forms of AI surveillance as "unacceptable risk" and bans them outright. This includes social scoring mechanisms and the use of emotion recognition technologies in workplaces and educational institutions 5259. Real-time remote biometric identification in publicly accessible spaces for law enforcement purposes is also prohibited, save for exceptionally narrow, pre-authorized circumstances 5260. The Act's extraterritorial reach means that any company, wherever it is based, must comply if it places AI systems on the EU market or if its systems' outputs are used in the EU, establishing the EU as a de facto global standard-setter 5660.

The Fragmented United States Landscape

In stark contrast, the United States lacks a comprehensive federal AI statute. Federal oversight has relied on executive orders, which direct federal agencies but do not bind private companies (EO 14110, for instance, was revoked in January 2025). It has also relied on the application of existing consumer protection laws by sector-specific agencies like the Federal Trade Commission (FTC) 5657. Consequently, the regulatory burden has fallen to individual states, resulting in a fractured patchwork of laws that vary widely in enforcement capability 6056.

The most potent mechanism against corporate AI surveillance in the U.S. is the Illinois Biometric Information Privacy Act (BIPA). Enacted in 2008 and continually tested by modern AI applications, BIPA requires private entities to obtain explicit written consent before collecting biometric identifiers, including scans of face geometry and voiceprints 5363. Unlike California's Consumer Privacy Act (CCPA), whose private right of action is limited to data breaches, BIPA gives individuals a broad private right of action. Individuals can sue for statutory damages of $1,000 per negligent violation or $5,000 per intentional or reckless violation 535463.

This provision has resulted in massive corporate liability. Examples include Facebook's (now Meta's) facial recognition tagging settlement, announced at $550 million and later increased to $650 million. They also include recent class-action lawsuits targeting AI meeting assistants (e.g., Fireflies.AI) that autonomously record calls and generate voiceprints without prior consent 5758. In early 2026 rulings, courts explicitly rejected corporate attempts to apply California's weaker CCPA standards to Illinois residents, emphasizing that BIPA reflects a fundamental public policy to protect immutable biometric identities from corporate extraction 54.
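The scale of that liability follows from simple multiplication. Under BIPA's right-of-action provision (740 ILCS 14/20), liquidated damages are $1,000 per negligent violation and $5,000 per intentional or reckless violation. Actual damages apply instead if greater, which this sketch ignores. How violations are counted matters enormously. In 2023, the Illinois Supreme Court held in Cothron v. White Castle that each unconsented scan or transmission is a separate claim. A 2024 amendment then limited repeated collection of the same identifier from the same person by the same method to a single violation. The Python sketch below compares the two counting rules for a hypothetical employer whose 100 workers each clock in by fingerprint twice a day for 250 working days. All workforce figures are invented for illustration.

```python
# Liquidated damages per violation under BIPA (740 ILCS 14/20), in USD.
NEGLIGENT_PER_VIOLATION = 1_000
INTENTIONAL_PER_VIOLATION = 5_000  # intentional or reckless violations


def statutory_exposure(people: int, violations_per_person: int, intentional: bool) -> int:
    """Maximum liquidated damages, ignoring actual damages, fees, and court discretion."""
    per_violation = INTENTIONAL_PER_VIOLATION if intentional else NEGLIGENT_PER_VIOLATION
    return people * violations_per_person * per_violation


# Hypothetical employer: 100 workers, fingerprint clock-in twice a day, 250 workdays.
workers = 100
scans_per_worker = 2 * 250

per_scan = statutory_exposure(workers, scans_per_worker, intentional=False)  # each scan counted
per_person = statutory_exposure(workers, 1, intentional=False)  # post-2024 single-violation rule
print(f"${per_scan:,} vs ${per_person:,}")  # $50,000,000 vs $100,000
```

Courts retain discretion over damages awards. These figures are therefore upper bounds under the stated assumptions, not predictions of any actual judgment.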

California, meanwhile, finalized regulations in July 2025 under the CCPA targeting Automated Decision-Making Technology (ADMT) 53. These rules require companies to provide detailed notices to employees and job applicants before utilizing AI application screening tools or performance evaluation metrics, adding significant friction to the deployment of workplace surveillance 53.

China's Agile Content Governance and Labeling Standards

China has enacted highly targeted, rapid regulations focusing primarily on content control, data sovereignty, and social stability 57. Rather than relying on a sweeping horizontal law, China implemented the Deep Synthesis Provisions (2023) and Generative AI Measures, which require mandatory watermarking and labeling of AI-generated content 565755. China was the first country to mandate AI content labeling, moving more than two years ahead of the EU AI Act's transparency obligations 55.

Before generative AI services with "public opinion attributes or social mobilization capacity" can be offered to the Chinese public, they must undergo a state security assessment and algorithm filing. The review ensures that their outputs conform to state ideology, that their training data is lawfully sourced, and that they respect intellectual property 5659. This approach ensures that while commercial innovation in AI is aggressively subsidized by the state to compete globally, the underlying algorithms remain entirely subservient to the political imperatives of the government 395759.

Conclusion

The integration of artificial intelligence into the apparatus of surveillance represents a fundamental disruption of human psychology, workplace dynamics, and international governance. As algorithms replace human oversight, the nature of being watched shifts from intermittent and contextual to continuous, predictive, and opaque.

In the workplace, the aggressive deployment of tracking software and algorithmic evaluation tools has engineered an environment characterized by profound distrust. While corporations leverage these systems to predict retention and optimize output, the psychological cost is extracted directly from the workforce, manifesting as elevated anxiety, autonomy loss, and systemic behavioral resistance. In the public sphere, the geopolitical race to export AI surveillance infrastructure, particularly to the Global South, threatens to permanently alter the balance of power between states and citizens, accelerating the risk of digital authoritarianism.

The rhetorical defense that those with "nothing to hide" should welcome surveillance is sociologically obsolete. Privacy is not a shroud for illicit activity, but the necessary precondition for identity formation and democratic participation. As algorithmic anxiety induces chilling effects across populations, the diverse, unscripted behaviors that drive human flourishing are increasingly constrained. Ultimately, the future of AI surveillance will be dictated not merely by technological capability, but by the ongoing clash between fragmented global regulatory frameworks and the resilience of populations demanding transparent, human-centric governance.