Signal detection theory in perception and decision-making

The architecture of decision-making under uncertainty has long occupied the center of cognitive psychology, neuroscience, and human factors engineering. At the core of this inquiry lies Signal Detection Theory (SDT), a mathematical and psychological framework that revolutionized the understanding of how biological and artificial agents discriminate meaningful information (signals) from background interference (noise). Originally emerging from the radar and communications engineering fields of the post-World War II era, SDT was formally introduced to psychophysics in 1954 by researchers including Wilson P. Tanner Jr., John A. Swets, and Theodore Birdsall at the University of Michigan 1234. Their foundational work dismantled the prevailing assumptions of sensory perception, replacing rigid physiological models with a dynamic, statistically driven understanding of human judgment 24.

Today, the applications of SDT extend far beyond the detection of auditory tones and visual flashes in controlled laboratory environments. In contemporary contexts, SDT serves as the foundational grammar for evaluating highly complex sociotechnical systems. From the life-or-death diagnoses of radiology and the asymmetrical risk matrices of aviation security, to the algorithmic moderation of synthetic media and the cross-cultural variations in legal evidentiary standards, SDT provides an indispensable analytical lens 656. The following comprehensive analysis explores the theoretical underpinnings of SDT, explicitly refutes archaic concepts of absolute sensory thresholds, and maps the theory's modern applications across high-consequence human-AI interactions and diverse geographic and cultural landscapes.

The Epistemological Break: Refuting the Absolute Sensory Threshold

Prior to the advent of Signal Detection Theory, classical psychophysics - rooted in the 19th-century work of Gustav Fechner and Ernst Heinrich Weber - operated on the assumption of an "absolute sensory threshold" 37. This classical model posited a fixed, physiological boundary: the minimum stimulus intensity an organism can detect, conventionally operationalized as the intensity detected on fifty percent of trials 78. Within this paradigm, perception was viewed as a relatively passive, binary reception of physical reality 8. If a stimulus possessed sufficient physical energy to cross this biological threshold, it would enter conscious awareness; if it fell below this threshold, it would remain undetected, occasionally causing subconscious physiological reactions but failing to trigger cognitive recognition 79. Under this model, failures to detect a stimulus were attributed entirely to the organism's sensory inadequacy or the weakness of the physical signal.

The work of Tanner and Swets (1954) systematically dismantled this assumption, introducing a paradigm shift that remains the bedrock of modern perceptual science 13. The core argument against the absolute threshold rests on the realization that decisions are never made in a biological or environmental vacuum; they are continuously executed against a backdrop of spontaneous neural activity and external interference 2310. Tanner and Swets demonstrated that consciously accessible neural signals are inherently noisy, even in the complete absence of physical stimulation 310. Therefore, when a stimulus is presented, it does not trigger a response from a pristine baseline of zero. Instead, the stimulus yields a neural response consisting of the "signal plus noise" 34.

The observer is forced to act as an active, calculating decision-maker, evaluating whether the internal sensory magnitude experienced on a given trial was drawn from the spontaneous "noise-alone" distribution or the "signal-plus-noise" distribution 41112. Because these two statistical distributions invariably overlap, there is no fixed physiological threshold that can perfectly separate signals from noise 413. Instead of a fixed biological barrier, observers establish an adjustable, psychological decision criterion 34. If the internal neural activity exceeds this self-imposed criterion, the observer reports the presence of the signal; if it falls below, they report its absence 312.
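The decision rule described above can be sketched as a small simulation. This is a minimal illustration, assuming the textbook equal-variance Gaussian model (noise-alone responses drawn from N(0, 1), with signal trials shifted upward by a hypothetical `separation`); the function `simulate_observer` and its parameters are illustrative names, not taken from Tanner and Swets.

```python
import random


def simulate_observer(n_trials, separation, criterion, p_signal=0.5, seed=0):
    """Simulate yes/no detection trials and return (hit rate, false-alarm rate).

    Assumes an equal-variance Gaussian model: every trial carries internal
    noise ~ N(0, 1), and signal trials add a fixed `separation` on top of it.
    """
    rng = random.Random(seed)  # seeded so the sketch is reproducible
    hits = misses = false_alarms = correct_rejections = 0
    for _ in range(n_trials):
        signal_present = rng.random() < p_signal
        # Noise is always present; a signal only adds to it ("signal plus noise").
        internal = rng.gauss(0.0, 1.0) + (separation if signal_present else 0.0)
        says_yes = internal > criterion  # the adjustable decision criterion
        if signal_present and says_yes:
            hits += 1
        elif signal_present:
            misses += 1
        elif says_yes:
            false_alarms += 1
        else:
            correct_rejections += 1
    return hits / (hits + misses), false_alarms / (false_alarms + correct_rejections)


# Same sensory evidence, two criteria: raising the criterion lowers both rates.
print(simulate_observer(100_000, separation=1.0, criterion=0.0))
print(simulate_observer(100_000, separation=1.0, criterion=1.0))
```

Because the two distributions overlap, no criterion in this sketch yields zero Misses and zero False Alarms at once; moving the criterion only trades one error for the other.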

This dynamic is further illuminated by comparing SDT to High-Threshold Theory, an older competing model which postulated that response bias merely reflected random guessing when a stimulus failed to cross the threshold 14. SDT refutes this by showing that what classical psychophysicists interpreted as a fluctuating physiological threshold was actually the observer shifting their decision criterion based on how certain they were required to be 34. By demonstrating that human observers shift their "thresholds" in response to task instructions, reward structures, fatigue, and the prior probability of a signal's appearance, SDT showed that the classical absolute threshold was largely a methodological artifact 24. In its place, SDT substituted a robust model of perception driven by statistical inference under uncertainty 14.
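The contrast with High-Threshold Theory can be made concrete. The sketch below assumes data generated by an equal-variance Gaussian observer (signal-plus-noise ~ N(1, 1), noise ~ N(0, 1)) whose sensitivity never changes, then analyzes the same hit (H) and false-alarm (F) rates two ways: with the classical high-threshold "correction for guessing", which solves H = p + (1 - p)F for a detection probability p, and with $d'$. The function names are hypothetical.

```python
from statistics import NormalDist

STD_NORMAL = NormalDist()  # noise-alone distribution, N(0, 1)


def htt_detection_probability(hit_rate, fa_rate):
    """High-threshold correction for guessing.

    HTT assumes noise never crosses the threshold, so F equals the guessing
    rate g, and H = p + (1 - p) * g. Solving for p gives (H - F) / (1 - F).
    """
    return (hit_rate - fa_rate) / (1 - fa_rate)


def d_prime(hit_rate, fa_rate):
    """SDT sensitivity: z(H) - z(F)."""
    return STD_NORMAL.inv_cdf(hit_rate) - STD_NORMAL.inv_cdf(fa_rate)


# Hypothetical observer with fixed sensitivity (separation = 1.0);
# only the decision criterion k moves.
for k in (0.0, 0.5, 1.0, 1.5):
    h = 1 - STD_NORMAL.cdf(k - 1.0)  # P(signal-plus-noise response > k)
    f = 1 - STD_NORMAL.cdf(k)        # P(noise-alone response > k)
    print(f"k={k}: HTT p={htt_detection_probability(h, f):.3f}  d'={d_prime(h, f):.3f}")
```

In this sketch the high-threshold estimate of "true detection" falls steadily as the criterion rises, as if the threshold itself were fluctuating, while $d'$ stays at 1.0 throughout.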

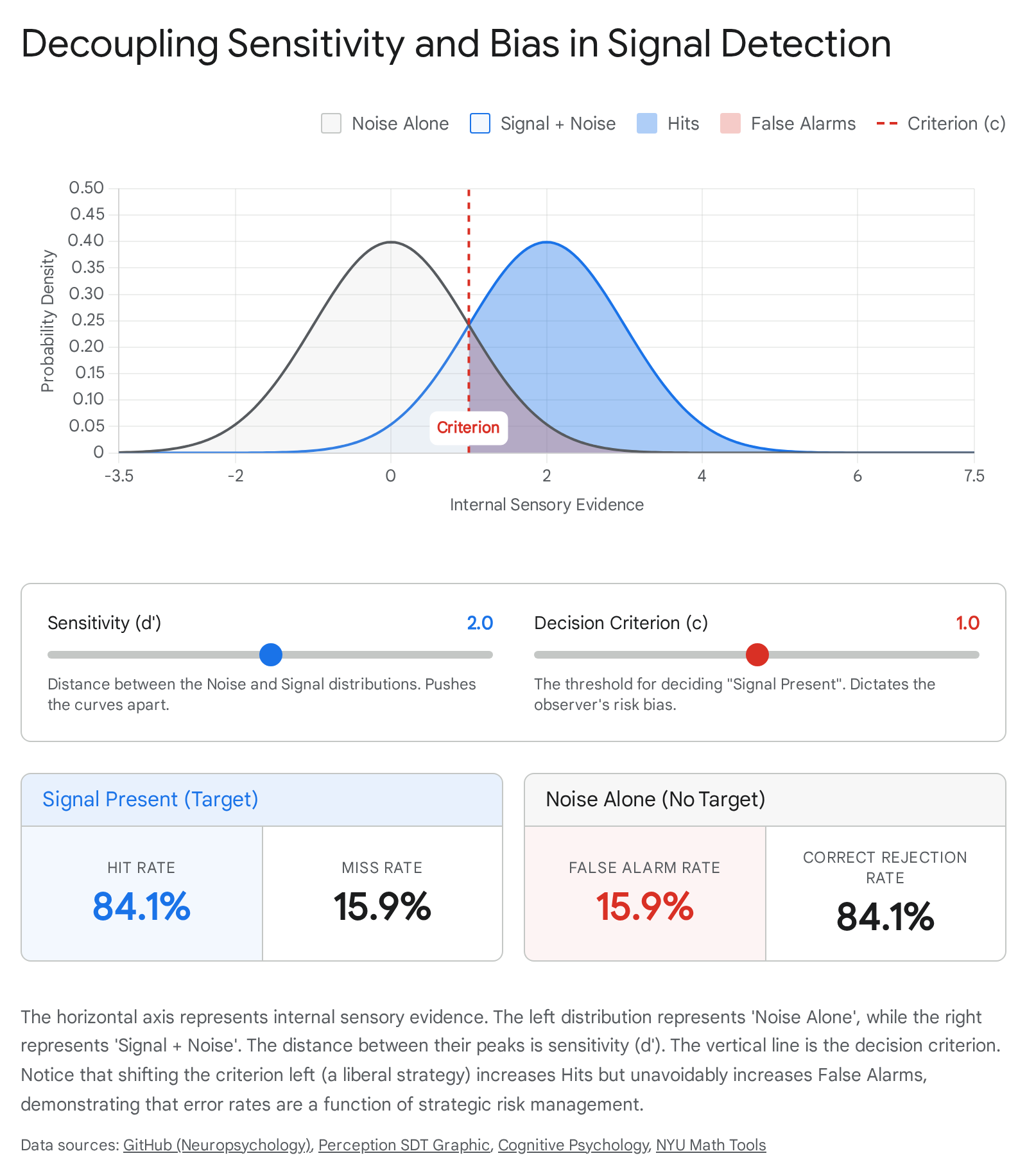

The Mathematical Architecture of SDT: Sensitivity Versus Response Bias

The enduring power of Signal Detection Theory lies in its ability to mathematically decouple the true discriminative capacity of an observer from their strategic willingness to report a signal. In any detection task involving uncertainty, there are four possible outcomes based on the true state of the environment and the observer's subsequent decision 1115.

The 2x2 Matrix of Decision Outcomes

The following matrix maps the fundamental permutations that anchor all SDT analysis, providing the foundational metrics for evaluating everything from human sentries to sophisticated machine learning classifiers 2111516.

| | Signal is Present (True State) | Signal is Absent (True State) |
|---|---|---|
| Observer Responds "Yes" | Hit (Correct Detection): The observer correctly identifies the presence of the target stimulus. | False Alarm (Type I Error): The observer incorrectly claims the target is present, reacting to noise. |
| Observer Responds "No" | Miss (Type II Error): The observer fails to detect a target that is actually present in the environment. | Correct Rejection: The observer accurately determines that no target is present. |
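The matrix translates directly into a tally. A minimal sketch, assuming each trial is recorded as a pair of booleans (was a signal present, did the observer say "Yes"); `tally_outcomes` and `outcome_rates` are hypothetical helper names. The hit rate is computed over signal trials only and the false-alarm rate over noise trials only, which is what lets SDT treat the two columns of the matrix separately.

```python
from collections import Counter


def tally_outcomes(truth, responses):
    """Classify each trial into one of the four SDT outcomes.

    truth[i] is True when a signal was actually present on trial i;
    responses[i] is True when the observer answered "Yes".
    """
    if len(truth) != len(responses):
        raise ValueError("truth and responses must have equal length")
    counts = Counter()
    for present, said_yes in zip(truth, responses):
        if present and said_yes:
            counts["hit"] += 1
        elif present:
            counts["miss"] += 1
        elif said_yes:
            counts["false_alarm"] += 1
        else:
            counts["correct_rejection"] += 1
    return counts


def outcome_rates(counts):
    """Hit rate over signal trials, false-alarm rate over noise trials."""
    signal_trials = counts["hit"] + counts["miss"]
    noise_trials = counts["false_alarm"] + counts["correct_rejection"]
    return counts["hit"] / signal_trials, counts["false_alarm"] / noise_trials


truth = [True, True, True, True, False, False, False, False]
responses = [True, True, True, False, True, False, False, False]
print(outcome_rates(tally_outcomes(truth, responses)))  # (0.75, 0.25)
```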

Traditional metrics, such as overall diagnostic accuracy, confound performance because they fail to separate the observer's inherent sensory capabilities from their behavioral strategies 17. SDT therefore recommends measuring sensitivity and bias independently rather than relying on accuracy alone, because many different combinations of sensitivity and bias can produce the same overall accuracy score 17.
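A worked example makes the confound visible. The sketch assumes two hypothetical observers tested with signals on half the trials and scores them with the $d'$ and criterion $c$ indices defined in the following subsections, computed under the standard equal-variance Gaussian assumption. Both reach 80% accuracy, yet observer B is both more sensitive and more liberal than observer A.

```python
from statistics import NormalDist

z = NormalDist().inv_cdf  # inverse of the standard normal CDF


def accuracy(hit_rate, fa_rate, p_signal=0.5):
    """Proportion correct: hits on signal trials plus correct rejections on noise trials."""
    return p_signal * hit_rate + (1 - p_signal) * (1 - fa_rate)


def d_prime(hit_rate, fa_rate):
    return z(hit_rate) - z(fa_rate)


def criterion_c(hit_rate, fa_rate):
    """Negative values indicate a liberal bias, positive values a conservative one."""
    return -(z(hit_rate) + z(fa_rate)) / 2


# Hypothetical observers: (hit rate, false-alarm rate)
observers = {"A": (0.80, 0.20), "B": (0.95, 0.35)}
for name, (h, f) in observers.items():
    print(f"{name}: accuracy={accuracy(h, f):.2f}  d'={d_prime(h, f):.2f}  c={criterion_c(h, f):+.2f}")
```

Observer A is unbiased ($c \approx 0$) with $d' \approx 1.68$; observer B has $d' \approx 2.03$ and $c \approx -0.63$. Accuracy alone cannot tell them apart.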

Under SDT, performance is evaluated using independent, orthogonal metrics 1218.

Sensitivity ($d'$ and $A'$)

Sensitivity, most commonly denoted as $d'$ (d-prime), quantifies the observer's fundamental ability to discriminate the "signal-plus-noise" distribution from the "noise-alone" distribution 41219. Mathematically, $d'$ represents the standardized distance between the means of these two overlapping probability distributions 121419. It is calculated as the difference between the z-transformed hit rate and the z-transformed false alarm rate, $d' = z(\text{Hit Rate}) - z(\text{False Alarm Rate})$, where $z$ is the inverse of the standard normal cumulative distribution function 111920. A higher $d'$ indicates greater discriminability and less overlap between the distributions, reflecting a superior diagnostic capacity, a stronger physical signal, or a less noisy environment 1921.
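In practice, $d'$ is computed from trial counts, and one detail matters: a perfect hit rate (1.0) or a zero false-alarm rate has no finite z-score. The sketch below uses Python's `statistics.NormalDist.inv_cdf` for $z$ and, as an assumption, applies the log-linear correction (add 0.5 to the hit and false-alarm counts and 1 to each trial total), which is one of several corrections in common use; the function name is hypothetical.

```python
from statistics import NormalDist

z = NormalDist().inv_cdf  # raises StatisticsError for p <= 0 or p >= 1


def d_prime_from_counts(hits, misses, false_alarms, correct_rejections, correction=True):
    """d' = z(hit rate) - z(false-alarm rate).

    With correction=True, a log-linear adjustment keeps both rates strictly
    between 0 and 1 so the z-transform stays finite.
    """
    adj = 0.5 if correction else 0.0
    hit_rate = (hits + adj) / (hits + misses + 2 * adj)
    fa_rate = (false_alarms + adj) / (false_alarms + correct_rejections + 2 * adj)
    return z(hit_rate) - z(fa_rate)


print(round(d_prime_from_counts(8, 2, 2, 8, correction=False), 3))  # z(0.8) - z(0.2) -> 1.683
print(round(d_prime_from_counts(20, 0, 2, 18), 3))  # perfect hits: finite only with the correction
```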

When the assumption of equal variance between the signal and noise distributions cannot be met, researchers often use non-parametric estimates of discriminability such as $A'$ (A-prime), where a value of 1.0 indicates perfect discriminability and 0.5 indicates chance performance 111922. In tasks like X-ray visual inspection, indices such as $d_a$, which accommodates unequal variances through the slope of the z-transformed ROC, are increasingly recommended to avoid systematically overestimating true detection capabilities 2223.
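Both alternatives can be computed from the same hit ($H$) and false-alarm ($F$) rates. The sketch below uses the commonly cited computing formula for $A'$ and the standard expression $d_a = \sqrt{2/(1+s^2)}\,[z(H) - s\,z(F)]$, where $s$ is the slope of the z-transformed ROC. In practice $s$ is estimated from rating-scale data; here it is simply passed in as an assumed value, and the function names are hypothetical.

```python
from math import sqrt
from statistics import NormalDist

z = NormalDist().inv_cdf


def a_prime(h, f):
    """Non-parametric sensitivity A': 0.5 = chance, 1.0 = perfect discrimination."""
    if h == f:
        return 0.5
    if h > f:
        return 0.5 + ((h - f) * (1 + h - f)) / (4 * h * (1 - f))
    # Below-chance performance (F > H) mirrors the formula.
    return 0.5 - ((f - h) * (1 + f - h)) / (4 * f * (1 - h))


def d_a(h, f, slope):
    """Unequal-variance sensitivity; `slope` is the zROC slope s (assumed known here).

    With slope == 1 (equal variances) this reduces to d' = z(H) - z(F).
    """
    return sqrt(2 / (1 + slope ** 2)) * (z(h) - slope * z(f))


print(round(a_prime(0.80, 0.20), 3))         # 0.875
print(round(d_a(0.80, 0.20, slope=1.0), 3))  # equals d' here
print(round(d_a(0.80, 0.20, slope=0.8), 3))  # hypothetical slope below 1
```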

Response Bias and Decision Criterion ($\beta$ and $c$)

While sensitivity metrics measure ability, the decision criterion measures strategy. The criterion reflects how much evidence the observer requires before being willing to endorse a "Yes" decision 419. This is commonly quantified using either $\beta$ (the ratio of the signal and noise probability density functions at the criterion point) or $c$ (the distance, in standard deviation units, between the criterion and the point midway between the two distribution means, which coincides with their intersection when variances are equal) 111924. Non-parametric estimates of bias such as $B''_D$ are also used to represent liberal or conservative tendencies without assuming normal distributions 1119.
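The bias indices can be sketched from the same two rates. Under the equal-variance Gaussian assumption, $\beta$ is the ratio of the standard normal densities at $z(H)$ and $z(F)$, $c = -[z(H) + z(F)]/2$, and the two are linked by $\ln \beta = c \cdot d'$. The non-parametric index follows Donaldson's $B''_D$ formula as it is commonly reported (worth checking against the primary source). Positive values of $c$, $\ln \beta$, and $B''_D$ all indicate a conservative observer; the function name and example rates are hypothetical.

```python
from statistics import NormalDist

N = NormalDist()


def bias_indices(h, f):
    """Return (beta, c, B''_D) for hit rate h and false-alarm rate f."""
    zh, zf = N.inv_cdf(h), N.inv_cdf(f)
    beta = N.pdf(zh) / N.pdf(zf)  # likelihood ratio (signal density / noise density) at the criterion
    c = -(zh + zf) / 2            # criterion distance from the midpoint between the means, in SD units
    b2d = ((1 - h) * (1 - f) - h * f) / ((1 - h) * (1 - f) + h * f)  # non-parametric, ranges -1..+1
    return beta, c, b2d


for label, (h, f) in {"conservative": (0.50, 0.10), "liberal": (0.90, 0.50)}.items():
    beta, c, b2d = bias_indices(h, f)
    print(f"{label}: beta={beta:.2f}  c={c:+.2f}  B''_D={b2d:+.2f}")
```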

A critical insight of SDT is that systematic errors - specifically high rates of False Alarms or Misses - are rarely the result of sensory or systemic failure. Rather, they are driven by strategic risk tolerance based on the operator's environment 101725.

- Liberal Bias (Low Criterion / $\beta < 1$): The observer minimizes Misses but accepts a correspondingly high number of False Alarms. The observer is highly willing to say "Yes" upon minimal sensory evidence 121925.

- Conservative Bias (High Criterion / $\beta > 1$): The observer minimizes False Alarms but suffers a high rate of Misses. The observer requires overwhelming evidence before committing to a "Yes" decision 121925.

The full range of an observer's operational choices can be mapped using a Receiver Operating Characteristic (ROC) curve, which plots the Hit rate (y-axis) against the False Alarm rate (x-axis) across all possible criterion settings 410. A highly sensitive observer produces an ROC curve that bows sharply toward the upper-left corner of the graph, indicating the ability to achieve a high Hit rate with minimal False Alarms 410. Conversely, a curve hugging the diagonal indicates near-chance performance, where the signal is indistinguishable from the noise 1026.
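An ROC curve can be traced by sweeping the criterion while holding sensitivity fixed. This sketch assumes the equal-variance Gaussian model, for which the area under the curve has the closed form $\Phi(d'/\sqrt{2})$; the trapezoid estimate from the swept points should approach it. Function names are hypothetical.

```python
from statistics import NormalDist

N = NormalDist()


def roc_points(d_prime, criteria):
    """(false-alarm rate, hit rate) pairs; noise ~ N(0, 1), signal-plus-noise ~ N(d_prime, 1)."""
    return [(1 - N.cdf(k), 1 - N.cdf(k - d_prime)) for k in criteria]


def trapezoid_auc(points):
    """Area under the ROC by the trapezoid rule, with the (0, 0) and (1, 1) endpoints added."""
    pts = sorted(points + [(0.0, 0.0), (1.0, 1.0)])
    return sum((x1 - x0) * (y0 + y1) / 2 for (x0, y0), (x1, y1) in zip(pts, pts[1:]))


criteria = [k / 10 for k in range(-60, 61)]  # from very liberal (-6) to very conservative (+6)
for d in (0.0, 1.0, 2.0):
    estimate = trapezoid_auc(roc_points(d, criteria))
    exact = N.cdf(d / 2 ** 0.5)
    print(f"d'={d}: trapezoid AUC={estimate:.3f}, closed form={exact:.3f}")
```

A curve on the diagonal ($d' = 0$) gives an area of 0.5; the more the curve bows toward the upper-left corner, the closer the area gets to 1.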

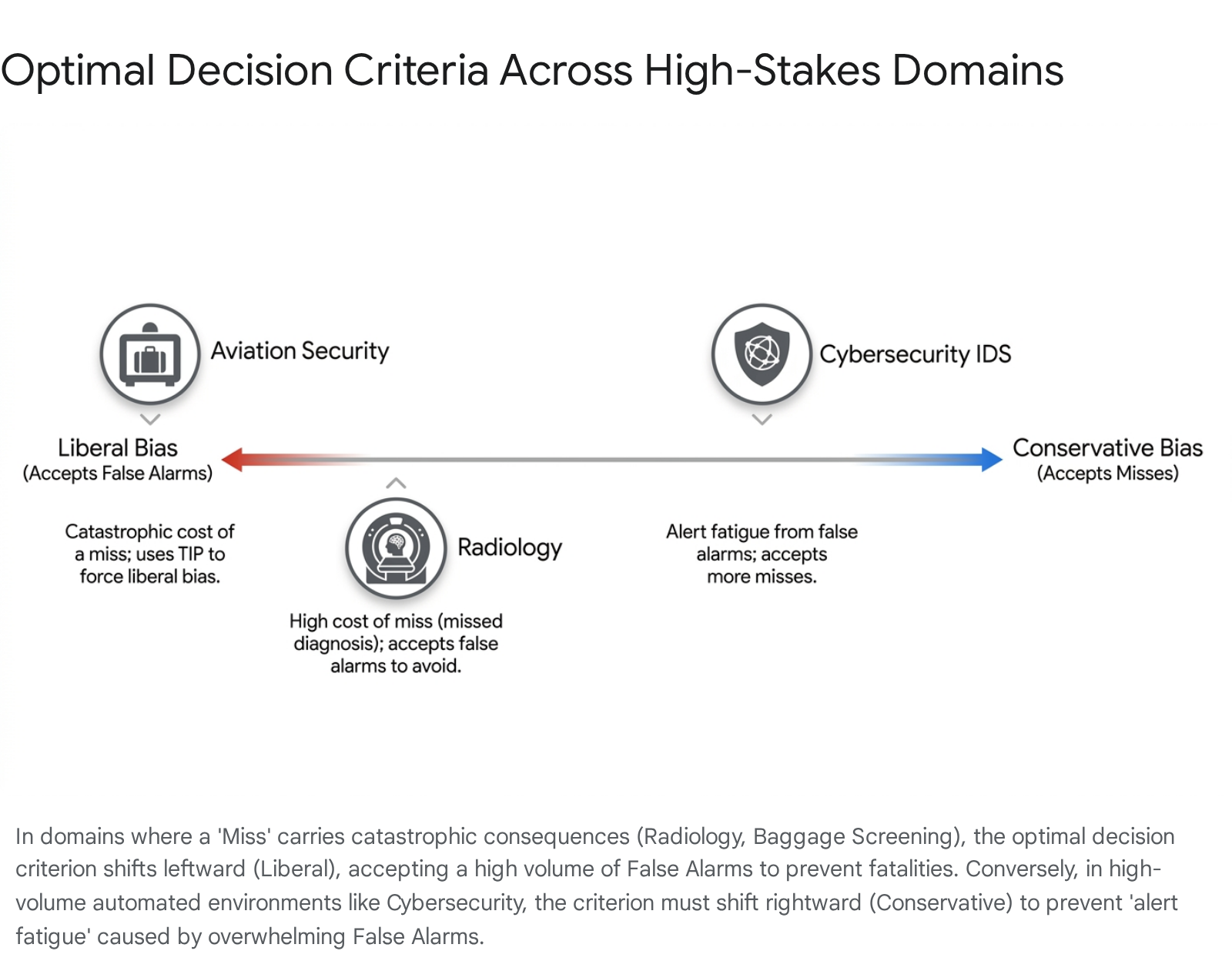

Asymmetrical Costs: Shifting the Optimum Threshold Across High-Stakes Domains

By incorporating the economic concept of utility, SDT becomes a normative model of optimal decision-making rather than merely a descriptive analytical tool 17. In real-world environments, the cost of a False Alarm is rarely equivalent to the cost of a Miss 2527. Furthermore, the prior probability (or base rate) of encountering a true signal is rarely an evenly balanced fifty percent 1725. To maximize expected utility, human operators must dynamically adjust their decision criterion ($\beta$) based on the asymmetrical payoff matrix inherent to their specific operational field 25.
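The classic SDT result for this optimization is that an expected-value-maximizing observer should respond "Yes" whenever the likelihood ratio exceeds $\beta_{\text{opt}} = \frac{P(\text{noise})}{P(\text{signal})} \times \frac{V_{CR} + C_{FA}}{V_{Hit} + C_{Miss}}$, where the $V$ terms are the values of correct outcomes and the $C$ terms the costs of errors, all entered as positive magnitudes. The sketch below computes $\beta_{\text{opt}}$ and, assuming the equal-variance Gaussian model, converts it to the criterion $c$ via $\ln \beta = c \cdot d'$. The payoff numbers in the example are hypothetical.

```python
from math import log


def optimal_beta(p_signal, value_hit, cost_miss, value_cr, cost_fa):
    """Likelihood-ratio criterion that maximizes expected payoff.

    All values and costs are positive magnitudes; p_signal is the base rate.
    """
    prior_odds_term = (1 - p_signal) / p_signal
    payoff_term = (value_cr + cost_fa) / (value_hit + cost_miss)
    return prior_odds_term * payoff_term


def c_from_beta(beta, d_prime):
    """Equal-variance Gaussian model: ln(beta) = c * d', so c = ln(beta) / d'."""
    return log(beta) / d_prime


# Hypothetical payoffs at a 50% base rate: symmetric vs. a Miss ten times costlier.
for cost_miss in (1, 10):
    beta = optimal_beta(0.5, value_hit=0, cost_miss=cost_miss, value_cr=0, cost_fa=1)
    print(f"cost_miss={cost_miss}: beta_opt={beta:.2f}  c_opt={c_from_beta(beta, d_prime=2.0):+.2f}")
```

Rare signals (a large prior-odds term) push $\beta_{\text{opt}}$ upward toward conservatism, while heavy Miss costs pull it down toward liberalism; the domains below differ in how these two forces balance.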

A comparative survey of high-stakes domains - radiology, aviation security, and cybersecurity - illustrates how environmental parameters strictly dictate optimal criterion placement and how human cognition occasionally falters under these constraints.

Radiology and Medical Diagnostics

In medical imaging, radiologists search for low-contrast anomalies (tumors) within highly textured, noisy backgrounds (healthy anatomical tissue). The asymmetrical costs in this domain are stark and uncompromising. A Miss - failing to detect an early-stage malignancy - carries a catastrophic cost, potentially resulting in patient mortality or delayed, more difficult treatment 2528. Conversely, a False Alarm - flagging a benign anomaly as potentially malignant - results in heightened patient anxiety and costly, invasive secondary testing such as biopsies, but rarely leads directly to death 25.

Given this asymmetrical payoff matrix, optimal decision-making in radiology mathematically demands a heavily liberal bias (low criterion) 2526. Physicians are ethically and legally conditioned to accept a higher volume of False Alarms to ensure that the rate of Misses approaches zero 25. However, radiologists face a severe complicating factor recognized in psychophysics as the "prevalence effect" 29. In routine screenings, the actual base rate of significant disease is exceedingly low. The human cognitive system naturally drifts toward a more conservative criterion when signals are rare, leading to an insidious and dangerous increase in Misses 2930. While researchers have proposed mitigating this effect by digitally inserting fictional patients or anomalies into the workflow to artificially raise the signal prevalence, surveys indicate heavy resistance; up to 89% of academic radiologists oppose the practice, primarily citing the unacceptable loss of actual clinical diagnostic time 29.
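The prevalence effect can be illustrated with optimal-criterion arithmetic. The sketch below assumes an equal-variance observer with a hypothetical $d' = 2$ who places the criterion at the expected-value optimum $\beta_{\text{opt}} = \frac{1 - p}{p} \times \text{cost ratio}$, and reports the resulting Miss rate as prevalence $p$ falls. The `cost_ratio` parameter stands for $(V_{CR} + C_{FA})/(V_{Hit} + C_{Miss})$, so values below 1 encode the heavier cost of a Miss described above; all numbers are illustrative. Even this idealized observer drifts conservative as targets become rare unless Miss costs are weighted heavily.

```python
from math import log
from statistics import NormalDist

N = NormalDist()


def miss_rate_at_optimum(prevalence, d_prime, cost_ratio=1.0):
    """Miss rate for an equal-variance observer whose criterion sits at beta_opt.

    cost_ratio = (V_CR + C_FA) / (V_hit + C_miss); 1.0 means symmetric payoffs.
    """
    beta = ((1 - prevalence) / prevalence) * cost_ratio
    k = log(beta) / d_prime + d_prime / 2  # criterion on the noise-distribution axis
    return N.cdf(k - d_prime)              # P(signal-plus-noise response falls below k)


for prevalence in (0.5, 0.1, 0.01):
    symmetric = miss_rate_at_optimum(prevalence, d_prime=2.0)
    miss_costly = miss_rate_at_optimum(prevalence, d_prime=2.0, cost_ratio=0.02)  # Miss 50x costlier
    print(f"prevalence={prevalence}: miss rate {symmetric:.3f} (symmetric), {miss_costly:.3f} (Miss-averse)")
```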

Aviation and Baggage Screening

The cognitive demands of airport X-ray baggage screening share striking psychophysical similarities with radiology, yet the operational constraints and payoff matrices drive completely different systemic adaptations 29. Like the radiologist, the aviation security officer is hunting for rare, catastrophic anomalies (weapons, improvised explosive devices) hidden in visually complex noise (heavily cluttered luggage) 3031. A Miss could result in the downing of a commercial airliner, an event of unimaginable cost, while a False Alarm merely results in a secondary manual search of the passenger's bag or body 2732.

However, unlike the carefully paced environment of radiology, aviation security requires rapid, continuous throughput. The Transportation Security Administration (TSA) relies on Explosive Detection Systems (EDS) that cost nearly \$1 million each and process between 160 and 210 bags per hour 6. Cost-benefit analyses of advanced security layers, such as Advanced Imaging Technology (AIT) full-body scanners, highlight the extreme financial variables at play. Models estimating economic losses from a successful attack at \$2 to \$50 billion suggest that the annual attack probability must exceed 160% to 330% (that is, more than one attack expected per year) to give 90% certainty that the \$1.2 billion annual cost of AIT scanners is economically justified 32.

For the human operator, the extreme rarity of threat items naturally pushes their internal criterion toward an overly conservative response bias, severely compromising security 293033. To counteract this degradation, modern aviation security heavily relies on Threat Image Projection (TIP) systems 3033. TIP systems digitally superimpose fictional threat items (FTIs) - such as guns or explosives - onto the live X-ray feeds of actual passenger bags 2933. By artificially inflating the target prevalence, the system forces the screener's internal criterion back toward a liberal, high-vigilance state, mitigating the prevalence effect 2933. While highly effective, human factors research notes that TIP systems must be carefully calibrated; if FTIs exhibit unrealistic artifacts or are projected onto impossible locations (e.g., floating outside a bag), they fail to properly train the operator's $d'$ sensitivity and instead merely frustrate the screening process 3033.
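The prevalence arithmetic behind TIP can be sketched in a few lines. Assuming, for illustration, that fictional threat images are projected onto a fixed fraction of bags independently of whether a real threat is present, the share of bags containing some target rises, and the base-rate factor $(1 - p)/p$ that pushes the optimal criterion toward conservatism falls accordingly. The base rate and projection rate below are hypothetical, not operational TSA figures.

```python
def effective_prevalence(p_real, p_projected):
    """Share of bags showing at least one target (real or projected).

    Assumes projections are placed independently of whether a real threat is present.
    """
    return 1 - (1 - p_real) * (1 - p_projected)


def prior_odds_term(prevalence):
    """(1 - p) / p: the base-rate factor in beta_opt; larger values push toward conservatism."""
    return (1 - prevalence) / prevalence


p_real = 1e-5       # hypothetical rate of genuine threats per bag
p_projected = 0.02  # hypothetical TIP projection rate
p_eff = effective_prevalence(p_real, p_projected)
print(f"effective prevalence={p_eff:.5f}")
print(f"prior-odds term: {prior_odds_term(p_real):,.0f} without TIP vs {prior_odds_term(p_eff):.1f} with TIP")
```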

| Screening Technology | System Characteristics & SDT Implications |
|---|---|
| Explosive Detection Systems (EDS) | High throughput (160-210 bags/hr), high cost (~\$1M/unit). Operates as primary screening. Relies on TIP to manage human operator response bias toward rare threats 630. |
| Explosive Trace Detection (ETD) | Lower throughput (40-50 bags/hr), lower initial cost (\$45K/unit) but 10x higher labor cost. 99.7% accurate. Acts as secondary screening to resolve False Alarms generated by primary systems 6. |
| Advanced Imaging Technology (AIT) | Millimeter-wave full-body scanners. Requires an estimated annual attack probability above 160% to be considered highly cost-effective under utility models simulating \$2B-\$50B in attack losses 32. |

Cybersecurity and Intrusion Detection

In the realm of cybersecurity and network intrusion detection systems (IDS), the SDT parameters are often inverted by the sheer scale of the data and the nature of the adversary 3435. Unlike radiology or baggage screening, where the human visual cortex is the primary sensor, modern cybersecurity relies heavily on automated algorithms to detect malicious code or network anomalies before a human is ever alerted 3438.

In cybersecurity, the base rate of innocuous network traffic is astronomically high. If an IDS is tuned with a highly liberal criterion to ensure absolutely no zero-day exploits are missed, the system generates an unmanageable cascade of False Alarms 3435. This dynamic results in "alert fatigue," a psychological phenomenon where human analysts, overwhelmed by the noise of false positives, effectively shift their own cognitive criteria to become extremely conservative, ultimately ignoring genuine alerts 3435.
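The arithmetic behind alert fatigue is Bayes' rule. The sketch below computes the share of alerts that are genuine (precision, or positive predictive value) for a hypothetical detector with a 99% hit rate and a 1% false-alarm rate; the detector figures and base rates are illustrative assumptions, not measurements of any real IDS.

```python
def alert_precision(hit_rate, fa_rate, base_rate):
    """P(real intrusion | alert) via Bayes' rule: the share of alerts that are genuine."""
    true_alerts = hit_rate * base_rate
    false_alerts = fa_rate * (1 - base_rate)
    return true_alerts / (true_alerts + false_alerts)


# Hypothetical detector: 99% hit rate, 1% false-alarm rate.
for base_rate in (0.1, 0.001, 0.00001):
    print(f"base rate {base_rate}: {alert_precision(0.99, 0.01, base_rate):.4f} of alerts are genuine")
```

At a base rate of one intrusion per 100,000 events, fewer than one alert in a thousand is genuine in this sketch, even though the detector looks excellent on hit and false-alarm rates.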

Recent peer-reviewed research (2024-2025) emphasizes that rather than simply shifting the decision criterion, systems must inherently increase their $d'$ sensitivity through synergistic, hybrid ensembles 343836. For instance, modern Adaptive Intrusion Detection Systems utilizing deep learning have managed to push precision to 90% and recall to 85% by refining how the algorithm separates signal from noise 34. In the context of phishing detection, researchers have demonstrated that integrating subtle behavioral deception cues - such as right-click blocking, mouse-over effects, and hidden iframes - alongside traditional structural features can yield a macro F1 score of 97% 36. By increasing the algorithmic $d'$, these systems can operate at a more conservative criterion (reducing alert fatigue) without sacrificing the critical Miss rate 3436.
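The metrics in this paragraph map onto SDT quantities: recall is the hit rate, precision is the share of alerts that are genuine (which, unlike $d'$, depends on the base rate), and F1 is their harmonic mean. The sketch below, with hypothetical function names, shows the arithmetic for the 90% precision and 85% recall figures cited above; a "macro" F1 averages per-class F1 scores without weighting by class size.

```python
def f1(precision, recall):
    """Harmonic mean of precision and recall (0.0 if both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def macro_f1(per_class):
    """Unweighted mean of per-class F1; per_class maps a class name to (precision, recall)."""
    scores = [f1(p, r) for p, r in per_class.values()]
    return sum(scores) / len(scores)


print(round(f1(0.90, 0.85), 3))  # 0.874
```

Because precision depends on the base rate, F1 is not a pure sensitivity measure in the SDT sense: two systems with the same $d'$ can report different F1 scores on datasets with different class balance.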

The Algorithmic Frontier: SDT in Modern AI Applications (2023+)

As artificial intelligence matures from discrete classifiers to generative models and autonomous agents, SDT is increasingly deployed not just to model human cognition, but to evaluate the sophisticated sociotechnical ecosystems where humans and algorithms interact 374142. In the past several years (2023 - 2026), human factors research has prioritized SDT to decode the complexities of algorithmic content moderation, deepfake detection, and the phenomenological feedback loops of human-AI trust and cognitive bias 3738444539.

Algorithmic Content Moderation: The Free Speech vs. Safety Trade-off

Social media platforms are tasked with filtering billions of pieces of user-generated content daily, operating at a scale that necessitates algorithmic intervention 54440. The algorithms deployed to detect hate speech, cyberbullying, and synthetic media are, in SDT terms, threshold-based classifiers: they rely on vast datasets to model signal and noise, then flag content whose score exceeds a decision criterion 3848. When platform engineers design these systems, they must tune that algorithmic criterion to balance fiercely opposing public interests.

Setting a highly sensitive, liberal criterion effectively purges the platform of toxic content, minimizing the Misses that lead to user psychological harm, radicalization, and brand degradation 444548. However, algorithms notoriously struggle with nuance, context, and human intent - for instance, failing to distinguish between genuine hate speech and the reclaiming of a slur by a marginalized community 3848. A liberal criterion inevitably results in a high volume of False Alarms - benign, legitimate speech that is unjustly removed or suppressed 545. Under the regulatory framework of the European Digital Services Act (DSA) and broader considerations of democratic discourse, these False Alarms are recognized not merely as technical glitches, but as systematic infringements on freedom of expression and digital rights 54142.
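Tuning an algorithmic criterion on labeled data can be sketched as a threshold sweep. The example assumes a hypothetical classifier that assigns each post a violation score and removes posts scoring at or above the threshold, and it weighs wrongful removals (False Alarms) against missed violations (Misses) with illustrative costs; the scores and labels are made up for the demonstration.

```python
def moderation_errors(scores, is_violation, threshold):
    """Return (wrongful removals, missed violations) when posts with score >= threshold are removed."""
    false_alarms = sum(1 for s, v in zip(scores, is_violation) if s >= threshold and not v)
    misses = sum(1 for s, v in zip(scores, is_violation) if s < threshold and v)
    return false_alarms, misses


def best_threshold(scores, is_violation, cost_false_alarm, cost_miss):
    """Threshold minimizing total weighted error cost; infinity means "remove nothing"."""
    candidates = sorted(set(scores)) + [float("inf")]

    def total_cost(threshold):
        false_alarms, misses = moderation_errors(scores, is_violation, threshold)
        return cost_false_alarm * false_alarms + cost_miss * misses

    return min(candidates, key=total_cost)


# Hypothetical scored posts (True = actually violates policy).
scores = [0.05, 0.20, 0.35, 0.40, 0.55, 0.60, 0.70, 0.90]
is_violation = [False, False, True, False, False, True, True, True]
print(best_threshold(scores, is_violation, cost_false_alarm=1, cost_miss=1))  # 0.6
print(best_threshold(scores, is_violation, cost_false_alarm=1, cost_miss=5))  # 0.35: more liberal
```

Raising the cost of a Miss moves the chosen threshold down, which removes more violations at the price of more wrongful removals, the same trade-off the paragraph describes.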

The inconsistency in how platforms place their criteria is glaring. During the 2024 U.S. Presidential election, an automated evaluation of Text-to-Image (T2I) systems designed to prevent political deepfakes revealed that prominent platforms took fundamentally different approaches to moderation 43. Some exhibited little to no consistency in blocking behavior, while others, like Stability AI, allowed almost all prompts featuring political figures until implementing a sudden, extreme criterion shift just two weeks before the election 43.

Conversely, setting a conservative criterion protects free speech by minimizing False Alarms but allows dangerous misinformation and abusive content to proliferate 3840. The psychological toll of this moderation structure is profound. Recent studies highlight that content moderators - the humans tasked with manually reviewing the algorithmic outputs and edge cases - suffer severe secondary trauma 4445. When algorithmic sensitivity is miscalibrated, these workers are forced to view massive volumes of borderline or explicitly violent content. This regular exposure to abusive material leads to conditions such as PTSD and induces confirmation bias, fundamentally skewing the human moderators' own internal SDT criteria toward negative interpretations of ambiguous stimuli 45. High-profile incidents, such as the widespread circulation of deepfake pornography targeting South Korean actress Shin Se-kyung in 2024, underscore the psychological devastation caused when platforms under-enforce policies, ultimately driving sweeping legal reforms regarding AI-generated content 45.

To mitigate these issues, researchers advocate for "friction-in-design" and hybrid approaches that blend algorithmic scale with the "wisdom of the crowd" - utilizing user reports, community feedback, and contextual retrieval-augmented generation (RAG) to ensure the system analyzes not just the raw text, but the intent and history of the user 3844.

Human-AI Interaction and Bias Amplification

A critical emerging area of SDT research involves evaluating human trust in large language models (LLMs) and predictive AI systems deployed in high-consequence environments 424546. When a human operator utilizes an AI decision-support system (DSS) - whether a military commander analyzing automated target recognition on a digitized battlefield or a clinician using diagnostic AI - the human's sensitivity ($d'$) becomes inextricably linked to the machine's outputs 42.

Recent experimental data demonstrate a deeply troubling phenomenon: human-AI feedback loops actively amplify inherent human biases 413947. Because AI systems possess vast computational resources, their internal judgments tend to be far less noisy than human judgments once trained 47. This high signal-to-noise ratio renders the AI highly persuasive to the human operator 4547.

However, when an AI system exhibits a subtle bias - perhaps due to historical representational bias in the training data, or an optimization parameter that skewed the algorithm's criterion - human operators rapidly adopt and amplify this bias 413947. The human operator calibrates their own internal decision criteria to match the machine, assuming the machine possesses superior discriminability 4147. This "automation bias" highlights a critical vulnerability in human-AI teaming. Human oversight, which is often legally mandated as a safeguard against algorithmic failure, routinely degrades because operators defer to the algorithm's seemingly superior performance, effectively collapsing their independent standard of review 374142. Interestingly, research suggests that specialized training can mitigate this; for instance, studies involving West Point cadets utilizing AI decision-support tools indicated they may be more accurately calibrated to resist automation bias than civilian counterparts, actively engaging in independent verification of algorithmic outputs 42. Furthermore, in certain human resources contexts, individuals with specific AI training still maintain a higher degree of trust in human managers over algorithmic recommendations, indicating that trust dynamics remain highly context-dependent 48.

Cross-Cultural Lenses and Regional Risk Tolerance

While the mathematics of Signal Detection Theory are structurally universal, the parameters of utility - specifically how a society weights the cost of a False Alarm against the cost of a Miss - are deeply embedded in cultural values and institutional histories. Cognitive theories of decision-making have increasingly incorporated cross-cultural dimensions, revealing that baseline decision criteria and response biases are not monolithic globally 49.

Cultural Response Bias: Individualism vs. Collectivism

Research spanning several decades, with substantial updates through 2026, indicates significant, systematic variations in response bias correlating with cultural indices of individualism and collectivism 495059. When faced with ambiguity or uncertainty in psychophysical tasks or attitudinal surveys, individuals from highly individualistic cultures (e.g., North America, Western Europe) tend to exhibit an extreme response bias 5161. In SDT terms, they are prone to adopting distinct, firm criteria - either highly liberal or highly conservative - reflecting cultural values that prioritize assertiveness, decisiveness, and individual agency 4952.

Conversely, respondents from collectivist cultures - particularly those with historical ties to Confucianism in East Asia - frequently exhibit a "midpoint bias" or cautious response style 5163. Confronted with the exact same signal-to-noise uncertainty, these operators default to a highly guarded criterion, delaying definitive "Yes" or "No" decisions in favor of neutrality 51. This reflects deep-seated cultural norms emphasizing modesty, group harmony, and the avoidance of disruptive errors that could impact social cohesion 5152.

| Cultural Context | Prevailing SDT Response Bias Characteristics | Underlying Cultural Drivers |
|---|---|---|
| Highly Individualistic (e.g., North America, Sweden) | Extreme Response Bias: Tendency to set firm, polarizing criteria at the ends of a scale. Rapid commitment to a "Yes" or "No" decision under uncertainty 5051. | Values prioritizing independence, assertiveness, uniqueness, and personal agency over group consensus 4952. |
| Highly Collectivistic (e.g., Confucian Asia, Global South) | Midpoint / Cautious Bias: Tendency to set a guarded, central criterion. Reluctance to claim extreme certainty, resulting in neutral responses 505163. | Norms emphasizing modesty, duty to the in-group, maintaining social harmony, and avoiding disruptive individual errors 5152. |

These cultural dynamics heavily influence cognitive processing styles. Comparative experimental studies utilizing cartographic stimuli have shown that Central European populations tend to utilize an analytic cognitive style, breaking down visual noise into discrete parts, whereas East Asian populations tend to categorize the same spatial information holistically 53. When evaluating global data, researchers utilizing SDT must mathematically extract these cultural variations in response bias ($c$) to uncover the true underlying sensitivity ($d'$) of the populations 23596163. Techniques such as the "Over-Claiming Technique," which utilizes SDT formulas to index a respondent's tendency to claim familiarity with non-existent foils, are critical for separating actual knowledge (sensitivity) from culturally driven self-enhancement or modesty (bias) 54. It is also crucial to note that foundational global cultural mappings, such as Geert Hofstede's 1980 indices, have been heavily criticized in modern literature for relying on outdated IBM employee surveys that severely overestimated Western individualism and Eastern collectivism, skewing the understanding of these biases for decades 50.
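The logic of separating knowledge from claiming tendency can be sketched with the usual SDT indices. In this illustration, claiming familiarity with a real item counts as a hit and claiming a non-existent foil counts as a false alarm. The respondents, item counts, and the log-linear correction (add 0.5 to each claim count and 1 to each item total) are assumptions for the example, not the scoring rules of any particular over-claiming questionnaire.

```python
from statistics import NormalDist

z = NormalDist().inv_cdf


def overclaiming_indices(real_claimed, real_total, foils_claimed, foils_total):
    """Return (sensitivity d', bias c) from familiarity claims on real items and foils.

    The log-linear correction keeps both rates strictly between 0 and 1.
    """
    hit_rate = (real_claimed + 0.5) / (real_total + 1)
    fa_rate = (foils_claimed + 0.5) / (foils_total + 1)
    zh, zf = z(hit_rate), z(fa_rate)
    return zh - zf, -(zh + zf) / 2


# Hypothetical respondents rating 45 real items and 15 foils.
modest = overclaiming_indices(30, 45, 1, 15)
self_enhancing = overclaiming_indices(40, 45, 6, 15)
print(f"modest: d'={modest[0]:.2f}, c={modest[1]:+.2f}")
print(f"self-enhancing: d'={self_enhancing[0]:.2f}, c={self_enhancing[1]:+.2f}")
```

In this sketch a positive $c$ marks reluctance to claim familiarity (modesty), a negative $c$ a readiness to claim it (self-enhancement), and $d'$ is read separately as knowledge.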

Jurisprudential SDT: "Beyond a Reasonable Doubt"

The intersection of SDT and legal theory provides a profound demonstration of how regional institutional policies define the acceptable threshold for error 66655. In Western criminal justice systems, the standard of proof "Beyond a Reasonable Doubt" (BARD) represents the ultimate, legally mandated decision criterion 6.

Translated into the language of SDT, a criminal trial is a complex signal detection task where the "Signal" is the defendant's guilt and the "Noise" is their innocence. A False Alarm equates to a wrongful conviction, while a Miss equates to an acquittal of a guilty party. The BARD standard explicitly dictates an exceedingly high, conservative decision criterion 655. It operates on the philosophy of the Blackstone Ratio - the foundational legal ethos that it is better that ten guilty persons escape (ten Misses) than one innocent suffer (one False Alarm).
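Read through SDT's expected-utility criterion, in which the optimal likelihood-ratio threshold equals the prior odds of innocence multiplied by the ratio of error costs, the Blackstone Ratio fixes the cost term. As a simplified illustration, assume equal prior probabilities of guilt and innocence, ignore the values of correct verdicts, set the cost of a wrongful conviction to ten times that of a wrongful acquittal, and use the equal-variance Gaussian model to express the criterion as $c$:

$$\beta_{\text{opt}} = \frac{P(\text{innocent})}{P(\text{guilty})} \times \frac{C_{\text{FA}}}{C_{\text{Miss}}} = 1 \times \frac{10}{1} = 10, \qquad c_{\text{opt}} = \frac{\ln \beta_{\text{opt}}}{d'} = \frac{\ln 10}{d'} \approx \frac{2.30}{d'}$$

Under these assumptions, a factfinder should convict only when the evidence is at least ten times more likely under guilt than under innocence, and the less discriminating the evidence (smaller $d'$), the further above the neutral point the criterion must sit. The equal-prior assumption is purely illustrative.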

However, comparative legal studies reveal that the interpretation and execution of this criterion fluctuate wildly based on regional frameworks and institutional design 6655. The Anglo-American adversarial system relies heavily on lay juries, resulting in a heterogeneous and often flawed application of the criterion. Mock jury studies consistently show that because the term "reasonable doubt" is rarely quantified or defined by the court, individual jurors fail to distinguish it from lower standards like the preponderance of evidence, applying vastly different internal thresholds based on their own personal risk tolerance 655.

In contrast, continental European civil law systems (inquisitorial systems) rely on professional judges and the concept of intime conviction (an inner, deep-seated, personal conviction) 6655. While structurally analogous to BARD, the institutional requirements differ. For example, in systems like Bosnia and Herzegovina, the requirement to produce extensively reasoned, written judgments aligned with the jurisprudence of the European Court of Human Rights forces a highly formalized, standardized articulation of the evidentiary threshold 666. This institutional requirement to explicitly justify the decision limits unfettered judicial discretion and arguably stabilizes the decision criterion across the judicial system far more effectively than the opaque deliberations of a common law jury 666.

Conclusion

More than seven decades after Tanner and Swets relegated the absolute sensory threshold to historical obsolescence, Signal Detection Theory remains an unrivaled framework for deciphering complex decision-making architectures. By insisting on the rigorous mathematical separation of discriminative capacity ($d'$) from strategic risk tolerance ($\beta$ and $c$), SDT forces a vital realization: errors in high-stakes environments are rarely mere accidents of biology or flawed computer code. Rather, they are the unavoidable statistical byproducts of operating under uncertainty, dictated by the asymmetrical costs defined by the operational domain.

As society transitions further into an era of algorithmic governance and autonomous systems, the principles of SDT are more urgent than ever. Whether calibrating an X-ray scanner to intercept an explosive threat, tuning a multimodal large language model to moderate global speech without violating civil liberties, mitigating the automation bias in human-AI military teaming, or understanding how cultural humility alters diagnostic data, the fundamental challenge remains structurally identical. The optimization of these systems does not stem simply from increasing raw sensitivity or indiscriminately upgrading sensor technology. True optimization requires a profound, ethically grounded comprehension of the payoff matrix. Future engineering, legal reform, and policymaking must acknowledge that the placement of a decision criterion is, ultimately, a reflection of societal values - a quantifiable, mathematical measure of what a culture is willing to risk, and what it strives above all else to protect.