Self-organized criticality in complex systems

The study of complex systems is fundamentally concerned with understanding how large numbers of interacting elements spontaneously organize into macroscopic patterns without centralized coordination. Over the past four decades, the paradigm of self-organized criticality has emerged as a central framework for explaining the ubiquitous presence of scale-invariant phenomena, fractal geometries, and power-law distributions across both the natural and social sciences. First proposed in 1987 by physicists Per Bak, Chao Tang, and Kurt Wiesenfeld, the theory suggests that many dynamic systems naturally evolve toward a critical state - poised precisely at the boundary between order and chaos - without the need for external parameter tuning 12.

Initially demonstrated through the abstract dynamics of theoretical sandpile models, the self-organized criticality framework has since been applied to a diverse array of disciplines. Researchers have sought to explain phenomena ranging from the frequency-magnitude distribution of earthquakes and the firing patterns of mammalian neural networks to the size distributions of wildland fires, the sudden collapse of financial markets, and the emergent reasoning capabilities of large language models 3456. However, as empirical data have grown more precise and theoretical physics has advanced, the original formulation of self-organized criticality has faced rigorous scrutiny. Alternative mechanisms, such as the sweeping of an instability and the presence of endogenous "Dragon King" outliers, have challenged the notion that all heavy-tailed distributions arise from true self-organization 47. This report analyzes the theoretical foundations of self-organized criticality and evaluates its applicability, successes, and limitations across geophysics, neuroscience, ecology, financial economics, and artificial intelligence.

Theoretical Foundations of Critical Phase Transitions

To understand the impact and the ongoing controversy surrounding self-organized criticality, it is necessary to examine the physical and statistical principles that define phase transitions, as well as the alternative mathematical frameworks that generate similar macroscopic signatures. The distinction between a system that is manually tuned to a critical point and one that arrives there spontaneously is the crux of the paradigm.

Classic Phase Transitions and the Sandpile Paradigm

In statistical mechanics, continuous (second-order) phase transitions occur when a system is driven precisely to a critical point via the careful tuning of an external control parameter 2. A classic example is the adjustment of temperature in a ferromagnetic material; at a specific critical temperature, the system transitions between an ordered magnetic state and a disordered non-magnetic state. At this exact critical point, the correlation length between interacting elements diverges to infinity, meaning that local fluctuations can propagate across the entire thermodynamic system 8. The macroscopic observables of the system at this boundary state do not possess a characteristic scale. Instead, they are described by power-law distributions, which appear as straight lines on logarithmic plots 89.

The defining innovation of the Bak-Tang-Wiesenfeld paradigm was the proposition that certain open, dissipative, and non-equilibrium systems do not require an external experimenter to tune them to this critical point. Instead, the intrinsic dynamics of the system naturally push it toward a statistically stationary state of marginal stability 12. This mechanism relies on the interplay between a slow, continuous driving force and fast, threshold-dependent dissipation. In the classic abelian sandpile model, grains of sand are added slowly (the driving force) until the local slope exceeds a critical threshold, triggering an avalanche (the fast dissipation) that redistributes the sand to neighboring sites 210. Over time, the system self-organizes to an absorbing-active continuous phase transition boundary where avalanches of all possible sizes occur, and the probability distribution of these avalanche sizes strictly follows a power law 211.
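The slow-drive, fast-dissipation loop described above can be sketched in a few lines. The following is a minimal illustration that assumes the common height-based variant of the sandpile (a site topples when it holds four or more grains) on a small square lattice with open edges. The function names, lattice size, and grain count are illustrative choices, not values taken from the original papers.

```python
import random

NEIGHBORS = ((1, 0), (-1, 0), (0, 1), (0, -1))

def add_grain(grid, r, c, threshold=4):
    """Drop one grain at (r, c), relax the pile, and return the avalanche size."""
    size = len(grid)
    grid[r][c] += 1  # slow drive: a single grain is added
    stack = [(r, c)]
    topplings = 0
    while stack:
        i, j = stack.pop()
        if grid[i][j] < threshold:
            continue  # site is already stable
        grid[i][j] -= threshold  # fast dissipation: the site topples
        topplings += 1
        stack.append((i, j))  # re-check in case the site is still unstable
        for di, dj in NEIGHBORS:
            ni, nj = i + di, j + dj
            if 0 <= ni < size and 0 <= nj < size:
                grid[ni][nj] += 1
                stack.append((ni, nj))
            # grains pushed past the edge leave the system (open boundary)
    return topplings

def run_sandpile(size=20, grains=5000, seed=0):
    """Slowly drive an initially empty pile; return the grid and avalanche sizes."""
    rng = random.Random(seed)
    grid = [[0] * size for _ in range(size)]
    sizes = [add_grain(grid, rng.randrange(size), rng.randrange(size))
             for _ in range(grains)]
    return grid, sizes

if __name__ == "__main__":
    grid, sizes = run_sandpile()
    nonzero = [s for s in sizes if s > 0]
    print("largest avalanche:", max(sizes))
    print("mean nonzero avalanche size:", sum(nonzero) / len(nonzero))
```

No parameter is tuned toward a critical value here: the pile fills until additions and boundary losses balance, after which avalanches of widely varying size appear. A lattice this small only hints at the power-law regime, so exponents should not be read off from it.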

The observation of power laws - where the frequency of an event scales as a negative power of its size - became the primary empirical fingerprint used to identify self-organized criticality in the wild. If a system's event-size distribution formed a straight line on a log-log plot, it was frequently assumed to be self-organized critical 813. However, this assumption has been heavily contested by subsequent mathematical research, which demonstrated that power laws can arise from a multitude of non-critical mechanisms, including multiplicative stochastic processes, random walks, and preferential attachment dynamics 81213.
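Why a straight line alone is weak evidence can be seen by writing two candidate distributions in logarithmic coordinates. The sketch below introduces symbols that do not appear in the original text ($\tau$ for the power-law exponent, $\mu$ and $\sigma_{\ln}$ for the log-normal parameters) and is meant as an illustration rather than a proof.

$$
P(s) \propto s^{-\tau} \;\Longrightarrow\; \ln P(s) = -\tau \ln s + \text{const}
$$

$$
P(s) = \frac{1}{s\,\sigma_{\ln}\sqrt{2\pi}} \exp\!\left[-\frac{(\ln s - \mu)^2}{2\sigma_{\ln}^2}\right] \;\Longrightarrow\; \ln P(s) = -\frac{(\ln s)^2}{2\sigma_{\ln}^2} + \left(\frac{\mu}{\sigma_{\ln}^2} - 1\right)\ln s + \text{const}
$$

The log-normal curve is a parabola in $\ln s$ with second derivative $-1/\sigma_{\ln}^2$. When $\sigma_{\ln}$ is large, the curvature is small and the curve can look nearly straight over several decades, which is one way a non-critical multiplicative process can pass a visual log-log test.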

Sweeping of an Instability

One of the most robust physical critiques of the classic self-organized criticality hypothesis comes from the concept of the "sweeping of an instability," formalized extensively by physicist Didier Sornette and colleagues 412. This framework posits that many numerical and experimental systems that appear to exhibit self-organized criticality are actually operating under an entirely different mechanism. Rather than functioning persistently in a marginally stable condition regulated by a continuous feedback loop, these systems undergo a slow, continuous variation - or sweeping - of a control parameter across a bifurcation point or global instability 414.

In the sweeping of an instability, the observation of power-law distributions is traced back to the cumulative measurement of fluctuations that diverge as the system slowly approaches a non-critical instability, such as a first-order phase transition 4. Because the control parameter is constantly moving rather than hovering perfectly at an equilibrium point, the system integrates the precursory fluctuations over time. This temporal aggregation results in a distribution that mimics true self-organized criticality 414. This mechanism explains why power-law exponents often change dynamically as a system approaches total failure. In material rupture, acoustic emissions, and the Barkhausen effect, the critical exponents vary as the load increases, a phenomenon that cannot be easily explained by the strictly scale-invariant, constant exponents demanded by classic self-organized criticality 412.
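The effect of this temporal aggregation can be illustrated with a short scaling argument. It assumes, for the sake of the sketch, that at a fixed value of the control parameter $\mu$ the event sizes follow a power law with a cutoff $s^*(\mu)$ that diverges at the instability $\mu_c$, and that $\mu$ is swept at a uniform rate. The exponents $\tau$ and $\kappa$ and the cutoff function $f$ are placeholder symbols, not quantities defined in the source.

$$
P_\mu(s) \propto s^{-\tau} f\!\left(\frac{s}{s^*(\mu)}\right), \qquad s^*(\mu) \propto |\mu_c - \mu|^{-1/\kappa}
$$

$$
P_{\text{swept}}(s) \propto \int d\mu \, s^{-\tau} f\!\left(s\,|\mu_c - \mu|^{1/\kappa}\right)
= \kappa\, s^{-(\tau + \kappa)} \int du\, u^{\kappa - 1} f(u)
$$

The second equality follows from the substitution $u = s\,|\mu_c - \mu|^{1/\kappa}$. If $f$ decays fast enough for the remaining integral to converge, the aggregated distribution is a power law with the steeper exponent $\tau + \kappa$, even though the system never sits at the critical point. A window that covers only part of the sweep samples a restricted range of cutoffs, which is consistent with apparent exponents that drift as the instability approaches.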

Dragon Kings versus Black Swans

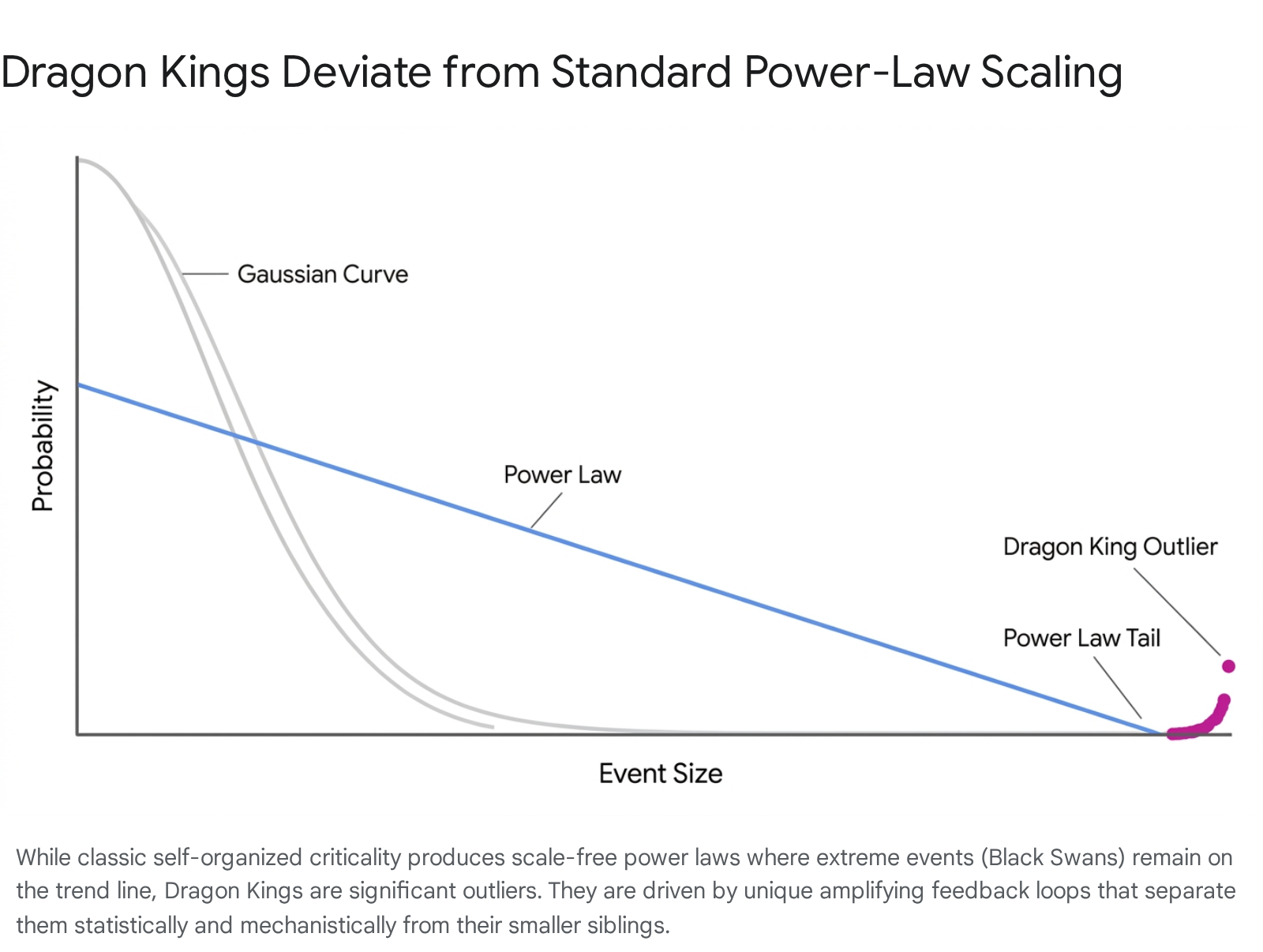

As the study of scale-free systems matured, researchers recognized that the extreme tails of power-law distributions often fail to capture the most catastrophic, system-altering events. In the prevailing nomenclature popularized by risk analysts, a "Black Swan" is an extreme, unforeseeable event that belongs to the heavy tail of a power-law distribution but is fundamentally unknowable in advance because it adheres to the same generative mechanics as smaller events 15.

In contrast, the "Dragon King" theory identifies extreme events that lie distinctly outside and above the extrapolated power-law curve of their smaller siblings 715. Dragon Kings are statistically significant outliers generated by specific, identifiable endogenous feedback mechanisms, phase transitions, or synchronization processes that do not govern the smaller events in the system. They have been documented in the distribution of city sizes, the energies of epileptic seizures, hydrodynamic turbulence, and financial drawdowns 715.

Because they are the result of structural maturation and amplifying feedback loops, Dragon Kings exhibit observable precursory patterns - often in the form of log-periodic oscillations - that make them theoretically predictable 151617. This distinction is critical for risk management: while classic self-organized criticality implies that catastrophic events are driven by the exact same microscopic mechanics as tiny events and are therefore inherently unpredictable, the Dragon King framework asserts that the largest events represent a distinct physical regime that provides warning signs before rupture 720.

To clarify the phenomenological differences across these theoretical models, the following table summarizes the key mechanisms that generate scale-free or heavy-tailed distributions in complex systems.

| Theoretical Framework | Primary Mechanism of Action | Characteristic Output Distribution | Predictability of Extreme Events |
|---|---|---|---|
| Self-Organized Criticality | Slow driving and fast dissipation naturally tune the system to a marginally stable state. | Pure power law across all scales. | Unpredictable; large avalanches share the same mechanics as small ones. |
| Sweeping of an Instability | Continuous, slow variation of a control parameter across an instability point. | Power law up to an exponential cutoff, often with time-varying exponents. | Partially predictable; precursory fluctuations increase near the instability. |
| Dragon King Theory | Endogenous positive feedback, synchronization, or structural maturation isolated to extreme scales. | Power law for small/medium events, with distinct outliers at the extreme tail. | Theoretically predictable; precursors such as log-periodic oscillations appear. |
| Multiplicative Processes | Proportional growth dynamics independent of initial size (e.g., preferential attachment). | Log-normal or power law (Pareto distribution). | Inherently unpredictable; strictly stochastic compounding. |

Seismology and the Geophysics of Fault Ruptures

The physics of earthquakes provided one of the earliest and most compelling testing grounds for the theory of self-organized criticality. The Earth's crust, subjected to the slow, continuous tectonic loading of continental drift, releases accumulated stress through sudden, violent fault ruptures 118. This dynamic closely mirrors the slow-driving, fast-dissipation criteria of the classic theoretical sandpile model.

The Olami-Feder-Christensen Model and Gutenberg-Richter Scaling

The primary empirical argument for self-organized criticality in seismology is the Gutenberg-Richter law, which states that the frequency of earthquakes decreases exponentially with magnitude. Because magnitude is a logarithmic measure of released energy, this relation corresponds to a power-law distribution of seismic energy release 1219. To replicate this behavior mathematically, geophysicists adapted models like the Burridge-Knopoff spring-block system into cellular automata.
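The link between the exponential magnitude law and a power law in energy can be written out explicitly. The constants $a$, $b$, and $c$ below are the usual empirical fit parameters and are introduced here only for illustration. The factor $3/2$ is the standard energy-magnitude scaling.

$$
\log_{10} N(\geq M) = a - bM, \qquad \log_{10} E = \tfrac{3}{2} M + c
$$

$$
\Longrightarrow\; \log_{10} N(\geq E) = a' - \tfrac{2b}{3} \log_{10} E
\;\Longrightarrow\; N(\geq E) \propto E^{-2b/3}
$$

With the commonly observed $b \approx 1$, the cumulative energy distribution decays roughly as $E^{-2/3}$.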

The Olami-Feder-Christensen (OFC) model treats the brittle crust as a two-dimensional lattice of blocks connected by springs. As tectonic forces uniformly push the blocks, friction holds them in place until the local stress exceeds a designated threshold. Once this threshold is breached, a block slips, transferring stress to its neighbors and potentially triggering a system-wide avalanche 181920. Remarkably, the OFC model reproduces power-law distributions even when the energy transfer is non-conservative, reflecting the dissipation of energy as heat and seismic waves in real faults 12. Advanced statistical analyses of the OFC model reveal that the probability density functions of avalanche size differences exhibit fat tails with a q-Gaussian shape, a characteristic that remains invariant regardless of the time interval and is consistent with the energy differences observed between actual earthquakes 19.
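A minimal sketch of the slip-and-redistribute rule described above follows. It assumes a square lattice with open edges, a unit stress threshold, and a transfer fraction `alpha` passed to each of four neighbors, so that `alpha < 0.25` makes the dynamics non-conservative. The names `ofc_event` and `run_ofc` and all parameter values are illustrative assumptions.

```python
import random

NEIGHBORS = ((1, 0), (-1, 0), (0, 1), (0, -1))

def ofc_event(force, alpha=0.2, threshold=1.0):
    """Load the lattice uniformly until one block slips; return the avalanche size."""
    size = len(force)
    # Slow drive: raise every block by the gap between the most loaded block
    # and the threshold, so exactly that block becomes unstable.
    i0, j0 = max(((i, j) for i in range(size) for j in range(size)),
                 key=lambda p: force[p[0]][p[1]])
    gap = threshold - force[i0][j0]
    for row in force:
        for j in range(size):
            row[j] += gap
    force[i0][j0] = threshold  # guard against floating-point round-off
    stack = [(i, j) for i in range(size) for j in range(size)
             if force[i][j] >= threshold]
    slips = 0
    while stack:
        i, j = stack.pop()
        f = force[i][j]
        if f < threshold:
            continue  # already relaxed by an earlier slip
        force[i][j] = 0.0  # the block slips and releases its stress
        slips += 1
        for di, dj in NEIGHBORS:
            ni, nj = i + di, j + dj
            if 0 <= ni < size and 0 <= nj < size:
                # each neighbor receives a fraction alpha; the rest is dissipated
                force[ni][nj] += alpha * f
                if force[ni][nj] >= threshold:
                    stack.append((ni, nj))
    return slips

def run_ofc(size=16, events=2000, seed=0, alpha=0.2):
    """Start from random stresses and record the size of each avalanche."""
    rng = random.Random(seed)
    force = [[rng.random() for _ in range(size)] for _ in range(size)]
    sizes = [ofc_event(force, alpha=alpha) for _ in range(events)]
    return force, sizes
```

Calling `run_ofc()` returns the final stress field and a list of avalanche sizes. Setting `alpha=0.25` recovers the conservative limit in the lattice interior, which allows a comparison of how dissipation changes the size statistics.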

If the Earth's crust is truly a self-organized critical system, earthquakes should be perfectly scale-invariant. This implies that a small micro-tremor and a massive megathrust earthquake begin with the exact same microscopic physical processes. In this view, it is only the instantaneous, unpredictable configuration of the surrounding stress field that determines whether a rupture will stop immediately or propagate across an entire tectonic boundary 121. Consequently, classic self-organized criticality asserts that earthquake prediction is fundamentally impossible, as the state of the system is constantly poised at criticality 1821.

Empirical Constraints in South American Megathrust Zones

Detailed empirical measurements of large-scale seismic events, particularly in highly active tectonic margins like western South America, challenge the strict scale-invariance required by pure self-organized criticality. The subduction of the oceanic Nazca plate beneath the continental South American plate produces a 5,900-kilometer-long trench that hosts some of the largest megathrust earthquakes in recorded history, including the 1960 Valdivia earthquake (Mw 9.5) in Chile, which released over 300 times more energy than typical massive tremors 25.

When analyzing the scaling laws of these South American earthquakes, geophysicists note significant breakdowns in scale-invariance as the seismic moment increases. Empirical evidence demonstrates that parameters such as rupture length, slip distance, and fault width initially grow in tandem with the size of the earthquake 22. However, because the seismogenic zone has a finite physical depth - the specific point at which high temperatures and pressures cause the crust to become ductile rather than brittle - the vertical width of the rupture cannot grow indefinitely 22. Once an earthquake is large enough to rupture the entire vertical width of the fault, further energy release can only occur through linear extension along the length of the fault. This geometric constraint forces a breakdown in the standard three-dimensional scaling laws, predicting a transition where the seismic moment scales linearly with length, introducing a characteristic physical limit that contradicts infinite scale-invariance 2223.
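The geometric argument can be made concrete with the standard definition of seismic moment as rigidity times rupture area times average slip. In the sketch below, $G$ is the shear modulus, $L$ and $W$ are rupture length and width, $\bar{D}$ is average slip, and $W_{\max}$ is the width of the seismogenic zone. The proportionalities are simplifying assumptions used for illustration.

$$
M_0 = G\, L\, W\, \bar{D}
$$

$$
\text{small ruptures: } W \propto L,\ \bar{D} \propto L \;\Longrightarrow\; M_0 \propto L^3
$$

$$
\text{large ruptures: } W = W_{\max},\ \bar{D} \propto W_{\max} \;\Longrightarrow\; M_0 \propto L
$$

The linear regime follows if slip saturates along with width. If slip instead keeps growing with length, the same definition gives $M_0 \propto L^2$. Either way, the exponent changes once the rupture spans the full seismogenic width, which marks a characteristic scale.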

Furthermore, the temporal distribution of great earthquakes in regions like the northern Chile and southern Peru seismic gaps exhibits periodicities that violate the random, memoryless timing implied by strict self-organized criticality. Historical reappraisals of the massive 1868 and 1877 earthquakes (both estimated at Mw 8.8) reveal spatial gaps that undergo distinct "earthquake cycles" of stress accumulation and maturity, leading to notable periods of seismic quiescence followed by clustered, catastrophic ruptures 1823.

The Coexistence of Periodicity and Criticality

The presence of quasi-periodic behavior in subduction zones, combined with variations in rupture kinematics, points toward a more nuanced reality than the Bak-Tang-Wiesenfeld model initially proposed. Earthquake rupture velocities vary drastically: slow earthquake ruptures, which travel at approximately 1 kilometer per second, are very slow compared to acoustic waves and generate markedly different tsunami directivity lobes and energy-to-moment ratios than standard fast ruptures 22.

Additionally, studies of early-warning parameters, including analyses of the 2007 Mw 7.8 Tocopilla earthquake in Chile, demonstrate that the early seconds of P-wave and S-wave arrivals for massive events do not always scale perfectly with final magnitude. When examining low-pass-filtered displacement readings, slope changes in the regression curves occur for events larger than Mw 6.5, suggesting that while the initial nucleation may be a critical phenomenon, the ultimate size of the event is influenced by deterministic boundaries 24. Because of these deviations, many researchers now favor models where self-organized criticality and periodicity coexist. The crust may self-organize into a critical state locally, allowing for power-law distributions of smaller aftershocks, but at a macro level, the sweeping of an instability and the finite dimensions of the tectonic plates introduce characteristic scales and cyclical energy limits 1218.

Neural Criticality and Brain Network Dynamics

Just as tectonic plates must balance continuous stress accumulation with sudden release, the mammalian brain must balance the continuous accumulation of sensory input with the rapid propagation of electrochemical signals. Over the last two decades, the "neural criticality hypothesis" has gained significant traction, proposing that the brain operates at a second-order phase transition boundary between order (subcriticality) and chaos (supercriticality) 2526. Speculation regarding this optimized state dates back to Alan Turing's seminal 1950 paper on artificial intelligence, but modern electrophysiology has provided the quantitative evidence to map it to physical scaling laws 27.

Avalanche Dynamics and Information Processing

The brain is composed of massive networks of excitatory and inhibitory neurons. If the network is heavily inhibited (a subcritical state), signals extinguish rapidly, limiting communication and preventing complex thought. If the network is over-excited (a supercritical state), signals amplify explosively, leading to uncontrolled seizure-like activity. At the exact boundary between these phases, the network is in a critical state characterized by scale-invariant cascades of activity known as "neural avalanches" 1125.

Experimental evidence for neural criticality was first established in isolated, layered organotypic cortex cultures and acute brain slices. Under conditions of strong external drive, the synchronization of local field potentials and spiking activity forms avalanches whose sizes follow a robust power law with an exponent of -3/2 28. Crucially, these critical systems exhibit a branching parameter ($\sigma$) of 1, meaning that, on average, one firing neuron triggers one subsequent neuron 2728.
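The role of the branching parameter can be illustrated with a simple branching process. This is a generic toy model, not the analysis pipeline used in the cited experiments. It assumes each active unit tries to activate two descendants independently, each with probability $\sigma/2$, so that the mean number of descendants is $\sigma$. The function names and the size cap are illustrative.

```python
import random

def avalanche_size(sigma, rng, max_size=10_000):
    """Total number of activations in one avalanche of a branching process.

    Each active unit tries to activate two descendants, each with probability
    sigma / 2, so the mean number of descendants per unit is sigma (sigma <= 2).
    """
    p = sigma / 2.0
    active, total = 1, 1
    while active and total < max_size:  # cap stops runaway avalanches
        nxt = 0
        for _ in range(active):
            nxt += (rng.random() < p) + (rng.random() < p)
        active = nxt
        total += nxt
    return total

def simulate(sigma, n_avalanches=5000, seed=0):
    """Generate a list of avalanche sizes for a given branching parameter."""
    rng = random.Random(seed)
    return [avalanche_size(sigma, rng) for _ in range(n_avalanches)]

def estimate_sigma(sizes):
    """Pooled descendants divided by pooled ancestors.

    Every unit counts as an ancestor and every unit except the seed counts as
    a descendant. Avalanches cut off by the size cap bias this slightly low.
    """
    total = sum(sizes)
    return (total - len(sizes)) / total

if __name__ == "__main__":
    for sigma in (0.5, 1.0):
        sizes = simulate(sigma)
        print(sigma, "estimated:", round(estimate_sigma(sizes), 3),
              "largest avalanche:", max(sizes))
```

In the subcritical case, avalanches die out quickly and stay small. At $\sigma = 1$, the same rule produces occasional avalanches that reach the size cap, and this is the regime in which the size distribution of such a process is expected to approach the -3/2 power law.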

Operating at the edge of chaos is theoretically optimal for computation. Systems at criticality maximize dynamic range, information transmission, and memory capacity, because no single temporal or spatial scale dominates the network 2527. The absence of a characteristic scale ensures that the brain can process both highly localized sensory details and widespread cognitive concepts simultaneously. This optimization provides a compelling evolutionary argument for why biological neural networks would naturally self-organize toward this precise boundary.

Synaptic Plasticity and Homeostatic Regulation

Unlike abstract physical models such as sandpiles, the brain possesses complex internal mechanisms that actively tune its parameters. Theoretical models have demonstrated that the brain does not rely solely on the passive physics of an open system to reach criticality. Instead, it utilizes homeostatic feedback loops, primarily through synaptic plasticity, to maintain a "quasi-critical" state 26. Dynamic synapses alter their connection strengths on fast timescales, autonomously adapting to incoming stimuli to keep the overall excitation-to-inhibition (E/I) ratio balanced 1128.

Developmental studies track this self-organization in real time. Slices from newborn rats show a temporal progression from unconnected, subcritical dynamics toward mature, critical dynamics as the networks grow and synapses physically form 25. This homeostatic regulation is highly robust; when critical in vitro networks are pharmacologically perturbed to reduce inhibition (e.g., using picrotoxin to push the system into a supercritical state), the network autonomously regulates its synaptic weights. Over time, it separates its temporal scales and returns the avalanche size distribution to the critical power-law state, demonstrating true self-organization independent of external structural inputs 28. It is notable that this rich dynamical repertoire - including nested theta/gamma oscillations and coherence potentials - is observed in layered cortex preparations but not in dissociated cell cultures, which lack functional differentiation and instead exhibit behavior typical of a first-order phase transition 28.

Pathological Deviations and Epileptogenesis

The clinical relevance of the neural criticality hypothesis is best observed when the critical state collapses entirely. Epileptic seizures are the hallmark of a biological network that has crossed the phase boundary into a supercritical regime. During a seizure, the delicate balance of excitation and inhibition is lost, and the scale-invariant power laws that characterize healthy avalanche dynamics are destroyed, replaced by synchronized, monolithic waves of activity 2526.

Post-seizure, the brain often falls into a highly suppressed, subcritical state characterized by reduced connectivity and a diminished critical exponent. This suppression is thought to be a drastic homeostatic response to the supercritical trauma, before the network slowly attempts to re-regulate toward the critical boundary over days or weeks 25. Understanding how structural network abnormalities disrupt this self-organized quasi-criticality remains a major frontier in computational neuroscience, offering potential pathways for diagnosing and intervening in neurodynamical pathologies prior to full system failure 1626.

Ecological Patterns and Environmental Resource Models

The mathematical application of self-organized criticality extends beyond biological tissue into vast ecological macrosystems. Vegetation dynamics, resource flows, and wildland fires have all been analyzed through the lens of critical phase transitions to understand how ecosystems maintain resilience against constant environmental degradation.

Global Fire Size Distributions and Log-Normal Deviations

For decades, forest fires were considered one of the purest real-world manifestations of self-organized criticality. Classic ecological models, such as the Henley (1989) and Drossel-Schwabl (1992) forest fire models, proposed an endogenous ecosystem-fire feedback loop: trees grow slowly, accumulating fuel over years, until a random spark (e.g., lightning) ignites a fire that rapidly consumes the biomass 329. Because the growth of vegetation connects previously isolated patches of fuel, the ecosystem supposedly self-organizes to a critical density where fires of all sizes can occur, theoretically resulting in a pure power-law frequency distribution of fire sizes 23.

However, the advent of high-resolution satellite imagery and comprehensive global datasets has rigorously tested this assumption. The MODIS-derived Global Fire Atlas tracks the daily progression, speed, and direction of individual fires at a 500-meter resolution. Analysis of 13.3 million individual fires across eight diverse global biomes - spanning from 2003 to 2016 and covering boreal, temperate, and tropical regions - revealed that wildland fires rarely adhere to a pure power law 32930. Instead, in six out of eight global regions studied, statistical testing using maximum likelihood estimation rejected the power-law hypothesis in favor of a log-normal distribution 329.
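The kind of comparison described above can be sketched with closed-form maximum-likelihood fits. The code below is a simplified illustration, not the procedure of the cited study. It fits a continuous power law with the lower bound fixed at the smallest observation and an untruncated log-normal, then compares their log-likelihoods. A full analysis would also select the lower bound, fit a truncated log-normal above it, and apply a significance test to the likelihood ratio. The function names and the synthetic data are assumptions.

```python
import math
import random

def fit_power_law(data):
    """MLE for p(x) = ((alpha - 1) / xmin) * (x / xmin) ** -alpha, x >= xmin."""
    xmin = min(data)  # simplifying assumption: lower bound = smallest event
    logs = [math.log(x / xmin) for x in data]
    n = len(data)
    alpha = 1.0 + n / sum(logs)
    loglik = n * math.log((alpha - 1.0) / xmin) - alpha * sum(logs)
    return alpha, loglik

def fit_lognormal(data):
    """MLE for a log-normal: mean and standard deviation of ln(x)."""
    logs = [math.log(x) for x in data]
    n = len(data)
    mu = sum(logs) / n
    var = sum((y - mu) ** 2 for y in logs) / n
    loglik = -sum(logs) - 0.5 * n * (math.log(2.0 * math.pi * var) + 1.0)
    return mu, math.sqrt(var), loglik

def preferred_model(data):
    """Name the model with the higher log-likelihood (no significance test)."""
    _, ll_pl = fit_power_law(data)
    _, _, ll_ln = fit_lognormal(data)
    return "power law" if ll_pl > ll_ln else "log-normal"

if __name__ == "__main__":
    rng = random.Random(0)
    # Synthetic "fire sizes" for illustration only; not Global Fire Atlas data.
    lognormal_sizes = [rng.lognormvariate(0.0, 1.0) for _ in range(5000)]
    pareto_sizes = [rng.paretovariate(1.5) for _ in range(5000)]  # exponent 2.5
    print(preferred_model(lognormal_sizes), preferred_model(pareto_sizes))
```

Both models carry a similar number of free parameters here, so the raw log-likelihoods are directly comparable as a first pass. The sign of the difference indicates which description fits better, but not whether the difference is statistically meaningful.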

This massive deviation from true criticality is driven by external physical constraints that limit scale-invariance. While precipitation gradients and fuel availability govern the baseline propensity for fire, the actual spread of wildfires is heavily constrained by landscape fragmentation - both natural features like rivers and anthropogenic barriers like roads and agricultural borders 31. In arid ecosystems with low fuel densities, high spread rates lead to large, short-duration fires that quickly burn out as they exhaust local resources. Conversely, in human-dominated landscapes, high ignition densities result in numerous small fires that are artificially contained 30. Because of these hard spatial boundaries and fuel limits, the system operates below the theoretical critical threshold, introducing a finite-size cutoff that transforms the expected scale-free power law into a log-normal probability curve 23.

Spatial Self-Organization in Semi-Arid Vegetation

While global fire sizes may deviate from pure temporal criticality due to boundaries, specific spatial phenomena within ecosystems still exhibit strong signatures of self-organization. In semi-arid landscapes, vegetation patches self-segregate to survive extreme resource scarcity. Theoretical models incorporating an Allee-logistic reaction term and nonlinear diffusion have demonstrated that as water scarcity crosses a predictable threshold, vegetation shifts from a uniform distribution into distinct, cooperative clusters separated by bare ground 32.

This spatial self-segregation is driven by local positive interactions - such as cooperation for moisture retention and the provision of shade - competing against global resource limitations. While a Turing-like instability mechanism can explain the formation of regular, evenly spaced vegetation patterns, the emergence of irregular patterns relies on a self-organizing criticality mechanism. The resulting irregular vegetation structures exhibit a distinctive power-law distribution of patch sizes, often accompanied by an exponential cutoff where cluster sizes reach an absolute environmental limit 32. This spatial self-organization demonstrates how ecological systems utilize critical phase transitions to maximize resilience; by clustering at the edge of chaos, the vegetation network optimizes its dynamic range and survives environmental conditions that would otherwise lead to total desertification.

Financial Market Volatility and Economic Networks

The inherent volatility of global financial markets cannot be entirely explained by exogenous economic news or standard macroeconomic variables. Instead, financial markets function as massively interconnected networks of interacting agents, heavily influenced by imitation, herding behavior, and dynamic feedback loops 733. This architecture has made financial systems a prime candidate for analyzing self-organized criticality in human-engineered constructs.

The Excess Volatility Puzzle and Marginal Stability

The "excess volatility puzzle" highlights a fundamental issue in classical economics: asset prices fluctuate much more violently than the underlying fundamental values of the assets justify 3338. In a standard competitive equilibrium model, markets should clear efficiently, and profits should normalize to zero over time. However, when viewed through the lens of self-organized criticality, the quest for absolute efficiency is fundamentally incompatible with systemic resilience 534.

As economic networks optimize toward perfect efficiency and zero excess profit, the time needed for the system to recover from a minor supply or demand shock diverges to infinity 34. The network becomes "marginally stable," hovering at the edge of an instability point where the classical Hawkins-Simons conditions for market equilibrium begin to break down. In this fragile, critical state, insignificant external perturbations are accumulated and amplified as "avalanches" of buying or selling that cascade through the network 3334. This continuous internal frustration generates the fat-tailed return distributions and long-memory correlations characteristic of market crashes, offering a compelling explanation for the sudden "small shocks, large business cycle" paradigm identified by Hyman Minsky and Per Bak 533.

Log-Periodic Power Laws and Crash Predictability

While classic self-organized criticality suggests that market crashes are unpredictable random avalanches, the application of Dragon King theory to finance offers a different perspective on extreme events. Financial bubbles are driven by endogenous positive feedback mechanisms - specifically, the herding behavior of irrational "noise" traders imitating one another across a network 735. This herding forces the market into a super-exponential growth phase that is mathematically unsustainable, inevitably culminating in a finite-time singularity representing the crash 36.

According to the Johansen-Ledoit-Sornette (JLS) model, which adapts the Ising model of ferromagnetism to financial agents existing in binary "buy" or "sell" states, the approach to this singularity is not a smooth exponential curve. Instead, it is a power-law acceleration decorated with accelerating, log-periodic oscillations 3536. These Log-Periodic Power Laws (LPPL) represent the hierarchical structure of the trader network and the increasing tension between the rising asset price and the fundamentally perceived value. Because the crash is the result of the "sweeping of an instability" rather than static, continuous criticality, the log-periodic fluctuations serve as observable precursors to failure 1217.
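The power-law acceleration with log-periodic oscillations is usually written as a closed-form expression for the expected log-price before the critical time. The sketch below evaluates that commonly used form, $\ln p(t) = A + B(t_c - t)^m + C(t_c - t)^m \cos(\omega \ln(t_c - t) - \phi)$. The parameter values in the example are arbitrary illustrations, not fitted results, and the function names are assumptions.

```python
import math

def lppl_log_price(t, tc, m, omega, a, b, c, phi):
    """Expected log-price at time t < tc under the log-periodic power-law form.

    tc: critical time, m: power-law exponent, omega: log-frequency,
    a: log-price at tc, b: amplitude of the power-law trend (negative for a
    bubble), c: amplitude of the oscillation, phi: phase.
    """
    tau = tc - t
    if tau <= 0:
        raise ValueError("t must be earlier than the critical time tc")
    power = tau ** m
    return a + b * power + c * power * math.cos(omega * math.log(tau) - phi)

def scaling_ratio(omega):
    """Ratio between successive oscillation scales in time-to-crash."""
    return math.exp(2.0 * math.pi / omega)

if __name__ == "__main__":
    params = dict(tc=100.0, m=0.5, omega=8.0, a=5.0, b=-0.3, c=0.03, phi=0.0)
    for t in (0.0, 50.0, 90.0, 99.0):
        print(t, round(lppl_log_price(t, **params), 4))
```

The oscillations are periodic in $\ln(t_c - t)$ rather than in $t$, so successive peaks crowd together as the critical time approaches. `scaling_ratio` gives the constant factor by which the time-to-crash shrinks from one oscillation to the next.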

The detection of these LPPL signatures has been used empirically to diagnose financial bubbles in real time, including an analysis of the gold bubble of 2009 and a prediction of the October 1997 market crash 1735. By identifying these precursors, researchers shift the analysis of extreme financial risks away from unpredictable Black Swans and toward analyzable Dragon Kings 1617.

Network Flow Dynamics in Emerging Markets

The vulnerability of economic networks to these critical avalanches depends heavily on their topology and flow dynamics. Unlike static networks, economic networks are tied together by the continuous flow of capital and resources. When a flow network evolves into a power-law degree distribution, it becomes dramatically more vulnerable to catastrophic cascading failures. This occurs because the disruption of throughput at any major hub propagates instantly downstream, causing a self-reinforcing collapse that is significantly worse than failures in non-flow networks 37.

This flow-dependency dynamic was highly evident during the 2008 Global Financial Crisis and subsequent economic shocks. While highly interconnected Western financial institutions suffered massive cascading failures due to toxic asset exposure, several major emerging economies - specifically the BRICS nations (Brazil, Russia, India, China, and South Africa) - exhibited varying degrees of resilience. Because the financial sectors of Brazil, India, and South Africa possessed different regulatory architectures, macro-prudential oversight, and substantially lower direct flow exposure to the highly connected toxic asset nodes, they absorbed the initial shocks far better than G7 nations 383940.

However, as the network topology of global finance continues to evolve and interconnectedness increases, the distinction between shock-transmitters and shock-absorbers dictates the global propagation of volatility. Recent studies indicate that while India, China, and South Africa tend to absorb external financial shocks, Brazil and Russia increasingly act as transmitters of volatility, mirroring the highly connected critical state that precipitated the 2008 crisis 38.

Emergent Criticality in Artificial Neural Networks

In one of the most recent extensions of the theory, the principles of critical phase transitions are being applied to understand the mechanics of artificial intelligence. Large language models (LLMs) based on the Transformer architecture exhibit emergent abilities - such as in-context learning, symbolic logic processing, and multi-step reasoning - that were not explicitly programmed but arise spontaneously as the models scale in parameters and training data 4142. The physics of self-organized criticality offers a mathematical framework for analyzing how and why these abilities emerge.

Transient In-Context Learning and Frustrated Networks

One of the most surprising features of modern Transformers is their capacity for In-Context Learning (ICL), which allows the model to adapt its behavior to novel tasks at inference time without requiring its internal weights to be updated. Research tracking the emergence of ICL versus In-Weights Learning (IWL) reveals that as Transformers train, the capacity for dynamic contextual reasoning emerges rapidly at a critical boundary, but it is often a transient phenomenon 4344. If the model is over-trained, the fluid ICL capabilities disappear and give way to rigid, hardcoded weight dependencies (IWL). This implies an asymptotic preference for fixed weights, meaning that maintaining an LLM exactly at the edge of chaos - often utilizing L2 regularization to prevent over-optimization - is required to preserve its maximum fluid reasoning capabilities 4344.

Furthermore, this critical topology forces the development of functional modularity within the Transformer. During early pre-training, the model spontaneously organizes into a modular structure where distinct subsets of artificial neurons group into specialized "experts" dedicated to specific logical functions, mimicking the biological modularity of the human brain 45. The emergence of these reasoning processes maps directly to the self-organization of a semantic complex network whose topology remains persistently sparse. This sparsity induces a state of maximal frustration and slow learning, which is eventually broken by a phase-transition-like growth phase where new skills are rapidly integrated at the network's frontier 51.

Learning at Criticality via Reinforcement Optimization

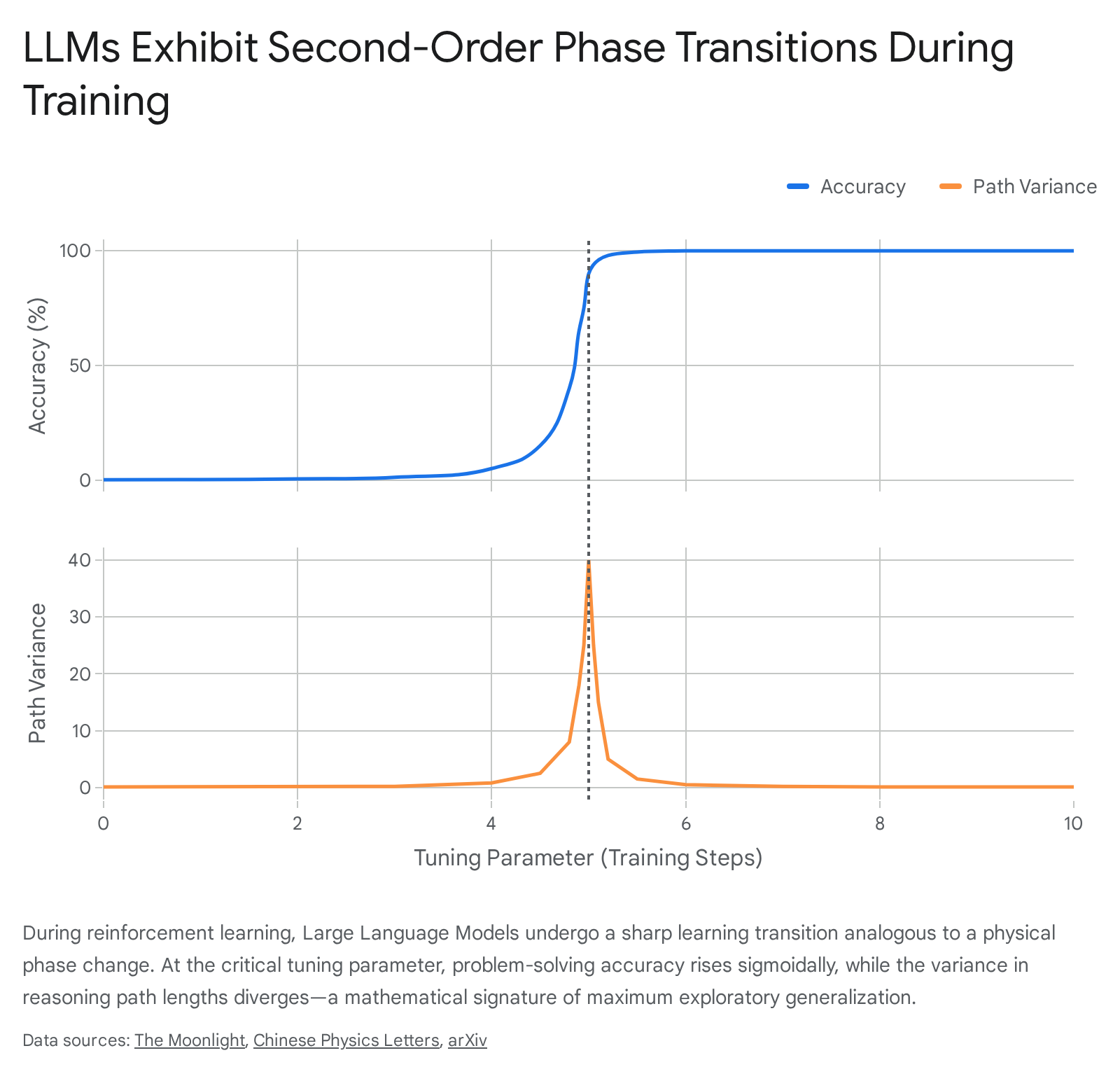

Historically, AI models required vast, highly curated datasets to learn basic functions, severely limiting their application in complex scientific fields where training data is scarce. However, a novel training paradigm termed "Learning at Criticality" (LaC) utilizes reinforcement learning to deliberately drive LLMs to a sharp learning transition point 646.

By optimizing the policy with algorithms such as Decoupled Clip and Dynamic sAmpling Policy Optimization (DAPO) - a variant of Group Relative Policy Optimization (GRPO) - researchers can tune the LLM toward a continuous phase transition. The algorithm rewards effective reasoning paths and updates the policy using the advantage $A_m = R_m - \bar{R}$, where $R_m$ is the reward of the $m$-th sampled response and $\bar{R}$ is the mean reward of its group, strengthening transitions that lead to correct answers 53.
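The advantage in the formula above can be illustrated with a minimal sketch. The function name and the binary reward values are hypothetical, chosen only for illustration. GRPO as commonly described also divides by the group's standard deviation; that normalization is omitted here to match the formula as written in the text.

```python
from statistics import mean


def group_relative_advantages(rewards):
    """Return A_m = R_m - mean(R) for every sampled response in one group."""
    baseline = mean(rewards)  # R-bar: average reward over the group
    return [r - baseline for r in rewards]


# Hypothetical rewards: 1.0 for a correct final answer, 0.0 otherwise.
rewards = [1.0, 0.0, 0.0, 1.0]
advantages = group_relative_advantages(rewards)
print(advantages)  # [0.5, -0.5, -0.5, 0.5]
```

Responses that beat the group average receive a positive advantage and are reinforced; those below it are suppressed. The advantages sum to zero by construction.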

At this critical point, the model's ability to generalize peaks. When an 8-billion parameter LLM was trained on a single instance of a 7-digit base-7 addition problem, its accuracy followed a sigmoidal phase transition curve. At the steepest part of this transition, the model achieved peak generalization, successfully solving unseen higher-order mathematical problems. When training pushed the model past this critical boundary, it entered an overfitted state and its ability to generalize collapsed, even as its accuracy on the single training example remained perfect 465347.
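One way to locate the steepest part of such a sigmoidal curve is a finite-difference scan over recorded accuracies. The sketch below uses a synthetic logistic curve, not data from the cited studies; the midpoint, steepness, and step spacing are arbitrary illustrative assumptions.

```python
import math


def logistic(step, midpoint, steepness):
    """Synthetic sigmoidal accuracy curve used as stand-in data."""
    return 1.0 / (1.0 + math.exp(-steepness * (step - midpoint)))


def steepest_step(steps, accuracy):
    """Return the step whose central-difference slope of accuracy is largest."""
    best_step, best_slope = None, float("-inf")
    for i in range(1, len(steps) - 1):
        slope = (accuracy[i + 1] - accuracy[i - 1]) / (steps[i + 1] - steps[i - 1])
        if slope > best_slope:
            best_step, best_slope = steps[i], slope
    return best_step


# Hypothetical training log: accuracy sampled every 5 steps.
steps = list(range(0, 201, 5))
accuracy = [logistic(s, midpoint=100, steepness=0.1) for s in steps]
critical_step = steepest_step(steps, accuracy)
print(critical_step)  # 100
```

With real, noisy training logs the curve would likely need smoothing or a parametric fit before differencing.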

The effect of this critical tuning is evident in advanced symbolic physics tasks. Applying LaC to symbolic Matsubara frequency summation in Quantum Field Theory (QFT), an 8-billion parameter LLM trained on a few low-order diagrams learned the underlying algorithmic procedure and solved complex, unseen multi-loop diagrams. As detailed below, the model tuned to criticality outperformed much larger base models on 3-loop and 4-loop diagrams, including one with nearly two orders of magnitude more parameters.

| AI Model Architecture | 1-Loop Accuracy | 2-Loop Accuracy | 3-Loop Accuracy | 4-Loop Accuracy |
|---|---|---|---|---|
| DeepSeek-R1-0120 (671B Parameters) | 12.5% | 10.0% | 1.1% | 0.4% |
| Qwen3-32B Base (32B Parameters) | 47.8% | 25.5% | 1.6% | 0.6% |
| LaC-Tuned Qwen3 (8B Parameters) | High | High | 9.5% | 1.7% |

Data reflecting generalization capabilities on unseen Quantum Field Theory Matsubara summation diagrams. The LaC-tuned 8B model achieves superior algorithmic abstraction despite its dramatically smaller parameter size 653.

The Concept-Network Abstraction

The physical mechanics of this emergent reasoning can be elucidated using a theoretical abstraction known as the Concept-Network (CoNet) model. In this framework, cohesive sequences of text tokens generated by the LLM (where the next token is predicted with near 100% certainty) are grouped and represented as distinct nodes in a sparse, K-regular random graph. The model's reasoning process is subsequently treated as a Markovian random walk attempting to find a logical path from a source node (the question) to a target node (the answer) 653.
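The random-walk picture can be sketched in a few lines. This is a toy illustration, not the CoNet implementation from the cited work. It assumes a uniform, untrained walk, whereas the model described above has transition probabilities shaped by training. The graph is sampled by simple stub pairing with restarts, and all names and sizes (`random_regular_graph`, `walk_length`, 30 nodes, K = 3) are hypothetical.

```python
import random


def random_regular_graph(n, k, rng):
    """Sample a simple k-regular graph on n nodes by stub pairing with restarts."""
    if (n * k) % 2 or k >= n:
        raise ValueError("need n * k even and k < n")
    while True:
        stubs = [node for node in range(n) for _ in range(k)]
        rng.shuffle(stubs)
        edges = set()
        valid = True
        for a, b in zip(stubs[::2], stubs[1::2]):
            edge = (min(a, b), max(a, b))
            if a == b or edge in edges:  # reject self-loops and repeated edges
                valid = False
                break
            edges.add(edge)
        if valid:
            adjacency = {node: [] for node in range(n)}
            for a, b in sorted(edges):
                adjacency[a].append(b)
                adjacency[b].append(a)
            return adjacency


def walk_length(adjacency, source, target, rng, max_steps=10_000):
    """Steps a memoryless uniform walk needs to reach target, or None if capped."""
    node, steps = source, 0
    while node != target:
        if steps >= max_steps:
            return None  # target not reached, e.g. if the graph is disconnected
        node = rng.choice(adjacency[node])
        steps += 1
    return steps


rng = random.Random(0)
graph = random_regular_graph(n=30, k=3, rng=rng)
# Node 0 plays the question, node 29 the answer.
lengths = [walk_length(graph, 0, 29, rng) for _ in range(200)]
```

The list `lengths` is the empirical distribution of reasoning-path lengths for this toy graph; in the abstraction, training would reshape the transition probabilities and hence that distribution.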

During training, as the external tuning parameter (the reinforcement learning signal) increases, the CoNet undergoes a second-order phase transition 653. At the critical point, the variance of the reasoning path lengths diverges, and the distribution of these path lengths follows a power law 5347.
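Whether a set of measured path lengths is consistent with a power law can be checked by estimating the exponent. The sketch below uses the standard continuous maximum-likelihood estimator, $\hat{\alpha} = 1 + n / \sum_i \ln(x_i / x_{\min})$, on synthetic samples. The true exponent, the cutoff `x_min`, and the sample size are illustrative assumptions, and real path lengths are integers, for which this continuous formula is only an approximation.

```python
import math
import random


def power_law_mle(samples, x_min):
    """Continuous MLE of alpha for p(x) proportional to x**(-alpha), x >= x_min."""
    tail = [x for x in samples if x >= x_min]
    if len(tail) < 2:
        raise ValueError("need at least two samples in the tail")
    log_sum = sum(math.log(x / x_min) for x in tail)
    return 1.0 + len(tail) / log_sum


# Synthetic power-law samples drawn by inverse-transform sampling.
rng = random.Random(1)
true_alpha, x_min = 2.5, 1.0
samples = [
    x_min * (1.0 - rng.random()) ** (-1.0 / (true_alpha - 1.0))
    for _ in range(20_000)
]
alpha_hat = power_law_mle(samples, x_min)
print(round(alpha_hat, 2))
```

For a pure power law the variance is infinite whenever the exponent is 3 or smaller, which is one way a diverging path-length variance and a power-law distribution can appear together.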

This scale-invariant exploration pattern indicates that the artificial model is maximizing its "critical thinking" capacity - searching across multiple disparate logic scales simultaneously without becoming locked into a single, repetitive deterministic loop 5348. By tuning the AI to the edge of chaos, engineers may be able to circumvent the massive data dependencies that have historically bottlenecked conventional deep learning, suggesting that the physical principles governing natural complexity also apply to artificial intelligence 646.

Synthesizing the Generality and Limits of the SOC Paradigm

The hypothesis of self-organized criticality fundamentally reshaped the scientific understanding of complexity, demonstrating that scale-invariant geometry, chaotic fluctuations, and fractal architectures are not random anomalies, but rather the natural attractors of dynamic, dissipative systems. The application of this framework successfully bridges the micro-mechanics of individual interacting units - whether they be tectonic fault blocks grinding against one another, biological neurons firing across a synapse, forest fuel patches accumulating biomass, financial traders acting on noise, or attention-heads distributing weights in a neural network - with the sweeping macroscopic behavior of the entire system.

However, over decades of empirical testing, the assumption that all power laws signify true, memoryless self-organized criticality has proven overly reductive. As evidenced by the finite scaling limits in South American megathrust earthquakes and the log-normal bounds of global wildland fires, physical geometry and resource scarcity introduce characteristic scales that prevent infinite avalanches. Furthermore, the log-periodic precursors to market crashes indicate that the most extreme events (Dragon Kings) are driven by endogenous synchronization rather than the blind, scale-free physics of a traditional sandpile. Finally, in biological and artificial neural networks, pure self-organization is superseded by active homeostatic tuning - through synaptic plasticity and reinforcement learning gradients - that deliberately steers the system toward, and holds it upon, the edge of chaos to maximize computational efficiency.

Ultimately, while perfect self-organized criticality may be a mathematical ideal rarely achieved in its purest, unconstrained form in nature, the framework provides an indispensable analytical lens. It suggests that complex systems derive their resilience, memory, and functional utility not by avoiding instability, but by dwelling as close to the precipice of chaos as the physical limits of their environment will allow.