Scientific limitations and the hard problem of consciousness

Introduction

The scientific and philosophical study of consciousness currently occupies a state of profound and highly productive turmoil. Characterized by an escalating tension between high-resolution empirical methodologies and deep ontological mysteries, the field is undergoing a systemic destabilization of its foundational paradigms. For decades, the dominant consensus within cognitive neuroscience operated under the implicit, physicalist assumption that meticulously mapping the neural correlates of cognitive functions would seamlessly yield a comprehensive explanation of subjective experience. However, the period spanning 2023 to 2026 has irrevocably altered this landscape 12. Landmark empirical initiatives, specifically the adversarial collaborations orchestrated by the Cogitate Consortium, have exposed critical vulnerabilities in the field's leading neurobiological theories 125. Simultaneously, deep philosophical schisms have erupted over the very definition of what constitutes a scientific theory of mind, culminating in unprecedented public controversies over whether leading mathematical models of consciousness should be classified as pseudoscience 34.

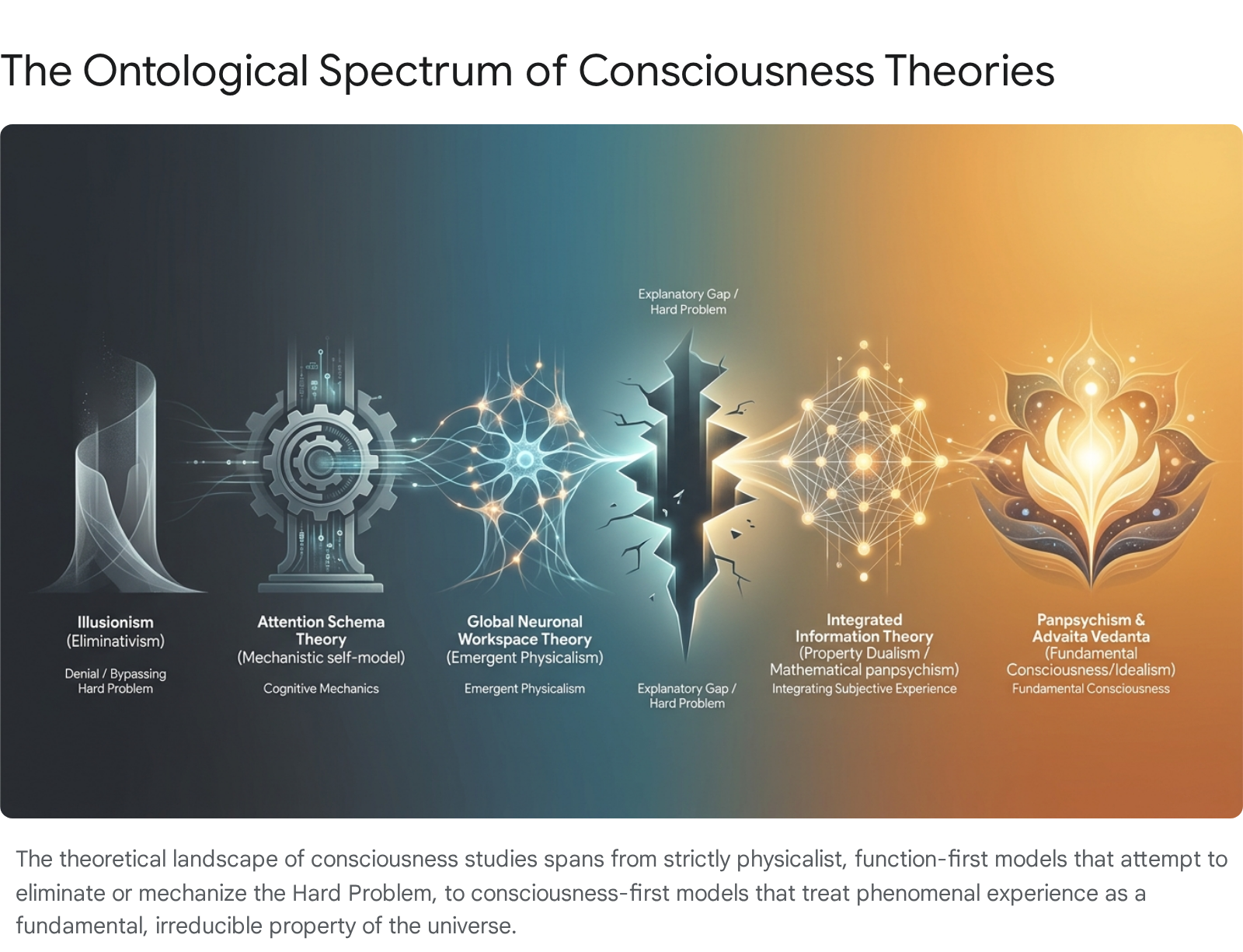

As standard physicalist and computational frameworks struggle to accommodate the totality of conscious experience, theorists are increasingly turning toward radical alternatives that reframe the mind-body problem entirely. On one extreme of this ontological spectrum, Illusionism posits that phenomenal consciousness is a systematic cognitive error, proposing a strict eliminativist solution to the mystery 59. On the opposite extreme, a renaissance of modern Panpsychism argues for the fundamental ubiquity of consciousness as an intrinsic property of the physical universe, a view that seeks to bypass the intractable problem of strong emergence 1011. Concurrently, the recognized limitations of Western analytic framing have prompted a rigorous re-examination of non-Western philosophical parallels. Specifically, the ancient Indian frameworks of Advaita Vedānta and Buddhist Yogācāra offer sophisticated, centuries-old models of foundational awareness that are increasingly resonant with cutting-edge theoretical physics and cosmopsychism 1267.

This report analyzes the contemporary theoretical architecture of consciousness studies. It distinguishes the "hard problem" from the "easy problems," evaluates recent empirical watersheds such as the Cogitate Consortium results, dissects the controversies surrounding Integrated Information Theory (IIT), and synthesizes both radical philosophical and classical non-Western perspectives, in order to establish a framework for understanding the future trajectory of consciousness research.

The Definitional Core: The Hard Problem Versus the Easy Problems

The persistent stagnation in consciousness research frequently stems from a profound methodological conflation: the blurring of objective cognitive processing with subjective phenomenal experience. To navigate the current theoretical landscape, it is imperative to explicitly define and differentiate the "easy problems" from the "hard problem" of consciousness, a distinction formally articulated by philosopher David Chalmers in 1995 that continues to define the boundaries of the discipline 89.

The "easy problems" of consciousness encompass the objective mechanisms and functional capacities of the cognitive system. These include the ability to discriminate, categorize, and react to environmental stimuli; the integration of diverse sensory information by a cognitive architecture; the deliberate control of behavior; the transition between states of wakefulness and sleep; and the capacity of a system to access and verbally report its own internal states 1718. While scientifically complex and demanding immense computational and neurobiological research, these problems are deemed "easy" because they are inherently susceptible to standard mechanistic explanations. They describe exactly what the brain does and how it processes information as a physical system. Discovering the precise neural network responsible for focusing visual attention or retrieving an episodic memory fully resolves the respective easy problem without requiring a revolution in physical laws.

In stark contrast, the "hard problem" addresses the existence of phenomenal consciousness itself - specifically, the qualitative, subjective nature of experience, widely referred to in the philosophy of mind as "qualia" 89. Qualia represent the "what it is like" to undergo an experience: the undeniable redness of a sunset, the visceral sting of physical pain, or the specific emotional timbre of grief. The hard problem asks a fundamental question of physical reality: why does the physical processing of electrochemical information in a biological neural network feel like anything at all? Why does this data processing not transpire "in the dark," executing complex survival algorithms devoid of any inner subjective reality 1920?

Failing to distinguish between these two domains obscures the persistent "explanatory gap," a term coined by philosopher Joseph Levine 1910. Many neurobiological theories unwittingly substitute an easy problem for the hard one. They provide an elegant, high-resolution map of how the brain integrates sensory data or globally broadcasts information to motor centers, and then prematurely declare the problem of consciousness solved, leaving the actual generation of subjective phenomenal experience entirely unexplained 1922. To address consciousness rigorously, a theory must do one of three things: provide a coherent mechanism that bridges the gap between physical function and phenomenal qualia, alter the ontological status of consciousness itself to make it fundamental, or show that the hard problem is a conceptual illusion generated by the brain 9.

Classical Non-Western Parallels: Foundational Awareness in Indian Philosophy

The struggle to situate consciousness within a physicalist ontology is largely a byproduct of the Cartesian dualism and the subsequent mechanical philosophy that has dominated Western scientific thought for the past four centuries 2311. To contextualize and deeply enrich the modern debate, it is highly instructive to examine ancient Indian philosophical frameworks. For millennia, traditions such as Advaita Vedānta and Buddhist Yogācāra have treated consciousness not as a secondary, emergent property of biological matter, but as a primary, foundational reality 725. These sophisticated frameworks offer precise models that parallel and inform modern debates between idealism, panpsychism, and eliminativism.

Advaita Vedānta: The Architecture of Pure, Unchanging Awareness

Advaita Vedānta, formalized most famously by the eighth-century philosopher Ādi Śaṅkarācārya, asserts a radically non-dual (advaita) ontology. In this system, the ultimate reality is Brahman - an infinite, unchanging, and eternal substrate of pure consciousness (cit) and being 1226. Advaita posits that the individual, localized sense of an egoic self (jīva) and the vast multiplicity of the material universe are ultimately illusory (māyā) when viewed as entities separate from this foundational awareness 1112.

The central thesis of Advaita Vedānta is the absolute identity of Ātman (the true, inner Self) and Brahman 1212. Consciousness, in this view, is emphatically not a product of the mind or body; rather, the mind, body, and the entire physical cosmos appear within consciousness. Advaita strictly distinguishes between empirical consciousness and pure consciousness. Empirical consciousness fluctuates with the three standard states of human experience - waking, dreaming, and deep sleep - and is inextricably tied to the mind-body complex 2829. Pure consciousness, conversely, is the self-luminous, eternal witness that persists even in dreamless deep sleep, illuminating the absence of cognitive objects 28.

This framework heavily anticipates modern absolute idealism and cosmopsychism, suggesting that the Western "hard problem" is an artifact of the false assumption that insentient matter precedes sentient mind. If consciousness is identical with being itself, the burden of proof shifts fundamentally: matter does not generate consciousness through complex electrochemical interactions; rather, consciousness is the pre-existing spatial and temporal ground in which the appearance of matter is phenomenologically apprehended 728.

Buddhist Yogācāra: Momentary Streams and the Collapse of Subject-Object Duality

While Advaita Vedānta asserts an eternal, unchanging conscious substrate, the Buddhist philosophical tradition fundamentally rejects any permanent self or enduring substance, a doctrine known as anattā or anātman 12. Following the philosopher Nāgārjuna's radical deconstruction of intrinsic essence (śūnyatā or emptiness), the Yogācāra school - also known as Cittamātra or "Mind-only" - emerged in the 4th century CE under the half-brothers Asaṅga and Vasubandhu to explain how continuous experience and karma operate without relying on metaphysical reification 631.

Yogācāra provides an intricately detailed phenomenological and psychological model of the mind, identifying eight distinct classifications of consciousness. The most foundational of these are the kliṣṭamanas (the defiled mind, which generates the illusion of an ego) and the ālayavijñāna (the storehouse or substratum consciousness, which holds the karmic seeds of past actions) 6. Yogācāra posits that what humans naïvely experience as a solid, external reality is inextricably shaped and projected by this karmically conditioned consciousness 31. However, unlike Western subjective idealism (such as Berkeley's), Yogācāra does not argue that the universe is made of a permanent mental "substance." Instead, it highlights the ultimate emptiness of consciousness itself. Consciousness (vijñāna) is not an eternal Brahman; it is a momentary, ever-changing stream of discrete cognitive events, dependently originated and entirely devoid of independent, solitary existence 263233.

The primary target of Yogācāra analysis is the dualistic structure of ordinary experience - the mistaken cognitive division into an observing subject (grāhaka) and an observed external object (grāhya) 31. Liberation in this framework occurs not by merging a localized soul with an absolute Godhead, but when this subject-object polarity collapses into non-dual awareness (advaya-jñāna) through rigorous meditative deconstruction 31. Interestingly, later Indian and Tibetan interpretations of Yogācāra verge on a sophisticated form of illusionism, suggesting that the phenomenal aspects of consciousness are cognitive constructs with no ultimate foundation in reality, closely mirroring the eliminativist debates occupying contemporary Western analytic philosophy 13.

The Empirical Reckoning: The Cogitate Consortium and the Adversarial Collaborations

Returning to the contemporary neurobiological landscape, the period surrounding 2025 has been defined by a massive, concerted effort to empirically arbitrate between the leading physicalist theories of consciousness. For decades, the field suffered from what researchers colloquially termed the "toothbrush problem" - every theorist possessed their own theory and adamantly refused to use anyone else's, resulting in a proliferation of isolated, self-confirming experiments that failed to advance a unified consensus 115.

To break this relentless cycle of confirmation bias, the Cogitate Consortium (A Collaboration On GNW and IIT: Testing Alternative Theories of Experience) orchestrated a landmark "adversarial collaboration" 1415. Heavily influenced by the methodological frameworks championed by Nobel laureate Daniel Kahneman, this initiative forced the primary proponents of two dominant frameworks - Global Neuronal Workspace Theory (GNWT), led by Stanislas Dehaene, and Integrated Information Theory (IIT), proposed by Giulio Tononi - to explicitly agree ex ante on an experimental design. Before a single piece of data was collected, the rival camps had to publicly specify exactly which neural signatures would constitute validation or falsification of their respective theories 1137.

Backed by the Templeton World Charity Foundation and formally published in Nature in April 2025, the study evaluated 256 human participants using a multimodal combination of functional MRI (fMRI), magnetoencephalography (MEG), and intracranial EEG (iEEG) 13738. The results delivered a profound shock to the global neuroscientific community: the core, mutually agreed-upon predictions of both leading theories were simultaneously and severely challenged by the empirical data 1537.

The Failure of Global Neuronal Workspace Theory (GNWT)

GNWT is a prominent functionalist theory positing that consciousness arises when specific information is selected by attention and subsequently "broadcast" globally across a widely distributed network of specialized, unconscious processors 1718. The theory likens this workspace to a "theater stage" or a highly specialized cognitive RAM. For a stimulus to be consciously experienced, it must cross a critical threshold and gain access to the global workspace, primarily mediated by long-range pyramidal neurons connecting the prefrontal and parietal cortices. This systemic broadcast makes the information available for verbal report, working memory, and voluntary action 1716.
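The threshold-then-broadcast logic described above can be sketched as a toy model. This is only an illustration under stated assumptions, not an implementation from the GNWT literature: the function name `global_broadcast`, the consumer names, and the threshold value `0.6` are all hypothetical.

```python
from typing import Dict, Optional, Tuple

# Hypothetical downstream systems that receive a broadcast (names are illustrative).
CONSUMERS: Tuple[str, ...] = ("verbal_report", "working_memory", "voluntary_action")


def global_broadcast(
    candidates: Dict[str, float],
    threshold: float = 0.6,  # assumed ignition threshold, chosen arbitrarily
    consumers: Tuple[str, ...] = CONSUMERS,
) -> Optional[Dict[str, str]]:
    """Return the content each consumer receives, or None if nothing ignites."""
    if not candidates:
        return None
    # Attention selects the strongest candidate content.
    winner = max(candidates, key=lambda name: candidates[name])
    # Below threshold, the content stays local and never reaches the workspace.
    if candidates[winner] < threshold:
        return None
    # Above threshold, the same content is made available to every consumer.
    return {consumer: winner for consumer in consumers}


if __name__ == "__main__":
    print(global_broadcast({"face": 0.9, "tone": 0.4}))
    print(global_broadcast({"face": 0.3, "tone": 0.4}))
```

The all-or-nothing return value mirrors the theory's claim that access is a threshold event; a graded model would need a different design.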

In the Cogitate adversarial experiment, GNWT theorists predicted a distinct process of "neural ignition" - a massive, synchronized global activity peak involving the prefrontal cortex - that must occur at both the onset and the specific offset of a consciously perceived stimulus 540. Furthermore, the theory explicitly predicted that the specific contents of a conscious experience should be decodable directly from activity patterns within the prefrontal cortex 15.

The empirical reality observed in the recordings sharply contradicted these expectations. While the onset of stimuli did trigger widespread activity, the anticipated neural ignition at the offset of the conscious experience was not reliably or consistently observed, critically undermining GNWT's fundamental assumptions regarding the temporal dynamics of conscious maintenance 1540. Moreover, while certain broad categories of stimuli were evident in the prefrontal cortex, critical, high-resolution aspects of the subjective experience - such as the specific identity or exact spatial orientation of the visual stimuli - could not be decoded from prefrontal regions, despite being consciously perceived by the participants 1. This absence undercuts the claim that the prefrontal cortex is the seat of conscious content, suggesting that GNWT mistakenly conflates the downstream cognitive mechanisms of reporting or reasoning about an experience with the actual generation of the sensory experience itself 1742.

The Posterior Collapse of Integrated Information Theory (IIT)

IIT represents a radically different, mathematically driven approach. It operates as a "consciousness-first" paradigm that begins with phenomenological axioms - existence, composition, information, integration, and exclusion - and works backward to mathematically deduce the necessary physical postulates of any substrate capable of supporting experience 41844. IIT makes the bold ontological assertion that consciousness is identical to a system's maximum capacity to integrate information, a value rigorously quantified by the mathematical metric $\Phi$ (Phi) 1946. For a mammalian brain, IIT's spatial prediction was that the physical substrate of consciousness lies in a "hot zone" in the posterior cerebral cortex, where the dense, grid-like anatomical connectivity naturally supports maximally irreducible cause-effect structures 14.
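To give a feel for what "integration" means quantitatively, the sketch below computes the mutual information between two halves of a two-part system. This is explicitly not IIT's $\Phi$, which is defined over cause-effect structures and a search across partitions; it is a deliberately crude stand-in, and the function name and example distributions are assumptions introduced only for illustration.

```python
import math
from typing import Dict, Tuple

Joint = Dict[Tuple[int, int], float]  # P(a, b) for the two halves of a system


def mutual_information_bits(joint: Joint) -> float:
    """Mutual information I(A;B) in bits: zero when the halves are independent."""
    p_a: Dict[int, float] = {}
    p_b: Dict[int, float] = {}
    # Marginalize the joint distribution over each half.
    for (a, b), p in joint.items():
        p_a[a] = p_a.get(a, 0.0) + p
        p_b[b] = p_b.get(b, 0.0) + p
    total = 0.0
    for (a, b), p in joint.items():
        if p > 0.0:
            total += p * math.log2(p / (p_a[a] * p_b[b]))
    return total


# Two halves that ignore each other: the whole is just the sum of its parts.
independent: Joint = {(0, 0): 0.25, (0, 1): 0.25, (1, 0): 0.25, (1, 1): 0.25}
# Two halves that always agree: knowing one fully determines the other.
coupled: Joint = {(0, 0): 0.5, (1, 1): 0.5}

if __name__ == "__main__":
    print(mutual_information_bits(independent))
    print(mutual_information_bits(coupled))
```

The independent system scores zero bits and the coupled one scores one bit, which captures only the intuition that an integrated whole carries information its separated parts do not.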

The Cogitate collaboration rigorously tested IIT's central biological implementation. IIT proponents committed to the prediction that conscious visual perception would be associated with sustained, synchronized neural interactions specifically localized within this posterior cortical hot zone, remaining active for the entire duration of the conscious experience 51540.

The resulting data demonstrated a critical failure for the theory: the study found no reliable, sustained synchronization between early and mid-level visual areas in the posterior brain that corresponded to the duration of conscious perception 13740. While posterior regions demonstrated baseline activity corresponding to stimulus duration, the distinct lack of sustained synchronization contradicts IIT's foundational biological claim that specific types of highly integrated, synchronous network connectivity directly specify the phenomenal state 153740.

The simultaneous empirical destabilization of GNWT and IIT has precipitated a paradigm crisis within neuroscience. As researchers await the processed results of Cogitate's Phase 2 experiments, the failure of the dominant physicalist theories to accurately predict the biological signatures of awareness has created a profound theoretical vacuum 11520. Furthermore, this failure has immediate practical consequences for the rapidly advancing field of artificial intelligence. It has sparked the rise of "Biological Computationalism" - the theory that the specific physical and metabolic nature of biological tissue is a non-negotiable prerequisite for subjective experience. Because GNWT and IIT failed to explain human consciousness, researchers argue that applying these frameworks to extrapolate whether Large Language Models (LLMs) are conscious is scientifically unsupportable, widening the "Zombie Gap" between what an AI simulates and what it actually experiences 12.

The Boundary of Science: The IIT Pseudoscience Controversy

The pressure mounting against Integrated Information Theory is not strictly empirical; it is deeply existential. In late 2023, an open letter signed by 124 prominent scholars ignited a fierce, highly publicized controversy by characterizing IIT as "unfalsifiable pseudoscience" 3444. This bitter dispute ultimately culminated in a formal, peer-reviewed commentary published in Nature Neuroscience in March 2025, titled "What Makes a Theory of Consciousness Unscientific?" 232149.

The primary contention of the Nature Neuroscience authors is that IIT's core mathematical and philosophical claims are untestable even in principle, rendering the framework metaphysical speculation rather than empirical science 2350. Critics point directly to IIT's radical panpsychist implications: according to the theory's mathematical formalism, any physical system possessing a non-zero $\Phi$ value exhibits some degree of subjective experience, regardless of whether it is biological 4419.

The most devastating critique originated from theoretical computer scientists, notably Scott Aaronson. Aaronson argued that according to IIT's own mathematical formulas, a simple arrangement of logic gates - for instance, one wired as an expander graph - could possess a massive $\Phi$ value even when the gates are completely inactive 34451. This implies that a completely inert, non-computing grid of wires could be "unboundedly more conscious than humans are" 3. Because it is fundamentally impossible to empirically verify whether an inactive logic gate possesses phenomenal experience, critics argue that IIT demands an "unscientific leap of faith." They assert the theory relies on methodological circularity, effectively proving what it presupposes by defining consciousness as $\Phi$ and then asserting that $\Phi$ guarantees consciousness 352.

Proponents of IIT - most notably Giulio Tononi, Christof Koch, and computational neuroscientist Larissa Albantakis - mounted a vigorous and uncompromising defense against the pseudoscience label 515354. They argue that the attacks are symptomatic of a deeper, systemic crisis within the dominant computational-functionalist paradigm 53. Tononi maintains that IIT is a necessary "consciousness-first" approach. Rather than pretending consciousness is an emergent illusion, IIT rightfully acknowledges the "stubborn fact" of conscious experience, establishing an "explanatory identity" between introspective phenomenal properties and physical causal structures 5354.

Furthermore, defenders emphasize that IIT has inspired highly practical, falsifiable clinical tools, most notably the Perturbational Complexity Index (PCI). By pairing Transcranial Magnetic Stimulation (TMS) with electroencephalography (EEG), PCI measures the complexity of the brain's electrical response to a magnetic pulse, which serves as an empirical proxy for its capacity for integrated information. This technique has been used to assess hidden awareness in anesthetized, vegetative, and locked-in patients who cannot physically communicate 3.
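The core intuition behind a perturbational complexity measure is compressibility: a stereotyped response compresses well, while a differentiated response does not. The sketch below illustrates only that intuition, assuming a simple LZ78-style phrase count over a binarized signal. The published PCI pipeline involves source modeling, statistical thresholding, and normalization that are omitted here, and the function names and example strings are hypothetical.

```python
from typing import Sequence


def binarize(samples: Sequence[float], threshold: float) -> str:
    """Turn a response trace into a bit string: '1' where it exceeds threshold."""
    return "".join("1" if value > threshold else "0" for value in samples)


def lz_phrase_count(bits: str) -> int:
    """Count phrases in an LZ78-style parse; fewer phrases means more compressible."""
    seen = set()
    phrase = ""
    count = 0
    for symbol in bits:
        phrase += symbol
        if phrase not in seen:
            # A never-before-seen phrase ends here; start a new one.
            seen.add(phrase)
            count += 1
            phrase = ""
    if phrase:
        # Trailing symbols that repeat an earlier phrase still count once.
        count += 1
    return count


if __name__ == "__main__":
    flat = "0" * 16                # stereotyped response (assumed example)
    varied = "0110100110010110"    # more differentiated response (assumed example)
    print(lz_phrase_count(flat), lz_phrase_count(varied))
```

Phrase counts on strings this short are only indicative; any clinical use depends on the normalization and validation steps that this sketch leaves out.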

The debate highlights a profound epistemological schism defining the modern era of the field: can a theory that makes accurate, life-saving clinical predictions regarding human brain states be dismissed as pseudoscience simply because its underlying mathematical logic necessitates untestable, panpsychist extrapolations in inanimate systems? As the Nature Neuroscience debate starkly demonstrates, the scientific community remains deeply fractured over whether theories of consciousness must conform strictly to behavioral, third-person functionalism, or whether they must undergo an ontological expansion to treat first-person experience as a fundamental property of the universe 222256.

Eliminativist Functionalism: Attention Schema Theory (AST)

As theories attempting to physically localize the precise origin of consciousness falter, functionalist theories that attempt to explain away the hard problem entirely have gained significant traction. Prominent among these is Attention Schema Theory (AST), developed by Princeton neuroscientist Michael Graziano. AST seeks to bridge the seemingly unbridgeable gap between neural mechanics and the illusion of qualia by treating consciousness strictly as an evolved, mechanistic control system 1923.

AST builds upon the well-established neuroscientific concept of the "body schema" - the brain's internal, simplified, and highly efficient dynamic model of the physical body, which is utilized to coordinate complex movement 2358. Graziano proposes that just as the brain must model the body to control it, it must also model its own abstract data-handling processes. Attention is a genuine, mechanistic process whereby electrochemical signals compete via lateral inhibition for limited neural resources 2460. To efficiently control and allocate this limited attention, the brain constructs an "attention schema" - a cartoonish, computationally lightweight internal model of what attention is and what it is currently focusing on 246061.

Crucially, because this internal model must be metabolically efficient and rapidly accessible, it deliberately strips away the incomprehensibly complex mechanistic details of synapses, neurons, and neurochemistry. It provides the cognitive system with a simplified, user-friendly narrative: I possess an intangible, subjective awareness of this object 23. Furthermore, AST posits that this schema evolved not just for self-control, but for social cognition - granting humans the "Theory of Mind" required to intuit and predict the attentional states of predators, prey, and peers 2325. When higher cognitive centers access this schema to generate a verbal report, the human subject earnestly claims to possess an ethereal consciousness. Thus, according to AST, consciousness is not a magical essence generated by the brain; it is the brain's highly useful, functionally inaccurate, schematic description of its own attention 2460.
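The two ingredients described above, competition by lateral inhibition and a simplified model of the outcome, can be sketched as a toy simulation. This is a minimal illustration under assumed dynamics, not Graziano's model: the update rule, the inhibition weight `0.2`, the step count, and both function names are hypothetical.

```python
from typing import Dict


def compete(
    signals: Dict[str, float],
    inhibition: float = 0.2,  # assumed strength of mutual suppression
    steps: int = 20,          # assumed number of update rounds
) -> Dict[str, float]:
    """Each signal is suppressed in proportion to the summed activity of its rivals."""
    activity = dict(signals)
    for _ in range(steps):
        total = sum(activity.values())
        activity = {
            name: max(0.0, value - inhibition * (total - value))
            for name, value in activity.items()
        }
    return activity


def attention_schema(activity: Dict[str, float]) -> str:
    """A lossy self-description: reports the focus, discards all mechanistic detail."""
    focus = max(activity, key=lambda name: activity[name])
    return f"I am aware of the {focus}"


if __name__ == "__main__":
    settled = compete({"apple": 1.0, "noise": 0.6, "hum": 0.5})
    print(settled)
    print(attention_schema(settled))
```

Note that `attention_schema` returns a claim about awareness without exposing any of the numbers that produced it, which is the sense in which the schema is useful yet mechanistically inaccurate.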

AST theoretically dissolves the hard problem by refusing to treat phenomenal experience as an actual physical or metaphysical reality. There is no "ghost in the machine"; there is only a biological machine that calculates that it contains a ghost because that specific calculation optimizes behavioral control 1963.

However, AST faces stringent philosophical limitations. Peer-reviewed critiques in journals such as the Journal of Consciousness Studies highlight that AST suffers from a fatal infinite regress regarding the fundamental nature of experience 19. Critics argue that an abstract information structure or an internal model cannot "conclude," "believe," or "misrepresent" anything without a subject present to experience that conclusion. As philosopher Raymond Tallis notes in his critique of such eliminativist models, "misrepresentation presupposes presentation" 19. For the attention schema to effectively trick the brain into feeling phenomenally aware, there must inherently be a phenomenal space in which the trick is actually experienced. AST successfully explains the mechanistic reasons why humans report being conscious, but it categorically fails to explain why the cognitive processing of the attention schema itself feels like anything 19.

The Radical Ontologies: Illusionism versus Modern Panpsychism

The exhaustion of standard physicalist models and the conceptual paradoxes of functionalist schemas like AST have pushed the philosophy of mind toward two diametrically opposed, yet increasingly prominent, extremes: Illusionism and Panpsychism.

Illusionism: The Meta-Problem of Consciousness

Illusionism, championed by prominent philosophers such as Keith Frankish, Daniel Dennett, and François Kammerer, represents the most radical, uncompromising form of physicalist eliminativism 598. Illusionists assert unambiguously that phenomenal consciousness - the rich, qualitative "what it is like" of subjective experience - does not actually exist in the physical universe 826. Instead, humanity is subject to a profound, systematically wired introspective illusion.

Illusionists argue that traditional physicalism is fundamentally incompatible with the existence of qualia. Because we know through modern neuroscience that the brain is an entirely physical, computational system, the presence of non-physical, ineffable phenomenal properties is an ontological impossibility. Therefore, rather than fruitlessly attempting to solve the Hard Problem (how physical matter miraculously generates qualia), science must redirect its focus entirely to the "Illusion Problem" or the "Meta-problem" (why physical matter generates the deeply held, false belief that qualia exist) 92365. Illusionists argue that the brain models its own reactive dispositions in a highly schematic way, leading to the misrepresentation of ordinary physical states as magical "quasi-phenomenal" states 26.

The primary scientific limitation of Illusionism is that it operates largely as a philosophical defense mechanism designed to protect strict physicalism, rather than as an empirically testable hypothesis capable of generating novel neurobiological predictions 920. Its philosophical limitations are even more severe. The premise is viewed by a vast majority of researchers as uniquely counter-intuitive, bordering on logical absurdity 96667. A genuine illusion requires a subjective observer to be deceived; an illusion is, by its very definition, an experience 2068. If a subject is suffering from the illusion of experiencing intense pain, the experience of the illusion still causes actual suffering.

Furthermore, as critics in the Journal of Consciousness Studies point out, Illusionism risks profound, potentially catastrophic ethical consequences (often framed around the "zombie problem"). If sentient experience is merely a cognitive glitch and no entity truly feels anything, the moral imperatives regarding human rights, animal welfare, and the future treatment of Artificial Intelligence lose their foundational grounding 2665.

Modern Panpsychism: The Omnipresence of Experience

Directly opposed to the eliminativist framework of Illusionism is the renaissance of modern Panpsychism, advanced by analytic philosophers such as Philip Goff and Galen Strawson 106970. Rejecting the premise that consciousness can mysteriously emerge from entirely unconscious, "dead" matter (the seemingly unsolvable problem of strong emergence), Panpsychism asserts that consciousness is a fundamental, ubiquitous property of the physical universe, existing alongside fundamental physical properties like mass, spin, and charge 970. In this view, even foundational physical entities - such as electrons or quarks - possess an unimaginably simple, infinitesimal spark of subjective experience 7071.

According to this framework, when matter combines in highly integrated, complex physical arrangements - such as the densely networked human brain - these micro-experiences amalgamate into the unified macro-consciousness we experience as human beings. Panpsychism is highly elegant because it entirely dissolves the Hard Problem; there is no need to explain how matter magically generates mind if matter has inherently possessed a mental aspect from the dawn of the universe 9.

However, Panpsychism is besieged by its own profound theoretical challenge, known as the "Combination Problem": how exactly do trillions of distinct, localized micro-subjects (the infinitesimally small experiences of individual particles) fuse together to form a single, unified, macro-subject (the human ego or the "I") 101170?

Recent developments documented in the Journal of Consciousness Studies (2024-2026) have proposed novel, sophisticated solutions to the Combination Problem. Prominent theorists suggest abandoning the rigid concept of the localized "self" or the individual "subject" entirely. If consciousness is defined not as individual, encapsulated minds, but as a ubiquitous, contentless background field of pure awareness, then the combination problem effectively vanishes 101172. In this advanced cosmopsychist framework, the human brain does not frantically combine trillions of micro-minds; rather, it acts as a highly complex biological filter or receiver. The brain localizes and fills the pre-existing, universal field of contentless awareness with specific, biologically generated thoughts, memories, and sensory data 1011.

This modern philosophical maneuver strikingly mirrors the ancient frameworks of Advaita Vedānta (which posits a foundational field of pure awareness) and the Buddhist rejection of the permanent self. It demonstrates a remarkable convergence between cutting-edge analytic philosophy of mind, theoretical physics, and classical non-Western metaphysics, suggesting that the ultimate answers to the nature of mind may require synthesizing disciplines that have been separated for centuries 711.

Comparative Architecture of Leading Theories

To synthesize the complex, rapidly evolving theoretical ecosystem of consciousness studies post-2025, the following table systematically compares the five leading paradigms across their core mechanisms, their approach to the Hard Problem, and their principal scientific and philosophical limitations.

| Theory Paradigm | Core Mechanism & Architecture | Approach to Qualia / The Hard Problem | Primary Scientific Limitations | Primary Philosophical Limitations |
|---|---|---|---|---|
| Integrated Information Theory (IIT) | Consciousness is mathematically identical to maximally irreducible integrated information ($\Phi$). The physical substrate relies on highly connected cause-effect structures, theorized to be the posterior cortical "hot zone" 446. | Property Dualism / Panpsychism: Radically reframes the Hard Problem. Qualia are the multi-dimensional geometry of integrated information. Treats subjective experience as an undeniable, foundational axiom 4446. | The $\Phi$ metric is computationally intractable for complex real-world systems. Failed key posterior synchronization predictions in the 2025 Cogitate trials 153756. | Leads to highly unintuitive panpsychist conclusions (e.g., inactive logic gates possessing high $\Phi$). Accused of methodological circularity and branded as pseudoscience by critics 344. |
| Global Neuronal Workspace Theory (GWT) | Consciousness is the global broadcasting of information across a distributed cortical network, triggered by attention and massive prefrontal "ignition" 1716. | Emergent Physicalism: Bypasses the Hard Problem to solve the Easy Problems. Assumes that qualia naturally and inevitably emerge when data becomes globally accessible to the entire system 17. | Failed to consistently demonstrate prefrontal ignition at stimulus offset in Cogitate trials. Researchers could not decode key high-resolution stimulus features from the prefrontal cortex 140. | "The Explanatory Gap." Explaining the precise mechanics of how data is broadcast across a brain does not explain why that biological broadcasting feels like a subjective experience 1738. |
| Attention Schema Theory (AST) | The brain constructs a highly simplified, computationally efficient internal control model of its own attention, creating the false cognitive narrative of subjective awareness 2360. | Eliminativist Functionalism: Dissolves the Hard Problem entirely. Qualia do not exist physically; they are a useful evolutionary misrepresentation computed by the brain strictly for behavioral control 2460. | Exceptionally difficult to design falsifiable empirical tests that distinguish the presence of an attention schema from other standard forms of predictive coding or metacognition 1958. | The Infinite Regress Problem: an "illusion" of an experience inherently requires a subjective space to be experienced in. An algorithm cannot "conclude" anything without phenomenality 19. |
| Illusionism | Phenomenal consciousness is a systematic, hardwired cognitive illusion. The biological brain consistently misrepresents ordinary physical states as having ineffable qualitative properties 965. | Strict Eliminativism: Denies the existence of the Hard Problem entirely. Replaces it with the "Meta-problem" - tasking science to explain why humans falsely believe they possess qualia 965. | Lacks testable neurobiological predictions. Operates primarily as an unfalsifiable philosophical defense mechanism designed to protect strict materialist physics 920. | Profoundly counter-intuitive (the actual experience of an illusion is still an experience). Risks severe ethical implications by undermining the reality of sentience and suffering across species 2668. |
| Panpsychism / Cosmopsychism | Consciousness is a fundamental, ubiquitous property of physical matter (or a universal field), existing intrinsically alongside mass, spin, and charge 970. | Fundamental Ontology: Solves the Hard Problem by eliminating the need for strong emergence. Matter and mind have co-existed since the origin of the universe 9. | Currently lacks empirical testability. Cannot be verified via standard neuroimaging, as it requires probing the interiority of fundamental particles or the fabric of spacetime 49. | "The Combination Problem." It is exceptionally difficult to explain how billions of micro-conscious particles fuse to create a unified human ego, though field theories attempt to resolve this 1070. |
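
The intractability noted in the IIT row can be made concrete with a simple counting argument. The sketch below is not an implementation of $\Phi$. It only counts how many candidate partitions an exhaustive search would have to evaluate, on the assumption that computing $\Phi$ involves finding the partition of a system that least reduces its integrated information. The helper names `count_bipartitions` and `bell_number` are hypothetical and introduced here purely for illustration.

```python
from math import comb


def count_bipartitions(n: int) -> int:
    """Ways to split n labelled units into two non-empty parts."""
    if n < 2:
        return 0
    # Each unit goes to side A or B (2**n), halve for the A/B symmetry,
    # then drop the one split that leaves a side empty.
    return 2 ** (n - 1) - 1


def bell_number(n: int) -> int:
    """Ways to partition n labelled units into any number of non-empty parts."""
    bell = [1]  # B(0) = 1
    for m in range(n):
        # Recurrence: B(m + 1) = sum over k of C(m, k) * B(k)
        bell.append(sum(comb(m, k) * bell[k] for k in range(m + 1)))
    return bell[n]


if __name__ == "__main__":
    for n in (3, 5, 10, 20):
        print(
            f"n={n:>2}  states={2 ** n:>8}  "
            f"bipartitions={count_bipartitions(n):>7}  "
            f"all partitions={bell_number(n)}"
        )
```

Even when the search is restricted to two-way cuts, the number of candidates roughly doubles with every added unit, and each candidate must itself be evaluated over a state space that also doubles. This is one way to see why exact calculations are usually confined to very small systems, and why the table lists tractability as a scientific limitation rather than an engineering detail.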

Conclusion and Future Trajectories

The period surrounding the 2025 Cogitate Consortium results marks an inflection point in the scientific pursuit of consciousness. The highly publicized failure of the leading neurobiological models (GWT and IIT) to fully predict the neural correlates of awareness has undermined the long-held assumption that a purely mechanistic, physicalist explanation of the mind is imminent 1237. This empirical crisis has forced a field-wide acknowledgment that the "hard problem" cannot be sidestepped or papered over by increasingly high-resolution fMRI maps of cognitive functions.

Consequently, the field is fracturing into distinct, radical trajectories that push the boundaries of scientific inquiry. One trajectory, characterized by Attention Schema Theory and Illusionism, seeks to preserve physicalism by eliminating the explanandum entirely, reducing subjective experience to a highly evolved, functional computational glitch 923. While mechanistically plausible and evolutionarily elegant, this approach demands a denial of phenomenal reality itself, which is ethically perilous because it threatens to undermine the moral frameworks that govern human, animal, and artificial intelligence rights 226.

The second major trajectory demands a ground-up rewrite of ontology. Spurred by the mathematical deductions of IIT and the philosophical elegance of Panpsychism, a growing faction of theorists is willing to view consciousness not as an accidental byproduct of neural activity, but as an intrinsic, fundamental feature of reality itself 927. This shift is driving a convergence between modern quantum physics, analytic philosophy of mind, and the classical, non-dual frameworks of Advaita Vedānta and Buddhist Yogācāra 1125. By framing consciousness as a foundational field of pure, contentless awareness - upon which the biological brain imposes structure, filters, and limits - researchers are beginning to formulate a "post-Galilean" science. This new paradigm seeks to incorporate qualitative interiority without violating the established physical laws of the universe 107.

As adversarial collaborations expand into new, more complex phases beyond 2026, it is increasingly evident that solving the mystery of consciousness will require far more than advanced functional neuroimaging. It will require a new epistemological framework - one capable of bridging the objective, third-person measurements of cognitive mechanics with the first-person reality of the phenomenal mind.