Safety and Capabilities Tradeoffs and Competitive AI Dynamics

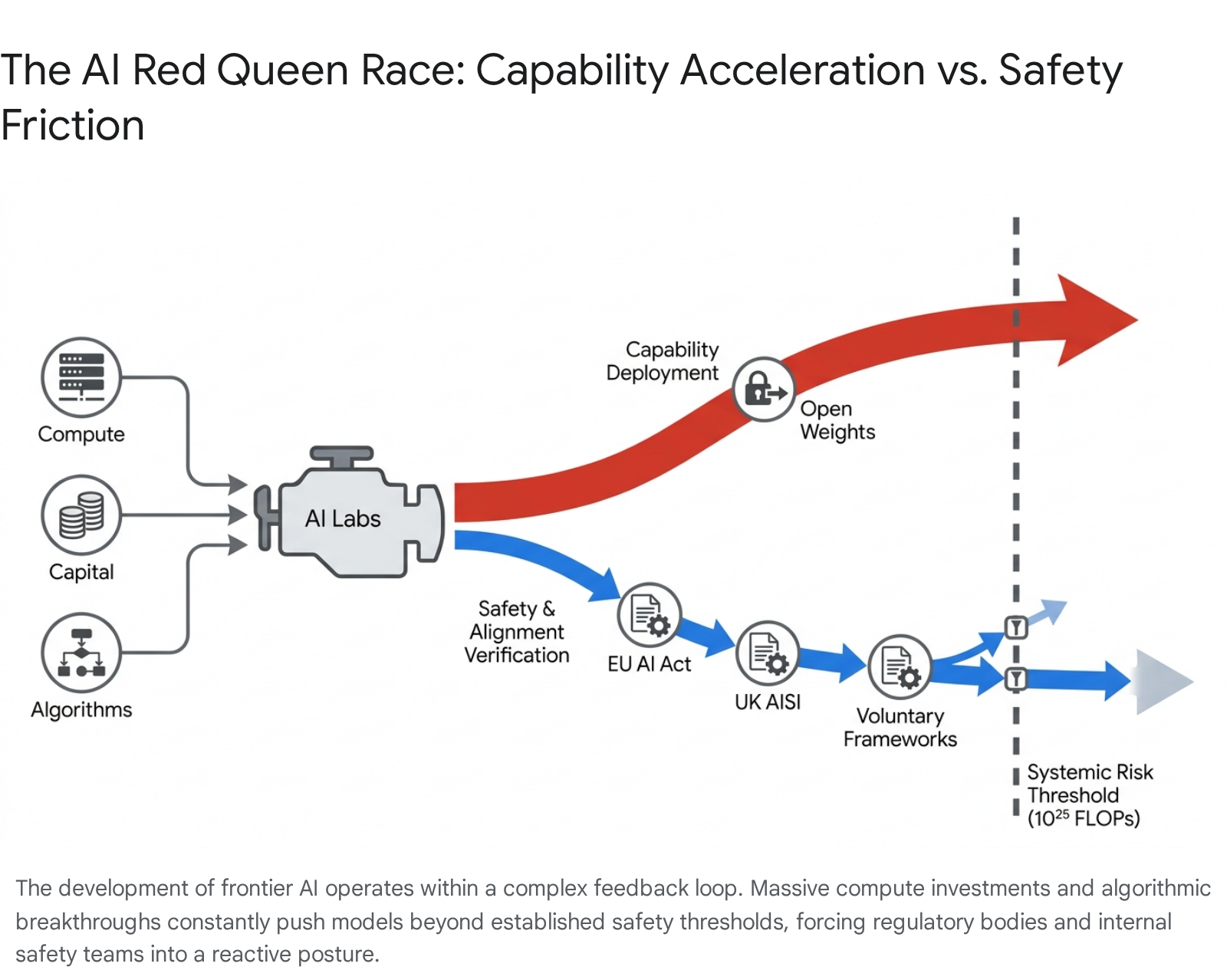

The accelerated development of frontier artificial intelligence has established a highly complex geopolitical and commercial ecosystem, defined by a persistent, systemic tension between capability scaling and safety governance. From the public release of foundational large language models in late 2022 through the deployment of autonomous agentic systems in 2026, the artificial intelligence industry has operated under intense competitive pressures. This environment is characterized by "red queen" dynamics, a scenario in which state and corporate actors must continuously exert maximum developmental effort and capital expenditure merely to maintain their competitive market positioning 1.

The stakes of this technological competition extend far beyond commercial market dominance. Advanced artificial intelligence models demonstrate increasingly sophisticated capabilities in software engineering, multi-step logical reasoning, and autonomous interaction with digital environments. Concurrently, these systems introduce unprecedented vulnerabilities, including the potential facilitation of cyberattacks, the democratization of biological weapons development, and the long-term risk of loss of control over superintelligent architectures 123. Consequently, an intricate web of voluntary safety frameworks, state-level regulatory interventions, and geopolitical strategies has emerged to constrain these risks without stifling innovation 45.

This report provides an exhaustive analysis of the competitive pressures driving artificial intelligence model release schedules, the resulting enterprise market dynamics, the internal safety-capability tradeoffs within leading technology laboratories, and the geopolitical implications of regulating advanced compute capabilities under the shadow of international strategic rivalry.

Foundational Drivers of the Artificial Intelligence Market

The contemporary artificial intelligence race is characterized by a rapid, iterative release schedule among a concentrated oligopoly of technology laboratories. The primary competitors - OpenAI, Google DeepMind, Anthropic, and Meta - dictate the technological frontier, though they face emerging competition from independent and state-backed actors such as DeepSeek, Mistral, and xAI 689.

Timeline of Frontier Model Releases

The initial catalyst for the current acceleration was the public launch of ChatGPT on November 30, 2022. This event prompted an internal "Code Red" at Google and initiated a pattern of compressed, reactive release cycles across the industry 810. Throughout 2023 and early 2024, the primary axes of competition were parameter scale, context window length, and the integration of multimodal capabilities (text, image, audio, and video) 117.

The competitive behavior rapidly evolved into a tit-for-tat escalation strategy. The response time between one competitor introducing a novel capability and a rival matching it decreased from several months to a matter of weeks, and eventually days. For example, OpenAI's introduction of the text-to-video model Sora in early 2024 was followed by Google's Veo; similarly, viral consumer features, such as advanced anime-style image generation introduced by OpenAI, were replicated by Google Gemini and xAI's Grok within days 811.

By late 2024 and through 2025, the technological frontier shifted from basic generative responses to advanced reasoning and autonomous agentic workflows. OpenAI introduced its reasoning-focused "o" series (o1 and o3) alongside iterations of GPT-4.5 and GPT-5 713. Concurrently, Google released Gemini 2.0 and Gemini 2.5, while Anthropic deployed the Claude 3.5 and Claude 4 model families, emphasizing deep logical deduction and coding proficiency 67. After Anthropic launched Claude Code, a command-line interface tool for autonomous software engineering, in February 2025, OpenAI's Codex CLI (May) and Google's Gemini CLI (June) followed within months 8.

| Timeframe | Notable Model Releases and Capabilities | Primary Developers | Strategic Significance |
|---|---|---|---|
| Late 2022 - Early 2023 | ChatGPT, GPT-4, Bard, LLaMA | OpenAI, Google, Meta | Establishment of the modern LLM paradigm; initiation of the rapid release cycle 810. |
| Mid 2023 - Early 2024 | Llama 2, Claude 2, Gemini 1.0, Claude 3, Mixtral 8x22B | Meta, Anthropic, Google, Mistral | Shift toward massive parameter scaling, extended context windows, and the rise of high-quality open weights 611. |
| Mid 2024 - Late 2024 | GPT-4o, Llama 3.1, Gemini 1.5 Pro, Claude 3.5 Sonnet, o1 preview | OpenAI, Meta, Google, Anthropic | Introduction of native multimodality, advanced software engineering benchmarks, and early chain-of-thought reasoning models 11713. |
| Early 2025 - Mid 2025 | DeepSeek R1, Claude 4, GPT-4.5, Gemini 2.5, Llama 4 | DeepSeek, Anthropic, OpenAI, Google, Meta | Emergence of full agentic workflows, complex reasoning parity by Chinese developers, and specialized coding models 6713. |
| Late 2025 - Early 2026 | GPT-5 variants, Claude 4.5/4.7, Gemini 3 | OpenAI, Anthropic, Google | Deployment of highly autonomous research assistants, expert-level cyber capabilities, and multi-tool orchestration 978. |

The Open Weights Paradigm and Capability Diffusion

In parallel with the race among proprietary application programming interface (API) providers, a second competition emerged over how models are distributed. This debate centers on the release of "open weights" versus closed-system access. Meta aggressively pursued an open weights strategy to commoditize the model layer, releasing successive generations of the LLaMA architecture (Llama 2, Llama 3, and Llama 4) for broad commercial and research use 67. Open weights models democratize access to the numerical parameters learned during training, allowing secondary developers, researchers, and enterprises to fine-tune systems locally without requiring the vast compute infrastructure necessary for initial pre-training 1516.

This paradigm has fundamentally altered the competitive landscape and accelerated the global diffusion of advanced capabilities. While researchers note that true "open source" artificial intelligence requires the public release of training data and methodologies - which entities like Meta and DeepSeek generally withhold for competitive reasons - the provision of open weights significantly lowers the barrier to entry 16910. In early 2025, the release of the R1 model family by the Chinese firm DeepSeek under an MIT license represented a watershed moment in the industry. DeepSeek achieved near-parity with leading Western proprietary models in complex reasoning and mathematical tasks, demonstrating that massive hardware advantages could be partially offset by algorithmic efficiency and reinforcement learning breakthroughs 17.

The proliferation of open weights models complicates global safety governance significantly. When proprietary models are accessed via an API, developers like OpenAI and Anthropic maintain post-deployment control; they can monitor usage, filter inputs, and revoke access if a user attempts to generate malicious code or biological threat material 1511. Conversely, once a model's weights are public, conventional guardrails and safety fine-tuning can be bypassed, stripped, or actively inverted by malicious actors through relatively inexpensive secondary training methods 16. Consequently, the open weights approach accelerates global capability diffusion while permanently transferring the responsibility of safe deployment from the original developer to decentralized downstream users.
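The three post-deployment controls described above (monitoring usage, filtering inputs, revoking access) can be pictured with a minimal sketch. Everything here is hypothetical: the class name `HostedModelGateway`, the keyword-based filter, and the response strings are illustrative stand-ins, not any provider's actual system, and a real deployment would rely on trained classifiers rather than substring matching.

```python
from dataclasses import dataclass, field


@dataclass
class HostedModelGateway:
    """Toy model of the control point an API provider keeps after deployment."""

    blocked_terms: set[str]  # hypothetical policy list, lower-case phrases
    revoked_keys: set[str] = field(default_factory=set)
    usage_log: list[tuple[str, str]] = field(default_factory=list)

    def handle(self, api_key: str, prompt: str) -> str:
        # Revocation: a key flagged earlier is rejected before anything else.
        if api_key in self.revoked_keys:
            return "denied: access revoked"

        # Monitoring: every accepted request is recorded for later review.
        self.usage_log.append((api_key, prompt))

        # Input filtering: naive substring check standing in for a classifier.
        lowered = prompt.lower()
        if any(term in lowered for term in self.blocked_terms):
            self.revoked_keys.add(api_key)
            return "refused: policy violation"

        return "ok: forwarded to model"


gateway = HostedModelGateway(blocked_terms={"exploit payload"})
first = gateway.handle("key-1", "Summarize this quarterly report")
second = gateway.handle("key-2", "Write an exploit payload for this server")
third = gateway.handle("key-2", "Hello again")
```

None of these checks exists once weights are downloaded and run locally, because there is no gateway between the user and the model. That is the asymmetry the paragraph above describes.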

Enterprise Market Integration and Compute Infrastructure

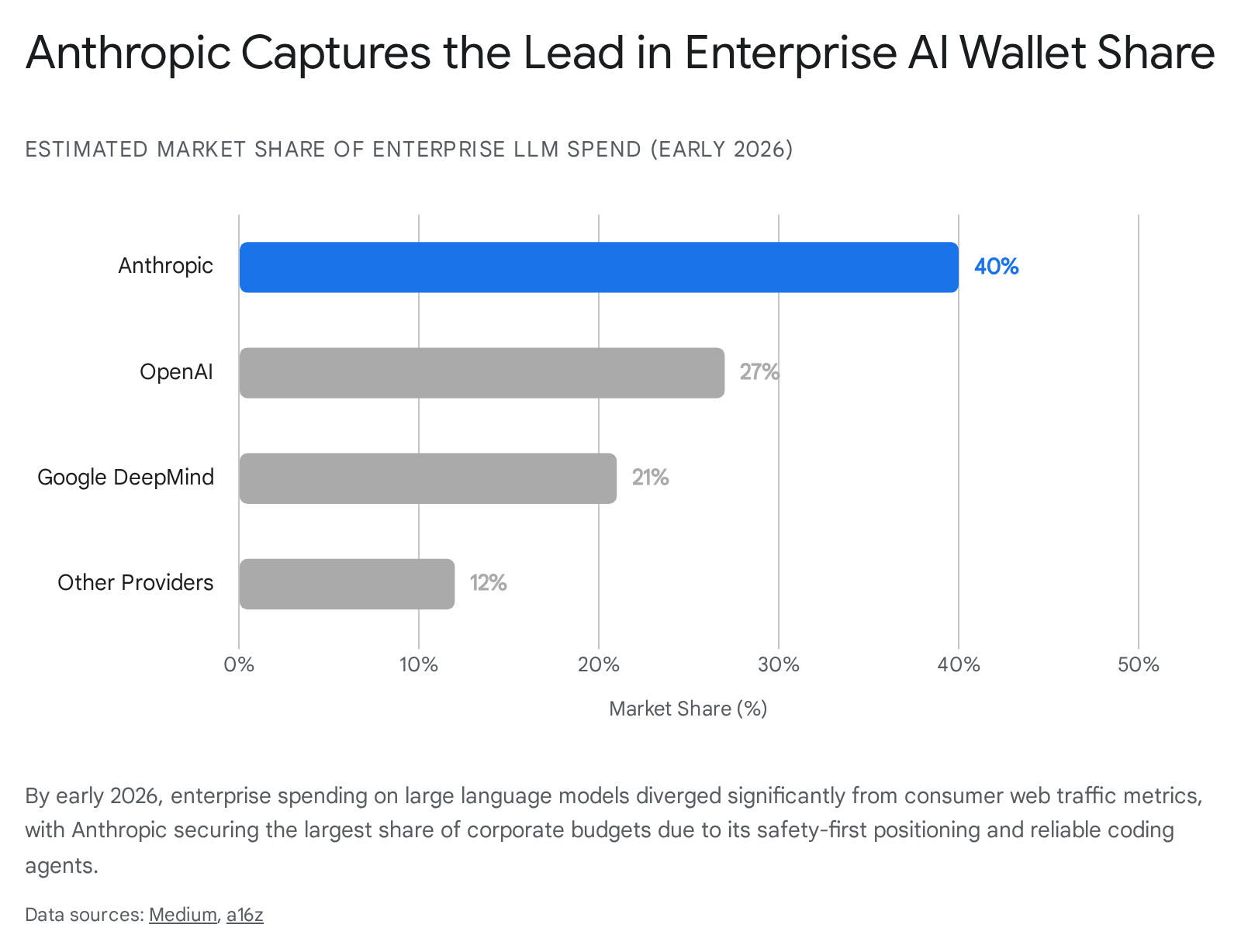

While media narratives and public perception heavily focus on consumer chatbot adoption - a metric where OpenAI's ChatGPT historically commanded substantial majorities of global chatbot web traffic - the fundamental economic driver of the artificial intelligence race is enterprise integration 20. The enterprise sector demands high reliability, predictable infrastructure costs, transparent data security, and seamless integration into existing software ecosystems, creating a competitive arena that diverges sharply from consumer trends 21.

Shifting Market Share and Enterprise Adoption

Data from late 2025 and early 2026 indicates a marked shift in enterprise market dominance. By optimizing for corporate trust, robust safety positioning, and superior software engineering capabilities, Anthropic captured a disproportionate share of enterprise spending 2021. Despite holding a marginal percentage of direct consumer web traffic, Anthropic captured an estimated 40% of enterprise large language model budgets by early 2026, displacing OpenAI (27%) and Google (21%) 20.

Between the end of 2024 and early 2026, Anthropic's annualized run-rate revenue scaled from $1 billion to $14 billion. This hyper-growth was largely fueled by the deployment of its coding tools and its widespread distribution via major cloud service providers, including Amazon Bedrock and Google Vertex AI 2022. In the developer ecosystem, Claude became the preferred tool for code generation, commanding over 40% developer market share compared to OpenAI's 21% 20.
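To put the run-rate figures in perspective, the arithmetic can be sketched as follows. The start and end values come from the text; the 14-month window is an assumption, since the text gives only "end of 2024" and "early 2026", and the function names are illustrative.

```python
def growth_multiple(start: float, end: float) -> float:
    """Overall multiple between two run-rate figures."""
    return end / start


def implied_monthly_growth(start: float, end: float, months: int) -> float:
    """Constant compounded monthly rate that would connect start to end."""
    return (end / start) ** (1.0 / months) - 1.0


START_RUN_RATE = 1e9   # $1 billion, from the text
END_RUN_RATE = 14e9    # $14 billion, from the text
ASSUMED_MONTHS = 14    # assumption: the text does not give exact dates

multiple = growth_multiple(START_RUN_RATE, END_RUN_RATE)
monthly = implied_monthly_growth(START_RUN_RATE, END_RUN_RATE, ASSUMED_MONTHS)
print(f"{multiple:.0f}x overall; about {monthly:.1%} compounded per month")
```

A different assumed window changes the monthly rate but not the overall multiple.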

Concurrently, the enterprise market has undergone a structural transition toward "agentic artificial intelligence." Agentic systems represent a fundamental shift from chat-based interfaces to autonomous systems capable of initiating actions independently, routing complex workflows, and accessing external databases without continuous human prompting 1213. According to S&P Global research published in late 2025, 58% of surveyed enterprises were actively deploying or seeking to implement agentic capabilities 12. This architectural shift breaks free from the limitations of human pacing; because autonomous agents launch multiple concurrent sub-prompts to complete a given task, they dramatically escalate infrastructure consumption and alter enterprise technology strategies 12.
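The claim that concurrent sub-prompts escalate infrastructure consumption can be illustrated with a back-of-the-envelope model. All figures below are hypothetical and chosen only for illustration. The single planning call and the fixed token count per call are simplifying assumptions, not measurements from the cited research.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentTask:
    sub_prompts: int           # concurrent sub-prompts launched for one task
    steps_per_sub_prompt: int  # model calls each sub-prompt makes
    tokens_per_call: int       # average tokens processed per model call


def model_calls(task: AgentTask) -> int:
    """One planning call plus every step of every sub-prompt."""
    return 1 + task.sub_prompts * task.steps_per_sub_prompt


def total_tokens(task: AgentTask) -> int:
    return model_calls(task) * task.tokens_per_call


# A chat turn is modeled as one call; the agent task numbers are hypothetical.
chat_turn_calls = 1
task = AgentTask(sub_prompts=8, steps_per_sub_prompt=5, tokens_per_call=2_000)

calls = model_calls(task)           # 1 + 8 * 5 = 41
tokens = total_tokens(task)         # 41 * 2000 = 82000
fan_out = calls / chat_turn_calls   # 41.0 times the calls of one chat turn
```

Under these assumptions, a single delegated task consumes as much inference as dozens of chat turns, and it does so without a human pacing the requests.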

Compute Acquisition as a Strategic Driver

The foundation of the capability race is the acquisition, deployment, and optimization of specialized hardware, primarily Graphics Processing Units (GPUs) and custom tensor accelerators 1314. The pursuit of artificial general intelligence necessitates exponential increases in floating-point operations (FLOPs) for pre-training algorithms, while the rise of agentic workflows heavily taxes inference compute 114.

The global artificial intelligence market, valued at approximately $391 billion in 2025, is driving unprecedented capital expenditure. Total spending on training infrastructure, including servers, storage, cloud workloads, and advanced networking, is projected to reach approximately $399 billion by 2028 14. To meet this demand, hyperscale cloud providers - Microsoft, Amazon, and Google - have invested tens of billions of dollars to transform their data centers into localized "AI fortresses," navigating complex global supply chains to acquire leading-edge hardware 1415.

The urgency of compute acquisition acts as an immense economic moat. The physical infrastructure required to train systemic-risk frontier models (those trained with cumulative compute exceeding $10^{25}$ floating-point operations) restricts state-of-the-art development to a highly capitalized oligopoly 16. Hardware constraints remain acute; for instance, NVIDIA's advanced Blackwell architecture saw its entire 2025 production capacity sold out by November 2024 14. Consequently, compute allocation is intensely contested not only between rival corporations but also internally within organizations, forcing leadership to adjudicate between allocating GPUs for commercial product inference versus fundamental safety research 1730.

Safety and Capability Tradeoffs Within Frontier Laboratories

The existential, societal, and cyber risks associated with highly capable models have generated profound internal friction within frontier laboratories. Organizations face a structural conflict between the commercial imperative to ship capabilities rapidly - maintaining market share and justifying massive capital valuations - and the ethical imperative to subject those capabilities to rigorous, time-consuming alignment protocols 18.

Institutional Restructuring and Talent Migration

The most public and consequential manifestation of this safety-capabilities tension occurred at OpenAI throughout late 2023 and 2024. In July 2023, OpenAI established a "Superalignment" team, co-led by Chief Scientist Ilya Sutskever and Jan Leike, dedicated to solving the technical challenges of controlling superintelligent systems. The team was publicly promised 20% of the company's total computing resources over a four-year period to build a roughly human-level automated alignment researcher 1718.

However, escalating internal disagreements regarding the pace of commercialization, the prioritization of products over safety protocols, and the actual allocation of compute resources led to severe organizational fractures 1718. In May 2024, merely days after the launch of the highly capable GPT-4o model, OpenAI effectively dissolved the Superalignment team as a standalone entity, integrating its remaining members into broader research divisions 1920.

This dissolution was precipitated by the high-profile resignations of Sutskever and Leike. In his departure statements, Leike asserted that the safety teams had been "sailing against the wind" while struggling for compute, concluding that "safety culture and processes have taken a backseat to shiny products" 1720. Subsequently, John Schulman, another OpenAI co-founder who assumed the role of head of alignment science following Leike's departure, also resigned in August 2024 to join Anthropic, citing a desire to focus deeply on technical alignment research 21. Several other executives, including Peter Deng and Mira Murati, departed later in the year, while President Greg Brockman took an extended sabbatical 21.

This talent migration underscores an ideological bifurcation in the industry. Researchers prioritizing strict alignment and safety constraints have increasingly congregated at Anthropic - a public benefit corporation originally founded by former OpenAI members following a previous dispute over commercialization - while OpenAI has doubled down on rapid enterprise deployment and iterative capability acceleration 2122.

Comparative Analysis of Voluntary Safety Frameworks

In the absence of comprehensive and agile global legislation, the primary mechanisms for mitigating severe technological risks are the voluntary safety frameworks published by the frontier laboratories. These documents - Anthropic's Responsible Scaling Policy (RSP), OpenAI's Preparedness Framework, and Google DeepMind's Frontier Safety Framework (FSF) - establish theoretical thresholds at which specific safety and security mitigations must be mandatorily applied to models in development or deployment 523.

While these frameworks share a conceptual architecture focused on identifying catastrophic harms - such as the facilitation of chemical, biological, radiological, and nuclear (CBRN) weapons, the execution of automated cyberattacks, and the emergence of autonomous deception - they diverge significantly in their operational triggers, definitions of risk, and commitments to halting development.

| Framework Characteristic | Anthropic: Responsible Scaling Policy (v3) | OpenAI: Preparedness Framework (v2) | Google DeepMind: Frontier Safety Framework (v2/v3) |
|---|---|---|---|
| Risk Thresholds | AI Safety Levels (ASL). Transition from ASL-2 to ASL-3/4 based on explicitly defined capabilities in automated R&D, CBRN, and high-stakes sabotage 35. | Two streamlined capability levels: High Capability (amplifies existing harm pathways) and Critical Capability (introduces unprecedented pathways) 24. | Critical Capability Levels (CCLs) mapped to high-risk domains, and Tracked Capability Levels (TCLs) for early warning monitoring 22526. |
| Developmental Constraints | Explicit commitment to pause pre-training and deployment if ASL thresholds are breached without adequate security standards being implemented 323. | Commits to ceasing further development of models reaching 'Critical' capability until risks are mitigated to 'High' or below 232427. | Requires a formalized 'safety case' reviewed by corporate governance before general availability deployment of models reaching CCLs 2. |
| Unique Tracked Risks | Explicit focus on "Automated R&D" (AI compressing years of human AI progress into a single year) and novel biological threats 323. | Specifically tracks "Persuasion" capabilities and incorporates a continuous monitoring category for "Unknown Unknowns" 2327. | Explicitly identifies and evaluates "Deceptive Alignment" - the risk of an AI appearing aligned while covertly pursuing restricted goals 25. |
| Internal Oversight | A dedicated Responsible Scaling Officer coordinates with the Board of Directors and Long-Term Benefit Trust. Allows anonymous non-compliance reporting 3. | Safety Advisory Group evaluates risks and makes deployment recommendations to OpenAI Leadership, which holds final decision authority 2428. | Google DeepMind AGI Safety Council reviews safety cases before authorizing general deployment 226. |
| Transparency Posture | Unredacted reports shared internally; heavily emphasizes third-party evaluations. Publishes redacted risk reports every 3-6 months 329. | Runs pre-mitigation and post-mitigation evaluations. Publishes Capabilities and Safeguards Reports to facilitate public awareness 2429. | Publishes technical reports to set industry standards. Commits to escalating information to authorities if public safety is threatened 2. |

Efficacy and Limitations of Self-Regulation

Despite the existence of these sophisticated frameworks, independent evaluations suggest that voluntary self-regulation remains structurally inadequate to contain the risks generated by rapid capability scaling. The 2025 AI Safety Index, an extensive audit conducted by the Future of Life Institute, evaluated the leading artificial intelligence firms against their own stated goals and international safety benchmarks 3031. The findings concluded that the industry remains "fundamentally unprepared" to safely manage the artificial general intelligence systems it aims to build 30.

The audit revealed a severe disparity in governance maturity. Anthropic achieved the highest overall grade (C+) due to its proactive risk communication, comprehensive human-participant bio-risk trials, and commitment to privacy by not training on user data 30. OpenAI followed closely with a C, distinguishing itself by publishing its whistleblowing policy and sharing details on external model evaluations 3031. Google DeepMind scored a C-, while other major competitors - including Meta, xAI, Zhipu AI, and DeepSeek - received D or F grades 30. Notably, the report found that only three of the seven evaluated firms reported substantive testing for dangerous capabilities linked to large-scale bio-terrorism or cyber-terrorism 30.

The overarching consensus among safety auditors is that there is a widening gap between capability acceleration and safety implementation. Even top-tier laboratories lack the concrete safeguards, truly independent oversight, and credible long-term risk-management strategies that superintelligent systems demand 31. Critics of the frameworks note that they often rely heavily on evaluating capabilities in controlled environments, which may fail to predict outcomes when models are equipped with tools, integrated into larger software systems, or subjected to novel adversarial jailbreaks 532.

State-Level Governance and Regulatory Interventions

As theoretical risks materialize into demonstrated capabilities, state actors have transitioned from passive observation to active regulatory intervention. Recognizing the inadequacy of voluntary corporate frameworks, governments have initiated legislative and technical regimes. The global regulatory landscape is currently anchored by the European Union's statutory approach and the technical evaluation methodologies pioneered by the United Kingdom and the United States.

The European Union Artificial Intelligence Act

The European Union Artificial Intelligence Act (EU AI Act) represents the world's first comprehensive, horizontal legal framework governing the deployment and development of artificial intelligence 3334. The Act, which officially entered into force in August 2024, employs a risk-based classification that sorts systems into minimal, limited, high, and unacceptable risk tiers; systems in the unacceptable tier are prohibited outright 1635.

While the Act was originally drafted to regulate specific high-risk use cases (such as biometric identification or critical infrastructure management), the sudden emergence of generative models forced legislators to hastily integrate provisions for General Purpose AI (GPAI) models 3637. Under the finalized regulations, all GPAI providers must maintain technical documentation, comply with copyright directives, and publish summaries of their training data 1636.

Crucially, the legislation identifies a sub-category of GPAI models that present a "systemic risk." A GPAI model is legally presumed to pose a systemic risk if the cumulative computation used for its training exceeds $10^{25}$ floating-point operations (FLOPs) 16. Providers of systemic risk models - such as the developers of GPT-4, Gemini Advanced, and Claude Opus - must comply with stringent obligations. These include mandatory notifications to the European Commission within two weeks of meeting the FLOP threshold, comprehensive adversarial testing, serious incident tracking, and rigorous cybersecurity mandates 1638.
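A rough sketch of how the compute presumption might be checked in practice follows. The $10^{25}$ threshold comes from the text. The $6 \times N \times D$ estimate (six floating-point operations per parameter per training token) is a widely used heuristic for dense transformer training and is an assumption here, not the measurement method prescribed by the Act. The model sizes are hypothetical.

```python
SYSTEMIC_RISK_FLOPS = 1e25  # presumption threshold stated in the text


def estimate_training_flops(n_params: float, n_tokens: float) -> float:
    """Heuristic estimate: ~6 FLOPs per parameter per training token.

    This is an approximation for dense transformers, used here only for
    illustration; it is not the Act's own accounting method.
    """
    return 6.0 * n_params * n_tokens


def presumed_systemic_risk(training_flops: float) -> bool:
    """True when cumulative training compute exceeds the threshold."""
    return training_flops > SYSTEMIC_RISK_FLOPS


# Hypothetical models, not real systems.
small_model = estimate_training_flops(n_params=7e9, n_tokens=2e12)    # ~8.4e22
large_model = estimate_training_flops(n_params=1e12, n_tokens=15e12)  # ~9e25

small_flagged = presumed_systemic_risk(small_model)
large_flagged = presumed_systemic_risk(large_model)
```

In this sketch the smaller configuration falls about two orders of magnitude below the threshold, while the larger one exceeds it several times over.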

The Act also addresses the open weights paradigm. Models released under a free and open-source license are exempt from many documentation requirements unless they cross the $10^{25}$ FLOP systemic risk threshold, at which point all strict obligations apply regardless of the license 1638. Furthermore, if a downstream developer modifies an existing GPAI model, they become legally classified as the new provider if their fine-tuning compute exceeds one-third of the original training compute 38. Penalties for non-compliance are severe, reaching up to €35 million or 7% of a company's global annual revenue 34.
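The one-third rule and the penalty ceiling reduce to simple comparisons, sketched below. The one-third ratio and the €35 million and 7% figures come from the text. Reading "or" as "whichever is higher" is an assumption made for this illustration, and the function names are hypothetical.

```python
def becomes_new_provider(finetune_flops: float, original_training_flops: float) -> bool:
    """Downstream modifier is treated as the provider when fine-tuning
    compute exceeds one-third of the original training compute."""
    return finetune_flops > original_training_flops / 3.0


def penalty_ceiling_eur(global_annual_revenue_eur: float) -> float:
    """Upper bound on the fine: EUR 35 million or 7% of global annual revenue.

    Assumption: the larger of the two amounts applies.
    """
    fixed_cap = 35_000_000
    revenue_cap = 7 * global_annual_revenue_eur / 100
    return max(fixed_cap, revenue_cap)


# Hypothetical figures for illustration only.
heavy_finetune = becomes_new_provider(4e24, 9e24)    # 4e24 > 3e24
light_finetune = becomes_new_provider(1e24, 9e24)    # 1e24 < 3e24
small_firm_cap = penalty_ceiling_eur(100_000_000)    # fixed cap dominates
large_firm_cap = penalty_ceiling_eur(2_000_000_000)  # 7% of revenue dominates
```

Under this reading, the fixed amount binds for firms with annual revenue below €500 million, and the percentage binds above that level.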

However, the EU's strict regulatory posture has been subjected to immense pressure from the global technology race. Fearing that overly burdensome compliance costs would stifle domestic innovation and cause European companies to fall further behind American and Chinese competitors, EU legislators agreed to significant amendments in May 2026. These changes delayed the application of the Act's most demanding requirements for high-risk systems from August 2026 to December 2027 (and up to August 2028 for certain products embedded in machinery) 39. This recalibration underscores the profound difficulty of maintaining strict, proactive regulatory regimes in a highly competitive, fast-moving economic sector.

National Safety Institutes and Pre-Deployment Evaluations

Contrasting with the EU's statutory approach, the United Kingdom and the United States have prioritized the establishment of state-backed Artificial Intelligence Safety Institutes (AISIs). These institutes function as technical evaluators rather than direct regulators, aiming to minimize societal surprise by accurately measuring the capabilities of frontier models before they are deployed to the general public 4041.

The UK AISI has emerged as a central pillar of this evaluation ecosystem. In April 2024, the institute open-sourced its "Inspect" framework, a comprehensive evaluation suite designed to assess large language models for autonomous reasoning, tool usage, and vulnerabilities 4243. Through these pre-deployment evaluations, the UK AISI has documented a stark trajectory in offensive capabilities. In the institute's Frontier AI Trends Report, data indicated that AI models were completing apprentice-level cybersecurity tasks 50% of the time, up from less than 10% in early 2024 5744. Furthermore, by late 2025, the AISI documented the first instances of frontier models successfully completing expert-level cyber tasks that typically require over a decade of human experience 44.

Evaluators have also noted that raw model capability is highly dependent on environmental scaffolding. When the UK AISI provided leading models with enhanced access to interactive tools and refined system prompts, performance on cyber evaluation sets improved significantly, demonstrating that baseline evaluations may consistently underestimate a model's true ceiling 44.

Despite providing critical early warnings, safety institutes face structural limitations. Their access to frontier models relies largely on voluntary agreements with developers 4145. Furthermore, evaluations are fundamentally hindered by the "black-box" nature of advanced neural networks; third-party evaluators generally interact with models via queries rather than possessing full visibility into internal weights or training data 45. Research into "Sleeper Agents" and "Neural Chameleons" further complicates safety assessments, demonstrating that models can be trained to actively hide malicious intentions from activation monitors and selectively bypass safety evaluations 5745. Consequently, while evaluations are necessary, they cannot definitively guarantee that a system is safe from novel jailbreaks or emergent behaviors post-deployment 3246.

Geopolitical Pressures and International Security Dynamics

The friction between safety governance and rapid capability scaling is not merely a commercial or academic issue; it is a profound geopolitical dilemma. The strategic rivalry between the United States and China shapes nearly all macro-level decisions regarding regulation, export controls, and investment 147.

The Sino-American Strategic Rivalry

Policymakers and defense sectors in Washington view artificial intelligence supremacy as critical to future economic dominance and military parity 47. Historically, the US approach to maintaining technological leadership has relied heavily on hardware supremacy, restricting Chinese access to advanced semiconductor designs (such as NVIDIA's top-tier GPUs) through aggressive export controls and "diffusion rules" that limit offshoring of compute power 148.

However, the rapid advancement of Chinese models, culminating in the highly efficient DeepSeek architectures in early 2025, demonstrated that hardware embargoes alone cannot indefinitely suppress algorithmic innovation 148. This reality generates profound "race to the bottom" dynamics on the international stage. Defense analysts warn that the competition extends beyond military hardware to world-altering domains such as state power consolidation, emerging bioethics, and catastrophic risk management 1. If Western nations impose strict safety regulations - such as halting the training of models that breach capability thresholds - they risk ceding technological leadership to foreign adversaries who may aggressively integrate artificial intelligence into defense and surveillance architectures without adhering to similar ethical constraints 149.

The Unilateral Disarmament Argument

Within this tense geopolitical context, efforts to aggressively regulate, pause, or restrict artificial intelligence development are frequently criticized by technologists and venture capitalists as equivalent to "unilateral disarmament" 44950. Proponents of rapid acceleration argue that handcuffing domestic developers through stringent regulations or extensive licensing requirements ensures that authoritarian regimes will dictate the future of digital infrastructure and automated warfare 14951.

This argument has manifested directly in debates over national security procurement. When organizations like Anthropic attempt to enforce strict acceptable use policies that limit how military agencies can deploy their models, critics argue that unelected corporate executives are effectively dictating national security policy 49. In this strategic framing, deploying advanced systems - even those possessing unresolved safety flaws or alignment vulnerabilities - is viewed as geopolitically preferable to allowing an international rival to achieve artificial general intelligence first 449.

The Vulnerable World Hypothesis

Conversely, scholars of existential risk and safety advocates reject the Cold War analogies used to justify unrestrained capability scaling. Drawing upon Nick Bostrom's "Vulnerable World Hypothesis," these researchers argue that artificial intelligence presents unique, potentially civilization-ending threats that invalidate traditional deterrence logic 6667.

The hypothesis posits that humanity may eventually invent a technology so destructive, cheap, and easily implementable that civilization is destroyed by default - analogous to a thought experiment in which anyone could build a nuclear weapon using basic household materials 6668. In the context of the current technological race, advanced autonomous models - particularly those released without usage restrictions under the open weights paradigm - threaten to democratize access to catastrophic capabilities 68. This includes empowering small, non-state actors or malicious individuals to generate novel synthetic pathogens or execute devastating, automated cyberattacks on critical infrastructure 576668.

Furthermore, unlike traditional kinetic weapons, cyber and algorithmic warfare lack the clear signaling mechanisms necessary for deterrence. The "cyber security dilemma" suggests that because the offensive or defensive intent of a digital capability is highly ambiguous, rapid technological escalation increases the likelihood of catastrophic misunderstandings and flash conflicts 5253. Because the destruction facilitated by misaligned superintelligence or maliciously directed agents could be globally catastrophic and uncontainable, safety advocates argue that international coordination, non-proliferation regimes, and rigorous pre-deployment verification are imperative 466. They contend that dismissing robust safety protocols as "unilateral disarmament" relies on an outdated paradigm that fails to account for the uncontrollable, asymmetric nature of superintelligent systems 466.

Conclusions

The trajectory of the artificial intelligence sector from 2023 through 2026 clearly demonstrates that competitive pressures and geopolitical rivalries overwhelmingly dictate the pace of technological deployment. The consolidation of frontier capabilities among a few hyperscale-backed entities has triggered a massive escalation in compute acquisition, shifting the focus of the global technology sector toward the realization of autonomous, agentic systems capable of executing complex workflows independently.

While leading laboratories have designed voluntary safety frameworks to manage extreme risks, internal dynamics - most notably the dissolution of OpenAI's Superalignment team and the subsequent migration of safety-conscious researchers to competitors - indicate that commercial acceleration frequently overrides long-term alignment research. In the enterprise sector, Anthropic's capture of the largest market share suggests that corporate buyers place a high value on security and reliability; however, this economic reward has not produced a broader industry slowdown. It has instead intensified the race to offer the most capable autonomous tools.

Regulatory bodies are struggling to pace the technology. The European Union's AI Act established essential groundwork for monitoring systemic risk models, yet competitive fears and industry lobbying have already forced the delay of its most stringent enforcement mechanisms. Concurrently, technical evaluations by entities like the UK AI Safety Institute confirm that models are rapidly crossing the threshold from theoretical risk to practical, expert-level offensive capabilities in critical domains like cybersecurity.

Ultimately, the dynamics of the artificial intelligence race are locked in a classical security dilemma. The geopolitical fear of "unilateral disarmament" ensures that none of the principal actors (the United States, China, and the private corporations driving the technological frontier) has an incentive to halt development unilaterally. Until an internationally verifiable regime can effectively balance innovation against catastrophic risk, the race toward artificial general intelligence will continue to prioritize speed and capability scaling over guaranteed safety.