Recommendation mechanics and reach factors of the X algorithm in 2026

The infrastructure dictating content visibility on the X platform underwent a fundamental architectural transition in January 2026. Replacing a legacy rules-based machine learning framework with a transformer-based recommendation engine powered by xAI's Grok model, the platform altered how hundreds of millions of daily posts are sourced, ranked, and distributed 123. The deployment of this new system was accompanied by a public open-source release of the underlying code under the repository xai-org/x-algorithm, providing technical visibility into the mechanism of the algorithmic timeline while simultaneously obscuring the exact mathematical weights governing engagement values 45.

This comprehensive analysis details the technical functioning of the X algorithm in 2026. It examines the underlying hardware and software infrastructure, the specific engagement weights that drive impressions, the structural advantages provided to verified accounts, the mechanics of feed sorting, and the broader societal and regulatory implications of the platform's visibility rules. The analysis synthesizes engineering documentation, empirical studies, and regulatory actions to present a complete view of how information is curated on the platform.

Architectural Infrastructure and the Grok Implementation

The legacy X recommendation system relied on heavily hand-engineered features, heuristic filters, and a relatively small 48-million-parameter neural network 23. The 2026 update eliminated the majority of these manual rules in favor of a centralized large language model architecture 567. The core engine operates on a Rust and Python-based codebase utilizing a JAX-based transformer derived from the Grok language model family, trained on the massive Colossus 2 supercluster 678. This transition indicates a shift from simple pattern recognition to semantic comprehension, wherein the model actively processes text sentiment and video context to predict user alignment 39.

The Phoenix Retrieval System

The recommendation pipeline must process approximately 500 million daily posts, narrowing them down to roughly 1,500 candidate posts per user session within milliseconds 39. This funnel relies on a two-tower neural network retrieval system known as Phoenix 410.

The Phoenix architecture functions by computing the dot-product similarity between two distinct vector embeddings. The first component, the User Tower, encodes the individual user's behavioral profile 46. The model compresses a user's recent engagement history, up to the last 128 sequential interactions, into a single embedding vector 410. This vector is highly dynamic, shifting every time a user likes, replies to, or dwells on a piece of content. The second component, the Candidate Tower, encodes the specific candidate post or author. Rather than relying on a fixed vocabulary, the model uses hash-based embeddings, which allow the system to embed any new entity, user, or topic without requiring manual vocabulary updates 46.

By calculating the similarity between the user's historical embedding and the candidate post's embedding, Phoenix narrows the global corpus into a highly personalized candidate pool 610. A critical dimension tracked within this system is the product surface embedding, which allows the model to differentiate user behavior based on context. The algorithm recognizes that a user may prefer different content types in the main feed versus the search tab, the explore page, or push notifications, optimizing predictions for the specific interface where the content will be consumed 10.
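The two-tower idea described above can be sketched in a few lines of plain Python. This is an illustrative toy, not the Phoenix implementation: the function names, the 8-dimensional width, and the averaging "user tower" are all assumptions, and a production system would hash IDs into rows of a learned embedding table rather than deriving vectors directly from a digest.

```python
import hashlib
import math

DIM = 8  # toy embedding width (assumption; the real dimension is not stated)


def hash_embedding(entity_id: str, dim: int = DIM) -> list[float]:
    """Map any string ID to a deterministic unit vector without a vocabulary."""
    digest = hashlib.sha256(entity_id.encode("utf-8")).digest()
    raw = [digest[i] / 255.0 - 0.5 for i in range(dim)]
    norm = math.sqrt(sum(x * x for x in raw))
    return [x / norm for x in raw]


def user_tower(history: list[str], max_len: int = 128) -> list[float]:
    """Stand-in encoder: average the embeddings of the last `max_len` interactions."""
    recent = history[-max_len:]
    if not recent:
        return [0.0] * DIM
    vectors = [hash_embedding(item) for item in recent]
    return [sum(column) / len(vectors) for column in zip(*vectors)]


def dot(a: list[float], b: list[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def retrieve(history: list[str], candidates: list[str], k: int) -> list[str]:
    """Return the k candidates whose embeddings best match the user vector."""
    user_vec = user_tower(history)
    ranked = sorted(
        candidates,
        key=lambda c: dot(user_vec, hash_embedding(c)),
        reverse=True,
    )
    return ranked[:k]


top = retrieve(["post:a"], ["post:b", "post:a", "post:c"], k=1)
```

Because every ID hashes to a vector, a brand-new post or author can be scored immediately, which is the property the hash-based approach is meant to provide.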

Transformer Mechanics and Candidate Isolation

A defining structural characteristic of the 2026 ranking model is the Candidate Isolation Principle, implemented via an explicit attention mask titled make_recsys_attn_mask 46.

In traditional recommendation systems, candidate posts often compete relative to one another within the same inference batch. In such systems, the score of one post might be suppressed if a highly viral post is situated next to it, creating unpredictable visibility outcomes based on algorithmic neighborhood effects 10. The X algorithm's specific attention masking prevents candidate posts from attending to each other during the inference phase 410. A candidate post can only attend to itself and to the user's historical context 46.

This architectural decision yields two primary technical advantages. First, it ensures score consistency; a post's ranking score depends entirely on its specific semantic match with the user, isolated from the noise of trending topics or other items in the batch 10. Second, it allows the platform to compute a post's score for a specific user once and cache it 410. If the user's base embedding has not significantly shifted, the system does not need to recompute the engagement probability, vastly optimizing computational overhead and reducing active parameter usage across billions of daily interactions 4611.
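A minimal sketch of such a mask is shown below. It is not the repository's make_recsys_attn_mask; the function name, the sequence layout (history tokens first, then candidates), and the choice to let history tokens attend to all other history tokens are assumptions made for illustration.

```python
def candidate_isolation_mask(num_history: int, num_candidates: int) -> list[list[bool]]:
    """Build a boolean mask where mask[q][k] is True if query q may attend to key k.

    Assumed layout: positions [0, num_history) hold the user's history,
    positions [num_history, num_history + num_candidates) hold candidates.
    """
    total = num_history + num_candidates
    mask = [[False] * total for _ in range(total)]
    for q in range(total):
        for k in range(total):
            if k < num_history:
                # Every position may read the user's history.
                mask[q][k] = True
            elif q == k:
                # A candidate may attend to itself, never to another candidate.
                mask[q][k] = True
    return mask


mask = candidate_isolation_mask(num_history=2, num_candidates=3)
for row in mask:
    print("".join("1" if allowed else "." for allowed in row))
```

Under this layout, the row for any candidate contains only the history columns plus its own diagonal entry, so its output cannot change when other candidates are added to or removed from the batch. That independence is what makes per-user score caching possible.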

Sourcing Pathways and Candidate Assembly

The 1,500 candidate posts retrieved for a given user are sourced from two distinct pools, typically targeting an even ratio, though this split dynamically adjusts based on the user's specific engagement habits and app usage duration 31012.

The first pool involves in-network sourcing, which pulls directly from the accounts a user explicitly follows. This is driven by the Real Graph model, a system that predicts the likelihood of engagement between the user and the author based on historical interaction density 3. If a user follows an account but rarely interacts with its content, the Real Graph score decays, reducing the probability that the author's posts will survive the 1,500-candidate cutoff 9.

The second pool involves out-of-network sourcing, identifying discovered content from accounts the user does not currently follow. This relies heavily on SimClusters, a computational framework that categorizes users and content into roughly 145,000 overlapping topical clusters 3. SimClusters account for approximately eighty-five percent of all out-of-network recommendations 3. The mechanism functions on collaborative filtering principles: if multiple users within a specific geographic or topical SimCluster engage deeply with a post, the algorithm extrapolates that other users in that same cluster will also find it relevant. This effectively uses aggregate social proof to source external content and inject it into the feeds of users with no prior exposure to the author, allowing content to break out of established follower graphs 3913.
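The collaborative filtering logic can be illustrated with a small sketch. It assumes cluster memberships are already known and scores each unseen post by how many clusters its engagers share with the viewer; the function name, data shapes, and scoring rule are hypothetical simplifications, not the SimClusters implementation.

```python
from collections import Counter


def out_of_network_candidates(
    viewer: str,
    user_clusters: dict[str, set[str]],
    engagements: list[tuple[str, str]],
    k: int = 3,
) -> list[str]:
    """Rank posts the viewer has not engaged with by shared-cluster engagement.

    `engagements` is a list of (user, post) pairs. Each engagement adds one
    point per cluster the engaging user shares with the viewer.
    """
    viewer_clusters = user_clusters.get(viewer, set())
    seen = {post for user, post in engagements if user == viewer}
    scores: Counter[str] = Counter()
    for user, post in engagements:
        if user == viewer or post in seen:
            continue
        overlap = len(viewer_clusters & user_clusters.get(user, set()))
        if overlap:
            scores[post] += overlap
    return [post for post, _ in scores.most_common(k)]


clusters = {
    "ana": {"rust", "ml"},
    "bo": {"rust"},
    "cy": {"ml", "rust"},
    "di": {"cooking"},
}
events = [("bo", "p1"), ("cy", "p1"), ("cy", "p2"), ("di", "p3"), ("ana", "p4"), ("bo", "p4")]
suggested = out_of_network_candidates("ana", clusters, events)
```

In this toy data, "p3" is never suggested to "ana" because its only engager shares no cluster with her, while "p1" ranks first because two cluster neighbors engaged with it.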

Engagement Weights and Algorithmic Scoring

Once the candidate pool is assembled, the algorithm must assign a definitive numerical ranking to each post to determine its exact position in the feed. The WeightedScorer module calculates a final ranking score by predicting the probability of nineteen distinct user actions and multiplying those probabilities by specific weight constants 41014.
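The structure of this calculation is a weighted sum of predicted probabilities. The sketch below shows only that shape: the function name is hypothetical, just three of the nineteen actions are included, and the weight values are placeholders chosen for illustration, since the real constants are not public.

```python
def weighted_score(predicted_probs: dict[str, float], weights: dict[str, float]) -> float:
    """Combine per-action probabilities into one score: sum of weight * probability.

    Actions without a configured weight contribute nothing.
    """
    return sum(weights.get(action, 0.0) * prob for action, prob in predicted_probs.items())


# Placeholder weights (assumptions, NOT the unpublished production values).
example_weights = {"like": 0.5, "reply": 13.5, "block": -75.0}

# Hypothetical model outputs for one (user, post) pair.
example_probs = {"like": 0.20, "reply": 0.10, "block": 0.01}

score = weighted_score(example_probs, example_weights)
# 0.5 * 0.20 + 13.5 * 0.10 + (-75.0) * 0.01 = 0.10 + 1.35 - 0.75 = 0.70
```

Even with these invented numbers, the example shows why a small predicted probability of a heavily negative action can cancel out much of a post's positive score.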

The Opacity of the Parameter Weights

While the overarching structure of the scoring formula was made public in the xai-org/x-algorithm repository, the specific numerical weight constants were deliberately obscured. These constants reside in a module called params, which was explicitly excluded from the open-source release for security and manipulation-prevention reasons 4.

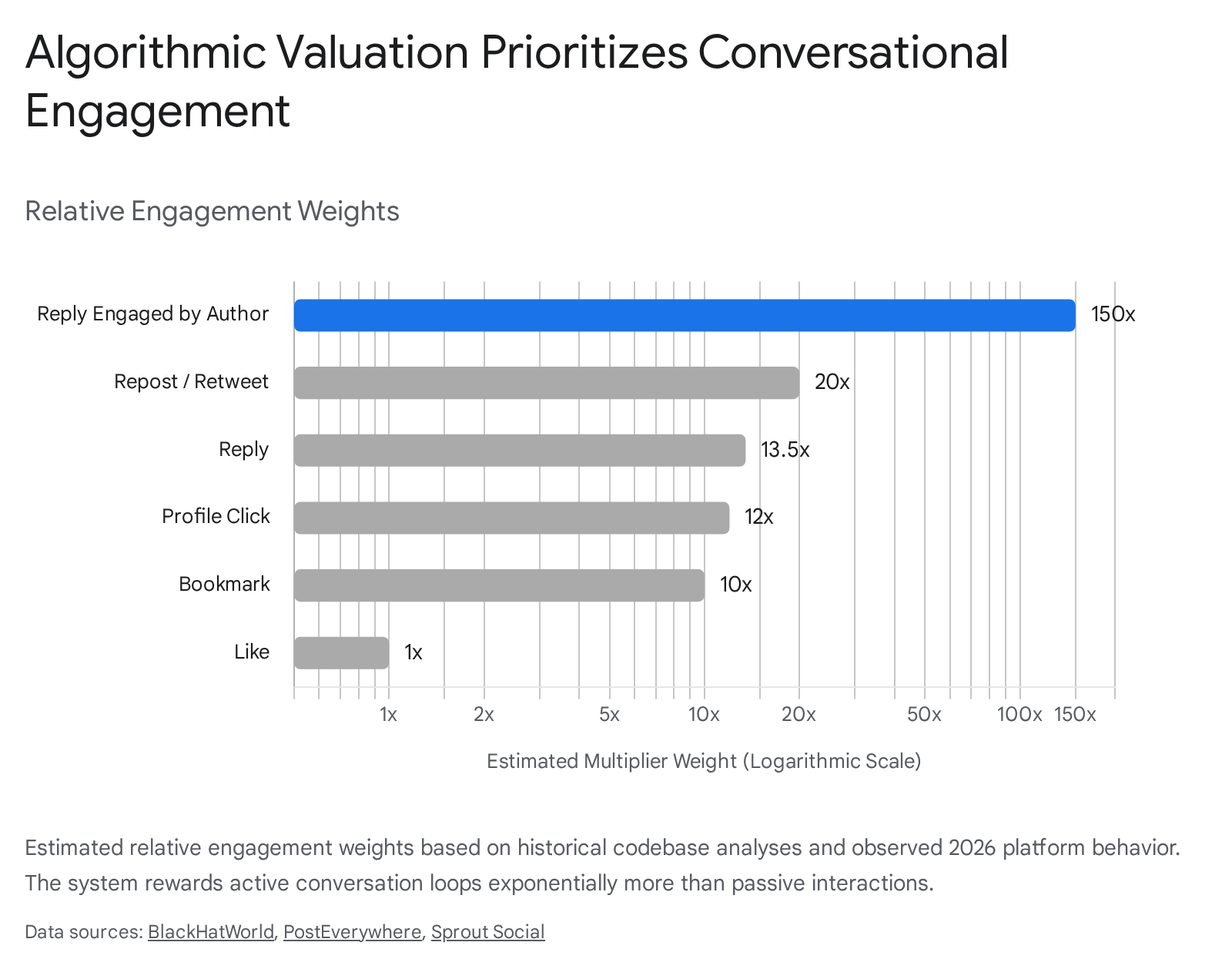

Consequently, exact contemporary weight values remain a subject of empirical estimation, reverse-engineering, and comparative analysis against the 2023 open-source release (twitter/the-algorithm) 41518. What the structural codebase analysis does confirm, however, is that the algorithm prioritizes active conversation signals over passive broadcasting metrics and heavily penalizes negative friction.

Hierarchy of Positive Signals

The scoring hierarchy strictly separates active engagement, which demands user effort and extends dwell time, from passive engagement, which requires minimal friction and offers low intent signals. Empirical tracking data and historical repository comparisons outline the estimated value of positive interactions relative to a standard like 31516.

| Engagement Metric | Estimated Multiplier (vs. Baseline Like) | Algorithmic Rationale and Behavioral Mechanism |
|---|---|---|
| Like | 1.0x (+0.5 points) | Functions as the lowest-friction passive signal; indicates baseline approval but weak conversational intent 31416. |
| Bookmark | 10.0x (+10.0 points) | Represents the highest non-public "save" signal; indicates enduring utility and intent to return to the platform 316. |
| Conversation Click & Dwell | 10.0x to 11.0x (+10.0 to +11.0 points) | Requires extended viewing (often two or more minutes) or expanding a post; proves the content holds active visual attention 36. |
| Profile Click | 12.0x (+12.0 points) | Acts as a high-intent exploratory action that frequently precedes follow events or deeper historical timeline engagement 31415. |
| Reply | 13.5x (+13.5 points) | Triggers active conversational threading and provides rich semantic context directly to the algorithm regarding sentiment 31416. |
| Repost (Retweet) | 16.0x to 20.0x (+1.0 point base, highly scaled) | Serves as direct network amplification. When processing a retweet, the Phoenix scorer uses the original author's ID, stripping the retweeter's follower count from the raw ranking calculation 41516. |
| Reply Engaged by Author | 75.0x to 150.0x (+75.0 points) | Functions as the single most powerful organic signal. Indicates successful community building, reciprocal discourse, and high-quality conversational depth 3141517. |

The extreme valuation of a reply engaged by the author fundamentally alters platform strategy for sophisticated users. A single reply chain wherein the original poster responds to a commenter provides up to one hundred and fifty times the algorithmic value of a standalone like 317. The Grok neural network processes this bidirectional sequence as definitive proof of ongoing, valuable interaction, which reliably prompts the system to inject the parent post into high-volume out-of-network recommendation clusters 31718.

Negative Predictions and Suppression Mechanics

The recommendation engine strictly monitors the probability of a user applying negative feedback, factoring these actions directly into the normalization process. Even a fractional predicted probability that a user will report or block an account can severely truncate a post's final score 10.

Mutes and "Not Interested" flags push the Phoenix similarity search away from specific topic clusters 315. While coordinated "Not Interested" farming (a tactic in which organized groups mass-flag competitor content to manipulate discovery algorithms) was somewhat effective in prior years, the 2026 update aggregates negative signals primarily at the broader topical level rather than the individual post level. This architecture dilutes the impact of targeted suppression campaigns against specific creators 315.

Conversely, user blocks and abuse reports carry catastrophic scoring implications. An active block is estimated to carry a negative multiplier of up to one hundred and fifty times the weight of a standard like 15. Explicit reports for spam or toxicity act as overriding negative limits. An elevated report prediction score instantly drops an account's internal visibility metrics and immediately halts all out-of-network distribution, confining the post entirely to the most dedicated followers 23415.

Furthermore, the integration of Grok enables continuous, real-time sentiment analysis 17. Content flagged by the model as highly toxic, excessively combative, or classified as intentional "rage-bait" is actively downranked. The 2026 model has been explicitly tuned to favor constructive sentiment and conversational loops, shifting away from earlier iterations of social media algorithms that purely optimized for outrage-driven virality 317.

Thread Bundling and the DedupConversationFilter

Within the feed orchestration layer, the DedupConversationFilter fundamentally alters how long-form threads are displayed to end-users. In previous algorithmic iterations, a highly engaging thread could dominate a user's timeline with multiple interconnected posts.

In the updated architecture, if an author posts an eight-part thread, and the algorithm scores five of those constituent tweets highly enough to surface them to a user, the filter actively intervenes post-selection. It identifies the root tweet ID and collapses the thread, ensuring that only the single highest-scoring tweet from that specific conversation enters the viewer's feed 4. This filtering mechanism successfully prevents feed flooding and promotes author diversity, but it requires content creators to highly optimize their initial introductory posts, as downstream replies will not achieve independent organic distribution unless directly clicked and expanded by the reader 4.
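The collapsing behavior described above amounts to keeping the best-scoring post per conversation root. The sketch below illustrates that logic only; the `ScoredPost` type, its field names, and the function name are hypothetical and are not taken from the DedupConversationFilter source.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScoredPost:
    post_id: str
    root_id: str   # ID of the root post of the conversation this post belongs to
    score: float   # final ranking score for the current viewer


def dedup_conversations(posts: list[ScoredPost]) -> list[ScoredPost]:
    """Keep only the highest-scoring post from each conversation, best first."""
    best_by_root: dict[str, ScoredPost] = {}
    for post in posts:
        current = best_by_root.get(post.root_id)
        if current is None or post.score > current.score:
            best_by_root[post.root_id] = post
    return sorted(best_by_root.values(), key=lambda p: p.score, reverse=True)


feed = dedup_conversations([
    ScoredPost("t1", "t1", 0.4),      # thread root
    ScoredPost("t3", "t1", 0.9),      # third post in the same thread
    ScoredPost("t5", "t1", 0.7),      # fifth post in the same thread
    ScoredPost("solo", "solo", 0.6),  # unrelated standalone post
])
```

Here three posts from one thread collapse to the single strongest entry, leaving room in the feed for the unrelated author.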

Platform Stratification and Verified Reach

The transition from a system historically designed to highlight democratic, localized voices to a "meritocracy via subscription" model is explicitly hardcoded into the X recommendation engine 1618. Purchasing an X Premium (formerly Twitter Blue) subscription is treated by the algorithm as a definitive, unassailable credibility signal, fundamentally altering the reach ceiling and baseline visibility for an account.

Quantifying the Reach Disparity

Analysis of platform behavior in early 2026 reveals the largest organic reach gap between paid and free tiers among major social media platforms 3. Free accounts face strict visibility limitations designed to curb bot activity, resulting in median engagement collapsing to near-zero for standard, non-viral posts 17.

The algorithmic multipliers granted to verified accounts operate across multiple vectors of the distribution pipeline. In-network, Premium accounts receive an automatic four-times visibility multiplier for their existing followers, controlled internally by the home_mixer_blue_verified_author_in_network_multiplier parameter 39. Out-of-network, Premium accounts receive a baseline two-times visibility multiplier in algorithmic discovery feeds, ensuring their content seeds into SimClusters at twice the velocity of unverified users 3919.
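As a rough illustration, the two multipliers can be expressed as a single adjustment step. The 4.0 and 2.0 values come from the figures cited above; the function name and the assumption that the multiplier is applied directly to a post's ranking score are hypothetical.

```python
IN_NETWORK_MULTIPLIER = 4.0      # cited value for followers of a Premium author
OUT_OF_NETWORK_MULTIPLIER = 2.0  # cited value for discovery feeds


def apply_verified_multiplier(
    base_score: float,
    author_is_premium: bool,
    viewer_follows_author: bool,
) -> float:
    """Scale a ranking score for Premium authors; leave other scores unchanged."""
    if not author_is_premium:
        return base_score
    if viewer_follows_author:
        return base_score * IN_NETWORK_MULTIPLIER
    return base_score * OUT_OF_NETWORK_MULTIPLIER


free_score = apply_verified_multiplier(1.5, author_is_premium=False, viewer_follows_author=True)
premium_in = apply_verified_multiplier(1.5, author_is_premium=True, viewer_follows_author=True)
premium_out = apply_verified_multiplier(1.5, author_is_premium=True, viewer_follows_author=False)
```

With identical content quality (the same base score), the Premium author's post outranks the free author's post in both sourcing pools.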

| Account Classification | Base Algorithmic Visibility Multipliers | Median Post Impressions | Strategic Distribution Capability |
|---|---|---|---|
| Free (Unverified) | 1.0x (Baseline subject to strict suppression) | ~0 (Characterized by frequent zero-engagement instances) 17 | Highly constrained; entirely dependent on extreme viral out-of-network breakthroughs. |
| X Premium | 4.0x In-Network / 2.0x Out-of-Network | 600+ 317 | Functions as the required baseline for consistent distribution and sustainable community building. |
| X Premium+ | Maximum Reply Sorting Boost & Lowest Link Penalty | 1,550+ 17 | Unlocks full Long-Form Articles formatting (25,000 characters) and enhanced native video weighting. |

Beyond raw impression multipliers, verified users' replies are algorithmically prioritized to appear at the absolute top of conversation threads 31618. Because replies themselves are heavily weighted, this structural placement guarantees premium users accumulate views, profile clicks, and secondary engagement regardless of the reply's organic quality, creating a self-reinforcing growth loop 161819.

Implicit Credibility and Sandbox Navigation

The legacy "TweepCred" reputation score (a system that manually graded accounts from zero to one hundred based on explicit factors like account age and following ratios) was officially retired. It has been replaced by implicit credibility markers learned through ongoing embedding histories 310.

However, acquiring Premium status provides a structural floor to an account's reputation calculation. By verifying identity and payment, the user applies an immediate positive boost to their credibility vectors, essentially bypassing the early-stage algorithmic "sandbox" suppression applied to new or unverified accounts 3. This financial verification signal acts as a proxy for trust, significantly reducing the probability that the system will automatically quarantine borderline content or aggressive engagement patterns.

Feed Interfaces and Chronological Deprioritization

X maintains two primary interfaces for content consumption: the algorithmic "For You" feed and the account-specific "Following" feed. The mechanical distinctions between these feeds shifted drastically following platform architecture updates in late 2025 and early 2026, pivoting aggressively toward predictive modeling over user agency.

The Algorithmic Assimilation of the Following Feed

Historically, the Following timeline functioned as a strict reverse-chronological feed, ensuring users saw every post from accounts they actively chose to follow in the exact chronological order they were published 1220. This predictability was long considered the platform's core utility for real-time information gathering.

In November 2025, X deployed the Grok AI to sort the Following feed based on predicted engagement and personalized relevance, entirely overriding strict timestamps 32122. The system analyzes historical interactions to push highly relevant posts from followed accounts to the top of the feed, regardless of when they were published 2123. By February 2026, subsequent software updates, notably iOS version 11.65, removed the "Switch to Latest" toggle from the primary mobile application interface 202428. While power users could manually create pinned lists as a workaround to access true chronological data, the default experience for accessing followed accounts became heavily mediated by machine learning predictions 32028.
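The practical difference between the two orderings comes down to the sort key. The sketch below contrasts them on the same set of followed posts; the `FollowedPost` type and the `predicted_engagement` field are hypothetical stand-ins for whatever relevance signal the production model emits.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FollowedPost:
    post_id: str
    created_at: int              # Unix timestamp in seconds
    predicted_engagement: float  # hypothetical model output in [0, 1]


def latest_first(posts: list[FollowedPost]) -> list[FollowedPost]:
    """Legacy behavior: strict reverse-chronological order."""
    return sorted(posts, key=lambda p: p.created_at, reverse=True)


def relevance_first(posts: list[FollowedPost]) -> list[FollowedPost]:
    """Post-change behavior: order by predicted engagement, ignoring timestamps."""
    return sorted(posts, key=lambda p: p.predicted_engagement, reverse=True)


timeline = [
    FollowedPost("old_hit", created_at=1_000, predicted_engagement=0.9),
    FollowedPost("new_quiet", created_at=2_000, predicted_engagement=0.1),
]
chronological_ids = [p.post_id for p in latest_first(timeline)]
ranked_ids = [p.post_id for p in relevance_first(timeline)]
```

In the chronological ordering the newer post leads; in the relevance ordering the older post with the higher prediction moves to the top, which is the behavior that weakens the feed's usefulness for tracking live events.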

Furthermore, the application updated its session management to persistently default users to the algorithmic For You feed upon opening the app, regardless of which feed interface they had viewed during their previous session 212223.

Strategic Implications for Real-Time Utility

The strategic rationale for this shift relies on total engagement optimization. By injecting algorithmic predictions into the Following feed, the platform actively prevents high-volume, low-quality posters from dominating a user's timeline simply by posting frequently. It ensures that users see the mathematically "best" content from their network 2324.

However, this transition drew sustained criticism for obscuring real-time news delivery. The platform's historical dominance during live sporting events, political crises, and breaking news relies heavily on chronological awareness. By filtering followed content through predictive relevance modeling, the platform sacrifices strict temporal accuracy in favor of aggregate engagement, fundamentally altering how journalists, financial analysts, and researchers track live events 212223. To mitigate this loss of granular control, X introduced supplementary curation tools like "Snooze Topics," allowing users to silence specific subjects for twenty-four hours, and "Custom Timelines," which pin algorithmic feeds dedicated to specific verticals like finance or geopolitics 20.

Content Formats and Media Distribution

Beyond user history and verified status, the intrinsic formatting and media type of a post heavily dictate its trajectory through the Candidate Pipeline and its ultimate reach potential.

Native Video and the Retention Threshold

The X algorithm applies distinct ranking models to native multimedia, particularly as the platform aims to compete directly with short-form video competitors. A critical threshold identified in the 2026 parameters is the algorithmic "10-Second Rule" 19.

If a native video effectively hooks a viewer and retains active attention for more than ten seconds, it triggers a massive algorithmic multiplier. Empirical tracking suggests this retention milestone yields an estimated 340 percent increase in overall reach compared to standard text-only posts 19. This strict optimization for dwell time aligns with the core architecture's focus on separating passive scrolling from active consumption 29. Furthermore, native video keeps the user entirely within the application ecosystem, limiting the risk of the user bouncing to an external site and aligning perfectly with the platform's overarching retention and advertising metrics.

The Outbound Link Dispute

The treatment of outbound external links represents a persistent point of contention between X engineering leadership and independent analytics researchers. It is widely understood that social platforms inherently suppress content containing outbound URLs to maximize their own internal "user-seconds" and ad exposure 930.

In late 2025 and into 2026, X's Head of Product, Nikita Bier, publicly stated that external links were no longer actively "deboosted" or penalized by the algorithm itself 3025. Bier argued that the previous lack of reach was due exclusively to a user interface flaw - specifically, the web browser link preview card physically covered the engagement buttons (Like, Reply, Repost) on mobile devices, mechanically preventing users from sending the positive ranking signals required for viral distribution 3026. Once the UI was adjusted to make engagement buttons easily accessible beneath external links, Bier claimed the algorithmic penalty was fully resolved 26.

Despite these official engineering claims, empirical tests run by media analysts indicate that link posts continue to severely underperform native content across all metrics 2526. This discrepancy implies two possibilities: either a baseline suppression penalty persists in the unreleased, opaque params code, or user behavior is so deeply conditioned against clicking away from the timeline that the posts organically fail to generate the minimum engagement velocity required to survive the Phoenix ranking threshold 326. In either scenario, external link integration remains a highly inefficient strategy for organic growth compared to long-form native text or video.

Societal Amplification and Regulatory Conflicts

Because the X recommendation engine mathematically dictates the visibility of information for hundreds of millions of individuals globally, its specific parameter weights carry immediate socio-political and legal consequences. Throughout 2026, the algorithm faced intense scrutiny from academic institutions regarding political polarization, and from governmental bodies regarding compliance with international digital law.

Empirical Measurements of Political Shaping

A fundamental question surrounding recommendation engines is whether they merely reflect a user's existing preferences or actively shape their worldview through subtle exposure bias. A landmark independent field experiment published in the journal Nature in February 2026 provided robust empirical evidence on this dynamic 27282930.

Researchers monitored 4,965 US-based X users over a seven-week period, randomly assigning them to consume content via either the algorithmically curated For You feed or the strict chronological feed 272830. Utilizing custom browser extensions to track exact exposure, the findings demonstrated a profound and asymmetrical political influence generated by the Grok-powered timeline.

First, the research documented definitive conservative amplification. The algorithmic feed systematically promoted conservative content and political activist accounts while demonstrably demoting posts from traditional, mainstream news outlets 272830. Second, this exposure resulted in measurable attitude alteration. Users exposed to the algorithmic feed experienced a statistically significant shift toward conservative policy positions, specifically regarding the prioritization of inflation and immigration, perceptions of the criminal investigations involving Donald Trump, and adopting more pro-Kremlin views on the war in Ukraine 282930.

Crucially, the study revealed enduring network effects. The algorithm actively drove users to follow conservative activist accounts 272830. Even when researchers subsequently switched users back to a chronological feed, the political shift persisted because their foundational follower graph had been permanently altered by the algorithm's initial recommendations 27282930. These findings indicate that the For You feed does not simply echo user intent; the specific embedding matches and engagement weights actively cultivate distinct, highly polarized political environments 2730.

Moderation Latency and Community Notes

To manage platform misinformation while adhering to free speech maximalism, X relies heavily on its crowdsourced "Community Notes" feature. The algorithm dictating the visibility of these notes infers a latent ideological dimension among contributors to ensure fairness 31. To reach "Helpful" status and become visible to the public, a note must garner cross-partisan support from users who have historically demonstrated opposing viewpoints 31.

While this system reduces the latency of misinformation correction in objectively verifiable environments, recent analyses from the 2024-2025 electoral cycles in the US and Europe demonstrate a structural flaw. The requirement for cross-partisan consensus results in systemic under-moderation of highly polarizing content 31. If a political topic is so deeply divisive that cross-partisan agreement is mathematically impossible, no Community Note achieves visibility. Consequently, the standard recommendation algorithm freely amplifies the highly polarizing, unchecked post based purely on outrage metrics and high reply density, creating significant risks to civic discourse 31.
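The cross-partisan requirement, and the under-moderation it can produce, can be shown with a deliberately simplified sketch. It assumes each rater already has a known leaning on a single axis (negative or positive) and requires a minimum number of "helpful" ratings from each side. The function name, the threshold, and the fixed leanings are all assumptions; the deployed system infers the latent dimension from rating data rather than taking it as input.

```python
def note_is_helpful(
    ratings: list[tuple[str, bool]],
    rater_leaning: dict[str, float],
    min_per_side: int = 2,
) -> bool:
    """Return True only if enough raters on BOTH sides of the axis rated the note helpful.

    `ratings` holds (rater_id, rated_helpful) pairs. A leaning below zero counts
    as one side, above zero as the other; unknown or neutral raters are ignored.
    """
    side_a = sum(1 for rater, helpful in ratings if helpful and rater_leaning.get(rater, 0.0) < 0)
    side_b = sum(1 for rater, helpful in ratings if helpful and rater_leaning.get(rater, 0.0) > 0)
    return side_a >= min_per_side and side_b >= min_per_side


leanings = {"a1": -0.8, "a2": -0.5, "b1": 0.6, "b2": 0.9}

# A factual correction that both sides accept becomes visible.
consensus_note = [("a1", True), ("a2", True), ("b1", True), ("b2", True)]

# A note on a divisive topic splits along the axis and never surfaces.
divisive_note = [("a1", True), ("a2", True), ("b1", False), ("b2", False)]

shown = note_is_helpful(consensus_note, leanings)
hidden = note_is_helpful(divisive_note, leanings)
```

The second case mirrors the failure mode described above: unanimous support from one side is not enough, so the underlying post circulates without a visible note.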

The European Union Digital Services Act Litigation

The opacity of the specific ranking weights, combined with the platform's localized approach to content visibility, placed X in direct legal conflict with the European Union's Digital Services Act (DSA). The DSA is a sweeping regulatory framework that mandates Very Large Online Platforms (VLOPs) provide exhaustive transparency regarding how their algorithmic systems function, offer non-profiled alternative feeds, and actively audit and mitigate systemic societal risks 32333435. Furthermore, it requires the maintenance of a public "Statement of Reasons" database, forcing platforms to log the specific legal or policy justification for every moderation action 363738.

The tension between X engineering and European regulators culminated in December 2025 when the European Commission issued a €120 million fine against the platform 33940. The Commission cited insufficient algorithmic transparency in risk mitigation, the deceptive nature of the Premium blue checkmark system (which masked authentic identity behind a paid engagement boost), and the failure to maintain a functioning, compliant advertising repository for researchers 353940. Furthermore, formal investigations were launched in early 2026 by Spain and Ireland regarding the Grok AI's role in the recommender system. These inquiries specifically targeted whether the algorithmic integration amplified illegal deepfake content without proper safeguards, and whether the quiet removal of chronological defaults violated strict user choice mandates under European consumer law 33239.

In response to escalating financial penalties, X initiated a landmark legal challenge in February 2026 at the General Court of the European Union. The litigation argued that the DSA constitutes a "global speech control" framework that improperly forces American technology companies to adhere to localized European visibility restrictions, effectively allowing foreign bureaucrats to dictate algorithmic curation globally 47. This unprecedented legal battle underscores the reality that the specific lines of code, attention masks, and mathematical weights buried inside the xai-org/x-algorithm repository are no longer merely technical engineering decisions. They are highly contested instruments of international geopolitical governance, capable of shifting electoral outcomes and redefining the boundaries of the digital public square.