Quantum Darwinism and the emergence of classical reality

Introduction to the Quantum-to-Classical Transition

The fundamental disconnect between the deterministic, unitary evolution of quantum mechanics and the objective, definite reality observed at the macroscopic scale represents one of the most profound challenges in modern theoretical physics. At the microscopic level, quantum systems exhibit superpositions, entanglement, and probability amplitudes governed strictly by the Schrödinger equation 1. Yet, the classical reality perceived by macroscopic observers is characterized by a set of definite states where multiple independent agents can agree on the properties of a system without fundamentally altering those properties through the act of observation 22. Historically, this discrepancy was addressed by the Copenhagen interpretation through the axiomatic introduction of the wave-function collapse - a non-unitary projection postulate triggered by measurement, with outcome probabilities given by the Born rule 123. However, this axiomatic postulate lacked a precise physical mechanism, leaving the boundary between the quantum and classical regimes fundamentally ill-defined.

Decoherence theory, initially pioneered by H. Dieter Zeh and subsequently expanded by Wojciech Zurek and collaborators over twenty-five years, provided the first major mathematical framework toward resolving this boundary 254. Decoherence demonstrates that quantum superpositions of a system interact inevitably with their surrounding environment, leading to the rapid decay of off-diagonal elements in the system's reduced density matrix 7. The environment effectively monitors the system, continuously entangling with it and suppressing quantum interference 7. As a result, the transition from quantum to classical can be partially understood as the selective loss of information to the environment.
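The decay of off-diagonal elements can be illustrated with a small numerical sketch. It uses a standard toy model that is not taken from the text above: one system qubit coupled to N environment spins through an assumed interaction $H = \sum_k g_k\, \sigma_z^{\mathcal{S}} \sigma_z^{(k)}$, with every environment spin starting in $|+\rangle$. The coupling values, the spin count, and the function name `decoherence_factor` are illustrative assumptions. In this model the off-diagonal element of the system's reduced density matrix is multiplied by the overlap of the two conditional environment states, which shrinks quickly as more spins take part.

```python
import numpy as np

def decoherence_factor(couplings, t):
    """Overlap <E_1(t)|E_0(t)> of the two conditional environment states.

    Toy model (an assumption, not from the article): each environment spin
    starts in |+> and acquires phases exp(-/+ i g t) depending on whether
    the system sits in pointer state |0> or |1>.
    """
    overlap = 1.0 + 0.0j
    for g in couplings:
        e0 = np.array([np.exp(-1j * g * t), np.exp(+1j * g * t)]) / np.sqrt(2)
        e1 = np.array([np.exp(+1j * g * t), np.exp(-1j * g * t)]) / np.sqrt(2)
        overlap *= np.vdot(e1, e0)  # np.vdot conjugates its first argument
    return overlap

rng = np.random.default_rng(0)
couplings = rng.uniform(0.5, 1.5, size=50)  # hypothetical coupling strengths

for t in (0.0, 0.05, 0.2, 1.0):
    r = abs(decoherence_factor(couplings, t))
    # The system's coherence evolves as rho_01(t) = rho_01(0) * r(t)
    print(f"t = {t:4.2f}   |r(t)| = {r:.3e}")
```

The populations (diagonal elements) are untouched in this model because the assumed interaction commutes with the pointer observable; only the coherence is suppressed.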

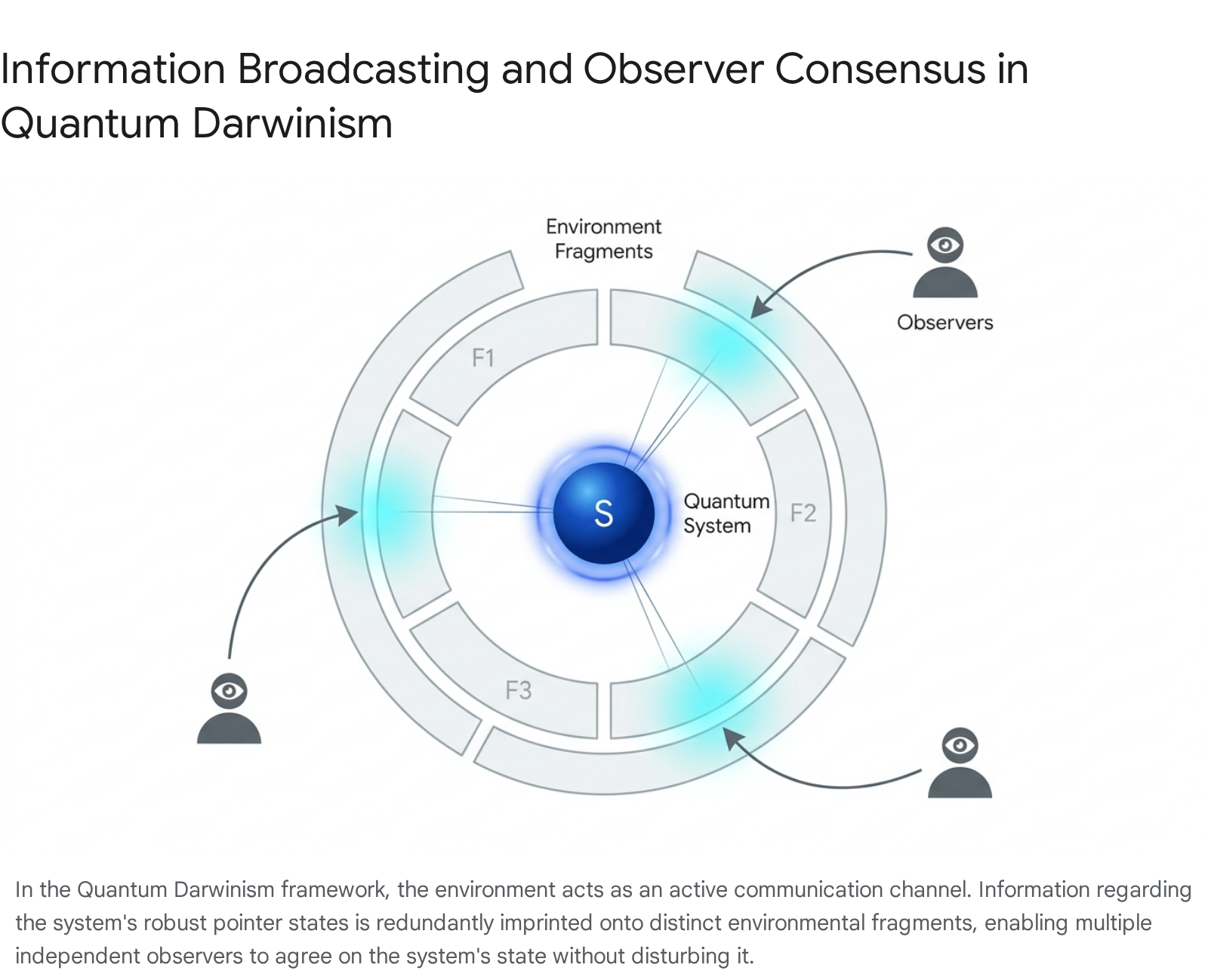

While decoherence successfully explains the suppression of macroscopic superpositions - effectively banning flagrantly non-local "Schrödinger cat" states - it does not completely resolve the emergence of an objective, shared classical reality. Decoherence theory indicates what specific states survive (known as pointer states) but does not fully account for how multiple observers can independently discover the system's state without disturbing it 15. To bridge this specific gap, the framework of Quantum Darwinism was introduced in 2003 by Wojciech Zurek, along with collaborators including Harold Ollivier, David Poulin, Juan Pablo Paz, and Robin Blume-Kohout 23. Quantum Darwinism elevates the environment from a passive sink for quantum coherence to an active, structured communication channel that redundantly broadcasts information about the system's preferred states to the broader universe, thereby enabling objective consensus 1067.

Theoretical Framework of Quantum Darwinism

Quantum Darwinism postulates that classicality is an emergent property arising from the selective proliferation and redundant encoding of information. Under this theoretical paradigm, continuous interactions between a quantum system and its encompassing environment naturally select a specific set of robust states. Simultaneously, these interactions generate multiple redundant copies of information about these states across various discrete fragments of the environment 2510.

Einselection and Pointer States

The foundational mechanism driving the selection of these robust states is environment-induced superselection, commonly referred to as "einselection" 4. When a system interacts with an environment, the interaction Hamiltonian commutes with a specific set of observables belonging to the system. The eigenstates of these particular observables constitute the "pointer states" 7. Pointer states are distinguished by their exceptional resilience; they are the specific configurations that survive the decoherence process with minimal perturbation 7.

Operationally, if the initial state of the quantum system exists in a superposition of these pointer states, the joint system-environment unitary evolution branches into a highly entangled, non-separable state. Superpositions of pointer states rapidly decohere and decay into statistical mixtures due to environmental entanglement, leaving only the most stable pointer states - those least perturbed by their surroundings - to persist 710.
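The branching can be written compactly. As a sketch, assume an idealized interaction that leaves the pointer states $|s_i\rangle$ undisturbed; the symbols $c_i$, $|s_i\rangle$, $|E_0\rangle$, and $|E_i\rangle$ are notation introduced here for illustration:

$\left(\sum_i c_i\, |s_i\rangle\right) \otimes |E_0\rangle \;\longrightarrow\; |\Psi\rangle = \sum_i c_i\, |s_i\rangle \otimes |E_i\rangle$

Tracing out the environment gives the system's reduced state:

$\rho_{\mathcal{S}} = \mathrm{Tr}_{\mathcal{E}}\, |\Psi\rangle\langle\Psi| = \sum_{i,j} c_i c_j^{*}\, \langle E_j | E_i \rangle\, |s_i\rangle\langle s_j|$

As the conditional environment states become distinguishable, $\langle E_j | E_i \rangle \to \delta_{ij}$, and $\rho_{\mathcal{S}}$ approaches the mixture $\sum_i |c_i|^2\, |s_i\rangle\langle s_i|$, which is diagonal in the pointer basis.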

In the classical limit, an observer has neither the capacity nor the need to measure the global, universal state. Instead, observers measure small, localized fragments of the environment 26. The resilience of the pointer states ensures that observers measuring entirely distinct environmental fragments access the same underlying physical reality. This resilience is closely related to the predictability sieve, the criterion used to identify preferred basis states as those that remain most predictable under environmental monitoring 76.

Redundancy and Information Proliferation

The central mathematical and operational metric used to quantify Quantum Darwinism is the mutual information, $I(\mathcal{S}:\mathcal{F})$, between the principal system $\mathcal{S}$ and an accessible fraction of the environment $\mathcal{F}$ 58. The total environment $\mathcal{E}$ is conceptually partitioned into numerous independent, non-overlapping fragments ($\mathcal{F}_1, \mathcal{F}_2, \dots, \mathcal{F}_N$), such that $\mathcal{F} \subset \mathcal{E}$ 56. Mutual information is derived using the von Neumann entropy $H(\cdot)$ and is formally defined as:

$I(\mathcal{S}:\mathcal{F}) = H(\mathcal{S}) + H(\mathcal{F}) - H(\mathcal{SF})$ 5
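A short worked case may help fix the definition. Assume, purely for illustration, an idealized branching state in which each of the $N$ environment subsystems holds a perfect, orthogonal record of the pointer state (the probabilities $p_i$ and record states $|e_i^{(k)}\rangle$ are notation introduced here):

$|\Psi\rangle = \sum_i \sqrt{p_i}\; |s_i\rangle\, |e_i^{(1)}\rangle\, |e_i^{(2)}\rangle \cdots |e_i^{(N)}\rangle$

For any fragment $\mathcal{F}$ containing $m$ subsystems with $0 < m < N$, the reduced states of $\mathcal{S}$, $\mathcal{F}$, and $\mathcal{SF}$ are all classical mixtures with weights $p_i$, so

$H(\mathcal{S}) = H(\mathcal{F}) = H(\mathcal{SF}) = -\sum_i p_i \log_2 p_i \quad\Rightarrow\quad I(\mathcal{S}:\mathcal{F}) = H(\mathcal{S})$

Only when the fragment is the whole environment does the joint state become pure, $H(\mathcal{SE}) = 0$, giving

$I(\mathcal{S}:\mathcal{E}) = 2\,H(\mathcal{S})$

In this idealized case a single subsystem already yields the full classical information $H(\mathcal{S})$, and the extra $H(\mathcal{S})$ of quantum correlation is available only to an observer holding the entire environment.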

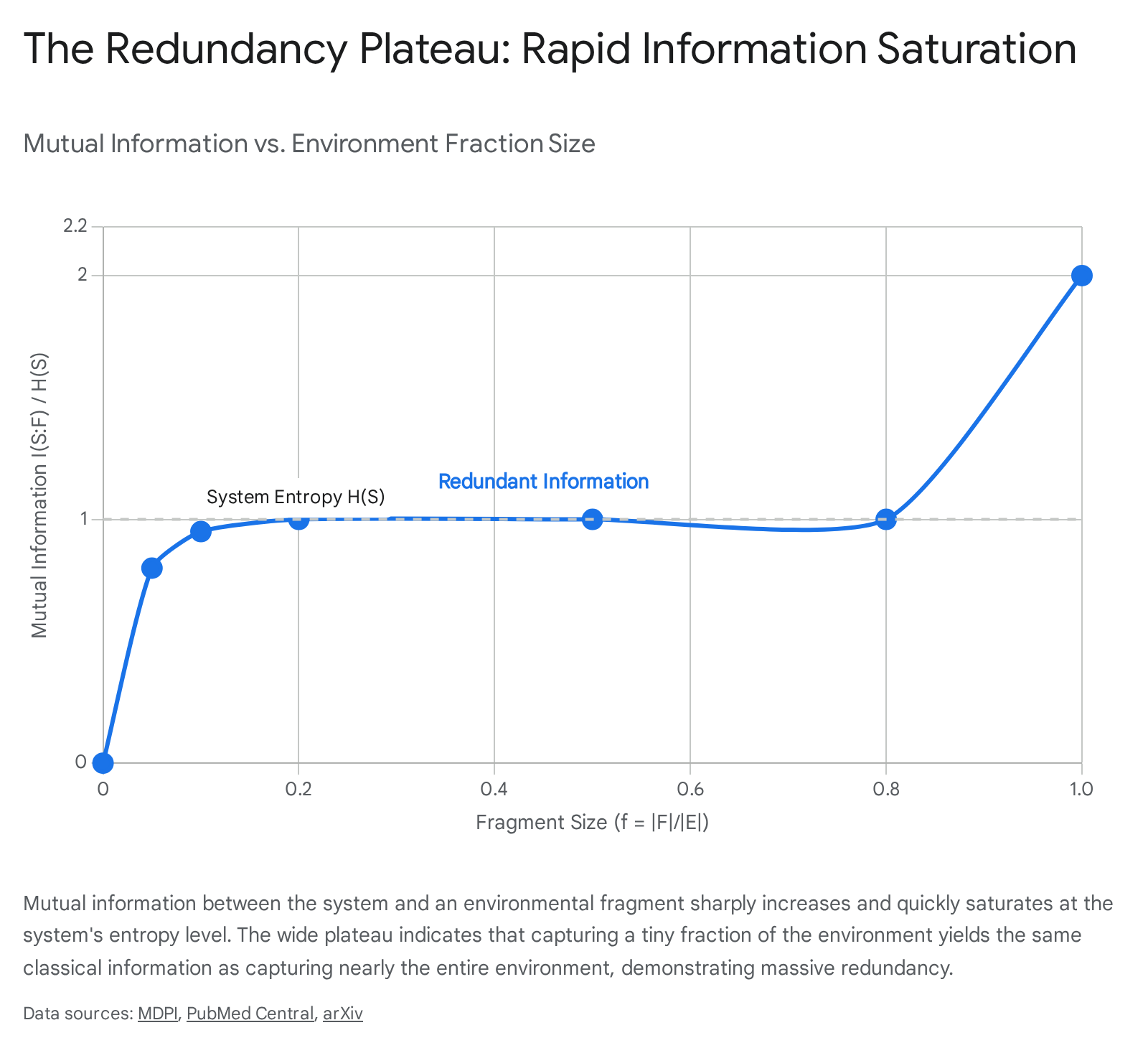

Objectivity, which is the hallmark of classicality, emerges when the mutual information between the system and a relatively small fragment of the environment equals the missing information about the system itself - specifically, the Shannon entropy of the system's pointer states 59. This relationship manifests graphically as the "redundancy plateau." When mutual information is plotted against the fractional size of the environment accessed, denoted as $f = |\mathcal{F}|/|\mathcal{E}|$, the resulting curve rises sharply for very small values of $f$ and then forms a long, invariant plateau 1510.

The existence of this plateau indicates a profound physical reality: an observer only needs to capture a minuscule fraction of the environment to acquire complete, classical information about the system's chosen pointer state 1510. Any additional environmental fragments collected after the mutual information reaches this plateau provide purely redundant records rather than novel quantum data. The degree of redundancy is typically quantified by the factor $R \approx 1/f_{obj}$, where $f_{obj}$ represents the minimum fraction of the environment necessary to achieve a mutual information virtually identical to the total entropy of the system 81510. When the redundancy factor $R \gg 1$, the state is considered to be robustly objective, ensuring that independent observers can reach a consensus without inter-observer communication or direct perturbation of the system 91017.
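The plateau and the redundancy factor can be reproduced numerically for a small toy state. The sketch below is not drawn from any of the cited experiments; it assumes a branching state $(|0\rangle|e_0\rangle^{\otimes N} + |1\rangle|e_1\rangle^{\otimes N})/\sqrt{2}$ with imperfect records, $\langle e_0|e_1\rangle = \cos\theta$, and it adopts the common convention of an information deficit $\delta$, taking $f_{obj}$ as the smallest fraction for which $I(\mathcal{S}:\mathcal{F}) \geq (1-\delta)H(\mathcal{S})$. All function names and parameter values are hypothetical.

```python
import numpy as np

def entropy_bits(rho):
    """Von Neumann entropy of a density matrix, in bits."""
    evals = np.linalg.eigvalsh(rho)
    evals = evals[evals > 1e-12]  # drop numerical zeros
    return float(-np.sum(evals * np.log2(evals)))

def reduced_density_matrix(psi, keep, n_qubits):
    """Reduced state of the qubits in `keep` for an n-qubit pure state."""
    keep = list(keep)
    rest = [q for q in range(n_qubits) if q not in keep]
    tensor = psi.reshape([2] * n_qubits).transpose(keep + rest)
    mat = tensor.reshape(2 ** len(keep), 2 ** len(rest))
    return mat @ mat.conj().T

def branching_state(n_env, theta):
    """(|0>|e0..e0> + |1>|e1..e1>)/sqrt(2) with <e0|e1> = cos(theta)."""
    e0 = np.array([1.0, 0.0])
    e1 = np.array([np.cos(theta), np.sin(theta)])
    branch0, branch1 = np.array([1.0, 0.0]), np.array([0.0, 1.0])
    for _ in range(n_env):
        branch0 = np.kron(branch0, e0)
        branch1 = np.kron(branch1, e1)
    return (branch0 + branch1) / np.sqrt(2)

def mutual_information_curve(psi, n_env):
    """Return H(S) and I(S:F_m) in bits, F_m = first m environment qubits."""
    n = n_env + 1  # qubit 0 is the system
    h_s = entropy_bits(reduced_density_matrix(psi, [0], n))
    curve = []
    for m in range(n_env + 1):
        frag = list(range(1, m + 1))
        h_f = entropy_bits(reduced_density_matrix(psi, frag, n))
        h_sf = entropy_bits(reduced_density_matrix(psi, [0] + frag, n))
        curve.append(h_s + h_f - h_sf)
    return h_s, curve

def redundancy(h_s, curve, delta=0.1):
    """R = 1/f_obj, with f_obj the smallest fraction reaching (1 - delta) H(S)."""
    n_env = len(curve) - 1
    for m in range(1, n_env + 1):
        if curve[m] >= (1 - delta) * h_s:
            return n_env / m
    return 0.0

n_env = 8
psi = branching_state(n_env, theta=np.pi / 3)  # record overlap cos(theta) = 0.5
h_s, curve = mutual_information_curve(psi, n_env)
for m, info in enumerate(curve):
    print(f"f = {m / n_env:5.3f}   I(S:F) = {info:.4f} bits")
print("H(S) =", round(h_s, 4), "  R =", redundancy(h_s, curve))
```

With perfectly orthogonal records ($\theta = \pi/2$) the curve jumps to $H(\mathcal{S})$ at the first fragment and stays flat until the final point; with overlapping records the rise is more gradual, so $f_{obj}$ grows and $R$ shrinks.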

Spectrum Broadcast Structures and Strong Quantum Darwinism

Further theoretical formalization of objective emergence has led to the concepts of Spectrum Broadcast Structures (SBS) and Strong Quantum Darwinism 91112. While the standard formulation of Quantum Darwinism relies primarily on the scalar metric of mutual information, SBS provides a more stringent and comprehensive characterization at the level of the total multipartite system-environment quantum state 911.

A composite state exhibits a Spectrum Broadcast Structure if it can be mathematically formulated as a classically correlated mixture of pointer states, where each system pointer state is perfectly correlated with orthogonal states in the environmental fragments 59. Crucially, the SBS framework mandates the complete vanishing of quantum discord (a measure of purely quantum correlations) between the principal system and the observing fragments 9. Under SBS conditions, observers accessing disparate fragments experience entirely uncorrelated noise profiles conditional on the system's state, preventing any collective retrieval of residual coherence 9.
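In symbols, the structure described above is commonly written as follows; the labels $p_i$, $|s_i\rangle$, and $\rho_i^{\mathcal{F}_m}$ are notation introduced here rather than taken from the text:

$\rho_{\mathcal{S}\mathcal{F}_1 \cdots \mathcal{F}_k} = \sum_i p_i\, |s_i\rangle\langle s_i| \otimes \rho_i^{\mathcal{F}_1} \otimes \cdots \otimes \rho_i^{\mathcal{F}_k}$

$\rho_i^{\mathcal{F}_m}\, \rho_j^{\mathcal{F}_m} = 0 \quad \text{for all } i \neq j \text{ and every fragment } \mathcal{F}_m$

The first line expresses the classical correlation and the conditional independence of the fragments: given the pointer state $i$, the fragment states factorize. The second line states that each fragment's conditional states have orthogonal supports, so a single local measurement on any one fragment identifies $i$ without error.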

Recent analytical comparisons demonstrate that while standard Quantum Darwinism ensures a high degree of consensus and redundancy, SBS imposes a stricter condition. SBS identifies the precise conditions under which the classicalization process is absolute, meaning no residual quantum correlations can be extracted or reconstituted, even in principle, by observers holding localized environmental partitions 912.

Experimental Observations and Methodologies

For over a decade, Quantum Darwinism remained a predominantly theoretical and philosophical framework. It was highly successful in computational models but exceedingly difficult to verify in the laboratory. However, recent advancements in quantum simulation, high-fidelity gating, and precision environmental control across diverse hardware platforms have finally facilitated the direct observation of information proliferation. Demonstrating Quantum Darwinism experimentally requires the capability to track both the state of the central system and the localized states of multiple, independent environmental degrees of freedom simultaneously 81513.

Photonic Simulators and Artificial Cluster States

Photonic platforms were among the first hardware systems to successfully simulate Quantum Darwinism mechanisms, primarily due to the high degree of experimental control available over linear optical components and multiphoton entanglement 813. Early simulations utilized photonic cluster states to artificially construct the redundant broadcasting interaction inherent to the theory. For instance, experimental work by Ciampini et al. (2018) mapped the interaction of a central quantum system onto multiphoton cluster states 1514. By utilizing multi-pixel photon detectors, they confirmed the mathematical emergence of the redundancy plateau for finite system sizes 1522.

Subsequent landmark work by Chen, Pan, and colleagues (2019) utilized a highly advanced photonic quantum simulator. By precisely routing single photons through complex arrays of beam splitters and phase shifters, they engineered a specific collision-model environment 815. In this setup, a primary photon (acting as the system) sequentially entangled with several ancilla photons (the environment). These early experiments served as strict foundational simulators; they explicitly built the Hamiltonians necessary to create a highly localized redundancy factor, successfully proving the framework's internal mathematical consistency within multi-qubit bounds 815.

Natural Environments and Nitrogen-Vacancy Centers

While photonic simulators generated redundancy artificially through deliberately constructed interactions, verifying Quantum Darwinism in a naturally occurring environment represents a fundamentally distinct and more complex challenge 713. Unden et al. (2019) achieved a critical demonstration using Nitrogen-Vacancy (NV) centers in diamond 1314.

In this architecture, the NV center acts as the central quantum system, evolving naturally in the presence of an environment consisting of nearby Carbon-13 ($^{13}$C) nuclear spins scattered throughout the diamond lattice 1324. The physical interaction is governed autonomously by the hyperfine coupling between the NV center's localized electronic spin and the surrounding nuclear spins 713. The core experimental challenge in natural systems lies in selectively probing the environment without disrupting the central system. To achieve this, the researchers implemented an intricate dynamical decoupling (DD) sequence designed to isolate and measure the interactions of specific $^{13}$C nuclear spins 713. The results conclusively showed that as the NV center underwent natural decoherence, the nuclear spin environment captured redundant copies of the NV's pointer state. This demonstrated that quantum Darwinism operates autonomously in out-of-equilibrium macroscopic natural systems, without the necessity for artificially synthesized Hamiltonians 713. Future experiments utilizing moderately $^{13}$C enriched diamonds are expected to yield even larger redundancy factors 713.

Superconducting Circuits and Macroscopic Scalability

A significant leap in both the scale and precision of experimental Quantum Darwinism was achieved by Zhu et al. (2025) utilizing superconducting circuits 15102516. Superconducting transmon platforms offer macroscopic lithographic scalability, rapid operational gate times, and high-fidelity deterministic readout capabilities that greatly outstrip early photonic models 1718.

The 2025 experiments directly demonstrated redundant environmental records and the emergence of objective classical states in a fully controllable, large-scale hardware platform, in close agreement with theoretical predictions 251619. Utilizing a geometric array of interacting transmon qubits arranged in an Ising-like layout, researchers designated one specific qubit as the target system and surrounding qubits as the distinct environmental fragments 1017. By dynamically tuning the system-environment coupling strength and observing the scaling of the mutual information across time, Zhu et al. confirmed the existence of a highly robust redundancy plateau 1510. The superconducting architecture allowed researchers to measure the mutual information across highly variable fragment sizes and interaction geometries, confirming that objective reality - defined precisely as consensus recorded in redundant copies - emerges natively from the continuous, unitary evolution of complex circuit Hamiltonians 1016.

Trapped Ions and Markovian Collision Models

Trapped ion systems, characterized by exceptionally long coherence times and fully connected interaction graphs via Coulomb interactions, serve as another sophisticated testbed for open quantum system dynamics 18. While standard Quantum Darwinism paradigms often assume simultaneous global interactions, trapped ions and cloud-based processors are routinely utilized to validate "collision models" (also known in the literature as repeated interaction models) 30.

In a single-qubit collision model, the central quantum system interacts sequentially with a stream of identically prepared environmental ancillae 30. Each "collision" represents a brief unitary interaction, after which the ancilla moves away, and the system encounters a fresh environmental degree of freedom. This fundamentally Markovian structure ensures that quantum information flows unidirectionally from the system to the environment without memory effects 30. Work by Giannelli, Campbell, and others has utilized collision models to verify that classical objectivity can emerge asymmetrically depending on the sequence and internal configuration of the system-ancilla entanglement events 3031. Trapped ion arrays are particularly well-suited for modifying the temporal structure of these interactions, allowing researchers to simulate how varying the collision sequences directly controls the degree of redundancy generated and the speed of objective emergence 2230.
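A minimal sketch of such a collision model is given below. It is a toy construction, not the model used in any of the cited works: each collision is assumed to be a controlled rotation that turns a fresh ancilla (prepared in $|0\rangle$) by an angle `theta` only when the system is in $|1\rangle$, after which the ancilla is discarded. The function names and the choice of rotation are hypothetical. Because every ancilla is fresh and never returns, each step shrinks the system's coherence by the same factor, which is the memoryless behaviour described above.

```python
import numpy as np

def ry(theta):
    """Single-qubit rotation about the y axis by angle theta."""
    c, s = np.cos(theta / 2), np.sin(theta / 2)
    return np.array([[c, -s], [s, c]])

def collision_unitary(theta):
    """Controlled rotation: the ancilla rotates only if the system is in |1>."""
    p0 = np.diag([1.0, 0.0])
    p1 = np.diag([0.0, 1.0])
    return np.kron(p0, np.eye(2)) + np.kron(p1, ry(theta))

def collide(rho_s, theta):
    """One collision with a fresh ancilla in |0>, then discard the ancilla."""
    ancilla = np.diag([1.0, 0.0])
    u = collision_unitary(theta)
    joint = u @ np.kron(rho_s, ancilla) @ u.conj().T
    joint = joint.reshape(2, 2, 2, 2)       # indices: s, a, s', a'
    return np.einsum("iaja->ij", joint)     # partial trace over the ancilla

def run_collisions(theta, n_collisions):
    """Track |rho_01| of the system, which starts in |+><+|."""
    rho = np.full((2, 2), 0.5)
    coherences = [abs(rho[0, 1])]
    for _ in range(n_collisions):
        rho = collide(rho, theta)
        coherences.append(abs(rho[0, 1]))
    return rho, coherences

rho_final, coherences = run_collisions(theta=np.pi / 3, n_collisions=10)
for n, c in enumerate(coherences):
    print(f"after {n:2d} collisions: |rho_01| = {c:.4f}")
```

In this sketch the coherence falls geometrically, by a factor $\cos(\theta/2)$ per collision, while the populations stay fixed. Each discarded ancilla carries a partial record of the system's pointer state, so stronger collisions (larger `theta`) produce both faster decoherence and more informative records.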

| Experimental Platform | Core Quantum System | Environmental Fragments | Interaction Mechanism | Milestone Achievement |
|---|---|---|---|---|
| Photonic Simulators | Photonic state | Sequential ancilla photons | Linear optical beam splitters and phase shifters | First artificial confirmation of the redundancy plateau via constructed cluster states 814. |
| Nitrogen-Vacancy Centers | NV center electronic spin | $^{13}$C nuclear spins | Natural hyperfine coupling | First demonstration of autonomous QD in a naturally occurring, out-of-equilibrium solid-state environment 713. |
| Superconducting Circuits | Transmon qubit | Surrounding transmon grid | Microwave-mediated coupling | Scalable, high-fidelity validation of emergent classical histories and variable plateau boundaries (Zhu et al. 2025) 102516. |
| Trapped Ions | Trapped atomic ion | Identically prepared ancilla ions | Coulomb interaction (Collision Models) | Precise control over Markovian vs. Non-Markovian information flow and asymmetrical classical emergence 30. |

Complex Environmental Dynamics and Hamiltonian Constraints

The original formulations of Quantum Darwinism assumed a relatively passive environment - once information was imprinted onto an environmental fragment, it was assumed to remain stable and readable indefinitely 1. However, realistic natural environments are structurally complex; the degrees of freedom comprising the environment typically interact with one another dynamically. When environmental sub-units possess their own intrinsic thermalizing or chaotic dynamics, the behavior of redundancy and objectivity becomes significantly more complex 1.

Scrambling vs. Broadcasting and Non-Markovianity

Information propagation through a complex many-body environment is governed by the inherent tension between two phenomena: "broadcasting" and "scrambling" 3120. Broadcasting duplicates classical data across the environment, successfully establishing the Quantum Darwinism phase. Conversely, scrambling rapidly delocalizes quantum information such that it cannot be retrieved by measuring localized fragments, forcing the system into an "encoding phase" 20.

Recent theoretical work has identified distinct dynamic phase transitions regarding information retrieval. By analyzing system-environment interaction Hamiltonians, researchers note that if the intrinsic environmental interactions are overly strong and non-hierarchical, the environment will enter an encoding phase where the injected system information becomes entirely inaccessible in any discrete subset 20. The onset of intrinsic thermalization dynamics within the environment tends to degrade redundancy 31. The environment acts as an initial witness, but subsequent chaotic interaction among the environmental spins scrambles the pointer states into global multi-partite entanglement. This causes the redundancy plateau to collapse, making the classical information inaccessible once more and demonstrating that classicality may be a transient phase in certain highly coupled systems 31.
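The contrast between broadcasting and scrambling can be seen in a small numerical sketch. It is an illustration under strong simplifying assumptions, not a model from the cited works: the broadcast state is taken to be a GHZ state of one system qubit and six environment qubits, and scrambling is mimicked by a single Haar-random unitary acting on the environment alone. The names `haar_unitary` and `scramble_environment` and all parameter values are hypothetical.

```python
import numpy as np

def entropy_bits(rho):
    """Von Neumann entropy of a density matrix, in bits."""
    evals = np.linalg.eigvalsh(rho)
    evals = evals[evals > 1e-12]
    return float(-np.sum(evals * np.log2(evals)))

def reduced_density_matrix(psi, keep, n_qubits):
    """Reduced state of the qubits in `keep` for an n-qubit pure state."""
    keep = list(keep)
    rest = [q for q in range(n_qubits) if q not in keep]
    tensor = psi.reshape([2] * n_qubits).transpose(keep + rest)
    mat = tensor.reshape(2 ** len(keep), 2 ** len(rest))
    return mat @ mat.conj().T

def mutual_information(psi, fragment, n_qubits):
    """I(S:F) in bits, with the system on qubit 0."""
    h_s = entropy_bits(reduced_density_matrix(psi, [0], n_qubits))
    h_f = entropy_bits(reduced_density_matrix(psi, fragment, n_qubits))
    h_sf = entropy_bits(reduced_density_matrix(psi, [0] + list(fragment), n_qubits))
    return h_s + h_f - h_sf

def haar_unitary(dim, rng):
    """Haar-random unitary from the QR decomposition of a Gaussian matrix."""
    z = (rng.normal(size=(dim, dim)) + 1j * rng.normal(size=(dim, dim))) / np.sqrt(2)
    q, r = np.linalg.qr(z)
    phases = np.diag(r) / np.abs(np.diag(r))
    return q * phases  # fix column phases so the distribution is Haar

def scramble_environment(psi, n_env, rng):
    """Apply a random unitary to the environment only (system untouched)."""
    u = haar_unitary(2 ** n_env, rng)
    return (psi.reshape(2, 2 ** n_env) @ u.T).reshape(-1)

n_env = 6
n = n_env + 1
ghz = np.zeros(2 ** n, dtype=complex)
ghz[0] = ghz[-1] = 1 / np.sqrt(2)  # perfectly redundant records

scrambled = scramble_environment(ghz, n_env, np.random.default_rng(1))

before_local = mutual_information(ghz, [1], n)
after_local = mutual_information(scrambled, [1], n)
after_global = mutual_information(scrambled, list(range(1, n)), n)
print(f"I(S:F_1) before scrambling: {before_local:.3f} bits")
print(f"I(S:F_1) after scrambling:  {after_local:.3f} bits")
print(f"I(S:E)   after scrambling:  {after_global:.3f} bits")
```

The total correlation between the system and the full environment is unchanged by the environment's internal dynamics, but a single environment qubit no longer carries a usable record. This is the sense in which the information remains present globally while the locally accessible, redundant copies are lost.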

Furthermore, non-Markovian dynamics introduce information backflow from the environment into the system. High non-Markovianity generally hinders objectivity by suppressing redundant records 121. When the environment exhibits strong memory effects, the system and environment re-entangle continuously. This oscillation prevents the stable convergence onto a set of fixed, classical pointer states, as quantified by specialized metrics such as the quantum Jensen-Shannon divergence 121.

Non-Commuting Evolutions and Pointer State Relaxations

Standard Quantum Darwinism frameworks operate under the assumption that the system Hamiltonian and the system-environment interaction Hamiltonian commute, which clearly defines the exact pointer states via strict superselection 234. However, strictly commuting Hamiltonians are an idealization rarely found in unconstrained physical systems 1034.

Recent investigations by Chisholm, Palma, and Innocenti (2025) focus intensely on non-commuting evolutions. Their findings reveal that classical objectivity can still emerge generically even when the relevant Hamiltonians do not commute 1034. When the interaction terms conflict, traditional pointer states (defined by absolute energetic stability) are no longer perfectly preserved. Nevertheless, if the definition of pointer states is theoretically relaxed from "perfect resilience against decoherence" to simply "states whose information successfully proliferates into the environment," objective reality is consistently observed 1034. This inherent flexibility underlines the broad robustness of the Quantum Darwinism mechanism; it operates as an emergent feature that is largely independent of fine-tuned micro-dynamics, provided that widespread information proliferation eventually occurs 1010.

The Informational Economy Functional and Thermodynamics

To formalize the emergence of classical structure under general physical constraints, modern theorists have introduced the Informational Economy Functional (IEF) 6. The IEF unifies the principles of Quantum Darwinism, traditional decoherence, and predictive information thermodynamics. It posits that the emergence of classical records is not an ad hoc addition to quantum theory, but the direct result of an effective variational principle where informational capabilities and thermodynamic energy costs balance each other 6.

The functional mathematically captures three distinct competing rates: (1) the degradation of state distinguishability (the stability cost); (2) the energetic cost of continuous physical interaction ($Q_{out}$); and (3) the rate of information dissemination into the environment ($I_{brd}$) 6.

Under the rules of the IEF, specific classical structures are favored by environmental selection because they efficiently convert available thermodynamic resources into persistent, shareable informational traces at a minimal energetic cost 6. Conversely, pure superpositions dissipate energy rapidly without yielding redundant records and are therefore naturally suppressed by the thermodynamic constraints of the universe 6.

Critiques, Theoretical Challenges, and Alternative Ontologies

Despite its broad explanatory power and increasing empirical support, Quantum Darwinism faces rigorous theoretical critiques - most notably regarding the fundamental source of its mechanisms within a strictly unitary universal framework. Furthermore, competing ontological models approach the problem of definite outcomes from entirely different mathematical standpoints.

The Circularity Critique in Unitary-Only Dynamics

Philosopher of physics Ruth E. Kastner and colleagues maintain that Quantum Darwinism suffers from severe logical circularity when evaluated against unitary-only quantum theories, such as the Everettian (Many-Worlds) or purely Schrödinger-based frameworks 235363722. The critique asserts that both decoherence and einselection fundamentally rely on the a priori assumption that the universal wave function naturally splits into a predefined "system" and an "environment" 235.

Kastner argues that the division of universal degrees of freedom into system and environment must be inserted by hand by the physicist 2. Furthermore, for einselection to function properly, the environmental degrees of freedom must be assumed to possess mutually random phases. In a strictly unitary dynamic, this requisite phase randomness does not emerge organically 25. Thus, Kastner concludes that the Quantum Darwinism program is circular: the framework seeks to explain the emergence of classical phenomena but implicitly embeds classical features (such as localizable degrees of freedom, random phases, and defined subsystems) into its initial mathematical conditions 35.

If unitary-only dynamics cannot generate intrinsic random phases without an initial assumption of a classical boundary, Kastner proposes that theories invoking genuine, physical non-unitarity - such as Objective Collapse models or the Transactional Interpretation - may be necessary to solve the measurement problem non-circularly 353622. Within the Transactional Interpretation, non-unitarity arises through physical "measurement transitions" facilitated by time-symmetric absorber responses, eliminating the need to assume an external classical observer or arbitrarily partition the universe 33637.

Hidden Variables and Pilot Wave Theory

Unlike standard quantum mechanics, which posits that a system's state exists as a superposition of potentialities prior to measurement, Hidden Variable theories argue that systems always possess definite properties. The probabilistic nature of standard quantum mechanics is viewed simply as a result of human ignorance regarding these underlying, unobservable variables 232425.

The most mature hidden variable model is the de Broglie-Bohm theory, commonly known as Pilot Wave theory or Bohmian mechanics 2627. In Bohmian mechanics, the universe is inherently deterministic and explicitly nonlocal. A quantum object consists of an actual point particle with a defined trajectory and a guiding "pilot wave" governed by the standard Schrödinger equation 262744. The guiding equation fixes the particle's velocity from the phase of the wave function; in Bohm's equivalent second-order formulation, the same motion is described as classical dynamics with an additional quantum potential. The paradox of Schrödinger's cat and the broader measurement problem vanish under this framework because the particles composing the cat, the radioactive atom, and the detector possess definite physical positions at all times 2627.
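For reference, the standard textbook form of these relations for $N$ spinless particles is sketched below; the symbols $\mathbf{Q}_k$, $m_k$, $R$, and $S$ are notation introduced here. The wave function obeys the usual Schrödinger equation, and the actual particle positions follow the guiding equation:

$\frac{d\mathbf{Q}_k}{dt} = \frac{\hbar}{m_k}\, \mathrm{Im}\!\left[\frac{\nabla_k \psi}{\psi}\right]\!(\mathbf{Q}_1, \dots, \mathbf{Q}_N, t)$

Writing $\psi = R\, e^{iS/\hbar}$ with real $R$ and $S$, the velocity is $\nabla_k S / m_k$, and the second-order formulation adds to the classical potential the quantum potential

$Q = -\sum_k \frac{\hbar^2}{2 m_k}\, \frac{\nabla_k^2 R}{R}$

The explicit nonlocality is visible in the guiding equation: the velocity of particle $k$ depends on the simultaneous positions of all the other particles through $\psi$.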

While Pilot Wave theory successfully recovers standard quantum predictions (such as the double-slit interference pattern) by routing precise particle trajectories along the contours of the guiding wave, it faces severe theoretical friction when reconciling with special relativity and standard quantum field theory due to its explicit nonlocality 2545.

Macroscopic analogue experiments, known as Pilot Wave hydrodynamics, utilize bouncing fluid droplets (walkers) to demonstrate striking similarities to quantum phenomena, including double-slit interference, unpredictable tunneling, and quantized orbits 2628. Proponents of these analogues argue that if macroscopic physics can replicate quantum behaviors via resonant field excitations, it suggests that physical pilot waves may offer an optimal, deterministic resolution to the measurement problem without relying on the extensive environmental information broadcasting required by Quantum Darwinism 28. However, critics routinely point out that these fluid analogues fail to reproduce highly non-classical phenomena like Bell inequality violations and true multi-particle entanglement dynamics, leaving the theory incomplete as a universal replacement 29.

| Framework / Theory | Ontological Basis | Resolution of the Measurement Problem | Determinism | Locality | Primary Mechanism for Classicality |
|---|---|---|---|---|---|
| Quantum Darwinism | Wave function (Epistemic & Ontic) 4 | Environment-induced superselection and consensus. | Probabilistic (from local observer's perspective). | Local interactions simulate apparent collapse. | Redundant imprinting of pointer states into environmental fragments. |
| Copenhagen Interpretation | Probabilistic wave function | Axiomatic collapse postulate (Born Rule). | Inherently stochastic. | Nonlocal (instantaneous wave collapse). | Macroscopic observer fundamentally forces the system into a definite state. |
| Pilot Wave Theory (Bohmian) | Real particles + Guiding Pilot Wave 26 | No collapse. Particles always have definite trajectories. | Strictly Deterministic. | Explicitly Nonlocal. | Classical reality is fundamental; quantum uncertainty is merely statistical ignorance. |
| Transactional Interpretation | Offer and Confirmation waves 37 | Physical collapse via wave handshakes. | Stochastic transitions. | Nonlocal (time-symmetric). | Actualization of spacetime events through fundamental energetic exchange. |

Future Directions and Cosmological Implications

As the study of Quantum Darwinism moves beyond foundational proof-of-concept experiments, its principles are increasingly applied to broader complex systems, macroscopic phenomena, and cosmological models 163031.

If classical reality is intrinsically tied to redundancy and information broadcasting, this principle must hold at cosmological scales. Theoretical extrapolations suggest that astrophysical phenomena like Hawking radiation from black holes interact uniquely with Quantum Darwinian requirements 16. According to current cosmological models, while black holes ultimately preserve information globally via the Hawking radiation footprint, they scramble it thoroughly 16. A macroscopic transducer or observer cannot access a localized, redundant fragment of Hawking radiation to infer the state of infalling matter. Therefore, under the QD paradigm, while information may be thermodynamically conserved, it fails to achieve classical objectivity because it lacks the necessary redundant environmental broadcasting 16. This distinction between preserved global information and accessible local information represents a significant boundary for objective reality.

Additionally, observational data from the Dark Energy Spectroscopic Instrument (DESI DR2) in 2025 provides strengthening evidence that dark energy is dynamic, influencing the ultimate entropy budget of the observable universe 16. With supermassive black holes dominating the current cosmological entropy budget (contributing approximately $10^{103.9}$ $k_B$), the limits of thermodynamic processing directly constrain how much information can be permanently written into the cosmic environment 16.

Current experimental designs in 2026 are also shifting to probe the upper limitations of Quantum Darwinism in the laboratory. Researchers are investigating exactly how large and structurally complex a system can be before the QD process breaks down, scaling up superconducting processors and using matrix product states to computationally model environments with thousands of interacting spins 18. Moving forward, finding precise limits on the Informational Economy Functional and integrating gravitational decoherence models could help establish whether Quantum Darwinism provides a complete bridge between the quantum substrate and the classical cosmos.

Synthesis of the Quantum-to-Classical Transition

Quantum Darwinism represents a major paradigm shift in the understanding of the quantum-to-classical transition. By replacing the axiomatic collapse of the Copenhagen interpretation with the continuous, physical process of environmental broadcasting, Quantum Darwinism provides a cohesive, mathematically grounded mechanism for the emergence of objectivity 2310. The theory asserts that classical reality is not a separate domain of physics, but merely the specific fraction of quantum information that is robust enough to survive einselection and proliferate redundantly.

Experimental validations across varied platforms - ranging from linear photonic simulators and natural NV center spin environments to advanced superconducting transmon arrays - confirm the existence of the redundancy plateau, providing substantial empirical weight to Zurek's framework 151013. However, the exact boundaries of the theory remain a highly active area of investigation. Theoretical objections regarding circularity in unitary-only accounts, together with the loss of redundancy under non-Markovian dynamics and environmental scrambling, highlight areas where the underlying physics is still debated 2135. Furthermore, alternative ontologies like Pilot Wave theory continue to offer deterministic, albeit nonlocal, methods to bypass the measurement problem entirely 2627.

Ultimately, Quantum Darwinism succeeds in fundamentally altering the theoretical narrative of open quantum systems: the environment is no longer viewed merely as a destructive sink for coherence, but as the foundational communication channel that makes objective, classical consensus possible in a fundamentally quantum universe.