Quantum Contextuality and the Kochen-Specker Theorem

Foundations of Quantum Contextuality

In the domain of classical physics, macroscopic objects are assumed to possess definite, intrinsic properties that exist entirely independent of whether or not they are observed. This assumption forms the basis of classical determinism and realism, positing that the act of measurement is merely a passive extraction of pre-existing information. Quantum mechanics, however, fundamentally contradicts this classical intuition. Quantum contextuality encapsulates the phenomenon wherein the outcome of a quantum measurement cannot simply be modeled as the revelation of a pre-existing value 11. Instead, the measured value of a quantum observable depends inextricably on the specific "context" of the measurement - that is, the set of other compatible observables that are measured simultaneously alongside it 123.

This inherent dependence on context fundamentally rules out a broad class of theories known as noncontextual hidden-variable (NCHV) models. NCHV models represent attempts to restore classical realism to quantum mechanics by proposing that hidden, unobservable parameters predetermine the outcomes of all possible measurements 345. Standard quantum theory dictates that unperformed experiments have no definitive results, precluding the use of counterfactual reasoning that attempts to simultaneously combine the hypothetical outcomes of mutually exclusive, incompatible experimental arrangements. Therefore, contextuality is not merely an artifact of measurement disturbance, but a profound structural property of the quantum world.

Measurement Compatibility and Contexts

To understand contextuality, one must rigorously define measurement compatibility. In quantum mechanics, physical observables are represented by self-adjoint operators acting on a Hilbert space 67. Two observables, $A$ and $B$, are considered compatible if their corresponding operators commute - mathematically expressed as the commutator $[A, B] = AB - BA = 0$ 158. When observables commute, they share a common set of eigenvectors, meaning that they can be measured simultaneously, or in any sequential order, without the measurement of one disturbing the outcome of the other 359.

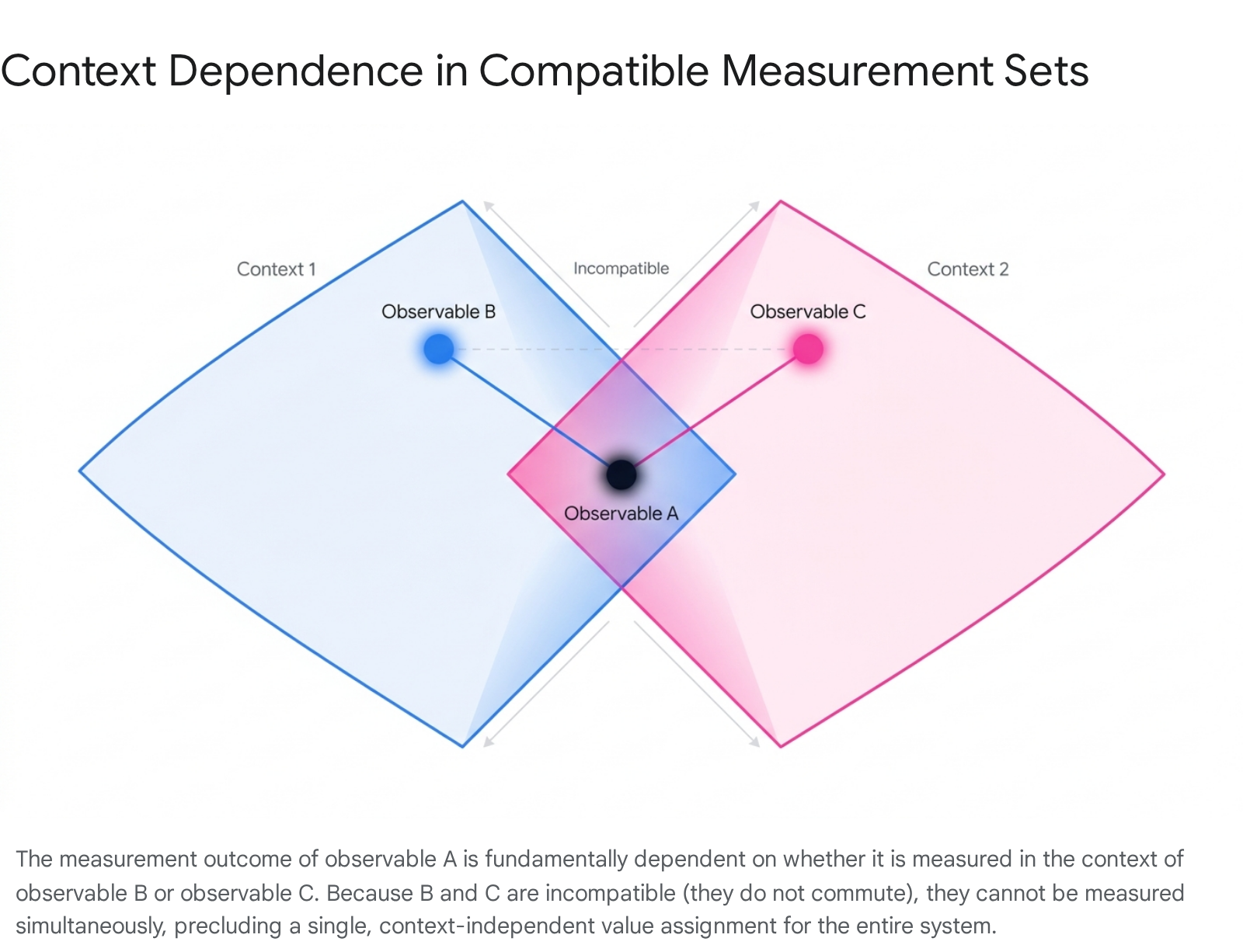

A "measurement context" is defined as a specific set of mutually compatible observables 3512. The phenomenon of contextuality arises when a single observable belongs to multiple, mutually exclusive measurement contexts. For instance, consider three observables: $A$, $B$, and $C$. If observable $A$ commutes with $B$, and $A$ also commutes with $C$, then $A$ can be measured in the context of $B$ (forming the compatible set $\{A, B\}$) or in the context of $C$ (forming the compatible set $\{A, C\}$). However, if $B$ and $C$ do not commute with each other ($[B, C] \ne 0$), they are incompatible and cannot be measured simultaneously 1510.
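The three-observable scenario above can be checked numerically. The following is a minimal sketch in plain Python; the specific choice $A = Z \otimes I$, $B = I \otimes Z$, $C = I \otimes X$ and the helper names `kron`, `matmul`, and `commute` are illustrative assumptions, not taken from the text.

```python
# Pauli matrices and the identity as nested lists (pure Python, no dependencies).
I2 = [[1, 0], [0, 1]]
X = [[0, 1], [1, 0]]
Z = [[1, 0], [0, -1]]

def kron(a, b):
    """Tensor (Kronecker) product of two square matrices."""
    n, m = len(a), len(b)
    return [[a[i // m][j // m] * b[i % m][j % m] for j in range(n * m)]
            for i in range(n * m)]

def matmul(a, b):
    """Product of two square matrices of equal size."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]

def commute(a, b):
    """True when the commutator [a, b] = ab - ba vanishes."""
    return matmul(a, b) == matmul(b, a)

# Illustrative two-qubit observables (an assumption of this sketch).
A = kron(Z, I2)   # Z on the first qubit
B = kron(I2, Z)   # Z on the second qubit
C = kron(I2, X)   # X on the second qubit

print(commute(A, B))  # True:  {A, B} is a valid context
print(commute(A, C))  # True:  {A, C} is a valid context
print(commute(B, C))  # False: B and C cannot share a context
```

Here $A$ belongs to two contexts that cannot be merged into one, which is exactly the situation in which a context-independent value for $A$ becomes a nontrivial assumption.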

In a classical, noncontextual framework, the hidden variables would assign a definitive, fixed value to observable $A$, regardless of whether the experimenter chose to measure it alongside $B$ or alongside $C$ 35. Quantum contextuality demonstrates that such an assumption leads to direct mathematical and logical contradictions. The value obtained for $A$ is fundamentally contextual, tied to the macroscopic choice of the experimental apparatus used to probe the system 1114.

The Kochen-Specker Theorem

The impossibility of assigning context-independent values to quantum observables was rigorously formalized by mathematicians Simon B. Kochen and Ernst Specker in 1967, following related foundational work published by John S. Bell in 1966 346. The resulting Bell-Kochen-Specker theorem, more commonly known as the Kochen-Specker (KS) theorem, is a central "no-go" theorem in quantum foundations 2411. It places strict mathematical constraints on the permissible types of hidden-variable theories that attempt to explain the probabilistic nature of quantum mechanics 412.

Mathematical Prerequisites and Constraints

The KS theorem establishes that for any quantum system described by a Hilbert space of dimension $d \ge 3$, there exists no consistent method for assigning predetermined truth values (0 or 1) to all possible projection operators representing quantum propositions 41117. The theorem requires that any classical NCHV model attempting to assign such values must satisfy two fundamental algebraic constraints:

1. Eigenvalue Mapping: The assigned value of an observable must correspond to one of the permissible eigenvalues of the corresponding quantum mechanical operator 1113.
2. Functional Consistency (The Sum and Product Rules): If a set of mutually commuting observables obeys a certain algebraic functional relationship, their assigned values must obey the exact same relationship 1113. For example, if observable $C$ is defined as the sum of commuting observables $A$ and $B$ ($C = A + B$), then the pre-assigned value of $C$ must equal the sum of the pre-assigned values of $A$ and $B$ 11. Similarly, the product rule dictates that if $C = A \times B$, the value of $C$ must be the product of the values of $A$ and $B$ 11.

Kochen and Specker demonstrated that it is mathematically impossible to assign values subject to these constraints for all sets of mutually commuting observables in a Hilbert space of $d \ge 3$ 411. This logical contradiction serves as definitive proof that the statistical predictions of quantum mechanics cannot be mapped to an underlying noncontextual classical reality. The KS theorem acts as a complement to Gleason's theorem, which proves that any probability measure on the closed subspaces of a Hilbert space of dimension $d \ge 3$ must uniquely correspond to a density operator, thereby ruling out deterministic probability assignments 1611.

The Evolution of Kochen-Specker Operator Sets

The original proof formulated by Kochen and Specker in 1967 was highly complex. They successfully proved the theorem by explicitly constructing a set of 117 distinct projection operators within a 3-dimensional real Hilbert space 41219. They mapped these projectors to a geometric graph representing a sphere's surface, where each point corresponded to a quantum state, and each measurement corresponded to a perpendicular triad of vectors (points situated 90 degrees apart) 10. The theorem proved that it is impossible to color the points on this sphere using 0s and 1s such that every orthogonal triad contains exactly one 1 and two 0s 1011. This intricate 117-vector set is frequently referred to in historical literature as the "cat's cradle" configuration 12.

Following the publication of the original theorem, the physics community engaged in a decades-long effort to simplify the proof by identifying smaller sets of vectors that yielded the same logical contradiction.

* The Quantum Polyhedron: Asher Peres successfully reduced the requirement to 33 vectors in a 3-dimensional space 111219.
* The Magic Dodecahedron: Roger Penrose subsequently formulated a proof utilizing a highly symmetrical set of 40 vectors 12.
* The Quantum Tesseract: Peres further reduced the number of required vectors to 24 by shifting the proof to a 4-dimensional Hilbert space ($d=4$) 1219.
* The 20-Vector Set: Kernaghan further optimized the 4-dimensional proof down to 20 vectors 111219.
* The 18-Vector Minimum: Finally, Cabello, Estebaranz, and García-Alcaine constructed a state-independent proof utilizing only 18 real vectors in dimension 4 4111219. This 18-vector set holds the record for the minimal size of a state-independent proof in four dimensions and contains nested subsets that allow for state-specific proofs using as few as ten vectors 12.
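The contradiction in the 18-vector proof reduces to a parity count, sketched below. It relies on the known structure of the Cabello-Estebaranz-García-Alcaine set, which the text above does not spell out: the 18 vectors form 9 orthogonal bases $B_1, \dots, B_9$, and each vector belongs to exactly two of them. The symbol $v(u_j) \in \{0, 1\}$ for the value assigned to vector $u_j$ is notation introduced here.

```latex
\begin{align}
\sum_{b=1}^{9} \sum_{u_j \in B_b} v(u_j) &= 9
  && \text{(exactly one vector in each basis is assigned the value 1)} \\
\sum_{b=1}^{9} \sum_{u_j \in B_b} v(u_j) &= 2 \sum_{j=1}^{18} v(u_j)
  && \text{(each vector is counted once for each of its two bases)}
\end{align}
```

The first line is odd and the second is even, so no noncontextual 0/1 assignment can exist for this set.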

Classifications of Quantum Contextuality

As research into the foundational implications of the Kochen-Specker theorem expanded, theoretical physicists recognized that contextuality is not a monolithic phenomenon. The definition and scope of contextuality have been formally subdivided into distinct theoretical frameworks, primarily differentiated by their dependence on the quantum state being measured and the strictness of their measurement assumptions 3.

The three primary paradigms are State-Dependent Contextuality, State-Independent Contextuality, and Spekkens' Generalized Contextuality.

| Paradigm Feature | State-Dependent Contextuality | State-Independent Contextuality | Spekkens' Generalized Contextuality |
|---|---|---|---|
| Dependency | Arises only when measuring a specific, carefully prepared quantum state 3. | Arises universally for all quantum states $\rho$, regardless of preparation 314. | Depends on the operational indistinguishability of experimental procedures 3. |
| Minimum System Dimension | Qutrit ($d=3$) 3. | Qutrit ($d=3$) 34. | Applicable to Qubits ($d=2$) and higher 13. |
| Measurement Assumption | Assumes ideal, sharp, projective measurements 315. | Assumes ideal, sharp, projective measurements 3. | Explicitly designed to handle unsharp, nonideal measurements and mixed states 3. |
| Core Conflict | Pre-existing values contradict statistical predictions for a given state vector 316. | Value assignments are algebraically impossible for the observable set itself 314. | Failure of Leibniz's principle of identity of indiscernibles in operational space 3. |
| Experimental Signature | Violation of state-dependent noncontextuality inequalities (e.g., KCBS) 316. | Violation of state-independent noncontextuality inequalities (e.g., Yu-Oh) 3. | Violation of specific preparation/measurement noncontextuality inequalities 3. |

State-Dependent Contextuality

State-dependent contextuality indicates that the conflict between quantum theory and classical noncontextual models relies on the physical preparation of a specific quantum state 323. To observe this phenomenon, the system must have a minimal dimensionality of $d=3$ (a qutrit) 316.

This form of contextuality is most commonly identified through the violation of an inequality designed to hold true for all noncontextual hidden-variable models. The standard metric for state-dependent contextuality is the Klyachko-Can-Binicioğlu-Shumovsky (KCBS) inequality 31617. The KCBS scenario requires five distinct measurements arranged such that adjacent pairs in a cycle are compatible 3. For a noncontextual classical model governing a qutrit, the lower bound of the KCBS inequality is $-3$ 3. However, quantum mechanics predicts that a specific, optimized state - such as $|\psi\rangle = (1, 0, 0)$ for a suitable choice of the five measurement directions - can violate this classical bound, yielding an expectation value of $5 - 4\sqrt{5} \approx -3.94$ 3. Because the violation only occurs when the optimal state is prepared, the contextuality is classified as state-dependent.
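Both KCBS numbers quoted above can be reproduced with a short calculation. The sketch below assumes the standard pentagram geometry (five unit vectors placed symmetrically around the x-axis, each orthogonal to its cyclic neighbour) and the observable convention $A_k = 1 - 2|v_k\rangle\langle v_k|$; neither is specified in the text, and the variable names are illustrative.

```python
import math

# Five unit vectors v_k around the x-axis with v_k orthogonal to v_{k+1}
# (indices mod 5). This geometry is an assumption of the sketch.
cos2 = math.cos(math.pi / 5) / (1 + math.cos(math.pi / 5))  # cos^2(theta)
c, s = math.sqrt(cos2), math.sqrt(1 - cos2)
vectors = [(c,
            s * math.cos(4 * math.pi * k / 5),
            s * math.sin(4 * math.pi * k / 5)) for k in range(5)]

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

psi = (1.0, 0.0, 0.0)  # state along the symmetry axis

def correlator(k):
    """<A_k A_{k+1}> for A_k = 1 - 2|v_k><v_k| with orthogonal neighbours,
    which simplifies to 1 - 2|<v_k|psi>|^2 - 2|<v_{k+1}|psi>|^2."""
    p_k = dot(vectors[k], psi) ** 2
    p_next = dot(vectors[(k + 1) % 5], psi) ** 2
    return 1 - 2 * p_k - 2 * p_next

kcbs_quantum = sum(correlator(k) for k in range(5))

# Noncontextual bound: brute-force every +1/-1 assignment to the five observables.
assignments = ([1 if (n >> k) & 1 else -1 for k in range(5)] for n in range(32))
kcbs_classical = min(sum(a[k] * a[(k + 1) % 5] for k in range(5))
                     for a in assignments)

print(kcbs_classical)          # -3
print(round(kcbs_quantum, 3))  # -3.944
```

The classical minimum of $-3$ reflects the fact that an odd cycle cannot have all five neighbouring pairs anticorrelated, while the quantum value equals $5 - 4\sqrt{5}$.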

State-Independent Contextuality

In contrast, state-independent contextuality (SI-C) relies entirely on the topological and algebraic properties of the chosen observables, rather than the state vector of the system 31214. When an experimental arrangement utilizes a Kochen-Specker set of projectors, the resulting algebraic contradiction prevents consistent value assignment for any quantum state 31418. Consequently, the predictions of quantum mechanics will contradict classical noncontextual models regardless of whether the system is in a pure state, a partially mixed state, or a completely maximally mixed state 3518.

The hallmark of state-independent contextuality is the violation of an inequality that holds for all input states, such as the Yu-Oh inequality 3. The Yu-Oh framework employs 13 specific vectors within a 3-dimensional complex Hilbert space ($\mathbb{C}^3$) 3. Under any classical NCHV model, the upper bound for this scenario is 8 3. However, the quantum mechanical expectation value remains invariant at $25/3$ (approximately $8.33$) for every conceivable state $\rho$ 3. Mathematical proofs have established that any set capable of demonstrating state-independent contextuality must contain a minimum of 13 projectors, establishing a strict lower bound for the complexity of SI-C experiments 3.
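The two Yu-Oh numbers can be checked with exact arithmetic. The sketch below is written under stated assumptions: the 13 directions are listed as they appear in the usual presentation of the Yu-Oh set, the observables are taken as $A_\mu = 1 - 2|v_\mu\rangle\langle v_\mu|$, and the inequality is taken in the form $\sum_\mu \langle A_\mu \rangle - \tfrac{1}{4} \sum_{\mu,\nu} \Gamma_{\mu\nu} \langle A_\mu A_\nu \rangle \le 8$, where $\Gamma_{\mu\nu} = 1$ for orthogonal pairs. None of these details are given in the text above.

```python
from fractions import Fraction
from itertools import product

# The 13 (unnormalised) Yu-Oh directions; treat this list as an assumption.
vectors = [
    (1, 0, 0), (0, 1, 0), (0, 0, 1),
    (0, 1, 1), (0, 1, -1), (1, 0, 1), (1, 0, -1), (1, 1, 0), (1, -1, 0),
    (1, 1, 1), (1, 1, -1), (1, -1, 1), (1, -1, -1),
]
n = len(vectors)

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

# Unordered pairs of orthogonal (hence compatible) directions.
edges = [(i, j) for i in range(n) for j in range(i + 1, n)
         if dot(vectors[i], vectors[j]) == 0]

def observable(v):
    """A = 1 - 2|v><v| as an exact 3x3 matrix of Fractions."""
    norm = dot(v, v)
    return [[Fraction(int(r == c)) - Fraction(2 * v[r] * v[c], norm)
             for c in range(3)] for r in range(3)]

def matmul(a, b):
    return [[sum(a[r][k] * b[k][c] for k in range(3)) for c in range(3)]
            for r in range(3)]

A = [observable(v) for v in vectors]

# Operator form of the inequality. The double sum runs over ordered pairs,
# so each unordered edge enters with weight 2 * (1/4) = 1/2.
total = [[sum(a[r][c] for a in A) for c in range(3)] for r in range(3)]
for i, j in edges:
    pair = matmul(A[i], A[j])
    for r in range(3):
        for c in range(3):
            total[r][c] -= Fraction(1, 2) * pair[r][c]

# Noncontextual bound: maximise the same expression over +1/-1 assignments.
classical_max = max(
    sum(a) - Fraction(1, 2) * sum(a[i] * a[j] for i, j in edges)
    for a in product((1, -1), repeat=n)
)

print(len(edges))     # 24
print(total[0][0])    # 25/3
print(classical_max)  # 8
```

Because `total` comes out proportional to the identity matrix, its expectation value is $25/3$ in every state, which is what makes the violation state-independent.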

Spekkens' Generalized Contextuality

The traditional formulations of the Kochen-Specker theorem are constrained by their reliance on sharp, projective measurements. In 2005, Robert W. Spekkens introduced a paradigm shift by developing a theory-independent operational framework that expanded the definition of contextuality to include unsharp measurements, positive operator-valued measures (POVMs), and mixed state preparations 31719.

Spekkens' generalized contextuality is fundamentally based on the concept of "operational equivalence." This principle states that if two physical procedures - such as two different methods of preparing a quantum state, or two distinct measurement apparatuses - yield statistically indistinguishable results across all possible experiments, they must be considered operationally equivalent 327. According to Leibniz's principle of the identity of indiscernibles, a rational generalized noncontextual ontological model must map operationally equivalent procedures to the exact same mathematical probability distribution in the underlying hidden-variable space 327.

Quantum mechanics routinely violates this expectation. It allows for scenarios where multiple preparation procedures produce identical density matrices (meaning they are statistically indistinguishable), yet require entirely different ontological representations in any underlying hidden-variable model 328. By shifting the focus from algebraic non-commutativity to operational indistinguishability, Spekkens' framework successfully demonstrated that contextuality can exist in two-dimensional systems (qubits, $d=2$), bypassing the $d \ge 3$ restriction of the original KS theorem 1328.

Operator-Based Proofs: Parity and Pseudo-Telepathy

While the original vector-based proofs of the Kochen-Specker theorem were mathematically rigorous, they were difficult to conceptualize. A major theoretical advancement occurred with the introduction of operator-based parity proofs, which utilized small, highly structured sets of observables to produce elegant algebraic contradictions 12202131.

The Mermin-Peres Magic Square

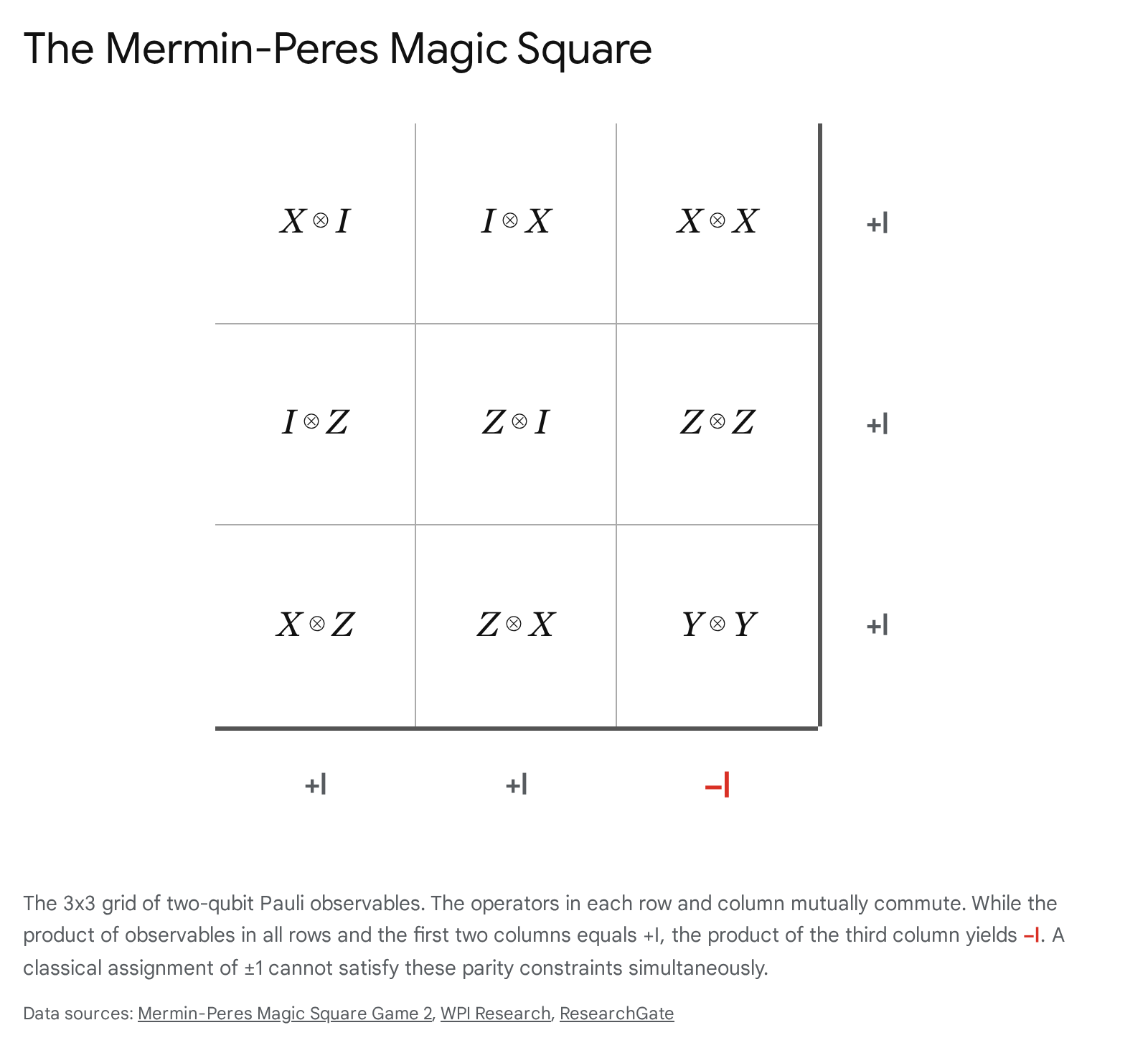

The most prominent operator-based proof is the Mermin-Peres magic square, developed independently by N. David Mermin and Asher Peres 513203222. The magic square utilizes a $3 \times 3$ grid containing nine specific two-qubit observables. These observables are constructed using the tensor products of the elementary single-qubit Pauli matrices ($X, Y, Z$) and the identity matrix ($I$) 1331323435.

The standard configuration of the magic square is arranged as follows:

* Row 1: $X \otimes I$, $I \otimes X$, $X \otimes X$
* Row 2: $I \otimes Z$, $Z \otimes I$, $Z \otimes Z$
* Row 3: $X \otimes Z$, $Z \otimes X$, $Y \otimes Y$

This arrangement is explicitly engineered to possess highly constrained algebraic properties. Within any single row, all three operators mutually commute 13223435. Thus, the three observables in a row form a valid measurement context and can be measured simultaneously with arbitrary precision 1334. The exact same principle applies to the columns; the three operators within any single column mutually commute and constitute a distinct measurement context 13223435. Because Pauli matrices are dichotomic, their eigenvalues are strictly $+1$ or $-1$. Any classical hidden-variable model attempting to describe the system must pre-assign a definitive value of $+1$ or $-1$ to each of the nine nodes in the grid 573122.

The "magic" of the square - and the core of the proof - lies in the mathematical products of the rows and columns. In quantum mechanics, taking the product of the three mutually commuting operators in any of the three rows yields the identity matrix, $+I_4$ 223435. Similarly, the product of the three operators in the first column and the second column also yields $+I_4$ 2234. However, due to the inherent anti-commutation relations of Pauli matrices (e.g., $XY = -YX$, $XZ = -ZX$), the product of the three operators in the third column evaluates to $-I_4$ 9132234.

This creates an insurmountable logical paradox for any noncontextual model. If a classical model assigns predetermined values of $\pm 1$ to all nine squares, the product of the three row products must be $(+1) \times (+1) \times (+1) = +1$ 713. Because the column products contain the exact same nine underlying variables, multiplying the three column products together must logically yield the exact same total: $+1$ 713. However, quantum mechanics dictates that the product of the columns is $(+1) \times (+1) \times (-1) = -1$ 71331. It is impossible for a classical assignment to simultaneously satisfy $+1$ and $-1$, proving that no assignment of values can be independent of whether an observable is evaluated as part of a row context or a column context 5722.
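The row and column products, and the absence of any consistent $\pm 1$ assignment, can be verified directly. The following is a minimal sketch in plain Python using the grid exactly as listed above; the helper names (`kron`, `matmul`, `identity_sign`, `consistent`) are illustrative.

```python
from itertools import product

I2 = [[1, 0], [0, 1]]
X = [[0, 1], [1, 0]]
Y = [[0, -1j], [1j, 0]]
Z = [[1, 0], [0, -1]]

def kron(a, b):
    """Tensor (Kronecker) product of two square matrices."""
    n, m = len(a), len(b)
    return [[a[i // m][j // m] * b[i % m][j % m] for j in range(n * m)]
            for i in range(n * m)]

def matmul(a, b):
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]

def identity_sign(m):
    """Return s if m equals s * identity for s in {+1, -1}, else None."""
    n = len(m)
    for s in (1, -1):
        if all(m[i][j] == (s if i == j else 0)
               for i in range(n) for j in range(n)):
            return s
    return None

square = [
    [kron(X, I2), kron(I2, X), kron(X, X)],
    [kron(I2, Z), kron(Z, I2), kron(Z, Z)],
    [kron(X, Z), kron(Z, X), kron(Y, Y)],
]

def triple_product(ops):
    return matmul(matmul(ops[0], ops[1]), ops[2])

row_signs = [identity_sign(triple_product(row)) for row in square]
col_signs = [identity_sign(triple_product([square[r][c] for r in range(3)]))
             for c in range(3)]
print(row_signs)  # [1, 1, 1]
print(col_signs)  # [1, 1, -1]

def consistent(vals):
    """Check a flat tuple of nine +1/-1 values against all six products."""
    rows_ok = all(vals[3 * r] * vals[3 * r + 1] * vals[3 * r + 2] == row_signs[r]
                  for r in range(3))
    cols_ok = all(vals[c] * vals[c + 3] * vals[c + 6] == col_signs[c]
                  for c in range(3))
    return rows_ok and cols_ok

solutions = [v for v in product((1, -1), repeat=9) if consistent(v)]
print(len(solutions))  # 0
```

The exhaustive search over all 512 assignments finds none, matching the parity argument in the text.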

Mermin's Star and Pseudo-Telepathy Games

The underlying mathematics of the magic square have been adapted into cooperative, non-local logic games known as "quantum pseudo-telepathy" games 2022. In the magic square game, two players (Alice and Bob) are separated so they cannot communicate. A referee demands that Alice fill out a specific row with $+1$ or $-1$ assignments (maintaining an even number of $-1$s), while Bob fills out a specific column (maintaining an odd number of $-1$s) 2234. They must assign the exact same value to the intersecting square to win 22. Because of the parity contradiction inherent to the grid, the theoretical maximum probability that classical players can win is limited to $8/9$ 2234. However, if Alice and Bob share an entangled 4-qubit state (for example, two maximally entangled pairs, with each player holding one qubit from each pair), they can map their measurements to the Pauli operators in the magic square and win the game with 100% certainty, presenting an illusion of telepathic communication via quantum contextuality 202234.
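The classical limit of $8/9$ can be confirmed by exhaustive search over deterministic strategies (shared randomness only mixes such strategies and cannot raise the maximum). This is a minimal sketch assuming the referee picks the row and column uniformly at random; the variable names are illustrative.

```python
from itertools import product

# Alice answers each row with an even number of -1s (product +1);
# Bob answers each column with an odd number of -1s (product -1).
triples = list(product((1, -1), repeat=3))
alice_options = [t for t in triples if t[0] * t[1] * t[2] == 1]
bob_options = [t for t in triples if t[0] * t[1] * t[2] == -1]

best = 0
# A deterministic strategy fixes one answer for every possible question.
for alice in product(alice_options, repeat=3):    # alice[r]: answer for row r
    for bob in product(bob_options, repeat=3):    # bob[c]: answer for column c
        # They win question (r, c) when they agree on the shared cell.
        wins = sum(alice[r][c] == bob[c][r]
                   for r in range(3) for c in range(3))
        best = max(best, wins)

print(f"{best}/9")  # 8/9
```

No pair of strategies wins all nine questions, because that would amount to a single grid of values with even row parities and odd column parities.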

Building upon this success, David Mermin expanded the parity proof concept to a 3-qubit system, resulting in a configuration known as Mermin's Star, or the magic pentagram 14232036. Mermin's Star utilizes 10 distinct Pauli observables arranged geometrically into a pentagram 36. The intersecting lines form five distinct edges, each containing four mutually commuting observables 36. The parity argument functions identically: multiplying the operators along four of the edges yields $+I$, while multiplying the operators along the fifth edge yields $-I$, creating a mathematically flawless state-independent contextuality proof for 3 qubits 1436. In state-dependent variations, the Mermin Star frequently employs the Greenberger-Horne-Zeilinger (GHZ) state ($|GHZ\rangle = \frac{|000\rangle + |111\rangle}{\sqrt{2}}$) to test contextuality limits against symmetry transformations within advanced topological frameworks 23.
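The same parity check can be carried out for the pentagram using symbolic Pauli-string multiplication instead of explicit $8 \times 8$ matrices. The sketch below assumes one common labelling of Mermin's Star (the ten observables and five lines listed in `lines`), which is not spelled out in the text; the helper functions are illustrative.

```python
from functools import reduce
from itertools import product

# Single-qubit Pauli products in cyclic order: X*Y = iZ, Y*Z = iX, Z*X = iY.
CYCLIC = {("X", "Y"): "Z", ("Y", "Z"): "X", ("Z", "X"): "Y"}

def mul_single(a, b):
    """Return (power of i, label) for the product of two single-qubit Paulis."""
    if a == "I":
        return 0, b
    if b == "I":
        return 0, a
    if a == b:
        return 0, "I"
    if (a, b) in CYCLIC:
        return 1, CYCLIC[(a, b)]   # factor +i
    return 3, CYCLIC[(b, a)]       # factor -i = i^3

def mul(p, q):
    """Multiply Pauli strings given as (power of i, label) pairs."""
    phase, label = p[0] + q[0], []
    for a, b in zip(p[1], q[1]):
        ph, c = mul_single(a, b)
        phase += ph
        label.append(c)
    return phase % 4, "".join(label)

def commute(a, b):
    """Pauli strings commute iff they anticommute on an even number of sites."""
    clashes = sum(x != "I" and y != "I" and x != y for x, y in zip(a, b))
    return clashes % 2 == 0

# Assumed labelling of the five lines of the pentagram (four observables each).
lines = [
    ["XXX", "XYY", "YXY", "YYX"],
    ["XXX", "XII", "IXI", "IIX"],
    ["XYY", "XII", "IYI", "IIY"],
    ["YXY", "YII", "IXI", "IIY"],
    ["YYX", "YII", "IYI", "IIX"],
]

line_products = [reduce(mul, [(0, s) for s in line]) for line in lines]
# Every product is +III (phase 0) or -III (phase 2) for this labelling.
signs = [1 if phase == 0 else -1 for phase, _ in line_products]
print(signs)  # [-1, 1, 1, 1, 1]

observables = sorted({s for line in lines for s in line})
solutions = 0
for values in product((1, -1), repeat=len(observables)):
    v = dict(zip(observables, values))
    if all(v[a] * v[b] * v[c] * v[d] == sign
           for (a, b, c, d), sign in zip(lines, signs)):
        solutions += 1
print(solutions)  # 0
```

Each observable sits on exactly two lines, so any $\pm 1$ assignment makes the product of all five line values equal to $+1$, whereas the operator identities require $-1$.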

Distinctions Between Contextuality and Nonlocality

In discussions of quantum foundations, Kochen-Specker contextuality is frequently discussed in tandem with Bell nonlocality, leading to occasional conflation. While both phenomena describe non-classical correlations and place bounds on hidden-variable theories, they operate on different principles and apply to different types of physical systems 232425.

Single-System Constraints Versus Composite Entanglement

Entanglement is a rigid mathematical property defining a specific type of composite quantum state within a multipartite Hilbert space 2325. A multipartite system is entangled if its global state vector cannot be factorized into a product of independent local state vectors 2325. Nonlocality is the physical, observational consequence of this entanglement. It describes the phenomenon where measurements performed on one part of a spatially distributed, entangled system appear to instantaneously influence the state of the other part, violating the classical limits of local realism encoded in Bell inequalities 142325.

Kochen-Specker contextuality, however, does not require a multipartite system, spacelike separation, or entanglement 141624. Contextuality is an intrinsic characteristic of a single, localized quantum system 1624. An individual indivisible qutrit ($d=3$), a single photon, or a solitary harmonic oscillator can fully exhibit contextuality without requiring any secondary particle to entangle with 516.

The phenomena are bridged conceptually by Fine's theorem. According to Fine's theorem, Bell nonlocality can be accurately interpreted as a special, restricted subclass of generalized quantum contextuality 1. In nonlocality scenarios, the measurement "contexts" are strictly enforced by the physical, spacelike separation of the different measurement apparatuses, whereas standard contextuality allows the contexts to overlap within the same localized system 1.

Entanglement Requirements in Multiqubit Contextuality

While a single $d=3$ qutrit can exhibit contextuality without entanglement, the relationship between the two phenomena becomes structurally intertwined when analyzing composite systems built from multiple qubits. Recent theoretical research has established that for systems consisting of multiple qubits (such as $d=4$ systems composed of two qubits), entanglement and Bell nonlocality actually become strict prerequisites for proving the Kochen-Specker theorem 24.

It has been rigorously proven that unentangled measurements - even non-local unentangled measurements exhibiting "nonlocality without entanglement" - can never yield a logical, state-independent proof of the KS theorem for multiqubit systems 24. Furthermore, a composite multiqubit state can only admit a statistical (state-dependent) proof of the KS theorem if and only if it is capable of simultaneously violating a Bell inequality via projective measurements 24.

This creates a nuanced physical paradigm. While single-system contextuality requires no entanglement, the manifestation of contextuality across a distributed multiqubit network relies on the exact same physical resources that drive nonlocality 24. In experiments utilizing hybrid qubit-qutrit systems, researchers have observed a direct resource trade-off. Contextuality (assessed via the KCBS inequality) is primarily governed by the population of specific higher-energy qutrit levels, while nonlocality (assessed via the CHSH inequality) is governed by the coherence of the entangled pair 17. Tuning the parameters of the system to optimize one non-classical phenomenon frequently suppresses the other, confirming that their simultaneous maximal existence is confined to a narrow range of boundary conditions 17.

Contextuality in Continuous-Variable Systems

Historically, the majority of research regarding quantum contextuality was heavily biased toward discrete-variable (DV) systems - namely, qubits, qutrits, and other qudits possessing finite-dimensional Hilbert spaces 2627. However, there is a rapidly expanding body of research concerning continuous-variable (CV) quantum systems. CV systems encode quantum information onto continuous degrees of freedom characterized by infinite-dimensional Hilbert spaces, such as the position and momentum of a harmonic oscillator or the amplitude and phase quadratures of an electromagnetic optical field 262829.

Wigner Function Negativity

In the continuous-variable regime, the state of a quantum system is typically represented not by discrete vectors, but as a quasi-probability distribution in a continuous phase space, most notably utilizing the Wigner function 262930. Because it must satisfy the uncertainty principle, the Wigner function differs fundamentally from classical probability distributions; it is not strictly positive and frequently takes on negative values in localized regions of phase space 2630.

The presence of Wigner negativity is recognized as a definitive signature of non-classicality within a CV system 2630. By definition, Gaussian states - such as coherent states and squeezed vacuum states - possess entirely non-negative, Gaussian-shaped Wigner functions 1831. A seminal result known as Hudson's theorem proves that the Wigner function of a pure quantum state is non-negative if and only if that state is strictly Gaussian 1831. Because quantum operations utilizing only Gaussian states and Gaussian measurements can be efficiently simulated on classical computers, injecting Wigner negativity (via non-Gaussian states like single-photon Fock states) is an absolute prerequisite for achieving quantum computational speedup in continuous-variable platforms 26282930.
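The contrast between a Gaussian state and a single-photon Fock state can be made concrete with their closed-form Wigner functions. The sketch below assumes the $\hbar = 1$ convention, in which the vacuum has $W_0(x, p) = e^{-(x^2 + p^2)}/\pi$ and the one-photon state has $W_1(x, p) = (2(x^2 + p^2) - 1)\,e^{-(x^2 + p^2)}/\pi$; the grid spacing and function names are illustrative.

```python
import math

def wigner_vacuum(x, p):
    """Wigner function of the vacuum state (hbar = 1 convention)."""
    return math.exp(-(x * x + p * p)) / math.pi

def wigner_single_photon(x, p):
    """Wigner function of the Fock state |1> (hbar = 1 convention)."""
    r2 = x * x + p * p
    return (2 * r2 - 1) * math.exp(-r2) / math.pi

# Sample both functions on a coarse phase-space grid covering [-3, 3]^2.
grid = [i * 0.25 for i in range(-12, 13)]
min_vacuum = min(wigner_vacuum(x, p) for x in grid for p in grid)
min_photon = min(wigner_single_photon(x, p) for x in grid for p in grid)

print(min_vacuum > 0)        # True: the Gaussian state shows no negativity
print(round(min_photon, 4))  # -0.3183, i.e. -1/pi at the origin
```

The single-photon state reaches its most negative value, $-1/\pi$, at the phase-space origin, which is why it is a convenient example of a non-Gaussian resource state.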

Equivalence of Contextuality and Wigner Negativity

A major breakthrough in quantum foundations occurred with the theoretical proof establishing a strict equivalence between Wigner function negativity and quantum contextuality 19262832.

In prior research mapping discrete systems, Howard, Wallman, Veitch, and Emerson proved that contextuality and Wigner negativity coincide perfectly for qudits possessing odd prime dimensions 2631. Recent works by Haferkamp, Bermejo-Vega, Booth, and others have successfully extended this equivalence into the infinite-dimensional continuous-variable regime 192932.

They demonstrated that within the standard models of continuous-variable quantum computing (Gaussian quantum optics), any state that admits a noncontextual hidden-variable model with respect to homodyne (quadrature) measurements necessarily possesses a completely non-negative Wigner function description 1932. Conversely, a non-negative Wigner function itself supplies such a noncontextual model, since it can be read as an ordinary probability distribution over phase space 1932.

This absolute equivalence unifies two major branches of quantum information theory. It confirms that Wigner negativity and Kochen-Specker contextuality are merely different mathematical representations of the exact same underlying physical resource 19262832. Consequently, detecting Wigner negativity in a laboratory optical field serves as a direct operational witness for continuous-variable contextuality 262932.

Contextuality as a Resource for Quantum Computation

The decades-long debate over whether contextuality is simply an interpretive philosophical puzzle or a quantifiable, exploitable physical resource has been definitively settled by advancements in quantum computing 122833. Quantum contextuality is now classified as a critical computational resource, often referred to as the "magic" that fuels quantum computational advantage 11831.

Magic State Distillation and the Gottesman-Knill Theorem

The necessity of contextuality in computation is derived from the constraints of the Gottesman-Knill theorem 34. The theorem proves that any quantum circuit constructed entirely from operations within the Clifford group (e.g., Pauli gates, Hadamard, CNOT, Phase gates) acting upon stabilizer states can be perfectly and efficiently simulated on a classical computer in polynomial time 3435. Therefore, a quantum processor relying solely on Clifford gates and stabilizer states offers zero computational speedup over a conventional classical machine 34.
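One way to see why Clifford circuits stay within the stabilizer formalism is that conjugation by a Clifford gate maps Pauli operators to Pauli operators, whereas the T gate does not. The sketch below illustrates only this property for single-qubit gates; it is not a stabilizer simulator, and the helper names are illustrative.

```python
import cmath
import math

X = [[0, 1], [1, 0]]
Y = [[0, -1j], [1j, 0]]
Z = [[1, 0], [0, -1]]
PAULIS = {"X": X, "Y": Y, "Z": Z}

s2 = 1 / math.sqrt(2)
H = [[s2, s2], [s2, -s2]]                       # Hadamard (Clifford)
S = [[1, 0], [0, 1j]]                           # phase gate (Clifford)
T = [[1, 0], [0, cmath.exp(1j * math.pi / 4)]]  # T gate (non-Clifford)

def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]

def dagger(a):
    """Conjugate transpose of a 2x2 matrix."""
    return [[a[j][i].conjugate() for j in range(2)] for i in range(2)]

def conjugate(u, m):
    """Return u m u^dagger."""
    return matmul(matmul(u, m), dagger(u))

def as_signed_pauli(m, tol=1e-9):
    """Return a label such as '+Z' if m is a signed Pauli matrix, else None."""
    for name, p in PAULIS.items():
        for sign, label in ((1, "+"), (-1, "-")):
            if all(abs(m[i][j] - sign * p[i][j]) < tol
                   for i in range(2) for j in range(2)):
                return label + name
    return None

print(as_signed_pauli(conjugate(H, X)))  # +Z
print(as_signed_pauli(conjugate(S, X)))  # +Y
print(as_signed_pauli(conjugate(T, X)))  # None: T X T^dagger = (X + Y)/sqrt(2)
```

Because Clifford gates only permute signed Pauli operators, a classical simulator can track a short list of such operators instead of the full state vector; the T gate breaks this bookkeeping.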

To unlock universal, fault-tolerant quantum computation and outpace classical algorithms, a quantum computer must consume non-Clifford resources 34. The dominant architectural approach for achieving this is "magic state distillation." In this paradigm, the system relies on easily correctable Clifford gates for the bulk of operations but injects highly specific, non-stabilizer quantum states - known as magic states - into the circuit when a non-Clifford operation (like a T-gate) is required 163134.

Rigorous mathematical proofs have established that for these magic states to provide any computational advantage, they must inherently exhibit quantum contextuality 18303134. The consumption of the magic state injects the contextual algebraic contradiction into the otherwise classical simulation, forcing the system out of the bounds of what a classical Turing machine can process efficiently 3134.

The "Necessary and Sufficient" Resource Debate

A prominent subject of scholarly debate between 2023 and 2024 has been whether contextuality serves as both a necessary and a sufficient condition for quantum advantage 283536.

There is a strong consensus that contextuality is universally necessary. In tasks ranging from quantum state discrimination and parity-oblivious multiplexing to broader communication complexity challenges, the presence of a contextual advantage is a strict requirement for outperforming classical baseline models 15272836. The Schmid-Selby-Pusey-Spekkens 2024 framework provides a structure theorem for generalized noncontextuality, reinforcing that any physical system whose statistics resist simplex-embeddable ontological models is utilizing contextuality as an epistemic resource 3337. Furthermore, contextuality has been detected beyond quantum hardware; the Contextuality-by-Default (CbD) framework recently revealed contextual signatures within the embedding vectors of Large Language Models (LLMs) like BERT, suggesting that contextual algebraic structures may offer advantages in classical natural language processing tasks 3336.

However, the question of whether contextuality is sufficient on its own to guarantee a quantum advantage is highly contested 2834. The "Qualia-from-Quantum-Magic Hypothesis" and related models argue that while the consumption of contextual magic states is required, it must occur above a certain threshold rate within specific structural algorithms 34. In practical applications, such as mapping Quadratic Unconstrained Binary Optimization (QUBO) problems or genome assembly tasks to quantum hardware, the theoretical advantage provided by contextuality is frequently erased by the severe quadratic overheads required to embed fully connected graphs onto sparsely connected, noisy physical qubits 35. Additionally, mathematical frameworks analyzing exclusive partial Boolean algebras have proven that while contextuality is universally necessary, it is not strictly sufficient for all forms of nonclassicality, indicating that achieving true fault-tolerant advantage relies on contextuality operating in tandem with pristine qubit coherence and large-scale entanglement 2834.

Recent Experimental Verifications

While theorems establish contextuality as a mathematical necessity, physically witnessing it in the laboratory has historically been difficult due to experimental noise and device imperfections. Validating the Kochen-Specker theorem requires explicitly closing a series of rigorous loopholes:

* The Detection Loophole: Occurs when low detector efficiency allows lost particles to skew the statistical sample, potentially hiding a classical explanation 916.
* The Individual-Existence Loophole: Assumes that the properties being measured genuinely exist at the level of individual trials, not just as ensemble averages 16.
* The Compatibility Loophole: Arises when experimental imperfections cause mutually commuting measurement apparatuses to inadvertently disturb one another, meaning the measurements were not truly context-independent 16.

Recent advancements in quantum hardware platforms have successfully closed these loopholes, transitioning contextuality from theoretical proofs to robust physical demonstrations 916.

Superconducting Qubit Architectures

Superconducting circuits have become a primary platform for probing contextuality due to their high tunability and deterministic readout capabilities. In a landmark experiment, researchers utilized a finely tuned superconducting qutrit to execute the Klyachko-Can-Binicioğlu-Shumovsky (KCBS) state-dependent contextuality test 16. By engineering high-fidelity, binary-outcome microwave readouts, the team decisively violated the noncontextuality inequality 16. Crucially, they achieved this while simultaneously closing the detection, individual-existence, and compatibility loopholes in a single-system configuration, completely bypassing the need for multipartite entanglement 16. This capability is essential for the future of quantum computing, as superconducting processors running surface-code architectures rely heavily on the fault-tolerant distillation of contextual magic states 1638.

Further research into superconducting devices has provided deep insights into how contextuality interacts with physical noise. A collaborative Australian-European team led by researchers at Macquarie University recently mapped the propagation of errors through superconducting quantum chips over time 39. Traditional theoretical models often assume that errors in quantum computers are Markovian (random and memoryless) 39. However, the experiment revealed that tiny glitches and errors are heavily time-linked; they linger, evolve, and correlate across different operational moments, effectively creating a persistent memory of the system's operational context 39. Addressing these context-dependent, time-correlated errors is a mandatory step toward achieving scalable, error-free quantum hardware 39. Support for these hardware initiatives is expanding rapidly, highlighted by the founding of Analog Quantum Circuits (AQC) at the University of Queensland, which aims to miniaturize critical microwave components necessary for scaling these highly contextual superconducting environments 40.

Integrated Photonic Implementations

Integrated quantum photonics (QPICs) represent another major frontier for contextuality experiments. QPICs allow vast, complex tabletop optical setups to be miniaturized and etched onto single silicon or silicon-nitride chips using CMOS-compatible fabrication techniques 415642. These chips utilize electrically driven thermo-optic heaters to manipulate photon phases with extreme precision, granting the system a level of phase stability and noise resistance that is nearly impossible to achieve with free-space optics 564258.

This inherent stability allowed researchers at the University of Science and Technology of China (USTC) to conquer a major hurdle in contextuality research: the vulnerability of high-dimensional systems 2759. Generally, as the dimensionality ($d$) of a quantum system increases, the state becomes exponentially more sensitive to noise, causing macroscopic contextuality signatures to rapidly decohere 27. The USTC team counteracted this by identifying a unique family of noncontextuality inequalities where the theoretical maximum quantum violation actually scales upward in direct proportion to the dimension of the system, a phenomenon referred to as contextuality "concentration" 27.

Leveraging an advanced, highly stable all-optical integrated setup, the team experimentally tested this framework utilizing a massive seven-dimensional photonic system 27. By executing precise sequences of ideal measurements combined with destructive measurement and repreparation protocols, the team recorded a staggering violation of the noncontextuality inequality by 68.7 standard deviations 27. This result serves as one of the most robust physical confirmations of the Kochen-Specker theorem to date. It proves conclusively that contextuality is a concentrated, macroscopic physical resource that scales with system dimensionality, providing a direct pathway to harnessing high-dimensional quantum states for fault-tolerant computational advantage 27.