Psychological factors in digital fraud susceptibility

Epidemiological Scale and Financial Impact

The digital fraud landscape has transitioned from isolated, rudimentary deception into a highly industrialized, global ecosystem. It operates fundamentally as an applied cognitive science, designed to systematically exploit human psychological vulnerabilities rather than purely technical infrastructural flaws 1. Recent data from international reporting bodies point to a recurring pattern in several jurisdictions: the aggregate volume of scam reports is stabilizing or declining marginally, while the financial extraction per victim is escalating sharply. Scammers are deploying highly targeted, contextually relevant psychological manipulation to maximize yield per interaction.

Global Incidence and Economic Cost

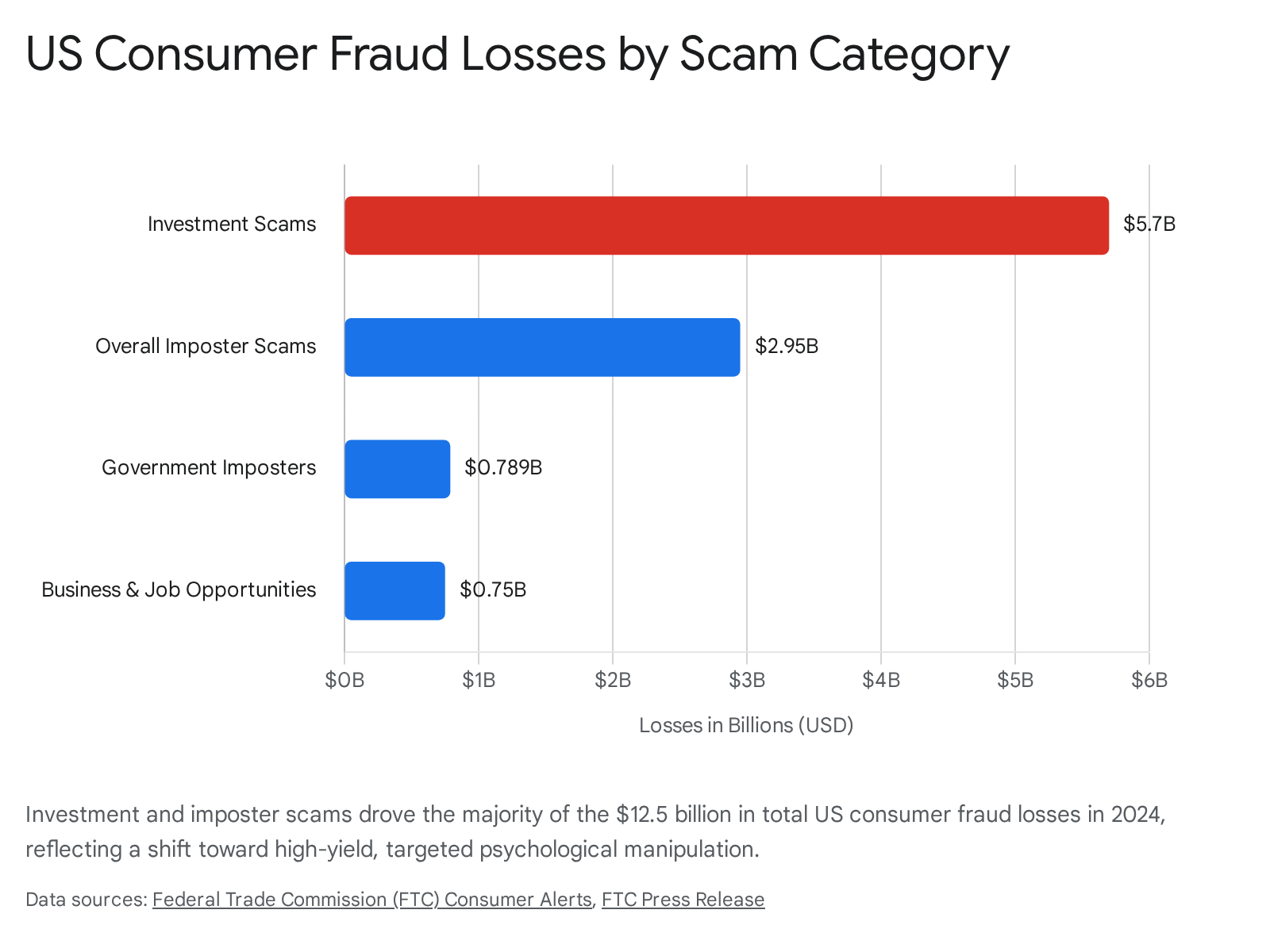

According to data released by the United States Federal Trade Commission (FTC), consumers reported losing $12.5 billion to fraud in 2024, a 25% increase over the previous year 12.

This surge in financial loss was not driven by an increase in total fraud reports, which remained relatively stable at approximately 2.6 million. Instead, the percentage of individuals who suffered monetary losses upon being targeted jumped significantly, from 27% in 2023 to 38% in 2024 2. Globally, the scale is similarly severe. The International Criminal Police Organization (INTERPOL) noted that scammers stole over $1 trillion from victims worldwide in 2023, facilitated largely by the global embrace of new technologies, artificial intelligence, and transnational crime syndicates 34.
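
The FTC figures quoted above can be cross-checked with simple arithmetic. The sketch below uses only the numbers cited in this section. The derived values are back-of-envelope estimates that assume the 38% loss rate applies to the full 2.6 million reports; they are not figures published by the FTC.

```python
# Back-of-envelope check of the FTC figures quoted in the text.
# Inputs are the cited numbers; outputs are derived estimates only.

LOSSES_2024_USD = 12.5e9   # reported losses in 2024
YOY_INCREASE = 0.25        # 25% increase over 2023
REPORTS_2024 = 2.6e6       # approximate number of fraud reports
LOSS_RATE_2023 = 0.27      # share of reports involving a monetary loss
LOSS_RATE_2024 = 0.38

# Implied 2023 losses: undo the 25% year-over-year increase.
implied_losses_2023 = LOSSES_2024_USD / (1 + YOY_INCREASE)

# Approximate number of 2024 reports that involved a loss (assumption: rate applies to all reports).
reports_with_loss_2024 = REPORTS_2024 * LOSS_RATE_2024

# Rough mean loss per loss-reporting report. This is a mean, so large cases pull it upward.
mean_loss_2024 = LOSSES_2024_USD / reports_with_loss_2024

# Relative change in the loss rate (27% to 38%).
loss_rate_change = (LOSS_RATE_2024 - LOSS_RATE_2023) / LOSS_RATE_2023

print(f"Implied 2023 losses: ${implied_losses_2023 / 1e9:.1f} billion")
print(f"Reports with a loss (2024): {reports_with_loss_2024:,.0f}")
print(f"Mean loss per loss-reporting report: ${mean_loss_2024:,.0f}")
print(f"Relative rise in loss rate: {loss_rate_change:.0%}")
```

Under these assumptions, the 11-point jump in the loss rate is a relative rise of roughly 41%, which is why total losses can climb while report volume stays flat.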

Regional reporting corroborates this trend of highly targeted, high-yield exploitation across various jurisdictions. In the United Kingdom, data from Action Fraud and the Office for National Statistics (ONS) put the total economic and social cost of fraud against individuals and businesses at £14.4 billion for the year ending March 2024 5. Fraud now represents approximately 40% of all reported crime in the UK, with the ONS estimating 4.2 million incidents of fraud for the year ending March 2025, a 31% increase from the previous year 76. In Australia, the National Anti-Scam Centre reported combined losses of A$2.03 billion across 494,732 reports in 2024 7. While this represented a 25.9% decrease in financial losses compared to 2023 - attributed to centralized disruption efforts - the absolute financial damage remains critically high 78.

Across all these jurisdictions, the transition from traditional contact methods to digital modalities (social media, messaging applications, and email) has created an environment in which deception scales at negligible marginal cost, shifting much of the burden of security onto the cognitive resilience of the individual.

| Jurisdiction | Reporting Period | Total Estimated/Reported Financial Loss | Incident Volume / Reports | Key Trends |
|---|---|---|---|---|
| United States (FTC) | 2024 | $12.5 Billion | 2.6 Million Reports | 25% increase in losses; victim loss rate increased from 27% to 38% 12. |
| United Kingdom (Home Office / ONS) | YE March 2024 / 2025 | £14.4 Billion (Total Economic/Social Cost) | 4.2 Million Estimated Incidents | Fraud accounts for 40% of all crime; 31% YoY increase in incidents 576. |
| Australia (NASC / Scamwatch) | 2024 | A$2.03 Billion (Combined Data) | 494,732 Reports | 25.9% decrease in losses; investment scams represent the largest share of losses 78. |
| Global (INTERPOL / GASA) | 2023 | > $1 Trillion | Not Aggregated | Rise in transnational "pig-butchering" and human trafficking for forced criminality in scam centers 34. |

Demographic Disparities in Victimization

Susceptibility to digital fraud is not uniformly distributed across the population, nor does it fit the stereotype that only the elderly fall victim to scams. Current data reveals a split between incidence rates and financial severity across age cohorts. Younger adults (ages 18-34) consistently report the highest frequency of fraud victimization, driven by their extensive digital footprints, active presence on social media, and comfort with online financial transactions 1119. Gen Z and Millennials are particularly vulnerable to employment fraud, tech support scams, and cryptocurrency investment schemes 11101115.

Conversely, while older adults (ages 65 and above) report fewer overall incidents, they suffer significantly higher median financial losses when victimized. In the United States, victims aged 65 and older experience the highest median loss, often exceeding $1,650 per scam 912. In Australia, individuals aged 65 and over reported the highest total losses of any age group, reaching A$99.6 million in 2024 8. This disparity is attributed to older populations having accumulated greater wealth and retirement savings, combined with potential vulnerabilities related to digital literacy, cognitive changes, or social isolation 131415.

Socioeconomic and gender variables further complicate the victimization profile. Women are significantly more likely to report an attempted scam and are victimized at higher rates than men, particularly in romance scams 121617. However, conditional on being victimized, men tend to lose more money, largely due to higher participation rates in high-risk investment and cryptocurrency fraud 1218. Furthermore, populations in areas with lower educational attainment or higher proportions of racial minorities face disproportionate targeting and victimization rates, indicating that systemic socioeconomic factors exacerbate psychological vulnerabilities 81219.

Cognitive Processing Architectures

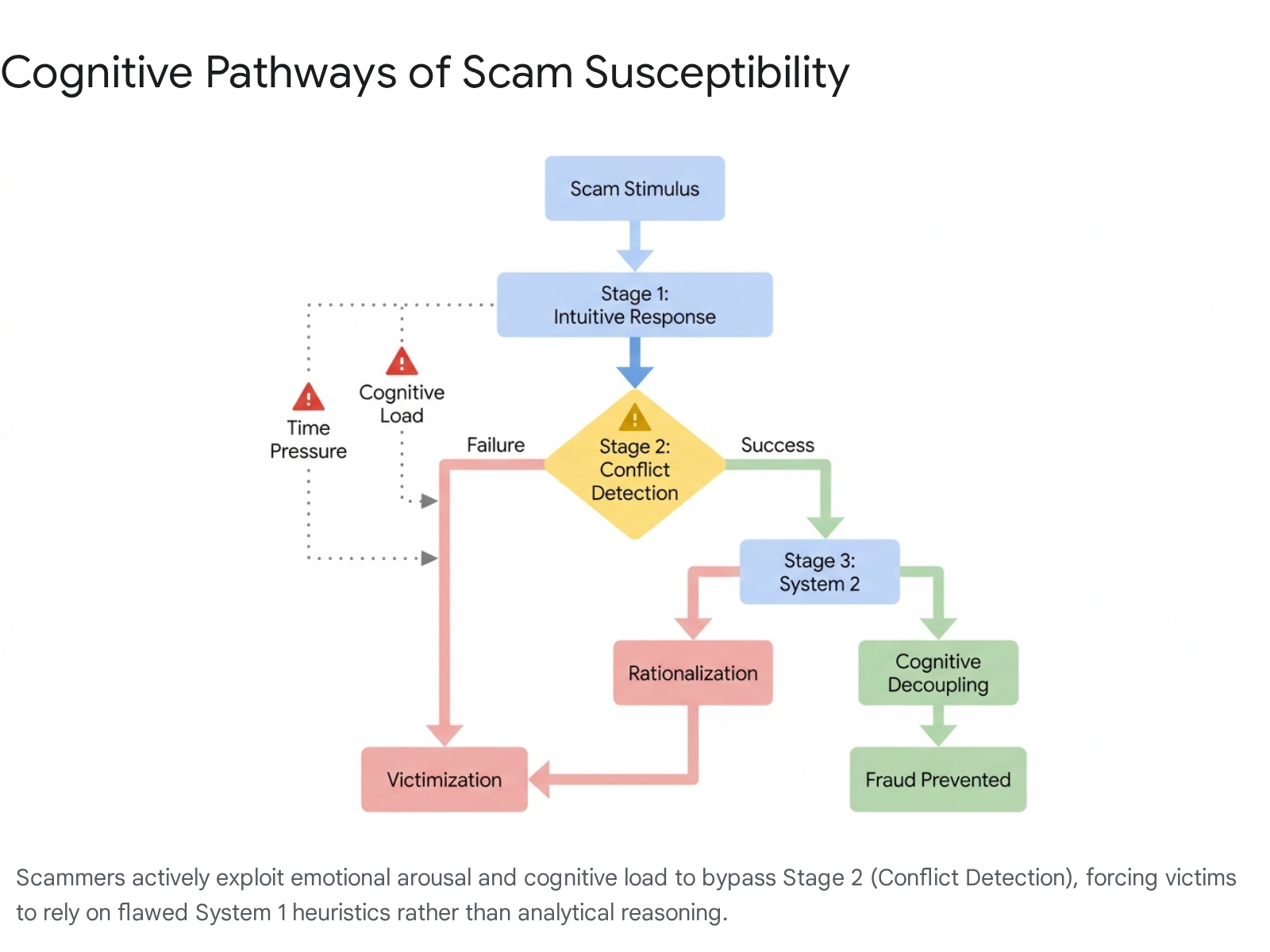

The efficacy of modern digital fraud relies heavily on exploiting fundamental architectures of human decision-making. At its root, successful fraud operates by subverting the victim's natural cognitive processing pathways, forcing reliance on instinct rather than critical analysis.

Dual-Process and Heuristic-Systematic Models

The foundational framework for understanding scam susceptibility is the Dual-Process Theory of cognition, which posits two distinct modes of human information processing 2021. System 1 (heuristic processing) is intuitive, automatic, fast, and emotionally driven, requiring minimal cognitive effort. System 2 (analytical processing) is deliberate, rational, sequential, and resource-intensive 20212223.

In the context of digital fraud, the primary objective of a scammer's design is to force the victim into a state of System 1 processing while suppressing the activation of System 2.

When users evaluate information heuristically, they rely on superficial cues - such as a familiar logo, an authoritative tone, or a sense of urgency - rather than critically assessing the fundamental veracity of the message 2223.

This dynamic is further elucidated by the Heuristic-Systematic Model (HSM) and the Elaboration Likelihood Model (ELM). Under the ELM, individuals process information through either a central route (careful consideration using prior experience) or a peripheral route (superficial evaluation of cues) 22. Scammers actively design communications to push victims toward the peripheral route. The HSM introduces the concept of an "adequacy threshold" - individuals seek a certain level of confidence in their evaluations. When heuristic processing fails to reach this threshold, the individual typically invokes systematic processing to gather more information 22. Fraudsters counter this by manipulating the perceived credibility of the source, artificially raising the victim's confidence to meet the adequacy threshold using only heuristic cues, thereby preventing the transition to systematic analysis.
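
The adequacy-threshold mechanism can be made concrete with a toy model. The sketch below is an illustration only: the additive way cues combine, the cue values, and the threshold of 0.7 are all assumptions chosen for the example, not parameters taken from the HSM literature.

```python
def processing_route(cue_confidences, adequacy_threshold):
    """Return the route a toy HSM-style evaluator would take.

    cue_confidences: confidence contributed by each heuristic cue (0..1 each).
    adequacy_threshold: confidence the person needs before they stop evaluating.
    """
    # Assumption: cues simply add up, capped at full confidence.
    heuristic_confidence = min(1.0, sum(cue_confidences))
    if heuristic_confidence >= adequacy_threshold:
        return "heuristic"   # threshold met, so no deeper scrutiny is triggered
    return "systematic"      # shortfall, so the person seeks more information


# Hypothetical cue values, chosen only for illustration.
plain_message = [0.2]              # familiar-looking sender name
spoofed_message = [0.2, 0.3, 0.3]  # plus a cloned logo and an authoritative tone

print(processing_route(plain_message, adequacy_threshold=0.7))    # systematic
print(processing_route(spoofed_message, adequacy_threshold=0.7))  # heuristic
```

In this toy model the fraudster never lowers the victim's threshold. They add cheap credibility cues until heuristic confidence alone clears it.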

The "Detecting Deception Model" outlines the standard sequence for identifying fraud: Activation (detecting anomalies), Hypothesis Generation (interpreting abnormal clues), Hypothesis Evaluation (comparing against criteria), and Global Assessment (evaluating all known clues) 22. Fraud succeeds when the Activation stage fails. If the initial anomaly is not detected due to sophisticated mimicking or cognitive distraction, the subsequent stages of systematic evaluation are never triggered.
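
The gating role of the Activation stage can be sketched as a short pipeline. This is a simplified walk-through of the four stages named above, not an implementation from the cited work: the red-flag dictionary, the function name, and the suspicion threshold are hypothetical.

```python
def detect_deception(noticed_cues, known_red_flags, suspicion_threshold=2):
    """Toy walk-through of the four stages of the Detecting Deception Model.

    noticed_cues: set of cues the reader actually noticed in the message.
    known_red_flags: dict mapping a red-flag cue to the hypothesis it suggests.
    """
    # Stage 1 - Activation: did the reader notice any anomaly at all?
    anomalies = [cue for cue in sorted(noticed_cues) if cue in known_red_flags]
    if not anomalies:
        # Later stages never run, which is the failure mode fraud depends on.
        return {"stage_reached": "activation", "verdict": "accepted unexamined"}

    # Stage 2 - Hypothesis generation: interpret each anomaly.
    hypotheses = [known_red_flags[cue] for cue in anomalies]

    # Stage 3 - Hypothesis evaluation: compare against a simple criterion.
    supported = len(hypotheses) >= suspicion_threshold

    # Stage 4 - Global assessment: combine everything into a verdict.
    verdict = "likely fraud" if supported else "needs verification"
    return {"stage_reached": "global assessment", "verdict": verdict}


# Hypothetical red flags, for illustration only.
RED_FLAGS = {
    "mismatched domain": "sender is impersonating a brand",
    "unexpected payment request": "message aims to extract money",
}

distracted = detect_deception({"familiar logo", "polite tone"}, RED_FLAGS)
attentive = detect_deception({"familiar logo", "mismatched domain",
                              "unexpected payment request"}, RED_FLAGS)
print(distracted["verdict"])
print(attentive["verdict"])
```

The distracted reader receives the same message but notices no anomaly, so evaluation stops at the first stage.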

Cognitive Load and Notification Fatigue

The modern digital environment inherently primes users for System 1 processing, acting as an accelerant for fraudulent manipulation. Human-computer interaction (HCI) research demonstrates that notification systems - characterized by red dots, alert badges, and constant urgency cues - create a sustained state of attention capture and "notification fatigue" 2425. This structural condition degrades selectivity and analytical capacity, conditioning users to react to digital prompts automatically rather than thoughtfully 24.

Working memory capacity and cognitive load significantly predict fraud susceptibility. Empirical studies utilizing simulated phishing tasks demonstrate that multitasking and high cognitive load markedly impair individuals' ability to detect digital threats 132627. When individuals split their focus between complex tasks - such as managing multiple browser tabs or navigating an influx of emails - their accuracy in detecting fraudulent cues drops sharply 27. Eye-tracking research further reveals that users performing under high cognitive load or time pressure spend significantly less time attending to highly diagnostic cues, such as the actual URL or domain name, and instead focus on irrelevant or easily spoofed features like the text body or login fields 26.
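
To show why the domain name is a more diagnostic cue than page content, the following minimal sketch checks whether a URL's host really belongs to an expected domain. The domain `example-bank.com` and the function name are hypothetical. The check is deliberately simple and does not consult a public-suffix list or handle internationalized look-alike domains.

```python
from urllib.parse import urlsplit


def host_matches(url, expected_domain):
    """Return True if the URL's host is expected_domain or one of its subdomains."""
    # hostname is None for malformed or scheme-less input; treat that as no match.
    host = (urlsplit(url).hostname or "").lower().rstrip(".")
    expected = expected_domain.lower()
    return host == expected or host.endswith("." + expected)


# The brand name appears in all three URLs, but only the first host belongs to it.
print(host_matches("https://login.example-bank.com/session", "example-bank.com"))              # True
print(host_matches("https://example-bank.com.secure-login.example/login", "example-bank.com"))  # False
print(host_matches("https://example-bank.com@evil.example/", "example-bank.com"))              # False
```

A hurried reader who sees only that the brand name is present somewhere in the address would accept all three.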

Scammers exploit this environmental vulnerability by designing attacks that mimic routine, high-frequency digital interactions - such as calendar alerts, package delivery tracking, or IT support notifications - capitalizing on the user's habitual, low-attention response patterns 152428. The user, experiencing cognitive busyness, defaults to the heuristic that familiar UI elements represent legitimate systems and never engages the analytical effort required to verify the source.

Psychological Techniques and Cognitive Biases

Within a state of high cognitive load or emotional arousal, victims fall back on inherent cognitive biases. The PsyScam benchmark research, which maps psychological techniques across thousands of real-world scam reports, provides a taxonomy of the specific behavioral levers scammers manipulate 29. These techniques are grounded in established sociological and psychological theories of influence and persuasion.

Taxonomy of Heuristic Exploitation

| Psychological Technique | Underlying Cognitive Bias / Theory | Mechanism in Fraudulent Application |
|---|---|---|
| Authority and Impersonation | Authority Bias 1929 | Exploits the human tendency to obey perceived figures of authority. Scammers impersonate police, bank managers, or CEOs, bypassing critical evaluation as the victim defers to the assumed expertise of the source 1929. |
| Urgency and Scarcity | Urgency Bias / Loss Aversion 222829 | Artificially limits time or resources (e.g., "account locked in 24 hours," countdown timers). This circumvents systematic processing, triggering visceral, instinctual reactions rather than logical analysis 22282930. |
| Phantom Riches | Prospect Theory 1829 | Uses visceral triggers of desire, such as guaranteed high-yield returns, to override rationality. Individuals overly optimistic about gains prioritize pursuing profits over assessing potential risks 2931. |
| Fear and Intimidation | Negativity Bias / Affect Heuristic 293032 | Manipulates decision-making through the fear of severe penalties, such as arrest or financial ruin. Fear-based messages reduce the amount of analytical attention an individual can deploy 12930. |
| Consistency and Sunk Cost | Sunk Cost Fallacy 2933 | Targets the tendency to behave consistently with past decisions. Scammers demand a small initial payment, then escalate requests; victims comply to "save" their initial investment of time or money 2933. |
| Social Proof | Herd Effect 2229 | Utilizes fake testimonials, manipulated review scores, and fabricated group chats to convince the victim that a consensus of peers has already validated the opportunity 2934. |
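
As a rough illustration of how the taxonomy above could be applied to message text, the sketch below tags a message with the techniques whose cue phrases it contains. The phrase lists, dictionary name, and function name are hypothetical. This is a naive keyword matcher and is not the annotation method used by the PsyScam benchmark.

```python
import re

# Hypothetical cue phrases per technique; a real system would need far richer signals.
TECHNIQUE_CUES = {
    "authority": [r"\bofficer\b", r"\bbank manager\b", r"\bceo\b"],
    "urgency_scarcity": [r"\bwithin 24 hours\b", r"\bimmediately\b", r"\blast chance\b"],
    "phantom_riches": [r"\bguaranteed returns?\b", r"\brisk[- ]free\b", r"\bdouble your\b"],
    "fear": [r"\barrest\b", r"\bsuspended\b", r"\blegal action\b"],
    "sunk_cost": [r"\bone more payment\b", r"\bto release your funds\b"],
    "social_proof": [r"\bother investors\b", r"\bthousands of members\b", r"\btestimonials?\b"],
}


def tag_techniques(message):
    """Return the sorted list of techniques whose cue phrases appear in the message."""
    text = message.lower()
    return sorted(
        technique
        for technique, patterns in TECHNIQUE_CUES.items()
        if any(re.search(pattern, text) for pattern in patterns)
    )


print(tag_techniques("This is Officer Grant. Pay within 24 hours or face arrest."))
# ['authority', 'fear', 'urgency_scarcity']
```

Real scam messages often stack several techniques at once, as in this example, which is one reason single-cue warnings tend to miss them.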

Truth Bias and Optimism Bias

Two pervasive psychological barriers severely hinder fraud prevention efforts: truth bias and optimism bias. Truth bias is the natural human inclination to trust others and assume communications are genuine unless given a glaring reason to believe otherwise 35. Preying on this, scammers operate on the presumption of trust, embedding themselves in environments where truth bias is high, such as dating applications or professional networking sites 1035.

Optimism bias operates as a secondary, equally dangerous vulnerability. It is the cognitive distortion wherein individuals believe they are statistically less likely than their peers to experience a negative event 193335. This illusion of invulnerability creates a false sense of security, blinding individuals to potential risks. Optimism bias is particularly dangerous among highly educated and tech-savvy populations 1934. A senior professional may immediately dismiss poorly worded mass-phishing emails but fall victim to a highly sophisticated, multi-stage cryptocurrency scam because they believe their advanced digital literacy makes them immune to manipulation. Scammers directly exploit this overconfidence by using complex industry jargon, fabricating intricate "VIP" investment dashboards, and framing the scam as an exclusive opportunity that only an intelligent investor would comprehend 3334.

Personality Dispositions and Vulnerability

While situational stressors and interface design manipulate state-based cognition, inherent psychological dispositions heavily influence an individual's baseline vulnerability to fraud. Academic research frequently utilizes established psychometric frameworks to understand the trait-level predictors of victimization.

The Five-Factor Model Application

The Five-Factor Model (the "Big Five") provides a robust lens for evaluating scam susceptibility. Research indicates that individual traits significantly alter interactions with deceptive stimuli before, during, and after a scam 233637:

- Extraversion: Individuals scoring high in extraversion thrive on social stimulation and connection. This trait can increase vulnerability to social engineering, as extraverts are more likely to respond to unsolicited communications and may actively seek the social energy promised by romance or recruitment scams 233637.

- Agreeableness: Characterized by a high degree of compassion, a desire for harmony, and an inclination to trust others. Highly agreeable individuals exhibit a pronounced truth bias, defaulting to the assumption that others' intentions are genuine. They tend to avoid conflict and show concern for others, making them particularly susceptible to emotional appeals, charity scams, and romance fraud 353637.

- Openness to Experience: Individuals high in openness are curious, imaginative, and receptive to novel ideas. While beneficial in many contexts, this trait makes individuals more willing to entertain abstract, unconventional, or "too-good-to-be-true" narratives, significantly increasing exposure to fraudulent investment schemes and cyber-enabled crimes 23363738.

- Conscientiousness: Generally operating as a protective factor, conscientiousness is associated with organization, impulse control, and reliability. High conscientiousness facilitates systematic (System 2) processing, allowing individuals to scrutinize details, assess risk accurately, and identify deceptive cues 23363843. However, in specific contexts - such as highly sophisticated impersonations of tax authorities or law enforcement - an over-reliance on conscientiousness can backfire, as the victim may follow fraudulent "compliance" instructions out of a deep-seated desire to be responsible and rule-abiding 36.

- Neuroticism (Emotional Stability): The data on neuroticism remains complex and somewhat contested in the literature. While emotional stability broadly protects against cybercrime victimization 38, high neuroticism (characterized by anxiety, stress, and mood volatility) can make individuals hyper-reactive to the visceral, fear-based cues used by scammers, severely diminishing their capacity to think critically under pressure 22363744.

The Dark Tetrad and Fraud Perpetration

To fully understand the psychology of fraud, it is necessary to contrast the victim profile with the perpetrator profile. Adjacent behavioral science research into workplace deviance, academic fraud, and insider threats demonstrates that traits from the "Dark Tetrad" - psychopathy, Machiavellianism, narcissism, and sadism - strongly correlate with a willingness to instigate fraud 3940.

Perpetrators exhibit tendencies toward personal gain, exploitation, and diminished social responsibility. Machiavellianism, in particular, predicts the ability to rationalize unethical behavior and exploit situational opportunities 3940. Narcissism correlates with a sense of entitlement and a desire for academic or financial rewards regardless of the ethical cost 3941. Crucially, these Dark Tetrad traits rarely define the victim profile. Victims are not targeted because they are inherently greedy or malicious; rather, scammers map their manipulative tactics against normal, prosocial traits (agreeableness, openness, extraversion), turning human virtues into attack vectors 3640.

Modality-Specific Psychological Exploitation

Scammers meticulously adapt their techniques to exploit the distinct psychological needs and demographic vulnerabilities of specific target groups. The assumption that a single, unified profile exists for all fraud victims is empirically false; different modalities require entirely different psychological levers.

Vulnerability Mapping by Scam Modality

| Scam Modality | Primary Target Demographic | Core Psychological Levers | Mechanisms of Manipulation |
|---|---|---|---|
| Romance / "Pig-Butchering" | Middle-aged and older adults (women are often targeted, though men incur higher median financial losses) 121642 | Emotional isolation, desire for connection, idealization of romance, loneliness 4350. | Exploits truth bias through prolonged "grooming." Rapidly shifts communication off-platform. Often transitions into cryptocurrency investment fraud 424344. |
| Investment & Cryptocurrency | Younger adults (Ages 18-44), predominantly male, high-income earners 11183145 | Overconfidence, high risk tolerance, "Fear of Missing Out" (FOMO), financial dissatisfaction 183146. | Utilizes social proof via fake testimonials and complex "tech" jargon to bypass scrutiny. Exploits optimism bias and the loss aversion described by prospect theory 1846. |
| Employment / Job Scams | Young adults (Gen Z, Ages 18-24), students, remote job seekers 19104748 | Financial desperation, tight labor markets, desire for remote flexibility, trust in corporate brands 91049. | Leverages the authority of recognizable corporate brands. Exploits the normalization of digital onboarding to extract upfront fees or harvest sensitive PII 104849. |
| Tech Support / Imposter | Cross-demographic (Significant spikes in older adults for loss volume, but Millennials/Gen Z see highest incidence) 111550 | Fear, panic, authority bias, obedience to instructions, technological insecurity 112950. | Triggers acute System 1 response via sudden pop-ups, auditory warnings, and frozen screens. Impersonates major brands to demand immediate remote access 1150. |

Romance and Pig-Butchering Operations

Romance scams are unique in their prolonged duration and devastating psychological aftermath. Scammers engage in extensive "grooming," creating hyper-personal relationships that exploit the victim's emotional isolation, vulnerability, and need for intimacy 161743. Research into catfishing and romance fraud reveals deliberate demographic targeting; scammers frequently adopt fictitious personas such as military personnel, wealthy businessmen, or overseas contractors, tailoring these identities to resonate with female victims 16.

These scams frequently evolve into "pig-butchering" operations - a hybrid methodology where the established romantic trust is weaponized to persuade the victim to invest heavily in fraudulent cryptocurrency platforms 3442. The psychological impact of these scams constitutes a severe "double hit": catastrophic financial ruin compounded by the traumatic loss of a perceived romantic partner 4350. Victims experience profound emotional distress, including feelings of betrayal, shame, social isolation, fear of crime, and in extreme cases, suicidal ideation 145051. The lingering shame significantly suppresses reporting rates, with estimates suggesting only a small fraction of actual romance scam cases are officially logged with authorities 4244.

Investment and Cryptocurrency Fraud

Investment fraud currently accounts for the highest total financial losses of any scam category globally, fueled by the complex, loosely regulated nature of digital assets and decentralized finance 137. Scammers heavily target tech-savvy individuals, particularly younger males, who exhibit high risk tolerance and high openness to experience 111831.

Operating on the principles of prospect theory, scammers exploit individuals who are overly optimistic about potential gains, prompting them to make irrational decisions prioritizing profit over risk assessment 1831. By promoting fake Initial Coin Offerings (ICOs), executing wallet impersonation scams, or fabricating exclusive algorithmic trading groups on encrypted messaging platforms, fraudsters bypass analytical processing 1134. The architecture of blockchain interactions - approving tokens, connecting wallets, signing smart contracts - has become routine for these users. Scammers weaponize this familiarity, relying on the user's pattern recognition to disguise malicious transactions as standard digital operations, creating a seamless pipeline from trust to financial extraction 28.

Employment and Recruitment Deception

Job scams have emerged as one of the fastest-growing fraud typologies, with consumer losses nearly tripling between 2020 and 2024 to reach over $501 million in the United States alone 19. They overwhelmingly target younger adults (ages 18-24), recent graduates, and remote job seekers facing highly competitive labor markets 94748.

Fraudsters post highly realistic opportunities on legitimate platforms like LinkedIn and Indeed, leveraging corporate logos, professional job descriptions, and the identities of actual corporate executives or academic professors 104849. The psychological lever relies on the victim's ambition and financial necessity, creating a willingness to bypass normal critical evaluations. The primary goals are twofold: advance-fee extraction (demanding payment for "home office equipment" or "training" via fraudulent checks) and identity theft via the harvesting of Social Security numbers, banking details, and passports under the guise of standard corporate digital onboarding 94849.

Technical Support Impersonation

Contrary to the persistent stereotype that only the elderly fall for tech support scams, recent data indicates that Millennials and Gen Z suffer the highest incidence rates, although older demographics continue to incur the highest median financial losses 1115. This scam works by inducing acute fear.

Victims are presented with unavoidable pop-ups, loud auditory alarms, and frozen screens claiming their system is critically compromised by hackers or malware 1150. This sensory overload immediately hijacks attention and creates an intense cognitive load, forcing the user into a panic state that demands an immediate solution. The scam provides that solution by offering a phone number for "technical support." Once on the phone, the scammer utilizes authority bias - often claiming to represent Microsoft, Apple, or a major cybersecurity firm - to gain remote access to the machine. Attackers frequently deploy remote access trojans (RATs), such as NetSupport, to establish persistent control, allowing them to plunder bank accounts while the victim believes their system is being repaired 5052.

The Impact of Generative Artificial Intelligence

The integration of Generative Artificial Intelligence (GenAI) into the cybercriminal ecosystem has fundamentally altered the psychology of fraud susceptibility. Previously, users were educated to rely on distinct heuristic cues to identify fraud: poor grammar, spelling errors, mismatched corporate branding, or robotic cadences. GenAI has effectively neutralized these traditional, low-level defense mechanisms 5354.

Hyper-Personalization and Phishing-as-a-Service

Large Language Models (LLMs) enable the mass production of hyper-personalized spear-phishing content. By rapidly scraping publicly available data from social media, professional networking sites, and data breaches, AI algorithms can instantly analyze a target's communication style, professional network, and personal interests 5362.

Scammers use this data to synthesize messages that are contextually flawless. Consequently, the cognitive effort required by the victim to verify the communication rises sharply, as the message aligns closely with their expectations and existing mental models 5355. Furthermore, the rise of Phishing-as-a-Service (PhaaS) platforms has democratized these advanced capabilities. Criminals no longer require technical expertise; they can purchase subscription-based AI tools to deploy highly sophisticated psychological manipulations at an unprecedented scale, driving the explosive growth of synthetic identity fraud and automated social engineering 95362.

Synthetic Media and Voice Cloning

Perhaps the most psychologically devastating application of GenAI is deepfake audio, commonly known as voice cloning. Using only brief snippets of audio harvested from public profiles, podcasts, or voicemails, scammers can flawlessly synthesize the voice of a CEO, a bank manager, or a panicked family member 565766.

Voice cloning attacks directly assault the most fundamental layers of human trust and biological response. As cybersecurity researchers note, the emotional realism of a cloned voice completely removes the mental barrier to skepticism 58. When a victim hears the precise timbre, cadence, and distress of a loved one claiming to be in a life-threatening emergency or a kidnapping scenario, the brain's analytical processing (System 2) is instantaneously overridden by acute emotional terror and the biological imperative to act 15758.

This technology has led to massive financial losses in both the consumer and enterprise sectors, including high-profile incidents where financial professionals transferred tens of millions of dollars after receiving direct instructions from synthesized likenesses of purported executives on a video call 5658. The psychological fallout of these attacks extends beyond the immediate victim, contributing to a broader societal phenomenon termed "doppelgänger-phobia" - a pervasive anxiety regarding the inability to trust digital realities and the profound fear of one's own identity being weaponized without consent 59.

Systemic Countermeasures and Mitigation

As the tactics of digital deception evolve to closely mimic legitimate human interaction, reliance on individual vigilance as the primary defense mechanism is increasingly untenable. Human detection rates for high-quality synthetic media remain perilously low (estimated at roughly 24.5% for high-quality video), and sustained cognitive load makes it likely that individuals will default to vulnerable heuristic processing 275366. A paradigm shift is required, moving away from simple awareness campaigns toward systemic, HCI-driven interventions and structural friction.

HCI-Driven Interventions and Friction

To combat alert fatigue and the habitual dismissal of security warnings, digital platforms must employ polymorphic fraud warnings. Polymorphic alerts are dynamic notifications that continuously alter their visual presentation, wording, and tone to prevent neurological habituation 25. By ensuring that every alert is distinct and attention-grabbing, systems can disrupt automatic processing and force cognitive engagement.
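
A minimal sketch of a polymorphic warning generator follows. The wording, layout, and tone variants, as well as the class and method names, are hypothetical. The only property the sketch enforces is that two consecutive warnings never share the same presentation; whether that is sufficient to prevent habituation is an empirical question for the cited research.

```python
import random

# Hypothetical presentation variants for one underlying warning.
TITLES = ["Check this payment", "Pause before you pay", "Is this request genuine?"]
LAYOUTS = ["banner", "modal", "inline-card"]
TONES = ["neutral", "firm", "questioning"]


class PolymorphicWarning:
    """Produces a warning whose presentation always differs from the previous one."""

    def __init__(self, seed=None):
        self._rng = random.Random(seed)
        self._last = None

    def next_variant(self, payee):
        # Redraw until the (title, layout, tone) combination differs from the last one shown.
        while True:
            variant = (
                self._rng.choice(TITLES),
                self._rng.choice(LAYOUTS),
                self._rng.choice(TONES),
            )
            if variant != self._last:
                break
        self._last = variant
        title, layout, tone = variant
        return {
            "title": title,
            "layout": layout,
            "tone": tone,
            "body": f"You are about to send money to {payee}.",
        }


warnings = PolymorphicWarning(seed=7)
for _ in range(3):
    print(warnings.next_variant("a new payee"))
```

The message content stays constant while its surface form changes, so the user cannot dismiss it on visual recognition alone.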

Furthermore, mitigating the efficacy of System 1 manipulation requires the deliberate introduction of structural friction into digital environments. Interventions such as mandatory cooling-off periods for large, unusual, or first-time financial transfers disrupt the artificial urgency manufactured by scammers 32. Financial institutions are increasingly deploying AI-driven behavioral biometric layers that analyze user interactions, detecting the hesitant keystrokes, unusual mouse movements, or erratic navigation patterns typical of a user operating under extreme coercion or following a scammer's instructions 725.
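
The two friction mechanisms described above can be combined in a simple rule. The sketch below is illustrative only: the risk points, the z-score cutoff of 2.0, and the hold durations are assumptions, and a production system would rely on far richer behavioral signals than mean inter-key timing.

```python
from dataclasses import dataclass
from datetime import timedelta
from statistics import mean, stdev


@dataclass
class Transfer:
    amount: float
    payee_id: str


def hesitation_z_score(baseline_intervals_ms, session_intervals_ms):
    """Standard deviations by which the session's mean inter-key interval exceeds the baseline.

    The baseline needs at least two samples for stdev() to be defined.
    """
    spread = stdev(baseline_intervals_ms)
    if spread == 0:
        return 0.0
    return (mean(session_intervals_ms) - mean(baseline_intervals_ms)) / spread


def cooling_off_period(transfer, known_payees, typical_max_amount, hesitation_z=0.0):
    """Return how long to hold the transfer before it can be confirmed."""
    risk_points = 0
    if transfer.payee_id not in known_payees:
        risk_points += 1  # first-time payee
    if transfer.amount > typical_max_amount:
        risk_points += 1  # larger than this user's usual transfers
    if hesitation_z > 2.0:
        risk_points += 1  # typing far slower than usual (assumed cutoff)

    # Hypothetical hold schedule indexed by 0, 1, 2, or 3 risk points.
    holds = [timedelta(0), timedelta(hours=4), timedelta(hours=24), timedelta(hours=48)]
    return holds[risk_points]


z = hesitation_z_score([180, 200, 190, 210, 220], [320, 350, 300])
risky = cooling_off_period(Transfer(5000.0, "new-payee"), {"landlord"}, 1200.0, z)
routine = cooling_off_period(Transfer(900.0, "landlord"), {"landlord"}, 1200.0)
print(risky, routine)
```

The delay does not block the payment. It removes the time pressure the scammer depends on and gives the user a window in which analytical processing can resume.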

The CATCH Framework and Psychological Inoculation

In research and academic environments facing high volumes of fraudulent digital participation, structured methodologies like the CATCH framework (Configure, Assess, Triage, Corroborate, and Hone) are being implemented to systematically identify and mitigate automated fraud without excluding legitimate users 60.

For retail consumers and investors, psychological inoculation strategies have proven highly effective. Academic experiments demonstrate that exposing individuals to simulated scams or high-level guidance on scam awareness prior to their exposure to actual fraudulent opportunities significantly reduces their susceptibility 46. When combined with simulated web-browser plug-ins that flag potentially high-risk opportunities, these strategies drastically reduce the volume of capital lost to AI-enabled scams 46.

Ultimately, understanding the psychology of fraud susceptibility reveals that falling for a scam is not an inherent failure of intelligence, but a predictable consequence of human cognitive architecture operating under deliberately engineered pressure. Systemic defense requires protecting the user from manipulation at the infrastructural level, rather than exclusively demanding flawless analytical reasoning from the individual in an increasingly deceptive digital landscape.