Progress on prime gaps and the twin prime conjecture

Introduction to Prime Spacing

The study of prime numbers - the indivisible atomic elements of the integers - is central to analytic number theory. While the fundamental theorem of arithmetic dictates that every integer greater than one can be uniquely factored into primes, the distribution of primes along the number line exhibits a chaotic, pseudo-random behavior characterized by a steady but irregular thinning. The distance between two consecutive prime numbers $p_n$ and $p_{n+1}$, denoted as the $n$-th prime gap $g_n = p_{n+1} - p_n$, is known to grow increasingly large on average 11.

The Prime Number Theorem, proven independently in 1896 by Jacques Hadamard and Charles Jean de la Vallée Poussin, established that the prime-counting function $\pi(x)$ is asymptotically equivalent to $x / \ln(x)$ 123. Consequently, the average gap between a prime $p$ and the next prime asymptotically approaches the natural logarithm of the prime, $\ln(p)$ 13. As numbers grow larger, the primes become sparser, and the average prime gap trends toward infinity.

Despite this average thinning, mathematicians have long observed that prime numbers frequently clump together in narrow intervals. The most famous manifestation of this phenomenon is the Twin Prime Conjecture. First formalized as a subset of Alphonse de Polignac's 1849 conjecture - which posits that for every even integer $2k$ there exist infinitely many pairs of primes separated by exactly $2k$ - the Twin Prime Conjecture states that there are infinitely many pairs of primes that differ by exactly 2 (e.g., 3 and 5, 11 and 13, or 2996863034895 \times 21290000 \pm 1) 4567.

For over a century, mathematicians were unable to prove the existence of any finite bound $H$ such that $\liminf_{n \to \infty} (p_{n+1} - p_n) \le H$ 89. The quest to establish a finite bound initiated a modern renaissance in analytic number theory. This effort culminated in a historic 2013 breakthrough by Yitang Zhang, followed rapidly by the independent innovations of James Maynard and Terence Tao, and the unprecedented massively collaborative efforts of the Polymath8 projects. Together, these developments compressed the proven bound on prime gaps from an initial 70,000,000 down to an unconditional 246 41110.

Large Gaps Versus Bounded Gaps

To understand the significance of bounded prime gaps, they must be situated within the broader context of prime distribution, which includes the study of unusually large gaps. Just as prime gaps can be unusually small, they can also be arbitrarily large. The factorial construction - where the sequence $(n!+2, n!+3, \dots, n!+n)$ produces $n-1$ consecutive composite numbers - provides a rudimentary proof that gaps of any desired length exist 111.

However, quantifying the exact asymptotic size of these maximum gaps remains an open problem. In 1936, the Swedish mathematician Harald Cramér proposed a probabilistic model suggesting that the primes behave like a collection of random variables, predicting that the maximum gap below a large number $X$ should be proportional to $(\ln X)^2$ 112. Conversely, the Scottish mathematician Robert Rankin established a rigorous lower bound for large gaps using smooth numbers, leading to a long-standing conjecture by Paul Erdős that Rankin's formula could be multiplied by an arbitrarily large constant 12.

In 2014, concurrent with the breakthroughs in small gaps, a coalition of mathematicians including Kevin Ford, Ben Green, Sergei Konyagin, James Maynard, and Terence Tao successfully resolved the Erdős conjecture on large prime gaps, proving that consecutive primes can be significantly further apart than Rankin's original bounding formula dictated 612. While large gap research analyzes the absolute sparsity of primes, bounded gap research analyzes their absolute density. Both fields rely on a unified mathematical infrastructure: sieve theory and the distribution of primes in arithmetic progressions.

Sieve Theory and the Parity Obstruction

The theoretical machinery utilized to isolate bounded gaps between primes is derived from sieve theory. Originating from the ancient Sieve of Eratosthenes, combinatorial sieves were mathematically formalized in the 20th century by Viggo Brun and Atle Selberg to estimate the frequency of prime numbers within specific intervals or sequences 2615.

The Selberg Sieve

The core objective of a sieve is to estimate the size of a sifted set, specifically counting the number of integers within an interval $X$ that are not divisible by any prime $p$ less than a threshold $z$. The Selberg sieve introduced a system of non-negative arithmetic weights, $\lambda_d$, constructed to over-count integers with fewer prime factors while minimizing the overall error term 21516.

By squaring the arithmetic weights to guarantee non-negativity - evaluating sums of the form $(\sum_{d|n} \lambda_d)^2$ - the Selberg sieve produces highly accurate upper bounds for the distribution of "almost primes" 216. An almost prime, denoted $P_r$, is an integer with at most $r$ prime factors. The sieve method is exceptionally effective at isolating almost primes. For example, Jingrun Chen applied sieve theory in 1973 to prove Chen's Theorem, establishing that there are infinitely many primes $p$ such that $p+2$ is a $P_2$ almost prime (either a prime or a semiprime product of two primes) 131819. However, attempting to reduce $P_2$ to $P_1$ (a pure twin prime) causes traditional combinatorial sieves to fail completely due to a fundamental structural limitation.

The Parity Problem

Identified by Atle Selberg in 1949, the parity problem dictates that any purely combinatorial sieve cannot differentiate between integers possessing an odd number of prime factors and those possessing an even number of prime factors 11131814. Because prime numbers inherently possess an odd number of prime factors (exactly one), while semiprimes possess an even number (two), a sieve operating beyond a specific depth becomes conceptually blind to the difference 2111613.

The underlying mechanism of the parity problem relates to the Möbius function, $\mu(n)$, which evaluates to $(-1)^k$ if $n$ is a square-free integer with $k$ prime factors, and 0 otherwise. Classical sieves rely heavily on sums of the Möbius function to generate inclusion-exclusion bounds (Type I estimates) 1415. As explained by Terence Tao, if a target set consists entirely of products of two primes, the true number of prime numbers in that set is exactly zero; yet, a combinatorial sieve will return an asymptotic upper bound for primes that is identical to the bound it returns for a set actually containing primes 161318.

Because of the parity barrier, any upper bound on the size of a set of primes obtained purely via sieve theory will inevitably be off from the true count by a factor of 2 or more 1613. When targeting a prime gap of 2, the sieve is asked to find an integer $p$ where $p$ is prime (odd parity) and $p+2$ is also prime (odd parity). The parity barrier means the sieve will indiscriminately trap pairs where $p$ is prime and $p+2$ is a semiprime. Breaking the parity barrier requires injecting Type II estimates (bilinear forms) into the sieve, a feat achieved by John Friedlander and Henryk Iwaniec in 1996 to prove the existence of primes of the form $a^2 + b^4$, but establishing such Type II estimates for affine-linear shifts like $p+2$ remains entirely out of reach for modern mathematics 6131415. Consequently, bounded gap research focuses on leveraging existing sieves across larger arrays of numbers rather than confronting the twin prime parity barrier directly.

Distribution in Arithmetic Progressions

To transition from sieving almost primes to establishing bounds on true primes, researchers must integrate external theorems regarding the distribution of primes in arithmetic progressions. Dirichlet's theorem on arithmetic progressions states that primes are evenly distributed among all coprime residue classes modulo $q$. The error term in this distribution - the difference between the actual count of primes in a progression, $\pi(x; q, a)$, and the expected count, $\pi(x)/\phi(q)$ - is encapsulated by a metric known as the "level of distribution," denoted as $\theta$ 52216.

The level of distribution $\theta$ determines how large the moduli $q$ can be relative to $x$ (specifically $q < x^\theta$) while keeping the average error term asymptotically negligible. The Bombieri-Vinogradov theorem, established in 1965, proves that the primes behave as though the Generalized Riemann Hypothesis is true on average, validating a level of distribution up to $\theta = 1/2 - \epsilon$ 14222417. This defines the "square root barrier," meaning the error terms remain manageable when the moduli $q$ are as large as $x^{1/2}$.

Mathematicians hypothesize that the level of distribution extends much further. The Elliott-Halberstam (EH) conjecture, formulated in 1968, postulates that the level of distribution for primes can be pushed to $\theta = 1 - \epsilon$, representing the absolute theoretical limit 162417. For decades, bounded prime gap research was paralyzed because existing sieve methods required a level of distribution strictly greater than $1/2$ to succeed.

The Goldston-Pintz-Yıldırım (GPY) Framework

The modern era of bounded prime gaps began in 2005 with the work of Daniel Goldston, János Pintz, and Cem Yalçın Yıldırım, collectively known as the GPY method 82224. GPY utilized a sophisticated modification of the Selberg sieve to analyze the clustering of primes within structures known as admissible tuples.

Admissible k-Tuples

An admissible $k$-tuple is a set of $k$ distinct non-negative integers $\mathcal{H} = {h_1, h_2, \dots, h_k}$ that does not cover all residue classes modulo any prime $p$ 32618. If a tuple covers all residue classes for a prime $p$, then for any integer $n$, at least one element in the shifted sequence ${n+h_1, \dots, n+h_k}$ must be divisible by $p$, preventing the tuple from generating pure primes indefinitely 315.

For example, the 3-tuple ${0, 2, 4}$ is not admissible. When evaluating modulo 3, the shifts are $0, 2,$ and $1$. Because all three residue classes are covered, any integer $n$ added to ${0, 2, 4}$ will guarantee that one of the resulting numbers is a multiple of 3. Conversely, the 5-tuple ${0, 2, 6, 8, 12}$ is admissible because it avoids covering all residue classes 318. The Hardy-Littlewood prime $k$-tuples conjecture predicts that any admissible tuple will simultaneously yield prime numbers infinitely often as $n$ varies 1819.

The Ratio of Sums

The GPY approach involves evaluating two massive weighted sums over integers $N < n \le 2N$: 1. $S_1$: A sum of the sieve weights over the sample space. 2. $S_2$: A sum of the sieve weights restricted to integers where $n+h_i$ is a prime.

By defining weights $\lambda_d = \mu(d)f(d)$ for a smooth function $f$, the GPY method evaluates these sums asymptotically. If the ratio $S_2 / S_1$ can be proven to be strictly greater than 1, it mathematically guarantees that for at least one integer $n$, the tuple contains two primes, yielding a bounded gap 517.

Goldston, Pintz, and Yıldırım proved unconditionally that $\liminf_{n \to \infty} (p_{n+1} - p_n) / \ln p_n = 0$, meaning that gaps between primes get arbitrarily smaller than the average gap 816. Furthermore, they demonstrated that if the level of distribution $\theta$ could be pushed strictly greater than $1/2$, bounded prime gaps must exist unconditionally 316. Because the Bombieri-Vinogradov theorem only provided $\theta = 1/2$, the GPY method fell just short of proving bounded gaps, hitting an intractable analytical wall.

Yitang Zhang's Unconditional Breakthrough

In May 2013, the landscape of additive number theory was irrevocably altered when Yitang Zhang, a relatively obscure lecturer at the University of New Hampshire, published a paper in the Annals of Mathematics proving that there are infinitely many pairs of primes that differ by no more than 70,000,000 41112. Zhang's approach successfully bridged the micro-gap that the GPY method could not close, delivering the first finite, unconditional bound for prime gaps in mathematical history.

Zhang's pivotal innovation was bypassing the rigid requirement of a universal level of distribution $\theta > 1/2$. He observed that the GPY sieve could function effectively even if the distribution estimates were weaker, provided the error terms could be controlled specifically for moduli lacking large prime factors (referred to as "smooth" or "friable" numbers) 3516.

By applying profound tools from algebraic geometry - specifically Pierre Deligne's proof of the Weil conjectures and deep bounds on Kloosterman sums - Zhang proved a weakened variant of the Bombieri-Vinogradov theorem 2629. He demonstrated an effective level of distribution $\theta = 1/2 + 1/1168$ strictly for smooth moduli 522. By injecting this refined distribution level into the GPY framework and expanding the size of the admissible $k$-tuple to a massive $k = 3,500,000$, Zhang generated a sufficiently large $S_2 / S_1$ ratio to prove that prime gaps of at most $70,000,000$ occur infinitely often 111620.

The Polymath8a Project and Massive Collaboration

Zhang's 55-page paper explicitly noted that his bound of 70,000,000 was a proof of concept and was not mathematically optimal; it could be lowered by refining the underlying variables and tuples 31121. Almost immediately following the publication, independent mathematicians began optimizing the parameters to reduce the bound 1121.

Recognizing the potential for a coordinated optimization effort, Fields Medalist Terence Tao initiated the Polymath8 project on his blog in early June 2013 112032. The Polymath initiative - a framework for massively collaborative, open-source mathematical research originally conceptualized by Timothy Gowers in 2009 - was ideally suited to the modular nature of Zhang's arguments 3334.

The Polymath8a project, titled "Bounded gaps between primes," operated as a highly efficient "factory production line" 22. Different components of the proof were separated into distinct operational tasks: 1. Admissible Tuples Generation: Computational specialists created automated systems to find the narrowest possible admissible $k$-tuples for massive values of $k$, calculating the optimal diameter $H(k)$ 182936. 2. Sieve Optimization: Analysts worked on optimizing the test functions within the Selberg sieve to improve the integral ratios, pushing the asymptotic limits of the GPY method. 3. Distribution Estimates: Researchers refined Zhang's applications of Deligne's theorem to push the level of distribution fractionally higher beyond $1/2 + 1/1168$ 2629.

Guided by Tao's orchestration across numerous specific blog threads (e.g., "Polymath8: Writing the paper"), the project maintained a ferocious momentum. Participants shared literature, circumvented technical bottlenecks, tracked down mathematical folklore, and traded supercomputing cycles 21. Within two months, the bound was violently compressed: dropping to 5,414 by August, and finalizing at $H = 4,680$ unconditionally 262023. The results were published under the collective pseudonym "D.H.J. Polymath" 2132.

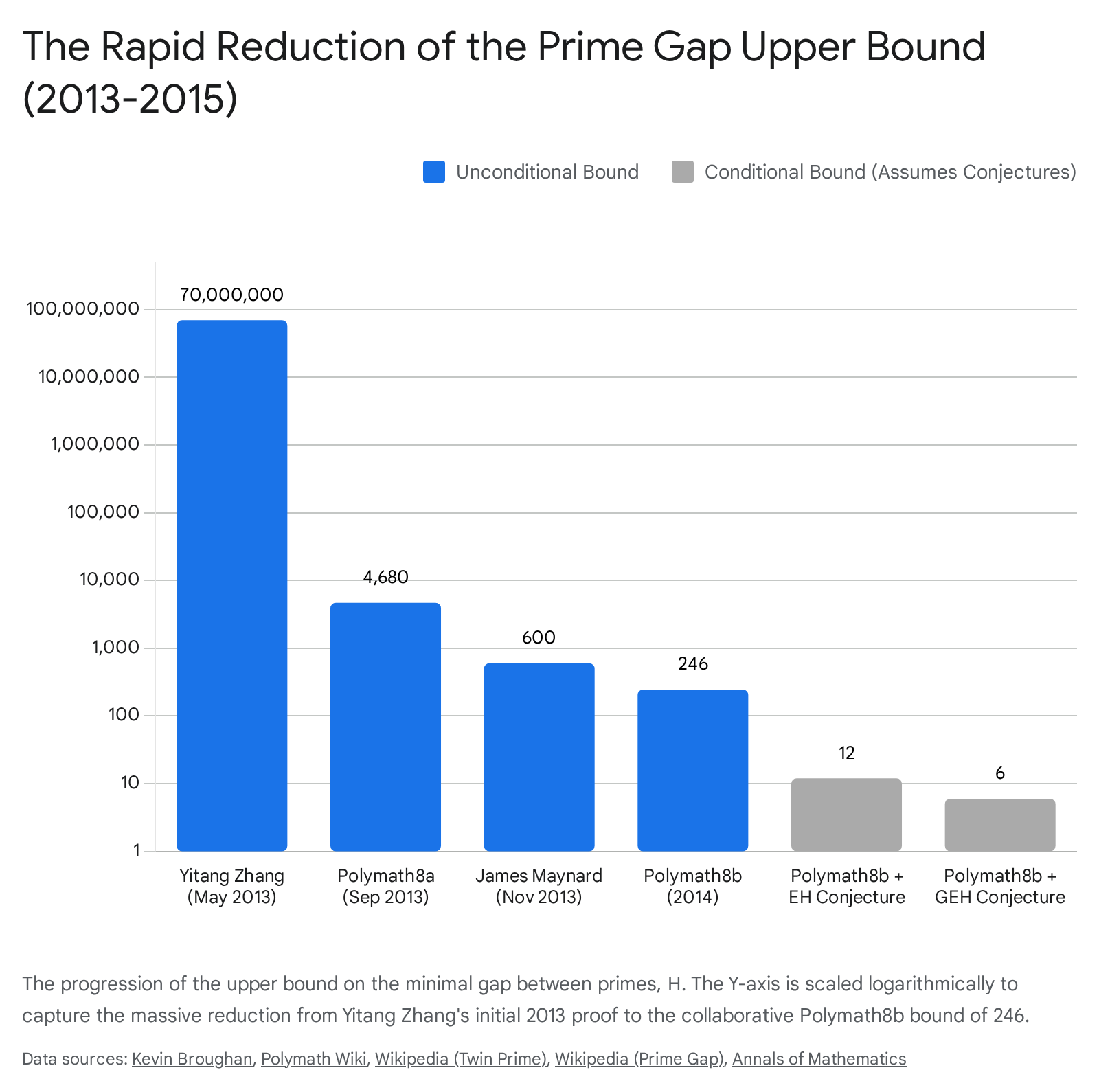

Table 1: Progression of the Prime Gap Upper Bound (2013 - 2015)

The following table summarizes the rapid sequential compression of the minimal prime gap bound resulting from individual breakthroughs and collaborative Polymath efforts.

| Milestone / Author | Date | Unconditional Bound | Conditional Bound Assumptions |

|---|---|---|---|

| Yitang Zhang | May 2013 | 70,000,000 | N/A |

| Polymath8a | Sep 2013 | 4,680 | N/A |

| James Maynard | Nov 2013 | 600 | N/A |

| Polymath8b | Jul 2014 | 246 | N/A |

| Polymath8b | Jul 2014 | 12 | Elliott-Halberstam (EH) |

| Polymath8b | Jul 2014 | 6 | Generalized Elliott-Halberstam |

(Data compiled from Polymath project records, Maynard's publications, and Zhang's initial proof 14111626.)

James Maynard and Multidimensional Sieve Weights

As Polymath8a concluded its write-up, the field experienced a secondary seismic shock. In November 2013, James Maynard, a postdoctoral researcher at the University of Montreal, released an independent paper outlining a profoundly simpler and more powerful methodology that bypassed Zhang's deep algebraic geometry entirely 111924. Terence Tao had also independently developed a similar mathematical concept contemporaneously, though Maynard's initial numerical optimization achieved superior bounds 112432.

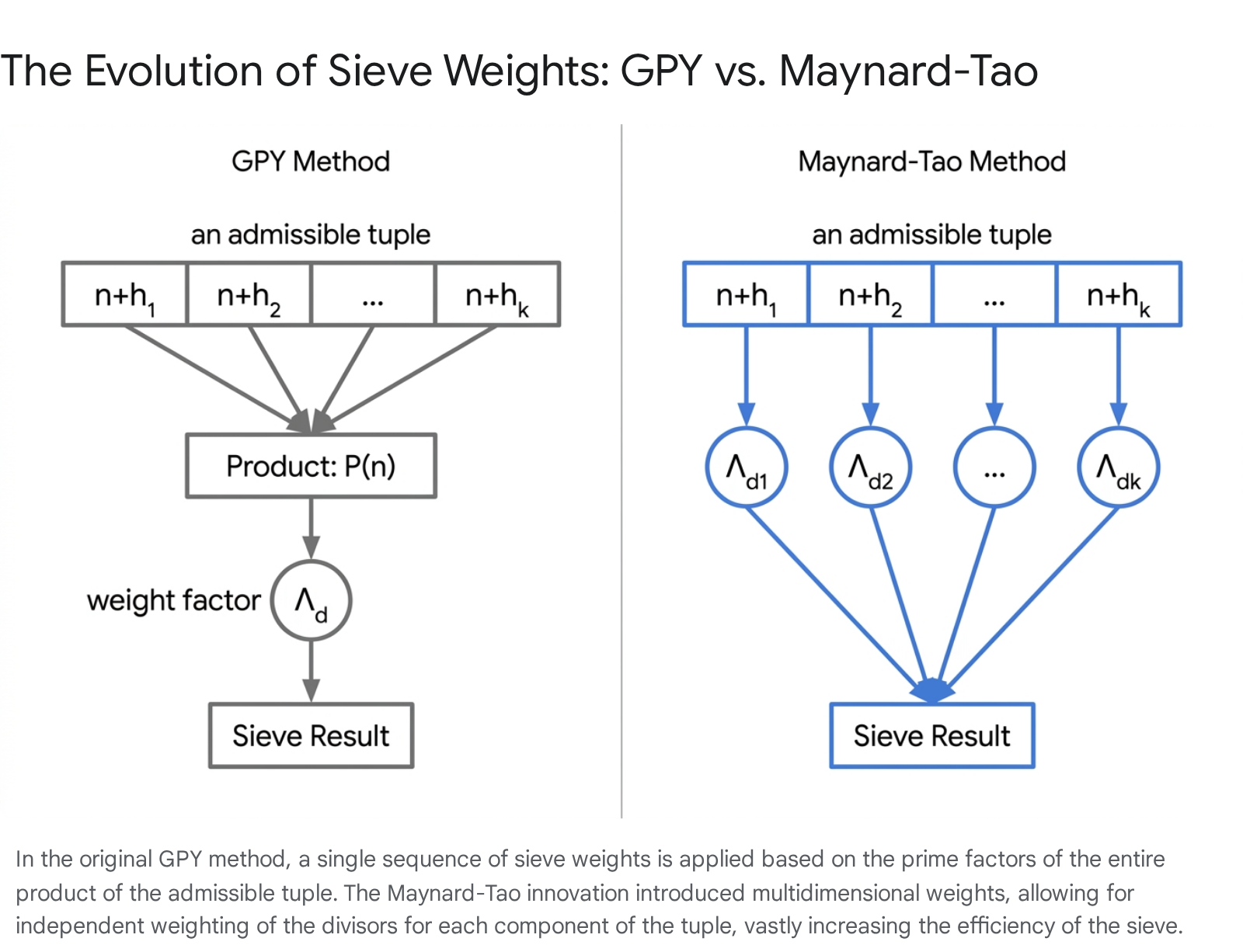

Maynard identified a structural inefficiency within the original GPY sieve. The classical GPY weights, denoted as $\lambda_d$, were constructed as a one-dimensional sequence relying heavily on the product of the tuple components $P(n) = (n+h_1)\cdots(n+h_k)$ 241718. By applying a single arithmetic weight to the aggregate product, the classical sieve lost crucial flexibility.

Maynard modified the construction to use multidimensional weights, $\lambda_{d_1, d_2, \dots, d_k}$, which depend individually on the specific divisors of each shifted integer $n+h_i$ 2417.

This allowed the sieve weights to be defined in terms of a smooth, multidimensional symmetric function $F(t_1, t_2, \dots, t_k)$, which could be optimized using the calculus of variations 1725.

The multidimensional flexibility was exceptionally efficient at converting distribution data into prime counts, allowing the method to utilize significantly weaker inputs. Maynard achieved a bound of $H \le 600$ relying solely on the standard Bombieri-Vinogradov theorem ($\theta = 1/2$), entirely avoiding the need for Zhang's complex extensions to smooth moduli 101619. To achieve this 600 bound, Maynard determined that an admissible tuple of $k=105$ was sufficient 1618.

Crucially, Maynard's multidimensional method succeeded where all previous methods had failed regarding prime multiples. He proved that for any integer $m$, there are infinitely many intervals of bounded length containing exactly $m$ primes, proving that $\liminf_{n \to \infty} (p_{n+m} - p_n) < \infty$ 111624. This represented the first definitive progress toward the Hardy-Littlewood $m$-tuples conjecture, establishing that a positive proportion of admissible $m$-tuples satisfy the conjecture. This monumental contribution to additive number theory, alongside his later proof of the Duffin-Schaeffer conjecture, earned Maynard the Fields Medal in 2022 1926.

The Polymath8b Project and the Bound of 246

Following Maynard's breakthrough, the collaborative apparatus was immediately reconstituted as Polymath8b, titled "Bounded intervals with many primes" 112636. The new objective was to merge the multidimensional sieve techniques of the Maynard-Tao method with the tight computational optimization infrastructure developed during Polymath8a 2633.

The challenge transitioned into an immense calculus of variations problem. The team sought to maximize a multidimensional integral ratio over a $k$-dimensional simplex, subject to the condition that the test function $F$ is symmetric and supported within specific geometric bounds 25. To extract maximum efficiency, the Polymath participants explored an "epsilon-enlarged" integration region and implemented numerical solutions to massive quadratic programs 322527.

Vanishing Marginals and Dimensional Scaling

A significant technical hurdle encountered during Polymath8b involved the mathematical concept of "vanishing marginals." To push the bounds lower, the multidimensional test function $F$ needed to satisfy boundary conditions where its integrals over specific sub-dimensions - its marginals - equaled zero 82527. The vanishing marginals condition ensures that there is a negligible contribution from regions where the error terms would otherwise dominate 8.

Constructing piecewise polynomial bases that satisfied these vanishing marginal conditions across high-dimensional spaces (e.g., $k=50$) proved computationally punishing 2527. The quadratic programs required to process these polynomials became exceptionally large and slow, stretching the limits of available supercomputing resources 3225.

Despite these computational bottlenecks, Polymath8b successfully identified an optimal 50-tuple and reduced the unconditional bound of the minimal prime gap to exactly 246 in early 2014 5112226. Since 2014, the bound of 246 has remained the recognized consensus record due to the exponential computing power required to push the multidimensional optimization any further without new theoretical scaffolding 36274328.

Conditional Bounds and the Parity Barrier Limit

While unconditional bounds rely solely on proven theorems like Bombieri-Vinogradov, analytic number theorists also calculate what the Maynard-Tao sieve could achieve if unproven conjectures were true.

Assuming the Elliott-Halberstam (EH) conjecture - which posits a level of distribution $\theta = 1 - \epsilon$ - provides the sieve with vastly more accurate distributional data. Under EH, the Polymath8b project demonstrated that the minimal prime gap drops to 12 1419. Furthermore, by assuming a Generalized Elliott-Halberstam (GEH) conjecture - which incorporates restrictions involving the Möbius function on shifted primes in arithmetic progressions - the bound is reduced to exactly 6 1262729.

The Modulo 6 Arithmetic Concentration

The fact that the limit halts at 6 under the most optimistic theoretical conditions is not a failure of computational power but rather a strict mathematical ceiling inherent to the nature of the sieve itself 114330.

This limit is intrinsically tied to modulo arithmetic and the parity problem. Barring the prime 3, all prime numbers take the form $6k \pm 1$ 4748. Consequently, consecutive primes naturally tend to fall into residue classes modulo 6 that prevent them from sharing small prime divisors like 2 and 3 1147. Because prime numbers inherently cluster around multiples of 6, gaps of 6 (known as "sexy primes") represent a path of least resistance for the multidimensional sieve 474849.

Table 2: Modulo Classification of Prime Constellations

The structural nature of prime constellations is categorized by their spacing, which directly correlates to their modulo 6 properties.

| Constellation Name | Gap Size ($g_n$) | Tuples Example | Modulo 6 Characteristic |

|---|---|---|---|

| Twin Primes | 2 | (p, p+2) | Requires isolating (6k-1, 6k+1). Blocked by the parity problem in standard sieve theory. |

| Cousin Primes | 4 | (p, p+4) | Blocked by similar parity and residue class constraints as twin primes. |

| Sexy Primes | 6 | (p, p+6) | Align with the $6k \pm 1$ modulo 6 density distribution. Achievable conditionally under GEH. |

(Characteristics of prime constellations based on sieve theory limitations 11474849.)

To bridge the gap from 6 down to 2 (twin primes), researchers must abandon purely combinatorial sieve-theoretic approaches and inject new mathematical ingredients capable of breaking the parity barrier 161415. Establishing Type II bilinear form estimates that correlate with the tight affine-linear spacing of $p$ and $p+2$ remains an open challenge 650. Until the parity problem is fully bypassed, $H=6$ remains the absolute theoretical floor for the Maynard-Tao multidimensional sieve methodology.

Recent Analytical Developments

While the bounds established by Polymath8b in 2014 have remained largely static for a decade, the analytic landscape continues to evolve. Recent 2024 and 2025 preprints, notably research led by Yuhang Shi, propose a new analytic framework centered on a specially constructed weight function $\lambda_q$ 22.

This proposed framework attempts to unconditionally push the effective distribution level $\theta_{eff}$ fractionally beyond $1/2$ by reducing the problem to a novel estimate for a trilinear form of Dirichlet characters. This is allegedly resolved via deep analysis of associated L-function zero distributions, using proofs by contradiction against established zero-density theorems 22. If verified, this analytic breakthrough would strengthen the Maynard-Tao sieve, permitting the use of a smaller admissible 48-tuple to establish a new unconditional upper bound of $H \le 234$ 22. However, this claim is still undergoing peer review by the analytic number theory community, and calibrated uncertainty remains regarding its ultimate validity as an unconditional proof 225152.

Conclusion

The science of prime gaps underwent a historic transformation between 2013 and 2014. The rigid, seemingly impenetrable boundary of the Prime Number Theorem was shattered by Yitang Zhang's unconditional proof of bounded gaps, achieved by circumventing the Bombieri-Vinogradov limits through smooth moduli. James Maynard's subsequent introduction of multidimensional sieve weights drastically simplified and empowered the analytical method, enabling generalizations to $m$-tuples. Simultaneously, the Polymath8 projects demonstrated the extraordinary capability of open, internet-based collaborative mathematics to optimize complex variational problems at speeds impossible for a single researcher.

Today, the unconditional gap bound stands securely at 246, with emerging research actively attempting to pull it into the 230s. Yet, the overarching goal - the Twin Prime Conjecture - remains firmly locked behind the formidable parity problem. Until analytic number theory discovers a mechanism to consistently separate numbers by the parity of their prime factors without destroying the error terms of the sieve, the ultimate gap of 2 will remain an asymptotic mirage, proven to exist heuristically, but unyielding to absolute mathematical proof.