Programmatic search engine optimization and search engine penalties

Programmatic Search Engine Optimization (SEO) constitutes a highly scalable digital marketing methodology wherein organizations automate the generation of landing pages to capture long-tail search queries. Rather than relying on manual editorial workflows, programmatic SEO leverages structured databases, application programming interfaces (APIs), and page templates to deploy thousands, or even millions, of distinct uniform resource locators (URLs) simultaneously. Historically utilized by massive aggregator platforms in real estate, travel, and software directories, the practice aims to satisfy highly specific, low-volume user intents that cumulatively result in massive organic traffic acquisition.

While the fundamental premise of programmatic SEO revolves around automation, recent advancements in generative artificial intelligence and shifting search engine algorithms have dramatically altered its execution and viability. Following aggressive algorithmic updates - most notably Google's integration of the Helpful Content System into its core ranking signals and the establishment of "Scaled Content Abuse" policies - the search ecosystem has fundamentally shifted. Organizations can no longer rely on volume alone; they must engineer genuine utility, rely on proprietary data, and structure their digital architecture to withstand rigorous user-interaction evaluations. This report examines the technical infrastructure, architectural requirements, algorithmic risks, and evolutionary future of programmatic SEO in an AI-mediated search environment.

Foundational Mechanics and Technical Architecture

The success or failure of a programmatic SEO deployment is largely determined before a single page is indexed. Because programmatic initiatives require the management and serving of immense volumes of pages, the underlying technical infrastructure must be optimized for both server efficiency and search engine crawlability.

Content Management Systems and Infrastructure Scaling

Traditional monolithic content management systems, designed primarily for manual publishing and linear editorial workflows, inherently struggle with the computational demands of programmatic SEO. When tasked with querying databases to generate hundreds of thousands of distinct pages, traditional platforms often experience database bloat, prolonged server response times, and an inability to dynamically update data points across the entire site architecture.

Consequently, enterprise-scale programmatic operations typically rely on decoupled, headless architectures or custom-built database solutions. A headless content management system separates the backend data repository from the frontend presentation layer 1. This separation allows developers to manage vast, constantly updating datasets - such as global financial exchange rates, dynamic software specifications, or real-time local weather data - while the frontend framework programmatically generates the corresponding URLs 23.
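As a minimal sketch of how a frontend layer might derive URLs from a structured dataset, the following expands a small currency table into one page record per ordered pair. The `/currency-converter/...` path pattern, the `build_pages` helper, and the sample currencies are illustrative assumptions, not the structure used by any platform named in this report.

```python
import re
from itertools import permutations


def slugify(text: str) -> str:
    """Lowercase the text and collapse non-alphanumeric runs into hyphens."""
    return re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")


def build_pages(currencies: dict) -> list:
    """Return one page record (path and title) per ordered currency pair."""
    pages = []
    for src, dst in permutations(currencies, 2):
        pages.append({
            # Hypothetical URL pattern, chosen only for illustration.
            "path": f"/currency-converter/{slugify(src)}-to-{slugify(dst)}-rate",
            "title": f"{currencies[src]} to {currencies[dst]}",
        })
    return pages


# Sample dataset; a real deployment would read this from the backend repository.
CURRENCIES = {"USD": "US Dollar", "INR": "Indian Rupee", "EUR": "Euro"}
pages = build_pages(CURRENCIES)
```

Three currencies yield six ordered pairs, which illustrates why page counts grow quadratically with the size of the dataset and why the data layer, rather than the template, becomes the scaling constraint.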

The financial technology platform Wise exemplifies the necessity of this architecture. Wise manages an estimated 260,000 programmatic pages targeting global currency conversion metrics, banking routing numbers, and SWIFT code queries 23. To manage this scale across more than sixty country-specific subdomains and multiple languages, the organization engineered a custom headless CMS 2. This infrastructure allows the company to ingest real-time currency feeds and instantly update templates across all global variations simultaneously, ensuring that the programmatic output remains factually accurate and compliant without degrading server performance 23.

Rendering Paradigms and Indexation Reliability

The architectural decision regarding how data is rendered into HyperText Markup Language (HTML) dictates how search engine crawlers interact with a programmatic site. The choice between Client-Side Rendering (CSR) and Server-Side Rendering (SSR) significantly impacts crawl budgets, indexation rates, and Core Web Vitals.

In a Client-Side Rendering environment, the web server delivers a minimal, bare-bones HTML shell to the browser alongside a bundle of JavaScript files 4. The browser - or the search engine crawler - must then download, parse, and execute the JavaScript to fetch the underlying database information and render the visual content. While CSR is the default state for many modern JavaScript frameworks and can reduce the initial load on the host server, it poses severe risks for programmatic SEO 45.

Search engines allocate finite crawling and rendering resources to each site, an allowance commonly called its crawl budget. Because executing JavaScript is computationally expensive, crawlers like Googlebot often index the initial blank HTML shell and defer the JavaScript execution to a separate, delayed rendering queue 45. For a programmatic site attempting to index tens of thousands of pages, this delay can result in partial indexing, wherein the search engine fails to recognize the unique data on each page, interpreting them instead as identical, thin templates 4.

Conversely, Server-Side Rendering shifts the computational burden back to the host. The server executes the necessary logic, fetches the data, and delivers a fully constructed HTML document to the client or crawler upon the initial request 58. Google's search engineering guidelines explicitly advise webmasters that SSR or Static Site Generation (SSG) yields the most reliable indexing outcomes 96. In a decoupled, headless architecture that uses SSR, data flows from raw sources - such as internal APIs or crowdsourced databases - through the headless content management system and its logic layer to a rendering engine. The rendering engine then outputs the same fully rendered HTML to the end user's browser and to search engine crawlers, eliminating the indexing delays associated with JavaScript-heavy Client-Side Rendering.

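By providing pre-rendered content, SSR typically improves paint metrics such as First Contentful Paint (FCP), because the browser can display content without first downloading and executing JavaScript 58. Time to First Byte (TTFB), by contrast, depends on how quickly the server can assemble each page, so large programmatic sites generally pair SSR with caching to keep it low. These speed enhancements not only facilitate rapid, comprehensive crawling of massive programmatic directories but also satisfy algorithmic performance thresholds that influence rankings.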
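The difference between the two rendering paths can be sketched by comparing what each returns on the first request. The function names, the record fields, and the sample rate below are hypothetical placeholders; the point is only that the SSR response already contains the page's data while the CSR shell does not.

```python
from html import escape


def render_csr_shell() -> str:
    """CSR-style response: an empty mount point plus a script reference."""
    return (
        '<html><body><div id="app"></div>'
        '<script src="/bundle.js"></script></body></html>'
    )


def render_ssr_page(record: dict) -> str:
    """SSR-style response: the record's data is already present in the HTML."""
    return (
        "<html><head><title>{title}</title></head>"
        "<body><h1>{title}</h1><p>1 {src} = {rate} {dst}</p></body></html>"
    ).format(
        title=escape(record["title"]),
        src=escape(record["src"]),
        dst=escape(record["dst"]),
        rate=escape(str(record["rate"])),
    )


# Placeholder record; the rate is an invented value, not real market data.
record = {"title": "US Dollar to Indian Rupee", "src": "USD", "dst": "INR", "rate": 83.1}
shell_html = render_csr_shell()
ssr_html = render_ssr_page(record)
```

A crawler that does not execute JavaScript sees the rate only in `ssr_html`; every page built on `shell_html` looks identical until the rendering queue processes it.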

| Rendering Methodology | Mechanism | Impact on Programmatic SEO | Crawlability & Indexation |
|---|---|---|---|
| Server-Side Rendering (SSR) | Server processes logic and delivers fully rendered HTML to the browser. | Highly Recommended. Optimizes Core Web Vitals and ensures immediate visibility. | Excellent. Crawlers immediately read and index all data upon the first pass. |
| Client-Side Rendering (CSR) | Server delivers an empty HTML shell; browser downloads and executes JavaScript to load content. | High Risk. Delays content visibility and strains rendering queues for large sites. | Poor. Often results in partial indexing or delayed discovery of programmatic variables. |
| Static Site Generation (SSG) | HTML is pre-rendered entirely at build time into static files. | Highly Recommended. Offers maximum speed but requires frequent rebuilding for real-time data. | Excellent. Consumes minimal crawl budget and provides instant content delivery. |
| Dynamic Rendering | Server detects bots and serves static HTML, while serving CSR to human users. | Deprecated. Google classifies this as a temporary workaround with potential cloaking risks. | Variable. Creates technical overhead and risks algorithmic penalties if content differs. |

The Deprecation of Dynamic Rendering

Historically, some organizations attempted to bridge the gap between frontend JavaScript frameworks and SEO requirements by employing Dynamic Rendering. This technique involved using middleware to detect the user agent requesting the page; if the request originated from a search engine bot, the server delivered a pre-rendered static HTML version, whereas human users received the interactive Client-Side Rendered version 6.

However, Google Search Central documentation has explicitly downgraded Dynamic Rendering from a recommended practice to a deprecated workaround 6. The search engine notes that maintaining separate rendering paths creates unnecessary architectural complexity and resource strain. Furthermore, if the static HTML served to the bot differs substantially from the interactive version served to the user, the site risks triggering algorithmic penalties for "cloaking" - a manipulative practice where search engines are intentionally deceived regarding a page's true content 611. Modern programmatic deployments are therefore strongly advised to adopt native Server-Side Rendering or hydration techniques to maintain parity between the crawler and user experiences.
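One way to reason about crawler and user parity is to compare the visible text of the two responses. The sketch below uses only the Python standard library; the `has_parity` helper and its strict equality rule are assumptions for illustration, and a production check would likely tolerate small differences.

```python
from html.parser import HTMLParser


class _TextExtractor(HTMLParser):
    """Collect text nodes, ignoring anything inside <script> or <style>."""

    def __init__(self):
        super().__init__()
        self.chunks = []
        self._skip = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip:
            self._skip -= 1

    def handle_data(self, data):
        if not self._skip and data.strip():
            self.chunks.append(data.strip())


def visible_text(html: str) -> str:
    """Return the page's visible text with whitespace normalized."""
    parser = _TextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(" ".join(parser.chunks).split())


def has_parity(bot_html: str, user_html: str) -> bool:
    """True when both responses expose the same visible text."""
    return visible_text(bot_html) == visible_text(user_html)
```

Running such a comparison on a sample of URLs highlights templates where the bot-facing and user-facing versions have drifted apart, which is the condition the cloaking concern describes.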

Site Structure and Link Equity Distribution

Even with flawless server-side rendering, generating an immense volume of pages creates significant navigational challenges. Search engine algorithms rely on the structural relationships between URLs to determine topical relevance and hierarchy. Programmatic pages that are generated but fail to receive adequate internal links are classified as "orphan pages" 127. Without internal inbound links, crawlers struggle to discover these endpoints, and the algorithm assumes they lack importance, resulting in poor search visibility 14.
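Orphan detection reduces to a set difference between the pages that exist and the pages that receive at least one internal link. The following is a minimal sketch; the `find_orphans` name and the in-memory link map are assumptions, and a real audit would build the map from a crawl or from the template's link data.

```python
def find_orphans(all_pages: set, links: dict, root: str = "/") -> set:
    """Return pages that no other page links to, excluding the root."""
    linked = set()
    for source, targets in links.items():
        # A page linking to itself does not count as an inbound link.
        linked.update(t for t in targets if t != source)
    return all_pages - linked - {root}


# Illustrative site: "/tools/c" is generated but never linked.
ALL_PAGES = {"/", "/tools", "/tools/a", "/tools/b", "/tools/c"}
LINKS = {
    "/": {"/tools"},
    "/tools": {"/tools/a", "/tools/b"},
    "/tools/c": {"/tools/c"},
}
orphans = find_orphans(ALL_PAGES, LINKS)
```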

The Hub and Spoke Model

To ensure that link equity - often referred to as PageRank - flows effectively through a massive programmatic site, architects employ a "hub and spoke" or pillar page model 158. This methodology involves creating highly authoritative, broad category pages (the hubs) that systematically link out to the deeply specific programmatic variations (the spokes).

The automation platform Zapier provides an exemplary blueprint for programmatic site architecture. Zapier targets integration queries by generating distinct landing pages for every conceivable combination of software applications it supports 918. To manage this massive inventory, Zapier established a strict, tiered hierarchy 9:

1. The Root Directory: A primary /apps hub that acts as a directory for all software categories.

2. App Profiles: Dedicated profile pages for individual software entities (e.g., /apps/gmail).

3. Specific Integrations: Pages detailing the connection between two specific applications (e.g., /apps/gmail/integrations/google-sheets).

4. Specific Workflows: Granular pages exploring exact automated functions between the integrated tools.

This hierarchical architecture ensures that no programmatic page is buried deep within the site structure. Industry best practices dictate a shallow click depth, ensuring that even the most obscure programmatic variation is accessible within three to four clicks from the homepage 1410. Because Zapier's root domains and blog content acquire substantial external backlinks, the resulting authority flows efficiently down this logical structure, empowering the programmatic endpoints to rank for highly competitive, long-tail queries 911.
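Click depth can be measured with a breadth-first search over the internal link graph, starting at the homepage. The sketch below reuses the example paths from the hierarchy above; the final workflow path and the `click_depths` helper are hypothetical, as is the assumption that each tier links only to the next.

```python
from collections import deque


def click_depths(links: dict, root: str = "/") -> dict:
    """Return the minimum number of clicks from root to each reachable page."""
    depths = {root: 0}
    queue = deque([root])
    while queue:
        page = queue.popleft()
        for target in links.get(page, ()):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


LINKS = {
    "/": ["/apps"],
    "/apps": ["/apps/gmail"],
    "/apps/gmail": ["/apps/gmail/integrations/google-sheets"],
    # Hypothetical workflow URL, used only to show the fourth tier.
    "/apps/gmail/integrations/google-sheets": [
        "/apps/gmail/integrations/google-sheets/example-workflow"
    ],
}
depths = click_depths(LINKS)
too_deep = [page for page, depth in depths.items() if depth > 4]
```

Pages missing from `depths` are unreachable from the homepage, and pages listed in `too_deep` exceed the three-to-four click guideline.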

Navigational Versus Contextual Internal Linking

The mechanism by which internal links are deployed also influences algorithmic interpretation. Internal links are generally bifurcated into two categories: navigational and contextual 8.

Navigational links exist within site-wide structural elements, such as header menus, footers, or standardized sidebars. While these ensure basic crawlability, search engines recognize them as boilerplate architecture and assign them lower semantic weight 8. Contextual links, conversely, are embedded directly within the unique narrative prose or primary data fields of a page. Contextual linking provides crawlers with highly valuable signals regarding the semantic relationship between the source and target pages 158.

Successful programmatic SEO deployments engineer templates that automatically generate contextual links based on data relationships. For instance, an e-commerce site utilizing programmatic SEO might dynamically generate a "Related Products" or "Alternative Solutions" module that links horizontally to other programmatic pages within the same topical cluster 12. This strategic interlinking redistributes PageRank laterally and prevents the creation of isolated data silos 813.
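A template can derive its "related" module directly from the dataset. In this sketch, each page links to the next few pages in its own cluster, wrapping around so that inbound links are spread evenly rather than concentrated on the first entries. The `cluster` field, the `related_links` helper, and the sample paths are illustrative assumptions.

```python
from collections import defaultdict


def related_links(pages: list, limit: int = 3) -> dict:
    """Map each page path to up to `limit` sibling paths in the same cluster."""
    by_cluster = defaultdict(list)
    for page in pages:
        by_cluster[page["cluster"]].append(page["path"])

    result = {}
    for paths in by_cluster.values():
        n = len(paths)
        for i, path in enumerate(paths):
            # Take the next siblings in circular order, never the page itself.
            count = min(limit, n - 1)
            result[path] = [paths[(i + k) % n] for k in range(1, count + 1)]
    return result


PAGES = [
    {"path": "/crm/a", "cluster": "crm"},
    {"path": "/crm/b", "cluster": "crm"},
    {"path": "/crm/c", "cluster": "crm"},
    {"path": "/email/d", "cluster": "email"},
]
links = related_links(PAGES, limit=2)
```

The circular selection means every page in a sufficiently large cluster receives the same number of lateral inbound links, which is one simple way to avoid isolated pages within a cluster.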

Data Acquisition and Search Intent Alignment

The fundamental differentiator between a successful programmatic asset and a penalized spam site is the origin, utility, and uniqueness of the underlying data. Google's algorithmic systems are trained to identify and reward distinct value; they do not inherently penalize the use of templates or automation, provided the output satisfies user intent in a manner that manual creation cannot match.

Proprietary and Crowdsourced Data Pipelines

If the dataset feeding a programmatic engine is merely scraped from publicly available sources or duplicated from competitors without significant enhancement, the resulting output will inevitably trigger duplicate content or thin content classifiers 1424. Sustainable programmatic SEO relies on proprietary data integration or extensive community crowdsourcing.

Nomad List, a platform designed for remote workers, illustrates the power of crowdsourced data architectures. The platform was built primarily utilizing raw HTML, cascading style sheets (CSS), JavaScript, and PHP, backed by a lightweight SQLite database rather than complex enterprise frameworks 515. Nomad List maintains a dense, centralized dataset covering over 24,000 global cities, tracking variables such as localized cost of living, internet connectivity speeds, ambient temperature, and safety scores 516.

Critically, this data is continuously updated and validated by a network of moderators and active community members 2728. Because this aggregated, real-time dataset is proprietary to the platform, Nomad List can utilize automated templates to spin up tens of thousands of highly specific landing pages - such as "Best places to live in Europe" or "Cost of living in Lisbon" - without triggering algorithmic demotions 1629. Each generated page is populated with interactive maps, historical data charts, and user-submitted qualitative tips, ensuring the final output reads as a uniquely valuable resource rather than a sterile database dump 16. This execution resulted in high niche authority and scalable recurring revenue, demonstrating that data quality dictates programmatic success 1627.

Transactional Tool Execution

Programmatic SEO demonstrates the highest efficacy when aligned with specific transactional or navigational user intents. When a user queries a long-tail keyword seeking a precise numeric value, a conversion metric, or an interactive tool, programmatic pages designed as functional utilities consistently outperform narrative editorial content.

The international money transfer service Wise provides a definitive example of matching programmatic templates to transactional intent. Wise targets millions of long-tail commercial queries related to currency conversion (e.g., "US Dollar to Indian Rupee"), bank routing numbers, and international SWIFT codes 23. The user intent behind these queries is explicit: the searcher requires an immediate mathematical output or a specific alphanumeric code to execute a financial transaction.

Wise satisfies this intent by injecting real-time API data into functional calculator templates 2. The programmatic pages feature interactive input boxes, historical currency fluctuation charts, and clear calls-to-action facilitating immediate money transfers 2. Because the page functions as a responsive utility rather than a static article, search engines recognize its high alignment with user needs. This strategy has allowed Wise to generate an estimated 43.5 million monthly organic visits from its currency converter pages alone, accounting for the vast majority of the platform's overall search visibility 330.

Vulnerabilities of Intent Mismatch

Conversely, applying programmatic strategies to queries that demand qualitative nuance, editorial opinion, or subjective evaluation often leads to catastrophic failure. An intent mismatch occurs when a programmatic page attempts to answer a qualitative query with aggregated, quantitative data.

G2, a prominent business-to-business (B2B) software review marketplace, experienced significant volatility due to this precise misalignment. Historically, G2 utilized programmatic SEO to generate comparison pages targeting keywords such as "Best [Industry] Software" or "Compare [Software A] vs [Software B]" 1732. These templates automatically populated pages with aggregated feature checklists, pricing tables, and localized vendor descriptions pulled directly from the site's backend database 3233.

However, following the rollout of the Helpful Content Update in late 2023 and subsequent core updates, G2's programmatic category pages suffered a reported organic traffic decline of 80% to 85% 3334. The algorithmic demotion was driven by a fundamental misunderstanding of search intent. Users querying "best software" were not seeking automated feature matrices; they were searching for authentic, qualitative reviews, firsthand operational experience, and curated editorial recommendations 3334.

Google's algorithms, increasingly tuned to prioritize human experience and authenticity, determined that the automated templates failed to satisfy the qualitative intent of the searchers 34. In response to this algorithmic correction, G2 was forced to pivot its strategy, heavily investing in "hand-crafted," first-person editorial reviews to recover visibility for competitive commercial terms, thereby acknowledging the limitations of automation for highly subjective queries 33.

Algorithmic Penalties and Policy Enforcement

As generative artificial intelligence dramatically lowered the barrier to entry for content creation, search engines faced a proliferation of synthetic, mass-produced web pages. In response, Google initiated a series of aggressive algorithmic interventions designed to neutralize low-effort automation and penalize manipulative programmatic structures.

The March 2024 Core and Spam Updates

In March 2024, Google deployed a historic dual-update, simultaneously rolling out a massive core ranking adjustment and introducing stringent new spam policies 1819. The stated objective of these combined updates was to eliminate unhelpful, unoriginal, and manipulative content, with Google publicly estimating a 40% reduction in low-quality search results 1920.

A pivotal architectural shift during this update was the integration of the previously standalone Helpful Content System directly into the core ranking algorithm 3821. Quality evaluation, therefore, ceased to be a periodic filter and became an omnipresent signal embedded across all algorithmic calculations.

Simultaneously, Google formalized three distinct spam policies that directly impacted programmatic SEO practitioners:

| Spam Policy Designation | Definition and Mechanism | Impact on Programmatic Operations |
|---|---|---|
| Scaled Content Abuse | Generating massive volumes of pages primarily to manipulate rankings, providing little to no unique value to users. | Explicitly targets low-effort programmatic generation, regardless of whether the content is produced by humans, AI, or a hybrid process. |
| Site Reputation Abuse (Parasite SEO) | Publishing low-value, third-party programmatic content on high-authority host domains without close editorial oversight. | Neutralizes the strategy of using legacy domain authority to artificially rank mass-produced affiliate or programmatic directories. |
| Expired Domain Abuse | Purchasing abandoned, historically authoritative domain names to host newly generated, unrelated programmatic content. | Devalues the historical link equity of expired domains, preventing practitioners from bypassing the "sandbox" phase of site growth. |

The enforcement of the Scaled Content Abuse policy was particularly notable for its swiftness and severity. During the initial rollout, independent analysts observed that hundreds of domains were entirely deindexed by Google's manual web spam team 4041. Sites heavily reliant on automated scraping, unedited AI generation, or aggressive template duplication saw traffic collapse to zero within days 3842.

Scaled Content Abuse and the "SEO Heist"

Google's policy explicitly clarified that the use of automation or generative AI is not inherently prohibited; rather, the algorithmic suppression targets the intent to manipulate rankings through sheer volume without corresponding editorial value 1943. This distinction was prominently highlighted by a highly publicized case study colloquially known as the "SEO Heist."

In late 2023, a digital marketing consultant publicly documented utilizing AI tools to scrape a competitor's sitemap and programmatically generate approximately 1,800 cloned articles 2245. The automated system rewrote the competitor's structural data into novel text, allowing the site to temporarily capture over 3.6 million organic views 2245. However, because the content was entirely derivative, lacked human editorial oversight, and offered zero net-new insights to the search ecosystem, Google's spam detection systems rapidly identified the pattern 22. The domain was subsequently struck with a manual penalty for scaled content abuse and entirely deindexed 2223.

This incident underscores the algorithmic consensus: while artificial intelligence can be safely utilized to structure data, format templates, or accelerate coding, it cannot serve as an autonomous publishing engine. An analysis of over 600,000 top-ranking web pages revealed that 86.5% contained some degree of AI-assisted content, yet the statistical correlation between AI usage and ranking penalties was effectively zero 7. The determining factor for algorithmic survival is the presence of an editorial layer, human validation, and the injection of proprietary value.

Case Studies of Indexation Failure

When a programmatic SEO strategy runs afoul of algorithmic quality thresholds, the first symptom is usually an indexation failure. Search engines will simply refuse to allocate crawl budget to domains exhibiting patterns of programmatic duplication or thin content.

Data from industry case studies indicates that programmatic implementations face a high failure rate without proper execution. A documented study of a mathematically focused programmatic site revealed catastrophic crawl budget exhaustion; after attempting to programmatically generate over 35,000 pages, the search engine indexed only 350 URLs after a full month, ultimately leading to the structural failure of the entire campaign 14.

Similarly, a public programmatic SEO case study documenting a targeted content cluster - focusing on excessively granular variations of fish preparation - demonstrated sudden algorithmic suppression. Despite avoiding manual penalties, the site's daily organic impressions collapsed from approximately 1,000 to under 150 following an unannounced algorithmic sweep 24. The failure was attributed to extreme cluster depth and over-segmentation, where the programmatic variations (e.g., differentiating between cooking a "Brook Trout" versus a "Tiger Trout") failed to provide sufficient unique data to justify separate URLs 24. When templates lack distinct differentiation, Google's algorithms classify them as doorway pages and actively restrict their visibility.
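Over-segmentation of this kind can be estimated before launch by measuring how much text two generated pages share. The sketch below computes Jaccard similarity over three-word shingles; the technique is a generic near-duplicate heuristic rather than a description of any search engine's classifier, and the sample sentences are invented.

```python
def shingles(text: str, size: int = 3) -> set:
    """Return the set of overlapping word n-grams in the text."""
    words = text.lower().split()
    if len(words) < size:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a: str, b: str) -> float:
    """Jaccard similarity of two texts' shingle sets (1.0 means identical)."""
    sa, sb = shingles(a), shingles(b)
    if not sa and not sb:
        return 1.0
    return len(sa & sb) / len(sa | sb)


page_a = "How to cook brook trout in a cast iron pan with butter and lemon"
page_b = "How to cook tiger trout in a cast iron pan with butter and lemon"
similarity = jaccard(page_a, page_b)
```

Swapping a single variable in a 14-word sentence still leaves most shingles shared, and on full-length templates the overlap is usually far higher. Any cutoff for merging pages is a judgment call and would need tuning against real templates.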

User Interaction Signals and Algorithmic Validation

A fundamental paradigm shift in modern SEO is the realization that technical optimization and keyword targeting merely qualify a page for initial ranking consideration; post-click user behavior determines long-term survival. Google evaluates the quality of programmatic pages by monitoring how human users interact with them, an insight supported by testimony in the U.S. Department of Justice antitrust trial against Google and by internal documentation leaked in 2024 2125.

Navboost and Post-Click Behavior

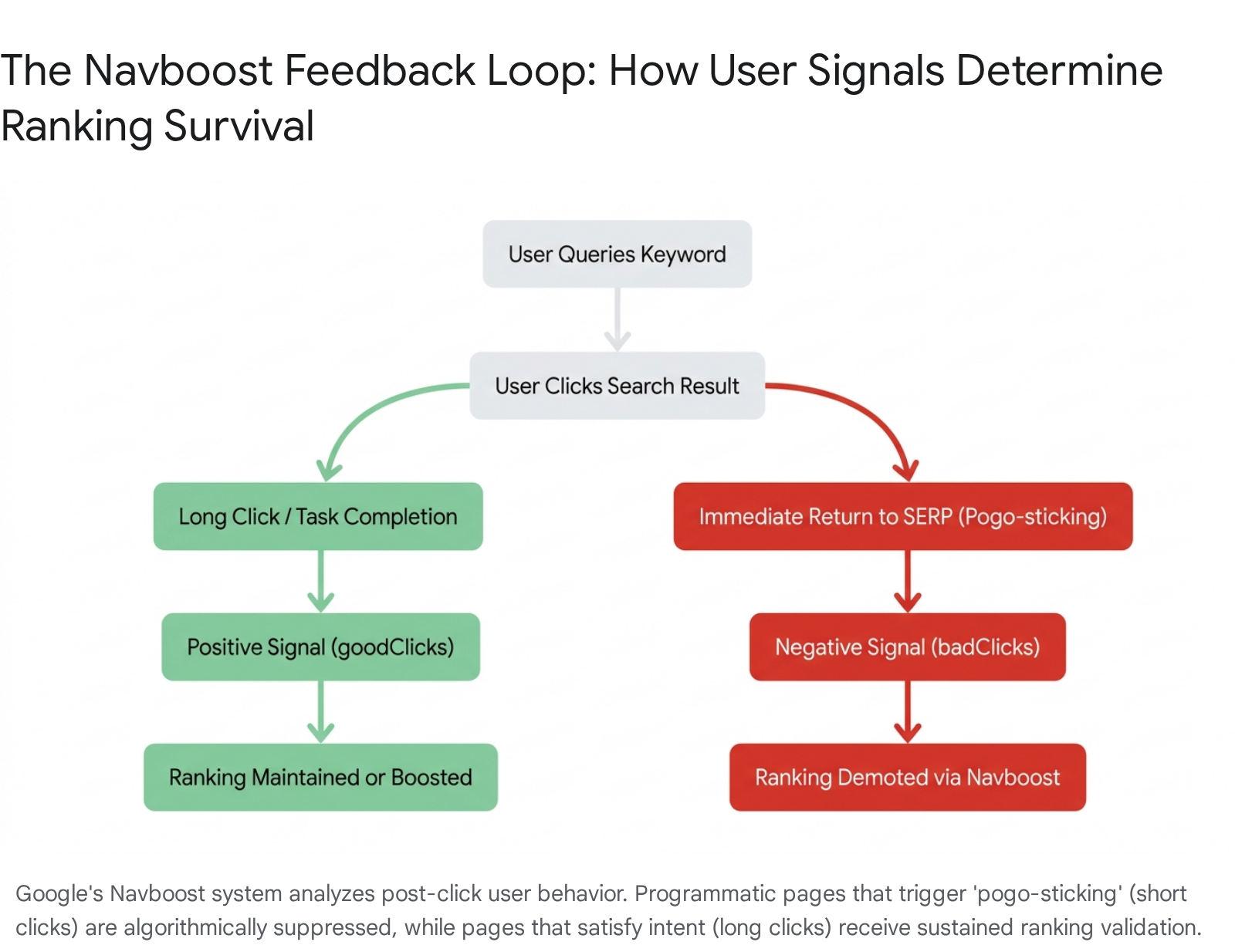

The leaked documentation confirmed the existence and outsized influence of a ranking system known as Navboost 2526. Navboost aggregates user interaction data - specifically click-through rates and post-click engagement - over a rolling 13-month window and uses it to continuously adjust search rankings 2527.

The system meticulously categorizes user engagements into specific algorithmic metrics, including "goodClicks," "badClicks," and "lastLongestClicks" 21. This behavioral tracking serves as the ultimate arbiter of programmatic quality. If a site deploys thousands of programmatic pages that successfully secure initial visibility, Navboost acts as a validation loop.

When a user clicks on a programmatic result, encounters a thin, templated page devoid of utility, and immediately clicks the browser's "Back" button to return to the search engine results page (a phenomenon known as "pogo-sticking"), Navboost registers a definitive "badClick" or negative signal 2628. Conversely, a "Long Click" - where the searcher remains on the programmatic page to utilize a calculator, consume proprietary data, or execute a transaction without returning to the search interface - signals deep user satisfaction 28.

Consequently, scaling programmatic pages that lack genuine utility will inevitably generate patterns of high bounce rates and short clicks. Navboost detects these aggregate behavioral patterns and algorithmically demotes the offending pages, overriding any traditional on-page SEO signals 2627. The algorithm evaluates whether the programmatic page effectively terminates the user's search journey.

Empirical Thresholds for Engagement

To prevent catastrophic traffic cliffs - which affect an estimated 33% of programmatic implementations within 18 months - organizations must actively monitor behavioral metrics against site-wide averages 52.

Industry benchmarking suggests that programmatic templates must maintain engagement metrics (such as time on page and pages per session) within 30% of the domain's manually authored, editorial content 53. If programmatic endpoints demonstrate bounce rates that exceed the site average by 15% or more, or if time on page drops by 20%, it is indicative of severe user dissatisfaction 53. In such scenarios, technical guidelines recommend halting all automated page generation immediately. Organizations must conduct user testing to refine the templates, inject interactive elements, and restore engagement parity before resuming programmatic scaling, lest they trigger a domain-wide Navboost demotion 2653.
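The two warning thresholds above can be expressed as a simple check. This sketch assumes both figures are relative differences against the site-wide average (for example, a bounce rate of 0.46 against an average of 0.40 is a 15% excess); the source does not state whether relative or absolute differences are intended, and the `should_pause_generation` name is hypothetical.

```python
def should_pause_generation(
    site_bounce: float,
    programmatic_bounce: float,
    site_time_on_page: float,
    programmatic_time_on_page: float,
) -> bool:
    """Return True when programmatic pages breach either engagement threshold."""
    # Relative excess of the programmatic bounce rate over the site average.
    bounce_excess = (programmatic_bounce - site_bounce) / site_bounce
    # Relative drop in time on page compared with the site average.
    time_drop = (site_time_on_page - programmatic_time_on_page) / site_time_on_page
    return bounce_excess >= 0.15 or time_drop >= 0.20


# Bounce rate 25% above average: pause. Small deviations on both: continue.
pause = should_pause_generation(0.40, 0.50, 100.0, 95.0)
keep_going = should_pause_generation(0.40, 0.42, 100.0, 90.0)
```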

Generative Artificial Intelligence and Answer Engine Optimization

The integration of Large Language Models directly into the search interface has introduced an existential challenge to informational programmatic SEO. Google's AI Overviews - and corresponding interfaces from platforms like Perplexity and Claude - utilize generative AI to synthesize information from multiple sources, presenting comprehensive answers directly at the top of the search engine results page 1829.

The Zero-Click Search Paradigm

The proliferation of AI-synthesized answers is rapidly accelerating the incidence of "zero-click searches" - queries wherein the user acquires the necessary information from the SERP without navigating to an external website. Empirical field studies demonstrate that the presence of an AI Overview reduces organic click-through rates to standard web results by approximately 38% 1829. Furthermore, aggregated analytics from 2024 and 2025 indicate that up to 59% of Google searches, and as many as 77% on mobile devices, terminate without an outbound click 2955.

For programmatic SEO practitioners, this paradigm shift invalidates the strategy of generating thousands of pages that merely aggregate commoditized facts. If a programmatic page exists solely to provide simple definitions, population statistics, or basic weather data, the generative AI will intercept the query, parse the data, and deliver the answer directly to the user 2930. The boundary of AI interference is also expanding; while initially concentrated on broad informational queries, AI Overviews are increasingly synthesizing commercial data, comparison shopping, and product specifications, directly competing with traditional affiliate and directory templates 2931.

| Search Dimension | Traditional SEO (Pre-2024) | Answer Engine Optimization (AEO) |
|---|---|---|
| Primary Goal | Secure top organic rankings to drive click-through traffic. | Secure citations and brand mentions within AI-generated summaries. |
| Core Algorithmic Signals | Backlink profiles, exact-match keyword density, domain authority. | Entity clarity, structured data (Schema), first-hand experience (E-E-A-T). |
| User Journey | Search -> Click Result -> Consume Content on Site. | Search -> Read AI Synthesis -> Decision (Zero-Click) or Refined Click. |
| Programmatic Vulnerability | Susceptible to manual actions or duplicate content penalties. | Susceptible to complete disintermediation; AI extracts data without driving traffic. |
| Strategic Focus | Publishing comprehensive, long-form content to capture broad keyword variations. | Publishing chunked, modular data designed for machine retrieval and extraction. |

Synthesized Citations and Brand Visibility

To adapt to the generative search environment, organizations must transition their programmatic strategies toward Answer Engine Optimization (AEO). The objective is no longer solely to drive clicks, but to ensure the brand is recognized as the authoritative source cited by the underlying language models.

This requires rigorous implementation of technical signals, primarily Schema markup (JSON-LD), to explicitly define entities, facts, and relational data for machine ingestion 28. Programmatic templates must be structured logically to facilitate natural language processing. Furthermore, demonstrating E-E-A-T (Experience, Expertise, Authoritativeness, and Trustworthiness) is critical; AI engines are programmed to retrieve data from verified, highly credible sources to minimize hallucinations 2958.
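A programmatic template can emit JSON-LD from the same record that populates the visible page. The sketch below builds a schema.org `SoftwareApplication` entity with an `AggregateRating`; the record fields, the sample values, and the `software_jsonld` helper are assumptions, and the appropriate schema.org type depends on what each page actually describes.

```python
import json


def software_jsonld(record: dict) -> str:
    """Return a JSON-LD script tag describing one software listing."""
    data = {
        "@context": "https://schema.org",
        "@type": "SoftwareApplication",
        "name": record["name"],
        "applicationCategory": record["category"],
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": record["rating"],
            "reviewCount": record["review_count"],
        },
    }
    # Escape "</" so a value cannot close the script element early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Invented sample record for illustration only.
tag = software_jsonld({
    "name": "ExampleCRM",
    "category": "BusinessApplication",
    "rating": 4.5,
    "review_count": 120,
})
```

Generating the markup from the underlying record, rather than maintaining it by hand, keeps the structured data consistent with the visible content as the dataset changes.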

The transition to AEO is evidenced by the evolution of G2. While the platform lost massive volumes of traditional SEO traffic following the Helpful Content updates, it strategically optimized for AI retrieval. By late 2025, G2 emerged as one of the top 20 most cited domains across major LLMs 27. Because generative platforms prioritized G2's verified, crowdsourced review data as a reliable source of truth, the company experienced a 20% growth in overall web traffic through a halo effect of direct citations and AI-driven brand visibility, despite the deterioration of its traditional search rankings 27.

Metrics for Sustainable Execution

The era of indiscriminately deploying millions of programmatic pages generated from scraped data has definitively ended. However, programmatic SEO remains a potent methodology when executed with rigorous technical standards, proprietary data, and a relentless focus on user utility. To mitigate the risk of algorithmic penalties and achieve sustainable scale, organizations must adhere to strict operational imperatives.

Continuous monitoring of indexation ratios is the primary diagnostic for programmatic health. An indexation rate above 70% indicates that search algorithms recognize the programmatic templates as valuable and distinct 53. Conversely, if indexation falls below 40%, it is a clear algorithmic signal of failure; the search engine perceives the newly generated pages as redundant or low-value and actively refuses to allocate crawl budget 53. Attempting to force additional pages into the index under these conditions inevitably exacerbates crawl strain and triggers broader domain demotions.
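The indexation diagnostic is a single ratio compared against the two thresholds above. In this sketch the "watch" label for the 40% to 70% band is an assumption, since the text characterizes only the two extremes, and `indexation_status` is a hypothetical helper name.

```python
def indexation_status(indexed: int, submitted: int) -> tuple:
    """Return (rate, label) for a batch of submitted programmatic URLs."""
    if submitted <= 0:
        raise ValueError("submitted must be a positive number of URLs")
    rate = indexed / submitted
    if rate > 0.70:
        label = "healthy"
    elif rate < 0.40:
        label = "failing"
    else:
        # Middle band: not described in the text, treated here as a warning.
        label = "watch"
    return rate, label


# The mathematics-site case study cited earlier: 350 of 35,000 pages indexed.
rate, label = indexation_status(350, 35_000)
```

Applied to that case study, the ratio is 1%, far below the 40% failure threshold.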

Ultimately, automation and generative AI must be viewed as accelerators for formatting, data structuring, and deployment, rather than replacements for editorial strategy. Launching programmatic pages in controlled, phased deployments allows teams to monitor user engagement metrics and Navboost signals prior to committing to massive scale 32. Every programmatic page must pass a fundamental viability test: it must offer a unique data point, an interactive tool, or a novel synthesis that cannot be easily replicated by a language model. In a digital ecosystem governed by sophisticated machine learning classifiers and real-time user interaction signals, the only sustainable programmatic SEO strategy is one that engineers genuine utility at scale.