Ontological status of the quantum wavefunction in the PBR theorem

The interpretation of the quantum wavefunction remains one of the most persistent and profound inquiries in modern physics. Since the formalization of quantum mechanics in the 1920s, a central debate has centered on whether the wavefunction - the mathematical entity that evolves deterministically according to the Schrödinger equation - corresponds directly to an objective element of physical reality, or whether it merely represents an observer's subjective knowledge or incomplete information about an underlying, deeper physical state.

For decades, this debate was largely relegated to the realm of philosophy and metaphysics. Physicists operated predominantly under pragmatic frameworks, using the wavefunction to calculate the probabilities of experimental outcomes via the Born rule without committing to a specific ontological stance. Early critiques, such as the Einstein-Podolsky-Rosen (EPR) paradox, challenged the completeness of quantum mechanics, hoping to restore classical realism by suggesting the wavefunction was merely statistical 12. While Bell's Theorem later proved that any hidden-variable theory reproducing the predictions of quantum mechanics must be non-local, it did not settle whether the quantum state itself was a real physical wave or an epistemic tool 34.

The development of the Pusey-Barrett-Rudolph (PBR) theorem in 2012 shifted this specific inquiry from philosophical speculation to rigorous mathematical physics 54. By leveraging the framework of ontological models, the PBR theorem provided a mathematical proof suggesting that, under specific physical assumptions, the quantum state must be a real, physical object rather than a mere probability calculator 14. This report provides an exhaustive analysis of the PBR theorem, the mathematical frameworks it relies upon, the controversies surrounding its foundational assumptions, the experimental efforts to test its limits, and its broader implications for the prevailing interpretations of quantum mechanics.

The Ontological Models Framework

To analyze the implications of the PBR theorem rigorously, it is necessary to establish the mathematical vocabulary used to distinguish between physical reality and subjective knowledge in quantum mechanics. This vocabulary was formalized by Nicholas Harrigan and Robert Spekkens in 2010 through the ontological models framework 56.

The framework posits that every physical system possesses a true, underlying state of reality, regardless of whether that state is fully accessible or measurable by an observer. This definitive state of reality is termed the ontic state, denoted by the variable $\lambda$. The space of all possible ontic states for a given system constitutes the ontic state space, denoted as $\Lambda$ 7.

When an experimenter prepares a quantum system in a specific quantum state $|\psi\rangle$, the preparation procedure does not necessarily pinpoint a single, precise ontic state $\lambda$. Instead, the preparation generates a probability distribution, or statistical ensemble, over the ontic state space. This probability density function is denoted as $\mu_\psi(\lambda)$ 17. For any valid preparation procedure, the integral of this probability distribution over the entire ontic space must equal one.

Measurements in this framework are modeled by response functions. If an experimenter performs a measurement $M$ that can yield an outcome $k$, the ontological model defines a response function $\xi_M(k|\lambda)$. This function represents the probability that the measurement apparatus will record outcome $k$ given that the system is actually in the true ontic state $\lambda$ 7. To recover the empirical predictions of standard quantum mechanics, the ontological model must obey the Born rule on average. Thus, the quantum mechanical probability $P(k|\psi)$ is recovered by integrating the response function over the ontic probability distribution.
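
The normalization and Born-rule conditions described above can be written compactly as follows. This is a sketch in the notation already introduced; the sum over $k$ runs over the outcomes of $M$, and the vectors $|\phi_k\rangle$ are a label assumed here for the basis of a projective measurement, not notation taken from the cited sources.

$$
\int_\Lambda \mu_\psi(\lambda)\, d\lambda = 1, \qquad \sum_k \xi_M(k|\lambda) = 1 \ \text{ for every } \lambda \in \Lambda,
$$

$$
P(k|\psi) = \int_\Lambda \xi_M(k|\lambda)\, \mu_\psi(\lambda)\, d\lambda = |\langle \phi_k | \psi \rangle|^2 .
$$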

Categorization of Quantum States

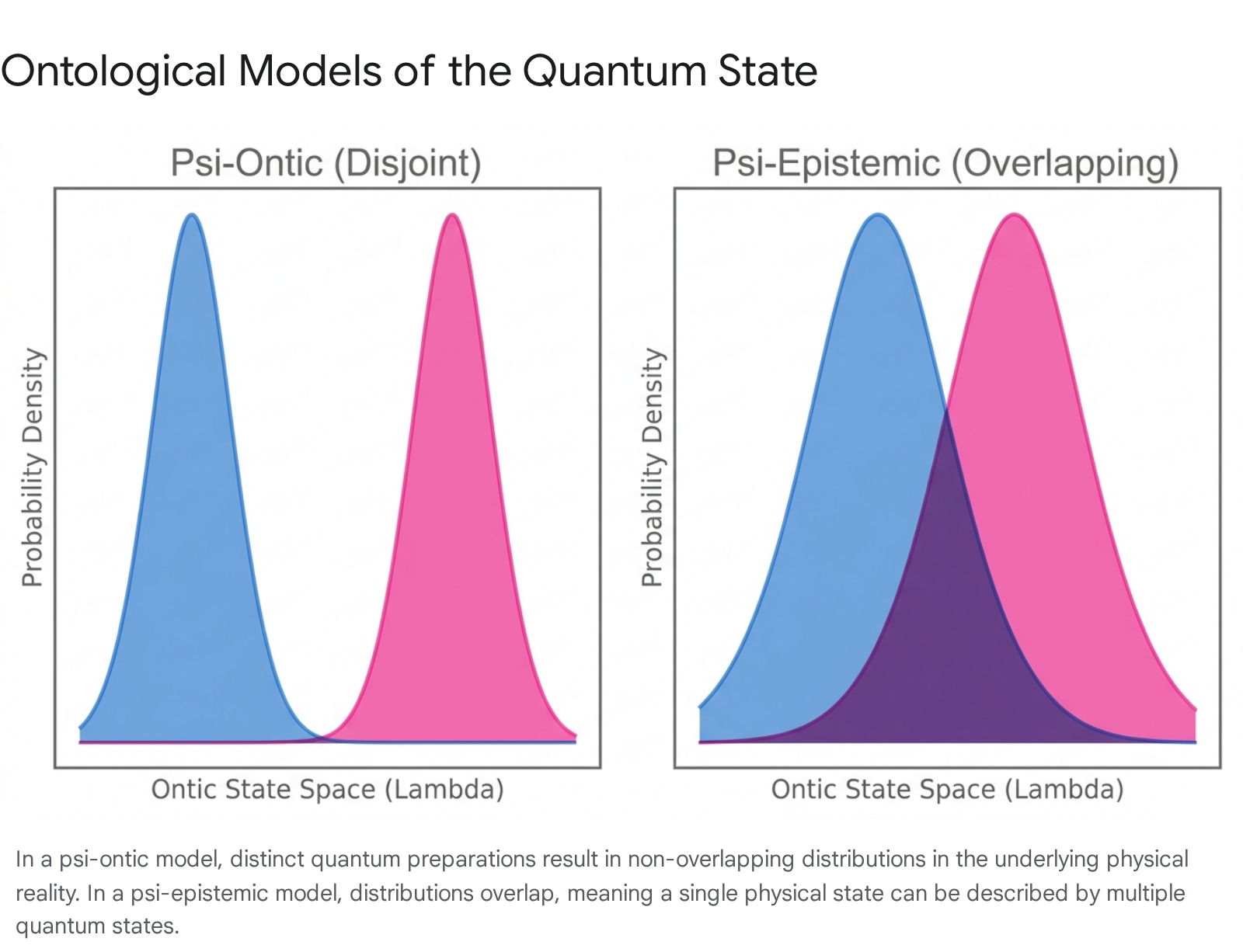

Using the ontological models framework, Harrigan and Spekkens categorized interpretations of the wavefunction into two primary classes based on how the probability distributions map to distinct quantum states 589. These are defined as $\psi$-ontic and $\psi$-epistemic models.

In a $\psi$-ontic model, the quantum state $|\psi\rangle$ is an objective property of the physical system. Mathematically, this dictates that for any two distinct pure quantum states $|\psi_1\rangle$ and $|\psi_2\rangle$, their corresponding ontic probability distributions $\mu_{\psi_1}(\lambda)$ and $\mu_{\psi_2}(\lambda)$ are strictly disjoint. They possess no overlapping regions in the ontic state space. Consequently, if one knows the true ontic state $\lambda$ of the system, there is only one possible quantum state $|\psi\rangle$ that could have been prepared 58. The wavefunction is thus woven directly into the fabric of reality, determining the ontic state completely or acting as a physical variable alongside other hidden variables.

Conversely, in a $\psi$-epistemic model, the quantum state $|\psi\rangle$ represents an observer's incomplete information - or epistemic state - concerning the true ontic state $\lambda$ 910. Mathematically, this implies that for at least some pairs of distinct pure quantum states, their ontic probability distributions overlap 10. Within this overlap region, a single physical ontic state $\lambda$ is compatible with multiple distinct quantum state preparations. Therefore, knowing the exact physical state $\lambda$ does not definitively reveal which quantum state $|\psi\rangle$ the experimenter prepared 10. The wavefunction, under this paradigm, functions analogously to a probability distribution in classical statistical mechanics; it is a mathematical tool for calculating odds rather than a substantive physical wave 811.
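
In symbols, and assuming for simplicity that the distributions are ordinary probability densities on $\Lambda$, the two classes can be sketched as:

$$
\psi\text{-ontic:} \quad \mu_{\psi_1}(\lambda)\, \mu_{\psi_2}(\lambda) = 0 \ \text{ for almost every } \lambda \text{ and every pair } |\psi_1\rangle \neq |\psi_2\rangle,
$$

$$
\psi\text{-epistemic:} \quad \int_\Lambda \min\{\mu_{\psi_1}(\lambda),\, \mu_{\psi_2}(\lambda)\}\, d\lambda > 0 \ \text{ for at least one pair } |\psi_1\rangle \neq |\psi_2\rangle .
$$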

The distinction between these two models is highly consequential for resolving the measurement problem. Prior to 2012, $\psi$-epistemic models were attractive to many physicists because they offered a conceptually simple resolution to the apparent paradoxes of quantum measurement 12. If the wavefunction is merely a state of knowledge, then "wavefunction collapse" upon measurement is not a bizarre, instantaneous physical event that violates special relativity; it is simply a Bayesian update of probabilities upon acquiring new experimental data 1215. The physical system itself undergoes no discontinuous jump; only the observer's information changes.

To summarize the differences in mathematical and physical interpretation, the following comparison outlines the core distinctions between the two ontological frameworks.

| Feature | $\psi$-ontic model | $\psi$-epistemic model |
|---|---|---|
| Probability Distributions | Strictly disjoint (no overlap). | Overlapping for some non-orthogonal states. |
| Status of the Wavefunction | An objective physical entity or property. | A representation of incomplete observer knowledge. |
| State Determination | The ontic state uniquely determines the quantum state. | A single ontic state may be compatible with multiple quantum states. |
| Wavefunction Collapse | Represents a physical change or branching in reality. | Represents a subjective Bayesian update of information. |

The Pusey-Barrett-Rudolph Theorem

The Pusey-Barrett-Rudolph theorem, authored by Matthew Pusey, Jonathan Barrett, and Terry Rudolph, severely constrained the viability of the epistemic viewpoint. The theorem states that every ontological model that reproduces the Born rule predictions of quantum mechanics, and in which independently prepared systems have independent ontic states, must be $\psi$-ontic 14. Under these assumptions, distinct pure quantum states cannot share overlapping ontic states, which undermines the notion that the wavefunction merely reflects observer ignorance.

Antidistinguishability and Conclusive Exclusion

The architecture of the PBR proof relies on an operational concept known as antidistinguishability, occasionally referred to in foundational literature as post-Peierls incompatibility 34. Standard distinguishing measurements attempt to determine with certainty which specific state was prepared by an experimenter. In contrast, an antidistinguishing measurement is designed to determine which state was definitely not prepared 3. The measurement yields outcomes that conclusively exclude specific initial preparations.

Pusey, Barrett, and Rudolph construct a rigorous thought experiment utilizing two identical, independently prepared quantum systems, such as two qubits. Each qubit can be prepared in one of two non-orthogonal pure states. Let these states be defined as the standard computational basis state $|0\rangle$ and the diagonal superposition state $|+\rangle$, where $|+\rangle = \frac{1}{\sqrt{2}}(|0\rangle + |1\rangle)$ 310.

Because these two states are non-orthogonal, the principles of quantum mechanics dictate that they cannot be perfectly distinguished by any single measurement on a single qubit. If a theorist posits that the quantum state is $\psi$-epistemic, this fundamental indistinguishability is explained by asserting that their respective ontic probability distributions, $\mu_0(\lambda)$ and $\mu_+(\lambda)$, overlap. Consequently, there exists a shared physical ontic state $\lambda_{shared}$ that could result from either the $|0\rangle$ or the $|+\rangle$ preparation 310.

The Logical Proof of Contradiction

The experiment proceeds by preparing the two qubits entirely independently, which generates four possible joint quantum states for the combined system. The first possibility is that both qubits are prepared in the $|0\rangle$ state. The second is that the first is $|0\rangle$ and the second is $|+\rangle$. The third reverses this, with $|+\rangle$ followed by $|0\rangle$. The final possibility is that both are prepared in the $|+\rangle$ state 310.

The researchers then mandate that these two qubits be subjected to a joint, entangled measurement. The measurement projects the system onto a specific mathematical basis of four orthogonal states, which are variants of the Bell basis. This measurement is engineered so that each of the four possible measurement outcomes is strictly orthogonal to exactly one of the four possible initial joint preparations 31013. According to the Born rule, the probability of a specific outcome is identically zero if the measurement state is orthogonal to the prepared state. Therefore, if the experiment yields the first outcome, the system could not possibly have been prepared in the first joint state. If it yields the second outcome, it could not have been the second joint state, and this exclusion applies symmetrically across all four possibilities 3.
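
The exclusion property of this measurement can be checked numerically. The sketch below assumes the four entangled basis vectors usually quoted from the original PBR construction, written with the additional single-qubit states $|1\rangle$ and $|-\rangle = \frac{1}{\sqrt{2}}(|0\rangle - |1\rangle)$; the helper `pair` and the variable names are illustrative choices, not part of any cited source.

```python
import numpy as np

# Single-qubit states used in the argument.
zero = np.array([1.0, 0.0])
one = np.array([0.0, 1.0])
plus = (zero + one) / np.sqrt(2)
minus = (zero - one) / np.sqrt(2)


def pair(a, b):
    """Joint state of two independently prepared qubits (tensor product)."""
    return np.kron(a, b)


# The four joint preparations, in the order used in the text.
preparations = [
    pair(zero, zero),
    pair(zero, plus),
    pair(plus, zero),
    pair(plus, plus),
]

# Entangled measurement basis (assumed: the basis given in the PBR construction).
basis = [
    (pair(zero, one) + pair(one, zero)) / np.sqrt(2),
    (pair(zero, minus) + pair(one, plus)) / np.sqrt(2),
    (pair(plus, one) + pair(minus, zero)) / np.sqrt(2),
    (pair(plus, minus) + pair(minus, plus)) / np.sqrt(2),
]

# Gram matrix of the basis: the identity if the basis is orthonormal.
gram = np.array([[np.dot(a, b) for b in basis] for a in basis])

# Born-rule table: rows index preparations, columns index outcomes.
born = np.array([[np.dot(b, p) ** 2 for b in basis] for p in preparations])

print(np.round(born, 3))
```

Each row of `born` sums to one, and its diagonal is zero: outcome $k$ never occurs when the $k$-th joint state was prepared, which is exactly the conclusive exclusion the argument relies on.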

The PBR theorem exposes a fatal contradiction for $\psi$-epistemic models by evaluating this measurement through the lens of the overlapping ontic space. The logic proceeds as follows. First, assume the model is $\psi$-epistemic. This means the distributions $\mu_0$ and $\mu_+$ overlap, so there is some ontic state $\lambda_{shared}$ that could arise from either the $|0\rangle$ or the $|+\rangle$ preparation 310. Second, because the two qubits are prepared independently, there is a non-zero probability that both qubits simultaneously occupy this shared ontic state. In those runs, the joint ontic state of the system is the pair $(\lambda_{shared}, \lambda_{shared})$, which is compatible with all four joint preparations 31013.

The measuring device responds only to the ontic state of the system, here $(\lambda_{shared}, \lambda_{shared})$, and must produce one of the four measurement outcomes. However, the device cannot produce the first outcome, because $(\lambda_{shared}, \lambda_{shared})$ is a valid ontic state for the first preparation, and quantum mechanics assigns the first preparation zero probability of yielding the first outcome 3. By identical logic, the device cannot produce the second, third, or fourth outcomes, as the shared ontic state is compatible with all four initial preparations, each of which strictly forbids one corresponding outcome 31013.

The ontic state $(\lambda_{shared}, \lambda_{shared})$ therefore assigns a probability of zero to all possible measurement outcomes. This violates the fundamental axiom of probability theory, which requires that the sum of all outcome probabilities must equal exactly one 3. Because the $\psi$-epistemic assumption inevitably leads to this mathematical impossibility, the assumption itself must be false. The probability distributions cannot overlap. The quantum state must uniquely map to distinct elements of reality, establishing that the quantum wavefunction must be $\psi$-ontic 310.
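
The contradiction can be condensed into two lines. In this sketch, $\xi(k|\lambda_1, \lambda_2)$ is the response function of the joint measurement, $a, b \in \{0, +\}$ label the two single-qubit preparations, $k_{ab}$ is the outcome forbidden for that pair, and the product form of the joint distribution is the independence assumption used in the proof. These labels are introduced here for illustration only.

$$
0 = P(k_{ab} \,|\, \psi_a \otimes \psi_b) = \int\!\!\int \xi(k_{ab}|\lambda_1, \lambda_2)\, \mu_a(\lambda_1)\, \mu_b(\lambda_2)\, d\lambda_1\, d\lambda_2
\;\Longrightarrow\;
\xi(k_{ab}|\lambda_1, \lambda_2) = 0 \ \text{ wherever } \mu_a(\lambda_1)\, \mu_b(\lambda_2) > 0 .
$$

At $(\lambda_{shared}, \lambda_{shared})$ all four products $\mu_a \mu_b$ are positive, so every outcome is forbidden at once:

$$
\sum_{k=1}^{4} \xi(k|\lambda_{shared}, \lambda_{shared}) = 0 \neq 1 .
$$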

The Preparation Independence Postulate

While the PBR theorem is universally recognized as a rigorous mathematical proof, its philosophical and physical implications depend entirely on the validity of its foundational premises. The most heavily scrutinized premise in the foundational literature is the Preparation Independence Postulate 101415.

The Preparation Independence Postulate asserts that if two quantum systems are prepared independently in separate laboratories, their underlying ontic states are statistically uncorrelated 510. Mathematically, this dictates that the joint probability distribution factors perfectly into the product of the individual probability distributions. The physical assumption is that choices made in preparing one system do not influence the physical reality of an entirely separate, unentangled system 151620.
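
Written out, and assuming the joint ontic state space is the Cartesian product $\Lambda_1 \times \Lambda_2$ of the individual spaces, the factorization condition reads:

$$
\mu_{\psi_1 \otimes \psi_2}(\lambda_1, \lambda_2) = \mu_{\psi_1}(\lambda_1)\, \mu_{\psi_2}(\lambda_2) .
$$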

Critiques of Preparation Independence

Critics of the PBR theorem, including physicists Robert Spekkens and Matthew Leifer, argue that the Preparation Independence Postulate is not a mandatory feature of reality. Instead, it functions as a hidden assumption of non-contextuality, or an assumption against holistic properties 142117. Spekkens notes that assuming systems prepared in unentangled quantum states possess no holistic underlying properties is an assumption that appeals primarily to theorists who already lean toward a $\psi$-ontic worldview 14. If one drops the assumption of separability at the ontic level, the contradiction exposed by the PBR theorem fails to materialize 14.

The most prominent theoretical challenge to the Preparation Independence Postulate was published in 2012 by Lewis, Jennings, Barrett, and Rudolph 1218. The researchers constructed an explicit mathematical model demonstrating that distinct quantum states can remain compatible with a single state of reality if the independence postulate is discarded 1218. In their framework, $\psi$-epistemicity is maintained by introducing deep, pre-existing correlations in the ontic state space between seemingly isolated systems 1218.

However, the Lewis et al. model exhibits severe limitations when applied to more complex quantum scenarios. While it successfully circumvents the PBR theorem for isolated single systems, it struggles to formulate coherent response functions for composite systems and joint measurements involving entanglement, such as the Bell-basis measurements essential to the original PBR proof 1824. Furthermore, abandoning preparation independence forces physicists into an uncomfortable philosophical position. To maintain an epistemic view of the wavefunction without preparation independence, one must accept a universe characterized by pervasive, super-deterministic correlations or extreme holistic non-locality, whereby the physical state of a particle on Earth is inherently correlated with the preparation of a particle in another galaxy 151920. For many physicists, accepting that the wavefunction is a real physical object is deemed far more scientifically palatable than accepting extreme super-determinism.

Experimental Tests of the Pusey-Barrett-Rudolph Theorem

While the PBR theorem is a definitive mathematical proof, it relies on the premise that the empirical predictions of quantum mechanics are exactly correct 13. In reality, laboratory experiments suffer from systemic noise, imperfect state preparation, and finite detector efficiency. If an experimenter attempts to perform the PBR antidistinguishability test, a sophisticated epistemic model could theoretically exploit these experimental imperfections to hide the theoretical overlap region within the noise floor 71317.
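
To see why noise matters, the sketch below mixes an ideal joint preparation with white (fully depolarizing) noise and computes how often the nominally forbidden outcome then appears. The white-noise model and the function name `forbidden_probability` are assumptions made for illustration; they are not the noise model of any experiment discussed in this report.

```python
import numpy as np


def forbidden_probability(noise):
    """Probability of the forbidden outcome for |0>|0> under white noise.

    noise = 0 is the ideal case; noise = 1 is the maximally mixed state.
    """
    zero = np.array([1.0, 0.0])
    one = np.array([0.0, 1.0])

    # Ideal joint preparation |0>|0>.
    prepared = np.kron(zero, zero)

    # Measurement vector orthogonal to |0>|0> (first PBR outcome, assumed form).
    forbidden = (np.kron(zero, one) + np.kron(one, zero)) / np.sqrt(2)

    # Noisy density matrix: ideal state mixed with the maximally mixed state.
    rho = (1.0 - noise) * np.outer(prepared, prepared) + noise * np.eye(4) / 4.0

    # Born rule for a density matrix: <phi| rho |phi>.
    return float(forbidden @ rho @ forbidden)


for noise in (0.0, 0.05, 0.2):
    print(noise, forbidden_probability(noise))
```

In this toy model the forbidden-outcome probability is one quarter of the noise level, so any non-zero noise leaves room for a small residual overlap that an experiment can bound but not eliminate.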

Experimental physics has therefore focused on testing the PBR theorem empirically to set strict numerical bounds on the limits of $\psi$-epistemicity. These experiments do not aim to prove the theorem mathematically - which is already established - but rather to demonstrate that nature enforces the theorem's bounds even in the presence of realistic experimental noise 1720. The objective is to quantify the maximum possible overlap between ontic distributions, utilizing total variation distance to establish how disjoint the probability distributions truly are in practice 19.
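
As a concrete illustration of the quantity being bounded, the sketch below computes the total variation distance and the complementary overlap for two distributions on a small discrete ontic space. The discrete space and the function names are assumptions made for illustration; real experiments bound these quantities indirectly from measurement statistics, since the ontic distributions themselves are not observable.

```python
import numpy as np


def total_variation_distance(p, q):
    """Half the L1 distance between two discrete probability distributions."""
    p = np.asarray(p, dtype=float)
    q = np.asarray(q, dtype=float)
    return 0.5 * float(np.abs(p - q).sum())


def classical_overlap(p, q):
    """Probability mass shared by the two distributions (1 minus the TVD)."""
    p = np.asarray(p, dtype=float)
    q = np.asarray(q, dtype=float)
    return float(np.minimum(p, q).sum())


# Toy psi-epistemic case: the middle ontic state is shared.
mu_a = [0.5, 0.5, 0.0]
mu_b = [0.0, 0.5, 0.5]
print(total_variation_distance(mu_a, mu_b), classical_overlap(mu_a, mu_b))

# Toy psi-ontic case: disjoint supports.
print(total_variation_distance([1.0, 0.0], [0.0, 1.0]))
```

A distance of one corresponds to fully disjoint distributions, the $\psi$-ontic case; anything smaller leaves a non-zero overlap.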

Optical and Trapped-Ion Implementations

In 2015, a research team at the University of Queensland executed a rigorous test using photons encoded with multidimensional quantum states, specifically qutrits and ququarts, utilizing both polarization and path degrees of freedom 2122. Rather than attempting to rule out all epistemic models definitively, they used theoretical bounds designed to restrict the permissible parameter space of such models. Their results ruled out maximally $\psi$-epistemic models with a confidence exceeding 250 standard deviations. Furthermore, they restricted the overlap ratio available to any remaining $\psi$-epistemic explanation to a value strictly below 0.69 21.

Shortly thereafter, a 2016 experiment by Nigg et al. tested the premise using highly controlled trapped ions. They evaluated the distinguishability of quantum states through single-shot measurements. Their data yielded error probabilities exceptionally close to zero, ruling out maximally $\psi$-epistemic models by over 4.5 sigma 23. The minimal deviations observed in the data were entirely consistent with expected statistical projection noise, rather than indicative of an underlying epistemic overlap in the ontic state space 23. These results paralleled the rigor seen in other major quantum foundation tests of the era, such as the loophole-free Bell tests conducted by the Vienna group, which closed the locality and detection loopholes using high-efficiency superconducting detectors 3024.

Tests on Superconducting Quantum Processors

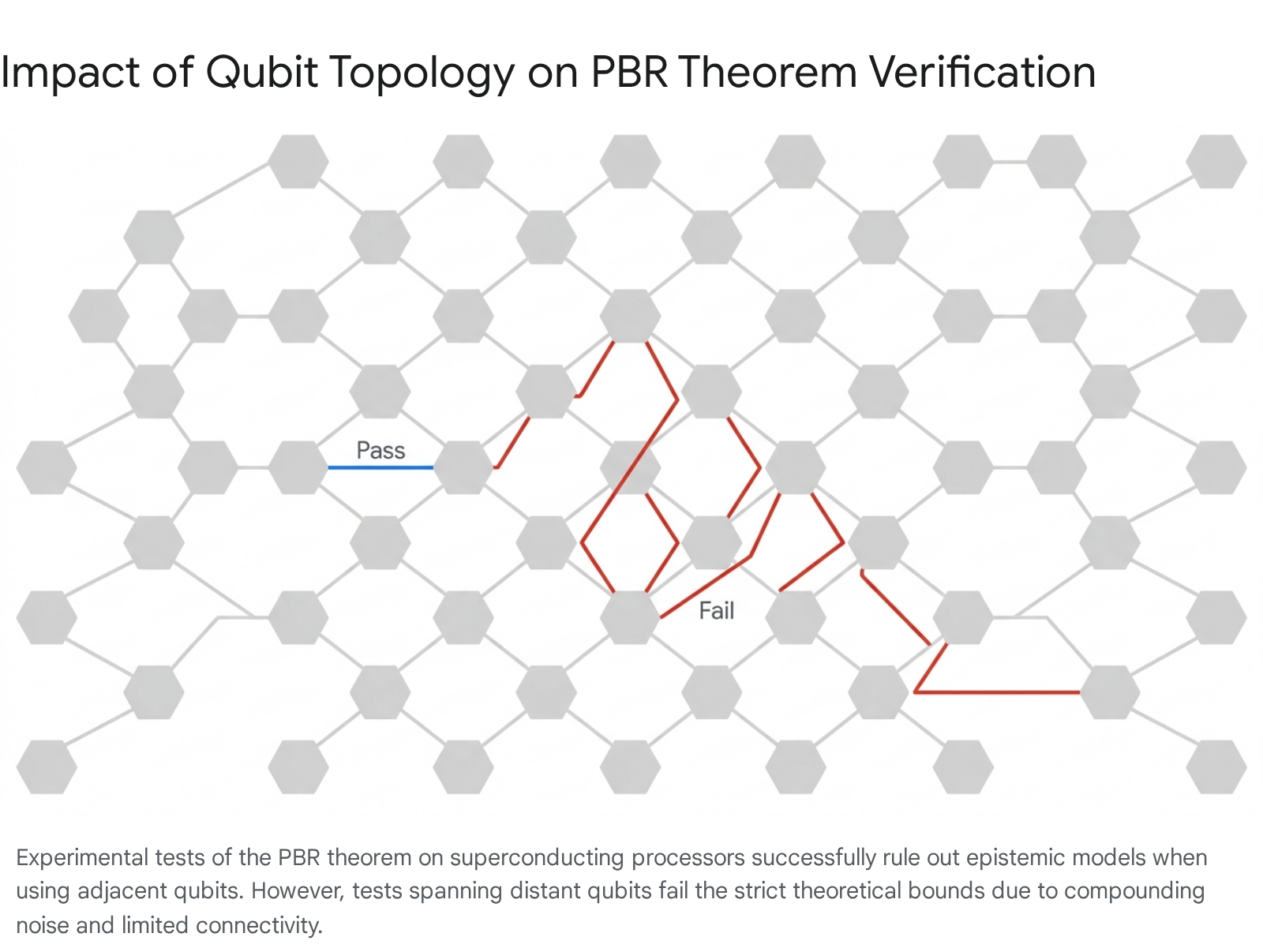

The frontier of PBR experimentation has recently shifted from bespoke optical tables to scalable quantum computing architectures. In 2025, Yang, Yuan, and Barnes published an experimental demonstration of the PBR test on IBM's 156-qubit Heron2 Marrakesh superconducting quantum processor 162432.

The researchers compiled precise unitary circuits to execute the required entangling measurements and estimated the forbidden-outcome probabilities for various input configurations. They found that for adjacent qubit pairs and tightly clustered five-qubit configurations, the error probabilities fell strictly below the classical overlap bound. This successful execution passed the PBR test and exposed a definitive statistical gap that purely epistemic models cannot bridge 1632.

Crucially, however, the Yang et al. study revealed the severe impact of hardware noise and cross-talk on foundational tests. When testing spatially separated qubits, such as pairing qubit-0 with qubit-155 across the entire span of the device, the test failed to rule out epistemic models. The accumulated noise and reduced connectivity of the multi-hop entanglement resulted in outcome statistics that breached the theoretical tolerance bounds 1632. This dynamic demonstrates that while quantum theory holds locally, confirming foundational theorems across large-scale physical distances on Noisy Intermediate-Scale Quantum platforms remains a profound engineering challenge requiring continuous calibration against cross-talk 163225.

Implications for Interpretations of Quantum Mechanics

The PBR theorem acts as an ontological filter, forcing various interpretations of quantum mechanics to clarify their physical commitments or face mathematical contradiction. If one accepts the Preparation Independence Postulate, the wavefunction must be viewed as an objective feature of physical reality. The impact of this theorem reverberates differently across the major interpretative schools 81526.

To systematically understand these impacts, interpretations can be mapped onto the Harrigan-Spekkens framework as either Type I, where probabilities are determined by intrinsic properties of the observed system, or Type II, where probabilities are determined by observer experience, context, or subjective belief 827. The following table summarizes how the prevailing interpretations of quantum mechanics absorb or reject the conclusions of the PBR theorem.

| Quantum Interpretation | Harrigan-Spekkens Classification | Response to the PBR Theorem |
|---|---|---|
| Many-Worlds (Everettian) | Type I: $\psi$-ontic | Highly compatible. The universal wavefunction is the only fundamental reality. The PBR theorem supports the premise that $\psi$ is an objective, physical entity that branches continuously over time 2838. |
| De Broglie-Bohm (Pilot Wave) | Type I: $\psi$-ontic | Compatible. The theory posits both a real wavefunction and real particle positions as hidden variables. The wavefunction physically guides the particles, fulfilling the $\psi$-ontic requirement inherently 2628. |
| Spontaneous Collapse (GRW) | Type I: $\psi$-ontic | Compatible. The wavefunction is treated as a real physical field that undergoes spontaneous, objective physical collapse mechanisms, independent of observers 8. |
| Consistent Histories | Type I/II (Debated) | Debated. Some theorists argue it operates as a $\psi$-epistemic framework over sets of mutually decoherent histories, while others argue the framework avoids PBR entirely by rejecting the assumption that a unique, single underlying reality ($\lambda$) exists prior to history selection 263839. |
| Quantum Bayesianism (QBism) | Type II: Subjective Belief | Evades PBR entirely. QBism rejects the premise of the ontological models framework. It denies that an objective underlying state $\lambda$ exists independent of an agent. Because PBR assumes $\lambda$ exists, QBism is mathematically immune, viewing $\psi$ strictly as a user's manual for agent expectations 2540. |
| Relational Quantum Mechanics | Type II: Relational | Challenged but adaptable. RQM claims wavefunctions are relative to observers. However, proponents argue RQM is a realist theory where facts are relational. RQM evades PBR by denying that quantum states represent knowledge of an absolute underlying ontic state $\lambda$ 62629. |

The PBR theorem systematically closes the intellectual exits for physicists who wish to maintain a classical, localized concept of reality 5. Prior to 2012, it was possible to hold a classical, local view of the universe by claiming that quantum mechanics was simply a statistical theory of incomplete knowledge 4. By ruling this out, the PBR theorem forces realists to accept that the wavefunction is a physical object. Because the wavefunction exhibits entanglement and non-local correlations, accepting its physical reality immediately implies accepting non-locality - a conclusion that aligns directly with Bell's Theorem, but arrived at through an arguably simpler and more direct mathematical route 430.

The Configuration Space Problem

While the PBR theorem firmly establishes that the wavefunction is a real physical object within realist frameworks, it raises a profound ontological complication: where does this physical object actually exist?

In classical mechanics, fields and waves, such as electromagnetism or water waves, exist and propagate in standard three-dimensional physical space. However, the quantum wavefunction for an $N$-particle system is not mathematically defined in 3D space. It is defined in a $3N$-dimensional abstract mathematical construct known as configuration space 4331.

If the PBR theorem dictates that the wavefunction is a real physical entity, it lends significant support to a philosophical and physical position known as Wavefunction Realism 4546. Wavefunction realists argue that if the wavefunction is the fundamental physical object of the universe, then the fundamental physical space of the universe is not the three-dimensional space humans perceive, but the unimaginably high-dimensional configuration space 4546. Our entire experience of three-dimensional space is, under this view, a macroscopic illusion or an emergent phenomenon that supervenes on a highly localized portion of the universal wavefunction 4532.

Opponents of Wavefunction Realism argue that treating a mathematical function defined in abstract configuration space as a literal physical wave is a fundamental category mistake. They suggest it is a holdover from early twentieth-century attempts by physicists like Schrödinger to make quantum mechanics resemble classical wave mechanics 4648. These critics suggest that the deep tension exposed by the PBR theorem indicates that physics requires entirely new ontological categories that neither reduce to classical 3D waves nor devolve into subjective observer ignorance 4648.

Theoretical Context: Maudlin's Trilemma and Modern No-Go Theorems

The Pusey-Barrett-Rudolph theorem does not exist in isolation; it operates as part of a modern renaissance of no-go theorems that increasingly restrict the permissible boundaries of physical reality. The philosophical crisis deepened by the PBR theorem is eloquently captured by Maudlin's Trilemma, which outlines the fundamental incompatibilities at the heart of the quantum measurement problem 5.

Maudlin's Trilemma demonstrates that one cannot simultaneously hold three seemingly obvious propositions about the universe:

1. Completeness: The wavefunction specifies all physical properties of a system directly or indirectly, with no hidden variables.
2. Linear Dynamics: The wavefunction always evolves in strict accordance with the continuous, deterministic Schrödinger equation.
3. Definite Outcomes: Measurements of macroscopic properties consistently yield single, determinate outcomes 5.

To resolve the measurement problem, one of these propositions must be systematically abandoned. The Many-Worlds interpretation abandons definite outcomes, positing that all outcomes occur in branching realities. Pilot-Wave theory abandons completeness, adding real particle positions to the ontology. Objective Collapse theories abandon linear dynamics, introducing random physical collapses into the equations 5.

The PBR theorem specifically targets historical attempts to bypass this trilemma by claiming the wavefunction is merely an epistemic tool. By forcing the wavefunction into the ontic category, the PBR theorem demands that physicists choose one of Maudlin's difficult ontological sacrifices, eliminating the ability to simply claim that quantum mechanics is a theory of subjective ignorance 54.

Furthermore, recent extensions of quantum thought experiments, most notably the Frauchiger-Renner theorem of 2018 and the Local Friendliness theorem, have applied similar rigorous no-go logic to the concept of absolute events 549. These theorems mathematically demonstrate that under strict quantum mechanics, a measurement outcome recorded as a definitive fact for one observer is not necessarily a definitive fact for an isolated super-observer 49. When combined with the reality of the wavefunction established by the PBR theorem, modern quantum foundations dictate a reality that is definitively non-local, physically determined by high-dimensional mathematical states, and potentially devoid of universally absolute observer facts.

Conclusion

The Pusey-Barrett-Rudolph theorem represents a watershed moment in the foundations of theoretical physics. By demonstrating that, under its stated assumptions, any model treating the quantum state as mere incomplete knowledge contradicts the empirical predictions of quantum theory, the theorem heavily restricts the viability of $\psi$-epistemic interpretations. Within those assumptions, the mathematics proves that distinct pure quantum states must correspond to disjoint sets of ontic states.

While the formal proof relies on the Preparation Independence Postulate - which can theoretically be abandoned at the heavy cost of accepting profound, systemic super-deterministic correlations across isolated systems - experimental data from optics, trapped ions, and superconducting processors increasingly support the theorem's bounds. Within current experimental precision, the accumulated data are consistent with the quantum wavefunction being an objective, physical element of reality, and they rule out maximally $\psi$-epistemic alternatives.

Consequently, the PBR theorem forces a reckoning within the physics community. It eliminates the comfort of treating quantum mechanics as a mere statistical tool for coordinating human ignorance, mandating that physicists confront the strange, non-local, and high-dimensional physical reality that the wavefunction demands.