Neural Representation and Universality of Emotions

Foundations of Affective Neuroscience

Affective neuroscience is an interdisciplinary domain dedicated to investigating the neural systems that govern emotion, mood, and motivational states 1. Operating at the intersection of psychology, biology, and neurology, the field seeks to translate abstract psychological concepts of feeling into quantifiable biological mechanisms 12. The primary objective of affective neuroscience is to identify the specific brain circuits, cellular processes, and biochemical interactions that generate, regulate, and respond to emotional stimuli. By establishing a rigorous neurobiological foundation, researchers aim to understand how affective experiences direct behavior and what neuroanatomical alterations occur when emotional processes become maladaptive, leading to psychiatric conditions 1.

Historically, the study of the mind was bifurcated into two separate realms: cognitive neuroscience, which focused on "cold" processes such as memory, attention, and executive function, and the psychological study of "hot" processes involving emotion 13. The term "affective neuroscience" was formally coined in the early 1990s by neuroscientist Jaak Panksepp to address the cognitive-centric biases of the time, advocating for a biological examination of emotional states that traditional cognitive science had largely ignored 3. Modern research has dismantled this artificial dichotomy, demonstrating that affect and cognition are functionally inseparable and rely on extensively overlapping neural mechanisms 14. Complex decision-making, classically considered a purely cognitive function, is profoundly influenced by risk assessment and valence signals originating in emotional circuitry 1. Emotions are therefore conceptualized not as ephemeral or purely subjective phenomena, but as highly organized, measurable activities within the central nervous system that evolved to address problems of adaptation, survival, and reproduction across species 1567.

A critical evolution in the foundational paradigms of affective neuroscience involves the dismantling of the "triune brain" theory. Proposed by Paul MacLean in the 1960s, this highly influential model posited that the human brain expanded along three distinct evolutionary lines: a deep "reptilian" brain composed of the basal ganglia responsible for instincts, a "paleomammalian" limbic system responsible for emotions, and a "neomammalian" neocortex responsible for rational cognition 48910. Despite its enduring popularity as a heuristic for understanding human behavior, contemporary affective neuroscience widely rejects the triune brain model as a neuromyth 48. Mammalian brains do not increase in complexity via linearly stacked evolutionary layers, nor do they house independent biological computers operating autonomously 810. Instead, the brain operates as an integrated, adaptive network where emotion and cognition are fundamentally interdependent 4810. There is no purely emotional center in the limbic system, just as there is no purely cognitive center in the cerebral cortex 48. Modern models view the brain as an adaptive organ founded on interdependent networks that continuously utilize interoception and exteroception to balance allostasis, emotion, and goal-directed prediction 4.

Neuroanatomical Networks of Emotion

The representation of emotion in the nervous system is computationally complex, requiring the integration of sensory inputs, internal homeostatic states, past experiences, and contextual schemas 11. Functional neuroimaging and lesion studies indicate that emotions arise from the activation of specialized neuronal populations across distributed cortical and subcortical networks 512.

Recent neuroanatomical frameworks conceptualize emotion processing as a dynamic, multistage pathway involving consecutive stations that integrate discrete physiological signals into conscious conceptual representations, which are subsequently subjected to executive regulation 13. The initial stage of this processing involves the encoding of discrete interoceptive and exteroceptive features. Regions such as the posterior insula and the amygdala rapidly evaluate the environment and the body's physiological state, assigning initial affective valence and initiating autonomic responses prior to conscious awareness 513. Following this initial appraisal, signals are forwarded to the anterior insula. The anterior insula is critical for integrating visceral and autonomic signals into unified, whole-body patterns. This region is deeply involved in emotional awareness and exhibits heightened activation during tasks requiring the evaluation of emotional valence or the observation of pain in others 1213.

Once whole-body patterns are established, they are processed by the medial prefrontal cortex, specifically medial Brodmann area 9. This region acts as an integration hub, synthesizing these physiological patterns with cognitive objects, episodic memory, and social context to form conscious "emotion concepts" 13. It is within this medial prefrontal circuitry that basic physiological arousal is cognitively interpreted as specific subjective feelings. Finally, the lateral prefrontal cortex performs selection and inhibition operations on these integrated emotion concepts. This lateral region is vital for emotion regulation, enabling cognitive reappraisal, the delay of gratification, and the suppression of maladaptive affective states to align behavior with long-term goals 13.

| Neuroanatomical Structure | Processing Stage / Function | Role in Affective Processing | Clinical Implications of Dysfunction |
|---|---|---|---|
| Amygdala & Posterior Insula | Stage 1: Discrete Body Features | Rapid environmental and interoceptive evaluation; assigning initial affective valence; associative learning. | Impaired stimulus-reinforcement learning; reduced empathy; psychopathy. |
| Anterior Insula | Stage 2: Whole-Body Patterns | Integrating visceral signals into unified physiological states; emotional awareness. | Alexithymia; difficulty distinguishing feelings from bodily sensations. |
| Medial Prefrontal Cortex | Stage 3: Emotion Concepts | Synthesizing physiological patterns with cognition to form conscious subjective feelings. | Atypical somatosensory discrimination; impaired social decision-making. |
| Lateral Prefrontal Cortex | Stage 4: Selection/Inhibition | Top-down regulation; cognitive reappraisal; suppressing maladaptive affective responses. | Emotional impulsivity; increased risk for substance use disorders. |

Reevaluating the Amygdala: From Fear Center to Strategic Arbiter

For decades, the amygdala was reduced in both scientific literature and public discourse to the brain's primitive "fear center" 141516. The canonical model presumed that the amygdala's primary function was to reflexively drive threat avoidance and mediate fear conditioning 1416. However, extensive neuroimaging and lesion studies in recent years have forced a reassessment of its functional-neuroanatomical complexity, indicating that the canonical model is overly simplistic 1617.

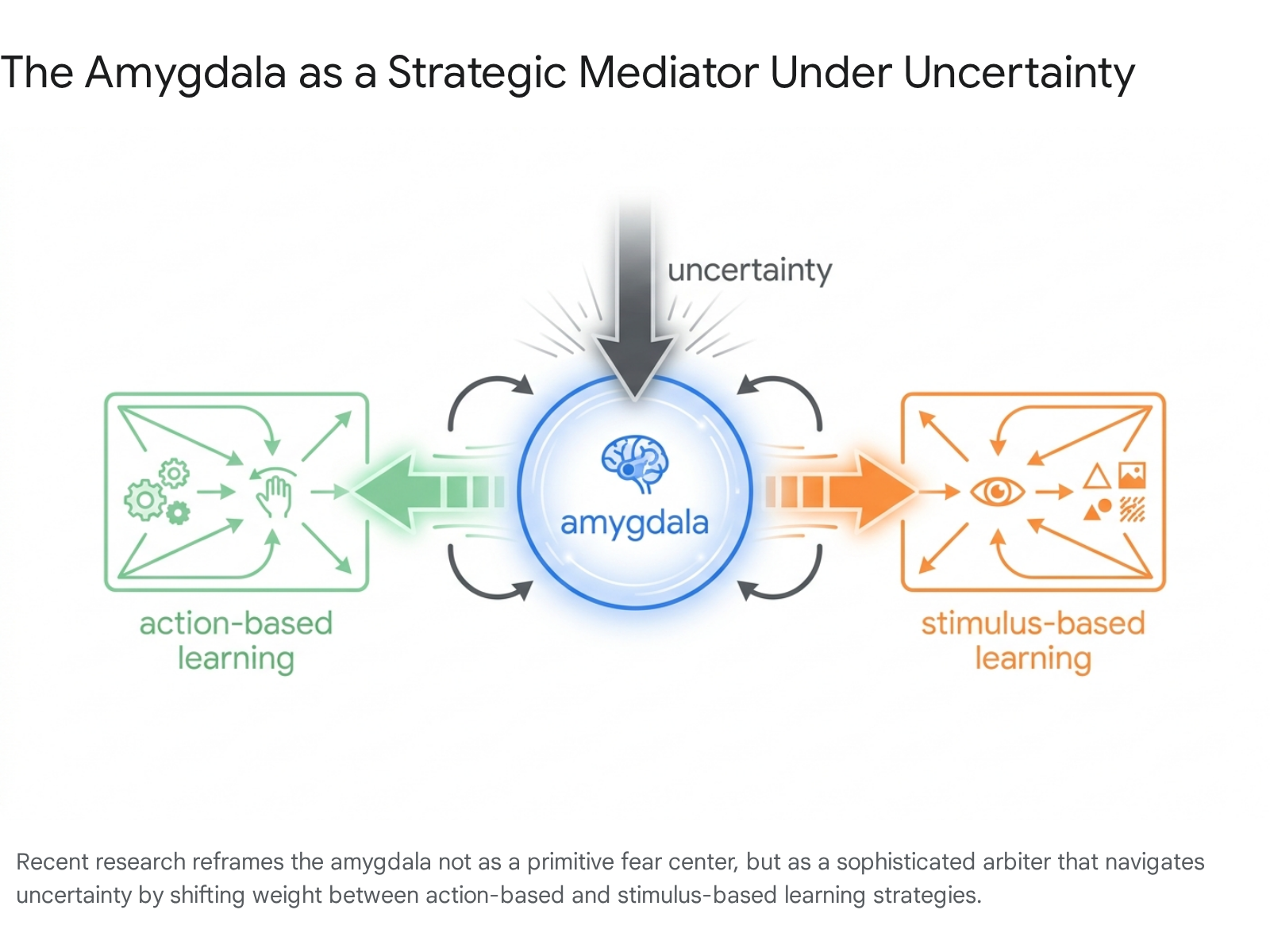

First, empirical evidence demonstrates that the amygdala is not strictly a fear locus. It is robustly engaged by positive and appetitive stimuli, playing a critical role in both positive and negative stimulus-reinforcement learning 1317. Second, human patients with bilateral amygdala damage can still experience intense fear and panic in response to endogenous threats, such as carbon dioxide-induced air hunger, demonstrating that the amygdala is not strictly necessary for the generation of all fear states 17. Instead, recent research reframes the amygdala as a sophisticated arbiter that helps the brain choose between competing strategies for learning and decision-making under uncertainty 1415. When faced with novel or ambiguous situations, the amygdala mediates between action-based learning, which relies on previously successful motor movements, and stimulus-based learning, which focuses on specific object features in the environment 1415. By gathering information and assigning weight to the most reliable model, the amygdala acts as a critical hub for cognitive flexibility rather than a mere reflex trigger 1415.

Furthermore, treating the amygdala as a single functional unit obscures its profound anatomical heterogeneity 1617. The structure is composed of at least twelve distinct nuclei containing diverse, often mutually inhibitory, cell populations. The central and medial nuclei are striatal-like and primarily inhibitory, projecting to subcortical and brainstem nuclei, whereas the lateral and basal nuclei are cortical-like and predominantly excitatory, with bidirectional connections to the cortex 17. Within the central nucleus alone, distinct microcircuits - such as somatostatin-positive versus corticotropin-releasing hormone-positive cells - drive entirely opposing behaviors, such as freezing versus escape, in response to the identical environmental threat 17. Because different cellular populations can have opposing functions, the recruitment of distinct microcircuits might result in identical changes in bulk activation as measured by functional magnetic resonance imaging, potentially obscuring meaningful brain-behavior associations 17.
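The point about bulk activation can be made concrete with a toy calculation. The sketch below is illustrative only: the firing rates are invented numbers in arbitrary units, and summing two populations is a deliberately crude stand-in for what a bulk fMRI signal reflects. It shows how two states with opposite cell-population profiles can produce the same aggregate value.

```python
# Hypothetical activity levels (arbitrary units) for two central-nucleus
# cell populations; the numbers are illustrative, not measured values.
freezing = {"SOM+": 8.0, "CRH+": 2.0}  # somatostatin-positive cells dominate
escape = {"SOM+": 2.0, "CRH+": 8.0}    # CRH-positive cells dominate


def bulk_signal(population_rates):
    """Sum activity across populations, a coarse stand-in for a bulk measure."""
    return sum(population_rates.values())


def dominant_population(population_rates):
    """Return the name of the most active population."""
    return max(population_rates, key=population_rates.get)


for label, rates in (("freezing", freezing), ("escape", escape)):
    print(label, bulk_signal(rates), dominant_population(rates))
```

Both states yield the same summed value while the dominant population differs, which is the sense in which a bulk measure could hide behaviorally opposite microcircuit recruitment.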

Hippocampal-Prefrontal Mapping and Emotion Regulation

Emerging computational research demonstrates that emotions are organized in the brain via spatial, map-like structures. In 2026, researchers analyzing the Emo-FiLM dataset - which aligns human ratings of emotions experienced while watching film clips with corresponding functional neuroimaging data - utilized artificial neural networks to reveal that hippocampal-prefrontal circuits support the mental mapping of emotion concepts 18. Applying a computational model of relational memory known as the Tolman-Eichenbaum Machine, the researchers demonstrated that humans conceptualize emotions based on two primary features: valence, defined as the degree of pleasantness, and arousal, defined as the intensity of bodily reactions 18.

The hippocampus represents these emotional states in a structured hierarchy of nodes, where the anterior part of the hippocampus represents broad categories and the posterior region represents highly granular emotion concepts 18. Simultaneously, the ventromedial prefrontal cortex tracks the relational distances between these nodes, functioning as a navigational compass that monitors transitions between different emotional states over time 18. The clinical significance of this mapping is substantial: individuals with anxiety and depression often display compressed and less differentiated neural representations of emotion, whereas high-granularity representations correlate with better psychological health outcomes 18.
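One way to picture "relational distance" and "compressed" representations is to place emotion concepts in a two-dimensional valence-arousal space and measure how far apart they sit. The following is a minimal sketch under stated assumptions: the coordinates are hypothetical, Euclidean distance is an arbitrary choice of metric, and the `compress` helper is an invented illustration, not the procedure used in the cited study.

```python
from itertools import combinations
from math import dist

# Hypothetical (valence, arousal) coordinates on a -1..1 scale.
emotion_map = {
    "joy": (0.8, 0.6),
    "calm": (0.6, -0.6),
    "fear": (-0.7, 0.8),
    "sadness": (-0.7, -0.5),
}


def mean_pairwise_distance(points):
    """Average Euclidean distance between all pairs of emotion concepts."""
    pairs = list(combinations(points.values(), 2))
    return sum(dist(a, b) for a, b in pairs) / len(pairs)


def compress(points, factor):
    """Shrink every coordinate toward the origin to mimic a less differentiated map."""
    return {name: (v * factor, a * factor) for name, (v, a) in points.items()}


differentiated = mean_pairwise_distance(emotion_map)
compressed = mean_pairwise_distance(compress(emotion_map, 0.4))
print(f"differentiated map: {differentiated:.2f}")
print(f"compressed map:     {compressed:.2f}")
```

In this toy setting, a lower mean pairwise distance corresponds to emotion concepts that are harder to tell apart, which is one simple way to operationalize low granularity.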

Discrete pathways also govern the generation versus the regulation of these mapped emotions. The isolation of these networks indicates that emotion regulation, frequently operationalized as cognitive reappraisal, recruits higher-level cortical hierarchies that are distinct from the networks that generate initial emotional arousal 19. Meta-analyses of clinical populations confirm that successful emotion regulation relies heavily on the anterior prefrontal cortex and the dorsomedial prefrontal cortex 1920. Furthermore, the brain areas most active during the downregulation of negative emotion exhibit rich overlaps with specific neurotransmitter binding maps, notably those for cannabinoids, opioids, and serotonin receptors, providing potential targets for pharmacological and neurostimulation therapies 19.

Methodological Advances in Emotion Mapping

The functional-neuroanatomical discoveries of the past decade have been heavily dependent on shifts in neuroscientific methodology. Early neuroimaging research relied heavily on univariate functional magnetic resonance imaging (fMRI) and positron emission tomography (PET) to seek out isolated neural loci for specific emotions 2122. However, these localization approaches largely failed to map discrete emotions onto individual brain regions, leading to theoretical fragmentation 2223. Univariate analyses consistently demonstrated that basic emotions induce overlapping activations in general affective workspaces rather than lighting up unique, dedicated centers 2124.

To overcome the limitations of univariate mapping, affective neuroscience has increasingly adopted Multivoxel Pattern Analysis (MVPA) and advanced machine learning techniques. These methods reconceptualize how emotion constructs are embedded in large-scale networks, evaluating high-dimensional patterns of brain activity rather than single-voxel thresholds 23. MVPA demonstrates that information encoded in both local neural ensembles and whole-brain activation patterns can predict affective dimensions with high levels of specificity 2325. Using techniques such as LASSO principal components regression and linear discriminant analysis, researchers have successfully identified neural signatures that can reliably distinguish between passive emotional viewing and active cognitive reappraisal 2627. These multivariate signatures generalize across individuals and different emotion-eliciting conditions, indicating that although emotions are widely distributed across the cortex and subcortex, they maintain specific, mathematically discriminable neural representations 2529.
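The contrast between univariate and pattern-based analysis can be sketched on synthetic data. The example below is an assumption-laden illustration, not a reproduction of any published pipeline: it uses a nearest-centroid classifier as a simple stand-in for the LASSO-PCR and linear discriminant methods named above, a single train/test split instead of cross-validation, and invented voxel templates for two conditions (`view` and `reappraise`) that share the same mean activation but differ in spatial pattern.

```python
import random
from math import dist
from statistics import fmean

rng = random.Random(0)
N_VOXELS, N_TRIALS, NOISE_SD = 20, 100, 1.0
half = N_VOXELS // 2

# Two synthetic conditions: identical mean activation, opposite spatial patterns.
templates = {
    "view": [1.0] * half + [-1.0] * half,
    "reappraise": [-1.0] * half + [1.0] * half,
}


def simulate_trial(template):
    """One noisy multivoxel pattern drawn around a condition template."""
    return [value + rng.gauss(0.0, NOISE_SD) for value in template]


def nearest_centroid_accuracy(train, test):
    """Fit one centroid per label on train, then score predictions on test."""
    centroids = {}
    for label in templates:
        rows = [x for x, y in train if y == label]
        centroids[label] = [fmean(col) for col in zip(*rows)]
    hits = sum(
        min(centroids, key=lambda c: dist(x, centroids[c])) == y for x, y in test
    )
    return hits / len(test)


def collapse(data):
    """Reduce each trial to its mean activation, discarding the spatial pattern."""
    return [([fmean(x)], y) for x, y in data]


trials = [
    (simulate_trial(t), label)
    for label, t in templates.items()
    for _ in range(N_TRIALS)
]
rng.shuffle(trials)
train, test = trials[: len(trials) // 2], trials[len(trials) // 2 :]

multivariate_acc = nearest_centroid_accuracy(train, test)
univariate_acc = nearest_centroid_accuracy(collapse(train), collapse(test))

print(f"pattern-based accuracy:   {multivariate_acc:.2f}")
print(f"mean-activation accuracy: {univariate_acc:.2f}")
```

Because the two templates average to the same value, the mean-activation classifier has nothing to separate and hovers near chance, while the pattern-based classifier exploits the voxel-wise arrangement. Real analyses add cross-validation, regularization, and tests of generalization across participants.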

Parallel advances in electroencephalography (EEG) and Explainable Artificial Intelligence (XAI) are elucidating the temporal and hemispheric dynamics of emotion. Utilizing Local Interpretable Model-Agnostic Explanations (LIME) applied to bi-hemispheric neural architectures, researchers have mapped emotion-specific hemispheric activation patterns. These models reveal consistent functional asymmetries: left frontal and central regions possess higher predictive relevance for processing sadness and fear, whereas right-hemispheric activity dominates during the processing of joy, anger, and disgust 28. The integration of these multimodal markers - subjective experiences, brain-bodily physiological signals, and expressive behaviors - through deep learning algorithms is currently mapping complex emotional landscapes within multidimensional spaces, moving the field beyond simple anatomical correlations 29.

Theoretical Models of Emotion

The shift from localized brain regions to distributed network representations directly intersects with a central and enduring debate in affective neuroscience regarding the fundamental ontology of emotions: whether emotions are discrete, innate biological categories or psychological constructs assembled from more basic physiological building blocks 243031.

Basic Emotion Theory

Basic Emotion Theory (BET), championed prominently by psychologists such as Paul Ekman and neuroscientists like Jaak Panksepp, posits that there exists a limited set of universally recognized, biologically inherited primary emotions 243134. Common lists of basic emotions include fear, anger, joy, sadness, and disgust 2232. According to BET, these emotions are homologous across mammalian species because they evolved to offer adaptive solutions to specific survival challenges - such as escaping predators, fighting for resources, or avoiding pathogens 3132.

Ekman focused heavily on the physical expression of these emotions, arguing that basic emotions possess distinctive universal signals, primarily invariant facial expressions that are recognizable across cultures 3132. Panksepp's neuro-evolutionary framework focused on subcortical anatomy, identifying seven primal affective systems common to all mammalian brains based on deep brain stimulation and psychopharmacological research 313334. These systems comprise four positive circuitries (SEEKING, LUST, CARE, and PLAY) and three negative circuitries (FEAR, RAGE, and PANIC/SADNESS) 34. BET asserts that these innate emotional complexes act as bottom-up drivers of behavior, initiating rapid, automatic physiological and motor responses prior to higher-order cognitive appraisal 213134.

The Theory of Constructed Emotion

The Theory of Constructed Emotion (TCE), formulated by Lisa Feldman Barrett, fundamentally rejects the essentialist view that emotions are innate, pre-wired categories residing in dedicated brain centers 21313433. TCE operates on the premise that emotions are not "natural kinds" existing independently of human perception 3031. Instead, emotions are cognitively assembled concepts constructed in the brain based on a combination of past experience, the current physiological state of the body, and the external environment 31.

In TCE, the primary function of the brain is allostasis - the predictive management of the body's energy resources and homeostasis 31. The brain continuously monitors internal sensations (interoception) and registers them as "core affect," which varies along the continuous dimensions of valence and arousal 3133. An emotion is constructed when the brain utilizes cultural, linguistic, and experiential knowledge to categorize and make meaning of this core affect in a specific context 3031. Consequently, TCE argues against the existence of dedicated neural signatures or universally invariant facial expressions for specific emotions, suggesting instead that a wide variety of distributed neural patterns and physical actions can probabilistically produce the same emotional category depending on the context 2931.

Toward a Network-Level Synthesis

Historically, the constructionist critique was bolstered by neuroimaging meta-analyses demonstrating that basic emotions do not map cleanly onto single brain regions, thereby debunking the "locationist" assumptions of early BET research 212430. However, the advent of MVPA has brokered a methodological middle ground. While univariate fMRI approaches failed to find localized centers for emotion, MVPA demonstrates that basic emotion categories are uniquely represented in the brain as highly specific, distributed, high-dimensional neural networks 2325.

Recent theoretical efforts attempt to reconcile BET and TCE by suggesting they effectively explain different stages of the affective process 3135. Under this synthesis, BET accurately describes the automatic, bioregulatory states and functional action tendencies generated by subcortical networks in response to stimuli, whereas TCE accurately describes the subsequent generation of conscious "feelings" - the cognitive representation and linguistic categorization of those physiological changes orchestrated by the neocortex 3135.

| Theoretical Framework | Core Assumption | Neural Representation | Perspective on Universality |
|---|---|---|---|
| Basic Emotion Theory (BET) | Emotions are innate, discrete, and evolutionarily conserved functional categories. | Driven by dedicated, largely subcortical neurocircuitry. | Predicts universal physiological profiles and invariant facial expressions across all cultures. |
| Theory of Constructed Emotion (TCE) | Emotions are constructed concepts based on allostasis, core affect, and learned schemas. | Relies on domain-general networks (e.g., the interoceptive system); no specific emotion loci. | Predicts extreme cultural variability; emotion concepts depend heavily on language and socialization. |
| Network-Level Synthesis | Subcortical systems drive basic physiological responses, while cortical systems construct subjective feelings. | Specific, discriminable representations exist as widely distributed whole-brain activation patterns. | Shared ecological pressures create highly recognizable, but culturally modulated, behavioral patterns. |

Universality and Cultural Specificity of Emotion

The theoretical debate regarding the biological essentialism of emotion extends deeply into cross-cultural psychology and anthropology. A central research question in affective neuroscience is the extent to which human expressions of emotion are universally recognized, independent of cultural transmission.

Cross-Cultural Meta-Analyses and the In-Group Advantage

For decades, the "Universality Thesis" held that basic emotions are expressed and recognized consistently across human populations, a concept originally popularized by Charles Darwin and later formalized by Paul Ekman 3640. Foundational meta-analyses of cross-cultural emotion recognition confirm that basic emotions are generally recognized at better-than-chance levels across cultures, supporting the presence of shared biological mechanisms 37383940.

However, these comprehensive analyses consistently reveal a profound "in-group advantage" that complicates strict universality. Individuals are significantly more accurate at recognizing the emotions of members within their own national, ethnic, or regional group compared to outsiders 37384142. In a landmark meta-analysis of 97 studies, emotion recognition accuracy was found to be roughly 9.3% higher when judges evaluated expressions posed by members of their own cultural group 41. This phenomenon persists across age groups and is hypothesized to stem from "nonverbal accents" - subtle, culturally specific dialects and nuances in the way universal emotions are physically expressed by different populations 4142.

Evidence from Geographically Isolated Populations

The most rigorous tests of the Universality Thesis involve small-scale, traditional societies with minimal exposure to Western media, globalization, and cultural paradigms 3640. If basic emotions are purely innate biological reflexes, isolated populations should categorize emotional expressions identically to Western populations. Recent empirical work in these societies has challenged the strict universality of basic emotions:

- The Trobriand Islanders (Papua New Guinea): Researchers studied adolescents in the culturally and visually isolated Trobriand Islands, asking them to attribute emotions to standardized photographs of Western facial expressions. When shown a "gasping" face, which Western samples consistently classify as conveying fear and submission, the Trobrianders overwhelmingly interpreted it as conveying anger and an imminent threat 4043.

- The Himba (Namibia): In a free-sorting task, participants from the Himba ethnic group were asked to sort images of posed facial expressions without being provided Western linguistic emotion cues. Under these conditions, Himba participants did not replicate the presumed "universal" sorting pattern produced by U.S. participants 44. When cues to emotion concepts were provided, the sorting aligned more closely, demonstrating that emotion perception is heavily dependent on conceptual and cultural contexts rather than relying solely on innate visual recognition 44.

- The Hadza (Tanzania): Qualitative analyses of emotion narratives among the Hadza hunter-gatherers reveal fundamentally different organizing principles for emotional meaning. While Western subjects describe emotions primarily as internal mental states and subjective feelings, Hadza descriptions foreground immediate physical needs, bodily actions, the external physical environment, and the experiences of social others 45. This suggests that internal mental states may not be the universal organizing principle of emotion.

- The Mayangna (Nicaragua): Research indicates that universality may extend beyond the face to the entire body. A study of the isolated Mayangna people found that they reliably recognized bodily expressions of sadness (77% accuracy), anger (65%), and fear (43%) at levels significantly above chance 36. Because these participants had virtually no contact with Western media, the data provides strong evidence that whole-body postural expressions of certain emotions possess distinct evolutionary universality, even if facial interpretations vary 36.

These findings support a model of "minimal universality": while human anatomy limits the number of expressions that facial and bodily muscles can physically produce, specific cultures actively construct, interpret, and assign diverse meanings to these expressions based on their unique ecological and social needs 43.
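Whether a recognition rate such as the Mayangna figures above counts as "above chance" depends on the number of judgments and on the chance level implied by the response format. The sketch below runs an exact one-sided binomial test under assumptions that are purely illustrative: 100 judgments per emotion and four response options (chance of 0.25). Neither value is taken from the cited study; only the three accuracy percentages come from the text.

```python
from math import comb


def binomial_tail(successes, trials, chance):
    """Exact one-sided P(X >= successes) for X ~ Binomial(trials, chance)."""
    return sum(
        comb(trials, k) * chance**k * (1 - chance) ** (trials - k)
        for k in range(successes, trials + 1)
    )


# Assumptions for illustration only: 100 judgments per emotion and four
# response options (chance = 0.25). Neither figure comes from the study.
TRIALS, CHANCE = 100, 0.25
reported_accuracy = {"sadness": 0.77, "anger": 0.65, "fear": 0.43}

for emotion, accuracy in reported_accuracy.items():
    successes = round(accuracy * TRIALS)
    p_value = binomial_tail(successes, TRIALS, CHANCE)
    print(f"{emotion}: {successes}/{TRIALS} correct, p = {p_value:.2e}")
```

Under these assumed values even the lowest rate (43%) sits well above the chance level, but the conclusion would change with fewer judgments or a higher chance level, which is why the response format matters when comparing recognition rates across studies.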

Ecocultural Complexes and Emotion Norms

Cultural psychology further highlights how broad ecocultural complexes shape emotional experience. Research comparing Western populations to various non-Western cultural zones demonstrates profound differences in emotional valuation 46.

In Western independent cultures, individuals tend to base self-worth on internal feelings and seek to maximize high-arousal positive emotions 46. Consequently, European Americans report a higher frequency of positive emotions and show better biological health when they experience them. Conversely, in East Asian interdependent cultures, emotions like happiness are often viewed with ambivalence, and the experience of negative emotions does not carry the same deleterious biological health impacts observed in the West 46.

Beyond the traditional East-West binary, Latin American cultures emphasize "expressive interdependence," prioritizing warm, socially engaging positive emotions to build community resilience 46. In contrast, Arab and Mediterranean "honor cultures" report high intensities of socially disengaging emotions, such as anger and pride, using these displays not to assert individual independence, but to display resourcefulness and integrate into a social hierarchy 46. These global variations confirm that the cognitive construction of emotion is heavily regulated by culturally specific feeling rules and norms.

Applied Affective Computing and Emotion Artificial Intelligence

The theoretical assumptions regarding emotion recognition have immediate, high-stakes consequences for technology, specifically within the rapidly growing sector of Emotion Artificial Intelligence (Emotion AI). Emotion AI, a market valued at roughly $21.6 billion and projected to reach over $440 billion by 2032, uses computer vision and machine learning algorithms to automatically infer individuals' affective states from facial expressions, vocal intonations, biometrics, and text 47484954. This technology is increasingly deployed in human resources for applicant screening, in law enforcement for threat detection, in marketing, and in educational settings 4754.

Scientific Validity and Algorithmic Bias

The scientific validity of commercial Emotion AI is highly contested and has drawn intense scrutiny from affective neuroscientists and civil rights organizations 474950. Most of these commercial systems are built on the foundational assumptions of Basic Emotion Theory - specifically Paul Ekman's Facial Action Coding System (FACS) 515253. These algorithms operate on the premise that distinct internal emotions are involuntarily, universally, and predictably revealed through specific facial muscle movements 5152.

Affective neuroscientists aligned with psychological constructionism argue that this assumption is critically flawed. While advanced convolutional neural networks can accurately detect and classify facial muscle movements, the mapping of these movements to internal emotional feelings is not a simple, context-free, one-to-one relationship 474853. Emotional expression is highly nuanced and culturally dependent; a smile can indicate joy, nervousness, or submission depending on the social environment 47. A review of over 1,000 scientific papers commissioned by the Association for Psychological Science concluded that inferring how someone feels based solely on isolated facial expressions is highly unreliable 47. Commercial systems that strip away situational context, cultural background, and physiological baselines are inherently prone to misinterpretation 4753.

Furthermore, Emotion AI datasets frequently lack diverse representation, resulting in profound algorithmic bias 5450. Studies highlight that these systems routinely assign more negative emotions, such as anger, to Black faces compared to White faces exhibiting the exact same physical expressions 4750. Emotion recognition systems may also misinterpret the cultural nuances of vocal expressions and gestures, and they frequently penalize neurodivergent individuals whose expressivity does not match the neurotypical training data 4754.
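A basic audit for the kind of disparity described above compares how often a system assigns a given label to different groups when the underlying expressions are matched. The sketch below is hypothetical throughout: the group names, the predictions, and the `label_rate_by_group` helper are invented for illustration and do not represent any real system or dataset.

```python
from collections import defaultdict

# Hypothetical classifier outputs for faces with matched expressions.
# Each record is (group, predicted_label); the data are invented.
predictions = [
    ("group_a", "anger"), ("group_a", "neutral"),
    ("group_a", "anger"), ("group_a", "joy"),
    ("group_b", "neutral"), ("group_b", "neutral"),
    ("group_b", "anger"), ("group_b", "joy"),
]


def label_rate_by_group(records, label):
    """Share of each group's faces that received the given label."""
    totals, hits = defaultdict(int), defaultdict(int)
    for group, predicted in records:
        totals[group] += 1
        hits[group] += predicted == label
    return {group: hits[group] / totals[group] for group in totals}


anger_rates = label_rate_by_group(predictions, "anger")
disparity = anger_rates["group_a"] - anger_rates["group_b"]
print(anger_rates, f"difference = {disparity:.2f}")
```

If the expressions are truly matched, a nonzero difference in label rates points to the classifier rather than the faces. A real audit would need far larger samples, confidence intervals, and careful control of how "matched" is defined.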

Due to these severe validity concerns, as well as the threat biometric surveillance poses to privacy and psychological autonomy, policymakers have begun to restrict the technology. In 2024, the European Union adopted the AI Act, which prohibits the use of emotion recognition systems in workplaces and educational institutions except for medical or safety reasons, citing the unacceptable risks of discrimination and the unscientific assumptions underlying the technology 474954. Despite this regulatory pushback, the integration of multimodal affective computing, which combines EEG, physiological sensors, and computer vision, continues to advance, requiring ongoing dialogue between neuroscientists and engineers to ensure systems reflect the true complexity of the human emotional landscape 285455.