Neural mechanisms of musical pitch, rhythm, and emotion

Foundational Architecture of Music Cognition

The perception and production of music are among the most complex cognitive tasks the human brain undertakes. Historically, cultural narratives and early psychological frameworks perpetuated a strict notion of hemispheric specialization, suggesting that creative processes, such as music comprehension and artistic expression, are localized strictly to the right hemisphere of the brain, while logical and analytical tasks remain confined to the left hemisphere 123. Contemporary neuroimaging research does not support this "right-brain dominant" perspective 1243.

Functional magnetic resonance imaging (fMRI) resting-state functional connectivity studies involving over 1,000 individuals have demonstrated that while specific, localized functions - such as speech production or spatial attention - exhibit lateralization, broad behavioral traits like creativity, emotional processing, and musicality do not rely on a globally dominant hemisphere 12. Instead, music processing is an inherently whole-brain phenomenon. It requires the rapid integration of highly distributed neural networks spanning both the left and right hemispheres, simultaneously engaging auditory, motor, limbic, and executive control systems 343. When acoustic signals enter the ear, they are converted into electrical impulses that travel through the brainstem to the auditory cortices. From there, the brain engages in a sophisticated deconstruction of pitch, rhythm, timbre, and dynamics, dynamically mapping these acoustic properties onto memory, motor prediction, and emotional valuation circuits.

Methodological Constraints in Neuroimaging

The conclusions drawn regarding the neural architecture of music cognition are inherently bound by the neuroimaging technologies employed to study them. Current neuroscience navigates an ongoing trade-off between spatial and temporal resolution, dictating how acoustic processing is observed 6456.

Functional magnetic resonance imaging (fMRI) operates by measuring the blood-oxygen-level-dependent (BOLD) signal 67. It offers exceptional spatial resolution, allowing researchers to pinpoint metabolic activity deep within subcortical structures like the amygdala, hippocampus, and the ventral striatum with millimeter precision 58. However, the hemodynamic response is inherently sluggish, taking several seconds to peak. Consequently, fMRI is poorly suited for tracking the millisecond-to-millisecond temporal dynamics of fast musical syntax, rhythm entrainment, or rapid auditory-motor synchronization 64.
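The temporal limitation described above can be made concrete with a rough sketch. It assumes a single-gamma hemodynamic response function with illustrative parameters (`shape=6`, `scale=1`, giving a peak near 5 s) and a linear summation of responses; neither the function shape nor the parameter values come from the source, and the names `hrf`, `bold_response`, `peak_time`, and `count_peaks` are hypothetical.

```python
import math

def hrf(t, shape=6.0, scale=1.0):
    """Single-gamma hemodynamic response (illustrative parameters, not fitted to data)."""
    if t <= 0:
        return 0.0
    return (t ** (shape - 1)) * math.exp(-t / scale) / (math.gamma(shape) * scale ** shape)

def bold_response(event_onsets_s, dt=0.05, duration_s=30.0):
    """Sum one HRF per brief neural event (assumes a linear, time-invariant response)."""
    n = int(round(duration_s / dt))
    times = [i * dt for i in range(n)]
    signal = [sum(hrf(t - onset) for onset in event_onsets_s) for t in times]
    return times, signal

def peak_time(times, signal):
    """Time at which the simulated BOLD signal is largest."""
    return times[max(range(len(signal)), key=signal.__getitem__)]

def count_peaks(signal):
    """Number of strict local maxima in the sampled signal."""
    return sum(
        1 for i in range(1, len(signal) - 1)
        if signal[i - 1] < signal[i] > signal[i + 1]
    )

times, one_note = bold_response([0.0])
_, two_notes = bold_response([0.0, 0.25])  # two notes a quarter-second apart

print("peak after one note (s):", peak_time(times, one_note))
print("peak after two notes (s):", peak_time(times, two_notes))
print("separate bumps for two notes:", count_peaks(two_notes))
```

Under these assumptions the two notes, 250 ms apart, merge into a single slow bump that peaks seconds after the sound, which is why events at the timescale of individual beats cannot be separated in the BOLD signal alone.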

Conversely, electroencephalography (EEG) and magnetoencephalography (MEG) offer millisecond-scale temporal resolution, capturing electrical oscillations and steady-state evoked potentials in real time 645. This makes EEG the preferred modality for studying rhythmic entrainment, the measurement of neural microstates during the perception of complex melodies, and event-related potentials (ERPs) such as the mismatch negativity (MMN) to syntactic violations 691011. However, electrical signals are smeared as they pass through the skull and scalp, and many different source configurations can produce the same scalp pattern - a challenge known as the "inverse problem" - which severely limits the ability of EEG to precisely localize deep-brain emotional generators 5. To overcome these limitations, recent research increasingly relies on simultaneous EEG-fMRI recordings and machine learning algorithms to map the temporal dynamics of cortical oscillations onto the spatial layout of distributed functional brain networks 47.

Acoustic Feature Extraction and Pathways

The deconstruction of sound begins in the primary auditory cortex, located in Heschl's gyrus (Brodmann Areas 41 and 42) within the temporal lobe 1213. Here, the brain engages in the fundamental extraction of acoustic features, translating physical sound waves into perceptual representations of pitch, loudness, and timing.

The Dual-Stream Model of Pitch and Timbre

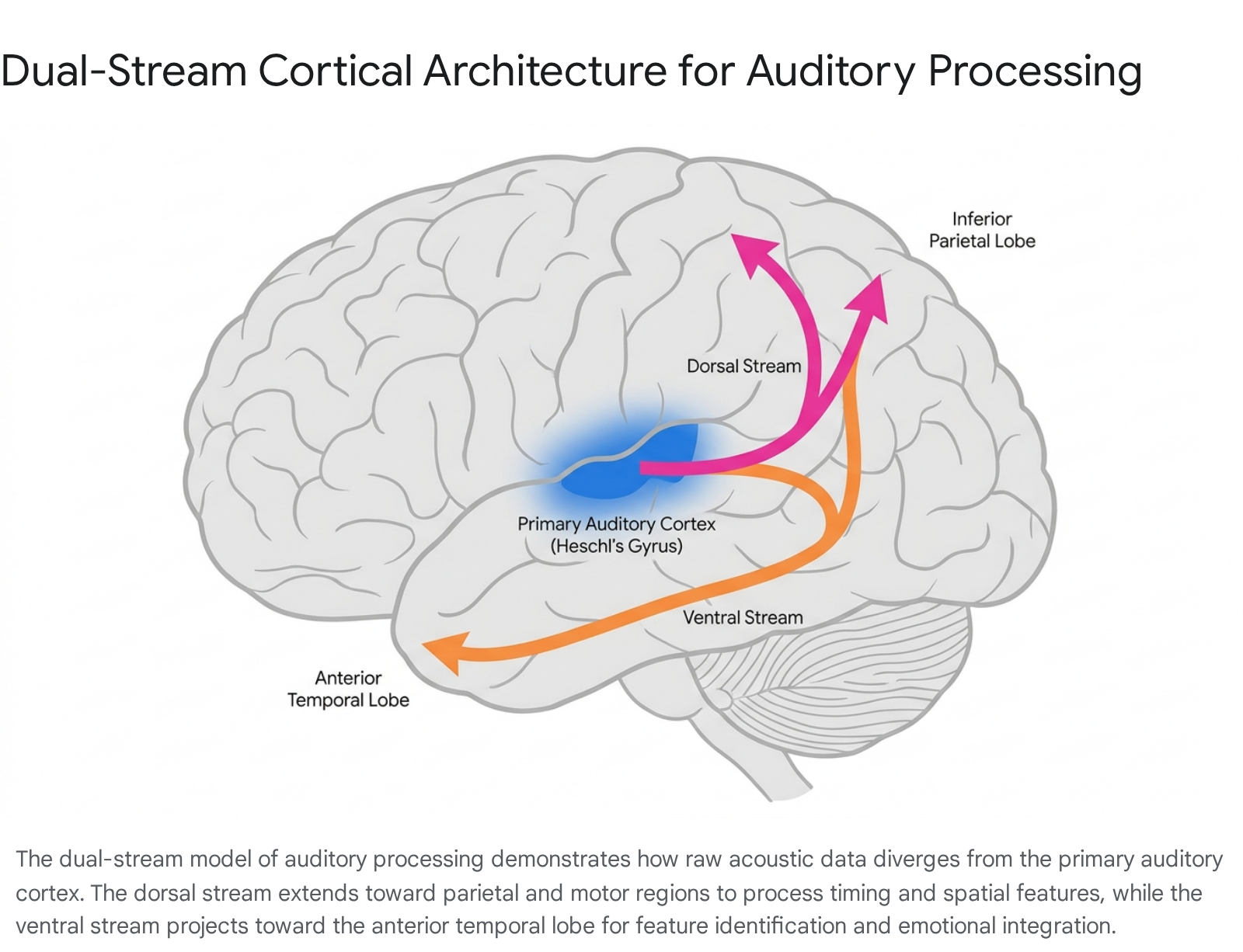

Following the initial sensory encoding in the primary auditory cortex, the processing of complex musical features - such as pitch sequences, harmonic syntax, and timbre - relies on a dual-stream cortical architecture. First conceptualized in the context of speech and language processing, this framework proposes two primary and diverging pathways: a ventral stream and a dorsal stream 121314.

The ventral auditory stream projects antero-ventrally from the primary auditory cortex toward the anterior superior temporal gyrus (aSTG) and the planum polare (Brodmann Area 22) 1213. This pathway functions as the "what" stream. It is primarily responsible for the identification of auditory objects, the categorization of sound sources, and the mapping of semantic and affective information onto pitch sequences 1314. For example, the ventral stream is crucial for distinguishing the sound of a violin from a guitar, or differentiating a bowed string from a plucked string, even if both are playing the exact same pitch at an identical volume 13.

Conversely, the dorsal auditory stream projects postero-dorsally toward the inferior parietal lobe (specifically the supramarginal gyrus, Brodmann Area 40), the planum temporale, and ultimately extends to the inferior frontal gyrus and premotor cortices 121314. Often described as the "where" or "how" stream, the dorsal pathway manages spatial localization, the sequencing of pitches over time, the syntactic processing of chord progressions, and sensorimotor transformations 13. The dorsal stream allows listeners to process interval structures, anticipate melodic trajectories, and concatenate individual pitches into a cohesive, temporal sequence 13.

Recent activation likelihood estimation (ALE) meta-analyses, synthesizing 18 experiments from 17 neuroimaging studies, have revealed that timbre - the specific "color" or quality of a sound - is not processed in isolation but rather recruits regions spanning both the dorsal and ventral streams 1213. Timbre serves a dual purpose: it allows listeners to recognize actions (e.g., the physical mechanism of sound production) and functions as a critical structuring property of music. This necessitates the integrative capacity of the bilateral posterior insula (Brodmann Area 13), the bilateral supramarginal gyrus, and the transverse temporal gyri 1213. The parallel engagement of these streams demonstrates that identifying a sound's source (ventral) and predicting its temporal, action-oriented trajectory (dorsal) occur simultaneously within the auditory cortex.

Rhythm Perception and Sensorimotor Synchronization

While pitch outlines the harmonic landscape of a musical piece, rhythm provides its temporal scaffolding. Humans possess a remarkably robust, and seemingly spontaneous, capacity to entrain to musical beats - a neurological process known as auditory-motor synchronization 1516. Listening to a rhythmic beat does not merely activate auditory regions; it spontaneously triggers a widespread motor network, even in the complete absence of overt physical movement 161718. This phenomenon underpins the universal human instinct to tap one's foot or nod to a beat.

Subcortical and Cortical Motor Circuitry

The perception and generation of rhythm rely heavily on the dynamic interplay between the auditory cortex and three primary motor structures: the basal ganglia, the supplementary motor area (SMA), and the cerebellum 1518.

The basal ganglia - a group of deep subcortical nuclei that include the putamen and globus pallidus - exhibit a highly specific response to the perception of a regular beat 151718. These structures are highly sensitive to internally generated rhythmic expectations and beat-based timing. When an individual attempts to extract a regular pulse from a complex rhythm, or taps along to a self-paced metrical structure in the absence of auditory cues, the basal ganglia are heavily recruited 1519. The striatum, a core component of the basal ganglia, processes motor preparation and is functionally linked to the brain's dopaminergic reward system, indicating a fundamental biological link between accurate rhythmic prediction, motor execution, and neurochemical reward 1819.

The supplementary motor area (SMA) and the pre-SMA act in concert with the basal ganglia to support internally guided rhythmic movements 1516. The SMA is deeply involved in covert beat generation - the internal "singing" or mental tracking of a pulse when physical movement is suppressed or when a listener must maintain the beat during a brief musical rest 1520.

In contrast to the internally oriented basal ganglia and SMA, the cerebellum appears to prioritize externally guided synchronization and immediate error correction 151718. Evidence indicates that specific cerebellar sub-regions, such as lobule VIII and the right lobules IV, V, and VI, are critical for tracking complex or highly variable rhythmic patterns 15. The cerebellum continuously adjusts motor output to match external temporal fluctuations, generating internal models that predict sensory events 1518. While the basal ganglia govern the extraction of the primary pulse, the cerebellum continually calibrates the brain's temporal expectations against incoming, real-time sensory deviations 18.
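The idea of continuous error correction against an external beat can be illustrated with a first-order phase-correction rule of the kind often used in tapping models. This is a sketch under stated assumptions: the rule, the gain `alpha`, and the function name `simulate_tapping` are illustrative and not taken from the source, and the sketch makes no claim about how the cerebellum implements correction.

```python
def simulate_tapping(initial_asynchrony_ms, alpha, n_taps):
    """Return tap-minus-beat asynchronies under first-order phase correction.

    Each tap corrects a fraction `alpha` of the previous asynchrony:
        a[n+1] = (1 - alpha) * a[n]
    Motor and timing noise are omitted to keep the example deterministic.
    """
    asynchronies = [initial_asynchrony_ms]
    for _ in range(n_taps - 1):
        asynchronies.append((1.0 - alpha) * asynchronies[-1])
    return asynchronies

# Hypothetical listener who starts 50 ms late and corrects half the error per tap.
corrected = simulate_tapping(50.0, alpha=0.5, n_taps=6)
# Same starting error with no correction at all.
uncorrected = simulate_tapping(50.0, alpha=0.0, n_taps=6)

print([round(a, 2) for a in corrected])
print([round(a, 2) for a in uncorrected])
```

With a nonzero gain the timing error shrinks geometrically toward zero, whereas with no correction the initial error persists on every tap.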

Top-Down Predictive Modeling of Temporal Structures

The brain's processing of rhythm is not a passive bottom-up reaction to sound; it is an active, top-down predictive process. This is particularly evident when analyzing the neurological processing of complex rhythmic structures, such as West African polyrhythms. In experimental paradigms utilizing steady-state evoked potentials (SSEPs), participants listened to rhythmic streams that transitioned from simple quadruple rhythms to complex 3-over-4 polyrhythms, followed by periods of complete silence 10.

EEG spectral analyses revealed that the auditory stimuli elicited clear amplitude peaks corresponding to the mathematical frequencies of the beats (e.g., 2 Hz, 4 Hz, and 8 Hz for the quadruple rhythm; adding 3 Hz and 4.5 Hz during the polyrhythm) 10. Crucially, during the silent periods following the polyrhythm, the brain continued to produce these beat-related neural oscillations 10. Because these oscillations occurred without external acoustic input, they reflect top-down, endogenously controlled neural entrainment. Interestingly, musically trained individuals demonstrated significantly higher normalized amplitudes at the harmonic frequencies during silence, indicating that prolonged exposure and training enhance the brain's ability to maintain complex temporal hierarchies internally 1011.
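The spectral logic of this analysis can be sketched with a toy signal. The example below builds a synthetic "EEG" trace from sinusoids at the beat frequencies quoted above plus seeded Gaussian noise, then measures the amplitude at chosen frequencies with a direct Fourier sum. The sampling rate, duration, unit amplitudes, noise level, and the names `synthetic_eeg` and `amplitude_at` are all assumptions made for illustration; real SSEP pipelines involve preprocessing and noise normalization not shown here.

```python
import cmath
import math
import random

SAMPLE_RATE_HZ = 64   # assumed sampling rate for the toy signal
DURATION_S = 8        # gives a frequency resolution of 1/8 = 0.125 Hz

def synthetic_eeg(beat_freqs_hz, noise_sd=0.2, seed=0):
    """Sum of unit-amplitude sinusoids at the beat frequencies plus Gaussian noise."""
    rng = random.Random(seed)
    n = SAMPLE_RATE_HZ * DURATION_S
    return [
        sum(math.sin(2 * math.pi * f * i / SAMPLE_RATE_HZ) for f in beat_freqs_hz)
        + rng.gauss(0.0, noise_sd)
        for i in range(n)
    ]

def amplitude_at(signal, freq_hz):
    """Amplitude of the signal at one frequency, via a direct Fourier sum."""
    n = len(signal)
    coeff = sum(
        x * cmath.exp(-2j * math.pi * freq_hz * i / SAMPLE_RATE_HZ)
        for i, x in enumerate(signal)
    )
    return 2 * abs(coeff) / n

quadruple = synthetic_eeg([2, 4, 8])
polyrhythm = synthetic_eeg([2, 3, 4, 4.5, 8])

for freq in (2, 3, 4, 4.5, 8):
    print(freq, "Hz:",
          round(amplitude_at(quadruple, freq), 2),
          round(amplitude_at(polyrhythm, freq), 2))
```

In this toy setup the 3 Hz and 4.5 Hz components stand out only in the polyrhythm signal. The experimental question is whether comparable peaks persist in recordings made during silence, when no stimulus could be driving them.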

Cultural Enculturation in Musical Syntax

A fundamental and heavily debated question in music neuroscience is the extent to which auditory processing is universally hardwired into human biology versus shaped by cultural enculturation. Historically, the field of music psychology has suffered from a profound sampling bias, relying predominantly on WEIRD (Western, Educated, Industrialized, Rich, and Democratic) participant populations 2122. Because Western music operates primarily on isochronous, simple meter rhythms and twelve-tone equal temperament scales, brain responses tuned to these specific parameters have often been mistakenly conflated with universal human traits 2122.

Cross-Cultural Variances in Rhythmic Priors

Recent large-scale global studies demonstrate that rhythm perception is profoundly influenced by cultural exposure, with differentiation beginning as early as infancy. For example, American infants demonstrate a strong perceptual bias toward the regular metrical structures common in Western music. In contrast, Turkish infants, who are exposed to complex, irregular meters native to their culture's music, process both regular and irregular rhythms with equal facility 23. This enculturation persists into adulthood; behavioral tasks indicate that adults remember and reproduce complex rhythms from their native musical culture much more accurately than foreign rhythms of similar complexity 23.

To quantify this enculturation, researchers recently deployed an iterated reproduction paradigm across 39 distinct cultures spanning five continents 22. Participants listened to random, computer-generated rhythmic sequences and were asked to tap them back; their output was recorded and played back to them in subsequent iterations, mimicking the mechanics of the game "telephone" 22. Over multiple trials, participants' tapping drifted away from the randomized stimuli and converged onto specific rhythmic patterns. These convergent patterns varied drastically across cultures, revealing deep-seated internal "priors" or biases shaped by the specific musical environments of the participants 22. The study indicates that while the biological mechanism of auditory-motor synchronization is universal, the specific rhythmic syntax the brain anticipates is learned.
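The convergence dynamic of an iterated reproduction chain can be sketched as a small simulation. Everything specific here is hypothetical: rhythms are represented as three inter-onset intervals normalized to sum to 1, each "listener group" has an invented set of prior patterns, and each tap-back moves the rhythm a fixed fraction (`pull`) toward the nearest prior with a little seeded motor noise. None of these values or names come from the study.

```python
import random

# Hypothetical rhythm priors for two listener groups, written as three
# inter-onset intervals that sum to 1 (e.g. 2:1:1 -> 0.5, 0.25, 0.25).
GROUP_A_PRIORS = [(1/3, 1/3, 1/3), (1/2, 1/4, 1/4), (1/4, 1/4, 1/2)]
GROUP_B_PRIORS = [(3/7, 2/7, 2/7), (2/7, 2/7, 3/7), (2/7, 3/7, 2/7)]

def distance(a, b):
    """Euclidean distance between two interval triples."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) ** 0.5

def nearest_prior(rhythm, priors):
    return min(priors, key=lambda p: distance(rhythm, p))

def reproduce(rhythm, priors, rng, pull=0.3, noise_sd=0.005):
    """One tap-back: drift toward the nearest prior, add motor noise, renormalize."""
    target = nearest_prior(rhythm, priors)
    tapped = [
        max(1e-3, x + pull * (t - x) + rng.gauss(0.0, noise_sd))
        for x, t in zip(rhythm, target)
    ]
    total = sum(tapped)
    return tuple(x / total for x in tapped)

def run_chain(seed_rhythm, priors, n_iterations=15, seed=0):
    """Feed each reproduction back in as the next stimulus."""
    rng = random.Random(seed)
    chain = [seed_rhythm]
    for _ in range(n_iterations):
        chain.append(reproduce(chain[-1], priors, rng))
    return chain

start = (0.45, 0.20, 0.35)  # arbitrary seed rhythm
chain_a = run_chain(start, GROUP_A_PRIORS)
chain_b = run_chain(start, GROUP_B_PRIORS)

print("group A ends near:", [round(x, 2) for x in chain_a[-1]])
print("group B ends near:", [round(x, 2) for x in chain_b[-1]])
```

The same seed rhythm ends up near a 2:1:1 pattern for one simulated group and near a 3:2:2 pattern for the other, which mirrors the logic of reading a population's priors off the endpoints of its chains.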

Melodic Learning and Non-Uniform Scales

Just as rhythmic processing is shaped by exposure, the brain's processing of melodic syntax relies on learned statistical probabilities. Studies utilizing artificial grammar learning demonstrate that unfamiliar musical systems are more easily learned by the brain when they feature non-uniform scales - scales containing asymmetric intervals (such as a mixture of whole steps and half steps), a characteristic highly prevalent in global musical systems from Indian ragas to Western diatonic modes 24. Non-uniform scales provide the auditory cortex with stronger structural anchors, yielding better neural encoding of musical syntax. EEG readings during artificial melody exposure show that non-uniform scales result in significantly faster behavioral recognition of syntactic violations and improved statistical learning compared to uniform (symmetrical) scales 24.
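One way to make the notion of "structural anchors" concrete is to count how many scale degrees have a unique pattern of intervals to the rest of the scale. The sketch below is an illustration of that idea only, not the analysis used in the cited study; the step patterns are the standard major and whole-tone scales, and the function names are hypothetical.

```python
def interval_signatures(step_pattern):
    """For each scale degree, list the intervals (in semitones) up to every other degree."""
    octave = sum(step_pattern)
    pitches = [sum(step_pattern[:i]) for i in range(len(step_pattern))]
    return [
        tuple(sorted((other - root) % octave for other in pitches if other != root))
        for root in pitches
    ]

def distinct_positions(step_pattern):
    """Number of scale degrees that can be told apart by interval context alone."""
    return len(set(interval_signatures(step_pattern)))

MAJOR = [2, 2, 1, 2, 2, 2, 1]       # non-uniform: whole and half steps
WHOLE_TONE = [2, 2, 2, 2, 2, 2]     # uniform: every step identical

print(distinct_positions(MAJOR))       # 7
print(distinct_positions(WHOLE_TONE))  # 1
```

In the non-uniform scale every degree sits in a different interval context, so a listener can in principle tell where a note falls within the scale. In the uniform scale every degree looks the same from the inside, leaving no positional landmarks to learn from.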

Neurological Responses to Microtonal Structures

Research into the neurological impacts of specific non-Western structures, such as Indian Hindustani classical ragas, further illustrates the brain's sophisticated response to culturally contextualized music. Ragas are highly complex melodic frameworks utilizing microtonal intervals (shrutis) and rigid ascending/descending rules that are traditionally tied to specific times of day and target emotional states (rasas) 928.

Recent EEG microstate analyses have shown that specific ragas induce highly repeatable and distinct transient brain states 929. Microstates represent the brain's momentary modes of operation, often lasting just tens of milliseconds. In a study involving 40 participants listening to various Hindustani vocal pieces, researchers found that the acoustic structure of the ragas systematically altered these cortical microstates 92526. For example, listening to Raga Darbari, a composition traditionally associated with depth and solemnity, significantly increased attention-related neural microstates while simultaneously suppressing mind-wandering activity, leading to heightened cognitive clarity 926. Conversely, Raga Jogiya, known for its melancholic pathos, enhanced attentional networks but prominently activated emotion-regulation microstates, allowing listeners to process grief-like affective states with measured cognitive composure 926. The ability of these microtonal structures to predictably shift the brain between attentive and emotion-regulatory states highlights the close interaction between complex acoustic frameworks and high-level cognitive regulation.

Neurochemical Drivers of Affective Resonance

The capacity of music to evoke profound emotion - often exceeding the intensity of emotions experienced in daily social life - represents a unique intersection of sensory processing and ancient survival circuitry. Listening to emotionally salient music modulates activity across all major limbic and paralimbic structures, including the amygdala, hippocampus, insula, anterior cingulate cortex (ACC), and orbitofrontal cortex 27282930.

Dopaminergic Reward Circuitry

The pleasure derived from music is mediated by the exact same mesolimbic reward circuitry that drives biologically imperative survival behaviors, such as eating, reproduction, and the response to addictive substances 31323334. Positron emission tomography (PET) and fMRI studies reveal that intensely pleasurable music leads to the release of endogenous dopamine in the striatum 323335.

Crucially, this dopaminergic transmission is phase-specific and tied to the syntactic predictions generated by the auditory cortex. Anticipation of a musical peak (e.g., an approaching crescendo, a delayed rhythmic drop, or an impending harmonic resolution) triggers dopamine release in the dorsal striatum (specifically the caudate nucleus), a region heavily linked to predictive coding, motor planning, and goal-directed behavior 3235. The actual consummation of the peak emotional moment triggers dopamine release in the ventral striatum (nucleus accumbens), delivering the acute sensation of hedonic reward 323335. This temporal separation explains why the buildup of musical tension is often as pleasurable as its resolution.

Amygdala Modulation and Emotional Valence

The amygdala, traditionally associated with threat detection and the processing of negative emotional valence (such as fear), operates as a highly sensitive emotional valence evaluator during music listening. As auditory streams are processed, the amygdala assesses the affective content of the sounds 273141. Listening to highly pleasant or joyful music effectively down-regulates activity in the central regions of the amygdala (which process aversive states) while simultaneously up-regulating connectivity between the dorsal amygdala and the positive-emotion reward network, including the ventral striatum and orbitofrontal cortex 2835. Conversely, unexpected dissonant chords or unpleasant musical stimuli trigger immediate blood-oxygen-level-dependent (BOLD) increases in the laterobasal amygdala 283035.

Live Performance and Affective Synchronization

The emotional resonance of music is profoundly amplified by the social context of its delivery. A 2024 fMRI study comparing live performance with recorded music found that live music generates a distinct, highly synchronized neural response 3637. In the experiment, 27 listeners were scanned in real time while a pianist played live. The pianist received neurofeedback derived from the listeners' amygdala activity, allowing the performer to dynamically adapt articulation, volume, and density to intensify the listeners' emotional reactions 363738.

The results demonstrated that live performances elicit significantly higher and more consistent amygdala activation than identical recorded versions of the same pieces 363738. Furthermore, live music stimulated a much more active exchange of information across the whole brain, demonstrating a real-time affective and cognitive synchronization between the performer and the listener 3637. This heightened limbic engagement suggests that human musicality is fundamentally rooted in social interaction; the brain processes live music not merely as a passive acoustic signal, but as an active, unfolding social dialogue that the recorded versions in this study did not reproduce 363738.

Physiological Divergence of Chills and Tears

Peak emotional experiences in music generally manifest in two distinct physiological and subjective states: chills (often referred to as frisson) and tears (weeping or experiencing a "lump in the throat") 394041. While both phenomena are subjectively categorized by listeners as "being moved," they correspond to opposing branches of the autonomic nervous system and are characterized by entirely different psychophysiological markers.

Psychophysiological Markers of Music-Induced Chills

Chills are associated with acute psychophysiological arousal and the rapid activation of the sympathetic nervous system 3942. The onset of a musical chill correlates with a surge in electrodermal activity (EDA), specifically spikes in skin conductance level and response 3239. This is accompanied by an enlarged pupil diameter, an increased heart rate, and deeper breathing (increased respiration depth) 39.

Neuroanatomically, individuals who frequently experience musical frisson demonstrate a higher volume of nerve fibers connecting the auditory cortex to the anterior insular cortex 43. This dense connectivity facilitates the rapid transfer of acoustic deviations into somatic feeling states, triggering the release of dopamine in the nucleus accumbens and resulting in a highly rewarding, positively valenced emotional rush 323943.

Psychophysiological Markers of Cathartic Tears

Conversely, music-induced tears are associated with physiological calming and the activation of the parasympathetic nervous system 3942. While the onset of tears shares some initial arousal markers with chills - such as deep breathing and a momentary acceleration in heart rate - they are uniquely characterized by a subsequent, pronounced slowing of the respiration rate 39.

Furthermore, unlike chills, tearful responses lack the sharp electrodermal spikes in skin conductance 39. This specific combination of physical markers - initial cardiac acceleration followed by sustained respiratory slowing and autonomic calming - indicates a trajectory of tension followed by profound release. This physiological pattern is consistent with the psychological concept of catharsis, which may explain why tears induced by sad music are often reported as deeply comforting rather than distressing 283944.

| Physiological / Neural Marker | Music-Induced Chills (Frisson) | Music-Induced Tears (Catharsis) |
|---|---|---|
| Autonomic Nervous System | Sympathetic dominance (Fight/Flight/Arousal) 3940 | Parasympathetic dominance (Rest/Digest/Calming) 3942 |
| Respiration | Deep breathing, increased rate 3239 | Deep breathing, followed by decreased rate (slow respiration) 39 |
| Electrodermal Activity (EDA) | Sharp increase at peak onset 39 | No significant increase at peak onset 39 |
| Heart Rate (HR) | Sustained acceleration 3239 | Brief acceleration followed by calming 39 |
| Subjective Valence | Associated predominantly with positive emotional arousal and reward 40 | Associated with sadness, grief, relief, or "pleasure from sadness" 3945 |
| Primary Neurochemicals | High dopamine release (Striatum) 32333943 | Putative roles for oxytocin; inconclusive data on prolactin 41424647 |

Endocrine Hypotheses for the Enjoyment of Sad Music

The widespread enjoyment of sad music presents an evolutionary paradox: why do humans intentionally seek out acoustic stimuli that mimic social loss, and why does this simulated grief routinely produce pleasure? To explain this phenomenon, researchers have investigated the potential roles of various neurochemicals and endocrine hormones.

The Prolactin Hypothesis and Consolatory Release

The most prominent framework attempting to explain the pleasure of music-induced sadness is the Prolactin Hypothesis, proposed by David Huron 4448. Prolactin is a hormone secreted by the anterior pituitary gland, under hypothalamic control, in response to psychological stress, grief, and the shedding of emotional tears 4648. In genuine traumatic situations, prolactin serves to console the individual and counteract mental pain, encouraging attachment and pair-bonding 48. Huron theorized that sad music tricks the brain into simulating real sadness, thereby triggering a compensatory, consolatory release of prolactin. Because the listener is in a safe, aesthetic context and is not experiencing genuine trauma, the consoling effects of the hormone are experienced as pure tranquility or pleasure 48.

Despite its theoretical elegance, empirical testing has largely failed to support the prolactin hypothesis. In a controlled experiment measuring serum prolactin concentrations in 39 participants listening to sad and happy music, researchers found no significant increase in prolactin following exposure to sad music 46. Furthermore, the subjective pleasure of listening to sad music showed no correlation with elevated prolactin levels. Surprisingly, nominally happy music was found to decrease prolactin in some listeners, suggesting that the hormonal mechanism is far more complex than a simple stress-consolation reflex 46. Other studies investigating adrenocorticotropic hormone (ACTH), a stress biomarker, found that active group singing reduces ACTH, contributing to states of relaxation and "social flow," but these reductions are not strictly tied to the sadness of the music 49.

Oxytocin, Empathy, and Social Affiliation

Consequently, researchers have turned their attention to oxytocin, a neuropeptide central to social bonding, empathy, and maternal behavior, to explain the profound emotional resonance of music 4142. Theories of emotional contagion suggest that music mimics human vocalizations of distress; hearing a sad melody engages the brain's neural mirroring system, eliciting affective empathy and potentially stimulating oxytocin release 4144.

However, empirical data regarding music and oxytocin remains highly context-dependent and somewhat inconsistent. Studies assessing salivary and serum oxytocin have found that collaborative, group musical activities - such as choral singing or vocal jazz improvisation - frequently elevate oxytocin levels and promote social bonding and trust 47495051. Yet, listening to sad music in isolation yields contradictory oxytocin responses, with some studies showing decreases rather than increases 4751. Current scientific consensus suggests that music-induced oxytocin fluctuations are highly sensitive to the social environment, the presence of interpersonal interaction, and the empathic trait level of the listener. Therefore, oxytocin acts more as a broad modulator of social affiliation than a direct, isolated cause of the tearful response to solo listening 41475052.

Large-Scale Brain Networks and Emotional Construction

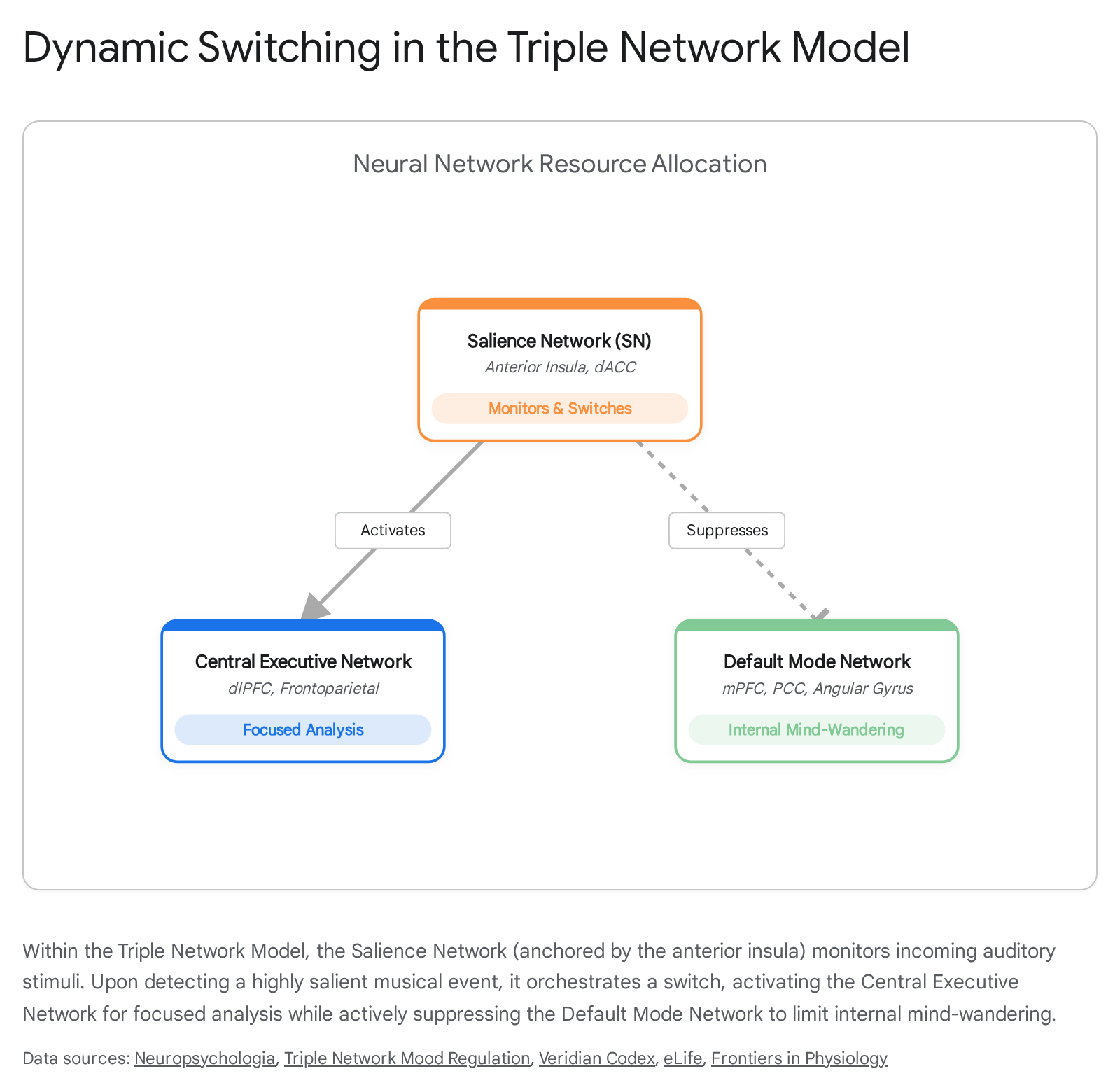

Beyond specific anatomical nodes like the amygdala or hormonal systems, the cognitive processing of music is governed by large-scale functional architectures. The integration of acoustic features with memory, emotion, and attention is best modeled by the Triple Network Model 53545556. This model posits that high-level cognition and emotional regulation depend on the dynamic interplay of three core resting-state networks:

- The Salience Network (SN): Anchored by the anterior insula and the dorsal anterior cingulate cortex (dACC), the SN acts as a sensory-emotional surveillance system. It continuously monitors external and internal stimuli, assigning importance and detecting behaviorally relevant acoustic events, structural violations, or profound emotional shifts in the music 535455.

- The Central Executive Network (CEN): A frontoparietal network responsible for working memory, focused attention, and the active, analytical manipulation of musical expectations 535455.

- The Default Mode Network (DMN): Comprising the medial prefrontal cortex (mPFC), posterior cingulate cortex (PCC), and angular gyrus, the DMN is traditionally associated with resting states, mind-wandering, autobiographical memory retrieval, and self-referential thought 555758.

During active music listening, the Salience Network serves as the master switch, mediating resource allocation between the externally oriented CEN and the internally oriented DMN 5565.

Interestingly, the emotional valence of the music dictates which network dominates. Probe-caught thought sampling during fMRI scans demonstrates that exposure to sad music significantly increases the centrality and activation of DMN nodes, pulling the listener's attention inward and triggering spontaneous, self-referential cognitive processes 6659. Happy or highly rhythmic music, conversely, tends to recruit the CEN for external tracking and motor engagement.

The Default Mode Network and Discrete Emotion Construction

The role of the DMN extends far beyond simple mind-wandering. Recent advances in affective neuroscience increasingly view the DMN through the lens of constructionist emotion theory. This theory argues that discrete emotions (e.g., fear, sadness, awe) are not biologically hardwired, modular circuits housed exclusively in subcortical limbic regions. Rather, discrete emotional experiences are conceptual constructions synthesized dynamically by the DMN 57586061.

By integrating raw, incoming sensory affect (generalized valence and physiological arousal generated by the limbic system) with past episodic memories, semantic knowledge, and social context, the DMN categorizes the auditory stimulus into a distinct, culturally recognizable emotional experience 576061. Tracking brain state transitions using hidden Markov models reveals that regions along the temporoparietal axis actively reflect transitions between music-evoked emotional states, modulating their responses based on the preceding emotional context 6263. When music moves a listener to tears, it is because the DMN has successfully woven the acoustic properties of the song - its minor intervals, its slowing tempo, its timbral texture - into an abstract narrative that is deeply and inextricably intertwined with the listener's autobiographical identity and episodic memory 5862.