Model theory and the classification of mathematical structures

Syntactic Formalisms and Semantic Realizations

Model theory functions at the precise intersection of universal algebra and metamathematics, operating as the formal mathematical study of the relationship between logical languages and the mathematical structures that give them meaning. In its most rigorous formulation, model theory investigates how abstract syntactic descriptions - such as axioms, formulas, and complete logical theories - manifest structurally as geometric, algebraic, or combinatorial entities. The discipline treats classes of mathematical structures, ranging from algebraically closed fields and torsion-free groups to arbitrary random graphs, not merely as isolated geometric objects of study, but as the semantic realizations of formal logical theories.

The core apparatus of model theory rests upon the formal separation, and subsequent reunification, of syntax and semantics. Syntactic objects are the uninterpreted strings of symbols generated by the recursive rules of a formal language, while semantic objects are the set-theoretic structures in which these strings are evaluated for truth. A theory is defined syntactically as a set of sentences in a specific formal language; a model of that theory is defined semantically as a structure wherein every sentence belonging to the theory evaluates to true 12. By defining classical mathematical concepts through this dual lens, model theory allows for the direct translation of complex algebraic and geometric problems into logical terms, where general metamathematical theorems regarding formal languages can be applied to yield profound algebraic results.

The architecture of first-order model theory relies on a rigorous mapping between the formal syntax of first-order logic and the set-theoretic realities of mathematical structures. A signature, often denoted by the symbol $\sigma$ or $L$, specifies the vocabulary of the language. This vocabulary consists of constant symbols, function symbols, and relation (or predicate) symbols, each assigned a specific fixed arity 1. When a signature is interpreted semantically, it generates a structure. A structure consists of a non-empty set representing the domain of elements (the universe), together with specific designated elements, set-theoretic operations, and relations on that domain corresponding perfectly to the symbols in the signature 12. Through Alfred Tarski's recursive definition of truth, syntactic formulas are transformed into geometric or set-theoretic definable sets within the domain of the structure.

| Syntactic Concept (Formal Logic) | Semantic Equivalent (Model Theory) | Description of the Functional Relationship |

|---|---|---|

| Signature ($L$ or $\sigma$) | Structure ($A$ or $M$) | The signature provides the vocabulary (constants, functions, relations); the structure provides the domain and the concrete mathematical interpretations of that vocabulary 1. |

| Sentence ($\phi$) | Model Satisfaction ($A \models \phi$) | A sentence is a well-formed formula containing no free variables. A structure is a model of the sentence if the sentence evaluates to true within the structure's domain 12. |

| Formula with free variables ($\phi(x_1, \dots, x_n)$) | Definable Set ($X \subseteq A^n$) | A formula containing free variables corresponds semantically to the specific set of $n$-tuples in the structure's domain that satisfy the exact conditions of the formula 1. |

| Theory ($T$) | Class of Models ($Mod(T)$) | A theory is a coherent collection of sentences. Its semantic counterpart is the entire class of mathematical structures that simultaneously satisfy every sentence in the collection 12. |

| Syntactic Completeness | Complete Theory ($Th(A)$) | A theory is syntactically complete if it contains either $\phi$ or $\neg\phi$ for every valid sentence in the language. Semantically, this equates to the set of all true sentences for a specific structure $A$ 1. |

| Quantifier Elimination | Model-Completeness | If a theory algebraically eliminates quantifiers, every formula is logically equivalent to a quantifier-free one. Semantically, this implies every embedding between models is an elementary embedding 1. |

The Compactness Theorem and First-Order Constraints

The foundational analytic power of first-order model theory derives largely from the Compactness Theorem. This fundamental theorem states that a set of first-order sentences has a satisfying model if and only if every finite subset of that set has a model 34. The immediate consequence of this theorem is that first-order logic is inherently limited in its ability to restrict or uniquely specify the cardinality of infinite structures. If a theory possesses arbitrarily large finite models, compactness dictates that it must also possess an infinite model. Similarly, if a theory has at least one infinite model, the Upward Löwenheim-Skolem Theorem guarantees that it possesses models of every possible infinite cardinality 5.

While this inability to control infinite cardinality acts as a limitation in descriptive power - meaning first-order logic cannot uniquely characterize the standard natural numbers or the real numbers up to isomorphism - it functions simultaneously as a powerful constructive tool for mathematical discovery 5. By artificially expanding a language with new constant symbols and asserting through an infinite set of axioms that a newly introduced element is larger than any standard integer, the Compactness Theorem forces the mathematical existence of non-standard models.

This specific mechanism provides the rigorous logical foundation for Abraham Robinson's non-standard analysis. Robinson demonstrated that calculus and classical analysis could be formalized using hyperreal numbers and actual infinitesimals, avoiding the traditional limit definitions by utilizing models of the real numbers that encompass both infinitely large and infinitely small elements 36. Furthermore, the realization that researchers can move fluidly between elementarily equivalent models to transfer mathematical truths is a cornerstone of modern applied model theory. If a theorem is proven true in one model of a complete theory, the semantics of first-order logic guarantee its absolute truth in all elementarily equivalent non-standard models, enabling mathematical breakthroughs across divergent subfields 36.

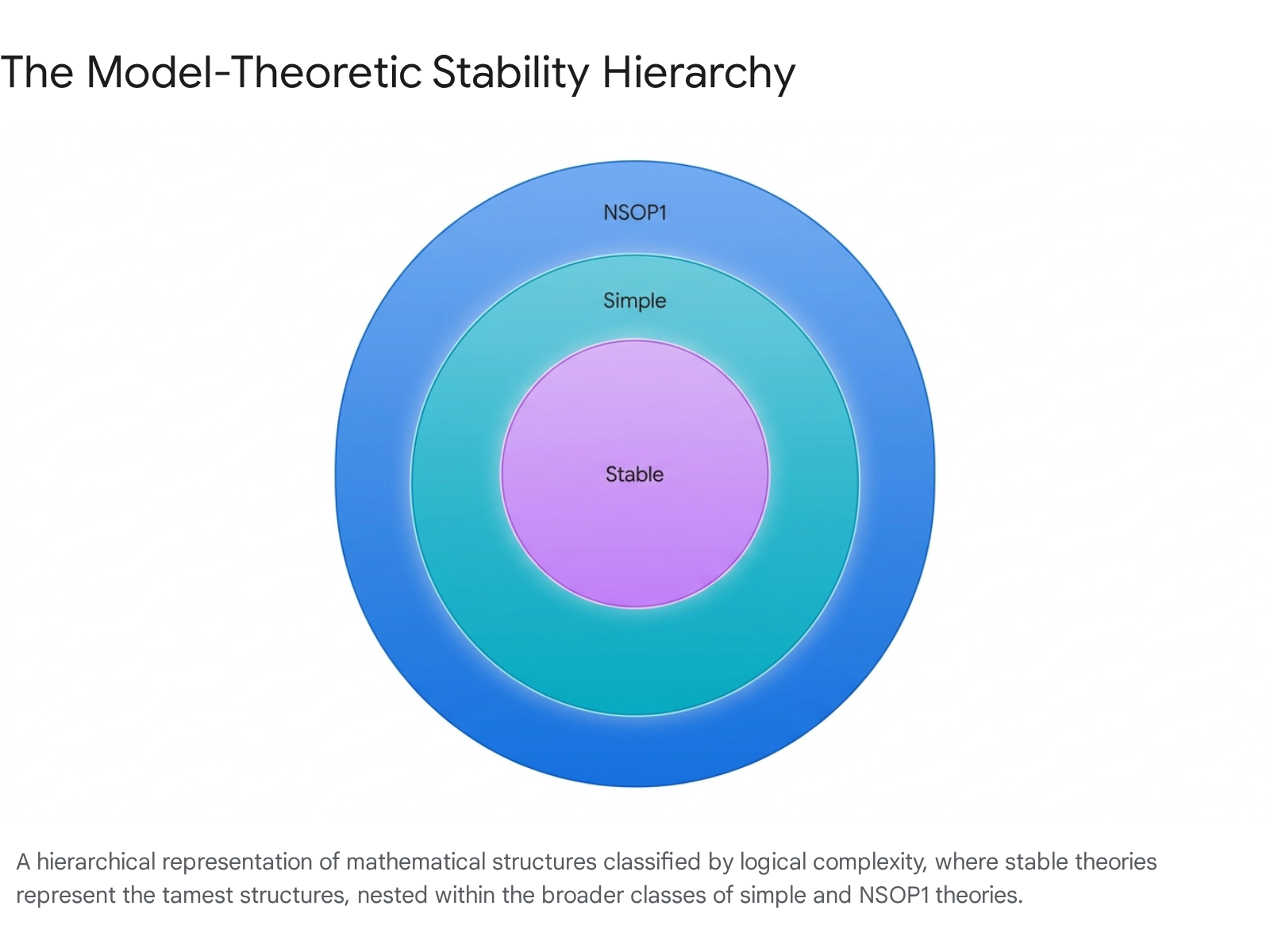

Classification Theory and the Stability Hierarchy

Beginning in the 1970s, Saharon Shelah initiated a massive, systematic program known as Classification Theory, which fundamentally shifted the focus of model theory from the study of arbitrary individual theories to the systemic classification of entire classes of theories based on the combinatorial complexity of their definable sets 789. The primary goal of this sprawling research program was to identify strict "dividing lines" - combinatorial logical properties such that theories possessing the property exhibit wild, mathematically unclassifiable behavior (often termed Gödelian complexity or strict independence), while theories lacking the property are considered "tame" and admit a deep, highly regular structure theory 79. This ongoing effort led directly to the development of the model-theoretic stability hierarchy, classifying mathematical universes by their inherent logical tractability.

Stable Theories and Non-Forking Independence

Stable theories represent the tamest, most highly structured sector of the model-theoretic universe. A theory is designated as stable if it does not possess the order property. The order property implies that one can define an infinite, linearly ordered chain of elements using a specific first-order formula within a model of the theory 89. Equivalently, from a topological perspective, a theory is stable if the number of pure types over any given parameter set is strictly controlled, completely avoiding the exponential explosion of types that characterizes mathematically unstable theories.

The structural hallmark of stability is the mathematical guarantee of a well-behaved notion of independence, which generalizes classical linear independence in vector spaces or algebraic independence in algebraically closed fields 8. In stable theories, the operation of non-forking independence is symmetric, transitive, and satisfies the vital property of local character. Local character ensures that a complex type defined over an arbitrarily large parameter set does not fork over a suitably small subset of those parameters, restricting the flow of information to finite or countable bounds. Classic, frequently studied examples of stable theories include the theory of algebraically closed fields, the theory of infinite vector spaces, and the theory of torsion-free abelian groups 9. The robust deep structure of stable theories allows mathematicians to define dimension and rank rigorously (such as Morley rank or Lascar rank), permitting deep geometric arguments to replace cumbersome combinatorial proofs 1011.

Simple Theories and the Tree Property

Simple theories form a substantially broader mathematical class that strictly contains all stable theories. Introduced initially by Shelah and developed extensively by Byunghan Kim and Anand Pillay, a theory is categorized as simple if no formula possesses the combinatorial "tree property" 1213. A formula is said to possess the tree property if it can be utilized to construct an infinite, branching hierarchical tree of parameters where paths straight through the tree are logically consistent, but any sibling nodes at the exact same level of the tree are mutually inconsistent (often defined mathematically as $k$-inconsistent for some integer $k$) 913.

In simple theories, the strict definition of non-forking independence retains its exceptionally excellent behavior, fulfilling the axioms of a generalized independence relation formally known as the Kim-Pillay theorem 814. Non-forking in simple theories successfully satisfies symmetry, transitivity, extension, local character, and the Independence Theorem (which allows for the disjoint amalgamation of types over models) 1213. However, unlike fully stable theories, simple theories are capable of interpreting pseudo-random phenomena. The prototypical example of a simple but strictly unstable theory is the theory of the random graph (often called the Rado graph or the Erdős - Rényi graph), where the notion of independence is given simply by set-theoretic disjointness 915. Another foundational, naturally occurring example is ACFA, the theory of algebraically closed fields equipped with a generic field automorphism, a theory which heavily influenced the subsequent development of applied simple model theory 14.

Within the simple regime, researchers have heavily investigated "strictly simple" theories - theories that are simple but fail to be supersimple. A theory is supersimple if it admits a well-founded rank function (like SU-rank) that assigns an ordinal dimension to every type, preventing infinite descending chains of forking extensions 16. For years, a persistent open question in this specific domain was the Koponen conjecture. The conjecture posited that every simple theory that admits quantifier elimination in a finite relational language must automatically be supersimple, possess finite rank, and be one-based 1617.

Recent analytical breakthroughs have successfully resolved the Koponen conjecture affirmatively. The complex proofs rely heavily on deep analyses of indiscernible sequences over finite sets. For simple theories that fail to be supersimple (the strictly simple case), model theorists introduced entirely new numerical invariants, including $n$-resolvability and the G-rank (or FMb-rank), which successfully generalize the concept of canonical bases to the non-supersimple landscape 16. By rigorously demonstrating that a strictly simple theory would invariably produce infinitely many distinguishable indiscernible sequences over a finite set, researchers exposed a direct contradiction with the assumption of quantifier elimination in a finite relational language. This geometric contradiction mathematically forces any such theory to collapse back down into the supersimple hierarchy 1617.

Neostability and NSOP1 Theories

Moving beyond the boundaries of simple theories lies the rapidly expanding, highly active frontier of neostability theory, specifically focusing on the study of NSOP1 theories. SOP1 stands for the "Strict Order Property of kind 1," which describes another specific, highly complex configuration of formulas forming specific trees of inconsistencies 141718. A theory is classified as NSOP1 if it lacks this debilitating property. The overarching class of NSOP1 theories strictly contains all simple theories and represents the current recognized boundary where a robust, global notion of independence can still be meaningfully formulated and utilized by mathematicians 1214.

In NSOP1 theories, the standard notion of non-forking independence frequently breaks down, losing its crucial symmetry. To rectify this, a modified notion called Kim-independence - defined by analyzing dividing only with respect to specific, well-behaved invariant Morley sequences (known as Kim-dividing) - emerges as the mathematically correct geometric tool 141820. In an NSOP1 theory, this Kim-independence satisfies symmetry, existence, and the Independence Theorem over models 1218.

The critical, defining divergence from simple theories is that Kim-independence in NSOP1 theories fails to satisfy base monotonicity. Base monotonicity is the foundational property dictating that if a tuple $A$ is independent from $B$ over a base set $C$, and $D$ is merely a subset of $B$, then $A$ must remain independent from $B$ over the larger combined base $C \cup D$. The failure of base monotonicity in NSOP1 theories means that artificially adding parameters to the base can spontaneously introduce unwanted dependencies, creating significant complications for algebraic closure 1420.

| Stability Class | Defining Logical Property | Characteristics of Independence | Prototypical Examples |

|---|---|---|---|

| Stable Theories | No Order Property. Strict bound on type counting. | Non-forking is symmetric, transitive, and features robust local character. | Algebraically closed fields, vector spaces 89. |

| Simple Theories | No Tree Property. Allows pseudo-randomness. | Non-forking satisfies the Independence Theorem and base monotonicity. | The random graph, ACFA (fields with generic automorphisms) 1315. |

| NSOP1 Theories | No Strict Order Property 1. | Kim-independence is symmetric but strictly fails base monotonicity. | ACFH, generic incidence structures, Frobenius fields 121419. |

Natural, mathematically occurring examples of strictly NSOP1 theories (meaning theories that are NSOP1 but completely fail to be simple) have been discovered and analyzed relatively recently. A prime, heavily studied example is ACFH, which acts as the model companion of the theory of fields expanded by a unary function representing a multiplicative endomorphism 14. Other prominent examples include infinite vector spaces equipped with a generic bilinear form, algebraically closed fields of positive characteristic combined with a generic additive subgroup, and generic incidence structures such as generic projective planes or bipartite graphs omitting a fixed complete bipartite graph $K_{m,n}$ 9131920. The intricate study of Kim-forking in these specific algebraic contexts relies heavily on tracking the interaction between standard field-theoretic separable algebraic closure and the pseudo-random, generic behavior of the added topological operators 142020.

Geometric Model Theory and Categoricity

While classification theory categorizes the wildness of definable sets, geometric model theory focuses exclusively on structures whose definable sets are so tightly constrained that the global isomorphism type of the models is completely determined by a single, trackable dimensional invariant. This study originates mathematically with the concept of categoricity.

Strongly Minimal Sets and Pregeometries

A first-order theory is defined as categorical in a specific cardinal $\kappa$ if, up to algebraic isomorphism, it possesses exactly one model of size $\kappa$ 1621. In the early 1960s, Michael Morley stunned the mathematical logic community by successfully proving Los's conjecture: if a countable first-order theory is categorical in any one uncountable cardinal, it is automatically categorical in all possible uncountable cardinals 1621.

Morley's deep analysis revealed that this phenomenon of uncountable categoricity is consistently driven by the existence of a highly regular, one-dimensional core within the models, mathematically known as a strongly minimal set. A definable set is strongly minimal if every single definable subset of it is either strictly finite or co-finite (meaning its complement within the set is finite) 322. In strongly minimal sets, the algebraic closure operator behaves exceptionally well, mirroring linear span in vector spaces or algebraic closure in fields, and thus satisfying the crucial Steinitz exchange property 10. This behavior yields a well-behaved pregeometry, allowing model theorists to assign a unique, integer-valued or infinite dimension to any model based on the cardinality of an independent basis 2324. The isomorphism type of an uncountable model is entirely, exclusively determined by this specific dimension.

Zilber's Trichotomy and Hrushovski's Construction

Russian mathematician Boris Zilber sought to deeply classify the exact geometric nature of all possible strongly minimal sets. Through his research, he observed that all known, naturally occurring strongly minimal sets fell exclusively into three distinct geometric categories based on the specific combinatorial behavior of their closure operators: 1. Trivial (or degenerate): The closure operation is completely flat; the closure of any set is simply the union of the closures of its individual singletons (e.g., a set possessing no structure other than basic equality). 2. Locally Modular (vector-space-like): The geometry is flat and linear, perfectly isomorphic to the geometry of affine or projective spaces operating over a division ring. 3. Field-like: The geometry is highly non-linear and mathematically capable of interpreting a fully algebraically closed field 32123.

Zilber's famous Trichotomy Conjecture asserted that these are the absolute only three possibilities in the mathematical universe. If proven true, it would imply that any non-linear strongly minimal set must secretly harbor the hidden structure of a mathematical field, binding abstract model-theoretic complexity directly and inextricably to classical algebraic geometry 25.

However, in a monumental mathematical event, Ehud Hrushovski definitively refuted the conjecture by successfully constructing a completely new, artificial strongly minimal set that was flat but strictly not locally modular, and demonstrably did not interpret a field 2124. Hrushovski achieved this by radically generalizing the classical Fraïssé limit construction. He introduced a sophisticated predimension function, typically denoted $\delta$, defined as the number of elements in a finite set minus the number of relations acting upon those elements 1516. By meticulously adjusting the amalgamation constraints to only allow "strong substructures" (where no extension can drop the predimension below zero), Hrushovski produced a strictly simple, $\aleph_0$-categorical structure whose combinatorial geometry was entirely novel and unprecedented 1516. This Hrushovski construction demonstrated conclusively that the model-theoretic universe was vastly richer and stranger than standard classical algebraic examples had previously suggested.

Despite the firm refutation of the conjecture in the abstract, pathological case, the Trichotomy Conjecture was brilliantly salvaged when restricted to specific topological and algebraic contexts. Hrushovski and Zilber collaboratively proved that if a strongly minimal set carries a suitable topology - forming what they termed a "Zariski geometry" - the original trichotomy holds perfectly without exception 1025. Thus, in contexts actually resembling classical geometry, any failure of local modularity mathematically guarantees the presence of an algebraically closed field.

Quasiminimal Excellence and Complex Exponentiation

Following the resolution of the Zariski geometries, Zilber dramatically shifted his focus to the field of complex numbers formally equipped with the exponential function, denoted as $(\mathbb{C}, +, \cdot, \exp)$. First-order logic is structurally inadequate for characterizing this specific structure because the periodic nature of the exponential function allows the direct definability of the integers $\mathbb{Z}$ (as the kernel of the exponential map is exactly $2\pi i \mathbb{Z}$) 2122. Because the integers $\mathbb{Z}$ are definable, Gödel's incompleteness theorems apply forcefully, meaning the first-order theory of complex exponentiation is inherently subject to Gödelian undecidability, is highly unstable, and mathematically cannot be uncountably categorical 2122.

To bypass this Gödelian intractability, Zilber proposed studying complex exponentiation using a powerful infinitary logic known as $L_{\omega_1, \omega}(Q)$. This specific logic permits countably infinite conjunctions and disjunctions, and introduces a specialized quantifier $Q$ denoting "there exist uncountably many" 2126. By mathematically fixing the kernel of the exponential map to a standard, invariant copy of the integers $\mathbb{Z}$, Zilber formulated the ambitious "quasiminimal excellent program."

A mathematical structure is defined as quasiminimal if every definable set within it is either strictly countable or co-countable 22. An excellent class possesses a robust combinatorial geometry where the closure operator satisfies the countable closure condition (the closure of a countable set remains countable), and exact amalgamation over independent, free configurations is absolutely guaranteed 21. Zilber successfully axiomatized a specific class of "pseudo-exponential fields" and proved rigorously that this class has exactly one unique model in every uncountable cardinality, thereby establishing uncountably categorical axioms using infinitary logic 212629.

Zilber famously conjectured that the unique, uncountably categorical model of size $2^{\aleph_0}$ in this quasiminimal excellent class is exactly isomorphic to the standard complex exponential field $(\mathbb{C}, +, \cdot, \exp)$ 2129. Proving this isomorphism remains one of the deepest, most elusive open questions in geometric model theory today, as its resolution requires verifying extremely deep number-theoretic hypotheses, including a continuous algebraic analogue of Schanuel's conjecture 29. It is worth noting that subsequent structural simplifications by model theorists Martin Bays, Bradd Hart, Tapani Hyttinen, Meeri Kesälä, and Jonathan Kirby proved that the highly complex "excellence" axiom in Zilber's original formulation is actually redundant; it follows automatically from the other, simpler axioms of quasiminimality and geometric closure 292731.

Applied Model Theory in Algebra and Geometry

The precise translation of logical stability and geometric structure into the realm of classical algebra has led directly to the resolution of several massive, long-standing mathematical conjectures. Applied model theory relies heavily on the aforementioned transfer principle: if two structures share the exact same complete first-order theory, any first-order sentence proven true in one structure is absolutely, incontrovertibly true in the other 332.

Valued Fields and the Ax-Kochen Theorem

One of the earliest, most spectacular, and most widely cited triumphs of applied model theory is the Ax-Kochen theorem, developed collaboratively by mathematicians James Ax and Simon Kochen in 1965 33. The theorem addresses a famous conjecture proposed by Emil Artin regarding the solvability of Diophantine equations over $p$-adic fields ($\mathbb{Q}_p$). Artin hypothesized that any homogeneous polynomial of degree $d$ featuring $n$ distinct variables, where $n > d^2$, must possess a non-trivial zero in $\mathbb{Q}_p$ 33.

Ax and Kochen proved this conjecture is mathematically true for all "sufficiently large" primes $p$ relative to the given degree $d$ 33. They achieved this historic result not through direct, laborious number-theoretic manipulation, but by establishing a profound logical isomorphism between ultraproducts of $p$-adic fields and ultraproducts of formal power series fields operating over finite fields, denoted $\mathbb{F}_p((t))$ 3233.

By analyzing the first-order theory of Henselian valued fields, Ax and Kochen showed that the logical properties of the valued field are entirely and uniquely determined by the properties of its underlying residue field and its value group 33. Since the complex statement regarding polynomial roots is expressible as a first-order sentence, and the function field analog $\mathbb{F}_p((t))$ was already independently known to satisfy the property, the transfer principle allowed Ax and Kochen to logically pull the truth of the statement directly back into the $p$-adic context 53233. This structural, logic-first approach demonstrated conclusively to the broader mathematical community that logical compactness, ultrafilters, and ultraproducts were not mere set-theoretic novelties, but highly essential tools for modern quantitative number theory.

Differential Fields and the Manin-Mumford Conjecture

Model theory's intersection with algebraic geometry deepened significantly through the rigorous study of differential and difference fields. A differentially closed field of characteristic zero, denoted $DCF_0$, serves as the unique model companion of the theory of fields equipped with a formal derivation operator 24. This specific theory is highly tame, classified as $\omega$-stable, and admits a robust, highly developed theory of Morley rank 24.

Within $DCF_0$, the geometries of strongly minimal sets are highly restricted. Hrushovski and Pillay definitively proved that any strongly minimal set existing within a differentially closed field is either locally modular (vector-space-like) or strictly non-orthogonal to the constants (the specific subfield where the derivative operator equals zero) 24.

This rigid geometric dichotomy was deeply instrumental in Hrushovski's celebrated model-theoretic proof of the function-field analog of the Mordell-Lang and Manin-Mumford conjectures 2324. The classical Manin-Mumford conjecture concerns the precise intersection of a specific subvariety $X$ of an Abelian variety $A$ with the group of torsion points belonging to $A$ 23. Hrushovski reformulated the entire problem within the sophisticated model theory of difference fields, utilizing the theory ACFA 2328. By applying the Zariski geometry trichotomy theorem directly to difference equations, he demonstrated that a failure of local modularity implies the existence of an underlying field. However, the specific algebraic dynamics of the difference operator acting on the torsion points severely restrict the possible geometric configurations. The logical orthogonality existing between the modular generic types and the base field yielded the desired, highly sought-after finiteness results for intersections with torsion points, fundamentally proving the deep geometric conjecture using exclusively the formal language of stability and independence 2324.

Continuous Logic and Metric Structures

While classical model theory relies entirely on a strict, binary truth assignment (where every statement is absolutely true or absolutely false), modern applied mathematics frequently deals with continuous, analytic structures such as Banach spaces, $C^*$-algebras, operator spaces, and probability measure spaces 435. To analyze these complex spaces logically without losing structural fidelity, researchers developed the framework of continuous first-order logic.

In continuous logic, the discrete binary truth set ${T, F}$ is entirely replaced by the compact real interval $[0, 1]$ 4. Formulas are evaluated not as Boolean propositions, but as continuous functions mapping from the mathematical structure directly into $[0, 1]$, where the numerical value $0$ typically represents "absolute truth" (distance zero) 4. A metric structure in this paradigm consists of a complete metric space equipped exclusively with uniformly continuous functions and relations 4.

The transition from discrete to continuous logic carefully preserves the most powerful, foundational tools of classical model theory. The vital compactness theorem holds firmly, albeit in a slightly shifted topological sense: a set of continuous conditions is considered satisfiable if and only if every finite subset of it is satisfiable up to an arbitrarily small error 4. Furthermore, the entire architecture of stability theory translates seamlessly into the continuous domain. Researchers have established direct Kim-Pillay style theorems for continuous logic, allowing for the precise classification of metric structures into stable, simple, and NSOP1 regimes just as in the discrete universe 48.

Essential Continuity and Randomization

A recurring, central theme in continuous model theory is the concept of "essential continuity" - a loosely defined but crucial notion describing continuous theories that entirely lack any definable infinite discrete structures 3536. To bridge the gap between classical discrete logic and these continuous spaces, H. Jerome Keisler introduced the "randomization" of a first-order structure. This construction effectively builds a probability measure space directly over the models of a discrete theory 36.

If $T$ is a standard, classical first-order theory, its continuous randomization $T^R$ treats the definable sets of $T$ as measurable events in a probability space. The continuous truth value of a formula then becomes the exact probability that the formula holds. Extensive research has shown that model-theoretic stability is strictly preserved under this randomization process: if $T$ is a stable theory, then $T^R$ remains formally stable 36. However, the property of simplicity is notoriously fragile under this specific operation. The randomization of any unstable theory possessing the independence property (IP) automatically generates the tree property of the second kind (TP2) in the randomized structure. Because of this mathematically inevitable emergence of TP2, no randomized IP theory can ever be strictly simple 3536.

Finding an essentially continuous, strictly simple theory was a long-standing, difficult open question in continuous logic. It was finally resolved by researcher James Hanson, who constructed the highly complex theory of richly branching $\mathbb{R}$-forests equipped with generic binary predicates. This breakthrough proved conclusively that strict simplicity can indeed exist in continuous metric spaces that are wholly and completely devoid of any infinite discrete substructures 3529.

Finite Model Theory and Computational Complexity

While infinite model theory thrives on the vast power of the compactness theorem, finite model theory restricts its universe exclusively to finite structures (such as finite graphs, finite groups, or relational databases). Over finite structures, the compactness theorem fails spectacularly, and with it, both the upward and downward Löwenheim-Skolem theorems collapse 2130. The loss of these foundational tools necessitated a completely different mathematical approach, ultimately linking finite model theory intimately to theoretical computer science, database theory, and computational complexity theory 39.

Descriptive Complexity and Fagin's Theorem

Finite model theory maps logical syntax directly to algorithmic complexity classes, a highly specialized area known as descriptive complexity. The foundational result in this domain is Fagin's Theorem, published in 1974, which established an exact, undeniable correspondence between the computational complexity class NP (Nondeterministic Polynomial time) and existential second-order logic (ESO) 39.

Fagin proved mathematically that a property of finite structures (such as graph colorability, the Hamiltonian path problem, or Boolean satisfiability) can be computed by a non-deterministic Turing machine in polynomial time if and only if that exact property can be defined purely by an existential second-order formula 39. This profound, paradigm-shifting result removed the mechanical concept of the Turing machine from the definition of NP entirely, redefining computational complexity not as a measure of time or memory, but as an inherent measure of logical expressivity. The ongoing resolution of the legendary P vs. NP problem is therefore entirely equivalent to determining whether existential second-order logic is strictly more expressive than the specific logic corresponding to P (which, over ordered finite structures, is identified as Least Fixed Point logic) 3940.

Constraint Satisfaction Problems and Universal Algebra

Finite model theory also heavily underpins the rigorous study of Constraint Satisfaction Problems (CSPs). A standard CSP asks computationally whether a set of variables can be assigned specific values from a strictly finite domain such that a given set of relations (the constraints) is simultaneously satisfied. Complex database query evaluation, particularly the containment and execution of conjunctive queries in Datalog engines, is functionally and mathematically equivalent to solving massive CSPs 3041.

Model theorists and theoretical computer scientists established the Algebraic Dichotomy Conjecture for CSPs, asserting that every single CSP operating over a finite template is either universally solvable in polynomial time (P) or is definitively NP-complete, with absolutely no intermediate complexity classes existing between them 4142. The eventual proof of this massive dichotomy relied entirely on the intersection of universal algebra and finite model theory. By associating a specific finite relational structure directly with its algebra of polymorphisms (operations that perfectly preserve the relations), researchers demonstrated that the computational hardness of any CSP is completely, invariably determined by the specific algebraic identities satisfied by its polymorphism clone 4142. Further ongoing research currently extends these finite dichotomies into the realm of infinite-domain CSPs by studying the reducts of finitely bounded homogeneous structures using the advanced tools of $\omega$-categorical model theory 4142.

Historical Development and Academic Traditions

The explosive growth of model theory into a unified, foundational framework for algebraic geometry and logic was largely driven by two highly distinct, initially parallel academic traditions that eventually converged in the late 20th century: the French school and the Russian school.

The French School of Model Theory

The French school of model theory, deeply rooted in the prestigious academic institutions of Paris (such as the University of Paris VII and the École Normale Supérieure), approached logic with the intense structuralist rigor highly characteristic of the Bourbaki group 313245. Mathematicians such as Bruno Poizat were instrumental in systematically rewriting early Anglo-American model theory through an explicit, uncompromising algebraic lens 46.

The French approach historically abhorred treating model theory as mere syntactic manipulation or Boolean bookkeeping, instead heavily emphasizing the philosophy of "groups everywhere" and focusing relentlessly on the intrinsic geometric nature of definable sets 33. Their meticulous, algebra-first work laid the vital mathematical foundation for stable group theory. This allowed researchers to rigorously analyze algebraic groups via Morley rank, a framework which later proved absolutely vital to providing the infrastructure for Hrushovski's geometric proofs regarding the Mordell-Lang conjecture 46.

The Russian School of Model Theory

Concurrently, the Russian school of mathematics, influenced heavily by early figures like A.A. Markov and later driven aggressively by Boris Zilber and Mikhail Gromov, brought a highly unique blend of combinatorial geometry, physical intuition, and constructivist philosophy to the field of model theory 463348.

Zilber's groundbreaking work at the highly complex interface of model theory and complex analysis (specifically his development of Zariski geometries and the quasiminimal excellence program) perfectly exemplified the Russian school's tendency to seek deep, unitary, overarching structures behind seemingly disparate mathematical and physical phenomena 463348. The Russian approach was highly comfortable integrating topological dynamics and infinitary logics like $L_{\omega_1, \omega}$ directly into classification problems. When the profound geometric and analytic insights of the Russian school formally merged with the robust classification apparatus developed by Shelah and subsequently refined by the French and Anglo-American schools, modern applied geometric model theory was officially born, cementing the discipline as an indispensable pillar of modern structural mathematics.