Mathematical models of mental illness

The Paradigm Shift in Psychiatric Nosology

For decades, the standard classification systems in clinical psychiatry, most notably the Diagnostic and Statistical Manual of Mental Disorders (DSM) and the International Classification of Diseases (ICD), have categorized psychiatric illnesses based on qualitative groupings of self-reported symptoms and externally observed behaviors 123. While these diagnostic frameworks have successfully provided a common clinical language, they suffer from profound limitations regarding construct and predictive validity. Symptoms are highly heterogeneous within a single diagnostic category, and extensive comorbidity exists across distinct categorical boundaries, leading to diagnostic instability and trial-and-error treatment protocols 24. Furthermore, traditional nosology offers limited insight into the underlying biological, neural, or cognitive mechanisms driving these symptoms, leaving a persistent explanatory gap between fundamental neuroscience and clinical phenomenology 15.

In response to these diagnostic limitations, alternative frameworks such as the Research Domain Criteria (RDoC) and the Hierarchical Taxonomy of Psychopathology (HiTOP) have been developed. RDoC organizes research around behavioral neuroscience dimensions rather than conventional disease categories, integrating multiple levels of analysis from genes to self-report 6. Similarly, HiTOP provides an empirically based account of the structure of psychopathology by organizing psychiatric symptoms according to their patterns of covariation, moving away from rigid categorical systems toward a continuous, dimensional model 4. However, while HiTOP improves clinical description, it offers little mechanistic explanation of how these symptom dimensions connect to underlying neurocognitive processes 4.

Computational psychiatry has emerged to bridge this gap. Operating at the intersection of cognitive science, computational neuroscience, and artificial intelligence, computational psychiatry uses rigorous mathematical and algorithmic models to describe the complex, multi-level data of mental illness - from synaptic transmission and neural circuitry to high-level cognition and psychosocial behavior 789. The discipline seeks to translate verbal hypotheses into formal, mathematically tractable expressions, thereby making the assumptions of psychiatric theories explicit, transparent, and empirically testable 10. Through mathematical formalization, computational psychiatry accommodates both categorical and dimensional approaches, enabling researchers to isolate the hidden computational mechanisms that give rise to the behavioral phenotypes observed in the clinic 411.

The Two Cultures of Statistical Modeling

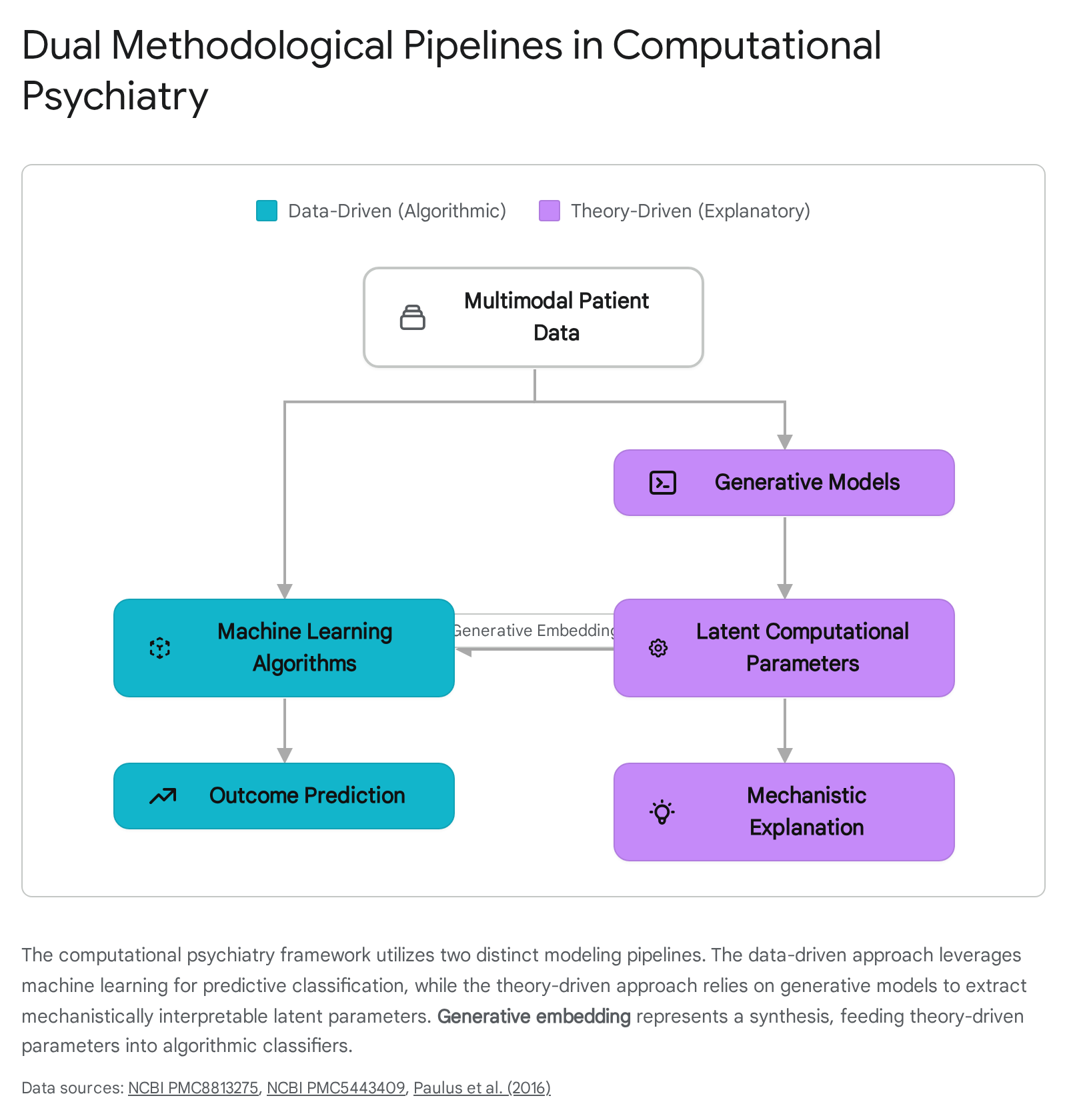

Understanding the methodological divisions within computational psychiatry requires examining two distinct but complementary cultures of statistical modeling. The dichotomy was originally articulated by the statistician Leo Breiman, and in computational psychiatry it maps onto algorithmic (data-driven, predictive) modeling on one side and data modeling (theory-driven, explanatory) on the other 27.

The first culture, rooted in machine learning and data science, focuses strictly on prediction. In this paradigm, psychiatric phenomena are viewed through the lens of algorithmic models - such as random forests, support vector machines (SVMs), and deep neural networks - that analyze high-dimensional, multimodal datasets including neuroimaging, genomic sequences, and clinical health records 2712. The objective is to identify statistical patterns capable of classifying patients, stratifying risk, or forecasting treatment outcomes. The underlying biological or psychological mechanisms are treated as a "black box," secondary to the maximization of predictive accuracy 713.

The second culture focuses on explanation through theory-driven generative models. A generative model is a probabilistic mathematical description of the data-generating process - in this context, the neurobiological and psychological mechanisms that produce behavior and symptoms 714. Theory-driven computational psychiatry relies on frameworks such as reinforcement learning, Bayesian inference, and dynamical systems theory to simulate how an agent learns, processes uncertainty, and makes decisions 8. By fitting these computational models to patient behavior derived from highly controlled cognitive tasks, researchers extract latent variables - such as learning rates, prediction errors, precision weights, or uncertainty thresholds. These latent variables serve as formal computational phenotypes, offering a mechanistic, process-based explanation for the emergence of clinical symptoms 51516.
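As an illustration of how a latent variable becomes a computational phenotype, the sketch below fits a learning rate to simulated choices. It assumes a two-armed bandit task, a Rescorla-Wagner update, and a softmax choice rule, which are common textbook choices rather than a model specified in this text; the inverse temperature `beta` is held fixed for brevity, and all function names are hypothetical.

```python
import math
import random

def p_choose_second(q, beta):
    """Softmax over two options reduces to a logistic function of the value difference."""
    return 1.0 / (1.0 + math.exp(-beta * (q[1] - q[0])))

def simulate_choices(alpha, beta, reward_probs, n_trials, rng):
    """Simulate a Rescorla-Wagner learner with softmax choice on a two-armed bandit."""
    q = [0.0, 0.0]
    choices, rewards = [], []
    for _ in range(n_trials):
        c = 1 if rng.random() < p_choose_second(q, beta) else 0
        r = 1.0 if rng.random() < reward_probs[c] else 0.0
        q[c] += alpha * (r - q[c])            # prediction-error update of the chosen option
        choices.append(c)
        rewards.append(r)
    return choices, rewards

def log_likelihood(alpha, beta, choices, rewards):
    """Log-probability of the observed choice sequence under candidate parameters."""
    q = [0.0, 0.0]
    ll = 0.0
    for c, r in zip(choices, rewards):
        p1 = p_choose_second(q, beta)
        ll += math.log(p1 if c == 1 else 1.0 - p1)
        q[c] += alpha * (r - q[c])
    return ll

def fit_learning_rate(choices, rewards, beta=5.0):
    """Grid-search maximum-likelihood estimate of the learning rate (beta held fixed)."""
    grid = [i / 100 for i in range(1, 100)]
    return max(grid, key=lambda a: log_likelihood(a, beta, choices, rewards))

rng = random.Random(0)
choices, rewards = simulate_choices(0.3, 5.0, (0.2, 0.8), 500, rng)
alpha_hat = fit_learning_rate(choices, rewards)
print(f"recovered learning rate: {alpha_hat:.2f} (simulated with 0.30)")
```

In practice, both parameters would usually be estimated jointly, with a numerical optimizer or hierarchical Bayesian methods rather than a grid.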

Theory-Driven Approaches: Mechanistic Explanations of Pathology

By mathematically articulating how a normative, healthy brain solves computational problems - such as navigating uncertain environments, allocating attention, or optimizing reward acquisition - researchers can precisely specify how these neurocomputational processes fail in psychiatric populations 11. The prevailing computational architectures utilized to model these dysfunctions map closely to specific symptom clusters, creating a taxonomy of disease based on algorithmic failure rather than superficial behavioral traits.

Predictive Coding and Aberrant Belief Updating in Psychosis

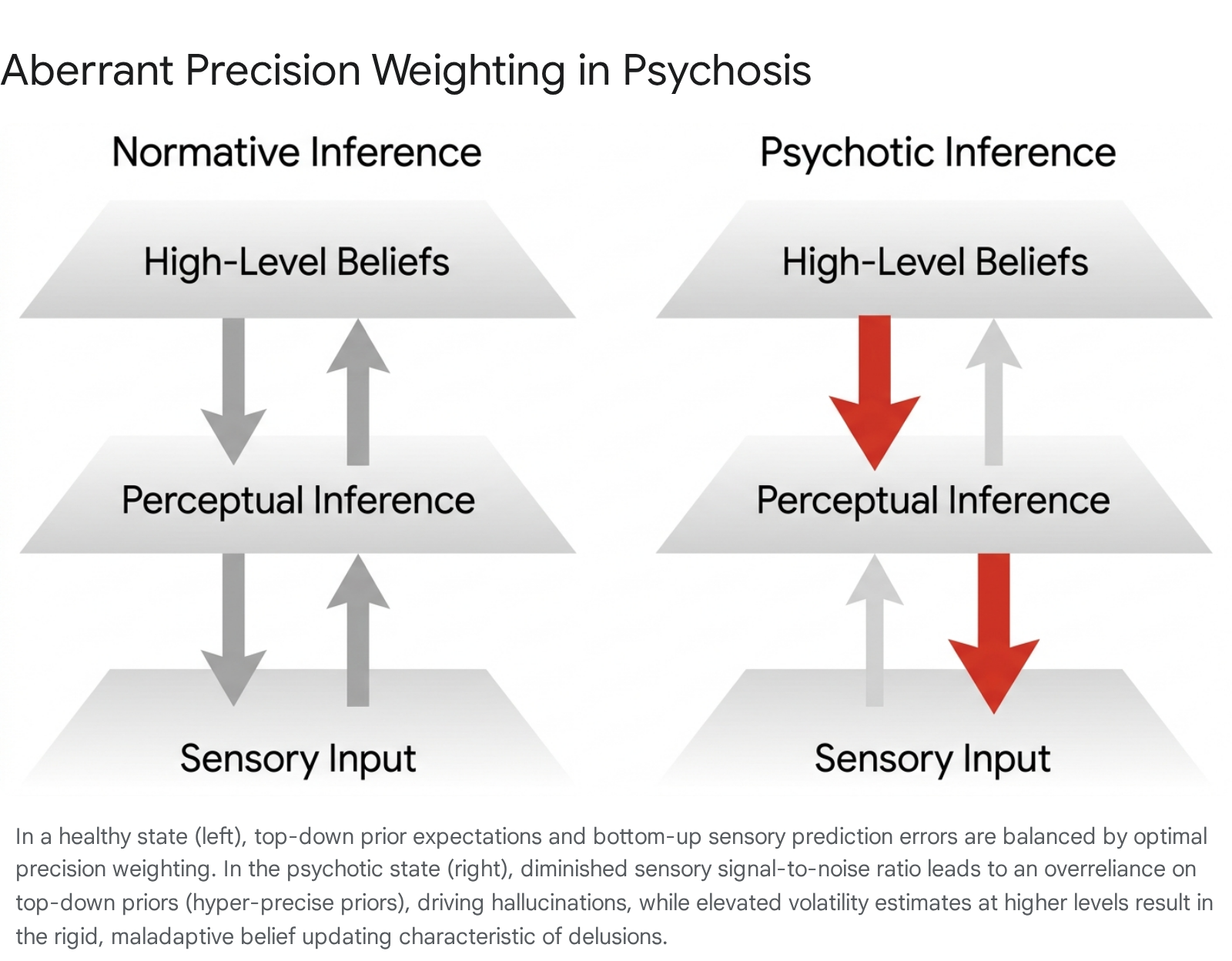

Psychotic disorders, most notably schizophrenia, are defined clinically by severe distortions in reality testing, manifesting as hallucinations (perception without a corresponding external stimulus) and delusions (fixed, false beliefs resistant to counterevidence) 17. Within computational psychiatry, the predictive coding framework provides the dominant theoretical architecture for understanding the genesis of these reality distortions 1718.

Predictive coding posits that the brain operates as a hierarchical Bayesian inference machine. It does not passively receive and process sensory input in a strictly feed-forward manner; rather, it actively constructs perception by continually generating top-down predictions about the probable causes of sensory data 171920. When incoming bottom-up sensory data do not match these top-down predictions, a "prediction error" is generated. This error signal propagates up the cortical hierarchy to update prior beliefs, reducing future surprise and aligning the internal generative model with external reality 19. Crucially, the extent to which prediction errors update prior beliefs is governed by "precision weighting" - an estimate of the reliability of the signal, formally the inverse of its variance 1721. High-precision prediction errors drive large belief updates, while low-precision errors are down-weighted and have little effect 21.

Computational models of schizophrenia suggest that psychosis emerges from a fundamental dysregulation in precision weighting 11.

A core pathophysiological hypothesis holds that patients exhibit cortical hyperexcitability and elevated bottom-up noise transmission 18. Because the signal-to-noise ratio of incoming sensory input is severely compromised, the brain attempts to compensate by under-weighting sensory evidence and relying excessively on top-down prior beliefs 18. This compensatory mechanism results in hyper-precise priors. When prior expectations overpower degraded sensory input, the brain perceives its own expectations as actual events, driving the formation of conditioned hallucinations 518.
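For Gaussian beliefs, precision weighting reduces to a short calculation. The sketch assumes a single Gaussian prior over a hidden cause and a single Gaussian sensory sample, a deliberately minimal, non-hierarchical case; the function name is hypothetical. The weight on the prediction error is the sensory precision's share of the total precision.

```python
def precision_weighted_update(prior_mean, prior_precision, observation, sensory_precision):
    """Posterior mean and precision for a Gaussian prior combined with a Gaussian observation."""
    prediction_error = observation - prior_mean
    posterior_precision = prior_precision + sensory_precision
    gain = sensory_precision / posterior_precision   # share of total precision held by the senses
    posterior_mean = prior_mean + gain * prediction_error
    return posterior_mean, posterior_precision

# Same expectation (0.0) and same observation (1.0); only the precisions differ.
balanced, _ = precision_weighted_update(0.0, 1.0, 1.0, 1.0)
hyper_precise_prior, _ = precision_weighted_update(0.0, 20.0, 1.0, 1.0)
print(balanced, hyper_precise_prior)
```

With equal precisions the belief moves halfway toward the observation (0.5); with a prior twenty times more precise than the sensory sample it moves less than 5% of the way, which is the formal sense in which a hyper-precise prior can override sensory evidence.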

Conversely, at higher cognitive levels, patients with schizophrenia frequently demonstrate a "Jumping-to-Conclusions" bias - a tendency to form fixed beliefs on the basis of limited evidence 8. This phenomenon has been computationally modeled using the Hierarchical Gaussian Filter (HGF), a mathematical framework that captures how individuals update their beliefs about environmental volatility 2223. Recent studies applying the HGF to speed-incentivized associative reward learning (SPIRL) tasks in individuals with schizotypal traits reveal a generalized failure to learn higher-order statistical regularities accurately 2224. Under conditions of high environmental volatility, healthy individuals adaptively increase their learning rates so that recent evidence carries more weight. In contrast, individuals experiencing positive psychotic symptoms exhibit rigid belief updating regarding observational noise, creating a persistent, self-reinforcing loop in which ambiguous sensory inputs are repeatedly misinterpreted as highly salient, entrenching delusional cognition 51722.
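The HGF's central idea, that the effective learning rate is itself a precision-weighted quantity that grows with expected volatility, can be sketched with the second level of the binary HGF. This is a simplified sketch that assumes the third (volatility) level is frozen, so its influence collapses into a constant log-volatility parameter `omega`; the full model also learns volatility from the data. Function and variable names are illustrative.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def hgf_binary_level2(inputs, omega, mu2=0.0, sigma2=1.0):
    """Level-2 updates of a binary HGF with the volatility level held fixed.

    Returns predicted probabilities and the per-trial effective learning rate (sigma2).
    """
    predictions, learning_rates = [], []
    for u in inputs:
        muhat1 = sigmoid(mu2)                    # predicted probability that u == 1
        sigmahat2 = sigma2 + math.exp(omega)     # prior variance inflated by assumed volatility
        # Posterior precision = prior precision + precision contributed by the binary outcome.
        sigma2 = 1.0 / (1.0 / sigmahat2 + muhat1 * (1.0 - muhat1))
        mu2 = mu2 + sigma2 * (u - muhat1)        # precision-weighted prediction-error update
        predictions.append(muhat1)
        learning_rates.append(sigma2)
    return predictions, learning_rates

# Outcome 1 occurs on the first 20 trials, then outcome 0 on the next 20 (a reversal).
inputs = [1] * 20 + [0] * 20
_, lr_stable = hgf_binary_level2(inputs, omega=-4.0)
_, lr_volatile = hgf_binary_level2(inputs, omega=-1.0)
print(f"final learning rate, low assumed volatility:  {lr_stable[-1]:.3f}")
print(f"final learning rate, high assumed volatility: {lr_volatile[-1]:.3f}")
```

Here `sigma2` plays the role of the learning rate: a higher `omega` (a belief in a more volatile world) keeps it large, so each prediction error moves the belief further.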

To account for the rapid, visceral nature of delusional onset - often described clinically as delusional mood or a sudden sense of enhanced significance - modern iterations of these models advocate "Hybrid Predictive Coding" (HPC). HPC augments continuous, gradient-based error correction with a fast, amortized inference mechanism 17. This allows the brain to make rapid feedforward guesses based on previously learned, sometimes trauma-informed, input-cause mappings (e.g., immediately interpreting a benign smile as a direct threat). In delusion-prone individuals, these biased amortized mappings are assigned excessive precision, preventing the slower, iterative prediction error mechanisms from correcting the faulty initial assumption, thereby locking the delusion into place 17.
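The interaction between a fast guess and slower correction can be illustrated with a toy model. This is a simplified interpretation, not the published HPC architecture: it assumes a linear generative model `s = w * mu`, treats the amortized guess as a precision-weighted constraint, and refines the estimate by gradient descent on the resulting energy. All names are hypothetical.

```python
def hybrid_inference(s, w, amortized_gain, pi_sensory, pi_amortized, n_steps=50):
    """Toy hybrid inference: a fast amortized guess refined by gradient descent.

    Energy: 0.5*pi_sensory*(s - w*mu)**2 + 0.5*pi_amortized*(mu - mu_fast)**2
    """
    mu_fast = amortized_gain * s              # one-shot feedforward guess (possibly biased)
    mu = mu_fast                              # iterative inference starts from the fast guess
    curvature = pi_sensory * w * w + pi_amortized
    step = 0.5 / curvature                    # halves the distance to the optimum each step
    for _ in range(n_steps):
        sensory_error = s - w * mu
        amortized_error = mu - mu_fast
        grad = -pi_sensory * w * sensory_error + pi_amortized * amortized_error
        mu -= step * grad
    return mu_fast, mu

# Under w = 1.0 the cause best matching s = 1.0 is mu = 1.0; the fast mapping overshoots (gain 3.0).
print(hybrid_inference(1.0, 1.0, 3.0, pi_sensory=1.0, pi_amortized=0.1))
print(hybrid_inference(1.0, 1.0, 3.0, pi_sensory=1.0, pi_amortized=10.0))
```

When the fast guess carries little precision, iterative refinement pulls the estimate most of the way back toward the sensory-consistent value (about 1.18); when it carries high precision, the estimate stays near the biased guess (about 2.82).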

Reinforcement Learning in Addiction and Compulsion

Reinforcement learning (RL) models provide a computational grammar for understanding how organisms optimize decision-making to maximize future rewards. RL frameworks broadly categorize action selection into two primary systems: a computationally efficient "model-free" system that relies on cached, habitual values acquired through repetitive trial and error, and a computationally intensive "model-based" system that involves forward-planning and simulating complex future state-action outcomes 2526.
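The contrast between the two systems is often illustrated with outcome devaluation. The sketch below is a minimal, hypothetical example: a model-free learner caches action values from experience, a model-based learner recomputes them from a known transition model, and the two are mixed with a weight `w`, in the spirit of hybrid models used for two-step decision tasks. The task, names, and numbers are assumptions for illustration.

```python
# Hypothetical known transition model: each action deterministically produces one outcome.
TRANSITIONS = {"press_lever": "food", "rest": "nothing"}

def train_model_free(reward, n_trials=100, alpha=0.1):
    """Cache action values through repeated delta-rule updates (trial-and-error experience)."""
    q = {a: 0.0 for a in TRANSITIONS}
    for _ in range(n_trials):
        for action, outcome in TRANSITIONS.items():
            q[action] += alpha * (reward[outcome] - q[action])
    return q

def plan_model_based(reward):
    """Compute action values on demand from the transition model and current rewards."""
    return {a: reward[o] for a, o in TRANSITIONS.items()}

def hybrid_values(q_mf, q_mb, w):
    """Weighted mixture: w = 1 is purely model-based, w = 0 purely habitual."""
    return {a: w * q_mb[a] + (1 - w) * q_mf[a] for a in q_mf}

reward = {"food": 1.0, "nothing": 0.0}
q_mf = train_model_free(reward)

reward["food"] = 0.0   # outcome devaluation (e.g. satiety) with no new training trials
q_mb = plan_model_based(reward)
for w in (0.9, 0.1):
    print(w, round(hybrid_values(q_mf, q_mb, w)["press_lever"], 3))
```

After devaluation, the planner immediately assigns the lever no value, but the cached habit still does; an agent that weights the habit heavily (`w = 0.1`) keeps valuing the lever at about 0.9.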

In computational psychiatry, addiction is frequently modeled as a pathology of the reinforcement learning system 13. The traditional Temporal Difference Reinforcement Learning (TDRL) model of addiction posits that drugs of abuse artificially hijack dopaminergic prediction error signals in the striatum 27. Normally, transient dopamine bursts encode the discrepancy between expected and actual rewards; once a reward is fully predicted by environmental cues, the dopamine response transfers to the predictive cue, and the prediction error at the time of reward falls to zero. Addictive drugs, however, trigger dopamine release directly and persistently, producing a positive prediction error that learning can never cancel 2729. According to the TDRL model, this perpetually inflates the cached value of drug-seeking actions, accelerating the transition from goal-directed (model-based) behavior to rigid, compulsive (model-free) habits that persist despite severe negative consequences 2529.
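One well-known formalization of this idea, proposed by Redish (2004), adds a drug-induced dopamine surge `D` to the TD error and prevents the error from falling below `D`: delta = max(r + gamma*V(s') - V(s) + D, D). The sketch below applies that rule to a single action followed by a terminal state; the parameter values and function names are hypothetical.

```python
def td_error(reward, value, next_value, gamma=0.9, drug_surge=0.0):
    """Temporal-difference error, optionally with a non-compensable drug term.

    With drug_surge D > 0 the error is max(r + gamma*V' - V + D, D), so it never
    falls below D no matter how large the learned value V becomes.
    """
    delta = reward + gamma * next_value - value
    if drug_surge > 0:
        return max(delta + drug_surge, drug_surge)
    return delta

def learn_value(n_trials, reward, drug_surge=0.0, alpha=0.1):
    """Value of one reward-predicting action followed by a terminal state (V' = 0)."""
    value = 0.0
    for _ in range(n_trials):
        value += alpha * td_error(reward, value, next_value=0.0, drug_surge=drug_surge)
    return value

print(round(learn_value(200, reward=1.0), 3))                  # natural reward: settles near 1.0
print(round(learn_value(200, reward=1.0, drug_surge=0.5), 3))  # drug: keeps climbing with more trials
```

For a natural reward the value converges to the reward itself; with the drug term the error never drops below 0.5, so the value of drug-seeking keeps rising by `alpha * D` per trial without bound.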

While TDRL elegantly explains the mechanics of habit formation, recent computational critiques emphasize that it fails to capture the full phenomenological depth of addiction - particularly the subjective experience of craving, which often persists long after acute withdrawal and true behavioral extinction 2729. To address this, Bayesian models have been deployed to frame craving not simply as a cached scalar value, but as a top-down predictive inference 27. In these Bayesian frameworks, individuals maintain high-confidence priors regarding a drug's efficacy to alleviate physiological distress. Craving, therefore, is modeled as an active, belief-driven expectation triggered by environmental and emotional contexts, providing a more psychologically grounded framework than pure algorithmic neurobiology 2729.
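A minimal way to express a high-confidence prior is a Beta-Bernoulli belief, in which confidence corresponds to the number of prior pseudo-observations. This is an assumption chosen for illustration, not the specific model used in the cited work: two hypothetical agents share the same expected drug efficacy (0.9) but differ in confidence, and both then experience 20 occasions on which the drug brings no relief.

```python
def expected_relief(prior_relief, prior_no_relief, relief_events, no_relief_events):
    """Posterior mean of a Beta-Bernoulli belief that the drug relieves distress.

    The prior pseudo-counts encode both the expected efficacy and the confidence in it.
    """
    a = prior_relief + relief_events
    b = prior_no_relief + no_relief_events
    return a / (a + b)

# Both agents start with the same expected efficacy (0.9) but different confidence,
# then experience 20 occasions on which the drug brings no relief.
weak_prior = expected_relief(9, 1, 0, 20)        # 9 / 30 = 0.3
strong_prior = expected_relief(180, 20, 0, 20)   # 180 / 220, about 0.818
print(round(weak_prior, 3), round(strong_prior, 3))
```

The weakly held belief drops to 0.3, while the strongly held belief stays above 0.8, so the expectation assumed to drive craving survives a long run of disconfirming experience.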

Similar reinforcement learning paradigms are used to model Obsessive-Compulsive Disorder (OCD) 828. Prominent psychiatric theories debate whether compulsions represent a deliberate attempt to reduce an overestimated threat or are merely expressions of an inflexible, model-free habit system 28. Computational modeling of patient behavior suggests that individuals with OCD show a generalized deficit in model-based control, forcing an over-reliance on habitual behaviors as a compensatory means of navigating uncertainty 2528. By mathematically formalizing this deficit, researchers integrate ostensibly contradictory theories: the repetitive, stereotyped form of the compulsion expresses an overactive habit system, while its trigger is an inability to reliably predict the outcomes of actions in highly uncertain, anxiety-provoking situations 28.

Active Inference and the Neurobiology of Anxiety

Anxiety and avoidance behaviors have been extensively characterized using active inference, an extension of predictive coding that applies the Free Energy Principle to action and motor control 293230. Under active inference, biological systems maintain homeostasis by selecting actions that minimize expected free energy. This quantity decomposes into risk (the divergence between predicted and preferred future outcomes) and ambiguity (the expected uncertainty of outcomes given hidden states), so minimizing it jointly reduces expected surprise, resolves ambiguity, and limits risk regarding future states of the environment 3230.
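For discrete states and outcomes, the risk-plus-ambiguity decomposition of expected free energy can be computed directly. The sketch below assumes a hypothetical choice between approaching an uncertain context and staying home; the states, outcome probabilities, preferences, and function names are invented for illustration and are not drawn from the cited studies.

```python
import math

def entropy(p):
    return -sum(x * math.log(x) for x in p if x > 0)

def expected_free_energy(q_states, likelihood, preferred_outcomes):
    """G = risk + ambiguity for one policy over discrete states and outcomes.

    q_states[s]: predicted probability of hidden state s under the policy.
    likelihood[s][o]: p(o | s). preferred_outcomes[o]: prior preference p(o) > 0.
    """
    q_outcomes = [sum(q_states[s] * likelihood[s][o] for s in range(len(q_states)))
                  for o in range(len(preferred_outcomes))]
    # Risk: KL divergence between predicted and preferred outcomes.
    risk = sum(q * math.log(q / p) for q, p in zip(q_outcomes, preferred_outcomes) if q > 0)
    # Ambiguity: expected entropy of outcomes given hidden states.
    ambiguity = sum(q_states[s] * entropy(likelihood[s]) for s in range(len(q_states)))
    return risk + ambiguity, risk, ambiguity

# Hypothetical outcomes: [reward, nothing, harm]; states: [safe, threat, home].
LIKELIHOOD = [
    [0.9, 0.1, 0.0],   # safe context after approaching
    [0.1, 0.2, 0.7],   # threatening context after approaching
    [0.0, 1.0, 0.0],   # staying home: nothing happens, with certainty
]
PREFERENCES = [0.6, 0.35, 0.05]
AVOID_STATES = [0.0, 0.0, 1.0]

def approach_states(p_threat):
    return [1.0 - p_threat, p_threat, 0.0]

g_avoid, _, _ = expected_free_energy(AVOID_STATES, LIKELIHOOD, PREFERENCES)
for p_threat in (0.1, 0.7):
    g_approach, _, _ = expected_free_energy(approach_states(p_threat), LIKELIHOOD, PREFERENCES)
    choice = "approach" if g_approach < g_avoid else "avoid"
    print(f"p(threat)={p_threat}: G_approach={g_approach:.2f}, G_avoid={g_avoid:.2f} -> {choice}")
```

With a low subjective threat probability, approaching has the lower expected free energy; raising that probability to 0.7 makes staying home the lower-G policy, even though it forgoes any chance of reward.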

Fear and anxiety serve clear adaptive functions, but in pathological states, they result in debilitating avoidance that prevents the organism from learning that an environment is safe 15. To map the exact computational mechanisms of avoidance, researchers employ approach-avoidance conflict paradigms combined with reinforcement learning and active inference models 1531. Large-scale empirical validation of these models demonstrates that individuals with clinical anxiety exhibit a profound Pavlovian bias to withhold action in the face of potential punishment, even when withholding action results in an overall loss of reward 313236.
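A common way to formalize this bias, in the spirit of go/no-go models that combine instrumental and Pavlovian influences, adds a term proportional to the state's value to the weight of acting. The sketch below is a hypothetical, single-trial version with illustrative parameter names and values.

```python
import math

def p_go(q_go, q_nogo, state_value, go_bias=0.0, pavlovian_weight=0.0):
    """Probability of acting, with a Pavlovian term that scales with state value.

    In aversive states (state_value < 0) a positive pavlovian_weight pushes toward
    withholding action, regardless of which action is instrumentally better.
    """
    w_go = q_go + go_bias + pavlovian_weight * state_value
    w_nogo = q_nogo
    return 1.0 / (1.0 + math.exp(-(w_go - w_nogo)))

# Acting limits the loss (q_go = -0.2 vs q_nogo = -1.0) in a state with expected value -1.0.
print(round(p_go(-0.2, -1.0, state_value=-1.0, pavlovian_weight=0.2), 3))
print(round(p_go(-0.2, -1.0, state_value=-1.0, pavlovian_weight=2.0), 3))
```

Acting is the instrumentally better option in this example, yet a strong Pavlovian weight in an aversive state pushes the probability of acting below 0.5.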

Computational modeling reveals that this avoidance is driven by a highly asymmetric sensitivity to punishment relative to reward 31. In highly anxious states, particularly under stress, the learning rate for aversive outcomes is strongly amplified, creating a persistent bias toward expecting failure 32. Furthermore, an active inference formulation suggests that anxiety involves distorted interoceptive precision 33. Patients with anxiety and eating disorders frequently show low sensory precision for signals ascending from the autonomic nervous system (e.g., reduced accuracy when detecting their own heartbeat on interoceptive tapping tasks) 33. Because the reliability of this somatic sensory data is computationally underestimated, the brain cannot accurately update its models of bodily threat, resulting in persistent uncertainty, emotional conflict, and a compensatory drive to avoid potentially unpredictable situations 1533.
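The effect of an amplified aversive learning rate can be shown with a delta-rule learner that uses separate learning rates for rewarding and punishing outcomes. The sketch assumes a balanced environment in which rewards and punishments alternate; the learning-rate values and function names are hypothetical.

```python
def learn_expectation(outcomes, alpha_reward, alpha_punish, v0=0.0):
    """Delta-rule expectation with separate learning rates for reward and punishment."""
    v, trajectory = v0, []
    for r in outcomes:
        alpha = alpha_punish if r < 0 else alpha_reward
        v += alpha * (r - v)
        trajectory.append(v)
    return trajectory

def late_mean(trajectory):
    """Average expectation over the second half, after learning has settled."""
    half = trajectory[len(trajectory) // 2:]
    return sum(half) / len(half)

outcomes = [1.0, -1.0] * 200   # rewards and punishments alternate; true mean outcome is 0
print(round(late_mean(learn_expectation(outcomes, 0.1, 0.1)), 3))
print(round(late_mean(learn_expectation(outcomes, 0.1, 0.4)), 3))
```

With equal learning rates the learned expectation hovers around the true mean of zero; raising only the punishment learning rate drives it well below zero, a persistent expectation of bad outcomes in an environment that is, on average, neutral.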

Comparison of Theory-Driven Frameworks

The specific computational architectures deployed in theory-driven psychiatry map closely to the phenomenological nature of the psychiatric target. Table 1 summarizes the primary modeling frameworks, their core mathematical mechanisms, and their standard clinical applications.

| Computational Framework | Core Mathematical Mechanism | Primary Psychiatric Target | Key Phenotypic Manifestation |
|---|---|---|---|
| Hierarchical Predictive Coding | Precision-weighted prediction errors; hierarchical Bayesian belief updating. | Schizophrenia; Psychosis | Hallucinations (hyper-precise priors); Delusions (rigid belief updating). |
| Reinforcement Learning (TDRL) | Temporal difference errors; Model-free (habit) vs. Model-based (planning) trade-offs. | Addiction; OCD | Compulsion; Habitual drug-seeking; Deficits in forward-planning. |
| Active Inference | Minimization of expected free energy; Action-oriented surprise reduction. | Anxiety Disorders; PTSD | Pathological avoidance; Asymmetric punishment sensitivity. |
| Interoceptive Inference | Bayesian integration of ascending autonomic signals and prior somatic beliefs. | Eating Disorders; Anxiety | Hypo-precision of bodily signals; Unresolved autonomic uncertainty. |

Data-Driven Modeling: Algorithmic Prediction and Digital Phenotyping

While theory-driven models illuminate the 'why' and 'how' of psychiatric illness, data-driven computational psychiatry focuses predominantly on the 'who' and 'what'. The clinical reality of psychiatry is plagued by diagnostic uncertainty and a heavy reliance on trial-and-error prescribing 34. Data-driven approaches apply modern machine learning tools - including random forests, support vector machines, decision trees, convolutional neural networks (CNNs), and recurrent neural networks (RNNs) - to vast, high-dimensional arrays of patient data to forecast clinical trajectories, classify disease subtypes, and predict treatment responses 121335.

Machine Learning in Treatment Prediction

A primary translational goal of predictive modeling is to identify specific biomarkers or clinical phenotypes that reliably forecast whether a patient will respond to a specific pharmacological or psychological intervention 1336.

For instance, in the management of Obsessive-Compulsive Disorder, cognitive behavioral therapy (CBT) serves as a first-line treatment, yet response rates are highly variable. To address this, the ENIGMA-OCD consortium deployed support vector machines and random forest classifiers on a multicenter sample of 159 adults 37. The predictive models incorporated both baseline clinical data and complex resting-state functional magnetic resonance imaging (rs-fMRI) metrics, including regional homogeneity and fractional amplitude of low-frequency fluctuations 37. The results indicated that clinical data alone predicted CBT remission with an area under the receiver operating characteristic curve (AUC) of 0.69, driven heavily by variables such as baseline symptom severity, younger age, absence of cleaning obsessions, and unmedicated status 3742.

Strikingly, models trained solely on resting-state fMRI data performed only slightly above chance (AUC 0.59), and fusing neuroimaging data with clinical variables did not significantly improve predictive accuracy 3742. This empirical validation underscores a vital reality in applied computational psychiatry: while advanced neuroimaging is biologically informative, standard, low-cost clinical data currently remains the most reliable input for algorithmic outcome prediction in certain real-world therapeutic contexts 4238.
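To interpret the AUC values reported above (0.69 for clinical data, 0.59 for rs-fMRI), it helps to recall that AUC is the probability that a randomly chosen remitter receives a higher predicted score than a randomly chosen non-remitter, so 0.5 is chance and 1.0 is perfect ranking. The sketch below computes it from that pairwise definition (equivalent to the normalized Mann-Whitney U statistic); the scores and labels are hypothetical, and the O(n·m) loop favors clarity over speed.

```python
def roc_auc(scores, labels):
    """AUC as the probability that a random positive outranks a random negative (ties count 0.5)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative case")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))

# Hypothetical predicted remission probabilities for six patients (1 = remitted).
scores = [0.9, 0.7, 0.4, 0.6, 0.3, 0.2]
labels = [1, 1, 1, 0, 0, 0]
print(round(roc_auc(scores, labels), 3))
```

Here eight of the nine remitter/non-remitter pairs are ranked correctly, giving an AUC of 8/9.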

In contrast, deep neural networks have reportedly performed well in predicting pharmacotherapy outcomes: models of antidepressant response and remission in Major Depressive Disorder (MDD) that integrate multimodal information significantly outperform classical logistic regression 12. Machine learning frameworks are also being developed to predict individual risk of hospital readmission in bipolar disorder from electronic health records, combining decision trees, random forests, and SVMs to monitor patient trajectories 3940.

Normative Modeling: Moving Beyond Case-Control

A major limitation of traditional psychiatric neuroimaging and statistical analysis is the reliance on rigid case-control designs, which collapse highly heterogeneous patients into single diagnostic groups, washing out individual variance 46. Normative modeling has emerged as a mature statistical method within computational psychiatry to address this issue 46.

Analogous to pediatric growth charts, normative models map the centiles of biological and behavioral variation across large, healthy reference populations 46. By training these models on large-scale datasets (often spanning thousands of neuroimaging scans to chart metrics such as cortical thickness or fractional anisotropy), clinicians can quantify how far an individual patient's measurements deviate from the expected norm 46. Software infrastructures such as the Predictive Clinical Neuroscience toolkit (PCNtoolkit) have implemented techniques such as federated learning, allowing researchers to use these models without compromising patient privacy. Through strategies such as model transfer (applying a large reference model to a small local clinical sample) or model extension (updating the reference model with new local data), normative modeling provides statistical inferences at the single-subject level, a crucial requirement for precision psychiatry 46.
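The core computation of a normative model can be sketched as follows: fit the expected value of a brain measure as a function of a covariate in healthy controls, then express a patient's measurement as a z-score relative to that expectation. The sketch assumes a simple linear, homoscedastic model on simulated data with made-up cortical-thickness values; it does not use PCNtoolkit's API, and dedicated toolkits use considerably more flexible models (for example, non-linear age effects and site effects). Function names are hypothetical.

```python
import random
import statistics

def fit_normative_model(ages, values):
    """Least-squares fit of value ~ age in a healthy reference sample.

    Returns (intercept, slope, residual SD); assumes a linear trend with constant spread.
    """
    mean_age = statistics.fmean(ages)
    mean_val = statistics.fmean(values)
    sxx = sum((a - mean_age) ** 2 for a in ages)
    sxy = sum((a - mean_age) * (v - mean_val) for a, v in zip(ages, values))
    slope = sxy / sxx
    intercept = mean_val - slope * mean_age
    residuals = [v - (intercept + slope * a) for a, v in zip(ages, values)]
    return intercept, slope, statistics.stdev(residuals)

def deviation_z(age, value, model):
    """How many residual SDs a measurement lies from the age-specific expectation."""
    intercept, slope, resid_sd = model
    return (value - (intercept + slope * age)) / resid_sd

# Simulated healthy reference sample (hypothetical cortical thickness in mm).
rng = random.Random(1)
ages = [rng.uniform(20, 80) for _ in range(500)]
thickness = [3.0 - 0.01 * a + rng.gauss(0.0, 0.1) for a in ages]
model = fit_normative_model(ages, thickness)

# A hypothetical 40-year-old patient with thinner cortex than expected (about 2.6 mm).
z = deviation_z(40, 2.3, model)
print(f"patient deviation: z = {z:.2f}")
```

A z-score around -3 flags this patient as an outlier relative to age-specific expectations, an individual-level statement that a case-control group comparison cannot make.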

Generative Embedding: Bridging Theory and Data

A persistent critique of purely data-driven machine learning models in medicine is their "black box" nature 741. Deep learning algorithms may achieve high predictive accuracy, but they rarely afford mechanistic interpretability, making it difficult for clinicians to trust the output, understand the causal structure, or derive targeted biological interventions 4142.

To resolve this limitation, computational psychiatrists have developed a hybrid analytical technique known as generative embedding 2341. Generative embedding explicitly fuses the explanatory power of theory-driven models with the predictive power of machine learning algorithms 4143. In this workflow, a patient's behavioral or neuroimaging data is first fitted to a biophysically realistic generative model - such as a Dynamic Causal Model (DCM) of functional brain connectivity 41. The generative model extracts highly specific, latent neurobiological parameters, such as localized synaptic connection strengths, transition probabilities, or learning rates 2341. These mathematically derived, mechanistically interpretable parameters are then embedded into a discriminative classifier (e.g., an SVM) as the input features, effectively replacing raw, uninterpretable data 41.

Early empirical applications demonstrate that generative embedding can achieve near-perfect balanced classification accuracies (e.g., 98% in distinguishing aphasic patients from healthy controls) while simultaneously providing clinicians with a mechanistic explanation (e.g., specific abnormalities in thalamo-temporal connectivity) for the algorithmic classification 41. This method holds considerable potential to dissect highly heterogeneous psychiatric spectrum disorders into physiologically well-defined, actionable subgroups, bridging the gap between classical statistics and mechanistic neurobiology 4143.
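The two-stage logic of generative embedding (fit a generative model per subject, then classify on the fitted parameters) can be sketched with a deliberately simple stand-in. The sketch assumes a delta-rule learner instead of a DCM and a leave-one-out nearest-centroid classifier instead of an SVM; all subjects are simulated, the group labels and names are hypothetical, and the printed accuracy says nothing about real patients.

```python
import random

def simulate_reports(alpha, outcomes, noise_sd, rng):
    """A simulated subject tracks outcomes with a delta rule and reports noisy predictions."""
    v, reports = 0.0, []
    for o in outcomes:
        reports.append(v + rng.gauss(0.0, noise_sd))
        v += alpha * (o - v)
    return reports

def fit_alpha(reports, outcomes):
    """Generative-model step: least-squares grid estimate of a subject's learning rate."""
    def sse(alpha):
        v, total = 0.0, 0.0
        for r, o in zip(reports, outcomes):
            total += (r - v) ** 2
            v += alpha * (o - v)
        return total
    return min((i / 100 for i in range(1, 100)), key=sse)

def loo_nearest_centroid(features, labels):
    """Classification step: leave-one-out nearest-centroid; returns balanced accuracy."""
    correct = {0: 0, 1: 0}
    for i, (x, y) in enumerate(zip(features, labels)):
        centroids = {}
        for cls in (0, 1):
            train = [f for j, (f, lab) in enumerate(zip(features, labels)) if j != i and lab == cls]
            centroids[cls] = sum(train) / len(train)
        predicted = min((0, 1), key=lambda c: abs(x - centroids[c]))
        correct[y] += int(predicted == y)
    return 0.5 * (correct[0] / labels.count(0) + correct[1] / labels.count(1))

rng = random.Random(2)
outcomes = [rng.gauss(0.0, 1.0) for _ in range(200)]
# Hypothetical groups: label 0 learns slowly (alpha 0.2), label 1 quickly (alpha 0.5).
labels = [0] * 15 + [1] * 15
true_alphas = [0.2 if lab == 0 else 0.5 for lab in labels]
features = [fit_alpha(simulate_reports(a, outcomes, 0.3, rng), outcomes) for a in true_alphas]
print(f"balanced accuracy: {loo_nearest_centroid(features, labels):.2f}")
```

Because the classifier sees only fitted learning rates, any successful classification can be read directly in terms of the generative model's parameter, which is the interpretability advantage the method is designed to provide.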

Advancements in Large Language Models and Psychiatric Reasoning

The rapid expansion of artificial intelligence has introduced Large Language Models (LLMs) to the domain of computational psychiatry. LLMs possess transformative potential for analyzing unstructured clinical data, natural language processing of electronic health records, and even providing conversational therapeutic support via chatbots 33544. However, the deployment of standard LLMs in high-stakes clinical consultation is severely constrained by "hallucinations" - the generation of highly confident but factually incorrect assertions - and a tendency toward superficial reasoning 5152.

Standard LLMs are trained primarily to generate fluent, plausible text rather than to follow structured clinical logic. When faced with the subjective ambiguity and complex comorbidity inherent in psychiatric consultation, these models often fail to carry out rigorous differential diagnostic reasoning, ignoring critical exclusion criteria or misattributing symptoms 5152. To mitigate these risks, researchers are applying Reinforcement Learning from Human Feedback (RLHF) and integrating evidence-based frameworks to align LLM reasoning with professional psychiatric practice 3551.

Recent innovations include the ClinMPO framework, an evidence-guided reinforcement learning architecture designed for lightweight LLMs with relatively few parameters 5153. ClinMPO employs a specialized, independent reward model trained on a curated dataset of over 4,400 psychiatry journal articles structured according to the Oxford Centre for Evidence-Based Medicine hierarchy 5153. By explicitly rewarding evidence-based logic and heavily penalizing incoherent chains of thought, ClinMPO trains the policy model to internalize essential cognitive processes such as differential diagnosis and error recognition 53. Similarly, the MIND framework incorporates a Criteria-Grounded Psychiatric Reasoning Bank (PRB) to retrieve clinical rationales that guide the LLM's multi-turn inquiry, keeping questioning strategies on topic and anchored to diagnostic thresholds 52. When evaluated on complex test sets designed to isolate reasoning capabilities, aligned models have demonstrated diagnostic accuracies that rival or surpass those of human medical trainees, suggesting that explicit cognitive alignment is a scalable pathway toward safe psychiatric AI deployment 51.

Methodological Hurdles: Reproducibility and Cultural Generalization

Despite the rapid proliferation of computational psychiatry literature, translating these advancements into routine clinical practice remains severely bottlenecked 1345. The discipline is currently confronting methodological and ethical hurdles that threaten its scientific validity, primarily centered around a reproducibility crisis and the lack of cross-cultural generalization.

The Reproducibility Crisis and Model Overfitting

The replication crisis observed in the broader psychological and biomedical sciences is acutely felt in computational psychiatry 554647. A widely cited survey found that 52% of researchers perceived a significant "reproducibility crisis" in science, and that 70% had tried and failed to reproduce another researcher's findings 58. In the specific domain of computational health, empirical assessments indicate that over 60% of published studies are not computationally reproducible - meaning independent researchers cannot recreate the statistical results even when provided with the original datasets 5848.

A core tenet of data science holds that a predictive model's accuracy can only be validated on entirely unseen patient data (out-of-sample testing) 1343. However, a substantial portion of computational psychiatry research relies on models validated strictly within the dataset on which they were trained 13. This practice cannot detect overfitting, in which complex algorithms memorize the statistical noise and idiosyncrasies of a specific, small local sample rather than learning the true underlying biological signal, and it therefore inflates reported accuracy 13. Consequently, when these predictive models are applied to new clinical trials or real-world populations, their predictive power frequently collapses. For example, machine learning models predicting treatment response to antipsychotic drugs developed in one trial have routinely failed to generalize to data from independent trials 13.
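The gap between within-sample and out-of-sample performance can be demonstrated with a deliberately extreme synthetic case: a 1-nearest-neighbor classifier trained on pure noise. Every training point is its own nearest neighbor, so in-sample accuracy is perfect by construction, while accuracy on fresh data stays near chance. The data and function names are hypothetical.

```python
import random

def make_noise_data(rng, n, n_features=20):
    """Features and labels are independent: there is no true signal to learn."""
    xs = [[rng.gauss(0.0, 1.0) for _ in range(n_features)] for _ in range(n)]
    ys = [rng.randint(0, 1) for _ in range(n)]
    return xs, ys

def predict_1nn(train_x, train_y, x):
    """Label of the closest training point (squared Euclidean distance)."""
    best = min(range(len(train_x)),
               key=lambda i: sum((a - b) ** 2 for a, b in zip(train_x[i], x)))
    return train_y[best]

def accuracy(train_x, train_y, eval_x, eval_y):
    hits = sum(predict_1nn(train_x, train_y, x) == y for x, y in zip(eval_x, eval_y))
    return hits / len(eval_y)

rng = random.Random(3)
train_x, train_y = make_noise_data(rng, 100)
test_x, test_y = make_noise_data(rng, 200)
print("in-sample accuracy:    ", accuracy(train_x, train_y, train_x, train_y))
print("out-of-sample accuracy:", accuracy(train_x, train_y, test_x, test_y))
```

Within-sample validation would report this model as perfect; only held-out data reveals that it has learned nothing.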

Addressing this crisis requires strict adherence to open science frameworks and FAIR (Findable, Accessible, Interoperable, and Reusable) data principles 48. Researchers advocate for the mandatory use of comprehensive data dictionaries, Model Location and Specification Tables (MLast) to track variable adjustments, and the deployment of standardized software pipelines (such as Python Luigi) to automate and log model configurations, ensuring that hyperparameter tuning and performance metrics are fully transparent 4258.

Cross-Cultural Validity and Global Representation

A secondary, yet equally profound, challenge is the systemic lack of geographic, linguistic, and cultural diversity in the data utilized to train psychiatric algorithms. The vast majority of theoretical frameworks and predictive models are built on data sourced from Western, Educated, Industrialized, Rich, and Democratic (WEIRD) populations 495051.

Culture fundamentally shapes how individuals express, interpret, and experience psychological distress, mapping symptoms onto divergent physical or behavioral forms 52. Ignoring these cross-cultural differences introduces severe algorithmic bias. A recent analysis of natural language processing models designed to detect depression from social media text highlighted this failure. When models trained on standard benchmark datasets (such as CLPsych, predominantly sourced from the Global North) were applied to geo-located social media data from individuals in the Global South, they failed to generalize, consistently underperforming on and misclassifying non-Western users 5164. This shows that computational markers of mental illness are not universally applicable without localized tuning 5164.

Mitigating this bias requires targeted international consortia and the integration of culturally informed variables into mathematical models. For example, recent psychometric efforts in China have developed the Chinese Mental Health Value Scale (CMHVS) to quantify culturally grounded mental health values (e.g., family alignment, relating to others, communication) that are distinct from Western individualistic frameworks 49. Expanding computational psychiatry research networks and hosting academic conferences in the Global South - including initiatives in India, Brazil, and Nigeria - is essential to validate generative models transculturally and ensure that precision psychiatry does not become an exclusive artifact of high-income nations 50656653. High-income countries must prioritize upskilling early-career researchers globally and establishing data-sharing frameworks to promote cross-cultural robustness 53.

| Challenge Domain | Specific Vulnerability | Proposed Systemic Mitigation |
|---|---|---|
| Reproducibility | Algorithmic overfitting; lack of computational reproducibility (~60% failure rate) due to missing code or variable dictionaries. | Enforcement of FAIR data principles; mandatory out-of-sample validation; standardized pipelines (e.g., Python Luigi, MLast). |
| Generalization | WEIRD sample bias; failure of NLP models to detect distress in Global South populations due to cultural symptom expression. | Expansion of global datasets; development of culturally grounded psychometrics (e.g., CMHVS); international collaborative consortia. |
| Interpretability | Deep learning models operating as "black boxes," providing predictions without actionable clinical mechanisms. | Generative embedding; explicit alignment of LLMs using RLHF and evidence-based diagnostic rubrics (ClinMPO). |
| Ethics | Privacy risks associated with digital phenotyping; algorithmic determinism eroding clinical judgment. | Implementation of the Integrated Ethical Approach for Computational Psychiatry (IEACP); federated learning to preserve privacy. |

Expanding the Paradigm: Beyond Biological Reductionism

Traditional clinical psychiatry and historical models of medicine often view the computational approach with skepticism, primarily framing it as an exercise in radical biological reductionism 6854. Critics argue that mapping the profound complexity of human suffering to dopaminergic prediction errors or precision-weighted algorithms strips psychiatric illness of its psychological, sociological, and subjective dimensions 275455. By focusing exclusively on brain states, critics fear computational psychiatry ignores the environmental and systemic traumas that precipitate mental illness 2755.

However, the vanguard of computational psychiatry explicitly rejects pure functional reductionism 1468. Generative models recognize that the brain does not compute in a vacuum; it computes through continuous interaction with a highly complex physical and social environment 214. Well-specified computational models are therefore context-sensitive, defining mental illness not as an isolated neurobiological lesion, but as a mismatch between the brain's predictive models and the environment it navigates 14.

Integrating Psychosocial Context: Active Intersubjective Inference

To address the limitations of treating mental illness merely as a chronic brain disease, researchers are extending the mathematical boundaries of predictive processing to include social cognition and psychodynamics. A prominent theoretical advancement is the development of Active Intersubjective Inference (AISI) 215672.

AISI maps psychoanalytic and relational concepts onto formal models of recursive Bayesian prediction. It posits that self-identity is partially constructed through "second-order inference": the recursive, active process of inferring not only another person's mental state but also how that person perceives us 5672. In this framework, psychopathology resides not merely in faulty autonomic signaling but also in distorted interpersonal modeling shaped by early childhood interactions or trauma 21.

For example, patients with Major Depressive Disorder (MDD) or Post-Traumatic Stress Disorder (PTSD) may hold rigid, trauma-shaped priors regarding social rejection or imminent threat. AISI offers a formal way to model classic psychodynamic phenomena such as psychological projection and transference as specific computational failures in updating the second-order generative model of the self during social interactions 2156. By quantifying how the social environment modulates internal inference, computational psychiatry aims to bridge the conceptual gap between molecular biology, cognitive neuroscience, and interpersonal psychology, offering a systems-level understanding of mental health 5557.
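The role of prior rigidity can be illustrated with a deliberately simplified model. This is not AISI itself, which is described as a recursive, second-order generative model; it is a conjugate Beta-Bernoulli sketch, assuming the second-order belief can be summarized as a single probability theta that another person appraises the self positively. The prior strength (alpha + beta) stands in for how rigid the prior is; the class and function names, all parameter values, and the feedback sequence are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BetaBelief:
    """Beta(alpha, beta) belief over theta = P(the other person views me positively).

    alpha + beta behaves like a count of prior pseudo-observations: the larger it is,
    the more rigid the belief and the less each new interaction moves it.
    """

    alpha: float
    beta: float

    def mean(self) -> float:
        return self.alpha / (self.alpha + self.beta)

    def update(self, accepted: bool) -> "BetaBelief":
        # Conjugate Bayesian update after one accepting (True) or rejecting (False) interaction.
        if accepted:
            return BetaBelief(self.alpha + 1.0, self.beta)
        return BetaBelief(self.alpha, self.beta + 1.0)


def belief_trajectory(prior: BetaBelief, feedback: list[bool]) -> list[float]:
    """Return the posterior mean of theta after each observed interaction."""
    belief = prior
    trajectory = []
    for accepted in feedback:
        belief = belief.update(accepted)
        trajectory.append(belief.mean())
    return trajectory


# Hypothetical history: ten consecutive accepting interactions.
feedback = [True] * 10

# Both priors expect rejection (prior mean 0.2) but differ in strength.
flexible_prior = BetaBelief(alpha=1.0, beta=4.0)  # 5 pseudo-observations
rigid_prior = BetaBelief(alpha=20.0, beta=80.0)  # 100 pseudo-observations

print("flexible:", [round(m, 2) for m in belief_trajectory(flexible_prior, feedback)])
print("rigid:   ", [round(m, 2) for m in belief_trajectory(rigid_prior, feedback)])
```

After ten accepting interactions the flexible belief rises to 11/15 (about 0.73), while the rigid one reaches only 30/110 (about 0.27). The same disconfirming evidence barely moves a high-precision prior, which is one simple way to read the "failure to update" described above.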

The Precision Psychiatry Roadmap and Future Directions

The ultimate value of computational psychiatry will be measured by its integration into standard clinical care. Despite substantial theoretical progress and a large, growing literature, virtually no tools for prognosis, diagnosis, or treatment monitoring based on computational neuroscience are currently used in routine psychiatric practice 16.

To cross this translational "valley of death", international consortia, including representatives from the European College of Neuropsychopharmacology (ECNP), medical organizations, and regulatory agencies, have begun drafting strategic blueprints such as the Precision Psychiatry Roadmap outlined for the 2025-2026 horizon 345859. The roadmap sets out a 15-year trajectory for implementing a biology-informed diagnostic classification system, with the aim of moving the field from trial-and-error medicine to targeted therapeutics 34.

Standardized Frameworks for Clinical Translation

The roadmap proposes a systematic, phased pipeline, analogous to pharmacological drug development, to ensure that computational tools are safe, effective, and reliable 16:

1. Phase I (Robustness & Clinical Validity): Computational tasks and generative models are developed in healthy volunteers, establishing rigorous test-retest reliability and construct validity. This is followed by initial application in small, carefully phenotyped clinical cohorts to identify candidate latent biomarkers 16.
2. Phase II (Target Populations): Studies scale up to target populations using multi-center normative modeling, testing the computational models across diverse demographics to account for natural variation in age, sex, and culture 16.
3. Phase III (Randomized Clinical Trials): Computational phenotypes are evaluated as active decision-support tools in large-scale randomized controlled trials, determining whether computationally guided treatment allocation significantly improves patient outcomes compared with standard clinical care 16.
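Phase I rests on the test-retest reliability of model parameters, and a widely used statistic for this is the intraclass correlation coefficient (ICC). A minimal sketch follows, assuming the ICC(2,1) form (two-way random effects, absolute agreement, single measurement, in the Shrout and Fleiss convention). The roadmap as summarized here does not prescribe a specific ICC form, and the learning-rate estimates are hypothetical.

```python
def icc_2_1(scores: list[list[float]]) -> float:
    """ICC(2,1): two-way random effects, absolute agreement, single measurement.

    scores[i][j] is the parameter estimate for participant i in session j.
    """
    n = len(scores)
    k = len(scores[0]) if scores else 0
    if n < 2 or k < 2 or any(len(row) != k for row in scores):
        raise ValueError("need a complete n x k table with n >= 2 and k >= 2")

    grand = sum(sum(row) for row in scores) / (n * k)
    row_means = [sum(row) / k for row in scores]
    col_means = [sum(scores[i][j] for i in range(n)) / n for j in range(k)]

    # Partition total variance into participants (rows), sessions (columns), and residual.
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in scores for x in row)
    ss_error = ss_total - ss_rows - ss_cols

    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))

    return (ms_rows - ms_error) / (
        ms_rows + (k - 1) * ms_error + k * (ms_cols - ms_error) / n
    )


# Hypothetical learning-rate estimates for five volunteers, fitted in two sessions.
learning_rates = [
    [0.30, 0.35],
    [0.50, 0.45],
    [0.20, 0.25],
    [0.70, 0.60],
    [0.40, 0.42],
]
print(f"ICC(2,1) = {icc_2_1(learning_rates):.2f}")
```

Values near 1 indicate that stable between-participant differences dominate session-to-session noise; these hypothetical data give roughly 0.93. Absolute agreement was chosen over consistency so that a systematic shift between sessions (for example, practice effects) lowers the estimate.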

Furthermore, the next generation of clinical tools will shift away from static, single-point predictions. Psychiatric illnesses unfold over time; therefore, predictive architectures like Long Short-Term Memory (LSTM) networks are being deployed for dynamic time-series forecasting 60. These temporal frameworks allow clinicians to continuously adjust treatment parameters based on the patient's evolving digital phenotype and physiological state across the entire therapeutic lifecycle 60.
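The recurrence that lets an LSTM carry information across time steps can be shown with a single scalar unit. This is a minimal, untrained sketch: the weights in the hypothetical `WEIGHTS` table are hand-set, the readout coefficients are invented, and the input is an invented series of normalized daily mood scores, so the "forecast" only illustrates the data flow. A real forecaster would use vector-valued states and learn its weights from data, typically with a deep-learning library.

```python
import math


def sigmoid(x: float) -> float:
    # Numerically stable logistic function.
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


# Hypothetical hand-set parameters: gate -> (input weight, recurrent weight, bias).
WEIGHTS: dict[str, tuple[float, float, float]] = {
    "forget": (0.5, 0.5, 1.0),  # positive bias keeps the forget gate mostly open
    "input": (1.0, 0.5, 0.0),
    "candidate": (1.5, 0.5, 0.0),
    "output": (0.5, 0.5, 0.0),
}


def pre(weights: dict[str, tuple[float, float, float]], gate: str, x: float, h: float) -> float:
    """Pre-activation of one gate from the current input and the previous hidden state."""
    w_x, w_h, b = weights[gate]
    return w_x * x + w_h * h + b


def lstm_forward(xs: list[float], weights: dict[str, tuple[float, float, float]] = WEIGHTS) -> list[float]:
    """Run a one-unit LSTM over a scalar sequence and return the hidden state at each step."""
    h, c = 0.0, 0.0  # hidden state and cell state start at zero
    hidden = []
    for x in xs:
        f = sigmoid(pre(weights, "forget", x, h))  # how much of the old cell state to keep
        i = sigmoid(pre(weights, "input", x, h))  # how much new content to write
        g = math.tanh(pre(weights, "candidate", x, h))  # candidate content to write
        o = sigmoid(pre(weights, "output", x, h))  # how much of the cell state to expose
        c = f * c + i * g  # gated memory carried to the next step
        h = o * math.tanh(c)  # hidden state, always strictly between -1 and 1
        hidden.append(h)
    return hidden


# Hypothetical normalized daily mood scores (negative = lower mood).
mood = [0.2, 0.1, -0.3, -0.5, -0.4, 0.0, 0.3]
hidden = lstm_forward(mood)

# Hypothetical linear readout mapping the last hidden state to a next-day forecast.
READOUT_W, READOUT_B = 0.8, 0.0
forecast = READOUT_W * hidden[-1] + READOUT_B
print([round(v, 3) for v in hidden])
print(f"next-day forecast (untrained): {forecast:.3f}")
```

The cell state `c` is the path along which the forget gate lets information persist across many steps, which is what makes this family of architectures suited to the longitudinal trajectories described above.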

By adhering rigorously to this translational pipeline, enforcing out-of-sample reproducibility, and respecting the cross-cultural and psychosocial complexities of human behavior, computational psychiatry has the potential to deconstruct the heterogeneity of mental illness. In doing so, it could help fulfill the long-sought goal of bringing the right treatment to the right patient at the right time 58.