Mathematical definitions of emergence in complex systems

For over a century, the scientific paradigm has been heavily dominated by reductionism - the assumption that the behavior of any complex system can be completely understood by disassembling it into its fundamental constituent parts and mapping their local interactions. However, as domains ranging from condensed matter physics and computational neuroscience to artificial intelligence and macroeconomics have evolved, reductionism has frequently proven insufficient for predicting macroscopic behavior. Complex systems frequently exhibit macroscopic properties, patterns, and causal powers that are not readily derivable from their microscopic components. This phenomenon, where the whole genuinely exceeds the sum of its parts, is known as emergence.

Historically relegated to philosophical speculation and qualitative observation, emergence is undergoing a rigorous mathematical formalization. Through the integration of information theory, causal inference, and non-equilibrium statistical mechanics, researchers are now mapping the precise mathematical conditions under which macroscopic features decouple from microscopic substrates. The analysis of emergence has transitioned from an epistemic puzzle into a measurable property of multivariate dynamical systems, allowing scientists to quantify exactly when and how a system's causal power shifts to higher levels of organization.

The Epistemological and Ontological Foundations of Emergence

The study of emergence generally distinguishes between two primary conceptual tiers: weak emergence and strong emergence. This taxonomy establishes the threshold between epistemological limitations (what observers can compute) and ontological novelty (what exists independently in nature). The debate historically hinged on whether emergent properties are simply a product of human cognitive limits or whether they represent genuinely novel causal forces in the physical universe 112.

Weak Emergence and Computational Irreducibility

Weak emergence refers to system properties that are generated by the interactions of microscopic components but cannot be practically deduced or predicted without running a step-by-step simulation of the system 1234. The term was coined in part to describe phenomena in artificial life and cellular automata, such as the "gliders" in Conway's Game of Life, and it aligns with the concept of computational irreducibility 2568.

In weakly emergent systems, the macroscopic patterns supervene entirely on the microscopic rules. A Laplacean demon possessing complete knowledge of the initial conditions and the local interaction rules could, theoretically, compute the system's future states 113. However, because there are no mathematical shortcuts to bypass the unfolding of the system's dynamics over time, the macroscopic properties appear novel to the human observer. Empirical examples of weak emergence include traffic jams, the flocking patterns of birds, and phase transitions in thermodynamics 19. While weakly emergent properties are structurally robust and useful for describing system dynamics, they do not possess autonomous causal powers that override their constituent parts. They are compatible with a strictly bottom-up, deterministic worldview, even if chaotic sensitivity to minute variations in initial conditions renders the system practically unpredictable 1210.
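As a small illustration of a weakly emergent pattern, the sketch below simulates a glider in Conway's Game of Life. The grid size, the toroidal (wrap-around) boundary, and the helper name `life_step` are assumptions made for this example. Only the local birth and survival rules are coded; the glider's diagonal motion is never written down explicitly and appears only when the rules are run step by step.

```python
import numpy as np

def life_step(grid):
    """Apply one Game of Life update on a toroidal (wrap-around) grid."""
    # Count the eight neighbors of every cell by shifting the grid.
    neighbors = sum(
        np.roll(np.roll(grid, dr, axis=0), dc, axis=1)
        for dr in (-1, 0, 1)
        for dc in (-1, 0, 1)
        if (dr, dc) != (0, 0)
    )
    # Birth on exactly 3 neighbors; survival on 2 or 3 neighbors.
    return ((neighbors == 3) | ((grid == 1) & (neighbors == 2))).astype(int)

# Place a standard glider in the top-left corner of an 8x8 grid.
grid = np.zeros((8, 8), dtype=int)
for r, c in [(0, 1), (1, 2), (2, 0), (2, 1), (2, 2)]:
    grid[r, c] = 1

state = grid
for _ in range(4):
    state = life_step(state)

# After four updates the same shape reappears, shifted one cell down and one cell right.
glider_moved = np.array_equal(state, np.roll(grid, shift=(1, 1), axis=(0, 1)))
print(glider_moved)
```

The macroscopic regularity (a shape that travels diagonally with period four) is fully determined by the micro-rules, which is what makes it weak rather than strong emergence.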

Strong Emergence and the Challenge of Downward Causation

Strong emergence proposes a more radical ontological condition: macroscopic properties arise that are fundamentally irreducible to the properties and laws governing their isolated microscopic parts, even in principle 1278910. The hallmark of strong emergence is "downward causation," a regime wherein the macroscopic state exerts an irreducible causal influence over the behavior of its lower-level constituents 29101511.

Historically, strong emergence has been heavily scrutinized because it appears to violate the principle of the causal closure of the physical domain. Critics argue that attributing independent causal power to both the microscopic parts and the macroscopic whole creates an "exclusion problem," where physical events are overdetermined by competing micro and macro causes 101112. For example, if atomic forces completely determine the motion of a particle, any simultaneous macroscopic force dictating that particle's motion would be redundant or contradictory 1011. Consequently, strong emergence was often dismissed as a mystical or unscientific concept, particularly when invoked to explain consciousness or biological life without mechanistic evidence 13.

However, recent advancements in information theory and complex systems modeling have demonstrated that strong emergence can be conceptualized without violating physicalism. When analyzed through the lens of ensemble perspectives and information capacity, global constraints can systematically direct lower-level components 81114. Macroscopic variables can possess predictive and causal power that is mathematically absent at the microscopic scale, allowing strong emergence to be reframed as an objective shift in a system's informational architecture.

| Feature | Weak Emergence | Strong Emergence |
|---|---|---|
| Definition | Macro-properties are unexpected but derivable via step-by-step simulation. | Macro-properties are fundamentally irreducible to micro-level laws. |
| Predictability | Computationally irreducible; requires simulation, but predictable in principle. | Unpredictable from micro-states alone, regardless of computational power. |
| Causal Direction | Strictly bottom-up (micro determines macro). | Involves downward causation (macro constrains micro). |
| Ontological Status | Epistemological (relative to observer capacity/models). | Ontological (represents a fundamental physical reality). |
| Common Examples | Conway's Game of Life, bird flocking, traffic jams, weather patterns. | Biological autopoiesis, consciousness, specific condensed matter phases. |

Mathematical Formalisms of Causal Emergence

To move beyond semantic and philosophical debates, researchers have successfully imported Shannon information theory and Judea Pearl's calculus of interventions into the study of complex networks. This synthesis has produced formal metrics that quantify emergence as an objective property of a system's transition dynamics, measuring exactly how much causal work is being performed at different scales of observation.

Effective Information and Causal Capacity

A leading quantitative framework for emergence is "Causal Emergence," developed primarily by Erik Hoel and colleagues, which utilizes a metric known as Effective Information (EI) 212215161718. Effective Information measures the causal power of a system by quantifying how effectively its mechanisms constrain its possible past and future states 161719.

Mathematically, EI is defined as the mutual information between the interventions applied to a system (the causes) and the resulting state of the system (the effects), evaluated over a maximum entropy distribution of interventions. Utilizing Pearl's $do(x)$ operator to inject uniform noise into the system's inputs - meaning every possible state is tested with equal probability - researchers can map the system's Transition Probability Matrix (TPM) 22151618. The EI captures how deterministically a specific input leads to a specific output, and how uniquely an output can be traced back to a specific input.

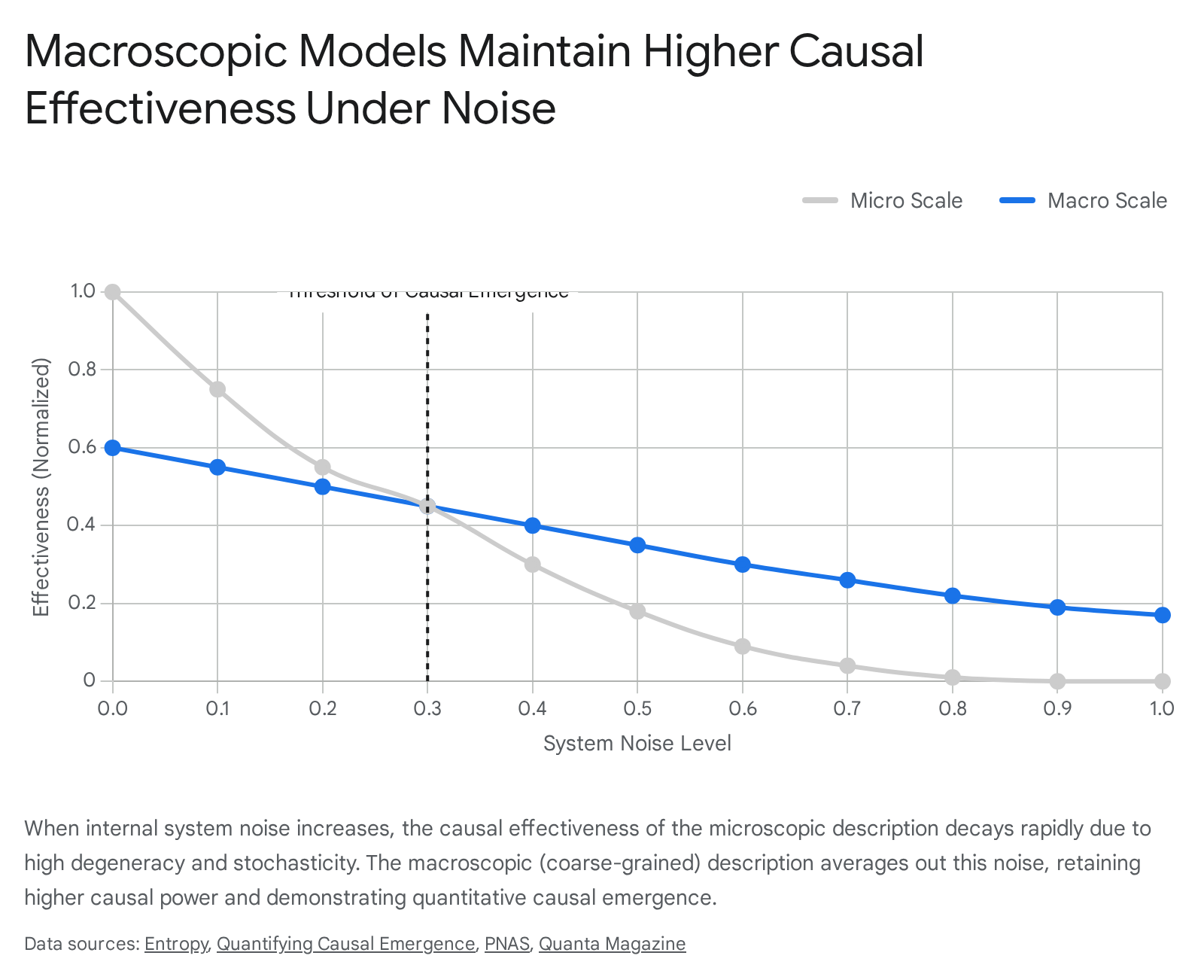

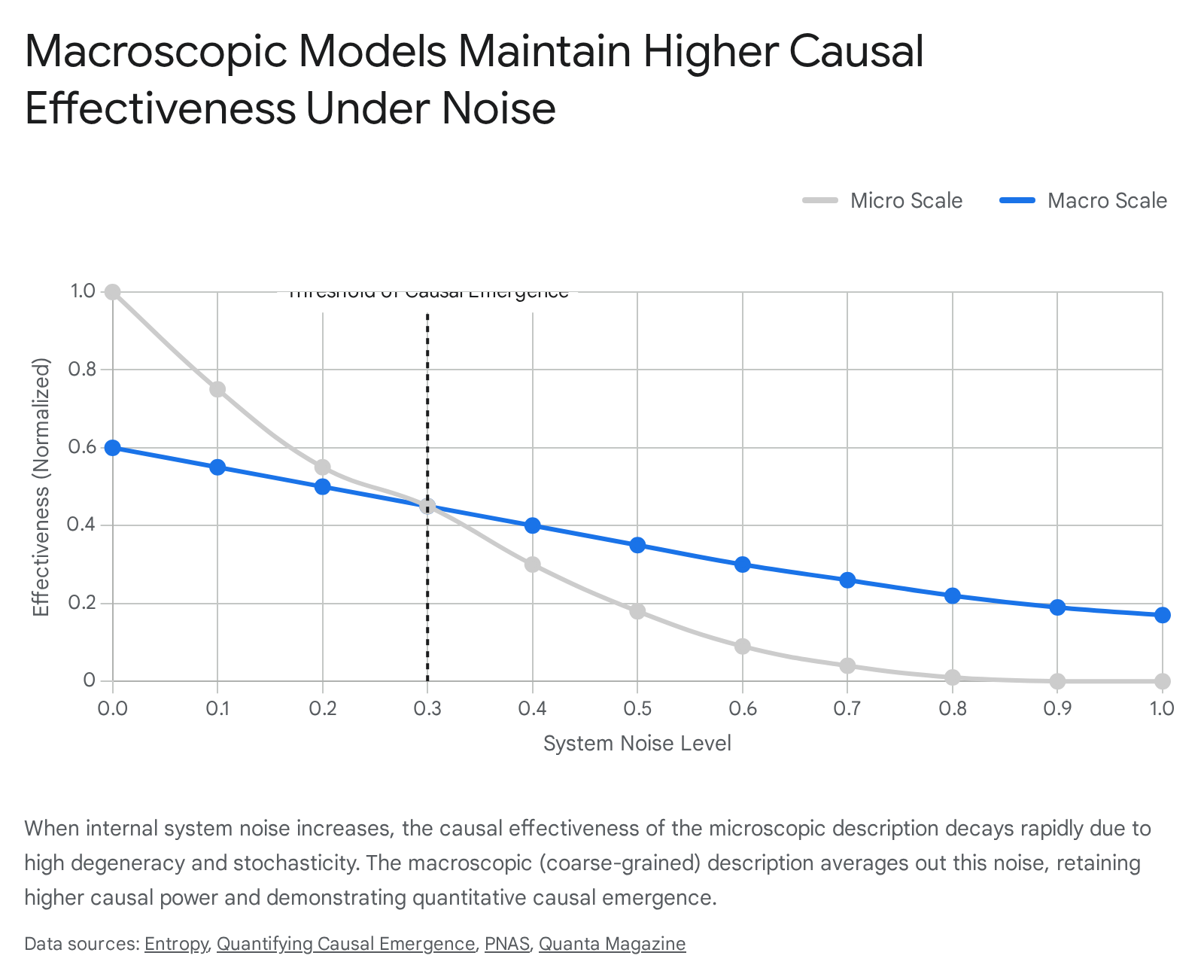

Causal emergence occurs when a macroscopic, coarse-grained description of a system possesses a higher Effective Information score than its microscopic, fine-grained description ($EI_{macro} > EI_{micro}$) 151618.

This finding demonstrates that a lower-resolution map can be objectively more informative than a fully detailed territory.
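To make the comparison concrete, here is a minimal sketch that computes EI for a toy system and for one coarse-graining of it. It assumes EI can be written as the average Kullback-Leibler divergence between each row of the TPM and the mean row, which equals the mutual information between a uniformly distributed intervention and its effect. The four-state TPM, the grouping, and the function names `effective_information` and `coarse_grain` are illustrative assumptions, not taken from the cited work.

```python
import numpy as np

def effective_information(tpm):
    """EI in bits: mean KL divergence of each TPM row from the average effect distribution."""
    tpm = np.asarray(tpm, dtype=float)
    effect = tpm.mean(axis=0)  # effect distribution under uniform do() interventions
    safe_effect = np.where(effect > 0, effect, 1.0)
    # Zero-probability transitions get ratio 1, so they contribute 0 to the sum.
    ratio = np.where(tpm > 0, tpm / safe_effect, 1.0)
    return float(np.mean(np.sum(tpm * np.log2(ratio), axis=1)))

def coarse_grain(tpm, groups):
    """Macro TPM: average the rows within each group, then sum the columns within each group."""
    tpm = np.asarray(tpm, dtype=float)
    macro = np.zeros((len(groups), len(groups)))
    for a, src in enumerate(groups):
        row = tpm[src].mean(axis=0)
        for b, dst in enumerate(groups):
            macro[a, b] = row[dst].sum()
    return macro

# Hypothetical micro system: states 0-2 hop uniformly among themselves, state 3 is fixed.
micro_tpm = np.array([[1/3, 1/3, 1/3, 0.0]] * 3 + [[0.0, 0.0, 0.0, 1.0]])
macro_tpm = coarse_grain(micro_tpm, groups=[[0, 1, 2], [3]])

ei_micro = effective_information(micro_tpm)
ei_macro = effective_information(macro_tpm)
print(f"EI micro = {ei_micro:.3f} bits, EI macro = {ei_macro:.3f} bits")
```

In this toy case the macro description is fully deterministic (each macro-state maps to itself), so its EI is 1 bit, while the noisy micro description scores roughly 0.81 bits.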

Determinism, Degeneracy, and the Channel Capacity of Causal Structure

The phenomenon where $EI_{macro} > EI_{micro}$ is driven by two distinct mathematical operations that occur during coarse-graining:

- Reduction of Noise (Increasing Determinism): Microscopic systems are often highly stochastic and noisy. A specific micro-state may lead to a wide distribution of future micro-states, resulting in low determinism. Grouping micro-elements into macro-states averages out this noise. Transitions between macro-states become highly reliable and deterministic 1221161720.
- Reduction of Degeneracy (Increasing Selectivity): In many complex networks, multiple different microscopic states map to the exact same outcome, a property known as causal degeneracy. This makes it impossible to retroactively determine which specific micro-cause produced an effect. Coarse-graining groups these redundant micro-states together into a single macro-state, ensuring that specific macro-causes uniquely map to specific macro-effects, thereby eliminating degeneracy 21161720.
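The two contributions can be separated numerically. The sketch below assumes unnormalized definitions in bits: determinism as $\log_2 n$ minus the average row entropy of the TPM, and degeneracy as $\log_2 n$ minus the entropy of the average effect distribution, so that EI equals determinism minus degeneracy. The function names and both example TPMs are hypothetical.

```python
import numpy as np

def entropy_bits(p):
    """Shannon entropy in bits, ignoring zero-probability entries."""
    p = np.asarray(p, dtype=float)
    nz = p[p > 0]
    return float(-(nz * np.log2(nz)).sum())

def determinism_degeneracy(tpm):
    """Return (determinism, degeneracy) in bits for a square TPM."""
    tpm = np.asarray(tpm, dtype=float)
    n = tpm.shape[0]
    # Determinism: how far each row is from a uniform (maximally noisy) transition.
    determinism = np.log2(n) - np.mean([entropy_bits(row) for row in tpm])
    # Degeneracy: how far the average effect distribution is from uniform.
    degeneracy = np.log2(n) - entropy_bits(tpm.mean(axis=0))
    return float(determinism), float(degeneracy)

# Noisy but non-degenerate: states 0-2 hop uniformly among themselves, state 3 is fixed.
noisy_tpm = np.array([[1/3, 1/3, 1/3, 0.0]] * 3 + [[0.0, 0.0, 0.0, 1.0]])
# Deterministic but fully degenerate: every state leads to state 0.
degenerate_tpm = np.array([[1.0, 0.0, 0.0, 0.0]] * 4)

det_noisy, deg_noisy = determinism_degeneracy(noisy_tpm)
det_degen, deg_degen = determinism_degeneracy(degenerate_tpm)
print(det_noisy - deg_noisy, det_degen - deg_degen)  # EI of each system, in bits
```

The first system loses EI to noise alone, while the second is perfectly deterministic yet has zero EI because every cause produces the same effect.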

By conceptualizing a causal structure as an information channel, Hoel demonstrates that systems possess a fundamental "causal capacity" akin to Shannon's channel capacity 18292122. At the microscopic scale, noise and degeneracy prevent the system from utilizing its full causal capacity. When a system's causal interactions peak at a macroscopic scale, the macro-level is said to "beat" the micro-level, establishing an objective, measurable criterion for strong emergence 16172122.

Cross-Scale Information Conversion

The mathematical proof of causal emergence also relies heavily on the concept of information conversion across scales 232434. To illustrate this, researchers often utilize basic logic gates. Consider a macroscale XOR (exclusive-OR) gate. The XOR gate evaluates two binary inputs and outputs a '1' only if the inputs are different 2536. If one analyzes a microscopic network of simpler gates (e.g., NAND, AND, and OR gates) that collectively implement an XOR function, the total mutual information at the microscopic level may be higher (e.g., 2.5 bits) than the mutual information at the macroscopic level (e.g., 1 bit) 2324.

However, while the total volume of information decreases during coarse-graining, the type of information changes dramatically. At the microscale, much of the information is redundant or localized to specific pathways. At the macroscale, the information becomes highly synergistic 2324. This reveals that emergence is not necessarily the creation of new information out of nothing, but rather the structural conversion of microscale entropy and redundancy into causally efficacious, synergistic macroscale information 2324.

Partial Information Decomposition and Synergistic Interactions

To rigorously quantify the shift from redundant to synergistic information, researchers utilize the Partial Information Decomposition (PID) framework, originally developed by Williams and Beer in 2010 37262740. PID addresses a fundamental limitation in classical Shannon information theory: while classical mutual information $I(X;Y)$ measures the total dependence between variables, it cannot distinguish whether multiple sources provide the same information or uniquely combine to provide new information.

Decomposing Mutual Information

The PID framework decomposes the mutual information that a set of source variables holds about a target variable into non-overlapping informational atoms 2337274028:

* Unique Information: Information about the target that is present in exactly one source and cannot be obtained from any other source 3727.
* Redundant Information: Information about the target that is duplicated across multiple sources. Observing any single source provides this information 372729.
* Synergistic Information: Information about the target that is jointly contained by multiple source variables but cannot be extracted from any single source or subset of sources viewed in isolation 3437272943.
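For two sources $X_1$, $X_2$ and a target $Y$, the decomposition can be written out explicitly. The atom labels $\mathrm{Red}$, $\mathrm{Unq}_1$, $\mathrm{Unq}_2$, and $\mathrm{Syn}$ are notation chosen for this illustration:

$$
\begin{aligned}
I(X_1, X_2; Y) &= \mathrm{Red} + \mathrm{Unq}_1 + \mathrm{Unq}_2 + \mathrm{Syn} \\
I(X_1; Y) &= \mathrm{Red} + \mathrm{Unq}_1 \\
I(X_2; Y) &= \mathrm{Red} + \mathrm{Unq}_2
\end{aligned}
$$

These are three equations in four unknowns, so classical mutual information alone does not fix the atoms. A PID is completed by choosing a definition for one of them, typically the redundancy term, after which the remaining three follow.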

Synergistic vs. Redundant Architectures

Emergence is tightly coupled with Synergistic Information. The XOR logic gate is the canonical paradigm for illustrating synergistic causal power 2325272830. If a target variable $Z$ is generated by $X \text{ XOR } Y$, analyzing $X$ or $Y$ individually yields exactly zero predictive information about $Z$, because knowing the state of one input does not reduce uncertainty about the output without knowing the state of the other input. The mutual information relies entirely on observing $X$ and $Y$ simultaneously; it is purely synergistic 232430.
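The XOR claim is easy to check numerically. The sketch below enumerates the four equally likely input combinations and computes the three relevant mutual informations; the helper `mutual_information` is a hypothetical name, and it assumes every listed outcome is equally probable.

```python
import itertools
import math
from collections import Counter

def mutual_information(pairs):
    """I(A;B) in bits, given a list of equally likely (a, b) outcomes."""
    n = len(pairs)
    p_ab = Counter(pairs)
    p_a = Counter(a for a, _ in pairs)
    p_b = Counter(b for _, b in pairs)
    return sum(
        (c / n) * math.log2(c * n / (p_a[a] * p_b[b]))
        for (a, b), c in p_ab.items()
    )

# Truth table of Z = X XOR Y with uniform, independent inputs.
rows = [(x, y, x ^ y) for x, y in itertools.product((0, 1), repeat=2)]

i_x = mutual_information([(x, z) for x, y, z in rows])        # X alone
i_y = mutual_information([(y, z) for x, y, z in rows])        # Y alone
i_xy = mutual_information([((x, y), z) for x, y, z in rows])  # X and Y jointly
print(i_x, i_y, i_xy)
```

Each input alone carries 0 bits about $Z$, while the pair carries 1 bit. Under any decomposition with non-negative atoms, zero single-source information leaves no room for redundant or unique information, so the full bit is synergistic.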

Conversely, a COPY gate, which simply replicates an input, relies on unique or redundant information 202627. By applying PID to complex networks, researchers have demonstrated that macroscopic scales are overwhelmingly dominated by synergistic information 232427. This constitutes a formal proof that causal power is structurally reorganized into higher-order interdependencies, validating the core tenet of emergence: the whole possesses properties absent in the parts 232434.

O-Information as a Scalable Metric of Interdependency

While PID is conceptually powerful, computing the full lattice of information atoms becomes computationally intractable for systems with a large number of variables. The number of atoms in the lattice follows the Dedekind numbers, which grow superexponentially with the number of source variables, rendering exact PID calculations infeasible for all but a handful of variables 52629.

To resolve this, Fernando Rosas, Pedro Mediano, and colleagues introduced O-Information ($\Omega$), a highly scalable metric that quantifies the net balance between redundancy and synergy in arbitrary sets of interacting variables 5282943313233. O-information assesses the intrinsic properties of a system without needing to arbitrarily divide its parts into strict 'predictors' and 'targets' 43.

The O-information metric is signed, providing a clear heuristic for the system's dominant architecture:

* Redundancy-Dominated ($\Omega > 0$): The macroscopic patterns are largely copies of information present in the micro-parts. Because multiple copies of the same information exist, emergence is difficult to detect 528293134.
* Synergy-Dominated ($\Omega < 0$): The dependencies are best explained as patterns existing exclusively in the joint state of multiple variables, serving as a powerful statistical marker for emergence. Information is not stored in specific elements, but in the structural topology of the group 3429333435.
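The sign convention can be checked on two three-variable toy systems. The sketch assumes the entropy-based form $\Omega = (n-2)\,H(X_1,\dots,X_n) + \sum_j \left[ H(X_j) - H(X_{-j}) \right]$, where $X_{-j}$ denotes all variables except $X_j$; this is equivalent to total correlation minus dual total correlation. The function names and example systems are hypothetical, and each listed joint state is taken to be equally likely.

```python
import math
from collections import Counter

def entropy(samples):
    """Shannon entropy in bits of a list of equally likely outcomes."""
    n = len(samples)
    return -sum((c / n) * math.log2(c / n) for c in Counter(samples).values())

def o_information(states):
    """O-information in bits for a list of equally likely joint states (tuples)."""
    n = len(states[0])
    total = (n - 2) * entropy(states)
    for j in range(n):
        marginal = [s[j] for s in states]           # X_j alone
        rest = [s[:j] + s[j + 1:] for s in states]  # every variable except X_j
        total += entropy(marginal) - entropy(rest)
    return total

# Synergistic system: two independent bits and their XOR.
xor_states = [(x, y, x ^ y) for x in (0, 1) for y in (0, 1)]
# Redundant system: one bit copied three times.
copy_states = [(x, x, x) for x in (0, 1)]

omega_xor = o_information(xor_states)
omega_copy = o_information(copy_states)
print(omega_xor, omega_copy)
```

The XOR triple yields $\Omega = -1$ bit (synergy-dominated) and the triple copy yields $\Omega = +1$ bit (redundancy-dominated). Because only entropies of the whole system, of single variables, and of leave-one-out subsets are needed, the number of entropy terms grows linearly with the number of variables.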

Applications in Neuroscience and Biological Networks

The scalability of O-information has allowed it to be applied directly to empirical data, yielding significant insights in computational neuroscience and physiology. By analyzing functional magnetic resonance imaging (fMRI) data, researchers have mapped higher-order functional connectivity in human brain networks 2933.

For instance, comparative studies evaluating the brain activity of younger versus older demographics reveal that synthetic models of aging brains exhibit significant, measurable increases in redundant O-information ($\Omega > 0$) across interaction orders 33. Healthy, highly integrated cognitive processing relies heavily on synergistic dynamics; the shift toward redundancy-dominated interdependencies serves as a quantifiable marker of cognitive decline and altered functional architecture 3335. O-information has also been utilized to detect statistical synergy in domains as varied as cardiovascular interactions, the structural progression of Baroque music scores, and the entanglement of quantum states 29433536.

The Taxonomy of Information Dynamics

Building upon the PID framework, formal criteria have been established to rigorously differentiate between varieties of emergent behavior in multivariate time-series data. Rosas, Mediano, and colleagues proposed a taxonomy of information dynamics that categorizes how macroscopic supervenient features ($V_t$) interact with their underlying microscopic state space ($X_t$) over time 53132373853.

By mapping the predictive power of a macroscopic feature over future states, the framework isolates the specific conditions under which the whole exceeds the sum of its parts, providing distinct mathematical definitions for distinct emergent phenomena 53153.

Quantifying Causal Emergence

Causal Emergence ($\Psi > 0$): This regime occurs when a macroscopic feature possesses unique predictive power over the future evolution of the entire system that cannot be accounted for by the aggregate predictive power of any groups of microscopic parts evaluated independently 315339. If $\Psi$ is strictly positive, the supervenient macroscopic feature holds dynamically relevant information that is fundamentally invisible at lower scales. This confirms emergence in systems such as the iconic "gliders" in Conway's Game of Life or the coordinated flocking patterns of birds 54031.

Mathematical Proofs of Downward Causation

Downward Causation ($\Delta > 0$): This occurs when the macroscopic feature exerts unique predictive power over the future state of specific individual microscopic elements 51531385339. In this scenario, knowing the state of the macroscopic whole allows an observer to predict the trajectory of a single microscopic particle better than if they only possessed information about the neighboring microscopic particles.

This mathematically operationalizes the concept of top-down constraints. It proves that downward causation is not a violation of physical laws or a metaphysical illusion, but a verifiable reallocation of predictive information within the system's topology 5151431.

Causal Decoupling in Supervenient Features

Causal Decoupling ($\Gamma = 0$ and $\Psi > 0$): This occurs when a macroscopic feature uniquely predicts its own future evolution (or the evolution of other macro-features), but provides zero unique predictive power regarding any individual micro-component 53132375339. In this regime, the emergent macroscopic layer behaves autonomously, fully decoupled from the low-level substrate upon which it supervenes.

Causal decoupling is highly prevalent in cognitive architectures and biology. For example, oscillatory brain waves dictate the synchronized firing phases of large populations of neurons; the wave dynamics can be predicted based on previous wave states, completely decoupled from the noisy, stochastic firing of any individual constituent neuron 3738.

| Metric | Symbol | Informational Meaning | Systemic Implication |
|---|---|---|---|
| O-Information | $\Omega$ | Balance of synergy vs. redundancy. | Negative values indicate synergy-dominated systems, acting as a broad marker for emergence. |
| Causal Emergence | $\Psi$ | Macro-feature uniquely predicts future system states. | The whole contains causal power absent in the isolated parts. |
| Downward Causation | $\Delta$ | Macro-feature uniquely predicts future micro-parts. | The macroscopic state constrains and directs the behavior of its constituents. |
| Causal Decoupling | $\Gamma$ | Macro-feature predicts itself, but not micro-parts. | The macroscopic layer operates autonomously, shielded from micro-level noise. |
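The three criteria can be sketched on a toy system. The formulas used here are an assumption: they follow a pairwise mutual-information form in which $\Psi = I(V_t; V_{t+1}) - \sum_j I(X^j_t; V_{t+1})$, $\Delta = \max_j \big[ I(V_t; X^j_{t+1}) - \sum_i I(X^i_t; X^j_{t+1}) \big]$, and $\Gamma = \max_j I(V_t; X^j_{t+1})$, and they should be checked against the original framework before being relied on. The example system (three bits whose parity is preserved while the individual bits are re-randomized) and all function names are hypothetical.

```python
import itertools
import math
from collections import Counter

def mutual_information(pairs):
    """I(A;B) in bits, given a list of equally likely (a, b) outcomes."""
    n = len(pairs)
    p_ab = Counter(pairs)
    p_a = Counter(a for a, _ in pairs)
    p_b = Counter(b for _, b in pairs)
    return sum(
        (c / n) * math.log2(c * n / (p_a[a] * p_b[b]))
        for (a, b), c in p_ab.items()
    )

def parity(x):
    return sum(x) % 2

def emergence_criteria(transitions, feature):
    """Return (psi, delta, gamma) for equally likely (x_t, x_next) transitions."""
    n = len(transitions[0][0])
    mi = mutual_information
    # Psi: macro self-prediction minus what single micro-parts predict about the macro future.
    psi = mi([(feature(x), feature(y)) for x, y in transitions]) - sum(
        mi([(x[j], feature(y)) for x, y in transitions]) for j in range(n)
    )
    # What the macro feature predicts about each future micro-part.
    down = [mi([(feature(x), y[j]) for x, y in transitions]) for j in range(n)]
    delta = max(
        down[j] - sum(mi([(x[i], y[j]) for x, y in transitions]) for i in range(n))
        for j in range(n)
    )
    gamma = max(down)
    return psi, delta, gamma

# Toy dynamics: the next state is drawn uniformly from all states with the same parity.
states = list(itertools.product((0, 1), repeat=3))
transitions = [(x, y) for x in states for y in states if parity(x) == parity(y)]

psi, delta, gamma = emergence_criteria(transitions, parity)
print(psi, delta, gamma)
```

In this toy system the parity predicts its own future perfectly ($\Psi = 1$ bit) while saying nothing about any individual future bit ($\Gamma = 0$, $\Delta = 0$), which matches the causal decoupling row of the table.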

Integrated Information Theory

While Causal Emergence and PID evaluate emergence through state transitions, predictive capacities, and temporal dependencies, Integrated Information Theory (IIT) approaches the problem by evaluating the structural irreducibility of a system's internal causal architecture. Originally proposed by neuroscientist Giulio Tononi as a mathematical framework to explain consciousness, IIT's core metric - Integrated Information ($\Phi$, or Phi) - serves broadly as a quantification of strong emergence in physical systems 4041424344.

Phenomenological Axioms to Physical Postulates

IIT reverses the traditional scientific methodology by starting with phenomenological axioms - the indubitable characteristics of conscious experience (e.g., that experience is unified, structured, and specific) - and deriving the necessary physical postulates that a substrate must possess to support those phenomena 404144. According to IIT, an emergent, unified system must exert intrinsic cause-effect power. It must make a difference to itself, not merely act as a conduit for inputs transforming into outputs 4041.

This physical requirement dictates that a system only exhibits Integrated Information if it features a reentrant, highly feedback-driven architecture. Purely feed-forward networks, such as many traditional artificial neural networks and basic sensory relays, yield a $\Phi$ of zero. Because feed-forward systems lack recurrent loops, they do not integrate information or possess intrinsic cause-effect power over themselves, regardless of how complex their output may appear to an outside observer 41.

Structural Irreducibility and the Maximum Entropy Boundary

Small phi ($\phi$) quantifies the degree to which a specific mechanism within a system is irreducible, while big Phi ($\Phi$) evaluates the irreducibility of the entire system 4043. To calculate $\Phi$, the framework identifies the "minimum information partition" - the specific division of the system into two parts that cuts the weakest causal links, resulting in the least loss of cause-effect information 414344.

The system is tested by injecting maximum entropy into one half of the partition and measuring how effectively the other half is constrained. If partitioning the system fundamentally destroys its causal power, the system is highly integrated and operates as a unified emergent whole 404344. The local maximum of integrated information across all possible subsets of elements identifies the "complex," which IIT posits as the physical boundary of the conscious entity 4041. By defining emergence through the unification, irreducibility, and intrinsic causal power of a topology, IIT aligns mathematically with biological principles, predicting that emergent entities (like biological brains) prioritize the preservation of systemic boundaries over component-level interactions 4142.

Controversies and Limitations in Causal Mapping

Despite its mathematical rigor, IIT remains highly controversial within the broader scientific community. In 2023, a group of scholars published an open letter characterizing the theory as "unfalsifiable pseudoscience," arguing that its empirical support is insufficient to justify its sweeping claims regarding consciousness 40. Furthermore, calculating the exact value of $\Phi$ requires evaluating every possible partition of a system across its entire state space. This computational burden is astronomically high, rendering precise $\Phi$ calculations practically impossible for any system larger than a few interconnected logic gates or simulated neurons, meaning large-scale empirical validation is currently unavailable 43. Nevertheless, as a theoretical framework for quantifying structural emergence and irreducibility, it remains highly influential 404243.

Emergence in Artificial Neural Networks and Large Language Models

The debate surrounding the definitions and mechanics of emergence has recently surged to the forefront of artificial intelligence, specifically regarding the capabilities of Large Language Models (LLMs). As foundation models like GPT-3, PaLM, and LaMDA scaled to hundreds of billions of parameters, researchers observed what appeared to be "sharp left turns" or breakthrough capabilities that were entirely absent in smaller iterations of the exact same architecture 460456246.

The Observation of Abrupt Capability Scaling

In a highly influential 2022 paper, Wei et al. operationally defined emergent abilities in LLMs as capabilities that remain near random-guessing levels for small and medium-scale models, but abruptly improve to high proficiency once a critical threshold of model scale or training compute is surpassed 456246.

These sudden, unpredictable leaps included advanced skills such as analogical reasoning, multi-step arithmetic, zero-shot problem solving, and even the ability to interpret novel literary metaphors or execute deception 46. Because these behaviors were not explicitly engineered or anticipated by the model creators, they generated intense discourse regarding AI safety, alignment, and the unpredictable nature of neural scaling 4546. The prevailing narrative suggested that LLMs were exhibiting genuine ontological phase transitions fundamentally tied to computational mass 60624647.

The Mirage Hypothesis and Metric Dependence

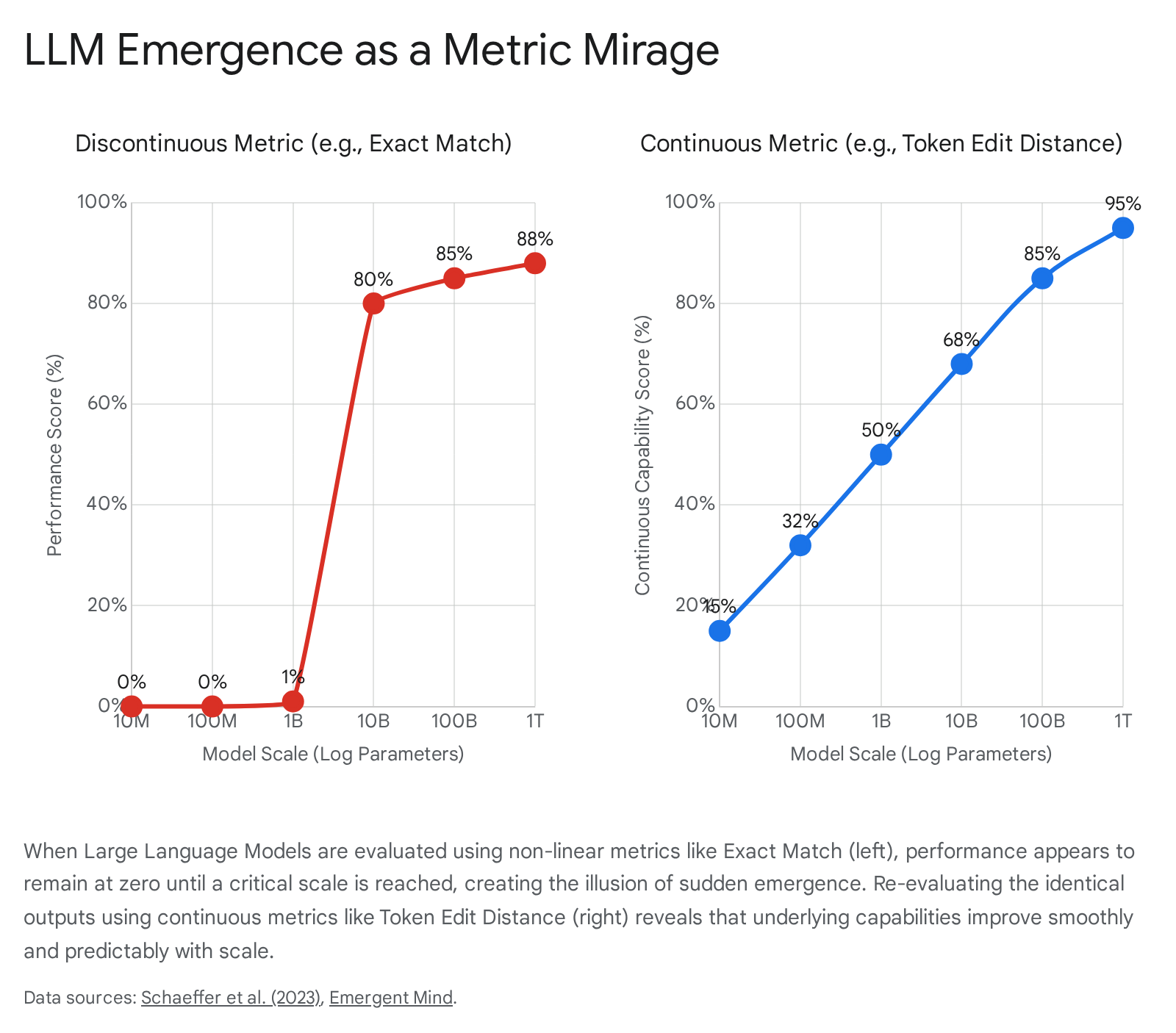

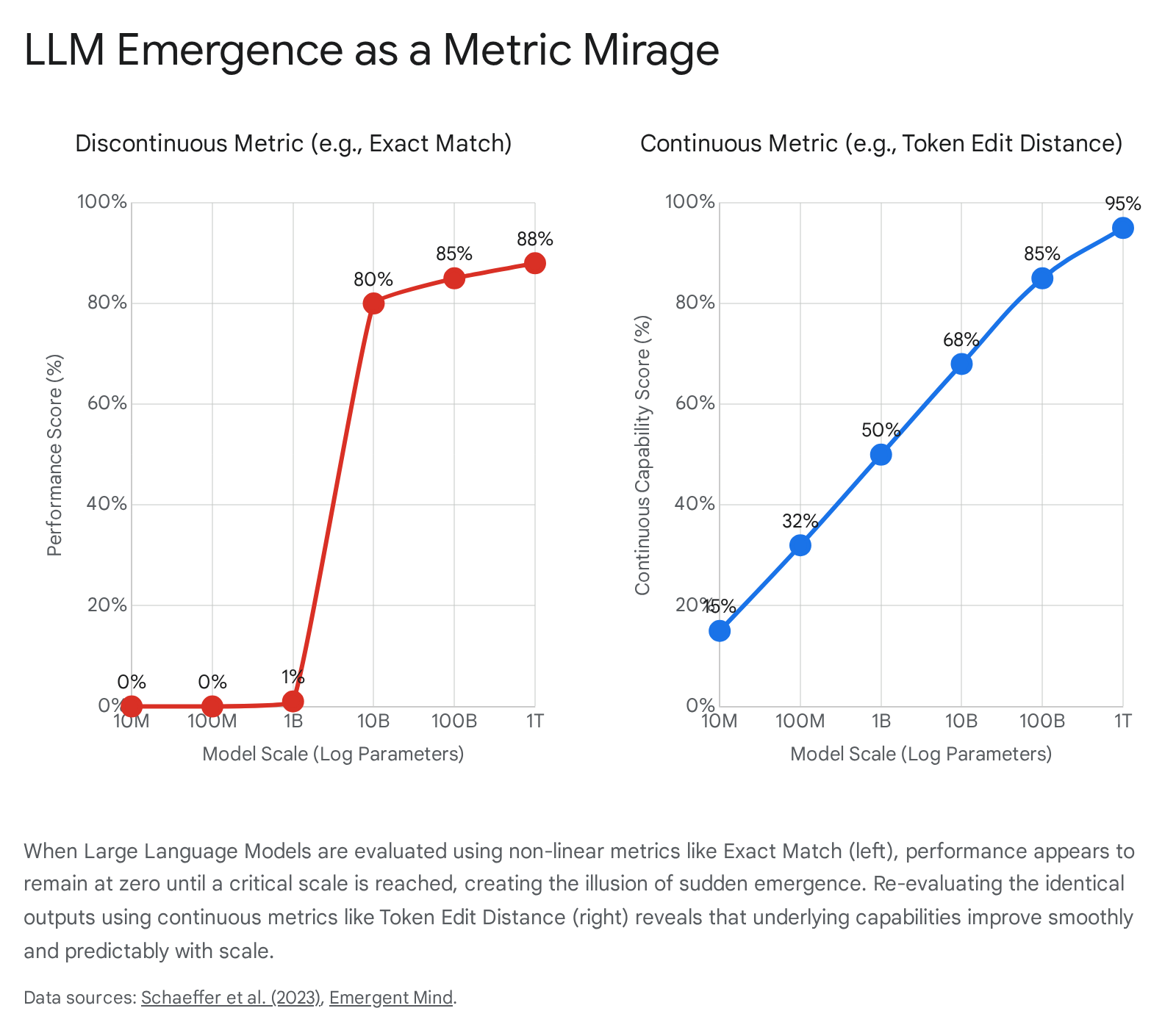

This paradigm was aggressively challenged by Schaeffer, Miranda, and Koyejo (2023), who proposed that the purported emergence in LLMs is predominantly an artifact of observer-selected measurement frameworks - a phenomenon they termed the "Mirage Hypothesis" 45464849.

Schaeffer et al. demonstrated that the appearance of sharp, discontinuous capability leaps was almost entirely dependent on the researchers' choice of non-linear or discontinuous evaluation metrics 454849. Metrics such as "Exact Match Accuracy" or "Multiple Choice Grade" are binary; a model receives zero credit for an output that is conceptually close but structurally imperfect. Because smaller models consistently fail to clear this absolute threshold, their aggregate performance registers as a flatline. When a model scales sufficiently to generate the exact required string, its score jumps abruptly from zero to highly proficient 4546.

When these same fixed model outputs are re-evaluated using continuous, high-resolution metrics - such as Token Edit Distance or Brier Score - the illusion of abrupt emergence evaporates 464849. Continuous metrics reveal that the underlying capabilities (e.g., the statistical probability of generating the correct sequence of tokens) actually scale smoothly, predictably, and continuously alongside parameter counts 45464849.
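The metric effect can be reproduced with a few lines of arithmetic. The sketch below uses entirely hypothetical numbers: per-token accuracy is assumed to rise linearly with the logarithm of parameter count, token errors are assumed independent, and the target answer is assumed to be 30 tokens long. Under those assumptions exact-match accuracy is simply per-token accuracy raised to the answer length.

```python
import numpy as np

# Hypothetical model family: sizes from 10^7 to 10^11 parameters.
log_params = np.linspace(7, 11, 9)
# Assumed smooth trend: per-token accuracy grows linearly in log10(parameters).
per_token_acc = 0.5 + 0.49 * (log_params - 7) / 4
# Exact match requires every one of the answer's tokens to be correct
# (assumes independent token errors).
answer_len = 30
exact_match = per_token_acc ** answer_len

for lp, tok, em in zip(log_params, per_token_acc, exact_match):
    print(f"10^{lp:.1f} params  per-token={tok:.3f}  exact-match={em:.6f}")
```

The per-token column increases by the same amount at every step, while the exact-match column stays near zero for most of the range and then climbs steeply at the largest sizes. The same underlying improvement therefore looks gradual or abrupt depending only on the metric.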

Additionally, the study noted statistical artifacts: insufficient test data in smaller models artificially inflates the perceived sharpness of the jump. Increasing the volume of evaluation samples often reveals that even small models exhibit above-chance improvements that are masked by low-resolution testing 4649.

Grokking and Non-Linear Phase Transitions

Despite the robustness of the metric mirage argument, the consensus on whether LLMs exhibit true emergence remains contested, and several counter-claims suggest genuine systemic phase transitions do occur in deep learning architectures.

One primary counter-argument invokes the phenomenon of "grokking." Grokking is a process whereby a neural network trains for an extended period, achieving high accuracy on its training data while performing at random guessing levels on unseen test data 474967. Eventually, after continued training beyond the point of apparent stagnation, the model's internal weight landscape undergoes a sudden phase transition. The model abruptly shifts from memorizing its training examples to internalizing the underlying generative rules of the task, and achieves perfect test generalization 496768.

While grokking is functionally distinct from scale-based emergence - grokking occurs within a single model over increasing gradient steps, whereas emergent abilities occur across a model family as parameter counts scale - it provides empirical proof that neural networks are capable of executing sharp, unpredictable internal reorganizations that unlock novel capabilities 4749. It demonstrates that non-linear capability jumps are not exclusively artifacts of external metric choices, but can stem from the intrinsic geometry of the network's optimization landscape 6768.

The Influence of Data Complexity and Pre-Training Loss

Further challenging the strict Mirage Hypothesis, researchers argue that emergence cannot be understood merely as a function of raw model size (parameter count), but must be viewed as an interaction between model capacity and the environment 46. Muckatira et al. (2024) demonstrated that emergent abilities can actually be unlocked in significantly smaller models simply by simplifying the training data, such as applying rigorous vocabulary filtering 4669. This indicates that the threshold of emergence is highly sensitive to the structural complexity of the information the model is attempting to compress 46.

Additionally, Fu et al. (2024) proposed redefining the threshold of emergence based on pre-training loss rather than parameter counts. Pre-training loss serves as a fundamental, unifying indicator of a model's learned state, synthesizing the combined effects of model size, dataset size, and compute. They observed that specific complex downstream abilities strictly fail to manifest until the network's pre-training loss drops below a definitive, specific boundary 6246. Crucially, this loss-threshold emergence persists even when utilizing continuous evaluation metrics, directly countering the claim that all abrupt scaling phenomena are mere metric deformations 62.

Other analyses highlight U-shaped and inverted-U developmental patterns during scaling. At specific scales, the performance of easy and hard task groups offset each other, masking improvement until a critical mass is reached where the cancellation ceases, resulting in a sudden statistical leap in aggregate capabilities 46. Consequently, evaluating LLM emergence requires sophisticated, high-resolution frameworks to accurately detect capability thresholds and mitigate potential safety risks as models are repurposed and scaled 46.

Autopoiesis, Biological Systems, and Quantum Contexts

The mathematical precision now afforded to emergence is fundamentally altering epistemological frameworks beyond computer science, deeply influencing theoretical biology and quantum physics.

Self-Organization and Autopoietic Networks

In the biological sciences, the formalization of causal decoupling provides a mathematical foundation for theories of autopoiesis 505152. Coined by biologists Humberto Maturana and Francisco Varela, an autopoietic system is a self-referential, self-reproducing network wherein the interactions between microscopic components continuously regenerate the macroscopic network that produced them in the first place 50515253.

Under strict reductionist paradigms, treating a biological organism as an autonomous whole with top-down causal power over its individual cells was often regarded as a useful biological heuristic rather than a rigorous physical reality 52. However, applied mathematical frameworks like Effective Information and O-Information confirm that such biological networks actively convert entropy into synergistic information. They maintain robust macroscopic states that exert mathematically verifiable downward causation on molecular mechanisms 215212350. Living systems are paradigmatic examples of causal decoupling; they shield their macroscopic integrity and maintain homeostasis despite the noisy, stochastic perturbations of their microscopic environments 143752.

Effective Field Theories and Quantum Supervenience

The validation of strong emergence also resolves long-standing tensions in physics, particularly condensed matter and quantum mechanics 91011. In condensed matter physics, spontaneous symmetry breaking gives rise to macroscopic properties that simply do not exist at the microscopic scale. For example, the crystalline structure of a solid allows for the propagation of phonons - quasiparticles of sound and heat. Phonons exert real causal influence within the material, yet their existence is entirely dependent on the downward causation imposed by the macroscopic crystal lattice 11.

Similarly, in quantum mechanics, the phenomenon of entanglement creates systemic wholes that contain more information than the statistical mixture of their independent parts. A fully entangled quantum state possesses holistic properties and causal trajectories that are irreducible to the properties of individual particles 954. This indicates that ontological non-reducibility and strong emergence are not exceptions to the rules of physics, but natural phenomena rooted in the fundamental mathematical structure of nature, validating the use of distinct Effective Field Theories at distinct scales of reality 1154.

Epistemic Pluralism and Complex Systems Governance

The shift from linear, reductionist causality to complex, emergent systemic modeling aligns closely with epistemic pluralism, and in particular with growing recognition of the functional efficacy of knowledge systems originating from the Global South 50525355.

Integrating Non-Western Knowledge Systems

Classical international relations, environmental policy, and Western economic models have long relied on Cartesian dualisms - separating mind from matter, society from nature - and have utilized linear, agent-based rational choice models to predict outcomes 50525356. This machine-metaphor approach to nature views the environment as an aggregate of isolatable commodities to be managed via localized interventions 53.

However, complex systems theory - informed by the formal mathematics of emergence - demonstrates that ecological and social networks are adaptive, multi-equilibrium systems governed by non-linear feedback loops and autopoietic self-organization 5357. Indigenous knowledge systems, along with Islamic, Confucian, and Ubuntu-influenced epistemologies, have historically modeled natural environments as deeply synergistic, interconnected wholes that cannot be effectively managed by isolating individual variables 50515253. The mathematical demonstration that macroscopic constraints and emergent synergies play an irreducible role in system evolution provides a rigorous scientific bridge to these pluralistic paradigms 505153.

Multi-Scale Adaptive Policies in the Global South

As global institutions face the non-stationary realities of the Anthropocene, policymakers increasingly recognize the need to treat ecosystems and societies as causally decoupled, self-organizing networks rather than mere aggregates of independent actors 505357.

For example, studies on drought adaptation in the Chiloé Archipelago of southern Chile illustrate that top-down, reductionist public policies frequently fail to build resilience in small-scale farming systems 57. Effective adaptation requires leveraging local, situated knowledge to foster community empowerment and co-produce interventions that respect the synergistic complexity of the social-ecological dynamics 57. Concurrently, institutions like the Max Planck Institute for the History of Science (MPIWG) are actively expanding research networks to integrate Global South perspectives into the history and philosophy of complex systems, acknowledging that addressing humanity's multi-scale challenges requires moving beyond WEIRD (Western, Educated, Industrialized, Rich, and Democratic) structural paradigms 5556. The strategic reawakening of the Global South in multilateral forums like BRICS+ and the G20 reflects a geopolitical transition toward resonant pluralism, where global order is increasingly defined by the emergent properties of complex network synchronization rather than unilateral power projection 5058.

Conclusion

Emergence is no longer a nebulous philosophical placeholder for the limits of human calculation; it is a measurable, mathematically distinct property of complex systems. Through rigorous frameworks such as Effective Information, Partial Information Decomposition, and Integrated Information Theory, it can now be shown that macroscopic layers of organization can possess greater causal power and higher determinism than their microscopic constituents, along with synergistic predictive capacity that cannot be reduced to those constituents.

While the appearance of emergence can sometimes be an artifact of discontinuous measurement - as argued by the mirage hypothesis in the evaluation of large language models - the underlying physical realities of causal decoupling and downward causation remain structurally valid. By formalizing the transition from microscopic interactions to macroscopic wholes, the scientific community has acquired the tools needed to model reality not just as a vast collection of fundamental particles, but as a hierarchical, interconnected tapestry of irreducible, causally potent systems.