Machine Learning for Weather Forecasting and Climate Modeling

Introduction: The Dawn of Data-Driven Atmospheric Science

For over seven decades, the foundational bedrock of meteorological forecasting has been Numerical Weather Prediction (NWP). Rooted in the pioneering mid-twentieth-century work of mathematicians and atmospheric scientists, NWP relies on the strict discretization of the Earth's atmosphere into a three-dimensional grid, solving coupled, non-linear partial differential equations - primarily the Navier-Stokes equations for fluid dynamics and the laws of thermodynamics 123. Operating these traditional General Circulation Models (GCMs) requires colossal computational infrastructure. Institutions such as the European Centre for Medium-Range Weather Forecasts (ECMWF) and the United States National Oceanic and Atmospheric Administration (NOAA) utilize supercomputing clusters with tens of thousands of processing cores to produce deterministic and probabilistic ensemble forecasts 123. While physics-based models have seen steady, incremental improvements in accuracy through higher spatial resolutions and enhanced mathematical parameterizations of sub-grid processes like cloud formation and radiative transfer, they remain severely bottlenecked by their extreme computational expense and the inherent chaotic nature of atmospheric fluid dynamics.

Between 2023 and 2024, the meteorological and climate science communities experienced a profound paradigm shift. The integration of advanced deep learning architectures into atmospheric science precipitated a breakthrough: the emergence of Machine Learning Weather Prediction (MLWP) systems. Capable of analyzing decades of historical atmospheric data to infer complex, non-linear dynamics without explicitly solving the underlying physical equations, these data-driven models have rapidly matched, and in many critical metrics surpassed, the traditional NWP gold standards 1457. This transformation is fundamentally redefining the limits of predictability.

This comprehensive report examines the 2023 - 2024 breakthroughs in artificial intelligence weather forecasting, specifically evaluating the architectural mechanisms and operational impacts of models such as GraphCast, Pangu-Weather, FengWu, and NeuralGCM. To present a holistic global picture, the analysis broadens the geographical scope to recognize the intense parallel innovation occurring across both Asian technology sectors and Western counterparts. Furthermore, the analysis rigorously dispels the prevailing misconception that artificial intelligence is poised to entirely replace physics-based models, detailing the absolute reliance of machine learning on traditional physics models for training data and the accelerating trend toward hybrid architectures. Finally, the report delineates the distinct boundaries of artificial intelligence capability, contrasting its proven operational superiority in medium-range forecasting against its current, profound limitations in long-term, multi-decadal climate projection and the prediction of unprecedented extreme events in a rapidly warming world.

The Foundational Relationship: Dispelling the Physics Replacement Myth

A persistent and potentially dangerous misconception within the broader public and technological discourse is that artificial intelligence models are poised to entirely replace physics-based numerical models, rendering traditional atmospheric physics obsolete. This fundamentally misrepresents the symbiotic relationship between data-driven algorithms and physical science. The reality is that purely data-driven artificial intelligence models are entirely dependent on traditional physics models for their very existence and operational viability.

Models such as GraphCast, Pangu-Weather, and FourCastNet do not learn fluid dynamics from raw satellite imagery or disparate thermometer readings. Instead, they are trained on highly structured reanalysis datasets, almost exclusively the ERA5 archive produced by the ECMWF 16910. Reanalysis is a complex physical process that utilizes a traditional, physics-based numerical model and an advanced data assimilation scheme (such as 4D-Var) to weave disparate, temporally irregular historical observations into a complete, mathematically consistent three-dimensional grid of the atmosphere over time 7. ERA5 is, fundamentally, the output of a physics model. If traditional physics models and their data assimilation pipelines are decommissioned, artificial intelligence models will be deprived of the rigorously structured initial conditions required for both their initial training and their daily operational inference 8.

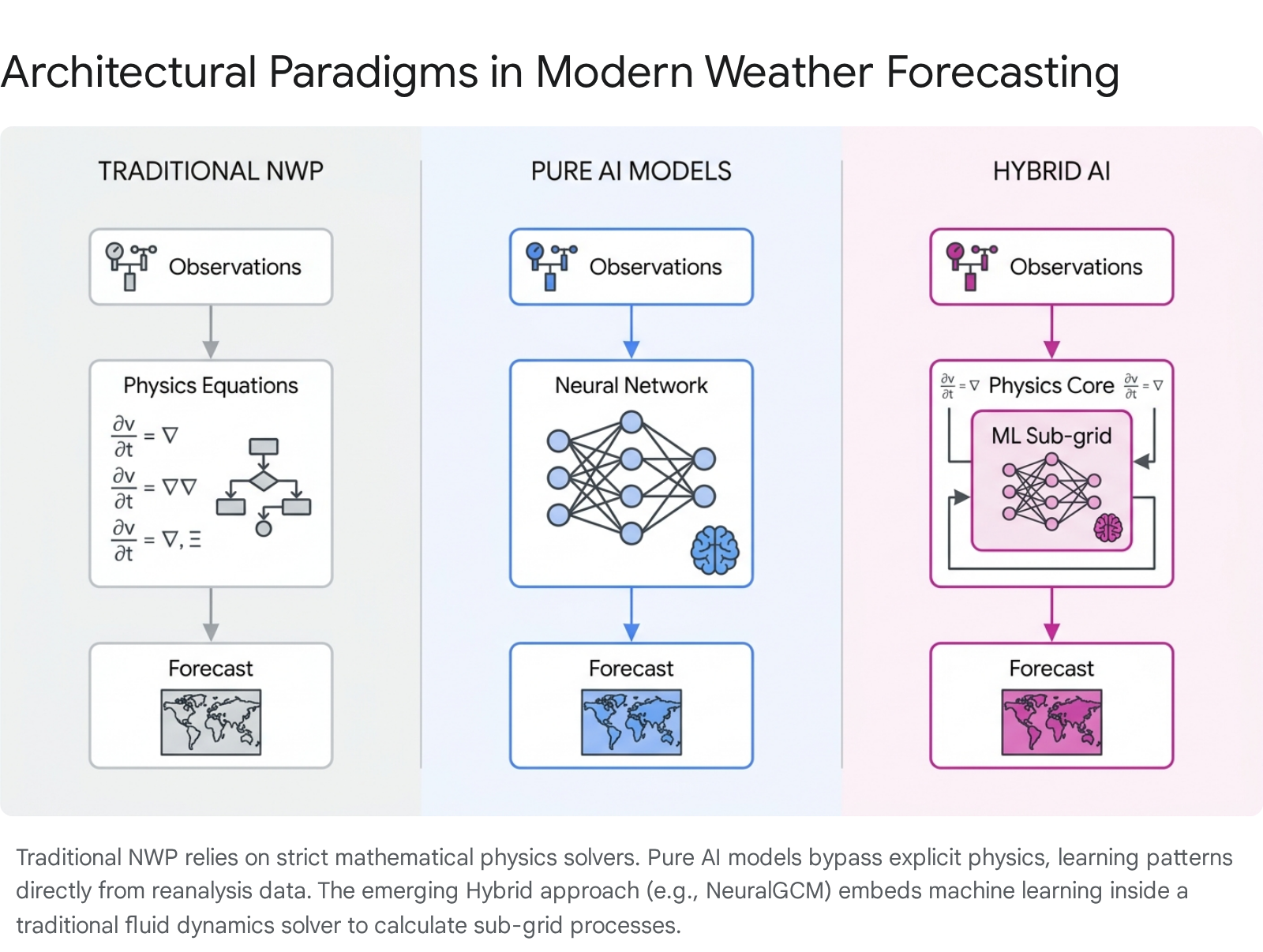

Furthermore, pure artificial intelligence models act essentially as highly sophisticated pattern-matching interpolators. While they exhibit an astonishing ability to replicate the complex interactions of atmospheric variables over time, they lack an inherent, structural understanding of physical conservation laws, such as the conservation of mass, momentum, and energy. Consequently, when pushed outside the boundaries of their training data, purely data-driven models can theoretically produce physically impossible atmospheric states. Recognizing these limitations, the true frontier of atmospheric science is not the abandonment of physics, but the development of Physics-Informed Neural Networks (PINNs) and deeply integrated hybrid models that merge differentiable mathematical solvers with machine learning parameterizations 91415.

The Asian Vanguard: High-Resolution Modeling and Architectural Innovation

Asian technology conglomerates and research institutions have aggressively pursued meteorological artificial intelligence, producing several of the most consequential foundational models in the discipline. Their approaches have largely defined the current landscape of spatial resolution scaling and multi-timescale forecasting.

Huawei's Pangu-Weather represented a watershed moment in the field. Detailed in a July 2023 publication in the journal Nature, Pangu-Weather became the first AI-based prediction model to demonstrate higher precision than traditional numerical weather forecast methods across a comprehensive suite of meteorological metrics 61011. Pangu-Weather uses a 3D Earth-Specific Transformer (3DEST) architecture, which models the atmosphere as a three-dimensional volume processed by self-attention layers 36. A critical architectural innovation was its departure from single-step autoregressive forecasting, in which the model predicts the next time step and feeds that prediction back into itself, so that errors compound with every iteration. Instead, Huawei trained separate sub-models for distinct forecast lead times - specifically 1-hour, 3-hour, 6-hour, and 24-hour intervals - and combines them during inference so that a given lead time is reached in fewer iterations, which limits error accumulation over extended periods 6. Pangu-Weather has exhibited remarkable proficiency in tracking complex cyclonic phenomena, significantly reducing tropical cyclone track errors at five-day lead times compared to the ECMWF IFS, while executing these predictions roughly 10,000 times faster than conventional ensemble models 61118. In 2024, Huawei Cloud further expanded its capabilities by launching the "Zhiji" Regional Model for Shenzhen, a localized iteration of Pangu-Weather operating at a 3-kilometer spatial resolution to provide hyper-local, five-day forecasts 1912.
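The combination of lead-time-specific sub-models can be sketched as a simple planning step. This is a minimal illustration, assuming a greedy largest-step-first rule; the names `SUB_MODEL_HOURS` and `plan_rollout` are hypothetical and are not taken from Huawei's code.

```python
from typing import List

# Lead times (in hours) of the specialised sub-models named in the text.
SUB_MODEL_HOURS = (24, 6, 3, 1)


def plan_rollout(target_hours: int) -> List[int]:
    """Cover `target_hours` using the largest available steps first.

    Assumption: a greedy rule is used to keep the number of model calls,
    and therefore the number of error-compounding iterations, small.
    """
    if target_hours <= 0:
        raise ValueError("target_hours must be positive")
    steps: List[int] = []
    remaining = target_hours
    for hours in SUB_MODEL_HOURS:
        count, remaining = divmod(remaining, hours)
        steps.extend([hours] * count)
    return steps


print(plan_rollout(56))  # [24, 24, 6, 1, 1]
```

Under this rule a 56-hour forecast needs five model calls, whereas stepping with the 1-hour model alone would need 56.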

Concurrently, the Shanghai Artificial Intelligence Laboratory, in collaboration with several prominent Chinese universities, introduced FengWu, a multimodal weather Transformer. FengWu addresses atmospheric modeling by recognizing that treating all meteorological data homogeneously is suboptimal. Instead, it treats different atmospheric variables - such as temperature, wind speed, and humidity - as distinct modalities, utilizing modality-specific encoders and decoders to capture the unique physical behaviors of each variable 37. In early 2024, the Shanghai AI Lab pushed the spatial boundaries of the field by introducing FengWu-GHR. Traditional models are strictly limited by the spatial resolution of their training data, predominantly the 0.25-degree (approximately 25 km) resolution of the ERA5 dataset. FengWu-GHR circumvents this limitation to achieve a 0.09-degree horizontal resolution, or approximately 9 kilometers 71322. It achieves this through a novel Spatial Identical Mapping Extrapolate (SIME) method and Decompositional and Combinational Transfer Learning (DCTL), allowing the model to inherit prior atmospheric knowledge from a low-resolution pre-trained meta-model and dynamically apply it to high-resolution operational analysis data 2324. Boasting over 4 billion learnable parameters, FengWu-GHR simulates kilometer-scale atmospheric dynamics, a persistent challenge for numerical methods due to prohibitive computational costs 1323.

Additionally, researchers at Fudan University introduced FuXi, a machine learning model that addresses the temporal degradation inherent in long-range forecasting through a sophisticated cascade modeling approach. Recognizing that improving long-term forecast performance via multi-step autoregressive training often degrades short-term accuracy, the FuXi architecture employs three separate models: FuXi Short (trained for timesteps 0 to 20), FuXi Medium (timesteps 21 to 40), and FuXi Long (timesteps 41 to 60) 7. These models are sequentially cascaded, starting from a pre-trained base model, effectively merging their forecasts into each other. This architectural choice allows FuXi to maintain sharper, more detailed forecasts at extended lead times, often exhibiting lower root mean square errors for variables like sea-level pressure and temperature compared to single-model autoregressive systems 7.
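The cascade hand-off can be sketched as a lookup from step index to stage, followed by a rollout loop. This is a toy sketch under stated assumptions: the stage boundaries follow the ranges quoted above, while `stage_for_step`, `cascade_rollout`, and the stand-in "models" (plain functions on a single float) are hypothetical and bear no relation to the real FuXi networks.

```python
from typing import Callable, Dict, List, Tuple


def stage_for_step(step: int) -> str:
    """Map a rollout step index to the cascade stage described in the text."""
    if not 0 <= step <= 60:
        raise ValueError("step must lie in [0, 60]")
    if step <= 20:
        return "short"
    if step <= 40:
        return "medium"
    return "long"


def cascade_rollout(
    initial_state: float,
    models: Dict[str, Callable[[float], float]],
    n_steps: int = 60,
) -> Tuple[float, List[str]]:
    """Advance the state step by step, switching model at stage boundaries."""
    state = initial_state
    used: List[str] = []
    for step in range(1, n_steps + 1):
        stage = stage_for_step(step)
        state = models[stage](state)  # output of one stage feeds the next
        used.append(stage)
    return state, used


# Stand-in models: each adds a different constant so the hand-off is visible.
toy_models = {
    "short": lambda s: s + 1.0,
    "medium": lambda s: s + 10.0,
    "long": lambda s: s + 100.0,
}
final_state, stages = cascade_rollout(0.0, toy_models)
print(final_state, stages.count("short"), stages.count("medium"), stages.count("long"))
```

The point of the design is that each stage is only ever asked to perform well over its own range of lead times, rather than one model being tuned for all of them at once.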

Western Innovations: Graph Networks, Diffusion, and Foundation Models

Parallel to the rapid developments in Asia, Western technology entities - particularly Google DeepMind, Microsoft, and NVIDIA - have pioneered alternative algorithmic architectures that have fundamentally redefined state-of-the-art forecasting capabilities.

Google DeepMind's GraphCast, introduced in late 2022 and formally published in Science in late 2023, shifted the paradigm away from standard grid-based convolutional networks or vision transformers. GraphCast models the atmosphere using Graph Neural Networks (GNNs) operating on a high-resolution, multi-scale icosahedral mesh representation of the Earth 71415. This architecture allows the model to process spatial relationships across the globe more uniformly than a latitude-longitude grid, whose cells shrink sharply toward the poles. By passing "messages" between graph nodes to simulate atmospheric dynamics, GraphCast processes data on between 13 and 37 vertical pressure levels, depending on the model variant. Evaluated against the ECMWF HRES model across 1,380 verification targets, each a combination of atmospheric variable, pressure level, and lead time, GraphCast outperformed the physics-based standard on 90% of them 61827. GraphCast iteratively predicts weather states at 6-hour increments out to 10 days, processing 227 variables per grid point in under 60 seconds on a single Tensor Processing Unit (TPU) 39. The model gained widespread operational attention in 2023 when it predicted the track and landfall of Hurricane Lee in the Atlantic several days earlier and more definitively than traditional numerical models 6.
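The polar distortion of a regular latitude-longitude grid follows directly from spherical geometry: the east-west width of a cell scales with the cosine of latitude. A minimal sketch, assuming a spherical Earth with a mean radius of 6371 km (the function name `cell_width_km` is illustrative):

```python
import math

EARTH_RADIUS_KM = 6371.0  # assumption: spherical Earth, mean radius


def cell_width_km(lat_deg: float, spacing_deg: float = 0.25) -> float:
    """East-west width of one grid cell at the given latitude."""
    return EARTH_RADIUS_KM * math.radians(spacing_deg) * math.cos(math.radians(lat_deg))


for lat in (0.0, 60.0, 85.0, 89.75):
    print(f"lat {lat:5.2f} deg: {cell_width_km(lat):7.3f} km")
```

At the equator a 0.25-degree cell is about 27.8 km wide, at 60 degrees latitude it is half that, and in the last row of cells before the pole it is only on the order of a hundred metres. A mesh with roughly uniform node spacing avoids spending most of its resolution where it is least needed.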

Building on the success of deterministic forecasting, Google DeepMind introduced GenCast in December 2024, published in the journal Nature. Recognizing that the chaotic nature of the atmosphere makes perfect deterministic forecasting mathematically impossible, meteorologists rely on probabilistic ensemble forecasts to gauge the likelihood of various weather scenarios. GenCast addresses this by utilizing a diffusion model - conceptually similar to the generative models used for high-fidelity image creation - adapted to the spherical geometry of the Earth 161718. GenCast generates an ensemble of 50 or more distinct, highly realistic 15-day weather trajectories. In comprehensive testing against the ECMWF's operational ensemble system (ENS), GenCast was more accurate on 97.2% of the tested targets, offering unprecedented reliability in predicting extreme weather events, regional atmospheric rivers, and tropical cyclone trajectories while requiring only eight minutes of computation on Cloud TPUv5 hardware 161718.
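How an ensemble turns into a probability can be shown in a few lines: the probability of an event is estimated as the fraction of members in which it occurs. The member values below are invented for illustration and are not GenCast output; `exceedance_probability` is a hypothetical helper name.

```python
from typing import Sequence


def exceedance_probability(members: Sequence[float], threshold: float) -> float:
    """Fraction of ensemble members whose value exceeds `threshold`."""
    if not members:
        raise ValueError("ensemble must contain at least one member")
    return sum(1 for value in members if value > threshold) / len(members)


# Hypothetical 10-member ensemble of peak wind speed (m/s) at one location.
wind_members = [28.0, 31.5, 35.2, 33.1, 29.9, 36.4, 30.2, 34.0, 27.5, 38.1]
prob = exceedance_probability(wind_members, threshold=33.0)
print(prob)  # 0.5
```

A single deterministic run can only say whether the threshold is crossed in that one trajectory; the ensemble attaches a likelihood to the crossing, which is what risk-based decisions need.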

Microsoft Research approached the challenge by developing ClimaX, the first true "foundation model" designed explicitly for diverse weather and climate tasks. While models like Pangu-Weather and GraphCast are highly specialized for medium-range deterministic forecasting, ClimaX aims for broader, flexible utility across the atmospheric sciences. Built on a Vision Transformer (ViT) architecture, ClimaX introduces novel variable tokenization and variable aggregation blocks 192021. Standard ViT tokenization, which divides inputs into uniform patches, struggles with the heterogeneity of climate data, where different datasets contain different sets of physical variables. Variable tokenization treats each meteorological variable as a separate modality, allowing the model to ingest datasets with differing physical groundings and spatio-temporal resolutions 192034. By pre-training on global climate datasets derived from the Coupled Model Intercomparison Project Phase 6 (CMIP6) using self-supervised learning, and subsequently fine-tuning on ERA5, ClimaX can be adapted for a multitude of downstream tasks. These include short-range weather forecasting, long-range climate projections, and the statistical downscaling of coarse climate models to higher regional resolutions, demonstrating the versatility of the foundation model paradigm in Earth sciences 193435.

NVIDIA's FourCastNet utilizes Fourier Neural Operators (FNOs), specifically the Adaptive Fourier Neural Operator (AFNO), to conduct ultra-fast global forecasts. By mapping atmospheric states into the frequency domain, FourCastNet achieves massive computational speedups for high-resolution modeling, establishing a vital baseline for rapid resolution scaling and paving the way for the generation of massive probabilistic ensembles crucial for assessing the risk of extreme events 318.

Comparative Architectural Analysis

The rapid advancement of artificial intelligence in weather forecasting has been characterized by highly divergent architectural approaches to the same physical problem. The following table synthesizes the core attributes of the leading meteorological models developed during the 2022 - 2024 innovation cycle.

| Model Name | Developer / Origin | Model Type / Architecture | Spatial Resolution | Training Data | Primary Forecasting Horizon |
|---|---|---|---|---|---|
| Pangu-Weather | Huawei Cloud (China) | 3D Earth-Specific Transformer (3DEST) | 0.25° (~25 km) globally | ECMWF ERA5 Reanalysis | Medium-range (1-10 days), Multi-timescale |
| GraphCast | Google DeepMind (UK/US) | Graph Neural Network (GNN) with Icosahedral Mesh | 0.25° (~25 km) globally | ECMWF ERA5 Reanalysis | Medium-range (up to 10 days) |
| FourCastNet | NVIDIA (US) | Fourier Neural Operator (AFNO) | 0.25° (~25 km) globally | ECMWF ERA5 Reanalysis | Short to Medium-range (up to 14 days) |
| ClimaX | Microsoft Research (US) | Vision Transformer (ViT) with Variable Tokenization | Variable (e.g., 1.4° to 5.6°) | CMIP6, ECMWF ERA5 | Flexible (Nowcasting to Climate Projections) |
| NeuralGCM | Google Research / ECMWF | Hybrid (Differentiable Dynamical Solver + ML Physics) | Variable (0.7°, 1.4°, 2.8°) | ECMWF ERA5 + Prescribed SSTs | Medium-range (1-15 days) to Long-term Climate |

The architectural divergence represented in this data highlights the experimental nature of current atmospheric algorithms. Transformers, such as those utilized in Pangu-Weather and ClimaX, excel at capturing long-range dependencies across the global grid via self-attention mechanisms, effectively treating the atmosphere as a sequence of complex volumetric images. Conversely, Graph Neural Networks, as seen in GraphCast and ECMWF's AIFS, eschew the rigid latitude-longitude grid in favor of spherical meshes, allowing for more physically uniform message passing and greater computational efficiency at the poles 6101522.

Institutional Integration and Operational Deployment

The rapid maturation and demonstrable superiority of these models forced national and international meteorological organizations to pivot their operational strategies aggressively. Recognizing the existential threat of obsolescence alongside the massive opportunity presented by machine learning, institutions have rapidly integrated data-driven methods into their forecasting chains.

The European Centre for Medium-Range Weather Forecasts (ECMWF) initiated the development of its own Artificial Intelligence Forecasting System (AIFS). Launched initially as an alpha version in late 2023 under the 'Anemoi' toolkit project, the AIFS was heavily upgraded throughout 2024, transitioning from a standard graph neural network to an attention-based variant with sliding window transformer processing 1072223. In February 2025, the ECMWF officially elevated the AIFS to operational status, making it the first fully operational, open machine learning weather prediction model run side-by-side with a traditional physics-based model by a major meteorological agency 2425. Running at a 28-kilometer grid spacing, the deterministic AIFS demonstrated clear improvements over traditional physics-based models for surface parameters like 2-meter temperature and 10-meter wind speed, and achieved up to a 20% gain in accuracy for tropical cyclone track forecasts 2425. Furthermore, ECMWF has extended the AIFS to ensemble modeling, generating 51-member probabilistic forecasts to provide a full range of possible scenarios 2324.

In parallel, the United States' NOAA deployed a new generation of artificial intelligence-driven global weather models in late 2025 under an initiative known as Project Eagle. This operational suite includes the AIGFS (Artificial Intelligence Global Forecast System) and the AIGEFS (an AI-based ensemble system), designed to augment the traditional American GFS models 262728. Notably, NOAA introduced the HGEFS (Hybrid-GEFS), a pioneering "grand ensemble" approach that dynamically combines the artificial intelligence-based ensemble with NOAA's flagship physics-based Global Ensemble Forecast System, yielding a combined forecast that consistently outperforms both the standalone artificial intelligence and physics-only systems 26. NOAA reported that a single 16-day forecast utilizing the AIGFS consumed only 0.3% of the computing resources required by the traditional operational GFS, finishing in approximately 40 minutes and drastically extending the lead time and reliability of extreme weather tracking 262728.

This operational shift has profound implications for global equity. The World Meteorological Organization (WMO) formally recognized this transition in its 2024 United in Science report, noting that artificial intelligence is revolutionizing weather forecasting by democratizing access. Operating a traditional numerical model like the ECMWF HRES requires supercomputing infrastructure costing hundreds of millions of dollars, creating an immense barrier to entry. Conversely, running inference on a pre-trained model like GraphCast or AIFS requires merely a cloud-based GPU, meaning national meteorological services in lower-income countries now have access to global medium-range forecast guidance of a quality previously restricted to wealthy nations 6293031.

The Energy Paradox: Training Costs vs. Inference Efficiency

A central narrative driving the rapid adoption of data-driven weather models is their astonishing computational efficiency. Traditional numerical weather prediction is a brute-force mathematical endeavor. Generating a 10-day high-resolution ensemble forecast requires solving complex thermodynamic and fluid dynamic equations iteratively across millions of interconnected grid points, a process that takes several hours and consumes massive amounts of continuous electricity on some of the world's largest supercomputing clusters 123.

In stark contrast, the inference phase of an artificial intelligence weather model is profoundly inexpensive and rapid. Once a model is fully trained, architectures like Pangu-Weather and GraphCast can produce a deterministic 10-day global forecast in less than a minute utilizing a single standard GPU or a single Tensor Processing Unit 12910. This represents a computational speedup factor of roughly 10,000 to 12,000 relative to traditional systems 11018.

However, isolating the inference cost presents an incomplete and potentially misleading picture of the artificial intelligence energy footprint. The energy paradox of this technology lies in the exceptionally high upfront computational cost required to generate the training data and optimize the foundational models. Training large-scale architectures is highly energy-intensive; as a baseline comparison, training a large language model such as GPT-3 consumed an estimated 1,287 megawatt-hours (MWh) of electricity 464732. While current weather models have fewer parameters than frontier language models, their training requirements remain formidable. Pangu-Weather required 16 days of continuous training across an array of 192 NVIDIA Tesla-V100 GPUs 110. GraphCast was trained for 28 days on a cluster of 32 Cloud TPU v4 devices 19. Furthermore, the underlying data used to train these models - the ERA5 reanalysis dataset - is itself the product of energy-intensive numerical physics simulations run to assimilate decades of historical observations 1.

Despite this heavy initial toll, life-cycle analyses indicate a positive environmental outcome for meteorological artificial intelligence. A 2026 study published in the journal Weather evaluated the total energy consumption and carbon footprint of data-driven forecasting models. The researchers concluded that, although the training phase is considerably more carbon-intensive than executing a single physics-based forecast, this initial carbon debt is rapidly amortized by the energy savings accrued during repeated daily inference: over a standard one-year operational cycle (running a 51-member ensemble twice daily), total energy consumption is an estimated 21x to 1,273x lower than with traditional physics-based supercomputing 495033. Consequently, the global transition to machine learning prediction brings immediate opportunities to significantly diminish the carbon footprint associated with daily meteorological operations.
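The amortization argument reduces to simple arithmetic: a one-off training cost is paid back once the cumulative per-forecast saving exceeds it. The sketch below uses purely hypothetical energy figures in arbitrary units, chosen only to make the calculation visible; they are not the values from the cited study, and the function names are illustrative.

```python
import math


def break_even_forecasts(training_energy: float,
                         nwp_per_forecast: float,
                         ml_per_forecast: float) -> int:
    """Number of forecasts after which training energy has been paid back."""
    saving = nwp_per_forecast - ml_per_forecast
    if saving <= 0:
        raise ValueError("ML inference must be cheaper than the NWP run")
    return math.ceil(training_energy / saving)


def annual_energy_ratio(training_energy: float,
                        nwp_per_forecast: float,
                        ml_per_forecast: float,
                        forecasts_per_year: int = 730) -> float:
    """NWP energy divided by ML energy (training included) over one year."""
    nwp_total = nwp_per_forecast * forecasts_per_year
    ml_total = training_energy + ml_per_forecast * forecasts_per_year
    return nwp_total / ml_total


# Hypothetical figures in arbitrary energy units (NOT measured values).
TRAINING, NWP_RUN, ML_RUN = 10_000.0, 100.0, 0.5

print(break_even_forecasts(TRAINING, NWP_RUN, ML_RUN))  # 101
print(round(annual_energy_ratio(TRAINING, NWP_RUN, ML_RUN), 2))
```

With twice-daily forecasts (730 per year), break-even in this toy case arrives after about seven weeks. The ratio is sensitive to how training energy compares with per-forecast cost, which is one plausible reason a reported range can span two orders of magnitude.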

Forecasting Horizons: Medium-Range Determinism vs. Decadal Climate Projection

To properly contextualize the utility of these new models, it is crucial to clarify the technical and scientific distinction between their proven success in short-to-medium-range weather forecasting and their current, profound struggles with long-term climate projection.

Weather forecasting is fundamentally an "initial value problem." If the current state of the atmosphere is known with high precision, the physical equations - or a well-trained neural network acting as a surrogate - can predict how that state will evolve over the next few days or weeks. Data-driven models have proven unequivocally successful in this domain, typically outperforming numerical models out to roughly 15 days 459.

Climate projection, conversely, is a "boundary value problem." Predicting the climate decades into the future does not depend on knowing today's exact weather patterns; it depends on understanding how the entire Earth system will respond over extended periods to shifting boundary conditions. These boundaries include slowly warming ocean temperatures, changing sea ice concentrations, alterations in land use, and escalating atmospheric greenhouse gas concentrations 195234.

Purely data-driven autoregressive models struggle profoundly with long-term climate simulations 915. When autoregressive algorithms run iteratively for thousands of steps to simulate multi-decadal timeframes, small interpolation errors at each timestep rapidly accumulate. This causes the models to experience severe "climate drift," eventually producing atmospheric states that are physically impossible or completely detached from reality 15. Furthermore, because they are trained strictly on historical reanalysis data, pure artificial intelligence models cannot extrapolate to future climate states driven by unprecedented carbon dioxide levels that do not exist anywhere within their training distribution 919.
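The accumulation of small per-step errors can be illustrated with a deliberately simple toy. Assume the true global-mean temperature stays constant and a surrogate model adds a bias of one thousandth of a kelvin at every 6-hour step. All values and names here are hypothetical; real model errors are neither constant nor scalar.

```python
from typing import Callable

STEPS_PER_DAY = 4  # assumption: 6-hour time steps


def rollout(x0: float, step_fn: Callable[[float], float], n_steps: int) -> float:
    """Apply `step_fn` repeatedly, feeding each output back in as input."""
    x = x0
    for _ in range(n_steps):
        x = step_fn(x)
    return x


def biased_step(temp_k: float) -> float:
    """Toy surrogate: the true system is stationary, the model adds 0.001 K."""
    return temp_k + 1e-3


start = 288.0  # kelvin
drift_10_days = rollout(start, biased_step, 10 * STEPS_PER_DAY) - start
drift_40_years = rollout(start, biased_step, 40 * 365 * STEPS_PER_DAY) - start
print(f"{drift_10_days:.2f} K after 10 days, {drift_40_years:.1f} K after 40 years")
```

A bias that is invisible at weather timescales (0.04 K after 10 days) becomes tens of kelvin over a multi-decadal run of 58,400 steps, which is why stability matters more than single-step accuracy for climate simulation.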

Addressing this limitation highlights the critical necessity of hybrid modeling. NeuralGCM, developed by Google Research in partnership with ECMWF and published in Nature in 2024, currently represents the most viable path forward for artificial intelligence in climate modeling 91535. NeuralGCM is the first fully differentiable hybrid general circulation model 1415. Rather than replacing the physics engine entirely, NeuralGCM retains a traditional, differentiable numerical solver to calculate large-scale fluid dynamics and atmospheric circulation. It then integrates machine learning components specifically to parameterize complex, small-scale, sub-grid physical processes - such as cloud formation, precipitation, and radiation - which have historically been the greatest source of error and computational drag in traditional GCMs 91415.

Because the physical solver is entirely differentiable, the machine learning components can be trained "online" directly alongside the physics equations, optimizing the entire system end-to-end 1415. This hybrid approach ensures that the model strictly obeys the fundamental laws of thermodynamics while leveraging the speed and pattern-recognition capabilities of neural networks for micro-physics. When forced with prescribed historical sea surface temperatures, NeuralGCM has successfully generated highly stable climate simulations over 40-year periods, accurately tracking emergent climate phenomena, seasonal cycles, and realistic frequencies of tropical cyclones with significantly lower bias than traditional atmosphere-only models 914153536. A subsequent 2026 study in Science Advances demonstrated that by training NeuralGCM's parameterizations on satellite-based precipitation observations (IMERG), the hybrid model substantially surpassed traditional GCMs in accurately simulating the mean state, diurnal cycles, and extremes of global precipitation 3437.
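The division of labour in a hybrid model can be sketched as a time step whose tendency is the sum of a resolved-dynamics term and a learned sub-grid term. This is a structural illustration only, on a single scalar state with stand-in functions; it is not NeuralGCM's code, every name is hypothetical, and plain Python carries no gradients, so the end-to-end training described above would require an automatic-differentiation framework.

```python
from typing import Callable

Tendency = Callable[[float], float]


def hybrid_step(state: float, dt: float,
                dynamics_tendency: Tendency,
                learned_tendency: Tendency) -> float:
    """One explicit Euler step: physics solver plus learned correction."""
    return state + dt * (dynamics_tendency(state) + learned_tendency(state))


def toy_dynamics(temp_k: float) -> float:
    """Stand-in for the dynamical core: relax toward 290 K."""
    return -0.1 * (temp_k - 290.0)


def toy_learned_physics(temp_k: float) -> float:
    """Stand-in for a neural parameterization of sub-grid processes."""
    return 0.5


next_state = hybrid_step(280.0, 1.0, toy_dynamics, toy_learned_physics)
print(next_state)
```

Because the learned term only adds a correction to a state that the solver is already advancing, the network does not have to rediscover large-scale circulation from data.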

Limitations, Data Scarcity, and the Extreme Value Bottleneck

As the operationalization of machine learning weather prediction accelerates, significant bottlenecks and systemic risks must be addressed by the scientific community. Chief among these is the vulnerability of data-driven models to "out-of-distribution" events - specifically, the occurrence of unprecedented extreme weather in a rapidly warming global climate.

Machine learning algorithms interpolate well within the manifold of their training data but extrapolate poorly beyond it. Almost all modern data-driven weather models are trained exclusively on the ERA5 reanalysis dataset, typically using the period from roughly 1979 to the present day 46913. Consequently, these models have only learned the atmospheric dynamics of the past four decades. As anthropogenic climate change accelerates, the Earth's atmosphere is moving into energetic states entirely absent from the historical record 2957. If a region experiences an unprecedented 5-sigma heat dome or a rapid intensification of a hurricane driven by record-high ocean heat content, a model trained entirely on ERA5 may fail to accurately predict the severity of the event, pulling its forecast back toward the historical mean it was trained to recognize 3057.

This limitation is exacerbated by the way the models are trained. Most data-driven weather models are trained using Mean Squared Error (MSE) loss functions. From the perspective of extreme value theory this is problematic: MSE penalizes large deviations heavily, and the forecast that minimizes expected MSE is the mean of the possible outcomes, so the loss inherently rewards models that predict the "average" outcome when faced with high uncertainty 5859. This symmetric optimization leads to biased, overly smooth predictions that chronically underestimate the peaks of extreme, low-probability events, particularly at longer forecast lead times where uncertainty is highest 5860.
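The smoothing effect of MSE can be checked numerically. In the toy case below, nine out of ten equally likely outcomes are a calm 10 mm of rainfall and one is an extreme 100 mm. A brute-force search over candidate forecasts finds the single value with the lowest MSE. The scenario and all names are hypothetical.

```python
from statistics import mean
from typing import Sequence

# Hypothetical equally likely outcomes (mm of rain): nine calm, one extreme.
outcomes = [10.0] * 9 + [100.0]


def mse(prediction: float, observed: Sequence[float]) -> float:
    """Mean squared error of one fixed prediction against all outcomes."""
    return sum((prediction - y) ** 2 for y in observed) / len(observed)


# Candidate forecasts from 0.0 to 100.0 mm in steps of 0.1 mm.
candidates = [c / 10 for c in range(0, 1001)]
best = min(candidates, key=lambda c: mse(c, outcomes))

print(best, mean(outcomes))  # 19.0 19.0
```

The MSE-optimal forecast coincides with the mean, 19 mm: a value that never actually occurs and that understates the extreme by a factor of more than five.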

To mitigate this critical structural flaw, researchers have begun developing specialized extreme-value augmentation modules. For instance, in 2024, researchers introduced ExtremeCast, an advanced global weather forecast model that abandons symmetric MSE in favor of "Exloss" - an asymmetric loss function explicitly designed to penalize the underestimation of extreme values 5859. Coupled with an "ExBooster" module that models atmospheric uncertainty through multiple random samplings without requiring retraining, ExtremeCast significantly increases the Symmetric Extremal Dependency Index (SEDI) hit rate for predicting low-probability severe weather disasters, without degrading the model's overall medium-range root mean square error 585961. Innovations like the asymmetric optimization of ExtremeCast, alongside the probabilistic diffusion capabilities of models like GenCast, represent essential algorithmic interventions to ensure that artificial intelligence does not blindly smooth over the deadly realities of an increasingly volatile climate 1859.
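The idea behind an asymmetric loss can be illustrated with a generic weighted squared error that charges more for under-prediction than for over-prediction. This is explicitly not the published Exloss formulation, whose details are not given here. The weight of 9 and the toy outcomes are assumptions chosen for clarity.

```python
from typing import Sequence

# Hypothetical equally likely outcomes (mm of rain): nine calm, one extreme.
outcomes = [10.0] * 9 + [100.0]


def asymmetric_loss(prediction: float, observed: Sequence[float],
                    under_weight: float) -> float:
    """Squared error with under-predictions multiplied by `under_weight`."""
    total = 0.0
    for y in observed:
        error = prediction - y
        weight = under_weight if error < 0 else 1.0
        total += weight * error * error
    return total / len(observed)


candidates = [c / 10 for c in range(0, 1001)]  # 0.0 .. 100.0 mm
best_symmetric = min(candidates, key=lambda c: asymmetric_loss(c, outcomes, 1.0))
best_asymmetric = min(candidates, key=lambda c: asymmetric_loss(c, outcomes, 9.0))

print(best_symmetric, best_asymmetric)  # 19.0 55.0
```

With equal weights the optimum sits at the mean (19 mm). Penalizing underestimation nine times more heavily moves it to 55 mm, closer to the extreme, at the cost of over-predicting the calm cases. That trade-off is what any such loss has to manage.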

Conclusion: Synthesizing the Future of Atmospheric Computing

The technological leap observed between 2023 and 2024 represents one of the most rapid and consequential scientific advancements in the history of meteorology. Global innovation - ranging from Huawei's high-speed Pangu-Weather and the Shanghai AI Lab's high-resolution FengWu-GHR to Western counterparts such as Google DeepMind's GraphCast and GenCast and Microsoft's ClimaX - has demonstrated that data-driven machine learning can map the chaotic geometry of the Earth's atmosphere faster, and frequently more accurately, than brute-force physical computation.

However, fully embracing this operational revolution requires intellectual rigor and scientific nuance. Artificial intelligence is not rendering atmospheric physics obsolete; it is building on it. The reliance of neural networks on physics-driven reanalysis data, combined with the inability of purely data-driven models to run stable multi-decadal simulations without drift or to extrapolate beyond their training distribution, points toward a hybrid future. Architectures like NeuralGCM, which embed machine learning parameterizations within differentiable, physics-based fluid dynamic solvers, offer the most promising path forward: keeping the large-scale dynamics tied to the governing physical equations while gaining substantial computational speed and efficiency.

As national meteorological agencies and institutions like the ECMWF and NOAA aggressively transition these systems into daily operations, the focus must shift from simply maximizing standard benchmark scores to ensuring systemic resilience. The democratization of forecasting enabled by low-cost artificial intelligence inference holds massive potential for climate adaptation and disaster preparedness, particularly for under-resourced nations in the Global South. Yet, this life-saving potential will only be realized if the scientific community actively combats the data scarcity bottleneck and the inherent risk of MSE-driven extreme value suppression. Preparing for an increasingly volatile world requires atmospheric models capable of anticipating unprecedented climatic phenomena, ensuring that as our computational power scales, our understanding of an unpredictable Earth scales safely alongside it.