Longitudinal impacts of language model assistance on writing skill

The integration of Large Language Models (LLMs) into routine cognitive tasks has precipitated a fundamental shift in how people write, synthesize information, and develop linguistic proficiency. Initial empirical analyses, published soon after generative artificial intelligence became widely available, focused largely on short-term productivity gains, documenting significant reductions in task completion time and increases in baseline output quality for mid-level professionals 123. As the technology has matured, however, longitudinal studies from late 2024 through early 2026 have begun to isolate the delayed, secondary effects of reliance on artificial intelligence on human cognitive architecture, intrinsic skill retention, and semantic diversity 445.

Early longitudinal data reveal a complex dichotomy. When used as tightly controlled pedagogical scaffolds, such as structured outliners or targeted feedback mechanisms, LLMs can augment human cognitive capacity and accelerate specific learning outcomes 76. Conversely, when they are used as unconstrained generation engines or automated ghostwriters, prolonged reliance on LLMs is associated with measurable deficits in neurophysiological engagement, short-term memory recall, stylistic originality, and authorial voice 497. An emerging consensus across cognitive neuroscience, computational linguistics, and educational technology holds that the locus of human agency within the human-machine interaction determines whether the technology acts as an epistemic amplifier or a vector for cognitive atrophy 812.

Short-Term Productivity Enhancements and Baseline Assessments

Before evaluating longitudinal deficits, it is necessary to establish the short-term productivity effects that drive LLM adoption. Experimental evidence from 2023 demonstrated large efficiency gains in standardized writing tasks. In a preregistered online experiment, 453 college-educated professionals completed occupation-specific, incentivized writing tasks, and those randomly given access to ChatGPT reduced average completion time by 40% while raising output quality by 18% 23.

The data also indicated a compression of productivity inequality among workers, as participants with weaker baseline writing skills benefited most from the assistive technology 12. These immediate productivity gains produced a strong retention effect: workers exposed to the language model during the experiment were twice as likely to report using it in their actual jobs two weeks later, and 1.6 times as likely after two months 39. A similar randomized experiment with 758 management consultants found that those equipped with GPT-4 completed tasks within the model's capabilities significantly faster and at higher quality than unassisted peers 1.

While these metrics quantify the immediate utility of generative text models, they do not measure the user's latent cognitive processes. Producing a high-quality document quickly does not guarantee that the user has internalized the knowledge or developed independent writing proficiency; measuring those outcomes requires neurophysiological and longitudinal methods 514.

Neurophysiological Alterations and Cognitive Under-Engagement

The most granular data on how language models affect the biological processes of writing come from real-time neurophysiological monitoring. A widely cited 2025 longitudinal study by the MIT Media Lab, titled "Your Brain on ChatGPT," used electroencephalography (EEG) to track the brain activity of 54 adult participants engaged in repeated essay-writing tasks over a four-month period 41510. Participants were divided into three cohorts: a "Brain-only" control group without digital tools, a "Search Engine" group using traditional internet searches, and an "LLM" group using ChatGPT 1511.

Dynamic Directed Transfer Function and Frequency Band Suppression

The EEG data showed an inverse relationship between the degree of external technological support and the intensity of internal neural engagement. The Brain-only group exhibited the strongest and most widely distributed neural networks during writing, consistent with high cognitive load, memory retrieval, and executive function 412. The LLM-assisted group, in contrast, displayed the weakest engagement. The researchers measured the Dynamic Directed Transfer Function (dDTF), a measure of the strength of directed information flow from one brain region to another. Participants using LLMs exhibited up to 55% lower dDTF connectivity while writing than those without access to generative models 41513.
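Directed-transfer measures are read off a multivariate autoregressive (VAR) model fitted to the EEG channels, x_t = Σ_l A_l x_{t-l} + e_t, whose transfer matrix is H(f) = A(f)^(-1). The dDTF used in the study additionally multiplies a full-frequency-normalized DTF by partial coherence so that only direct, not cascaded, flows remain. The sketch below computes only the simpler normalized DTF from already-fitted coefficients, to show how "directed flow" is extracted; the function name, array layout, and example coefficients are assumptions for illustration, and this is not the MIT study's analysis pipeline.

```python
import numpy as np


def normalized_dtf(coefs: np.ndarray, freqs: np.ndarray, fs: float) -> np.ndarray:
    """Normalized Directed Transfer Function for a fitted VAR model.

    coefs: shape (p, k, k); coefs[l] is the lag-(l+1) matrix in
           x_t = sum_l A_l x_{t-l} + e_t.
    freqs: frequencies (Hz) at which to evaluate the transfer matrix.
    fs:    sampling rate (Hz).
    Returns shape (len(freqs), k, k); entry [n, i, j] is the share of the
    inflow to channel i at freqs[n] that originates from channel j.
    """
    p, k, _ = coefs.shape
    out = np.empty((len(freqs), k, k))
    for n, f in enumerate(freqs):
        # A(f) = I - sum_l A_l * exp(-2*pi*i*f*l/fs)
        a_f = np.eye(k, dtype=complex)
        for lag in range(1, p + 1):
            a_f -= coefs[lag - 1] * np.exp(-2j * np.pi * f * lag / fs)
        h = np.linalg.inv(a_f)  # transfer matrix H(f)
        power = np.abs(h) ** 2
        # Normalize each row so inflows to channel i sum to 1.
        out[n] = power / power.sum(axis=1, keepdims=True)
    return out


# Hypothetical two-channel VAR(1): channel 0 drives channel 1, not vice versa.
coefs = np.array([[[0.5, 0.0],
                   [0.4, 0.5]]])
gamma = normalized_dtf(coefs, freqs=np.array([2.0, 10.0]), fs=128.0)
print(gamma[:, 1, 0])  # flow 0 -> 1: positive
print(gamma[:, 0, 1])  # flow 1 -> 0: zero up to rounding
```

Because the lag matrix is lower triangular, H(f) is too, so no information flows from channel 1 back to channel 0 at any frequency; asymmetries of this kind are what directed connectivity measures are designed to expose.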

Activity in specific frequency bands also differed markedly. Frontal-midline theta waves, which are strongly associated with focused attention and cognitive effort, were prominent in the Brain-only group but weak or entirely absent in the LLM cohort 4.

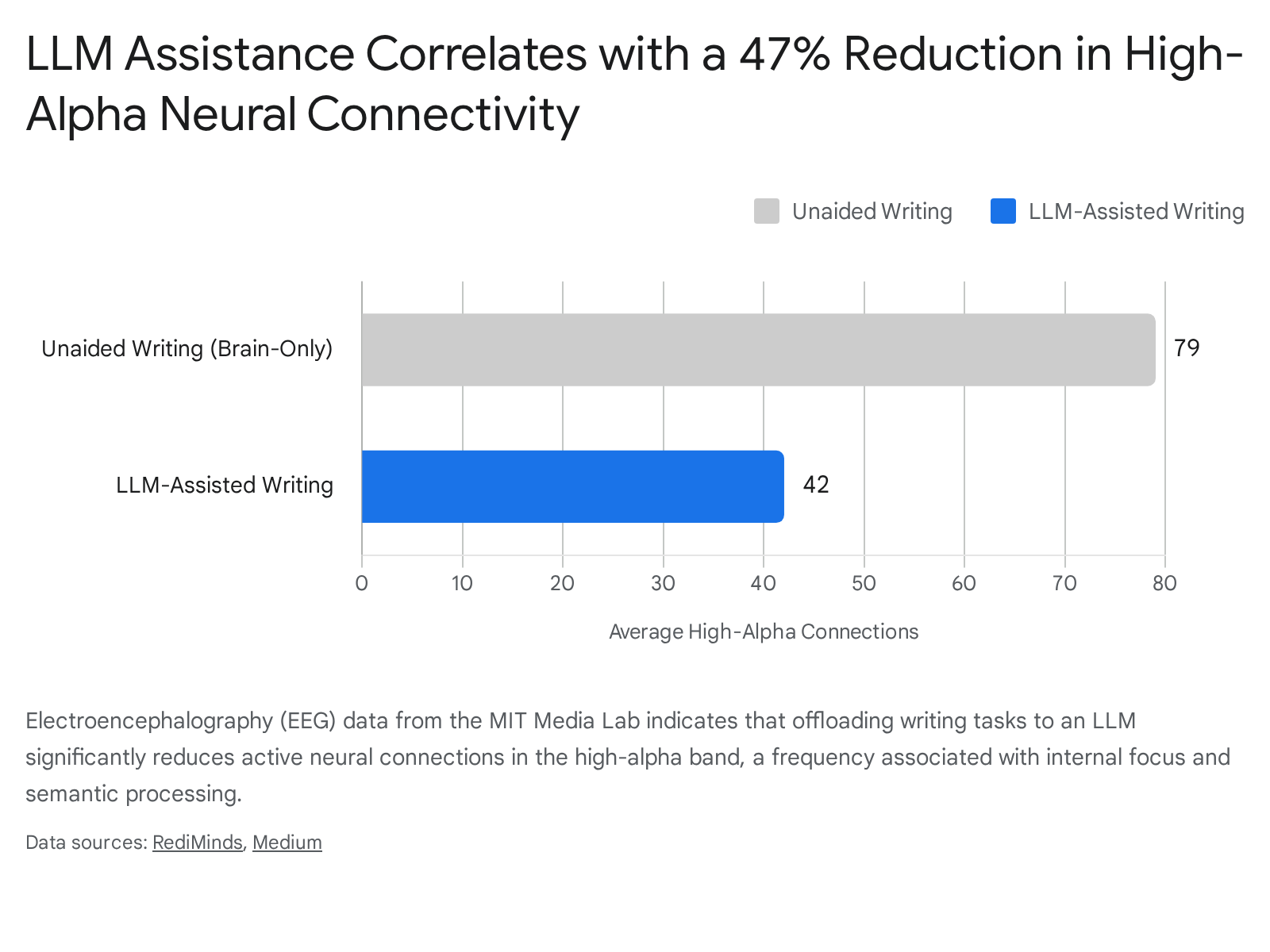

The reduction in high-alpha band connectivity is equally notable. Alpha activity is associated with internal focus and semantic processing. Participants writing independently averaged approximately 79 effective neural connections in this band, whereas those using ChatGPT averaged only 42, a 47% reduction in active neural coupling 47.
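The 47% figure follows directly from the two reported averages:

$$
\frac{79 - 42}{79} = \frac{37}{79} \approx 0.468 \approx 47\%
$$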

The researchers labeled this neurophysiological under-engagement as "cognitive debt," representing a deferred educational penalty wherein the brain fails to encode the rigorous schemas traditionally formed through unassisted writing 15.

Memory Consolidation Deficits and the Amnesia Effect

The reduction in real-time neural engagement has a measurable downstream effect on short-term memory consolidation and sense of ownership. The MIT Media Lab study included behavioral recall tests administered minutes after participants submitted their essays 715. The results revealed a marked recall deficit among LLM users: 83.3% of the participants in the LLM cohort were unable to accurately recall or quote specific phrases from the text they had just ostensibly authored 4715, and no participant in the LLM group could produce a fully correct quote without referring to the text 7.

By comparison, only 11.1% of the Brain-only and Search Engine groups experienced similar recall failures 713. This contrast suggests that delegating the active generation of prose to an external model short-circuits the mental encoding that occurs during drafting and recursive reading 47. Participants in the LLM group also reported a fragmented sense of authorship, with many expressing low perceived ownership of the final text, while independent writers reported strong ownership 1114.
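Because the cohorts are small, it helps to translate these percentages back into head counts. The sketch below assumes the 54 participants were split evenly, 18 per cohort (an assumption of this example), and searches for the counts consistent with each one-decimal percentage; under that assumption 83.3% corresponds to 15 participants and 11.1% to 2.

```python
def counts_matching(pct: float, group_size: int, tol: float = 0.05) -> list[int]:
    """Participant counts k (0..group_size) whose share k/group_size, as a
    percentage, lies within `tol` of the reported one-decimal value `pct`."""
    return [k for k in range(group_size + 1)
            if abs(100 * k / group_size - pct) < tol]


GROUP_SIZE = 54 // 3  # assumption: 54 participants split evenly into three cohorts
print(counts_matching(83.3, GROUP_SIZE))  # [15]  LLM cohort recall failures
print(counts_matching(11.1, GROUP_SIZE))  # [2]   comparison-group recall failures
```

The contrast between 15 and 2 participants is striking, but with groups this size a handful of individuals moves the percentages substantially, which is one reason the results call for replication.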

Re-engagement Dynamics and Transition Metrics

To test the persistence of these cognitive alterations, the researchers conducted a fourth cross-over session four months into the study. Eighteen participants were swapped: those who had exclusively used LLMs were asked to write unaided (LLM-to-Brain), while the control group was given access to the LLM (Brain-to-LLM) 1021.

The transition metrics suggest that cognitive under-engagement has lingering effects. The LLM-to-Brain cohort showed continued suppression of alpha and beta connectivity and did not reach the baseline neural activity levels of novice unaided writers 71221. Their unaided writing also closely mirrored the vocabulary and n-gram patterns previously generated by the language model, suggesting that these participants had begun to internalize the machine's statistical linguistic biases rather than develop an independent voice 1021.

Conversely, the Brain-to-LLM group exhibited a network-wide spike in directed neural connectivity across multiple frequency bands 1021. Because these individuals had already established deep cognitive schemas regarding the subject matter over three prior unaided sessions, the late introduction of the language model acted as an integration tool. They utilized the model to cross-examine ideas rather than generate them wholesale, heavily engaging occipito-parietal and prefrontal brain regions 1215. This empirical observation supports the pedagogical principle that foundational cognitive friction is a prerequisite for utilizing artificial intelligence as an advanced cognitive amplifier without suffering skill atrophy 1314.

While these physiological metrics are compelling, they warrant calibrated uncertainty: only 18 participants completed the fourth, longitudinal session, and further peer-reviewed replication across diverse demographics, including studies using functional magnetic resonance imaging (fMRI), is required before permanent cognitive alterations can be claimed 1516.

Stylometric Homogenization and Semantic Drift

Beyond individual neurophysiology, the widespread use of language models systematically alters the linguistic character, stylistic variety, and underlying semantic meaning of human text at a macroscopic level. A comprehensive 2026 analysis titled "How LLMs Distort Our Written Language" quantified the lexical and stylistic shifts induced by artificial intelligence intervention across millions of documents 917. The researchers utilized latent space embeddings, natural language processing audits, and randomized controlled user studies to track semantic entropy 92125.

Inadvertent Alteration of Intended Meaning

A prevailing assumption among novice users is that language models operate as neutral editors, correcting syntax and grammar without altering substantive meaning. Empirical counterfactual analyses refute this premise. Utilizing a dataset of human-written essays collected in 2021 - prior to the widespread adoption of modern generative models - researchers studied how asking a model to revise an essay based strictly on expert human feedback altered the text 1819. The data demonstrated that even when models are explicitly prompted to "only make grammar edits," they consistently change the text in ways that significantly alter the original semantic meaning 1819.

This distortion occurs because current generative architectures draw no firm boundary between satisfying a user's prompt and reshaping the text toward the statistical center of their training distribution 20. Language models exert a consistent, directional pull toward a highly polished, standardized, and emotionally compressed register 21. The semantic shifts from human originals to revised outputs are not only statistically significant but large in magnitude: certain grading-bias metrics evaluating language alterations yielded a Cohen's d effect size of up to 4.25, indicating a sweeping change in text character 1821.
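Cohen's d expresses a difference in means in units of standard deviation, which is what makes a value of 4.25 so striking. A minimal sketch of the independent-samples form with a pooled standard deviation follows; the sample values are illustrative, and a paired design (the same essay before and after revision) might instead standardize by the standard deviation of the differences.

```python
import math
from statistics import mean, variance


def cohens_d(a: list[float], b: list[float]) -> float:
    """Cohen's d for two independent samples (positive when b's mean is higher),
    standardized by the pooled standard deviation."""
    na, nb = len(a), len(b)
    # statistics.variance uses the n - 1 (sample) denominator.
    pooled_var = ((na - 1) * variance(a) + (nb - 1) * variance(b)) / (na + nb - 2)
    return (mean(b) - mean(a)) / math.sqrt(pooled_var)


original_scores = [1.0, 2.0, 3.0]  # illustrative values only
revised_scores = [3.0, 4.0, 5.0]
print(cohens_d(original_scores, revised_scores))  # 2.0
```

By Cohen's conventional benchmarks (about 0.2 small, 0.5 medium, 0.8 large), d = 4.25 lies far beyond "large", whereas the skill-transfer effects of roughly d = 0.37 to 0.38 reported for AI-assisted practice fall in the small-to-medium range.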

The Erasure of Authorial Voice and Cultural Nuance

The stylometric homogenization imposed by generative models operates on multiple measurable linguistic axes. The data shows a uniform decrease in first-person pronouns and causal connectives, alongside an artificial inflation of vocabulary diversity and abstraction 21.

The primary directional shifts in writing mechanics observed when texts undergo language model processing are detailed in the comparative analysis below:

| Linguistic Feature | Human Baseline Tendency | LLM Revision Direction | Interpretive Consequence |
|---|---|---|---|
| First-Person Pronouns | Frequent usage to establish subjective perspective. | Decrease 21 | Diminishes personal authorial voice and removes the writer from the text. |
| Argumentative Stance | Declarative, varied, and occasionally polarized. | Neutralize (70% increase) 919 | Flattens arguments to avoid controversy; compresses the rhetorical stance. |
| Eventive Markers | Action-oriented, situated verbs grounded in time. | Decrease 21 | Shifts text from an active, embedded narrative to a detached, retrospective summary. |
| Causal Connectives | Step-by-step explicit logical reasoning markers. | Decrease 21 | Jumps directly to conclusions, resulting in compressed, abstract interpretation. |
| Lexical Preferences | Diverse, idiomatic, and culturally nuanced vocabulary. | Converge (e.g., "crucial", "significant") 2030 | High within-group homogeneity; diminishes cultural stylistic variations and enforces a Western, corporate style 2131. |
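Two of the axes in the table, first-person pronouns and causal connectives, can be approximated with simple frequency counts. The sketch below is a minimal illustration under stated assumptions: the word lists are hypothetical and far smaller than a validated lexicon, the tokenizer is crude, and rates are normalized per 1,000 tokens so texts of different lengths can be compared. It is not the audit method of the 2026 analysis.

```python
import re
from collections import Counter

# Hypothetical, deliberately tiny word lists; a real audit would use validated lexicons.
FIRST_PERSON = {"i", "me", "my", "mine", "myself", "we", "us", "our", "ours", "ourselves"}
CAUSAL_CONNECTIVES = {"because", "therefore", "thus", "hence", "consequently"}


def feature_rates(text: str) -> dict[str, float]:
    """Occurrences per 1,000 word tokens of first-person pronouns and causal connectives."""
    tokens = re.findall(r"[a-z]+", text.lower())  # crude tokenizer: runs of letters
    if not tokens:
        return {"first_person": 0.0, "causal": 0.0}
    counts = Counter(tokens)
    per_thousand = 1000 / len(tokens)
    return {
        "first_person": per_thousand * sum(counts[w] for w in FIRST_PERSON),
        "causal": per_thousand * sum(counts[w] for w in CAUSAL_CONNECTIVES),
    }


original = "I left early because I was tired."
revised = "The early departure reflected fatigue."
print(feature_rates(original))
print(feature_rates(revised))  # both rates drop to 0.0
```

The toy revision shows the pattern in the table in miniature: the writer disappears from the sentence and the explicit "because" is replaced by a nominalized summary.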

Furthermore, the models actively penalize writing that deviates from their standardized normative center. Audits reveal that when acting as evaluators, models penalize informal language heavily - deducting an average of 1.90 points on a 10-point scale for informal phrasing, and 1.35 points for non-native phrasing 18.
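Deductions like these come from a counterfactual audit design: the evaluator scores two versions of the same essay that differ only in register, so any gap is attributable to phrasing rather than content. A minimal sketch, assuming a caller-supplied `score` function that stands in for the model-as-grader under audit; the function names and the toy grader are hypothetical, and no particular model API is implied.

```python
from statistics import mean
from typing import Callable


def mean_penalty(pairs: list[tuple[str, str]], score: Callable[[str], float]) -> float:
    """Average score drop from a standard version of an essay to a variant that
    differs only in register (e.g. informal or non-native phrasing)."""
    return mean(score(standard) - score(variant) for standard, variant in pairs)


# Hypothetical toy grader that dislikes one informal marker, for demonstration only.
def toy_grader(text: str) -> float:
    return 6.0 if "gonna" in text else 8.0


pairs = [
    ("I am going to argue that the policy fails.", "I'm gonna argue that the policy fails."),
    ("This policy is unfair.", "This policy is unfair."),  # identical pair acts as a control
]
print(mean_penalty(pairs, toy_grader))  # 1.0
```

Holding content fixed is the key design choice: without matched pairs, a lower score for informal essays could simply reflect weaker arguments.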

This convergence toward a statistical median has profound implications for qualitative fields and digital humanities 21. When mapped using t-SNE projections, sentence embeddings for AI-assisted essays collapse into a tightly clustered region of semantic space, whereas human-written essays exhibit wide stylistic divergence and distance 921. Heavy users of these models self-report that their generated writing feels "less creative and not in their voice," yet they continue to utilize the outputs due to the perceived authority of the machine and the frictionless speed of generation 919.
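t-SNE is a visualization method, and apparent cluster sizes and distances in its projections are not reliable measures of spread, so a quantitative check of homogenization would compare dispersion in the original embedding space. The sketch below computes the mean pairwise cosine distance within a set of embedding vectors. It assumes the embeddings were already produced by some sentence-embedding model (not specified here) and is an illustrative alternative, not the metric used in the cited analysis.

```python
import numpy as np


def mean_pairwise_cosine_distance(embeddings: np.ndarray) -> float:
    """Average cosine distance (1 - cosine similarity) over all distinct pairs of rows.

    Lower values mean the texts sit closer together in embedding space,
    i.e. a more homogeneous set. Requires at least two rows.
    """
    unit = embeddings / np.linalg.norm(embeddings, axis=1, keepdims=True)
    sims = unit @ unit.T
    upper = np.triu_indices(len(unit), k=1)  # each pair (i, j) with i < j, once
    return float(np.mean(1.0 - sims[upper]))


# Hypothetical usage: compare a human-written set against an AI-assisted set.
# human_vecs and assisted_vecs would be (n_texts, dim) arrays from the same encoder.
# print(mean_pairwise_cosine_distance(human_vecs), mean_pairwise_cosine_distance(assisted_vecs))
```

A lower value for the AI-assisted set than for the human set would be the numerical counterpart of the tight cluster described above.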

In academic and peer-review environments, this homogenization threatens rigorous evaluation. An examination of text generated in the wild revealed that 21% of scientific peer reviews at a top artificial intelligence conference were AI-generated 1819. These generated reviews placed significantly less weight on the actual clarity and significance of the research, instead defaulting to generic, structurally perfect praise that artificially inflated peer review scores by an average of a full point 1819.

The Epistemic Atrophy Paradigm Versus Pedagogical Scaffolding

The longitudinal data require reconciling two seemingly contradictory phenomena: workplace and experimental studies showing that artificial intelligence substantially enhances short-term task performance 12, and physiological studies suggesting that long-term reliance degrades fundamental cognitive capacity 532. The paradox resolves once one examines the precise role the artificial intelligence plays within the sequential workflow of writing.

The Cognitive Offloading Ladder

The interaction between human writers and language models can be conceptualized through the "Cognitive Offloading Ladder," a theoretical framework that categorizes how the use of automated tools shifts from cognitive support to cognitive substitution 8. This framework highlights the systemic risk of epistemic atrophy - defined as the measurable decline in independent schema construction when internal cognitive processes are outsourced to a machine 8.

| Ladder Tier | Human-AI Relationship | Description of Workflow | Risk of Epistemic Atrophy |
|---|---|---|---|
| Tier 1: AI as Editor (Technical Scaffolding) | Human Agency High | The human generates the ideas, structure, and rough draft. The AI is utilized late in the process to correct grammar, refine syntax, or expose logical gaps 33. | Low. Frees cognitive bandwidth for higher-order argumentation while maintaining core critical thinking 822. |
| Tier 2: AI as Co-Author (Semantic Support) | Agency Shared | The human and AI engage in iterative dialogue. The AI provides outlines or alternatives, but the human retains executive control, critically evaluating and actively rejecting suggestions 823. | Moderate. Requires sustained metacognitive awareness to prevent passive acceptance of AI outputs 36. |
| Tier 3: AI as Ghostwriter (Cognitive Substitution) | Machine Agency High | The human delegates the core synthesis - drafting, summarizing, and argument formation - entirely to the AI, functioning solely as a passive reviewer 82425. | Severe. Systemic substitution of internal cognitive processes leads to rapid skill degradation and loss of independent voice 58. |

When restricted to Tier 1 and Tier 2 interactions, artificial intelligence can measurably improve long-term writing skills. A 2025 longitudinal study of 160 Vietnamese university students used a pretest/posttest design over two months to measure writing skill development 626. Multiple regression analysis showed that five independent variables together explained 58.1% of the variance in sustained English writing improvement (adjusted R² = 0.581) 6. Of these predictors, the duration of AI use was the strongest (β = 0.331), followed by the instruction method (β = 0.243), initial language proficiency (β = 0.181), and the level of active, critical interaction with the AI (β = 0.152) 626. These results indicate that when tools are integrated through structured pedagogical approaches rather than passive, uncritical use, learners improve their fundamental writing capabilities 26.
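Standardized coefficients (β) put predictors measured in different units on a common scale, and adjusted R² discounts R² for the number of predictors. A minimal numpy sketch of both, assuming a predictor matrix with one column per variable; the function names are illustrative and nothing here reproduces the study's data. Using the reported n = 160 and five predictors, the adjusted value of 0.581 corresponds to an unadjusted R² of about 0.594.

```python
import numpy as np


def standardized_betas(X: np.ndarray, y: np.ndarray) -> np.ndarray:
    """OLS coefficients after z-scoring each predictor column and the outcome."""
    Xz = (X - X.mean(axis=0)) / X.std(axis=0)
    yz = (y - y.mean()) / y.std()
    # No intercept column is needed because every variable is centered.
    beta, *_ = np.linalg.lstsq(Xz, yz, rcond=None)
    return beta


def adjusted_r2(r2: float, n: int, k: int) -> float:
    """Adjust R^2 for n observations and k predictors."""
    return 1 - (1 - r2) * (n - 1) / (n - k - 1)


def r2_from_adjusted(adj: float, n: int, k: int) -> float:
    """Invert adjusted_r2 to recover the unadjusted R^2."""
    return 1 - (1 - adj) * (n - k - 1) / (n - 1)


print(round(r2_from_adjusted(0.581, n=160, k=5), 3))  # 0.594
```

With 160 observations and only five predictors the adjustment is small, so the reported figure is close to the raw share of variance explained.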

Similarly, a 2025 experiment on AI-assisted practice in cover letter writing found that participants who used an AI tool to review and critique their drafts performed better on subsequent unaided writing tests than those who practiced entirely alone (d = 0.38) 7. Even participants who merely reviewed an AI-generated example before writing their own drafts showed skill improvements (d = 0.37) 7.

In these scenarios, the language model functions within the learner's Zone of Proximal Development, expanding text-producing capabilities through immediate, personalized feedback that human instructors often lack the bandwidth to provide 274128. A systematic literature review of AI scaffolding in K-12 English as a Foreign Language instruction, covering 14 peer-reviewed studies published between 2018 and 2024, concluded that when AI is constrained to cognitive support (e.g., organizing ideas) and language enhancement (e.g., vocabulary expansion), it reduces cognitive load and fosters ideation without undermining student autonomy 29.

The critical differentiator is friction. When technology removes the friction of initial idea generation and drafting, the human skill atrophies 532. When the technology is used to introduce evaluative friction - forcing the writer to analyze, accept, or reject specific rhetorical choices - the foundational skill is reinforced 4144.

Macroeconomic Consequences and the Theory of Knowledge Collapse

The shift from localized educational impacts to macroeconomic consequences is accelerating. By the end of 2024, language model-assisted writing had thoroughly penetrated professional sectors, accounting for up to 24% of corporate press releases, 18% of financial consumer complaints, 15% of job postings, and 14% of United Nations press releases 4530. This ubiquity poses systemic risks to both organizational trust and the collective human knowledge ecosystem.
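Corpus-level shares like these describe a population of documents rather than a verdict on each one. Studies of this kind often treat the corpus as a mixture, P(doc) = (1 - α)·P_human(doc) + α·P_AI(doc), and estimate the mixing fraction α by maximum likelihood; whether the figures above were produced exactly this way is not stated here. The sketch assumes per-document likelihoods under human and AI reference models are already available (building those models, typically from word frequencies in known human and known AI text, is out of scope) and uses a plain grid search for clarity.

```python
import math


def estimate_ai_fraction(p_human: list[float], p_ai: list[float], steps: int = 1000) -> float:
    """Maximum-likelihood mixing fraction alpha in
    P(doc) = (1 - alpha) * P_human(doc) + alpha * P_ai(doc), via grid search on [0, 1].

    p_human[i], p_ai[i]: likelihood of document i under each reference model.
    """
    best_alpha, best_ll = 0.0, -math.inf
    for step in range(steps + 1):
        alpha = step / steps
        ll = 0.0
        for ph, pa in zip(p_human, p_ai):
            mix = (1 - alpha) * ph + alpha * pa
            if mix <= 0:  # this alpha makes an observed document impossible
                ll = -math.inf
                break
            ll += math.log(mix)
        if ll > best_ll:
            best_alpha, best_ll = alpha, ll
    return best_alpha


# Synthetic corpus: 65 documents of a type common in human text, 35 of a type common in AI text.
p_h = [0.8] * 65 + [0.2] * 35
p_a = [0.2] * 65 + [0.8] * 35
print(estimate_ai_fraction(p_h, p_a))  # 0.25
```

In this synthetic case the observed 65/35 split is exactly what a corpus that is 25% AI-generated would produce in expectation, so the estimator recovers α = 0.25 without labeling any individual document.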

The Atrophy of Professional Proficiency

In the corporate workplace, the reliance on automation for routine communications and coding is actively altering early-career development trajectories. Junior employees frequently bypass the traditional learning curves that build resilience, troubleshooting capacity, and deep domain expertise 32. Without struggling through the minutiae of basic coding syntax or routine report writing, these professionals fail to develop the foundational knowledge necessary to judge whether an AI-generated answer is logically sound or hallucinated 3244.

Furthermore, using language models for professional communication introduces a measurable "perception gap" regarding trustworthiness. A 2025 study of 1,100 professionals found that while managers viewed their own use of AI as an efficient way to sound professional, employees reacted strongly negatively to AI-generated communications 31. When employees detected high levels of AI assistance in managerial emails, perceived manager sincerity fell from 83% (for low-assistance messages) to between 40% and 52% 31. Subordinates frequently interpret the use of artificial intelligence in relational, congratulatory, or motivational messages as laziness, a lack of integrity, or an absence of genuine care, fundamentally undermining cognitive-based trust in leadership 31.

The Trajectory Toward Knowledge Collapse

At the societal level, the frictionless automation of human synthesis threatens the ongoing generation of shared public truth and scientific advancement. In an influential NBER working paper published in 2026, economists Acemoglu, Kong, and Ozdaglar formalize the concept of "knowledge collapse" 323334. The researchers posit a dynamic model of learning where successful decision-making requires the combination of shared, community-level general knowledge and individual, context-specific knowledge 33.

Historically, human learning exhibited economies of scope: the costly, time-consuming effort an individual expended to learn about their specific context simultaneously produced a "thin" public signal that was shared with others 33. This continuous aggregation of individual efforts built the community's broader stock of general knowledge 33.

Agentic artificial intelligence disrupts this mechanism entirely by delivering perfectly tailored, context-specific recommendations that immediately substitute for human effort 3351. While this improves immediate, isolated decision quality, it eradicates the learning externality 33. The mathematical model demonstrates that if human effort is sufficiently elastic, and the agentic model's accuracy exceeds a specific threshold, individuals will uniformly adopt the frictionless path of technological substitution 3351. Consequently, the economy tips into a "knowledge-collapse steady state." In this scenario, the generation of new, verifiable general knowledge ceases, leaving humanity entirely dependent on a static, potentially degrading, and increasingly homogenized technological repository 3351. To mitigate this risk, the authors suggest the necessity of information-design regulations, such as deliberate "garbling" or limiting the effective precision of agentic recommendations, to preserve human learning incentives and ensure the continued production of general knowledge 33.
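The threshold logic can be illustrated with a toy simulation. This is a hypothetical simplification written for this explanation, not the Acemoglu, Kong, and Ozdaglar model: effort is all-or-nothing (the extreme case of elastic effort), the value of learning on one's own is proxied by the current shared knowledge stock K, each act of own-learning adds a fixed public signal to K, and K otherwise depreciates. All names and parameters are invented for illustration.

```python
def simulate_knowledge(ai_accuracy: float, signal: float, decay: float,
                       k0: float, periods: int = 500) -> float:
    """Toy dynamics of a shared knowledge stock K (hypothetical, not the NBER model).

    Each period, individuals learn on their own only if the value of doing so,
    proxied by the current stock K, exceeds the AI's accuracy. Own-learning adds
    `signal` to K; the stock depreciates at rate `decay` either way.
    Returns K after `periods` steps.
    """
    k = k0
    for _ in range(periods):
        effort = 1.0 if k > ai_accuracy else 0.0  # all-or-nothing: perfectly elastic effort
        k = (1 - decay) * k + signal * effort
    return k


# With effort, K settles at signal / decay = 1.0 in this parameterization.
print(round(simulate_knowledge(ai_accuracy=0.9, signal=0.1, decay=0.1, k0=2.0), 3))  # 1.0
print(round(simulate_knowledge(ai_accuracy=1.5, signal=0.1, decay=0.1, k0=2.0), 3))  # 0.0
```

In this toy, learning is sustained only while the AI's accuracy stays below signal / decay, the stock that own-learning can maintain; once accuracy exceeds it, effort stops and the stock decays toward zero. Capping the AI's effective accuracy below that level plays a role loosely analogous to the "garbling" remedy the authors propose.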

Institutional Policy Responses and Pedagogical Adaptation

Recognizing the dual potential for cognitive enhancement and epistemic atrophy, national educational institutions are moving away from reactive, blanket bans toward highly structured, age-gated, and sequenced integration policies.

Age-Gated and Sequenced Integration

The Singapore Ministry of Education (MOE) enacted a heavily researched, phased rollout in 2026, strictly prohibiting the use of generative tools for students in Primary 1 through 3 3536. The policy is explicitly designed to prioritize physical, hands-on learning and protect the development of foundational literacy, basic recall, and motor skills from premature cognitive atrophy 3536.

Artificial intelligence is introduced only from Primary 4, because developmental research indicates that students at this age possess the baseline executive functioning, planning abilities, and self-evaluation skills required to critically navigate algorithmic outputs 35. The MOE also limits exposure to closed, centralized tools with built-in pedagogical guardrails hosted on the Student Learning Space (SLS). These dedicated writing assistants are programmed to redirect students who ask for direct answers or stray off-topic, preserving the friction needed for learning rather than offering unconstrained ghostwriting 3537. At the same time, the MOE makes extensive use of the technology for backend administrative support, using systems like "Authoring Copilot" to generate lesson plans and reduce teacher workload, so that the technology supports the educational ecosystem without bypassing the student's cognitive process 3638.

Teacher Adoption and Metacognitive Curricula

In the United Kingdom, the Department for Education (DfE) and the National Literacy Trust documented a rapid, ground-up surge in integration. By 2025, 58% of UK teachers were using generative models routinely for lesson planning, creating quizzes, and summarizing documents - a near doubling from 2023 3940. Regular users reported saving between one and five hours a week on administrative tasks 40.

However, deep pedagogical concerns persist among educators; 66.5% of surveyed teachers expressed concern that generative tools actively decrease the perceived value of developing independent writing skills among students, and 43% of teachers rated their own confidence in managing these tools at a mere 3 out of 10 3940.

Consequently, international educational frameworks are pivoting to emphasize "GenAI literacy" and metacognition as foundational curricula 4134. The United Arab Emirates made artificial intelligence a compulsory subject from Kindergarten to Grade 12 beginning in the 2025-2026 academic year, focusing on prompt engineering and system design 40. Rather than grading the final polished essay - which a machine can easily spoof with perfect syntax - educators are increasingly assessing the artifacts of the writing process, including outlines, prompt histories, and iterative revision drafts 12. Empirical studies demonstrate that students who receive direct instruction on how to critically prompt, evaluate, and refine machine-generated content show a significantly higher likelihood of transferring actual writing skills to independent tasks 41.
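Assessing process artifacts requires some way to see how much a text actually changed between drafts. A minimal sketch, assuming drafts are stored as plain strings in chronological order: it applies Python's standard `difflib.SequenceMatcher` to word sequences and reports a dissimilarity between each pair of consecutive drafts. The function name is hypothetical, and this is an illustrative measure rather than a method prescribed by any of the frameworks above.

```python
from difflib import SequenceMatcher


def revision_profile(drafts: list[str]) -> list[float]:
    """Share of text changed between each pair of consecutive drafts, at word level
    (0.0 = identical, 1.0 = no words in common in matching order)."""
    return [1.0 - SequenceMatcher(None, before.split(), after.split()).ratio()
            for before, after in zip(drafts, drafts[1:])]


drafts = [
    "cost access equity",
    "Cost and access shape equity in schools.",
    "Cost and access shape equity in rural schools.",
]
print(revision_profile(drafts))
```

A profile with steady, moderate changes is what iterative human revision tends to look like; a single large jump from outline to polished prose does not prove anything by itself, but it signals where an instructor might look more closely at the prompt history.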

Synthesis of Longitudinal Evidence

The longitudinal data collected between 2023 and 2026 indicate that Large Language Models are not functionally neutral tools; they actively reshape the cognitive networks, stylistic expressions, and semantic intentions of the people who use them.

When utilized as a total substitute for cognitive effort - acting as a frictionless ghostwriter that circumvents the arduous process of drafting - generative models induce measurable deficits in neurophysiological engagement, short-term memory encoding, and authorial voice. Unrestricted reliance flattens individual expression into a homogenized, abstract, and semantically compressed register, posing distinct risks of epistemic atrophy on an individual level and knowledge collapse on a societal scale.

However, the same empirical evidence shows that artificial intelligence is not inherently detrimental to the development of writing skill. When implemented with deliberate friction, where the human initiates the thought process, struggles through foundational drafting, and only then uses the technology for targeted, evaluative feedback, the model acts as a powerful cognitive scaffold. Ultimately, preserving and advancing human writing skill in this era depends not on rejecting the technology, but on fiercely protecting the locus of human agency within the collaborative workflow. Integrating these tools successfully requires acknowledging that the cognitive struggle of writing is not an inefficiency to be optimized away, but the fundamental mechanism by which human intellect develops.