Lattice QCD calculations of the strong force

Theoretical Foundations of Lattice Regularization

The Breakdown of Perturbative Quantum Chromodynamics

Quantum Chromodynamics (QCD) represents the foundational gauge theory of the strong interaction, delineating the complex dynamics between quarks and gluons. A central property of QCD is asymptotic freedom, a phenomenon in which the strong running coupling constant, $\alpha_s(Q^2)$, monotonically decreases as the momentum transfer $Q^2$ increases 123. In the ultraviolet (high-energy) regime, this diminished coupling permits the reliable application of perturbative expansion techniques, such as Feynman calculus, allowing theoretical physicists to calculate scattering cross-sections and decay rates with high precision 14.

However, as the energy scale descends below the confinement scale of approximately $\Lambda_{QCD} \sim 1$ GeV - transitioning into the hadronic regime - the coupling constant $\alpha_s$ grows substantially. In this infrared domain, perturbative approaches suffer a catastrophic breakdown 4. The perturbation expansion transforms into a divergent asymptotic series where higher-order loop terms yield larger contributions than lower-order terms, rendering the series un-summable and physically meaningless 44. This mathematical breakdown coincides with color confinement, the physical dictate that colored quarks and gluons cannot exist as isolated free states; they must inherently bind into color-neutral hadronic asymptotic states, such as mesons ($q\bar{q}$) and baryons ($qqq$) 24.

While phenomenological models and effective field theories, such as Chiral Perturbation Theory ($\chi$PT), can approximate low-energy QCD dynamics using interacting hadrons as effective degrees of freedom, these frameworks rely on undetermined low-energy constants (LECs) that must be fitted to experimental data or derived from a more fundamental theory 46. Establishing a rigorous, analytic mathematical connection between the short-distance partonic degrees of freedom and the long-distance confinement scale remains a paramount, unresolved challenge in quantum field theory 15. Consequently, the extraction of ab initio predictions from the strong force necessitates a non-perturbative formulation.

Euclidean Spacetime and Wilson Discretization

To circumvent the limitations of perturbative expansions, Kenneth Wilson introduced lattice gauge theory in 1974, providing a mathematically rigorous, non-perturbative regularization scheme for QCD 4678. Lattice QCD (LQCD) replaces continuous, four-dimensional Minkowski spacetime with a discrete, Euclidean hypercubic grid. By executing a Wick rotation to imaginary time ($t \to i\tau$), the oscillatory path integral of quantum field theory is transformed into an exponentially damped statistical mechanics partition function, enabling numerical evaluation 78.

The introduction of the discrete grid naturally establishes a lattice spacing, $a$, which functions as an intrinsic, gauge-invariant ultraviolet momentum cutoff at the scale $\sim 1/a$ 479. This cutoff renders the quantum field theory finite, eliminating the unphysical ultraviolet singularities that require complex dimensional regularization in the continuum limit 7. In Wilson's geometric formulation, fermion fields (quarks), represented by anti-commuting Grassmann numbers, reside on the discrete intersecting nodes (sites) of the grid 4710. The strong force interactions are mediated by the gauge fields (gluons), which are represented not as algebra elements, but as compact $3 \times 3$ complex unitary matrices, $U_\mu(x) \in SU(3)$, residing on the links connecting adjacent sites 4810.

A critical advantage of this specific discretization is that it preserves exact local gauge invariance at any finite lattice spacing. Because the path integral is defined via integration over the compact $SU(3)$ group manifold, the volume of the gauge group is inherently finite. This structure allows the path integral to be mathematically well-defined without the necessity of introducing gauge-fixing terms or Faddeev-Popov ghosts 710. The physical continuum limit is formally recovered by systematically tuning the bare gauge coupling toward zero according to the renormalization group, which effectively drives the lattice spacing to zero ($a \to 0$), followed by an extrapolation to infinite spacetime volume ($V \to \infty$) 4711.

The Feynman Path Integral and Monte Carlo Evaluation

Calculating physical observables in LQCD requires the evaluation of a multidimensional Feynman path integral over all possible configurations of the gauge and fermion fields 78. Because a typical modern lattice (e.g., $64^3 \times 128$) contains billions of integration variables, analytic evaluation is impossible. Instead, physicists utilize stochastic Markov Chain Monte Carlo (MCMC) importance sampling to generate a representative ensemble of background gauge field configurations 34.

When the Grassmann-valued fermion fields are integrated out analytically, the resulting probability measure includes the highly complex fermion determinant, $\det(D + m)$, where $D$ is the discretized Dirac operator and $m$ is the quark mass matrix 1112. In the nascent decades of LQCD, the immense computational cost of evaluating this non-local determinant forced the community to adopt the "quenched approximation," a severe truncation wherein the determinant is set to unity 413. The quenched approximation essentially treats quark fields as static variables, entirely ignoring the dynamical effects of virtual quark-antiquark pair creation (sea quarks) bubbling in the QCD vacuum 413.

As algorithmic efficiency and supercomputing power expanded, the field successfully discarded the quenched approximation. Contemporary large-scale simulations are "fully dynamical," meaning they explicitly compute the vacuum polarization. State-of-the-art simulations routinely utilize 2+1+1 flavor configurations, incorporating degenerate up and down quarks, a strange quark, and a heavy charm quark directly into the sea 91213.

Fermion Formulations and Algorithmic Advancements

Discretization Schemes and the Mixed-Action Approach

Placing fermions on a discrete lattice introduces a fundamental theoretical complication known as the Nielsen-Ninomiya fermion doubling problem, which states that one cannot simultaneously preserve exact chiral symmetry, locality, and the correct number of fermion species on a lattice 412. To resolve this, several distinct fermion formulations have been developed, each requiring a different compromise. Wilson fermions explicitly break chiral symmetry at finite lattice spacing to remove the doublers, while Staggered fermions (such as the Highly Improved Staggered Quark, or HISQ, formulation) distribute the fermion spin degrees of freedom across a hypercube to preserve a remnant of chiral symmetry while maintaining high computational speed 1214. Domain Wall and Overlap fermions introduce a fictitious fifth dimension or complex matrix sign functions to preserve exact chiral symmetry at finite $a$, but at a computational cost magnitudes higher than other methods 1214.

| Simulation Paradigm | Active Sea Flavors ($N_f$) | Treatment of the QCD Vacuum | Computational Cost & Systematic Control |

|---|---|---|---|

| Quenched Approximation | 0 | Fermion determinant set to 1. Vacuum polarization from sea quarks is entirely ignored. | Lowest computational cost. Yields irreducible, unquantifiable systematic errors ($\sim 10-20\%$) for hadronic observables. |

| Dynamical (2+1) | Up, Down, Strange | Includes light and strange sea quark loops. Standardizes physical mass limits. | High cost. Standard for precision flavor physics. Accurately models low-energy QCD but neglects charm loops. |

| Dynamical (2+1+1) | Up, Down, Strange, Charm | Full inclusion of light, strange, and heavy charm sea quark loops. | Highest cost. Required for sub-percent precision in heavy-flavor observables and matrix elements involving high momentum transfer. |

Because generating gauge configurations with fully chiral fermions (like Domain Wall) is computationally prohibitive, collaborations frequently employ a "mixed-action" strategy. In this setup, computationally efficient formulations (such as HISQ or Wilson-Clover) are used to generate the "sea" of background gauge configurations. Subsequently, a theoretically cleaner, chirally symmetric "valence" fermion action is used to compute the physical correlation functions on those pre-generated backgrounds 1215.

The success of the mixed-action approach requires a rigorous quantitative understanding of the discretization artifacts introduced by the action mismatch. These mixed-action effects are parametrized in mixed-action partially-quenched chiral perturbation theory by the leading-order low-energy constant, $\Delta_{mix}$ 12. This constant measures the unphysical mass splitting of the mixing pion consisting of one valence quark and one sea anti-quark. Controlled studies comparing multiple lattice spacings ($a \in [0.048, 0.111]$ fm) demonstrate that the choice of the fermion action is the dominant factor in suppressing mixed-action artifacts. While the specific gauge action exerts a secondary effect, the inclusion of dynamical charm quark loops exerts a negligible effect on $\Delta_{mix}$ given current statistical uncertainties, validating the robustness of the mixed-action approach for high-precision matrix element extractions 1215.

Exascale Computing Infrastructure

The continued reduction of statistical and systematic uncertainties in LQCD relies intrinsically on the deployment of leadership-class High-Performance Computing (HPC) facilities. Through coordinated initiatives such as the U.S. Department of Energy's Exascale Computing Project (ECP), the lattice community has aggressively refactored legacy codebases (such as Chroma, CPS, and MILC) to efficiently utilize massively parallel, heterogeneous GPU-accelerated architectures 141617.

Target milestones for the ECP mandated that core lattice QCD benchmark suites execute at least 50 times faster on exascale machines - such as the Oak Ridge Leadership Computing Facility's Frontier (utilizing AMD EPYC CPUs and AMD Instinct MI250X GPUs) and the Argonne Leadership Computing Facility's Aurora (utilizing Intel Xeon CPUs and Ponte Vecchio GPUs) - compared to preceding petascale systems like Mira or Titan 14171819. Recent benchmarking indicates that the lattice community has exceeded these performance goals, achieving an average 70x speedup on Frontier, allowing algorithms to process lattices with up to 10 billion degrees of freedom 1419.

Critical Slowing Down and Machine Learning Interventions

Despite immense hardware scaling, LQCD faces a profound algorithmic bottleneck known as critical slowing down. As researchers push simulations to progressively finer lattice spacings ($a \to 0$) to control ultraviolet discretization errors, standard MCMC algorithms, particularly the Hybrid/Hamiltonian Monte Carlo (HMC), experience exponentially increasing autocorrelation times 1620.

This phenomenon manifests catastrophically as "topological freezing." In the continuum limit, the energy barriers separating distinct topological sectors of the QCD vacuum diverge. Because HMC relies on continuous, local, diffusive random walks through the phase space, the algorithm becomes trapped within a single topological sector, failing to transition between different winding numbers 71620. This failure of ergodicity prevents the sampler from generating a truly representative distribution of the QCD vacuum, heavily biasing the calculation of physical observables that are highly sensitive to topology, such as the pion mass and the $\eta'$ meson mass 721.

To evade critical slowing down, theorists are rapidly integrating modern machine learning architectures into the sampling pipeline. Novel generative models, specifically Normalizing Flows and Generative Flow Networks (GFlowNets), are being engineered to learn complex mathematical field transformations. These models parameterize changes of variables via deep neural networks to map the highly complex, multi-modal QCD target distribution onto a simpler, tractable base distribution where critical slowing down is mitigated 202122. By executing global, network-driven updates rather than local steps, these flow-based samplers propose statistically independent configurations with high acceptance rates, explicitly bypassing the topological barriers that plague traditional HMC 202122.

Achievements in Hadronic Spectroscopy and Structure

The Light and Heavy Hadron Mass Spectra

Historically, the ability of lattice regularizations to accurately reproduce the experimentally established hadron spectrum served as the primary validation of the theory. A watershed moment for the field occurred in 2008 when the Budapest-Marseille-Wuppertal (BMW) collaboration published a comprehensive ab initio calculation of the light hadron mass spectrum, including the proton, neutron, and various hyperons. The lattice predictions matched the experimental values with sub-percent accuracy 823. This calculation definitively confirmed that the mass of visible matter in the universe does not originate primarily from the Higgs mechanism (which accounts only for the bare quark masses), but rather emerges dynamically from the chiral symmetry breaking and interaction energy of the QCD vacuum 23.

Lattice simulations inherently calculate dimensionless quantities. To convert these lattice outputs into physical units (MeV or fm), practitioners perform a scale-setting procedure. This involves selecting a highly stable observable known precisely from experiment - such as the $\Omega$ baryon mass, the pion decay constant $f_\pi$, or the gradient-flow scale parameters - and tuning the bare gauge coupling $\beta$ until the lattice prediction matches reality 7824.

Simulating heavy quarks introduces further complexities. The bottom quark mass ($m_b \approx 4.5$ GeV) is of the same order of magnitude or larger than the inverse lattice spacing ($1/a \approx 2-5$ GeV) of typical ensembles, resulting in massive $\mathcal{O}(a m_b)$ discretization artifacts 7. To circumvent this, lattice physicists rely heavily on specialized Effective Field Theories evaluated on the lattice, such as Non-Relativistic QCD (NRQCD), or they tune the bare quark masses by setting heavy-heavy ($\Upsilon$) or heavy-light ($B$) meson masses to their experimental values on ultra-fine lattices 725.

Nucleon Matrix Elements and Parton Distributions

Beyond confirming the static mass spectrum, LQCD has advanced to computing the intricate internal 3D structure of the nucleon. Lattice extractions of the nucleon axial ($g_A$), scalar, and tensor charges have achieved a high degree of precision, transitioning into robustness standards formally recognized by the Flavour Lattice Averaging Group (FLAG) 262728.

These calculations directly guide phenomenology. Precise determinations of the scalar and tensor charges are fundamental for dark matter direct detection experiments, which rely heavily on accurately modeling the coupling of Weakly Interacting Massive Particles (WIMPs) to the nuclear medium 2729. Furthermore, precision calculations of the axial form factors are urgently required to minimize theoretical uncertainties in neutrino-nucleus scattering cross sections, providing vital inputs for upcoming long-baseline neutrino oscillation experiments such as DUNE 2729.

Moreover, theorists have broken past the historical limitation of calculating only the lowest Mellin moments of the nucleon. By employing the Large Momentum Effective Theory (LaMET) framework, lattice collaborations extract the explicit $x$-dependence of Parton Distribution Functions (PDFs) and Generalized Parton Distributions (GPDs) directly in Euclidean spacetime via the calculation of spatial quasi-PDFs, bringing first-principles theory into direct dialogue with electron-ion collider phenomenology 2830.

Flavor Physics and Precision Tests of the Standard Model

Precision flavor physics serves as a profoundly sensitive probe for physics Beyond the Standard Model (BSM). Lattice QCD evaluates the non-perturbative hadronic matrix elements that dress the weak decays of mesons, allowing experimental decay rates to be translated into precise determinations of the parameters governing quark mixing 1331.

Decay Constants and CKM Matrix Unitarity

The unitary nature of the Cabibbo-Kobayashi-Maskawa (CKM) matrix is a central pillar of the Standard Model. Extracting the element $|V_{us}|$ requires experimental data on semileptonic kaon decays ($K \to \pi \ell \nu$) combined with the theoretical calculation of the vector form factor $f_+(0)$ at zero momentum transfer 2632. Currently, computations utilizing physical mass domain wall fermions, HISQ, and twisted-mass formulations yield highly consistent results for $f_+(0)$, allowing $|V_{us}|$ to be determined to an exquisite precision approaching 0.2% 3235.

In the heavy-flavor sector, resolving persistent anomalies in the CKM unitarity triangle demands extreme precision in the extractions of $|V_{cb}|$ and $|V_{ub}|$. Historically, the semileptonic transition $B \to D^ \ell \nu$ has been studied exclusively at the zero-recoil kinematic point. Recently, however, lattice collaborations have successfully computed the $B \to D^$ form factors at non-zero recoil. This breakthrough eliminates the systematic uncertainty associated with extrapolating experimental data to the zero-recoil limit, significantly tightening the constraints on $|V_{cb}|$ and constraining the available phase space for new physics models like Leptoquarks or $Z'$ bosons 2933.

Neutral Meson Mixing and CP Violation

Neutral meson mixing - specifically $K^0 - \bar{K}^0$ and $B^0 - \bar{B}^0$ flavor oscillations - provides a stringent test of indirect CP violation. The hadronic uncertainties governing these loop-induced processes are parameterized by specific bag parameters (such as $B_K$). Due to implementations of non-perturbative Renormalization Group running on the lattice via the Schrödinger functional, calculations of $B_K$ are now matched to perturbative scales as high as 3-3.5 GeV, severely suppressing previously problematic truncation errors 2426.

Furthermore, LQCD has recently overcome severe technical challenges to execute calculations of direct CP violation parameters, which entail multi-hadron final states. Utilizing advanced G-parity boundary conditions and improved algorithms like the Exact One Flavor Algorithm (EOFA), collaborations are finalizing the calculation of the long-distance contribution to the neutral kaon mass difference $\Delta M_K$ and the direct CP violation ratio $\varepsilon'/\varepsilon$ in $K \to \pi\pi$ decays, phenomena that are entirely inaccessible to continuum perturbation theory 242835.

The Muon Anomalous Magnetic Moment

The anomalous magnetic moment of the muon, $a_\mu = (g-2)/2$, constitutes one of the most rigorous and highly publicized stress tests of the Standard Model. The Fermilab Muon $g-2$ Experiment (E989) has measured $a_\mu$ to an unprecedented precision of 127 parts-per-billion (ppb), confirming earlier measurements from Brookhaven National Laboratory 34. While the Quantum Electrodynamics (QED) and Electroweak (EW) contributions to the anomaly are known analytically to five-loop and two-loop perturbative orders respectively, the total theoretical uncertainty is overwhelmingly dominated by the strong force - specifically the Hadronic Vacuum Polarization (HVP) and the sub-leading Hadronic Light-by-Light (HLbL) scattering loop contributions 635.

Hadronic Vacuum Polarization and Dispersive Tensions

Historically, the HVP has been evaluated using a data-driven, dispersive methodology that integrates experimental cross-section data for electron-positron annihilation into hadrons ($e^+e^- \to \text{hadrons}$), commonly known as the R-ratio 3536. In 2020, the Muon $g-2$ Theory Initiative published a consensus Standard Model value based heavily on legacy dispersive inputs from experiments such as KLOE, BaBar, and CMD-2. This dispersive SM prediction stands in greater than 5-sigma tension with the Fermilab experimental average, an observation widely heralded as a potential signal for BSM physics 36.

However, the theoretical landscape was profoundly disrupted by independent advancements from the lattice frontier. In 2021, the BMW collaboration released the first complete ab initio LQCD calculation of the HVP with sub-percent precision (0.8%). Strikingly, the BMW result yielded a significantly larger hadronic contribution than the dispersive evaluation, pulling the Standard Model prediction into direct agreement with the Fermilab $g-2$ measurement, and effectively erasing the signal for new physics 3536.

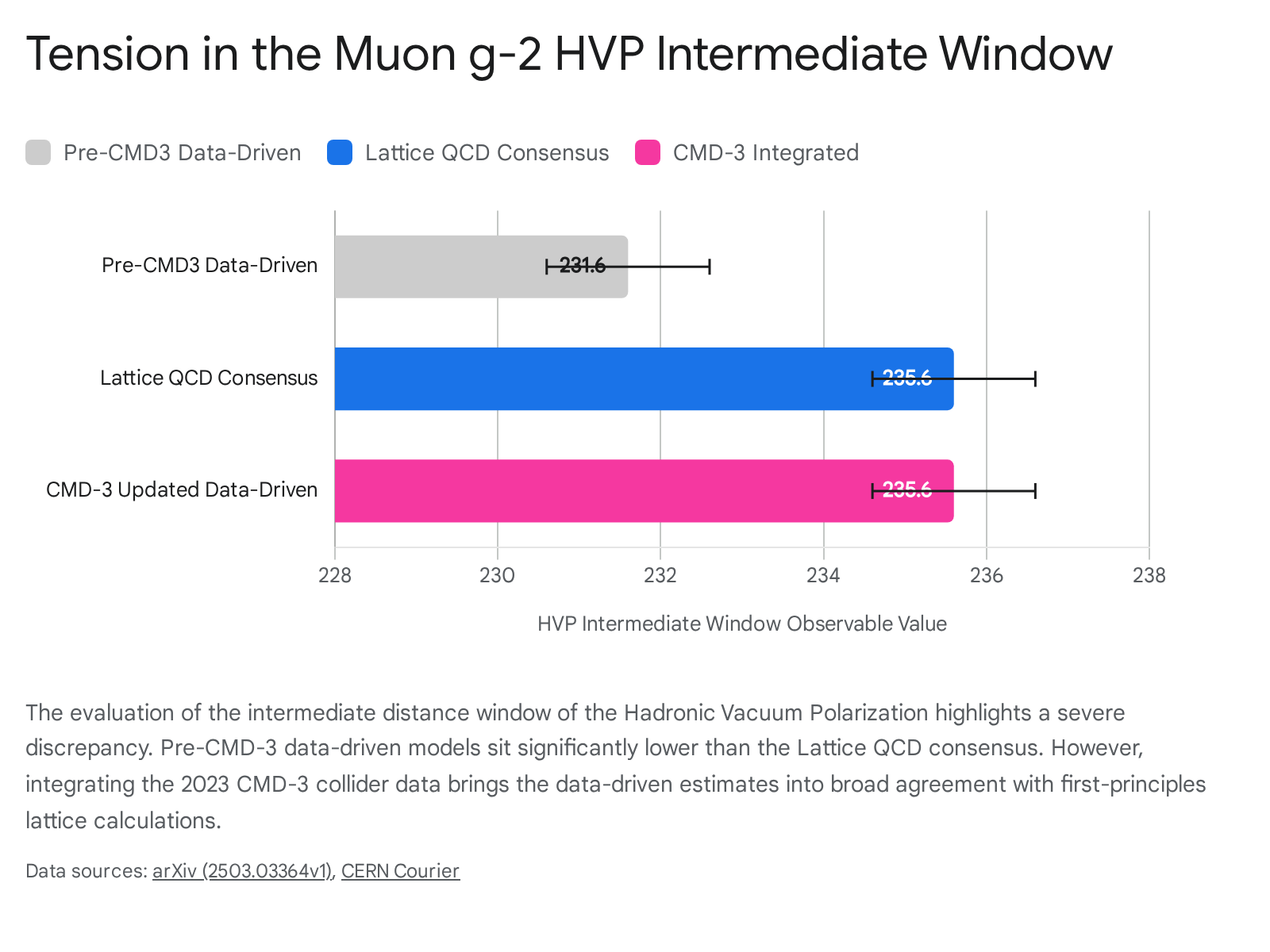

To scrutinize this divergence, multiple independent lattice groups (including Fermilab/HPQCD/MILC, RBC/UKQCD, and ETMC) focused on computing the "intermediate distance window" of the HVP, a specific Euclidean time interval that is highly statistically clean and less sensitive to finite-volume boundary effects and short-distance discretization errors. The combined lattice consensus for this window confirms a highly significant $\sim 3.7\sigma - 4\sigma$ deviation from the pre-2023 data-driven dispersive estimates, cementing the discrepancy between pure theory and legacy experimental data 2837.

The Impact of the CMD-3 Experimental Discrepancy

The puzzle deepened in 2023 with a shock from the experimental side. The CMD-3 experiment at the VEPP-2000 collider released high-precision measurements of the $e^+e^- \to \pi^+\pi^-$ cross-section - the dominant channel for the HVP. The new CMD-3 data violently disagrees with all previous R-ratio measurements (including those from its predecessor, CMD-2, operating at the exact same facility) by an astonishing 2.5 to 5 sigma 3435.

When the new, higher CMD-3 data is substituted into the dispersive integrals, the resulting HVP values increase drastically. This shifts the data-driven evaluation into strong alignment with the BMW and global lattice QCD predictions 3637.

The fundamental origin of the systematic differences between the CMD-3 data and the vast catalogue of legacy $e^+e^-$ datasets remains unknown. Until this experimental contradiction is resolved through subsequent collider runs or independent verifications, Lattice QCD stands as the only robust, purely theoretical pathway capable of consolidating the Standard Model prediction for the muon magnetic anomaly without reliance on conflicting empirical inputs 34.

Nuclear Physics and Multi-Hadron Interactions

While LQCD has historically excelled at computing the masses and decay constants of isolated, stable hadrons, calculating the properties of multi-hadron resonant systems and composite nuclei introduces severe analytical and computational hurdles. The primary difficulty stems from a geometric mismatch: LQCD calculates correlation functions in a finite, Euclidean periodic volume, whereas physical scattering experiments occur in an infinite Minkowski spacetime 38.

Lüscher Formalism and Sub-Threshold Limitations

To extract physical multi-hadron scattering amplitudes from lattice data, practitioners rely on the Lüscher formalism and its modern extensions. This theoretical framework provides a mathematically rigorous quantization condition that algebraically relates the discrete finite-volume energy spectrum of the simulated lattice to the infinite-volume scattering phase shifts via an intermediate $K$-matrix and the generalized Lüscher zeta function, $F_2$ 3839.

However, the standard two-body Lüscher method experiences a severe breakdown when energy levels drop significantly below the infinite-volume elastic threshold. Under extreme sub-threshold analytical continuation - often observed in strongly interacting baryon-baryon systems where attractive forces are high - the formalism encounters an unphysical left-hand (or $t$-channel) branch cut, rendering the standard quantization condition mathematically invalid 3840. Recent theoretical breakthroughs have sought to resolve this by modifying the formalism to explicitly project onto on-shell intermediate states. This yields a regularized $K$-matrix free of the $t$-channel cut, from which the physical scattering amplitude can be safely reconstructed via integral equations 40.

Extending the formalism to three-particle scattering processes (required to study wide resonances like the Roper resonance $N(1440)$ or the $h_1$ meson) introduces further complexities. Three-particle amplitudes are susceptible to severe kinematic divergences when an intermediate exchanged particle goes on-shell within the loop. Furthermore, the mapping of lattice energies to physical scattering amplitudes requires tracking an exponentially larger number of factorial Wick contractions in the Monte Carlo evaluation, a task that severely taxes current computational limits 39. Formalisms for processes involving four or more interacting hadrons remain largely unexplored, representing the distant theoretical frontier of lattice gauge theory 39.

Weak Transition Amplitudes and Proton-Proton Fusion

Despite these multi-body challenges, LQCD has recently made historic inroads into ab initio nuclear physics. A defining achievement of the NPLQCD collaboration was the first direct, model-independent calculation of the proton-proton fusion cross-section matrix element ($pp \to d e^+ \nu$) - the fundamental weak reaction that initiates the energy production chain in main-sequence stars like the Sun 41. The extremely slow rate of this interaction, driven by the intense electrostatic repulsion between low-energy protons and the minute weak interaction rates, makes empirical measurement in modern laboratories impossible, necessitating purely theoretical determinations .

Calculations executed at unphysical pion masses (e.g., $m_\pi \approx 432$ MeV and $806$ MeV) utilized Lellouch-Lüscher finite-volume corrections applied within a $2+\mathcal{J} \to 2$ weak transition framework, explicitly accounting for two-nucleon rescattering induced by the weak current $\mathcal{J}$ 414243. By utilizing bi-local nucleon-nucleon interpolating operators to suppress excited-state contamination, researchers successfully isolated the necessary matrix elements. This allowed for the determination of the two-nucleon axial-vector low-energy constant of pionless effective field theory, yielding $L_{1,A} = 6.0 \pm 7.1$ fm$^3$ at specific renormalization scales 4142.

While the statistical uncertainties - driven primarily by the extreme sensitivity of the finite-volume corrections to two-nucleon scattering parameters - currently remain broad, the computed values are highly compatible with phenomenological extractions at the order of magnitude level. This milestone demonstrates the viability of replacing model-dependent astrophysical nuclear reaction rates with first-principles Standard Model predictions 4143. Through continuous algorithmic enhancements, the deployment of exascale computing networks, and fundamental theoretical generalizations of finite-volume physics, Lattice QCD is successfully transitioning from an arena of post-dictive confirmation to a high-precision discovery tool across the entirety of the low-energy Standard Model.