Landauer's Principle and the Thermodynamics of Information Erasure

The physical limits of computation are set jointly by information theory and thermodynamics. Historically, information was treated as an abstract mathematical construct, detached from the physical substrate used to encode, process, and store it. This view changed in the mid-twentieth century, when it was established that logical operations lacking a one-to-one mapping between inputs and outputs necessarily compress the physical phase space of the computing system 112. The most prominent formulation of this relationship is Landauer's principle, which states that the logical erasure of information is an inherently dissipative process that releases a minimum, quantifiable amount of heat into the environment 34.

The principle establishes a rigid boundary connecting abstract bit manipulation to the second law of thermodynamics. While classical thermodynamics governs the macroscopic efficiency of heat engines, the thermodynamics of information governs the microscopic efficiency of logical gates, measurement devices, and memory registers. As modern semiconductor technology approaches fundamental atomic and thermal limits, the thermodynamic cost of computation has transitioned from a theoretical curiosity to a primary engineering constraint 58.

Fundamental Principles of Information Thermodynamics

Formulated in 1961 by Rolf Landauer, the principle asserts that the logically irreversible erasure of a single bit of information in a system coupled to a thermal bath at absolute temperature $T$ requires a minimum energy dissipation equal to $k_B T \ln 2$, where $k_B$ is the Boltzmann constant 367. A logically irreversible operation is defined as any computational step where the input state cannot be uniquely deduced from the output state. The canonical example is the bit reset operation (e.g., "RESTORE TO ONE" or "ERASE"), which forces a binary memory element into a known logical state regardless of whether it initially held a 0 or a 1 1.

Because the physical implementation of a binary memory element requires at least two distinct, stable states (typically modeled as an overdamped Brownian particle in a bistable double-well potential), an unknown bit occupies a phase space volume corresponding to both possible logical states 8910. When the bit is erased and forced into a single specified well, the accessible phase space of the information-bearing degrees of freedom is halved. According to the foundational postulates of statistical mechanics, this reduction in the entropy of the system (of magnitude $\Delta S = k_B \ln 2$) must be compensated by an equal or greater increase in the entropy of the surrounding thermal reservoir to satisfy the second law of thermodynamics. That compensating increase appears as dissipated heat 27.
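The entropy bookkeeping behind this argument can be written out in three lines. This is a sketch that assumes the two wells are equally likely before erasure and that the reservoir stays at a fixed temperature $T$; the symbols $\Delta S_{\text{sys}}$, $\Delta S_{\text{env}}$, and $Q$ are introduced here for illustration.

$$
\begin{aligned}
\Delta S_{\text{sys}} &= k_B \ln 1 - k_B \ln 2 = -k_B \ln 2, \\
\Delta S_{\text{sys}} + \Delta S_{\text{env}} \ge 0 \;&\Rightarrow\; \Delta S_{\text{env}} \ge k_B \ln 2, \\
Q = T\,\Delta S_{\text{env}} &\ge k_B T \ln 2 .
\end{aligned}
$$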

The minimum energy cost therefore depends only on the temperature of the heat sink. Table 1 lists the theoretical lower bound on energy dissipation for a single bit erasure at several standard operating temperatures.

| Operating Environment | Temperature ($T$) | Theoretical Landauer Limit ($k_B T \ln 2$) |
|---|---|---|
| Room Temperature (Standard CMOS) | 300 K | $2.87 \times 10^{-21}$ J ($\sim 0.018$ eV) |
| Liquid Nitrogen (Cryogenic Logic) | 77 K | $7.37 \times 10^{-22}$ J |
| Liquid Helium (Superconducting Logic) | 4 K | $3.83 \times 10^{-23}$ J |
| Dilution Refrigerator (Quantum Computing) | 0.01 K | $9.57 \times 10^{-26}$ J |
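The entries in Table 1 follow directly from $k_B T \ln 2$. A minimal Python sketch that reproduces them is shown here, assuming the exact SI values of the Boltzmann constant and the electronvolt; the function name `landauer_limit` is illustrative and does not come from any library.

```python
import math

K_B = 1.380649e-23        # Boltzmann constant in J/K (exact SI value)
EV = 1.602176634e-19      # joules per electronvolt (exact SI value)


def landauer_limit(temperature_k: float) -> float:
    """Return the Landauer bound k_B * T * ln(2) in joules for one bit."""
    if temperature_k < 0:
        raise ValueError("absolute temperature cannot be negative")
    return K_B * temperature_k * math.log(2)


if __name__ == "__main__":
    # Temperatures taken from Table 1.
    for label, temperature in [
        ("Room temperature", 300.0),
        ("Liquid nitrogen", 77.0),
        ("Liquid helium", 4.0),
        ("Dilution refrigerator", 0.01),
    ]:
        joules = landauer_limit(temperature)
        print(f"{label:22s} {temperature:7.2f} K  {joules:.2e} J  {joules / EV:.2e} eV")
```

The bound is linear in $T$, which is why cooling from 300 K to 4 K lowers the floor by the same factor of 75.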

It is essential to distinguish logically irreversible operations (such as bit erasure or binary AND gates) from logically reversible operations (such as bit flips, NOT gates, or controlled-swap permutations) 2. Theoretical models and subsequent experimental verifications have demonstrated that reversible measurements and logical operations can, in principle, be executed with no minimum thermodynamic cost, provided they are performed quasistatically (infinitely slowly) and without discarding intermediate data into the environment 21116.

Entropy Formalisms in Physics and Information

To rigorously understand the thermodynamic cost of information processing, it is necessary to reconcile the various mathematical definitions of entropy utilized across physics and computer science. While they often share an identical functional form, their physical interpretations vary depending on whether they describe classical microstates, communication channels, or quantum density matrices 121920. Table 2 summarizes the primary entropy paradigms.

| Entropy Formulation | Mathematical Definition | Primary Domain | Physical / Theoretical Interpretation |
|---|---|---|---|
| Shannon Entropy | $H = -\sum p_i \log_2 p_i$ | Information Theory | Measures the average uncertainty, surprise, or information content inherent in a probability distribution of messages or symbols. Dimensionless (measured in bits). 12192013 |
| Boltzmann Entropy | $S = k_B \ln W$ | Statistical Mechanics | Quantifies the number of accessible microstates ($W$) that correspond to a specific macroscopic thermodynamic state. 121920 |
| Gibbs Entropy | $S = -k_B \sum p_i \ln p_i$ | Thermodynamics | An extension of Boltzmann entropy for systems where microstates are not equally probable, functioning as the thermodynamic equivalent of Shannon entropy. 1219 |
| von Neumann Entropy | $S = -\text{Tr}(\rho \ln \rho)$ | Quantum Mechanics | Generalizes Gibbs entropy to quantum systems using the density matrix $\rho$. Measures the entanglement and lack of pure state knowledge in a quantum system. 121914 |
| Tsallis / Rényi Entropy | Tsallis: $S_q = \frac{k}{q-1}(1 - \sum p_i^q)$; Rényi: $H_q = \frac{1}{1-q} \ln \sum p_i^q$ | Non-extensive Mechanics | Generalizations of Boltzmann-Gibbs entropy applied to complex, non-ergodic, or strongly correlated systems where traditional additivity fails. 1223 |

Thermodynamic entropy can be viewed as a specific physical instantiation of Shannon entropy, where the underlying probabilities correspond to the equilibrium distribution of physical microstates 1920. In classical information processing, the Shannon entropy of a memory register determines the theoretical minimum work required to erase it. If a memory state is already known with absolute certainty prior to the erasure protocol (a Shannon entropy of zero), no physical phase space compression occurs during the reset operation, and the thermodynamic cost of erasure can theoretically be zero 201516. Landauer's bound explicitly applies to the erasure of a maximally uncertain bit (a Shannon entropy of exactly 1 bit), yielding the classical $k_B T \ln 2$ cost 1316.
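The link between Shannon entropy and erasure cost can be illustrated numerically. The sketch below assumes the minimum erasure work scales as $k_B T \ln 2$ per bit of Shannon entropy, which matches the two limiting cases described above (zero cost for a known bit, $k_B T \ln 2$ for a maximally uncertain one). The function names are hypothetical.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)


def shannon_entropy_bits(probs):
    """Shannon entropy H = -sum(p * log2(p)) in bits; zero-probability terms contribute 0."""
    if abs(sum(probs) - 1.0) > 1e-9:
        raise ValueError("probabilities must sum to 1")
    return sum(-p * math.log2(p) for p in probs if p > 0)


def min_erasure_work(probs, temperature_k):
    """Assumed lower bound on erasure work in joules: k_B * T * ln(2) * H(probs)."""
    return K_B * temperature_k * math.log(2) * shannon_entropy_bits(probs)


if __name__ == "__main__":
    # A known bit, a biased bit, and a maximally uncertain bit at 300 K.
    for distribution in ([1.0, 0.0], [0.9, 0.1], [0.5, 0.5]):
        entropy = shannon_entropy_bits(distribution)
        work = min_erasure_work(distribution, 300.0)
        print(f"p={distribution}  H={entropy:.3f} bit  W_min={work:.2e} J")
```

A biased bit with probabilities 0.9 and 0.1 carries roughly 0.47 bits, so under this assumption its erasure floor is a little under half the full Landauer cost.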

Maxwell's Demon and the Reversibility of Computation

The historical development of Landauer's principle is inextricably linked to Maxwell's demon, a thought experiment proposed by James Clerk Maxwell in 1867 that appeared to violate the second law of thermodynamics 1718. Maxwell envisioned a microscopic entity guarding a frictionless trapdoor between two gas-filled chambers. By observing the velocities of approaching molecules and selectively opening the door to permit fast molecules to pass to one side and slow molecules to the other, the demon could spontaneously generate a macroscopic temperature differential without performing mechanical work, defying thermodynamic equilibrium 171920.

The Szilard Engine

In 1929, Leo Szilard formalized this paradox into the "Szilard engine," which reduced the gas to a single molecule contained within a cylinder 171921. By measuring which half of the cylinder the molecule occupies, a demon can insert a partition, attach a frictionless piston, and extract $k_B T \ln 2$ of work during the molecule's isothermal expansion 192122. Because the single-molecule gas undergoes a cyclic process returning to its initial macrostate while extracting work from a single heat bath, the cycle appears to violate the Kelvin-Planck statement of the second law 1923.
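The extracted work follows from a one-line integral. This sketch assumes the single molecule obeys the ideal gas law $pV = k_B T$ and expands isothermally from half the cylinder volume $V/2$ to the full volume $V$; the integration variable $V'$ is introduced here for illustration.

$$
W = \int_{V/2}^{V} p \,\mathrm{d}V' = \int_{V/2}^{V} \frac{k_B T}{V'} \,\mathrm{d}V' = k_B T \ln\frac{V}{V/2} = k_B T \ln 2
$$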

For decades, physicists attempted to locate the hidden entropy cost that would rescue the second law of thermodynamics. Early arguments by Léon Brillouin and Dennis Gabor suggested that the act of measurement itself (specifically the scattering of photons needed to observe the precise location of the molecule) incurred an entropy cost exceeding the work extracted 2224.

The Measurement Versus Erasure Debate

The prevailing consensus shifted dramatically in 1982 when Charles Bennett applied Landauer's principle to the demon's memory architecture. Bennett demonstrated that the measurement phase could theoretically be performed using a logically reversible apparatus, thereby incurring no fundamental thermodynamic cost 172225. Instead, the paradox is resolved by acknowledging the finite capacity of the demon's memory.

To operate cyclically, the demon must eventually erase the recorded measurements to make room for new ones. It is this logically irreversible erasure of the demon's memory register that dissipates at least $k_B T \ln 2$ of heat into the environment. This dissipation offsets the work extracted from the isothermal expansion of the Szilard engine, so the second law of thermodynamics is preserved 172122.

While the Bennett-Landauer resolution remains the dominant paradigm in computational thermodynamics, it has faced continued theoretical scrutiny. Critics, such as John Earman and John Norton, argued that treating the demon via information-theoretic notions was either unnecessary or physically ungrounded, suggesting that standard statistical mechanics was sufficient to exorcise the demon without invoking abstract computation 1526.

Modern Resolution and Quantum Uncertainty

More recently, research addressing the microscopic quantum nature of gas molecules has re-elevated measurement as a primary source of entropy generation. Under this framework, treating a gas molecule as a classical point particle ignores quantum mechanics. Localizing a molecule's physical state to a precise position in order to extract work invokes the quantum uncertainty principle 2436. The necessary compression of the molecule's spatial wave function during the measurement phase directly incurs an entropy cost.

This perspective argues that experimental realizations of "quantum Maxwell's demons" often demonstrate the entropy cost of measurement and localization, rather than the cost of memory erasure 2426. Ultimately, generalized inequalities governing the thermodynamics of information, developed by researchers such as Sagawa and Ueda, establish a strict trade-off: $W_{\text{meas}} + W_{\text{eras}} \ge k_B T I$, where $W_{\text{meas}}$ and $W_{\text{eras}}$ are the work costs of measurement and erasure and $I$ is the mutual information between the system and the memory. If a physical memory structure allows erasure at a sub-Landauer cost, the thermodynamic cost of the preceding measurement increases correspondingly, maintaining a fundamental lower bound on the combined cyclical process 1116.
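The trade-off can be made concrete by rearranging the combined inequality for the measurement cost. The sketch below assumes $I$ is expressed in nats, so that an error-free binary measurement gives $I = \ln 2$; the function name and the example values are hypothetical.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)


def min_measurement_work(mutual_info_nats, erasure_work_j, temperature_k):
    """Lower bound on measurement work implied by W_meas + W_eras >= k_B * T * I."""
    return K_B * temperature_k * mutual_info_nats - erasure_work_j


if __name__ == "__main__":
    temperature = 300.0
    info = math.log(2)                       # one bit of mutual information, in nats
    landauer = K_B * temperature * info      # full Landauer cost at this temperature
    # Erasure paid in full, half paid, and erasure at zero cost.
    for erasure_work in (landauer, 0.5 * landauer, 0.0):
        bound = min_measurement_work(info, erasure_work, temperature)
        print(f"W_eras={erasure_work:.2e} J  ->  W_meas >= {bound:.2e} J")
```

When erasure is paid in full, the bound on measurement drops to zero; any saving on erasure reappears as a floor on the measurement cost.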

Finite-Time Thermodynamics of Information Erasure

The $k_B T \ln 2$ limit can be attained only by quasistatic processes, that is, operations performed infinitely slowly so that the system remains in thermal equilibrium with its environment at all times 102738. In practice, information processing must occur in finite time. Driving a physical memory system out of equilibrium to perform operations at a non-zero computational speed results in unavoidable transient dynamics and irreversible entropy production 3928.

Stochastic Thermodynamics and Langevin Dynamics

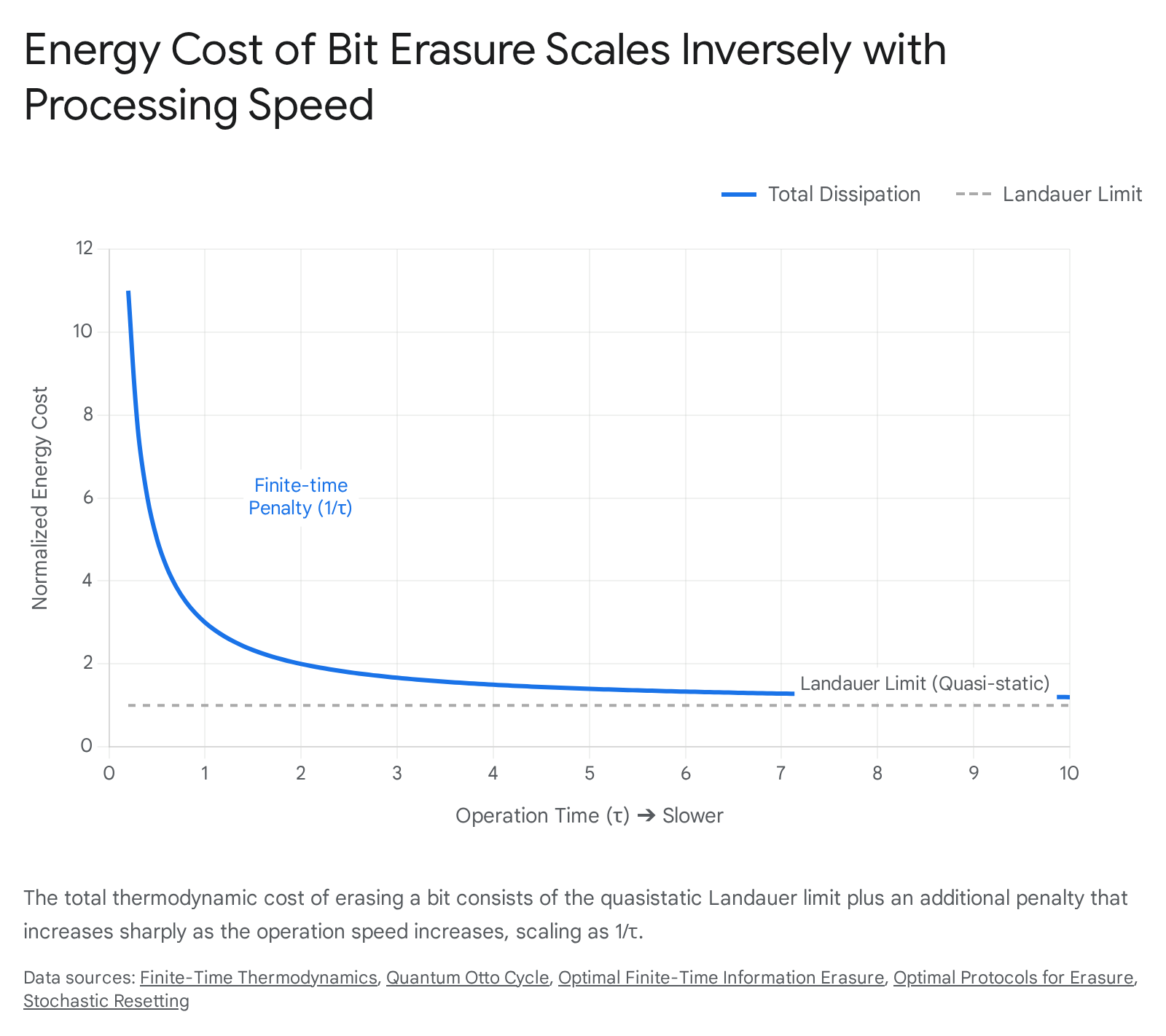

In finite-time thermodynamics, the total dissipated work $W_{diss}$ required to erase a bit comprises the quasistatic Landauer bound plus a dynamic penalty that scales inversely with the protocol duration $\tau$ 9392829. In the classical regime, a memory bit is frequently modeled as an overdamped Brownian particle diffusing within a one-dimensional double-well potential, governed by Langevin dynamics and the corresponding Fokker-Planck equations 91028.

When erasing the bit, the central potential barrier is lowered, a tilt is applied to drive the particle to the desired logical well, and the barrier is subsequently raised. Doing this rapidly requires external work to overcome the characteristic friction coefficient ($\gamma$) of the medium. The extra dissipation generated beyond the Landauer bound reflects the system lagging behind the changing potential landscape 9102830.
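The scaling described in this section can be summarized in one relation. Here $B$ is a positive, protocol-dependent coefficient introduced for illustration; it is not specified in the source and depends on the friction coefficient $\gamma$ and on the shape of the potential.

$$
\langle W_{diss} \rangle \;\ge\; k_B T \ln 2 + \frac{B}{\tau}, \qquad B > 0
$$

In the limit $\tau \to \infty$ the second term vanishes and the quasistatic Landauer bound is recovered.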

Thermodynamic Geometry and Optimal Transport

To minimize this finite-time penalty, researchers rely on thermodynamic geometry and optimal transport theory. A system can be steered along a geodesic in probability space by manipulating the external control parameters (such as the barrier height and well asymmetry) 273144. The extra dissipation is proportional to the square of the Wasserstein distance between the initial and final probability distributions, divided by the erasure time $\tau$ 3132.
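A small numerical sketch of this scaling is given below. It assumes a one-dimensional system, represents each distribution by an equal number of equally weighted sample positions, and takes the proportionality constant to be the friction coefficient $\gamma$, which is an assumption appropriate to overdamped Langevin dynamics rather than a value stated in the source. Function names and the unit choices are hypothetical.

```python
def wasserstein2_squared_1d(xs, ys):
    """Squared 2-Wasserstein distance between two equal-size 1D samples.

    In one dimension the optimal transport plan pairs the sorted samples,
    so the distance is the mean squared displacement between them.
    """
    if len(xs) != len(ys) or not xs:
        raise ValueError("samples must be non-empty and of equal size")
    pairs = zip(sorted(xs), sorted(ys))
    return sum((a - b) ** 2 for a, b in pairs) / len(xs)


def excess_dissipation_bound(xs, ys, gamma, tau):
    """Assumed finite-time penalty gamma * W2^2 / tau, in units of gamma * length^2 / time."""
    if tau <= 0:
        raise ValueError("protocol duration must be positive")
    return gamma * wasserstein2_squared_1d(xs, ys) / tau


if __name__ == "__main__":
    # Unknown bit: half the probability in each well (positions -1 and +1, arbitrary units).
    initial = [-1.0, -1.0, 1.0, 1.0]
    # Erased bit: all probability in the right-hand well.
    final = [1.0, 1.0, 1.0, 1.0]
    for tau in (1.0, 10.0, 100.0):
        penalty = excess_dissipation_bound(initial, final, gamma=1.0, tau=tau)
        print(f"tau={tau:6.1f}  excess dissipation >= {penalty:.3f}")
```

Doubling the protocol duration halves the penalty, while doubling the well separation quadruples it.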

The defining characteristic of an optimal finite-time thermodynamic protocol, achieved through techniques such as counter-diabatic driving, is a constant dissipation rate throughout the erasure process, which avoids sudden jumps in phase space that would cause large entropy spikes 2846. Even with perfectly optimized protocols, however, high-speed erasure inevitably costs substantially more than the theoretical Landauer bound.

Information Thermodynamics in the Quantum Regime

The classical formulation of Landauer's principle relies heavily on systems in thermal equilibrium coupled to ideal, infinite Markovian heat baths. However, modern quantum information processing and nanoscale thermodynamics involve systems driven far from equilibrium, exhibiting non-Markovian dynamics, strong system-bath coupling, and complex quantum coherence 14733.

Recent theoretical and experimental advances have successfully extended the Landauer framework into the quantum many-body regime. In out-of-equilibrium quantum systems, the total generalized entropy production can be decomposed into changes in quantum mutual information (correlations between the system and the environment) and the relative entropy of the environment 33435. This refines the Landauer bound by demonstrating that pre-existing quantum correlations or initial entanglement can absorb part of the entropy change, potentially modifying the apparent heat dissipation required for information erasure 36.

Quantum Many-Body Systems and Generalized Entropy

A landmark 2025 study published in Nature Physics provided direct experimental verification of Landauer's principle in a highly complex quantum many-body system 3343738. The researchers utilized a quantum field simulator based on ultracold Rubidium-87 ($^{87}\text{Rb}$) Bose gases. By applying a global mass quench that transitioned the system from a massive to a massless Klein-Gordon model, they tracked the temporal evolution of the quantum field using dynamical tomographic reconstruction 343738.

The results verified that even in an interacting, continuous multi-particle system far from equilibrium, the erasure of quantum information is strictly accompanied by a quantifiable transfer of entropy and energy to the surrounding environment 3940. The experiment demonstrated that ultracold atom platforms can accurately map the information-theoretic contributions to heat dissipation, validating semi-classical quasiparticle calculations for generalized entropy production without relying on idealized classical point particles 343738.

Quantum Coherence and Extreme Dissipation

Finite-time erasure in the quantum regime introduces phenomena entirely absent in classical stochastic thermodynamics. Rapid driving of a quantum memory protocol generates quantum coherence in the energy eigenbasis. Theoretical analyses of the full counting statistics of dissipated heat have proven that this dynamically generated coherence provides a strictly non-negative contribution to all statistical cumulants of the heat distribution 33564158.

Consequently, finite-time quantum erasure is characterized by heavy-tailed probability distributions containing "rare events" of extreme dissipation 3356. While the average heat dissipation remains bounded by the classical finite-time limits, these rare events can trigger large thermal spikes on the microscopic scale, sometimes exceeding the Landauer limit by more than a factor of 30, compared to classical protocols that rarely exceed a factor of 4 58. In the design of fault-tolerant quantum hardware, these coherence-induced extreme fluctuations pose a severe risk to fragile superconducting circuits or quantum dots that have a low tolerance for thermal noise 4658.

Implications for Hardware and Reversible Computing

For over six decades, the semiconductor industry has relied on Moore's Law and Koomey's Law, consistently shrinking transistor sizes and improving the number of computations per joule of energy dissipated 55942. However, standard complementary metal-oxide-semiconductor (CMOS) logic is approaching a fundamental thermal wall.

The End of Moore's Law and CMOS Dissipation

Conventional digital logic gates (such as AND, OR, NAND) are logically irreversible. When two input bits are condensed into a single output bit, information is erased 61. Furthermore, contemporary CMOS transistors do not merely lose $k_B T \ln 2$ of energy during this erasure. They dissipate the entire energy of the signal charge to ground during switching to maintain high noise margins and reliability 15943.

Table 3 compares the energy efficiency of modern computing paradigms against the theoretical Landauer bound at 300 K.

| Technology Paradigm | Energy per Bit Operation (Joules) | Multiples of the Landauer Limit (300 K) |
|---|---|---|
| Standard CMOS (1 fJ) | $\sim 1.00 \times 10^{-15}$ J | $\sim 348{,}313\times$ |
| Emerging Superconducting (1 aJ) | $\sim 1.00 \times 10^{-18}$ J | $\sim 348\times$ |
| Sub-$k_B T$ Experimental Models | $< 2.87 \times 10^{-21}$ J | Fractional (Quasistatic limit only) |
| Landauer Limit (Theoretical) | $\sim 2.87 \times 10^{-21}$ J | $1\times$ |
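The multiples in Table 3 are simple ratios of the per-operation energy to $k_B T \ln 2$ at 300 K. A minimal sketch of that arithmetic follows; the function name `multiples_of_landauer` is illustrative, and the 1 fJ and 1 aJ figures are the round numbers used in the table.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)


def multiples_of_landauer(energy_j, temperature_k=300.0):
    """Ratio of a per-bit operation energy to the Landauer bound k_B * T * ln(2)."""
    return energy_j / (K_B * temperature_k * math.log(2))


if __name__ == "__main__":
    for label, energy in [
        ("Standard CMOS (1 fJ)", 1.0e-15),
        ("Emerging superconducting (1 aJ)", 1.0e-18),
    ]:
        print(f"{label:32s} {multiples_of_landauer(energy):12,.0f} x Landauer")
```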

While there is theoretically a factor of $10^5$ remaining for efficiency improvements, classical two-dimensional transistor scaling is plateauing 542. The heat density of modern microprocessors restricts clock speeds and drives massive data center power consumption, projecting severe bottlenecks for artificial intelligence training workloads 4445.

Reversible Logic Gates and Adiabatic Switching

To bypass the thermal limits of irreversible erasure, researchers are developing reversible computing architectures. A reversible computer ensures that every computational step possesses a one-to-one mapping between inputs and outputs, meaning no information is ever deleted from the system until the final readout 2644.

Logical reversibility is achieved using specialized logic gates, such as the Fredkin gate (a controlled-swap gate) and the Toffoli gate (a controlled-controlled-NOT gate) 146. Because these gates preserve all input information, they do not inherently trigger the Landauer erasure cost. Furthermore, modern architectures are exploring multi-valued logic (e.g., ternary systems) using ferroelectric materials to combine memory and computation, bypassing binary limitations while maintaining distinct energy profiles 666768.
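Logical reversibility can be checked mechanically: a gate is reversible exactly when its truth table is a bijection. The sketch below implements the standard Toffoli and Fredkin truth tables and contrasts them with an ordinary AND gate; the helper `is_reversible` is a hypothetical name introduced for illustration.

```python
from itertools import product


def toffoli(a, b, c):
    """Controlled-controlled-NOT: flips c only when both a and b are 1."""
    return a, b, c ^ (a & b)


def fredkin(control, a, b):
    """Controlled-swap: swaps a and b only when the control bit is 1."""
    return (control, b, a) if control else (control, a, b)


def and_gate(a, b):
    """Conventional AND: two input bits are condensed into one output bit."""
    return (a & b,)


def is_reversible(gate, n_inputs):
    """A gate is logically reversible if distinct inputs always give distinct outputs."""
    outputs = {gate(*bits) for bits in product((0, 1), repeat=n_inputs)}
    return len(outputs) == 2 ** n_inputs


if __name__ == "__main__":
    print("Toffoli reversible:", is_reversible(toffoli, 3))
    print("Fredkin reversible:", is_reversible(fredkin, 3))
    print("AND reversible:", is_reversible(and_gate, 2))
```

Both three-bit gates are also their own inverses, so applying either one twice restores the original inputs.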

However, logical reversibility must be paired with physical reversibility to yield energy savings. Reversible circuits employ adiabatic switching, where logic signals are ramped slowly rather than switched abruptly 159. By keeping the system in near-equilibrium, the energy used to charge the gate capacitances is not discarded as heat but is instead recovered and recycled during the decomputation step using resonant circuits (such as LC resonators) 25944. Startups and researchers at national laboratories are currently fabricating reversible chips based on microelectromechanical systems (MEMS) that are capable of approaching 99% energy recovery, with projected long-term efficiency gains of up to 4,000x over traditional CMOS 5944.
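The benefit of slow ramping can be illustrated with a textbook first-order RC model, which is not taken from the source. Charging a capacitance $C$ to voltage $V$ abruptly from a fixed supply dissipates $\tfrac{1}{2} C V^2$ in the series resistance, whereas a linear ramp of duration $T_{ramp} \gg RC$ dissipates approximately $(RC / T_{ramp})\, C V^2$. The component values below are hypothetical and chosen only to show the scaling.

```python
def conventional_switching_loss(capacitance_f, voltage_v):
    """Energy in joules dissipated when a capacitor is charged abruptly from a fixed supply."""
    return 0.5 * capacitance_f * voltage_v ** 2


def adiabatic_switching_loss(resistance_ohm, capacitance_f, voltage_v, ramp_time_s):
    """Approximate loss in joules for a linear voltage ramp, valid when ramp_time >> R * C."""
    rc = resistance_ohm * capacitance_f
    if ramp_time_s <= rc:
        raise ValueError("approximation requires ramp_time_s much larger than R * C")
    return (rc / ramp_time_s) * capacitance_f * voltage_v ** 2


if __name__ == "__main__":
    # Hypothetical values: 1 kOhm series resistance, 1 fF load, 1 V swing.
    r, c, v = 1.0e3, 1.0e-15, 1.0
    abrupt = conventional_switching_loss(c, v)
    for ramp in (1.0e-10, 1.0e-9, 1.0e-8):
        slow = adiabatic_switching_loss(r, c, v, ramp)
        print(f"ramp={ramp:.0e} s  loss={slow:.1e} J  saving={abrupt / slow:.0f}x")
```

In this model the loss falls in proportion to the ramp time, so energy savings are bought directly with slower operation.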

The Thermodynamics of Error Correction

A critical barrier to perfect reversible computing is the thermodynamics of error correction. No physical system is completely immune to thermal noise or quantum fluctuations. When an error occurs in a reversible computation, correcting it requires detecting the error and resetting the corrupted bit to a known, error-free state. This is an inherently logically irreversible many-to-one mapping 14748.

Consequently, error correction itself incurs a Landauer thermodynamic cost. Biological systems provide naturally optimized examples of this phenomenon. RNA polymerase, executing DNA transcription, operates as a naturally reversible Brownian computer; however, it must constantly dissipate energy via chemical hydrolysis to proofread and correct mismatched base pairs 4748. Kinetic proofreading behaves thermodynamically like an energetically non-degenerate Brownian copying process, where undoing errors requires continuous energy dissipation 48. In digital hardware, there exists an unavoidable, multi-dimensional trade-off between the desired computational speed, the acceptable logical error rate, and the minimum energy dissipation required to correct those errors 314748.

The Mass-Energy-Information Equivalence Controversy

The deep connection between abstract information and physical entropy has led to several controversial extensions of Landauer's principle. The most notable in recent literature is the "mass-energy-information equivalence principle" proposed by Melvin Vopson in 2019 7134950.

Vopson hypothesized that if information erasure releases thermodynamic energy ($E = k_B T \ln 2$), and energy is equivalent to mass via special relativity ($E = mc^2$), then information itself must possess an intrinsic rest mass. Under this framework, the mass of a single bit of information at room temperature was calculated to be $3.19 \times 10^{-38}$ kg. This led to assertions that a digital memory device would theoretically increase in mass when filled with data, and that information constitutes a "fifth state of matter" 7135051.
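The quoted figure is straightforward to reproduce by combining the two formulas. The sketch below only checks the arithmetic of the hypothesis at 300 K, using exact SI constants, and says nothing about whether the physical interpretation is valid; the function name is hypothetical.

```python
import math

K_B = 1.380649e-23        # Boltzmann constant in J/K (exact SI value)
C_LIGHT = 299_792_458.0   # speed of light in m/s (exact SI value)


def hypothesized_bit_mass(temperature_k):
    """Mass in kg obtained by equating k_B * T * ln(2) with m * c^2."""
    return K_B * temperature_k * math.log(2) / C_LIGHT ** 2


if __name__ == "__main__":
    print(f"Hypothesized mass per bit at 300 K: {hypothesized_bit_mass(300.0):.2e} kg")
```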

However, this hypothesis has been widely criticized within the physics community. The foundational error in the mass-energy-information equivalence principle stems from confusing the abstract concept of information with its physical representation 1351. Information is not a localized physical substance; it is a structural configuration or constraint placed upon the degrees of freedom of a physical carrier 4951.

Erasing a hard drive does not destroy physical matter; it merely randomizes the magnetic orientations of the platters, moving the system from an ordered macrostate to a disordered one. The Landauer bound reflects the external work required to reduce the phase space of a system coupled to a thermal bath, not the annihilation of an intrinsic "information particle" 4950. Furthermore, attributing mass directly to information violates the third law of thermodynamics (as the entropy of representations behaves differently at absolute zero) and contradicts quantum mechanics, where information manifests non-locally through entanglement rather than localized massive carriers 495051.

Synthesis of Thermodynamic Limits in Computation

Landauer's principle endures as the definitive theoretical bridge between information theory and the physical world. By proving that the logical erasure of data necessitates a minimum thermodynamic cost, it established that computation is subject to the strict governance of statistical mechanics.

Recent research has drastically expanded the scope of this principle. Finite-time penalty calculations explicitly demonstrate why real-world computation is vastly more expensive than the quasistatic limit. Furthermore, the 2025 experimental breakthroughs in quantum many-body systems confirm that the irreversible transfer of entropy holds true even in complex, interacting, out-of-equilibrium environments. As classical semiconductor architectures collide with the thermal realities of atomic-scale fabrication, the industry is increasingly forced to look toward reversible computing frameworks, optimal transport protocols, and adiabatic circuit design. While the thermodynamic cost of error correction guarantees that computation will never be completely free of dissipation, navigating these fundamental physical limits remains the defining challenge for the future of computational scalability.