Jeff Hawkins's cortical column model for artificial intelligence

Introduction to the Sensorimotor Intelligence Paradigm

Over the past decade, artificial intelligence has advanced at an unprecedented pace, driven predominantly by the exponential scaling of deep learning architectures, convolutional neural networks (CNNs), and transformer models 11. Despite these remarkable achievements in statistical pattern matching and generative modeling, the contemporary deep learning paradigm exhibits persistent and fundamental limitations. Modern systems suffer from extreme data inefficiency, requiring thousands to millions of examples to learn conceptual relationships that biological organisms grasp in a few trials 342. Furthermore, they exhibit brittleness to out-of-distribution variations, are plagued by catastrophic forgetting when learning continuously, and demand exorbitant amounts of computational power and energy to train and deploy 434.

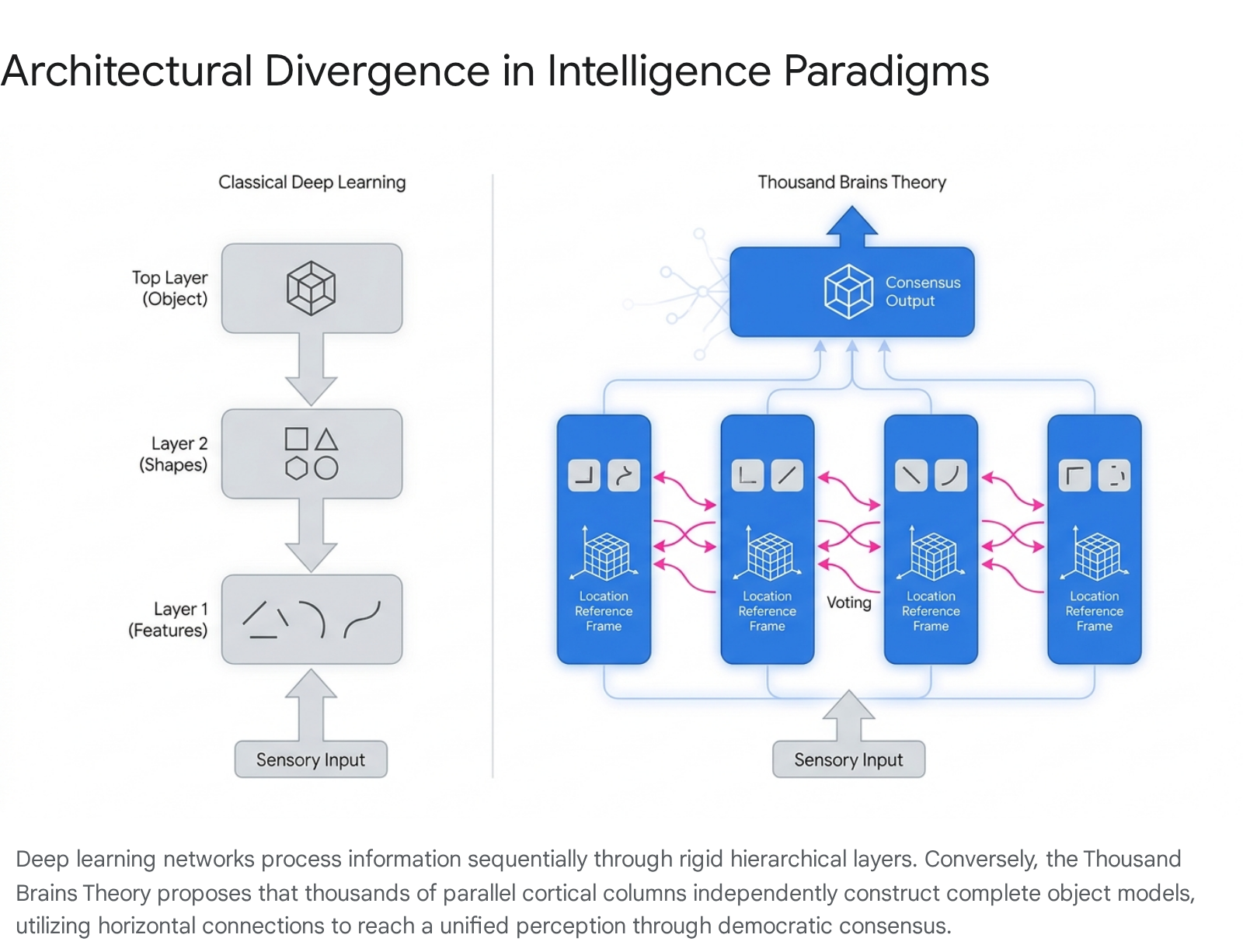

In response to these structural bottlenecks, computational neuroscience provides an alternative theoretical framework for developing artificial general intelligence. Foremost among these proposals is the Thousand Brains Theory, articulated by neuroscientist and technologist Jeff Hawkins alongside researchers at Numenta 8910. The Thousand Brains Theory challenges the foundational assumption of modern neural networks: that intelligence arises from a single, massive hierarchy of sequential feature extraction. Instead, the theory posits that the mammalian neocortex is composed of approximately 150,000 highly repetitive, semi-independent microcircuits known as cortical columns 810. According to this framework, each cortical column acts as an autonomous sensorimotor modeling system capable of learning complete representations of objects and concepts within its own spatial reference frame 105.

Unified perception, therefore, does not occur at a singular "top" layer of a processing hierarchy. Rather, it emerges organically through a decentralized voting mechanism operating across thousands of parallel models 613. This report comprehensively examines the neurobiological mechanisms underlying the Thousand Brains Theory, traces its architectural divergence from traditional deep learning and capsule networks, explores its applied instantiation in the open-source Monty architecture, and assesses the empirical neuroscientific evidence both supporting and challenging the framework.

Biological Foundations of the Universal Cortical Algorithm

To understand the Thousand Brains Theory, it is necessary to examine the anatomical and physiological realities of the human neocortex - the highly convoluted outer layer of the brain responsible for sensory perception, motor command, spatial reasoning, and conscious thought 87.

Structural Homogeneity and Cortical Columns

A foundational premise of the Thousand Brains Theory is the structural uniformity of the neocortex, an observation originally popularized by neurobiologist Vernon Mountcastle in the 1970s 858. Mountcastle noted that across disparate regions of the neocortex - whether processing visual data from the optic nerve, auditory data from the cochlea, or tactile data from the skin - the physical six-layered architecture of the tissue remains remarkably consistent 8169.

The Thousand Brains Theory argues that this anatomical uniformity implies a universal computational algorithm 91810. The brain does not utilize fundamentally different mathematical processes for seeing, hearing, or touching. Instead, it achieves functional diversity by applying the same generalized sensorimotor algorithm to different streams of sensory input 91810. The fundamental unit of this universal computation is the cortical column, a vertical grouping of neurons spanning the six layers of the neocortex. Estimates of column size depend on the level of organization considered: larger aggregated columns are approximately one to two millimeters across and contain thousands of interconnected neurons, whereas their constituent mini-columns are only a few tens of micrometers wide 81611.

Parallel Object Modeling

In classic hierarchical models of vision, input moves through increasingly abstract layers. Primary sensory regions identify simple edges; intermediate regions identify curves and shapes; and high-level temporal regions identify complete objects, such as a face or a coffee cup 5613. The Thousand Brains Theory radically departs from this flowchart model. It proposes that every cortical column - even those in lower-level sensory regions - operates as a complete learning machine attempting to build a comprehensive model of the entire object being perceived 5613.

Because a single sensor patch (such as a localized region of the retina or the tip of a single finger) can only perceive a fraction of a coffee cup at any given moment, the column cannot deduce the object's full identity from a static snapshot. Instead, the column relies heavily on active movement. By integrating sequential sensory inputs over time as the eye saccades or the finger traces the cup's surface, the local column constructs a reliable, three-dimensional representation of the object 1056. Learning and perception are therefore defined as active, sensorimotor processes rather than passive data consumption 210.

Mechanisms of Spatial Reasoning and Consensus

If individual cortical columns independently model complete objects, they require internal mechanisms to track spatial geometry, define compositional relationships, and resolve conflicting observations. The Thousand Brains Theory posits three specific neural mechanics to achieve this: cortical grid cells, displacement cells, and lateral voting.

Cortical Grid Cells and Allocentric Reference Frames

To map sequential sensory inputs into a coherent object model, cortical columns must possess an internal coordinate system. A central hypothesis of the Thousand Brains Theory is that every cortical column contains neurons functionally analogous to grid cells 56.

Grid cells were originally discovered in the entorhinal cortex, an older region of the brain, where they serve as a spatial navigation system - representing the location of an animal's body relative to its physical environment 5621. The activation locations of grid cells form a periodic, hexagonal lattice, providing a metric for physical space 2122. The Thousand Brains Theory hypothesizes that the neocortex evolutionarily co-opted this spatial mapping mechanism to understand the structure of objects 5623.
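
The periodic, hexagonal firing pattern described above is often idealized as the sum of three cosine gratings whose orientations differ by 60 degrees. The sketch below uses that textbook idealization rather than anything specific to the Thousand Brains literature; the function name `grid_rate` and the `spacing` parameter are assumptions made for illustration.

```python
import math

def grid_rate(x: float, y: float, spacing: float = 1.0) -> float:
    """Idealized grid-cell activation (at most 1.0) at position (x, y).

    Sums three plane waves oriented 60 degrees apart. The peaks form a
    hexagonal lattice whose nearest-neighbor distance is `spacing`.
    """
    k = 4 * math.pi / (math.sqrt(3) * spacing)  # wave number for the chosen spacing
    total = 0.0
    for angle_deg in (0, 60, 120):
        theta = math.radians(angle_deg)
        total += math.cos(k * (x * math.cos(theta) + y * math.sin(theta)))
    return total / 3

print(round(grid_rate(0.0, 0.0), 3))  # a lattice peak at the origin
print(round(grid_rate(0.0, 1.0), 3))  # a neighboring peak one spacing away
print(round(grid_rate(0.5, 0.0), 3))  # a point between peaks
```

Because the pattern repeats, a single such cell cannot identify a location on its own; models of this kind usually combine several cells or modules with different spacings and phases.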

These theorized "cortical grid cells" represent the location of a specific sensor patch relative to the external, allocentric reference frame of the object being observed 5. As a finger moves across a coffee cup, cortical grid cells update the representation of the fingertip's location within the cup's own three-dimensional reference frame 51312. This updating process is driven by motor efference copies - internal neural signals detailing the intended movement of the sensory organ 512. The column continuously pairs incoming sensory features with these grid-cell-derived locations, learning the object topologically as a set of specific features at specific spatial coordinates 512.
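
The pairing of features with locations can be sketched as a small data structure. This is a toy illustration, not Numenta's implementation: the class `ColumnSketch`, its methods, and the integer coordinates are all hypothetical, and the efference copy is reduced to a plain displacement tuple.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Tuple

Location = Tuple[int, int, int]

@dataclass
class ColumnSketch:
    """Toy stand-in for one column: features stored at object-centric locations."""
    location: Location = (0, 0, 0)  # current sensor location in the object's frame
    model: Dict[Location, str] = field(default_factory=dict)

    def move(self, displacement: Location) -> None:
        # Path integration: update the location from the motor command alone.
        self.location = tuple(a + b for a, b in zip(self.location, displacement))

    def sense(self, feature: str) -> None:
        # Learning: bind the sensed feature to the current location.
        self.model[self.location] = feature

    def predict(self) -> Optional[str]:
        # Recall what was previously sensed at the current location, if anything.
        return self.model.get(self.location)

column = ColumnSketch()
column.sense("smooth curve")   # fingertip starts on the side of the cup
column.move((0, 0, 5))         # slide up to the top
column.sense("sharp rim")
column.move((0, 0, -5))        # slide back down
print(column.predict())        # the column anticipates the feature it learned here
```

The point of the sketch is that prediction depends on where the sensor is, which is why movement information is required in addition to the sensed feature.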

Displacement Cells and Object Compositionality

Objects in the real world are highly compositional; a coffee cup may possess a distinct logo, and a bicycle is composed of wheels, a frame, and handlebars. To account for how the brain learns complex, nested objects without requiring an exponentially growing number of neural representations, the theory introduces the concept of "displacement cells" 52513.

Displacement cells are hypothesized to calculate the spatial relationship between two distinct reference frames. They determine a "displacement vector" that represents the fixed, one-to-one geometrical relationship between the location space of a sub-object (such as the logo) and the location space of the parent object (the cup) 5. This mathematical vector allows the neocortex to represent a novel compositional object not by learning it from scratch, but as an efficient spatial arrangement of previously established object models 513.
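
A minimal sketch of this idea follows, assuming the displacement is a pure translation (rotation and scale are ignored for brevity). The names `to_parent_frame`, `logo_model`, and `logo_on_cup`, along with all coordinates, are invented for the example.

```python
from typing import Dict, Tuple

Location = Tuple[int, int, int]

def to_parent_frame(child_location: Location, displacement: Location) -> Location:
    """Map a location in the child's frame into the parent's frame (translation only)."""
    return tuple(c + d for c, d in zip(child_location, displacement))

# Previously learned model of the logo, expressed in the logo's own reference frame.
logo_model: Dict[Location, str] = {
    (0, 0, 0): "logo corner",
    (2, 1, 0): "logo edge",
}

# Hypothetical displacement: where the logo's origin sits in the cup's frame.
logo_on_cup: Location = (5, 3, 0)

# The composite object reuses the logo model; only the displacement is new.
cup_features = {
    to_parent_frame(location, logo_on_cup): feature
    for location, feature in logo_model.items()
}
print(cup_features)
```

Placing the same logo on a different object would require only a different displacement, not a relearned logo model.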

Decentralized Consensus Through Horizontal Voting

With approximately 150,000 cortical columns simultaneously generating models of the environment, the brain must reconcile ambiguous or conflicting data to produce a unified conscious perception 1027. If a person touches a coffee cup while blindfolded, multiple columns connected to different fingertips receive partial, highly ambiguous sensory evidence. One finger may sense a curved surface, while another senses a sharp rim.

The theory proposes that consensus is achieved through extensive, long-range horizontal neural connections traversing different cortical regions 513. Columns that have formed a probability distribution regarding an object's identity communicate laterally. They engage in a continuous "voting" process, broadcasting their local hypotheses 10613. The network rapidly converges on the singular consensus that best satisfies the collective sensory evidence of all participating columns. This decentralized sensor fusion explains how humans can instantly identify complex objects in a single glance or grasp, even if the localized data arriving at individual cortical columns is insufficient for identification 56.
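
One simple way to illustrate such voting is to multiply each column's belief over candidate objects and renormalize. This is an assumed stand-in for the biological mechanism, not a description of it; the function `vote` and the belief values are hypothetical.

```python
import math
from typing import Dict, List

def vote(column_beliefs: List[Dict[str, float]]) -> Dict[str, float]:
    """Combine per-column beliefs by multiplying them and renormalizing."""
    if not column_beliefs:
        raise ValueError("at least one column is required")
    # Only objects that every column still considers possible can win the vote.
    candidates = set.intersection(*(set(b) for b in column_beliefs))
    scores = {obj: math.prod(b[obj] for b in column_beliefs) for obj in candidates}
    total = sum(scores.values())
    if total == 0:
        raise ValueError("columns share no object with non-zero belief")
    return {obj: score / total for obj, score in scores.items()}

# Each fingertip alone is ambiguous; together they favor one object.
finger_on_side = {"cup": 0.5, "bowl": 0.4, "can": 0.1}
finger_on_rim = {"cup": 0.5, "bowl": 0.1, "can": 0.4}

consensus = vote([finger_on_side, finger_on_rim])
print(max(consensus, key=consensus.get))
```

Neither column is confident on its own, yet the combined evidence clearly favors the one object both consider likely.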

Divergence from Deep Learning and Capsule Networks

The architectural proposals of the Thousand Brains Theory stand in stark contrast to the dominant methodologies in contemporary artificial intelligence.

Limitations of Rigid Hierarchical Feature Extraction

Current deep learning systems, particularly Convolutional Neural Networks (CNNs) and Vision Transformers (ViTs), rely heavily on hierarchical abstraction 5614. Sensory input ascends through successive layers; simple filters at the base detect edges, intermediate layers combine these into textures and shapes, and terminal layers categorize the complete object 513.

However, this hierarchical flow struggles with spatial translation, 3D rotation, and compositional structure without exhaustive training data containing every conceivable variation 41516. Because deep learning systems implicitly memorize statistical correlations rather than explicitly mapping spatial coordinate systems, their internal representations remain highly brittle 42. The Thousand Brains framework replaces this deep, rigid hierarchy with a relatively flat, parallel array of generalized modeling units, anchoring knowledge directly to intrinsic 3D reference frames 623.

A Comparison with Capsule Networks

The limitations of standard CNNs regarding spatial relationships prompted the development of Capsule Networks (CapsNets), championed by Geoffrey Hinton. Given that both CapsNets and the Thousand Brains Theory draw explicit inspiration from biological cortical columns and emphasize spatial part-whole relationships, they are frequently compared 15313233.

Capsule Networks replace scalar-valued neurons with vector-valued "capsules," where the vector encodes both the probability of a feature's presence and its instantiation parameters, such as pose, scale, and deformation 151633. The defining mechanism of CapsNets is "dynamic routing by agreement," a bottom-up algorithmic process where lower-level capsules iteratively send their outputs to higher-level capsules whose predictions most closely match their own, dynamically constructing a parse tree of the visual scene 163334.
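
The routing loop can be sketched in plain Python. The version below follows the commonly described procedure (softmax coupling coefficients, a "squash" nonlinearity, and an agreement update based on dot products); it omits the learned transformation matrices, and the toy prediction vectors in `u_hat` are invented for illustration.

```python
import math
from typing import List

def squash(s: List[float]) -> List[float]:
    """Shrink a vector so its length lies in [0, 1) while keeping its direction."""
    norm_sq = sum(x * x for x in s)
    if norm_sq == 0:
        return [0.0] * len(s)
    scale = norm_sq / (1 + norm_sq) / math.sqrt(norm_sq)
    return [scale * x for x in s]

def softmax(xs: List[float]) -> List[float]:
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def route(u_hat, iterations: int = 3):
    """u_hat[i][j] is lower capsule i's prediction vector for upper capsule j."""
    n_in, n_out, dim = len(u_hat), len(u_hat[0]), len(u_hat[0][0])
    logits = [[0.0] * n_out for _ in range(n_in)]
    for _ in range(iterations):
        coupling = [softmax(row) for row in logits]
        outputs = []
        for j in range(n_out):
            s = [sum(coupling[i][j] * u_hat[i][j][d] for i in range(n_in))
                 for d in range(dim)]
            outputs.append(squash(s))
        # Agreement step: raise the logit where a prediction matches the output.
        for i in range(n_in):
            for j in range(n_out):
                logits[i][j] += sum(a * b for a, b in zip(u_hat[i][j], outputs[j]))
    return outputs, coupling

# Three lower capsules agree about upper capsule 0 and disagree about capsule 1.
u_hat = [
    [[1.0, 0.0], [1.0, 0.0]],
    [[1.0, 0.0], [-1.0, 0.0]],
    [[1.0, 0.0], [0.0, 1.0]],
]
outputs, coupling = route(u_hat)
lengths = [math.sqrt(sum(x * x for x in v)) for v in outputs]
print([round(length, 3) for length in lengths])
```

The nested loops make the cost visible: every routing iteration touches every pair of lower and upper capsules, which is the overhead the following paragraph refers to.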

While theoretically elegant, Capsule Networks face severe scalability barriers. The iterative dynamic routing process incurs massive computational overhead 1633. While successful on small toy datasets like MNIST, standard CapsNet architectures struggle to scale to high-resolution benchmarks like ImageNet, suffering from vanishing gradients and capsule "starvation" where many capsules fail to receive gradients during training 16. The best performing capsule implementations hover around 60% top-1 accuracy on ImageNet, trailing far behind state-of-the-art CNNs and Transformers that exceed 90% 16.

The Thousand Brains Theory circumvents the computational bottlenecks of dynamic routing by avoiding strict parse-tree construction 1016. Instead of forcing a bottom-up routing consensus, it replicates complete object models horizontally across parallel columns, utilizing a single lateral voting step to resolve ambiguities 105. Furthermore, whereas CapsNets typically process static spatial arrays, Thousand Brains strictly enforces active, temporal sensorimotor integration to build its representations 210.

| Architectural Feature | Standard Deep Learning (CNNs / ViTs) | Capsule Networks (CapsNets) | Thousand Brains Theory (TBT) |
|---|---|---|---|
| Fundamental Computational Unit | Scalar node / Point neuron | Vector-valued "Capsule" 16 | Cortical Column / Learning Module 12 |
| Spatial Grounding | Implicitly learned via massive data augmentation | Explicitly represented via pose vectors/instantiation parameters 1533 | Explicitly structured via allocentric grid cell reference frames 5 |
| Object Representation Location | Distributed statistically across the highest terminal layers 5 | Assembled hierarchically via structural parse trees 16 | Fully replicated as complete models across many parallel columns 56 |
| Consensus Mechanism | Top-down loss minimization (e.g., Softmax) | Bottom-up dynamic routing by agreement 3334 | Lateral horizontal voting across parallel models 56 |
| Role of Time and Movement | Typically static observation (unless using specialized recurrent architectures) | Typically static image analysis 33 | Essential and mandatory; relies on sensorimotor path integration 210 |
| Identified Scalability Bottlenecks | Exponential data hunger, O(N^2) quadratic scaling constraints 14 | High computational cost of dynamic routing, vanishing gradients 16 | Nascent software implementations; unproven at LLM scale for abstract reasoning 3536 |

Mitigating Catastrophic Forgetting via Active Dendrites

Beyond spatial modeling, the artificial implementation of neocortical principles seeks to resolve "catastrophic forgetting" - the phenomenon where deep neural networks rapidly overwrite previously acquired knowledge when trained on a novel task sequentially 43717.

In standard deep learning, artificial neurons are abstracted as "point neurons" that compute a single weighted sum of all inputs. Conversely, biological pyramidal neurons feature highly complex, branching dendritic trees capable of localized, non-linear computation independent of the cell body 1840. The Thousand Brains paradigm incorporates these active dendritic properties, demonstrating that they drastically increase the computational expressivity of single neurons 1840.

When researchers integrated active dendrites into artificial Spiking Neural Networks (SNNs) and standard deep learning frameworks, the networks naturally partitioned themselves into distinct, highly sparse, context-specific subnetworks 41819. Because representations are enforced to be extraordinarily sparse (e.g., maintaining activation densities of less than 10%), a new task only modifies a highly segregated subset of dendritic synapses, leaving the parameters of previously learned tasks functionally undisturbed 31719.
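
The gating idea can be illustrated with a small layer in which a context vector modulates each unit through its dendritic segments, followed by a k-winners-take-all step that enforces sparsity. This is a simplified sketch under assumed names (`active_dendrite_layer`, `segments`, `k`) and hand-picked weights, not the published architecture or its training procedure.

```python
import math
from typing import List, Sequence

def dot(a: Sequence[float], b: Sequence[float]) -> float:
    return sum(x * y for x, y in zip(a, b))

def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))

def active_dendrite_layer(x, context, weights, segments, k: int) -> List[float]:
    """Feedforward drive gated by dendritic segments, then k-winners-take-all."""
    modulated = []
    for w, unit_segments in zip(weights, segments):
        feedforward = dot(w, x)
        # The segment responding most strongly to the context gates the unit.
        strongest = max((dot(seg, context) for seg in unit_segments), key=abs)
        modulated.append(feedforward * sigmoid(strongest))
    # Keep only the k most active units; all others are silenced.
    winners = sorted(range(len(modulated)), key=lambda i: modulated[i], reverse=True)[:k]
    return [m if i in winners else 0.0 for i, m in enumerate(modulated)]

x = [1.0, 1.0]
weights = [[1.0, 1.0]] * 4                       # identical feedforward drive
segments = [[[5.0, -5.0]], [[5.0, -5.0]],        # units 0 and 1 prefer context A
            [[-5.0, 5.0]], [[-5.0, 5.0]]]        # units 2 and 3 prefer context B

out_a = active_dendrite_layer(x, [1.0, 0.0], weights, segments, k=2)
out_b = active_dendrite_layer(x, [0.0, 1.0], weights, segments, k=2)
active_a = [i for i, v in enumerate(out_a) if v != 0.0]
active_b = [i for i, v in enumerate(out_b) if v != 0.0]
print(active_a, active_b)
```

Although the feedforward input is identical in both calls, the two contexts activate disjoint sets of units, so a weight update driven by one context would leave the other subnetwork's active units untouched.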

Independent benchmarks on continual learning tasks substantiate these claims. When tested on the standard Split MNIST and Permuted MNIST datasets, models enhanced with active dendrites and time-to-first-spike (TTFS) encodings maintained an end-of-training test accuracy of 88.3% across sequential tasks, representing a marginal 8.7% drop compared to the severe 27.6% degradation observed in identical networks lacking dendritic modulation 1719. Similar competitive results have been recorded in multi-task reinforcement learning environments like Meta-World, marking a rare instance of a single architecture succeeding in both domains 43719.

The Thousand Brains Project and the Monty Architecture

To bridge the gap between theoretical neuroscience and practical artificial intelligence, Numenta spun out the "Thousand Brains Project" (TBP) as an independent, open-source non-profit entity in late 2024, directed by Dr. Viviane Clay 2021. The initiative provides developers with an open-source framework, releasing IP under a permissive MIT license to catalyze a community of researchers dedicated to building sensorimotor intelligence 2122.

The Architecture of Monty

The flagship instantiation of the Thousand Brains framework is an artificial agent named "Monty" 12246. Designed specifically to learn robust 3D object representations and execute complex tasks through environmental interaction, Monty utilizes two primary architectural components:

- Sensor Modules (SM): These modules act as interfaces with the external environment, ingesting modality-specific raw input - whether from cameras (vision), robotic tactile arrays (touch), or LiDAR 223. The SM's function is to convert this diverse sensory stream into a standardized, universal format.

- Learning Modules (LM): Serving as the artificial equivalent of biological cortical columns, LMs receive the standardized input from the SMs 12. Rather than utilizing traditional backpropagation, LMs employ rapid, associative Hebbian learning. They continuously pair incoming sensory vectors with internal spatial reference frames to build structured topological models of the environment 146.

The Cortical Messaging Protocol (CMP)

The integration of these distinct modules is mediated by the Cortical Messaging Protocol (CMP). Because all Sensor Modules output data into the standardized CMP format, multiple Learning Modules can interact, share abstractions, and execute lateral voting effortlessly, regardless of the underlying sensory modality 124623.
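
The value of a shared message format can be sketched as follows. The field names, module functions, and `LearningModule` class are hypothetical and are not taken from the Monty codebase; the only assumption carried over from the text is that every sensor module emits the same kind of message regardless of modality.

```python
from dataclasses import dataclass
from typing import Dict, Tuple

Vec3 = Tuple[float, float, float]

@dataclass(frozen=True)
class Message:
    """Hypothetical common message: features observed at a location and orientation."""
    sender: str
    location: Vec3      # where the observation was made
    orientation: Vec3   # e.g. a surface normal at that location
    features: Dict[str, float]

def camera_sensor_module(rgb: Tuple[int, int, int], location: Vec3, normal: Vec3) -> Message:
    # Modality-specific processing stays inside the sensor module.
    r, g, b = rgb
    return Message("camera_0", location, normal, {"brightness": (r + g + b) / (3 * 255)})

def touch_sensor_module(pressure: float, location: Vec3, normal: Vec3) -> Message:
    return Message("finger_0", location, normal, {"pressure": pressure})

class LearningModule:
    """Stores features by location without knowing which modality produced them."""

    def __init__(self) -> None:
        self.model: Dict[Vec3, Dict[str, float]] = {}

    def observe(self, message: Message) -> None:
        self.model.setdefault(message.location, {}).update(message.features)

lm = LearningModule()
spot, normal = (0.0, 0.1, 0.0), (0.0, 0.0, 1.0)
lm.observe(camera_sensor_module((255, 255, 255), spot, normal))
lm.observe(touch_sensor_module(0.4, spot, normal))
print(lm.model)
```

Because the learning module only ever sees `Message` objects, adding a new sensor type in this sketch means writing one new conversion function and nothing else.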

This strict modularity naturally resolves the persistent challenge of multi-modal data fusion. By establishing decentralized consensus across varying data streams, Monty avoids the necessity of training massive, monolithic end-to-end networks. Instead, complex task execution emerges from the combined propositions of thousands of semi-independent, localized models 12323.

Hardware Efficiency and Sparsity Benchmarks

The computational requirements of contemporary deep learning models have precipitated an energy crisis in AI, with massive GPU clusters consuming megawatts of power to execute dense matrix multiplications 4324. In stark contrast, the human brain operates on approximately 20 watts of power 3. The Thousand Brains approach attempts to emulate this efficiency by prioritizing extreme activation and weight sparsity.

Because information in a neocortical model is stored as Sparse Distributed Representations (SDRs) - where only a small fraction of neurons are active at any given moment - artificial implementations require significantly fewer active parameters during inference 3918.
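
A short sketch shows why such sparse codes tolerate noise. An SDR is modeled here as the set of indices of its active bits; the width of 2048 bits and the 40 active bits (about 2%) are assumed values chosen for illustration, as are the function names.

```python
import random

N_BITS = 2048    # assumed SDR width
N_ACTIVE = 40    # assumed number of active bits (about 2%)

def random_sdr(rng: random.Random) -> frozenset:
    """Return the indices of the active bits of a random SDR."""
    return frozenset(rng.sample(range(N_BITS), N_ACTIVE))

def add_noise(sdr: frozenset, n_moved: int, rng: random.Random) -> frozenset:
    """Move `n_moved` active bits to randomly chosen inactive positions."""
    kept = rng.sample(sorted(sdr), len(sdr) - n_moved)
    inactive = [i for i in range(N_BITS) if i not in sdr]
    return frozenset(kept) | frozenset(rng.sample(inactive, n_moved))

def overlap(a: frozenset, b: frozenset) -> int:
    """Number of active bits the two representations share."""
    return len(a & b)

rng = random.Random(0)
cup, bowl = random_sdr(rng), random_sdr(rng)
noisy_cup = add_noise(cup, 10, rng)

print(overlap(cup, noisy_cup))  # shared bits after a quarter of them moved
print(overlap(cup, bowl))       # shared bits between unrelated codes
```

Even with a quarter of its bits corrupted, the noisy code shares far more active bits with the original than two unrelated codes are expected to share by chance, so a simple overlap threshold can separate them.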

Numenta and independent hardware engineers have benchmarked the performance of these sparse algorithms mapped to commodity hardware. In inference tests on the Google Speech Commands (GSC) dataset, run on Xilinx Field Programmable Gate Arrays (FPGAs), networks built on neocortical sparsity principles matched the accuracy of dense CNNs (96.4% to 96.9%) while removing 95% of the weights 3. This structural sparsity allowed the hardware to achieve a reported 100x acceleration in inference speed 3. Additional tests deploying time-to-first-spike (TTFS) continual-learning architectures on Xilinx Zynq-7020 SoC FPGAs reported average inference times of 37.3 milliseconds 17.
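
Some back-of-the-envelope arithmetic helps put these figures in context. Removing 95% of the weights leaves a weight density of 0.05, which bounds the reduction in multiply-accumulate operations from weight sparsity alone at 20x; larger measured speedups must therefore draw on additional factors. The sketch below shows how a second factor would multiply in. The activation density of 0.2 is a hypothetical value chosen only to demonstrate the arithmetic and is not a figure from the cited benchmark.

```python
def ideal_speedup(weight_density: float, activation_density: float = 1.0) -> float:
    """Upper bound on the reduction in multiply-accumulates versus a dense layer."""
    if not (0 < weight_density <= 1 and 0 < activation_density <= 1):
        raise ValueError("densities must be in the interval (0, 1]")
    return 1.0 / (weight_density * activation_density)

weights_only = ideal_speedup(0.05)           # 95% of weights removed
with_activations = ideal_speedup(0.05, 0.2)  # hypothetical activation density

print(round(weights_only, 1))
print(round(with_activations, 1))
```

These are idealized operation counts; real hardware gains also depend on memory access patterns and on how well the sparse structure maps to the device.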

Furthermore, benchmarks presented in late 2024 demonstrated the viability of these algorithms on enterprise central processing units (CPUs). When running large language models (such as customized variants of BERT Large, Llama 2 7B, and GPT 7B) on a 5th-generation Intel Xeon CPU, the sparsely optimized models achieved up to 20 times the power efficiency (throughput per watt) of standard dense models running on high-end NVIDIA A100 GPUs 25. While these metrics reflect specifically optimized inference workloads rather than generalized foundation model training, they support the hypothesis that algorithmic sparsity can substantially reduce AI's reliance on specialized, high-power silicon 25.

| Benchmark Context | Target Hardware | Baseline Hardware / Model | Reported Performance Improvement | Key Enabler |
|---|---|---|---|---|
| Audio Inference (GSC Dataset) | Xilinx FPGA | Standard Dense CNN | 100x increase in inference speed, matching accuracy (~96.5%) 3 | 95% removal of network weights via active sparsity 3 |
| Continual Learning (Split MNIST) | Xilinx Zynq-7020 SoC FPGA | Standard Dense ANN | 37.3 ms inference time; mitigated catastrophic forgetting (88.3% accuracy) 17 | Time-to-first-spike (TTFS) encoding and active dendritic modulation 17 |
| LLM Inference Power Efficiency | Intel 5th Gen Xeon CPU | NVIDIA A100 GPU (BERT Large) | 20x improvement in throughput per watt (power efficiency) 25 | Neocortical algorithmic sparsity and localized processing 25 |
| LLM Inference Throughput | Intel 5th Gen Xeon CPU | NVIDIA A100 GPU (Llama 2 7B / GPT 7B) | 3x to 6x acceleration in raw throughput 25 | Algorithmic optimization mapping to CPU cache architectures 25 |

Empirical Neurobiological Evidence

Given that the Thousand Brains Theory posits precise functional roles for neocortical structures, its validity is heavily contingent upon empirical neurobiological verification. Over recent years, researchers independent of Numenta have mapped the brain for evidence of cortical grid cells and the anatomical trans-columnar networks required for horizontal voting.

Discoveries of Cortical Grid Cells

Grid cells in the medial entorhinal cortex (MEC) are widely accepted as a core neural substrate of spatial navigation 526. The Thousand Brains Theory goes further, hypothesizing that functionally analogous cells exist throughout the neocortex, where they map the object and conceptual spaces of the environment 56.

Functional magnetic resonance imaging (fMRI) studies led by Doeller et al. provided early evidence supporting this, identifying hexadirectional, grid-like signals with six-fold symmetry not only in the entorhinal cortex, but also distributed across medial prefrontal, parietal, and visual cortices 2126. More recently, single-unit electrophysiology has corroborated these macroscopic findings. Jacobs et al., utilizing direct neural recordings in human epilepsy patients navigating virtual environments, recorded 893 individual cells and identified robust grid-like codes within the human cingulate cortex 2126.

Crucially, in the context of physical object modeling, Long et al. deployed multiple tetrode arrays in freely moving rats and identified cells exhibiting grid cell, place cell, and conjunctive cell responses directly within the primary somatosensory cortex (S1) - with 3.55% of the recorded somatosensory cells firing strictly as grid cells 2627. Furthermore, research by Constantinescu et al. and subsequent fMRI studies by Peters-Founshtein have reported evidence that these grid-cell representations extend beyond spatial geometry to map abstract mental concepts, such as tracking non-spatial trajectories in conceptual "age-day" cognitive maps 528. These findings are consistent with the theory's assertion that the brain maps physical objects and abstract concepts using a common, grid-based coordinate framework 528.

Anatomical Mapping of Trans-Columnar Networks

If cortical columns resolve sensory ambiguity via lateral voting, neuroanatomy must reveal an extensive network of long-range horizontal connections linking these columns. High-resolution, three-dimensional cellular reconstructions are consistent with this structural layout 71129.

Research teams spanning the Max Planck Institute for Biological Cybernetics and the RIKEN Brain Science Institute utilized two-photon imaging and cell-specific labeling to trace the axonal projections of over 150 individual neurons in the rodent vibrissal (whisker) sensory domain 729. Toshihiko Hosoya and colleagues discovered a striking hexagonal lattice of microcolumns repeating approximately every 40 microns in layer V of the cortex, exhibiting highly synchronized activity 7.

Most significantly, the reconstructions revealed that the majority of a neuron's axon projects far beyond the borders of its native cortical column, heavily interconnecting with surrounding columns that process entirely different sensory patches 29. This dense, asymmetric trans-columnar circuitry offers a plausible physical substrate for the decentralized, horizontal voting mechanism postulated by the Thousand Brains framework 5729.

Critiques, Biological Plausibility, and AGI Scalability

While empirical anatomical data provides substantial backing, the Thousand Brains Theory faces rigorous debate within both the neuroscience and machine learning communities regarding its scope, physiological interpretation, and immediate viability for achieving Artificial General Intelligence (AGI).

Debates on Biological Plausibility

A primary critique of the theory concerns the degree of computational autonomy assigned to low-level sensory regions. In the consensus model of neuroscience, primary sensory areas (such as V1 in the visual cortex) act merely as feature detectors for spots of light or oriented edges; the resolution of complete objects (e.g., recognizing a face) is believed to occur strictly in higher-order regions like the inferior temporal lobe and the fusiform face area (FFA) 562330.

Critics argue that asserting every cortical column - including those in V1 - learns a complete, 3D topological model of complex objects contradicts decades of receptive field mapping 2330. Furthermore, skeptics argue that the established interaction between the entorhinal cortex (for location) and the hippocampus (for binding features to location) is entirely sufficient for object recognition, rendering the hypothesis of ubiquitous cortical grid cells theoretically unnecessary 30. Proponents of the Thousand Brains Theory counter this by emphasizing the brain's massive redundancy and evolutionary history. While the hippocampus evolved for rapid episodic spatial mapping, the neocortex repurposed these grid-cell mechanics to enable highly distributed, parallel object modeling, explaining why localized cortical damage rarely results in total catastrophic amnesia for learned objects 132318.

Modularity and the Challenge of Generalization

In the realm of applied artificial intelligence, the limitations of the Thousand Brains paradigm center heavily on scalability and domain generalization. Contemporary Large Language Models (LLMs) and diffusion models have achieved profound, albeit statistically driven, zero-shot generalization by ingesting massive text and image corpora 436.

Conversely, sensorimotor agents like Monty are nascent and require complex embodied interaction - whether via robotic actuators or 3D physics simulators - to actively build their spatial models 121335. It remains highly challenging to feed the entirety of the static internet into a system that strictly demands active sensorimotor loops. Critics assert that while modular, sensorimotor systems elegantly solve catastrophic forgetting and robust 3D physical reasoning, they currently lack the necessary inductive biases to parse the nuances of abstract human formal language at the scale of a multi-billion-parameter transformer 436.

Additionally, theoretical machine learning studies simulating modular network configurations observe that, without heavily engineered routing algorithms, independent modules often fail to automatically discover latent task structures or specialize efficiently when faced with unknown, out-of-distribution real-world data 436. Whether the Cortical Messaging Protocol (CMP) can scale beyond localized object perception to seamlessly support higher-order abstract reasoning - effectively matching the generalized functional skills of the human brain - remains the central developmental challenge for the Thousand Brains Project 3536.

Conclusion

The Thousand Brains Theory presents a biologically grounded challenge to the prevailing deep learning paradigm. By replacing a single, centralized processing hierarchy with approximately 150,000 parallel, voting cortical columns, the theory offers a mechanistic account of how intelligence could emerge from sensorimotor interaction, spatial reference frames, and decentralized consensus.

While deep learning continues to yield impressive statistical approximations of intelligence through massive scaling, it remains fundamentally constrained by its immense power consumption, structural brittleness, and susceptibility to catastrophic forgetting. The hardware efficiency and continuous learning capabilities demonstrated by active-dendrite algorithms and the Monty architecture highlight a viable, highly efficient path forward. By relying on extreme algorithmic sparsity and topological modeling, artificial agents can begin to learn like biological organisms - actively, contextually, and continuously.

Though debates regarding the precise distribution of object models within the primary sensory cortex persist, emerging fMRI and single-cell evidence of grid-cell-like coding outside the entorhinal cortex is consistent with the theory's predictions. As the Thousand Brains Project expands its open-source ecosystem, the ongoing fusion of neocortical anatomy with artificial intelligence stands as a credible route toward robust and embodied general intelligence.