The Importance of Search Intent in SEO for 2026

The mechanics of search engine optimization have undergone a profound structural shift over the past decade, moving from a model of lexical keyword matching to a complex framework governed entirely by the fulfillment of user intent. By 2026, the widespread integration of generative artificial intelligence, large language models, and multi-platform digital discovery environments has rendered traditional keyword density and surface-level relevance metrics effectively obsolete. Modern search engines no longer function merely as indices of hyperlinks; they operate as autonomous answer engines, actively interpreting the underlying goals, behavioral patterns, and contextual requirements of the user. Within this evolved landscape, aligning digital content precisely with search intent is not simply an optimization tactic; it is the fundamental algorithmic prerequisite for achieving visibility, maintaining algorithmic trust, and driving measurable business outcomes.

This report conducts a comprehensive examination of the evolution of search intent taxonomies, the algorithmic evaluation frameworks utilized by search engines to measure intent fulfillment, the behavioral signals that dictate ranking stability, and the cascading implications for digital performance measurement and content strategy in 2026.

Evolution of Search Intent Taxonomy

To fully comprehend the complexity of search intent modeling in 2026, it is necessary to trace the academic and operational frameworks that historically defined information retrieval behaviors. The taxonomy of search intent has transitioned from simple, surface-level query categorization to multi-dimensional behavioral mapping, reflecting the increasing sophistication of both searchers and search engines.

The foundational understanding of user intent in web search originates from early academic analyses of search engine transaction logs. Andrei Broder's seminal 2002 research established a primary tripartite classification system that categorized queries as informational, navigational, or transactional 123. Informational queries represented users seeking knowledge or answers on a specific topic, navigational queries involved users attempting to reach a predetermined digital destination, and transactional queries indicated an intent to perform a web-mediated activity, such as a purchase, registration, or software download 34. Through empirical analysis, Broder estimated that approximately 73% of web queries were informational in nature, while navigational and transactional queries comprised roughly 26% and 36% of total search volume respectively. Because many queries are ambiguous and carry more than one intent, these overlapping estimates sum to more than 100% 3.

This baseline model was subsequently refined by researchers Daniel Rose and Danny Levinson in 2004, who proposed a more precise goal-oriented framework. Rose and Levinson sought to understand the underlying motivations driving search behavior, moving beyond the lexical components of the query to infer the user's ultimate objective 24. Their expanded taxonomy introduced nuanced subcategories such as Directed-Closed, Directed-Open, Advice, Locate, and Interact, arguing that over 80% of web queries were fundamentally informational when evaluated through a goal-oriented lens 15. For nearly two decades, these classical frameworks informed the standard digital marketing strategy: digital content was systematically mapped to an informational, navigational, commercial (a later addition denoting preliminary product investigation), or transactional stage of the user journey 679.

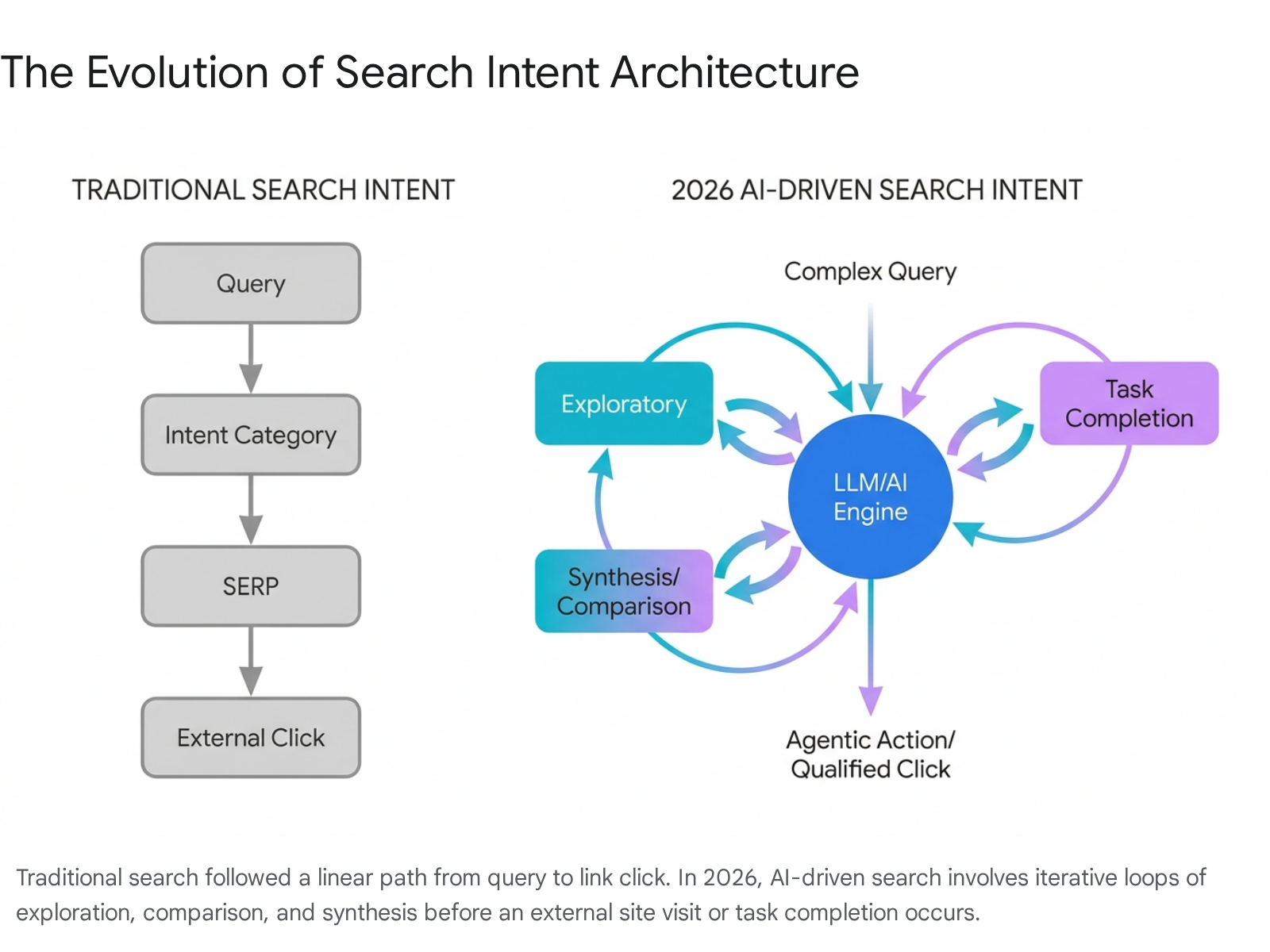

Between 2023 and 2026, the rapid consumer adoption of artificial intelligence search interfaces - including Google's AI Overviews, OpenAI's SearchGPT, Perplexity, and Anthropic's Claude - fractured the traditional four-pillar intent model. Users adapted to these new systems by significantly altering their query syntax and behavioral expectations. Traditional search queries averaged three to four words and relied heavily on fragmented keywords. By contrast, prompt-based queries in 2026 average 12 to 25 words, combining context, constraints, and multi-faceted requests into a single, complex interaction 10.

This fundamental shift necessitated the identification of new intent categories that classic taxonomies could not adequately accommodate. In the contemporary search environment, user intent encompasses exploratory, comparative, synthesis, conversational, task completion, and multimodal dimensions.

Exploratory intent occurs when users recognize a problem or goal but lack the requisite domain knowledge to formulate specific, targeted questions. These users seek foundational frameworks and high-level landscape overviews to structure their subsequent decision-making processes. For example, a user might query an AI assistant to diagnose why a real estate website receives traffic but fails to generate leads, requesting an outline of potential causes rather than a specific technical remediation 7.

Comparative research intent reflects a demand for the real-time synthesis of tradeoffs between multiple options across customized, user-defined dimensions. Rather than a generic, static list of the "best products" in a category, the user seeks a highly contextual comparison tailored to their specific situation, such as comparing enterprise software platforms based on a specific organizational team size, compliance requirement, and budgetary constraint 7.

Synthesis intent describes scenarios where the searcher seeks a coherent understanding derived from multiple, sometimes conflicting, perspectives. The goal is not to locate a single authoritative source, but to understand the current consensus or debate surrounding a complex topic, such as the efficacy of a new medical treatment or the macroeconomic implications of a specific monetary policy 7.

Conversational intent represents a structural shift where search is executed as an iterative dialogue rather than a discrete, single-turn event. The user provides an initial prompt, evaluates the generated output, and uses follow-up queries to refine, constrain, or expand the information space. The underlying language model retains the context of the session, eliminating the need for the user to restate their original parameters in subsequent queries 6.

Task completion intent elevates the expectation of the search interface from simple information retrieval to active operational execution. The user expects the search engine or integrated AI agent to actively perform a function, such as drafting a complex document, analyzing an uploaded dataset, executing a multi-step booking process, or initiating a financial transaction directly within the chat interface 6. Finally, multimodal intent involves information seeking through a combination of textual inputs, voice commands, image uploads, and live camera feeds, requiring the search engine to process and cross-reference disparate data types simultaneously to deduce the user's goal 611.

In addition to these new categories, modern search algorithms frequently encounter the phenomenon of fractured intent, also referred to in academic literature as mixed intent. Fractured intent occurs when a single, broad query term is utilized by different individuals to achieve entirely divergent goals 12. For instance, a high-volume query such as "CRM software" demonstrates significant fractured intent; the search engine must simultaneously serve users seeking a basic definition of the technology, users attempting to compare specific feature sets, and users actively seeking to initiate a free trial of a specific platform 12.

When intent is fractured, algorithms diversify the search engine results page to satisfy multiple potential pathways. Content strategies must adapt to this algorithmic behavior by creating comprehensive hub pages that address micro-intents sequentially. Such pages typically begin with clear, authoritative definitions to satisfy informational intent, progress into structured feature comparisons to satisfy commercial intent, and conclude with highly visible transactional access points 12. This structural consolidation minimizes semantic ambiguity, allowing the algorithmic interpreter to confidently surface the content regardless of which specific intent branch the user ultimately pursues.
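One way to make fractured intent measurable is to label the top results for a query by intent and check whether any single label dominates. The Python sketch below assumes those labels already exist, assigned either by hand or by a classifier. It applies a hypothetical 60% dominance threshold and also computes the Shannon entropy of the label distribution as a complementary measure of spread. Neither the threshold nor the method is a published search-engine rule.

```python
import math
from collections import Counter


def intent_entropy(labels: list[str]) -> float:
    """Shannon entropy, in bits, of the intent labels seen on one results page."""
    counts = Counter(labels)
    total = len(labels)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def is_fractured(labels: list[str], dominance: float = 0.6) -> bool:
    """Hypothetical rule: intent is fractured when no label covers `dominance` of results."""
    top_count = Counter(labels).most_common(1)[0][1]
    return top_count / len(labels) < dominance


# Hypothetical intent labels for the top 10 results of "CRM software".
serp = ["informational"] * 4 + ["commercial"] * 3 + ["transactional"] * 3
print(round(intent_entropy(serp), 3), is_fractured(serp))
```

In this example the results split 4/3/3 across intents, so no label dominates. That is the situation the sequential hub-page structure described above is meant to serve.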

| Traditional Intent Taxonomy (2002-2023) | Contemporary Intent Taxonomy (2026) | User Objective | Algorithmic Content Requirement |
|---|---|---|---|
| Informational | Exploratory | Map an unknown topic landscape. | Comprehensive decision frameworks, foundational guides, diagnostic content. |
| Commercial | Comparative Research | Evaluate highly contextual tradeoffs. | Dynamic matrices, contextual pros/cons, nuanced multi-variable comparisons. |
| Navigational | Conversational | Iteratively refine a search path. | Structured semantic relationships, connected entity data, deep internal linking. |
| Transactional | Task Completion | Execute an outcome immediately. | Direct API integrations, functional tools, AI-agent compatible structured schemas. |
| (N/A) | Synthesis | Understand consensus across sources. | Aggregation of expert opinion, clear citation, balanced multi-perspective analysis. |

Search Behavior and Platform Distribution

The historical dominance of a single search engine as the universal entry point for digital discovery has diminished significantly by 2026. Search behavior is now heavily distributed across a fragmented ecosystem of platforms, driven primarily by generational preferences, the integration of native search functionalities within social networks, and regional market specificities.

Generational analysis, specifically concerning Generation Z (born 1997 - 2012), reveals a stark departure from traditional search engine reliance. Research data from 2026 indicates that 41% of Generation Z consumers turn to social media platforms first when initiating an online search for information, bypassing traditional text-based search engines entirely 1314. YouTube functions as the most universally utilized daily platform for this demographic, maintaining a 63% daily active usage rate 13. Furthermore, TikTok has transformed from a purely entertainment-focused application into a primary search utility for experiential research. Approximately 77% of Generation Z utilizes TikTok specifically to discover products, leveraging the platform's visual format to evaluate authenticity and user-generated social proof 13.

A comprehensive 2026 Adobe study highlighted that this behavioral shift is not strictly limited to younger cohorts; 49% of all United States consumers reported utilizing TikTok to find information. This usage spans multiple demographic segments, including 65% of Generation Z, 55% of Millennials, 40% of Generation X, and 12% of Baby Boomers 816. This behavior does not indicate the total abandonment of traditional search engines, but rather highlights a multi-platform intent distribution. When users require quick, authoritative facts, academic data, or transactional execution, they default to Google. Conversely, when they seek authentic product reviews, visual tutorials, or exploratory cultural discovery, they utilize TikTok or YouTube 16. The implications for search engine optimization are substantial: search visibility is no longer confined to traditional website rankings. Brands must optimize their digital footprint across multiple indices, ensuring that video content, community forum discussions, and social commerce profiles are structurally sound and topically aligned to capture domain-specific search intent 1718.

| Discovery Platform | Primary User Intent | Optimal Content Format | Algorithmic Priority Signals |
|---|---|---|---|
| Google Search / AI | Transactional, Conversational, Synthesis | Structured text, comprehensive hubs, dynamic comparisons. | Domain authority, structured data, entity relationships, E-E-A-T. |
| YouTube | Exploratory, Educational, Synthesis | Long-form video, tutorials, technical demonstrations. | Watch time, audience retention, semantic relevance of transcripts. |
| TikTok | Product Discovery, Comparative, Local | Short-form vertical video, authentic user-generated content. | Engagement velocity, completion rate, creator authority, hashtag clustering. |
| Reddit / Forums | Exploratory, Unbiased Synthesis | Text-based discussions, authentic Q&A threads. | Upvotes, comment depth, community trust, real-world experience. |

The evolution of search intent is similarly pronounced in non-Western digital ecosystems. In South Korea, the search landscape is undergoing a structural shift driven by AI-influenced discovery. Naver, maintaining a dominant market share of over 60% as of 2025, has fundamentally updated its algorithm to prioritize comprehensive user experience over historical keyword density metrics 920. In late 2024, Naver launched AiRSearch, an AI-powered search feature analogous to Google's AI Overviews, which by 2026 appears for approximately 30% to 40% of informational queries 21. To align with Naver's shifting intent mechanisms, businesses have been forced to transition from publishing high volumes of short, keyword-dense articles to producing comprehensive, long-form guides exceeding 2,000 words. Furthermore, Naver has instituted dynamic Search Engine Results Pages that adjust content priority based on sensed user intent; for queries requiring deep expertise, Naver prioritizes trusted institutional content above paid advertisements, mirroring Western E-E-A-T principles 20. Local search intent has also become paramount on the platform, with location-based B2B queries heavily prioritizing Naver Place listings 21.

In China, the digital ecosystem is undergoing an aggressive transition driven by Baidu and its large language model, ERNIE Bot. Baidu remains the dominant search gateway, reaching over 700 million monthly active users 2210. In 2026, Baidu deepened its ecosystem integration by embedding the ERNIE language model directly into its primary search interface, eliminating the friction of requiring users to download a standalone AI application 10. This integration represents a major shift toward conversational and zero-click search behaviors within the Chinese market. ERNIE Bot processes hundreds of millions of daily prompts and surpassed 200 million monthly active users early in 2026 1011. The competitive landscape intensified significantly following the release of DeepSeek's highly efficient R1 model, prompting Baidu to further optimize ERNIE's accessibility and even open-source specific model tiers to maintain market leadership 1112.

Consequently, Baidu's SERP dynamically generates synthesized answers at the top of results for complex informational queries, leading to an estimated 15% to 25% reduction in traditional organic clicks for top-of-funnel keyword targets 12. Baidu's algorithmic evaluation of search intent requires specific structural compliance. To capture AI-driven intent on Baidu in 2026, content must feature clear heading hierarchies, bulleted procedural lists, and direct answers positioned within the first paragraph to facilitate algorithmic extraction 12. Furthermore, Baidu prioritizes Chinese-specific credibility signals, strongly favoring content written in Simplified Chinese, hosted on mainland servers, and supported by authoritative domestic backlinks or Baidu Baike encyclopedia entries 1226.
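The structural requirements above, a clear heading hierarchy and a direct answer up front, can be checked automatically before publishing. The sketch below is a hypothetical pre-publication lint for Markdown drafts. It flags skipped heading levels and warns when the opening paragraph exceeds an assumed 60-word budget. Baidu does not publish such a word limit, so the number is illustrative only.

```python
import re

# A Markdown ATX heading: 1-6 '#' characters, whitespace, then the title.
HEADING = re.compile(r"^(#{1,6})\s+(.*)$")


def first_paragraph(lines: list[str]) -> str:
    """Return the first run of consecutive non-empty, non-heading lines."""
    para: list[str] = []
    for line in lines:
        if not line.strip() or HEADING.match(line):
            if para:
                break
            continue
        para.append(line.strip())
    return " ".join(para)


def lint_structure(markdown: str, max_intro_words: int = 60) -> list[str]:
    """Flag skipped heading levels and an overly long opening answer."""
    lines = markdown.splitlines()
    warnings: list[str] = []
    prev_level = 0
    for line in lines:
        match = HEADING.match(line)
        if match:
            level = len(match.group(1))
            if prev_level and level > prev_level + 1:
                warnings.append(f"skipped level: H{prev_level} -> H{level} ({match.group(2)})")
            prev_level = level
    intro_words = len(first_paragraph(lines).split())
    if intro_words > max_intro_words:
        warnings.append(f"opening paragraph has {intro_words} words (limit {max_intro_words})")
    return warnings


draft = "# CRM guide\nA CRM stores customer records.\n\n### Pricing\nDetails follow."
print(lint_structure(draft))
```

Here the draft jumps from H1 to H3, so the lint reports one skipped level. Its short opening answer passes the word budget.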

Evaluation Frameworks for Intent Alignment

Search engines do not inherently understand human language or intent; they rely on advanced mathematical models, vector embeddings, and massive datasets of human-labeled training data to calibrate their interpretation of relevance. For Google, the primary operational mechanism for establishing this ground truth is the Search Quality Rating Program, governed by the Search Quality Evaluator Guidelines.

The Search Quality Rating Program employs approximately 16,000 external human contractors globally to manually evaluate search results across a vast array of topics and languages 131429. It is critical to note that these human raters cannot alter live search rankings, issue manual penalties, or directly promote specific domains 293031. Instead, their evaluations serve as high-fidelity feedback. Google engineers use the aggregated ratings to train machine learning models and validate proposed algorithmic updates, teaching automated systems to identify content that successfully aligns with human intent 142932.

The operational core of the Search Quality Evaluator Guidelines is the "Needs Met" rating scale. This specific metric evaluates how successfully a given search result fulfills the user's underlying intent based on the context of their query 2931. The scale has five levels: "Fails to Meet," "Slightly Meets," "Moderately Meets," "Highly Meets," and "Fully Meets." Content that is technically flawless, beautifully designed, and highly authoritative will still receive a "Fails to Meet" rating if it answers the wrong question, fails to satisfy the temporal requirements of the query, or presents a fundamental intent mismatch 31. For instance, providing a dense, multi-page academic research paper in response to a user seeking a rapid, transactional checkout page constitutes a failure of intent alignment. The January 2025 update to the guidelines explicitly instructed raters to assess "minor interpretations and intents," requiring publishers to anticipate and serve increasingly nuanced user expectations 13.

While the Needs Met scale measures the accuracy of intent fulfillment, the E-E-A-T framework measures the underlying reliability and safety of the content provider 1433. E-E-A-T stands for Experience, Expertise, Authoritativeness, and Trustworthiness. In the heavily automated digital environment of 2026, strong E-E-A-T signals serve as the foundational algorithmic barrier against the proliferation of low-quality, synthetically generated web spam 1330. Trust is explicitly identified within the guidelines as the most critical component of the framework, acting as the central pillar that the other three elements support 3315. The addition of Experience to the framework in December 2022 emphasizes the necessity of first-hand, real-world interaction with the subject matter, a qualitative characteristic that language models cannot authentically replicate 133033.

The rigor of E-E-A-T evaluation scales proportionally with the potential risk to the end user. This risk profile is defined under the "Your Money or Your Life" (YMYL) designation 1432. Pages offering medical diagnoses, financial investment guidance, legal advice, or safety procedures have historically been subject to the strictest YMYL standards, requiring demonstrably high levels of formalized expertise. However, the September 2025 update to the guidelines significantly expanded the YMYL definition to explicitly include a new category: "Government, Civics, and Society." This expansion applies heightened algorithmic scrutiny to content discussing political processes, democratic elections, public policies, and public institutions, reflecting a concerted effort to combat misinformation and maintain algorithmic trust in sensitive civic domains 133235.

In the era of AI Overviews and generative search summaries, demonstrating robust E-E-A-T is no longer merely a best practice for traditional SEO; it is a strict citation requirement. Generative search engines are designed to mitigate hallucination risks by drawing almost exclusively on sources that project strong entity authority, transparent human authorship, and consensus-aligned expertise 293637. If a piece of content lacks verifiable E-E-A-T signals, it fails to meet the algorithmic confidence threshold required for inclusion in AI-generated summaries, rendering the content virtually invisible for broad informational queries. Furthermore, the updated guidelines explicitly dictate that content generated entirely by artificial intelligence in a "low-effort way" - defined as lacking original human insight, failing to add unique value, or simply paraphrasing existing sources - must be classified by raters as "Lowest Quality" 133538. Conversely, the guidelines acknowledge that AI can be utilized appropriately as an assistive tool for content creation, provided the final output is heavily guided by human expertise and serves a genuinely helpful purpose for the reader 3038.

Intent Mismatch and Algorithmic Remediation

When digital content fails to satisfy the user's intended objective, a highly measurable negative feedback loop is initiated. Modern search engines rely heavily on aggregated, anonymized user behavioral signals to continuously measure the efficacy of their rankings in real time, shifting from purely predictive ranking models to reactive, behavior-driven evaluation 1239.

An intent mismatch occurs when a user initiates a search query, selects a specific result, and encounters content that misaligns with their objective or cognitive expectations. This mismatch generates highly visible negative engagement metrics that search algorithms process as indicators of low relevance 1617. The primary behavioral signals utilized by modern systems to detect intent failure include:

- Dwell Time and Engagement Rate: Dwell time measures how long a user remains on a page before returning to the search ecosystem. Google Analytics 4 made "Engagement Rate" its primary engagement metric and redefined the legacy "bounce rate" as its inverse, the share of sessions that were not engaged. Under this standard, an engaged session is one that lasts longer than 10 seconds (the default threshold), includes at least two page views, or triggers a key event, formerly called a conversion 1819. Consistently low engagement rates on high-traffic pages are a strong diagnostic indicator of widespread intent failure.

- Pogo-Sticking and Query Chains: Pogo-sticking describes the behavior wherein a user clicks a search result, rapidly returns to the original SERP, and subsequently clicks an alternative result. This action explicitly informs the search engine that the initial result failed to satisfy the query 1239. Furthermore, analyzing query chains - the sequence of subsequent, refined searches a user makes - reveals whether the initial content effectively answered their question or merely prompted further confusion 16.

- Scroll Depth and Interaction: A lack of progression beyond the initial viewport, or a failure to interact with dynamic page elements such as comparison tables, calculators, or embedded media, signals that the content formatting did not match the user's cognitive state or preferred consumption method 3919.
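The engaged-session rule and the pogo-sticking pattern described above can both be expressed as simple checks over session data. The Python sketch below encodes the GA4 default definition of an engaged session. It also includes a pogo-stick detector that runs over a hypothetical click log. The `SerpClick` record and the 30-second return window are assumptions for illustration, because search engines do not publish how, or whether, they threshold these signals.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Session:
    duration_s: float
    page_views: int
    key_events: int


def is_engaged(s: Session, min_seconds: float = 10.0) -> bool:
    """GA4-style rule: longer than min_seconds, 2+ page views, or any key event."""
    return s.duration_s > min_seconds or s.page_views >= 2 or s.key_events > 0


def engagement_rate(sessions: list[Session]) -> float:
    """Share of sessions that count as engaged."""
    return sum(is_engaged(s) for s in sessions) / len(sessions)


@dataclass
class SerpClick:  # hypothetical click-log record
    url: str
    clicked_at: float              # seconds since the query was issued
    returned_at: Optional[float]   # when the user came back to the SERP, if ever


def pogo_sticks(clicks: list[SerpClick], max_dwell_s: float = 30.0) -> list[str]:
    """URLs abandoned within max_dwell_s and followed by a click on a different result."""
    ordered = sorted(clicks, key=lambda c: c.clicked_at)
    flagged = []
    for current, following in zip(ordered, ordered[1:]):
        quick_return = (current.returned_at is not None
                        and current.returned_at - current.clicked_at <= max_dwell_s)
        if quick_return and following.url != current.url:
            flagged.append(current.url)
    return flagged


sessions = [Session(5, 1, 0), Session(45, 1, 0), Session(3, 2, 0), Session(2, 1, 1)]
clicks = [SerpClick("https://a.example", 0, 8), SerpClick("https://b.example", 12, None)]
print(engagement_rate(sessions), pogo_sticks(clicks))
```

Three of the four sample sessions meet at least one engagement condition. In the click log, the first result is abandoned after 8 seconds in favor of a second result, which is the pogo-sticking pattern.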

When a specific page consistently triggers poor behavioral signals across a statistically significant volume of users, the algorithm autonomously downgrades its visibility, correlating the behavioral data with a failure to meet user intent 1720. Technical SEO elements such as Core Web Vitals, site speed, HTTPS security, and proper canonicalization serve as mandatory table stakes, but they cannot rescue content that suffers from an intent mismatch. Once a website reaches technical parity with its primary competitors, relevance and intent alignment become the primary algorithmic differentiators determining rank 1745.

Autonomous Landing Page Generation

The operational consequences of intent mismatch and poor user experience escalated significantly with the grant of Google Patent US12536233B1, titled "AI-generated content page tailored to a specific user," in January 2026 464721. The patent details a system capable of autonomously intercepting search traffic and replacing poorly optimized destination pages with dynamically generated Google assets, representing a potential paradigm shift in how search traffic is routed 464950.

According to the extensive patent documentation, the proposed system evaluates the top-ranking organic or sponsored search result against a proprietary algorithmic standard termed the Landing Page Score 464722. This score is calculated in real-time, leveraging historical performance metrics such as conversion rates, bounce rates, click-through rates, and an assessment of the structural design quality of the target page 464922. The patent explicitly references functional gaps - such as the absence of necessary product filters on an e-commerce page - as primary indicators of poor usability and low intent fulfillment 46.

If the original landing page falls below a defined performance threshold, indicating a high probability of intent failure, the system is authorized to generate an updated search result 464722. This updated result bypasses the publisher's independent website entirely. Instead, the user is directed via a navigation link to a completely novel, AI-generated page hosted directly by Google 462150. The replacement page synthesizes textual and visual data extracted from the original website, combined with the user's historical search account data and contextual intent, to create a highly personalized, optimized interface 472149.
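The patent names the inputs to the Landing Page Score: conversion, bounce, and click-through rates, plus design quality. As summarized here, however, it does not say how those inputs are combined. The sketch below is therefore purely hypothetical. It assumes each input has already been normalized to a 0-1 percentile against peer pages and combines them with invented weights. It then applies an invented 0.4 threshold to decide whether a replacement page would be generated.

```python
from dataclasses import dataclass


@dataclass
class LandingPageSignals:
    # All fields are assumed percentiles (0-1) relative to comparable pages.
    conversion: float
    bounce: float   # higher = bounces more often than peers
    ctr: float
    design: float   # e.g. a usability-audit score; missing product filters would lower it


# Hypothetical weights; no formula is given in the patent summary above.
WEIGHTS = {"conversion": 0.4, "retention": 0.2, "ctr": 0.2, "design": 0.2}


def landing_page_score(s: LandingPageSignals) -> float:
    """Weighted sum of normalized signals; bounce is inverted so higher is better."""
    return (WEIGHTS["conversion"] * s.conversion
            + WEIGHTS["retention"] * (1.0 - s.bounce)
            + WEIGHTS["ctr"] * s.ctr
            + WEIGHTS["design"] * s.design)


def should_generate_replacement(s: LandingPageSignals, threshold: float = 0.4) -> bool:
    """Hypothetical gate: below-threshold pages become candidates for a generated page."""
    return landing_page_score(s) < threshold


weak = LandingPageSignals(conversion=0.1, bounce=0.8, ctr=0.3, design=0.25)
strong = LandingPageSignals(conversion=0.8, bounce=0.3, ctr=0.6, design=0.9)
print(should_generate_replacement(weak), should_generate_replacement(strong))
```

The weights and the threshold carry the entire decision in this sketch. The useful point is structural: a single low-scoring dimension, such as poor conversion, can pull an otherwise adequate page under the bar.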

The patent describes incorporating bespoke personalized headlines, dynamically suggested product filters, multimedia elements, embedded product feeds, clear calls to action, and even conversational AI chatbots directly into this generated page 472122. Notably, the patent claims that this AI-generated page could be presented in association with sponsored content items, raising complex questions regarding attribution, advertiser consent, and the billing mechanics of traffic routed to Google-constructed pages 472149.

While search industry experts debate whether this specific technology will be deployed universally across all queries or restricted primarily to e-commerce and paid advertising environments to improve low-converting shopping campaigns, the broader strategic implication remains severe 23. It signals a structural progression where search engines transition from being passive indexers of third-party information to active architects of the user experience. If a brand fails to design content that perfectly aligns with conversion goals and user intent, the search engine claims the technological capability to dynamically reconstruct that experience, effectively disintermediating the brand's control over its own digital storefront and customer journey 464924.

Post-Facto Autonomous Results

Further emphasizing the shift toward autonomous intent fulfillment, a continuation patent published in early 2026 details a system titled "Autonomously providing search results post-facto, including in assistant context" 25. This invention addresses scenarios where a user's intent cannot be immediately satisfied because the requested information is currently unavailable, lacks requisite quality, or fails authoritativeness standards at the exact moment of the query 25.

Rather than forcing the user to repeatedly execute the same search over time, the system transforms search from a discrete, one-time action into a persistent background process 2555. The AI assistant retains the user's unanswered query and continuously monitors the index. When a new resource is published, or an existing resource is sufficiently updated to meet the required quality, completeness, and E-E-A-T criteria, the system proactively circles back to the user to deliver the answer 2555. This delivery can occur via push notifications or natively within subsequent, unrelated interactions with an AI assistant 2555. This mechanism highlights that search engines are evolving to satisfy intent on asynchronous timelines, rewarding publishers who consistently update content to meet evolving completeness standards 55.
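The post-facto behavior described in the patent amounts to a standing-query (publish/subscribe) pattern. Unanswered queries are stored, and each newly indexed or updated document is checked against them. The sketch below makes two simplifying assumptions: a naive all-terms match, and a hypothetical per-document quality score standing in for the quality, completeness, and E-E-A-T checks. A production system would presumably use semantic retrieval rather than term sets.

```python
from dataclasses import dataclass


@dataclass
class Document:
    url: str
    terms: set[str]
    quality: float            # hypothetical 0-1 quality / E-E-A-T estimate


@dataclass
class StandingQuery:
    user_id: str
    terms: set[str]
    min_quality: float = 0.7  # hypothetical bar the answer must clear


class PostFactoNotifier:
    """Keeps unanswered queries and notifies users when a qualifying document appears."""

    def __init__(self) -> None:
        self.pending: list[StandingQuery] = []
        self.outbox: list[tuple[str, str]] = []  # (user_id, url) notifications to send

    def register_unanswered(self, query: StandingQuery) -> None:
        self.pending.append(query)

    def on_document_indexed(self, doc: Document) -> None:
        still_pending = []
        for query in self.pending:
            # Answer only when every query term is covered and the quality bar is met.
            if query.terms <= doc.terms and doc.quality >= query.min_quality:
                self.outbox.append((query.user_id, doc.url))
            else:
                still_pending.append(query)
        self.pending = still_pending


notifier = PostFactoNotifier()
notifier.register_unanswered(StandingQuery("u1", {"visa", "fee", "2026"}))
notifier.on_document_indexed(Document("https://thin.example", {"visa", "fee", "2026"}, 0.4))
notifier.on_document_indexed(Document("https://guide.example", {"visa", "fee", "2026", "schedule"}, 0.9))
print(notifier.outbox)
```

The thin page matches the terms but fails the quality bar, so the query stays pending until a sufficiently complete resource appears. This mirrors the patent's emphasis on rewarding publishers who update content.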

SEO Performance Measurement in an Intent-Driven Landscape

The transformation of search intent, driven by AI summaries and zero-click search behavior, has rendered legacy SEO reporting metrics inadequate, and in many cases, strategically misleading. Effective decision-making in 2026 requires abandoning aggregate volume metrics in favor of granular, intent-segmented indicators of actual business impact.

The historical benchmark for an SEO campaign was the year-over-year percentage increase in aggregate organic traffic 1826. However, in the contemporary AI-mediated landscape, raw traffic volume provides virtually no insight into visitor quality, intent alignment, or true revenue contribution 18. The widespread deployment of AI Overviews and integrated LLM responses has accelerated the phenomenon of zero-click searches. For informational queries - where users seek definitions, quick facts, or procedural summaries - AI engines successfully synthesize and display the answer directly on the search engine results page 105758.

A comprehensive 2026 industry study revealed that the presence of an AI Overview reduces traditional organic click-through rates by an average of 18% to 35%, with informational queries experiencing the steepest declines in traffic routing 5727. The Reuters Institute's 2026 trends report noted that publishers anticipate search engine traffic to decline by more than 40% over a three-year period as AI-driven answer engines replace traditional hyperlinks 10. Consequently, an organization may report a 40% decline in top-of-funnel organic traffic metrics while simultaneously experiencing an increase in brand awareness, direct traffic, and downstream conversions, because the low-intent traffic is now satisfied directly on the SERP 18.
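The click-through reductions above translate directly into expected traffic. The sketch below applies the reported 18% and 35% reductions to a hypothetical query with 100,000 monthly impressions and an assumed 5% baseline organic CTR. Both inputs are invented for illustration.

```python
def expected_clicks(impressions: int, baseline_ctr: float, aio_reduction: float = 0.0) -> float:
    """Clicks = impressions x baseline CTR x (1 - relative CTR reduction)."""
    return impressions * baseline_ctr * (1.0 - aio_reduction)


impressions, baseline_ctr = 100_000, 0.05  # hypothetical inputs
for reduction in (0.0, 0.18, 0.35):
    clicks = expected_clicks(impressions, baseline_ctr, reduction)
    print(f"reduction {reduction:.0%}: ~{clicks:,.0f} clicks")
```

Under these assumptions, the query falls from about 5,000 clicks per month to between about 3,250 and 4,100.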

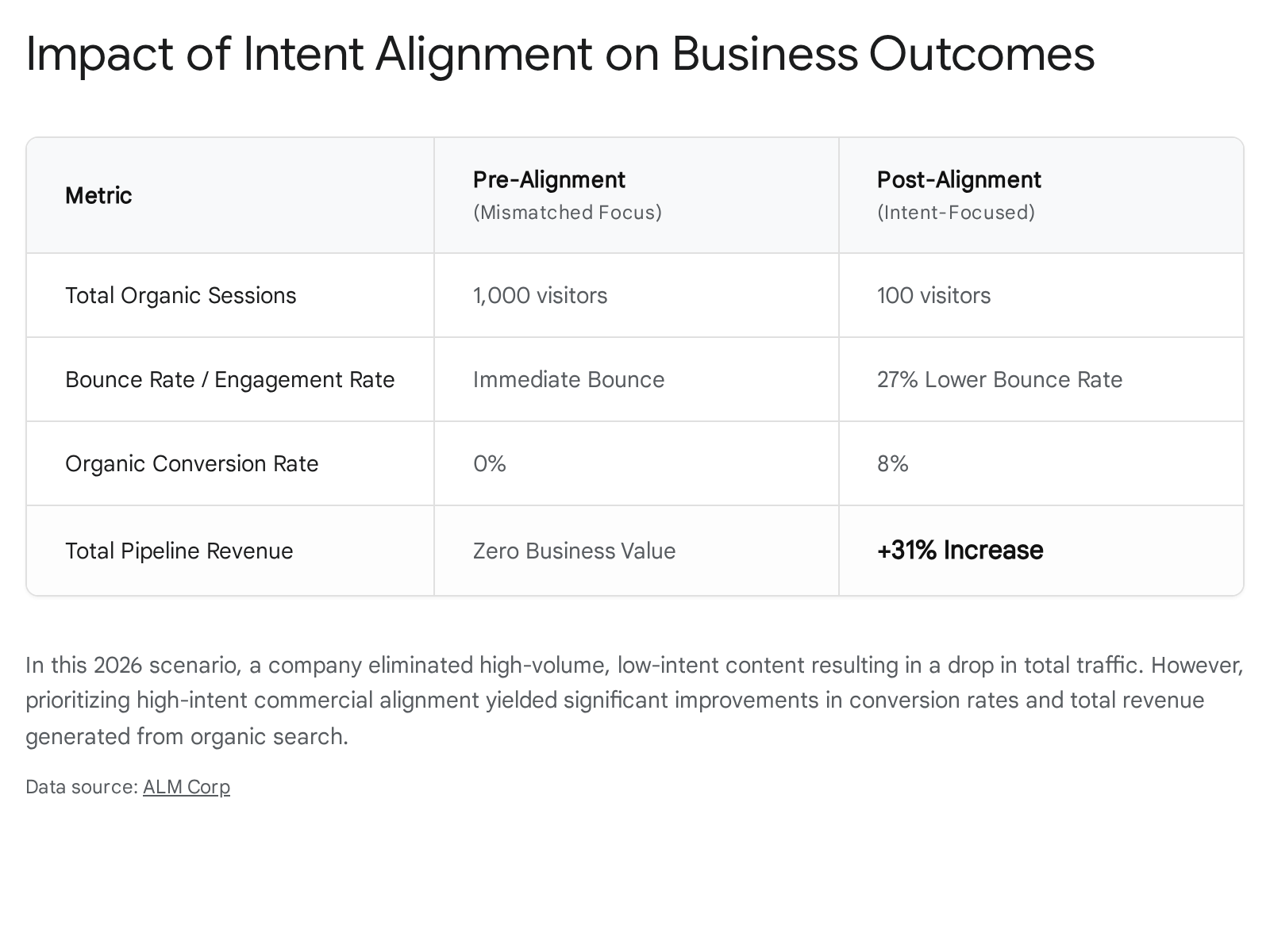

Conversely, reporting a 50% increase in traffic generated entirely by low-intent queries that result in immediate bounces creates a dangerous misalignment between marketing dashboards and financial realities. One thousand visitors who bounce immediately possess zero commercial value, whereas one hundred high-intent visitors converting at a stable, elevated rate drive actual business growth 18.

To accurately gauge SEO performance in 2026, quantitative measurement must be segmented by the underlying search intent.

For informational intent queries, success is no longer measured in clicks, but through AI Visibility and brand citation frequency. If a brand is consistently cited within AI chat interfaces or Google's AI Overviews as the authoritative source on a complex topic, it builds relational trust and authority. Performance in this sector is measured via branded search volume growth and direct traffic increases, indicating that users discovered the brand via an AI summary and subsequently sought it out directly to engage further 1018.

For commercial and transactional intent queries, measurement shifts entirely to pipeline contribution, return on ad spend equivalency, and organic conversion rate 181960. Traffic arriving on commercial landing pages must trigger meaningful engagement, such as form submissions, software demos, or cart additions. If commercial-intent traffic exhibits low engagement, it signals a critical intent mismatch on the landing page that requires immediate conversion rate optimization. The most successful organizations in 2026 recognize that SEO and CRO are not competing strategies, but deeply interconnected disciplines; aligning SEO traffic generation with rigorous CRO testing yields substantially higher conversion rates from organic channels 60.
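Intent-segmented reporting only requires that each query carry an intent label before metrics are aggregated. The sketch below assumes a hypothetical export in which query-level clicks have already been joined to conversions and labeled by intent, either manually or by a classifier. The rows are invented for illustration.

```python
from collections import defaultdict

# Hypothetical query-level rows: search clicks joined to CRM conversions.
rows = [
    {"query": "what is crm", "intent": "informational", "clicks": 900, "conversions": 2},
    {"query": "crm examples", "intent": "informational", "clicks": 600, "conversions": 1},
    {"query": "best crm for small teams", "intent": "commercial", "clicks": 300, "conversions": 9},
    {"query": "crm free trial", "intent": "transactional", "clicks": 200, "conversions": 16},
]


def segment_report(rows: list[dict]) -> dict[str, dict[str, float]]:
    """Sum clicks and conversions per intent and derive a conversion rate."""
    totals: dict[str, dict[str, float]] = defaultdict(lambda: {"clicks": 0, "conversions": 0})
    for row in rows:
        bucket = totals[row["intent"]]
        bucket["clicks"] += row["clicks"]
        bucket["conversions"] += row["conversions"]
    return {
        intent: {**t, "conversion_rate": t["conversions"] / t["clicks"] if t["clicks"] else 0.0}
        for intent, t in totals.items()
    }


for intent, metrics in segment_report(rows).items():
    print(intent, metrics)
```

In this invented data, the informational segment brings the most clicks (1,500) but only 3 conversions. The transactional segment converts 16 of its 200 clicks. An aggregate traffic figure would hide exactly this distinction.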

While AI Overviews demonstrably reduce overall click volume, the data indicates a substantial improvement in the behavioral quality of the clicks that do occur. Early 2026 data analysis demonstrates that users who click through a source link provided within an AI-generated summary are significantly further along in their decision-making process, having bypassed the initial research phase 3727. Compared to traditional organic traffic, visitors arriving via generative AI referrals view 12% more pages, possess a 23% lower bounce rate, and remain on the target site 8% longer 57. Most critically, studies indicate that AI-referred traffic converts at a significantly higher rate - up to 14.2% compared to the traditional search conversion average of 2.8% 7. Because the AI system acts as an initial filter - answering basic questions and synthesizing broad context - the user only clicks the external link when they are prepared to conduct deep comparative research or execute a transaction 3758. Therefore, optimizing to be the cited source within an AI interface is the highest-leverage activity in modern search marketing.
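The conversion comparison above is easiest to read per fixed block of sessions. The sketch below uses the two rates cited in the text: 14.2% as the reported upper bound for AI-referred traffic, and 2.8% as the traditional search average. It applies both to an arbitrary 1,000 sessions. Because 14.2% is a best-case figure, the resulting multiple is an upper bound rather than a typical outcome.

```python
AI_REFERRED_RATE = 0.142   # "up to" figure cited in the text (best case)
TRADITIONAL_RATE = 0.028   # traditional search average cited in the text
SESSIONS = 1_000           # arbitrary comparison block


def conversions(sessions: int, rate: float) -> float:
    return sessions * rate


ai_conv = conversions(SESSIONS, AI_REFERRED_RATE)
organic_conv = conversions(SESSIONS, TRADITIONAL_RATE)
# Traditional sessions needed to match the AI-referred conversion count.
sessions_to_match = ai_conv / TRADITIONAL_RATE

print(f"AI-referred: {ai_conv:.0f}, traditional: {organic_conv:.0f}")
print(f"ratio: {AI_REFERRED_RATE / TRADITIONAL_RATE:.2f}x, "
      f"traditional sessions to match: {sessions_to_match:,.0f}")
```

At the cited rates, 1,000 AI-referred sessions produce about 142 conversions, compared with about 28 from traditional search. Matching the AI-referred total would take roughly 5,071 traditional sessions.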

Strategic Implications for Content Optimization

Adapting to an environment governed by complex, multi-layered search intent requires organizations to abandon isolated keyword targeting in favor of holistic, entity-based content structuring and rigorous authority building.

To be accurately understood and reliably cited by AI answer engines, content cannot exist as unstructured, dense blocks of prose. AI models rely on distinct semantic signals and predictable formatting to extract factual data with confidence. In 2026, content must be architected specifically for rapid algorithmic ingestion. This involves deploying strict heading hierarchies (H2, H3) that mirror the syntactic structure of complex user queries 2112. Best practice is to define core concepts concisely within the first two to three sentences of a section, allowing the language model to capture the direct answer efficiently 2112. Furthermore, comprehensive structured data markup for articles, frequently asked questions, product specifications, and local business details acts as a machine-readable translation layer, substantially increasing the likelihood of inclusion in rich results and AI citations 123661.
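As an illustration of the structured data layer, the following sketch builds schema.org `FAQPage` markup as JSON-LD and wraps it in a `<script type="application/ld+json">` element. The `FAQPage`, `Question`, and `Answer` types and the `mainEntity`, `name`, `acceptedAnswer`, and `text` properties come from the schema.org vocabulary; the helper function and sample content are hypothetical, and whether any markup earns a rich result or citation remains at the search engine's discretion.

```python
import json


def faq_page_jsonld(pairs: list[tuple[str, str]]) -> dict:
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Hypothetical FAQ content; the answer leads with a concise definition.
markup = faq_page_jsonld([
    ("What is search intent?",
     "Search intent is the goal a user is trying to accomplish with a query."),
])

# Wrap the JSON-LD in a script element for the page's HTML.
# Note: json.dumps does not escape "</", so answers must not contain "</script>".
script_tag = (
    '<script type="application/ld+json">'
    + json.dumps(markup, indent=2)
    + "</script>"
)
print(script_tag)
```

Generating the markup from the same source data as the visible FAQ text helps keep the two in sync, which matters because markup that does not match on-page content is generally ineligible for rich results.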

Producing derivative content that merely synthesizes or paraphrases existing search results is explicitly classified as low-effort content under the updated Quality Rater Guidelines 133538. To satisfy intent, particularly exploratory and comparative research intent, brands must optimize heavily for Information Gain. Information Gain refers to the unique, net-new value a document introduces to the web ecosystem that cannot be sourced elsewhere. This requires embedding proprietary data, original survey results, hands-on testing methodologies, specific case studies, and nuanced expert opinions that challenge or advance industry consensus 2130.
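A rough editorial check for derivative drafts is to measure how much of a draft's wording already appears in the pages it would compete with. The sketch below is a toy lexical proxy of our own construction, not how Google or any other search engine scores Information Gain: it computes the share of a draft's three-word shingles that appear in none of the supplied documents. Lexical novelty is not factual novelty, so a high score only rules out close paraphrase; it does not prove the draft adds new data or insight.

```python
import re


def shingles(text: str, n: int = 3) -> set[tuple[str, ...]]:
    """Lowercased word n-grams ("shingles") of a text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def novelty_ratio(draft: str, existing: list[str], n: int = 3) -> float:
    """Share of the draft's shingles absent from every existing document.

    A toy lexical proxy for illustration only; 0.0 means fully derivative wording.
    """
    draft_sh = shingles(draft, n)
    if not draft_sh:
        return 0.0
    seen = set().union(*(shingles(doc, n) for doc in existing))
    return len(draft_sh - seen) / len(draft_sh)


# Hypothetical competing page and two hypothetical drafts.
existing = ["Search intent is the goal behind a query."]
paraphrase = "Search intent is the goal behind a query"
original = "Our survey of 400 marketers found intent mismatch on pricing pages"

print(novelty_ratio(paraphrase, existing))  # 0.0
print(novelty_ratio(original, existing))    # 1.0
```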

Furthermore, single pages must be woven into comprehensive, interconnected topic clusters. A user entering a multi-turn conversational search session will iteratively refine their queries over time 10. A website that effectively interlinks an overarching foundational guide to highly specific, granular sub-topics provides a clear semantic map. Search algorithms utilize this internal linking structure to assess deep topical authority, rewarding domains that provide comprehensive journeys rather than isolated answers 626364.
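One common way to implement a topic cluster is a hub-and-spoke layout in which the foundational (pillar) page links to every sub-topic page and each sub-topic links back to the pillar. The sketch below audits an internal link map against that convention. The hub-and-spoke rule, the URLs, and the `links` mapping are illustrative assumptions; they do not describe how search algorithms actually evaluate topical authority.

```python
def missing_cluster_links(
    pillar: str, subtopics: list[str], links: dict[str, set[str]]
) -> list[tuple[str, str]]:
    """Return (source, target) pairs missing from a hub-and-spoke topic cluster.

    `links` maps each URL to the set of URLs it links to internally.
    """
    missing = []
    for page in subtopics:
        if page not in links.get(pillar, set()):
            missing.append((pillar, page))  # pillar should link down to each sub-topic
        if pillar not in links.get(page, set()):
            missing.append((page, pillar))  # each sub-topic should link back up
    return missing


# Hypothetical internal link map for one cluster.
links = {
    "/crm-guide": {"/crm-guide/pricing", "/crm-guide/integrations"},
    "/crm-guide/pricing": {"/crm-guide"},
    "/crm-guide/integrations": set(),
    "/crm-guide/migration": {"/crm-guide"},
}
subtopics = ["/crm-guide/pricing", "/crm-guide/integrations", "/crm-guide/migration"]
print(missing_cluster_links("/crm-guide", subtopics, links))
# [('/crm-guide/integrations', '/crm-guide'), ('/crm-guide', '/crm-guide/migration')]
```

Links between sibling sub-topics are not required by this check; a fuller audit could also flag orphaned pages that no other page in the cluster links to.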

The architecture of search intent has irreversibly evolved from a simplistic transactional exchange of keywords for hyperlinks into an intricate psychological and algorithmic negotiation. In 2026, search engines are engineered to decode conversational nuances, multi-platform discovery behaviors, and fractured objectives in order to deliver direct, highly synthesized outcomes. Matching this intent is the single most important ranking factor because it is the fundamental metric by which the algorithms themselves are evaluated, trained, and optimized. Organizations that persist in tracking raw traffic volume and optimizing for keyword density will see steadily diminishing returns, falling victim to intent mismatches, poor engagement metrics, and the risk of being replaced outright by AI-generated answers. Conversely, brands that meticulously align their content architecture with specific user objectives, by investing in demonstrable E-E-A-T, structuring data for precise AI extraction, and providing genuine information gain, will secure enduring visibility in the interfaces that govern the modern digital economy.