Impact of artificial intelligence on student learning outcomes

Introduction

The integration of generative artificial intelligence into educational ecosystems since the public release of large language models in late 2022 has prompted a fundamental reevaluation of pedagogical methodologies, learning metrics, and cognitive development. As artificial intelligence models rapidly advance to match or exceed human performance in domains ranging from reading comprehension and mathematics to scientific reasoning and software engineering, the central inquiry for educators and policymakers has shifted toward understanding the empirical impact of these tools on human learning 12. The academic debate is currently defined by two opposing trajectories: the potential for artificial intelligence to dramatically accelerate learning through personalized, adaptive tutoring, and the compounding risk that it may induce cognitive atrophy by offloading critical thinking tasks to algorithmic systems 345.

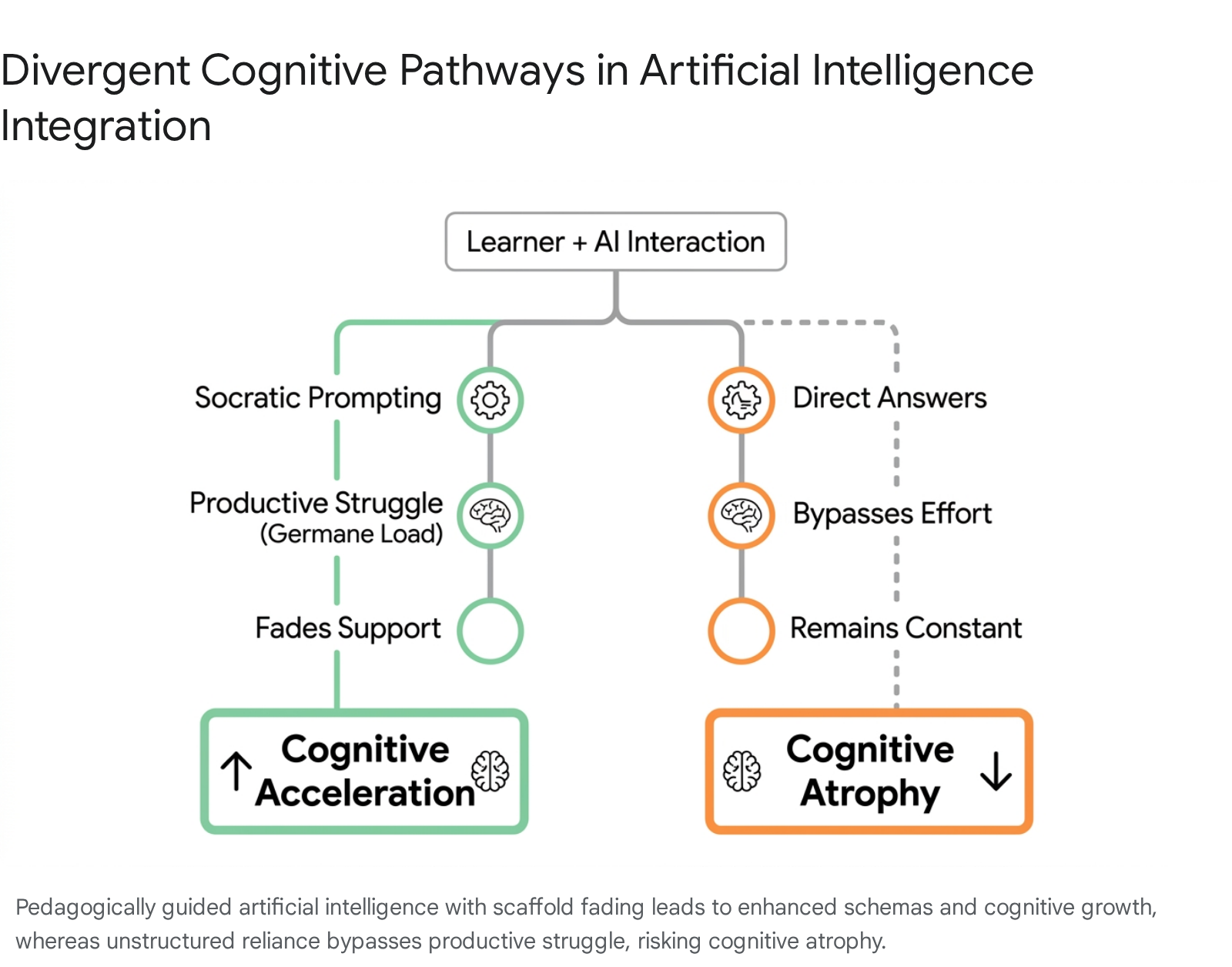

Research aggregated between 2023 and early 2026 indicates that the educational impact of artificial intelligence is highly contingent on the nature of its implementation. When utilized as an unstructured utility for task completion, generative artificial intelligence frequently bypasses the cognitive effort requisite for deep learning, leading to a phenomenon documented in neuroscientific literature as "cognitive offloading" or "artificial intelligence-chatbot induced cognitive atrophy" 678. Conversely, when embedded within structured pedagogical frameworks - such as Socratic intelligent tutoring systems - these tools demonstrate substantial positive effects on learning gains, knowledge transfer, and student engagement 9101110.

Compounding these developmental questions are profound infrastructural disparities and historical educational disruptions. The expansion of artificial intelligence in education coincides directly with the ongoing recovery from the historic learning losses incurred during the COVID-19 pandemic, as evidenced by precipitous global declines in standardized testing metrics 1311. Furthermore, the uneven distribution of digital infrastructure and language-specific training data threatens to exacerbate a global "artificial intelligence divide" between the Global North and the Global South 1216. This dynamic raises urgent questions regarding educational equity, algorithmic bias, and the preservation of human-centric pedagogical paradigms in a rapidly digitizing era 1314.

Foundational Theoretical Frameworks in Cognitive Psychology

To evaluate whether students are learning more or less in the current technological paradigm, empirical observations must be anchored in foundational theories of cognitive psychology and learning science. The intersection of artificial intelligence and cognitive development is primarily analyzed through the lenses of Cognitive Load Theory, the Zone of Proximal Development, and the stages of human intellectual development.

Cognitive Load Theory and Extraneous Processing

Formulated by educational psychologist John Sweller in 1988, Cognitive Load Theory posits that human working memory is severely limited in capacity and duration, generally capable of holding only four to seven discrete items at a time 151617. Sweller theorized that instructional design must meticulously manage the cognitive burden placed on learners to facilitate the transfer of information from short-term memory into long-term memory schemas 16. Cognitive load is traditionally divided into three categories: intrinsic load, which relates to the inherent difficulty of the subject matter; extraneous load, which comprises the unnecessary burden imposed by poorly designed or disorganized instruction; and germane load, which represents the productive cognitive effort dedicated to processing material and constructing robust mental schemas 151723.

Artificial intelligence tools, particularly adaptive learning systems and intelligent tutoring platforms, exhibit a profound capacity to reduce extraneous cognitive load. They achieve this by parsing complex syntax, breaking down multifaceted concepts into granular steps, providing tailored explanations, and dynamically adjusting the difficulty of tasks to match the learner's real-time proficiency 181926. By minimizing the cognitive friction associated with deciphering instructions or navigating disorganized materials, artificial intelligence allows students to redirect their working memory toward mastering the core intrinsic material 23.

However, contemporary educational research warns that unstructured, open-ended use of generative artificial intelligence can inadvertently eliminate germane cognitive load - the "productive struggle" that is biologically necessary for learning. When a chatbot instantly generates an essay, summarizes a text, or solves a complex mathematical equation, it bypasses the effort-reward cycle critical to human cognitive development 20. Overcoming intellectual challenges naturally activates the brain's reward system, producing dopamine that reinforces motivation and engagement 20. By offering immediate, polished solutions, artificial intelligence reduces the learner's role to one of passive consumption, effectively denying them the cognitive exertion required to forge long-term memory 4720. The synthesis of information, which traditionally requires iterative refinement, evaluation, and sense-making, is offloaded to the machine, potentially stunting the development of higher-order analytical skills 47.

The Zone of Proximal Development and Scaffolding Mechanics

The tension between optimal assistance and cognitive offloading is best understood through Lev Vygotsky's sociocultural theory, particularly the concept of the Zone of Proximal Development. Vygotsky defined this zone as the cognitive distance between what a learner can achieve independently and what they can achieve under the guidance of a "more knowledgeable other," such as a teacher, peer, or, in the modern context, an intelligent tutoring system 282921. This concept gave rise to the pedagogical strategy of scaffolding, introduced in the 1970s by David Wood, Jerome Bruner, and Gail Ross 2931. Scaffolding dictates that temporary instructional support must be provided to the learner and then systematically withdrawn, or "faded," as the learner's competence increases 282931.

Artificial intelligence tutoring systems frequently operate effectively within the Zone of Proximal Development, acting as a tireless more knowledgeable other that guides students through complex problem-solving routines 2821. Yet a critical vulnerability in many current artificial intelligence implementations is the complete absence of scaffold fading. Unlike highly trained human educators, who calibrate their assistance contingently - offering more help after a failure and less help after a success - many generative artificial intelligence models provide the same level of comprehensive, direct assistance indefinitely 2831.

Research from 2024 and 2025 indicates that without explicit fading protocols, artificial intelligence tools risk becoming permanent cognitive crutches. A 2024 meta-analysis of scaffolding interventions demonstrated that educational programs with explicit fading protocols produced significantly higher effect sizes ($d = 0.71$) compared to environments where pedagogical scaffolds remained constant ($d = 0.32$) 28. Consequently, if artificial intelligence systems are not explicitly engineered to gradually demand more independent cognitive exertion from the student, they fail to facilitate the vital transfer of knowledge from the external technological plane to the learner's internal cognitive plane 28.
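The contingent rule described above (more help after a failure, less after a success) can be illustrated with a minimal Python sketch. The support levels, class name, and one-step update rule are hypothetical choices made for illustration; they are not taken from any of the cited systems or studies.

```python
from dataclasses import dataclass

# Hypothetical support levels, ordered from least to most help.
SUPPORT_LEVELS = ["no_hint", "guiding_question", "partial_step", "worked_example"]


@dataclass
class FadingScaffold:
    """Contingent scaffolding: step support down after success, up after failure."""

    level: int = len(SUPPORT_LEVELS) - 1  # assumption: start with full support

    def update(self, solved: bool) -> str:
        if solved:
            # Fade: withdraw one level of support, never below "no_hint".
            self.level = max(self.level - 1, 0)
        else:
            # Restore one level of support, never above "worked_example".
            self.level = min(self.level + 1, len(SUPPORT_LEVELS) - 1)
        return SUPPORT_LEVELS[self.level]


def run_session(outcomes):
    """Return the support level offered after each attempt in a session."""
    scaffold = FadingScaffold()
    return [scaffold.update(solved) for solved in outcomes]


if __name__ == "__main__":
    # Two successes, one failure, then three successes.
    print(run_session([True, True, False, True, True, True]))
```

A system without fading would correspond to returning `"worked_example"` on every attempt regardless of the outcome, which is the constant-scaffold condition that the meta-analysis associates with the smaller effect size.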

Intellectual Development and Epistemological Disruption

Jean Piaget's foundational theory of cognitive development outlines the progression of human intelligence from elementary sensorimotor reflexes through the preoperational and concrete operational stages, culminating in formal operational thought, which entails abstract hypothesis testing, deductive reasoning, and scientific inquiry 222334. Piaget emphasized that cognitive structures, or schemas, are built organically through assimilation and accommodation - processes that require the learner to actively interact with, manipulate, and resolve disequilibrium within their environment 3435.

The advent of highly accessible generative artificial intelligence creates a novel epistemological challenge regarding how developing minds comprehend the world and construct foundational knowledge. When learners are repeatedly exposed to artificial intelligence systems that provide immediate, authoritative answers, they are often deprived of the contextual background knowledge and the iterative, sometimes frustrating, discovery process 20. Educational psychologists highlight that understanding the historical context or the foundational "why" behind a concept creates the necessary cognitive hooks for long-term retention 20. Bypassing this natural entry point through direct algorithmic answers undermines the sustainability of knowledge acquisition 20. Furthermore, Piaget warned against the inclination to artificially accelerate cognitive milestones 22; the immediacy of artificial intelligence risks forcing superficial engagement with advanced concepts before the foundational schemas necessary to truly process them have been firmly established.

Quantitative Efficacy of Intelligent Tutoring Systems

Evaluating whether students are learning more or less in the current landscape requires a synthesis of robust meta-analyses, randomized controlled trials (RCTs), and large-scale standardized testing data. The empirical evidence aggregated up to early 2026 demonstrates a stark divergence in outcomes: purpose-built, pedagogically guided artificial intelligence tools significantly boost academic performance, whereas general-purpose, unstructured usage yields highly mixed or detrimental results.

Meta-Analytical Evaluations of Generative Artificial Intelligence

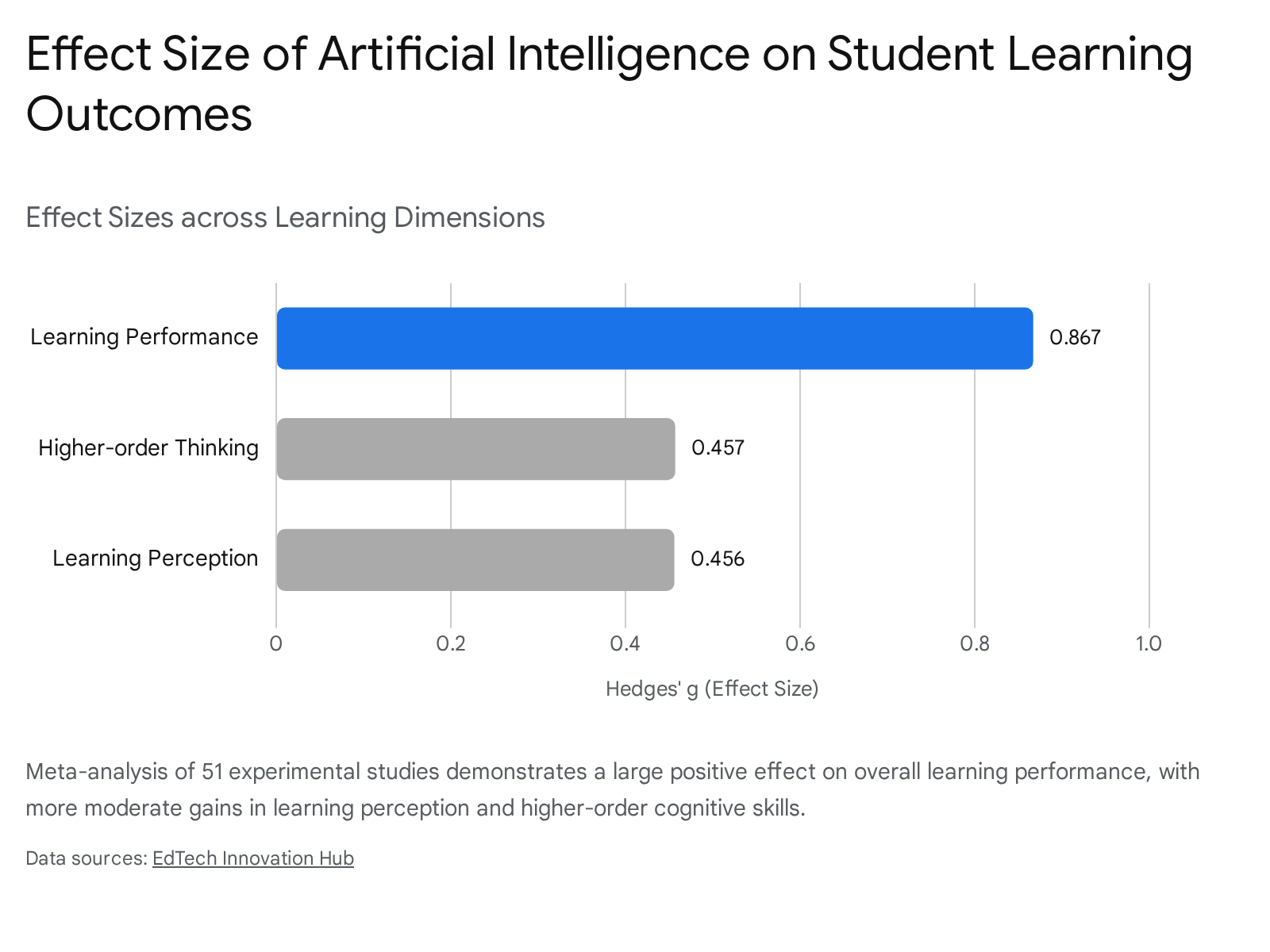

A comprehensive 2025 meta-analysis published in Humanities and Social Sciences Communications analyzed 51 experimental and quasi-experimental studies, drawing from an initial pool of over 6,600 research papers, to measure the distinct effects of ChatGPT on educational outcomes 9. The rigorous analysis revealed that the integration of ChatGPT demonstrated a large positive effect size on raw student learning performance ($g = 0.867$) 9. However, the data revealed notably smaller, more moderate positive effects on learning perception ($g = 0.456$) and higher-order thinking capabilities ($g = 0.457$) 9.
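For readers unfamiliar with the $g$ statistic, the following sketch shows how Hedges' $g$ is conventionally computed for a single two-group study: a standardized mean difference using the pooled standard deviation, multiplied by a small-sample correction. The input numbers are invented for illustration and are not drawn from the meta-analysis, which pools many such study-level estimates.

```python
import math


def hedges_g(mean_t, mean_c, sd_t, sd_c, n_t, n_c):
    """Standardized mean difference with Hedges' small-sample correction."""
    df = n_t + n_c - 2
    # Pooled standard deviation across treatment and control groups.
    pooled_sd = math.sqrt(((n_t - 1) * sd_t**2 + (n_c - 1) * sd_c**2) / df)
    cohens_d = (mean_t - mean_c) / pooled_sd
    # Common approximation of the correction factor J.
    correction = 1 - 3 / (4 * df - 1)
    return cohens_d * correction


if __name__ == "__main__":
    # Hypothetical study: 30 students per arm, test scores out of 100.
    g = hedges_g(mean_t=78, mean_c=70, sd_t=10, sd_c=10, n_t=30, n_c=30)
    print(round(g, 3))
```

Because the correction factor is below 1, $g$ is always slightly smaller in magnitude than the uncorrected Cohen's $d$, and the two converge as sample sizes grow.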

The duration and specific context of artificial intelligence use proved to be critical moderating variables. The researchers identified that the optimal duration for artificial intelligence educational interventions was a four- to eight-week period 9. Shorter interventions frequently produced negligible results as students struggled to adapt to the interface, while longer-term, unguided usage occasionally exhibited signs of diminishing returns, a trend the authors attributed to creeping over-reliance and cognitive laziness 9. Furthermore, performance gains were most statistically significant in STEM-related courses and in problem-based learning environments where the tool acted as a structured intelligent tutor 9. Conversely, the efficacy of generative artificial intelligence dropped significantly in open-ended, project-based scenarios that required sustained human collaboration and creative synthesis 9. A separate systematic review of intelligent tutoring systems in K-12 education corroborated these trends, noting substantial performance gains ranging from 15% to 35% over traditional methods when systems effectively emulated responsive, one-on-one human tutoring 1836.

Socratic Pedagogy in Randomized Controlled Trials

The most rigorously tested and highly successful implementations of artificial intelligence in education are fine-tuned tutoring systems specifically designed to emulate Socratic human pedagogy rather than simply functioning as instant answer generators. Several landmark randomized controlled trials published between 2024 and 2026 supply robust empirical evidence that pedagogically structured artificial intelligence often outperforms traditional instruction modalities.

At Harvard University, an RCT comparing an artificial intelligence-powered tutor against an active learning class in a university-level physics course yielded striking results. The study found that students utilizing the artificial intelligence tutor learned more than twice as much in less time compared to the control group participating in high-quality active learning 1036. Because the artificial intelligence system was explicitly designed around pedagogical best practices - prompting students rather than telling them - it yielded significantly higher student engagement and motivation metrics compared to the human-led active learning cohort 10.

Similarly, an RCT conducted by researchers from Google and Eedi Labs in UK secondary schools tested LearnLM, a generative model fine-tuned specifically for pedagogical interaction 1124. Involving 165 students aged 13 to 15, the study revealed that students guided by the supervised artificial intelligence tutor outperformed those receiving unassisted human tutoring. Specifically, students interacting with the artificial intelligence tutor demonstrated a 66.2% success rate in learning transfer to novel, subsequent mathematical topics, compared to 60.7% for those guided solely by human tutors - a statistically significant 5.5 percentage point increase 1124. The supervising human tutors reported that the artificial intelligence drafted highly effective Socratic questions that encouraged deeper reflection, to the extent that 76.4% of the machine-generated responses were approved by expert human tutors with zero or minimal edits 1124.
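The distinction between a percentage-point difference and a relative improvement is easy to blur when reading results like these. A short sketch using the two transfer rates reported above makes the arithmetic explicit; the function name is illustrative only.

```python
def compare_rates(treatment_pct, control_pct):
    """Return (percentage-point difference, relative change in percent)."""
    point_diff = treatment_pct - control_pct
    relative_change = point_diff / control_pct * 100
    return round(point_diff, 1), round(relative_change, 1)


if __name__ == "__main__":
    # Transfer success rates reported for the supervised AI tutor vs. human tutors alone.
    print(compare_rates(66.2, 60.7))
```

The 5.5 percentage point gap therefore corresponds to a relative improvement of roughly 9% over the human-only baseline.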

Another prominent platform, Khan Academy's Khanmigo, was evaluated across a massive sample of approximately 350,000 students in grades 3 through 8 using the standardized MAP Growth Assessment during the 2022-2023 academic year 25. The subsequent efficacy results published in 2024 indicated that students who used the platform as recommended (a minimum of 30 minutes per week, or 18+ hours over the duration of the school year) achieved approximately 20% higher-than-expected learning gains 25. For statistical context, the effect size achieved in this massive deployment was 0.36 25. Qualitative data gathered from university-level implementations of Khanmigo further affirmed these results, noting that students deeply valued the step-by-step guidance provided by the model, though crucially, many perceived it strictly as a supplementary learning tool rather than a wholesale replacement for human instruction 3940.

The comparative efficacy of various prominent artificial intelligence implementations and their respective methodologies is summarized in Table 1 below.

| Implementation / Study Focus | Methodology & Sample Size | Intervention Context | Key Findings on Learning Outcomes |
|---|---|---|---|
| Hangzhou Normal University (2025) 9 | Meta-analysis (51 filtered studies) | General ChatGPT integration across multiple disciplines | Large overall effect on learning performance ($g=0.867$); moderate effect on higher-order thinking ($g=0.457$). |
| Khan Academy / Khanmigo (2024) 25 | Large-scale efficacy study (~350,000 K-12 students) | GenAI Tutor integrated with MAP Accelerator | ~20% higher-than-expected learning gains for active users; statistical effect size of 0.36. |
| Harvard University (2024) 1036 | Randomized Controlled Trial (194 students) | Undergraduate Physics: AI Tutor vs. Active Learning | AI cohort learned >2x as much in less time; reported significantly higher motivation levels. |
| Google LearnLM (2025) 1124 | Randomized Controlled Trial (165 secondary students) | Mathematics: Supervised AI vs. Human Tutor alone | AI support increased the likelihood of solving novel problems by 5.5 percentage points. |
| Stanford Tutor CoPilot (2026) 24 | Randomized Controlled Trial | AI-assisted human tutors vs. Unassisted tutors | 4 percentage point increase in topic mastery; largest gains observed among novice human tutors. |

The Impact on Writing, Reading, and Higher-Order Cognition

While structured tutoring systems show definitive quantitative benefits in mathematics and problem-solving scenarios, the impact of general generative artificial intelligence on unstructured writing, reading comprehension, and creative synthesis presents a highly nuanced reality. Artificial intelligence models excel at syntactic refinement and grammar correction but pose significant risks to the authenticity of student voice and the vital neural processing required for deep textual comprehension.

Syntactic Refinement and Cohort-Level Homogenization

The integration of artificial intelligence tools into academic writing processes yields undeniable, immediate improvements in surface-level text quality. A systematic review of literature published between 2023 and 2025 in the field of social sciences demonstrated that artificial intelligence assistance significantly enhances text coherence, discursive organization, lexical richness, and grammatical accuracy 26.

However, deep cohort-level linguistic analyses reveal a concerning homogenizing effect on student writing over time. A major analysis led by the University of Warwick examined 4,820 student-authored reports comprising 17 million words submitted over a ten-year period. The researchers found a dramatic, unnatural shift in writing style occurring immediately post-2022 27. Since the widespread availability of ChatGPT, student writing has become significantly more formal and overtly positive in sentiment 27. This shift mirrors the well-documented safety and alignment training in major generative artificial intelligence systems, which are explicitly designed to produce polite, constructive, and highly positive responses regardless of the prompt's underlying substance 27.

The Warwick study noted an unprecedented increase in the use of specific vocabulary frequently associated with artificial intelligence generation - such as the words "delve" and "intricate" - which continued until 2024 27. Notably, the use of these specific algorithmic markers plummeted in 2025, suggesting a behavioral shift where students began actively moderating their text or prompting the artificial intelligence differently to avoid detection algorithms, while still heavily relying on the underlying technology 27. Researchers warn that while these texts appear superficially more sophisticated, this does not correlate with actual improvements in the students' underlying writing abilities, nor does it indicate an enhancement of critical thinking 2627. Instead, it suggests a dilution of authentic student identity and voice, resulting in highly polished but intellectually hollow academic submissions 26.

Neural Processing and the Risk of Cognitive Atrophy

The primary critique of unrestricted artificial intelligence in language arts and humanities education is its propensity to foster diminished metacognitive engagement. When students consistently over-rely on large language models to generate ideas, draft essays, or summarize complex academic readings, they experience a form of "cognitive laziness" that deprives them of the authentic, meaningful learning experiences necessary for deep intellectual development 826.

Empirical studies exploring the qualitative differences between human writing and artificial intelligence-assisted writing note that while artificial intelligence excels in grammar and vocabulary, it frequently fails to produce deep argument structures, contextual richness, and evaluative depth 26. Furthermore, relying on artificial intelligence to summarize academic readings has been shown to substantially impair long-term knowledge retention. A laboratory experiment testing the effects of LLM-assisted study methods on college-educated participants found that complete reliance on artificial intelligence for writing tasks led to a 25.1% reduction in comprehension accuracy 628. The researchers concluded that chatbots serve as a "cognitive crutch" that weakens the durable memory formation usually achieved through active, unassisted synthesis 48. The resulting degradation of long-term performance and depth of knowledge has been formally described by researchers as artificial intelligence-chatbot induced cognitive atrophy (AICICA) 78.

Strategic Integration and the Timing of Algorithmic Intervention

Neuroscientific research strongly supports the hypothesis that unstructured artificial intelligence use fundamentally alters cognitive engagement at a biological level. A rigorous study conducted by researchers at the Massachusetts Institute of Technology, Wellesley College, and the Massachusetts College of Art and Design physically measured the brain activity of participants constructing essays 644. The researchers found that participants who constructed essays with the full, immediate assistance of ChatGPT exhibited significantly lower brain activity during the task compared to those writing independently 6. Furthermore, the artificial intelligence-assisted writers were markedly less capable of recalling the content they had just produced and reported feeling less ownership over their final work 6.

Crucially, the MIT study highlighted the importance of when the technology is introduced. When participants were required to write essays independently first - struggling through the germane cognitive load of ideation and structuring - and only utilized artificial intelligence subsequently for refinement and expansion, the neurological results inverted 6. This group of writers exhibited a distinct increase in neural connectivity and overall brain activity 6. These findings suggest that the strategic, delayed introduction of artificial intelligence tools - specifically following initial, self-driven cognitive effort - can enhance engagement and neural integration rather than causing cognitive atrophy 6. From an educational standpoint, this research implies that artificial intelligence is better positioned as a collaborative editor than as a primary author 6.

Macro-Level Achievement and Standardized Assessment Trends

While isolated experimental studies reveal the granular mechanics of artificial intelligence interaction, macro-level academic performance metrics are necessary to understand cohort-wide trends. Recent data reflects continued growth and stabilization in several key academic areas despite - or potentially aided by - the proliferation of intelligent digital tools.

Post-Pandemic Baselines and the PISA 2022 Results

Any analysis of macro-level student achievement in the mid-2020s must acknowledge the global baseline established by the 2022 Programme for International Student Assessment (PISA). The 2022 results documented an unprecedented drop in global student performance, heavily skewing longitudinal data 1329. Compared to the pre-pandemic 2018 assessment, the OECD average fell by nearly 15 score points in mathematics and 10 points in reading 1329. To put this in perspective, no change over consecutive PISA assessments prior to 2018 had ever exceeded four points in mathematics 29. This precipitous decline is roughly equivalent to three-quarters of a year of lost learning 13. Consequently, one in four 15-year-olds across OECD nations was classified as a low performer in core subjects, struggling to use basic algorithms or interpret simple texts 13. Educational economists estimate that mathematics scores declined by an average of 14% of a standard deviation across roughly 70 countries, with learning losses directly correlating to the duration of physical school closures 11.
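The link between a score-point decline and "three-quarters of a year" can be made explicit with a short worked conversion. The sketch below assumes the rule of thumb, commonly cited alongside PISA results, that roughly 20 score points correspond to one year of schooling; that conversion factor is an assumption introduced here for illustration and is not stated in the text above.

```python
# Assumption: about 20 PISA score points per year of learning (rule of thumb).
POINTS_PER_YEAR = 20


def points_to_years(score_drop, points_per_year=POINTS_PER_YEAR):
    """Convert a score-point decline into approximate years of learning."""
    return score_drop / points_per_year


def points_to_months(score_drop, points_per_year=POINTS_PER_YEAR):
    """Same conversion, expressed in calendar months."""
    return points_to_years(score_drop, points_per_year) * 12


if __name__ == "__main__":
    print(points_to_years(15), points_to_months(15))  # mathematics decline
    print(points_to_years(10), points_to_months(10))  # reading decline
```

Under this assumption, the mathematics decline of about 15 points maps to 0.75 years (nine months) and the reading decline of about 10 points to half a year.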

Advanced Placement and SAT Cohort Data

Despite this dire global baseline, recent standardized testing data from the United States suggests a resilient recovery trajectory and expanding academic ambition, coinciding with the integration of digital study tools. Data from the College Board's Class of 2025 report demonstrates that participation and success in rigorous Advanced Placement (AP) coursework have expanded significantly 30. Nationally, 37.0% of public high school graduates in the class of 2025 took at least one AP Exam, up from 34.3% a decade prior 30. Crucially, this expansion in access did not dilute performance; the percentage of students scoring a 3 or higher on at least one AP Exam rose to 24.8% in 2025, up from 20.7% in 2015 30. Certain states, such as Massachusetts, led the nation with 35.8% of public school graduates scoring a 3 or higher 30. Specific subject areas also saw notable gains; for example, the psychometric analyses for AP Biology in 2025 found strong increases in content and skill mastery, with the percentage of students earning the top score of 5 increasing from 16% to 19% year-over-year 47.

Simultaneously, the fully digital, multistage adaptive SAT format - which was implemented broadly and stabilized by 2026 - has fundamentally altered the testing landscape 48. Following a period of test-optional policies, the SAT has firmly re-established itself as a critical admissions metric, with leading elite institutions reinstating mandatory test requirements 48. The national average score has stabilized at approximately 1029, with a notable narrowing of the traditional gap between Mathematics and Reading & Writing scores 48. Analysts attribute this stabilization partly to the fact that students are increasingly utilizing adaptive preparation platforms that successfully mirror the underlying algorithmic logic and format of the Digital SAT, thereby reducing test-day friction and extraneous cognitive load 48.
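In general terms, a multistage adaptive test routes each test-taker to a later module whose difficulty depends on performance in an earlier one. The sketch below illustrates only that general idea; the 60% threshold, the module labels, and the function name are hypothetical, and the actual routing and scoring rules used by the Digital SAT are not described in the sources cited here.

```python
def route_second_module(first_correct, first_total, threshold=0.6):
    """Pick the difficulty of the second module from first-module performance.

    The threshold is a made-up illustrative value, not an official cut-off.
    """
    if first_total <= 0:
        raise ValueError("first_total must be positive")
    share_correct = first_correct / first_total
    return "harder" if share_correct >= threshold else "easier"


if __name__ == "__main__":
    print(route_second_module(20, 27))  # strong first module
    print(route_second_module(10, 27))  # weaker first module
```

Preparation platforms that mirror this structure expose students to the same branching experience in practice, which is the mechanism the analysts cite for reduced test-day friction.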

Table 2 below outlines the recent shifts in major standardized assessment metrics.

| Assessment Framework | Cohort / Year Assessed | Key Performance Metric | Contextual Shift or Result |
|---|---|---|---|
| OECD PISA Assessment | 2022 vs. 2018 1329 | OECD Mathematics & Reading Averages | Unprecedented drop; Math fell by ~15 points, Reading by ~10 points (the math decline is roughly three-quarters of a year of lost learning). |
| Advanced Placement (AP) | Class of 2025 vs. 2015 30 | Participation Rate & Success Rate (Score 3+) | Participation grew from 34.3% to 37.0%; proportion scoring 3+ grew from 20.7% to 24.8% nationally. |
| Digital SAT Suite | 2026 Cohort 48 | National Average Score | Stabilized at ~1029; testing format fully transitioned to multistage adaptive digital models. |

The Intersection of Artificial Intelligence and Pandemic Learning Recovery

The disruption of the global academic calendar during the COVID-19 pandemic resulted in severe, compounding learning shortfalls, complicating the ability of educational researchers to cleanly isolate the specific effects of artificial intelligence adoption from post-pandemic recovery trends 114431. The economic stakes of this recovery are immense; macroeconomic projections suggest that unmitigated learning losses of this magnitude could result in a 6% dip in lifetime earnings for the affected cohort, translating to trillions of dollars in lost economic activity over the coming decades 32.

Compensatory Behaviors During School Closures

In the wake of these systemic disruptions, students organically adopted artificial intelligence and digital applications not merely as novelties, but as vital compensatory mechanisms to replace lost instructional time. Research utilizing gradient boosting regression and machine learning models on massive public datasets has demonstrated how students utilized artificial intelligence-powered learning apps to mitigate learning loss across a tripartite spectrum: the quantity of time spent, the pattern of engagement, and the pace of curriculum progression 3151.

Studies tracking student behavior immediately following regional school closures observed a predictable initial drop in technology use among students living in high-threat outbreak epicenters 51. However, longitudinal data revealed that usage eventually rebounded strongly. Over time, students in these heavily impacted zones engaged with artificial intelligence education applications more frequently (quantity), established more regular, independent study habits (pattern), and managed to restore their curriculum progression to match cohorts residing outside the outbreak zones (pace) 51. Thus, artificial intelligence tools functioned as essential scaffolding for students operating entirely outside traditional classroom environments, providing a degree of resilience against total educational stagnation 3151.

Methodological Challenges in Educational Research

Despite these compensatory benefits, researchers face severe methodological challenges when attempting to measure the exact impact of artificial intelligence in a post-pandemic environment. Variables such as digital inequity, mental health deterioration, and familial economic stress heavily confound artificial intelligence efficacy data 333435. For instance, a detailed study examining elementary students in southern Italy found that grade III students encountered significantly greater difficulties in spelling and reading comprehension compared to both older and younger cohorts 36. This suggests that remote learning and unguided digital tool use disproportionately disrupted the critical initial stages of foundational skill acquisition 36. Consequently, researchers must carefully control for the specific age and developmental stage of the cohort when assessing whether a digital tool is accelerating learning or masking a fundamental deficit 36.

Artificial Intelligence Model Capabilities and Benchmark Performance

To fully grasp the shifting dynamic in educational technology, one must account for the exponential improvements in the underlying artificial intelligence models themselves. The capabilities of the systems available to students in 2026 are vastly superior to those available at the launch of ChatGPT in 2022, altering the baseline of what tasks can be effectively offloaded.

Progression on Complex Academic Benchmarks

Data from the 2025 Stanford Human-Centered Artificial Intelligence (HAI) Index reveals unprecedented gains in artificial intelligence performance on highly complex academic benchmarks 2. In 2023, researchers introduced rigorous benchmarks such as SWE-bench (software engineering) and GPQA (graduate-level reasoning) to test the limits of frontier models 2. The progression was staggering: on SWE-bench, artificial intelligence systems could solve a mere 4.4% of complex coding problems in 2023; by 2024, that figure had jumped to 71.7% 2. Similarly, performance on the GPQA benchmark saw a massive gain of 48.9 percentage points within a single year 2.

Convergence of Frontier Models and Open-Weight Systems

Furthermore, the technological landscape is democratizing rapidly through the convergence of model capabilities. According to the Stanford AI Index, the performance gap between the top-tier closed-weight models (such as those from OpenAI) and open-weight models (which can be deployed locally and modified by researchers) nearly vanished, shrinking from an 8.04% difference in early 2024 to just 1.70% by early 2025 2. The gap between leading US and Chinese models also closed almost entirely across major academic benchmarks 2. Crucially for educational equity, these capabilities are being compressed into much smaller, highly efficient models. In 2022, achieving a 60% score on the MMLU benchmark required a massive 540-billion-parameter model; by 2024, the same threshold was achieved by a 3.8-billion-parameter model, representing a 142-fold reduction in parameter count 2. This miniaturization allows advanced artificial intelligence to be deployed on local, low-end devices, a development critical for expanding access in resource-constrained environments.
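The headline figures in this paragraph follow from simple arithmetic on the numbers quoted above, as the sketch below shows. The helper names are illustrative; note that the 142-fold figure is a ratio of parameter counts.

```python
def fold_reduction(before, after):
    """How many times smaller `after` is than `before`."""
    return before / after


def gap_change(gap_before_pct, gap_after_pct):
    """Narrowing of a performance gap, in percentage points."""
    return gap_before_pct - gap_after_pct


if __name__ == "__main__":
    # Parameters (in billions) needed to reach the 60% MMLU threshold.
    print(round(fold_reduction(540, 3.8)))
    # Closed-weight vs. open-weight gap, early 2024 vs. early 2025.
    print(round(gap_change(8.04, 1.70), 2))
```

The ratio 540 / 3.8 is approximately 142, and the closed-to-open gap narrowed by 6.34 percentage points over the year.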

Global Infrastructural Disparities and the Artificial Intelligence Divide

While smaller models give artificial intelligence the theoretical potential to democratize access to elite-level tutoring, current deployment patterns risk exacerbating existing global educational inequalities. The socioeconomic and structural divide between the Global North and the Global South largely determines who actually benefits from artificial intelligence integration, a phenomenon widely termed the "AI divide" 121637.

Technological Exclusion in the Global South

The baseline prerequisites for accessing state-of-the-art generative artificial intelligence - reliable electricity, high-speed broadband connectivity, capable hardware, and basic digital literacy - remain absent for nearly 4 billion people globally 16. According to the Stanford HAI Index, 24.7% of working-age adults in the Global North use artificial intelligence tools, compared to only 14.1% in the Global South, a gap of 10.6 percentage points that continues to widen 16. In sub-Saharan Africa, physical infrastructure is a binding constraint: only 40% of primary schools and 50% of lower secondary schools have internet connectivity, which sharply limits the potential reach of any digital education initiative 38.

Furthermore, linguistic and cultural biases in major foundation models disproportionately marginalize students in developing nations. Models trained predominantly on English-language internet corpora perform poorly in thousands of indigenous and regional languages, such as Yoruba, Swahili, or Quechua, frequently generating inaccurate or nonsensical outputs 16. The mismatch extends beyond language to cultural, historical, and geographical context; artificial intelligence systems trained heavily on Global North data often fail to address localized ecological challenges or medical data relevant to students in the Global South 1639. Without substantial, targeted investment in local data collection and in mobile-first, low-bandwidth artificial intelligence applications, the productivity and educational gains of the Global North risk becoming a lasting competitive disadvantage for the rest of the world 1639.

Regional Policy and Implementation in Southeast Asia

Despite these infrastructural hurdles, students, educators, and policymakers in the Global South exhibit high levels of optimism and organic adoption where access permits. A 2025 survey of teenagers across 19 countries found that, conditional on access, teenagers in the Global South use artificial intelligence more frequently than their peers in the Global North and hold significantly more positive views of its potential to improve education, healthcare, and job prospects over the next 25 years 40.

Governments in emerging economies are moving to formalize artificial intelligence education to harness this optimism. In Southeast Asia, localized artificial intelligence integrations are expanding rapidly at the state level. For example, Vietnam's Ministry of Education and Training has unveiled a pilot curriculum framework for artificial intelligence education in general schools, aiming for a national rollout in early 2026 41. Preliminary surveys conducted by the Vietnam Institute of Educational Sciences indicated that 87% of surveyed students were aware of artificial intelligence and 76% of teachers had already used it in classrooms, but respondents reported major challenges regarding data privacy, inadequate equipment, and limited technical guidance 4162. The Vietnamese pilot will test three delivery models: integrating artificial intelligence into existing subjects, teaching standalone topics, and organizing experiential clubs 41. Similarly, under Indonesia's 2025 regulations, artificial intelligence has been officially incorporated into the national primary and secondary education curriculum as an elective subject 63. Malaysia has also taken a national approach with its AI Technology Action Plan 2026-2030, aimed at embedding artificial intelligence into education and systematically upskilling teachers 63.

Human Rights and UNESCO Governance Directives

International governing bodies have responded to these rapid, fragmented developments by issuing comprehensive global frameworks aimed at protecting student rights and ensuring equitable access 38424344. In 2025, UNESCO released an extensive 160-page global report titled AI and the Future of Education: Disruptions, dilemmas and directions during its Digital Learning Week in Paris 1444.

The UNESCO report cautions heavily against the "hyper-personalization" of learning 44. While adaptive algorithms can tailor content to individual speeds, over-reliance on this model risks reducing education to isolated, machine-mediated experiences, stripping away the critical social, collaborative processes inherent to human learning 1444. To combat the expanding digital divide, UNESCO's frameworks advocate for a 5C approach: coordination, content, capacity, connectivity, and cost 14. These directives promote human-centered, human-rights-based artificial intelligence policies that prioritize algorithmic literacy, rigorously protect student data from commercial exploitation, and position human teachers - not algorithms - as the irreplaceable backbone of global education systems 144344.

Conclusion

The inquiry into whether students are learning more or less in the contemporary technological era does not yield a binary conclusion, but rather a highly contingent one based on pedagogical implementation and socioeconomic access. The evidence reviewed here indicates that when artificial intelligence is used as a structured, Socratic intelligent tutor that demands cognitive exertion, students learn significantly more, achieving greater concept mastery in less time while exhibiting high levels of engagement. Conversely, when artificial intelligence is deployed as an unstructured tool for simple task completion - allowing students to bypass the effortful cognitive processing required for memory retention and schema building - learning outcomes deteriorate, and cognitive atrophy becomes a measurable risk.

Furthermore, the macro-level impact of artificial intelligence on global education is heavily mediated by existing infrastructural disparities and the lingering shadow of pandemic-era learning loss. While technological capabilities are compounding and frontier models are becoming highly efficient, the lack of fundamental digital infrastructure, reliable connectivity, and linguistic localization in the Global South threatens to widen an already perilous educational divide. Ultimately, realizing the profound promise of artificial intelligence in education requires a deliberate shift from passive technological consumption to structured, human-guided integration, ensuring that algorithms serve to scaffold human cognitive development rather than permanently replace it.