The impact of artificial intelligence on the meaning of work

Introduction

The integration of artificial intelligence into the modern workplace has precipitated a profound structural and psychological transformation across the global economy. While early economic analyses focused primarily on the quantitative displacement of human labor, recent empirical data and theoretical frameworks indicate that the most disruptive impact of artificial intelligence lies in the qualitative alteration of work itself 1. Forecasts suggest that over the near term, an estimated 50% to 55% of occupations in the United States will be significantly reshaped by artificial intelligence technologies, fundamentally shifting the expectations surrounding how human workers execute tasks, create value, and derive psychological satisfaction from their employment 1.

This transformation has prompted organizational psychologists, labor economists, and corporate governance scholars to reframe the current technological transition. Rather than interpreting the shift as a pure labor crisis, evidence suggests the integration of generative algorithms and algorithmic management represents a structural "meaning crisis" 2. As computational systems increasingly assume responsibility for routine cognitive tasks, complex data processing, and foundational content generation, the traditional mechanisms through which employees derive purpose - such as task mastery, visible effort-to-output ratios, and autonomous decision-making - are being dismantled 2. The core inquiry for contemporary workforce strategy is how human workers construct meaning when machines can replicate or exceed their cognitive output, and what new paradigms of value will replace historical definitions of human labor.

The implications of this shift extend far beyond individual psychological well-being or localized organizational efficiency. Work functions as a central pillar of the global social contract. In both advanced economies and developing nations, employment provides social identity, structures temporal routines, and underpins institutional trust 345. As artificial intelligence accelerates productivity, it simultaneously fragments attention, complicates the attribution of authorship, and alters the epistemic power dynamics between management and labor 677. The following analysis examines how artificial intelligence is changing the meaning of work through shifts in core job characteristics, the erosion of psychological ownership, the paradox of productivity and burnout, and the restructuring of human-centric labor models.

The Evolution of Job Characteristics

For decades, organizational psychology has relied heavily on the Job Characteristics Model (JCM) to understand how job design influences worker motivation, satisfaction, and performance 8910. The model identifies five core dimensions of meaningful work: skill variety, task identity, task significance, autonomy, and feedback 81112. When these dimensions are optimized, they trigger critical psychological states - specifically, the experienced meaningfulness of work, responsibility for outcomes, and knowledge of results 811. The deployment of artificial intelligence serves as a powerful job design intervention that alters all five dimensions, a phenomenon scholars term "Core Task Characteristics Substitution" 8.
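
For readers who want the model in quantitative form, Hackman and Oldham's original formulation of the JCM combines the five dimensions into a single Motivating Potential Score (MPS). The formula is not given in the sources cited here and is included only as a sketch. The symbols are shorthand introduced for this illustration: SV (skill variety), TI (task identity), TS (task significance), A (autonomy), and F (feedback), each typically rated on a survey scale such as 1 to 7.

$$
\mathrm{MPS} = \frac{\mathit{SV} + \mathit{TI} + \mathit{TS}}{3} \times A \times F
$$

Because autonomy and feedback enter multiplicatively, a rating near the bottom of the scale on either one pulls the overall score down sharply, whereas a low rating on one of the three averaged dimensions can be partly offset by the other two. Read this way, a deployment that restricts autonomy would be expected to reduce motivating potential more than one that only narrows skill variety.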

Alterations to Skill Variety and Task Identity

Skill variety refers to the degree to which a job requires diverse activities and talents, while task identity is the degree to which a job involves completing a "whole" and identifiable piece of work from beginning to end 1113. Artificial intelligence acts as both an augmentative tool and a substituting force in these areas. By automating routine and repetitive tasks, the technology theoretically allows employees to engage in higher-order problem-solving, strategic planning, and creative endeavors, thereby expanding skill variety 815. For instance, software engineers are increasingly moving away from repetitive coding toward system-level orchestration, defining objectives, and validating integrated components - tasks that require a broader understanding of complex systems and human constraints 1.

However, the automation of intermediate tasks frequently shatters task identity. When an algorithmic system generates the foundational code, drafts the initial report, or conducts the preliminary data analysis, the human worker is relegated to the role of an editor or supervisor 14. Employees no longer shepherd a project through from a blank slate to completion; instead, they interact with fragmented outputs generated by autonomous models 14. This fragmentation diminishes the psychological experience of creating a cohesive product, leading to a profound sense of alienation from the final output 8. Furthermore, the assumption that artificial intelligence reliably expands skill variety is contested. In certain environments, over-reliance on generative models leads to deskilling, as workers lose the opportunity to practice and refine the foundational skills necessary to understand the underlying mechanics of their profession 15.

Task Significance and Knowledge Calibration

Task significance - the perceived impact of one's job on others and the broader organization - is similarly complicated by artificial intelligence 1116. When autonomous systems perform the heavy lifting of data synthesis and content generation, workers often struggle to quantify their individual contribution to the final product 1415. If a diagnostic algorithm formulates a medical treatment plan or a predictive model generates a corporate strategy, the human operator's role may feel peripheral, diminishing the intrinsic value they attach to their daily efforts 14.

Furthermore, the integration of artificial intelligence is reshaping how workers acquire and calibrate knowledge. Traditionally, expertise is built through a feedback loop of trial, error, and gradual mastery. This process is associated with the Dunning-Kruger effect, the observation that people with low competence in a domain tend to overestimate their ability, while experts assess theirs more accurately and are more aware of the boundaries of their knowledge 17. Emerging research indicates that interaction with generative models fundamentally disrupts this cognitive feedback loop 17. Because the systems provide highly polished outputs instantly, they generate a uniform state of overconfidence across all skill levels 17. Both novices and seasoned professionals using artificial intelligence exhibit similar levels of certainty, frequently overestimating their comprehension of the underlying material because the algorithm obscures the complexity of the knowledge generation process 17. This degradation of expert judgment persists even after the system is turned off, indicating lasting cognitive rewiring 17.

Autonomy and Algorithmic Management

Job autonomy is arguably the dimension most heavily impacted by the proliferation of automation. Autonomy is defined as the degree to which a job provides substantial freedom, independence, and discretion in scheduling work and determining procedures 1016. When implemented as a collaborative asset, artificial intelligence can enhance autonomy by providing workers with instant access to information and freeing them from bureaucratic constraints 10. However, when deployed as a mechanism for algorithmic management, it severely restricts human discretion.

Extensive case studies from the Nordic countries - regions traditionally known for strong labor co-determination and high workplace autonomy - illustrate the detrimental effects of algorithmic management in non-platform workplaces such as retail, logistics, and finance 6181920. In these environments, algorithmic systems are utilized to track individual performance, dictate schedules, and micro-manage workflows in real-time 1920. This digital surveillance creates a hyper-individualized and competitive atmosphere that undermines collective solidarity and strips workers of their professional discretion 6.

Moreover, algorithmic management introduces a critical shift in epistemic power within organizations 6. The data-driven knowledge generated by artificial intelligence systems is frequently leveraged by management to delegitimize the qualitative, contextual knowledge possessed by frontline workers 6. Because these algorithmic systems often operate as inscrutable black boxes provided by third-party technology vendors, trade unions and workers find it incredibly difficult to contest algorithmic decisions, resulting in a profound loss of workplace democracy and an increase in psychosocial stress 620.

| Job Characteristic Dimension | Traditional Work Environment Dynamics | AI-Augmented Work Environment Dynamics | Primary Psychological and Behavioral Impacts |
|---|---|---|---|
| Skill Variety | Execution of tasks requiring manual, cognitive, and technical skills. | Shift toward system orchestration, prompt engineering, and output verification. | Can elevate work to strategic levels, but risks deskilling foundational competencies over time. |
| Task Identity | Clear visibility of personal contribution from project initiation to completion. | Fragmented contribution; humans edit or supervise machine-generated initial drafts. | Diminished sense of ownership; potential alienation from the final organizational product. |
| Task Significance | Direct correlation between human effort and the impact on the organization. | Obscured individual contribution amid high-volume autonomous machine output. | Threatens purpose if the human role is perceived as redundant or strictly administrative. |
| Autonomy | Human discretion in determining methods, pacing, and problem-solving approaches. | Duality: Increased capability via assistance, or decreased freedom via algorithmic surveillance. | Can foster empowerment, or generate severe psychosocial stress and loss of workplace agency. |
| Feedback | Periodic human evaluation and natural trial-and-error experiential learning. | Real-time algorithmic performance tracking and continuous micro-corrections. | May improve efficiency, but disrupts knowledge calibration and the Dunning-Kruger cognitive loop. |

The Crisis of Psychological Ownership

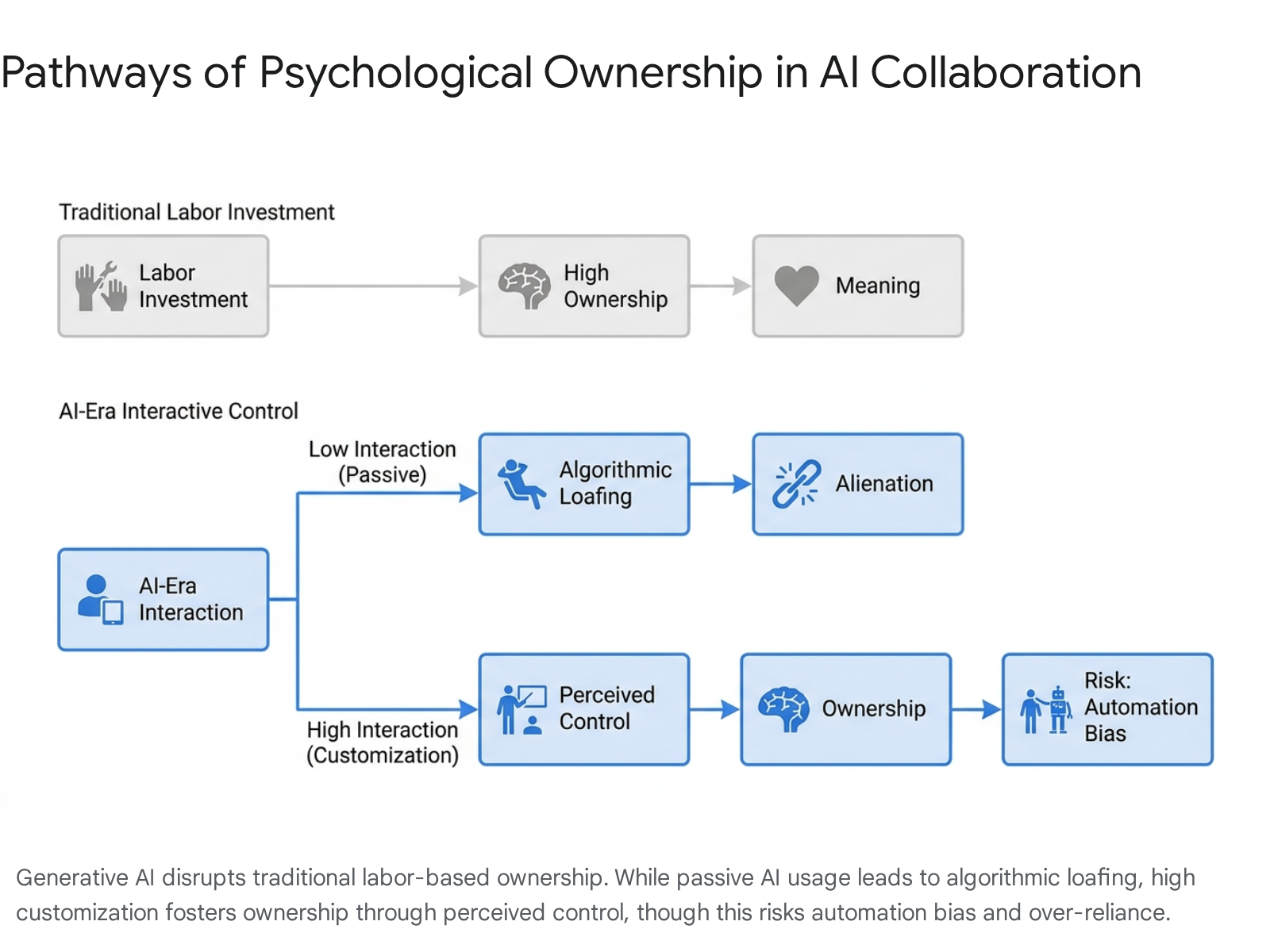

As artificial intelligence assumes a greater role in the creation of content, strategies, and solutions, the concept of psychological ownership is becoming a central fault line in the modern workplace. Psychological ownership refers to the cognitive and affective state wherein individuals feel that an object, idea, or output is "theirs," regardless of formal legal ownership 232122. This sense of ownership is historically derived from the investment of labor, time, and intimate knowledge - a dynamic commonly referred to in psychology as the "IKEA effect," where the effort expended in creating something directly correlates to the value placed upon it 23.

The AI Ghostwriter Effect and Algorithmic Loafing

Generative artificial intelligence short-circuits the traditional pathways to psychological ownership by decoupling output from human effort. Studies on human-AI text generation and strategic decision-making reveal the emergence of an "AI Ghostwriter Effect" 24. When workers use large language models to produce reports, code, or creative content, they frequently refuse to view themselves as the true authors or owners of the text, despite being the ones who prompted the system 2425. Because the cognitive labor required to generate the output is minimal, the psychological bond to the work remains weak 2526.

This lack of ownership manifests behaviorally as algorithmic loafing 25. When humans lack a sense of control or ownership over an outcome, they tend to allocate fewer cognitive resources to the task 25. In collaborative settings with automated systems, users often accept machine-generated suggestions passively, adopting the system's output with little critical reflection 25. This dynamic degrades the quality and diversity of ideas - leading to a homogenization effect where outputs become uniformly generic - and it fundamentally removes the intrinsic motivation that drives high-quality knowledge work 25.

Customization and Automation Bias

Conversely, research indicates that psychological ownership can be artificially fostered through system customization and interactivity 2327. When users are required to heavily customize their technological tools, repeatedly intervene in the generation process, and guide the output through sustained interactive prompting, they replace physical labor investment with perceived control 2223. This interactivity builds a psychological linkage, integrating the artificial intelligence into the user's extended self and increasing their willingness to take responsibility for the output 2227.

However, this customization carries substantial risks. Users who feel strong ownership over an algorithmic assistant may create echo chambers of validation, demonstrating an automation bias where they blindly trust the machine's outputs and fail to critically evaluate errors 1727. When individuals view the artificial intelligence as a highly customized collaborator rather than a generic tool, the credibility disadvantage of the algorithmic agent is reduced, sometimes resulting in situations where the machine is perceived as more credible than human experts 22.

Authorship and Epistemic Vigilance

The crisis of psychological ownership is most visible in domains where attribution is paramount, such as scientific research and academic publishing. Historically, authorship in science implied absolute ownership - a direct, verifiable association between individual intellect and the published work 731. The author was uniquely accountable for the methodology, the claims, and the integrity of the data 28.

As generative models become capable of drafting literature reviews, optimizing experimental code, and simulating datasets, the definition of authorship is shifting from strict ownership to a looser concept of participation or orchestration 7. The integration of these tools creates a vast accountability gap. Institutions like the Committee on Publication Ethics and major journals such as Nature have firmly established that artificial intelligence cannot be credited as an author because it cannot bear moral or legal responsibility for its outputs 3129. Yet, enforcing this boundary is increasingly difficult. The invisibility of generative assistance functions much like the mythical Ring of Gyges, allowing researchers to seamlessly blend machine-generated text with human insight, fundamentally challenging the epistemic integrity of the scientific record 29.

To counter the dilution of human agency, professionals across disciplines must cultivate epistemic vigilance 30. This requires reframing the human role from a passive consumer of algorithmic outputs to an active, critical evaluator 30. Agency in the contemporary era is no longer strictly about generating the initial idea; it is about maintaining the psychological ownership necessary to monitor, evaluate, and override external algorithmic inputs, ensuring that the human remains the ultimate arbiter of truth and value 30.

The Burnout Paradox and Work Fragmentation

One of the most striking contradictions in the contemporary labor market is the coexistence of unprecedented technological efficiency and historic levels of workforce exhaustion. In 2026, employee surveys indicate that up to 83% of workers report experiencing burnout, and digital exhaustion metrics have climbed relentlessly year over year 3531. If artificial intelligence is automating routine tasks and accelerating productivity, the logical expectation would be a corresponding increase in leisure, focus, and well-being. The data, however, reveals a distinctly adverse reality.

The Collapse of Focus Time

The modern knowledge worker experiences severe digital fragmentation. Telemetry data from major enterprise platforms reveals that workers are interrupted by emails, chats, or algorithmic notifications approximately every two minutes, resulting in hundreds of context-switches per day 731. Consequently, the average attention span on a single screen has collapsed to under 47 seconds, and 40% of knowledge workers never achieve 30 minutes of uninterrupted focus in a single workday 7. This fragmentation is particularly acute in hybrid work environments, where employees report spending only 31% of their hours in deep, focused work, compared to 45% for fully in-office teams 7.
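
As a rough check on the "hundreds of context-switches" figure, assume a standard 8-hour workday (an assumption made here for illustration, not a figure from the telemetry data) and one interruption every two minutes:

$$
\frac{8\ \text{h} \times 60\ \text{min/h}}{2\ \text{min per interruption}} = 240\ \text{interruptions per day}
$$

That is consistent with the claim, and a longer working day would push the count higher.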

Artificial intelligence contributes to this phenomenon by sharply increasing the volume and velocity of information. Because generative tools make it effortless to produce content, reports, and code, the sheer amount of data that human workers must process, review, and synthesize has exploded 2632. The cognitive load of managing this fragmented workflow degrades the quality of thought, increases error rates, and accelerates emotional exhaustion 726. Employees are increasingly subjected to leaveism - the practice of working during time off or outside contracted hours because they cannot disconnect from technology, driven by the pressure to keep pace with augmented productivity expectations 3138.

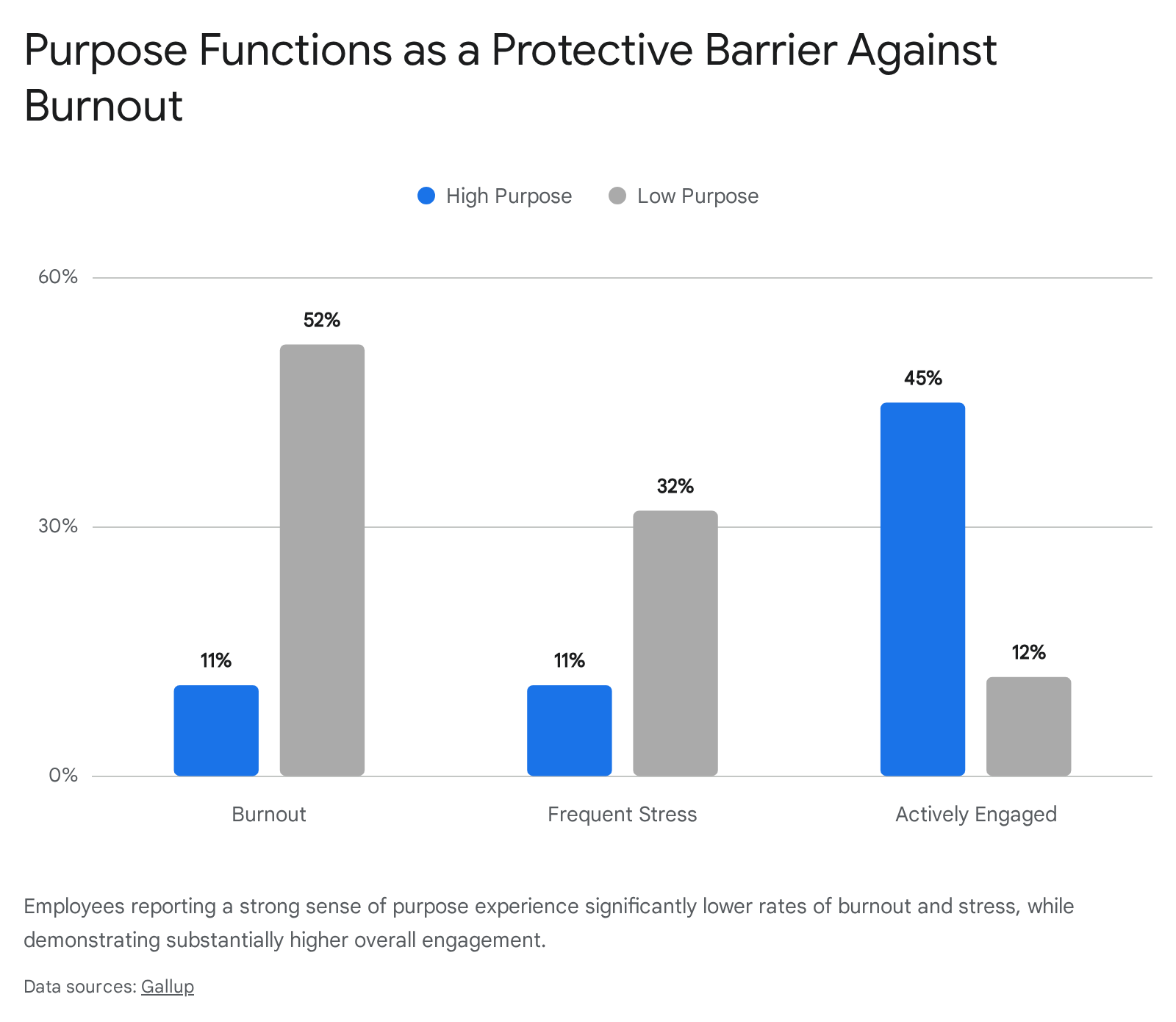

The Protective Function of Workplace Purpose

In this environment of high velocity and high fragmentation, psychological alignment and purpose operate as the primary bulwarks against systemic burnout. Burnout is no longer merely a function of workload; it is fundamentally an alignment issue 35. When the execution of tasks becomes automated or heavily assisted, the reason for performing the work becomes the sole anchor for employee engagement.

Extensive research throughout 2025 and 2026 highlights purpose as a measurable, structural factor in organizational resilience 3334. Data demonstrates that only 11% of employees with a strong sense of life purpose report regular burnout, compared to a staggering 52% among those with low purpose 33. Similarly, high-purpose workers experience significantly lower levels of frequent stress (11%) compared to their low-purpose counterparts (32%) 33. Meaning and purpose function as protective psychological mechanisms against the emotional exhaustion induced by continuous technological disruption and task fragmentation.

Limitations of Human-Machine Synergy

The assumption that combining human intellect with artificial intelligence will reliably yield superior outcomes is challenged by empirical evidence. A comprehensive meta-analysis of over 100 studies on human-computer collaboration, published in Nature Human Behaviour, revealed that while human-algorithm combinations generally outperform humans acting alone, they performed, on average, significantly worse than the better of the human or the artificial intelligence system working alone 35.

This lack of expected synergy points to severe coordination costs. Humans struggle to integrate algorithmic outputs effectively, particularly in decision-making tasks, where the cognitive burden of verifying the machine's work limits the user's cognitive flexibility 2635. While synergy is more consistently observed in content creation and generative tasks, the data suggests that organizations must carefully evaluate when collaboration is genuinely beneficial and when it merely introduces friction into the workflow 35.

Macroeconomic Labor Shifts and the Social Contract

While individual workers grapple with shifting job characteristics and burnout, global institutions are confronting the macroeconomic and societal implications of artificial intelligence. The International Labour Organization notes that the world of work is the central arena for deployment, with consequences that extend far beyond efficiency gains to affect institutional trust, democratic processes, and social cohesion 34.

The Illusion of Immediate Displacement

The public discourse surrounding artificial intelligence has been dominated by a simplistic narrative: massive capital expenditure will rapidly translate into massive labor displacement. However, economic data from 2024 through early 2026 reveals a more nuanced reality characterized by an AI-Labor Cost Paradox 36. Direct substitution of jobs has been slower than anticipated, primarily because the computing, energy, and infrastructural costs of deploying highly reliable, autonomous systems at scale remain exceptionally high 36. At the same time, real wages in many OECD countries have stagnated or remained below 2021 levels, meaning that human labor is frequently still more cost-effective than wholesale automation for complex, multi-step workflows 36.

Instead of immediate mass unemployment, the labor market is experiencing selective structural asymmetry. Automation is reshaping the content of jobs rather than eliminating them entirely. Over 119,000 technology-related supervisory and infrastructure jobs were created in 2024, partially offsetting confirmed automation-driven job losses in administrative and routine sectors 37. The true impact is visible in hiring pipelines: 66% of enterprises report reducing entry-level hiring due to automation capabilities 3738. Because algorithms excel at the foundational tasks historically assigned to junior employees, the traditional apprenticeship model of corporate learning is breaking down. Young workers face restricted entry into the knowledge economy, raising critical questions about how future senior leaders will be developed without the experiential learning derived from early-career execution 3839.

Skill Volatility and Demographic Impacts

The burden of technological change is not distributed evenly. Contrary to the assumption that high-skill, STEM-oriented occupations are most vulnerable to generative artificial intelligence, longitudinal studies of over 167 million job postings reveal that lower-wage, lower-education jobs are experiencing the greatest skill volatility 40.

As technology automates basic functions, lower-skilled workers are forced to make massive cognitive leaps to remain relevant, adopting entirely new technical skill sets that deviate significantly from their previous capabilities 40. Furthermore, workers in smaller geographic labor markets face disproportionate pressure to rapidly upskill to catch up with metropolitan hubs 4041. This dynamic places immense strain on women, minorities, and marginalized communities who disproportionately occupy these roles, threatening to exacerbate existing socio-economic inequalities and creating K-shaped economic outcomes unless robust institutional reskilling programs are implemented 3940.

The Decent Work Agenda and Global Divides

The evolving nature of work requires a renegotiation of the global social contract. The International Labour Organization's Decent Work Agenda defines meaningful employment as work that ensures equity, security, dignity, human rights, and social protection 542. As automation disrupts employment stability, governments and multilateral organizations are emphasizing that technology policies must be human-centered, focusing on mitigating psychosocial risks and preventing the decoupling of social protection from employment 349.

This imperative is deeply complicated by persistent digital divides between the Global North and the Global South. While advanced economies debate the nuances of algorithmic collaboration and knowledge worker burnout, many developing nations experience the technology primarily through indirect supply-chain pressures and the gig economy 343. In the Global South, much of the job growth remains in the informal sector, which lacks the regulatory scaffolding to protect workers from the exploitative elements of algorithmic management 543. Ensuring that the meaning of work is not entirely subjugated to technological determinism requires active policy intervention, robust social dialogue involving trade unions, and the establishment of universal, resilient social protection systems 4544.

Emerging Substitutes for Traditional Meaning

If artificial intelligence alters task identity, diminishes traditional psychological ownership, and commodifies routine knowledge work, what replaces that lost meaning? The transition to an augmented economy is giving rise to new paradigms of professional value. Human workers are increasingly finding purpose in domains that algorithms cannot replicate: systemic orchestration, relational empathy, and epistemic governance.

System-Level Orchestration

As execution is commodified, professional value shifts to orchestration. Workers are transitioning from being the creators of raw material to the editors, curators, and managers of interconnected systems 1. An engineer no longer derives meaning solely from writing flawless syntax, but from defining architectural objectives and integrating disparate machine-generated components into a functional, secure product 1.

This shift requires the adoption of System 0 thinking 45. In cognitive psychology, System 1 is intuitive and fast, while System 2 is analytical and reflective. System 0 represents a new cognitive schema: the utilization of an external, artificial circuit to process massive datasets and propose solutions, which the human mind then evaluates, contextualizes, and applies 45. Meaning is derived not from performing the underlying calculation, but from possessing the systemic wisdom to ask the correct questions and properly direct the massive computational power available.

Relational Work and Human-Centric Leadership

The automation of cognitive tasks has triggered a corresponding premium on uniquely human traits. As knowledge work becomes easier to automate, the demand for emotional intelligence, ethical judgment, conflict resolution, and complex communication is increasing dramatically 4654.

The talent ecosystem of the future places high value on alignment and human connection. Because algorithms cannot genuinely empathize, build trust, or navigate the subtle nuances of human motivation, roles centered on leadership, community building, and psychological support are becoming the most resilient and meaningful aspects of corporate life 235. Workers are discovering that while a machine can generate a project plan, it requires human intervention to persuade a skeptical team, navigate organizational politics, and provide the psychological safety necessary for innovation 47.

Epistemic Governance

Finally, a new source of meaning is emerging in the realm of accountability. Large language models and predictive algorithms are inherently probabilistic; they lack true understanding, frequently hallucinate facts, and struggle with complex causal relationships 48. Furthermore, because these models are trained on historical data, they inherently replicate and scale historical biases and social inequalities 49.

Therefore, human workers find profound meaning in acting as the ethical and factual safeguards of society. The role of the human shifts to epistemic governance: verifying truths, correcting algorithmic biases, and ensuring that technological applications adhere to moral and legal standards 4950. Whether it is a clinician reviewing a diagnostic recommendation, a judge evaluating algorithmic sentencing guidelines, or a data annotator fine-tuning a language model to prevent toxic outputs, the human responsibility to protect the integrity of information provides a deep, enduring sense of professional purpose. Through this lens, natural language processing tools are increasingly being used by social scientists not just to automate analysis, but to understand shifting human behaviors and values 51. The interaction becomes a mirror reflecting the fundamental human drive for truth and accuracy.

Conclusion

Artificial intelligence is not merely a mechanism for accelerating production; it is a profound catalyst for redefining the human relationship with labor. The traditional pillars of meaningful work - task identity, clear skill progression, and unmediated autonomy - are being irrevocably altered by cognitive automation and algorithmic management. This transition has birthed a host of psychological challenges, from the erosion of ownership and algorithmic loafing to severe attention fragmentation and an epidemic of workplace burnout.

However, the displacement of traditional tasks does not inherently guarantee the destruction of professional meaning. The value of human labor is migrating upward into realms of higher complexity and deeper humanity. As computational systems handle the synthesis and execution of information, humans are tasked with the orchestration of systems, the cultivation of relational empathy, and the rigorous enforcement of epistemic truth. Navigating this transition successfully requires organizations and policymakers to move beyond simplistic metrics of productivity. It demands a deliberate redesign of jobs to protect human agency, a commitment to lifelong reskilling, and a reaffirmation of the social contract to ensure that the technological dividends of this era serve to elevate, rather than diminish, the human experience of work.