Google Search Algorithm and Content Ranking Updates in 2026

Algorithmic Architecture and Timeline Shifts

Transition to Continuous Quality Evaluation

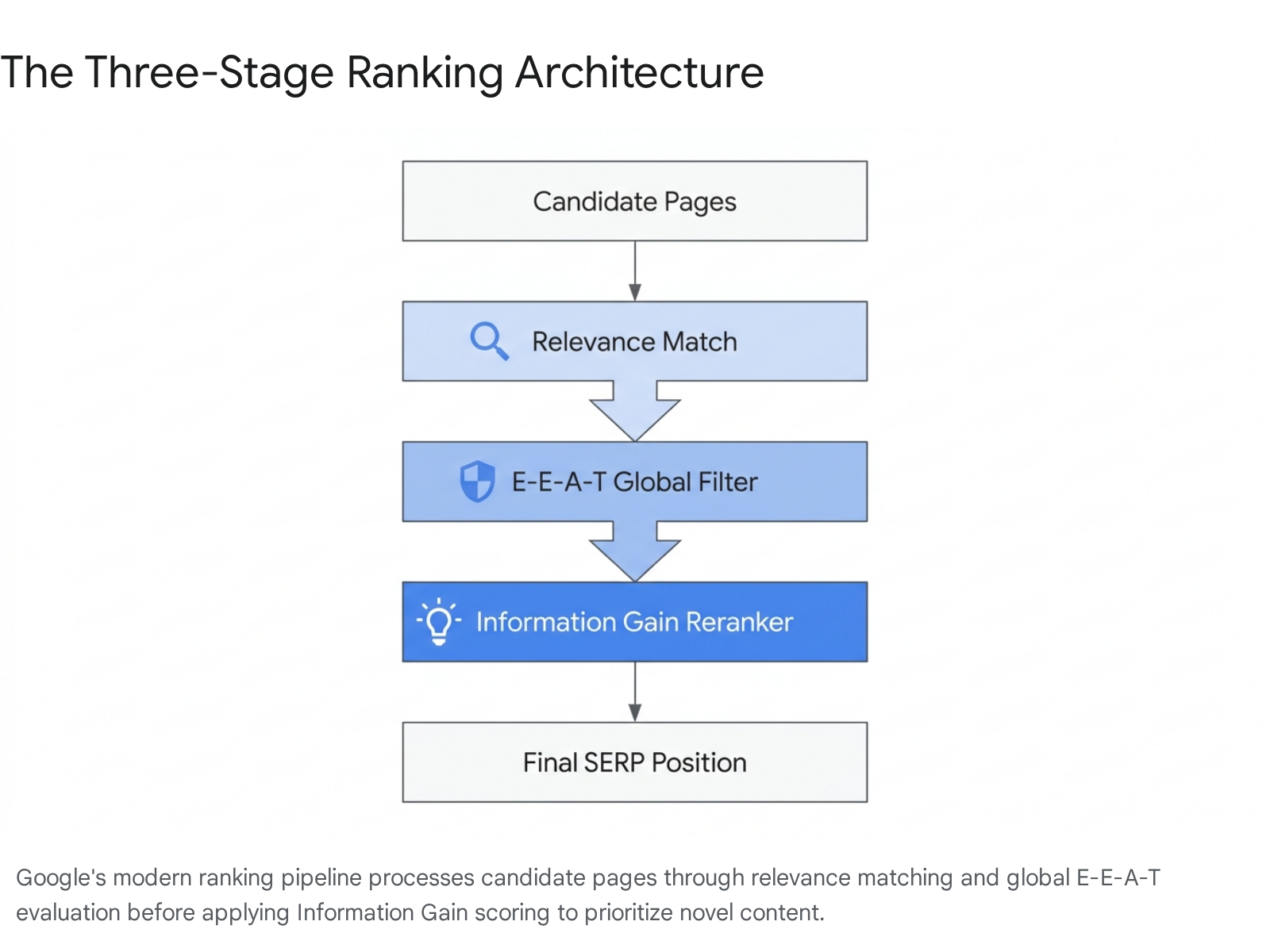

The architecture of the Google search algorithm in 2026 represents a structural departure from the era of isolated, targeted penalties. Rather than deploying discrete updates to address specific vulnerabilities, Google has fully transitioned to an integrated, continuous quality evaluation ecosystem 1. The defining characteristic of this ecosystem is the synthesis of relevance, trust, and novelty into a unified scoring mechanism that dynamically determines search visibility across all surfaces.

The cadence and severity of algorithmic adjustments have accelerated significantly since the initial pivot toward AI-aware search quality in 2023 1. Historically, Google executed thousands of minor algorithmic changes annually - averaging 13 adjustments per day in 2022 - with major core updates occurring only twice a year 2. By 2026, the interval between major core updates compressed to approximately 90 days, with each rollout compounding the systemic changes introduced by its predecessors 1. Instead of waiting for a clear violation to apply a penalty, Google's machine learning systems now embed spam and quality evaluations directly into the ranking and selection process. If content exhibits signals associated with scaled production or borrowed authority, it receives less trust, resulting in lower eligibility for prominent placements without triggering a manual action notification 4.

Major Algorithm Update Cadence (2024 to 2026)

To understand the search landscape in 2026, it is necessary to map the progression of enforcement actions that led to the current environment. Each update targeted increasingly sophisticated manipulation tactics, shifting from baseline helpfulness evaluations to complex AI-content filtering and entity authority verification.

| Update Designation | Rollout Window | Primary Algorithmic Targets and Structural Changes |
|---|---|---|
| March 2024 Core + Spam | Mar 5 - Apr 19, 2024 | An unprecedented 45-day rollout integrating the Helpful Content system directly into the core ranking algorithm. Introduced three new spam policies: scaled content abuse, expired domain abuse, and site reputation abuse 13. |
| August 2024 Core | Aug 15 - Sep 3, 2024 | Refined the embedded helpful content classifier, attempting to balance search results and correct over-corrections from the March 2024 update 1. |
| December 2024 Core | Dec 16 - Dec 24, 2024 | Recalibrated the content quality baseline heading into 2025, continuing the integration of user intent matching 1. |
| June 2025 Core | Jun 2 - Jun 20, 2025 | Targeted user engagement signals and refined passage-level ranking. Increased the weighting of E-E-A-T signals, heavily impacting affiliate sites 16. |
| August 2025 Spam | Aug 18 - Sep 1, 2025 | Focused on AI content detection and link spam networks, formalizing the algorithmic suppression of automated manipulation 1. |
| December 2025 Core | Dec 11 - Dec 29, 2025 | Refined E-E-A-T signals and author entity verification. Caused extreme volatility, particularly impacting e-commerce (52% hit) and health (67% hit) sectors 16. |
| February 2026 Discover | Feb 5 - Feb 27, 2026 | The first major update explicitly targeting the Google Discover feed. Prioritized localized relevance and domestic publishers while penalizing clickbait and sensationalism 264. |
| March 2026 Spam | Mar 24 - Mar 25, 2026 | A rapid, 20-hour global rollout targeting scaled AI content abuse, expired domain manipulation, and site reputation abuse (parasite SEO) without introducing new policy frameworks 1268. |
| March 2026 Core | Mar 27 - Apr 8, 2026 | The most volatile update in modern search history. Introduced holistic Core Web Vitals scoring, escalated Information Gain weighting, and intensified E-E-A-T requirements 126. |

The March 2026 Core Update Volatility

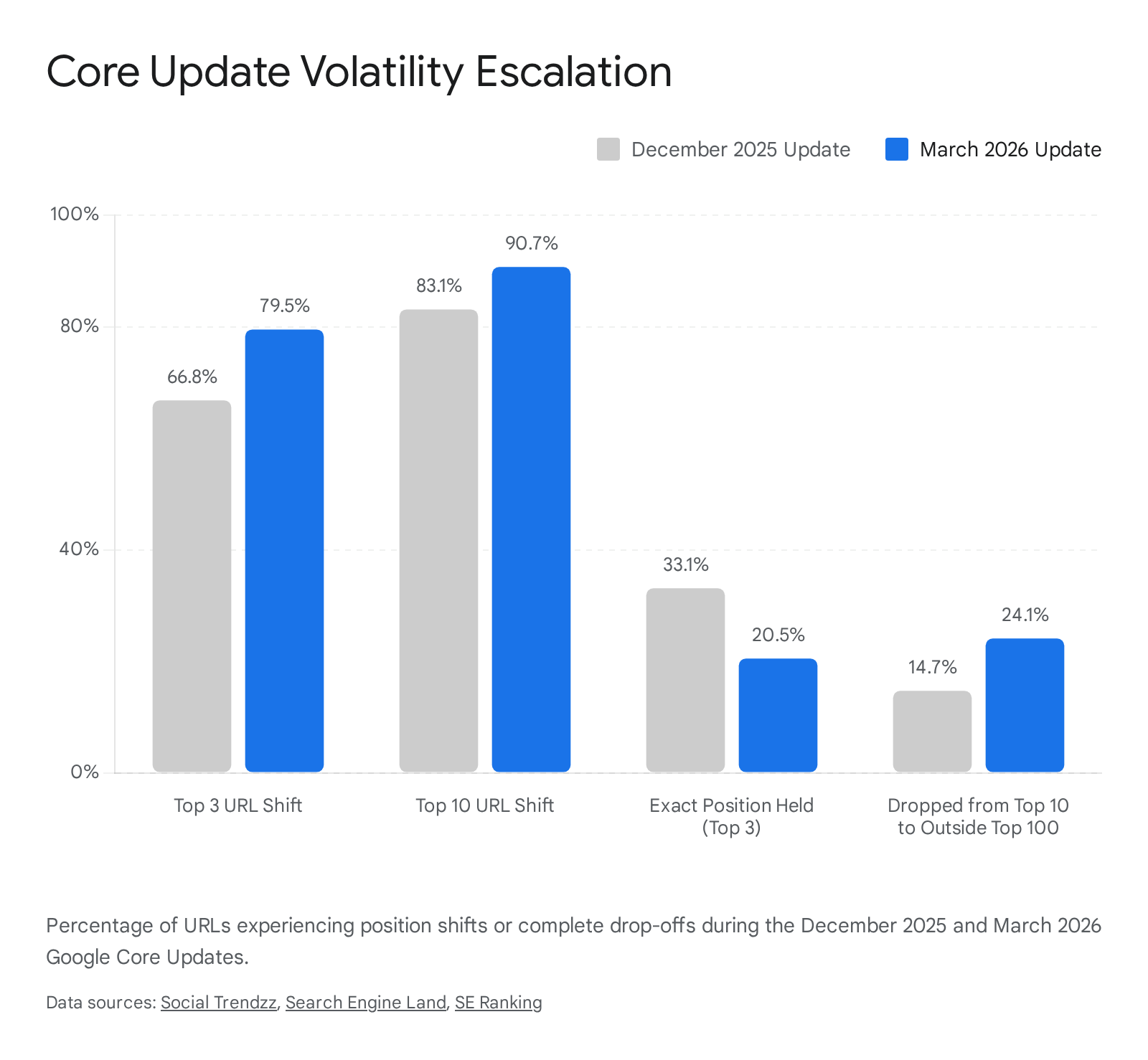

The search environment in early 2026 experienced unprecedented turbulence, culminating in the back-to-back rollout of the March 2026 Spam Update (March 24 to 25) and the March 2026 Core Update 5611. The Core Update, rolling out between March 27 and April 8, generated a peak volatility score of 9.5 out of 10 on the Semrush Sensor - one of the highest readings ever recorded 512. Within the first 72 hours of the rollout, over 55% of monitored websites experienced measurable ranking shifts, with heavily affected domains suffering traffic drops ranging from 20% to 70% 578.

This volatility was characterized by intense churn at the absolute top of the Search Engine Results Pages (SERPs). Empirical data indicates that 79.5% of URLs in top-three positions shifted during the March 2026 update, a substantial increase from the 66.8% churn observed during the already disruptive December 2025 Core Update 6159. Furthermore, 90.7% of all top-10 results experienced position changes 66. Most notably, 24.1% of pages that previously held a top-10 ranking disappeared from the top 100 results entirely, compared to a 14.7% total drop-out rate in December 2025 6615.
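Churn and drop-out figures of this kind can be derived from two ranking snapshots of the same query set. The following is a minimal sketch, assuming each snapshot is an ordered list of URLs; the function names `top_n_churn` and `dropout_rate` are illustrative and do not come from any tracking vendor's tooling.

```python
def top_n_churn(before, after, n):
    """Share of the top-n positions whose URL differs between two snapshots."""
    if len(before) < n or len(after) < n:
        raise ValueError("both snapshots need at least n results")
    changed = sum(1 for old, new in zip(before[:n], after[:n]) if old != new)
    return changed / n


def dropout_rate(before, after, top_n=10, depth=100):
    """Share of former top-n URLs missing from the first `depth` results afterwards."""
    former_top = before[:top_n]
    if not former_top:
        raise ValueError("the earlier snapshot is empty")
    still_ranked = set(after[:depth])
    dropped = [url for url in former_top if url not in still_ranked]
    return len(dropped) / len(former_top)


# Toy snapshots for one query (URLs are placeholders).
before = ["a", "b", "c", "d", "e"]
after = ["b", "a", "c", "e", "x"]

print(round(top_n_churn(before, after, 3), 3))        # 0.667
print(dropout_rate(before, after, top_n=5, depth=5))  # 0.2
```

In practice the two measures would be averaged over many queries; the toy example only shows how a position swap counts as churn while a URL that leaves the tracked depth counts as a drop-out.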

These dramatic shifts were not punitive manual actions directed at specific domains, but rather a systemic reassessment of what constitutes a "helpful" and "authoritative" result in a digital ecosystem saturated with programmatic and generative AI content 65.

Helpful Content System Integration

Deprecation of the Standalone Classifier

The most profound architectural evolution leading to the 2026 search environment was the deprecation of the Helpful Content Update (HCU) as an isolated tracking mechanism. Initially launched in August 2022 to identify and penalize content written primarily for search engines, the system operated as a periodic, distinct classifier 110. In March 2024, Google fundamentally altered its approach by integrating the Helpful Content system directly into the core ranking algorithm 11018.

This integration signaled that content quality and user focus were no longer ancillary checks but central mechanisms governing all search rankings 10. The result was an immediate 45% reduction in low-quality, unoriginal content appearing in search results 10. Throughout 2025 and 2026, this integration proved permanent; rather than retreating from these quality signals, Google reinforced them through successive core updates 10. The algorithm now continuously evaluates whether content satisfies user intent, is authored by an entity with actual experience, and contributes meaningful value to the search ecosystem 119.

Domain-Level Quality Multipliers

In 2026, the integrated Helpful Content system functions as a silent, overarching evaluator capable of applying site-wide suppression based on page-level data aggregates 118. Google's systems assess a domain's overall ratio of helpful to unhelpful content, effectively applying a quality multiplier to the entire entity 8.

This architectural shift makes traditional content volume strategies hazardous. Publishing large quantities of low-value, mass-produced pages no longer merely wastes a search engine's crawl budget; it actively degrades the ranking potential of the site's high-quality, authoritative pillar content 810. One unhelpful, search-engine-first page can theoretically drag down the trust score of the entire domain 1. Consequently, websites relying on programmatic generation or scaled AI content models without rigorous editorial oversight experienced severe, domain-level ranking compression during the March 2026 Core Update. These demotions often manifested as gradual declines over a week, reflecting the algorithm's holistic re-evaluation of the domain's entire content corpus rather than action against individual URLs 78.
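Google does not publish how such a multiplier is computed, so the following is only a toy model of the idea described above: the share of helpful pages on a domain scales every page's score. The threshold, the floor value, and the linear mapping are all assumptions chosen for illustration.

```python
def domain_quality_multiplier(page_scores, helpful_threshold=0.5, floor=0.3):
    """Map the share of helpful pages to a multiplier between `floor` and 1.0.

    Hypothetical model: the linear form and both parameters are assumptions.
    """
    if not page_scores:
        raise ValueError("need at least one page score")
    helpful = sum(1 for score in page_scores if score >= helpful_threshold)
    ratio = helpful / len(page_scores)
    return floor + (1.0 - floor) * ratio


def effective_score(page_score, multiplier):
    """A page's own score after the domain-level multiplier is applied."""
    return page_score * multiplier


# Two strong pillar pages diluted by two thin pages.
mixed_site = [0.9, 0.8, 0.2, 0.1]
pruned_site = [0.9, 0.8]

print(round(domain_quality_multiplier(mixed_site), 2))   # 0.65
print(round(domain_quality_multiplier(pruned_site), 2))  # 1.0
```

Under this model the best page on the mixed site is scored at roughly 0.9 x 0.65, which illustrates why thin pages are described as a liability for the pillar content rather than a neutral addition.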

Information Gain as a Primary Ranking Signal

Mechanistic Definition and Patent Framework

By 2026, "Information Gain" transitioned from a theoretical concept buried in Google's intellectual property to the dominant content-quality evaluator determining top-tier search visibility 11. Patented by Google under US11354342B2 (filed in 2018 and granted in June 2022), Information Gain provides a mathematical framework for calculating how much genuinely new knowledge a specific page contributes relative to the candidate set of pages that already rank for the same search query 111213.

From 1998 to 2023, Google's primary filtering mechanism was relevance - matching keywords and semantic intent to documents 12. As generative AI flooded the web with technically relevant but highly repetitive content, relevance alone became an insufficient discriminator. To counteract AI-content saturation and zero-click search pressure, Google operationalized Information Gain at scale 1112.

The mechanism works by evaluating the originality of a candidate URL. If an article simply paraphrases or aggregates the consensus information already present in the top 10 search results, its Information Gain score is virtually zero 523. Conversely, if two pages are equally fast, well-structured, and topically thorough, the algorithm will rank the page that introduces a proprietary dataset, a first-hand case study, or a completely original framework significantly higher 11.
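The intuition can be illustrated with a deliberately crude proxy: the fraction of a candidate page's distinct terms that appear in none of the pages already ranking. This is not the method described in the patent, which is not reproduced here; the tokenizer and the `novelty_score` function are assumptions for illustration only.

```python
import re


def tokens(text):
    """Lowercased set of alphanumeric terms in a text."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def novelty_score(candidate, ranked_pages):
    """Fraction of the candidate's distinct terms absent from every ranked page."""
    candidate_terms = tokens(candidate)
    if not candidate_terms:
        return 0.0
    seen = set()
    for page in ranked_pages:
        seen |= tokens(page)
    return len(candidate_terms - seen) / len(candidate_terms)


ranked = ["leather boots stretch over time", "boots need waterproofing"]
paraphrase = "boots stretch over time"
first_hand = "boots stretch 4 mm after 50 km"

print(novelty_score(paraphrase, ranked))            # 0.0
print(round(novelty_score(first_hand, ranked), 3))  # 0.714
```

A term-overlap proxy ignores meaning entirely, so a production system would need semantic comparison; the sketch only shows why a paraphrase of the existing results scores zero while a page with new measurements does not.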

Personalized Reranking and Suppressing Redundancy

The Information Gain patent also facilitates personalized reranking. The search architecture leverages session data and a userHistory signal to track which documents a user has already consumed 1213. If a searcher visits a highly ranked result, finds it unsatisfactory, and returns to the SERP to refine their query, Google dynamically updates the subsequent results to prioritize pages with high Information Gain scores relative to the previously viewed content 13.

This creates an environment where search results are increasingly fragmented; two users searching for the exact same query but with different browsing histories may receive entirely different page-one results 12. The system suppresses previously read content and pushes forward fresh perspectives so that the user continues to uncover novel data points and insights 12.
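A session-aware rerank of this kind can be sketched as follows, assuming the viewed documents are available as plain text and using unseen-term share as a stand-in for the real scoring. The function name `rerank_by_unseen_terms` and the whitespace tokenization are hypothetical simplifications.

```python
def rerank_by_unseen_terms(results, viewed):
    """Order results by the share of their terms not found in viewed documents.

    Ties keep their original order because Python's sort is stable.
    """
    seen = set()
    for doc in viewed:
        seen |= set(doc.lower().split())

    def gain(doc):
        terms = set(doc.lower().split())
        return len(terms - seen) / len(terms) if terms else 0.0

    return sorted(results, key=gain, reverse=True)


viewed = ["best hiking boots review"]
results = [
    "best hiking boots review roundup",
    "hiking boots sole wear measured after 500 km",
    "boots",
]

for doc in rerank_by_unseen_terms(results, viewed):
    print(doc)
# hiking boots sole wear measured after 500 km
# best hiking boots review roundup
# boots
```

Because the ordering depends on `viewed`, two users with different histories receive different orderings for the same result set, which is the fragmentation effect described above.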

Evaluation Dimensions for Novelty

During the March 2026 Core Update, the weighting of Information Gain became highly aggressive 5. Analytical tracking data revealed a brutal spread: templated and rewritten content dropped 30% to 50% in visibility, while pages offering original research gained 15% to 25% 1114.

The industry currently models Google's Information Gain evaluation across five critical dimensions:

1. Proprietary Data: The inclusion of unique datasets, original internal statistics, or quantitative research unavailable elsewhere on the internet 11.
2. First-Hand Evidence: Verified local knowledge, original photography, personal testing methodologies, and lived experiences that cannot be scraped or synthesized by automated tools 111526.
3. Original Framework: The presentation of a novel conceptual structure or unique methodology for solving the user's query 11.
4. Expert Attribution: Demonstrable insights, quotes, and verifiable credentials from recognized specialists rather than generalized, anonymous summaries 1116.
5. Freshness Hook: The introduction of timely, updated variables to a static topic 11. Google's systems heavily prioritize content updates; in the e-commerce sector, product pages typically experience significant performance decay within three to six months if not systematically refreshed 28.

Exhaustive topical coverage - historically a primary tactic for SEO dominance - is no longer a reliable shield in 2026. Thoroughness previously compensated for a lack of originality; following the April 2026 algorithmic adjustments, sheer word count without a new angle actively detracts from a page's competitive viability 1129.

E-E-A-T Framework Application and Authority Consolidation

Algorithmic Translation of Trust Signals

E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) is not a discrete mathematical ranking factor, nor does Google assign a singular "E-E-A-T score" to individual URLs 1517. Rather, it operates as a qualitative framework utilized by human quality raters to train the underlying machine learning algorithms 17. In 2026, these algorithms have become highly adept at parsing the web for proxy signals that correlate with the E-E-A-T framework, effectively establishing it as the primary filter through which all content is evaluated 1832.

Trust serves as the central pillar holding the framework together; without it, experience, expertise, and authority collapse 17. To algorithmically quantify this trust, Google relies on verifiable entity markers. "Experience" (the element added in 2022) demands structural proof of first-hand involvement, which the algorithm infers through original multimedia, documented testing processes, specific nuances regarding physical product usage, and verifiable local footprint markers 15291832.

"Expertise" and "Authoritativeness" are increasingly measured via off-site validation. Large-scale retrieval systems optimizing for user satisfaction cannot definitively evaluate absolute truth in complex or contested domains 19. Consequently, the algorithm defaults to mitigating risk by evaluating which entity is safest to trust 19. Brand authority, semantic entity clarity, structured professional relationships, and high-quality citations from reputable publications serve as the mathematical stand-ins for credibility 81819.

The Shift Toward Primary Destination Sources

The practical application of E-E-A-T risk mitigation in 2026 has resulted in a market-wide phenomenon known as "authority consolidation." Across numerous verticals, Google has systematically shifted visibility away from independent, mid-tier publishers, broad aggregators, and generic comparison sites, redirecting that traffic toward major destination brands, government institutions, and highly specialized authoritative platforms 151420.

Data derived from the Sistrix Visibility Index during the March 2026 Core Update highlights the precise nature of this consolidation. In cases where the algorithm faced multiple viable answers, it heavily favored the original source over the aggregator.

| Industry Vertical | Visibility Winners (Destination & Authority) | Visibility Losers (Intermediaries & Aggregators) |
|---|---|---|
| Language & Reference | Primary reference platforms (Merriam-Webster, Cambridge, Dictionary.com) 20. | Directory and quick-answer aggregators (Wiktionary -21.3%, Collins Dictionary -30.0%, OneLook -52.8%) 20. |
| Employment & Careers | Direct employer portals and specialized niche platforms (Amazon.jobs +242.7%, USAJobs +25.5%, myworkdayjobs.com +115.0%) 620. | Broad job board intermediaries and generalist aggregators (ZipRecruiter -36.6%, Glassdoor -36.3%, SimplyHired -43.2%) 620. |
| Public Sector & Data | Official institutional domains serving as original data sources (Census.gov +30.2%, HUD +36.2%, CISA +101.2%) 620. | Independent bloggers and third-party news sites summarizing government data 20. |
| Travel & Tourism | Destination brands, official tourism boards, and primary service providers 626. | Broad planning intermediaries and generic discovery platforms (Travelocity -44.3%, Hotwire -36.0%, Expedia -23.4%) 20. |
| Finance & Commerce | Established financial institutions, credentialed analysts (gains of 12-20%), and official brand shops 1421. | Generic affiliate comparison sites, coupon aggregators, and thin review portals (drops of 35-55%) 14. |

The data indicates that established domain authorities that previously occupied positions four through eight have frequently consolidated into the top three positions following the March 2026 update 14. This shift suggests that Google has increased the weight of domain-level authority metrics relative to individual page-level signals, rewarding entities that have built sustained, verifiable credibility 14.

Discover Feed Reordering and Geographic Focus

The prioritization of authoritative, direct sources extends beyond traditional SERPs and into automated content delivery surfaces like Google Discover. In February 2026, Google executed the first confirmed core update explicitly targeting the Discover feed 264. The update aimed to surface more in-depth, original content while suppressing sensationalism and clickbait 4.

A critical element of the February 2026 Discover update was the introduction of a powerful geographic and local relevance filter. Google's systems began explicitly evaluating website location, prioritizing content delivery to users from publishers based within their own country 422. Analytical tracking of the US Discover feed demonstrated that the share of international publishers declined from 8.52% to 7.04% post-update, while domestic US-based publishers increased their dominant share to nearly 90% 22.

Furthermore, the update revealed a simultaneous expansion of topic coverage and a contraction of publisher diversity, indicating that Google prefers to source content across a wider array of topics from a narrower, more highly trusted set of domains 22. Notably, X.com (formerly Twitter) experienced a sharp 38% increase in Discover visibility post-update, predominantly driven by established media brands and verified institutional accounts, illustrating Google's willingness to extract timely, original content directly from authoritative social platforms 22.

Content Generation Methods and AI Evaluation

Performance Disparities Between Human and AI Content

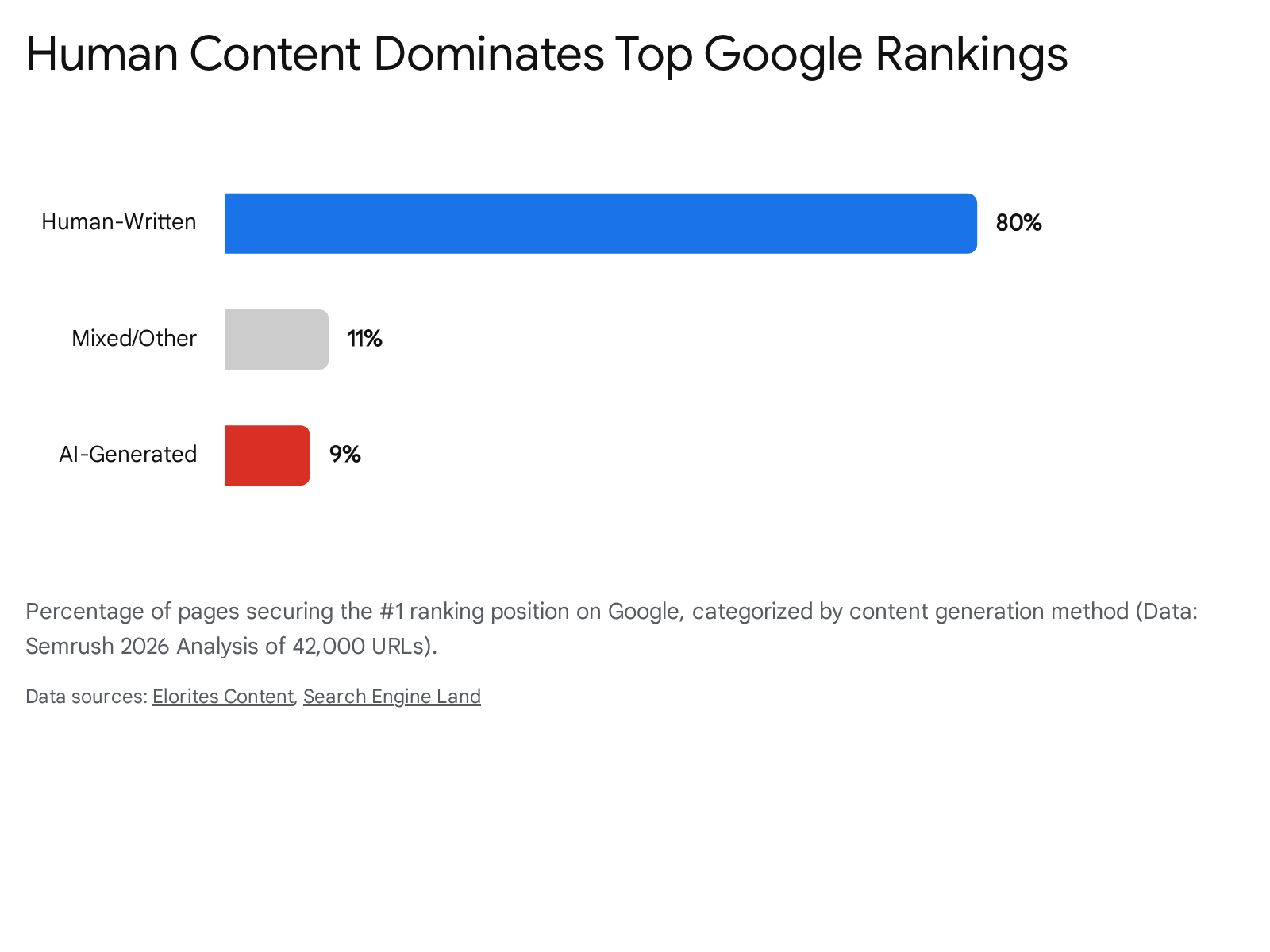

The proliferation of Large Language Models (LLMs) has saturated the internet with automated text, prompting intense debate regarding Google's algorithmic stance on AI. A prevalent misconception within the industry is that Google actively penalizes AI-generated content through specific detection software 1623. Official documentation and empirical enforcement data confirm that Google does not hunt for "AI fingerprints," nor does it apply penalties based strictly on a document's production method 52338.

However, the nature of generative AI directly conflicts with Google's updated quality signals. Because LLMs probabilistically synthesize text based on the median consensus of their training data, purely AI-generated content inherently lacks Information Gain, authentic lived experience, and verifiable human expertise 232339.

This structural deficit translates into severe ranking disparities. A comprehensive 2026 study conducted by Semrush analyzed 42,000 blog pages connected to 20,000 highly competitive keywords 24. The data revealed that human-written content dominates Google's top rankings, appearing in the number one position 80% of the time, compared to a mere 9% for purely AI-generated pages 24.

This performance gap is widest at the absolute top of the SERP, where human-authored content is approximately eight times more likely to secure the primary ranking spot 24.

Despite this disparity, AI adoption remains ubiquitous; the same datasets indicate that 86.5% of top-ranking pages utilize AI assistance in some capacity during their workflow 5. The critical differentiator is human intervention. Content that leverages AI for drafting but incorporates substantial editorial oversight, verified research, and expert nuance successfully satisfies E-E-A-T requirements and maintains high search visibility 52542.

The Semantic Filter and AI Production Stance

To manage the influx of synthetic text, the March 2026 Core Update deployed advanced machine learning indicators - referred to by analysts as the Gemini 4.0 Semantic Filter - to identify content produced at scale without meaningful human editorial oversight 3242. These AI signals evaluate semantic intent, analyzing whether a piece of content demonstrates the specific, nuanced details indicative of real-world experience (e.g., describing how leather stretches on a specific hiking boot rather than just repeating manufacturer specifications) 32.

If the algorithm determines that a page is merely a homogenized aggregation of existing data, it fails to clear the required quality thresholds 3242. Therefore, while the tool utilized to generate the draft is irrelevant to Google's compliance systems, the lack of original insight characteristic of unedited AI output virtually guarantees algorithmic demotion in highly competitive search environments 1623.

Generative Engine Optimization and AI Overviews

The proliferation of Google's AI Overviews (AIO) has fundamentally transformed user behavior and traffic distribution models. By early 2026, AI Overviews appeared on an estimated 30% to 48% of all search queries, processing over one billion queries monthly and reaching roughly 2 billion users 2627.

This integration has severely depressed traditional organic click-through rates (CTR). Data reveals that 58.5% of Google searches result in zero clicks to any external website; when an AI Overview is present, that figure rises to 74% 1828. Consequently, traditional pages ranking in the number one position experience a reported average CTR drop of 34.5% when superseded by an AI-generated summary 11. The accompanying before-and-after figures, 1.76% falling to 0.61%, imply a steeper relative decline of roughly 65%, so the two numbers appear to describe different measurements and should not be read as a single statistic 11.
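When reading CTR figures like these, it helps to separate relative change from percentage-point change. A minimal sketch of the arithmetic, using the numbers quoted above and hypothetical helper names:

```python
def relative_change(before, after):
    """Change as a fraction of the starting value (negative means a decline)."""
    if before == 0:
        raise ValueError("relative change is undefined for a zero baseline")
    return (after - before) / before


def point_change(before, after):
    """Change in percentage points when both inputs are percentages."""
    return after - before


# Position-one CTR with and without an AI Overview, as quoted in the text.
print(round(relative_change(1.76, 0.61) * 100, 1))  # -65.3
print(round(point_change(1.76, 0.61), 2))           # -1.15

# Zero-click share with and without an AI Overview.
print(round(point_change(58.5, 74.0), 1))           # 15.5
```

A move from 1.76% to 0.61% is therefore a 1.15-point drop but a relative decline of about 65%, which is why a headline percentage should always state which of the two it reports.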

To adapt, the industry has pivoted toward Generative Engine Optimization (GEO). Success in GEO relies on a different set of trust signals than traditional SEO. Ranking on page one of Google no longer guarantees a citation within an AI Overview; research from Brandlight in 2026 found that the overlap between top organic links and AI-cited sources has dropped below 20% 27. Instead, AI citation probability correlates strongly with Digital PR and off-site brand authority. Brand mentions correlate 0.664 with AI citation probability, vastly outperforming traditional backlinks (0.218) 27. For businesses, establishing visibility inside the generative engine has become paramount, as brands cited within an AI Overview earn 35% more organic clicks than those excluded from the summary 511.

Search Spam Policy Enforcement

Google manages deliberate search manipulation through its AI-driven spam detection engine, SpamBrain. Unlike core updates that broadly recalibrate quality scoring, spam updates are surgical enforcement actions designed to identify and penalize sites violating explicit Google guidelines 2930. SpamBrain continuously learns from patterns across billions of pages, evolving to neutralize sophisticated forms of automated content generation and link manipulation 3031.

In March 2026, Google executed a global spam update that completed its rollout in a record-breaking 20 hours 81132. While the speed of the rollout triggered industry alarm, Google did not introduce any new spam policy categories during this event 850. Instead, the update represented a dramatic refinement in SpamBrain's enforcement of three critical policies originally codified in March 2024: Scaled Content Abuse, Expired Domain Abuse, and Site Reputation Abuse 850.

Scaled Content Abuse

Scaled Content Abuse is defined as the generation of massive page volumes primarily to manipulate search rankings, providing little to no original value to users 3334. This policy was the central enforcement priority during the March 2026 algorithmic shifts 1635.

Google's enforcement is agnostic to the method of production; it targets the abusive behavior of prioritizing volume over value, whether the text is generated by LLMs, humans, or automated scraping techniques 33334. The algorithm flags sites exhibiting high-velocity publishing patterns (e.g., 10+ articles per day without proportional editorial staff), identical structural templates across URLs, and a lack of verifiable author credentials 35. Affiliate marketing operations and programmatic SEO sites - which rely on swapping localized keywords into identical text blocks - were heavily penalized, with targeted domains experiencing organic visibility losses ranging from 50% to 80% 535.
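Site owners can audit themselves against the three patterns named above with a simple checklist. The sketch below is a self-audit heuristic, not a reproduction of SpamBrain; the `SiteSnapshot` fields and every threshold other than the 10-articles-per-day figure cited above are assumptions.

```python
from dataclasses import dataclass


@dataclass
class SiteSnapshot:
    articles_per_day: float
    editorial_staff: int
    template_share: float     # share of URLs built from one identical template (0 to 1)
    author_verified: float    # share of pages with a verifiable author (0 to 1)


def scaled_content_flags(site, max_per_editor=2.0, max_template_share=0.8,
                         min_author_share=0.5):
    """Return the names of risk patterns the snapshot exhibits (hypothetical thresholds)."""
    flags = []
    per_editor = site.articles_per_day / max(site.editorial_staff, 1)
    if site.articles_per_day >= 10 and per_editor > max_per_editor:
        flags.append("high_velocity")
    if site.template_share > max_template_share:
        flags.append("identical_templates")
    if site.author_verified < min_author_share:
        flags.append("missing_author_credentials")
    return flags


programmatic = SiteSnapshot(articles_per_day=40, editorial_staff=2,
                            template_share=0.95, author_verified=0.1)
newsroom = SiteSnapshot(articles_per_day=12, editorial_staff=8,
                        template_share=0.3, author_verified=0.9)

print(scaled_content_flags(programmatic))
# ['high_velocity', 'identical_templates', 'missing_author_credentials']
print(scaled_content_flags(newsroom))  # []
```

The velocity check divides output by staff so that a well-resourced newsroom publishing more than ten articles a day is not flagged on volume alone.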

Expired Domain Abuse

Expired Domain Abuse involves the acquisition of aged, authoritative domain names that are subsequently repurposed to host low-quality content entirely unrelated to the domain's historical identity 3833. The goal of this tactic is to artificially inflate the search rankings of the new content by exploiting the historical backlink equity earned by the previous owner 354.

Google's detection logic for this abuse relies on thematic coherence 8. The algorithm analyzes the semantic mismatch between a domain's established reputation and its current content corpus 8. If a domain that spent a decade earning authoritative links as an elementary school or a medical charity is suddenly repurposed to host commercial casino links or payday loan reviews, the system flags the violation 83334. When caught, SpamBrain permanently nullifies the historical ranking benefits, ensuring that the artificial authority cannot simply be restored by deleting the newly added spam 3637.
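The thematic-coherence idea can be approximated with a set-overlap measure between a domain's historical topics and its current ones. This sketch uses Jaccard similarity as a stand-in; the actual detection logic is not public, and the `min_overlap` cutoff is an arbitrary assumption.

```python
def jaccard(a, b):
    """Jaccard similarity of two term collections (1.0 when both are empty)."""
    a, b = set(a), set(b)
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def is_thematic_mismatch(historical_terms, current_terms, min_overlap=0.2):
    """True when current topics barely overlap with the domain's history."""
    return jaccard(historical_terms, current_terms) < min_overlap


school_history = {"school", "students", "curriculum", "teachers"}
casino_content = {"casino", "bonus", "slots", "payday"}
school_today = {"school", "students", "enrollment", "teachers"}

print(is_thematic_mismatch(school_history, casino_content))  # True
print(is_thematic_mismatch(school_history, school_today))    # False
```

Buyers evaluating an aged domain could run a comparable check between archived page topics and their planned content before assuming any historical link equity will carry over.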

Site Reputation Abuse and Parasite SEO

Site Reputation Abuse - colloquially known as "Parasite SEO" - occurs when a highly authoritative host domain publishes low-quality, third-party content with the primary intention of manipulating search rankings by capitalizing on the host's established trust signals 3333457.

Historically, this tactic involved marketing entities leasing subdomains from reputable news publishers to host highly lucrative affiliate coupon directories or product reviews that were entirely disconnected from the host's core editorial focus 5758. Beginning in May 2024 and culminating in strict algorithmic enforcement by early 2026, Google explicitly categorized this as a spam violation 5738. Google expanded the policy to cover all third-party content regardless of editorial oversight, effectively eliminating the defense that host editors had reviewed the parasitic material 5739. Enforcement actions result in the algorithmic demotion of the specific third-party directories or, in severe cases, manual penalties applied to the host site 5740.

European Union Antitrust Intervention

Google's aggressive enforcement of the Site Reputation Abuse policy triggered significant international regulatory friction. In November 2025, the European Commission launched an antitrust investigation into Google's parent company, Alphabet, under the Digital Markets Act (DMA) 3941. The probe was initiated following complaints from the European Publishers Council, which argued that Google was unfairly demoting news media websites and disrupting legitimate commercial income streams, such as sponsored articles and branded hubs 414264.

Under the DMA, designated "gatekeepers" like Google are legally obligated to apply fair, reasonable, and non-discriminatory conditions of access to business users on their platforms 4042. Regulators investigated whether the anti-spam policy was unfairly penalizing publishers and impacting their freedom to conduct legitimate business, with potential penalties for DMA breaches reaching up to 10% of Alphabet's global annual turnover 4142.

Google defended the policy, stating it is essential to fight deceptive pay-for-play tactics that degrade search quality 39. However, facing substantial financial and reputational risk, Google submitted a remedies offer to the European Commission in May 2026 4142. While the full text of the proposal remains confidential, documentation suggests Google is willing to adjust how the Site Reputation Abuse policy is applied to news domains within the European Union and increase transparency regarding enforcement actions 404143. This ongoing regulatory intervention threatens to fragment Google's global spam enforcement protocols, forcing SEO practitioners to track regional policy disparities when forecasting organic traffic 64.

Expanding Spam Horizons: Back-Button Hijacking

Google's spam policies require continuous updates to address emerging malicious practices. In April 2026, Google explicitly added "back-button hijacking" to its spam documentation under the malicious practices category 44. This abusive tactic involves inserting phantom history entries or manipulating browser navigation via JavaScript to prevent a user from returning to the search results page after clicking a link 44. While the policy was announced in April, Google provided a two-month remediation window, scheduling strict enforcement to begin in June 2026 44. Concurrently, Google updated its reporting mechanisms, confirming that user-submitted spam reports can now bypass automated detection and directly trigger manual actions by human reviewers 44.

Technical Performance and Core Web Vitals

Holistic Domain-Level Performance Scoring

While the March 2026 Core Update was predominantly focused on content quality and E-E-A-T evaluations, it also introduced a critical modification to technical SEO assessment by implementing holistic Core Web Vitals (CWV) scoring 1838.

Historically, Google evaluated the CWV metrics - Largest Contentful Paint (LCP), Interaction to Next Paint (INP), and Cumulative Layout Shift (CLS) - as independent, page-specific pass/fail signals 1. Under the 2026 architecture, these metrics are aggregated into a composite performance score that applies to the entire domain 1. Consequently, slow architectural templates, bloated JavaScript frameworks, or persistent layout shifts on auxiliary pages can actively drag down the ranking potential of the site's highest-quality content 38.
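How Google weights the composite is not documented, so the sketch below assumes the simplest possible aggregation: the share of a domain's pages that meet all three metrics. The limits in `GOOD` are Google's published "good" thresholds (LCP 2.5 s, INP 200 ms, CLS 0.1); the aggregation itself and the field names are hypothetical.

```python
# Google's published "good" thresholds for each metric.
GOOD = {"lcp_s": 2.5, "inp_ms": 200, "cls": 0.1}


def page_passes(metrics):
    """True when a page is at or under the 'good' limit on all three metrics."""
    return all(metrics[name] <= limit for name, limit in GOOD.items())


def domain_cwv_score(pages):
    """Share of pages passing all metrics (an assumed, unweighted aggregate)."""
    if not pages:
        raise ValueError("need at least one page")
    return sum(page_passes(page) for page in pages) / len(pages)


pages = [
    {"lcp_s": 1.8, "inp_ms": 150, "cls": 0.05},  # passes
    {"lcp_s": 3.4, "inp_ms": 180, "cls": 0.02},  # slow LCP
    {"lcp_s": 2.1, "inp_ms": 320, "cls": 0.30},  # poor INP and CLS
    {"lcp_s": 2.5, "inp_ms": 200, "cls": 0.10},  # exactly at the limits, passes
]

print(domain_cwv_score(pages))  # 0.5
```

A real audit would likely weight pages by traffic and use field data at the 75th percentile, as Core Web Vitals assessments do; the unweighted version only shows how auxiliary templates can lower a domain-wide figure.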

The integration of technical health with overall quality assessment proved highly punitive during the March 2026 rollout. Analytical data indicates that sites failing to keep LCP below the 3-second threshold lost an estimated 23% more traffic than faster competitors in the same niche 538. Sites that suffer from poor architecture, excessive index bloat, or crawl budget inefficiencies broadcast low-quality signals to Google's systems, severely hindering their ability to recover from algorithmic demotions 826.

Recovery Dynamics Following Algorithmic Demotion

Discrepancy Between Official Guidance and Empirical Data

A significant disconnect exists between Google's official recovery advice and the empirical reality observed by the SEO industry in 2026. Google's standing guidance maintains that core updates are not punitive penalties; a ranking drop simply indicates that the algorithm has reassessed other content as more highly relevant 611. Official documentation suggests that site owners should refrain from hasty technical fixes, audit their content for usefulness, and wait for systems to naturally reassess the improvements 16.

However, tracking data reveals a much harsher recovery landscape. A study by SE Ranking analyzing the aftermath of the 2026 core and spam updates found that out of approximately 302,000 unique domains that disappeared from the top 100 search results, 82% failed to return even after subsequent core updates were completed 9. Among the ~52,000 domains that lost a top-10 position, only 23.5% managed to reclaim a spot on the first page of results 9.

Content Consolidation and Pruning Strategies

Because the integrated Helpful Content system applies a negative quality multiplier to an entire domain based on the aggregate ratio of poor content, surface-level edits are entirely insufficient for recovery 78. Site owners who attempt to recover by merely updating publish dates, tweaking meta titles, or inserting generic author biographies consistently fail to regain visibility 78.

The most effective, data-backed recovery pattern in 2026 is rigorous content pruning and consolidation 8. Sites that successfully navigate post-update recovery do so by identifying thin pages lacking Information Gain and actively removing them from the search index 78. Redundant or overlapping AI-generated articles must be consolidated via 301 redirects into comprehensive, highly authoritative pillar pages that feature proprietary data and demonstrable expert oversight 78. By actively reducing the volume of low-quality pages, a domain can effectively raise its minimum quality floor, resetting the algorithmic trust signals required for competitive ranking 8.
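When many overlapping articles are consolidated in stages, redirect chains tend to accumulate (one retired URL pointing at another retired URL). A common housekeeping step is to flatten the map so every retired URL points directly at its final pillar page. The sketch below assumes the redirect plan is held as a simple source-to-target dictionary; the paths are placeholders.

```python
def flatten_redirects(redirects):
    """Resolve chains so each source URL maps straight to its final target."""
    flat = {}
    for source in redirects:
        seen = {source}
        target = redirects[source]
        # Follow the chain until reaching a URL that is not itself redirected.
        while target in redirects:
            if target in seen:
                raise ValueError(f"redirect loop involving {target}")
            seen.add(target)
            target = redirects[target]
        flat[source] = target
    return flat


plan = {
    "/ai-post-1": "/ai-post-2",      # earlier consolidation round
    "/ai-post-2": "/pillar-guide",   # later consolidation round
    "/thin-faq": "/pillar-guide",
}

print(flatten_redirects(plan))
# {'/ai-post-1': '/pillar-guide', '/ai-post-2': '/pillar-guide', '/thin-faq': '/pillar-guide'}
```

The flattened map can then be translated into whatever 301 rule syntax the site's server or CMS uses; the loop check guards against a plan that would otherwise send crawlers in circles.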

Expected Timelines for Traffic Restoration

The timeline for algorithmic recovery requires strict expectation management. Due to the architecture of Google's continuous evaluation systems, restoring trust is a protracted process. While initial signals of improvement may appear in Google Search Console within four to eight weeks as the crawler processes deleted or consolidated URLs, meaningful traffic restoration is rarely immediate 78.

In cases of algorithmic demotion tied to domain-level quality multipliers, recovery generally requires three to six months of sustained, verifiable compliance 835. Frequently, a domain will remain algorithmically suppressed until the rollout of the next major broad core update, at which point Google's systems will fully re-evaluate the site's overhauled architecture and elevated quality baseline 78.