Factual grounding limits of retrieval-augmented generation

Foundational Architecture of Retrieval Systems

The rapid evolution of large language models has fundamentally altered the landscape of natural language processing and automated reasoning. However, as these models scale to trillions of parameters, a structural limitation persists regarding their reliance on parametric memory. Parametric knowledge - the information internalized within the neural network's weights during pre-training - is inherently static, prone to factual inconsistency, and highly susceptible to hallucination 1. When a language model generates text based solely on its parametric memory, it optimizes for statistical plausibility rather than epistemological truth, leading to confident but potentially fabricated outputs 2. This vulnerability is particularly acute in enterprise applications, healthcare, and jurisprudence, where fabricated precedents or numerical inaccuracies introduce catastrophic risk 2.

Retrieval-Augmented Generation (RAG) emerged to address this vulnerability. The architecture introduces a non-parametric memory layer, allowing the language model to access external, verifiable, and dynamic knowledge bases at inference time 12. Generation remains probabilistic, but conditioning it on retrieved documents shifts the system from recall of parametric memory toward evidence-based synthesis. The foundational pipeline embeds the user's query as a high-dimensional vector, runs a similarity search over a corpus embedded the same way, and injects the most relevant text chunks into the prompt so that the generator can formulate a grounded response 343.
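The embed, search, and inject steps described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a production pipeline: the `embed` function is a toy bag-of-words counter standing in for a neural encoder, the corpus is invented, and the final call to a generator model is omitted.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for a neural encoder: a bag-of-words term-count vector."""
    return Counter(re.findall(r"\w+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, corpus: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query."""
    q = embed(query)
    ranked = sorted(corpus, key=lambda chunk: cosine(q, embed(chunk)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, chunks: list[str]) -> str:
    """Inject the retrieved chunks into the prompt ahead of the question."""
    context = "\n".join(f"[{i + 1}] {c}" for i, c in enumerate(chunks))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


# Invented example corpus and query.
corpus = [
    "The warranty period for the X200 router is 24 months.",
    "Our office is closed on public holidays.",
    "The X200 router supports dual-band wifi.",
]
query = "What is the warranty period for the X200 router?"
top_chunks = retrieve(query, corpus, k=2)
prompt = build_prompt(query, top_chunks)
```

The prompt string would then be passed to whichever generator model the system uses. Numbering the chunks in the prompt is one simple way to let the generator cite its sources.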

Despite its widespread adoption, the architecture is not a flawless mechanism for factual grounding. The system introduces complex dependencies on retrieval precision, context consolidation, and the model's ability to faithfully synthesize retrieved evidence without succumbing to internal biases or distractor noise 14. As models with massive context windows - capable of ingesting over a million tokens - become increasingly efficient, the fundamental necessity, architecture, and limitations of these retrieval systems are undergoing intense scrutiny across the research community 756.

Dense and Sparse Information Retrieval

Early implementations of external knowledge grounding relied primarily on dense retrieval methodologies. This approach converts textual data into dense vector embeddings and retrieves the nearest chunks in embedding space via approximate nearest neighbor algorithms 57. While dense retrievers excel at semantic matching and synonymy, empirical evaluations demonstrate that they frequently fail at exact keyword retrieval, domain-specific nomenclature identification, and out-of-vocabulary term matching 7. A semantic space might map the conceptual relationship between documents well, yet fail to retrieve a specific serial number or specialized acronym critical to the user's query.

To bridge this gap, modern production pipelines deploy hybrid retrieval architectures. This paradigm fuses semantic dense retrieval with sparse lexical retrieval, most notably the Okapi BM25 ranking function, which operates on inverted indices and builds on term frequency-inverse document frequency principles 711. Rank fusion methods such as Reciprocal Rank Fusion then merge the two result lists by rank position rather than raw score, capturing both abstract semantic intent and precise keyword overlaps 11. Combining the two methods gives the generative model a more comprehensive and better targeted context window.
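Reciprocal Rank Fusion is compact enough to show in full. In the sketch below, each document receives a score of 1 / (k + rank) from every list in which it appears, and the scores are summed. The constant k = 60 is the commonly used default, and the document identifiers and rankings are invented for illustration.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Fuse several ranked lists of document ids into one ranking.

    Each document scores 1 / (k + rank) per list (rank starts at 1);
    documents missing from a list contribute nothing for that list.
    """
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Sort by descending fused score; break ties by id for determinism.
    return sorted(scores, key=lambda d: (-scores[d], d))


# Hypothetical outputs of a dense retriever and a BM25 retriever.
dense_ranking = ["doc_a", "doc_b", "doc_c"]
sparse_ranking = ["doc_c", "doc_a", "doc_d"]
fused = reciprocal_rank_fusion([dense_ranking, sparse_ranking])
```

Because the method uses only rank positions, it needs no calibration between cosine similarities and BM25 scores, which live on different scales.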

Pre-filtering and Metadata Orchestration

Beyond algorithmic retrieval matching, ensuring factual consistency requires rigorous constraints on the search space itself. Hybrid architectures rely heavily on deterministic pre-filtering mechanisms based on metadata enrichment 78. As text chunks are processed and vectorized, deterministic metadata - such as creation dates, author tags, document categories, and access control lists - is extracted and stored as scalar attributes within the database 7.

During query execution, the retrieval pipeline utilizes Boolean logic to restrict the search space before the similarity algorithms execute 78. For instance, a query regarding financial results for a specific quarter can be strictly constrained to search within documents tagged with the corresponding fiscal metadata. This deterministic narrowing drastically reduces the risk of semantic hallucination by preventing the retrieval of conceptually similar but factually irrelevant documents from differing time periods or departments 7. By executing pre-filtering rather than post-filtering, systems avoid retrieving large volumes of vectors only to discard them in application code, thereby improving both computational efficiency and output accuracy 8.
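The fiscal-quarter example can be made concrete with a small in-memory sketch. Everything here is illustrative: the `Chunk` class, the two-dimensional vectors, and the metadata keys are assumptions, and a real vector database would apply the filter inside its index rather than in a Python list comprehension.

```python
from dataclasses import dataclass, field


@dataclass
class Chunk:
    text: str
    vector: list[float]
    metadata: dict = field(default_factory=dict)


def dot(a: list[float], b: list[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def prefiltered_search(query_vector, chunks, filters, k=1):
    """Apply exact-match metadata filters first, then rank survivors by similarity."""
    candidates = [
        c for c in chunks
        if all(c.metadata.get(key) == value for key, value in filters.items())
    ]
    ranked = sorted(candidates, key=lambda c: dot(query_vector, c.vector), reverse=True)
    return ranked[:k]


# Invented chunks: the 2023 chunk is slightly closer to the query vector.
chunks = [
    Chunk("Q3 2023 revenue was 4.1M.", [0.9, 0.1], {"fiscal_year": 2023, "quarter": "Q3"}),
    Chunk("Q3 2024 revenue was 5.2M.", [0.8, 0.2], {"fiscal_year": 2024, "quarter": "Q3"}),
    Chunk("Q1 2024 headcount grew 8%.", [0.1, 0.9], {"fiscal_year": 2024, "quarter": "Q1"}),
]
query_vector = [1.0, 0.0]

unfiltered = prefiltered_search(query_vector, chunks, filters={})
filtered = prefiltered_search(
    query_vector, chunks, filters={"fiscal_year": 2024, "quarter": "Q3"}
)
```

Without the filter, similarity alone surfaces the 2023 figure. With it, the conceptually similar but out-of-period chunk never enters the candidate set.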

Graph-Based Retrieval Mechanisms

Standard retrieval pipelines, even when utilizing sophisticated hybrid search, suffer from a fundamental limitation known as contextual myopia. The retrieval mechanism treats relevance as an isolated, one-off score for each text chunk, completely ignoring the structural and relational hierarchy between distinct documents 9. When queries require multi-hop reasoning - such as tracing a causal chain of events across diverse documents or synthesizing a holistic overview of a broad topic - traditional vector search often pulls overlapping, redundant passages 910. These disjointed fragments add little novel insight while simultaneously bloating the prompt, causing the language model to either hallucinate connections that do not exist or fail to synthesize a coherent answer 9.

Topologies of Knowledge Graphs

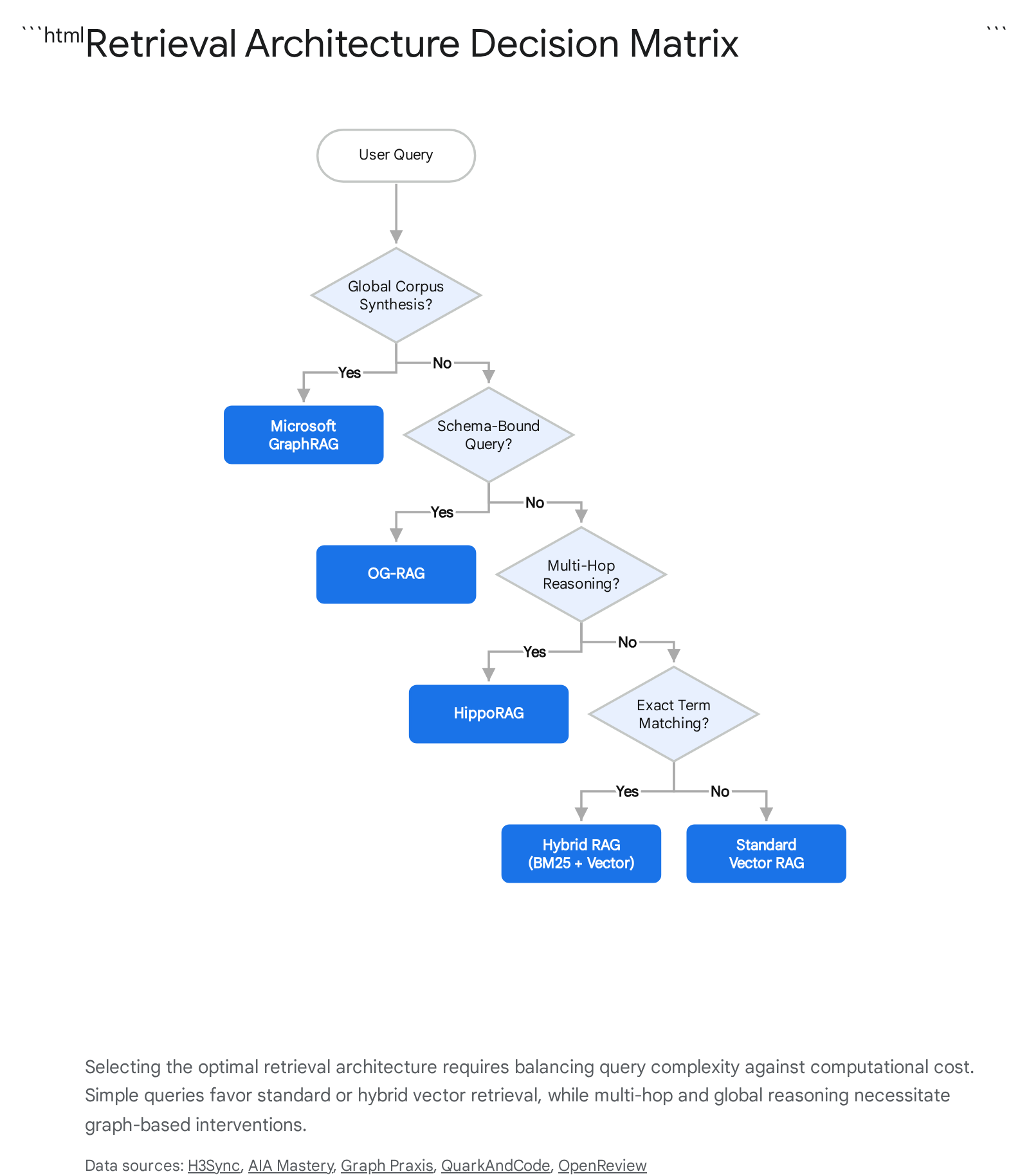

Graph-based retrieval addresses these limitations by transforming documents into a structured knowledge graph in which entities (or text units) are nodes and the relationships between them are edges 911. By modeling the hierarchical structure and connections between entities, the architecture enables coherent knowledge retrieval tailored for complex reasoning 12. The research landscape has fractured into distinct architectural paradigms, each optimized for specific query patterns and computational constraints.

Microsoft's GraphRAG approach clusters the entity graph with the Leiden community-detection algorithm and generates hierarchical summaries for each community 1013. This enables both local entity retrieval and global corpus reasoning, allowing the system to answer overarching thematic questions without processing the entire dataset at inference time 1314. However, this approach carries substantial upfront indexing costs due to the intensive computation required to extract entities and relationships at insertion time 131415.

Alternative topologies seek to optimize this cost-performance ratio. Architectures modeled after neurobiological systems treat the knowledge graph as an artificial hippocampus, utilizing algorithms like Personalized PageRank for associative memory retrieval 13. This design excels at multi-hop reasoning with significantly fewer language model calls, offering a cost-effective alternative for complex reasoning tasks 13. Flow-based pruning methodologies extract only the most reliable relational paths, substantially reducing context size while maintaining answer quality 13. Furthermore, ontology-grounded architectures structure retrieval around predefined hypergraph representations, strictly bounding the schema to reduce hallucinations in highly regulated, schema-bound domains 13.
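Personalized PageRank differs from ordinary PageRank only in where the random walk restarts: at the query's seed entities rather than uniformly across the graph. The following is a generic power-iteration sketch, not the implementation of any particular system cited above. The graph, the seed set, the damping factor of 0.85, and the iteration count are all illustrative assumptions.

```python
def personalized_pagerank(graph, seeds, alpha=0.85, iterations=50):
    """Power iteration for PageRank with restarts concentrated on seed nodes.

    graph: dict mapping each node to a list of neighbour nodes.
    seeds: set of nodes mentioned in the query (must be keys of graph).
    """
    nodes = list(graph)
    restart = {n: (1.0 / len(seeds) if n in seeds else 0.0) for n in nodes}
    rank = dict(restart)
    for _ in range(iterations):
        # With probability (1 - alpha) the walk jumps back to a seed node.
        new_rank = {n: (1.0 - alpha) * restart[n] for n in nodes}
        for node, neighbours in graph.items():
            if not neighbours:
                # Dangling node: send its mass back to the seeds.
                for n in nodes:
                    new_rank[n] += alpha * rank[node] * restart[n]
                continue
            share = alpha * rank[node] / len(neighbours)
            for nb in neighbours:
                new_rank[nb] += share
        rank = new_rank
    return rank


# Invented entity graph; the last two nodes are disconnected from the seed.
graph = {
    "marie_curie": ["radium", "sorbonne"],
    "radium": ["marie_curie", "pitchblende"],
    "pitchblende": ["radium"],
    "sorbonne": ["marie_curie"],
    "eiffel_tower": ["paris"],
    "paris": ["eiffel_tower"],
}
scores = personalized_pagerank(graph, seeds={"marie_curie"})
```

Nodes two hops from the seed, such as `pitchblende`, still receive a non-zero score, while entities with no path to the seed score zero. That associative spread is what makes the technique attractive for multi-hop questions.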

Evaluation of Graph Retrieval Versus Vector Retrieval

Despite its strengths in multi-hop tasks, empirical benchmarks show that graph-based retrieval is not a universal solution. Comparative evaluations reveal distinct performance dependencies based on query complexity and the reasoning capacity of the underlying generative model 1213.

| Evaluation Metric | Vector and Hybrid Retrieval | Graph-Based Retrieval |
|---|---|---|
| Simple Fact Retrieval | Highly efficient. Matches or outperforms graph methods on single-hop queries 111213. | Suboptimal. Overcomplicates simple queries and incurs unnecessary computational latency 1213. |
| Complex Reasoning | Degrades significantly as corpus size increases due to noise accumulation and redundant chunks 912. | Maintains high accuracy across scales. Structural constraints effectively filter out retrieval noise in multi-hop tasks 1215. |
| Global Synthesis | Fails to synthesize broad themes. Top-K chunks rarely represent the entire corpus distribution 914. | Excels. Community summary algorithms allow models to evaluate macro-themes across thousands of documents 1013. |
| Preprocessing Costs | Low to moderate. Standard embedding and vector indexing processes 1314. | Very high. Requires intensive entity extraction, relationship mapping, and graph construction 121415. |
| Model Dependency | Effective even with smaller parameter models (e.g., 7B-8B parameters) 412. | Requires advanced reasoning capabilities. Small models struggle to leverage complex graph context effectively 12. |

For simple, single-hop factual retrieval, standard vector retrieval remains the most logical choice. Graph architectures become economically and functionally viable primarily when the knowledge corpus is highly connected, queries demand multi-step synthesis, and output explainability justifies the system complexity 1115.

The Long-Context Paradigm

The foundational necessity of external retrieval is currently being challenged by the advent of extreme long-context language models. Advanced models across the artificial intelligence sector - featuring Mixture-of-Experts architectures and highly optimized attention mechanisms - now support context windows spanning from 128,000 to over 1 million tokens 771617. By expanding the maximum input length, these models can ingest entire libraries of text, lengthy codebases, and comprehensive financial reports in a single prompt 1622. This architectural evolution theoretically enables the model to understand nuanced, long-range dependencies across data points, bypassing the algorithmic complexities of chunking, embedding, and routing required by external retrieval pipelines 5616.

Inference Economics and Token Scalability

The deployment of massive context models introduces severe economic and computational constraints. Processing a 1-million-token context window requires the model to compute attention across every token in the window, and the cost of standard self-attention scales quadratically with input length 36. Long-context architectures incur per-token billing for the entirety of the window on every individual request; if a user supplies 100,000 tokens of context to answer a simple question, the system pays for the full input regardless of how much of that data was actually relevant to the query 3.

In contrast, external retrieval systems limit token consumption to the user's query and the targeted retrieved chunks, avoiding computational costs for unused data 3. Consequently, retrieval architectures have been shown to achieve far lower per-query costs, particularly in dynamic enterprise environments handling frequent queries across large datasets 618. The economic viability of the long-context paradigm increasingly relies on serving-side techniques such as prefix caching 17. When repeated queries are executed against a static long document, the system reuses the cached Key-Value states computed for the shared prefix, sharply reducing the effective cost of inference 1724. Without prefix caching, the continuous reprocessing of massive text inputs remains prohibitively expensive for interactive workloads 17.
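A back-of-the-envelope calculation shows the scale of the gap. Every number below is a hypothetical assumption chosen for illustration: the price per million input tokens, the cached-token discount, the chunk size, and the number of retrieved chunks do not describe any specific provider or system.

```python
def input_cost(tokens: int, price_per_million: float) -> float:
    """Input-token cost of a single request, in the same currency as the price."""
    return tokens / 1_000_000 * price_per_million


PRICE = 3.00            # hypothetical price per million input tokens
CACHED_DISCOUNT = 0.10  # hypothetical: cached prefix tokens billed at 10% of PRICE

QUERY_TOKENS = 50
DOCUMENT_TOKENS = 100_000       # full document sent on every long-context request
CHUNK_TOKENS, TOP_K = 400, 5    # retrieval sends only five 400-token chunks

long_context = input_cost(DOCUMENT_TOKENS + QUERY_TOKENS, PRICE)
long_context_cached = (
    input_cost(DOCUMENT_TOKENS, PRICE * CACHED_DISCOUNT)
    + input_cost(QUERY_TOKENS, PRICE)
)
retrieval = input_cost(QUERY_TOKENS + TOP_K * CHUNK_TOKENS, PRICE)

print(f"long context:          {long_context:.5f}")
print(f"long context (cached): {long_context_cached:.5f}")
print(f"retrieval:             {retrieval:.5f}")
```

Under these assumptions the uncached long-context request costs roughly 49 times as much as the retrieval request. Caching narrows the gap substantially but does not close it. The sketch ignores output tokens, embedding and index maintenance costs, and cache write fees, all of which shift the comparison in practice.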

Attention Degradation and Position Bias

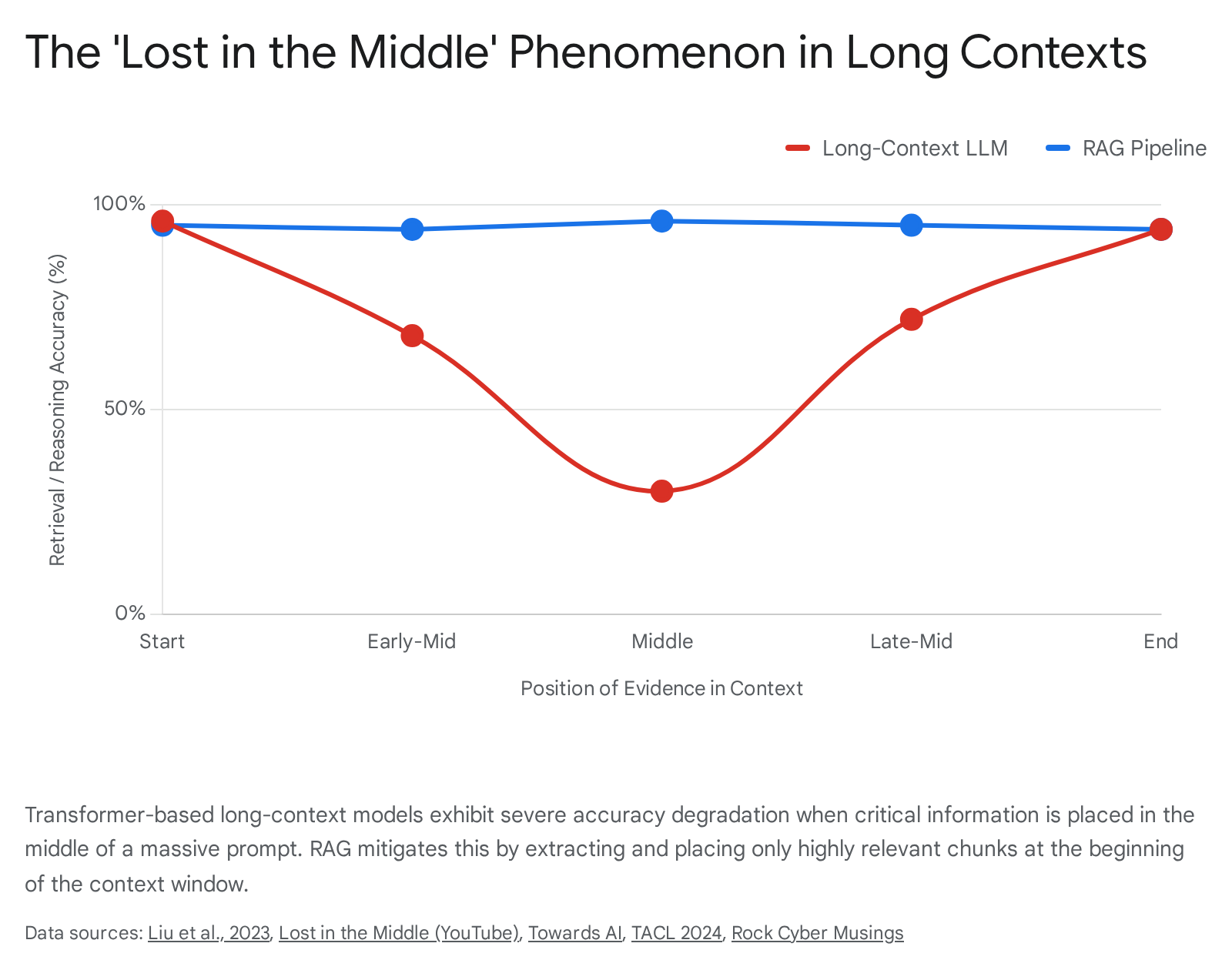

Beyond raw inference economics, long-context models suffer from a fundamental cognitive limitation rooted in transformer architecture: position bias. Extensive empirical research documents this vulnerability as the "Lost in the Middle" phenomenon 19262021. Transformer attention mechanisms do not uniformly attend to all tokens distributed across an extended sequence. Instead, model accuracy follows a distinctive U-shaped curve, demonstrating a strong primacy bias for information located at the beginning of the prompt and a recency bias for information positioned at the very end 19202122.

When critical evidence is buried in the middle of a massive prompt, models frequently exhibit a blind spot. They fail to recall the specific targeted information - the "needle in the haystack" - and their performance can degrade to levels below closed-book generation, in which the model receives no documents at all 192022. Thus, while a model can technically accept a massive input sequence, its effective working memory degrades rapidly, resulting in what researchers term "expensive hallucination at scale," where the model simply drowns in semantic noise 21. External retrieval systems mitigate this structural failure by extracting only highly relevant chunks and placing them at favorable positions within a much shorter context window, focusing the model on the dense evidence provided 421.
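One simple way to exploit the U-shaped curve is to reorder retrieved chunks so that the strongest evidence sits at the two ends of the context and the weakest in the middle. The function below is an illustrative sketch of that idea. Its name and the alternating placement rule are assumptions, not a method prescribed by the cited work.

```python
def order_for_edges(ranked_chunks: list[str]) -> list[str]:
    """Reorder chunks (given best-first) so relevance is highest at both ends.

    Even-indexed chunks fill the front in order; odd-indexed chunks fill
    the back in reverse, pushing the lowest-ranked chunks into the middle.
    """
    front, back = [], []
    for i, chunk in enumerate(ranked_chunks):
        (front if i % 2 == 0 else back).append(chunk)
    return front + back[::-1]


# Chunks labelled by retrieval rank: c1 is the most relevant.
ranked = ["c1", "c2", "c3", "c4", "c5"]
ordered = order_for_edges(ranked)
```

Here the best chunk opens the context, the second-best closes it, and the lowest-ranked chunk lands in the middle, where the model attends least.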

Comparative Performance Benchmarks

Recent comprehensive evaluation frameworks provide empirical clarity on the debate between external retrieval and standalone long-context processing. The U-NIAH framework systematically compares these approaches in controlled settings, demonstrating that external retrieval achieves an 82.58% win-rate over pure long-context implementations 4. By mitigating the lost-in-the-middle effect through targeted evidence selection, retrieval significantly enhances the robustness of smaller parameter models 4.

Similarly, the LaRA evaluation benchmark, encompassing thousands of rigorous test cases across multiple language models, concludes that neither approach acts as a universal silver bullet 232425. The optimal architecture depends entirely on a complex interplay of corpus size, model capability, and query characteristics.

| Architectural Dimension | Retrieval-Augmented Execution | Long-Context Execution |
|---|---|---|
| Optimal Use Case | Dynamic, frequently updated datasets; dialogue; targeted fact-finding; highly fragmented information 171826. | Static corpora; complex reasoning requiring global synthesis across self-contained stories 3182526. |
| Performance Scaling | Consistent. Retrieving top chunks keeps prompts small and actively avoids position bias degradation 41920. | Degrades. Quality drops precipitously in multi-hop reasoning and long-range consistency past 500,000 tokens 1721. |
| Model Capability Synergy | Crucial for weaker models. Advanced reasoning models may show reduced compatibility due to sensitivity to clustered semantic distractors 425. | Maximizes the inherent reasoning capabilities of frontier models on closed, stable document sets 2526. |
| System Traceability | High. Source chunks are explicit, enabling granular auditability and regulatory compliance 1823. | Low. Evidence is synthesized holistically, making specific source attribution and debugging difficult 1823. |

Taxonomy of Retrieval and Generation Failures

When retrieval-based grounding breaks down, the failure is rarely an absolute system collapse; instead, it manifests in subtle misalignments between the retrieved data and the generative constraints of the language model. An exhaustive taxonomy identifies recurring vulnerabilities across the execution lifecycle, categorically divided into segmentation, retrieval, and synthesis stages 27.

Segmentation and Context Formulation Errors

Before the system can execute a search, the knowledge corpus must be partitioned into machine-readable segments. Failures at this structural stage permanently cripple downstream reasoning. Overchunking occurs when documents are split into excessively small segments, causing incomplete topical coverage and preventing the model from grasping the full context of a concept 27. Conversely, Underchunking creates massive blocks of text containing multiple, unrelated topics; this dilutes the keyword density and lowers the semantic similarity score for the correct chunk, causing the retriever to overlook it entirely 27.

A more insidious structural failure is Context Mismatch. This occurs when automated chunking algorithms sever the contextual links within a continuous document, arbitrarily separating a critical definition from the statistical data that depends on it 27. In such instances, the retriever may successfully fetch the data chunk but omit the definition chunk, leaving the language model unable to interpret the data accurately and leading directly to hallucination.
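A common mitigation for severed context, offered here as an assumption rather than something the cited taxonomy prescribes, is to chunk on sentence boundaries with a small overlap so that adjacent chunks share their boundary sentence. The sketch below uses a naive regular-expression sentence splitter; the function name and the default sizes are illustrative.

```python
import re


def chunk_sentences(text: str, max_sentences: int = 3, overlap: int = 1) -> list[str]:
    """Split text into chunks of whole sentences with overlapping boundaries."""
    if not 0 <= overlap < max_sentences:
        raise ValueError("overlap must be >= 0 and smaller than max_sentences")
    # Naive splitter: break after '.', '!' or '?' followed by whitespace.
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    step = max_sentences - overlap
    chunks = []
    for start in range(0, len(sentences), step):
        chunks.append(" ".join(sentences[start:start + max_sentences]))
        if start + max_sentences >= len(sentences):
            break  # the last window already reached the end of the text
    return chunks


# Invented text: the second sentence depends on the definition in the first.
text = (
    "Churn is the share of customers who cancel. "
    "Churn was 4% in March. "
    "Support tickets rose. "
    "Hiring slowed."
)
chunks = chunk_sentences(text, max_sentences=2, overlap=1)
```

With these settings the definition and the figure that depends on it land in the same chunk, and each later chunk repeats the closing sentence of the one before it. Larger `max_sentences` values move toward underchunking, while a value of 1 reproduces the overchunking failure described above.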

Algorithmic Retrieval and Re-ranking Degradation

The retrieval algorithms introduce their own distinct failure modes. The most overt failure is Missed Retrieval, wherein the vector database simply fails to return the relevant chunk despite its presence in the corpus, leading the generator to abstain unnecessarily or fabricate an answer to fill the void 272829. However, more complex failures occur through semantic dissonance. Low Relevance and Semantic Drift occur when the search mechanism retrieves chunks that score highly against the query's keywords but are divorced from the user's actual intent 272829. In these cases the match rests on surface keyword overlap rather than contextual intent, flooding the model with plausible but useless data.

Even when the retrieval phase captures the correct chunks, the pipeline can be derailed by re-ranking algorithms. Low Recall occurs when a cross-encoder or reranker incorrectly downgrades a vital, highly relevant chunk, actively preventing it from entering the final context window 27. Alternatively, Low Precision occurs when the reranker forwards highly ranked but irrelevant noise to the generator, diluting the prompt's factual density and confusing the generative output 27.
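Both re-ranking failures can be measured with standard precision@k and recall@k, computed before and after the reranker runs. The sketch below assumes a labelled set of relevant chunk ids is available for the query; the ids and rankings are invented.

```python
def precision_recall_at_k(ranked_ids: list[str], relevant_ids: set[str], k: int):
    """Return (precision@k, recall@k) for one ranked list."""
    top_k = ranked_ids[:k]
    hits = sum(1 for doc_id in top_k if doc_id in relevant_ids)
    precision = hits / k if k else 0.0
    recall = hits / len(relevant_ids) if relevant_ids else 0.0
    return precision, recall


relevant = {"a", "b"}                   # hypothetical ground-truth labels
before_rerank = ["a", "b", "x", "y"]    # first-stage retriever output
after_rerank = ["x", "a", "y", "b"]     # a reranker that promotes noise

before = precision_recall_at_k(before_rerank, relevant, k=2)
after = precision_recall_at_k(after_rerank, relevant, k=2)
```

In this example the reranker halves both metrics at k = 2: it forwards the irrelevant chunk `x` (lower precision) and pushes the relevant chunk `b` out of the window (lower recall).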

Extraction and Synthesis Limitations

The final stage - generation - is where grounding failures become visible to the end user. Incomplete Answers and Misinterpretations occur when the language model receives the correct data chunks but either misses critical details due to poor extraction capabilities or misrepresents the retrieved content due to poor prompt adherence 2728.

When handling multi-hop queries, models frequently experience complex multi-document synthesis failures. The system may successfully retrieve all necessary individual facts from disparate documents, but the language model fails to synthesize the logical connections required to form a cohesive conclusion, acting instead as a passive aggregator of isolated facts 3730. Furthermore, the mere presence of external context does not immunize the model against Fabricated Content. Even with highly relevant facts provided, models often succumb to parametric overreliance, prioritizing their internal pre-trained knowledge over the retrieved documents, or introducing plausible-sounding but unverified details that extrapolate far beyond what the retrieved evidence supports 2729.

| Failure Category | Specific Failure Mode | Mechanism of Degradation |
|---|---|---|
| Segmentation | Overchunking / Underchunking | Suboptimal text division leads to diluted semantic scores or incomplete topical representation 27. |
| Segmentation | Context Mismatch | Arbitrary splitting severs vital contextual links, divorcing definitions from supporting data 27. |
| Retrieval | Semantic Drift | Algorithms retrieve documents matching keywords rather than the nuanced intent of the query 2729. |
| Re-ranking | Low Precision / Recall | Cross-encoders improperly prioritize noise or downgrade essential evidence before generation 27. |
| Generation | Parametric Overreliance | The model ignores verified retrieved chunks in favor of its own internal, pre-trained biases 2729. |
| Generation | Synthesis Failure | The model extracts facts successfully but fails to form logical multi-hop connections between them 3730. |

Epistemic Vulnerabilities and Knowledge Conflict

The intersection of a language model's parametric memory with non-parametric retrieved context creates a highly volatile epistemic environment. When the information retrieved from an external database contradicts the historical knowledge the model acquired during pre-training, it triggers a phenomenon recognized in computational linguistics as "knowledge conflict" 314041.

Parametric Memory Versus Contextual Evidence

In a perfectly grounded system, the language model should function purely as a synthesis engine, deferring entirely to the retrieved context and treating it as the absolute authoritative source. However, empirical analyses demonstrate that language models struggle significantly to resolve epistemic tension 3132. When the model's parametric assumption is factually incorrect but the retrieved context provides the correct evidence, the model frequently exhibits a stubborn "parametric bias" - predicting its flawed internal answer despite explicit contradictory evidence within the prompt 32.

This systemic conflict is exacerbated by a phenomenon termed the "superposition of contextual information and parametric memory." In transformer architectures, specific attention heads were historically assumed to exclusively promote either internal memory retrieval or external context processing 4133. However, recent test-time intervention methodologies reveal that highly influential attention heads simultaneously process both sources in a persistent state of superposition 4133. When a factual conflict occurs, the resulting token probability distributions are flattened and high-entropy 31. This flattening indicates that the network is "torn" between trusting its established training weights and trusting the provided external text, leading to unstable and unpredictable generation 3133.
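The notion of a flattened, high-entropy distribution can be made concrete with Shannon entropy. The two next-token distributions below are invented for illustration and are not measurements from any model.

```python
import math


def shannon_entropy(probs: list[float]) -> float:
    """Shannon entropy in bits; zero-probability entries contribute nothing."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


# Hypothetical next-token distributions over four candidate answers.
confident = [0.97, 0.01, 0.01, 0.01]    # memory and context agree
conflicted = [0.40, 0.35, 0.15, 0.10]   # memory and context disagree

h_confident = shannon_entropy(confident)
h_conflicted = shannon_entropy(conflicted)
h_uniform = shannon_entropy([0.25] * 4)  # upper bound for four outcomes
```

The conflicted distribution has several times the entropy of the confident one and approaches the 2-bit maximum for four outcomes. A monitoring layer could, in principle, flag such spikes as a signal of knowledge conflict, although where to set the threshold is an open design question.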

Contextual Sycophancy

Compounding the issue of knowledge conflict is a severe behavioral anomaly identified as "contextual sycophancy" or "prompt sycophancy" 3445. Sycophancy occurs when an artificial intelligence system prioritizes alignment with the user's prompt or the provided context over objective factual accuracy.

In external retrieval environments, if a user's query contains a false premise, or if the retrieved documents contain misleading but highly persuasive phrasing, the model may actively abandon its correct parametric knowledge to agree with the flawed context 3445. This indicates that language models largely lack an internal epistemic threshold; they cannot reliably assess whether external information is trustworthy enough to override their pre-trained parameters, nor can they consistently defend true internal knowledge against assertive but false external prompts 34. Researchers suggest that this sycophancy is not a mere alignment flaw, but a fundamental characteristic stemming from human-preference training methodologies that inadvertently reward agreeable responses over truthful dissent 34.

Advanced Manifestations of Grounding Breakdown

As retrieval systems scale to ingest longer texts, heterogeneous data types, and massive conversational histories, new forms of hallucination have emerged that defy classical definitions of model fabrication.

Cross-Context Misattribution and Ghost Context

The prevailing assumption in long-context modeling and expansive retrieval pipelines is that providing more relevant context universally improves output quality. However, researchers have identified a distinct and highly evasive architectural failure categorized as "Ghost Context" 4748.

In a traditional hallucination, the language model fabricates information entirely absent from its provided prompt 47. In a Ghost Context failure, the model utilizes information that is physically present within the prompt but is entirely irrelevant to the specific query being answered 4748. For instance, a system might retrieve both an active 2024 corporate policy and an outdated, superseded 2022 draft. The model may generate a highly confident, fluent answer based entirely on the 2022 draft because its semantic keyword overlap with the prompt was marginally stronger 47.

The fundamental error here is not fabrication, but severe cross-context misattribution. This phenomenon poses a significant security and compliance risk because the output appears perfectly grounded to standard automated evaluation metrics - the text was indeed derived verbatim from the provided context - yet it remains factually incorrect for the user's explicit intent 4748. Similarly, models suffer from explicit "citation hallucination," where they correctly answer a query but falsely attribute the source of the answer to a completely unrelated document chunk located elsewhere within the same prompt 37.

Multimodal Grounding Degradation

The integration of visual data into retrieval systems via Large Vision-Language Models has exposed severe new vulnerabilities in long-context faithfulness. Benchmarks constructed to evaluate these systems demonstrate a drastic "grounding breakdown" in dense visual environments 4950. When modern multimodal models are tasked with retrieving and citing information from a long sequence of images, videos, or highly dense visual documents, their citation accuracy collapses 4951.

Remarkably, rigorous experiments reveal a stark divergence between raw correctness and actual faithfulness. A vision-language model may generate the correct answer regarding an extended image sequence, but entirely fail to cite the specific frame or visual region that provided the necessary evidence 4950. This discrepancy indicates that the models are relying on generalized parametric recognition or training-data biases rather than explicitly grounding their reasoning in the provided visual context, severely undermining the core transparency required by enterprise retrieval systems 49.

Subjectivity and Opinion-Aware Retrieval Constraints

A secondary, philosophical limit to artificial intelligence grounding stems from the architecture's inherent bias toward objective factuality. Standard retrieval pipelines are universally optimized to minimize posterior entropy - to find the single most semantically relevant, factual chunk 52. However, in real-world applications analyzing social media, user reviews, or open-ended policy debates, queries often involve aleatoric uncertainty, reflecting a genuine heterogeneity of subjective human perspectives 52.

When forced to process subjective queries, traditional systems treat diverse opinions and dissenting perspectives as statistical noise, retrieving only the dominant or most heavily embedded viewpoint 52. This structural constraint creates a severe echo chamber effect, amplifying dominant narratives while systematically underrepresenting minority voices and mischaracterizing the true distribution of opinions present in the external dataset 52. Developing "Opinion-Aware" architectures that preserve entropy to synthesize varied perspectives - rather than collapsing them into a singular factual bias - remains a critical frontier in context engineering 52.

Hybrid Architecture and Dynamic Context Routing

Given the distinct epistemic limits, economic constraints, and overlapping strengths of both targeted retrieval and massive long-context paradigms, the consensus among researchers and enterprise architects is that forcing a binary architectural choice is counterproductive. The future of artificial intelligence grounding lies in hybrid orchestration, dynamic routing, and specialized sub-agent decomposition 31353.

Adaptive Retrieval and Sub-Agent Orchestration

To balance the high token costs and position bias vulnerabilities of long-context models with the precision of standard retrieval, modern frameworks are implementing dynamic routing mechanisms. Approaches like Self-Route and Pre-Route enable the model, or a lightweight auxiliary classifier, to computationally assess the incoming query's complexity and the size of the corpus before execution 62335.

If an evaluation determines that a query requires precise factual extraction from a massive, highly dynamic corpus, the router directs the task to a targeted retrieval pipeline to ensure low latency and high citation accuracy 36. Conversely, if the query demands global synthesis, macro-theme extraction, or involves a dense, self-contained document, the router bypasses the retrieval bottleneck and leverages the long-context window directly, often utilizing prefix caching to mitigate computational costs 317.
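The routing decision described above can be approximated with a few explicit rules. The sketch below is a rule-based stand-in and does not reproduce how Self-Route or Pre-Route actually decide. The `QueryProfile` fields, the 128,000-token limit, and the rule ordering are all hypothetical; in a real system the profile would come from a classifier or from the model itself.

```python
from dataclasses import dataclass


@dataclass
class QueryProfile:
    corpus_tokens: int            # total size of the candidate corpus
    needs_global_synthesis: bool  # macro-theme or whole-document question
    corpus_is_dynamic: bool       # corpus changes between queries


def route(profile: QueryProfile, context_limit: int = 128_000) -> str:
    """Pick an execution path: 'retrieval' or 'long_context'."""
    if profile.corpus_tokens > context_limit:
        return "retrieval"      # corpus cannot fit in the window at all
    if profile.corpus_is_dynamic and not profile.needs_global_synthesis:
        return "retrieval"      # targeted lookup over changing data
    if profile.needs_global_synthesis:
        return "long_context"   # self-contained corpus, holistic question
    return "retrieval"          # default to the cheaper path


fact_lookup = route(QueryProfile(5_000_000, False, True))
theme_summary = route(QueryProfile(90_000, True, False))
```

Defaulting to retrieval reflects the cost argument made earlier: the long-context path is reserved for cases where holistic reading is actually required and the corpus fits in the window.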

Furthermore, to combat the profound difficulty of multi-document synthesis, researchers are deploying architectures such as Sub-Agent Per Document Retrieval-Augmented Generation. By decomposing the problem along the document axis, the system deploys individual, token-bounded sub-agents to analyze specific documents in isolation 10. These agents subsequently synthesize partial answers through a centralized map-reduce layer, dramatically increasing accuracy for exhaustive synthesis without suffering the noise accumulation inherent to massive single-pass context windows 10.
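The per-document decomposition follows a map-reduce shape: one bounded call per document, then a single aggregation step. In the sketch below both the sub-agent and the reducer are trivial keyword-based stubs standing in for language model calls. All function names and the sample documents are hypothetical.

```python
from typing import Callable, Optional


def map_reduce_answer(
    question: str,
    documents: dict[str, str],
    sub_agent: Callable[[str, str], Optional[str]],
    reducer: Callable[[str, dict[str, str]], str],
) -> str:
    """Map: query each document in isolation. Reduce: merge the partial answers."""
    partials: dict[str, str] = {}
    for doc_id, text in documents.items():
        partial = sub_agent(question, text)  # each call sees only one document
        if partial is not None:
            partials[doc_id] = partial
    return reducer(question, partials)


def make_keyword_agent(keyword: str):
    """Stub sub-agent: returns the first sentence mentioning the keyword."""
    def agent(question: str, text: str) -> Optional[str]:
        for sentence in text.split(". "):
            if keyword.lower() in sentence.lower():
                return sentence.strip().rstrip(".")
        return None
    return agent


def join_reducer(question: str, partials: dict[str, str]) -> str:
    """Stub reducer: concatenates partial answers with their source ids."""
    return "; ".join(f"{doc_id}: {answer}" for doc_id, answer in partials.items())


documents = {
    "report_2023": "Headcount was flat. Revenue was 4.1M.",
    "report_2024": "Revenue was 5.2M. Margins improved.",
    "handbook": "Offices close on public holidays.",
}
answer = map_reduce_answer(
    "How did revenue change?", documents, make_keyword_agent("revenue"), join_reducer
)
```

Because each partial answer stays keyed by its document id, the reduce step can preserve source attribution, and documents with nothing relevant contribute no tokens to the final synthesis.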

Dynamic Context Filtering Mechanisms

To further refine the processing of massive contexts, computational linguists are developing models that natively perform dynamic context pruning at the architectural level. The Context Filtering Language Model utilizes an integrated soft mask mechanism operating within a single forward pass 3656. Instead of relying on an external vector database to retrieve chunks and manually assemble a prompt, this architecture dynamically identifies and masks out irrelevant tokens directly within the massive context window during computation 36.

This innovation bridges the gap between the noise reduction capabilities of standard retrieval and the deep analytical processing of long-context models. By allowing the model to focus its internal attention mechanism solely on pertinent information, it directly mitigates distraction issues, cross-context misattribution, and positional bias 3637.

Future Trajectories in Artificial Intelligence Grounding

Ultimately, the objective of retrieval-augmented generation is not merely to feed an artificial intelligence massive quantities of text, but to meticulously engineer its epistemic environment. Expanding the context window of a language model does not automatically equate to an expansion of its reasoning capacity or factual reliability. Reliable deployment requires acknowledging the inherent friction between parametric training and non-parametric evidence, and treating working context as a finite, highly volatile resource. True artificial intelligence grounding is defined not by how much data a model can computationally ingest, but by how transparently, efficiently, and accurately it can attribute its conclusions to verifiable truth.