Evaluation of Genuine Quantum Advantage in 2024

Introduction and Industry Context

The field of quantum information science is currently navigating a critical and highly scrutinized transition from the Noisy Intermediate-Scale Quantum (NISQ) era toward the foundational stages of fault-tolerant quantum computing (FTQC). For the past decade, the industry has been characterized by profound theoretical promises accompanied by aggressive marketing claims, often conflating incremental physical hardware improvements with paradigm-shifting computational superiority. However, developments spanning the 2024 and 2025 technology cycles demonstrate that physical hardware capabilities and quantum error-correction software have crossed mathematically verifiable thresholds 123. The discipline is moving decisively into a regime where quantum error correction is not merely theoretical but physically beneficial, demonstrating that logical fidelity can surpass the baseline fidelity of constituent physical components 145.

Assessing genuine quantum advantage in this evolving landscape requires separating rigorous scientific milestones from commercial narratives. To achieve this, researchers and institutions are fundamentally reprioritizing the creation of robust, error-corrected "logical qubits" over the mere aggregation of fragile, error-prone "physical qubits" 678. Concurrently, the industry is establishing standardized performance metrics, volumetric benchmarks, and auditing frameworks to evaluate hardware capabilities objectively 91011. These standardization efforts ensure that claims of computational superiority are grounded in reproducible, statistically significant data rather than localized, best-case algorithmic scenarios 1112. This report provides an exhaustive analysis of the current state of quantum advantage, evaluating hardware milestones, advanced benchmarking methodologies, error correction breakthroughs across varying modalities, and the realistic timelines for practical applications in materials science and cryptanalysis.

Standardization of Quantum Terminology

To accurately evaluate claims of quantum computing performance, it is necessary to establish a precise and universally accepted taxonomy. The historical lack of consensus surrounding quantum terminology has facilitated a phenomenon known in the industry as "quantum washing." This practice involves utilizing quantum terminology to market products that fundamentally rely on classical computing heuristics, thereby obscuring the actual technological mechanisms and exploiting a general lack of interdisciplinary literacy among investors and policymakers 1314.

Major standards organizations, including the Institute of Electrical and Electronics Engineers (IEEE) and the National Institute of Standards and Technology (NIST), are actively codifying definitions to ensure compatibility and interoperability across the global quantum ecosystem 151617. For example, IEEE P7130 standardizes specific terminology and definitions, while IEEE P7131 focuses on quantum computing performance metrics 1819. Through these frameworks, the industry widely recognizes three distinct tiers of quantum performance that chart the path from theoretical demonstration to industrial utility.

Computational Advantage and Supremacy

Quantum Supremacy, frequently referred to as Computational Advantage, designates a strict mathematical threshold where a quantum computer efficiently performs a highly specific task that is functionally intractable for any classical supercomputer 1721. The chosen task does not need to possess real-world utility; its sole purpose is to demonstrate the fundamental, unsimulable superiority of quantum hardware. A standard example utilized by leading laboratories is random circuit sampling (RCS), which tasks the quantum processor with sampling outputs from a highly entangled, pseudo-random quantum circuit 2120. Because the classical simulation of such circuits scales exponentially - specifically $\mathcal{O}(2^n)$ - with the number of qubits, sufficiently large RCS tasks rapidly outpace the memory and processing capabilities of exascale supercomputers 2120.
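To make the $\mathcal{O}(2^n)$ scaling concrete, the following minimal sketch estimates the memory needed to hold a dense state vector. It assumes 16 bytes per complex amplitude (double precision); the function names are illustrative, and tensor-network simulators trade memory against time differently, so this is only a rough guide to the brute-force cost.

```python
BYTES_PER_AMPLITUDE = 16  # assumption: one double-precision complex number per amplitude


def statevector_bytes(n_qubits: int) -> int:
    """Bytes needed to store all 2**n amplitudes of an n-qubit state."""
    return BYTES_PER_AMPLITUDE * 2 ** n_qubits


def human_readable(num_bytes: int) -> str:
    """Format a byte count using binary prefixes."""
    units = ["B", "KiB", "MiB", "GiB", "TiB", "PiB", "EiB", "ZiB", "YiB"]
    value = float(num_bytes)
    for unit in units:
        if value < 1024 or unit == units[-1]:
            return f"{value:.1f} {unit}"
        value /= 1024


# Each added qubit doubles the requirement.
for n in (30, 53, 105):
    print(f"{n} qubits: {human_readable(statevector_bytes(n))}")
```

Thirty qubits already need 16 GiB under this assumption, and every additional qubit doubles that figure.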

Quantum Utility

Quantum Utility represents a subsequent developmental milestone wherein quantum computers can solve a highly complex, structured computational problem more efficiently than classical brute-force methods. This definition was notably introduced in association with the simulation of quantum spin systems 17. Unlike supremacy experiments, tasks in the utility regime are scientifically relevant, though classical approximations, heuristic models, or advanced tensor network simulations might still compete with or occasionally outperform the quantum hardware depending on the exact parameters 317. Demonstrating quantum utility signifies the capability of running hybrid quantum-classical algorithms on advanced NISQ or early fault-tolerant devices to explore complex physical systems 3.

Practical Quantum Advantage

Practical Quantum Advantage is the ultimate industrial objective. It is achieved only when a quantum computer solves a problem with direct, real-world applications significantly faster or more accurately than the most advanced classical methods available 1721. Applications in this tier include large-scale catalyst simulation for clean energy, complex portfolio optimization in finance, optimal routing in global logistics, and cryptanalysis 1721. Reaching this tier would establish quantum computing as a commercially competitive technology and drive widespread adoption, though experts project that doing so requires fully error-corrected systems operating thousands of logical qubits 321.

Addressing Fundamental Misconceptions

Clarifying these operational definitions also requires dispelling pervasive myths regarding quantum mechanics. A primary misconception is that quantum computers operate by "trying all answers simultaneously." While qubits can exist in a superposition of multiple states, quantum algorithms do not simply evaluate all paths at once to find a solution. Instead, they utilize the principle of quantum interference to systematically amplify the probability amplitudes of correct answers while canceling out the amplitudes of incorrect ones - a highly nuanced mathematical process distinct from parallel classical processing 22. Furthermore, quantum systems operate entirely within the established laws of quantum mechanics; they do not violate causality, enable faster-than-light communication, or prove the existence of macroscopic multiverses, despite dramatic marketing claims occasionally suggesting otherwise 202223.
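The role of interference can be illustrated with a small classical simulation of amplitude amplification in the style of Grover's search. This is a sketch under simplifying assumptions: amplitudes are tracked as a plain list of real numbers, the search space has 8 items, and the marked index is chosen arbitrarily. The function name is hypothetical.

```python
import math


def grover_probabilities(n_items, marked, iterations):
    """Track amplitudes through repeated oracle + diffusion steps."""
    amp = [1 / math.sqrt(n_items)] * n_items  # uniform superposition
    for _ in range(iterations):
        amp[marked] = -amp[marked]           # oracle: flip the sign of the marked amplitude
        mean = sum(amp) / n_items
        amp = [2 * mean - a for a in amp]    # diffusion: reflect every amplitude about the mean
    return [a * a for a in amp]              # measurement probabilities


n_items = 8
iterations = math.floor(math.pi / 4 * math.sqrt(n_items))
probs = grover_probabilities(n_items, marked=5, iterations=iterations)
print(f"iterations: {iterations}, P(marked) = {probs[5]:.3f}")
```

The probability of the marked item grows because the sign flip and the reflection make its amplitude add constructively while the others partially cancel; no step evaluates all candidates and reads them out at once.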

Hardware Modalities: Physical Versus Logical Qubits

The foundational unit of quantum computation is the physical qubit, which represents the tangible hardware realization of a quantum state. Depending on the underlying technology, a physical qubit may be constructed from a superconducting circuit (transmon), a trapped atomic ion, a neutral atom manipulated by optical tweezers, or an electron spin trapped in a silicon quantum dot 6726. While these physical qubits can encode a superposition of binary states, they are inherently fragile. Microscopic environmental perturbations, stray electromagnetic noise, thermal fluctuations, and minute control errors induce rapid decoherence 7. Consequently, even state-of-the-art physical qubits experience operational error rates between 0.1% and 1%, which is orders of magnitude too high to reliably execute algorithms requiring billions of sequential logic gates 7.

To circumvent this fragility, researchers developed the concept of the logical qubit. A logical qubit is an abstract, highly stable quantum bit created by encoding quantum information across an ensemble of many entangled physical qubits 78. This encoding functions similarly to classical redundancy, though quantum error correction (QEC) is significantly more complex due to the inability to clone unknown quantum states (the no-cloning theorem) and the fact that direct measurement destroys quantum superposition 72425.

Instead of direct measurement, QEC relies on specialized "ancilla" or "measure" qubits interspersed among the data-bearing qubits. These ancilla qubits continuously execute syndrome measurements, gathering parity information about the system's errors without measuring or collapsing the underlying logical data state 1826. If an error such as a bit-flip or phase-flip is detected, classical control systems compute the necessary correction and apply it dynamically 1. The success of this architecture hinges on the "error threshold theorem," which mathematically proves that if the raw physical error rates fall below a certain critical percentage (often cited as ~1% or tighter, depending on the specific code), increasing the number of physical qubits in the logical encoding will exponentially suppress the residual logical error 458.
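The idea of extracting parity information without reading the data can be sketched with the classical analogue of the three-qubit bit-flip code. This is a deliberate simplification: it handles only bit-flips on classical bits, whereas a real quantum code must also handle phase-flips and measures parities through ancilla qubits. All names below are illustrative.

```python
def encode(bit):
    """Repeat one logical bit across three physical bits."""
    return [bit] * 3


def syndrome(bits):
    """Parity of neighbouring pairs; identical for logical 0 and logical 1."""
    return (bits[0] ^ bits[1], bits[1] ^ bits[2])


# Syndrome -> index of the bit to flip back (None means no error detected).
CORRECTION = {(0, 0): None, (1, 0): 0, (1, 1): 1, (0, 1): 2}


def correct(bits):
    """Apply the correction implied by the syndrome."""
    fixed = list(bits)
    target = CORRECTION[syndrome(bits)]
    if target is not None:
        fixed[target] ^= 1
    return fixed


for logical in (0, 1):
    for position in range(3):
        word = encode(logical)
        word[position] ^= 1  # inject a single bit-flip
        print(logical, position, syndrome(word), correct(word))
```

The syndrome depends only on where the flip occurred, not on whether the encoded value is 0 or 1, which is the property that lets the quantum version locate errors without collapsing the logical state.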

Advancements in Quantum Error Correction

The defining scientific achievement of late 2023 and 2024 was the empirical validation of quantum error correction operating genuinely "below the threshold." Historically, aggregating multiple physical qubits to form a logical qubit introduced more operational noise than it suppressed, owing to the complexity and high error rates of the entangling operations required for syndrome extraction 14. Crossing this break-even point is a prerequisite for fault-tolerant computing and demonstrates that scaling up error-corrected hardware is physically viable 4527.

Surface Code Implementations and Exponential Suppression

Superconducting architectures have predominantly relied on the surface code, a topological error-correcting scheme that maps qubits onto a two-dimensional checkerboard lattice 2832. In December 2024, Google Quantum AI published definitive results in the journal Nature detailing the performance of their 105-qubit "Willow" processor. Willow achieved a below-threshold milestone by demonstrating exponential error suppression across varying code distances 429.

When the Google researchers scaled the encoded logical qubit from a distance-3 ($3 \times 3$ grid) to a distance-5 ($5 \times 5$ grid), and ultimately to a distance-7 ($7 \times 7$ grid) surface code, the encoded error rate decreased by a factor of $\Lambda = 2.14 \pm 0.02$ at each successive step 42734. This exponential scaling resulted in a logical error probability of approximately $0.143\% \pm 0.003\%$ per cycle 34. This outcome culminated in a logical qubit whose lifetime exceeded that of its best constituent physical qubit by a factor of 2.4, unambiguously demonstrating the capacity of an error-corrected entity to outperform its underlying components 42734. To achieve this, the Willow processor underwent significant material and architectural optimizations, pushing average physical energy-relaxation times ($T_1$) to $68 \mu s$, a dramatic enhancement over the $20 \mu s$ lifetimes of Google's previous Sycamore architecture 428. A detailed error budget analysis of the Willow system indicated that incoherent errors dominated the system (accounting for 80% of errors), with coherent errors and leakage each contributing roughly 10% 34.
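The suppression factor can be turned into a rough projection. The sketch below assumes that the quoted 0.143% per-cycle error applies to the distance-7 code, that $\Lambda$ stays constant at larger distances (an extrapolation, not a measured result), and that a distance-$d$ surface code patch uses $2d^2 - 1$ physical qubits ($d^2$ data plus $d^2 - 1$ measure qubits). Function names are illustrative.

```python
LAMBDA = 2.14      # suppression factor per step of two in code distance (from the text)
EPS_D7 = 0.143e-2  # logical error per cycle, assumed to be the distance-7 value


def projected_error(distance, lam=LAMBDA, eps_ref=EPS_D7, d_ref=7):
    """Extrapolate the logical error per cycle, dividing by lam for each d -> d + 2 step."""
    steps = (distance - d_ref) / 2
    return eps_ref / lam ** steps


def distance_for_target(target, d_ref=7):
    """Smallest odd distance whose projected error is at or below the target."""
    d = d_ref
    while projected_error(d) > target:
        d += 2
    return d


def surface_code_qubits(distance):
    """Physical qubits in one surface code patch: d*d data + (d*d - 1) measure."""
    return 2 * distance * distance - 1


d = distance_for_target(1e-6)
print(f"distance {d}, about {surface_code_qubits(d)} physical qubits, "
      f"projected error {projected_error(d):.2e} per cycle")
```

Under these assumptions the projection lands in the low thousands of physical qubits per logical qubit for a $10^{-6}$ error target, which illustrates why the overhead of surface codes is a central concern.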

Concurrently, researchers at the University of Science and Technology of China (USTC) published independent findings on their 107-qubit Zuchongzhi 3.2 processor. Utilizing a distance-7 surface code, the USTC team successfully achieved an error suppression factor of $\Lambda = 1.40 \pm 0.06$ 2732. While measurably weaker than Google's suppression factor, any $\Lambda$ value strictly above 1.0 mathematically confirms operation below the fault-tolerance threshold, providing vital independent validation that superconducting surface codes represent a viable, scalable path to logical stability 27.

Trapped-Ion Fidelity and Active Syndrome Extraction

In April and September of 2024, a collaboration between Microsoft and Quantinuum demonstrated a highly efficient alternative pathway to logical qubit generation using trapped-ion technology. Applying Microsoft's advanced qubit-virtualization and error-diagnostics software to Quantinuum's H2 processor, the team leveraged the inherent all-to-all connectivity and exceptionally high fidelities of trapped ions 1. The H2 processor features 56 physical qubits boasting a two-qubit gate fidelity of 99.8% 2.

Using this platform, the researchers successfully encoded 12 highly reliable logical qubits 30. When these 12 logical qubits were entangled into a Greenberger-Horne-Zeilinger (GHZ) state, they exhibited a circuit error rate of $0.0011$, a roughly 22-fold improvement over the corresponding physical qubits' circuit error rate of $0.024$. Earlier trials using just four logical qubits reported error rates up to 800 times lower than physical baselines 1224.

Crucially, the system executed over 14,000 individual experimental routines without a single uncorrected error 12. Microsoft and Quantinuum successfully performed five rounds of repeated, active syndrome extraction on eight logical qubits during a fault-tolerant computation 1. This represented the first major demonstration of diagnosing and correcting errors dynamically on logical qubits without destroying the encoded quantum information, cementing the transition into what Microsoft categorizes as "Level 2 Resilient" quantum computing 12.

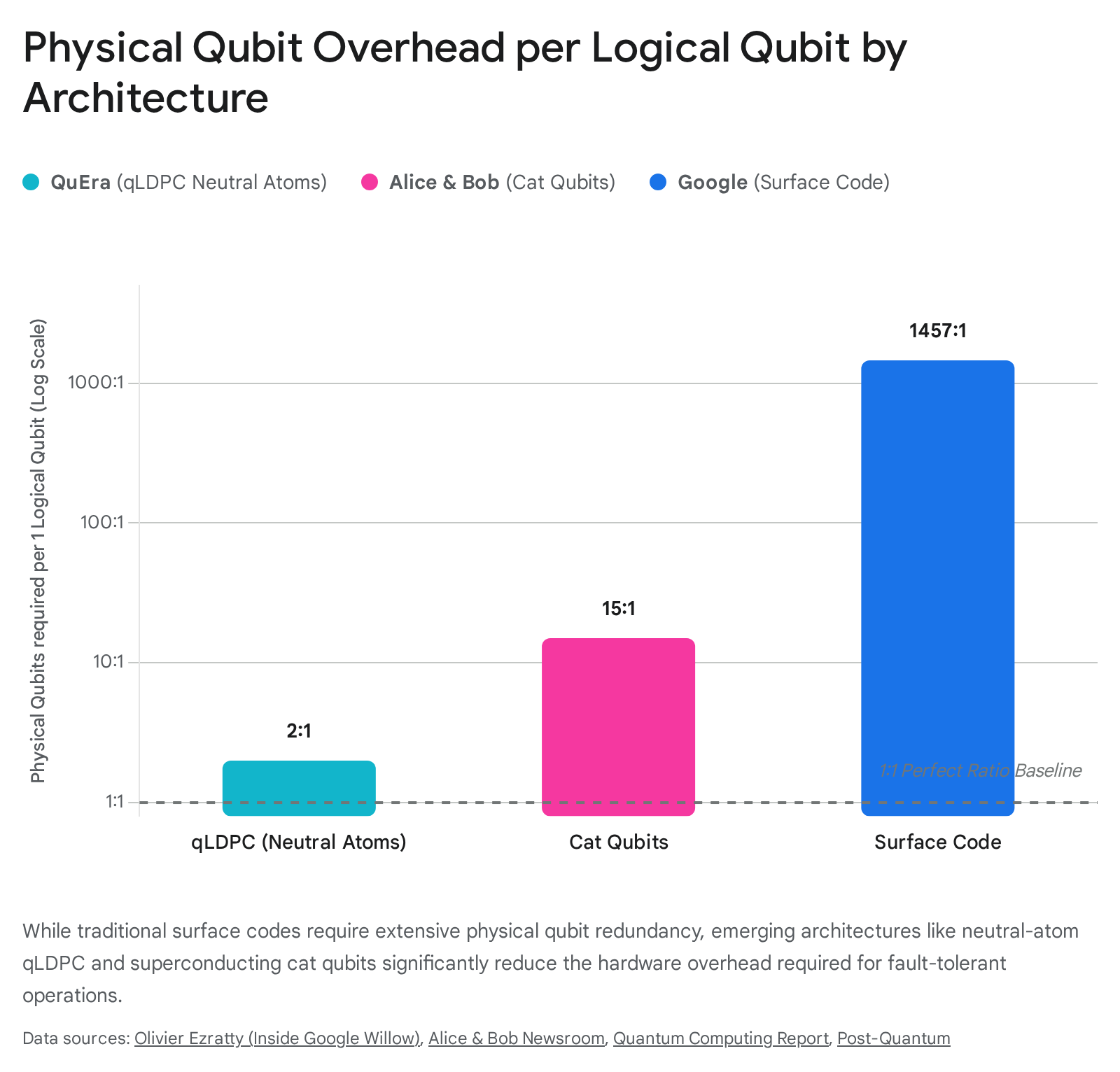

Quantum Low-Density Parity-Check (qLDPC) Architectures

While surface codes have been experimentally validated, they carry a severe, often prohibitive resource overhead. Projections indicate that utilizing surface codes to run industrially relevant algorithms may require upwards of 1,000 to 3,700 physical qubits to sustain a single logical qubit with cryptographically relevant error rates (e.g., $10^{-6}$ to $10^{-10}$) 82431. To mitigate this massive hardware demand, the industry is intensely researching Quantum Low-Density Parity-Check (qLDPC) codes, which theoretically allow for dramatically higher encoding rates and reduced physical overhead.

A prominent demonstration of qLDPC efficiency occurred in late 2023 and early 2024 through a collaboration among QuEra Computing, Harvard University, MIT, and NIST. Utilizing highly reconfigurable neutral-atom hardware, the researchers successfully executed large-scale algorithms on 48 logical qubits 263233. By aligning the complex qLDPC code structure with the hardware's unique capacity to physically move qubits in parallel using Acousto-Optic Deflectors (AODs), the team enabled syndrome extraction in constant time 34.

This implementation achieved an encoding rate exceeding 0.5 (a roughly 2:1 physical-to-logical ratio) by utilizing non-commuting affine permutation matrices developed by Kasai 34. The study verified high-rate code instances through circuit-level noise simulations, demonstrating the encoding of 580 logical qubits into 1,152 physical qubits (protecting against up to 5 errors), and 1,156 logical qubits into 2,304 physical qubits (protecting against up to 6 errors) 34. Simulations operating at a physical error rate of $0.1\%$ indicated that this architecture could reach the "Teraquop" regime - achieving one error per trillion operations ($1.3 \times 10^{-13}$) - drastically lowering the estimated physical hardware scale required for fault-tolerant computation 34.

Cat Qubits and Hardware-Efficient Codes

Another methodology aimed at reducing overhead is the deployment of "cat qubits." Promoted prominently by hardware developer Alice & Bob, cat qubits utilize specific states of microwave photons within superconducting resonators that are intrinsically protected against bit-flip errors due to their underlying physics 353637. Because bit-flips are actively suppressed by the hardware, the error correction software only needs to correct phase-flip errors.

This reduction simplifies the error correction challenge from a massive two-dimensional surface code grid to a one-dimensional linear repetition code 3538. In 2024, Alice & Bob launched the Boson 4 chip, achieving a world record for bit-flip protection among superconducting qubits by extending the lifetime to over 7 minutes 3638. By combining these highly biased noise profiles with LDPC codes, Alice & Bob projects the ability to operate 100 high-fidelity logical qubits (with an error rate of $10^{-8}$) utilizing merely 1,500 physical cat qubits, targeting the delivery of a universal fault-tolerant machine by 2030 3637. Similarly, IBM has published research on a highly efficient qLDPC architecture dubbed the "gross code," which requires just 288 physical qubits to encode 12 logical qubits while still supporting roughly a million cycles of error checks 24.
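The overhead figures quoted in this and the preceding subsections can be placed side by side with a few lines of arithmetic. The sketch below uses only the physical and logical qubit counts stated in the text; the dictionary labels are informal, and the schemes target different logical error rates, so the ratios are not a like-for-like ranking.

```python
# (physical qubits, logical qubits) as quoted in the text
SCHEMES = {
    "qLDPC, 580 logical (simulated)": (1152, 580),
    "qLDPC, 1,156 logical (simulated)": (2304, 1156),
    "Cat qubits + LDPC (projected)": (1500, 100),
    "IBM gross code": (288, 12),
}


def overhead(physical, logical):
    """Physical qubits consumed per logical qubit."""
    return physical / logical


def encoding_rate(physical, logical):
    """Logical qubits obtained per physical qubit."""
    return logical / physical


for name, (physical, logical) in SCHEMES.items():
    print(f"{name}: {overhead(physical, logical):.1f} physical per logical, "
          f"rate {encoding_rate(physical, logical):.3f}")
```

For comparison, the surface code projections cited earlier correspond to roughly 1,000 to 3,700 physical qubits per logical qubit.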

Hardware Platform Milestones (2024-2025)

The pursuit of Practical Quantum Advantage is defined by a rigorous competition among distinct physical modalities. In addition to the leading results from Google, USTC, Quantinuum, and QuEra, the broader industry achieved significant momentum through 2024 and 2025.

IBM maintained its position at the forefront of superconducting quantum computing by focusing on scale and speed via its heavy-hexagon lattice architecture, which aims to minimize the persistent issue of signal crosstalk 4539. The 156-qubit IBM Heron R2 processor boasts tunable-coupler transmon qubits with two-qubit gate errors reliably near $0.3\%$ 2839. In 2024, IBM demonstrated the execution of a highly complex circuit comprising 5,000 two-qubit gates at speeds approximately 50 times faster than previous benchmarks. This verified that the Heron architecture has entered a complexity regime (operating around 100 qubits with circuit depths of 50 - 100) that stretches the absolute limits of exact classical simulation capabilities 2845.

Beyond the dominant corporate laboratories, European initiatives are heavily investing to drive sovereign quantum capabilities and commercialize regional hardware. IQM Quantum Computers, a leading European superconducting hardware startup, delivered a state-of-the-art 50-qubit system (the "Radiance" model) to Finland's VTT research center, achieving an impressive 99.9% fidelity on two-qubit gates 454041. Operating with customized "Crystal" grid and "Star" resonator hub topologies, IQM's strategy tightly integrates on-premises quantum hardware with existing High-Performance Computing (HPC) infrastructures 4042. Their accelerated roadmap outlines the delivery of a 150-qubit system to the Euro-Q-Exa program in 2026, followed by a dedicated 300-qubit system optimized for error-correction routing in 2027 4042.

Simultaneously, the European OpenSuperQPlus project (funded by the European Flagship on Quantum Technologies) is advancing pre-competitive, open-access quantum architecture. By standardizing control electronics, cryogenic cabling, and operating systems across diverse institutions, the initiative aims to deploy a publicly available 100-qubit processor capable of robust error correction by late 2026, fostering a unified ecosystem for transparent benchmarking and collaborative software development 434445.

Summary of Major Hardware Platforms (2024-2025)

The following table summarizes the key metrics, architectural modalities, and primary achievements of the leading quantum hardware platforms over the 2024-2025 technology cycle.

| Manufacturer / Processor | Modality | Qubit Count | Key Achievement (2024-2025) | Error Correction Strategy |
|---|---|---|---|---|
| Google Willow | Superconducting | 105 physical | Below-threshold QEC ($\Lambda=2.14$); RCS benchmark estimated to exceed the age of the universe in classical simulation time. | Distance-7 Surface Code 4 |
| USTC Zuchongzhi 3.2 | Superconducting | 107 physical | Below-threshold QEC ($\Lambda=1.40$); demonstrated 72 $\mu s$ coherence times. | Distance-7 Surface Code 2732 |
| Quantinuum H2 | Trapped Ions | 56 physical | Created 12 highly stable logical qubits; achieved 0.0011 logical circuit error rate. | Active Syndrome Extraction 1 |
| QuEra (Harvard/MIT) | Neutral Atoms | >280 physical | Entangled 48 logical qubits; executed complex algorithms directly on logical qubits. | qLDPC / Affine Permutations 3234 |
| IBM Heron R2 | Superconducting | 156 physical | Executed 5,000-gate circuit; proved heavy-hexagon lattice operational reliability. | Error Mitigation / qLDPC 2845 |
| Alice & Bob Boson 4 | Superconducting | N/A (Cat) | Demonstrated 7-minute bit-flip protection, effectively eliminating one dimension of error. | 1D Repetition / LDPC on Cat Qubits 3637 |
| IQM Radiance | Superconducting | 50 physical | Deep HPC integration; achieved 99.9% 2-qubit gate fidelity. | Crystal/Star Grid Topologies 4540 |

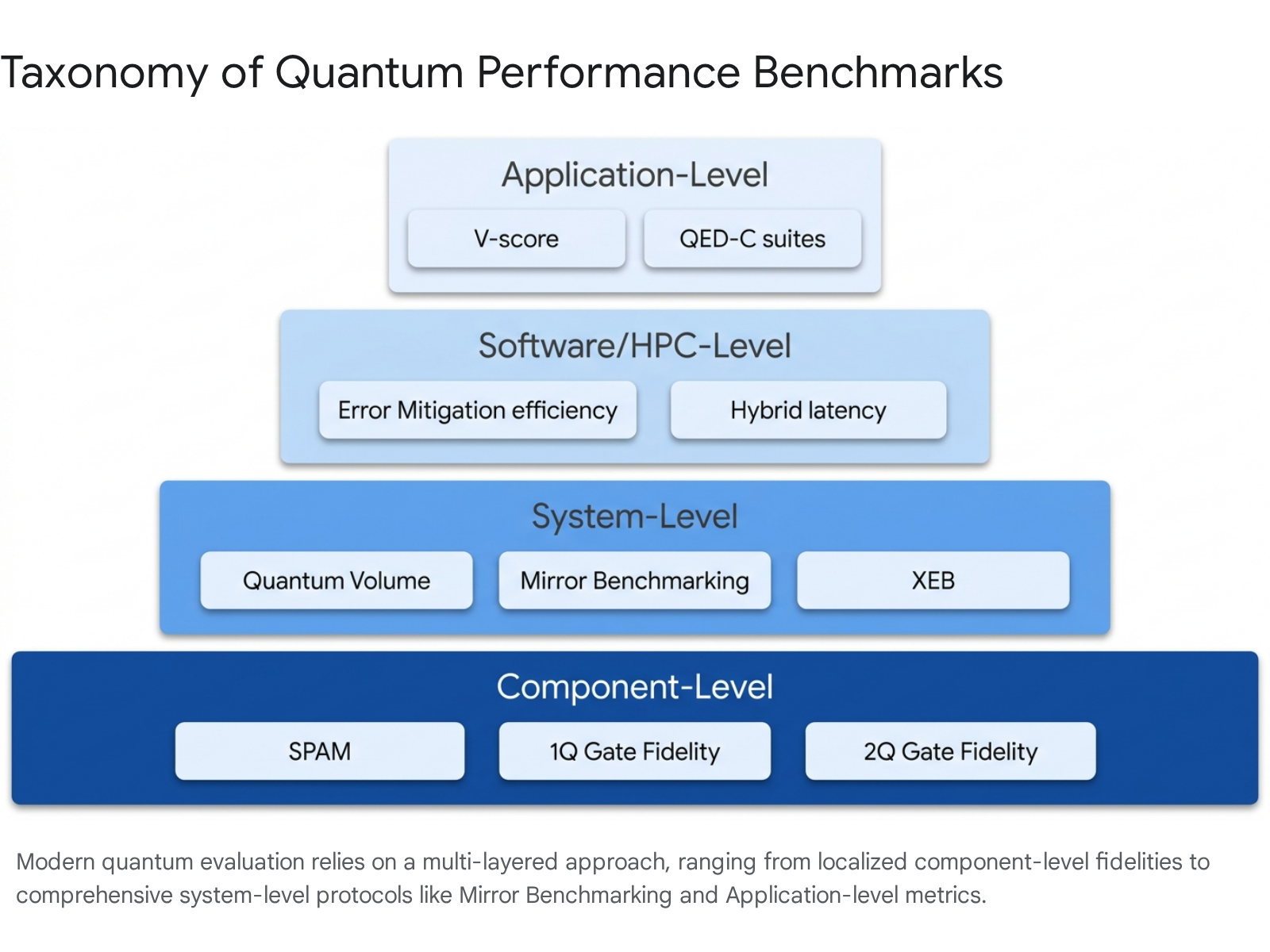

Performance Benchmarking and Verification

As quantum hardware progresses from isolated laboratory demonstrations into the domain of utility-scale hybrid computation, the metrics used to evaluate its performance must also evolve. Early NISQ devices relied heavily on simple component-level benchmarks, such as state-preparation and measurement (SPAM) tests and single-qubit randomized benchmarking (RB), alongside volumetric metrics like Quantum Volume (QV) 95346. While Quantum Volume effectively gauges the largest "square" circuit (equal width and depth) a device can execute successfully, modern systems require holistic evaluations capable of capturing crosstalk, idle memory errors, and systemic coherence limits across deep, varied topologies 953.

Cross-Entropy Benchmarking vs. Mirror Benchmarking

The demonstration of Quantum Supremacy via Random Circuit Sampling (RCS) relies fundamentally on Cross-Entropy Benchmarking (XEB). XEB evaluates the operational fidelity of a highly entangled, random quantum circuit by directly comparing the measured output probability distribution against an ideal distribution calculated by a classical simulator 4655. The critical limitation of XEB is that it requires exact classical simulation of the entire quantum state vector; once a quantum computer surpasses the classical simulation horizon (e.g., executing high-depth circuits on Google's 105-qubit Willow chip), XEB can no longer be directly calculated because the classical verification becomes computationally impossible 4647.
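A common way to reduce this comparison to a single number is the linear XEB estimator, $F = 2^n \langle P(x_i) \rangle - 1$, where $P(x_i)$ is the ideal probability of each measured bitstring. The sketch below is a toy illustration under stated assumptions: a random complex Gaussian vector stands in for the output state of a deep random circuit, a "perfect" device samples from the ideal distribution, and a fully noisy device samples uniformly. All names are hypothetical.

```python
import random


def random_state_probs(n_qubits, rng):
    """Ideal output probabilities of a random state (stand-in for a deep random circuit)."""
    amps = [complex(rng.gauss(0, 1), rng.gauss(0, 1)) for _ in range(2 ** n_qubits)]
    norm = sum(abs(a) ** 2 for a in amps)
    return [abs(a) ** 2 / norm for a in amps]


def linear_xeb(ideal_probs, samples):
    """Linear cross-entropy fidelity estimate: dim * mean ideal probability of samples - 1."""
    dim = len(ideal_probs)
    mean_p = sum(ideal_probs[x] for x in samples) / len(samples)
    return dim * mean_p - 1.0


rng = random.Random(0)
probs = random_state_probs(12, rng)  # the classical step whose cost grows as 2**n
outcomes = range(len(probs))

ideal_samples = rng.choices(outcomes, weights=probs, k=20000)  # noiseless device
noise_samples = [rng.randrange(len(probs)) for _ in range(20000)]  # fully depolarized device

f_ideal = linear_xeb(probs, ideal_samples)
f_noise = linear_xeb(probs, noise_samples)
print(f"XEB (ideal sampling):   {f_ideal:.3f}")
print(f"XEB (uniform sampling): {f_noise:.3f}")
```

The estimator sits near 1 for faithful sampling and near 0 for pure noise, but computing `probs` is exactly the step that becomes infeasible beyond the classical simulation horizon.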

To address this verification bottleneck, the industry is increasingly utilizing Mirror Benchmarking. This sophisticated technique involves running a random quantum circuit forward for a set depth, and then appending and running the exact algorithmic inverse ("mirror") of that circuit, incorporating randomized compiling to prevent accidental coherent error cancellation 48. The theoretical output of a perfectly executed mirrored circuit is strictly deterministic - it must return the qubits to their initial starting state. This deterministic outcome allows researchers to physically measure the success probability and directly infer the systemic circuit fidelity without requiring exponential classical simulation resources 464849. While volumetric benchmarks focus rigidly on algorithmic width and depth, mirror benchmarks directly capture the compounded impact of complex systemic errors that localized component testing frequently misses 50.
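The mirror construction can be sketched with a small pure-Python state-vector simulation. This is a toy model under stated assumptions: three qubits, layers of random single-qubit gates followed by nearest-neighbour CZ gates, and a simple noise model that applies a bit-flip after a gate with probability `p_err`. It omits randomized compiling, and every function name is illustrative rather than part of any benchmarking library.

```python
import cmath
import math
import random

X_GATE = [[0j, 1 + 0j], [1 + 0j, 0j]]


def random_unitary(rng):
    """Random single-qubit gate in the standard U3(theta, phi, lam) form."""
    theta, phi, lam = (rng.uniform(0, 2 * math.pi) for _ in range(3))
    c, s = math.cos(theta / 2), math.sin(theta / 2)
    return [[complex(c), -cmath.exp(1j * lam) * s],
            [cmath.exp(1j * phi) * s, cmath.exp(1j * (phi + lam)) * c]]


def dagger(u):
    """Conjugate transpose of a 2x2 matrix."""
    return [[u[0][0].conjugate(), u[1][0].conjugate()],
            [u[0][1].conjugate(), u[1][1].conjugate()]]


def apply_1q(state, u, q):
    """Apply a 2x2 unitary to qubit q of the state vector."""
    out = state[:]
    bit = 1 << q
    for i in range(len(state)):
        if not i & bit:
            a, b = state[i], state[i | bit]
            out[i] = u[0][0] * a + u[0][1] * b
            out[i | bit] = u[1][0] * a + u[1][1] * b
    return out


def apply_cz(state, q1, q2):
    """Controlled-Z: negate amplitudes where both qubits are 1."""
    mask = (1 << q1) | (1 << q2)
    return [-a if (i & mask) == mask else a for i, a in enumerate(state)]


def random_layers(n, depth, rng):
    """Forward circuit: random single-qubit gates, then a chain of CZ gates, per layer."""
    ops = []
    for _ in range(depth):
        ops += [("u", q, random_unitary(rng)) for q in range(n)]
        ops += [("cz", q, q + 1) for q in range(n - 1)]
    return ops


def mirror(ops):
    """Append the exact inverse: reversed order, each single-qubit gate daggered (CZ is self-inverse)."""
    inverse = [(k, a, dagger(b)) if k == "u" else (k, a, b) for k, a, b in reversed(ops)]
    return ops + inverse


def survival_probability(n, depth, p_err, trials, seed=0):
    """Average probability of returning to |0...0> after a noisy mirrored circuit."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(trials):
        state = [1 + 0j] + [0j] * (2 ** n - 1)
        for kind, a, b in mirror(random_layers(n, depth, rng)):
            state = apply_1q(state, b, a) if kind == "u" else apply_cz(state, a, b)
            if rng.random() < p_err:  # toy noise: bit-flip on the gate's first qubit
                state = apply_1q(state, X_GATE, a)
        total += abs(state[0]) ** 2
    return total / trials


print("noiseless:", survival_probability(3, 5, 0.0, trials=5))
print("noisy:    ", survival_probability(3, 5, 0.05, trials=50))
```

On real hardware the success probability is estimated from measured bitstrings rather than read from a simulated state vector; the sketch only shows why the ideal answer is known in advance without an exponentially expensive reference calculation.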

Standardization Initiatives and Application Suites

To prevent ecosystem fragmentation and mitigate the bias inherent in self-reported metrics, international consortia are establishing universal testing suites. The Quantum Economic Development Consortium (QED-C) provides a comprehensive, application-oriented benchmark suite. Rather than testing random gates, this suite tests devices across a variety of historically significant algorithms, including Deutsch-Jozsa, Grover's Search, Variational Quantum Eigensolver (VQE), Quantum Fourier Transform (QFT), and the HHL algorithm for linear systems 53515253. By sweeping over a range of problem sizes and input variables, the QED-C suite captures key performance metrics covering the accuracy of the results, the total execution time, and the volume of quantum gate resources consumed, establishing a fair comparison methodology for disparate hardware designs 535152.

Furthermore, regulatory and standardization bodies are formalizing these practices. The IEEE is currently drafting the P7131 Standard for Quantum Computing Performance Metrics & Performance Benchmarking 91619. This standard aims to formalize the strict evaluation routines for both quantum hardware and software stacks, ensuring reproducible comparisons across varying topologies (e.g., comparing restricted-connectivity superconducting chips directly against fully-connected trapped-ion processors) and formally benchmarking hybrid quantum algorithms against classical high-performance compute clusters 9161819. These efforts are supported by similar guidelines such as IEEE P7130, which standardizes baseline quantum definitions to facilitate clear industry understanding 1618.

Classical Simulation Boundaries and the Supremacy Debate

Claims of quantum supremacy inherently rely on rigorous estimates of classical computational intractability. When Google announced that the exact classical simulation of the Willow processor's RCS benchmark would take a supercomputer $10^{25}$ years, it predictably sparked intense academic debate regarding the rigidity and permanence of such claims 232947. Advanced tensor network contraction algorithms and the sheer scale of modern exascale supercomputing clusters have repeatedly lowered the estimated time required for classical simulation in the past. For instance, a 10,000-year simulation claim associated with Google's 53-qubit Sycamore chip in 2019 was famously reduced to a mere 14 seconds utilizing highly optimized classical algorithms running on 1,400 GPUs by USTC researchers in 2023 39.

However, there is an academic consensus that while classical heuristics can aggressively approximate small-scale or highly noisy quantum runs, they fundamentally falter as the quantum state-space expands exponentially under high-fidelity operations. Exact state-vector simulation scales as $\mathcal{O}(2^n)$ in the number of qubits $n$; therefore, as quantum devices surpass 100 physical qubits operating with two-qubit gate fidelities exceeding 99.5%, they occupy a Hilbert space that is beyond the reach of any exact classical simulation 212047.

Regarding the specific $10^{25}$ years figure cited for Google's Willow, prominent complexity theorists note a crucial caveat: this astronomical timeline assumes that classical memory is a strict limiting factor 47. If memory constraints were theoretically removed, optimized tensor network algorithms executing on exascale clusters would still require approximately 300 million years to simulate the Willow circuit. That remains profoundly prohibitive and supports the claim of a genuine computational separation under current algorithmic knowledge 47.

Timelines for Practical Applications

The ultimate justification for the immense capital expenditure in quantum computing is the technology's potential to revolutionize specific scientific and mathematical domains. The transition from supremacy demonstrations to practical utility is highly domain-dependent.

Materials Science and Quantum Chemistry

Quantum chemistry simulations are universally considered the most natural application for quantum hardware. Because molecules are governed by quantum mechanical interactions, simulating them directly on a quantum processor bypasses the exponential overhead required to map quantum states into classical binary structures 5455. Despite significant advancements in classical algorithmic approximations (such as Density Functional Theory), classical solvers face exponential barriers when dealing with strongly correlated and dynamical many-body problems 55.

Recent detailed resource estimates indicate that simulating moderately multireference systems with localized correlations (such as photochemical chromophores or iron-sulfur $\mathrm{Fe_2S_2}$ clusters) will require an early fault-tolerant device possessing between 25 and 100 highly reliable logical qubits, alongside shot budgets in the millions 5556. Achieving strict chemical accuracy requires executing complex algorithms like Quantum Phase Estimation (QPE) or Trotterized Hamiltonian simulation. Depending on the chosen basis set, QPE implementations demand significant computational overhead, with logical "T-gate" counts and magic state distillation severely dictating the ultimate algorithmic depth 545758.

To rigorously identify the exact inflection point where quantum methods will definitively outperform classical techniques, researchers introduced the V-score benchmark in October 2024. Developed collaboratively by researchers at EPFL and the Flatiron Institute, and published in the journal Science, the V-score evaluates how accurately different quantum and classical algorithms approximate the lowest energy ground state of complex quantum many-body problems 59. Currently, highly optimized implementations of classical algorithms still outperform their early quantum counterparts on many tasks. However, the V-score provides a mathematically grounded, application-level target for establishing definitive Practical Quantum Advantage in materials science as fault-tolerant hardware matures over the coming decade 59.

Cryptanalysis and Post-Quantum Security Standards

The systemic threat that quantum computers pose to modern digital infrastructure, specifically asymmetric encryption algorithms like RSA and Elliptic Curve Cryptography (ECC), is well documented and driving immediate regulatory action 32160. Using Peter Shor's algorithm, a cryptographically relevant quantum computer (CRQC) could factor large composite integers (such as the product of two large primes used in RSA) and compute discrete logarithms in polynomial time, a task for which the best known classical algorithms require super-polynomial time, rendering current internet encryption protocols obsolete 3216061.
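The structure of Shor's algorithm can be illustrated with its classical post-processing, which turns the multiplicative order of a number modulo $N$ into factors of $N$. In the sketch below the order is found by brute force, which is the step a quantum computer would replace with period finding; the function names and the toy inputs ($N = 15$, base 7) are illustrative assumptions.

```python
from math import gcd


def classical_order(a, n):
    """Smallest r > 0 with a**r % n == 1, by brute force (the quantum step replaces this)."""
    r, x = 1, a % n
    while x != 1:
        x = (x * a) % n
        r += 1
    return r


def factor_from_order(a, n):
    """Try to split n using the order of a modulo n; returns None if this base fails."""
    shared = gcd(a, n)
    if shared != 1:  # lucky guess: a already shares a factor with n
        return tuple(sorted((shared, n // shared)))
    r = classical_order(a, n)
    if r % 2 == 1:
        return None  # odd order: retry with another base
    y = pow(a, r // 2, n)
    if y == n - 1:
        return None  # trivial square root of 1: retry with another base
    return tuple(sorted((gcd(y - 1, n), gcd(y + 1, n))))


print(classical_order(7, 15), factor_from_order(7, 15))
```

For RSA-sized moduli the brute-force loop is hopeless classically, which is why the quantum period-finding subroutine, and the logical qubits needed to run it, determine the threat timeline.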

Breaking standard RSA-2048 encryption is estimated to require roughly 4,000 highly reliable logical qubits 2562. In 2019, conservative estimates suggested this task would necessitate upwards of 20 million physical qubits running continuously for 8 hours. Owing to recent breakthroughs in more efficient surface code decoding, qLDPC codes, and cat qubit architectures, the required number of physical qubits per logical qubit is shrinking drastically 2562. While a fully functional CRQC remains highly unlikely before the early to mid-2030s, the "Harvest Now, Decrypt Later" threat vector makes the vulnerability immediate for organizations possessing long-lifecycle confidential data, trade secrets, or state intelligence 727363.

In direct response to this threat timeline, the U.S. National Institute of Standards and Technology (NIST) finalized the first comprehensive suite of Post-Quantum Cryptography (PQC) standards in August 2024. These finalized algorithms, built upon hard mathematical problems distinct from integer factorization and discrete logarithms, are formally designated as Federal Information Processing Standards (FIPS) 607364.

Summary of Finalized NIST Post-Quantum Cryptography Standards

| Standard Designation | Algorithm Family | Primary Function | Status |
|---|---|---|---|
| FIPS 203 (ML-KEM) | Module-Lattice-Based | Key Encapsulation (General Encryption) | Finalized (Aug 2024) 6465 |
| FIPS 204 (ML-DSA) | Module-Lattice-Based | Digital Signatures / Identity Authentication | Finalized (Aug 2024) 186465 |
| FIPS 205 (SLH-DSA) | Hash-Based (Stateless) | Digital Signatures / Identity Authentication | Finalized (Aug 2024) 6465 |
| FIPS 206 (FN-DSA) | FFT over NTRU-Lattice | Digital Signatures (formerly FALCON) | Draft Expected Late 2024/2025 7366 |

The release of these standards has officially triggered mandatory migration timelines for federal administrative agencies and initiated aggressive adoption curves for major global technology platforms (e.g., Google, Apple, and Cloudflare) to secure network infrastructures before CRQCs come online 216066.

Mitigating Quantum Washing and Ensuring Regulatory Oversight

As the quantum computing industry attracts immense venture capital and global sovereign investment, the risk of "quantum washing", the practice of marketing fundamentally classical products under quantum terminology, has escalated concurrently 1314.

To counter this dilution of the field, a robust ecosystem of programmatic verification, formal certification, and standards-based procurement is emerging 14. Frameworks such as the Defense Advanced Research Projects Agency (DARPA) Quantum Benchmarking Initiative (QBI) and the stringent application-level metrics established by the QED-C serve to discipline marketing narratives and guide institutional procurement 1114. By mandating adherence to formal certification processes - analogous to how FIPS validations via the Cryptographic Module Validation Program (CMVP) historically shaped cybersecurity markets - policymakers and corporate investors can accurately differentiate between verified fault-tolerant engineering roadmaps and deceptive hyperbole 14. The distinction is critical not only for efficient capital allocation but for national security, ensuring that strategic foresight regarding AI convergence, cryptanalysis, and systemic rivalry is based on empirical engineering milestones rather than aspirational press releases 14.

Conclusion

The 2024-2025 technology cycle marks a definitive and verifiable turning point in the trajectory of quantum computing. The industry has shifted its core focus from the accumulation of noisy physical qubits to the empirical demonstration of robust logical qubits and the validation of fault-tolerant architectures. Breakthroughs in exponential error suppression via surface codes, combined with the resource-reducing potential of qLDPC codes and highly biased cat qubit hardware, confirm that the fundamental prerequisites for scalable, error-corrected quantum computing are physically viable.

However, the attainment of Practical Quantum Advantage remains an ongoing, resource-intensive pursuit. While current state-of-the-art systems can execute synthetic sampling tasks that stretch exascale classical simulation to its absolute limits, the deployment of quantum utility in industrial chemistry, logistics, and active cryptanalysis requires bridging the engineering gap between dozens of logical qubits and thousands. Driven by standardized benchmarking frameworks like the V-score and guarded against the hyperbole of quantum washing by emerging regulatory oversight, the next decade of quantum engineering will rely strictly on rigorous verification to unlock the extraordinary computational paradigms that have long been theorized.