Ergodic theory and time versus ensemble averages in decision theory

Introduction

The theoretical architecture of modern economics relies heavily on models of decision-making under uncertainty, with Expected Utility Theory (EUT) serving as its foundational bedrock. Originating from the works of Daniel Bernoulli in 1738 to solve the St. Petersburg Paradox, and mathematically formalized by John von Neumann and Oskar Morgenstern in 1947, Expected Utility Theory posits that rational agents maximize the expected value of their idiosyncratic utility functions rather than the expected value of wealth itself 122. This framework has dominated macroeconomic modeling, asset pricing, and behavioral economics for over half a century. However, a fundamental methodological critique has emerged from the intersection of statistical mechanics and economics, crystallized in the "ergodicity economics" research program spearheaded by Ole Peters and his collaborators at the London Mathematical Laboratory 13.

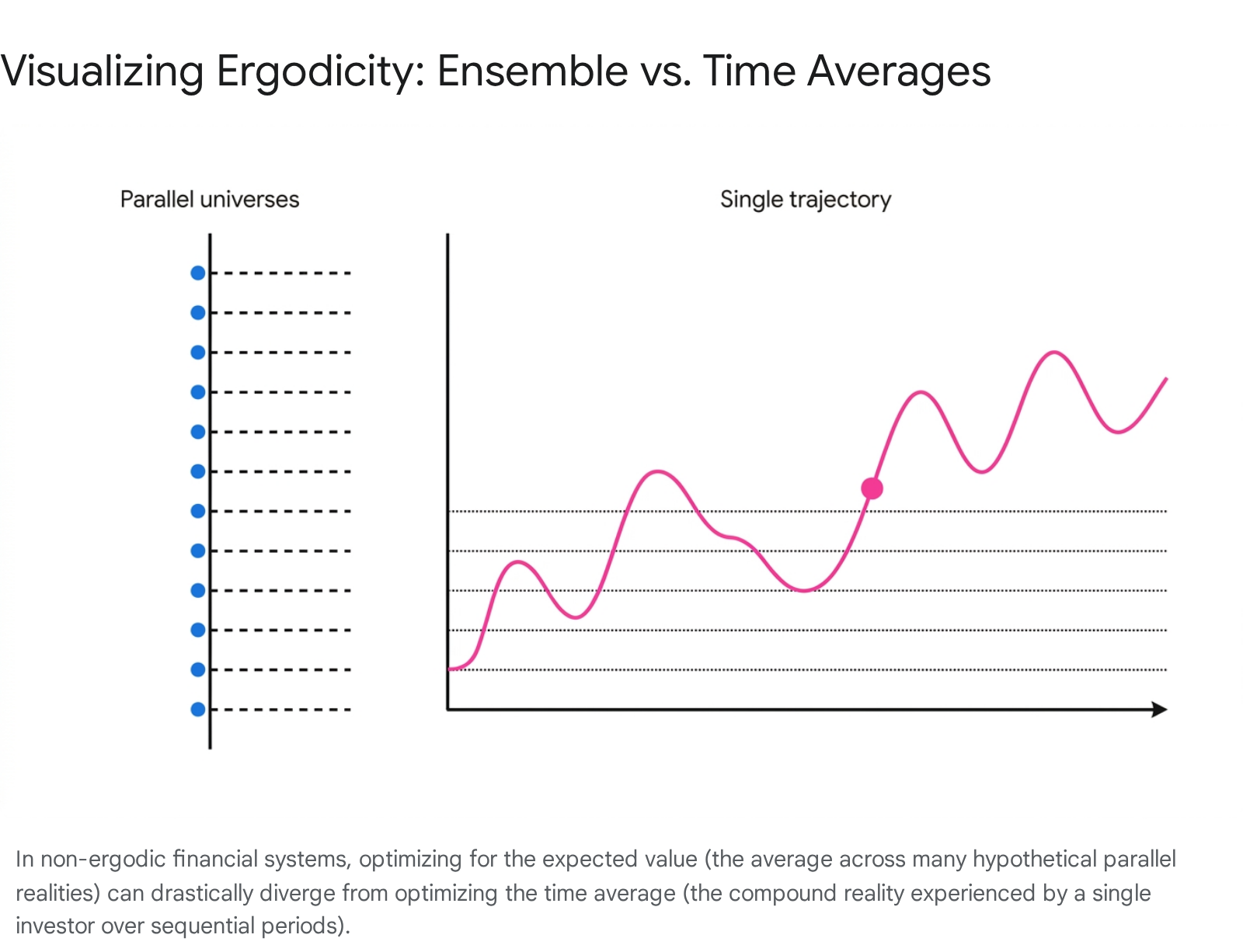

Ergodicity economics questions a core, often unstated assumption within mainstream economic theory: the indiscriminate use of expectation values - ensemble averages across parallel universes - to model the longitudinal, time-bound experience of individual economic agents 14.

The ergodic hypothesis, borrowed from 19th-century physics, asserts that the time average of an observable is equal to its expectation value 35. When the time average of a process diverges from its ensemble average, the process is deemed non-ergodic. Because financial wealth evolution is predominantly a path-dependent, multiplicative process subjected to the absorbing barrier of ruin, it is inherently non-ergodic 7896. Consequently, strategies optimizing expected value can paradoxically lead to the certain ruin of the individual over time, rendering traditional expected utility formulations potentially flawed in dynamic contexts 7712.

This report analyzes the ergodicity economics paradigm. It clarifies the core mathematical mechanisms of ergodicity breaking using foundational models, maps its conceptual origins from classical physics to modern finance, and distinguishes ergodicity from related statistical phenomena such as non-stationarity, stochastic volatility, and fat-tailed Black Swan distributions. The report also evaluates the academic friction surrounding the framework. It synthesizes empirical behavioral evidence published from 2021 through 2026 that tests human decision-making against time-average maximization, and it assesses the mainstream critiques from standard decision theorists who challenge the novelty, flexibility, and validity of Peters' propositions in top-tier economics journals. Finally, the analysis broadens to the reception of this paradigm across actuarial science, heterodox economics, and quantitative trading.

Epistemological Foundations: From Statistical Mechanics to Finance

To fully comprehend the ergodicity problem in economics, it is necessary to trace the concept back to its origins in nineteenth-century equilibrium statistical mechanics, pioneered by Ludwig Boltzmann, James Clerk Maxwell, and Josiah Willard Gibbs 58149. These physicists faced the monumental theoretical challenge of connecting the microscopic, deterministic laws of classical mechanics with the macroscopic, observable phenomena of thermodynamics, such as pressure and temperature 1410.

A system of gas particles enclosed in a volume is mathematically defined by the position and momentum of each individual particle, creating a multidimensional "phase space" 17111220. For a system with a macroscopic number of particles (on the order of Avogadro's number, $10^{23}$), tracking the precise trajectory of the system through phase space over continuous time is computationally impossible. To resolve this, Boltzmann introduced the "ergodic hypothesis." This hypothesis posits that over a sufficiently long period, an isolated system in thermal equilibrium will visit all accessible microstates within its constant energy shell with equal probability 5820.

If the ergodic hypothesis holds true, a profound mathematical convenience is unlocked: the infinite time average of an observable along a single trajectory is strictly equal to the ensemble average 172021. The ensemble average is defined as the spatial average across all possible microstates at a single instant in time, weighted by their equilibrium probability measure 2021. This equivalence allows physicists to essentially eliminate time from the equations, replacing complex dynamic trajectory calculations with static, probabilistic expected values 345. The mean ergodic theorem established by John von Neumann and the pointwise ergodic theorem established by George Birkhoff in the early 1930s provided rigorous mathematical footing for these concepts, cementing ergodic theory as a standalone mathematical discipline 5.

Econophysics has sought to import these concepts into financial modeling, though often with critical friction due to the differing nature of the systems 1323. When early economic theory adopted probability frameworks in the 17th and 18th centuries - predating the formalization of ergodic theory in physics by nearly 200 years - it unconsciously embedded the assumption of ergodicity into its models of expected wealth and utility 34. Mathematical economics formulated decisions based on expected values, which inherently imply an averaging over a statistical ensemble of parallel systems 14. However, human economic lives and capital markets are not closed systems in thermal equilibrium; they are systems far from equilibrium, dominated by continuous growth, irreversible absorbing barriers (such as bankruptcy), and compounding path dependence 3424.

To clarify the translation of these physical principles into financial theory, the following table maps the standard analogues used in statistical mechanics and their direct counterparts in econophysics and ergodicity economics literature 111220132314.

Table 1: Mapping Statistical Mechanics Concepts to Finance Analogues

| Statistical Mechanics Concept | Physical Definition | Finance Analogue | Economic/Financial Definition |
|---|---|---|---|
| Particles (Molecules) | The fundamental, indivisible microscopic entities of a physical system whose interactions drive macroscopic behavior. | Agents / Capital Units (Dollars) | Individual market participants, traders, or discrete units of investment capital circulating within the economy. |
| Phase Space ($\Gamma$) | The multidimensional mathematical space representing all possible microstates (positions and momenta) of the system. | State Space / Wealth Space | The set of all possible distributions of wealth, asset prices, macroeconomic variables, and portfolio allocations. |
| Energy Conservation | The constant total energy defining the accessible surface area (microcanonical ensemble) restricting particle movement. | Total Market Capitalization | The total wealth or liquidity circulating in a closed macroeconomic system, constraining aggregate purchasing power. |
| Trajectory (Time Evolution) | The continuous path a single physical system takes through phase space over time. | Wealth Path / Asset Return Series | The sequential, historical compounding of an individual investor's portfolio or an asset's price over time. |
| Ensemble Average | The average value of an observable across infinite parallel systems in different microstates at a single instant. | Expected Value (Cross-Sectional) | The mathematical expectation calculated across a probability distribution of parallel, alternative financial scenarios. |
| Time Average | The average value of an observable measured for a single system over an infinite time horizon. | Long-Term Compound Growth Rate | The geometric mean return experienced by a single investor continuously reinvesting over sequential periods. |

The distinction illustrated above forms the crux of the debate. If an economic process is ergodic, the ensemble average accurately predicts the time average, validating the use of static expected utility. However, ergodicity economics posits that because capital accumulation involves multiplicative dynamics and irreversible states, the ergodic hypothesis strictly fails in the domain of finance 77.

Core Mechanisms of Ergodicity Breaking: The Multiplicative Coin Toss

To demystify how the ergodic hypothesis fails in economic realities, Ole Peters introduced a simplified, discrete mathematical thought experiment often referred to as the "infamous coin toss" or the "Peters coin toss" 8715. The gamble perfectly isolates the mechanism of ergodicity breaking without requiring the continuous stochastic calculus of geometric Brownian motion, making it a foundational pedagogical tool in the literature.

The Gamble Setup

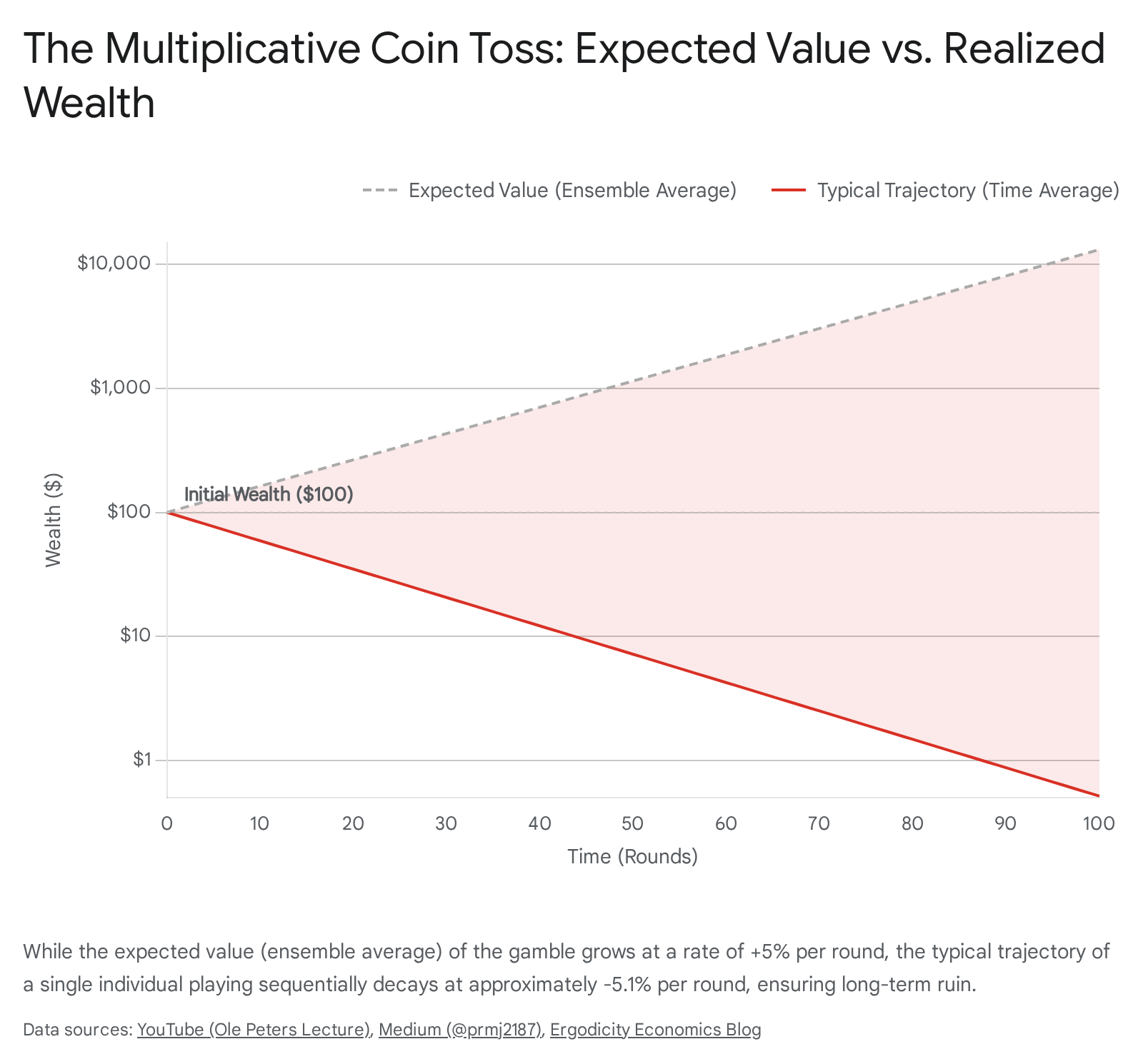

Imagine an individual playing a sequential game with a starting wealth, $x_0 = \$100$. In each discrete time period $t$, a fair coin is tossed 9727.

* If Heads (probability $p = 0.5$): The individual's total current wealth increases by $50\%$. The wealth multiplier is $1.5$.
* If Tails (probability $1-p = 0.5$): The individual's total current wealth decreases by $40\%$. The wealth multiplier is $0.6$.

Crucially, the game is multiplicative rather than additive. The player reinvests their entire accumulated wealth into the next round. This means the absolute dollar impact of a percentage gain or loss depends entirely on the specific path history up to that point, a defining characteristic of real-world portfolio returns 716.

The Ensemble Average (Expected Value)

Under standard probability theory and early formulations of Expected Value Theory (assuming linear utility for demonstration), one calculates the expected value (EV) to evaluate the gamble's viability. The expected multiplicative factor per round is calculated as the probability-weighted sum of the outcomes: $$\langle M \rangle = (0.5 \times 1.5) + (0.5 \times 0.6) = 0.75 + 0.30 = 1.05$$

Because the expected multiplier is greater than 1, standard expected value maximization dictates that the agent should play the game indefinitely, as it is seemingly profitable. The ensemble average of wealth at time $t$, denoted as $\langle x(t) \rangle$, grows exponentially by $5\%$ per round: $$\langle x(t) \rangle = \$100 \times 1.05^t$$ If an ensemble of $N$ parallel universes (or $N$ different people) plays this game independently for $t$ rounds, the arithmetic mean of their wealth will approach this expected value as the sample size $N$ approaches infinity 97. From a macroeconomic or aggregate statistical perspective, the system exhibits continuous growth.
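The ensemble figure can be checked without simulation by summing over every possible number of heads. The following is a minimal sketch under the gamble's stated parameters; the function name `ensemble_average` and its argument names are illustrative choices, not taken from the literature.

```python
from math import comb


def ensemble_average(x0: float, rounds: int, up: float = 1.5,
                     down: float = 0.6, p: float = 0.5) -> float:
    """Exact expected wealth after `rounds` tosses.

    Sums wealth over every possible head count, weighted by its
    binomial probability (the ensemble average at time `rounds`).
    """
    total = 0.0
    for heads in range(rounds + 1):
        tails = rounds - heads
        prob = comb(rounds, heads) * p**heads * (1 - p)**tails
        total += prob * x0 * up**heads * down**tails
    return total


# Compare the exact ensemble average with the closed form 100 * 1.05^t.
for t in (1, 10, 50):
    print(t, ensemble_average(100.0, t), 100.0 * 1.05**t)
```

The two printed columns should agree up to floating-point rounding, because the binomial sum factorizes into $(0.5 \times 1.5 + 0.5 \times 0.6)^t$.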

The Time Average (Individual Trajectory)

However, a single individual does not have access to an ensemble of parallel lives; they experience one continuous trajectory over sequential time ($T \to \infty$) 797. Because multiplication is commutative, the order of heads and tails does not affect final wealth; only their relative frequency matters. Over a large number of tosses, the Law of Large Numbers dictates that the observed frequencies converge toward $50\%$ heads and $50\%$ tails 7.

The long-term per-round growth factor, $g$, must therefore be calculated using the geometric mean of the outcomes rather than the arithmetic mean: $$g = (1.5 \times 0.6)^{1/2} = (0.90)^{0.5} \approx 0.94868$$

In a typical trajectory, one head followed by one tail results in: $\$100 \times 1.5 \times 0.6 = \$90$. This constitutes a net loss of $10\%$ over two rounds, averaging a decay of roughly $5.13\%$ per round 7. Therefore, the individual's wealth over time follows the trajectory: $$x(t) \approx \$100 \times 0.94868^t$$

As time $t$ approaches infinity, the wealth of an individual trajectory approaches zero (ruin) with probability 1, even though the ensemble average grows without bound 97.
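The same result can be written as a time-average growth rate of log wealth. In the sketch below, $n_H$ (the number of heads in $T$ rounds) is notation introduced here for illustration; the only ingredient beyond the gamble itself is the Law of Large Numbers, $n_H / T \to 1/2$.

$$x(T) = x_0 \cdot 1.5^{\,n_H} \cdot 0.6^{\,T - n_H}$$

$$\frac{1}{T}\ln\frac{x(T)}{x_0} = \frac{n_H}{T}\ln 1.5 + \left(1 - \frac{n_H}{T}\right)\ln 0.6 \;\xrightarrow[T \to \infty]{}\; \tfrac{1}{2}\ln 1.5 + \tfrac{1}{2}\ln 0.6 = \tfrac{1}{2}\ln 0.9 \approx -0.0527$$

A negative limit means $x(T)$ decays roughly like $x_0 e^{-0.0527\,T}$, which matches the per-round factor $e^{-0.0527} \approx 0.94868$.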

The Illusion of the Ensemble

The core mechanism driving this ergodicity breaking is the extreme positive skewness generated by multiplicative compounding 7. In the ensemble average, an infinitesimally small fraction of players experiences continuous, uninterrupted heads, achieving astronomical wealth. This tiny minority mathematically drags the arithmetic average upward to the $1.05^t$ trajectory, entirely masking the reality that the overwhelming majority of individuals in the ensemble (approaching $100\%$ as time extends) are spiraling toward zero 927.
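How quickly the majority falls behind can be computed exactly from the binomial distribution. This is a sketch assuming the fair-coin gamble above; `fraction_below_start` is a hypothetical helper name, and the comparison is done in logs to avoid overflow for long horizons.

```python
from math import comb, log


def fraction_below_start(rounds: int, up: float = 1.5, down: float = 0.6) -> float:
    """Exact share of fair-coin players whose wealth is below its
    starting value after `rounds` tosses."""
    losers = 0
    for heads in range(rounds + 1):
        tails = rounds - heads
        # Wealth multiplier < 1  <=>  log multiplier < 0.
        if heads * log(up) + tails * log(down) < 0.0:
            losers += comb(rounds, heads)
    return losers / 2**rounds


for t in (10, 100, 1000):
    print(t, fraction_below_start(t))
```

The share of players below their starting wealth rises toward 1 as the horizon lengthens, while the ensemble mean over the same horizon keeps growing at $5\%$ per round.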

When the ensemble average diverges systematically from the time average, relying on expected value to formulate rational choice becomes epistemologically flawed. A bet with a positive expected value can still lead to certain ruin for the individual playing sequentially, highlighting a severe limitation in static decision theories applied to dynamic environments 7712.

Conceptual Demarcation: Ergodicity vs. Non-Stationarity vs. Fat Tails

A significant source of friction in the academic adoption of ergodicity economics is the conflation of non-ergodicity with other statistical complexities prevalent in quantitative finance and econometrics. To maintain analytical rigor, it is necessary to clearly distinguish non-ergodicity from non-stationarity, basic volatility clustering, and fat-tailed distributions 293031. This distinction becomes most visible in the intellectual debates between Ole Peters and Nassim Nicholas Taleb regarding the true locus of financial risk 12323334.

1. Non-Stationarity vs. Ergodicity

In time series analysis, a stochastic process is strictly stationary if its joint probability distribution remains invariant over time. Consequently, statistical parameters such as the unconditional mean and variance are constant 1718. Financial markets frequently exhibit non-stationarity due to macroeconomic regime changes, structural breaks, and shifting volatilities, often modeled using Hidden Markov Models or unit-root processes 29301938.

A critical misconception in the literature is that ergodicity requires stationarity, or conversely, that all stationary processes are ergodic. While an ergodic process is typically assumed to be stationary, a strictly stationary process can be fundamentally non-ergodic 2021. The multiplicative coin toss illustrates that parameter stability does not guarantee ergodicity: its generating mechanism never changes (the probabilities stay at $p=0.5$ and the multipliers stay at $1.5$ and $0.6$ in every round, although the resulting wealth level is not itself a stationary series), yet it breaks ergodicity by driving a wedge between the time and ensemble averages 729. Non-stationarity concerns shifting underlying probabilities over time; non-ergodicity concerns the disconnect between sequential paths and cross-sectional ensembles regardless of parameter stability.

2. Stochastic Volatility and Heteroskedasticity

Volatility in financial markets is rarely constant. The tendency for large changes in asset prices to be followed by large changes (of either sign) and small changes to be followed by small changes is known as volatility clustering 3841. This phenomenon is typically captured by Generalized Autoregressive Conditional Heteroskedasticity (GARCH) models, in which the conditional variance of the process changes over time even when its long-run, unconditional variance is constant 1822. While volatility clustering complicates the estimation of risk parameters and options pricing, it is a distinct phenomenon from ergodicity breaking. A process can possess constant volatility (homoskedasticity) and still be non-ergodic due to multiplicative compounding.

3. Fat Tails and The Black Swan (The Taleb Perspective)

A probability distribution is described as having "fat tails" (leptokurtic) if the probability of extreme deviations from the mean decays much more slowly than in a Gaussian normal distribution, often following a power law 41224323. In the most extreme cases the variance is infinite. Fat-tailed distributions imply that sample averages converge slowly, if at all, and rare extreme events - characterized by Taleb as "Black Swans" - disproportionately dictate long-term outcomes and systemic risks 31434524.
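The gap between thin and fat tails is easy to understate. The sketch below compares upper-tail probabilities of a standard Gaussian with those of a standard Cauchy distribution, chosen here only as a convenient textbook example of a power-law tail with infinite variance; it is not a model proposed in the sources. Because the Cauchy distribution has no standard deviation, `k` is measured in scale units for both.

```python
from math import atan, erfc, pi, sqrt


def gaussian_tail(k: float) -> float:
    """P(Z > k) for a standard normal variable."""
    return 0.5 * erfc(k / sqrt(2.0))


def cauchy_tail(k: float) -> float:
    """P(X > k) for a standard Cauchy variable (power-law tail)."""
    return 0.5 - atan(k) / pi


for k in (2, 5, 10):
    print(k, gaussian_tail(k), cauchy_tail(k))
```

At `k = 5` the Gaussian tail probability is already several orders of magnitude smaller than the Cauchy one, which is the sense in which thin-tailed models underweight extreme events.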

Nassim Taleb argues that the primary epistemological flaw in modern finance, particularly within the Black-Scholes option pricing framework and Modern Portfolio Theory, is the assumption of thin-tailed (Gaussian) distributions 12303343. Taleb posits that this assumption systematically underestimates the frequency and catastrophic magnitude of tail events. Because of these extreme shocks, traditional models fail, leading to systemic fragility and the absolute necessity of tail-risk hedging to ensure survival 313325.

4. The Peters vs. Taleb Conceptual Distinction

While both Peters and Taleb rigorously critique mainstream finance for its reliance on naive probabilistic averages, their focal points are entirely orthogonal 1232.

* Taleb's Focus (Fat Tails): Emphasizes extreme, rare events operating within the probability distribution that abruptly wipe out portfolios. The risk stems from the magnitude and unpredictability of outlier shocks within a single time step 123243.
* Peters' Focus (Non-Ergodicity): Emphasizes that even in a theoretically perfect world of known, benign, thin-tailed distributions (like a simple 50/50 coin toss with no extreme outliers), the mathematics of sequential compounding guarantees individual ruin 9712. The risk stems not from black swans, but from the slow, mathematical gravity of the geometric mean permanently trailing the arithmetic mean over time 33.

As financial practitioners synthesize these concepts, figures like Mark Spitznagel note that while Taleb explains the source of sudden market shocks and the need for asymmetric payoffs, Peters provides the mathematical framework for why continuous exposure to even moderate volatility mechanically erodes long-term compounded wealth - the "volatility drag" 334849.

Expected Utility Theory vs. Ergodicity Economics: The Theoretical Battleground

Mainstream economic theory has historically handled the St. Petersburg Paradox and the avoidance of ruinous gambles through Expected Utility Theory (EUT). Rather than maximizing expected absolute wealth, EUT proposes that agents maximize the expected value of a non-linear utility function, $u(x)$, which captures idiosyncratic psychological risk aversion 122. The utility of money is assumed to have diminishing marginal returns; gaining an additional dollar brings less psychological satisfaction to a billionaire than it does to an impoverished individual, often modeled via logarithmic or square-root curves 252651.

Ergodicity economics offers a radical reinterpretation of this entire theoretical construct. Peters demonstrates that the utility functions deployed in EUT are mathematically identical to the "ergodicity transformations" required to linearize a non-ergodic wealth process to compute its time-average growth rate 124. For example, if wealth evolves according to continuous geometric Brownian motion (the standard model for multiplicative financial dynamics), transforming the wealth variable by the natural logarithm, $v(x) = \ln(x)$, creates a new observable that grows additively and ergodically 8927. Therefore, maximizing the expected rate of change in logarithmic utility is mathematically indistinguishable from maximizing the objective time-average compound growth rate of the portfolio 22753.
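For geometric Brownian motion the logarithmic transformation can be written out in a few lines. The symbols $\mu$ (drift), $\sigma$ (volatility), and $W$ (a standard Wiener process) are standard textbook notation assumed here for illustration; the second line follows from Itô's lemma.

$$dx = \mu\, x\, dt + \sigma\, x\, dW$$

$$d\ln x = \left(\mu - \frac{\sigma^2}{2}\right) dt + \sigma\, dW$$

$$\langle x(t) \rangle = x_0\, e^{\mu t}, \qquad \lim_{T \to \infty} \frac{1}{T}\ln\frac{x(T)}{x_0} = \mu - \frac{\sigma^2}{2}$$

The increments of $\ln x$ are independent and identically distributed, so their time average and expectation value coincide. The ensemble average of wealth grows at rate $\mu$, while a single trajectory grows at the smaller rate $\mu - \sigma^2/2$.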

This mathematical equivalence results in a fundamental philosophical pivot: utility functions are not subjective psychological preferences reflecting a person's unique "taste for risk." Instead, they are objective mathematical mappings describing the specific physical dynamics (additive, multiplicative, etc.) of the wealth process the agent is subjected to 1224.

Table 2: Comparing Expected Utility Theory and Ergodicity Economics

| Theoretical Dimension | Expected Utility Theory (EUT) | Ergodicity Economics (EE) |
|---|---|---|
| Primary Quantity Maximized | Expected Utility (Ensemble Average). | Time-Average Growth Rate (Geometric Mean). |
| Origin of Utility Functions | Subjective, idiosyncratic psychological preferences; measures personal risk aversion and behavior. | Objective, physical "ergodicity transformations" required by the specific dynamic stochastic process. |
| Treatment of Time | Largely static. Sequences of gambles are evaluated based on their single-period expected value aggregate. | Intrinsically dynamic. Calculates the continuous compound growth rate of a single trajectory as $T \to \infty$. |
| Rationale for Rejecting Favorable Odds | The psychological pain of a potential loss arbitrarily outweighs the pleasure of an equivalent gain. | A mathematical necessity to avoid the absorbing barrier of ruin and optimize longitudinal wealth growth. |
| Underlying Epistemological Assumptions | Implicitly assumes ergodic conditions where expected values effectively govern long-term optimal behavior. | Explicitly assumes economic variables are non-ergodic and require time-average calculations for survival. |

Mainstream Critiques from Standard Decision Theorists

The bold assertions of ergodicity economics - particularly the claim that it should replace Expected Utility Theory as the foundational axiom of decision making - have sparked intense pushback from mainstream decision theorists and orthodox economists, leading to rigorous debates across pre-prints and journals from 2020 to 2025 327535455.

1. Lack of Novelty and Logarithmic Equivalence

The most pervasive critique from the economic establishment is that ergodicity economics lacks theoretical novelty 3545556. Critics like Alexis Toda and standard decision theorists argue that maximizing the time-average growth rate is mathematically identical to using the Kelly Criterion (formulated by John Kelly in 1956) or maximizing a logarithmic utility function. Both of these concepts have been well-established in portfolio theory for decades by scholars such as Henry Latané (1959) and Paul Samuelson (1969) 275556. On this view, Peters dismisses EUT entirely while simply repackaging existing logarithmic utility models in the intimidating nomenclature of statistical mechanics 5357. If the predictions of EE are identical to those of EUT with log-utility, critics argue, the theory is practically redundant and cannot be falsified as a distinct theory 53.
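The equivalence the critics point to can be made concrete with the coin toss from earlier in this report. The sketch below assumes a variant in which the player stakes only a fraction `f` of wealth each round (an assumption added for illustration; the original gamble fixes `f = 1`) and uses the standard two-outcome Kelly formula. Function and parameter names are hypothetical.

```python
from math import log


def growth_rate(f: float, gain: float = 0.5, loss: float = 0.4, p: float = 0.5) -> float:
    """Expected log growth per round when a fraction f of wealth is staked.

    This is simultaneously the time-average growth rate and the
    expected change in logarithmic utility.
    """
    return p * log(1.0 + f * gain) + (1.0 - p) * log(1.0 - f * loss)


def kelly_fraction(gain: float = 0.5, loss: float = 0.4, p: float = 0.5) -> float:
    """Closed-form maximizer of growth_rate for a two-outcome gamble."""
    return p / loss - (1.0 - p) / gain


f_star = kelly_fraction()
print(f_star, growth_rate(f_star), growth_rate(1.0))
```

Staking everything (`f = 1`) gives a negative growth rate, while the Kelly fraction gives a small positive one. Maximizing the time-average growth rate and maximizing expected log utility select the same `f`, which is precisely the overlap on which the novelty critique rests.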

2. Inflexible Risk Aversion

Standard economics prides itself on accommodating diverse human preferences. Expected Utility Theory allows for infinite variations in utility functions to model aggressive risk-seekers, neutral agents, or highly conservative risk-avoiders 153. Critics point out that ergodicity economics rigidly prescribes the maximization of the geometric mean (the logarithmic transformation) as the singular "optimal" objective in multiplicative environments 53. This corresponds to a specific, immutable degree of risk aversion. Critics assert this is overly restrictive and prescriptive, arguing that not everyone should pursue the exact same growth-maximizing strategy because individual investors possess varying real-world liabilities, finite time horizons, and subjective tolerances for short-term drawdowns 53.

3. Mischaracterization of Mainstream Practices

Prominent economists note that while early introductory textbook models might employ naive expected value, advanced dynamic programming and multi-period EUT already account for compounding, wealth constraints, and the absorbing barrier of ruin 275458. Critics argue that the "ergodicity problem" erects a strawman of mainstream economics, falsely claiming the discipline indiscriminately assumes ergodicity 275354. Furthermore, Nobel laureate Paul Samuelson explicitly critiqued the idea of maximizing the geometric mean in long sequences, emphasizing that individual "tastes for risk" over finite periods are what truly count, rendering the strict infinite-horizon geometric-mean criterion somewhat arbitrary for human lifespans 53.

4. Pseudoscience Accusations and Publication Venues

In more severe methodological critiques, such as Toda's 2023 paper, the ergodicity economics program has been labeled "pseudoscience" 5556. Toda argues that EE fails Popperian falsifiability because it produces few new, testable macroeconomic implications beyond what EUT already predicts 5556. Additionally, critics point out a sociological dynamic: early EE literature was predominantly published in physics journals (e.g., Physical Review Letters, Nature Physics) rather than being subjected to the peer-review processes of top-tier mainstream economics journals like the American Economic Review, Quarterly Journal of Economics, or the Journal of Political Economy 555659. Critics argue this isolates the framework from expert economic scrutiny and allows proponents to claim revolutionary breakthroughs that economists consider solved problems.

Recent Behavioral Economics Experiments (2023 - 2026)

To counter the claim of unfalsifiability and demonstrate the practical superiority of their framework, proponents of ergodicity economics have turned to empirical behavioral experiments. The core testable hypothesis of EE is that humans intuitively adapt their risk behavior based on the specific dynamics of the environment (e.g., additive versus multiplicative), rather than possessing an innate, immutable psychological risk aversion parameter as proposed by EUT 2628.

The Copenhagen Experiments (Meder et al., 2021)

Initial breakthroughs occurred with the Copenhagen experiments conducted at the Danish Research Centre for Magnetic Resonance 22829. Researchers tested human subjects playing gambles under two distinct dynamic environments: one where wealth changes were additive (gaining or losing fixed amounts), and one where they were multiplicative (gaining or losing percentages of total wealth) 22830. Standard EUT and Prospect Theory predict that a person's utility function represents stable, idiosyncratic traits, meaning their underlying risk preferences should remain constant across the two environments 22830.

The empirical findings challenged EUT: subjects systematically altered their utility functions to match the dynamic setting. Under additive dynamics, subjects exhibited behavior resembling linear utility, which is mathematically time-optimal for additive growth. When switched to multiplicative dynamics, the exact same subjects significantly increased their risk aversion, behaving in a manner closely approximating logarithmic utility, which is time-optimal for multiplicative growth 228. The subjects appeared to implicitly optimize for the time-average growth rate rather than a static expected utility.
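Why the time-optimal criterion changes with the dynamics can be sketched with a single gamble scored two ways. The numbers below reuse the coin-toss payoffs from this report rather than the stimuli of the Copenhagen experiments, and both function names are hypothetical.

```python
from math import log


def additive_growth(changes, probs) -> float:
    """Additive dynamics: time-average growth is the expected change
    in wealth per round (equivalent to linear utility)."""
    return sum(p * dx for dx, p in zip(changes, probs))


def multiplicative_growth(wealth: float, changes, probs) -> float:
    """Multiplicative dynamics: time-average growth is the expected
    change in log wealth per round (equivalent to log utility)."""
    return sum(p * log((wealth + dx) / wealth) for dx, p in zip(changes, probs))


changes, probs = (50.0, -40.0), (0.5, 0.5)
print(additive_growth(changes, probs))               # dollars per round
print(multiplicative_growth(100.0, changes, probs))  # log units per round
```

The same nominal gamble has a positive growth rate when its payoffs are fixed dollar amounts and a negative one when they scale with current wealth, so an agent maximizing time-average growth would accept it in the first setting and decline it in the second.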

Human Decision-Making Under Additive Ruin (Vanhoyweghen et al., 2023)

While previous research established sensitivity to non-ergodicity in multiplicative dynamics, recent literature published in the peer-reviewed Proceedings of the Royal Society A (2023) expanded this paradigm to additive environments featuring absorbing boundaries 663. Vanhoyweghen and Ginis introduced a "risk of ruin" (a complete loss condition terminating the game) into a purely additive gambling scenario. Because hitting the ruin boundary ends the trajectory, the environment becomes non-ergodic even without the presence of multiplicative compounding 6.

The experiments demonstrated that human decision-makers are highly sensitive to this additive non-ergodicity. Risk aversion spiked dramatically based on the subject's proximity and the associated likelihood of hitting the ruin state 663. The authors concluded that humans calculate time averages more intuitively than behavioral models rooted in ensemble expected values acknowledge. Instead of being viewed as irrational victims of cognitive biases, humans may be highly rational agents correctly optimizing for their temporal survival in non-ergodic systems 663.
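The dependence of ruin risk on proximity to the boundary can be illustrated with a toy additive walk. The sketch below is not the experimental design of the cited study: it assumes a hypothetical game in which wealth moves by plus or minus one unit per round and play stops permanently once wealth reaches zero.

```python
def ruin_probability(start_units: int, rounds: int, p_win: float = 0.5) -> float:
    """Probability that a +1/-1 additive wealth walk starting at
    `start_units` hits 0 within `rounds` steps (0 is absorbing)."""
    # dist[w] = probability of holding w units without having been ruined.
    dist = {start_units: 1.0}
    ruined = 0.0
    for _ in range(rounds):
        nxt = {}
        for w, prob in dist.items():
            for step, q in ((1, p_win), (-1, 1.0 - p_win)):
                if w + step <= 0:
                    ruined += prob * q  # absorbed: the trajectory ends here
                else:
                    nxt[w + step] = nxt.get(w + step, 0.0) + prob * q
        dist = nxt
    return ruined


for start in (1, 5, 10):
    print(start, ruin_probability(start, rounds=50))
```

Each round has the same expected value regardless of current wealth, yet the probability of the trajectory ending rises sharply as the starting position approaches the barrier. An agent who cares about its own path therefore has reason to become more cautious near ruin even under purely additive dynamics.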

Reinforcement Learning and Artificial Intelligence (2024 - 2026)

Beyond human behavior, the principles of ergodicity economics are increasingly intersecting with machine learning and artificial intelligence design. Research published between 2024 and 2026 in venues like the Transactions on Machine Learning Research demonstrates that standard Reinforcement Learning (RL) agents struggle immensely in path-dependent, non-ergodic environments because standard RL algorithms are hard-coded to optimize for expected cumulative rewards (ensemble averages) 64.

Recent work has integrated ergodicity transformations directly into deep RL models. When agents are trained to maximize time-average intrinsic growth rates rather than expected rewards, the models are reported to discover the Kelly criterion autonomously and to remain robust in portfolio allocation tasks. This bridges AI design with the physical realities of compounding risk and suggests that ergodicity awareness can yield superior outcomes in sequential decision-making algorithms 4864.

Global Disciplinary Reception

As the debate matures past initial theoretical friction, ergodicity economics is fragmenting traditional boundaries, finding mixed but potent reception across various specialized fields outside of orthodox academia 643166.

Actuarial Science and Insurance

In the realm of actuarial science, Ole Peters formalized the application of these concepts in his 2023 paper, "Insurance as an Ergodicity Problem," published in the Annals of Actuarial Science 2564. Standard microeconomics views insurance merely through the lens of individual risk aversion, where a consumer pays a premium to avoid the psychological disutility of a potential loss. Ergodicity economics reframes insurance entirely: it acts as an institutional mechanism to restore ergodicity 4364. By pooling uncorrelated risks, a cooperative ensemble effectively translates the mathematical benefits of the ensemble average back into the time-average trajectory of the individual, providing a purely physical and mathematical justification for the existence of insurance markets 4364.
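The pooling effect can be illustrated with the coin toss used earlier in this report. The sketch below is not the insurance contract model of the cited paper: it assumes a hypothetical arrangement in which `n_players` each toss their own fair coin every round and then split the combined wealth equally. The function name is illustrative.

```python
from math import comb, log


def pooled_growth_rate(n_players: int, up: float = 1.5, down: float = 0.6) -> float:
    """Expected log growth per round for each member of an equal-share pool.

    Every player tosses an independent fair coin, then total wealth is
    redistributed equally, so each member's multiplier is the pool average.
    """
    rate = 0.0
    for heads in range(n_players + 1):
        prob = comb(n_players, heads) / 2**n_players
        shared_multiplier = (heads * up + (n_players - heads) * down) / n_players
        rate += prob * log(shared_multiplier)
    return rate


for n in (1, 2, 3, 10, 100):
    print(n, pooled_growth_rate(n))
```

A lone player has a negative growth rate, whereas larger pools move each member's time-average growth rate toward the ensemble value $\ln 1.05 \approx 0.0488$. This is the sense in which pooling independent risks brings the individual's time average closer to the ensemble average.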

Quantitative Trading and Asset Management

Among practitioners in quantitative trading and asset management, the principles of ergodicity find a far warmer reception than in academic economics departments 15335367. Practitioners inherently understand the "volatility drag" and the asymmetrical, destructive effects of negative compounding 2948. The Kelly Criterion, which explicitly maximizes long-term geometric compound growth, has long been a staple among sophisticated quants, dating back to John Kelly (1956) and Ed Thorp's work in the 1960s 171525. Ergodicity economics provides a rigorous physical scaffolding for why Kelly-based bet sizing and tail-risk hedging strategies - such as those famously deployed by Mark Spitznagel's Universa Investments - mathematically outperform naive ensemble-based portfolio construction over long, sequential horizons 7334867.

Heterodox Economics and Pedagogical Shifts

Within heterodox economics, institutional economics, and complexity theory, the paradigm is celebrated for stripping away ad-hoc psychological assumptions in favor of observable, physical dynamics 32468. The publication of the first dedicated textbook for the field, An Introduction to Ergodicity Economics (Peters & Adamou, LML Press, 2025), represents a major milestone in institutionalizing the research program for future students 29646970. Reviews from heterodox economists, such as Lars Syll, praise the textbook for highlighting how the unstated ergodicity assumptions of equilibrium models generate systemic financial crises by encouraging excessive leverage that ignores the absorbing barrier of ruin 2469. Furthermore, dedicated global conferences, such as the Ergodicity Economics 2026 event themed "Society and Finance," signal the framework's expansion. It is evolving from a narrow mathematical critique of decision theory to broader, systemic applications concerning wealth inequality, the design of social safety nets, and the structuring of regenerative, circular economies 247071.

Conclusion

Ergodicity economics fundamentally challenges the epistemological bedrock of classical decision theory. By rigorously mapping the physical concepts of statistical mechanics to financial wealth trajectories, it exposes the mathematical fallacy of applying cross-sectional ensemble averages to longitudinal, path-dependent human lives.

While mainstream economists rightfully challenge the strict novelty of the framework - pointing to its practical mathematical equivalence with logarithmic utility and the Kelly criterion - the paradigm shift lies precisely in its interpretation. Where Expected Utility Theory views the avoidance of a favorable multiplicative gamble as a subjective quirk of human psychology, ergodicity economics frames it as an objective, physical mandate for survival in a non-ergodic universe. Behavioral experiments and machine-learning studies published between 2021 and 2026 are consistent with the view that both human agents and artificial intelligence models adapt their decision-making to the dynamic, non-ergodic structure of their environments, optimizing for time averages to prevent ruin.

As global financial markets increasingly grapple with compounding, interconnected risks, the transition from economic models optimizing parallel expected values toward models optimizing temporal realities represents not a pseudoscientific diversion, but a necessary evolution in aligning economic theory with the mechanics of the physical world.