Effectiveness of adolescent social media restrictions

The Trajectory of Digital Governance and Adolescent Psychology

The intersection of adolescent psychological development and digital ecosystem design has emerged as one of the most rigorously contested areas of public health, legal theory, and technology policy in the twenty-first century. For over a decade, psychiatric and psychological communities have documented a precipitous increase in youth mental health disorders, including anxiety, clinical depression, and self-harm 11. Concurrently, the pervasive adoption of smartphones and high-engagement social media platforms among adolescents has fundamentally rewired the mechanics of childhood socialization. This dual phenomenon has precipitated a global legislative debate over the extent to which digital platforms cause measurable psychological harm, and whether state-mandated access restrictions - specifically, age-based bans delaying digital access - serve as an effective and enforceable public health intervention.

Historically, the regulation of youth internet access relied heavily on parental mediation and industry self-regulation. Early legislative frameworks, such as the United States' Children's Online Privacy Protection Act (COPPA), merely restricted commercial data collection for users under the age of thirteen without requiring stringent identity or age verification protocols 23. However, as the limitations and widespread circumvention of these early frameworks became apparent, governments worldwide began shifting toward direct, aggressive legislative intervention 4. Between 2024 and 2026, an unprecedented wave of legislation swept across global jurisdictions, characterized by the implementation of hard age thresholds, mandatory identity verification, and substantial financial penalties for non-compliant technology companies 56.

The rationale for these state interventions rests on the assertion that platform architectures - driven by infinite scrolling, algorithmic recommendations, and quantified social metrics - are inherently unsafe for the highly plastic, developing adolescent brain 789. Yet, the empirical research underlying these claims remains complex and highly debated among leading sociologists and developmental psychologists. While robust correlational data links heavy social media use to adverse mental health outcomes, longitudinal and experimental studies frequently fail to disentangle causation from correlation 1011. Furthermore, technology researchers and child rights advocates warn that blanket access bans may yield severe unintended consequences, such as isolating marginalized youth, stunting the development of digital literacy, and driving young users toward unregulated or encrypted digital enclaves 1312.

This report examines the global research surrounding social media bans for adolescents, directly addressing whether delaying access produces measurable benefits. It provides an exhaustive analysis of current international legislative frameworks, evaluates the technical efficacy of age verification mandates, and synthesizes the epidemiological evidence concerning social media's impact on youth mental health. Finally, it assesses the preliminary empirical outcomes of the world's first national social media bans and explores alternative regulatory models focused on platform design modification and digital literacy.

The Global Legislative Landscape

The regulatory approaches to adolescent social media use diverge significantly across jurisdictions. While some governments pursue comprehensive bans with no parental exemptions, others prioritize verified parental consent frameworks or state-mandated algorithmic restrictions. This variance reflects differing cultural tolerances for state intervention in family life, distinct interpretations of international data privacy laws, and varying degrees of constitutional protection for digital speech.

Absolute Bans and the Australian Model

The most restrictive legislative models involve absolute bans on social media access for minors below a specified age, expressly overriding parental consent. The primary justification for this approach is that absolute bans eliminate the peer pressure "snowball effect," ensuring that children are not socially compelled to join platforms before reaching developmental maturity 13.

Australia represents the vanguard of this regulatory strategy. In November 2024, the Australian federal parliament passed the Online Safety Amendment (Social Media Minimum Age) Act, which came into full enforcement on December 10, 2025 25. The legislation requires platforms to take "reasonable steps" to prevent individuals under the age of sixteen from holding accounts on major networks, including Facebook, Instagram, Snapchat, TikTok, and X 216. Crucially, the Australian model permits no parental exemptions; a fifteen-year-old cannot legally maintain a social media account even if their legal guardians expressly permit it 1614. Non-compliant technology companies face fines of up to A$49.5 million per breach 1614.

Tiered Restrictions and Constitutional Challenges in the United States

In the United States, legislative momentum has primarily occurred at the state level due to the absence of federal consensus. Jurisdictions such as Utah and Arkansas initially passed laws requiring parental consent for all minors to hold social media accounts, but these early frameworks faced immediate injunctions in federal courts on First Amendment grounds 1519.

Florida's House Bill 3 (HB 3), enacted in 2024, represents one of the most prominent U.S. attempts to restrict access 816. The law prohibits children under the age of fourteen from holding accounts on social media platforms that utilize "addictive features," defined as infinite scroll, autoplay, and algorithmic push notifications 817. Unlike the Australian model, Florida utilizes a tiered approach: children under fourteen are entirely banned, while fourteen- and fifteen-year-olds are permitted access subject to verified parental or guardian consent 816.
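The tiered rule described above can be expressed as a small decision function. The following is a minimal sketch, not statutory language: the names `AccessDecision` and `hb3_access_decision` are hypothetical, and the sketch assumes the platform has already been found to use the "addictive features" that bring it within the law's scope.

```python
from enum import Enum


class AccessDecision(Enum):
    BANNED = "banned"
    CONSENT_REQUIRED = "consent_required"
    ALLOWED = "allowed"


def hb3_access_decision(age: int, has_verified_parental_consent: bool = False) -> AccessDecision:
    """Apply the tiered age rule from the text to one hypothetical account holder."""
    if age < 0:
        raise ValueError("age must be non-negative")
    if age < 14:
        # Under fourteen: banned outright; parental consent makes no difference.
        return AccessDecision.BANNED
    if age < 16:
        # Fourteen and fifteen: access only with verified parental or guardian consent.
        if has_verified_parental_consent:
            return AccessDecision.ALLOWED
        return AccessDecision.CONSENT_REQUIRED
    # Sixteen and over: outside the tiers described in the text.
    return AccessDecision.ALLOWED
```

Under the Australian model, by contrast, the consent flag would have no effect for any user under sixteen.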

The enforcement of HB 3 was initially blocked by a federal district judge, who ruled that the legislation unconstitutionally burdened the free speech rights of minors by banning them from expressive platforms 1718. However, in late 2025, a divided panel of the 11th U.S. Circuit Court of Appeals granted a stay, allowing Florida to enforce the law while litigation continues, arguing that the law targets the addictive features rather than the speech itself 1619. The law mandates that platforms delete underage accounts within ninety days and imposes penalties of $50,000 per violation under the Florida Deceptive and Unfair Trade Practices Act 24. Fiscal analysts project that enforcing the ban could save the state tens of thousands of dollars annually in public school cyberbullying prevention and Medicaid mental health expenditures.

Digital Majority and Parental Consent in Europe

European nations have generally favored frameworks that balance child protection with privacy rights and parental autonomy. These policies often center on the concept of a "digital majority" - an age at which a minor can independently consent to the processing of their personal data.

France passed a "digital majority" law in 2023 setting the minimum age for unassisted social media use at fifteen 20. Originally, the enforcement of this law was delayed due to conflicts with the European Union's Digital Services Act (DSA), as the European Commission argued the national law encroached on EU competencies regarding platform regulation 2021. However, invoking public health exceptions under Article 114 of the Treaty on the Functioning of the European Union, France pushed forward, making the law operational by early 2026. Platforms operating in France are now required to use certified third-party verification tools to ensure users under fifteen have explicit parental permission 2028.

Similarly, the United Kingdom's approach, governed by the Online Safety Act (OSA) of 2023, places the burden on platforms to prevent underage access to harmful material (such as pornography, self-harm promotion, and eating disorder content) rather than banning social media outright 2223. The OSA requires platforms to use highly effective age-gating methods, threatening fines of up to £18 million or 10% of global annual turnover, whichever is greater 24. However, facing intense public pressure regarding youth mental health, political discourse in the UK has increasingly moved toward considering an outright Australian-style ban for users under sixteen 525.

State-Mandated Algorithmic Restrictions in Asia

Asian jurisdictions exhibit some of the most aggressive regulatory architectures globally. India established a stringent parental consent framework via the Digital Personal Data Protection Act (DPDPA) of 2023. Unlike Western frameworks that generally define the digital majority at thirteen to sixteen years old, the DPDPA legally defines a "child" as anyone under the age of eighteen 3326. Consequently, social media platforms operating in India must obtain verifiable parental consent for any user under eighteen before processing personal data. The penalties for non-compliance are severe, reaching up to ₹250 crore, alongside the threat of total service blocking within the country 3327.

The People's Republic of China (PRC) utilizes a distinct regulatory apparatus that focuses heavily on state-mandated modifications to platform architecture and temporal restrictions. Following strict limits on online gaming enacted in 2021, Chinese authorities mandate the implementation of a "Youth Mode" for social media and short-form video platforms like Douyin 2829. This mode restricts usage for individuals under fourteen to forty minutes per day and enforces a hard digital curfew, completely disabling access between the hours of 10:00 PM and 6:00 AM 2938.

Furthermore, the algorithm in China's Youth Mode is fundamentally altered, stripping away engagement-driven entertainment loops in favor of educational, scientific, and patriotic content 29. The Cyberspace Administration of China (CAC) heavily polices these platforms; violations by operators can result in severe administrative penalties, including the suspension of content updates, removal of monetization privileges, and massive fines 3031.
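The temporal limits described for Youth Mode (forty minutes per day and a 10:00 PM to 6:00 AM curfew for users under fourteen) amount to a simple gate on each session. The sketch below is illustrative only: the function names are hypothetical, and treating 10:00 PM as blocked and 6:00 AM as permitted is an assumption, since the text does not specify how the boundaries are handled.

```python
from datetime import time

DAILY_LIMIT_MINUTES = 40      # daily cap described in the text
CURFEW_START = time(22, 0)    # 10:00 PM
CURFEW_END = time(6, 0)       # 6:00 AM
YOUTH_MODE_AGE_CEILING = 14   # limits apply to users under this age


def in_curfew(now: time) -> bool:
    # The curfew window wraps past midnight, so it is the union of two ranges.
    return now >= CURFEW_START or now < CURFEW_END


def youth_mode_allows(age: int, now: time, minutes_used_today: int) -> bool:
    """Return True if a session may continue under the limits described above."""
    if age >= YOUTH_MODE_AGE_CEILING:
        # The limits in the text are stated only for users under fourteen.
        return True
    if in_curfew(now):
        return False
    return minutes_used_today < DAILY_LIMIT_MINUTES
```

Any such gate depends on the platform knowing the user's true age, which is why the Chinese model is paired with real-name identity registration.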

Synthesis of Global Legislative Approaches

The variance in global frameworks highlights a transition from self-regulation toward strict state intervention. The following table synthesizes the distinct approaches to adolescent digital regulation as of early 2026:

| Jurisdiction | Minimum Age Threshold | Regulatory Framework | Enforcement Mechanism |
|---|---|---|---|
| Australia | Under 16 | Absolute Ban | Age verification mandate; fines up to A$49.5M. No parental exemptions. |
| Florida (USA) | Under 14 (Ban); 14-15 (Consent) | Tiered Ban / Parental Consent | Age verification mandate; fines of $50,000 per violation. |
| France | Under 15 | Digital Majority / Parental Consent | Certified third-party verification tools; EU DSA compliance integration. |
| China | Under 14 (Restrictions) | Algorithmic Modification | "Youth Mode" (40 min/day limit, curfew); real-name identity registration. |
| India | Under 18 | Parental Consent | Verifiable parental consent mapped to virtual tokens/Aadhaar; fines up to ₹250 crore. |
| United Kingdom | Context-dependent | Harm-Based Age Gating | Online Safety Act mandates; fines up to 10% global revenue for failing to protect minors. |

The Efficacy and Vulnerabilities of Age Verification

The success of any statutory social media ban or parental consent framework relies entirely on the technical efficacy of the underlying age assurance infrastructure. Without robust, universally applied verification, legislative bans merely serve as symbolic policies. In practice, the technological deployment of age verification has faced immense friction, characterized by high bypass rates, user privacy concerns, and architectural vulnerabilities.

Technical Architectures of Age Assurance

The digital identity industry categorizes age assurance into three distinct methodologies, each carrying inherent trade-offs between accuracy, availability, and user privacy 3233:

- Self-Declaration: The historical standard, requiring users to input their date of birth or check a box confirming they meet the age requirement. Regulatory bodies globally, including the UK's Ofcom, have explicitly declared this method insufficient for compliance with new legislation due to its extreme ease of bypass 323.

- Age Estimation (Probabilistic): This utilizes artificial intelligence (AI) and biometric analysis to infer a user's age based on statistical inference 33. The most prominent example is facial age estimation, wherein a user uploads a live selfie, and an algorithm determines if they meet the threshold 3. While highly available and less intrusive than document uploads, facial estimation struggles with accuracy at the margins - particularly in distinguishing a mature fifteen-year-old from a sixteen-year-old - which creates friction for edge cases 34.

- Age Verification (Deterministic): This relies on definitive proof of identity. Methods include credit card verification (predicated on the assumption that minors do not hold independent credit accounts), government ID uploads, or integration with state-backed digital identity systems, such as India's Aadhaar or mobile provider databases 323.
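One way to picture how these methodologies combine is an escalation flow in which a probabilistic estimate is accepted only when it clears the threshold by its margin of error, and borderline cases fall through to deterministic verification. The sketch below is a hypothetical illustration: `AgeEstimate`, its `margin_of_error` field, and the three outcome strings are assumptions, not the interface of any real age assurance product.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AgeEstimate:
    estimated_age: float     # point estimate from a hypothetical facial estimator
    margin_of_error: float   # assumed +/- uncertainty, in years


def assurance_decision(
    threshold: int,
    estimate: Optional[AgeEstimate],
    verified_age: Optional[int] = None,
) -> str:
    """Return "allow", "deny", or "escalate" (ask for deterministic proof)."""
    # Deterministic evidence, when present, overrides any estimate.
    if verified_age is not None:
        return "allow" if verified_age >= threshold else "deny"
    # With no estimate there is only self-declaration, which is not accepted alone.
    if estimate is None:
        return "escalate"
    low = estimate.estimated_age - estimate.margin_of_error
    high = estimate.estimated_age + estimate.margin_of_error
    if low >= threshold:
        return "allow"       # clearly above the threshold
    if high < threshold:
        return "deny"        # clearly below the threshold
    return "escalate"        # too close to call, e.g. fifteen versus sixteen
```

With a threshold of sixteen and an assumed two-year margin, an estimate of 15.5 escalates rather than resolving either way, which is the friction at the margins described above.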

Bypass Rates and Implementation Failures

Despite the implementation of advanced age assurance tools, comprehensive data from 2025 and 2026 suggests that determined minors easily circumvent these barriers. A May 2026 report by the UK-based nonprofit Internet Matters surveyed minors and found that 46% believed age controls were easy to bypass, and 32% admitted to having successfully bypassed them at least once 35.

The methods of circumvention expose vulnerabilities in both technology and human behavior. The most fundamental vulnerability is collusive behavior: the Internet Matters report found that 26% of parents admitted to allowing their children to bypass age checks, and 17% actively assisted them by using their own credentials or devices 3545. For biometric estimation systems, focus group reports indicate children occasionally spoof facial estimation tools using video game characters, unusual facial expressions, or even drawn-on facial hair, highlighting gaps in liveness detection technologies 4536.

Furthermore, digital routing tools completely undermine geographically bound legislative bans. Virtual Private Networks (VPNs) allow adolescents to mask their device's IP address, making it appear as though they are connecting from a jurisdiction without age verification laws 2837. Survey data from 2026 indicates that up to 22% of adult and adolescent users attempting to bypass age restrictions utilized a VPN to do so 37. Consequently, platforms must actively monitor and block VPN traffic to enforce regional laws, an undertaking that is computationally expensive and difficult to scale without inadvertently blocking legitimate, privacy-seeking users 2245.

Privacy and Data Security Trade-offs

The mandate for pervasive age verification has alarmed cybersecurity experts and privacy advocates, who argue that forcing populations to upload identification documents to private platforms creates systemic vulnerabilities. Digital rights organizations, such as the Electronic Frontier Foundation (EFF), contend that age verification mandates normalize mass surveillance, threaten the anonymity fundamental to a free internet, and ultimately harm user privacy 38.

If social media platforms centralize identity data, they become highly lucrative targets for cyberattacks and synthetic identity fraud 4939. To mitigate this, independent age-verification providers promote "zero-knowledge proof" systems and tokenized identity verification 40. Under a zero-knowledge architecture, the verification provider analyzes the biometric or documentary evidence and confirms to the social media platform that the user is over the legal age limit without transmitting the user's actual name, date of birth, or identification documents 22. While these cryptographic solutions offer a theoretical balance between safety and privacy, their widespread implementation remains fragmented across different platforms and national infrastructures, complicating compliance 41.
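The data-minimization idea behind tokenized verification can be sketched in a few lines: the provider signs a claim that contains only an over-threshold flag, and the platform checks the signature without ever seeing a name, birthdate, or document. This is a simplified illustration and explicitly not a zero-knowledge proof. It uses a shared-secret HMAC for brevity, whereas a real deployment would more plausibly use asymmetric signatures so that the platform could not mint tokens itself; all function and field names are hypothetical.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional


def issue_age_token(over_threshold: bool, threshold: int, key: bytes,
                    ttl_seconds: int = 300, now: Optional[float] = None) -> str:
    """Provider side: sign a claim that carries no identity data."""
    issued = time.time() if now is None else now
    claims = {"over": over_threshold, "threshold": threshold, "exp": issued + ttl_seconds}
    payload = base64.urlsafe_b64encode(json.dumps(claims, sort_keys=True).encode())
    signature = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return payload.decode() + "." + signature


def platform_accepts(token: str, threshold: int, key: bytes,
                     now: Optional[float] = None) -> bool:
    """Platform side: verify the signature, then read only the yes/no claim."""
    current = time.time() if now is None else now
    try:
        payload_b64, signature = token.split(".")
    except ValueError:
        return False  # malformed token
    expected = hmac.new(key, payload_b64.encode(), hashlib.sha256).hexdigest()
    # Constant-time comparison; reject before decoding anything unsigned.
    if not hmac.compare_digest(signature.encode(), expected.encode()):
        return False
    claims = json.loads(base64.urlsafe_b64decode(payload_b64))
    return (claims["over"] is True
            and claims["threshold"] == threshold
            and claims["exp"] > current)
```

The token payload holds three fields (`over`, `threshold`, `exp`) and nothing else, so a breach of the platform's token store would expose no identity documents.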

| Verification Method | Mechanism | Primary Advantage | Primary Vulnerability |
|---|---|---|---|
| Self-Declaration | User manually inputs birthdate. | Zero friction; highly accessible. | Easily bypassed; non-compliant with new regulations. |
| Facial Age Estimation | AI analyzes a live selfie to infer age probabilistically. | No document upload required; preserves relative anonymity. | Accuracy issues near the age threshold (e.g., distinguishing 15 from 16); vulnerable to spoofing. |
| Documentary Verification | User uploads a government ID or credit card token. | Highly accurate and deterministic. | Creates massive data privacy risks; excludes unbanked/undocumented users. |
| Zero-Knowledge Proofs | Third-party confirms age without sharing underlying identity data. | Balances high security with data minimization and privacy. | Complex to implement at scale; requires universal industry standardization. |

Epidemiological Evidence on Social Media and Mental Health

The legislative momentum driving social media bans is predominantly fueled by epidemiological data showing a precipitous decline in youth mental health starting around 2010 153. However, the scientific community remains deeply divided on whether social media is the primary causal agent of this crisis, or merely a correlational artifact of broader societal shifts.

Longitudinal Associations and Cognitive Impacts

The core argument supporting social media bans rests on massive datasets indicating that heavy digital media use correlates with depressive symptoms, behavioral problems, and anxiety. A comprehensive review published in JAMA Pediatrics analyzed data from hundreds of thousands of youths and found that social media use was associated with poorer outcomes across multiple developmental domains, particularly social-emotional development 1. Another cohort study of U.S. adolescents aged 12 to 15 demonstrated that teenagers spending more than three hours per day on social media faced double the risk of experiencing poor mental health outcomes, including symptoms of depression and anxiety 7.

Beyond emotional health, emerging research points to the cognitive impacts of early social media exposure. A study published in the Journal of the American Medical Association (JAMA) in October 2025 tracked a cohort of 9- to 13-year-olds, finding that those with rising levels of social media exposure performed more poorly on reading, memory, and vocabulary tests compared to peers with little to no exposure 42. Researchers suggest that flooding the highly plastic adolescent brain with rapid, fragmented digital input may prompt neurological pruning that makes the brain more amenable to digital consumption but less capable of sustained academic learning 42.

Researchers emphasize that the adolescent brain is uniquely vulnerable to the specific mechanics of social media. The period between ages 10 and 19 is characterized by heightened neuroplasticity, particularly in the amygdala (which handles emotional learning) and the prefrontal cortex (which governs impulse control and moderates social behavior) 7. During puberty, the brain is highly sensitive to social rewards, peer opinions, and social punishments 743. Algorithms optimized to maximize engagement frequently exploit these neurological vulnerabilities by delivering intermittent social rewards (likes, shares) or triggering the fear of missing out (FOMO) 79.

Crucially, the impact of social media is not uniform across all ages. A landmark U.K.-based longitudinal study analyzing over 17,000 participants identified specific "windows of developmental sensitivity." For females, higher estimated social media use predicted decreases in life satisfaction specifically at ages 11 - 13 and again at age 19. For males, these windows occurred later, at ages 14 - 15 and 19 44. The existence of these distinct, biologically linked developmental windows provides the strongest empirical rationale for delaying access until late adolescence.

The Causation vs. Correlation Debate

Despite the volume of associative data, the scientific consensus on causation remains highly fractured. Researchers such as Jonathan Haidt argue that the sudden introduction of smartphones and always-available social media in the early 2010s was a population-level "uncontrolled experiment" that directly caused the epidemic of youth mental illness 5345. Haidt points to direct harms - such as millions of teenagers experiencing online sexual harassment (sextortion) or cyberbullying - and indirect harms, such as severe sleep deprivation and the displacement of in-person socialization 4546. Haidt cites meta-analyses indicating that the odds of depression increase by approximately 13% for every additional hour per day of social media use, leading to massive cumulative risk for teenagers averaging over five hours daily 46.
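To make the "cumulative risk" arithmetic concrete, the per-hour figure can be compounded across daily hours of use. The sketch below assumes the 13% increase applies multiplicatively to the odds for each additional hour, as it would in a logistic model; the source does not state the functional form, so this is an interpretive assumption, and the function name is hypothetical.

```python
def cumulative_odds_ratio(hours_per_day: float, per_hour_increase: float = 0.13) -> float:
    """Odds of depression relative to zero hours of use, assuming the
    per-hour increase compounds multiplicatively (an assumption)."""
    if hours_per_day < 0:
        raise ValueError("hours_per_day must be non-negative")
    return (1.0 + per_hour_increase) ** hours_per_day
```

Under that assumption, five hours per day corresponds to an odds ratio of roughly 1.84 relative to no use. This is a ratio of odds rather than of probabilities, so it does not by itself mean the risk is 84% higher in absolute terms.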

Conversely, prominent psychologists and researchers, such as Candice Odgers and Christopher Ferguson, warn against drawing definitive causal conclusions from the existing literature 1147. A primary critique is that the vast majority of studies in this domain are cross-sectional and cannot determine the direction of the effect 1148. The "social compensation approach" suggests an alternative hypothesis: adolescents with pre-existing mental health difficulties, who are already experiencing offline interpersonal struggles, may disproportionately turn to social media for connection or distraction 49. Thus, depression may drive heavy social media use, rather than the reverse.

Furthermore, an umbrella review of meta-analyses published between 2021 and 2025 found that while problematic or addictive social media use was consistently linked to ill-being, general time spent on social media showed weak and inconsistent associations with mental health outcomes 62. Many studies demonstrate small effect sizes that are practically equivalent to the impacts of other common lifestyle variables, such as poor diet or lack of physical activity 1. Consequently, experts caution that focusing exclusively on social media bans may distract policymakers and parents from addressing the multi-faceted root causes of adolescent depression, such as economic stress, academic pressure, and lack of community resources 11.

Evaluating Outcomes: The Australian Implementation

Because theoretical debates surrounding social media bans are often abstract, researchers are closely monitoring Australia's pioneering under-sixteen ban to assess its real-world implementation and efficacy. Four months after the law took effect in December 2025, preliminary data reveals a landscape of logistical challenges, partial compliance, and mixed behavioral outcomes.

Compliance and Account Removals

Initial reports from the Australian eSafety Commissioner indicated that by mid-January 2026, technology platforms had removed, deactivated, or restricted more than 4.7 million accounts assessed to belong to users under sixteen 3450. While this figure suggests action at scale, regulatory audits quickly demonstrated that account removals do not equate to full compliance.

The eSafety Commissioner raised "significant concerns" about the poor practices of major platforms, noting that the rollout was slow, reactive, and plagued by systemic vulnerabilities 34. Platforms frequently relied on weak age verification flows, allowing users to repeatedly attempt different verification methods until they successfully regained access 64. Independent polling conducted by the Molly Rose Foundation in March 2026 underscored this failure: 61% of Australian 12-to-15-year-olds who previously held accounts on restricted platforms reported that they still possessed one or more active accounts, and 70% described circumventing the ban as "easy" 65.
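The retry weakness described above arises when failed attempts are not counted across verification methods, so a user can simply move from one method to the next. A minimal sketch of a shared failure budget follows; the class name, the default limit of three, and the idea of locking an account pending manual review are all illustrative assumptions rather than anything the regulator or any platform has specified.

```python
from collections import defaultdict


class VerificationAttemptLimiter:
    """Counts failed age checks per account across all methods combined."""

    def __init__(self, max_failures: int = 3) -> None:
        self.max_failures = max_failures
        self._failures = defaultdict(int)

    def record_failure(self, account_id: str, method: str) -> None:
        # The method is accepted for logging purposes, but failures share one
        # budget so switching methods does not reset the count.
        self._failures[account_id] += 1

    def may_attempt(self, account_id: str) -> bool:
        # Once the budget is spent, further automated attempts are refused.
        return self._failures.get(account_id, 0) < self.max_failures
```

A limiter like this addresses only repeated attempts on a single account; it does nothing about new accounts, borrowed credentials, or VPN use.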

Preliminary Behavioral and Psychological Outcomes

Despite the porous nature of the technological enforcement, surveys of Australian families suggest that the legislative intervention has forced a shift in domestic routines, yielding a highly complex reality.

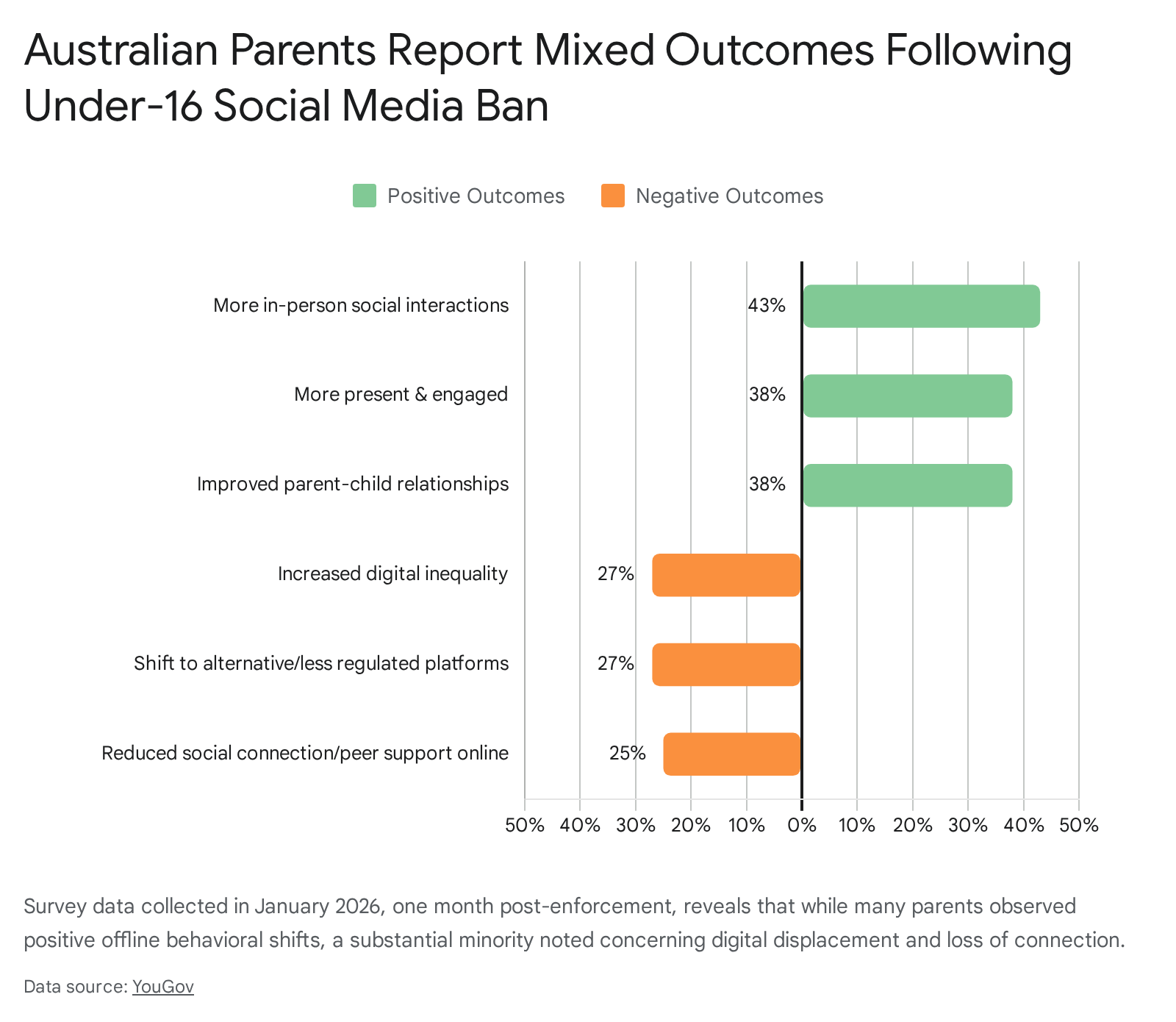

A YouGov survey from January 2026 found that among parents of children aged 16 and under, 61% observed positive behavioral changes, such as increased in-person interactions (43%) and improved parent-child relationships (38%) 5051.

However, the same survey noted significant negative impacts, with 27% of parents reporting their children had shifted to alternative or less regulated platforms, and 25% observing reduced social connection and peer support online 5051.

Crucially, the ban was not followed by a reduction in cyberbullying or image-based abuse complaints to the eSafety Commissioner in its initial months 3464. Among adolescents surveyed, 51% reported the ban made no difference to their online safety, and 14% stated they actually felt less safe 65. This early data indicates that while statutory bans can alter household habits and reduce overall screen time for some, they are insufficient as standalone tools for eliminating digital harm, particularly given the ease of technological circumvention.

Unintended Consequences of Access Bans

Given the ambiguous causal evidence and the immediate technical failures of blanket implementations, child rights advocates, digital sociologists, and international bodies like UNICEF have warned that sweeping social media bans introduce severe, unintended consequences 52. Treating digital platforms as harmful substances analogous to tobacco or alcohol fundamentally misrepresents how embedded the internet is within modern adolescent infrastructure 12.

Impacts on Marginalized and Isolated Adolescents

The most significant risk of a blanket ban is the severance of vital support networks for vulnerable and marginalized populations. For many adolescents - particularly LGBTQ+ youth, neurodivergent individuals, or those living in geographically isolated or conflict-affected areas - social media provides a critical lifeline 123952.

While a local school environment may be hostile or lacking in peers with shared experiences, digital spaces offer access to identity-affirming communities and health information 713. Research explicitly demonstrates that integration into digital social routines is positively associated with community connectedness and reduced suicide risk for marginalized youth 53. Banning access effectively removes these support systems without providing viable offline alternatives. As noted in a viewpoint paper in JMIR Mental Health, strict bans risk "instilling feelings of isolation" and disproportionately harming those who rely on digital platforms for their primary social connection 54.

Displacement to Unregulated Digital Spaces

Blanket restrictions on major, highly visible social media platforms (e.g., Instagram, TikTok) rarely extinguish the adolescent desire for digital socialization; instead, they often displace the behavior 12. When faced with rigid age gates on mainstream platforms, adolescents may migrate to less regulated platforms, encrypted messaging boards, or anonymous forums that lack robust content moderation, safety reporting tools, or age-appropriate safety guidelines 6452.

This displacement represents a significant public health hazard. Mainstream platforms, despite their flaws, are subject to public scrutiny, advertiser pressure, and regulatory transparency reporting. Darker, unregulated corners of the internet provide no such oversight, potentially exposing minors to more severe risks of grooming, unregulated explicit material, and radicalization 3452.

Arrested Development of Digital Literacy

A further unintended consequence of banning social media until an arbitrary age is the creation of a "developmental cliff." Digital literacy, emotional regulation online, and the capacity to identify misinformation are not innate skills; they are cultivated through guided practice and gradual exposure 3970.

When access is entirely removed, adolescents are deprived of the opportunity to navigate online environments under the supervision and guidance of parents or educators. As scholars of digital pedagogy argue, restriction without education leaves youth unprepared for a society fundamentally integrated with digital and artificial intelligence systems 71. When a sixteen-year-old is suddenly granted unrestricted access to a highly complex digital ecosystem without having practiced discernment in scaffolded environments, they may lack the critical judgment and self-regulation necessary to navigate manipulative design patterns or cyberbullying safely 70. Thus, absolute bans may ultimately delay, rather than prevent, digital harm.

Regulatory Alternatives to Access Bans

Recognizing the technical difficulties and potential unintended harms of blanket access bans, a growing coalition of policy researchers, technologists, and child development experts advocate for alternative regulatory paradigms. Rather than policing the age of the user, these frameworks propose regulating the design of the platform.

Algorithmic and Platform Design Modifications

The "Safety by Design" approach posits that the root cause of digital harm lies in business models optimized for endless engagement and data extraction. Organizations such as the Electronic Privacy Information Center (EPIC) and the 5Rights Foundation argue that platforms must be forced to abandon manipulative architectures that exploit adolescent psychology 555657.

Legislative frameworks pursuing this path, such as Age-Appropriate Design Codes, mandate that companies build child-centered protections into the fundamental architecture of their products 55. Key interventions under this model include:

- Algorithmic De-amplification: Prohibiting platforms from utilizing surveillance-based algorithmic feeds for minor accounts, thereby neutralizing the rabbit-hole effect of infinite, personalized scrolling 5575.

- Feature Disablement: Forcing platforms to turn off specific high-risk features for minors by default, such as autoplay mechanisms, push notifications during nighttime hours, and gamified engagement metrics 475.

- Privacy by Default: Ensuring that minor accounts default to the highest privacy settings, preventing location tracking, unwanted direct messaging from adults, and targeted advertising 75.

Proponents argue this approach is technologically neutral and more legally resilient than access bans. Because it restricts the behavior of the corporation rather than the speech rights of the adolescent, it avoids the severe First Amendment challenges that have plagued U.S. age-gating laws 1955. Furthermore, by mitigating the inherent toxicity of the platform, design regulations protect all users, acknowledging that algorithmic addiction and harmful content affect adults just as much as teenagers 439.

Educational Interventions and Emotion Regulation

In conjunction with platform design regulation, experts emphasize the necessity of proactively teaching digital resilience. If the ultimate goal is public health, policy must move beyond mere restriction to foster cognitive adaptability 70.

Clinical psychologists advocate for integrating digital literacy and emotion regulation training into early educational curricula. Rather than simply telling adolescents to disconnect, educators and parents must teach them to recognize manipulative design patterns, evaluate the credibility of digital information, and monitor their own emotional responses to online stimuli 4258. Research demonstrates that when adolescents are taught to identify when an online experience is hurting rather than helping, they are better equipped to self-regulate their usage and mitigate symptoms of anxiety 5859. By treating digital citizenship as a teachable competency, society can empower youth to navigate an increasingly AI-saturated world without succumbing to algorithmic dependencies 7071.

Conclusion

The global push to restrict adolescent access to social media represents a profound shift in internet governance, driven by legitimate and urgent concerns over a population-level decline in youth mental health. Epidemiological evidence points to early adolescence as a period of acute neurological vulnerability to the engagement mechanics embedded in modern digital platforms, although the causal role of social media remains contested. However, the assertion that delaying access through state-mandated bans offers a panacea to this crisis is not supported by the totality of the research.

Early evidence from nations implementing hard bans, such as Australia, reveals that enforcement is severely constrained by technological limitations, with age verification systems routinely bypassed by determined minors and collusive parents. Furthermore, sweeping restrictions carry documented risks: they threaten to sever crucial lifelines for marginalized youth, displace adolescent socialization into darker, unregulated digital environments, and arrest the development of essential digital literacy skills.

Global research suggests that the most effective path forward lies not in attempting to excise adolescents from the digital world, but in reforming the digital world to accommodate adolescents. Policymakers who transition their focus from user restriction to platform architecture - mandating algorithmic transparency, default privacy, and the elimination of compulsive design features - can fundamentally reduce digital harm. When combined with robust educational initiatives focused on emotion regulation and critical digital citizenship, this comprehensive approach offers a sustainable framework for protecting youth in an unavoidably digital future.