Early Classroom Evidence for AI Tutors in Childhood Learning

Introduction to Algorithmic Tutoring Systems

The integration of artificial intelligence into primary and secondary education represents a fundamental shift in pedagogical methodology, moving digital education from passive content delivery to interactive, adaptive instruction. The pursuit of personalized education has long been shaped by Benjamin Bloom's 1984 "two-sigma" problem: the average student receiving individualized human tutoring performed about 2.0 standard deviations above the average classroom-instructed student, outperforming roughly 98% of those peers 12. While scaling one-on-one human tutoring remains cost-prohibitive for most public education systems worldwide, the rapid advancement of large language models (LLMs) has introduced generative artificial intelligence as a scalable alternative designed to simulate personalized instructional dialogue 345.
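The link between a two-standard-deviation gain and the 98% figure can be checked with a short calculation. The sketch below assumes that scores in both groups are normally distributed with equal variance, which is a simplification rather than a claim from Bloom's study; the function name is illustrative.

```python
from statistics import NormalDist


def percentile_of_average_treated_student(effect_size_sd: float) -> float:
    """Percentile rank, within the comparison group, of the average treated student.

    Assumes normally distributed scores with equal variance in both groups.
    """
    return 100 * NormalDist().cdf(effect_size_sd)


# 2.0 is Bloom's benchmark; the smaller values are illustrative effect sizes.
for effect_size in (2.0, 0.36, 0.20):
    rank = percentile_of_average_treated_student(effect_size)
    print(f"{effect_size:.2f} SD -> {rank:.1f}th percentile")
```

Under these assumptions a 2.0 SD gain places the average tutored student near the 98th percentile of the comparison group, while effects of a few tenths of a standard deviation correspond to much smaller shifts in rank.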

Recent evidence spanning from 2024 to 2026 indicates that artificial intelligence tutoring systems are moving beyond experimental pilot phases into widespread classroom deployment. By the 2024 - 2025 school year, student adoption of generative tools in the United States reached nearly 60%, with analogous usage spikes globally across high-income and low-income demographics 67. Unlike prior iterations of intelligent tutoring systems (ITS) that relied on rigid decision trees and predefined pathways, modern generative systems process natural language to interpret student misconceptions, adapt pacing dynamically, and generate customized, context-aware feedback 8910.

Despite these advanced technological capabilities, early classroom evidence reveals a highly complex reality. The efficacy of an artificial intelligence tutor is not determined solely by the underlying model's computational parameters, but rather by its pedagogical design, its integration with existing curricula, and the presence of human oversight. Unrestricted access to general-purpose conversational agents has been shown to induce "metacognitive laziness," where students bypass critical problem-solving steps to arrive at immediate answers 11. Conversely, specialized models engineered with strict instructional guardrails demonstrate measurable improvements in reading fluency, mathematical comprehension, and conceptual mastery 121314. This report synthesizes recent randomized controlled trials, global implementation data, and demographic surveys to evaluate how artificial intelligence is altering childhood learning, examining the pedagogical mechanisms at play, the measurable empirical outcomes, and the structural inequities shaping its deployment.

Pedagogical Mechanisms and Cognitive Scaffolding

Integration with the Zone of Proximal Development

The effectiveness of artificial intelligence tutors is deeply rooted in established learning sciences, particularly Lev Vygotsky's sociocultural theory and the concept of the Zone of Proximal Development (ZPD) 1516. The ZPD defines the cognitive space between what a learner can achieve independently and what they can achieve with targeted guidance. Human educators organically scaffold learning within this zone by providing hints, restructuring complex problems, and progressively fading assistance as the student's competence grows 1617.

Emerging research demonstrates that LLMs can be successfully prompted to simulate this cognitive scaffolding. A 2025 systematic evaluation framework operationalized this capability, showing that AI systems can activate a student's prior knowledge and calibrate assistance based on real-time conversational inputs 8. When an AI tutor operates successfully within the ZPD, it does not merely output direct answers; instead, it provides structured reasoning steps that help users develop internalized problem-solving frameworks 17. In special education and vocational training contexts, AI-driven adaptive platforms have been shown to facilitate differentiated instruction and collaborative learning, accommodating varied cognitive paces that human teachers in large classrooms struggle to manage consistently 1518.
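The idea of calibrating and then fading assistance can be illustrated with a very small rule. This is a hypothetical sketch, not the logic of any system cited here: the hint levels, the function name `next_hint_level`, and the one-step adjustment are all assumptions chosen for clarity.

```python
# Hypothetical hint levels, from no help (0) to the most explicit help (3).
HINT_LEVELS = {
    0: "no hint; the student works independently",
    1: "a guiding question",
    2: "a targeted hint about the next step",
    3: "a worked example of a similar problem",
}


def next_hint_level(current_level: int, was_correct: bool, max_level: int = 3) -> int:
    """Fade support by one level after a correct answer, add one after an error."""
    if was_correct:
        return max(0, current_level - 1)
    return min(max_level, current_level + 1)


# A student who errs once and then answers correctly three times in a row.
level = 1
for outcome in (False, True, True, True):
    level = next_hint_level(level, outcome)
    print(level, "->", HINT_LEVELS[level])
```

A real tutor would draw on much richer signals than a single correct or incorrect flag, but the sketch shows the basic shape of support that grows after errors and fades as competence increases.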

However, AI integration also introduces distinct risks to cognitive development when scaffolding is improperly applied or absent. Studies indicate that when learners are presented with AI-generated solutions without having to engage in problem decomposition, debugging, or validation cycles, they process the material only shallowly 16. This phenomenon, often termed "cognitive offloading," produces strong immediate task outputs but weakens the student's long-term knowledge transfer and independent problem-solving capacities 911. The OECD Digital Education Outlook 2026 highlights this dynamic, noting that offloading cognitive work to chatbots enhances short-term task performance, but that these gains frequently reverse when AI access is removed during unassisted examinations 1119.

Socratic Questioning Versus Direct Answer Generation

To mitigate the risks of cognitive offloading, developers of educational AI have increasingly turned to Socratic questioning mechanisms. This pedagogical approach programs the LLM to refuse direct requests for answers, forcing it to reply with guiding questions, contextual hints, and counter-inquiries that demand active student engagement.
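One common way to approximate this behavior is a system prompt that instructs the model to withhold final answers. The sketch below is an assumption-laden illustration: the rule text, the function names, and the role/content message layout (a widely used chat convention) are hypothetical and are not taken from any product discussed in this report.

```python
# Hypothetical instruction text; real products use their own, unpublished prompts.
SOCRATIC_RULES = (
    "Never state the final answer. "
    "Reply with one guiding question or one small hint at a time. "
    "Ask the student to explain their reasoning before moving on."
)


def build_tutor_messages(student_message: str, subject: str) -> list[dict[str, str]]:
    """Assemble a chat-style message list that frames the model as a Socratic tutor."""
    system_prompt = f"You are a patient {subject} tutor. {SOCRATIC_RULES}"
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": student_message},
    ]


def looks_like_guiding_question(reply: str) -> bool:
    """Crude check (an assumption): a Socratic reply should end with a question."""
    return reply.strip().endswith("?")


messages = build_tutor_messages("What is 1/2 + 1/3?", "mathematics")
print(messages[0]["content"])
print(looks_like_guiding_question("What do the two denominators have in common?"))
```

A prompt alone does not guarantee compliance, so a deployed system would need stronger checks than the simple question-mark test shown here.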

A prominent example of this design is the CS50 Duck, a virtual tutor deployed in Harvard University's introductory programming courses and related secondary-school extensions 2021. Designed specifically to avoid full code generation, the CS50 Duck utilizes a retrieval-augmented generation (RAG) architecture grounded exclusively in official course materials 21. The system encourages algorithmic thinking through hints and Socratic prompts. Evaluative studies from 2025 and 2026 indicate that students utilize the tool primarily for conceptual understanding and implementation guidance rather than direct solution copying, with the model achieving an 88% accuracy rate on curriculum-specific questions 2022. Similar systems, such as the Iris virtual tutor, leverage chain-of-thought prompting alongside an awareness of the student's current workspace to adapt advice and promote independent problem-solving 23.
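The retrieval step of a RAG design can be sketched in a few lines. This is not the CS50 Duck's implementation: the course notes, the word-overlap scoring, and every name below are hypothetical stand-ins, and production systems typically rely on embedding-based search rather than keyword matching.

```python
import re

# Hypothetical course snippets standing in for official course materials.
COURSE_NOTES = {
    "loops": "A for loop repeats a block of code a fixed number of times.",
    "pointers": "A pointer stores the memory address of another variable.",
    "arrays": "An array stores values of the same type in contiguous memory.",
}


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of alphabetic words."""
    return set(re.findall(r"[a-z]+", text.lower()))


def retrieve(question: str, notes: dict[str, str], k: int = 1) -> list[str]:
    """Return the k snippets sharing the most words with the question."""
    question_words = tokenize(question)
    ranked = sorted(
        notes.values(),
        key=lambda snippet: len(question_words & tokenize(snippet)),
        reverse=True,
    )
    return ranked[:k]


def build_grounded_prompt(question: str, notes: dict[str, str]) -> str:
    """Place retrieved course text ahead of the question so answers stay grounded."""
    context = "\n".join(retrieve(question, notes))
    return (
        "Use only the course material below. Offer hints, not full solutions.\n"
        f"Course material:\n{context}\n"
        f"Student question: {question}"
    )


print(build_grounded_prompt("What does a pointer store in memory?", COURSE_NOTES))
```

Restricting the context to vetted course text is what keeps the tutor's guidance aligned with the curriculum instead of the model's general training data.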

Khan Academy's Khanmigo similarly relies on a strict Socratic foundation. Engineered using GPT-4, Khanmigo acts as a conversational partner across various subjects, including mathematics and the humanities 222. When a student asks how to solve a fraction addition problem, the system responds by asking the student to identify the common denominator, walking them sequentially through the logical steps rather than calculating the sum 2. Independent evaluations note that students appreciate the step-by-step guidance and perceive the tool positively as a supplementary learning aid, though qualitative findings emphasize that learners do not view it as a complete replacement for traditional human instruction 25.

The Efficacy of Access Constraints

Classroom evidence suggests that the specific mode of access to an AI tutor dramatically alters student behavior and subsequent learning outcomes. A 2025 randomized experiment involving 334 higher-education students explored the differences between restricted and unrestricted AI access during independent study 13. The study initially hypothesized that forcing students to read textbook materials independently before unlocking the AI tutor (restricted access) would prevent premature reliance and encourage deeper initial processing.

Contrary to expectations, students with unrestricted access to the AI tutor significantly outperformed both the control group and the restricted-access group, with test performance 0.34 standard deviations above the control 13. Behavioral analysis revealed that continuous, unrestricted availability allowed students to integrate the AI into their learning flow, using it for immediate, adaptive feedback to clarify concepts iteratively as they read. In contrast, restricted access induced "intensive bursts of prompting" that disrupted the learning flow, as students initiated heavy AI usage immediately upon gaining access while ignoring the primary text 13. This causal evidence suggests that AI tutors function best as adaptive scaffolds aligned with self-regulated engagement, rather than as strictly gated rewards.

Empirical Impact on Learning Outcomes

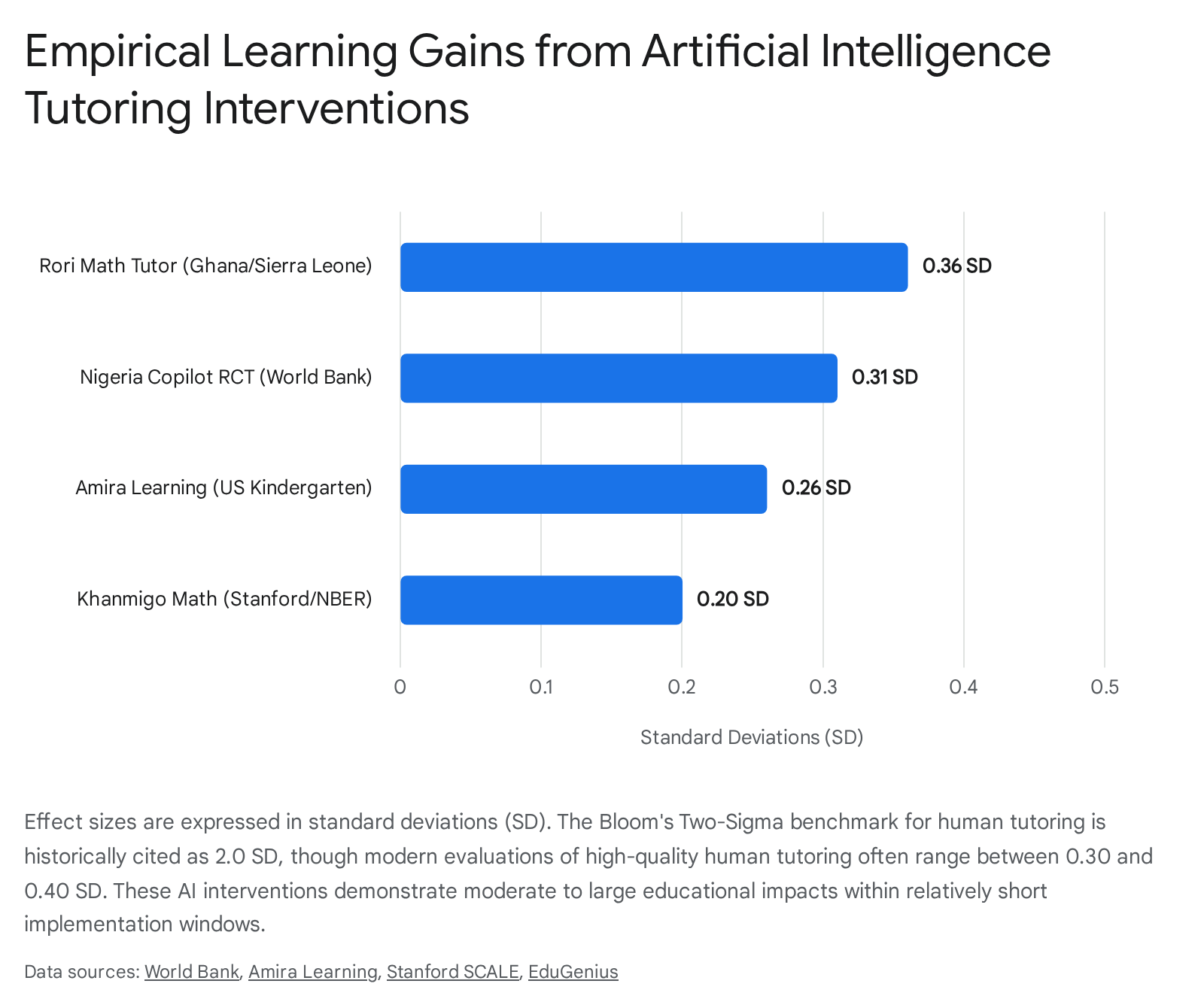

Across diverse educational settings, randomized controlled trials (RCTs) and large-scale matched comparison studies have quantified the impact of AI tutors on academic performance. The data consistently demonstrates that well-designed AI interventions can yield statistically significant learning gains, though the magnitude of these gains varies by subject, duration, and implementation model.

Literacy and Language Acquisition

In early childhood literacy, artificial intelligence has been successfully utilized to deliver high-dosage, individualized reading practice through voice recognition and adaptive tutoring protocols. Amira Learning, an AI reading coach developed in conjunction with literacy researchers, evaluates students' oral reading fluency by listening to them read aloud and providing real-time, targeted phonetic and vocabulary interventions when they struggle 23.

During the 2024-2025 academic year, a matched comparison study in a large urban Texas school district evaluated the platform's impact on 15,424 kindergarten and first-grade students 24. The study matched treatment and control students on beginning-of-year reading percentile scores (using the DIBELS 8th Edition assessment), socioeconomic status, race/ethnicity, and special education designations. Results indicated that kindergarten students using Amira scored approximately 8 percentile points higher on end-of-year assessments than matched controls, a statistically significant effect size of +0.26 standard deviations 24. First graders saw a smaller, yet still significant, effect size of +0.06 SD. Similarly, studies of middle school cohorts observed that sixth graders using the platform for 20 to 30 minutes weekly gained 6 to 7 percentile points more than low-usage peers across consecutive years, an effect size between 0.35 and 0.40 14. This evidence earned the platform a Moderate (Tier 2) evidence rating under the Every Student Succeeds Act (ESSA) standards 2324.
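Effect sizes such as those reported here are standardized mean differences. The sketch below computes one common version, Cohen's d with a pooled standard deviation; whether the cited studies used this exact estimator is an assumption, and the scores are invented purely for illustration.

```python
from math import sqrt
from statistics import mean, stdev


def cohens_d(treatment: list[float], control: list[float]) -> float:
    """Standardized mean difference using the pooled sample standard deviation."""
    n_t, n_c = len(treatment), len(control)
    pooled_variance = (
        (n_t - 1) * stdev(treatment) ** 2 + (n_c - 1) * stdev(control) ** 2
    ) / (n_t + n_c - 2)
    return (mean(treatment) - mean(control)) / sqrt(pooled_variance)


# Invented end-of-year scores for two small matched groups (not study data).
treated_scores = [52.0, 61.0, 58.0, 70.0, 66.0]
control_scores = [50.0, 57.0, 55.0, 68.0, 62.0]
print(f"d = {cohens_d(treated_scores, control_scores):.2f}")
```

Because the difference in means is divided by the spread of scores, results from different assessments and grade levels can be compared on a common scale.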

Mathematics Performance and Subject-Specific Tutoring

Mathematics, characterized by highly structured knowledge domains and algorithmic logic, has proven exceptionally responsive to AI-driven interventions 9. The Rori virtual math tutor, deployed primarily via WhatsApp in Sub-Saharan Africa, provides a compelling case study of subject-specific AI tutoring delivered in low-resource environments. Rori provides students with level-matched math micro-lessons and utilizes LLMs to interpret student responses, providing step-by-step hints and targeted socio-emotional coaching designed to foster a growth mindset 252627.

A preliminary evaluation conducted by researchers from the University of Oxford and J-PAL over an eight-month period evaluated Rori's impact on 1,000 students in grades 3 through 9 across 11 schools in Ghana 2829. Students in the treatment group were granted access to a mobile device for one hour a week during study hall to use Rori independently. The study observed markedly higher math scores for the treatment group, establishing an effect size of 0.36 standard deviations 2829. In educational contexts, this magnitude of impact is considered substantial and is roughly equivalent to an additional year of standard learning gains 2629.

Similarly, an independent Stanford/NBER evaluation of Khan Academy's Khanmigo mathematics tutoring found a 0.20 standard deviation improvement over control groups 2. While this falls well short of the 2.0 standard deviation benchmark historically associated with individualized human tutoring, the low cost and scalability of AI tutors offer a pragmatic route to closing mathematical achievement gaps globally 2.

Enhancing and Scaling Human Expertise

A distinct architectural approach focuses on using artificial intelligence to augment, rather than replace, human educators. Research indicates that the integration of human tutors and AI backend support may be the most effective structural model, promoting higher knowledge transfer and engagement while retaining the crucial relational elements of teaching 303132.

The "Tutor CoPilot" system, evaluated in a randomized controlled trial by Stanford University researchers involving 900 remote tutors and 1,800 K-12 students from historically underserved communities, exemplifies this hybrid model 533. Instead of interacting directly with the student, Tutor CoPilot acts as a behind-the-scenes pedagogical assistant for the human tutor. The system leverages a "model-of-expert-thinking" (the Bridge method) to analyze ongoing chat conversations and suggest effective pedagogical responses 531. When a student makes a mathematical error, the system prompts GPT-4 to generate three potential responses based on 11 distinct high-quality instructional strategies (e.g., "provide a hint," "ask a guiding question") 34. The human tutor retains complete agency, selecting, editing, or regenerating the response before sending it to the student 535.
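The human-in-the-loop flow described above can be outlined as follows. This is a hypothetical sketch rather than Tutor CoPilot's code: the generator is a stub standing in for a language model call, only the first two strategy names come from the text, and the third is a placeholder.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# The first two names appear in the text; the third is a placeholder assumption.
STRATEGIES = [
    "provide a hint",
    "ask a guiding question",
    "ask the student to explain their reasoning",
]


@dataclass
class Suggestion:
    strategy: str
    text: str


def stub_generate(strategy: str, student_message: str) -> str:
    """Stand-in for a language model call; returns a labeled placeholder reply."""
    return f"[{strategy}] Let's look again at: {student_message}"


def suggest_responses(
    student_message: str,
    generate: Callable[[str, str], str],
    strategies: list[str],
    n: int = 3,
) -> list[Suggestion]:
    """Draft one candidate reply for each of the first n strategies."""
    return [Suggestion(s, generate(s, student_message)) for s in strategies[:n]]


def finalize(
    suggestions: list[Suggestion], choice: int, edited_text: Optional[str] = None
) -> str:
    """The human tutor picks a suggestion and may overwrite it before sending."""
    return edited_text if edited_text is not None else suggestions[choice].text


candidates = suggest_responses("3/4 + 1/4 = 4/8", stub_generate, STRATEGIES)
print(finalize(candidates, choice=1))
```

Nothing reaches the student until `finalize` is called with the tutor's choice, which mirrors the design principle that the human retains control over every message.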

The study demonstrated that students whose tutors had access to Tutor CoPilot were 4 percentage points more likely to master session topics and pass their exit tickets 45. Most notably, the intervention disproportionately benefited less experienced and lower-rated tutors, helping them achieve outcomes comparable to highly effective peers. Students of lower-rated tutors experienced a 9 percentage point increase in topic mastery, and students of less experienced tutors saw a 7 percentage point gain 436. Textual analysis of over 350,000 messages revealed that Tutor CoPilot successfully shifted tutor behavior; treatment tutors were 10% more likely to prompt students to explain their reasoning and significantly less likely to rely on generic praise or provide direct answers 432. At an estimated API cost of just $20 per tutor annually, the system provides a highly scalable form of real-time, embedded professional development 435.

A parallel trial evaluated "LearnLM," an AI system developed by Google and Eedi Labs. Tested on 165 students ages 13 to 15 in the UK, the system provided AI-generated responses that were reviewed by a supervising human tutor 32. Tutors approved 76.4% of LearnLM's responses with little to no editing. Students tutored by LearnLM under human oversight were more likely to transfer their learning to distinct, subsequent topics (a 66.2% success rate) than those receiving help from unassisted human tutors (60.7%), a difference of 5.5 percentage points 332.
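Results like these are easy to misread because a gap in percentage points is not the same as a percent change. The short sketch below applies both calculations to the 66.2% and 60.7% transfer rates quoted above; the function names are illustrative.

```python
def percentage_point_gap(treatment_rate: float, control_rate: float) -> float:
    """Absolute difference between two rates that are expressed in percent."""
    return treatment_rate - control_rate


def relative_change_percent(treatment_rate: float, control_rate: float) -> float:
    """Difference expressed as a percentage of the control rate."""
    return 100 * (treatment_rate - control_rate) / control_rate


gap = percentage_point_gap(66.2, 60.7)
relative = relative_change_percent(66.2, 60.7)
print(f"{gap:.1f} percentage points, or about {relative:.1f}% relative to control")
```

The same distinction applies to the Tutor CoPilot results, which are reported in percentage points of topic mastery rather than as relative percent increases.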

| AI Tutoring Model | Primary Region | Delivery Mechanism | Pedagogy / Target Area | Measurable Empirical Impact |
|---|---|---|---|---|
| Amira Learning | North & Latin America | Direct-to-Student Voice Recognition | Early Literacy / Oral Reading Fluency | +0.26 SD in Kindergarten reading; +0.35 to +0.40 effect size for middle schoolers 1424. |
| Microsoft Copilot (World Bank RCT) | Nigeria | Direct-to-Student with Teacher Oversight | English Language & AI Literacy | +0.31 SD overall composite improvement, equating to roughly 1.5 to 2 years of progress 1. |
| Rori Virtual Tutor | Ghana, Sierra Leone | Direct-to-Student Mobile Chatbot | Mathematics / Socio-Emotional Coaching | +0.36 SD in math scores; operates on low-bandwidth WhatsApp at ~$5 per student 29. |
| Tutor CoPilot | United States | Tutor-Facing Backend API | Mathematics / Teacher Augmentation | +4 percentage points in student mastery overall; +9 percentage points for students with lower-rated human tutors 4. |
| LearnLM | United Kingdom | Human-Supervised Chatbot | General Subject Transfer Learning | +5.5 percentage points in learning transfer to distinct subsequent topics relative to human-only tutoring 3. |

Global Implementation and Equity Disparities

The rollout of artificial intelligence in education is highly fractured along regional, infrastructural, and socioeconomic lines. Global data from 2023 indicates that 47% of academic institutions in high-income countries had implemented AI-driven tools, compared to merely 8% in low-income countries 40. This gap threatens to exacerbate existing educational disparities, particularly the gender digital divide and foundational literacy crises in the Global South 3738.

Interventions in the Global South

In response to the learning crisis in Sub-Saharan Africa - where an estimated 86% of ten-year-olds remain unable to read and understand simple texts - international organizations and local ministries are actively exploring AI as an accelerator for foundational literacy and numeracy (FLN) 25. A 2025 UNESCO Spotlight Report emphasizes that generative AI can shift systems from isolated pilot gains to sustained progress if deployed to accelerate proven pedagogical practices rather than attempting to substitute them entirely 25.

A landmark 2024 randomized controlled trial conducted by the World Bank in Edo State, Nigeria, evaluated an after-school program where first-year senior secondary students used Microsoft Copilot as a virtual English tutor 112. The program used explicit prompt engineering to ensure the AI acted as a Socratic facilitator rather than an answer key, and it maintained human oversight by having teachers monitor the 90-minute paired-student sessions 1. Over just six weeks, the program achieved learning gains of 0.31 standard deviations on a composite assessment of English, AI knowledge, and digital skills, with English specifically improving by 0.23 standard deviations 1. These gains are reported as equivalent to 1.5 to 2 years of typical regional schooling progress, and the intervention proved particularly beneficial for female students, directly addressing the gender digital divide 125.

In Southeast Asia, adoption is advancing rapidly through policy frameworks. The Ministry of Education and Training in Vietnam has introduced a pilot curriculum framework for AI education slated for national rollout in early 2026. The curriculum relies heavily on project-based learning to teach human-centered AI design, ethics, and practical applications, explicitly transitioning students from passive users of technology to informed creators 39. Across Latin America, tools like EdutekaLab utilize generative AI to personalize learning paths and create adaptive assessments to combat the region's rigid teaching methods, where 30% of students currently fail to meet basic proficiency levels 40.

The Infrastructure Barrier and Digital Divides

Despite successful pilots, scalable AI implementation in the Global South and rural areas is fundamentally constrained by physical infrastructure. A 2025 World Bank analysis of Latin American higher education identified that AI initiatives consistently stall or fail in institutions lacking basic internet connectivity, raw computing power, and student device access 4142. Globally, only 40% of primary schools and 65% of upper secondary schools have internet access, making cloud-reliant LLMs inaccessible to millions 43.

To circumvent these structural barriers, developers are pursuing low-bandwidth and offline solutions. The Rori math tutor operates over the WhatsApp messaging protocol, minimizing data requirements and functioning effectively on basic mobile devices without demanding broadband internet 29. In Guatemala City, educational programs are piloting "Edge AI" solutions that run Small Language Models (SLMs) directly on low-cost devices without requiring any internet access, providing individualized lessons in Spanish, English, and math 25. Furthermore, pan-African linguistic collectives like Masakhane are building open-source datasets for African languages to ensure AI models can accurately process local speech and code-switching, resisting the dominance of imported, Western-centric training data 2544.

Educator Readiness and Pedagogical Shifts

The rapid, largely unregulated integration of generative AI into classrooms has outpaced the development of district policy frameworks, leading to significant unease among educators and parents. Surveys conducted throughout 2024 and 2025 reveal an educational landscape defined by cautious optimism intertwined with profound concerns regarding academic integrity, equity, and the erosion of fundamental cognitive skills.

Teacher Preparedness and Administrative Workload

While early public discourse perpetuated the myth that AI would universally replace teachers, research has consistently debunked this, framing AI instead as a tool that fundamentally shifts the teacher's role 4546. Educators are increasingly expected to transition from primary knowledge transmitters to learning facilitators, dialogue managers, and AI-literacy coaches - a transition that requires substantial, ongoing professional development 1047.

Survey data indicates a widespread lack of systemic preparedness. A 2025 public opinion poll found that 67% of Americans fear teachers are not ready for AI, a sentiment echoed internally by 70% of teachers and administrators who admit they do not feel adequately trained to manage AI responsibly in their classrooms 52. Despite this pervasive anxiety, actual teacher adoption is remarkably high. The 2024 OECD TALIS survey reported that 37% of lower secondary teachers use AI in their daily work, with 57% utilizing it specifically to draft or improve lesson plans 1119. Tools like EduGenius and MagicSchool allow teachers to automate the generation of differentiated worksheets, translation scaffolds, and rubrics, reportedly saving educators an average of 5.9 hours per week 2625.

However, while teachers appreciate the administrative relief, academic integrity remains a critical sticking point. OECD data indicates that 72% of teachers express acute concerns regarding academic integrity, fearing students will pass off AI-generated work as their own 19. Similarly, a Carnegie Learning survey highlighted that the number of educators who have experienced students cheating with AI grew from 53% in 2024 to 61% in 2025, underscoring the urgent need for robust assessment redesign rather than reliance on easily bypassed AI-detection tools 48.

The Socioeconomic and Demographic Divide in Adoption

Parental awareness and student access to AI tools display stark socioeconomic and demographic divisions. A spring 2025 survey by the Center for Applied Research in Education (CARE) at the University of Southern California highlighted a severe institutional communication gap: 96% of families with elementary-aged children and 83% of families with secondary students reported that their schools had not communicated any formal AI policies 49.

In the absence of systemic guidance and equitable provision, AI usage is rapidly stratifying by household income. The CARE survey revealed that over 40% of the highest-income parents reported their teenagers using AI for schoolwork, compared to just 19% of the lowest-income families 49. This 24-percentage-point economic gap in 2025 represents a doubling of the disparity observed just a year prior 49. Furthermore, access varies significantly by school governance type. Nearly half (48%) of private school parents reported that AI programs are formally integrated into their children's classes, compared to only 19% of public school parents 50.

| Demographic / Group | Sentiment or Data Point on AI in Education | Implications for Policy & Practice |
|---|---|---|
| High-Income Families | >40% report their teens using AI for schoolwork 49. | Rapidly growing AI literacy advantage; out-of-school access drives the "new digital divide." |
| Low-Income Families | 19% report their teens using AI for schoolwork 49. | 24-point usage gap threatens to leave under-resourced students behind in future job markets. |
| Private School Parents | 48% state AI programs are actively used in classrooms 50. | Private institutions are adopting technology faster, widening the public/private resource gap. |
| Public School Parents | 19% state AI programs are actively used in classrooms 50. | Bureaucratic hurdles and infrastructure deficits limit public school integration. |
| Teachers | 70% feel inadequately prepared to use AI; 72% worry about cheating 1952. | Urgent need for professional development focusing on AI literacy and alternative assessment design. |

Parental attitudes regarding the educational value of AI remain highly polarized. Polling data indicates that while 50% of parents believe AI can actively help their children learn, 61% fear it will irrevocably harm critical thinking skills, and 25% support a complete ban of the technology in schools 4951. Additionally, reflecting deep concerns over corporate data harvesting, 66% of parents demand that schools secure explicit, opt-in consent before exposing any student data to commercial AI platforms 52.

Ethical Considerations and Developmental Limits

The deployment of LLMs in K-12 education raises distinct ethical dilemmas that are rarely encountered in adult or corporate AI usage. These include navigating strict data privacy regulations, mitigating the hazards of algorithmic hallucinations, and understanding the unique developmental limitations of young children interacting with autonomous conversational agents.

Data Privacy and Security for Minors

AI systems fundamentally rely on massive data ingestion, raising severe privacy concerns when that data belongs to legally protected minors 52. In the United States, the Children's Online Privacy Protection Act (COPPA) strictly governs the collection of personal information from children under the age of 13, requiring verifiable parental consent, clear explanations of data use, and rigid limits on data retention 5354. In the European Union, the General Data Protection Regulation (GDPR) mandates even stricter safeguards, explicitly recognizing that children are less aware of the risks and consequences of data processing 5455.

Compliance with these frameworks is logistically difficult for schools utilizing off-the-shelf commercial LLMs. Standard consumer models routinely collect IP addresses, device types, geographical locations, and the granular contents of user prompts, which can inadvertently capture sensitive student information, emotional vulnerabilities, or behavioral data 52. To address this, privacy-by-design (PbD) frameworks urge educational technology developers to limit data retention, anonymize inputs before they reach the server, and explicitly prevent student data from being used to train future commercial models 54. Tools explicitly built for education, such as Stanford's Tutor CoPilot, utilize open-source libraries (like Edu-ConvoKit) to automatically redact student and tutor names before transmitting context to external language models, ensuring privacy compliance 534.
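Name redaction before transmission can be illustrated with a small roster-based filter. This sketch does not use or reproduce the Edu-ConvoKit API; the function, the placeholder token, and the assumption that a class roster of names is available are all hypothetical.

```python
import re


def redact_names(text: str, names: list[str], placeholder: str = "[NAME]") -> str:
    """Replace whole-word occurrences of known names before text leaves the school."""
    if not names:
        return text
    # Longer names come first so that "Maria Lopez" is matched before "Maria".
    ordered = sorted(names, key=len, reverse=True)
    pattern = re.compile(
        r"\b(" + "|".join(re.escape(name) for name in ordered) + r")\b",
        re.IGNORECASE,
    )
    return pattern.sub(placeholder, text)


roster = ["Maria Lopez", "Maria", "Lee"]  # hypothetical class roster
message = "Hi Maria Lopez, this is Mr. Lee. Did Maria finish problem 3?"
print(redact_names(message, roster))
```

A roster lookup only catches names that are known in advance, so it would be one layer among several rather than a complete safeguard for student privacy.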

Algorithmic Bias and Hallucinations

Generative AI models are prone to "hallucinations" - the authoritative generation of plausible but factually incorrect information. In an educational context, hallucinations undermine the fundamental goal of imparting reliable, quality knowledge 56. While search-augmented models have improved accuracy (reaching roughly 80% parity with specialized legal or medical tools in some domains), recent evaluations indicate that advanced reasoning models still generate false statements during complex, multi-step reasoning tasks 57.

Beyond factual errors, the biases inherent in an LLM's vast training data present significant socio-emotional risks. Research has documented that prominent image and text generators frequently amplify gender and racial stereotypes 5763. For instance, a 2025 study in Scientific Reports found that Stable Diffusion homogenized depictions of Middle Eastern men, assigning them traditional cultural attributes regardless of their prompted professional context, while UNESCO analyses found LLMs describing women in domestic roles four times more often than men 57. When young students, still in vital developmental stages, interact with biased systems, they risk internalizing these societal prejudices 56. Furthermore, using generative AI for personal queries - such as a student seeking advice for an ongoing mental health crisis - can result in the AI replicating harmful internet misunderstandings or providing dangerous advice, emphasizing why AI must never replace human counselors or empathetic adult intervention 56.

Developmental Limitations in Early Childhood

The interaction paradigms designed for adult users or secondary students are often entirely inappropriate for early childhood education (ages 3 to 6). Developmental psychology indicates that children in this age bracket experience intense situational emotions but lack the verbal capacity, abstract thinking, and self-regulation skills required to independently converse with a text-based LLM 6465.

Recent human-computer interaction (HCI) research emphasizes that early childhood AI tools must be strictly parent-mediated. Tools like the PACEE system are co-designed with kindergarten teachers to circumvent the communication barriers between parents and children. Rather than replacing the parent with an autonomous chatbot, the AI acts as a backend coach for the adult, using visual aids and suggesting age-appropriate phrasing to help the parent guide the child through emotional or educational hurdles 6465. Experts uniformly warn that assuming young children can engage with AI autonomously fails to recognize their cognitive limits, reaffirming that parental agency, physical safety protocols, and human attachment remain the central, irreplaceable anchors of early childhood development 6458.

Conclusion and Policy Implications

Early classroom evidence demonstrates that artificial intelligence tutors hold substantial promise for improving educational outcomes, provided they are deployed as carefully designed pedagogical scaffolds rather than unrestricted computational crutches. Interventions like Amira Learning and the Rori math tutor have proven that AI can deliver cost-effective, high-dosage tutoring in foundational literacy and mathematics, achieving measurable standard-deviation gains that are highly significant in both high-resource and low-resource environments. Concurrently, platforms like Tutor CoPilot reveal the profound potential of AI to augment human educators, scaling expert instructional strategies to less experienced teachers without severing the vital human connection in the classroom.

However, the realization of this potential is currently threatened by systemic inequalities and ethical vulnerabilities. The data reveals a rapidly widening digital divide where high-income and private school students gain fluency in AI utilization while under-resourced public systems struggle with basic infrastructure, hardware deficits, and policy formulation. Furthermore, the inherent risks of data privacy violations, algorithmic bias, and cognitive offloading require immediate regulatory attention.

Moving forward, educational ministries and school districts must transition from reactive bans to proactive, structured integration. This requires robust investment in teacher professional development, the establishment of clear ethical guidelines protecting student data in compliance with COPPA and GDPR, and the procurement of AI systems explicitly engineered for pedagogy rather than general corporate use. Ultimately, the successful integration of AI in childhood education will not be defined by the obsolescence of human teachers, but by the thoughtful calibration of human-machine collaboration to foster independent, critical, and equitable learning.