Dopaminergic signaling of salience, prediction error and reinforcement

Introduction

The dopaminergic system has historically occupied a central position in behavioral neuroscience, cognitive psychology, and computational psychiatry. Early popular scientific discourse characterized dopamine as the brain's monolithic "pleasure chemical," but empirical research spanning the last three decades has systematically dismantled this oversimplification. Dopaminergic signaling is now understood as a highly dimensional, multiplexed computational system that governs several distinct, yet interacting, neurobiological processes. The precise role of dopamine - whether it serves primarily as a teaching signal that computes the difference between expected and actual rewards, a motivational magnet that imbues environmental stimuli with incentive salience, or a rigid reinforcement mechanism that drives the consolidation of automated habits - remains the subject of intensive theoretical synthesis.

Recent advancements in high-density electrophysiology, optogenetics, fast-scan cyclic voltammetry, and neuroimaging have revealed that dopamine does not perform a single function. Rather, its functional output is determined by the specific anatomical projections of distinct neuronal subpopulations, the temporal dynamics of its release (phasic versus tonic), and the presence of localized computational noise. The emergence of distributional reinforcement learning models and the identification of new molecular mediators, such as the KCC2 protein in habit formation, further illustrate that dopaminergic signaling dynamically adjusts human and animal behavior across different environmental contexts. This report provides an exhaustive analysis of the anatomical, computational, and behavioral dimensions of dopaminergic signaling, delineating how the brain separates and integrates salience, prediction error, and reinforcement.

Neuroanatomical Substrates of Dopaminergic Signaling

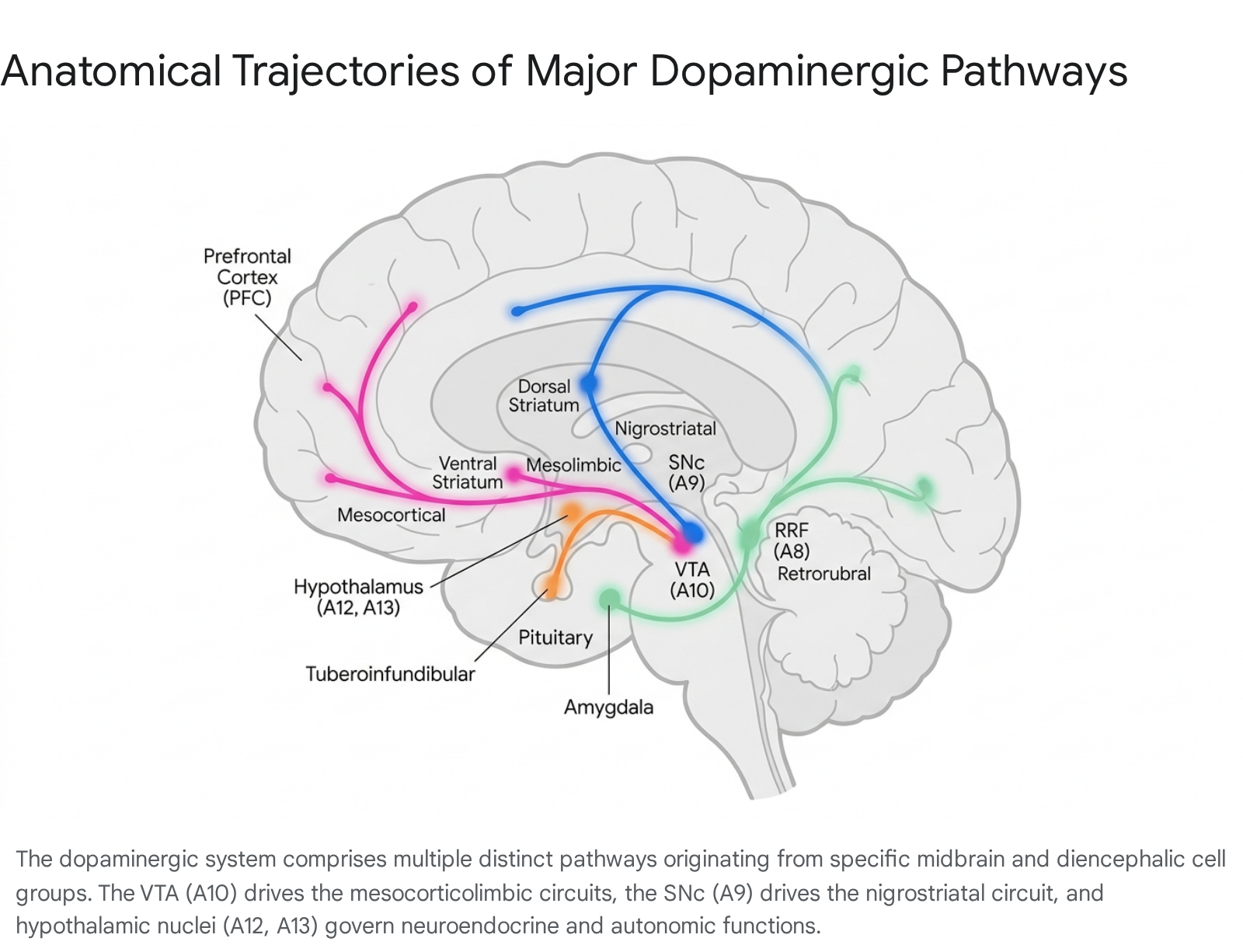

The functional diversity of dopamine is inextricably linked to the neuroanatomical organization of its projection pathways. Dopaminergic neurons are primarily located in the mesencephalon (midbrain) and diencephalon, organized into distinct clusters historically designated alphanumerically from A8 to A16 112. While the classical literature often emphasizes the mesolimbic and nigrostriatal systems, a comprehensive understanding of dopamine requires the inclusion of historically understudied diencephalic and midbrain nuclei.

The Nigrostriatal Pathway (A9)

The A9 cell group, located in the substantia nigra pars compacta (SNc), gives rise to the nigrostriatal pathway, projecting primarily to the dorsal striatum, which comprises the caudate nucleus and putamen 345. This pathway contains approximately 80% of the brain's total dopamine and is indispensable for motor control, action selection, and the execution of voluntary movement 26. The degeneration of the A9 neurons is the primary pathological hallmark of Parkinson's disease, leading to rigidity, resting tremors, and bradykinesia 34.

Beyond simple motor execution, recent studies indicate that the nigrostriatal pathway is heavily involved in goal-directed behaviors and the transition from flexible actions to rigid habit learning 7. Dopamine released from the nigrostriatal pathway modulates corticostriatal transmission in medium spiny neurons (MSNs) expressing dopamine D1 or D2 receptors. This modulation leads to movement activation via the direct pathway (D1) or movement suppression via the indirect pathway (D2), creating a finely tuned regulatory loop 67.

The Mesocorticolimbic System (A10)

The A10 cell group is situated in the ventral tegmental area (VTA) and gives rise to the mesocorticolimbic system, which is conceptually divided into two major functional branches 4.

The mesolimbic pathway projects from the VTA to the ventral striatum, particularly the nucleus accumbens (NAc), as well as the amygdala, hippocampus, and olfactory tubercle 149. This circuit is fundamentally involved in reward processing, the computation of prediction errors, and the pathophysiology of substance use disorders and schizophrenia 34. Hyperactivity within the mesolimbic pathway is strongly associated with the positive symptoms of schizophrenia, such as hallucinations and delusions, as well as the compulsive reward-seeking behavior characteristic of addiction 36.

The mesocortical pathway projects from the VTA directly to the prefrontal cortex (PFC) 34. This projection bypasses the striatum to modulate higher-order executive functions, including working memory, sustained attention, decision-making, and emotional regulation 346. Dysregulation or hypoactivity in the mesocortical pathway is frequently associated with the negative and cognitive symptoms of schizophrenia, as well as the inattention and impulsivity characteristic of attention-deficit/hyperactivity disorder (ADHD) 356.

The Retrorubral Field (A8)

Situated dorsal and posterior to the substantia nigra, the retrorubral field (RRF) contains the A8 dopaminergic cell group. Though historically overshadowed by the VTA and SNc, the RRF has emerged in recent research as a critical hub for threat evaluation and aversive processing 8910. Anatomical tracing studies indicate that the RRF projects to the central amygdala, bed nucleus of the stria terminalis (BNST), and the tail of the striatum - a region highly associated with threat avoidance 89.

Electrophysiological recordings of RRF single units demonstrate that these neurons signal diverse aspects of threat cues and aversive outcomes. In rodent models, RRF neurons exhibit distinct firing extremes that differentiate between certain danger and absolute safety, with intermediate firing rates during states of uncertainty 8. Furthermore, the RRF receives direct, monosynaptic projections from the globus pallidus externus (GPe) 9. Viral-assisted circuit mapping reveals that the GPe targets both dopaminergic and GABAergic populations within the RRF. The GPe-recipient dopaminergic neurons project to the striatum, whereas the GPe-recipient GABAergic neurons project broadly to the amygdaloid complex, midline thalamic nuclei, and lateral hypothalamus, integrating the RRF into widespread limbic regulatory networks 9.

Hypothalamic and Diencephalic Pathways (A11-A14)

Beyond the midbrain, distinct dopaminergic populations exist within the hypothalamus, serving primarily neuroendocrine and autonomic integration functions rather than traditional reward processing.

The tuberoinfundibular pathway originates in the arcuate (infundibular) and periventricular nuclei of the mediobasal hypothalamus (A12 group) and projects to the median eminence 61112. Dopamine released into the hypophyseal portal vasculature acts as a neurohormone, tonically inhibiting the secretion of prolactin from the anterior pituitary gland by binding to D2 receptors on lactotroph cells 91314. Pharmacological blockade of this pathway by first- and second-generation antipsychotic medications frequently results in hyperprolactinemia, which can cause galactorrhea, gynecomastia, disruptions to the menstrual cycle, and profound sexual dysfunction 1117.

The incertohypothalamic pathway consists of short intradiencephalic projections originating from the A11, A13, and A14 cell groups located in the zona incerta and periventricular hypothalamus 1115. These neurons project locally to the dorsomedial and anterior hypothalamic nuclei, the lateral septal nucleus, and the dorsolateral periaqueductal gray (dlPAG) 11516. Anterograde tracing with biotinylated dextran amine (BDA) has confirmed that the A13 group is the primary source of dopaminergic input to the dlPAG, a region central to defensive behaviors and panic responses 16. This system modulates fear responses, the integration of autonomic responses to sensory stimuli, and aspects of sexual behavior 17.

Additionally, the A11 cell group gives rise to the diencephalo-spinal projection, which descends through the brainstem to terminate in the dorsal horns and intermedio-lateral cell columns of the spinal cord. This pathway modulates sympathetic nervous system activity and exerts inhibitory effects on chronic pain signaling 421.

Summary of Major Dopaminergic Trajectories

To synthesize the complex neuroanatomical routing, the primary pathways, their cellular origins, and their associated clinical pathologies are summarized below.

| Pathway | Origin Cell Group | Primary Target | Core Functions | Associated Pathologies |
|---|---|---|---|---|
| Nigrostriatal | Substantia Nigra pars compacta (A9) | Dorsal Striatum (Caudate/Putamen) | Motor control, habit formation | Parkinson's disease, Tourette's |
| Mesolimbic | Ventral Tegmental Area (A10) | Ventral Striatum (NAc), Amygdala | Reward processing, prediction error | Addiction, Schizophrenia (positive symptoms) |
| Mesocortical | Ventral Tegmental Area (A10) | Prefrontal Cortex | Executive function, working memory | ADHD, Schizophrenia (negative symptoms) |
| Tuberoinfundibular | Arcuate Nucleus (A12) | Median Eminence / Pituitary | Prolactin inhibition | Hyperprolactinemia |
| Retrorubral | Retrorubral Field (A8) | Tail of Striatum, Amygdala, BNST | Threat evaluation, aversive outcomes | Anxiety disorders |
| Incertohypothalamic | Zona Incerta / Hypothalamus (A11, A13, A14) | Hypothalamus, Lateral Septum, PAG | Fear modulation, autonomic integration | Autonomic dysregulation |

The Reward Prediction Error Framework

For decades, the dominant computational framework for understanding dopaminergic function has been the Reward Prediction Error (RPE) hypothesis. Rooted in the psychological constructs of the Rescorla-Wagner model of classical conditioning and expanded by Sutton and Barto's temporal difference (TD) reinforcement learning algorithm, the RPE model posits a specific, quantifiable role for midbrain dopamine 221819. Under this paradigm, dopamine neurons broadcast a global teaching signal representing the mathematical discrepancy between an expected reward and the actual reward received 1820.

Temporal Difference Learning Mechanics

In the canonical RPE model, a positive prediction error occurs when an outcome is better than expected (e.g., encountering unexpected food or receiving a larger-than-anticipated payout). This results in a rapid, phasic burst of dopaminergic firing 18. Conversely, a negative prediction error occurs when an expected reward is omitted or is worse than expected, resulting in a transient pause, or dip, in baseline dopaminergic firing 1819. These phasic signals facilitate dopamine-dependent synaptic plasticity in the striatum, adjusting the synaptic weights of corticostriatal connections and updating the subject's internal estimate of state and action values to guide future behavior 192021.
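
The bursts and pauses described above correspond to the sign of the temporal difference error. In conventional textbook notation (the symbols are standard ones, not taken from the cited studies), with $V$ the learned value estimate, $r_{t+1}$ the reward received on entering the next state, $\gamma$ a discount factor, and $\alpha$ a learning rate, the error and the value update can be written as:

$$
\delta_t = r_{t+1} + \gamma V(s_{t+1}) - V(s_t), \qquad V(s_t) \leftarrow V(s_t) + \alpha\,\delta_t
$$

A better-than-expected outcome gives $\delta_t > 0$ (phasic burst), a fully predicted outcome gives $\delta_t = 0$ (no change from baseline), and an omitted or worse-than-expected reward gives $\delta_t < 0$ (pause in firing).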

A critical and elegant feature of temporal difference learning is that, over the course of associative learning, the dopaminergic response shifts backward in time 22. Initially, naive dopamine neurons fire upon the receipt of an unpredicted primary reward. As the subject learns that a specific conditioned stimulus (e.g., a tone or light) perfectly predicts the forthcoming reward, the dopaminergic burst shifts entirely. It occurs in response to the predictive cue rather than the reward itself, because the reward is now fully anticipated and thus generates an error value of zero 19. If the reward is subsequently omitted after the cue, a negative prediction error occurs at the exact moment the reward was anticipated, signaling that the internal model requires downward revision 23.
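
The backward shift of the error signal can be illustrated with a small simulation. The following is a minimal sketch, assuming a tabular TD(0) learner over discrete time steps within a trial; the function name, step indices, and parameter values are hypothetical choices for illustration, not taken from the cited work. Value estimates for pre-cue steps are held at zero on the assumption that the cue's arrival cannot itself be predicted.

```python
import numpy as np

def simulate_td_conditioning(n_trials=500, n_steps=10, cue_step=2,
                             reward_step=7, alpha=0.2, gamma=1.0,
                             omit_last=False):
    """Return TD errors, shape (n_trials, n_steps), for a cue-reward task."""
    V = np.zeros(n_steps)  # V[t]: predicted future reward at time step t
    deltas = np.zeros((n_trials, n_steps))
    for trial in range(n_trials):
        omitted = omit_last and trial == n_trials - 1
        for t in range(1, n_steps):
            r = 1.0 if (t == reward_step and not omitted) else 0.0
            # Error registered on arriving at step t from step t - 1.
            delta = r + gamma * V[t] - V[t - 1]
            deltas[trial, t] = delta
            # Pre-cue steps cannot anticipate the cue, so their value stays 0.
            if t - 1 >= cue_step:
                V[t - 1] += alpha * delta
    return deltas

deltas = simulate_td_conditioning(omit_last=True)
print("first trial, error at reward:", round(deltas[0, 7], 3))
print("trained trial, error at cue:", round(deltas[-2, 2], 3))
print("trained trial, error at reward:", round(deltas[-2, 7], 3))
print("omission trial, error at reward time:", round(deltas[-1, 7], 3))
```

In this sketch the error starts at the reward, migrates to the cue as the intermediate value estimates fill in, and turns negative at the expected reward time when the reward is withheld on the final trial.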

Empirical Limitations of the Canonical RPE Model

Despite its immense explanatory power in simple conditioning tasks, the strict TD-RPE model faces significant empirical challenges that suggest it is an incomplete representation of the dopamine system 2224.

First, standard RPE models struggle to account for the role of dopamine in complex associative chains, such as second-order or higher-order conditioning. The Rescorla-Wagner formulation treats trials as discrete temporal objects and fails to smoothly explain how an associative relationship is formed when a second-order cue predicts a first-order cue, which in turn predicts an outcome - a mechanism critical for abstract human learning, such as valuing money as a predictor of primary goods 22.

Second, the classical model assumes a unidimensional continuum of value, treating aversive experiences or punishments merely as negatively signed rewards 23. However, high-density recordings demonstrate that while some dopamine neurons pause during aversive events, others - particularly those projecting to the tail of the striatum or originating in the retrorubral field - are actively excited by aversive stimuli and threat cues 832. The unidimensional TD learning algorithm fails to capture the multidimensional nature of threat avoidance, punishment learning, and the robust dopaminergic response to unexpected but aversive events, such as an electric shock 23.

Third, high-resolution neurochemical monitoring has revealed that dopamine release does not always occur as discrete, phasic bursts. In tasks requiring spatial navigation, instrumental effort, or interval timing, dopamine exhibits a slow, continuous "ramping" effect as the animal approaches the reward 212225. This sustained release pattern correlates with the proximity to a goal rather than instantaneous, trial-by-trial prediction errors. It more closely resembles a signal for motivational vigor or working memory than a pure associative learning update 2226.

Furthermore, behavioral evidence indicates that learning rates - how heavily an organism weighs a new prediction error to update its beliefs - are not static. Animals dynamically adjust their learning rates based on environmental volatility and uncertainty. However, research examining the nucleus accumbens demonstrates that while dopamine release reliably encodes the RPE, it operates independently of the dynamically fluctuating learning rate 2135. The failure to map dopamine directly to the dynamic learning rate multiplier suggests that downstream or parallel cortical mechanisms instantiate the learning rate, while dopamine provides the raw error term.

Distributional Reinforcement Learning

To resolve several anomalies in the canonical RPE framework, computational neuroscientists have recently proposed that the mesolimbic dopamine system implements a more sophisticated algorithm known as Distributional Reinforcement Learning 242527. Standard TD learning assumes that the brain updates a single scalar value representing the mean expected reward. In contrast, distributional RL - an approach that recently drove significant performance gains in artificial intelligence research - postulates that the brain learns and represents the entire probability distribution of possible future rewards, accounting for both the mean and the full variance (including risk and uncertainty) 2728.

Circuit-Level Implementation and Opponent Processing

Advances in high-density recordings using Neuropixels probes and two-photon calcium imaging have provided robust empirical evidence for distributional RL in the mammalian striatum 27. Individual dopaminergic neurons exhibit systematic diversity in their response profiles. Some neurons act "optimistically," responding strongly to better-than-expected outcomes and weakly to worse-than-expected outcomes. Conversely, other neurons act "pessimistically," showing the inverse pattern by overweighting negative prediction errors 29.

This diversity is structurally harnessed by the opponent architecture of the basal ganglia. The D1 receptor-expressing medium spiny neurons (MSNs) of the direct pathway and the D2 receptor-expressing MSNs of the indirect pathway function as distinct computational channels. Optogenetic studies reveal that D1 and D2 MSNs preferentially encode the right (optimistic) and left (pessimistic) tails of the reward distribution, respectively 27. By maintaining a spectrum of optimistic and pessimistic predictions, the brain can execute highly sophisticated, risk-sensitive behavioral strategies that single-value RL models fundamentally cannot replicate 2729.
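
One common way to model such optimistic and pessimistic channels is to give each value unit a different balance between its learning rates for positive and negative prediction errors. The following is a minimal sketch under that assumption; the function name, the `taus` asymmetry parameters, and the two-outcome reward distribution are hypothetical, and the code is not a reconstruction of the cited studies' models.

```python
import numpy as np

def learn_value_population(rewards, taus, base_lr=0.01):
    """Learn one value estimate per unit with asymmetric error scaling.

    A unit with tau > 0.5 weights positive errors more (optimistic);
    a unit with tau < 0.5 weights negative errors more (pessimistic).
    """
    taus = np.asarray(taus, dtype=float)
    values = np.zeros(len(taus))
    for r in rewards:
        delta = r - values  # one prediction error per unit
        lr = base_lr * np.where(delta > 0, taus, 1.0 - taus)
        values += lr * delta
    return values

rng = np.random.default_rng(0)
rewards = rng.choice([1.0, 9.0], size=20000)  # risky reward: small or large
taus = [0.1, 0.5, 0.9]
for tau, v in zip(taus, learn_value_population(rewards, taus)):
    print(f"tau={tau:.1f} -> learned value {v:.2f}")
```

The symmetric unit settles near the mean reward, while the asymmetric units settle toward the lower and upper ends of the distribution, so the population as a whole carries information about the spread of outcomes that a single scalar estimate would discard.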

Regional Heterogeneity and Noisy Learning

Distributional encoding displays significant regional heterogeneity across the striatum. The dorsolateral striatum (DLS) maintains longer value-decay times and focuses on learning the value of discrete, short action elements (movements), whereas the dorsomedial striatum (DMS) and ventral striatum exhibit faster, more fluid dynamics 25. These variations ensure stability against value divergence while allowing the organism to cancel out uncertainty-induced biases in volatile environments 2528.

The implementation of distributional RL in the human brain has been further corroborated by recent human neuroimaging and pharmacological studies. Using the CogLink network model - which integrates Bayesian inference with distributional RL - researchers have demonstrated how associative uncertainty is encoded as a distribution over action-value beliefs in basal ganglia-like circuits 28. Furthermore, pharmacological manipulation using the dopamine precursor L-DOPA in a volatile two-armed bandit task revealed that increasing dopamine introduces a positive reward bias into this noisy learning process. L-DOPA decreased switching behavior following below-average rewards, demonstrating that dopamine modifies the precision and rate of learning by shaping the input distribution within recurrent neural networks 3539.

Incentive Salience and Motivational Vigor

Operating in parallel to computational learning models is the Incentive-Sensitization Theory, pioneered and continuously updated by researchers Kent Berridge and Terry Robinson 3031. This framework argues that dopaminergic signaling does not primarily mediate the hedonic pleasure of a reward ("liking"), nor does it act exclusively as a cognitive learning signal. Instead, dopamine mediates "wanting" or incentive salience - a deeply unconscious neurobiological process that transforms neutral, reward-predictive stimuli into highly attractive motivational magnets 323334.

The Separation of Wanting and Liking

Incentive salience clearly dissociates the motivational drive to obtain an object from the affective pleasure experienced upon its consumption 3235. Experimental manipulations that heavily deplete dopamine prevent animals from seeking out food, yet these animals retain normal affective facial expressions (indicative of subjective "liking") when sweet food is placed directly into their mouths 34.

Conversely, hyper-dopaminergic states increase cue-triggered wanting without elevating the hedonic impact of the reward. The "liking" component of reward is mediated by entirely different neurochemical systems, primarily endogenous opioids, endocannabinoids, and GABAergic "hedonic hotspots" distributed across the forebrain 3647. Dopamine assigns the motivational weight, while opioids deliver the consummatory pleasure.

Incentive Sensitization in Addiction

The distinction between learning and incentive salience becomes starkly apparent in the pathology of addiction. Drugs of abuse (e.g., cocaine, methamphetamine, heroin) trigger massive, pharmacologically forced surges of dopamine that far exceed the natural limits of primary reinforcers like food or social interaction 483738. Through repeated exposure, the mesolimbic circuitry undergoes profound neuroadaptation, leading to incentive sensitization. The dopaminergic system becomes hyper-reactive to drug-associated cues (e.g., the sight of a syringe, a specific location, or a behavioral ritual), generating overwhelming cravings even as tolerance builds to the drug's euphoric effects 3952.

Longitudinal studies of cocaine self-administration demonstrate that the attribution of incentive salience to environmental cues during early exposure is a highly predictive early marker of addiction vulnerability, preceding the development of tolerance or psychomotor sensitization 39. This framework effectively explains why individuals with substance use disorders, or behavioral addictions such as Compulsive Sexual Behavior Disorder (CSBD) and Problematic Pornography Use (PPU), compulsively pursue stimuli that they explicitly report no longer enjoying 233240. The cues have acquired pathological incentive salience, hijacking the organism's motivational architecture and driving approach behavior regardless of conscious intent 3452.

Cue-Evoked Dopamine and Reward-Pursuit Strategies

Recent 2026 neuroimaging and photometric research reveals that cue-evoked dopamine does not simply act as a blind motivational amplifier; it specifically shapes the strategy of reward pursuit. When an environmental cue predicts an imminent reward with high probability, it triggers a massive dopamine release in the NAc core. This surge does not merely initiate general seeking; rather, it constrains broad instrumental reward-seeking and biases the animal toward immediate "reward checking" at the known delivery location 41.

Conversely, cues that predict rewards with low probability elicit smaller dopamine transients, which bias behavior toward generalized seeking, effortful pursuit, and exploration rather than checking 41. This pattern indicates that dopamine selectively sculpts the nature of pursuit based on probabilistic predictions, intertwining the learning of probabilities with the physical execution of motivation.

| Theoretical Framework | Primary Function of Dopamine | Core Mechanism | Explanation of Addiction |
|---|---|---|---|
| Reward Prediction Error (RPE) | Learning & Value Updating | Computes the difference between expected and actual reward to update synaptic weights. | Addiction results from drugs artificially generating an unbreakable, perpetual positive prediction error, leading to endless value updating. |
| Incentive Salience ("Wanting") | Motivation & Drive | Transforms neutral predictive cues into attractive "motivational magnets." | Addiction results from the hypersensitization of the cue-driven "wanting" circuitry, persisting even as subjective "liking" decreases. |
| Distributional RL | Risk & Variance Encoding | D1/D2 opponent circuits maintain a full probability distribution of future outcomes. | Pathological pursuit reflects an overweighting of the right tail (optimistic outcome) due to dopaminergic noise and extreme variance. |

Reinforcement, Habit Formation, and Molecular Switches

While dopamine drives immediate motivation and updates prediction models, it is also the primary catalyst for the long-term consolidation of habits. Habits are defined neurologically as automated, stimulus-response behaviors that require minimal cognitive effort and persist even when the expected outcome is devalued.

Transitioning from Goal-Directed to Habitual Control

During the initial stages of behavior acquisition, actions are highly goal-directed and heavily dependent on the prefrontal cortex and dorsomedial striatum. The organism evaluates the expected value of an action and executes it deliberately. As the behavior is repeatedly reinforced over time, computational control shifts to the sensorimotor networks of the dorsolateral striatum (governed by the nigrostriatal dopaminergic pathway) 57.

As this habit loop repeats, a crucial neurophysiological shift occurs: dopamine release moves earlier in the behavioral sequence, occurring in response to the antecedent cue rather than the reward itself. The brain learns to predict the reward before it arrives, and it is this anticipation - not the reward - that drives the behavior, making the habit feel automatic and difficult to override with conscious willpower 55.

The KCC2 Protein: Accelerating Habit Formation

A groundbreaking 2025 study published in Nature Communications identified a critical molecular switch governing the speed and intensity at which this habit transition occurs: the potassium-chloride co-transporter 2 (KCC2) protein 5542. KCC2 is essential for maintaining intracellular chloride homeostasis, which in turn preserves normal GABAergic inhibitory signaling in the brain.

When KCC2 levels are suppressed - a phenomenon commonly observed during chronic stress, systemic neuroinflammation, and prolonged drug exposure - the inhibitory tone of the midbrain is disrupted. Consequently, dopamine neurons fire far more rapidly and intensely in response to environmental cues 424344. This synchronized, amplified burst of dopamine effectively acts as an accelerant for habit formation. The brain rapidly links environmental triggers to dopamine release, solidifying both beneficial routines (e.g., morning exercise) and destructive compulsions (e.g., compulsive smartphone scrolling or smoking) much faster than under normal physiological conditions 554243.

Pharmacological Dissociations: Dopamine Versus Serotonin

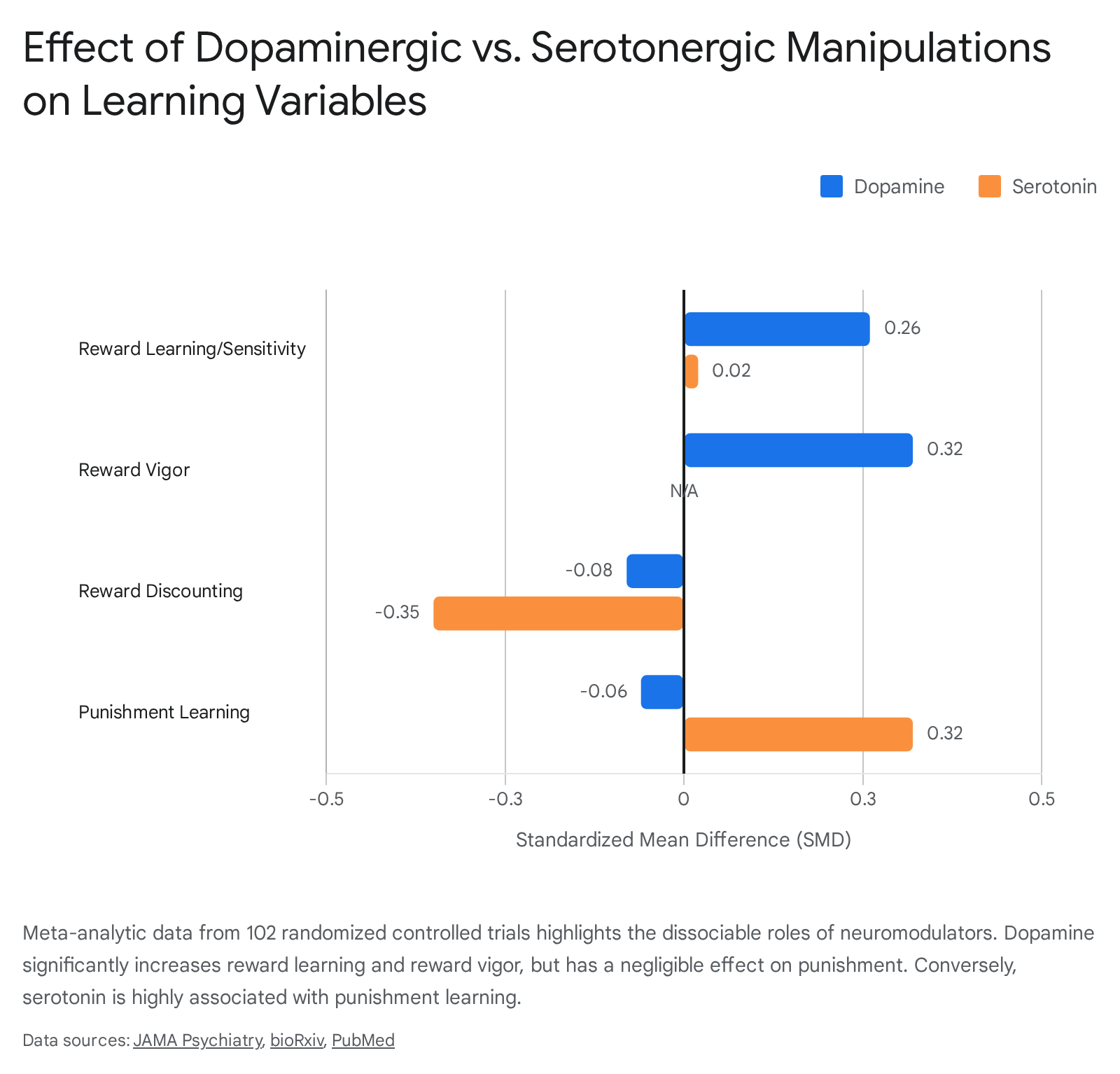

The academic debate regarding whether dopamine acts strictly as a learning signal, a motivational driver, or a reinforcement mechanism is increasingly viewed as a false trichotomy. To support treatment assignments in computational psychiatry, researchers conducted a large systematic review and meta-analysis of pharmacological manipulations, published in JAMA Psychiatry in 2025 45. Analyzing 102 randomized controlled trials encompassing thousands of healthy human subjects, the study mapped the respective behavioral impacts of dopamine and serotonin.

The pooled data indicate that upregulating dopamine in healthy humans produces a small but robust increase in overall reward processing (Standardized Mean Difference [SMD] = 0.18). Specifically, dopamine enhancement significantly increased reward learning/sensitivity (SMD = 0.26) and reward response vigor (SMD = 0.32), while modestly decreasing reward discounting (SMD = -0.08) 454647. Crucially, dopamine upregulation had a negligible effect on punishment processing (SMD = -0.06) 4748.

In stark contrast, serotonergic manipulations did not meaningfully affect overall reward function. Instead, serotonin significantly increased punishment learning/sensitivity (SMD = 0.32) and profoundly decreased reward discounting (SMD = -0.35) 454648.
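
For readers unfamiliar with the effect-size metric, the following is a minimal sketch of how a standardized mean difference can be computed for a single two-group comparison, using the textbook pooled-standard-deviation form (Cohen's d). The exact estimator and any small-sample correction used in the meta-analysis are not stated here, so this is an assumption, and the sample scores are invented purely for illustration.

```python
import math
from statistics import mean, variance

def standardized_mean_difference(treatment, control):
    """Cohen's d: difference in group means divided by the pooled SD."""
    n_t, n_c = len(treatment), len(control)
    pooled_var = ((n_t - 1) * variance(treatment)
                  + (n_c - 1) * variance(control)) / (n_t + n_c - 2)
    return (mean(treatment) - mean(control)) / math.sqrt(pooled_var)

# Hypothetical task scores under drug and placebo (illustrative only).
drug_scores = [2.0, 4.0, 6.0]
placebo_scores = [1.0, 3.0, 5.0]
print(standardized_mean_difference(drug_scores, placebo_scores))
```

Because the difference is expressed in units of the pooled standard deviation, values such as 0.18 or 0.32 can be compared across tasks that use different raw scales.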

These findings synthesize the competing theories: Dopamine is indeed a teaching signal (enhancing reward learning), but it is simultaneously an actuator of physical effort and motivational drive (enhancing reward vigor). The MAGNet (Motivational Attractor Goal Network) neurodynamical model, introduced in late 2024, mathematically unifies these roles 49. According to the model, dopamine-dependent synaptic plasticity slowly creates "latent attractors" in the brain (the learning/RPE phase). Concurrently, phasic dopamine release acts immediately to widen these attractor basins by modulating synaptic excitability, rendering distal goals suddenly accessible and drawing the organism toward the rewarded location (the motivational/vigor phase) 49. Thus, learning and motivation are simply different temporal expressions of the same embodied action-perception loop.

Cross-Cultural Variances in Dopaminergic Processing

While neurobiological mechanisms are largely conserved across human populations, the expression of dopaminergic reward processing is heavily modulated by environmental and cultural contexts. A significant limitation in contemporary cognitive neuroscience is the overreliance on Western, Educated, Industrialized, Rich, and Democratic (WEIRD) populations 505152. Recent initiatives have sought to broaden neuroimaging research to non-WEIRD populations across South America, Africa, and Asia, revealing important gene-culture interactions 5053.

The norm sensitivity hypothesis provides a theoretical framework for understanding these variations. This hypothesis posits that humans acquire global patterns of cultural behavior - such as the independent self-construal prevalent in Western cultures versus the interdependent self-construal prevalent in Eastern cultures - through reinforcement-mediated social learning processes 5455. Because this learning relies on reward processing, the degree of cultural acquisition is influenced by polymorphic variants of genes regulating the dopaminergic system.

Specifically, research indicates that individuals carrying specific variants of the dopamine D4 receptor gene (DRD4), notably the 7R and 2R alleles, exhibit heightened sensitivity to environmental reinforcements. In cross-cultural studies comparing European Americans and Asians, these higher dopamine-signaling variants strongly moderated the adoption of culture-typical social orientations 55. This suggests that genetic variations in the dopaminergic system do not deterministically encode specific behaviors, but rather encode the plasticity and sensitivity required to absorb the prevailing behavioral norms of the surrounding cultural ecosystem 5455.

Clinical Translation and Behavioral Interventions

Understanding dopamine as a precise regulator of both distributional learning and cue-driven motivation provides clarity on contemporary behavioral health trends, most notably the pop-psychology concept of "dopamine fasting."

The Myth of Dopamine Fasting

The term "dopamine fasting" is a neurobiological misnomer. One cannot practically "fast" from a neurotransmitter, nor do baseline dopamine levels plummet simply by abstaining from digital screens or highly palatable foods 5657. From a clinical perspective, chronic engagement with high-reward supernormal stimuli (e.g., endless social media scrolling) does overstimulate the mesolimbic pathway. Electroencephalography (EEG) data monitoring active social media use demonstrates elevated Beta and Gamma wave activity, mirroring the neural engagement seen in substance dependence and gambling, followed by delayed Alpha wave recovery indicative of mental fatigue 58.

Over time, this intense stimulation leads to the downregulation of D2 dopamine receptors in the striatum. This structural recalibration forces the brain to require progressively higher stimulation thresholds to achieve baseline motivation, rendering natural rewards (such as reading or socializing) neurologically insufficient 4738.

However, the therapeutic intervention is not the wholesale starvation of sensory stimulation. Evidence-based behavioral alternatives function by engaging prefrontal cognitive control to override striatum-driven impulsivity 56. Cognitive Behavioral Therapy (CBT) and mindfulness-based stress reduction build coping skills that alter stress-related brain circuits in the anterior cingulate cortex and insula, rather than "resetting" dopamine receptors 56.

Implications for ADHD and Neuroinflammation

Extreme sensory deprivation is particularly contraindicated for neurodevelopmental conditions such as ADHD. The ADHD brain suffers from chronic dopaminergic under-stimulation in the prefrontal cortex, leading to a profound lack of internal drive. Implementing a generic "dopamine fast" removes engagement entirely, exacerbating deficits in executive function, mood, and focus 4757. Optimal management requires calibrated, structured stimulation - such as attaching novelty to important tasks and establishing routines - rather than broad deprivation 57.

Furthermore, systemic interventions such as physical exercise have been shown to directly interact with dopaminergic health. Neuroinflammation, driven by chronic stress or substance use, impairs neuronal integrity and monoamine systems. Physical activity attenuates this sickness behavior, reduces inflammatory cytokines, and protects against dopaminergic impairment, providing a robust physiological mechanism for restoring homeostasis 38.

Conclusion

Dopaminergic signaling does not operate as a singular, simplistic cognitive mechanism. It is a highly dimensional system that integrates past experiences, present motivational states, and future probabilities to shape survival behavior. Through the nigrostriatal and mesocorticolimbic pathways, dopamine acts as the vital substrate for motor execution, associative learning, and executive function. Furthermore, the discovery of the retrorubral field's role in threat evaluation, alongside the hypothalamic pathways' autonomic modulation, shows that dopamine's influence extends far beyond mere pleasure or reward.

While the classical Reward Prediction Error model remains a foundational explanation for associative learning, it has been elegantly expanded by Distributional Reinforcement Learning, which allows the brain to map complex probability distributions using opponent D1 and D2 circuits. Simultaneously, the Incentive Salience theory accurately describes how dopamine attributes motivational value to cues, driving vigorous pursuit independently of subjective hedonic pleasure - a mechanism that lies at the very core of addiction. By manipulating synaptic excitability in the short term to generate vigor, and modulating synaptic plasticity in the long term to drive KCC2-mediated habit formation, dopamine dynamically binds environmental stimuli to actions. Ultimately, dopamine is not the reward itself, but the computational currency that drives the organism to navigate an uncertain world.