Dissemination and susceptibility of conspiracy theories online

Introduction to Conspiracy Theory Dynamics

Conspiracy theories - defined analytically as explanatory frameworks that attribute the causes of significant social, political, or economic events to the clandestine orchestrations of powerful, malevolent actors - have transitioned from isolated fringe phenomena to central forces shaping public opinion worldwide 1. The digital transformation of the global information ecosystem has fostered a pervasive public and academic assumption that modern populations are experiencing an unprecedented, accelerating epidemic of conspiratorial thinking. Public polling indicates that 73% of adults in the United States believe that conspiracy theories are currently "out of control," and 59% assert that the general public is more susceptible to such beliefs today than they were a quarter-century ago 234. Furthermore, 77% of the public attributes this perceived surge directly to the advent of the internet and social media platforms 23.

However, rigorous longitudinal empirical analysis reveals a significant divergence between the public perception of an escalating crisis and the historical record of conspiracy theory endorsement. While digital platforms have fundamentally altered the visibility, transmission speed, and network topology of conspiratorial communities, baseline human susceptibility to conspiracy narratives appears remarkably stable across decades 25. The critical research question regarding modern dissemination is therefore not whether the internet creates new conspiracy theorists out of a previously rational public, but how digital affordances, algorithmic amplification, and network segregation interact with pre-existing psychological predispositions and offline social networks to facilitate the spread of these narratives 6.

Longitudinal Analysis of Conspiracy Belief Rates

The hypothesis that the internet has fundamentally increased the baseline rate of conspiracy theory belief can be tested against historical longitudinal data. Researchers have done so by comparing archival polling data from the mid-20th century with contemporary survey results for identically worded items.

Historical Baselines and Polling Data

Comprehensive analyses examining dozens of specific conspiracy theories over spans of up to 55 years yield no systematic evidence of an overall increase in conspiracism 27. In a multi-study evaluation tracking 46 distinct conspiracy theories, researchers found that the vast majority of beliefs either remained statistically stable or experienced significant decreases over time 25. Among 37 specific items tracked longitudinally between 1966 and 2020, only six demonstrated a significant over-time increase, while 15 showed a significant decrease, and 16 remained static 2.

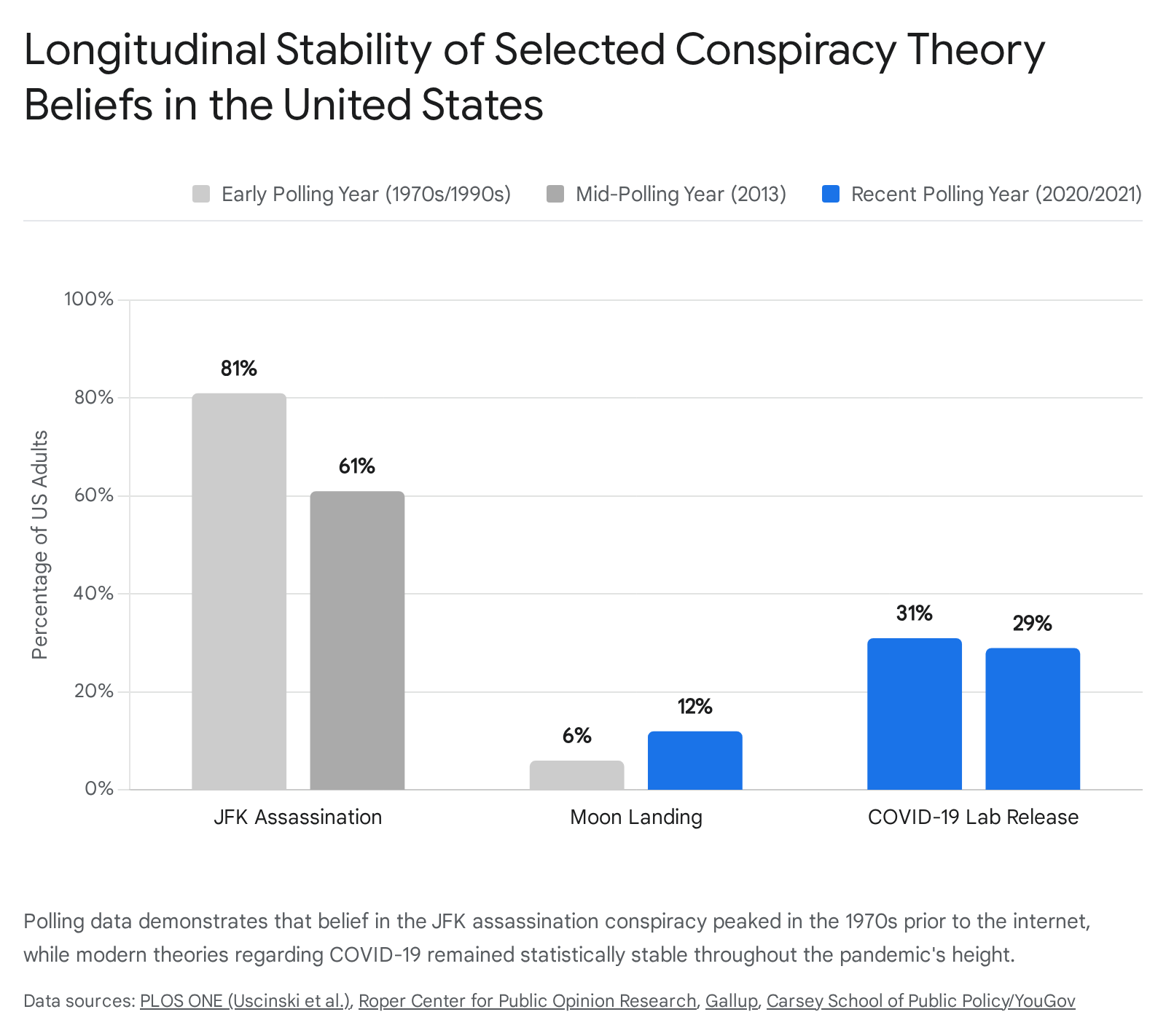

The data indicate that specific conspiracy narratives generally lose adherents as they age, while new theories emerge to replace them at roughly similar baseline adoption rates. The assassination of President John F. Kennedy provides the longest-running continuous measure of conspiratorial belief in the United States. In 1963, 52% of the public believed Lee Harvey Oswald did not act alone 89. This figure climbed to a peak of 81% in 1976, driven by congressional investigations and institutional skepticism following the Watergate scandal 8910. In the internet era, however, belief in the Kennedy conspiracy has fallen from that peak, dropping to 61% in 2013 before settling around 65% in 2023 89. Younger cohorts heavily immersed in digital media (Generation Z) are significantly less likely to endorse the theory, with only 42% believing multiple people were involved 10.

Conversely, belief that the 1969 Apollo Moon landings were faked has seen a marginal increase over specific intervals. In 1999, Gallup found 6% of Americans endorsed the hoax theory 11. By 2019 and 2021, various surveys measured this belief at roughly 10% to 12% in the United States, and up to 25% across certain European nations 11121314. While this represents a measurable increase, it remains confined to a distinct minority of the population, far lower than the peak conspiracism observed regarding political assassinations in the 1970s.

Stability During High-Information Crisis Periods

During the COVID-19 pandemic - a period characterized by acute uncertainty, societal disruption, and massive digital information flow - conspiracy theories regarding the virus's origins and vaccines emerged rapidly. Yet, panel surveys tracking these beliefs showed no aggregate increase during the initial critical windows of the crisis. Belief that the coronavirus was "purposely created and released" registered at 31% in March 2020, dropped slightly to 27% in June 2020, and leveled at 29% in May 2021 27. Beliefs that the virus was a cover for implanting tracking devices similarly decreased by six points over a similar timeframe, and adherence to the narrative that Bill Gates was orchestrating the pandemic dropped by three points 27.

Even theories explicitly propelled by modern algorithmic social media architecture, such as the QAnon narrative, demonstrate stability rather than exponential growth in core belief. Direct polling on QAnon endorsement showed no significant increase between August 2019 and May 2021, remaining static at approximately 5% to 6% of the population identifying as believers, despite massive increases in media coverage, deplatforming events, and algorithmic visibility during that period 257. Related broader concepts tied to the QAnon milieu (e.g., belief in a "deep state" or elite trafficking rings) maintain higher baseline support (34% to 50%), but these figures similarly show no evidence of significant over-time increases in recent years 2.

The Illusion of Increased Susceptibility

If empirical data consistently demonstrates that baseline belief rates are not increasing, the overwhelming public perception of an escalating informational crisis requires explanation. The dissonance lies in the fundamental distinction between absolute population susceptibility and algorithmic visibility.

The internet has not converted a significantly higher percentage of the global population into conspiracy theorists; rather, it has given existing adherents unprecedented tools for content production, network formation, and public broadcasting 151617. A study by the Media Ecosystem Observatory found that while belief in institutional conspiracies remains a minority position, a highly active cluster of just 100 influencer accounts drove 68% of conspiratorial posts and captured nearly 90% of views on X 17.
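The concentration statistic reported by the Media Ecosystem Observatory is a top-k share: the fraction of all posts produced by the k most active accounts. A minimal sketch of that calculation follows; the function name `top_k_share` and the toy account data are hypothetical and are not drawn from the study.

```python
from collections import Counter


def top_k_share(post_counts: dict[str, int], k: int) -> float:
    """Return the fraction of all posts produced by the k most active accounts."""
    total = sum(post_counts.values())
    if total == 0:
        return 0.0
    # most_common(k) yields the k (account, count) pairs with the largest counts
    top = Counter(post_counts).most_common(k)
    return sum(count for _, count in top) / total


# Hypothetical toy data: two prolific accounts and many occasional posters.
toy_counts = {"acct_a": 400, "acct_b": 280}
toy_counts.update({f"casual_{i}": 4 for i in range(80)})  # 80 accounts x 4 posts = 320

share = top_k_share(toy_counts, k=2)
print(f"Top-2 share of posts: {share:.0%}")  # 680 / 1000 -> 68%
```

The same function applied to real per-account counts with k = 100 would reproduce the kind of figure the study reports; the toy numbers were chosen only to make the arithmetic easy to check.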

This concentration of production generates a false consensus bias 18. The sheer volume of highly visible, algorithmically amplified conspiratorial content leads the general public, journalists, and policymakers to vastly overestimate the true prevalence of these beliefs among the electorate. The phenomenon is further exacerbated by the tendency of algorithms to prioritize sensational and emotionally charged content, pushing fringe narratives into mainstream feeds where they achieve high engagement metrics without necessarily converting the audience to actual belief 119.

Cross-National and Cultural Disparities

While longitudinal polling demonstrates stability within individual nations over time, cross-sectional data reveals profound disparities in conspiracy theory endorsement across different geopolitical and cultural contexts. Conspiracy theories operate globally, but their resonance is tightly coupled with local institutional trust, economic security, and democratic stability.

Institutional Trust and Macroeconomic Indicators

The variance in international conspiracy endorsement correlates strongly with macroeconomic indicators and institutional conditions. Analyses of cross-national datasets, such as the Comparative Conspiracy Research Survey (CCRS), show a robust convergence of evidence: conspiracy beliefs are systematically higher in nations with greater systemic corruption, higher economic inequality, and lower GDP per capita 202122.

Political science literature posits that economic vitality and low corruption serve as key performance indicators of government competence and trustworthiness 20. When citizens feel politically and economically powerless, or when they observe systemic corruption, they develop a profound distrust of state institutions and official narratives 22. In this context, conspiracy theories function as an alternative epistemology - a reactive form of sense-making driven by alienation from elite decision-making processes rather than simple gullibility 2021.

Search engine results can further entrench this divide. Algorithm audits show that Google searches in developing democracies return a significantly higher proportion of conspiracy-confirming links than searches executed in prosperous Western democracies 22. For example, searches related to conspiracy theories returned confirming content at rates below 10% in Germany, Switzerland, and Australia, but nearly 25% in Romania and over 30% in Serbia 22. The result is an informational divide structured by geography, language, and existing democratic health.

Belief Prevalence in the Global South Versus Western Democracies

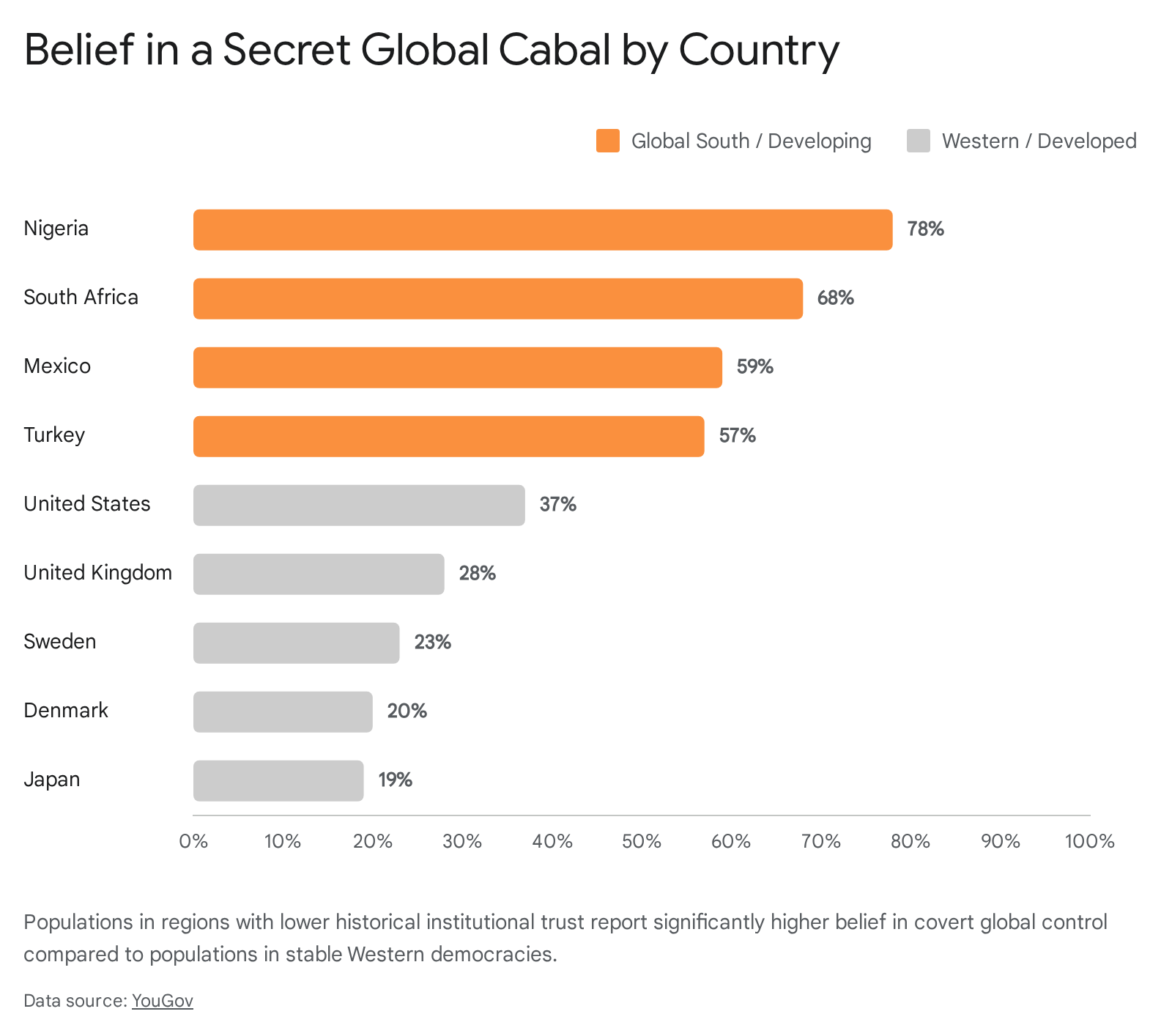

Survey data from the YouGov-Cambridge Globalism Project (2020) and subsequent Ipsos polling highlights how susceptibility to global conspiracy theories is significantly higher in the Global South and in nations with developing or unstable democratic institutions compared to Western European democracies 2324.

When asked if the world is run by a shadowy, secretive cabal behind the scenes, 78% of respondents in Nigeria and 68% in South Africa considered the claim "definitely" or "probably" true 23. High endorsement was also recorded in Mexico (59%), Turkey (57%), and Egypt (55%) 23. By contrast, in stable Western democracies, the figures drop precipitously: the United States sits at 37%, the United Kingdom at 28%, Sweden at 23%, and Japan at 19% 23.

This disparity extends to public health conspiracies. The theory that the COVID-19 virus was created and spread on purpose found adherence among 42% of Nigerians, 40% of Turkish citizens, and 37% of South Africans 23. In Western Europe, belief in the same narrative was markedly lower, with only 7% of Britons and 5% of Danes endorsing it 23.

Comparative Conspiracy Endorsement by Nation (2020)

| Country | Belief in Secret Global Cabal | Belief COVID-19 Spread on Purpose | Belief US Government Involved in 9/11 |
|---|---|---|---|
| Nigeria | 78% | 42% | Not available |
| South Africa | 68% | 37% | Not available |
| Mexico | 59% | Not available | 49% |
| Turkey | 57% | 40% | 55% |
| United States | 37% | 19% | 20% |
| United Kingdom | 28% | 7% | 12% |
| Denmark | 20% | 5% | Not available |
| Japan | 19% | Not available | Not available |

Data reflects polling percentages of respondents answering "definitely" or "probably" true. Figures sourced from the YouGov-Cambridge Globalism Project cross-national survey. 23

Case Studies in Democratic Transition

Regional studies further illuminate how sociopolitical history shapes modern belief structures. In the Visegrad Group (V4) countries of Central Europe (Slovakia, the Czech Republic, Hungary, and Poland), conspiracy theories regarding the COVID-19 pandemic continued to resonate strongly years after the outbreak. By late 2023, 56% of respondents in both Slovakia and Hungary considered at least one major COVID-19 conspiracy theory to be true, compared to 48% in Poland 25.

However, for "anti-Western" conspiracy theories (e.g., theories alleging malicious NGO operations or biological weapons programs), divergence within the V4 becomes stark. Slovakia exhibited the highest rate of anti-Western conspiracism, in line with national political rhetoric, while Poland exhibited the lowest: only 5.4% of Poles endorsed a cluster of three anti-Western claims, compared to 14.8% of Slovaks 25. This underscores that specific narratives thrive where they align with existing geopolitical anxieties and populist political signaling 2125.

Psychological and Demographic Predictors of Conspiracism

Understanding why specific individuals adopt conspiracy theories within a given society requires analyzing the interplay between internal psychological predispositions and external demographic environments.

Conspiracy Mentality and Cognitive Processing

Sociological and psychological models frame baseline susceptibility through the lens of "conspiracy thinking" or "conspiracy mentality" - a relatively stable, individual-level predisposition to interpret salient historical and political events as the products of secret plots 6. This mindset is generally non-partisan, serving as a generalized filter for reality that thrives on an ontology of possibility rather than probability 1.

The relationship between digital media use and conspiracy belief is highly conditional upon this existing trait. While frequent social media users often express higher rates of belief in misinformation in aggregate surveys, multivariate analyses reveal that this effect is heavily moderated by pre-existing conspiracy mentality 6. Among individuals with low conspiratorial predisposition, heavy social media use does not correlate with an increased number of conspiracy beliefs 6. Consequently, social media does not invariably brainwash a neutral public; rather, it activates, feeds, and radicalizes those already cognitively primed to accept such narratives 6.
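The moderating role of conspiracy mentality is usually expressed as an interaction term. The specification below is an illustrative sketch of that general form, not the exact model estimated in the cited study; the symbols $B_i$ (number of conspiracy beliefs held by respondent $i$), $S_i$ (social media use), and $M_i$ (conspiracy mentality) are introduced here for illustration.

$$
B_i = \beta_0 + \beta_1 S_i + \beta_2 M_i + \beta_3 \,(S_i \times M_i) + \varepsilon_i
\qquad\Rightarrow\qquad
\frac{\partial B_i}{\partial S_i} = \beta_1 + \beta_3 M_i
$$

Under this form, the finding that heavy use is unrelated to belief among low-mentality individuals corresponds to $\beta_1 + \beta_3 M_{\text{low}} \approx 0$, while a positive $\beta_3$ means the association between use and belief strengthens as mentality rises.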

Furthermore, susceptibility is associated with cognitive processing styles. Individuals who demonstrate limited digital literacy, reduced cognitive reflection, high uncertainty avoidance, and heightened emotional responses to news are more likely to integrate false narratives into their worldview 2726. When individuals abstain from digital media, those with high conspiracy mentalities report significant drops in life satisfaction and increased feelings of social isolation, suggesting that conspiratorial online communities provide critical social support and identity validation for these users 27.

Demographic Variables and Ideological Sorting

Demographic trends also help predict susceptibility, though findings often counter conventional assumptions about age and digital nativism. In the United States, surveys measuring belief in pseudo-scientific conspiracies (e.g., a flat Earth, microchipped vaccines, faked Moon landings) show that acceptance is low overall (roughly 10%) but significantly higher among Millennial respondents than among older generations 11. Older respondents, who lived through the actual events, are far less likely to doubt historical realities such as the Moon landing; the rise in overall Moon-hoax endorsement from 6% in 1999 to about 12% in recent polling is attributed largely to younger respondents 11.

Educational attainment remains a consistent negative predictor of conspiracism. Studies conducted after the 2024 assassination attempt on Donald Trump found that conspiratorial thinking was most prevalent among individuals with a high school diploma but no college degree, particularly men aged 25-54 exhibiting depressive symptoms 28. Political ideology shapes which conspiracies are adopted rather than baseline susceptibility: partisans consistently adopt narratives that vilify out-groups. For example, left-leaning voters were highly susceptible to theories that the Trump assassination attempt was staged, while right-leaning voters adopted theories blaming "deep state" Democratic operatives 28.

Platform Affordances and Digital Dissemination Mechanisms

While social media may not generate new believers at the rates publicly assumed, the structural design, or "affordances," of different platforms strongly shapes how efficiently these theories propagate.

Symmetrical Versus Asymmetrical Network Dynamics

The efficacy of conspiracy theory propagation varies significantly depending on the fundamental architecture of the platform. Research spanning 17 countries during the COVID-19 pandemic evaluated the impact of using different digital platforms for news consumption on the adoption of conspiracy beliefs 29.

Platforms characterized by "symmetrical followership," such as Facebook, Facebook Messenger, and WhatsApp, require mutual connections and are generally grounded in strong offline social ties, familial networks, or homogeneous peer groups 2932. Because information shared on these platforms inherits the interpersonal trust of the sender, symmetrical platforms act as highly effective vectors for conspiracy theories. Studies indicate that relying primarily on Facebook and WhatsApp for news increases aggregate conspiracy belief by roughly 3% to 5% on standardized scales 29.

Conversely, "asymmetrical" platforms like X (formerly Twitter) are structured around weak ties, public broadcasting, and heterogeneous interests 29. Relying on Twitter for news is associated with slightly lower conspiracy belief (approximately 3% lower), potentially because users are more likely to encounter corrective information, professional journalists, and network contacts outside their immediate ideological cohort 29. YouTube, however, remains an outlier: despite its asymmetrical structure, its recommendation algorithm has historically optimized for sustained engagement pathways that can lead users sequentially into deeper conspiratorial content silos 2932.
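The distinction between symmetrical and asymmetrical followership can be made concrete with reciprocity, a standard network measure: the share of directed ties that are returned. The sketch below is illustrative only; the function name, account names, and follow graphs are hypothetical, and the metric is offered as an illustration rather than as the classification method used in the cited research.

```python
def reciprocity(follows: set[tuple[str, str]]) -> float:
    """Fraction of directed follow edges (a -> b) that are matched by (b -> a)."""
    if not follows:
        return 0.0
    mutual = sum(1 for a, b in follows if (b, a) in follows)
    return mutual / len(follows)


# Hypothetical "friend" network: every tie must be accepted by both sides.
symmetric = {("ana", "ben"), ("ben", "ana"), ("ben", "cai"), ("cai", "ben")}
# Hypothetical broadcast network: many users follow one account that follows nobody back.
asymmetric = {("ana", "news"), ("ben", "news"), ("cai", "news"),
              ("ana", "ben"), ("ben", "ana")}

print(reciprocity(symmetric))   # 1.0
print(reciprocity(asymmetric))  # 0.4
```

A platform built on mutual friendship forces reciprocity toward 1.0, so content travels along ties that already carry interpersonal trust; a broadcast platform tolerates many one-way ties, which is what exposes users to sources outside their immediate circle.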

Network Topology and the Echo Chamber Effect

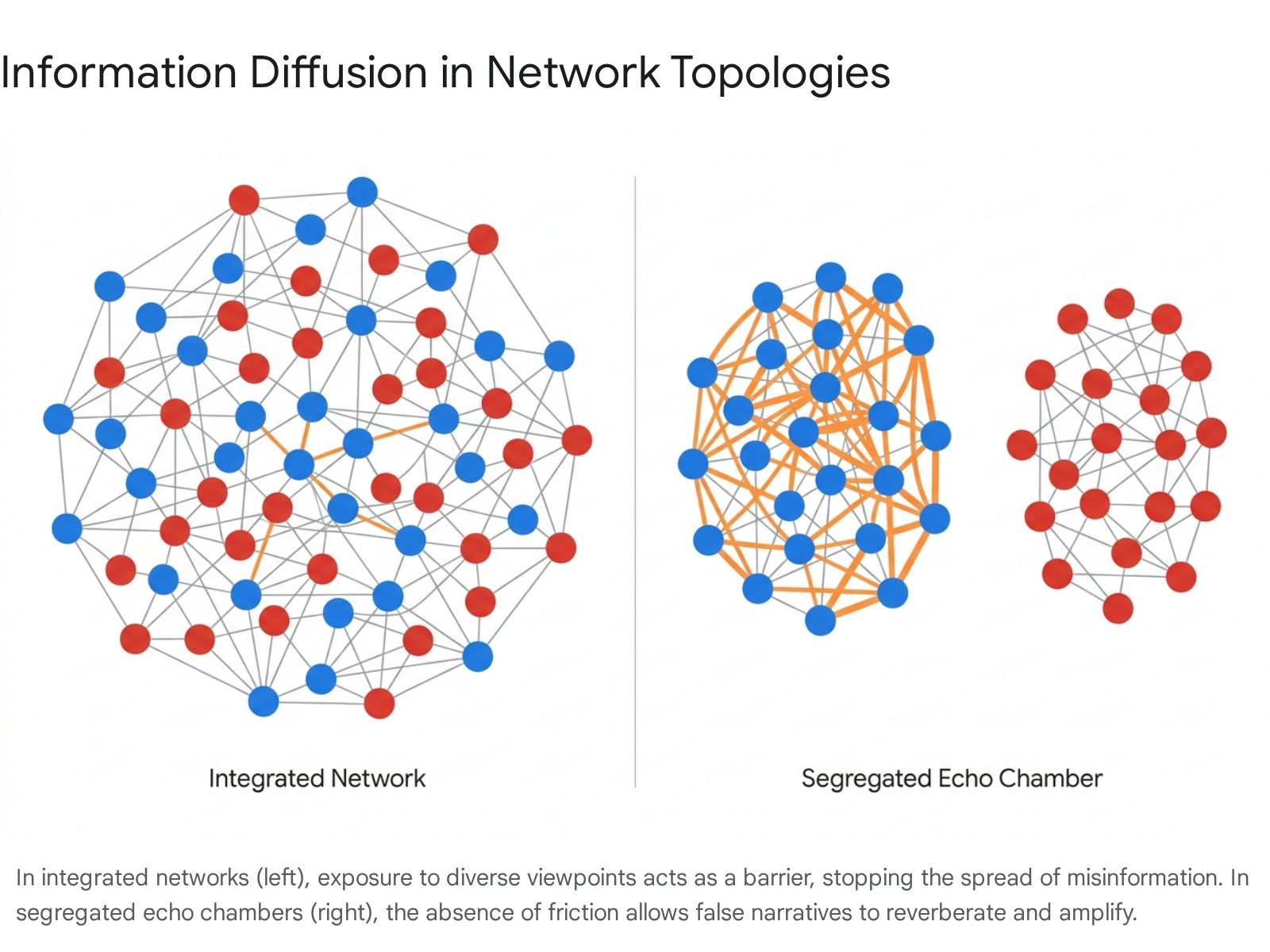

The concept of the "echo chamber" is fundamental to the digital dissemination of misinformation. Experimental macrosociological studies manipulating network architecture have demonstrated that partisan sorting systematically undermines the veracity of circulating information 3031.

In controlled simulations comparing "integrated networks" (where individuals interact with politically diverse contacts) with "segregated networks" (where contacts share homogenous political views), researchers observed that misinformation struggles to survive in integrated environments 3031. In integrated networks, susceptible individuals are disconnected from one another, and the presence of corrective counter-speech rapidly halts the spread of false claims 31.

Conversely, in segregated echo chambers, the network architecture brings together a concentrated supply of, and demand for, ideologically aligned news 30. Because individuals within these chambers are shielded from counterarguments and continuously validated by their peers, the echo chamber effectively lowers the threshold of plausibility. This allows highly improbable conspiracy theories, which the broader public would otherwise reject, to achieve viral propagation and become normalized within the subgroup 3233. Echo chambers are sustained both by homophily (the tendency to associate with similar individuals) and by algorithmic curation, which feeds users "more of what they like" and preserves the illusion of consensus 1834.
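The contrast between integrated and segregated networks can be illustrated with a linear threshold model, a standard contagion model from network science. This is a swapped-in toy technique, not the procedure used in the cited experiments. All names, the ring layouts, and the 0.5 adoption threshold are hypothetical: nodes L0-L9 find an ideologically aligned false claim plausible, nodes R0-R9 never adopt it (standing in for counter-speakers), and a susceptible node adopts once at least half of its neighbors have adopted. Both networks give every node exactly four neighbors; only the mixing differs.

```python
def ring_network(nodes: list[str], reach: int = 2) -> dict[str, set[str]]:
    """Undirected ring: each node links to its `reach` nearest nodes on each side."""
    n = len(nodes)
    adj: dict[str, set[str]] = {v: set() for v in nodes}
    for i, v in enumerate(nodes):
        for d in range(1, reach + 1):
            u = nodes[(i + d) % n]
            adj[v].add(u)
            adj[u].add(v)
    return adj


def run_cascade(adj: dict[str, set[str]], susceptible: set[str],
                seeds: set[str], threshold: float = 0.5) -> set[str]:
    """Linear threshold model: a susceptible node adopts once the fraction of its
    neighbors that have adopted reaches `threshold`. Repeats until nothing changes."""
    adopted = set(seeds)
    changed = True
    while changed:
        changed = False
        for v, neighbors in adj.items():
            if v in adopted or v not in susceptible or not neighbors:
                continue
            if len(neighbors & adopted) / len(neighbors) >= threshold:
                adopted.add(v)
                changed = True
    return adopted


# Hypothetical population: L-nodes find the claim plausible, R-nodes reject and counter it.
L = [f"L{k}" for k in range(10)]
R = [f"R{k}" for k in range(10)]
susceptible = set(L)
seeds = {"L0", "L1"}

# Segregated: two separate rings, so L-nodes only see other L-nodes.
segregated = {**ring_network(L), **ring_network(R)}
# Integrated: one ring alternating L0, R0, L1, R1, ... so half of each node's contacts
# come from the other group.
integrated = ring_network([v for pair in zip(L, R) for v in pair])

print("segregated adopters:", len(run_cascade(segregated, susceptible, seeds)))  # 10
print("integrated adopters:", len(run_cascade(integrated, susceptible, seeds)))  # 2
```

With the same two seeds, the claim saturates the segregated cluster but stalls in the integrated network, because every susceptible node there has two non-adopting neighbors diluting its exposure. Changing the threshold moves the tipping point, so the numbers illustrate the mechanism rather than any empirical rate.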

Automated Amplification and Superspreader Behavior

The actors driving dissemination on social platforms fall into two groups, human "superspreaders" and automated bot networks, each employing distinct rhetorical strategies 38. Linguistic analysis of over seven million tweets during the COVID-19 pandemic revealed that human superspreaders, highly active and influential individuals within echo chambers, tend to use complex language and substantive, argument-driven content 38. Their goal is to project authority, build comprehensive alternative narratives, and establish credibility with their human followers.

Bots, in contrast, deploy simpler language and rely heavily on the structural affordances of the platform, such as aggressive cross-hashtagging and rapid retweeting 38. Their objective is not to persuade through argument, but to hijack trending topics, artificially inflate engagement metrics to trigger algorithmic promotion, and increase the sheer accessibility and visibility of the conspiracy claim across unrelated data streams 138.
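The amplification strategy can be sketched with a deliberately simple, hypothetical promotion rule. Real platform ranking systems are proprietary and far more complex; the function name `is_promoted`, the 500-engagements-per-hour threshold, the three-hour window, and the hourly counts are all invented for illustration. The point is only that a rule keyed to raw engagement volume cannot distinguish organic interest from coordinated automated activity.

```python
def is_promoted(engagements_per_hour: list[int], threshold: float = 500,
                window: int = 3) -> bool:
    """Hypothetical trending rule: promote a topic when its average hourly
    engagement over the most recent `window` hours reaches `threshold`."""
    recent = engagements_per_hour[-window:]
    if not recent:
        return False
    return sum(recent) / len(recent) >= threshold


# Invented hourly engagement counts for one conspiracy hashtag.
organic = [120, 150, 180, 200]   # genuine human activity
bot_burst = [0, 300, 400, 400]   # coordinated retweets and cross-hashtagging by bots
combined = [o + b for o, b in zip(organic, bot_burst)]

print(is_promoted(organic))   # False: recent average is about 177 per hour
print(is_promoted(combined))  # True: recent average is about 543 per hour
```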

Subcultural Incubation and Alternative Platforms

The origin points for modern conspiracy theories rarely lie on mainstream platforms. Instead, anonymous or pseudonymous imageboards (such as 4chan and 8kun) and alternative networks (such as Gab, Parler, and Telegram) act as initial incubators for "junk information" and propaganda 353637.

Research indicates that the proportion of problematic or sensationalist content shared on 4chan's political boards is roughly 33%, compared to approximately 20% in highly political Reddit communities 36. These fringe spaces utilize an individualistic knowledge culture - often framed as "doing one's own research" - to iteratively build out conspiracy narratives 37. Once a narrative reaches a threshold of coherence, it is laundered into mainstream platforms through cross-platform linking, memes, and the process of "normiefication" 36.

Telegram, functioning as a hybrid between public broadcasting and closed messaging, has seen significant growth as a "platform of last resort" for deplatformed actors 37. Because Telegram allows users to forward messages seamlessly between disparate channels without algorithmic filtering, it facilitates the rapid connection of far-right, anti-vaccine, and general conspiratorial communities into a highly dynamic, interconnected network 37.

Comparative Table of Platform Dissemination Mechanics

| Platform Category | Examples | Network Structure | Impact on Conspiracy Belief |
|---|---|---|---|
| Symmetrical / Closed | WhatsApp, Facebook, Facebook Messenger | Strong offline ties, mutual connections, homogeneous groups. | Positive association (roughly 3% to 5% higher belief). Information inherits interpersonal trust. 2932 |
| Asymmetrical / Open | X (Twitter) | Weak ties, public broadcasting, heterogeneous exposure. | Slight negative association (approximately 3% lower belief). Exposure to professional news and counter-speech limits spread. 29 |
| Algorithmic Video | YouTube, TikTok | Interest-based clusters, engagement-driven recommendations. | Positive association. Recommendation algorithms sequence increasingly extreme content. 293235 |
| Fringe / Anonymous | 4chan, Telegram, Voat | Decentralized, unmoderated, highly partisan echo chambers. | Incubation zones. Used for narrative creation and coordination before mainstream laundering. 363738 |

The Interplay of Online Visibility and Offline Social Networks

Despite the intense academic focus on algorithms and digital interfaces, sociological research increasingly indicates that offline social networks and interpersonal ties remain the most potent catalysts in conspiracy adoption.

Evaluating Trust Vectors in Narrative Endorsement

A 2025 sociological study analyzing the aftermath of the attempted assassination of Donald Trump provided critical insights into the comparative potency of different information vectors 39. In the days immediately following the assassination attempt, majorities of the public were exposed to competing conspiracies (e.g., that the shooting was orchestrated by Democratic operatives or, conversely, staged by Republicans to garner sympathy) 28.

When surveyed, most respondents identified social media as the primary source where they encountered these theories (over 50% for both left- and right-leaning theories) 28. However, statistical analysis revealed that consuming information on social media was not consistently associated with actually endorsing the narratives 39. Some studies even found that active participation on social media, such as commenting and engaging with diverse viewpoints, reduced belief in conspiracies among certain demographics 19.

In stark contrast, information received through direct interpersonal ties (friends, family, offline acquaintances) was closely and causally linked to firmer belief in the conspiratorial narratives, regardless of the individual's political alignment 2839. The data demonstrates that trust operates as the primary vector for belief adoption. When a conspiracy theory is introduced or validated by a trusted offline contact, the psychological barrier to acceptance is significantly lower than when encountering the same claim via an anonymous algorithmic feed 19. This reinforces the finding that the internet functions primarily as a distribution mechanism for raw material, but offline socialization often provides the final validation required for genuine belief 39.

Content Moderation Policies and Ecosystem Resilience

Because platform architecture strongly shapes the flow of misinformation, the content moderation policies of major technology corporations carry substantial socio-political consequences.

Crowdsourced Moderation and the Elimination of Fact-Checking

In early 2025, Meta announced a sweeping restructuring of its content moderation framework, terminating its long-standing third-party fact-checking program in the United States 44404142. The company opted to replace professional fact-checking with a crowdsourced "Community Notes" system modeled on the one implemented by X 4041. Company leadership cited the prioritization of "free speech" and a desire to decentralize moderation to community consensus, thereby mitigating accusations of political bias 444041.

However, researchers, academics, and fact-checking organizations emphasize the severe limitations of this crowdsourced model in curbing the spread of conspiracy theories. Studies on the efficacy of Community Notes indicate that the requirement for consensus across diverse political perspectives means that highly polarizing conspiracy theories often fail to receive a public note at all; partisan users rarely cross ideological lines to validate corrections that damage their own narratives 41434445. Furthermore, the crowdsourcing process is structurally slower than the viral diffusion of misinformation. The latency required to draft, vote on, and publish a community note allows conspiracy theories to achieve maximum penetration during the critical early stages of a news event 4445.
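The consensus requirement can be illustrated with a simplified bridging rule. This is an assumption-laden stand-in, not the actual Community Notes scoring algorithm (X's published algorithm is based on matrix factorization of rating data rather than fixed group quotas); the function `note_published`, the two rater groups, and the thresholds are hypothetical. It shows why a note on a polarizing claim can stall even when one side rates it overwhelmingly helpful.

```python
def note_published(ratings: list[tuple[str, bool]],
                   min_per_group: int = 5, min_helpful: float = 0.6) -> bool:
    """Hypothetical bridging rule: publish a note only if raters from at least two
    perspective groups each rate it helpful at or above `min_helpful`."""
    by_group: dict[str, list[bool]] = {}
    for group, helpful in ratings:
        by_group.setdefault(group, []).append(helpful)
    if len(by_group) < 2:
        return False  # no cross-perspective agreement is possible yet
    return all(
        len(votes) >= min_per_group and sum(votes) / len(votes) >= min_helpful
        for votes in by_group.values()
    )


# Invented ratings: a note on a low-salience claim vs. one on a polarizing conspiracy.
low_salience = (
    [("left", True)] * 8 + [("left", False)] * 2
    + [("right", True)] * 7 + [("right", False)] * 3
)
polarizing = (
    [("left", True)] * 9 + [("left", False)] * 1
    + [("right", True)] * 2 + [("right", False)] * 8
)

print(note_published(low_salience))  # True: both groups rate it at least 70% helpful
print(note_published(polarizing))    # False: one group rates it only 20% helpful
```

Because the rule cannot fire until enough raters from every group have weighed in, it also gives a simple intuition for the publication lag described above.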

The implications of dismantling structured fact-checking are particularly acute on a global scale. While Meta's initial rollback was focused on the United States, experts warn that extending this crowdsourced model to the Global South - where baseline institutional trust is lower, ethnic and religious tensions are frequently weaponized, and WhatsApp or Facebook function as the primary internet portals - could precipitate severe real-world violence and democratic instability 4246. In regions lacking robust institutional safeguards, removing authoritative friction leaves vulnerable information ecosystems entirely dependent on algorithmically prioritized engagement and partisan crowds 4246. Advertisers and digital market analysts have similarly expressed concern that the degradation of data quality and the rise of unmitigated misinformation will erode brand safety and overall user trust 47.

Deplatforming Efficacy and Community Migration

When platforms choose to implement hard moderation tactics - such as deplatforming or permanently banning conspiracy communities - the effects are complex and double-edged. A comparative analysis of communities banned from Reddit (such as the QAnon-affiliated "GreatAwakening") tracked user migration to unmoderated alternative platforms like Voat 38.

The data demonstrates that banning influential conspiracy nodes effectively sanitizes the original platform. It reduces total visibility, decreases hate speech among remaining users, and protects casual users from incidental exposure 3138. However, conspiracy communities exhibit high resilience. The most dedicated users readily migrate to alternative platforms (e.g., Telegram, Voat, Gab, Parler) where they quickly recreate their previous social networks 353738.

Once on these alternative platforms, devoid of any moderation guidelines or dissenting voices, the communities condense into entirely segregated echo chambers. Studies tracking these migrations show that the linguistic toxicity and ideological radicalization of the migrating users measurably increase in the new, unmoderated environment 38. Thus, while deplatforming succeeds as a public health measure for the mainstream platform by limiting broad transmission, it inadvertently accelerates the extremism of the core believers who are pushed into the darker corners of the web 3848.

Conclusion

The intersection of conspiracy theories and digital media is defined by a complex interplay of network topology, human psychology, and platform design. Exhaustive longitudinal data contradict the popular narrative that the internet has unilaterally generated an escalating wave of conspiracy theory belief; baseline human susceptibility to these narratives has remained relatively stable over the past half-century 25. Susceptibility is far more accurately predicted by a nation's institutional stability, macroeconomic inequality, and the individual psychological predisposition toward conspiracy thinking 62021.

However, the internet has fundamentally transformed the mechanics of dissemination. Digital platforms algorithmically reward engagement, allowing a small minority of dedicated actors and automated bots to generate unprecedented visibility and creating a false consensus that distorts public perception 1738. Online network segregation allows believers to organize into impenetrable echo chambers where false narratives are validated without the friction of counter-speech 3031. Given that offline, interpersonal ties remain the most potent catalyst for cementing actual belief, social media's primary danger appears to lie in its efficiency as a sorting mechanism 39: it identifies vulnerable individuals, clusters them into radicalizing peer groups, and empowers them to challenge the epistemological foundations of society. As major platforms pivot away from authoritative fact-checking toward crowdsourced models, the structural advantages afforded to conspiracy theories are likely to intensify, requiring nuanced strategies emphasizing media literacy and algorithmic transparency to mitigate future harms 424446.