Consistent histories interpretation of quantum mechanics

Introduction to the Framework

The Consistent Histories (CH) framework, frequently referred to in broader theoretical literature as the decoherent histories approach, represents a rigorous mathematical and conceptual reformulation of quantum mechanics designed to eliminate the reliance on external observers, macroscopic measurement apparatuses, and the ad hoc postulate of wavefunction collapse 12. First introduced by Robert Griffiths in 1984 and independently expanded by Roland Omnès, Murray Gell-Mann, and James Hartle in subsequent years, the framework was explicitly constructed to extend quantum mechanics into domains where the orthodox Copenhagen interpretation fundamentally fails - most notably, quantum cosmology and the physics of closed macroscopic systems 134.

Standard textbook quantum mechanics relies heavily on a bifurcated ontology: a physical system evolves deterministically according to the unitary Schrödinger equation until an "observation" or "measurement" occurs, at which point the system's wavefunction stochastically "collapses" into an eigenstate of the measured observable 156. This introduces the deeply problematic "measurement problem" and the Heisenberg cut - the arbitrary boundary between the quantum system and the classical observer 456. The CH framework abolishes this division entirely. Instead, it posits that quantum mechanics applies universally across all scales and that all physical time dependence is fundamentally stochastic, with deterministic classical evolution serving merely as a limiting case where probabilities approach unity under specific macroscopic conditions 1.

The core ambition of the Consistent Histories approach is to define a coherent logical structure for assigning objective probabilities to sequences of quantum events - termed "histories" - without requiring those events to be registered by an observer or an external environment 237. It achieves this through a strict set of logical constraints known as "consistency conditions," which dictate when classical probability theory can be safely applied to quantum phenomena without generating logical contradictions 210. The framework restricts each line of reasoning to a set of mutually compatible (commuting) properties, which avoids the paradoxes that arise from combining non-commuting ones. In this way CH provides a complete, observer-independent interpretation of quantum theory that remains fully consistent with empirical observations and the formal structure of Hilbert space 14.

Context and Theoretical Motivation

To understand the necessity of the Consistent Histories framework, one must examine the theoretical impasses of late-twentieth-century physics. The traditional Copenhagen interpretation, championed by Niels Bohr and Werner Heisenberg, strictly limits quantum mechanics to a set of rules for predicting the outcomes of laboratory experiments 56. In this view, the universe is divided into a microscopic quantum realm of probabilities and a macroscopic classical realm of definite outcomes. The interaction between the two triggers a wavefunction collapse 55.

However, this instrumentalist approach breaks down when attempting to apply quantum mechanics to the universe as a whole. Quantum cosmology, a field that matured in the 1980s, requires a framework that can describe the early universe's evolution from a quantum state into a macroscopic spacetime 489. Because the universe is, by definition, a closed system containing everything, there can be no external observer to "measure" the cosmos and collapse its wavefunction 413. James Hartle and Murray Gell-Mann recognized that quantum cosmology could not be given a coherent interpretation without an observer-independent formulation of quantum mechanics 18.

Simultaneously, Robert Griffiths sought to resolve the paradoxes of quantum foundations - such as Schrödinger's cat, the Einstein-Podolsky-Rosen (EPR) paradox, and Bell's theorem - by formalizing a language that could describe the objective properties of quantum systems between measurements 13. Griffiths's motivation was to establish a framework where one could retrodict the past states of a particle (e.g., asserting that a particle passed through a specific slit in a double-slit apparatus) based on present data, provided the appropriate logical rules were followed 4. The convergence of Griffiths's logical rigor and Gell-Mann and Hartle's cosmological ambitions resulted in the unified Consistent Histories approach.

Mathematical Formalism of Histories

The Consistent Histories approach shifts the analytical focus of quantum mechanics from the instantaneous state of a system (the wavefunction) to the temporal trajectory of physical properties. The wavefunction is demoted from its status as the exclusive ontological representation of reality to a pre-probabilistic tool used to calculate the likelihood of various objective historical sequences 101516.

Projection Operators and Event Sequences

In the CH formalism, a physical property of a quantum system at a specific time is mathematically represented by an orthogonal projection operator (or "projector"), $P$, acting on the system's Hilbert space, $\mathcal{H}$. A projector satisfies the conditions $P = P^\dagger$ and $P^2 = P$, corresponding to a specific subspace of the Hilbert space 1. For instance, a proposition such as "the particle is located in region X" corresponds to the projector onto the subspace of states localized in region X.

To describe a system comprehensively at a given time, the CH framework requires a Projective Decomposition of the Identity (PDI). A PDI is a set of mutually orthogonal projectors $\{P_i\}$ that sum to the identity operator $I$: $$\sum_i P_i = I, \quad P_i P_j = \delta_{ij} P_i$$ This complete orthogonal set ensures that the properties being considered are mutually exclusive and exhaustive. It forms the exact quantum analogue of a classical sample space in probability theory 1.
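The PDI conditions are straightforward to verify numerically. The following plain-Python sketch uses no external libraries: matrices are nested lists, and the helper names are hypothetical. It checks Hermiticity, idempotence, mutual orthogonality, and completeness for a few qubit projectors.

```python
# Plain-Python check of the PDI conditions; matrices are nested lists.

def matmul(a, b):
    """Product of two square matrices."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def dagger(a):
    """Conjugate transpose."""
    n = len(a)
    return [[complex(a[j][i]).conjugate() for j in range(n)] for i in range(n)]


def close(a, b, tol=1e-12):
    """Entrywise comparison of two square matrices."""
    n = len(a)
    return all(abs(a[i][j] - b[i][j]) < tol for i in range(n) for j in range(n))


def is_pdi(projectors, tol=1e-12):
    """True if every P is Hermitian and idempotent, distinct projectors are
    mutually orthogonal (P_i P_j = 0), and together they sum to the identity."""
    n = len(projectors[0])
    identity = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
    zero = [[0.0] * n for _ in range(n)]
    for i, p in enumerate(projectors):
        if not (close(p, dagger(p), tol) and close(matmul(p, p), p, tol)):
            return False
        for q in projectors[i + 1:]:
            if not close(matmul(p, q), zero, tol):
                return False
    total = [[sum(p[i][j] for p in projectors) for j in range(n)]
             for i in range(n)]
    return close(total, identity, tol)


# Spin-z projectors |0><0|, |1><1| and spin-x projectors |+><+|, |-><-|.
Z_UP = [[1.0, 0.0], [0.0, 0.0]]
Z_DOWN = [[0.0, 0.0], [0.0, 1.0]]
X_UP = [[0.5, 0.5], [0.5, 0.5]]
X_DOWN = [[0.5, -0.5], [-0.5, 0.5]]

print(is_pdi([Z_UP, Z_DOWN]))  # True
print(is_pdi([X_UP, X_DOWN]))  # True
print(is_pdi([Z_UP, X_UP]))    # False: not orthogonal, does not sum to I
```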

Definition of a History

A "history" in this framework is defined as a time-ordered sequence of these quantum events (properties) at successive times $t_1, t_2, \dots, t_n$. Mathematically, a history $Y^\alpha$ is represented by the tensor product of the projectors at these respective times: $$Y^\alpha = P_1^{\alpha_1}(t_1) \odot P_2^{\alpha_2}(t_2) \odot \dots \odot P_n^{\alpha_n}(t_n)$$ where $\alpha$ is a multi-index $(\alpha_1, \alpha_2, \dots, \alpha_n)$ that labels the specific sequence of events chosen from the available PDIs at each time step 10.

A collection of such histories, generated by choosing one projector from a complete PDI at each time step, constitutes a "family of histories" or a "framework" 110. Because the PDIs at each time step are exhaustive, the sum of all possible histories within a family covers the entire space of possibilities for that system's evolution.
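The claim that a family exhausts all possibilities can be checked directly. This sketch is an illustration that makes one assumption: the symbol $\odot$ is realized as an ordinary Kronecker product on a "history space" built from one copy of the qubit Hilbert space per time. Under that assumption, a three-time family built from spin-z, spin-x, and spin-z PDIs has $2 \times 2 \times 2 = 8$ histories. Their history operators $Y^\alpha$ add up to the identity on the 8-dimensional history space. The function names are hypothetical.

```python
from itertools import product


def kron(a, b):
    """Kronecker (tensor) product of two square matrices."""
    n, m = len(a), len(b)
    return [[a[i // m][j // m] * b[i % m][j % m] for j in range(n * m)]
            for i in range(n * m)]


def history_operator(projectors):
    """Y^alpha = P_1 (x) P_2 (x) ... (x) P_n on the history Hilbert space."""
    out = projectors[0]
    for p in projectors[1:]:
        out = kron(out, p)
    return out


def family(pdis):
    """Every history obtained by choosing one projector from the PDI at each
    time; returns a list of (multi_index, Y^alpha) pairs."""
    return [(alpha, history_operator([pdi[k] for pdi, k in zip(pdis, alpha)]))
            for alpha in product(*(range(len(pdi)) for pdi in pdis))]


Z = [[[1, 0], [0, 0]], [[0, 0], [0, 1]]]                    # spin-z PDI
X = [[[0.5, 0.5], [0.5, 0.5]], [[0.5, -0.5], [-0.5, 0.5]]]  # spin-x PDI

fam = family([Z, X, Z])      # three times: z, then x, then z
dim = 2 ** 3                 # history space H (x) H (x) H
total = [[sum(y[i][j] for _, y in fam) for j in range(dim)] for i in range(dim)]
is_identity = all(abs(total[i][j] - (1 if i == j else 0)) < 1e-12
                  for i in range(dim) for j in range(dim))
print(len(fam), is_identity)  # 8 True
```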

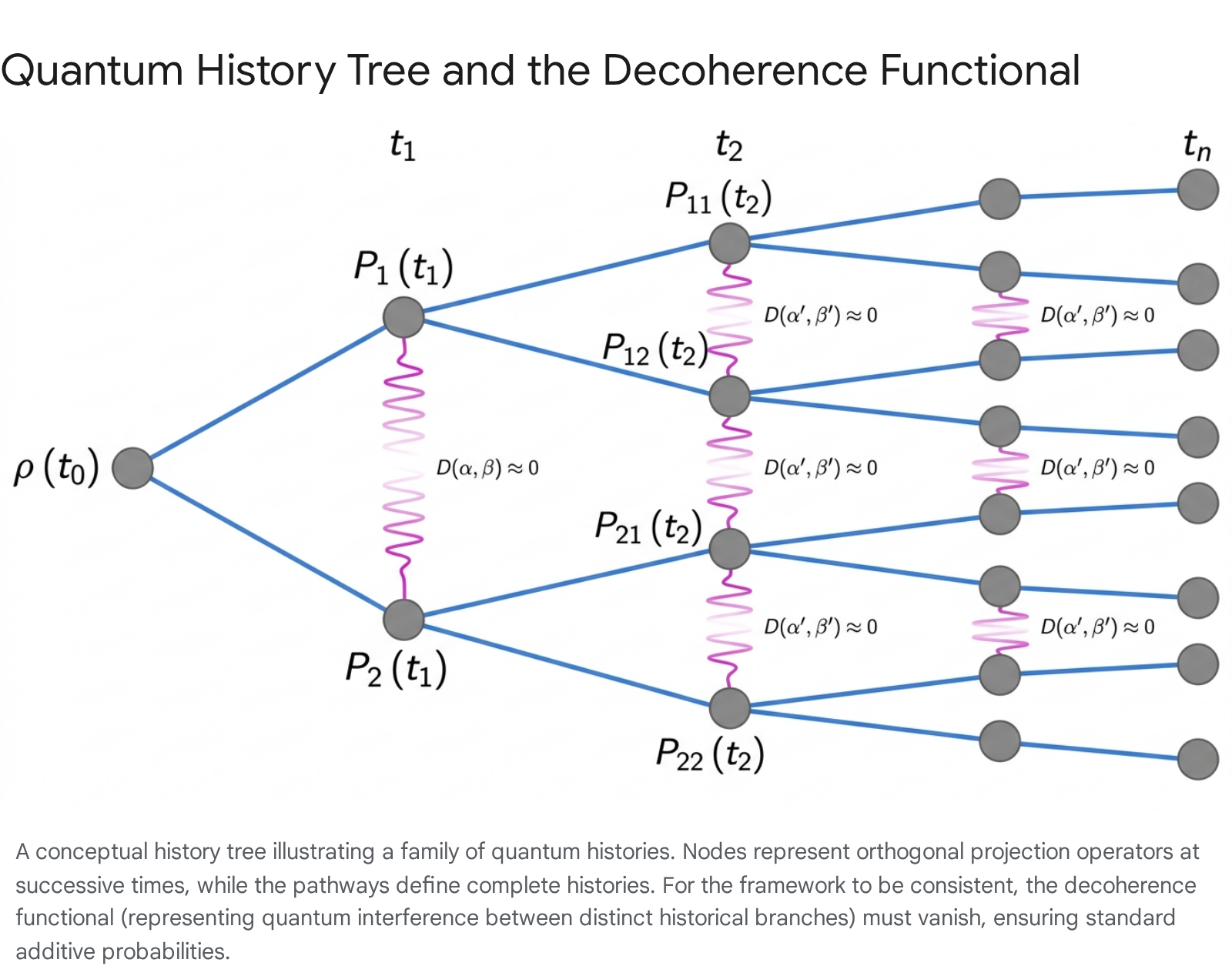

History Trees and Branching Sequences

A family of histories can be visualized as a branching tree structure. The root of the tree represents the initial state of the quantum system. At each subsequent time step $t_k$, the tree branches into mutually exclusive paths corresponding to the orthogonal projectors in the PDI chosen for that time 71718.

Each distinct path from the root to a terminal leaf represents a single, complete history within the family. Unlike the continuous, deterministic evolution of a classical trajectory, a quantum history tree acknowledges the stochastic nature of the universe. The system does not possess a single pre-determined path; rather, the tree maps the complete sample space of potential temporal trajectories 318.

The Decoherence Functional

The central technical mechanism of the Consistent Histories approach is the assignment of probabilities to the histories mapped out in the history tree.

However, owing to the wave-like nature of quantum mechanics, alternative histories can interfere with one another. When quantum interference is present, the assignment of standard additive probabilities becomes mathematically invalid, leading to violations of the Kolmogorov axioms of classical probability theory 4811. To resolve this, the framework introduces the "decoherence functional," a complex-valued measure of the quantum interference between two distinct histories within a family 48.

Definition and Trace Formulation

For a closed quantum system with an initial density matrix $\rho$ at time $t_0$, and unitary time-evolution operators $U(t_k, t_{k-1})$ bridging the dynamics between times $t_{k-1}$ and $t_k$, we define the class operator (or chain operator) $C_\alpha$ for a specific history $\alpha$ as: $$C_\alpha = P_n^{\alpha_n} U(t_n, t_{n-1}) P_{n-1}^{\alpha_{n-1}} \cdots P_1^{\alpha_1} U(t_1, t_0)$$ This operator chains together the unitary evolution of the system with the sequential projections onto the specified properties. Each projector $P_k^{\alpha_k}$ acts only after the state has been evolved from $t_{k-1}$ to $t_k$.

The decoherence functional $D(\alpha, \beta)$ between two histories $\alpha$ and $\beta$ is then defined mathematically as: $$D(\alpha, \beta) = \text{Tr}\left[ C_\alpha \rho C_\beta^\dagger \right]$$ where $\text{Tr}$ denotes the trace operation over the full Hilbert space 41120.

The diagonal elements of the decoherence functional, where $\alpha = \beta$, give the weights assigned to individual histories by the extended Born rule: $$p(\alpha) = D(\alpha, \alpha) = \text{Tr}\left[ C_\alpha \rho C_\alpha^\dagger \right]$$ These weights are nonnegative. However, they are guaranteed to sum to one and to behave as additive probabilities only when the consistency condition introduced below holds. The critical focus of the framework therefore lies on the off-diagonal elements ($\alpha \neq \beta$), which quantify the interference between distinct historical branches 813.
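The trace formula can be checked numerically on the smallest possible example. The sketch below is a hypothetical toy model, not taken from the CH literature, with these assumptions:

- a single spin-1/2 with a trivial Hamiltonian, so every $U$ is the identity;
- the initial state $\rho = |0\rangle\langle 0|$ (spin up along z);
- two-time families built from spin-z and spin-x PDIs.

Each projector is applied after the evolution to its time, in the Schrödinger-picture ordering. The helper names (`chain_operator`, `decoherence_matrix`) are illustrative.

```python
from itertools import product


def matmul(a, b):
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def dagger(a):
    n = len(a)
    return [[complex(a[j][i]).conjugate() for j in range(n)] for i in range(n)]


def trace(a):
    return sum(a[i][i] for i in range(len(a)))


def chain_operator(projs, unitaries):
    """C_alpha = P_n U(t_n, t_{n-1}) ... P_1 U(t_1, t_0); unitaries[k] evolves
    the state up to the time at which projs[k] is applied."""
    c = None
    for p, u in zip(projs, unitaries):
        step = matmul(p, u)
        c = step if c is None else matmul(step, c)
    return c


def decoherence_matrix(rho, pdis, unitaries):
    """Return (histories, D) with D[a][b] = Tr[C_a rho C_b^dagger]."""
    histories = list(product(*(range(len(pdi)) for pdi in pdis)))
    chains = [chain_operator([pdi[k] for pdi, k in zip(pdis, h)], unitaries)
              for h in histories]
    d = [[trace(matmul(matmul(ca, rho), dagger(cb))) for cb in chains]
         for ca in chains]
    return histories, d


def max_off_diagonal(d):
    n = len(d)
    return max(abs(d[a][b]) for a in range(n) for b in range(n) if a != b)


Z = [[[1, 0], [0, 0]], [[0, 0], [0, 1]]]
X = [[[0.5, 0.5], [0.5, 0.5]], [[0.5, -0.5], [-0.5, 0.5]]]
I2 = [[1, 0], [0, 1]]
RHO = [[1, 0], [0, 0]]   # initial state |0><0|

# Toy model: trivial Hamiltonian, so U = I between all times.
hist_a, d_a = decoherence_matrix(RHO, [Z, X], [I2, I2])  # z at t1, x at t2
hist_b, d_b = decoherence_matrix(RHO, [X, Z], [I2, I2])  # x at t1, z at t2

print(max_off_diagonal(d_a))                         # 0.0 -> consistent
print(max_off_diagonal(d_b))                         # 0.25 -> inconsistent
print([round(d_a[i][i].real, 3) for i in range(4)])  # [0.5, 0.5, 0.0, 0.0]
```

Measuring z first and x second gives a consistent family with weights 1/2, 1/2, 0, 0. Reversing the order produces real off-diagonal terms of magnitude 1/4, so that family cannot be assigned probabilities.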

Consistency Conditions

For a family of histories to be assigned physically meaningful, classical-like probabilities, the interference between any two distinct histories within the family must vanish. This requirement is known as the "consistency condition" 278. If the condition is met, the family of histories is deemed a "consistent family" or "framework," and logical deductions can be safely made within it.

Several variations of this consistency condition exist in the theoretical literature, each with different implications for physical records and classicality:

1. Weak Decoherence (Consistency): The absolute minimum requirement for assigning additive probabilities is that the real part of the off-diagonal terms must vanish: $\text{Re}[D(\alpha, \beta)] = 0$ for all $\alpha \neq \beta$. When this holds, probability sum rules are satisfied without contradiction 411.
2. Medium Decoherence: The standard condition utilized in the Gell-Mann and Hartle formulation requires that the entire complex functional vanishes: $D(\alpha, \beta) = 0$ for all $\alpha \neq \beta$. This stricter condition ensures more robust quasi-classical behavior 412.
3. Strong Decoherence: Further conditions require that the vanishing of interference is resilient under time-reversal and implies the existence of generalized records. Strong decoherence guarantees that if a system follows a specific history, permanent orthogonal records of that history are imprinted into the surrounding Hilbert space 1013.
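The difference between the weak and medium conditions can be stated as a test on the matrix of $D(\alpha, \beta)$ values. The following minimal sketch uses hypothetical 2x2 matrices chosen by hand, not computed from any physical system, and the function names are illustrative. It also computes the weight of the coarse-grained history "$\alpha$ or $\beta$", which equals the sum of the separate weights only when $\text{Re}[D(\alpha, \beta)] = 0$.

```python
def consistency_level(d, tol=1e-10):
    """Classify a decoherence matrix d, where d[a][b] = D(alpha, beta).

    'medium' -> every off-diagonal entry vanishes;
    'weak'   -> only the real parts of the off-diagonal entries vanish;
    otherwise 'inconsistent'. Strong decoherence (existence of records)
    cannot be read off d alone, so it is not tested here.
    """
    n = len(d)
    off = [complex(d[a][b]) for a in range(n) for b in range(n) if a != b]
    if all(abs(z) < tol for z in off):
        return "medium"
    if all(abs(z.real) < tol for z in off):
        return "weak"
    return "inconsistent"


def union_probability(d, a, b):
    """Weight of the coarse-grained history 'alpha or beta':
    D(a,a) + D(b,b) + 2 Re D(a,b)."""
    return (complex(d[a][a]) + complex(d[b][b])).real + 2 * complex(d[a][b]).real


# Hypothetical Hermitian 2x2 decoherence matrices whose entries sum to 1.
D_MEDIUM = [[0.5, 0.0], [0.0, 0.5]]
D_WEAK = [[0.5, 0.1j], [-0.1j, 0.5]]
D_BAD = [[0.25, 0.25], [0.25, 0.25]]

for name, d in [("D_MEDIUM", D_MEDIUM), ("D_WEAK", D_WEAK), ("D_BAD", D_BAD)]:
    # classification, weight of the union, sum of the separate weights
    print(name, consistency_level(d), union_probability(d, 0, 1),
          d[0][0] + d[1][1])
# D_MEDIUM medium 1.0 1.0
# D_WEAK weak 1.0 1.0
# D_BAD inconsistent 1.0 0.5
```

In the last case the weight of "$\alpha$ or $\beta$" (1.0) differs from the sum of the individual weights (0.5), which is the violation of the probability sum rules that the consistency conditions exclude.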

Partial Trace and Environmental Coupling

In realistic physical scenarios, perfect closed-system isolation is rare. To account for this, the formalism can be adapted to consider systems coupled to an environment. Researchers define a partial-trace decoherence functional: $$D_{pt}(\alpha, \beta) = \text{Tr}_E \left[ C_\alpha \rho C_\beta^\dagger \right]$$ where $\text{Tr}_E$ represents the partial trace over the environmental degrees of freedom 1011. When environmental interactions rapidly orthogonalize the environmental states corresponding to different system histories, this partial trace functional forces the interference terms to zero. This mathematical mechanism formally links the abstract logical rules of Consistent Histories with the physical dynamics of environmental decoherence 112014.
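A two-qubit toy model illustrates how an environment suppresses the partial-trace functional. In this hypothetical sketch:

- a system qubit starts in $(|0\rangle + |1\rangle)/\sqrt{2}$ and an environment qubit in $|0\rangle$;
- the coupling is modeled as a CNOT gate that copies the system's z-value into the environment.

For the single-time histories "system is 0" and "system is 1", $D_{pt}$ is computed with and without the coupling. The basis ordering (system first) and the function names are assumptions of the sketch.

```python
import math


def matmul(a, b):
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def dagger(a):
    n = len(a)
    return [[complex(a[j][i]).conjugate() for j in range(n)] for i in range(n)]


def matvec(m, v):
    return [sum(m[i][j] * v[j] for j in range(len(v))) for i in range(len(m))]


def outer(v):
    """|v><v| for a state vector v."""
    return [[x * complex(y).conjugate() for y in v] for x in v]


def partial_trace_env(m, dim_s=2, dim_e=2):
    """Tr_E of an operator on H_S (x) H_E, with basis index s * dim_e + e."""
    return [[sum(m[i * dim_e + k][j * dim_e + k] for k in range(dim_e))
             for j in range(dim_s)] for i in range(dim_s)]


R = 1 / math.sqrt(2)
PSI_FREE = [R, 0.0, R, 0.0]            # |+>_S |0>_E, no interaction
CNOT = [[1, 0, 0, 0], [0, 1, 0, 0], [0, 0, 0, 1], [0, 0, 1, 0]]
PSI_COUPLED = matvec(CNOT, PSI_FREE)   # (|00> + |11>)/sqrt(2)

P0 = [[1, 0, 0, 0], [0, 1, 0, 0], [0, 0, 0, 0], [0, 0, 0, 0]]  # |0><0|_S (x) I_E
P1 = [[0, 0, 0, 0], [0, 0, 0, 0], [0, 0, 1, 0], [0, 0, 0, 1]]  # |1><1|_S (x) I_E


def d_pt_off_diagonal(psi):
    """Partial-trace functional D_pt(0, 1) = Tr_E[P0 rho P1^dagger]."""
    rho = outer(psi)
    return partial_trace_env(matmul(matmul(P0, rho), dagger(P1)))


for label, psi in [("isolated", PSI_FREE), ("coupled", PSI_COUPLED)]:
    m = d_pt_off_diagonal(psi)
    print(label, max(abs(x) for row in m for x in row))
# isolated: about 0.5; coupled: 0.0
```

Without the environment, the off-diagonal block of $D_{pt}$ has magnitude about 1/2. Once the environment records the z-value, the two environment states are orthogonal and $D_{pt}$ vanishes. For these single-time histories the full-trace functional $\text{Tr}[P_0 \rho P_1]$ is zero in both cases. This shows that the partial-trace condition is the more demanding of the two.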

The Single-Framework Rule and Quantum Logic

The deepest philosophical and logical shift demanded by the Consistent Histories framework is the abandonment of universal classical logic in favor of a restricted quantum logic governed by the "Single-Framework Rule" 1.

In classical mechanics, the physical properties of a system form a Boolean algebra; one can arbitrarily combine any set of valid propositions using logical conjunctions (AND) and disjunctions (OR) without fear of contradiction. In quantum mechanics, observables are represented by operators that do not necessarily commute. Non-commuting projectors cannot be simultaneously assigned definite truth values without generating logical paradoxes, a reality famously codified by the Kochen-Specker theorem 124. Examples include the projectors for position and momentum intervals, or for spin-x and spin-z.

Principles of Framework Selection

To prevent paradoxes while maintaining a closed-system, objective ontology, Robert Griffiths instituted the Single-Framework Rule. This rule dictates that any logical deduction, probabilistic calculation, or physical reasoning must be confined entirely within a single consistent family of histories (a single framework) 1413. It is strictly illegitimate to combine statements, probabilities, or inferences drawn from two mutually incompatible frameworks.

The application of this rule is guided by four operational principles:

1. Liberty: A physicist is entirely free to choose any valid, consistent framework to analyze a quantum system. There are no fundamental restrictions on which perspective one might adopt, provided the chosen framework satisfies the consistency conditions 124.
2. Equality: No single consistent framework is fundamentally more "true," "fundamental," or "real" than any other. All consistent frameworks are equally valid mathematical representations of the underlying quantum reality 124.
3. Incompatibility: Logical reasoning cannot bridge incompatible frameworks. If Framework A and Framework B utilize non-commuting projectors, their propositions cannot be conjoined. Statements of the form "Property X from Framework A AND Property Y from Framework B" are declared mathematically and physically meaningless 1.
4. Utility: While all frameworks are equally valid, some are vastly more useful for answering specific physical questions. If an experimenter wishes to analyze the macroscopic readout of a laboratory pointer, they must utilize a framework that includes projectors corresponding to the macroscopic position of that specific pointer. Other frameworks, while mathematically consistent, will be useless for describing that specific measurement 124.
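For single-time frameworks, the Incompatibility principle reduces to a commutation test: two PDIs can be combined into one framework only if every projector of one commutes with every projector of the other. The sketch below implements only that simplified test, and the function names are hypothetical. Multi-time families require the more involved condition that a common consistent refinement exists, which is not checked here.

```python
def matmul(a, b):
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def commutes(a, b, tol=1e-12):
    """True if AB = BA entrywise within tol."""
    ab, ba = matmul(a, b), matmul(b, a)
    n = len(a)
    return all(abs(ab[i][j] - ba[i][j]) < tol for i in range(n) for j in range(n))


def compatible(pdi_a, pdi_b, tol=1e-12):
    """Single-time test: two PDIs can share one framework only if every
    projector in one commutes with every projector in the other."""
    return all(commutes(p, q, tol) for p in pdi_a for q in pdi_b)


Z = [[[1, 0], [0, 0]], [[0, 0], [0, 1]]]                    # spin-z PDI
X = [[[0.5, 0.5], [0.5, 0.5]], [[0.5, -0.5], [-0.5, 0.5]]]  # spin-x PDI
TRIVIAL = [[[1, 0], [0, 1]]]  # the PDI {I}: "the spin has some value"

print(compatible(Z, Z))        # True
print(compatible(Z, TRIVIAL))  # True: I commutes with everything
print(compatible(Z, X))        # False: spin-z and spin-x are incompatible
```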

Resolution of Quantum Paradoxes

The Single-Framework Rule serves as the primary weapon of the CH interpretation against the myriad paradoxes of quantum foundations, dissolving them by identifying implicit violations of quantum logic 1.

Schrödinger's Cat and Macroscopic Superpositions: In orthodox interpretations and Many-Worlds theory, the universe is viewed as permitting vast superpositions of macroscopic states - such as a cat that is simultaneously alive and dead 115. The Consistent Histories framework argues that linear superpositions of macroscopic objects cannot be interpreted as actual states of affairs containing both properties simultaneously. A framework that includes the macroscopic projection operators for "Alive" and "Dead" is fundamentally incompatible with a framework that includes the projection operator for the coherent superposition state $(|\text{Alive}\rangle + |\text{Dead}\rangle)/\sqrt{2}$ 14. Because these projectors do not commute, one cannot logically assert that the cat is in a superposition and simultaneously ask whether it is alive or dead. If one chooses the quasi-classical framework, the cat is either definitively alive or definitively dead (with specific probabilities), and the superposition state simply does not exist as a defined property within that logical space 1.
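The non-commutativity behind this argument can be written out explicitly. The derivation makes a simplifying assumption: the cat is treated as an idealized two-level system, with $|A\rangle$ for "Alive", $|D\rangle$ for "Dead", $|S\rangle = (|A\rangle + |D\rangle)/\sqrt{2}$, $P_A = |A\rangle\langle A|$, and $P_S = |S\rangle\langle S|$. Using $\langle A|S\rangle = \langle S|A\rangle = 1/\sqrt{2}$:

$$[P_A, P_S] = P_A P_S - P_S P_A = \frac{1}{\sqrt{2}}\left(|A\rangle\langle S| - |S\rangle\langle A|\right) = \frac{1}{2}\left(|A\rangle\langle D| - |D\rangle\langle A|\right) \neq 0$$

Because the commutator is nonzero, no single PDI contains both $P_A$ and $P_S$. "Alive" and "in the superposition $|S\rangle$" therefore cannot appear together in one framework.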

Einstein-Podolsky-Rosen (EPR) and Bell's Theorem: CH resolves non-locality paradoxes by enforcing strict adherence to framework rules regarding separated particles 13. Bell's inequality violations are frequently cited as proof of "spooky action at a distance." However, CH demonstrates that the paradox arises only when one illegitimately combines counterfactual outcomes from incompatible measurement frameworks 13. If one measures the spin of Particle A along the x-axis and Particle B along the z-axis, those results form a consistent framework. One cannot then introduce assumptions about what would have happened if Particle B had been measured along the x-axis, because that counterfactual belongs to an incompatible framework. By prohibiting this cross-framework conjunction, CH maintains strict Einstein locality; measurements on Particle A have no physical effect on Particle B 1.
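The numbers behind this argument can be reproduced in a few lines. The sketch below is an illustration that uses standard CHSH angles and assumes spin measurements in the x-z plane on the singlet state. Each correlation $E(a, b)$ is a quantity defined within one framework, namely the family built on the settings $a$ and $b$. The CHSH combination reaches $2\sqrt{2}$. The local bound of 2, by contrast, is derived by assuming a joint assignment of outcomes for all four settings at once; on the CH reading, that is exactly the cross-framework combination the Single-Framework Rule forbids.

```python
import math


def kron(a, b):
    """Kronecker product of two square matrices."""
    n, m = len(a), len(b)
    return [[a[i // m][j // m] * b[i % m][j % m] for j in range(n * m)]
            for i in range(n * m)]


def spin(theta):
    """Spin observable cos(theta) sigma_z + sin(theta) sigma_x (x-z plane)."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, s], [s, -c]]


R = 1 / math.sqrt(2)
SINGLET = [0.0, R, -R, 0.0]  # (|01> - |10>)/sqrt(2), real amplitudes


def correlation(theta_a, theta_b):
    """E(a, b) = <singlet| sigma(a) (x) sigma(b) |singlet>, one framework."""
    op = kron(spin(theta_a), spin(theta_b))
    phi = [sum(op[i][j] * SINGLET[j] for j in range(4)) for i in range(4)]
    return sum(SINGLET[i] * phi[i] for i in range(4))


a, a2, b, b2 = 0.0, math.pi / 2, math.pi / 4, 3 * math.pi / 4
chsh = (correlation(a, b) - correlation(a, b2)
        + correlation(a2, b) + correlation(a2, b2))
print(abs(chsh), 2 * math.sqrt(2))  # both about 2.828, above the bound 2
```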

Decoherence and Quasi-Classical Domains

The terminology surrounding "decoherence" in quantum foundations can occasionally be ambiguous, as the field relies on two distinct but highly overlapping concepts: environmental (or dynamical) decoherence and the abstract decoherent histories formalism 101617. Understanding the interplay between these two is critical for explaining why the macroscopic universe appears classical.

Environmental Decoherence Versus Abstract Consistency

Environmental Decoherence, pioneered by Wojciech Zurek and others, focuses on the physical, dynamical interaction between an open quantum system and its macroscopic environment (e.g., a bath of photons, thermal radiation, or air molecules) 141618. Through continuous interaction, the off-diagonal interference terms of the system's reduced density matrix decay exponentially. The environment effectively "monitors" the system, forcing it into a stable set of "pointer states" and creating the illusion of wavefunction collapse 141519. Environmental decoherence primarily relies on master equations and single-time distributions 1617.
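The exponential character of environmental decoherence can be seen in a deliberately simple model, assumed here for illustration. Each environment qubit is left untouched if the system is in $|0\rangle$ and rotated by a fixed angle $\theta$ if it is in $|1\rangle$. The system's off-diagonal element is then multiplied by the overlap of the two environment states. For $N$ independent qubits that overlap is $\cos^N\theta$, so coherence falls off exponentially in the number of environment particles. This serves as a stand-in for the decay in time described above. The function name is hypothetical.

```python
import math


def reduced_coherence(a, b, theta, n_env):
    """|rho_01| of a system qubit a|0> + b|1> after interacting with n_env
    environment qubits.

    Toy coupling (an assumption): every environment qubit starts in |0>; it
    is left alone if the system is |0> and rotated to cos(theta)|0> +
    sin(theta)|1> if the system is |1>. Then rho_01 = a * conj(b) * <E1|E0>,
    and for product states <E1|E0> is the product of single-qubit overlaps.
    """
    e0 = (1.0, 0.0)
    e1 = (math.cos(theta), math.sin(theta))
    single_overlap = e1[0] * e0[0] + e1[1] * e0[1]  # real amplitudes
    env_overlap = single_overlap ** n_env
    return abs(a * complex(b).conjugate() * env_overlap)


A = B = 1 / math.sqrt(2)
for n in (0, 10, 50, 100):
    # coherence shrinks by a factor cos(0.3) per environment qubit
    print(n, reduced_coherence(A, B, theta=0.3, n_env=n))
```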

Consistent (or Decoherent) Histories, by contrast, is a broader, abstract logical formalism. The decoherence functional evaluates the mathematical consistency of multi-time trajectories (histories) without strictly requiring a physical division between a "system" and an "environment" 1016. In CH, consistency is a static, mathematical property of a specific set of projectors, an initial state, and a Hamiltonian 10.

While the two concepts are distinct, they are deeply complementary. In practice, the abstract consistency conditions required by CH are almost entirely satisfied in nature by the physical mechanisms of environmental decoherence 201720. When a system interacts with an environment, the resulting entanglement dynamically suppresses the off-diagonal terms of the decoherence functional, ensuring that histories describing macroscopic variables are highly consistent 1120. Environmental decoherence provides the physical justification for why certain coarse-grained frameworks - specifically those corresponding to our classical reality - achieve consistency so robustly 417.

Emergence of Classicality and Records

One of the primary achievements of the Gell-Mann and Hartle expansion of CH is the mathematical derivation of "quasi-classical domains" 82021. A quasi-classical domain is a consistent family of histories characterized by a high degree of coarse-graining, where the macroscopic variables (such as energy density, momentum, and hydrodynamic properties) follow trajectories that closely approximate classical equations of motion, complete with minor quantum fluctuations 4.
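Coarse-graining enters the formalism through a simple rule: the decoherence functional of a coarse-grained history is the sum of $D(\alpha, \beta)$ over the fine-grained histories it contains. The hypothetical sketch below uses a qubit toy model with trivial dynamics and initial state $|0\rangle\langle 0|$. Its fine-grained family (spin-x at $t_1$, spin-z at $t_2$) is inconsistent, but discarding the $t_1$ information leaves a family whose interference terms vanish. This only illustrates the mechanism; it is far from the hydrodynamic coarse-grainings of a realistic quasi-classical domain.

```python
from itertools import product


def matmul(a, b):
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def dagger(a):
    n = len(a)
    return [[complex(a[j][i]).conjugate() for j in range(n)] for i in range(n)]


def trace(a):
    return sum(a[i][i] for i in range(len(a)))


Z = [[[1, 0], [0, 0]], [[0, 0], [0, 1]]]
X = [[[0.5, 0.5], [0.5, 0.5]], [[0.5, -0.5], [-0.5, 0.5]]]
RHO = [[1, 0], [0, 0]]


def fine_grained(pdi1, pdi2, rho):
    """D(alpha, beta) for two-time histories, assuming trivial dynamics (U = I)."""
    histories = list(product(range(len(pdi1)), range(len(pdi2))))
    chains = {h: matmul(pdi2[h[1]], pdi1[h[0]]) for h in histories}
    d = {(ha, hb): trace(matmul(matmul(chains[ha], rho), dagger(chains[hb])))
         for ha in histories for hb in histories}
    return histories, d


def coarse_grain(histories, d, label):
    """D(A, B) = sum of D(alpha, beta) over alpha in class A, beta in class B."""
    classes = sorted({label(h) for h in histories})
    return {(A, B): sum(d[(ha, hb)] for ha in histories if label(ha) == A
                        for hb in histories if label(hb) == B)
            for A in classes for B in classes}


histories, d = fine_grained(X, Z, RHO)  # x at t1, z at t2
fine_off = max(abs(v) for (ha, hb), v in d.items() if ha != hb)
coarse = coarse_grain(histories, d, label=lambda h: h[1])  # forget the t1 value
coarse_off = max(abs(v) for (A, B), v in coarse.items() if A != B)
print(fine_off, coarse_off)                                # 0.25 0.0
print({A: round(coarse[(A, A)].real, 6) for A in (0, 1)})  # {0: 1.0, 1: 0.0}
```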

For a quasi-classical realm to emerge and persist, the framework relies on the formation of generalized records. A record is a present state of the environment that perfectly correlates with a past property of the system 2017. The strong decoherence condition guarantees that if interference vanishes, permanent records of the history are imprinted into the surrounding Hilbert space 1317. This dynamic provides the physical inertia necessary for macroscopic objects - like planets, biological cells, and laboratory pointers - to exhibit stable, predictable, classical behavior over vast stretches of time and space, shielding them from the chaotic indeterminacy of the microscopic quantum realm 4.

Ontological Implications

The Consistent Histories framework demands a radical revision of classical ontology and epistemology, redefining what it means for a physical property to "exist" and what role, if any, the human observer plays in the universe 1.

Pluricity Versus Unicity

Classical physics is founded on the principle of "unicity": the assumption that at any given instant, there is a single, universally true, exhaustive description of the universe 1. The Consistent Histories framework replaces unicity with "pluricity" 1.

Because of the Single-Framework Rule, there are many incompatible but equally valid frameworks that can describe a quantum system. One framework might perfectly describe the momentum of a particle over time, while a completely incompatible framework perfectly describes its position 1. According to the principle of Equality, neither framework represents the single "true" underlying reality. Consequently, CH implies that there is no single, God's-eye view of the universe at any instant. Truth and objective reality are entirely relative to the chosen framework 4. While properties possess objective reality within a given framework (independent of any conscious observer), one cannot stitch these separate framework realities together into a singular, classical-style omniscient description 14.

Rejection of Wavefunction Realism

Interpretations like Many-Worlds and De Broglie-Bohm view the universal wavefunction as a literal, physical substance - the fundamental ontological entity of the universe 31032. Consistent Histories fundamentally rejects wavefunction realism.

In the CH framework, the wavefunction is treated merely as a mathematical instrument, a "pre-probability" used strictly to compute the likelihood of objective physical events (histories) 310. Griffiths explicitly rejects the view that unitary wavefunctions are ontologically real 10. The true ontology of the CH universe lies in the histories of the physical properties themselves (the projection operators), not in the waves of probability that govern their distribution 316. Deterministic, unitary evolution is not seen as the ultimate truth of the cosmos. It is merely one possible family of histories (the "unitary family") among many, and one that is practically irrelevant when analyzing macroscopic stochastic processes 1015.

The Demotion of Measurement

In traditional interpretations, "measurement" is an undefined, primitive axiom that triggers an irreversible physical collapse 514. The CH approach entirely discards measurement as a fundamental concept 1422.

A laboratory measurement is simply a sequence of physical interactions governed by the Schrödinger equation, described within a framework that includes projectors for both the microscopic particle and the macroscopic states of the measurement apparatus 14. Because there is no physical collapse of the wavefunction, the CH framework allows physicists to calculate properties of a system before, during, and after a measurement 122. This allows physicists to analyze the measurement process itself, verifying mathematically that a macroscopic pointer accurately reflects a preexisting microscopic reality 1. This formulation directly answers historic criticisms from physicists like John Bell, who railed against the ambiguous, anthropocentric use of "measurement" in orthodox texts 1.
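The measurement analysis can be illustrated with a two-qubit model, chosen here as a hypothetical example:

- a "particle" qubit in $\sqrt{0.3}\,|0\rangle + \sqrt{0.7}\,|1\rangle$;
- a "pointer" qubit that starts at $|0\rangle$;
- a CNOT gate standing in for the measurement interaction.

The family uses the particle's z-value at $t_1$ (before the interaction) and the pointer reading at $t_2$ (after it).

```python
import math
from itertools import product


def matmul(a, b):
    """Product of two square matrices (nested lists)."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def dagger(a):
    """Conjugate transpose."""
    n = len(a)
    return [[complex(a[j][i]).conjugate() for j in range(n)] for i in range(n)]


def trace(a):
    return sum(a[i][i] for i in range(len(a)))


def kron(a, b):
    """Kronecker product of two square matrices."""
    n, m = len(a), len(b)
    return [[a[i // m][j // m] * b[i % m][j % m] for j in range(n * m)]
            for i in range(n * m)]


I2 = [[1, 0], [0, 1]]
Z = [[[1, 0], [0, 0]], [[0, 0], [0, 1]]]  # |0><0| and |1><1|
CNOT = [[1, 0, 0, 0], [0, 1, 0, 0], [0, 0, 0, 1], [0, 0, 1, 0]]

# Basis order |particle, pointer>; particle in sqrt(0.3)|0> + sqrt(0.7)|1>,
# pointer in its "ready" state |0>.
amp = [math.sqrt(0.3), 0.0, math.sqrt(0.7), 0.0]
RHO = [[x * y for y in amp] for x in amp]

PARTICLE = [kron(p, I2) for p in Z]  # particle property at t1
POINTER = [kron(I2, p) for p in Z]   # pointer reading at t2

# History (j, k): particle z-value j at t1, pointer reads k at t2; the CNOT
# measurement interaction acts between t1 and t2 (U(t1, t0) = I assumed).
histories = list(product(range(2), range(2)))
chains = [matmul(matmul(POINTER[k], CNOT), PARTICLE[j]) for j, k in histories]
D = [[trace(matmul(matmul(ca, RHO), dagger(cb))) for cb in chains]
     for ca in chains]

off = max(abs(D[x][y]) for x in range(4) for y in range(4) if x != y)
joint = {h: D[i][i].real for i, h in enumerate(histories)}
print("max interference:", off)
for k in range(2):
    p_k = joint[(0, k)] + joint[(1, k)]  # probability that the pointer reads k
    print(k, round(p_k, 6), [round(joint[(j, k)] / p_k, 6) for j in range(2)])
```

In this model the family is consistent, and the pointer readings occur with the Born-rule weights 0.3 and 0.7. Conditioning on the pointer reading returns the particle's earlier z-value with certainty. This conditional-probability step is what CH uses in place of collapse.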

Comparative Analysis of Interpretations

To fully contextualize the Consistent Histories framework, it is necessary to contrast its logical structure, ontological claims, and treatment of determinism against the other dominant interpretations of quantum mechanics.

Copenhagen Interpretation

CH is frequently described by its proponents as "Copenhagen done right" 1. Both interpretations agree that quantum mechanics is fundamentally probabilistic, and both utilize a form of complementarity (where certain properties cannot be simultaneously defined) 1214.

However, Copenhagen relies on a subjective observer, an ill-defined macroscopic/microscopic boundary, and the physical collapse of the wavefunction 156. CH eliminates all of these artifacts. In CH, complementarity is rigorously formalized into the mathematical Single-Framework Rule, the macroscopic boundary is quantitatively derived via decoherence, and wavefunction collapse is explicitly rejected as a physical process - treated instead merely as a convenient mathematical shortcut for updating conditional probabilities given new information 16.

Many-Worlds Interpretation

While both CH and the Many-Worlds Interpretation (MWI) utilize decoherence to explain the emergence of classicality and both reject wavefunction collapse, their underlying ontologies are diametrically opposed 1514.

In MWI, the universal wavefunction is the sole reality, and its deterministic, unitary evolution generates a perpetually branching tree of parallel universes. Every possible history that has a non-zero probability physically occurs in an independent, equally real branch of the multiverse 61523.

Consistent Histories views the "many worlds" concept as a "quantum myth" 1. In CH, once a specific framework is selected, the dynamics are entirely stochastic: exactly one history from the sample space actually occurs in reality, while the others remain mere unrealized mathematical probabilities 14. A hybrid philosophical variant, known as "Many-Worlds Consistent Histories" (MWCH), suggests treating every consistent history within a family as a separate, physically real world 10. However, mainstream CH proponents strictly reject this, maintaining that the assignment of classical probabilities requires a single actualized outcome 14.

De Broglie-Bohm Pilot-Wave Theory

Bohmian mechanics is a deterministic, hidden-variable theory that posits the actual, continuous existence of point particles guided by a real, physical wavefunction 62224. It resolves the measurement problem by claiming that the universe is entirely deterministic, with probability arising only from human ignorance of the exact initial positions of the particles 2526.

CH diverges from Bohmian mechanics on several critical fronts. First, CH rigorously denies determinism 122. Second, Bohmian mechanics requires explicitly non-local mechanisms (action at a distance) to maintain its deterministic trajectories in entangled systems, a feature that troubled Albert Einstein 212226. CH, by confining logical deductions to single frameworks, strictly preserves Einstein locality 1. Furthermore, specific thought experiments analyzing particle trajectories (such as bubble chamber tracks and "surrealistic" trajectories) yield contradictory predictions regarding intermediate particle states between Bohmian theory and CH; CH aligns closely with standard textbook quantum mechanics, avoiding the non-local trajectory complexities introduced by the Bohmian guidance equation 2225.

Relational Quantum Mechanics

Carlo Rovelli's Relational Quantum Mechanics (RQM) posits that quantum states are not absolute but are strictly relative to the observer or interacting physical system 627. Historically, CH and RQM were viewed as parallel but distinct. RQM emphasizes that changing one's perspective can alter which histories belong to a consistent set, a move toward strong perspectivalism that borders on subjectivity 2439. However, recent efforts have sought to formally integrate the two into a single robust theory 913.

| Feature | Consistent Histories | Many-Worlds Interpretation | Bohmian Mechanics | Copenhagen Interpretation | Objective Collapse (GRW) |
|---|---|---|---|---|---|
| Fundamental Ontology | Properties/Histories within frameworks | Universal Wavefunction | Particles + Guiding Wavefunction | Classical Apparatus + Quantum States | Wavefunction |
| Determinism | Stochastic (Fundamental randomness) | Deterministic (Universal level) | Deterministic (Hidden variables) | Stochastic (Upon measurement) | Stochastic (Physical collapse mechanism) |
| Role of the Observer | Irrelevant | Irrelevant (Observer is a quantum system) | Irrelevant | Central (Triggers collapse) | Irrelevant |
| Wavefunction Collapse | Mathematical tool (Conditional probability) | Illusion caused by decoherence | Does not occur | Physical event (Postulated) | Physical event (Dynamical modification) |
| Locality | Local (Within a single framework) | Local | Non-Local (Explicit action at a distance) | Non-Local (Spooky action) | Non-Local |
| Measurement Solution | Single-Framework Rule & Decoherence | Decoherence into non-interacting branches | Continuous deterministic trajectories | Postulated boundary (Heisenberg cut) | Non-linear modification to Schrödinger eq. |

Table 1: Comparative Matrix of Major Quantum Interpretations, highlighting the distinct ontological and mechanical parameters of the Consistent Histories approach 162440.

Modern Extensions and Applications

While the foundational texts of the Consistent Histories framework were established in the late 1980s and 1990s, the conceptual architecture continues to evolve, integrating with cutting-edge theoretical physics and quantum information theory in the 2020s.

Quantum Cosmology

Standard interpretations of quantum mechanics falter when applied to the universe as a whole. Gell-Mann and Hartle explicitly refined the CH framework to serve as the interpretive foundation for quantum cosmology 18. By defining the universe as a closed system governed by a universal density matrix and a Hamiltonian, cosmologists can use the decoherence functional to assign probabilities to vast cosmological histories - such as the formation of galaxies, the expansion rate of spacetime, and the emergence of macroscopic thermodynamic laws 841. The framework successfully integrates proposals like the Hartle-Hawking no-boundary condition, allowing researchers to calculate the likelihood of specific large-scale cosmological structures emerging from quantum fluctuations without referencing an external measurement apparatus 28.

Consistent Relational Histories

Recent literature has explored deep structural synergies between Consistent Histories and Relational Quantum Mechanics 2739. A unified framework called "Consistent Relational Histories" (CRH) was formally proposed in late 2025 913. CRH integrates the rigorous probability calculus and decoherence functionals of CH with the perspectival ontology of RQM. In CRH, absolute-time projectors are replaced with relational projectors, conditioning subsystem properties on internal quantum reference frames (QRFs) rather than an external background clock 13. This provides the operational semantics necessary for relational observers to formulate histories and compare descriptions across different reference systems without modifying the underlying dynamics of canonical quantum gravity 913.

Variational Consistent Histories Algorithms

In practical applications, calculating the decoherence functional for complex systems is computationally intractable for classical computers, as the number of histories scales exponentially with the number of particles and time steps 11. Recently, researchers have developed "Variational Consistent Histories" (VCH), a hybrid quantum-classical algorithm 1128. VCH uses quantum processors to evaluate the decoherence functional directly, potentially offering exponential speedups in computing the required traces. A classical optimizer then adjusts the history parameters to find consistent sets 11. This shift of CH from a purely interpretive framework toward an algorithmic tool is a notable practical development in quantum foundations. It opens a route to the quantitative study of the quantum-to-classical transition in molecular systems 11.
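The optimization loop can be caricatured classically for a single qubit. The sketch below is not the VCH algorithm: it evaluates $D$ exactly on a classical computer and replaces the optimizer with a brute-force grid scan. Several choices are assumptions made for illustration:

- the cost function, the sum of $|D(\alpha, \beta)|^2$ over $\alpha \neq \beta$;
- a one-parameter family, with spin along angle $\theta$ at $t_1$ and spin-z at $t_2$;
- the initial state $|0\rangle\langle 0|$ and trivial dynamics.

```python
import math
from itertools import product


def matmul(a, b):
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def dagger(a):
    n = len(a)
    return [[complex(a[j][i]).conjugate() for j in range(n)] for i in range(n)]


def trace(a):
    return sum(a[i][i] for i in range(len(a)))


def basis_pdi(theta):
    """Projectors (I +/- sigma(theta))/2 for spin along angle theta (x-z plane)."""
    c, s = math.cos(theta), math.sin(theta)
    return [[[(1 + c) / 2, s / 2], [s / 2, (1 - c) / 2]],
            [[(1 - c) / 2, -s / 2], [-s / 2, (1 + c) / 2]]]


Z = basis_pdi(0.0)      # spin-z PDI
RHO = [[1, 0], [0, 0]]  # initial state |0><0|


def cost(theta):
    """Sum of |D(alpha, beta)|^2 over alpha != beta for the two-time family
    (spin along theta at t1, spin-z at t2), trivial dynamics U = I."""
    first = basis_pdi(theta)
    histories = list(product(range(2), range(2)))
    chains = [matmul(Z[k2], first[k1]) for k1, k2 in histories]
    total = 0.0
    for x, ca in enumerate(chains):
        for y, cb in enumerate(chains):
            if x != y:
                total += abs(trace(matmul(matmul(ca, RHO), dagger(cb)))) ** 2
    return total


thetas = [k * math.pi / 50 for k in range(50)]  # grid over [0, pi)
best = min(thetas, key=cost)
print(best, cost(best), cost(math.pi / 2))  # best is 0.0; cost(pi/2) is about 0.25
```

For this family the cost works out to $\sin^4\theta / 4$. The scan therefore lands on $\theta = 0$, the only grid point where the family is consistent.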

Criticisms and Theoretical Challenges

Despite its logical rigor, the Consistent Histories framework is not without its detractors. Criticisms generally focus on the framework's philosophical permissiveness, the exactness of its mathematics, and the perceived arbitrariness of framework selection.

Proliferation of Histories

The most formidable technical critique of the Consistent Histories approach was formulated by Fay Dowker and Adrian Kent in the mid-1990s 171843. Through detailed mathematical analysis, Dowker and Kent demonstrated that any given quantum system admits a vast, practically unlimited number of mathematically consistent sets of histories 18.

While a few of these consistent families correspond to our familiar, quasi-classical reality (where tables and chairs remain solid and planets follow predictable orbits), the overwhelming majority of consistent sets exhibit wildly non-classical, chaotic, or physically bizarre properties that bear no resemblance to observed reality 1843. The CH formalism, operating purely on abstract mathematical consistency conditions, lacks a fundamental, built-in "selection principle" to single out the quasi-classical domain as the "correct" or "actual" reality 1618. Critics argue that without an additional physical postulate to exclusively select the quasi-classical framework, the theory is essentially incomplete, operating as a weak theoretical filter 516.
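A toy calculation shows how the consistency conditions alone fail to single out one family. The model is a hypothetical illustration, not Dowker and Kent's own construction: a single qubit prepared in |0⟩, a basis rotated by an angle θ at the first time, a basis rotated by an angle χ at the second time, and a rotation R_y(φ) between them.

```latex
\begin{aligned}
R_y(a) &= \begin{pmatrix} \cos\tfrac{a}{2} & -\sin\tfrac{a}{2} \\ \sin\tfrac{a}{2} & \cos\tfrac{a}{2} \end{pmatrix},
\quad P_k(\theta) = R_y(\theta)\,|k\rangle\langle k|\,R_y(\theta)^\dagger,
\quad Q_j(\chi) = R_y(\chi)\,|j\rangle\langle j|\,R_y(\chi)^\dagger, \\
C_{k,j} &= Q_j(\chi)\,R_y(\varphi)\,P_k(\theta),
\qquad D\big((k,j),(k',j')\big) = \operatorname{Tr}\big[C_{k,j}\,|0\rangle\langle 0|\,C_{k',j'}^\dagger\big], \\
D\big((k,j),(k',j')\big) &= 0 \quad \text{for } j \neq j', \text{ since } Q_{j'}(\chi)\,Q_j(\chi) = 0, \\
D\big((0,j),(1,j)\big) &= (-1)^j\,\tfrac{1}{4}\,\sin\theta\,\sin(\theta + \varphi - \chi), \\
\text{consistent} &\iff \theta \equiv 0 \pmod{\pi} \ \text{ or } \ \theta \equiv \chi - \varphi \pmod{\pi}.
\end{aligned}
```

Because χ can be chosen freely, every final basis has its own consistent partner θ = χ − φ, so even this two-dimensional system admits a continuous one-parameter infinity of consistent families, and nothing in the consistency condition marks any one of them as quasi-classical.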

Proponents of CH counter this by invoking the principle of Utility. The framework does not need to single out one true reality; the quasi-classical framework is simply the one most useful to human beings who compare and correlate thermodynamically stable records. Because all consistent frameworks are equally valid (Equality), the existence of bizarre, non-classical frameworks is a mathematical curiosity rather than a fatal flaw 124.

The Measurement Problem Debate

While CH claims to resolve the measurement problem by rendering measurement a standard physical process, some philosophers of physics argue that it merely sweeps the problem under the rug by shifting the ambiguity from "what constitutes a measurement?" to "which framework should we choose?" 439.

Because physicists must retroactively choose a framework that includes projectors for the desired experimental outcome, critics assert that CH involves an element of epistemic subjectivity masquerading as objective logic 439. Adopting a different framework, or altering the experimental setup at a later time, changes which histories can be consistently discussed, creating conceptual friction for those who desire a single, immutable, objective history of the universe. To critics, the framework acts more as a post-hoc rationalization of experimental data than a predictive fundamental theory 41639.

Conclusion

The Consistent Histories framework stands as one of the most mathematically precise and logically disciplined interpretations of quantum mechanics developed in the late twentieth century. By introducing the projective decomposition of the identity, the decoherence functional, and the Single-Framework Rule, which confines any single line of reasoning to one Boolean algebra of quantum events, Robert Griffiths, Roland Omnès, Murray Gell-Mann, and James Hartle constructed a quantum paradigm that functions entirely without external observers, ad hoc wavefunction collapse, or arbitrary classical boundaries 13.

The framework's insistence on fundamental stochasticity and pluricity forces a profound departure from classical intuition. It requires physicists to accept that the universe cannot be exhaustively described by a single, monolithic narrative, and that any true description of reality is relative to the chosen logical framework 14. While it continues to face philosophical resistance over the unchecked proliferation of mathematically valid frameworks and the lack of a fundamental selection principle 1618, it has proven foundationally useful. By providing the probabilistic machinery for quantum cosmology and enabling developments such as Consistent Relational Histories and variational quantum algorithms 91311, the Consistent Histories approach remains an actively researched pillar in the ongoing effort to understand the nature of quantum reality.