Compute Governance and AI Hardware Controls

Foundations of Compute Governance

The rapid acceleration of artificial intelligence capabilities has prompted international regulators, national security apparatuses, and policy researchers to seek effective mechanisms for overseeing the technology. Among the primary inputs required for advanced artificial intelligence - data, algorithmic architectures, and computational hardware - governance efforts have increasingly converged on the regulation of hardware, a paradigm formalized as compute governance 12. The rationale for targeting hardware rests on its unique physical and economic properties. Training data is non-rivalrous and can be copied at near-zero cost, and algorithmic knowledge proliferates rapidly through open-source channels and academic publications. Advanced semiconductor hardware, by contrast, is a highly concentrated, capital-intensive physical asset 12.

This tangibility renders computational hardware highly susceptible to traditional state regulatory instruments. Advanced artificial intelligence accelerators are highly detectable; the construction and operation of the large-scale data centers required to house them demand immense capital investment, specialized physical infrastructure, and massive energy consumption, making covert development of frontier models extraordinarily difficult 12. Furthermore, hardware is excludable, meaning that physical chips can be embargoed, seized, or subjected to strict export controls, effectively preventing unauthorized actors from acquiring them 12. Finally, hardware is quantifiable, allowing regulators to establish objective performance thresholds - such as calculations of floating-point operations (FLOP) or interconnect bandwidth - to trigger specific compliance obligations 13.
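To illustrate how a FLOP-based trigger might be evaluated in practice, the sketch below estimates training compute and compares it with a reporting threshold. It assumes the widely used rule of thumb that training a dense transformer costs roughly 6 floating-point operations per parameter per training token; that heuristic, the function names, and the threshold value are illustrative assumptions and are not taken from any specific regulation discussed here.

```python
# Assumption: training compute ~= 6 * parameters * tokens (a common heuristic
# for dense transformers, not a legal definition).
HYPOTHETICAL_THRESHOLD_FLOP = 1e25  # illustrative value only


def estimate_training_flop(n_params: float, n_tokens: float) -> float:
    """Approximate total training compute in FLOP."""
    return 6.0 * n_params * n_tokens


def triggers_reporting(n_params: float, n_tokens: float,
                       threshold_flop: float = HYPOTHETICAL_THRESHOLD_FLOP) -> bool:
    """True if the estimated training run meets or exceeds the threshold."""
    return estimate_training_flop(n_params, n_tokens) >= threshold_flop


# A hypothetical 70-billion-parameter model trained on 2 trillion tokens.
flop = estimate_training_flop(70e9, 2e12)
print(f"{flop:.2e}")                    # 8.40e+23
print(triggers_reporting(70e9, 2e12))   # False
```

Because the estimate depends only on parameter and token counts, a regulator could in principle check a developer's declaration against hardware inventory and run duration, which is what makes the metric attractive as a trigger.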

Semiconductor Supply Chain Vulnerabilities

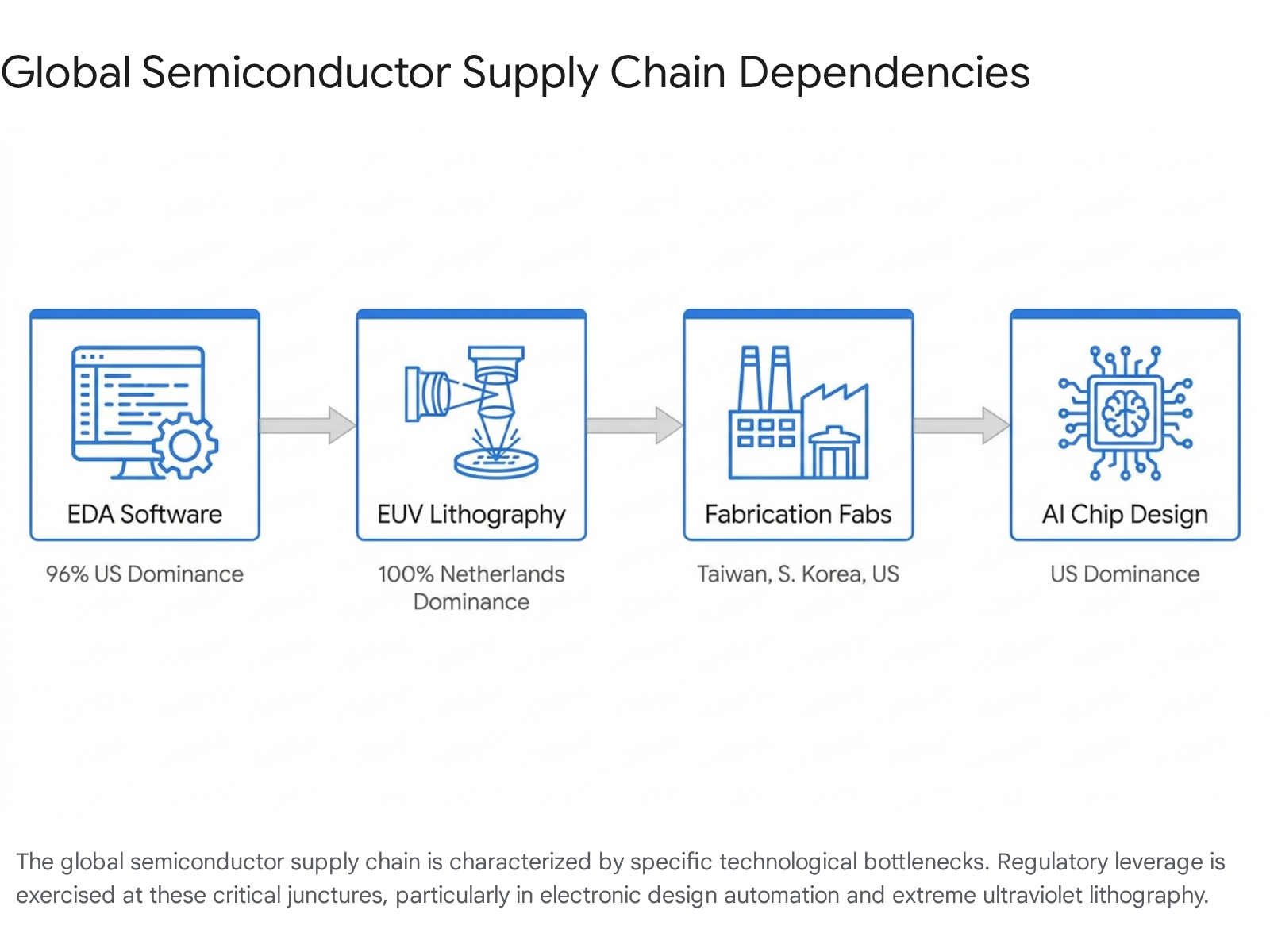

The efficacy of compute governance relies fundamentally on the structural concentration of the global semiconductor supply chain. The production of advanced artificial intelligence accelerators is not a distributed global enterprise; rather, it depends on a narrow stack of highly specialized technologies spread across specific geographic nodes 45. This supply chain is characterized by severe chokepoints, where a single nation or a tight coalition of allied nations maintains near-monopoly control over critical inputs, providing the leverage necessary to enforce global compute governance 56.

| Supply Chain Segment | Global Market Concentration | Regulatory Leverage and Chokepoint Dynamics |
|---|---|---|
| Electronic Design Automation (EDA) | The United States commands approximately 96% of the global market for advanced EDA software, dominated by Synopsys, Cadence, and Siemens EDA 67. | Advanced chips cannot be designed without EDA software. The U.S. leverages this dominance to restrict adversary chip design capabilities by blocking access to process design kits (PDKs) necessary for state-of-the-art nodes 57. |
| Photolithography Equipment | The Netherlands, specifically the firm ASML, holds a 100% monopoly on Extreme Ultraviolet (EUV) lithography scanners required for sub-5 nanometer manufacturing 78. | EUV scanners are indispensable for printing the microscopic features of frontier artificial intelligence chips. Export restrictions on these machines effectively halt the domestic mass production of advanced logic chips in restricted nations 78. |
| Front-End Fabrication | Advanced semiconductor fabrication is an oligopoly dominated by Taiwan Semiconductor Manufacturing Company (TSMC), Samsung (South Korea), and Intel (United States) 37. | Controlling the export of finished chips from these foundries, or enforcing the Foreign Direct Product Rule on fabs utilizing U.S. equipment, allows allied nations to restrict global access to the most powerful physical hardware 47. |
| Hardware Architecture Design | United States firms, most notably Nvidia and AMD, design the vast majority of server-grade Graphics Processing Units (GPUs) and specialized AI accelerators 47. | Because the fundamental architectures are controlled by U.S. entities, regulatory authorities can impose unilateral end-use and end-user restrictions on the final commercial products before they enter the global market 7. |

The extreme consolidation detailed above illustrates how relatively small investments in specific technologies control access to vastly larger downstream markets, enabling governments to dictate the pace and distribution of global artificial intelligence development 5.

Statutory and Regulatory Policy Levers

Governments increasingly rely on a matrix of statutory and regulatory tools to assert control over artificial intelligence development. These policy levers range from conventional trade restrictions to complex domestic surveillance mechanisms, each carrying distinct feasibility constraints and evasion risks 39.

Export Controls and Evasion Dynamics

The primary instrument of compute governance has been the application of export controls. Operating under authorities such as the Export Control Reform Act of 2018, the United States Department of Commerce's Bureau of Industry and Security (BIS) maintains stringent regulations targeting the transfer of advanced chips to geopolitical adversaries 91011. These controls establish specific technical performance thresholds, frequently defined by metrics such as Total Processing Performance (TPP) and interconnect bandwidth, to classify restricted items under specific Export Control Classification Numbers 312.
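As a rough illustration of how a performance threshold of this kind could be computed, the sketch below assumes that TPP is defined as 2 x MacTOPS x the bit length of the operation, taking the highest value across the precisions a chip supports. That formula reflects a common reading of the BIS technical notes and should be checked against the current regulation; the control limit and the chip figures are hypothetical.

```python
from typing import Dict


def total_processing_performance(mac_tops: float, bit_length: int) -> float:
    """Assumed form of the metric: TPP = 2 * MacTOPS * bit length."""
    return 2.0 * mac_tops * bit_length


def chip_tpp(modes: Dict[int, float]) -> float:
    """Highest TPP across supported precisions.

    `modes` maps bit length -> dense multiply-accumulate tera-ops per second.
    """
    return max(total_processing_performance(m, b) for b, m in modes.items())


def meets_control_limit(modes: Dict[int, float], hypothetical_limit: float) -> bool:
    """True if the chip's TPP reaches the (hypothetical) control limit."""
    return chip_tpp(modes) >= hypothetical_limit


# Hypothetical accelerator: 156 MacTOPS at 16-bit, 312 MacTOPS at 8-bit.
example_chip = {16: 156.0, 8: 312.0}
print(chip_tpp(example_chip))                                         # 4992.0
print(meets_control_limit(example_chip, hypothetical_limit=4800.0))   # True
```

Weighting throughput by bit length means that halving precision to double throughput leaves the score unchanged, which is why a metric of this shape is harder to game than a raw operations-per-second figure.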

However, traditional export controls suffer from significant evasion vulnerabilities. Intelligence estimates indicate that massive quantities of restricted hardware successfully bypass customs through illicit networks 1314. Adversaries utilize complex, multi-layered evasion tactics, including the establishment of shell corporations in intermediary jurisdictions, the exploitation of gray markets, and the physical transshipment of components through third-party countries 31015. Once the hardware crosses a border into an uncontrolled jurisdiction, regulators lose visibility over its end-use, allowing foreign military and state-backed commercial entities to assemble powerful computing clusters 1013.

In response to market pressures and evasion realities, export control postures continually evolve. Recent regulatory updates have transitioned from outright presumptions of denial to complex case-by-case reviews for certain hardware architectures, provided specific conditions are met. These conditions often mandate that hardware undergoes independent performance testing within the United States, that export volumes are strictly capped relative to domestic sales, and that the recipient entity implements sufficient internal security procedures 1116. Despite these adjustments, the underlying fragility of physical export controls remains a chronic challenge for regulatory authorities 13.

The Remote Access Loophole and Cloud Governance

A more profound challenge to hardware export controls is the virtualization of compute resources. If foreign entities cannot legally import physical chips, they can frequently bypass restrictions by renting processing power from global Infrastructure-as-a-Service (IaaS) providers 121718. This "cloud loophole" allows adversaries to train advanced foundation models using top-tier American hardware housed in third-country or even domestic data centers, effectively rendering physical border controls obsolete 1018.

To address this vector, regulators have advanced comprehensive "Know Your Customer" (KYC) mandates for cloud providers, conceptually adapted from anti-money laundering frameworks in the financial sector 192021. Proposed regulations require U.S. IaaS providers and their foreign resellers to implement sweeping customer identification programs to verify the identity of all foreign users 1922. Furthermore, these frameworks impose an affirmative obligation on providers to submit detailed reports to regulatory agencies whenever a foreign customer attempts to utilize cloud resources to train large artificial intelligence models that possess potential capabilities for malicious cyber-enabled activities 1923.

The implementation of Cloud KYC has generated substantial friction within the technology sector. Industry analyses emphasize the profound technical difficulty of reliably distinguishing between domestic and foreign users across highly obfuscated, globally distributed network architectures 24. Mandating the collection and storage of sensitive identification documents introduces severe data privacy risks and raises acute concerns regarding compliance with international frameworks, such as the EU-US Privacy Shield (invalidated in 2020 and since succeeded by the EU-U.S. Data Privacy Framework), and domestic laws like the Electronic Communications Privacy Act 24. Moreover, there is significant concern that aggressive domestic cloud regulations will place U.S. providers at a competitive disadvantage, incentivizing international customers to migrate their workloads to foreign, non-aligned sovereign cloud ecosystems 2425.

Comparative Analysis of Policy Levers

The broader landscape of compute governance encompasses a variety of mechanisms, each presenting unique trade-offs between regulatory efficacy and the preservation of domestic innovation ecosystems.

| Governance Mechanism | Technical Feasibility | Evasion Vulnerabilities | Impact on Innovation Ecosystems |
|---|---|---|---|
| Physical Export Controls | Currently deployable via customs enforcement and Entity Lists 3. | High. Circumvention occurs via shadow supply chains and third-country transshipment 315. | High risk. Accelerates indigenous innovation in rival nations; strains allied relations; reduces revenues for domestic firms 310. |
| Cloud Service KYC Requirements | Near-term implementation; regulatory frameworks are currently in draft phases 319. | High. Vulnerable to "compute structuring" where workloads are deliberately fragmented across multiple providers to evade thresholds 326. | Moderate. Imposes high compliance overhead; raises severe data privacy concerns; may drive global customers to alternative providers 2427. |
| Threshold-Based Model Licensing | Currently deployable for basic reporting; requires extensive R&D for hardware enforcement 3. | High. Vulnerable to post-training parameter expansion, algorithmic efficiency gains, and distributed training methods 328. | High risk. Static computational thresholds quickly become obsolete, inadvertently capturing benign academic and commercial research 329. |
| Foreign Investment Reviews (CFIUS) | Currently deployable using existing statutory authority over inbound capital flows 17. | Moderate. Capital can be routed through opaque offshore funds, though ultimate beneficial ownership is increasingly scrutinized 17. | Low to Moderate. Protects critical intellectual property but may constrain access to global capital for domestic hardware startups 17. |
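The "compute structuring" vulnerability noted in the table can be sketched as an aggregation problem: each provider sees only its own slice of a customer's usage, so a per-provider threshold check never fires. The example below is a minimal sketch that assumes usage records from several providers can be joined on a shared, verified customer identifier, which is precisely the hard part in practice. The record format, names, and threshold are hypothetical.

```python
from collections import defaultdict
from typing import Iterable, List, Tuple

# (customer_id, provider, compute_used_in_flop) -- hypothetical record format
UsageRecord = Tuple[str, str, float]


def flag_structuring(records: Iterable[UsageRecord],
                     threshold_flop: float) -> List[str]:
    """Return customers whose combined usage meets the threshold even though
    their usage at every individual provider stays below it."""
    per_provider = defaultdict(float)
    totals = defaultdict(float)
    for customer_id, provider, flop in records:
        per_provider[(customer_id, provider)] += flop
        totals[customer_id] += flop

    flagged = []
    for customer_id, total in totals.items():
        largest_single = max(
            used for (cid, _), used in per_provider.items() if cid == customer_id
        )
        if total >= threshold_flop and largest_single < threshold_flop:
            flagged.append(customer_id)
    return sorted(flagged)


records = [
    ("cust-a", "provider-1", 4e24),
    ("cust-a", "provider-2", 4e24),
    ("cust-a", "provider-3", 4e24),
    ("cust-b", "provider-1", 2e24),
]
print(flag_structuring(records, threshold_flop=1e25))  # ['cust-a']
```

The sketch also shows why structuring is hard to stop: without a cross-provider view, none of the three providers serving `cust-a` has any reason to file a report.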

Evolving Computational Paradigms

The foundational premise of threshold-based compute governance is that massive, centralized aggregations of hardware are the sole pathway to generating dangerous artificial intelligence capabilities. This assumption is currently being challenged by rapid, fundamental shifts in how artificial intelligence systems are developed, optimized, and deployed 2830.

The Decoupling of Pre-Training Compute and Final Capabilities

Historically, the capabilities of large language models have scaled predictably in tandem with the volume of compute expended during the pre-training phase 3031. Under this paradigm, pre-training represented an enormous, infrequent capital expenditure, consuming millions of discrete GPU-hours over contiguous months, and serving as an easily identifiable target for regulatory thresholds 3233.

However, the industry is experiencing a profound transition from scaling pre-training compute to scaling post-training optimization and inference compute 3032. Recent technical developments indicate that smaller base models, when subjected to extensive post-training refinement using highly curated synthetic data, can match or exceed the performance benchmarks of vastly larger models 2832. The release of advanced reasoning models has demonstrated that increasing the computational budget at test-time - allowing the model to "think" longer before generating a response - yields substantial capability gains that were historically only achievable through massive pre-training 3334.

This decoupling presents acute challenges for governance frameworks relying on static training-compute thresholds. Techniques such as chain-of-thought reasoning, best-of-N sampling, and the integration of retrieval-augmented generation (RAG) require negligible additional training compute but drastically elevate the risk profile of the system during deployment 2834. Consequently, a model that registers well below a regulatory reporting threshold during training could be deployed alongside test-time optimizations that unlock highly dangerous capabilities, effectively bypassing the intended oversight mechanisms 2833.
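A simple way to see why test-time techniques escape training-compute thresholds is to model best-of-N sampling. The sketch below assumes that samples are independent and that a perfect verifier picks out any correct answer; both are idealizations, and the probabilities are invented for illustration. Under those assumptions, success probability rises quickly with N while training compute stays fixed and only inference cost grows.

```python
def best_of_n_success(p_single: float, n: int) -> float:
    """Probability that at least one of n independent samples succeeds."""
    return 1.0 - (1.0 - p_single) ** n


def inference_cost_multiplier(n: int) -> int:
    """Best-of-N multiplies per-query inference cost by n; training cost is unchanged."""
    return n


# Hypothetical model that solves a task 10% of the time in a single attempt.
for n in (1, 4, 16, 64):
    print(n, inference_cost_multiplier(n), round(best_of_n_success(0.10, n), 3))
```

In this toy setting, sixteen samples lift a 10 percent single-shot success rate above 80 percent, a capability jump that a threshold measured at training time would not register.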

Furthermore, the shift toward inference-heavy paradigms alters the strategic calculus surrounding open-weight models. Traditionally, releasing model weights was viewed as a high-proliferation risk, allowing malicious actors to bypass the financial barrier of training 35. However, if achieving frontier performance increasingly requires exorbitant operational expenditures for inference compute at deployment, the value of possessing stolen or open-source weights diminishes 30. A malicious actor in possession of frontier weights would still face the nearly insurmountable infrastructural barrier of securing the massive inference clusters required to run the model effectively at scale 3031.

The Algorithmic Efficiency Debate

The viability of compute thresholds is further complicated by the relentless pace of algorithmic progress. Algorithmic efficiency encompasses innovations in neural architectures, data curation strategies, and optimization protocols that allow developers to achieve equivalent performance using progressively fewer computational resources 3635. Empirical analyses indicate that algorithmic progress halves the compute required to reach a given benchmark capability roughly every nine months, which works out to about 2.5x per year; the 3x to 10x annual efficiency gains also reported, after controlling for hardware improvements, would imply halving times of roughly four to eight months 3639.
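The relationship between a halving time and an annual efficiency multiplier can be checked directly, since a halving time of h months corresponds to an annual gain of 2^(12/h). The short sketch below performs the conversion in both directions; the function names are illustrative.

```python
import math


def annual_multiplier(halving_months: float) -> float:
    """Annual efficiency gain implied by a compute-halving time in months."""
    return 2.0 ** (12.0 / halving_months)


def implied_halving_months(multiplier_per_year: float) -> float:
    """Halving time in months implied by an annual efficiency multiplier."""
    return 12.0 * math.log(2.0) / math.log(multiplier_per_year)


print(round(annual_multiplier(9.0), 2))         # 2.52
print(round(implied_halving_months(3.0), 2))    # 7.57
print(round(implied_halving_months(10.0), 2))   # 3.61
```

A nine-month halving time therefore corresponds to roughly 2.5x per year, while annual gains of 3x to 10x correspond to halving times between about 7.6 and 3.6 months.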

Critics of threshold governance argue that this "efficiency shock" renders static regulations structurally obsolete 336. If a compute threshold is fixed at a specific FLOP count, gradual algorithmic enhancements will inevitably allow developers to produce models with catastrophic capabilities while operating entirely below the regulatory radar 2936. This dynamic necessitates that governance frameworks transition toward dynamic, self-updating thresholds that automatically adjust downward to account for forecasted efficiency gains, a process requiring substantial, ongoing technical expertise within regulatory agencies 3639.
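A self-updating threshold of the kind described could, in its simplest form, decay on a fixed review schedule. The sketch below assumes the regulator adopts a forecast halving time and lowers the threshold only at periodic reviews, so that developers face a stable rule between updates. The starting value, halving time, and review interval are hypothetical parameters, not figures from any enacted framework.

```python
def adjusted_threshold(initial_flop: float,
                       months_elapsed: float,
                       halving_months: float,
                       review_interval_months: float = 6.0) -> float:
    """Threshold in force after `months_elapsed`, lowered only at review points.

    At each review the threshold is reset to the value implied by the assumed
    halving time, then held constant until the next review.
    """
    reviews_completed = int(months_elapsed // review_interval_months)
    effective_months = reviews_completed * review_interval_months
    return initial_flop * 0.5 ** (effective_months / halving_months)


# Hypothetical: 1e26 FLOP starting threshold, nine-month halving forecast.
for month in (0, 5, 6, 18, 36):
    print(month, f"{adjusted_threshold(1e26, month, 9.0):.2e}")
```

The step-wise schedule is a design choice: a continuously decaying threshold tracks the forecast more closely, but a rule that changes only at announced reviews is easier to comply with and to audit.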

Conversely, an emerging body of empirical research challenges the assumption that algorithmic progress universally democratizes advanced capabilities. Recent ablation studies tracking efficiency gains over the past decade suggest that the vast majority of algorithmic progress - often exceeding 90 percent - is highly scale-dependent 3537. The most transformative efficiency innovations, such as the transition from Long Short-Term Memory (LSTM) architectures to Transformers, or the shift to Chinchilla-optimal data scaling laws, yield their dramatic dividends almost exclusively when applied to massive compute budgets 3537.

For small-scale models operating on constrained hardware, the rate of algorithmic progress appears to be orders of magnitude slower than previously estimated 3537. This scale-dependence suggests that rather than decreasing the overall demand for hardware, algorithmic progress actively incentivizes further capital investment; as algorithms become more efficient at translating raw compute into capabilities, frontier developers are motivated to scale their hardware clusters even larger to unlock unprecedented, highly profitable functions 38. Consequently, while smaller actors benefit from incremental efficiency gains, the absolute vanguard of artificial intelligence risk will remain tethered to the largest and most expensive data centers, preserving the strategic viability of hardware-level governance for the highest tier of development 138.

Hardware-Enabled Governance Mechanisms

Recognizing the fundamental limitations of border-based export controls, the vulnerability of cloud KYC regulations, and the fragility of static compute thresholds, researchers and policymakers are increasingly focused on embedding enforcement directly into the silicon itself. This approach, termed Hardware-Enabled Mechanisms (HEMs) or on-chip governance, involves integrating secure physical and cryptographic features into AI accelerators to enact policies, verify compliance, and prevent unauthorized usage without relying on manual audits or geographic borders 394041.

Firmware Locks and Secure Enclaves

The foundational technologies required for on-chip governance are already widely deployed in commercial electronics, spanning from Trusted Platform Modules (TPMs) utilized for anti-cheat software in video games to the secure boot chains securing enterprise mobile devices 3941. Adapting these architectures for high-performance AI hardware involves sophisticated applications of firmware and cryptography.

A prominent proposal in the near term is the implementation of "Offline Licensing." Under this framework, advanced AI chips would be manufactured with a secure module that requires a cryptographically signed digital license key, periodically issued by a regulatory authority, to initiate large-scale training workloads 3942. The system relies on hardware features such as firmware verification, secure non-volatile memory, and strict rollback protections 42. If a chip detects sustained, highly parallelized operations consistent with frontier model training in the absence of a valid license, or if it detects unauthorized attempts to modify its firmware, the secure module interrupts the workload or disables the chip entirely 42.
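The licensing flow described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the design from the cited proposal: it substitutes an HMAC with a shared demo key for the asymmetric signature a real chip would verify with an embedded public key, and it models secure non-volatile memory as a counter held in an object. All names are hypothetical.

```python
import hashlib
import hmac
import json

DEMO_KEY = b"demo-key-not-for-production"  # stand-in for a regulator signing key


def issue_license(chip_id: str, counter: int, expires_at: int,
                  key: bytes = DEMO_KEY) -> dict:
    """Regulator side: bind a license to one chip, a counter, and an expiry time."""
    payload = json.dumps(
        {"chip_id": chip_id, "counter": counter, "expires_at": expires_at},
        sort_keys=True,
    ).encode()
    tag = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return {"payload": payload, "tag": tag}


class SecureModule:
    """Chip side: accepts a license only if it is authentic, fresh, and unexpired."""

    def __init__(self, chip_id: str, key: bytes = DEMO_KEY) -> None:
        self.chip_id = chip_id
        self._key = key
        self._last_counter = -1  # models a monotonic counter in secure storage

    def accept(self, license_blob: dict, now: int) -> bool:
        expected = hmac.new(self._key, license_blob["payload"],
                            hashlib.sha256).hexdigest()
        if not hmac.compare_digest(expected, license_blob["tag"]):
            return False  # forged or modified license
        fields = json.loads(license_blob["payload"])
        if fields["chip_id"] != self.chip_id:
            return False  # license issued for a different chip
        if fields["counter"] <= self._last_counter:
            return False  # rollback protection: old licenses cannot be replayed
        if now >= fields["expires_at"]:
            return False  # license has expired
        self._last_counter = fields["counter"]
        return True


module = SecureModule("chip-001")
lic = issue_license("chip-001", counter=1, expires_at=1_000)
print(module.accept(lic, now=500))  # True
print(module.accept(lic, now=600))  # False: same counter replayed
```

The monotonic counter is what makes the license "offline": the chip does not need to contact the regulator at run time, only to refuse anything older than the last license it accepted.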

More advanced, though currently speculative, implementations envision the use of Trusted Execution Environments (TEEs) and hardware-backed secure enclaves 394344. These isolated processing regions protect code and data from external observation or modification, even by the host system's hypervisor 4344. In a governance context, TEEs could generate "cryptographic proof-of-training," allowing AI developers to mathematically prove to regulators the exact quantity of compute utilized, the parameters of the model, and the characteristics of the training data, achieving rigorous compliance verification without compromising sensitive commercial intellectual property or violating user privacy 339.

Implementation Feasibility and Security Constraints

While conceptually promising, the integration of HEMs into the global semiconductor ecosystem faces profound engineering and security barriers. The existing commercial hardware security paradigms are primarily optimized to defend against remote cyberattacks originating from unauthorized software; they are generally not designed to withstand sustained, physical assaults from well-resourced nation-state adversaries who possess undisputed physical ownership of the chips 39.

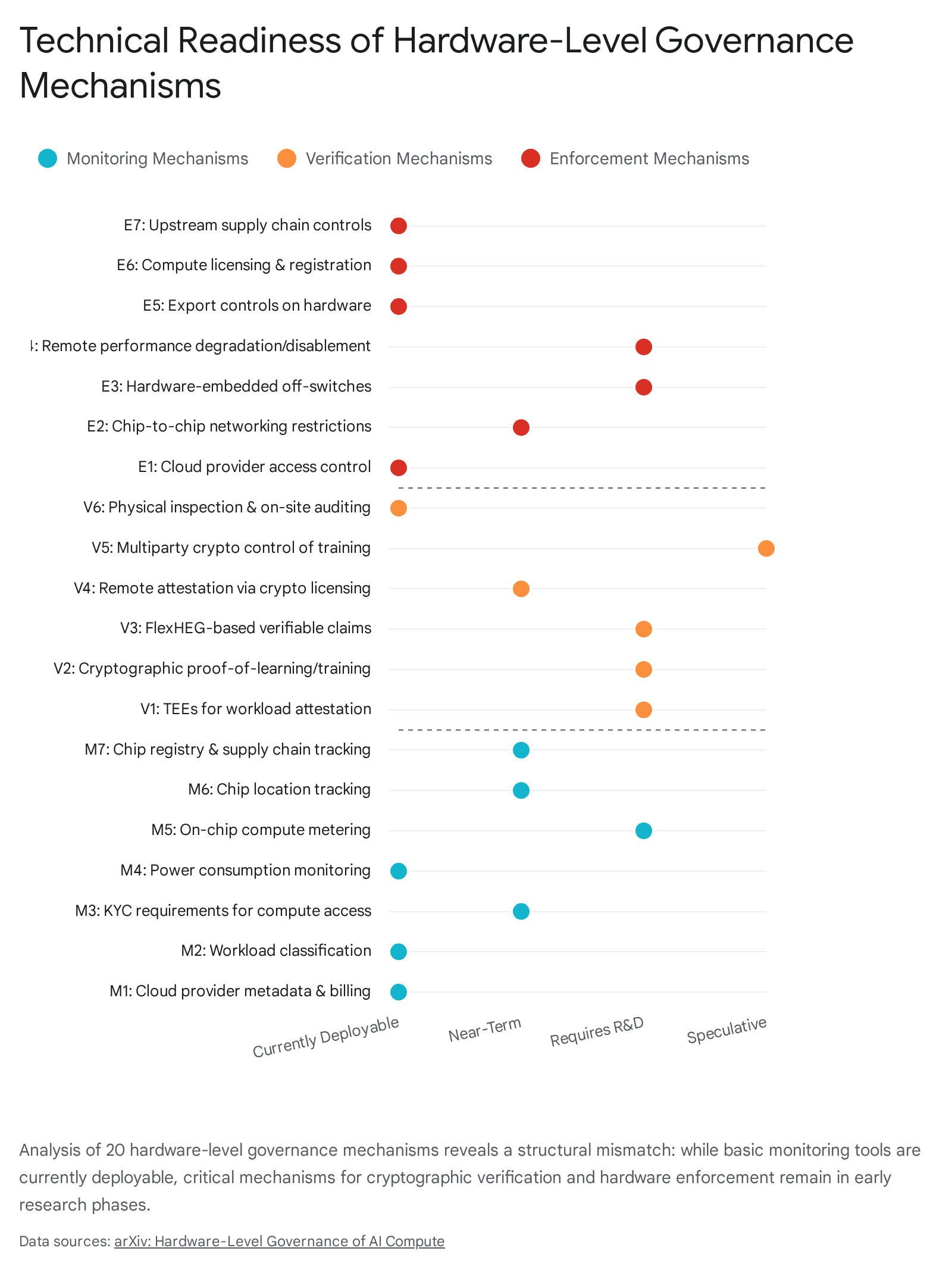

A comprehensive technical taxonomy of hardware-level governance tools reveals a stark structural mismatch in technology readiness. While rudimentary monitoring mechanisms - such as analyzing cloud billing metadata, coarse power consumption, and supply chain trade data - are currently deployable, the precise mechanisms required for robust multilateral treaty verification remain highly immature 345. Crucial capabilities, including highly accurate on-chip compute metering, dynamic hardware off-switches, and cryptographic proof-of-training, require years of dedicated research and development before they can be reliably deployed in adversarial environments 3.

Engineering highly secure, tamper-proof hardware architectures introduces significant die area overhead, complicates thermal management, and potentially degrades overall chip performance, adding substantial nonrecurring engineering costs to already capital-intensive fabrication cycles 313. Furthermore, security experts emphasize that aiming for absolute "tamper-proofing" against a sovereign state is scientifically implausible; adversaries with access to advanced electron microscopes and focused ion beams can eventually compromise any physical enclosure 339. Consequently, the realistic objective for hardware-level governance must be "tamper-evident" architecture - systems that cannot prevent a breach entirely but are designed so that any physical or logical intrusion leaves durable, verifiable evidence, establishing a compliance standard analogous to the safeguard verification protocols utilized by the International Atomic Energy Agency 345.
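The logical half of tamper evidence can be illustrated with a hash-chained event log, in which each entry commits to the one before it so that any later alteration breaks the chain. The sketch below covers only software-level log integrity and says nothing about physical intrusion detection. It also assumes the most recent digest is periodically anchored somewhere the adversary cannot rewrite, since otherwise the whole chain could simply be regenerated. The names are illustrative.

```python
import hashlib
from typing import Dict, List

GENESIS = "0" * 64  # fixed starting value for the chain


def _digest(prev: str, event: str) -> str:
    return hashlib.sha256((prev + event).encode()).hexdigest()


def append_entry(log: List[Dict[str, str]], event: str) -> None:
    """Append an event whose digest commits to the previous entry."""
    prev = log[-1]["digest"] if log else GENESIS
    log.append({"event": event, "prev": prev, "digest": _digest(prev, event)})


def verify_log(log: List[Dict[str, str]]) -> bool:
    """Recompute the chain; any edited, removed, or reordered entry fails."""
    prev = GENESIS
    for entry in log:
        if entry["prev"] != prev or entry["digest"] != _digest(prev, entry["event"]):
            return False
        prev = entry["digest"]
    return True


log: List[Dict[str, str]] = []
append_entry(log, "boot: firmware hash verified")
append_entry(log, "workload: 512-accelerator job started")
print(verify_log(log))  # True

log[0]["event"] = "boot: firmware check skipped"  # simulated tampering
print(verify_log(log))  # False
```

The log does not stop an intruder from editing an entry; it ensures that an inspector who holds a trusted copy of the latest digest can tell that an edit occurred, which is the distinction between tamper-proof and tamper-evident.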

The United States Chip Security Act of 2026

The theoretical discourse surrounding embedded hardware enforcement materialized into aggressive statutory policy with the introduction and advancement of the United States Chip Security Act of 2026 1446. The legislation was directly catalyzed by intelligence reports revealing that over one million restricted high-performance chips had successfully bypassed customs enforcement, fueling the development of advanced models by rival state-affiliated entities 13.

Legislative Mandates for Hardware Tracking

Advanced out of the House Foreign Affairs Committee with unanimous bipartisan support, the Chip Security Act marks a watershed pivot in economic statecraft: it would shift the burden of export compliance from paperwork and border agents into the physical architecture of the semiconductor itself 1447. The core provision of the bill establishes a rigid 180-day mandate directing the Department of Commerce to require that all advanced integrated circuits - specifically devices classified under Export Control Classification Numbers 3A090, 4A090, 3A001.z, and 4A003.z - feature active, continuous location verification mechanisms before they can be legally exported or transferred internationally 1446.

Under this framework, covered chips are required to autonomously and securely verify their geographic location, whether implemented via software, firmware, or dedicated hardware logic 1446. If a chip is diverted from its authorized data center or relocated to an embargoed jurisdiction, the system is designed to detect the discrepancy and trigger immediate, mandatory reporting obligations for the license holder 46. A secondary phase of the legislation empowers the Departments of Commerce and Defense to conduct feasibility studies on the implementation of far more invasive technical controls, explicitly exploring the viability of automated performance throttling or the total remote disablement of the hardware upon the detection of tampering or diversion 1351.
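The legislation as described does not prescribe a verification technique. One approach discussed in the research literature is delay-based: because signals cannot travel faster than light, the round-trip time between a chip and a trusted landmark server places an upper bound on the distance between them. The sketch below illustrates only that physical bound and assumes the chip's reply is authenticated. Network delay can be added but not removed, so the check can show that a chip is within some radius of a landmark, not where exactly it is.

```python
SPEED_OF_LIGHT_KM_PER_MS = 299.792458  # vacuum speed; gives a conservative bound


def max_distance_km(rtt_ms: float) -> float:
    """Upper bound on chip-to-landmark distance implied by a round-trip time."""
    return (rtt_ms / 2.0) * SPEED_OF_LIGHT_KM_PER_MS


def consistent_with_claim(rtt_ms: float, claimed_distance_km: float) -> bool:
    """True if the claimed distance is physically possible given the measured RTT.

    A chip that claims to sit farther from the landmark than light could travel
    in half the round-trip time is misreporting its location.
    """
    return claimed_distance_km <= max_distance_km(rtt_ms)


def within_authorized_radius(rtt_ms: float, authorized_radius_km: float) -> bool:
    """True if the RTT proves the chip is inside the authorized radius."""
    return max_distance_km(rtt_ms) <= authorized_radius_km


print(round(max_distance_km(10.0), 2))          # 1498.96
print(within_authorized_radius(10.0, 2000.0))   # True
print(within_authorized_radius(40.0, 2000.0))   # False: cannot be proven
```

A failed radius check is ambiguous by design: it may indicate diversion or merely a slow network path, which is one reason such a mechanism would more plausibly trigger a reporting obligation than an automatic shutdown.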

In conjunction with physical tracking mandates, aggressive legislative action has targeted the virtualization of compute. The Remote Access Security Act, which passed the House by a vast majority in early 2026, aims to definitively close the "cloud loophole" by extending the full weight of physical export controls to any foreign entity attempting to lease processing time on restricted U.S. hardware hosted in third-country data centers 18.

Compliance Hurdles and the Erosion of Global Trust

The aggressive implementation timeline and profound technical demands of the Chip Security Act have generated acute operational friction across the semiconductor supply chain 46. Compliance analysts warn that achieving full adherence within the mandated 180 days is operationally implausible for the vast majority of international data center operators and cloud service providers 46. Implementing the required infrastructure involves establishing serial-number-level cryptographic inventory systems, deploying facility security protocols perfectly aligned with BIS reporting standards, and navigating the profound complexities of firmware-level tracking across massive, heterogeneous GPU clusters 46.

Beyond immediate logistical hurdles, the integration of location tracking and potential "kill switches" into commercial hardware introduces severe strategic and reputational risks to U.S. technology leadership 13. The intentional degradation of hardware autonomy threatens to erode the foundational trust that sustains American dominance in the global semiconductor ecosystem 13. If international consumers, multinational corporations, and allied governments perceive U.S. semiconductors as inherently compromised by pervasive state telemetry or unilateral disablement features, they are highly incentivized to protect their own strategic autonomy 313.

This perception risks triggering a phenomenon of "de-Americanization" within the global supply chain, wherein sovereign nations actively seek out and subsidize alternative hardware suppliers from geopolitically neutral jurisdictions to avoid entanglement with U.S. compliance requirements 1339. Consequently, aggressive embedded governance policies, designed specifically to secure U.S. technological supremacy, may inadvertently fracture the global market, empowering foreign competitors and reducing the long-term effectiveness of U.S. economic statecraft 13.

Geopolitical Ramifications and Global South Infrastructure

The pursuit of compute governance and the resulting monopolization of hardware by a small cadre of hyperscale technology firms in the United States and China have profound implications for global economic equity. As computational power transitions from a niche technical resource to the primary engine of modern industrial, scientific, and economic progress, a nation's access to advanced hardware fundamentally dictates its trajectory for national development 252.

Compute Deserts and the Artificial Intelligence Divide

Current global distributions of artificial intelligence infrastructure exhibit stark, structural inequalities. While North America and China dominate the construction of massive data centers and capture the vast majority of AI-driven economic growth - projected to contribute trillions to global GDP - much of the developing world remains locked in "compute deserts" 524849. These regions suffer from severe infrastructural deficits, lacking the specialized public cloud capacity, robust data ecosystems, and stable energy grids required to train or deploy advanced models locally 4850.

The disparity is glaring: Africa, home to approximately 18 percent of the global population, accounts for less than 1 percent of global data center capacity 5051. Similarly, while India generates roughly one-fifth of the world's data, it possesses only about 3 percent of global data center infrastructure, rendering it data-rich but compute-poor 50. This infrastructural vacuum threatens to widen an insurmountable "AI divide" between the Global North and the Global South 4952. As the deployment of AI accelerates the automation of routine tasks, developing nations that are heavily reliant on labor-intensive industries face disproportionate risks of economic displacement and rising unemployment, lacking the digital infrastructure necessary to transition their workforces into an AI-driven economy 5253.

Furthermore, the concentration of compute power dictates the cultural and linguistic alignment of artificial intelligence systems. When models are trained almost exclusively by Western or Chinese corporations utilizing hardware located in the Global North, the resulting systems frequently reflect the specific biases, languages, and commercial priorities of their creators 255254. This marginalizes diverse global inputs and fails to provide contextualized solutions for critical, localized challenges in agriculture, regional healthcare, and domestic education across the Global South 5254.

Sovereign Artificial Intelligence Initiatives

In response to the economic threats of infrastructural monopolies and the geostrategic vulnerabilities exposed by escalating U.S.-China technology restrictions, a growing coalition of middle powers and developing nations is prioritizing "Sovereign AI" 2555. This paradigm asserts that nations must secure autonomy over critical layers of the technology stack - ranging from localized data centers and regional cloud providers to domestically trained foundational models - to prevent a new era of digital colonialism 2561.

The African Union has aggressively pursued this strategy, formally adopting its Continental Artificial Intelligence Strategy in July 2024 5657. The strategy explicitly declares AI a strategic priority for continental sovereignty, calling for massive, coordinated investments in renewable-powered data centers, regional compute hubs, and the establishment of an African Fund for AI to finance domestic digital infrastructure and reduce reliance on foreign entities 515657. At the global level, institutions such as the United Nations have established the Independent Scientific Panel on AI and the Global Dialogue on AI Governance to address these structural imbalances, advocating for frameworks that center Global South perspectives, facilitate technology transfer, and mandate equitable access to affordable compute resources 525859.

However, achieving genuine, full-stack technological sovereignty remains largely illusory for the majority of the world. Because the foundational supply chains for silicon design, EDA software, and extreme ultraviolet lithography are tightly controlled by the United States and a few allied nations, true independence is currently unattainable 2560. Developing nations often find themselves navigating a dual-stack reality, forced to choose between deep dependencies on U.S. hyperscale cloud providers or integration into China's aggressively exported Digital Silk Road infrastructure 1525.

To break this binary, international policy researchers increasingly advocate for the development of a "Third Stack" 55. This concept envisions a collaboratively built, democratically aligned global AI infrastructure that operates distinctly from both the Chinese state-driven model and the heavily monopolized U.S. commercial model 55. Establishing this alternative requires unprecedented multilateral cooperation, the creation of robust public-private computing commons, and the implementation of international policies designed specifically to subsidize and distribute compute access to researchers across the Global South 25561. Only through the intentional democratization of the physical foundations of artificial intelligence can global governance frameworks hope to mitigate catastrophic risks while ensuring the equitable distribution of the technology's transformative benefits.