Complexity economics and neoclassical economic theory

Historical Context and Theoretical Foundations

For over a century, the dominant paradigm in economic theory has been the neoclassical framework. This orthodox approach, which traces its methodological roots to nineteenth-century physics and Enlightenment philosophies of rationality, assumes that the economy is a deterministic, equilibrium-seeking system 123. Neoclassical models are constructed upon the premise that economies consist of perfectly rational agents - consumers maximizing utility and firms maximizing profits - operating within well-defined constraints and possessing complete, or at least probabilistically perfect, information 456. Through the mechanism of prices, the aggregate actions of these agents are theorized to naturally gravitate toward a static, general equilibrium that represents an optimal allocation of resources 68.

While this equilibrium-based system produces mathematically elegant and analytically tractable models, it relies on highly restrictive axioms that often fail to reflect the organic, evolving nature of real-world markets 47. The limitations of this paradigm became increasingly apparent when it was applied to phenomena such as technological innovation, financial crises, and structural market transformations.

In the late 1980s, an interdisciplinary group of scholars, prominently led by W. Brian Arthur at the Santa Fe Institute (SFI), initiated a formal departure from neoclassical orthodoxy 268. Drawing inspiration from evolutionary biology, statistical mechanics, and computer science, they established the foundation for "complexity economics" 1119. This framework posits that the economy is not a closed system mechanically tending toward a predetermined equilibrium. Instead, it is an open, complex adaptive system characterized by continuous non-equilibrium, emergent phenomena, and structural evolution 11011.

Complexity economics relaxes the assumption of perfect rationality. It models agents who possess incomplete information and bounded rationality, forcing them to inductively make sense of their environments, test heuristic strategies, and adapt to the outcomes they mutually create 412. Consequently, the economy is viewed as an ecology of actions and beliefs that is perpetually constructing itself anew - a system defined by contingency, indeterminacy, and historical path dependence 1013.
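Arthur's El Farol bar problem is the canonical illustration of this kind of inductive reasoning. The setup is as follows. One hundred people decide each week whether to visit a bar that is enjoyable only if fewer than 60 attend. Nobody can deduce the right forecast, because everyone is forecasting everyone else.

The sketch below is a minimal version built on assumptions of our own: a small, hypothetical pool of forecasting heuristics and cumulative absolute error as the score agents use to rank them. It shows the mechanism only: agents hold different sets of heuristics, act on whichever has the best track record so far, and re-score all of them once the outcome they jointly created is known. In Arthur's original experiments, mean attendance hovered around the threshold while individual weeks kept fluctuating. This toy does not attempt to reproduce that result.

```python
import random

N_AGENTS, CAPACITY, WEEKS, K = 100, 60, 200, 3

# Hypothetical pool of forecasting heuristics: attendance history -> forecast.
PREDICTORS = [
    lambda h: h[-1],                                       # same as last week
    lambda h: h[-2],                                       # same as two weeks ago
    lambda h: N_AGENTS - h[-1],                            # mirror image of last week
    lambda h: sum(h[-4:]) / 4,                             # four-week average
    lambda h: sum(h[-8:]) / 8,                             # eight-week average
    lambda h: max(0, min(N_AGENTS, 2 * h[-1] - h[-2])),    # extrapolated trend, clipped
]

def el_farol(seed=0):
    rng = random.Random(seed)
    # Heterogeneous beliefs: each agent holds K distinct heuristics drawn at random.
    bags = [rng.sample(range(len(PREDICTORS)), K) for _ in range(N_AGENTS)]
    # Everyone observes the same history, so one error score per heuristic suffices.
    errors = [0.0] * len(PREDICTORS)
    history = [rng.randint(0, N_AGENTS) for _ in range(8)]  # arbitrary warm-up weeks
    for _ in range(WEEKS):
        forecasts = [p(history) for p in PREDICTORS]
        attendance = 0
        for bag in bags:
            best = min(bag, key=lambda i: errors[i])  # heuristic with the best track record
            if forecasts[best] < CAPACITY:            # go only if the bar looks uncrowded
                attendance += 1
        # Inductive learning: re-score every heuristic against the realized outcome.
        for i, f in enumerate(forecasts):
            errors[i] += abs(f - attendance)
        history.append(attendance)
    return history[8:]

attendance = el_farol()
print(sum(attendance) / len(attendance))
```

Because every agent reacts to forecasts of the same quantity, a heuristic that becomes widely trusted tends to defeat itself. If most agents expect an uncrowded bar, they all go.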

Axiomatic Architecture of Neoclassical Orthodoxy

To understand the magnitude of the theoretical shift introduced by complexity economics, it is necessary to examine the core axioms of neoclassical theory and their inherent structural limitations. The neoclassical deductive system is grounded in methodological individualism, assuming that macroeconomic phenomena can be entirely explained through the aggregated actions of rational individuals 518.

The Utility Function and the Representative Agent

At the microeconomic level, rationality is formalized through preference axioms - such as completeness, transitivity, and continuity - which allow agent preferences to be represented mathematically by a continuous utility function 6. Agents solve constrained optimization problems to maximize this utility. However, aggregating these heterogeneous individual demands into a coherent macroeconomic model presents severe mathematical difficulties. These difficulties culminated in the Sonnenschein-Mantel-Debreu theorem of the early 1970s. The theorem demonstrated that aggregate excess demand functions need not inherit the well-behaved properties of individual demand functions: beyond continuity, homogeneity of degree zero in prices, and Walras' law, they can take almost any shape 14.

To preserve analytical tractability, neoclassical macroeconomics frequently relies on the "representative agent" framework 15. This approach assumes that the aggregate behavior of an economy can be accurately modeled by the optimizing decisions of a single, hypothetical agent 6. While this simplification allows for the construction of Dynamic Stochastic General Equilibrium (DSGE) models, it structurally eliminates the possibility of studying network effects, systemic contagion, and phase transitions, which arise specifically from the interactions of diverse, heterogeneous agents 1416.
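A small numerical sketch makes the aggregation problem concrete. It is not the Sonnenschein-Mantel-Debreu theorem itself, only a simpler symptom of the same issue. Assume, purely for illustration, two consumers with Cobb-Douglas utility u(x, y) = x^α · y^(1 - α) but different taste parameters α. Each consumer's optimal demand then has the closed form x* = α · m / p_x.

Redistributing the same total income between the two consumers, at unchanged prices, changes aggregate demand. No single "representative" consumer whose demand depends only on aggregate income could reproduce both outcomes.

```python
def cobb_douglas_demand(alpha, income, p_x):
    """Utility-maximizing demand for good x when u(x, y) = x**alpha * y**(1 - alpha)."""
    return alpha * income / p_x

def aggregate_demand(alphas, incomes, p_x):
    """Sum of individual demands for good x."""
    return sum(cobb_douglas_demand(a, m, p_x) for a, m in zip(alphas, incomes))

ALPHAS = [0.2, 0.8]  # hypothetical taste parameters of two consumers
P_X = 2.0            # hypothetical price of good x

equal = aggregate_demand(ALPHAS, [50, 50], P_X)   # total income 100, split evenly
skewed = aggregate_demand(ALPHAS, [80, 20], P_X)  # same total income, redistributed
print(equal, skewed)  # 25.0 16.0
```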

Diminishing Returns and the Hicksian Getaway

Another foundational pillar of traditional economic theory is the assumption of diminishing returns (negative feedback). Originally formulated for agricultural and bulk-manufacturing contexts, the law of diminishing returns states that adding variable inputs to a fixed resource eventually yields declining marginal output 817. In market dynamics, diminishing returns act as a stabilizing force: as prices rise, demand falls, and as firms grow too large, they encounter inefficiencies, ensuring that markets settle into a unique, predictable equilibrium 1318.
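The law can be illustrated with an assumed production function that does not come from the source: output Q = √(K · L), with the resource K fixed at 100. Each additional worker raises output by a smaller increment than the one before.

```python
import math

K = 100.0  # fixed resource (e.g. land or capital), held constant

def output(labor):
    """Hypothetical Cobb-Douglas production: Q = K**0.5 * L**0.5."""
    return math.sqrt(K * labor)

# Marginal product of each additional worker: Q(L) - Q(L - 1)
marginal = [output(L) - output(L - 1) for L in range(1, 6)]
print([round(m, 2) for m in marginal])  # [10.0, 4.14, 3.18, 2.68, 2.36]
```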

In the 1930s and again in the 1960s, economists debated the existence of increasing returns, but influential theorists such as John R. Hicks and Jack Hirshleifer reasserted diminishing returns to preserve the mathematical viability of perfect competition 25. Hicks acknowledged that abandoning perfect competition would have "very destructive consequences for economic theory." Economic historians call his decision to retain it the "Hicksian Getaway," a move that shielded orthodox cost functions from the destabilizing implications of increasing returns 25.

The Framework of Complexity Economics

Complexity economics dismantles these orthodox assumptions, redefining the economy as an evolutionary process rather than a mechanistic one. The following table delineates the primary axiomatic contrasts between the two paradigms:

| Analytical Dimension | Neoclassical Orthodoxy | Complexity Economics |
|---|---|---|
| System State | A closed system tending toward a static, general equilibrium 811. | An open system characterized by perpetual non-equilibrium and structural transformation 1011. |
| Agent Cognition | Perfect rationality, deductive reasoning, complete information, and continuous utility maximization 15. | Bounded rationality, inductive sense-making, adaptation, and heuristic exploration 10. |
| Agent Heterogeneity | Homogeneous behavior, frequently modeled via a singular "representative agent" 6. | Highly heterogeneous agents with differing beliefs, strategies, and structural endowments 416. |
| Market Dynamics | Diminishing returns (negative feedback) guarantee a single, efficient, and predictable market outcome 1725. | Increasing returns (positive feedback) allow for multiple possible equilibria, instability, and path dependence 1718. |
| Role of Time | Ahistorical and timeless; the system eventually reaches the same steady state regardless of initial conditions 210. | Path-dependent; historical time is critical, and small initial accidents can lock in long-term outcomes 101319. |
| Methodology | Closed-form algebraic systems and differential calculus models emphasizing determinacy 1420. | Agent-based modeling, network topology analysis, and computational simulations 91121. |

The Theory of Increasing Returns and Path Dependence

W. Brian Arthur's most profound theoretical contribution was demonstrating that modern, knowledge-based economies - particularly the technology sector - are governed not by diminishing returns, but by positive feedback loops, or increasing returns 1718. Increasing returns occur when the adoption of a technology or product increases its value to subsequent users, triggering self-reinforcing cycles of growth 1718.

Arthur's models, consolidated in his 1989 Economic Journal paper and his 1994 book Increasing Returns and Path Dependence in the Economy, revealed that technologies with high upfront research costs and negligible marginal reproduction costs exhibit powerful network effects 1718. A classic example is operating system software: as more people adopt a specific platform, developers write more applications for it, which in turn attracts more users, continually driving down unit costs while expanding utility 17.

Lock-In and Multiple Equilibria

Under increasing returns, market outcomes lose their predictability. Arthur demonstrated that if two or more competing technologies enter a market, the outcome is not determined solely by intrinsic product superiority. Instead, the market is highly sensitive to initial conditions. Minor historical accidents, random fluctuations in early adoption, or strategic initial pricing can tip the balance in favor of one technology 1719.

Once a critical mass is achieved, the positive feedback loop accelerates, leading to market "lock-in." The dominant technology secures a monopoly that is difficult or impossible to reverse, even if a technically superior alternative exists 1719. This dynamic undermines the presumption, often drawn from the neoclassical welfare theorems, that free markets reliably select the most efficient outcome 1319. In an economy dominated by increasing returns, the market does not discover a unique equilibrium. Instead, it navigates a landscape of multiple potential equilibria, permanently shaped by the contingent sequence of historical events 1319.
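The lock-in mechanism can be sketched along the lines of Arthur's 1989 adoption model. Two agent types arrive in random order: R-types naturally prefer technology A and S-types naturally prefer B. Each technology's payoff rises by r for every previous adopter.

The payoff numbers below are illustrative assumptions. With these values, once either technology leads by more than (natural preference gap) / r = 10 adopters, even agents who intrinsically prefer the rival choose the leader, and the lead can only grow.

```python
import random

def arthur_adoption(n_agents=2000, a_R=20, b_R=10, a_S=10, b_S=20, r=1, seed=None):
    """Return (adopters of A, adopters of B) after n_agents sequential choices.

    R-agents get a_R + r*n_A from A and b_R + r*n_B from B (a_R > b_R);
    S-agents get a_S + r*n_A from A and b_S + r*n_B from B (b_S > a_S).
    Integer payoffs keep every comparison exact.
    """
    rng = random.Random(seed)
    n_A = n_B = 0
    for _ in range(n_agents):
        if rng.random() < 0.5:  # R-type: picks A unless B's adoption lead is large
            choose_A = a_R + r * n_A >= b_R + r * n_B
        else:                   # S-type: picks B unless A's adoption lead is large
            choose_A = a_S + r * n_A > b_S + r * n_B
        if choose_A:
            n_A += 1
        else:
            n_B += 1
    return n_A, n_B

# Identical model, different random arrival orders ("historical accidents")
outcomes = [arthur_adoption(seed=s) for s in range(200)]
winners_A = sum(a > b for a, b in outcomes)
print(winners_A, len(outcomes) - winners_A)
```

Every run uses the same preferences and payoffs, yet some runs lock in to A and others to B. The winner is decided by the order of early arrivals rather than by intrinsic merit.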

Orthodox economists initially resisted Arthur's framework because it introduced indeterminacy: outcomes that were path-dependent and highly sensitive to initial conditions 1317. However, the explosive growth of digital platform monopolies in the subsequent decades provided strong empirical support for his models, establishing increasing returns as a foundational concept for modern antitrust and technology policy 1719.

Ergodicity Economics and the Non-Equilibrium Problem

Complexity economics also incorporates critical insights from statistical mechanics regarding risk and probability, most notably through the development of "ergodicity economics" by physicist Ole Peters 22. Standard economic models of decision-making, such as Expected Utility Theory (EUT), evaluate the attractiveness of a financial gamble by an expectation value. In the older expected-value framework this is the probability-weighted average of the payoffs; in EUT it is the probability-weighted average of the utility of those payoffs 2324. These foundational concepts of risk originated in the seventeenth century, predating the nineteenth-century physical concept of ergodicity 2225.

The mathematical flaw in EUT, Peters argues, is its indiscriminate assumption that economic systems are ergodic 2226. An observable is ergodic if its time average equals its expectation value (ensemble average) 25. The expected value calculates the average outcome of a gamble across an infinite ensemble of parallel universes at a single moment in time 2425. However, wealth accumulation and macroeconomic growth are multiplicative, non-equilibrium processes, rendering them fundamentally non-ergodic 26.
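In symbols (a standard formulation, with notation chosen here), the two averages of an observable f of a stochastic process x(t) are defined below. For multiplicative wealth dynamics, the relevant time-average quantity is the exponential growth rate along a single trajectory.

```latex
\bar{f} = \lim_{T \to \infty} \frac{1}{T} \int_0^T f\bigl(x(t)\bigr)\,dt ,
\qquad
\langle f \rangle(t) = \lim_{N \to \infty} \frac{1}{N} \sum_{i=1}^{N} f\bigl(x_i(t)\bigr) ,
\qquad
f \text{ is ergodic} \iff \bar{f} = \langle f \rangle .

% Time-average growth rate of wealth W along one trajectory:
\bar{g} = \lim_{T \to \infty} \frac{1}{T} \ln \frac{W(T)}{W(0)}
```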

Time Averages Versus Ensemble Averages

In a non-ergodic multiplicative gamble - such as a repeated coin toss where heads increases wealth by 50% and tails decreases it by 40% - the expected value (the ensemble average) grows exponentially, by 5% per round, since 0.5 × 1.5 + 0.5 × 0.6 = 1.05 2324. However, an individual making sequential bets over time will almost surely see their personal wealth tend toward zero. The per-round growth factor along a single trajectory is the geometric mean √(1.5 × 0.6) ≈ 0.95, a loss of about 5% per round 26. The ensemble average is skewed by a vanishingly small fraction of individuals experiencing astronomical gains, which masks the catastrophic losses experienced by the vast majority across historical time 2526.
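The coin toss can be checked numerically. The sketch below compares the per-round ensemble growth factor (arithmetic mean of the two outcomes) with the time-average growth factor (geometric mean). It then plays 100 rounds for many independent individuals. The population size, horizon, and seed are arbitrary choices.

```python
import numpy as np

UP, DOWN = 1.5, 0.6  # heads: wealth x1.5 (+50%); tails: wealth x0.6 (-40%)

ensemble_factor = 0.5 * UP + 0.5 * DOWN  # expectation per round: 1.05, i.e. +5%
time_factor = np.sqrt(UP * DOWN)         # geometric mean per round: ~0.9487, about -5.1%

def play(n_people=20_000, n_rounds=100, seed=0):
    """Final wealth of n_people who each start with 1 and play n_rounds tosses."""
    rng = np.random.default_rng(seed)
    heads = rng.random((n_people, n_rounds)) < 0.5
    return np.where(heads, UP, DOWN).prod(axis=1)

wealth = play()
print(f"expected final wealth: {ensemble_factor ** 100:.1f}")
print(f"median final wealth:   {np.median(wealth):.4f}")
print(f"share who lost money:  {np.mean(wealth < 1):.2f}")
```

The expected value balloons while the median player is nearly wiped out. The gap between the two is carried entirely by rare, extremely lucky trajectories.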

Because conventional models conflate these two averages, they predict that individuals should accept bets based on positive ensemble averages. When real humans reject these bets to avoid ruin, neoclassical economics introduces arbitrary "risk aversion" parameters or dismisses the behavior as cognitive irrationality 26. Ergodicity economics resolves this without assuming irrationality: individuals are mathematically optimizing their time-average growth rate, not their ensemble expected value 2526. By reintroducing historical time into probability models, ergodicity economics aligns the theoretical modeling of risk with the non-equilibrium, complex reality of capital markets 2226.
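The resolution can be made concrete with an assumption that is not in the source. Suppose a player may stake only a fraction f of current wealth on each toss of the same coin, so one round multiplies wealth by 1 + 0.5f or 1 - 0.4f. The expected gain per round, 0.05f, rises with f, so an expected-value maximizer stakes everything.

The time-average growth rate (the expected change in log wealth per round) instead peaks at an interior f. Caution therefore emerges from the dynamics rather than from an added risk-aversion parameter. For this kind of repeated multiplicative bet, the result coincides with the Kelly criterion.

```python
import numpy as np

def ensemble_gain(f):
    """Expected (ensemble) relative change in wealth per round when staking fraction f."""
    return 0.5 * (0.5 * f) + 0.5 * (-0.4 * f)

def time_avg_growth(f):
    """Time-average exponential growth rate: expected change in log wealth per round."""
    return 0.5 * np.log(1 + 0.5 * f) + 0.5 * np.log(1 - 0.4 * f)

fractions = np.linspace(0.0, 1.0, 1001)
best_f = fractions[np.argmax(time_avg_growth(fractions))]
# First-order condition 0.25/(1 + 0.5f) = 0.2/(1 - 0.4f) gives f* = 0.25 exactly.
print(best_f, time_avg_growth(1.0), ensemble_gain(1.0))
```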

Economic Complexity and the Product Space

The transition away from aggregate, ahistorical modeling has also revolutionized development economics. Traditional structural adjustment programs often relied on macroeconomic aggregates - such as total capital, average education, and GDP - to design industrial policy 272836. However, these metrics failed to explain why countries with similar aggregate endowments, such as Peru and South Korea in the 1970s, experienced vastly different trajectories of industrialization and growth 27.

In 2007, César Hidalgo, Ricardo Hausmann, and colleagues introduced the Product Space, a network-based framework for mapping the "productive capabilities" of nations 2930. Productive capabilities encompass the specific, non-fungible networks of knowledge, infrastructure, and institutional know-how required to manufacture goods 3132. Because capabilities cannot be measured directly, Hidalgo and Hausmann later developed the Economic Complexity Index (ECI) to infer them from a country's export basket 3132.

The ECI algorithm analyzes two dimensions: diversity (how many different products a country successfully exports) and ubiquity (how many other countries can produce those products) 32. Complex economies produce a diverse range of low-ubiquity products, indicating deep, overlapping networks of specialized capabilities 3132.
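The diversity-ubiquity logic can be sketched with the eigenvector formulation of the ECI commonly used in the literature. The steps are:

1. Build a binary country-by-product matrix M.
2. Form the country-to-country matrix M̃[c, c'] = Σ_p M[c, p] · M[c', p] / (k_c · k_p), where k_c is diversity and k_p is ubiquity.
3. Take the eigenvector of M̃ associated with its second-largest eigenvalue, and standardize it.

The four-country, five-product matrix below is hypothetical and perfectly nested. The sketch assumes every country exports at least one product and every product has at least one exporter. Because an eigenvector's sign is arbitrary, the sketch orients it so that the index correlates positively with diversity.

```python
import numpy as np

def economic_complexity_index(M):
    """ECI from a binary country x product matrix M (1 = exports with RCA >= 1)."""
    M = np.asarray(M, dtype=float)
    diversity = M.sum(axis=1)  # k_c: number of products each country exports
    ubiquity = M.sum(axis=0)   # k_p: number of countries exporting each product
    # M_tilde[c, c'] = sum_p M[c, p] * M[c', p] / (k_c * k_p); each row sums to 1
    M_tilde = (M / diversity[:, None]) @ (M / ubiquity[None, :]).T
    eigvals, eigvecs = np.linalg.eig(M_tilde)
    order = np.argsort(eigvals.real)[::-1]
    v = eigvecs[:, order[1]].real   # eigenvector of the second-largest eigenvalue
    eci = (v - v.mean()) / v.std()  # standardize
    if np.corrcoef(eci, diversity)[0, 1] < 0:  # fix the arbitrary eigenvector sign
        eci = -eci
    return eci

# Hypothetical nested export matrix: rows = countries, columns = products
M = [[1, 1, 1, 1, 1],
     [1, 1, 1, 1, 0],
     [1, 1, 0, 0, 0],
     [1, 0, 0, 0, 0]]
eci = economic_complexity_index(M)
print(np.round(eci, 2))
```

The most diversified country, which is also the only exporter of the least ubiquitous product, receives the highest score.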

Network Topology and Capability "Jumps"

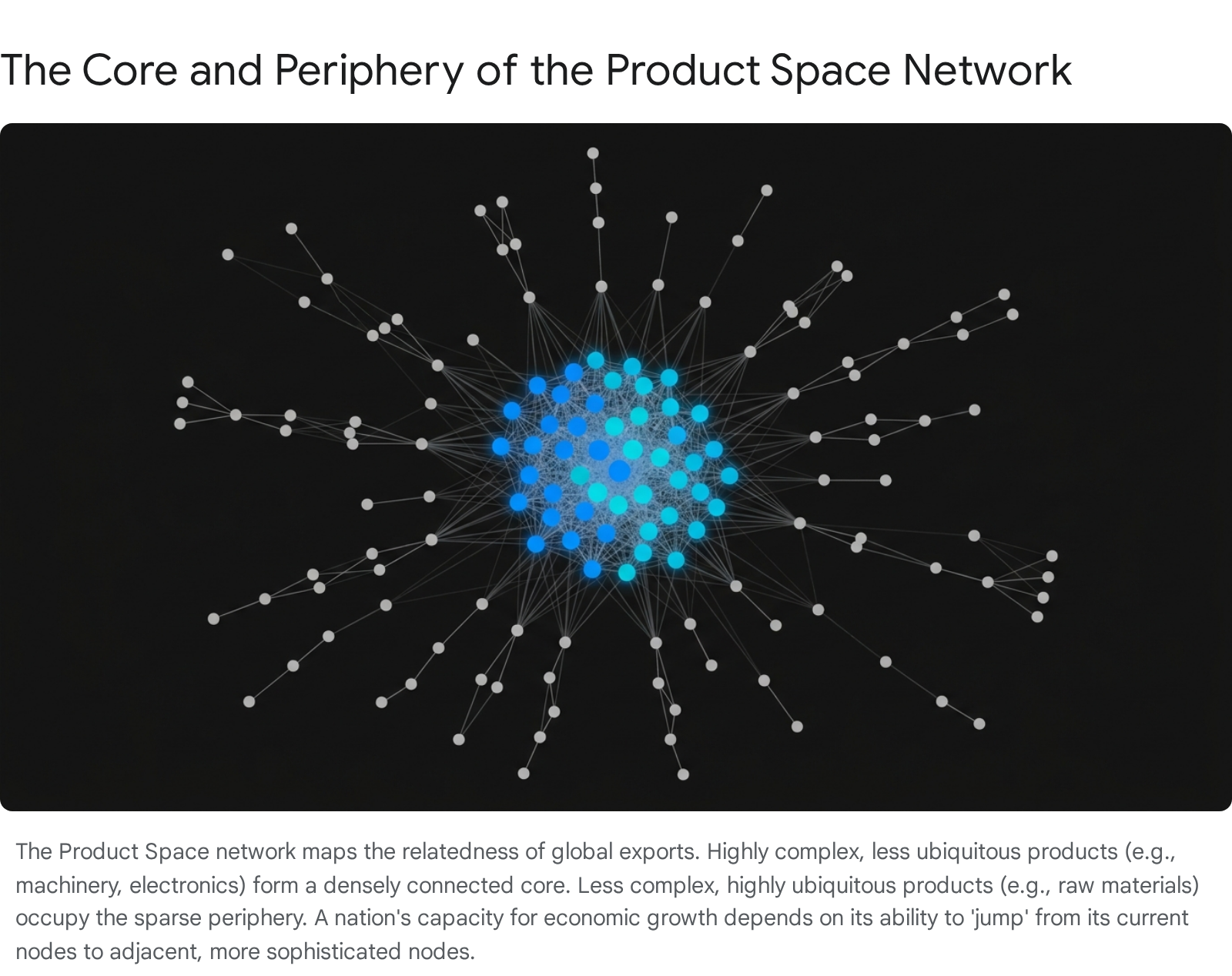

The Product Space maps the relatedness of goods in the global economy, connecting products that require similar underlying capabilities 3033. The topology of this network is highly heterogeneous, structurally conditioning the development paths available to nations 34.

Empirical analysis shows that nations undergo structural transformation by moving incrementally to adjacent nodes in the Product Space 30. A country with a revealed comparative advantage in garments can easily transition to textiles, leveraging shared capabilities 33. By contrast, highly complex products like X-ray machines or specialized chemicals sit in a dense core, while commodities like raw minerals or agricultural products occupy the sparse periphery, where few products are closely connected to the rest of the network 34.
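The two ingredients behind adjacency can be sketched as follows:

- Balassa's revealed comparative advantage, RCA[c, p] = (X[c, p] / Σ_p X[c, p]) / (Σ_c X[c, p] / Σ_c,p X[c, p]). A product counts as "exported" when RCA ≥ 1.
- The Product Space proximity of Hidalgo and colleagues: the minimum of the two conditional probabilities that a country exports one product given that it exports the other.

The export values are invented for illustration.

```python
import numpy as np

def rca(X):
    """Balassa revealed comparative advantage for a country x product export matrix."""
    X = np.asarray(X, dtype=float)
    country_share = X / X.sum(axis=1, keepdims=True)  # product's share of each country's exports
    world_share = X.sum(axis=0) / X.sum()             # product's share of world exports
    return country_share / world_share

def proximity(M):
    """phi[p, q] = min(P(q | p), P(p | q)) = co-exporters / max(ubiquity_p, ubiquity_q)."""
    co = M.T @ M              # number of countries exporting both p and q
    ubiquity = np.diag(co)
    return co / np.maximum.outer(ubiquity, ubiquity)

products = ["garments", "textiles", "x-ray machines", "crude oil"]
X = [[50, 40,  0, 10],   # hypothetical exports of four countries
     [30, 30,  0, 40],
     [ 0, 10, 80, 10],
     [ 5,  5, 20, 70]]
M = (rca(X) >= 1).astype(float)
phi = proximity(M)
print(np.round(phi, 2))
```

In this toy data, garments and textiles are always exported together, so their proximity is 1. X-ray machines share no exporter with any other product, so their proximity to everything else is 0.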

Developing nations situated in the periphery face immense difficulty executing "unrelated jumps" to sophisticated sectors because they lack the requisite capability matrix 3435. This helps explain the divergence between South Korea, which successfully moved from textiles to electronics through decades of adjacent jumps, and resource-dependent nations that remain trapped in the periphery 36. Consequently, modern industrial policy in the Global South increasingly leverages the ECI to target "smart diversification," identifying accessible adjacent nodes rather than attempting unsupported leaps into high-tech manufacturing 273637.
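One way to rank "accessible adjacent nodes" is the density measure used in the Product Space literature. For a country c and a product p it does not yet export, density is the proximity-weighted share of p's neighbours that c already exports: ω[c, p] = Σ_k M[c, k] · φ[k, p] / Σ_k φ[k, p].

The five-product proximity matrix below is hypothetical. Its diagonal is set to zero so that a product does not count as its own neighbour.

```python
import numpy as np

products = ["garments", "textiles", "electronics", "chemicals", "crude oil"]
# Hypothetical symmetric proximity matrix with a zero diagonal
phi = np.array([
    [0.00, 0.80, 0.30, 0.10, 0.05],
    [0.80, 0.00, 0.40, 0.20, 0.05],
    [0.30, 0.40, 0.00, 0.50, 0.05],
    [0.10, 0.20, 0.50, 0.00, 0.20],
    [0.05, 0.05, 0.05, 0.20, 0.00],
])
exports = np.array([1, 1, 0, 0, 0])  # country has RCA >= 1 in garments and textiles only

def density(exports, phi):
    """Proximity-weighted share of each product's neighbours already exported."""
    return exports @ phi / phi.sum(axis=0)

omega = density(exports, phi)
candidates = [p for p, has in zip(products, exports) if not has]
ranking = sorted(candidates, key=lambda p: -omega[products.index(p)])
print(ranking)  # ['electronics', 'chemicals', 'crude oil']
```

With these assumed proximities, the most accessible new product for this garment-and-textile exporter is electronics.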

Agent-Based Modeling

To effectively capture the non-linear dynamics, heterogeneity, and out-of-equilibrium behavior defined by complexity economics, researchers have widely adopted Agent-Based Models (ABMs) 1121. Unlike standard mathematical equations that solve for equilibrium, ABMs are computational simulations that generate macroeconomic phenomena from the bottom up 1638.

In a landmark 2025 review in the Journal of Economic Literature, Robert Axtell and J. Doyne Farmer note that ABMs have matured from niche computational curiosities into rigorous, production-grade tools for economic forecasting and policy design 213940. ABMs populate a virtual environment with thousands or millions of heterogeneous agents, each endowed with specific rules for decision-making, learning, and interaction 3841. By allowing these agents to interact over time, the simulator produces emergent macroeconomic structures - such as boom-and-bust cycles, systemic risk cascades, and spatial inequalities - that static representative-agent models structurally exclude 162142.
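A deliberately minimal example shows how macro-level structure can emerge from agent interactions. The sketch below is a toy random-exchange model of the kind studied in econophysics, not one of the models surveyed by Axtell and Farmer. Identical agents start with equal wealth. In each interaction, a randomly chosen agent hands one unit to another randomly chosen agent, if it has a unit to give.

No agent differs from any other, yet a strongly unequal, approximately exponential wealth distribution emerges. All sizes and the seed are arbitrary.

```python
import random

def gini(values):
    """Gini coefficient via the sorted-rank formula (0 = perfect equality)."""
    xs = sorted(values)
    n, total = len(xs), sum(xs)
    weighted = sum((i + 1) * x for i, x in enumerate(xs))
    return (2 * weighted) / (n * total) - (n + 1) / n

def random_exchange(n_agents=500, initial=10, n_steps=200_000, seed=0):
    """Final wealth after n_steps random one-unit transfers between agents."""
    rng = random.Random(seed)
    wealth = [initial] * n_agents  # perfectly equal start
    for _ in range(n_steps):
        giver, taker = rng.randrange(n_agents), rng.randrange(n_agents)
        if giver != taker and wealth[giver] > 0:  # no debt allowed
            wealth[giver] -= 1
            wealth[taker] += 1
    return wealth

final = random_exchange()
print(f"Gini after exchange: {gini(final):.2f}")
```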

Case Study on Supply Chain Shocks

The predictive superiority of ABMs in out-of-equilibrium scenarios was prominently demonstrated during the COVID-19 pandemic. Traditional economic models struggled to forecast the economic fallout because the pandemic represented a sudden, massive shock that pushed the global economy far from equilibrium 119.

A research group led by Doyne Farmer at the University of Oxford constructed a highly granular ABM of the UK economy, incorporating detailed input-output data across 56 industries and over 450 specific occupations 4353. The model analyzed how direct shocks - such as lockdown mandates preventing non-essential workers from commuting - cascaded through the production network 4344.
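The cascade logic can be illustrated with a supply-side-only toy that is far simpler than the Oxford model. It assumes strict fixed-proportion (Leontief) production with proportional rationing. Under these assumptions, each industry can run at no more than the fraction of normal output allowed by its available labor. It also cannot exceed the fraction supplied by its most constrained input industry.

The industries, supplier links, labor shocks, and value-added weights are hypothetical, and demand shocks are ignored.

```python
def propagate_supply_shock(labor_capacity, suppliers, max_iter=100):
    """Output of each industry as a fraction of its pre-shock level.

    labor_capacity[j]: fraction of industry j's workforce still able to work.
    suppliers[j]: industries whose output j needs in fixed proportions.
    """
    output = dict(labor_capacity)
    for _ in range(max_iter):
        updated = {
            j: min([labor_capacity[j]] + [output[i] for i in suppliers[j]])
            for j in output
        }
        if updated == output:  # fixed point: the shock has fully propagated
            break
        output = updated
    return output

labor = {"energy": 0.9, "manufacturing": 1.0, "retail": 0.6}  # hypothetical lockdown shocks
suppliers = {"energy": [], "manufacturing": ["energy"], "retail": ["energy", "manufacturing"]}
value_added = {"energy": 0.2, "manufacturing": 0.3, "retail": 0.5}  # hypothetical GDP shares

out = propagate_supply_shock(labor, suppliers)
gdp = sum(value_added[j] * out[j] for j in out)
print(out, f"GDP change: {gdp - 1:.1%}")
```

Manufacturing keeps its entire workforce, yet it loses 10% of its output because its energy supply is constrained. The shock has travelled downstream through the production network.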

By dynamically simulating the collision of upstream supply bottlenecks and downstream demand contractions, the ABM successfully predicted a 21.5% contraction in the UK's GDP for the second quarter of 2020 - a figure remarkably close to the actual 22.1% contraction recorded 55. This real-time forecasting success provided a strong proof of concept for applying complexity economics to the management of systemic crises 9.

Methodological Advances and Standardization

Historically, the widespread adoption of ABMs was hindered by computational bottlenecks and the difficulty of model calibration 3956. An ABM with millions of agents is expensive to run and typically has a vast parameter space, making trial-and-error calibration against empirical data impractical 3957.

Model Calibration and Machine Learning

Recent innovations at the intersection of machine learning and probabilistic programming have substantially eased these constraints. Researchers have begun implementing ABMs using differentiable programming frameworks, such as Google's JAX, which allow Automatic Differentiation (AD) of a simulation's outputs with respect to its parameters 395658.

When combined with probabilistic programming libraries like NumPyro, researchers can apply Generalized Variational Inference (GVI) to calibrate massive models 3958. Instead of running millions of separate simulations, gradient-based optimization allows the engine to efficiently navigate the parameter space, reducing calibration times by several orders of magnitude 3956. These advancements not only accelerate processing but also provide mathematically rigorous uncertainty quantification, transforming ABMs from theoretical sandboxes into robust forecasting tools 5658. Concurrently, protocols utilizing secure multi-party computation have enabled the calibration of ABMs on sensitive demographic and financial data without violating privacy constraints, facilitating the use of highly localized micro-data 45.
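The core idea is to differentiate a simulation's output with respect to its parameters and feed that derivative to an optimizer. It can be sketched in JAX with a toy model in which everything is an assumption for illustration:

- 1,000 agents with random private biases adopt a product with a logistic probability that rises with last period's adoption share.
- To keep the simulator differentiable, agents' discrete choices are replaced by their probabilities (a smooth relaxation).
- The social-influence parameter θ is recovered from synthetic data by Gauss-Newton steps built from the AD Jacobian.

This is a point-estimate calibration. It does not implement Generalized Variational Inference and does not use NumPyro.

```python
import jax
import jax.numpy as jnp

N_AGENTS, T = 1_000, 30
# Hypothetical heterogeneous agents: private biases against adopting (mean -2)
BIASES = jax.random.normal(jax.random.PRNGKey(0), (N_AGENTS,)) - 2.0

@jax.jit
def simulate(theta):
    """Adoption-share path over T periods for social-influence strength theta.

    Each agent's discrete adopt/not-adopt choice is replaced by its logistic
    probability (a smooth relaxation), so the path is differentiable in theta.
    """
    def step(share, _):
        probs = jax.nn.sigmoid(BIASES + theta * share)  # agents react to last period's share
        new_share = probs.mean()
        return new_share, new_share

    _, path = jax.lax.scan(step, jnp.float32(0.05), None, length=T)
    return path

path_jacobian = jax.jit(jax.jacfwd(simulate))  # d(path)/d(theta) via forward-mode AD

def calibrate(observed, theta0=1.0, iters=20):
    """Least-squares fit of theta using Gauss-Newton steps from the AD Jacobian."""
    theta = jnp.float32(theta0)
    for _ in range(iters):
        resid = simulate(theta) - observed
        J = path_jacobian(theta)
        theta = theta - jnp.dot(J, resid) / jnp.dot(J, J)
    return theta

observed = simulate(jnp.float32(2.0))  # synthetic "data" from a known parameter
theta_hat = calibrate(observed)
print(float(theta_hat))
```

Gauss-Newton is used here because its step size adapts to the scale of the Jacobian, which keeps a one-parameter example robust without tuning a learning rate.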

Standardization via the ODD Protocol

To ensure scientific rigor, reproducibility, and transparency, the simulation community developed the ODD (Overview, Design concepts, Details) protocol 4647. First published in 2006 for ecological modeling, ODD provides a standardized template for documenting the architecture, initial conditions, and procedural rules of an ABM 4647.

Recognizing that socioeconomic models require specific attention to behavioral modeling, researchers introduced the ODD+D extension, which mandates detailed documentation of how human decision-making, learning, and adaptation are formalized within the simulation 4648. Subsequent updates to the protocol in 2020 and 2024 have further refined these standards, requiring modelers to articulate the theoretical rationale behind agent behaviors, outline the criteria for evaluating structural realism, and provide direct links to open-source code repositories 495051.

The following table summarizes the structural elements of the standard ODD protocol used to document complex economic simulations:

| Protocol Category | Element | Description of Requirement |
|---|---|---|
| Overview | Purpose and Patterns | Identifies the model's intent and the real-world patterns used to evaluate its structural realism 4650. |
| Overview | Entities, State Variables, and Scales | Defines the heterogeneous agents, spatial extent, and temporal resolution of the simulation 6652. |
| Overview | Process Overview and Scheduling | Outlines the sequence of actions and how time progresses within the model's logic 5066. |
| Design Concepts | Design Concepts | Details the implementation of complexity principles (e.g., emergence, adaptation, learning, stochasticity) 4850. |
| Details | Initialization | Describes the initial state of the environment and agents at time zero 6653. |
| Details | Input Data | Specifies any external time-series data or empirical inputs driving the model dynamics 53. |
| Details | Submodels | Provides the explicit mathematical and algorithmic rules governing specific agent behaviors 5153. |
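ODD itself is a prose template, but a team might keep a structured checklist alongside its model code. The sketch below is a hypothetical machine-readable version of the seven elements in the table, with a simple completeness check. The class name, field names, and example text are our own and are not part of the protocol.

```python
from dataclasses import dataclass, fields

@dataclass
class ODDDescription:
    """Hypothetical structured record mirroring the seven ODD elements."""
    purpose_and_patterns: str = ""
    entities_state_variables_scales: str = ""
    process_overview_and_scheduling: str = ""
    design_concepts: str = ""
    initialization: str = ""
    input_data: str = ""
    submodels: str = ""

    def missing_elements(self):
        """Names of elements that are still empty."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]

doc = ODDDescription(
    purpose_and_patterns="(illustrative) Model intent and the empirical patterns used for evaluation.",
    entities_state_variables_scales="(illustrative) Agent types, their state variables, time step.",
)
print(doc.missing_elements())
```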

Policy Applications and Future Trajectories

The departure from equilibrium assumptions carries profound implications for macroeconomic policy. Under a neoclassical paradigm, policy is generally viewed as an exercise in optimization - adjusting fiscal or monetary levers to shift the economy from one static equilibrium to a more efficient one. Complexity economics, however, treats the economy as computationally irreducible, meaning policy formulation must focus on dynamic steering, identifying leverage points, and managing systemic fragility 5470.

This paradigm shift is particularly urgent in the modeling of climate change and the green energy transition. Traditional Integrated Assessment Models (IAMs) have been heavily criticized for utilizing equilibrium assumptions that underestimate the long-term damages of warming while overestimating the costs of transitioning to renewables 954. By incorporating the principles of increasing returns, complexity models can capture the exponential cost declines that wind and solar technologies have shown as production scales 55.
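A standard way to express such scale-driven cost declines is Wright's law, also called the experience curve. Under Wright's law, unit cost falls by a constant fraction (the learning rate) each time cumulative production doubles: C(x) = C0 · (x / x0)^(-b), with learning rate 1 - 2^(-b). The sketch below assumes a 20% learning rate purely for illustration; empirical learning rates differ by technology and period.

```python
import math

def wright_cost(cumulative, c0=1.0, x0=1.0, learning_rate=0.20):
    """Unit cost under Wright's law, normalized to cost c0 at cumulative output x0."""
    b = -math.log2(1.0 - learning_rate)  # experience exponent: 2**(-b) == 1 - learning_rate
    return c0 * (cumulative / x0) ** (-b)

for doublings in range(5):
    x = 2 ** doublings
    print(doublings, round(wright_cost(x), 3))
```

Cumulative production of a rapidly deployed technology grows roughly exponentially in time. Under that condition, a constant learning rate translates into costs that also fall roughly exponentially in time.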

Institutions such as the Institute for New Economic Thinking at the Oxford Martin School (INET Oxford), whose complexity economics programme is directed by Doyne Farmer, are actively developing macroeconomic super-simulators to guide the Net Zero transition 5456. These granular, agent-based models assist central banks and ministries of finance in forecasting the non-linear feedback loops of decarbonization. Examples include localized labor market frictions, stranded asset risks, and the optimal timing for deploying subsidies to trigger rapid technological lock-in 555758.

In the realm of antitrust and industrial regulation, complexity economics forces regulators to recognize that high market concentration in technology sectors is often an emergent property of positive feedback networks rather than the result of explicit rent-seeking 1719. Regulatory interventions must therefore be carefully timed. Early action can prevent the lock-in of inferior standards, while dismantling established monopolies may trigger unforeseen systemic disruptions across interconnected supply chains 19.

Ultimately, complexity economics provides the analytical scaffolding necessary to understand an economy that is organic, historical, and perpetually evolving. By abandoning the mathematical conveniences of perfect rationality and static equilibrium, the discipline has forged a more realistic framework for navigating the deep uncertainties of the twenty-first century.