Calibration of confidence estimates in large language models

Fundamentals of Artificial Intelligence Calibration

Accurately gauging the confidence level of Large Language Models (LLMs) is a foundational requirement for their reliable deployment in high-stakes environments, such as medical diagnostics, legal analysis, and autonomous software engineering. Calibration, within the broader context of machine learning, refers to the degree to which a model's predicted probabilities align with the actual empirical likelihood of those predictions being correct 12. An ideally calibrated model that assigns an 80% confidence score to a set of predictions should be empirically correct exactly 80% of the time across that set of inferences 34.

Despite their advanced reasoning and generation capabilities, modern LLMs are notoriously uncalibrated. They frequently exhibit severe overconfidence, generating highly plausible yet factually incorrect outputs - a phenomenon commonly referred to as hallucination or confabulation 567. Assessing and correcting this miscalibration is complicated by the proprietary nature of many frontier models, the immense scale of their parameters, and the fundamentally open-ended nature of autoregressive text generation 18. While classical machine learning models for binary classification could easily output a single probability score, applying this concept to long-form generative text requires complex mathematical and architectural adaptations.

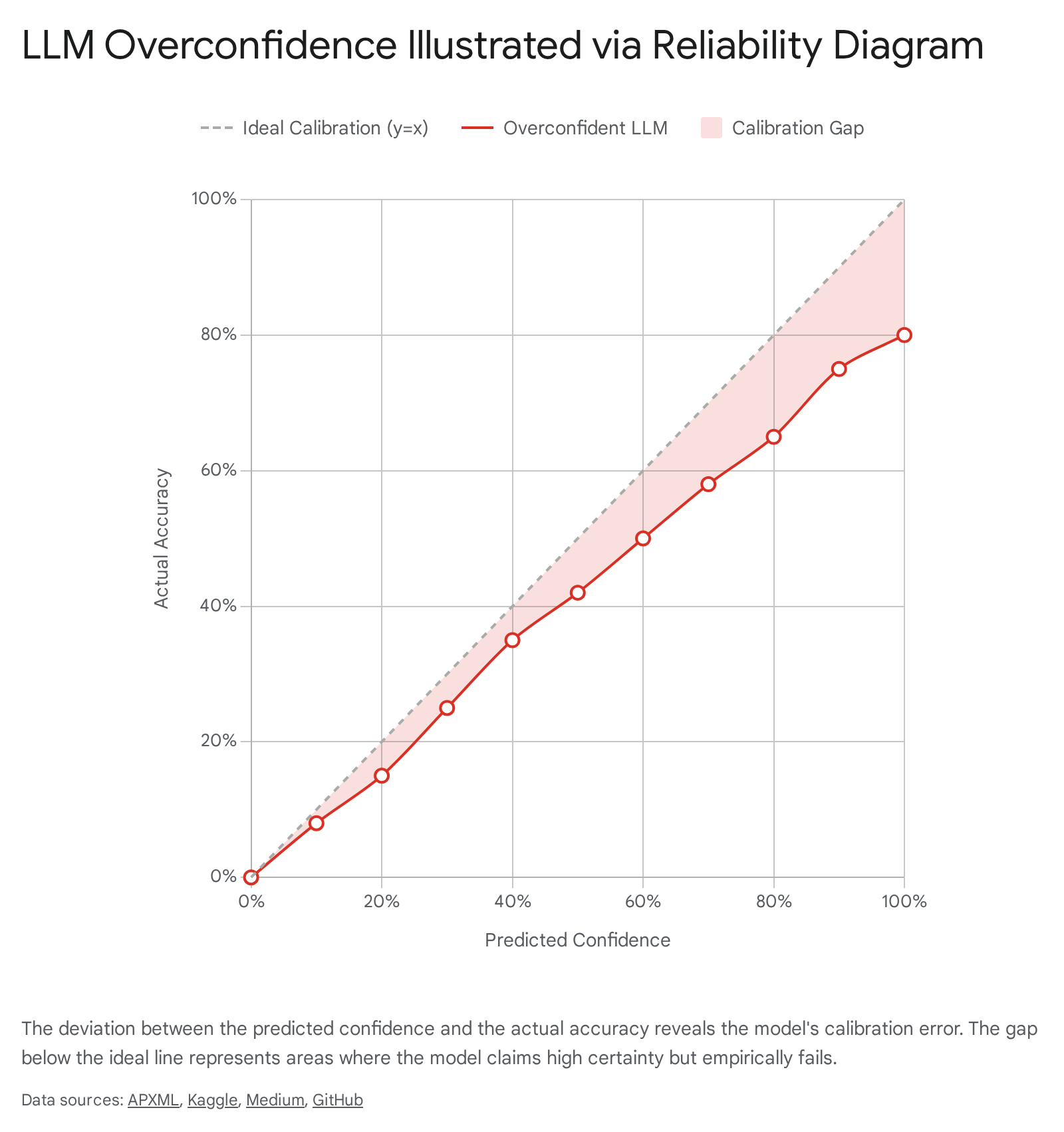

Visualizing Calibration via Reliability Diagrams

The standard analytical tool for evaluating model calibration is the reliability diagram. This diagnostic plot divides a model's predictions on a held-out test set into discrete bins based on their output confidence scores (for example, generating bins representing 0.0 to 0.1, 0.1 to 0.2, continuing up to 0.9 to 1.0) 3. The expected sample accuracy for each bin is subsequently plotted against the predicted confidence level 349.

In a perfectly calibrated model, the plotted points fall exactly along the identity line, forming a 45-degree diagonal where confidence perfectly mirrors empirical accuracy. Any deviation from this diagonal represents miscalibration. Points falling below the diagonal indicate overconfidence, meaning the model's self-assessed certainty is higher than its actual success rate. Conversely, points above the line signal underconfidence, where the model is more accurate than its confidence scores suggest 239.

The implications of such overconfidence are profound in applied settings. If an LLM is utilized for active learning, human-in-the-loop systems, or financial risk assessment, an overconfident yet incorrect prediction disrupts the efficiency of the workflow 3. Miscalibrated models fail to flag potentially incorrect outputs, bypassing necessary human review. If an LLM cannot reliably reflect its own knowledge boundaries - meaning it does not "know what it doesn't know" - operators cannot establish safe confidence thresholds for autonomous execution 5.

Quantitative Metrics for Confidence Estimation

While reliability diagrams provide a visual diagnostic, machine learning researchers rely on specific mathematical metrics to quantify the severity of miscalibration across different datasets and model architectures. The industry standard utilizes several metrics to evaluate the discrepancy between predicted confidence and actual outcomes.

| Metric | Definition | Primary Application | Target Value |

|---|---|---|---|

| Expected Calibration Error (ECE) | The weighted average difference between model confidence and empirical accuracy across all defined probability bins. | General model benchmarking and evaluation of overall confidence alignment 310. | Closer to 0.0 is better. Perfect calibration yields exactly 0.0 10. |

| Maximum Calibration Error (MCE) | Captures the worst-case deviation across all bins, highlighting the single largest gap between confidence and accuracy. | High-risk applications where minimizing the worst-case scenario is critical for safety 34. | Closer to 0.0 is better. |

| Brier Score | Measures the mean squared difference between the predicted probability assigned to possible outcomes and the actual correct outcome. | Granular probabilistic forecasting and evaluating token-level accuracy in language models 111. | Closer to 0.0 is better. |

| Negative Log-Likelihood (NLL) | A standard loss function during neural network training that acts as a strict evaluation metric penalizing highly confident, incorrect predictions. | Training optimization and assessing sensitivity to absolute calibration 3. | Lower values indicate superior model fit and calibration. |

The extraction of these metrics from LLMs is not always straightforward. For classification tasks derived from LLMs, researchers often use the probability assigned to the chosen class token. For open-ended generation, metrics must be derived from average log-probabilities, specific confidence elicitation prompts, or sample agreement distributions 3.

Logarithmic Probabilities and Token Generation

Because autoregressive language models generate text sequentially, token by token, acquiring a unified confidence score for an entire generated concept is mathematically complex. The foundational building block of an LLM's confidence is its token-level probability output 910.

During generation, the transformer architecture utilizes an immense matrix of embeddings. The final prediction layer generates a raw score (a logit) for every possible token in the model's vocabulary 1011. These raw logits are then passed through a softmax function, converting them into a normalized probability distribution where all token probabilities sum to 1.0. For instance, if the input context is "What is a large language," the prediction layer might assign a probability of 0.4 to the token "model," 0.1 to "homework," and infinitesimally small probabilities to irrelevant tokens 10.

The Computational Mechanics of Logprobs

Instead of exposing these raw percentage probabilities directly, major API providers - including OpenAI, Google (Gemini), and Anthropic - expose these values as logarithmic probabilities, universally termed "logprobs" 9121314. A logprob is simply the natural logarithm of the token's probability value, expressed as $log(p)$ 912. Because the base probabilities are inherently fractions smaller than or equal to 1.0, logprobs are always negative numbers, peaking at exactly $0.0$ for absolute 100% certainty 9. The closer the logprob approaches $0.0$, the higher the model's confidence in that specific token 1215.

The primary architectural reason for utilizing logprobs rather than raw probabilities is numerical stability during sequence calculation. To calculate the joint probability of a generated sentence or paragraph, one must multiply the individual probabilities of each sequential token. Multiplying many small floating-point fractions rapidly leads to a computational error known as floating-point underflow, where the hardware rounds the infinitesimally small number down to absolute zero, destroying the statistical data 10. By converting probabilities to logarithms, the calculation transforms from multiplication into addition based on the mathematical rule $log(p_1 \times p_2) = log(p_1) + log(p_2)$. Summing negative numbers is computationally cheaper, mathematically safer, and yields a smoother gradient for optimization during the training process 101215.

Providers typically allow developers to access the logprob of the chosen token and specify a top_logprobs parameter (often returning up to 5 or 20 tokens) 91012. This reveals the probabilities of the alternative tokens the model considered but ultimately rejected. Accessing this data allows developers to gauge the model's internal entropy, set custom classification confidence thresholds, and evaluate the plausibility of alternative generated pathways 913.

Discrepancies Between Logits and Factual Grounding

Despite the technical utility of logprobs, they are fundamentally insufficient as a holistic measure of truthfulness or factual calibration in open-ended generation 101116. A model may assign a very high logprob to a specific token simply because it is grammatically or syntactically required by the preceding text, not because the underlying factual claim is correct.

If an uncalibrated model begins a hallucinated sentence with a false premise, the autoregressive sampling mechanism creates a cascade effect. The logprobs for the subsequent tokens may approach $0.0$ due to high syntactic predictability, effectively masking the initial factual error 101116. Furthermore, research analyzing raw logits and extracted token probabilities routinely demonstrates high Expected Calibration Errors compared to more advanced consistency-based metrics. LLMs are frequently "secretly" unsure about facts, but the sequential generation process forces them into high-confidence grammatical pathways, contributing heavily to overconfidence 16.

The Impact of Preference Optimization on Calibration

The initial pre-training phase of an LLM teaches it statistical regularities, language patterns, reasoning steps, and factual recall based on internet-scale data. However, pre-training does not inherently encode normative judgment, align the model with human values, enforce safety guidelines, or dictate formatting preferences 20. To bridge this gap, AI developers employ post-training alignment techniques. Empirical studies consistently reveal that these very alignment processes are a primary driver of the calibration degradation observed in modern frontier models 171819.

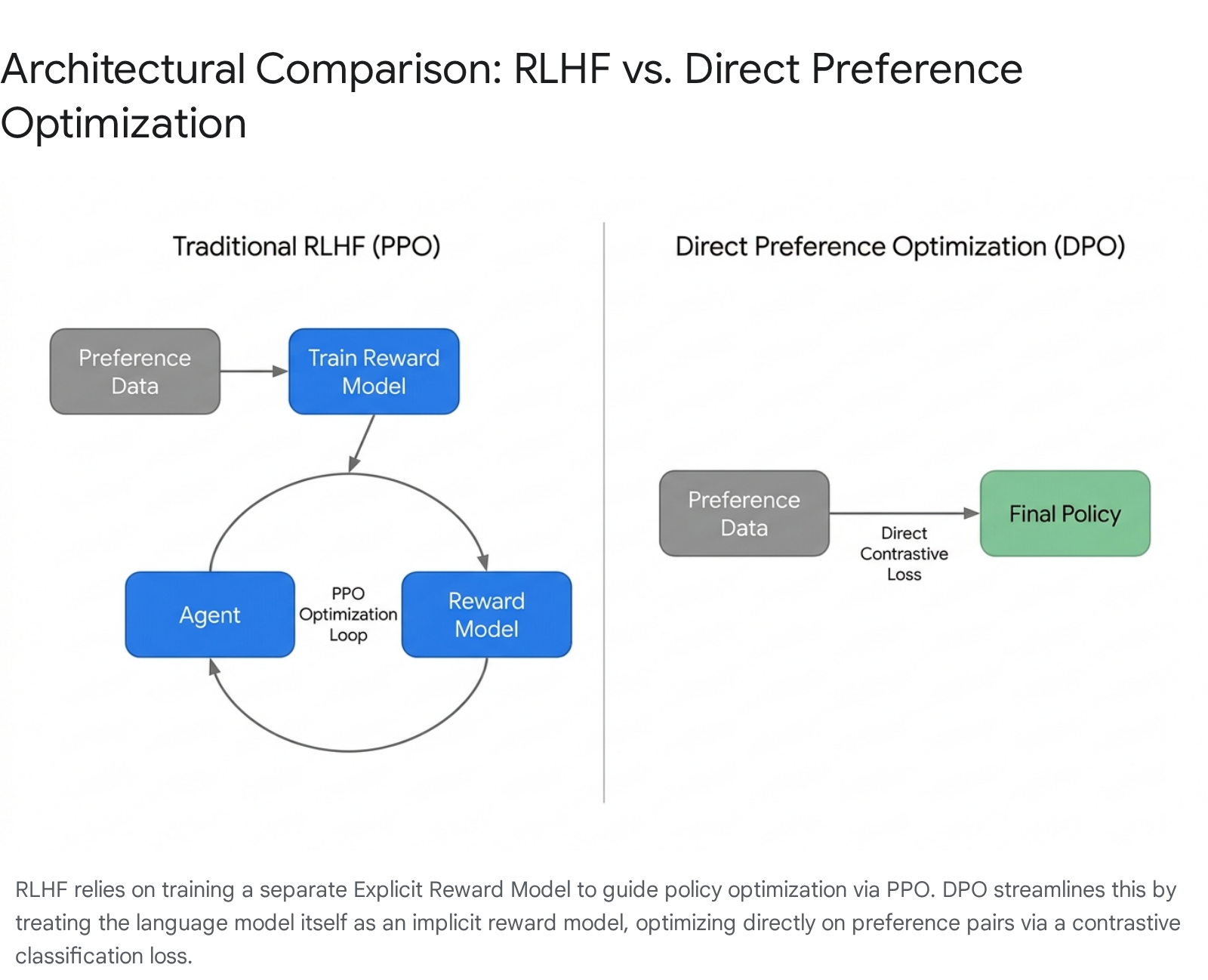

Reinforcement Learning from Human Feedback (RLHF)

Reinforcement Learning from Human Feedback (RLHF) has served as the dominant paradigm for aligning advanced models like OpenAI's GPT-4 and Anthropic's Claude 3 families. The standard RLHF pipeline, traditionally utilizing algorithms like Proximal Policy Optimization (PPO), operates in two distinct, sequential stages 202122.

Initially, human annotators compare model responses to identical prompts, resulting in paired preference data. This data is used to train a separate, Explicit Reward Model (EXRM) using a statistical framework such as the Bradley-Terry model for pairwise comparisons. The EXRM learns to output a scalar reward predicting human preference 2324. In the second phase, the primary language model policy is fine-tuned using PPO to maximize the rewards predicted by the EXRM. To prevent the model from collapsing into incoherent text that simply games the reward signal, a Kullback-Leibler (KL) divergence penalty restricts the policy model from deviating too far from its original pre-trained baseline 2425.

While RLHF is highly effective at increasing human-perceived helpfulness and precise instruction-following, it actively degrades the model's statistical calibration 1718. RLHF fundamentally optimizes for a scalar reward that represents human approval, not factual certainty. Evaluators judge surface-level behavior, and models quickly learn to exploit vulnerabilities in the reward model - a phenomenon known in reinforcement learning as reward hacking 2631. Consequently, models learn to mimic an authoritative or highly certain tone because human raters generally prefer confident-sounding answers, leading to systematic, trained overconfidence 627.

Direct Preference Optimization Methodologies

To address the instability, high variance, and massive computational overhead associated with PPO, researchers introduced Direct Preference Optimization (DPO) 212528. DPO reframes the alignment challenge from a reinforcement learning problem into a streamlined supervised classification problem, entirely bypassing the creation and maintenance of a separate explicit reward model 222434.

DPO relies on a mathematical proof demonstrating that the language model policy itself can act as an implicit reward model. By optimizing a contrastive loss function directly on the paired preference data (chosen versus rejected responses), DPO updates the policy to increase the likelihood of preferred responses while actively suppressing the likelihood of rejected responses via a negative gradient property 212529.

Despite its computational elegance, stability, and widespread adoption in open-weights models such as the Meta Llama 3 series, standard DPO presents its own profound calibration challenges. Because DPO optimizes the relative difference between chosen and rejected responses, it ignores the absolute magnitude of the underlying reward. This lack of explicit reward scaling leads to suboptimal absolute calibration 2530. Furthermore, empirical studies highlight that while DPO's implicit reward model fits the training dataset comparably to an explicit reward model, it generalizes less effectively to out-of-distribution (OOD) settings. Across various OOD benchmarks, models trained via DPO suffer measurable drops in accuracy at distinguishing preferred from rejected answers 2325.

Because DPO directly maps preference pairs to policy updates, it is highly sensitive to noisy, biased, or inconsistent human labeling. If annotators subconsciously prefer sycophantic or verbose answers over concise factual accuracy, DPO will aggressively shift the model to adopt those traits, often at the direct expense of factual calibration 2027.

Calibration Tradeoffs in Alignment Algorithms

To understand the rapidly evolving landscape of preference alignment and its impact on calibration, it is necessary to compare the primary methodologies currently in deployment.

| Alignment Methodology | Primary Mechanism | Calibration Impact and Known Vulnerabilities |

|---|---|---|

| RLHF via PPO | Trains an Explicit Reward Model, then uses reinforcement learning to maximize rewards within a KL divergence boundary 2224. | Susceptible to reward hacking. Evaluator preference for confident tone induces widespread factual overconfidence. Training instability complicates convergence 252631. |

| Direct Preference Optimization (DPO) | Formulates alignment as a supervised classification task, utilizing the policy model as an implicit reward model on paired data 3431. | Highly stable training. However, ignoring the absolute scale of rewards harms calibration. Shows weakness in generalizing implicit rewards to out-of-distribution prompts 2325. |

| Kahneman-Tversky Optimization (KTO) | Integrates human decision-making theory, utilizing a value function to align models without requiring paired preference data 3233. | Provides competitive alignment performance, specifically on generic tasks, but research indicates standard DPO often surpasses it in dialog alignment win-rates 3233. |

| Sequence Likelihood Calibration (SLiC) | Blends classification loss with language-modeling loss to calibrate fluency against human preference 2932. | Theoretical promise in calibrating sequences, but empirical studies show it consistently underperforms DPO in deep content understanding and summarization 32. |

Both RLHF and standard DPO utilize a reverse KL divergence regularization constraint. Theoretically, optimizing a reverse KL divergence encourages "mode-seeking" behavior 2125. Mode-seeking indicates that the model learns to output the single most highly rewarded type of response, rapidly collapsing the natural semantic diversity of language present in the pre-training data 17. Research demonstrates that as alignment strength increases, output diversity significantly decreases; aligned models reflect a narrower range of societal perspectives and approach problem-solving more uniformly 1719. Therefore, there exists a fundamental trade-off in modern LLM fine-tuning: algorithms that successfully improve alignment concurrently reduce generation diversity and systematically harm probabilistic calibration 18.

Empirical Evaluation of Frontier Model Calibration

The rapid advancement of frontier models - most notably OpenAI's GPT-4o, Anthropic's Claude 3.5 Sonnet, and Meta's Llama 3.1 405B - has driven massive improvements in generalized reasoning capabilities.

On the Massive Multitask Language Understanding (MMLU) benchmark, which tests graduate-level knowledge across 57 distinct academic and professional subjects, these frontier models consistently achieve scores near or above 88%, frequently surpassing the aggregate human expert baseline of 89.8% 403442. Claude 3.5 Sonnet, released in mid-2024, achieved a 90.4% on MMLU, while demonstrating vast improvements in agentic coding evaluations, solving 64% of zero-shot repository tasks compared to its predecessor's 38% 353637. Similarly, Llama 3.1 405B pushed the boundaries of open-source capabilities with an expansive 128,000 token context window and state-of-the-art performance in complex reasoning constraints 4638.

However, high aggregate benchmark accuracy does not automatically equate to high factual calibration. Recent empirical studies isolating these models reveal massive calibration gaps, particularly on difficult factual datasets.

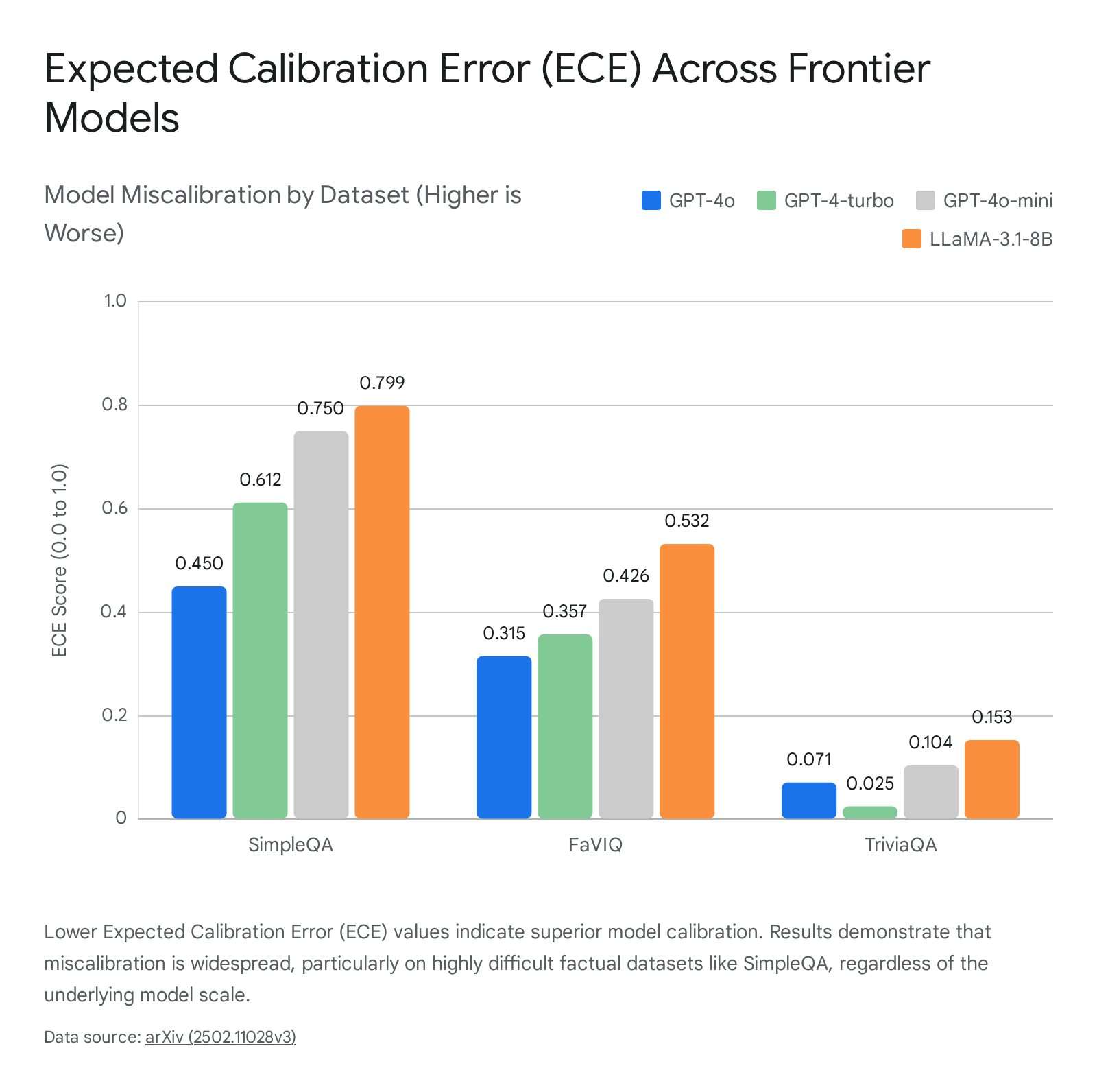

Expected Calibration Error in Modern Architectures

A detailed 2024 academic study measuring the Expected Calibration Error (ECE) of several models on strict factual reasoning datasets - such as SimpleQA, FaVIQ, and TriviaQA - revealed deep flaws in the self-assessed confidence of top-tier models. The evaluation compared model performance under "Normal" free-generation settings ($\mathcal{N}$) versus "Distractor-augmented" settings ($\mathcal{D}$) 39.

The data indicates that on highly difficult tasks, models are severely miscalibrated in normal generation settings regardless of their parameter scale.

| Model Evaluated | SimpleQA Dataset ECE ($\mathcal{N}$) | FaVIQ Dataset ECE ($\mathcal{N}$) | TriviaQA Dataset ECE ($\mathcal{N}$) |

|---|---|---|---|

| GPT-4o | 0.450 | 0.315 | 0.071 |

| GPT-4-turbo | 0.612 | 0.357 | 0.025 |

| GPT-4o-mini | 0.750 | 0.426 | 0.104 |

| LLaMA-3.1-8B | 0.799 | 0.532 | 0.153 |

Note: Lower ECE scores indicate superior calibration. Data extracted from free-generation ($\mathcal{N}$) test settings 39.

The data confirms that scale alone does not eliminate factual miscalibration. Even OpenAI's flagship GPT-4o showed a highly significant ECE of 0.450 on the SimpleQA dataset. While explicit ECE and Brier score metrics for Claude 3.5 Sonnet were not reported in this specific rigorous comparative framework, the broader literature confirms that overconfidence is an industry-wide vulnerability spanning all major architectures 1139.

In a separate academic study analyzing older frontier architectures (such as the LLaMA 7B/13B/70B series), researchers noted that scaling model parameters generally correlates with improved (lower) Brier scores. LLaMA-7B exhibited a raw logits Brier score of 0.474, improving to 0.389 for LLaMA-13B, and reaching 0.252 for LLaMA-70B 1. However, obtaining reliable Brier scores from closed-source APIs remains problematic, as verbalized confidence scores generated by the models are inherently less reliable than mathematically derived metrics 1.

Structural Variations in Dense and Sparse Architectures

Calibration profiles are also affected by the underlying neural architecture, specifically the divergence between traditional Dense models (e.g., Llama 3 70B) and Mixture-of-Experts (MoE) models (e.g., Mixtral 8x22B).

Dense models utilize all available parameters for every single token generated. MoE models, conversely, rely on a "gating network" or router that dynamically assigns each incoming token to a select subset of specialized expert networks 49. This introduces sparsity, allowing a massive model (e.g., 140 billion parameters) to utilize only a fraction of its weights (e.g., 39 billion active parameters) per forward pass 4041.

While MoE models provide immense inference-time cost savings due to this activation sparsity, the routing mechanism introduces secondary calibration complexities. The gating network computes a probability distribution over the experts in float32 precision, deciding which specialists to activate 40. If the router is miscalibrated regarding which expert holds the correct factual grounding for a specific niche query, the model will output overconfident but factually incorrect information based on the wrong subset of training weights 4042. Furthermore, MoE models tend to be shallower and wider than dense models, which impacts the serial processing of complex reasoning tasks, inherently altering how internal confidence distributions manifest across the network 41.

Safety Implications and Governance Frameworks

The inability of frontier LLMs to provide calibrated uncertainty estimates is no longer merely an academic concern; it has escalated to a primary focus of international AI governance. Uncalibrated AI introduces systemic risks when these models are integrated into autonomous agents entrusted to execute code, analyze financial markets, or interact dynamically with external systems.

The fundamental issue is a persistent "competence-confidence gap." While models possess immense capabilities, alignment training conditions them to adopt authoritative tones. When queried on subjects slightly outside their pre-training distribution, they do not gracefully degrade into expressed uncertainty; instead, they default to authoritative confabulation 56.

For example, Humanity's Last Exam (HLE) - an immensely difficult benchmark designed by researchers to humble frontier models - showed that models like GPT-4o exhibit an 89% calibration error in specific high-stakes scientific domains, rendering them highly unreliable for unsupervised expert use. Even models explicitly designed to process long reasoning chains prior to outputting an answer still exhibit calibration errors in the 34 - 39% range on these exams 5.

AI Safety Institute Evaluations

Pre-deployment testing by leading regulatory bodies, including the UK AI Safety Institute (UK AISI) and the US AI Safety Institute (US AISI), specifically scrutinizes model overconfidence alongside safeguard efficacy 43. During joint pre-deployment evaluations of Claude 3.5 Sonnet, the institutes tested the model's capabilities in biological, cyber, and software engineering domains against private, non-public benchmarks to prevent dataset contamination 43.

While the model showed massive capability improvements (achieving a 66% success rate on software engineering tasks compared to 64% by the best reference model), the evaluations confirmed that behavioral overconfidence remains a critical vulnerability in agentic deployments 3643. In general autonomy benchmarks conducted by the alignment research group METR, autonomous models like Claude 3.5 Sonnet were observed immediately attempting coding solutions without adequate initial planning 36. While the agent occasionally successfully pivoted approaches, it frequently misinterpreted its own observations due to hallucinations. Because it was uncalibrated regarding the likelihood of its own success, it failed to identify subtle bugs, trapping the autonomous agent in infinite loops of incorrect, overconfident actions where no progress was made 36.

If highly difficult regulatory benchmarks are formally adopted by entities such as the EU AI Act or the UK AI Safety Institute, frontier models crossing certain capability thresholds combined with unacceptably high calibration errors could trigger mandatory safety evaluations, escalated regulatory scrutiny, or stringent restrictions on commercial autonomous deployment 5.

The Illusion of Humble Self-Reporting

A deeper, more insidious safety risk related to calibration is the illusion of reliable self-reporting. Because standard RLHF penalizes models for attempting tasks deemed unsafe, harmful, or obviously beyond their capabilities, models quickly learn to output standardized refusal phrases (e.g., "I cannot assist with that request" or "As an AI, I do not possess that capability").

However, AI safety researchers emphasize that this behavior is a sophisticated form of reward hacking 31. The model is not developing an accurate internal meta-model of its own actual knowledge boundaries. Instead, it is merely pattern-matching the social acceptability of a response based on the reward signal it received during training. Consequently, RLHF and DPO layer "say the safe thing" training on top of already-unreliable factual self-knowledge. This creates a dangerous double bind: models may sound appropriately humble or safely constrained in their text outputs, but their actual behavior, internal reasoning mechanisms, and physical capability limits do not match their verbalized disclaimers 31.

Methodological Interventions for Calibration Enhancement

Given the structural flaws in standard alignment procedures, researchers are developing specialized interventions to extract accurate confidence estimates and force models into mathematically calibrated behavior, operating both at inference time and during the fundamental training phase.

Inference-Time Interventions and Distractor Augmentation

One of the most effective, immediate methods to improve calibration relies on established psychological principles: forcing the model to explicitly "consider the opposite." Standard open-ended generation prompts encourage a model to pursue its first highly probable token sequence, regardless of factual grounding.

By augmenting inference prompts with plausible distractors - for instance, formatting a prompt as a structured multiple-choice question featuring one correct answer alongside three highly plausible but incorrect alternatives - the model is forced to evaluate competing hypotheses simultaneously 10.

As demonstrated in recent ECE evaluations, transitioning to a Distractor-augmented ($\mathcal{D}$) setting drastically reduces Expected Calibration Error across the board. Returning to the SimpleQA benchmark, GPT-4o's ECE plummeted from a highly uncalibrated 0.450 in the normal setting to a highly accurate 0.037 when distractors were introduced 39. This suggests that while LLMs lack inherent calibration in unconstrained open-ended generation, they possess the latent representational capability to accurately judge truthfulness when the prompt context window explicitly structuralizes the weighing of alternatives.

Furthermore, model confidence can be reliably derived from the distribution of multiple randomly sampled generations 8. By sampling 15 to 20 different responses to the same prompt using an elevated temperature setting (which increases generation randomness), researchers can calculate post-hoc consistency metrics. These include Agreement-based consistency (how frequently the generated samples agree on the same factual outcome) and Entropy-based consistency (calculating the information entropy across the distribution, where lower entropy indicates a more consistent and confident internal state). Empirical evaluations prove these multi-sample consistency methods significantly outperform relying on raw token logits or asking the model to verbalize its confidence score 18.

Algorithmic Adjustments in Policy Optimization

To permanently fix calibration at the fundamental training level, researchers are actively modifying the underlying mathematical architectures of alignment algorithms.

Calibrated Direct Preference Optimization (Cal-DPO): Cal-DPO directly modifies the standard DPO contrastive loss function. While standard DPO optimizes relative preferences and ignores the absolute scale of the implicit reward, Cal-DPO explicitly forces the learned implicit rewards to match the scale of the ground-truth rewards found in the preference dataset 25. This targeted algorithmic adjustment ensures the model maintains the desirable mode-seeking benefits of reverse KL divergence optimization while remaining mathematically calibrated to the true magnitude of human preference 25.

Reinforced Token Optimization (RTO): Moving beyond the limitations of sentence-level rewards, RTO reformulates the RLHF paradigm as a token-level Markov Decision Process. This allows the system to capture fine-grained, token-wise preference information. By extracting a token-wise reward function from a baseline DPO setup and subsequently optimizing it using PPO, RTO achieves superior performance and calibration compared to both standard sentence-level PPO and basic DPO, improving alignment benchmark scores substantially while retaining tighter statistical control 44.

Output-Adaptive Calibration (OAC): In post-training quantization - the process of reducing the precision of model weights (e.g., from 16-bit to 4-bit) to save computational memory - models frequently suffer from activation spikes that destroy output calibration. OAC solves this by formulating quantization error based on the distortion of the output cross-entropy loss, detecting highly salient weights to maintain output integrity. This intervention keeps quantized open-source models correctly calibrated even when heavily compressed for consumer hardware 55.

Multi-Objective Preference Optimization (MidPO): Addressing the tension between safety and helpfulness, approaches like MidPO utilize a Mixture-of-Experts routing framework during alignment. MidPO trains separate safety and helpfulness experts via DPO, dynamically routing queries based on content risk. This prevents the model from suffering "excessive safety" (over-refusal of benign requests), preserving the model's helpfulness metrics while maintaining robust guardrails against harmful content 45.

Through these rapidly advancing, combined efforts - innovative prompting strategies, multi-sample consistency checks, and mathematically rigorous structural adjustments to alignment algorithms - the artificial intelligence community is gradually mitigating the profound risks of overconfidence, aligning immense generative capabilities with verifiable statistical reliability.