B2B content marketing moats and case studies

The Paradigm Shift in B2B Defensibility

For the better part of two decades, the foundational playbook for Business-to-Business (B2B) growth was predicated on a straightforward, implicit contract: search engines provided the audience, and brands provided the content in exchange for a click. This transactional model birthed the "volume moat" - a defensive business strategy wherein the sheer scale of Search Engine Optimization (SEO) content, top-of-funnel keyword dominance, and aggressive backlink acquisition theoretically insulated market leaders from emerging competitors. Organizations constructed massive digital footprints, assuming that capturing every conceivable long-tail search query would perpetually yield predictable pipeline generation.

By the midpoint of the 2020s, that foundational contract has been definitively nullified. The rapid maturation and integration of Large Language Models (LLMs) and generative artificial intelligence into search interfaces have triggered what industry analysts term "The Great Decoupling." This phenomenon describes a digital environment where search engine usage continues to rise unabated, yet outbound clicks to external websites are declining precipitously 1. Current market data indicates that upwards of 60% of Google searches in the United States now end without a single click to an external website, representing a fundamental collapse of the legacy mechanics of content defensibility 23. The search engine has transitioned from a decentralized directory of external links into a centralized decision engine that synthesizes proprietary data and presents it natively to the user 3.

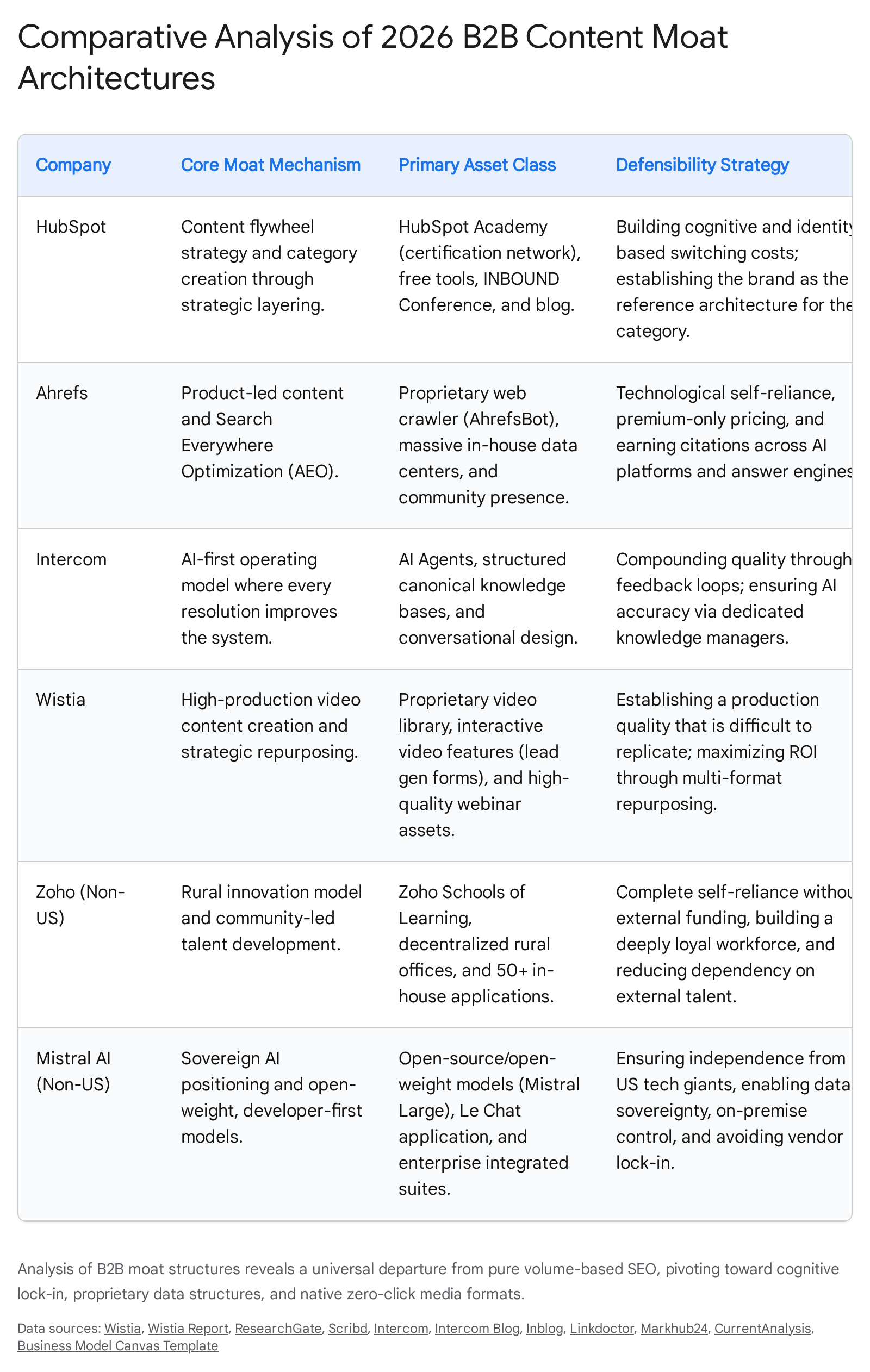

This analysis details the ongoing shift in B2B content moats. Moving away from the obsolete reliance on SEO-driven volume, the most resilient organizations are architecting new defensive structures based on proprietary data integration, community-led cognitive lock-in, and expert point-of-view content engineered for native, zero-click environments. By examining the current operational adaptations of industry vanguards - including HubSpot, Ahrefs, Intercom, Drift, and Wistia, alongside non-US leaders like Zoho and Mistral AI - this report deconstructs how modern moats are built, maintained, and leveraged for durable market dominance. It also addresses the pervasive misconceptions surrounding content volume, exploring the structural limitations of content debt, algorithmic volatility, and the emerging consensus that brand trust, rather than algorithmic manipulation, serves as the ultimate gatekeeper in the AI-mediated buyer journey.

The Fallacy of the Volume Moat and Top-of-Funnel Dominance

The most pervasive strategic error in contemporary B2B marketing is the assumption that sheer content volume or top-of-funnel keyword dominance automatically constitutes a durable business moat. During the 2010s, this heuristic was partially valid; brands could construct "digital real estate" by mass-producing generic content to capture disparate search intents. However, the search landscape has structurally evolved, and the algorithms powering discovery have been rewritten to explicitly penalize this approach.

Crawl Budget Exhaustion and Domain Degradation

Far from acting as a defensive barrier, vast repositories of generic, top-of-funnel content now act as a severe operational anchor. The modern search algorithm evaluates site-wide quality signals rather than isolating page-level metrics. A high percentage of unhelpful, redundant, or purely SEO-driven content drags down the performance of the entire domain, regardless of the quality of the organization's flagship assets 46. This dynamic is further exacerbated by the concept of "crawl budget." LLM crawlers and search bots possess finite resource allocations for indexing any given site. Bloated domains force these crawlers to expend their resources on low-value pages, frequently leaving high-value, conversion-oriented pages unindexed or deprioritized 47.

The severity of this vulnerability is best illustrated by the aggressive "content pruning" strategies increasingly adopted by major B2B organizations and digital marketplaces. In a prominent 2025 case study analyzing a major digital brand, the organization systematically deleted and de-indexed 600,000 underperforming pages - defined strictly as URLs receiving zero clicks over a 12-month period 4. The results were counterintuitive to the volume-moat thesis: despite reducing the site's total page count by 40%, the domain experienced an explosive 34% increase in overall organic traffic within six months, and the total number of keywords ranking in the top three positions doubled 46. By removing digital deadwood, the organization optimized its crawl budget, reduced keyword cannibalization, and dramatically elevated its domain-wide quality score in the eyes of the search algorithm 67.
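
The pruning rule described above (URLs with zero clicks over a 12-month period) can be sketched as a simple filter over a search-performance export. This is a minimal illustration, not the case study's actual tooling, which the source does not describe. The `PageStats` record, its field names, and the sample URLs are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PageStats:
    """Hypothetical per-URL record from a search-performance export."""
    url: str
    clicks_12mo: int  # total organic clicks over the trailing 12 months


def split_prune_candidates(pages):
    """Separate pages into (keep, prune) using the zero-click rule."""
    keep = [p for p in pages if p.clicks_12mo > 0]
    prune = [p for p in pages if p.clicks_12mo == 0]
    return keep, prune


def prune_share(pages):
    """Fraction of the site that the zero-click rule would remove."""
    _, prune = split_prune_candidates(pages)
    return len(prune) / len(pages) if pages else 0.0


# Illustrative data only; not taken from the case study.
sample = [
    PageStats("/pricing", 1200),
    PageStats("/blog/what-is-crm", 0),
    PageStats("/blog/crm-tips-2017", 0),
    PageStats("/guides/data-report", 340),
    PageStats("/docs/api", 85),
]
keep, prune = split_prune_candidates(sample)
print([p.url for p in prune])
print(f"{prune_share(sample):.0%} of pages flagged")
```

As a sanity check on the reported figures: if the 600,000 deleted pages and the 40% reduction describe the same site, the starting inventory would have been about 1.5 million URLs (600,000 / 0.40).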

Algorithmic Volatility and The Great Decoupling

The risks associated with volume-based strategies were further laid bare during the unprecedented volatility of the 2024 and 2025 core algorithm updates. These updates were explicitly engineered to dismantle networks reliant on AI-generated content spam and black-hat SEO tactics, favoring content demonstrably authored by humans for human utility 8. Even established, high-quality B2B domains experienced catastrophic traffic losses if their architectures were overly reliant on top-of-funnel search volume.

The most discussed casualty of this algorithmic shift was HubSpot, long considered the architectural pioneer of inbound content volume. Between 2024 and early 2025, HubSpot experienced a reported 70% to 80% decline in traditional organic traffic, while external analytics tools estimated a fall from approximately 13.5 million monthly visits to roughly 6 million within a matter of months 1. The two figures are not interchangeable: the visit estimates alone correspond to a drop of roughly 56%, so the 70% to 80% range should be read as a separately reported figure rather than a restatement of those estimates. While HubSpot successfully pivoted its broader business strategy to compensate, the scale of this organic traffic decline sent shockwaves through the B2B sector, signaling the definitive end of the volume era 1. The metric of success has fundamentally shifted away from raw traffic acquisition toward influence, data synthesis, and conversion efficiency.

| Metric Paradigm | Traditional Volume Moat (2010s) | Modern Zero-Click Moat (2026) |
|---|---|---|
| Primary KPI | Click-Through Rate (CTR), Total Organic Sessions | Save Rate, Profile Visits, Share of Voice (SoV) in LLM Outputs |
| Asset Strategy | Thousands of SEO-optimized blog posts targeting long-tail queries | Dense, proprietary data reports, native PDFs, community hubs |
| Search Engine Role | Traffic router providing outbound links to domain | Decision engine providing synthesized answers natively |
| Content Quality Bar | "Good enough" to rank above competitors on page one | Expert Point-of-View (POV) requiring deep subject matter expertise |
| Structural Goal | Algorithmic dominance via backlink acquisition | Brand trust and cognitive lock-in via community integration |
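
The table names Share of Voice (SoV) in LLM outputs as a KPI without defining it. One plausible way to operationalize it, offered here only as an assumption, is the fraction of sampled AI answers that mention a brand at least once. The brand names and answers below are hypothetical, and a real measurement would need a sampling method that the source does not specify.

```python
import re
from collections import Counter


def share_of_voice(answers, brands):
    """Return {brand: fraction of answers that mention it at least once}.

    Matching is a case-insensitive whole-word search. Alias handling
    (product names, abbreviations) is deliberately left out of this sketch.
    """
    counts = Counter()
    for text in answers:
        for brand in brands:
            if re.search(rf"\b{re.escape(brand)}\b", text, flags=re.IGNORECASE):
                counts[brand] += 1
    total = len(answers)
    return {b: (counts[b] / total if total else 0.0) for b in brands}


# Hypothetical answers collected by hand from an AI assistant.
sampled_answers = [
    "For small teams, AcmeCRM and ExampleDesk are common picks.",
    "ExampleDesk is often recommended for support-heavy workflows.",
    "Many buyers compare acmecrm with in-house spreadsheets.",
    "Consider your budget before choosing any vendor.",
]
sov = share_of_voice(sampled_answers, ["AcmeCRM", "ExampleDesk"])
print(sov)
```

Counting each answer once per brand, rather than counting every mention, keeps a single verbose answer from dominating the metric.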

Structural Limitations and Competing Views on Defensibility

While the transition toward AI-integrated content strategies is an operational imperative, treating content as a self-sustaining moat ignores profound structural vulnerabilities. The generative era has not eliminated the friction of growth; it has merely relocated it, primarily into the realms of maintenance economics and brand trust.

The Financial Burden of Content Debt

Treating content as a volume-based moat entirely ignores the compounding reality of maintenance costs. Content is not a static capital asset; it is highly subject to decay. Information that is authoritative today frequently becomes factually inaccurate or strategically obsolete within a three-year horizon 6. Maintaining a massive library of content - especially content that serves as the training data for customer-facing AI agents - requires a colossal, continuous expenditure of financial and human resources.

According to Gartner's 2025 analysis, the financial realities of B2B marketing are becoming increasingly constrained. While 91% of B2B marketers report collecting first-party data, roughly half admit their strategies remain in exploratory stages, heavily restricted by a lack of resources for data collection, structuring, and ongoing maintenance 5. This occurs against a backdrop of macroeconomic pressure; B2B marketing budgets have flatlined at an average of 7.7% to 7.8% of overall company revenue through 2025 and 2026, with 59% of Chief Marketing Officers (CMOs) explicitly reporting insufficient budgets to execute their mandates 67.

Furthermore, the technological infrastructure supporting generative AI is experiencing exponential cost inflation. Gartner reports that while current foundational models cost over $100 million to train, next-generation models deployed by 2026 - 2027 are projected to cost up to $10 billion per model 8. These costs are inevitably passed down to enterprise software buyers, leading to a 14.2% projected increase in software spending 8. The cost of updating, verifying, and structurally optimizing tens of thousands of web pages to meet the new criteria of Generative Engine Optimization (GEO) while simultaneously absorbing rising software costs is financially untenable for organizations attempting to maintain a legacy volume moat. Consequently, Forrester notes a distinct trend in 2025 of organizations pulling content creation and digital strategy back in-house, shifting away from external agency dependencies to regain control over costs and intellectual property 9.

Brand Affinity vs. Algorithmic Dominance

Within the strategic marketing community, a fierce debate continues regarding what actually constitutes defensibility in the generative landscape. One faction advocates for total algorithmic dominance - the belief that mastering structured data, schema markup, and API-driven distribution is the ultimate key to visibility in AI overviews.

Conversely, an increasingly validated competing view posits that brand affinity and human trust completely override algorithmic perfection. As organic growth specialist Kevin Indig details through extensive 2025 usability studies, "Trust is the gatekeeper. Brand familiarity is a precondition for relevance" 10. In high-stakes B2B purchasing decisions - often categorized as Your Money or Your Life (YMYL) queries - buyers do not blindly accept the synthesized outputs of an AI Overview. They exhibit deep-scroll behavior, actively bypassing the AI's answer approximately 80% of the time to validate the information through recognized, human-authored, branded content 10.

Indig's behavioral research reveals a fundamental sequencing in the modern buyer's mind: users first ask, "Do I trust this brand?" before they ask, "Does this answer my query?" 10. If a highly optimized piece of content from an unknown entity appears in an AI summary, it is frequently disregarded in favor of a recognized market leader positioned lower on the page. Therefore, the ultimate limitation of a purely algorithmic content strategy is human skepticism. This is corroborated by Gartner's projection that, by 2030, 75% of B2B buyers will actively prefer sales experiences that prioritize authentic human interaction over AI, pushing back against the "uncanny valley" effect generated by over-automated digital ecosystems 11. Defensibility, therefore, requires a brand presence that supersedes the algorithm entirely.

The Split Architecture and Answer Engine Optimization (AEO)

To navigate the conflicting demands of machine-readability and human persuasion within the zero-click economy, elite B2B marketers have adopted a "split architecture" strategy. As search engines deploy AI Overviews across nearly 50% of all search queries, the very content that builds a brand's authority in the eyes of an LLM is the exact content that satisfies the user's query and prevents a click-through 3.

To thrive, organizations must decouple their digital real estate into two distinct functions 3:

1. The Authority Layer (The Teacher): This layer serves as proprietary "AI-bait." It consists of purely educational, dense content structured specifically for machine readability, utilizing JSON-LD schema, clear semantic headers, and high factual density. It is entirely devoid of aggressive sales pressure or pop-ups. Its primary function is not to capture traffic, but to win the AI citation and establish "invisible influence," effectively turning the brand into the foundational training data for the LLM 3.

2. The Conversion Core (The Seller): This layer comprises product pages, pricing tables, and interactive demonstrations. These assets remain remarkably lean, fast, and aggressively optimized for human psychology and high-intent conversion, stripped of the verbose educational text that previously bloated them for SEO purposes 3.
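
The Authority Layer description mentions JSON-LD schema without showing what it looks like. The sketch below builds schema.org `FAQPage` markup from question-and-answer pairs. The vocabulary (`FAQPage`, `Question`, `Answer`, `mainEntity`, `acceptedAnswer`) is standard schema.org, but the function names and sample content are hypothetical, and the source does not establish whether any particular answer engine consumes this markup.

```python
import json


def faq_jsonld(qa_pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }


def as_script_tag(data):
    """Serialize the object for embedding in an HTML page."""
    body = json.dumps(data, indent=2, ensure_ascii=False)
    # Escape "<" so answer text can never close the script element early;
    # \u003c is a valid JSON escape and decodes back to "<".
    body = body.replace("<", "\\u003c")
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Hypothetical content for illustration.
doc = faq_jsonld([
    ("What is a zero-click search?",
     "A search that ends without a click to an external website."),
])
print(as_script_tag(doc))
```

Keeping the markup generation separate from the page template fits the split described above: the same structured facts can be emitted on authority-layer pages without touching the conversion pages.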

This architectural split supports the broader strategy of Answer Engine Optimization (AEO). Search is no longer a single destination; it is a feature embedded across ChatGPT, Perplexity, Gemini, YouTube, Reddit, and private communities 16. The zero-click search rate reaches 93% in Google's full AI Mode, but user engagement within that mode is deep, averaging 49 seconds overall and up to 77 seconds for complex B2B brand comparisons 2. Presence within these AI-generated summaries is exposure time, functioning more akin to digital public relations and brand building than traditional direct-response marketing 210.

Furthermore, B2B content agency Animalz provides a framework for analyzing the depth of this integration, termed the "AI Onion" 12:

- Layer 1: Workflows. The outermost layer, involving basic AI drafting and summarization tools. This offers zero defensibility, as any competitor can replicate an API call to a foundational model 12.

- Layer 2: Data Infrastructure. The structuring of proprietary first-party data so it can be effectively queried and synthesized by internal and external LLMs.

- Layer 3: Feedback Loops. The deepest layer of the moat. Each time the system resolves a query or interacts with a user, the proprietary model learns. Competitors cannot copy the systemic, compounding knowledge accumulated by running a proprietary feedback loop over sustained periods 12.

Transitioning to Compounding Growth Loops

The shift from linear content funnels to compounding growth loops represents the most critical operational evolution in modern B2B acquisition. The traditional marketing funnel - wherein content volume is poured into the top, and qualified leads drip out the bottom - is mathematically exhausted. It is inherently linear and requires an endless, increasingly expensive injection of capital and effort to sustain output 1319.

Growth strategy frameworks, notably those developed by Reforge, correctly identify that sustainable, high-velocity growth requires self-reinforcing systems, or "loops," where the output of one cycle automatically becomes the input for the next 1314. In the context of 2026 B2B content marketing, these loops have been entirely rewired by artificial intelligence and proprietary data.

A classical content loop occurs when content is generated from product usage, distributed, and attracts more users who subsequently interact with the product, generating further content 15. Generative AI supercharges the velocity and reduces the friction of this loop, but it simultaneously demands a drastically higher standard of proprietary input to function effectively. If a company feeds an AI loop with generic data, it produces generic content that fails to rank or resonate.
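
The contrast between a linear funnel and a compounding loop comes down to simple arithmetic, which a toy model makes concrete. In this sketch the funnel adds a fixed number of users per period, while the loop adds users in proportion to the existing base. Every parameter (seed size, loop rate, users per period) is a hypothetical value chosen for illustration, not a figure from Reforge or any company discussed here.

```python
def linear_funnel(periods, new_users_per_period):
    """Cumulative users when each period adds a fixed number of users."""
    return [new_users_per_period * t for t in range(1, periods + 1)]


def growth_loop(periods, seed_users, loop_rate):
    """Cumulative users when each period adds loop_rate * current users."""
    users = float(seed_users)
    history = []
    for _ in range(periods):
        users += users * loop_rate  # this cycle's output feeds the next cycle
        history.append(users)
    return history


def first_crossover(funnel, loop):
    """First period (1-indexed) in which the loop exceeds the funnel, else None."""
    for period, (f, l) in enumerate(zip(funnel, loop), start=1):
        if l > f:
            return period
    return None


# Hypothetical scenario: a small seed with a strong loop versus steady paid acquisition.
funnel = linear_funnel(periods=24, new_users_per_period=100)
loop = growth_loop(periods=24, seed_users=20, loop_rate=0.4)
print(first_crossover(funnel, loop))
```

In this configuration the loop starts far behind and overtakes the funnel in period 13. The point of the model is the shape of the curves rather than the specific numbers: a loop with a low rate, or one fed with generic inputs that fail to convert, may never cross over within the planning horizon.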

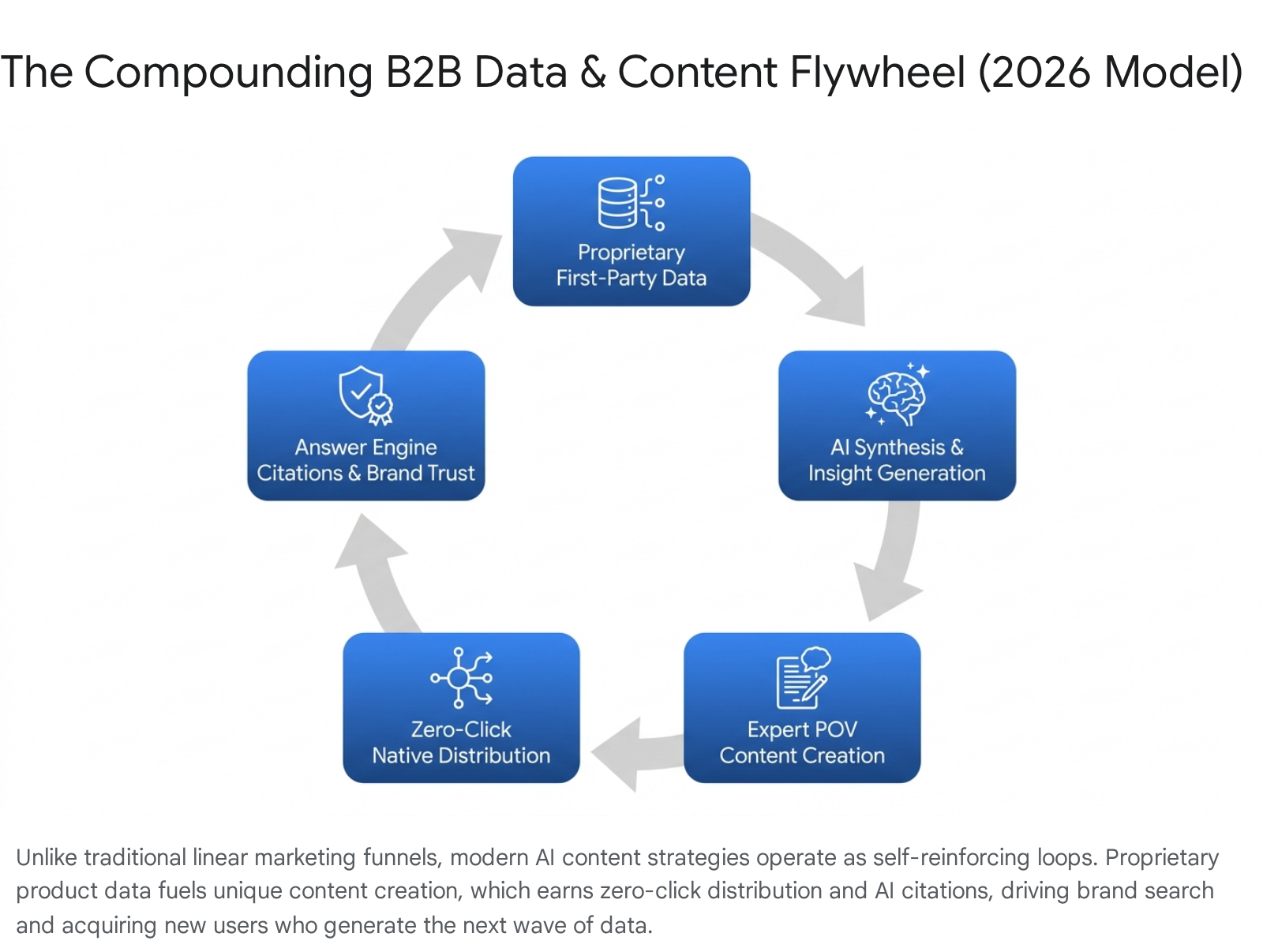

The modern B2B Data Flywheel operates sequentially:

1. A platform captures proprietary, first-party data through deep user interaction (e.g., search queries, support tickets, sales transcripts) 2223.

2. Internal AI systems synthesize this unstructured data to identify precise, documented customer pain points, completely bypassing flawed, assumption-based keyword research 23.

3. Subject matter experts then create high-density, original content (Tier 2 Thought Leadership) based strictly on this proprietary data 12.

4. This content is distributed via zero-click native formats (e.g., LinkedIn documents, Reddit threads) that maximize in-platform engagement without requiring outbound clicks 32425.

5. High engagement signals deep trust to Answer Engines, resulting in authoritative citations.

6. This drives high-intent brand search, acquiring new users who generate the next wave of proprietary data 3.
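
The synthesis step of the flywheel, turning unstructured interaction data into ranked pain points, can be illustrated with a deliberately simple stand-in. The source describes AI systems doing this work; the sketch below substitutes a plain keyword tally so that the data flow is visible without any model dependency. The topic lexicon and the sample tickets are hypothetical.

```python
import re
from collections import Counter

# Hypothetical topic lexicon; a real system would likely learn topics
# from the data rather than hard-code them.
TOPIC_KEYWORDS = {
    "billing": {"invoice", "refund", "charge"},
    "integrations": {"api", "webhook", "sync"},
    "onboarding": {"setup", "import", "migrate"},
}


def tag_topics(text):
    """Return the set of topics whose keywords appear in the text."""
    words = set(re.findall(r"[a-z]+", text.lower()))
    return {topic for topic, kws in TOPIC_KEYWORDS.items() if words & kws}


def rank_pain_points(tickets):
    """Count tickets per topic, most frequent first (ties broken by name)."""
    counts = Counter()
    for ticket in tickets:
        counts.update(tag_topics(ticket))  # each ticket counts once per topic
    return sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))


# Illustrative tickets only.
tickets = [
    "Webhook sync fails after the API update",
    "Need a refund for a duplicate charge",
    "API returns 500 during import",
    "How do I migrate contacts?",
    "Sync is delayed by hours",
]
print(rank_pain_points(tickets))
```

The ranked output is what would feed the subject matter experts in the next step: topics chosen from observed customer friction rather than from keyword-volume estimates.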

Practitioner Teardowns: Evolving the Case Studies (2023 - 2026)

To understand how the theories of split architecture, proprietary data loops, and community defensibility manifest operationally, it is necessary to analyze the ongoing adaptations of the industry's vanguard. These organizations have successfully pivoted away from the foundational models that defined their 2010s growth, engineering new defensibility mechanisms explicitly tailored for the generative era.

1. HubSpot: Cognitive Switching Costs and Community Lock-In

HubSpot essentially authored the inbound marketing playbook, utilizing massive blogging volume to capture top-of-funnel demand. However, recognizing the fragility of the volume moat long before its reported 2025 organic traffic collapse, HubSpot decisively pivoted to a strategy rooted in cognitive lock-in and community infrastructure 116.

The modern HubSpot moat is architected across interlocking pillars that transcend traditional software lock-in 16:

- HubSpot Academy: By creating the definitive global certification infrastructure for inbound marketing, sales, and service, HubSpot institutionalized its proprietary methodology. When a prospective B2B buyer's first structured professional education is delivered via HubSpot, the brand becomes the baseline reference architecture against which all competing software solutions are subsequently evaluated.

- INBOUND Conference: This annual mega-event transforms software users into an active community, creating deep professional identity ties to the brand that are highly resistant to churn.

- Unified Data Ecosystem: As AI tools become ubiquitous, HubSpot has embedded generative capabilities directly into its Marketing, Sales, and Service Hubs. The true defensibility here is not the AI algorithm itself, but the clean, structured, and unified Customer Relationship Management (CRM) data feeding the AI, allowing for precise, multi-channel execution 2728.

This strategic layering creates a formidable fourth type of switching cost: cognitive and identity-based lock-in 16. A competitor equipped with generative AI can trivially produce 10,000 blog posts; it cannot replicate two decades of category authority, an engaged community, and a certification network embedded on millions of professional resumes. This structural shift allowed HubSpot to reach a staggering $3.13 billion in total revenue by 2025, serving nearly 289,000 customers globally, and to weather the collapse of traditional SEO mechanics 1628.

2. Ahrefs: Proprietary Data and Search Everywhere Optimization

Ahrefs achieved over $100 million in Annual Recurring Revenue (ARR) without relying on external venture funding or employing a traditional outbound sales team, historically leaning on an unparalleled product-led SEO content strategy 22. However, the company has drastically updated its approach for the generative era. At their Ahrefs Evolve 2025 conference, the organization officially recognized the shift from "Search Engine Optimization" to "Search Everywhere Optimization," acknowledging that target audiences now utilize a fragmented network of answer engines, social discovery feeds, and community forums 16.

Ahrefs' true defensibility lies securely in its proprietary data infrastructure. Ahrefs operates AhrefsBot, the world's second most active web crawler behind Google, indexing over 8 billion web pages daily via a network of more than 3,600 dedicated servers 22. This proprietary, real-time data cannot be replicated by companies building superficial wrappers around OpenAI APIs.

In response to the zero-click shift, Ahrefs adapted its content strategy to focus on "polymorphic content" and "retrieval-first" structuring 1625. They explicitly target AI Overviews by engineering quotable assets, dense statistical original research, and precise schema markups, understanding that visibility in 2026 belongs strictly to brands that provide structured clarity wherever users search, including ChatGPT, Reddit, and Gemini 16. They explicitly recognize that off-site brand signals, community activity, and digital public relations serve as the ultimate validators for LLMs, shifting their marketing focus from pure keyword targeting to holistic brand presence 25.

3. Intercom & Drift: Answer-First Design and Conversational AI

Both Intercom and Drift built their foundational moats by revolutionizing B2B chat interfaces and human-led conversational routing. By 2026, market preferences had shifted dramatically; Gartner surveys reveal that 67% of B2B buyers now actively prefer a rep-free, digitally mediated experience, and 45% used AI autonomously during a recent purchase evaluation 17.

Intercom adapted to this reality by treating its content not as marketing collateral, but as competitive software infrastructure. They established a radical operational mandate for their AI-driven support and sales models: "The first time you answer a question should be the last" 18. To execute this, Intercom completely restructured its customer service organization, abandoning traditional volume-based support tiers in favor of highly specialized AI-first roles 1920.

| Traditional Support Role | 2026 AI-First Support Role | Core Responsibility |
|---|---|---|
| Tier 1 Agent | AI Operations Lead | Owns day-to-day AI performance, tracks resolution quality, tunes agent behavior 20. |
| Help Center Writer | Knowledge Manager | Ensures underlying data feeding the AI is perfectly accurate and compliant, resolving hallucination root causes 19. |
| Support Manager | Conversation Designer | Shapes the AI's tone, pacing, and interaction style to build trust without misleading the customer 19. |
| Technical Support | Support Automation Specialist | Builds complex backend workflows and API connections allowing AI agents to take direct action on behalf of users 19. |

This represents a massive epistemological shift. The content moat is no longer a static knowledge base to be read by users; it is an active, agentic system where every human resolution feeds back into the model, ensuring that service quality compounds exponentially and operational issues are permanently eradicated 18. Drift followed a parallel trajectory, transitioning its conversational marketing tools into highly sophisticated, intent-driven AI buyer agents that operate autonomously to qualify, synthesize, and route high-value enterprise leads based on deeply layered first-party interaction data.

4. Wistia: High-Production Zero-Click Media

As search engines increasingly synthesize text, rich media - specifically high-fidelity video - has become a vital differentiator for B2B brands. However, Wistia recognized that standard video hosting software is fundamentally commoditized. Their adaptation to the 2026 market involves facilitating high-production value and frictionless zero-click syndication for their clients.

According to Wistia's 2025 State of Video Report, the volume of short-form video in search results grew 183% over two years, but viewer expectations simultaneously skyrocketed, leading to a severe 7% drop in engagement rates for mid-length (3-5 minute) videos 21. Modern viewers demand instant value and demonstrate zero tolerance for slow pacing. Wistia adapted by deploying AI to streamline the repurposing of high-value, tentpole content into native formats. For example, their flagship State of Video Live webinar was algorithmically segmented into 10 - 15 native, scroll-stopping social clips utilizing their proprietary AI Social Clips tool 2223.

Wistia's moat is built entirely on facilitating the Zero-Click Content Strategy for its B2B user base. By providing AI video dubbing in over 50 languages and automated social clipping capabilities directly within their platform, Wistia enables organizations to deliver their content's value natively on platforms like LinkedIn and X. This generates massive brand awareness and algorithmic favorability through on-platform engagement metrics (Save Rates, Watch Time) without requiring the user to execute an increasingly rare click back to a corporate landing page 32324.

5. Zoho: The Community and "Zohonomics" Moat (Non-US Leader)

To fully capture the diversification of the B2B landscape, we analyze Zoho, an Indian software corporation that reached a ₹50,000 crore valuation by utterly ignoring the Silicon Valley playbook. Their defensibility is deeply rooted in geographic community integration and a philosophy termed "Zohonomics" 2526.

Instead of competing for aggressively priced urban talent in global tech hubs, Zoho established its primary Research & Development operations in Tenkasi, a small village in Tamil Nadu 39. The cornerstone of their community moat is the Zoho Schools of Learning, established in 2005. Rather than hiring exclusively credentialed university graduates, the company trains rural students in software engineering, design, and business management. Students do not pay tuition; instead, they receive a monthly stipend (₹10,000) and are guaranteed employment at Zoho upon graduation 2640.

This creates a fiercely loyal talent pipeline and generates community advocacy that competitors would struggle to disrupt. Zoho operates over 55 integrated applications across a strictly unified data model, meaning enterprise clients experience zero manual data entry and no synchronization delays 41. Their moat is a highly ethical, self-reliant ecosystem built on extreme customer and employee loyalty, funded entirely by organic profitability rather than venture capital, largely insulating them from the algorithmic volatility and capital pressures of the US tech sector 2526.

6. Mistral AI: The Data Sovereignty Moat (Non-US Leader)

Mistral AI, headquartered in Paris, France, achieved a $6.2 billion valuation by 2024 (and an estimated $60 million in revenue by 2025) by leaning directly into the geopolitical narrative of "AI independence" and European data sovereignty 4243. In 2025 and 2026, European B2B buyers - particularly those in banking, public services, and capital markets - are intensely focused on maintaining absolute control over data infrastructure. This is driven by stringent General Data Protection Regulation (GDPR) enforcement, with cumulative fines reaching €7.1 billion, and the obligations of the newly enforced EU Data Act 2745.

Mistral's moat is structural positioning. It provides frontier-class, open-weight generative AI models that can be fully deployed on-premise 4346. This directly solves the critical "black box" pain point associated with closed US competitors. European enterprises purchase Mistral technologies not solely for their competitive inference latency, but explicitly to avoid vendor lock-in and to guarantee that their highly sensitive, proprietary data remains outside the extraterritorial jurisdictional reach of US surveillance laws like the CLOUD Act 4546. With 60% of European organizations planning to increase their investments in sovereign AI technologies by 2026, Mistral's B2B marketing strategy serves as a masterclass in aligning deep technological architecture with unavoidable geopolitical and regulatory realities 27.

Synthesizing the 2026 Mandate

The 2026 B2B content landscape represents a definitive paradigm shift. Organizations must pivot away from the farming model of digital marketing - planting thousands of low-value SEO seeds and waiting passively for a harvest of clicks. The new era requires high-precision engineering: architecting integrated data feedback loops, structuring content for machine retrieval, and cultivating native community experiences that transcend the search interface.

The misconception that sheer volume guarantees defensibility has been unequivocally dismantled by the brutal realities of content decay, crawl budget exhaustion, and the rise of generative AI search summaries that synthesize rather than refer. To survive the zero-click economy and build a durable, modern moat, B2B market leaders must internalize three uncompromising strategic imperatives:

- Implement the Split Architecture: Organizations must cease attempting to force educational content to act as aggressive, direct-response sales vehicles. Digital assets must be bifurcated into a machine-readable authority layer designed explicitly to win LLM citations, and a highly optimized conversion core designed for human psychology and seamless transaction.

- Transition from Linear Funnels to Compounding Data Loops: Marketing architectures must be restructured entirely around proprietary data acquisition. First-party insights gleaned from deep customer interactions are the only assets an LLM cannot instantly commoditize. This data must feed the continuous creation of Expert POV content, which is then distributed natively to build brand trust and acquire the next generation of users.

- Invest in Cognitive Switching Costs over Algorithmic Manipulation: Algorithmic dominance is inherently transient; deep brand trust is durable. The ultimate defensibility, as demonstrated by HubSpot's Academy, Zoho's rural empowerment initiatives, and Mistral's regulatory alignment, lies in embedding the brand deeply into the professional identity, operational security, and educational advancement of the user community.

The victors of the next decade's B2B markets will not be the organizations that simply generate the most content. They will be the organizations that successfully engineer the most trusted, self-reinforcing systems of proprietary knowledge. In a digital environment saturated with infinite, artificially generated answers, the definitive business moat remains the indisputable authenticity and structural utility of the human brand operating behind it.