Artificial intelligence in healthcare: efficacy and patient impact, 2026

The integration of artificial intelligence into the global healthcare ecosystem has transitioned from an experimental phase into structural deployment as of 2026. Following years of accelerated investment and technological maturation, a definitive operational dichotomy has emerged within the medical sector. Healthcare organizations are realizing substantial, measurable returns on investment through administrative workflow automation, while the deployment of clinical diagnostic algorithms continues to grapple with persistent challenges regarding clinical validation, algorithmic bias, and human-computer interaction. This report provides an exhaustive analysis of the healthcare artificial intelligence landscape in 2026, delineating successful technological implementations, identifying structural deployment failures, and outlining critical implications for patient care, data privacy, and international regulatory frameworks.

Market Penetration and Economic Dynamics

The economic trajectory of healthcare artificial intelligence is characterized by rapid capital deployment and an uneven distribution of financial returns. Global forecasts project the healthcare artificial intelligence market to reach between $110.61 billion and $120 billion at some point between 2028 and 2030, expanding at a compound annual growth rate of 35% to 40% 12. Healthcare organizations currently report an average return on investment of $3.20 for every $1 invested, with payback periods typically realized within 12 to 14 months 123. However, this aggregate economic metric obscures a critical divergence between administrative efficiency applications and clinical care technologies.

Administrative Workflow Automation

Administrative and operational artificial intelligence represents the most mature and rapidly scaling sector of the healthcare market. Confronted with severe financial constraints and chronic workforce shortages, health systems have prioritized workflow optimization. Industry data indicates that between 68% and 80% of hospitals and healthcare executives utilize artificial intelligence to reduce administrative burdens and optimize operations 124.

The primary applications yielding immediate financial returns include prior authorization automation, revenue cycle management, and ambient clinical documentation 34. Generative artificial intelligence models deployed as ambient scribes actively monitor patient-provider encounters and automatically generate structured clinical notes, orders, and coding suggestions. Implementations of products utilizing natural language processing have reduced physician documentation time by 40% to 45%, frequently condensing 90 minutes of after-hours charting - colloquially termed "pajama time" - into roughly 12 minutes of structured review 145. Concurrently, independent studies report associated declines in physician burnout rates from 51.9% to 38.8% within the first 30 days of ambient artificial intelligence deployment 35.
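The figures above mix absolute and relative changes, which are easy to conflate. As a minimal sketch (the function names are illustrative and not drawn from any cited study), the following Python snippet separates the two using only the numbers quoted in this section:

```python
def percentage_point_change(before_pct, after_pct):
    """Absolute change between two rates, expressed in percentage points."""
    return after_pct - before_pct


def relative_change(before, after):
    """Change expressed as a fraction of the starting value."""
    return (after - before) / before


# Burnout rate reported before and after ambient AI deployment (percent).
burnout_pp = percentage_point_change(51.9, 38.8)
burnout_rel = relative_change(51.9, 38.8)

# After-hours charting time in minutes ("pajama time" example).
charting_rel = relative_change(90, 12)

print(f"Burnout: {burnout_pp:.1f} points, {burnout_rel:.1%} relative")
print(f"After-hours charting: {charting_rel:.1%} relative")
```

On these inputs, the burnout decline is 13.1 percentage points, or roughly 25% in relative terms, while the after-hours charting example corresponds to a relative reduction of roughly 87%. The latter is a different measure from the 40% to 45% reduction in overall documentation time, which is reported separately.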

Because administrative workflows do not dictate direct patient care protocols, they carry a lower regulatory burden. These applications typically require initial implementation investments ranging from €50,000 to €250,000 per use case and deliver a return on investment within four to eight months, establishing administrative artificial intelligence as a mandatory budget line item for health systems seeking immediate operational stabilization 68. Furthermore, artificial intelligence-based revenue-cycle and billing-anomaly detection tools operate with 85% to 90% accuracy, directly recovering revenue lost to coding errors and denied claims 13.

Clinical Diagnostics and Support

Conversely, clinical artificial intelligence - encompassing diagnostic imaging analysis, predictive deterioration models, and autonomous treatment planning - faces a longer, more capital-intensive path to deployment. Clinical applications demand deep integration with existing electronic health records, rigorous regulatory clearances, and extensive clinical workflow redesign. Implementation costs typically range from €150,000 to €500,000 per use case, with deployment timelines extending up to 24 months 8.

Despite clinical applications attracting approximately 60% of all venture capital funding within the healthcare technology sector 48, adoption remains highly concentrated in specialized diagnostic domains. Radiology represents the most mature clinical segment, harboring over 500 cleared algorithms designed to detect lung nodules on computed tomography scans, identify fractures, and flag potential strokes 4. While specific artificial intelligence algorithms demonstrate up to 94% accuracy in tumor detection tasks and can significantly reduce diagnostic errors in controlled environments 3, broad clinical adoption is hindered by integration complexities and concerns regarding real-world performance degradation outside of highly controlled testing environments 78.

| Characteristic | Administrative Artificial Intelligence | Clinical Artificial Intelligence |
|---|---|---|
| Primary Use Cases | Medical coding, ambient scribes, prior authorization, scheduling | Medical image analysis, predictive risk models, clinical decision support |
| Average Implementation Cost | €50,000 to €250,000 per use case | €150,000 to €500,000 per use case |
| Typical ROI Timeline | 4 to 8 months | 12 to 24 months |
| Regulatory Burden | Low (Generally exempt from high-risk medical device classification) | High (Requires FDA 510(k), De Novo, or EU AI Act high-risk certification) |
| Primary Value Proposition | Overhead reduction, revenue cycle optimization, burnout mitigation | Diagnostic accuracy, early disease detection, personalized treatment planning |

Efficacy and Failures in Clinical Deployments

The implementation of artificial intelligence in direct clinical care reveals a spectrum of profound successes and highly visible structural failures. The efficacy of these systems depends heavily on the specific nature of the clinical task, the quality of the underlying data, and the architecture of the human-computer interaction.

Diagnostic Successes and Research Optimization

Artificial intelligence models deployed for bounded, highly specific diagnostic tasks have achieved sustained clinical success. Technologies analyzing brain scans for stroke triage have become gold standards in emergency settings, significantly cutting triage times and securing systemic reimbursement approvals 9. Similarly, in surgical environments, artificial intelligence-generated operative reports achieved an 87.3% accuracy rate in direct comparisons, against 72.8% for surgeon-written reports, and contained notably fewer clinically significant discrepancies 2.

Recent clinical studies also demonstrate that collaborative configurations between humans and artificial intelligence yield superior outcomes. A randomized controlled trial conducted by Stanford Medicine researchers evaluated 70 physicians utilizing a large language model for medical diagnoses. The optimal configuration involved parallel analysis, wherein the physician and the artificial intelligence assessed the case simultaneously, followed by an automated summary analyzing the similarities and deviations between the two assessments 10. Furthermore, experimental models have outperformed human physicians in initial emergency department triage; a study evaluating 76 real-life cases demonstrated that advanced language models were diagnostically correct 67.1% of the time, compared to 55.3% and 50.0% for expert attending physicians 11.
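With only 76 cases, the accuracy figures above carry considerable sampling uncertainty. The sketch below computes Wilson score intervals for each reported rate. The success counts (51, 42, and 38 out of 76) are an assumption inferred from the quoted percentages, not values taken from the study, and the labels are illustrative.

```python
import math


def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a binomial proportion (z=1.96 ~ 95%)."""
    p = successes / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return centre - half, centre + half


N_CASES = 76
# Assumed counts: chosen so that count / 76 rounds to the reported rates.
assumed_correct = {
    "language model": 51,  # 67.1%
    "physician A": 42,     # 55.3%
    "physician B": 38,     # 50.0%
}

intervals = {
    name: wilson_interval(k, N_CASES) for name, k in assumed_correct.items()
}

for name, (low, high) in intervals.items():
    print(f"{name}: {low:.1%} to {high:.1%}")
```

Under these assumed counts, the interval for the language model overlaps those of both physicians, which suggests caution in reading the gap as definitive. This simple check treats the three results as independent samples; the study's own analysis, which compares the same cases, may reach a different conclusion.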

Beyond direct point-of-care diagnostics, artificial intelligence is reshaping clinical trial administration. Research published in The New England Journal of Medicine details the use of a retrieval-augmented generation system powered by a large language model to screen volunteers for the Cooperative Program for ImpLementation of Optimal Therapy in Heart Failure (COPILOT-HF). Analyzing data from 1,894 patients, the artificial intelligence process achieved 97.9% accuracy in recruiting eligible patients, compared to 91.7% accuracy for human specialists. Crucially, the automated screening process reduced the average cost per patient from $34.75 to between $0.02 and $0.11, fundamentally altering the economics of clinical trial recruitment 14.
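To make the scale of the cost difference concrete, the following sketch applies the quoted per-patient costs to the 1,894 patients in the analysis. It assumes, for illustration only, that each per-patient figure applies uniformly across the whole cohort; the constant and function names are not from the source.

```python
PATIENTS = 1_894
MANUAL_COST_PER_PATIENT = 34.75          # USD, as quoted above
AI_COST_PER_PATIENT_RANGE = (0.02, 0.11)  # USD, low and high estimates


def total_cost(per_patient, n=PATIENTS):
    """Total screening cost if every patient costs the same amount."""
    return per_patient * n


def reduction_factor(manual, automated):
    """How many times cheaper the automated process is per patient."""
    return manual / automated


manual_total = total_cost(MANUAL_COST_PER_PATIENT)
ai_totals = [total_cost(c) for c in AI_COST_PER_PATIENT_RANGE]
factors = [
    reduction_factor(MANUAL_COST_PER_PATIENT, c)
    for c in AI_COST_PER_PATIENT_RANGE
]

print(f"Manual screening: ${manual_total:,.2f}")
print(f"Automated screening: ${ai_totals[0]:,.2f} to ${ai_totals[1]:,.2f}")
print(f"Per-patient reduction: {factors[1]:,.0f}x to {factors[0]:,.0f}x")
```

Under that uniform-cost assumption, manual screening of the cohort would cost about $65,800, against roughly $38 to $208 for the automated process, a per-patient reduction of between about 300-fold and 1,700-fold.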

Algorithmic Failures and Legacy Disconnects

Despite these advancements, the deployment of generalized predictive models has resulted in notable clinical failures. The historical deployment of oncology recommendation systems, which consumed billions in funding but ultimately delivered unsafe treatment advice due to flawed data extrapolation, serves as a foundational cautionary tale 9.

More recently, widespread electronic health record sepsis prediction models have faced intense academic scrutiny. Sepsis is responsible for approximately one-third of all hospital deaths in the United States, making early algorithmic detection a high-priority commercial objective 12. However, independent analyses by the University of Michigan and studies published in JAMA revealed that earlier iterations of a prominent proprietary sepsis algorithm missed up to two-thirds of true sepsis cases, rarely identified cases unnoticed by medical staff, and frequently issued false alarms 1314.

Most critically, the model struggled to differentiate high-risk and low-risk patients prior to human intervention. When predictive analysis was restricted to patient data recorded before a physician ordered a blood culture, the model accurately assigned higher risk scores to only 53% of patients who ultimately developed sepsis 12. This indicated that the algorithm was identifying the physician's diagnostic interventions rather than detecting early physiological deterioration; it was encoding clinician suspicion rather than predicting the disease trajectory independently 12. While vendors have subsequently updated these algorithms - citing reductions in order-to-antibiotic turnaround times and a 16% reduction in sepsis mortality indices 131519 - the controversy underscores the hazards of deploying proprietary, opaque models across diverse patient populations without rigorous, continuous local validation.
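The evaluation idea described above, scoring a model only on information available before clinicians acted, can be sketched as follows. This is an illustrative reconstruction, not the method used in the cited analyses: the `Patient` structure, the toy scores, and the pairwise concordance measure are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Patient:
    sepsis: bool
    culture_order_hour: Optional[float]  # None if no culture was ordered
    scores: List[Tuple[float, float]]    # (hour since admission, risk score)


def peak_score(patient, censor):
    """Highest risk score, optionally ignoring scores at or after the order."""
    cutoff = patient.culture_order_hour if censor else None
    eligible = [s for hour, s in patient.scores if cutoff is None or hour < cutoff]
    return max(eligible) if eligible else 0.0


def concordance(patients, censor):
    """Share of (sepsis, non-sepsis) pairs where the sepsis case scores higher."""
    cases = [peak_score(p, censor) for p in patients if p.sepsis]
    controls = [peak_score(p, censor) for p in patients if not p.sepsis]
    wins = sum(
        1.0 if c > k else 0.5 if c == k else 0.0
        for c in cases
        for k in controls
    )
    return wins / (len(cases) * len(controls))


# Hypothetical toy data: scores jump only after the culture is ordered.
patients = [
    Patient(True, 10.0, [(2, 0.20), (8, 0.25), (11, 0.90)]),
    Patient(True, 6.0, [(1, 0.10), (5, 0.15), (7, 0.85)]),
    Patient(False, None, [(3, 0.18), (9, 0.22)]),
    Patient(False, None, [(2, 0.12), (6, 0.20)]),
]

print("All scores:", concordance(patients, censor=False))
print("Pre-order scores only:", concordance(patients, censor=True))
```

In this toy data the model looks perfect when every score is counted and falls to chance level once scores recorded after the blood culture order are excluded. A large gap of this kind is the signature of a model that tracks clinician suspicion rather than early deterioration.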

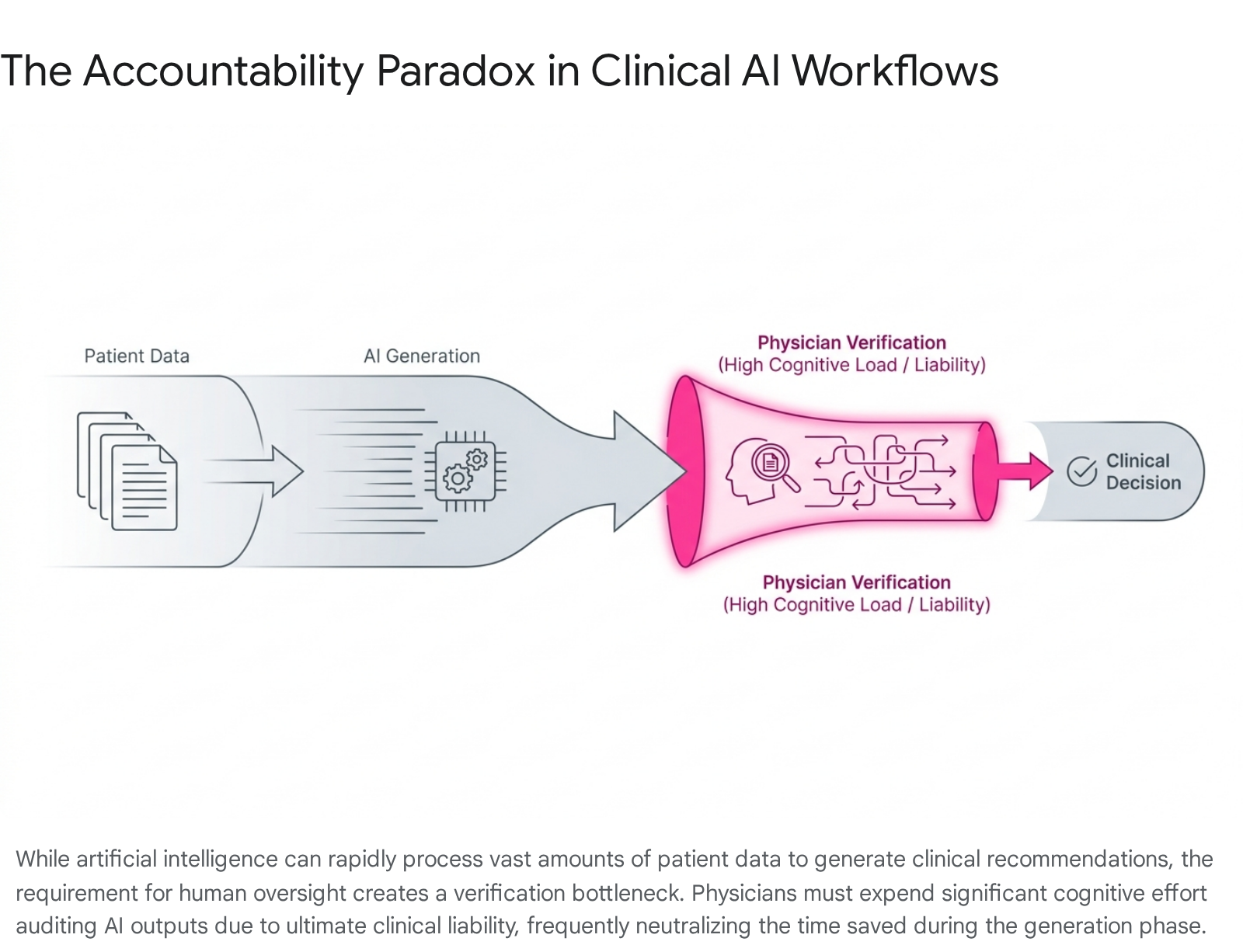

The Human-in-the-Loop Accountability Paradox

To mitigate algorithmic errors, international health authorities and regulators universally recommend a "human-in-the-loop" deployment model, wherein a human clinician reviews and approves artificial intelligence outputs before clinical execution 1617. However, empirical data from 2025 and 2026 suggests this governance paradigm generates unforeseen cognitive burdens, creating an "accountability paradox."

While artificial intelligence systems excel at synthesizing patient histories and generating fluent clinical drafts, verifying these outputs requires a highly demanding form of cognitive labor. Because large language models produce plausible rationales that can easily mask factual hallucinations, physicians must independently trace the algorithm's logic back to the source data 16. In high-stakes clinical contexts, professional liability and ethical responsibility dictate that clinicians must perform complete verification regardless of the system's stated statistical accuracy. Consequently, while artificial intelligence can generate a personalized patient communication draft almost instantly, pilot studies demonstrate that the total physician review time remains comparable to the time required for manual authoring 22.

This dynamic risks transforming physicians into continuous auditors of opaque algorithms, placing a disproportionate moral and legal responsibility on practitioners who are expected to rely on technology to minimize errors yet bear total liability for determining when to override it 1618. The expectation that human vigilance can scale to monitor a continuous stream of automated outputs frequently results in automation bias - where clinicians defer to an algorithm despite reservations - or alert fatigue, where practitioners become desensitized to safety warnings 16.

Large Language Models and Health Technology Hazards

The proliferation of consumer-accessible large language models has introduced novel vectors for medical error. The Emergency Care Research Institute identified the misuse of artificial intelligence chatbots - such as ChatGPT, Copilot, and Gemini - as the primary health technology hazard for 2026 1920. These generalized tools are not regulated as medical devices, yet they are increasingly utilized by clinical personnel for administrative drafting and by patients for diagnostic guidance. Because these models are designed to predict word sequences and simulate confident expertise, they can generate highly plausible but dangerous clinical advice. Investigations reveal instances where chatbots have suggested incorrect diagnoses, recommended unnecessary testing, and hallucinated fictitious anatomical structures in response to medical inquiries 20. The hazard is amplified because the authoritative tone of the output discourages users from independently verifying the underlying medical logic 1920.

Algorithmic Bias and Health Equity

The structural scaling of clinical artificial intelligence has exposed severe vulnerabilities regarding dataset diversity, threatening to institutionalize historical medical disparities. Because machine learning models abstract parameters from retrospective data, they inadvertently encode and amplify existing systemic health inequities if deployed without rigorous sociodemographic validation 212722.

Performance Drift and Demographic Underrepresentation

A fundamental deficiency in contemporary medical algorithms is the geographical and demographic skew of their training datasets. Over half of all globally published clinical artificial intelligence models rely on data originating exclusively from the United States and China, representing a narrow fraction of the global population 29. Research indicates that diagnostic performance can degrade by 20% to 40% when models are applied to patient populations outside their original training parameters 7.

The clinical consequences of this underrepresentation are direct and harmful. Artificial intelligence-driven dermatology diagnostic tools trained predominantly on lighter skin tones suffer elevated failure rates when evaluating melanoma and other skin conditions in patients with darker skin 27. A 2025 study in The Lancet Digital Health reviewing 21 open-access skin cancer datasets revealed profound metadata gaps; of 106,950 total images, only 2,436 contained documented skin type classifications, rendering it impossible to audit the algorithms for phenotypic bias 29. Furthermore, legacy clinical algorithms have historically applied medically unjustified risk score modifications based on race, systematically categorizing minority populations as lower risk and thereby prompting clinicians to under-refer vulnerable patients for specialized cardiology or critical care resources 2223.

Equity Mitigation Frameworks

In response to widespread bias documentation, the international medical research community has formalized comprehensive algorithmic equity guidelines. In December 2024, The Lancet Digital Health and NEJM AI jointly published the STANDING Together (STANdards for data Diversity, INclusivity and Generalisability) consensus recommendations 242526. Developed by a coalition of over 350 experts spanning 58 countries, these guidelines mandate that developers explicitly audit datasets for demographic representation, formally document known population gaps, and implement bias-aware training methodologies such as adversarial debiasing prior to clinical deployment 272924.
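A dataset audit of the kind these guidelines call for can start with two simple measurements: how well each group is represented, and whether model sensitivity differs between groups. The sketch below is a minimal illustration on hypothetical records; the field names (`skin_type`, `label`, `pred`) and the grouping are assumptions, and it does not implement bias-aware training methods such as adversarial debiasing.

```python
from collections import Counter, defaultdict


def group_of(record, key):
    """Group label for a record, flagging missing metadata explicitly."""
    return record.get(key) or "undocumented"


def representation(records, key="skin_type"):
    """Share of the dataset that falls into each group."""
    counts = Counter(group_of(r, key) for r in records)
    total = sum(counts.values())
    return {group: n / total for group, n in counts.items()}


def sensitivity_by_group(records, key="skin_type"):
    """True-positive rate per group, computed over positive cases only."""
    true_pos = defaultdict(int)
    positives = defaultdict(int)
    for r in records:
        if r["label"] == 1:
            g = group_of(r, key)
            positives[g] += 1
            true_pos[g] += int(r["pred"] == 1)
    return {g: true_pos[g] / positives[g] for g in positives}


# Hypothetical records: label 1 = malignant, pred = model output.
records = [
    {"skin_type": "I-II", "label": 1, "pred": 1},
    {"skin_type": "I-II", "label": 1, "pred": 1},
    {"skin_type": "I-II", "label": 0, "pred": 0},
    {"skin_type": "V-VI", "label": 1, "pred": 0},
    {"skin_type": "V-VI", "label": 1, "pred": 1},
    {"skin_type": None, "label": 1, "pred": 1},
]

print(representation(records))
print(sensitivity_by_group(records))
```

Reporting an explicit "undocumented" group matters here: when most records lack the relevant metadata, the per-group figures cover only a small, possibly unrepresentative slice of the data, and that gap is itself a finding to document.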

To support these objectives, structural changes in data architecture are underway. Initiatives such as the National Clinical Cohort Collaborative (N3C) - which harmonizes records from over 75 institutions - and the National Institutes of Health's All of Us Research Program are constructing highly representative biological repositories 27. Nevertheless, researchers emphasize that domestic data aggregation efforts remain insufficiently scaled to match the rapid commercial pace of model deployment, necessitating standardized national templates for electronic health record interoperability to expand diverse training inputs safely 2735.

Global Regulatory Frameworks and Implementation Strategies

As medical algorithms transition from exploratory research to point-of-care implementation, international regulatory bodies are fundamentally overhauling traditional oversight mechanisms to accommodate adaptive, software-based medical devices.

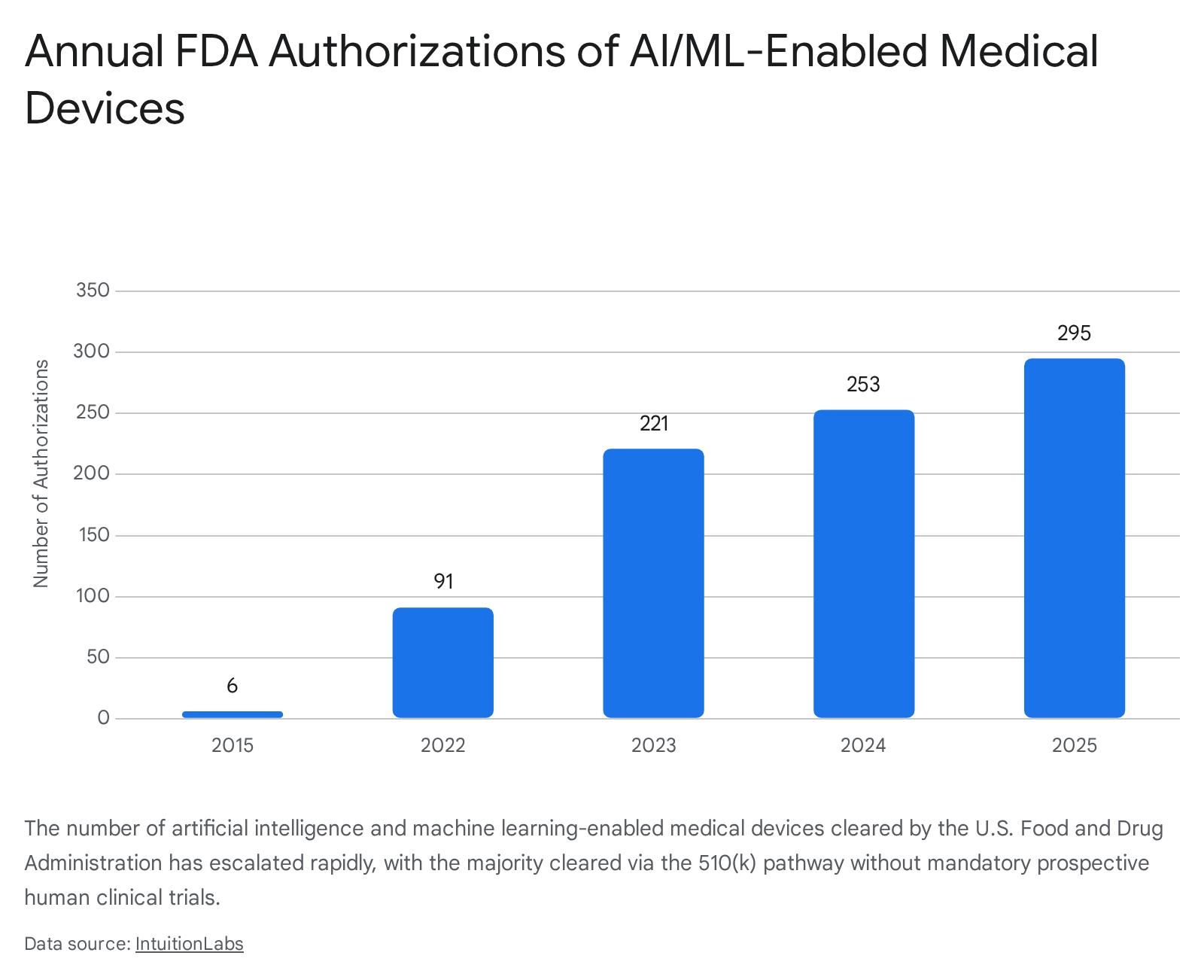

United States Regulatory Evolution

The United States Food and Drug Administration has overseen a dramatic acceleration in device clearances. From six authorizations in 2015, the annual volume surged to 253 in 2024 and 295 in 2025, bringing the cumulative total to 1,451 cleared artificial intelligence-enabled medical devices by the end of 2025 3637.

However, regulatory scrutiny has intensified regarding the clearance pathways utilized. Data indicates that 96.7% of all cleared devices enter the market via the 510(k) pathway, which relies on demonstrating "substantial equivalence" to a predicate device rather than requiring novel, prospective human clinical trials 36282930. Research published in 2025 revealed that fewer than 2% of cleared devices were supported by randomized clinical trials 37.

This reliance on retrospective validation has manifested in post-market vulnerabilities. A JAMA Health Forum study found that 60 authorized devices were associated with 182 recall events through November 2024, with 43% of these recalls occurring within a single year of authorization 2829. The study noted a distinct commercial pattern: publicly traded companies accounted for 53% of the recalled device models but were responsible for over 90% of the recall events and 98.7% of the total recalled units. Analysts interpret this disparity as evidence of intense investor-driven pressure prioritizing rapid commercialization over comprehensive pre-market clinical evaluation 2829.

To address the unique nature of adaptive software, the FDA finalized its framework for Predetermined Change Control Plans (PCCPs) in December 2024. This policy allows manufacturers to pre-specify how algorithms will be modified post-market based on real-world learning. If updates adhere to the authorized protocol regarding performance monitoring and data lineage, the manufacturer avoids the requirement of submitting a new 510(k) for each iterative software update 37314232.
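The logic of a pre-specified change plan can be pictured as a gate that each candidate update must pass before release. The sketch below is purely hypothetical: the field names, thresholds, and data-source labels are invented for illustration and do not reflect the FDA's actual PCCP content requirements or any manufacturer's plan.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class ChangeControlPlan:
    """Hypothetical limits a manufacturer might pre-specify."""
    min_sensitivity: float
    min_specificity: float
    approved_data_sources: FrozenSet[str]


@dataclass(frozen=True)
class CandidateUpdate:
    """Validation results and data lineage for a retrained model."""
    sensitivity: float
    specificity: float
    data_sources: FrozenSet[str]


def violations(plan: ChangeControlPlan, update: CandidateUpdate) -> List[str]:
    """List every way the update falls outside the pre-specified plan."""
    problems = []
    if update.sensitivity < plan.min_sensitivity:
        problems.append("sensitivity below pre-specified floor")
    if update.specificity < plan.min_specificity:
        problems.append("specificity below pre-specified floor")
    unapproved = update.data_sources - plan.approved_data_sources
    if unapproved:
        problems.append(f"unapproved data sources: {sorted(unapproved)}")
    return problems


plan = ChangeControlPlan(0.90, 0.85, frozenset({"site_a", "site_b"}))
in_plan = CandidateUpdate(0.93, 0.88, frozenset({"site_a"}))
out_of_plan = CandidateUpdate(0.93, 0.88, frozenset({"site_a", "site_c"}))

print(violations(plan, in_plan))
print(violations(plan, out_of_plan))
```

In this picture, an update with an empty violation list stays within the authorized protocol, while any violation would signal that the change may need a new submission. Real plans also cover elements not modeled here, such as the modification protocol and impact assessment.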

Latin American Oversight

While the United States adapts existing medical device pathways, other jurisdictions are enacting highly specific clinical policies. In Brazil, the Federal Council of Medicine published Resolution No. 2,454/2026, taking effect in August 2026. This mandate establishes that artificial intelligence may only be utilized as a supplementary support tool, preserving the physician's ultimate authority over diagnostic and prognostic decisions 3334. The resolution enshrines the physician's "Right of Refusal," permitting the rejection of AI-generated recommendations if the practitioner determines the system lacks adequate scientific validation 3334. Concurrently, the Brazilian Artificial Intelligence Plan 2024-2028 directs R$ 23 billion toward digitalizing the Unified Health System, aiming to develop predictive tools for chronic disease detection while navigating ongoing debates regarding civil liability and intellectual property under the pending AI Legal Framework 835.

Asian Market Strategies

Asian regulatory environments are characterized by heavy state-sponsored infrastructure integration. In China, the National Medical Products Administration enacted an updated Good Manufacturing Practice for medical devices, effective November 2026. This framework imposes stringent lifecycle management protocols, demanding verified control over data collection, algorithm design, and continuous software updates 363738.

Singapore has adopted a highly coordinated national deployment model under its healthtech agency, Synapxe. Singapore is systematically integrating predictive models into clinical workflows, such as the deployment of artificial intelligence to analyze chest X-rays for bone fractures and tuberculosis within emergency departments, aiming for full national capability by the end of 2026 394041. The nation utilizes centralized platforms, including HEALIX, for secure, anonymized data sharing, explicitly focusing on shifting the national healthcare model from reactive treatment to proactive, personalized care while utilizing regulatory sandboxes to foster safe innovation 4041.

In India, the regulatory landscape is navigating the complexities of rapid technological influx across a highly diverse population. The Central Drugs Standard Control Organization oversees clinical models as Software as a Medical Device, employing a risk-based classification approach 753. However, stakeholders face significant compliance challenges aligning these innovations with the strict consent and data localization mandates of the 2023 Digital Personal Data Protection Act, which classifies health data as highly sensitive and requires robust privacy-by-design architecture 545556.

Emerging Market Leapfrogging

In developing economies, artificial intelligence is strategically leveraged to bridge severe structural deficits. In South Africa, the impending realization of the National Health Insurance scheme by 2028 seeks to consolidate disparate public and private resources 575842. The country faces acute workforce shortages, operating with approximately one radiologist per 100,000 citizens compared to a European average of 13 per 100,000. In this context, the implementation of artificial intelligence-assisted imaging and robust telemedicine networks is viewed not merely as an optimization tool, but as a mandatory structural pillar to achieve universal, equitable access under the new national health framework 5743.

Patient Implications and Data Monetization

The operational benefits of clinical artificial intelligence are inextricably linked to the massive consumption of personal health data, precipitating rapid shifts in patient privacy expectations, consumer-facing technology, and the commercialization of medical records.

Direct-to-Consumer Health Artificial Intelligence

Technology corporations have aggressively expanded into the direct-to-consumer health sector. By 2026, tools such as Amazon Health AI, Copilot Health, Perplexity Health, and ChatGPT Health allow users to directly upload personal medical records and wearable data to receive personalized health guidance 44. Public surveys indicate that 32% of adults utilize artificial intelligence for health information, with many uploading sensitive medical data under the assumption that personalized inputs guarantee clinical accuracy 44.

However, studies reveal persistent reliability deficits. Research published in Nature Medicine demonstrated that participants utilizing early chatbot models to determine appropriate clinical actions performed no better than a control group using standard internet search engines 44. The chatbots frequently misinterpreted prompts, resulting in conflicting or entirely incorrect medical advice, highlighting the risks of democratizing access to unvalidated diagnostic algorithms without corresponding clinical oversight 44.

Patient Data Governance and Privacy

The vast data requirements of artificial intelligence training have catalyzed global legislative overhauls. In 2026, healthcare organizations must ensure compliance across 144 national privacy laws, as third-party data breaches now account for over 85% of healthcare data incidents globally 62.

In the United States, regulators and payers have tightened the Health Insurance Portability and Accountability Act to address the unique vulnerabilities introduced by generative models. Organizations are required to implement outcome-based security safeguards, modernize Notices of Privacy Practices to explicitly detail how artificial intelligence interacts with patient data, and establish rigorous internal review workflows prior to disclosing protected health information to third-party technology vendors 945. Furthermore, individual states are enacting their own stringent rules; Texas mandates plain-language disclosures when artificial intelligence influences clinical scenarios, while California requires generative artificial intelligence developers to disclose training data sources and apply watermarking to health communications 9. In Europe, the EU AI Act classifies medical algorithms as high-risk, establishing mandatory human oversight and severe financial penalties for non-compliance 82962.

Commercialization of De-identified Health Data

The intersection of advanced analytics and global data privacy mandates has formalized the healthcare data monetization sector, projected to grow to $28.7 billion by 2036 64. Healthcare institutions, biotechnology firms, and insurance payers are systematically commercializing de-identified clinical, genomic, and claims data to accelerate pharmaceutical research and train predictive models 644647.

This market operates through several mechanisms. The Data-as-a-Service model involves hospitals and diagnostic networks securely consolidating structured clinical information into datasets licensed to research institutions and life sciences companies for real-world evidence studies 4648. The Insight-as-a-Service model allows healthcare providers to commercialize the predictive analytics derived from their proprietary data pools - such as disease risk scores or operational benchmarking - without directly transferring the raw, underlying patient records 46.

Furthermore, decentralized blockchain platforms are emerging, providing secure storage and enabling individual patients to directly monetize their personal health data by connecting with pharmaceutical companies recruiting for clinical studies 48. While these mechanisms provide essential funding streams and accelerate drug discovery, they place immense pressure on health systems to maintain infallible anonymization protocols, balancing the ethical imperative to protect patient privacy against the commercial and clinical value of training next-generation artificial intelligence models.

| Region / Jurisdiction | Key Policy Framework | Primary Enforcement Mechanism or Strategic Goal |
|---|---|---|
| United States | FDA PCCPs / ONC HTI-1 | Permits pre-authorized algorithmic updates; mandates EHR artificial intelligence transparency and interoperability by 2026. |
| European Union | EU AI Act / GDPR | Classifies medical artificial intelligence as high-risk; enforces rigorous conformity assessments and mandates human oversight. |
| Brazil | CFM Resolution 2,454/2026 | Protects physician autonomy; guarantees the legal right to refuse algorithmically generated clinical recommendations. |
| India | DPDPA 2023 | Defines medical records as highly sensitive; enforces strict consent protocols and regulates cross-border data transfers. |
| Singapore | Synapxe / HEALIX Platform | Utilizes centralized, anonymized national data sharing to drive predictive care and preventative health screening models. |

Conclusion

The integration of artificial intelligence into healthcare in 2026 represents a profound yet deeply fractured transformation. The immediate financial and operational successes realized within administrative workflow automation demonstrate the technology's capability to salvage constrained hospital margins and meaningfully alleviate the bureaucratic burdens driving severe physician burnout. Conversely, the clinical and diagnostic landscape remains fraught with systemic vulnerabilities. The rapid commercial scaling and FDA clearance of diagnostic algorithms have frequently outpaced the execution of robust, prospective clinical trials, resulting in notable post-market recalls and high-profile failures in predictive modeling.

Furthermore, the integrity of clinical artificial intelligence is fundamentally threatened by the geographical and demographic homogeneity of its training datasets, which risk institutionalizing historical inequities if robust mitigation frameworks, such as the STANDING Together guidelines, are not universally adopted. The prevailing regulatory paradigm - relying on human-in-the-loop oversight - has paradoxically increased the cognitive load on physicians, transferring systemic liability onto individual practitioners who are forced to continually audit opaque systems.

Achieving a sustainable, augmented healthcare system requires maturation beyond the algorithms themselves. The future of medical artificial intelligence depends on the rigorous enforcement of post-market surveillance, the ethical curation of globally diverse datasets, the uncompromising protection of patient data sovereignty, and the implementation of clinical interfaces that authentically amplify human expertise without imposing unsustainable cognitive burdens.