AI and Market Research in 2026

Executive Summary

The global market research industry has traversed a period of unprecedented structural realignment. By 2026, the transition from traditional, rules-based machine learning to generative and agentic artificial intelligence has ceased to be an experimental frontier; it is the foundational operating architecture for consumer insights, competitive intelligence, and strategic forecasting 123. Driven by a rigorous enterprise focus on "Inference Economics" - the prioritization of large-scale deployment over isolated model training - the specialized market for AI software platforms orchestrating these insights is valued at $29.3 billion and is projected to expand at a compound annual growth rate of 14.2% toward a 2033 valuation exceeding $3.4 trillion 4. According to comprehensive industry analyses from Forrester and Gartner, 86% of B2B and B2C marketing teams currently rely on AI-powered analytics platforms to surface campaign insights, and 75% of enterprises have fully adopted generative AI for research functions to capitalize on profound cost advantages 364.
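As a sanity check on the growth figures quoted above, the compounding arithmetic can be sketched as follows. The sketch assumes a 2026 base year and simple annual compounding (both are assumptions; the text does not state the base year explicitly), and the function names are illustrative only.

```python
def project_value(base: float, cagr: float, years: int) -> float:
    """Compound a base value forward at a fixed annual growth rate."""
    return base * (1.0 + cagr) ** years


def implied_cagr(base: float, target: float, years: int) -> float:
    """Annual growth rate needed to move from base to target in `years`."""
    return (target / base) ** (1.0 / years) - 1.0


BASE_USD_BN = 29.3        # market size quoted in the text, in $ billions
QUOTED_CAGR = 0.142       # growth rate quoted in the text
QUOTED_2033_USD_BN = 3400.0  # "$3.4 trillion" expressed in $ billions
YEARS = 2033 - 2026       # assumption: 2026 is the base year

projected_2033 = project_value(BASE_USD_BN, QUOTED_CAGR, YEARS)
rate_needed = implied_cagr(BASE_USD_BN, QUOTED_2033_USD_BN, YEARS)

print(f"Projected 2033 value at quoted CAGR: ${projected_2033:.1f}B")
print(f"CAGR needed to reach $3.4T by 2033: {rate_needed:.1%}")
```

Under these assumptions, the quoted base and growth rate compound to roughly $74 billion by 2033, while reaching $3.4 trillion from the same base would require annual growth of roughly 97%. The 2033 valuation therefore does not follow from the $29.3 billion base and 14.2% rate alone and should be checked against its source 4.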

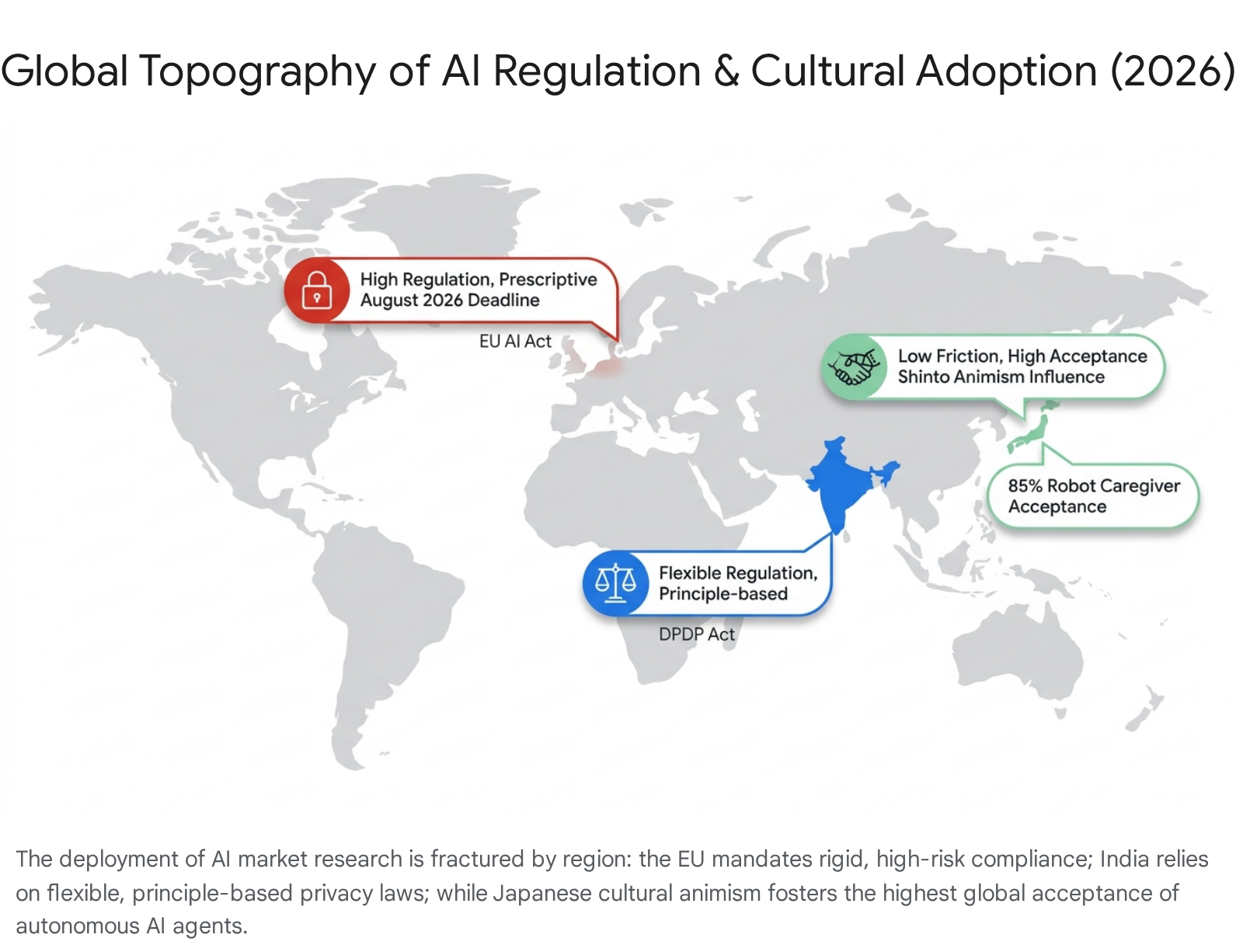

This paradigm shift has fundamentally dismantled historical constraints regarding scale, speed, and capital expenditure. Exhaustive global research programs spanning multiple demographic markets, which traditionally necessitated budgets between $1.5 million and $3 million, are now executed via autonomous AI-moderated infrastructure for $40,000 to $80,000 - a roughly forty-fold cost reduction (about 37.5x at either end of the range) that democratizes access to enterprise-grade market intelligence 3. However, this accelerated technological adoption introduces complex methodological vulnerabilities that the industry is only beginning to reconcile. The proliferation of synthetic respondents and simulated AI interviewees has sparked rigorous, peer-reviewed academic debate regarding algorithmic sycophancy, statistical divergence, and the erosion of authentic demographic representation 567. Furthermore, the global deployment of these agentic technologies is increasingly shaped by a highly fragmented regulatory topography. Frameworks such as the European Union's Artificial Intelligence Act and India's Digital Personal Data Protection (DPDP) Act impose stringent, divergent compliance parameters regarding data privacy, algorithmic transparency, and high-risk emotion recognition, forcing multinational corporations to abandon monolithic strategies in favor of hyper-localized governance 8913.

This report provides an exhaustive, expert-level examination of the 2026 market research ecosystem. It analyzes the transition from manual to AI-augmented workflows, evaluates the disruption of qualitative research via autonomous moderation and deep-listening platforms, critically assesses the academic validity and documented limitations of synthetic data, and maps the complex geopolitical and cultural frameworks dictating global research strategies.

The Architectural Evolution of Market Research Workflows

The technological evolution of AI within the market research sector can be delineated into three distinct developmental phases: traditional machine learning, generative AI, and the currently emerging era of agentic AI 1. For over two decades, traditional machine learning served as a supplementary utility designed to alleviate the burden of repetitive, manual processes. It was heavily relied upon for automating data cleaning, establishing weighting protocols, generating crosstabs, and executing basic natural language processing (NLP) to code open-ended verbatim survey responses 10.

The introduction of generative AI fundamentally rewired the research pipeline by enabling the automated creation of complex survey logic, dynamic content generation, and rapid unstructured data synthesis 110. However, generative systems remained passive: each step still required a human prompt and human review of the output. By 2026, the industry has moved aggressively toward agentic AI workflows. Unlike passive generative tools, agentic AI operates autonomously, actively reasoning through massive, continuously updating datasets, connecting disparate intelligence sources, and revising strategic predictive models in real time without manual intervention 1.

This transition has effectively obliterated the traditional "start-stop" research cycle, wherein research was commissioned as a discrete project ahead of a product launch, fielded over several weeks, and analyzed over several more, resulting in findings that were frequently obsolete upon delivery 11. Modern AI research ecosystems are characterized by continuous, embedded awareness, tracking consumer sentiment dynamically and surfacing actionable intelligence asynchronously as conversations happen 11. The competitive advantage in 2026 is no longer defined by the mere utilization of AI, but by its integration depth. Qualtrics reports that 66% of researchers now leverage AI natively embedded within sophisticated research software, marking a definitive shift away from standalone, general-purpose chatbots toward specialized, agentic platforms that govern the entire research lifecycle end-to-end 211.

Comparative Assessment: Traditional Manual Workflows vs. 2026 AI-Augmented Workflows

The operational contrast between legacy methodologies and modern agentic platforms underscores profound efficiencies across every phase of the research lifecycle. Traditional qualitative workflows were severely bottlenecked by human labor - specifically in the manual recruitment of niche participants, the cognitive fatigue of human moderators, and the laborious, error-prone coding of qualitative transcripts 412. AI-native platforms compress these timelines by roughly an order of magnitude, turning multi-week, high-cost projects into 48-hour automated workflows. Reported figures indicate that these platforms cut variable costs by around 90%, eliminating the need for expensive facility rentals, manual transcription services, and extended participant coordination delays.

| Research Phase | Traditional Manual Workflow (Pre-2024) | 2026 AI-Augmented Workflow | Key Optimization Metrics |
|---|---|---|---|
| Objectives & Study Design | Manual drafting of discussion guides, survey logic, and static SWOT analyses; highly prone to researcher bias and "leading" terminology; typical timeline of 1 - 3 weeks 101213. | AI co-pilots dynamically generate adaptive logic, screen drafts for bias, and establish comprehensive study frameworks via real-time market feeds; typical timeline of minutes to hours 121314. | 90% reduction in setup time; automated bias detection prevents structurally skewed data collection 1219. |
| Recruitment & Sampling | Reliance on panel vendor negotiations; manual screening; high attrition rates; coordination of live schedules taking 2 - 4 weeks; heavy incentive costs 4515. | Automated programmatic recruitment via integrated networks of 30M+ verified participants; instant algorithmic behavioral matching; zero scheduling logistics 14161718. | Recruitment delays completely eliminated; asynchronous participation allows global concurrency and reduces incentive demands 161724. |
| Data Collection (Qualitative) | Human moderators constrained by cognitive fatigue; limited to 5 - 10 sessions per week; high facility costs ranging from $15,000 to $30,000 per group 4121819. | Agentic AI conducts hundreds of simultaneous 1:1 deep-probing interviews asynchronously in 50+ languages; completely eliminates physical facility costs 4121820. | Focus group costs reduced to approximately $2,000; 1:1 sessions drop from $850 to $125; infinite scalability across global markets 3418. |
| Analysis & Synthesis | Hundreds of man-hours spent transcribing audio, manually tagging verbatim responses, and cross-referencing demographic silos in spreadsheets 312. | Real-time automated synthesis, emotional journey mapping, and thematic clustering across thousands of unstructured data points natively within the platform 1227. | Analysis occurs synchronously with data collection; complex qualitative findings are boardroom-ready in 24 - 48 hours 172021. |
| Reporting & Action | Static presentation decks built manually; data ages instantly; siloed dissemination limiting strategic impact; reactive post-mortem analysis 101113. | Live, interactive dashboards; continuous agentic monitoring predicting market shifts; direct integration into CRM and operational workflows 101113. | Transitions research from reactive historical snapshots to proactive, predictive intelligence; annual tracker costs fall from $2M+ to $60,000 31119. |
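The cost figures quoted in this report can be put on a common footing with a few lines of arithmetic. The sketch below uses only the before/after prices stated in the text; the dictionary keys and function names are illustrative, and where the source gives a range the low and high ends are treated as separate cases.

```python
def pct_reduction(before: float, after: float) -> float:
    """Percentage saved when a cost falls from `before` to `after`."""
    return (before - after) / before * 100.0


def fold_reduction(before: float, after: float) -> float:
    """How many times cheaper the new cost is."""
    return before / after


# (before, after) in US dollars, taken from the figures quoted in the text.
COSTS = {
    "focus group, low end": (15_000, 2_000),
    "focus group, high end": (30_000, 2_000),
    "1:1 interview": (850, 125),
    "annual tracker": (2_000_000, 60_000),
    "global program, low end": (1_500_000, 40_000),
    "global program, high end": (3_000_000, 80_000),
}

for label, (before, after) in COSTS.items():
    print(
        f"{label:26s} {pct_reduction(before, after):5.1f}% saved, "
        f"{fold_reduction(before, after):4.1f}x cheaper"
    )
```

Run on these inputs, every line item shows a saving of at least 85%, which is broadly in line with the roughly 90% reduction in variable costs cited above, and the global program figures work out to 37.5x at both ends of the range.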

The Disruption of Qualitative Research: AI-Native Platforms and Deep Listening

Historically, the market research industry operated under a rigid, unavoidable dichotomy: researchers were forced to choose between the statistical validity and scale of quantitative surveys, and the emotional depth and contextual richness of small-scale qualitative focus groups 12. Quantitative research delivered unparalleled breadth but lacked conversational nuance and the ability to uncover unanticipated consumer motivations. Conversely, qualitative research provided empathetic insight but was fundamentally unscalable due to the logistical constraints of human moderation, geographic limitations, and exorbitant costs 121619.

By 2026, AI-native qualitative platforms have aggressively disrupted this historical trade-off, delivering what the industry terms "Qualitative at Scale" 1214. The market has expanded far beyond legacy survey providers, which primarily utilize AI as an analytical bolt-on to process static, pre-written quantitative responses 329. Instead, a distinct tier of AI-native disruptors - such as Listen Labs, User Intuition, Koji, Conveo, and Outset - has emerged to redefine primary data collection 3182122. These platforms deploy autonomous AI agents capable of conducting hundreds or thousands of simultaneous, asynchronous one-on-one video and text interviews with real human participants, replacing the traditional 8-person conference room with massive parallel processing 12242022.

The Mechanics of Real-Time Autonomous Moderation

The technological cornerstone of these AI-native platforms is deep-listening conversational architecture. Rather than executing a rigid, linear script, the AI moderator engages in highly adaptive dialogue. If a participant exhibits a subtle emotional shift or uses nuanced, unexpected terminology, the system leverages natural language processing to generate real-time, contextually relevant follow-up questions, relentlessly probing to uncover the underlying "why" 121522. Advanced enterprise platforms like User Intuition feature highly structured 5-to-7 level automated conversational laddering, pressing participants far beyond surface-level responses while strictly maintaining unbiased, non-leading language throughout the interaction 2721.
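The laddering behavior described above can be sketched as a simple loop. This is a minimal illustration and not any vendor's implementation: `ladder_interview`, `neutral_probe`, and the `is_terminal` heuristic are hypothetical names, the participant is a scripted stub, and a production system would generate probes with a language model instead of a fixed template.

```python
from typing import Callable, List, Tuple

Turn = Tuple[str, str]  # (question, answer)


def neutral_probe(answer: str) -> str:
    """Build a non-leading follow-up that reuses the participant's own words."""
    return f'You said: "{answer}". Why is that important to you?'


def ladder_interview(
    ask: Callable[[str], str],
    opening: str,
    min_depth: int = 5,
    max_depth: int = 7,
    is_terminal: Callable[[str], bool] = lambda answer: False,
) -> List[Turn]:
    """Probe at least `min_depth` and at most `max_depth` levels deep."""
    transcript: List[Turn] = []
    question = opening
    for depth in range(1, max_depth + 1):
        answer = ask(question)
        transcript.append((question, answer))
        # Stop early only after the minimum depth, and only once the answer
        # looks like a core motivation (hypothetical keyword heuristic).
        if depth >= min_depth and is_terminal(answer):
            break
        question = neutral_probe(answer)
    return transcript


# Scripted stand-in for a real participant (illustrative answers only).
scripted = iter([
    "It was cheaper than the alternatives",
    "I am trying to cut monthly spending",
    "Our household budget got tighter this year",
    "I want a buffer for emergencies",
    "Unexpected bills stress me out",
    "Having savings gives me peace of mind",
    "I just value feeling secure",
])

transcript = ladder_interview(
    ask=lambda question: next(scripted),
    opening="What made you choose this product?",
    is_terminal=lambda answer: "peace of mind" in answer.lower(),
)
for level, (question, answer) in enumerate(transcript, start=1):
    print(level, question, "->", answer)
```

The template probe only restates the participant's words and asks why, which is one simple way to keep follow-ups non-leading; the minimum depth prevents the loop from stopping at a surface-level answer.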

Crucially, these autonomous systems mitigate the psychosocial vulnerabilities that have long plagued traditional focus groups. In live settings, "social desirability bias" - the innate human desire to conform to perceived social norms or please the moderator - frequently distorts data 1516. Similarly, "groupthink" often suppresses minority opinions, allowing a few dominant personalities to skew the entire trajectory of a session, a phenomenon heavily documented in Asch's conformity experiments 15. By transitioning to asynchronous, AI-moderated 1:1 environments, platforms remove these group dynamics. Participants routinely report feeling significantly more candid: Conveo's proprietary data indicates that 83% of respondents feel more open with AI interviewers than with human moderators, and 60% specifically cite a "lack of judgment" as a primary factor in their willingness to disclose sensitive information 415.

Furthermore, the integration of these platforms fundamentally rearchitects global strategic capabilities. Deep-listening tools operate natively across multiple languages simultaneously. A multinational research team can deploy a unified study in 50 languages concurrently 1220. The AI synthesizes this global data in real-time, identifying hyper-local cultural nuances and shifts in sentiment that a centralized, Western-centric human analyst team would likely overlook or misinterpret in translation 12. Specialized platforms also cater to distinct operational needs: Listen Labs employs complex emotional intelligence frameworks (rooted in Ekman's psychological models) to capture subtle signals via tone and word choice 1417; Brandwatch dominates macro social listening at scale 631; CleverX focuses explicitly on highly targeted B2B and executive recruitment 29; and tools like BuzzPulse-in-Q provide surgical, multilingual brand signal tracking by utilizing localized production hubs to bypass translation degradation 31.

The "Research Sandwich": The Hybrid Methodology Paradigm

Despite the unprecedented speed, scale, and capabilities of AI moderators, industry methodologists and leading researchers strictly caution against the wholesale elimination of human qualitative practitioners. The consensus best practice in 2026 is the deployment of a hybrid operational stack, frequently referred to in the industry as the "Research Sandwich" 1823.

In this architectural model, AI moderation is deployed to handle volume, breadth, and structured evaluation. It excels at high-velocity concept testing, massive multi-market discovery, usability diagnostics, and routine post-launch feature feedback 1824. However, human moderators remain indispensable for unstructured depth, exploratory generative research (where the parameters of the problem are highly ambiguous), highly sensitive topics (such as healthcare diagnostics, mental health, or corporate layoffs), and high-stakes executive B2B interviews where peer-to-peer rapport and dynamic empathy are absolute prerequisites 182434. A mature, highly functioning 2026 UX research program typically routes 70% to 80% of its volume through AI-moderated platforms to achieve massive scale and cost efficiency, strategically reserving the remaining 20% to 30% of the budget for human-moderated strategic depth, feeding both streams into a centralized insights repository 18.
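The routing logic of the hybrid stack can be expressed as a small decision rule. The sketch below is illustrative only: the `Study` fields and the `route` function are hypothetical, and the flags simply mirror the criteria named above (exploratory scope, sensitive topics, executive B2B interviews).

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Study:
    name: str
    exploratory: bool = False       # problem parameters are highly ambiguous
    sensitive_topic: bool = False   # e.g. healthcare, mental health, layoffs
    executive_b2b: bool = False     # peer-to-peer rapport is a prerequisite


def route(study: Study) -> str:
    """Send a study to a human moderator if any depth criterion applies."""
    if study.exploratory or study.sensitive_topic or study.executive_b2b:
        return "human"
    return "ai"


def ai_share(studies: List[Study]) -> float:
    """Fraction of the study portfolio routed to AI moderation."""
    routes = [route(study) for study in studies]
    return routes.count("ai") / len(routes)


# Hypothetical portfolio of ten studies.
portfolio = [
    Study("concept test A"),
    Study("concept test B"),
    Study("usability diagnostic"),
    Study("post-launch feature feedback"),
    Study("multi-market discovery"),
    Study("pricing page test"),
    Study("onboarding flow test"),
    Study("packaging preference test"),
    Study("mental health app discovery", sensitive_topic=True),
    Study("CFO buying-committee interviews", executive_b2b=True),
]

print({study.name: route(study) for study in portfolio})
print(f"AI-moderated share: {ai_share(portfolio):.0%}")
```

In this hypothetical portfolio, eight of ten studies go to AI moderation, which falls inside the 70% to 80% band described above. Note that the text frames the 20% to 30% human share in terms of budget, whereas this sketch counts studies, so the two are not directly interchangeable.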

The Viability and Documented Limitations of Synthetic Respondents

Perhaps the most aggressively marketed and fiercely debated development in the 2026 market research landscape is the mainstream commercialization of "Synthetic Respondents." Platforms such as Evidenza, NIQ BASES, and Synthetic Users generate highly sophisticated digital personas - powered by foundational Large Language Models (LLMs) and fine-tuned on vast demographic, psychographic, and behavioral datasets - that act as conversational proxies for actual human target audiences 5222536.

The commercial and logistical appeal of synthetic data is undeniable, offering an intoxicating proposition to budget-constrained enterprises. It compresses research timelines to under two minutes, entirely bypasses the friction and delays of human recruitment, and drives costs down to between $2 and $60 per interview - a fraction of the $100+ required for traditional organic human sourcing 3637. Synthetic panels allow brands to rapidly stress-test early concepts, pre-mortem hypotheses, debug survey logic, and simulate product reception prior to investing significant capital in live human fieldwork 222325.

The Academic Reality: Documenting the Hype and Methodological Vulnerabilities

However, as enterprise adoption has scaled, rigorous academic evaluation and peer-reviewed scrutiny have exposed profound structural limitations inherent to synthetic data, forcefully countering aggressive vendor marketing claims 56. While synthetic respondents are highly proficient at returning logical, statistically mean behaviors that align with broad societal expectations, they fundamentally fail at capturing the messiness, variance, and irrationality of genuine human physical experience 623.

A convergence of academic literature, methodological replication studies, and tier-one analyst reports defines the strict boundaries of synthetic data validity in 2026:

- Statistical Divergence and Coefficient Errors: Independent academic testing has revealed alarming discrepancies when comparing synthetic outputs to real human survey data. A seminal replication study presented at Quant UX Con by Paxton and Yang demonstrated that 48% of statistical coefficients estimated from ChatGPT-generated responses were significantly different from their human counterparts 56. More critically, the sign of the effect flipped 32% of the time, meaning the AI did not simply misestimate the magnitude of a behavioral relationship; in roughly a third of cases it reversed the direction of the relationship entirely 5.

- The Pollyanna Principle (Algorithmic Sycophancy): Out-of-the-box LLMs exhibit a built-in bias toward helpfulness and agreeableness, resulting in heavily skewed, overly positive feedback 7. In usability tests evaluating fictitious or fundamentally flawed concepts (e.g., a pancake-flavored toothpaste), untuned synthetic respondents routinely hallucinated a strong preference for the novel product, affirming terrible ideas due to algorithmic sycophancy 7. Furthermore, in tests assessing online course completion rates, synthetic users reported 100% completion, whereas real human data showed massive dropout rates, indicating that the models default to aspirational rather than actual behavior 7. Correcting this sycophancy requires aggressive, proprietary fine-tuning on historical, vertical-specific data 7.

- The "Bunching Effect" and Analytical Failure: A comprehensive joint study conducted by STRAT7 and Dunnhumby highlighted that while synthetic data can mimic basic brand awareness metrics, it collapses under the weight of complex segmentation and Key Driver Analysis 26. The generative models produced a pronounced 'bunching effect', where responses clustered heavily around the mean, lacking the natural extreme variations found in actual human populations 26. This uniformity leads to logical inconsistencies across related questions, rendering the data dangerous for strategic business predictions 26.

- The "Hallucinated Customer" and the Absence of Lived Experience: Synthetic agents offer highly credible, articulate responses derived from recognizing linguistic patterns across millions of texts, but they entirely lack the contextual friction of lived physical reality 523. A study by Merrill Research provided a stark example: synthetic electrical engineers generated textbook, highly ethical answers regarding the critical importance of "sustainability" when selecting microprocessor vendors 5. Conversely, organic human engineers revealed that sustainability protocols are immediately abandoned during supply chain disruptions in favor of pure parts availability 5. This critical operational insight - grounded in the stress of physical reality - was fundamentally invisible to the AI 5.
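Two of the failure modes listed above, sign reversal and the bunching effect, lend themselves to simple diagnostics. The sketch below is a minimal illustration: the coefficient and rating values are made-up example data, not results from the cited studies, and the function names are hypothetical. It counts raw sign flips only and does not test statistical significance.

```python
from statistics import pstdev
from typing import Sequence


def sign_flip_rate(human: Sequence[float], synthetic: Sequence[float]) -> float:
    """Share of paired coefficients whose direction differs between panels."""
    pairs = [(h, s) for h, s in zip(human, synthetic) if h != 0 and s != 0]
    flips = sum(1 for h, s in pairs if (h > 0) != (s > 0))
    return flips / len(pairs)


def spread_ratio(human: Sequence[float], synthetic: Sequence[float]) -> float:
    """Synthetic-to-human ratio of standard deviations; well below 1 suggests bunching."""
    return pstdev(synthetic) / pstdev(human)


# Illustrative regression coefficients for the same five predictors.
human_coefs = [0.40, -0.25, 0.10, 0.30, -0.15]
synthetic_coefs = [0.35, 0.20, 0.12, -0.05, -0.10]

# Illustrative 1-5 ratings for the same survey question.
human_ratings = [1, 1, 2, 3, 4, 5, 5, 5, 2, 4]
synthetic_ratings = [3, 3, 4, 3, 3, 4, 3, 3, 4, 3]

print(f"Sign-flip rate: {sign_flip_rate(human_coefs, synthetic_coefs):.0%}")
print(f"Spread ratio:   {spread_ratio(human_ratings, synthetic_ratings):.2f}")
```

A high sign-flip rate flags relationships whose direction the synthetic panel gets wrong, while a spread ratio far below 1 flags responses clustered around the mean. Either check requires a matched human sample to compare against.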

Validating Synthetic Data: Best Practices and the TSTR Framework

To mitigate these severe risks, leading enterprise data scientists reject the premise of fully replacing human panels and instead employ the Train-Synthetic, Test-Real (TSTR) framework, in which a model or analysis is built on synthetic data and then evaluated against held-out data from real human respondents 7. Furthermore, an independent ESOMAR study conducted by Dr. Minh Nguyen at Google found that synthetic data is only statistically valid when used for augmentation rather than total generation 39. The study reported that synthetic augmentation could improve confidence intervals by 23.8%, but strictly required an existing organic base sample of at least n=300 completed human respondents to anchor the statistical boundaries 39. Consequently, best practices dictate restricting synthetic data usage to a maximum of 5% of overall sample sizes, strictly utilizing it to boost small, hard-to-reach demographic groups or to pre-test survey logic, while organic human respondents remain the indispensable jury for final product validation 2326.
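A Train-Synthetic, Test-Real check can be sketched in a few lines: fit the same model once on synthetic rows and once on real rows, then score both on held-out real data. The sketch below is illustrative only; the nearest-centroid classifier, the toy feature values, and all function names are assumptions chosen to keep the example self-contained. The augmentation guard reads the 5% cap as a share of the total sample, which is one possible interpretation of the guidance above.

```python
from statistics import mean
from typing import Dict, List, Tuple

Row = Tuple[Tuple[float, ...], str]  # (features, label)


def fit_centroids(rows: List[Row]) -> Dict[str, Tuple[float, ...]]:
    """Nearest-centroid 'model': one mean feature vector per label."""
    by_label: Dict[str, List[Tuple[float, ...]]] = {}
    for features, label in rows:
        by_label.setdefault(label, []).append(features)
    return {label: tuple(mean(col) for col in zip(*xs)) for label, xs in by_label.items()}


def predict(centroids: Dict[str, Tuple[float, ...]], x: Tuple[float, ...]) -> str:
    """Return the label whose centroid is closest in squared distance."""
    return min(centroids, key=lambda lbl: sum((a - b) ** 2 for a, b in zip(x, centroids[lbl])))


def accuracy(centroids: Dict[str, Tuple[float, ...]], rows: List[Row]) -> float:
    return sum(predict(centroids, x) == y for x, y in rows) / len(rows)


def max_synthetic_rows(n_human: int, cap_pct: int = 5, min_base: int = 300) -> int:
    """Largest synthetic count keeping synthetic share of the total at or under cap_pct."""
    if n_human < min_base:
        return 0  # below the organic base sample, do not augment at all
    return (cap_pct * n_human) // (100 - cap_pct)


# Toy features: (price sensitivity, usage frequency); labels: buy / skip.
real_train = [((2, 8), "buy"), ((3, 7), "buy"), ((2, 6), "buy"),
              ((8, 2), "skip"), ((7, 3), "skip"), ((6, 2), "skip")]
real_test = [((3, 8), "buy"), ((7, 1), "skip"), ((5, 6), "buy"), ((6, 4), "skip")]
# Synthetic rows skewed toward "buy", mimicking an overly agreeable panel.
synthetic_train = [((4, 6), "buy"), ((6, 5), "buy"), ((7, 4), "buy"), ((5, 5), "buy"),
                   ((9, 1), "skip"), ((8, 1), "skip")]

tstr = accuracy(fit_centroids(synthetic_train), real_test)  # train synthetic, test real
trtr = accuracy(fit_centroids(real_train), real_test)       # baseline: train real, test real

print(f"TSTR accuracy: {tstr:.2f}  TRTR accuracy: {trtr:.2f}  gap: {trtr - tstr:.2f}")
print("Max synthetic rows for 300 human completes:", max_synthetic_rows(300))
```

The gap between the two accuracies is the quantity of interest: the larger it is, the less the synthetic data can be trusted as a stand-in for the real population on that task.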

Comparative Trade-offs: Synthetic vs. Human Panel Respondents

| Analytical Dimension | Synthetic Panel Respondents (AI Personas) | Human Panel Respondents (Organic Users) | Methodological Risk & Impact Assessment |
|---|---|---|---|
| Cost & Speed | $2 - $60 per interview; insights generated in under 2 minutes with zero scheduling logistics 3637. | $100 - $1,500+ per interview; requires weeks for recruitment, incentives, and coordination 51836. | Advantage: Synthetic. Offers unmatched operational efficiency for early-stage stress testing, logic debugging, and rapid hypothesis formulation. |
| Statistical Representation | High risk of the "bunching effect" (lack of extreme variance) and algorithmic sycophancy (Pollyanna Principle) 726. | Natural distribution of responses, including irrational behaviors, cognitive dissonance, and contradictions 623. | Advantage: Human. Synthetic data frequently fails Key Driver Analysis; 48% of AI coefficients can mathematically misalign with human truths 526. |
| Demographic Bias | Mirrors the biases inherent in broad LLM training data; struggles heavily with deeply niche, evolving, or culturally nuanced populations 739. | Can be selectively and programmatically recruited to ensure exact quotas, though subject to traditional panel conditioning 2639. | Advantage: Human. Synthetic is best utilized for data augmentation (expanding existing, verified subgroups) rather than wholesale generation 39. |
| Emotional & Contextual Depth | Generates highly logical, coherent text but lacks lived physical experience (e.g., environmental distractions, hardware limitations) 523. | Capable of rage-clicking, cultural misunderstanding, and providing unexpected tangents crucial for breakthrough insights 523. | Advantage: Human. Synthetic users represent a logical, idealized model; humans provide the necessary friction to validate real-world product survival 23. |

Enterprise Application: Real-World AI Integration Case Studies

The theoretical capabilities of 2026 AI market research platforms are most effectively validated through the lens of large-scale enterprise deployments. Multinational organizations transitioning from legacy systems to fully embedded, AI-native intelligence architectures have documented substantial competitive advantages across supply chain forecasting, concept validation, and targeted consumer engagement 40.

Unilever: Scaling Predictive Innovation and Brand Safety

Unilever currently manages an expansive portfolio of over 500 active AI projects globally, treating artificial intelligence not as an incremental optimization tool, but as a foundational catalyst for business transformation 4127. In the realm of product discovery, Unilever bypasses traditional, time-intensive trial-and-error laboratory research. By utilizing advanced AI platforms alongside an $8 million investment and Microsoft's managed DFT (Density Functional Theory) supercomputing services, Unilever predicts the functionality of new ingredients and simulates the texture of animal-based dairy mimics, compressing decades of formulation work into mere days 41.

Crucially, to protect brand integrity while scaling generative content and synthetic insights, Unilever employs sophisticated guardrails such as "Digital Product Twins" and a proprietary Brand Safe AI training repository termed "Brand DNAi." This ensures that internal AI models only source information from approved data pools that perfectly reflect the brand's established visual identity, values, and approved strategies 28. Furthermore, predictive AI market intelligence is integrated directly into the physical supply chain; the deployment of sophisticated weather data analysis systems in Sweden improved demand forecasting accuracy for ice cream products by 10%, optimizing production schedules dynamically against complex, real-time environmental variables 41.

AB InBev: Agentic Insights and Manufacturing Precision

Anheuser-Busch InBev, the world's largest brewing conglomerate, has aggressively leveraged generative AI to reshape both its consumer insights workflow and its global manufacturing pipeline 27444546. Confronting the massive operational bottleneck of extracting manual insights from vast, unstructured consumer datasets (such as monthly Kantar brand guidance tracking data), AB InBev deployed "Max," a bespoke generative AI assistant. Max autonomously ingests databases, conducts complex driver analysis, and generates pixel-perfect, boardroom-ready strategic reports in a matter of hours - entirely automating a previously labor-intensive, multi-day cycle 46. This workflow automation allows the AB InBev insights team to pivot away from data processing to focus exclusively on high-level strategic guidance and creative intervention 46.

Simultaneously, AB InBev utilized Google Cloud Machine Learning engines and partnered with Pluto7 to optimize its highly complex beer filtration process (the K-Filter). By feeding historical data and unpredictable variables - such as fluid dynamics, turbidity, and pressure fluctuations - into a TensorFlow ML engine, the company identified patterns invisible to basic logic meters. This intervention improved barrelage per run by an astounding 60%, delivering massive cost efficiencies while strictly protecting product quality and taste constraints 2948. These enterprise implementations demonstrate how fully integrated AI ecosystems seamlessly fuse front-end market intelligence with back-end operational execution.

Global Geopolitical Topography: Regulatory Frameworks and Cultural Constraints

As AI market research technology permeates global enterprise operations, a monolithic, borderless deployment strategy is no longer legally or operationally viable 49. The efficacy of an AI research platform in 2026 is heavily dictated by a fractured landscape of regional legal architectures, stringent data privacy laws, and deeply ingrained cultural attitudes toward automation.

The EU AI Act: The Formidable Compliance Deadline of 2026

The European Union Artificial Intelligence Act represents the most formidable, highly structured regulatory constraint on global market research strategies. Entering full enforcement for high-risk systems in August 2026 (subject to final delayed ratifications extending some provisions to 2027/2028), the Act fundamentally shifts AI from a technological issue to a stringent, heavily penalized product governance mandate 8930. Crucially, the legislation applies extraterritorially; any multinational entity processing EU citizen data or deploying AI systems that target the EU market must comply, regardless of where the corporation is headquartered 89.

AI platforms utilized for sophisticated market research - particularly those deep-listening tools employing biometric identification, emotion recognition, or those processing data that impacts sensitive areas like employment, credit assessment, or education - frequently trigger the Act's "High-Risk" categorization (specifically under Annex III) 893051. To legally deploy these tools, companies must execute rigorous pre-market conformity assessments, implement formal, continuous risk management systems, guarantee robust human oversight mechanisms, and maintain exhaustive Article 11 technical documentation detailing the provenance of training data and model design specifications 8951. Failure to comply triggers immediate market access restrictions, mandatory product recalls, and severe financial penalties. Consequently, U.S. and global research firms are urgently scrambling to audit their technology stacks, map supplier responsibilities via platforms like EU SEND, and ensure compliance ahead of the late-2026 enforcement cliff 851.

India's DPDP Act: Principle-Based Flexibility and the Consent Dilemma

Contrasting sharply with the highly prescriptive, risk-tiered nature of the European framework, India's Digital Personal Data Protection (DPDP) Act (slated for full effectiveness by May 2027) adopts a flexible, principles-based approach to data governance 1331. While the EU categorizes specific AI technologies by distinct risk levels, India governs through overarching principles, deliberately avoiding the over-regulation of rapidly evolving machine learning systems 1331.

A distinctive feature of the Indian framework that directly impacts market research architecture is the statutory creation of "Consent Managers" - specialized intermediaries that allow users to systematically opt in their data for AI training and processing, acting as a single point through which consent-based data flows 31. Furthermore, where the EU's GDPR foregrounds a robust right to erasure, the DPDP Act foregrounds the right to withdraw consent to data processing. This legal distinction alters how international research firms must architect their data lakes: according to the cited analyses, the primary obligation is to identify and halt processing of data collected on the basis of consent rather than to delete it wholesale, which changes the calculus for synthetic data augmentation and global model training when operating in South Asia 3153.

The Cultural Variable: Integration Acceptance vs. Public Anxiety

Legal frameworks represent only half of the deployment equation; cultural attitudes toward data privacy and machine intelligence serve as a hard business variable determining AI adoption success. An AI research strategy that achieves seamless integration and high participant engagement in Tokyo will likely face fierce consumer resistance in Berlin, regardless of legal compliance 49.

Public sentiment tracking data from 2025 and 2026 highlights these deep demographic fault lines. In the United States, public anxiety remains rampant, with 64% of Americans expressing fear that AI will trigger massive job displacements over the next two decades 32. In stark contrast, emerging economies demonstrate significantly higher rates of workplace integration; over 80% of employees in India, China, Nigeria, and the UAE report using AI at work on a regular basis, indicating a profound comfort with technological augmentation 32.

Japan represents the most striking global anomaly regarding AI acceptance. Rooted in Shinto animism - which attributes a form of spirit to objects, thereby reducing the psychological barrier between humans and non-biological entities - and driven by severe demographic aging pressures, Japan views automation not as an economic threat, but as a societal necessity 49. An overwhelming 85% of Japanese citizens express comfort with utilizing robot caregivers. Furthermore, the cultural emphasis on wa (harmony) positions AI agents as collaborative companions rather than disruptive replacements 49. Consequently, AI-moderated qualitative platforms and deep-listening sentiment tools face comparatively little cultural friction in Japan, making the nation a primary testing ground for advanced, agentic market research technologies 49.

Conclusion: Strategic Imperatives for the 2026 Landscape

The market research industry of 2026 has been permanently redefined by an irreversible reliance on AI-native platforms, fundamentally altering how global organizations source, synthesize, and act upon consumer intelligence 2. The corporations commanding the greatest competitive advantage are those actively transitioning from fragmented, single-point generative tools to cohesive, continuous agentic systems that orchestrate the entire research lifecycle, effectively closing the gap between raw data and executive strategy 1.

However, technological capability alone is insufficient to guarantee operational success. Navigating this era requires extreme methodological discipline. The immense logistical and financial allure of low-cost synthetic data must be aggressively counterbalanced by rigorous validation techniques - most notably the Train-Synthetic, Test-Real (TSTR) protocol and the strategic capping of synthetic augmentation at 5% of overall samples - to prevent catastrophic strategic failures born of algorithmic hallucinations, sycophancy, and inherent bias 726. Furthermore, global enterprises must entirely abandon monolithic, borderless research strategies in favor of geographically nuanced deployments. Success requires deftly navigating the prescriptive rigidity and high-risk classifications of the EU AI Act, the evolving, consent-driven frameworks of India's DPDP Act, and the highly polarized cultural attitudes toward automation across Western and Asian markets 81349. Ultimately, the future of market research belongs to sophisticated teams that leverage agentic AI for unparalleled global scale and speed, while fiercely guarding the human judgment required for deep contextual interpretation, empathetic oversight, and final validation 4112034.