AI Image and Video Generation in Creative Marketing

The landscape of generative artificial intelligence for visual media has transitioned from experimental applications to foundational enterprise infrastructure. As of mid-2026, image and video generation models are not merely augmenting creative workflows; they are fundamentally restructuring the economic architecture of marketing, advertising, and creative production. The convergence of multimodal generation, real-time agentic orchestration, and enterprise-grade licensing agreements has shifted the market focus from basic content creation to predictive creative intelligence and workflow automation.

The analysis indicates that this technological maturation is producing measurable impacts on advertising performance, labor market dynamics, and global intellectual property frameworks. While early generative models were evaluated on their novelty and visual fidelity, the current generation of tools is measured by application programming interface reliability, temporal consistency, brand adherence, and demonstrable return on ad spend.

Technological Maturation of Visual Generation Architectures

The technical capabilities of visual generation models have advanced rapidly, characterized by architectural shifts from pure text-to-video models to complex, multimodal systems capable of joint audio-visual generation and precise structural control.

This evolution has substantially reduced many of the historical weaknesses of AI-generated video, such as temporal instability, anatomical distortion, and physics rendering errors.

Evolution of Video Generation Systems

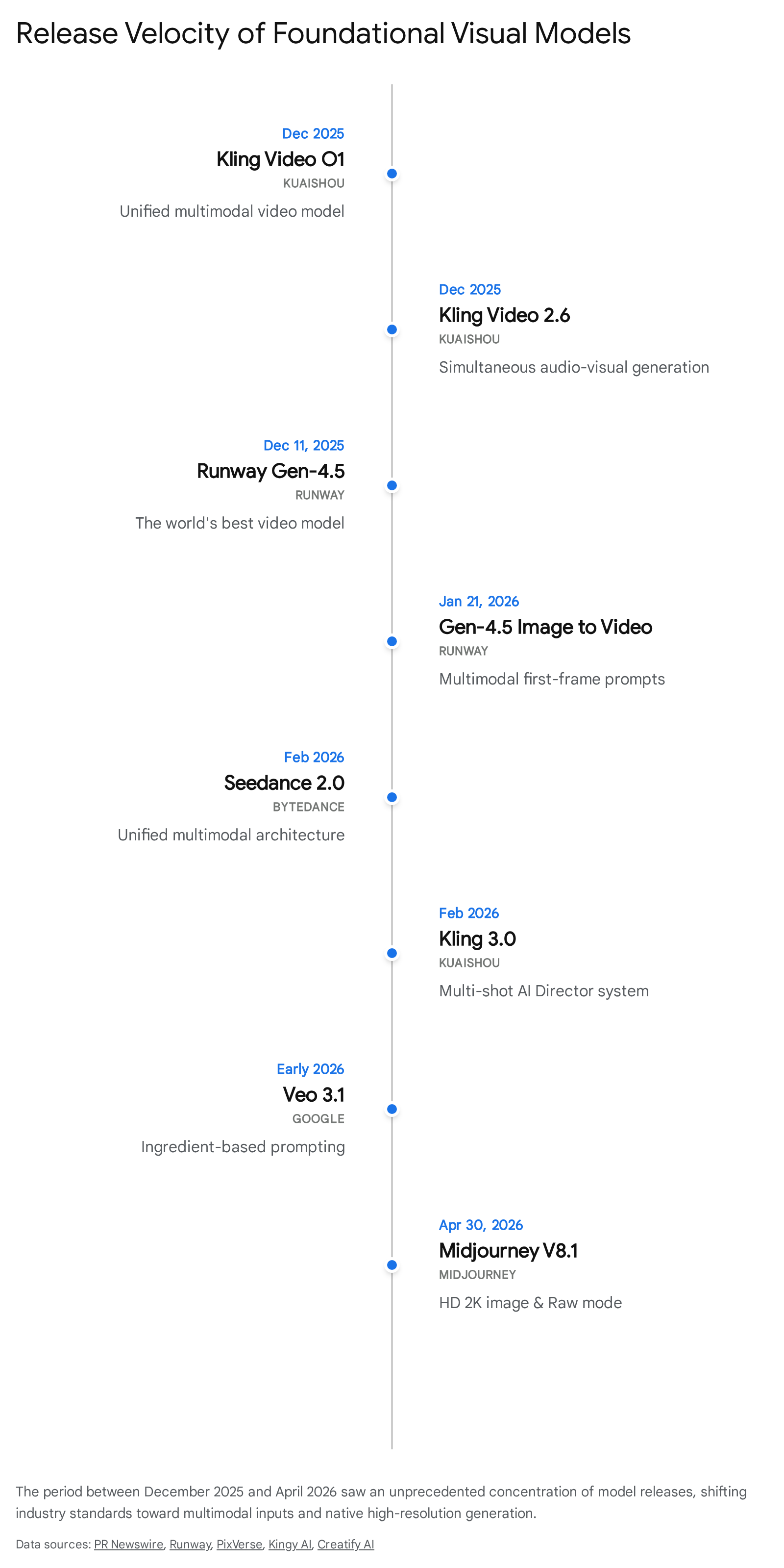

Leading platforms have moved beyond simple diffusion models to incorporate advanced spatiotemporal networks. For example, Kling AI, developed by the Chinese technology company Kuaishou, utilizes a diffusion-based transformer architecture enhanced by a proprietary three-dimensional variational autoencoder network 1. This architecture allows for synchronous spatiotemporal compression, significantly improving video quality while maintaining training efficiency 1. The release of Kling 3.0 in February 2026 introduced native 4K resolution at 60 frames per second, a multi-shot sequencing system, and enhanced character consistency 23. The model's computational efficiency enables the accurate capture of complex motion and drastic scene changes 1. The commercial viability of this architecture is evident in Kuaishou's financial disclosures; by December 2025, Kling AI achieved an annualized revenue run rate of $240 million, serving over 60 million creators globally 34.
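The sketch below makes "synchronous spatiotemporal compression" concrete. It estimates how much a 3D VAE could shrink a 4K, 60 fps clip before the diffusion transformer processes it. Several values are assumptions, not disclosed Kling 3.0 figures: the 4x temporal factor, the 8x spatial factor per side, and the 16 latent channels. They are typical of published video VAEs. The sketch also ignores the extra first frame that some causal video VAEs keep.

```python
# Back-of-envelope estimate of spatiotemporal (3D VAE) compression.
# ASSUMPTIONS: 4x temporal and 8x spatial downsampling with 16 latent
# channels. These are illustrative values, not published Kling 3.0 figures.


def latent_shape(frames, height, width, t_factor=4, s_factor=8, latent_channels=16):
    """Return (frames, height, width, channels) of the compressed latent."""
    return (frames // t_factor, height // s_factor, width // s_factor, latent_channels)


def compression_ratio(frames, height, width, rgb_channels=3, **vae_kwargs):
    """Number of raw RGB values divided by number of latent values."""
    f, h, w, c = latent_shape(frames, height, width, **vae_kwargs)
    return (frames * height * width * rgb_channels) / (f * h * w * c)


# A 5-second 4K clip at 60 fps: 300 frames of 2160 x 3840 pixels.
clip = {"frames": 5 * 60, "height": 2160, "width": 3840}
print(latent_shape(**clip))       # (75, 270, 480, 16)
print(compression_ratio(**clip))  # 48.0
```

Under these assumptions, the model works on a 75 x 270 x 480 latent grid instead of 300 x 2160 x 3840 pixels. Transformer cost grows with the number of positions it attends over, so this reduction is what makes long, high-resolution clips tractable.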

Meanwhile, ByteDance's Seedance 2.0 model has emerged as a dominant force in commercial video production. Seedance 2.0 holds the top Elo ratings on the Artificial Analysis Video Arena for both text-to-video and image-to-video generation, and it uses a unified multimodal architecture 2. It natively supports joint audio-video generation, which removes the need for separate post-production sound synchronization, and it produces clips up to 30 seconds long 6. Generating audio and video within a single latent space is a significant technical hurdle the industry cleared in 2026, and it substantially shortens post-production timelines 7.

Google's Veo 3.1 has established a dominant position in user adoption, capturing an estimated 96.4% of model share on aggregate generation platforms by early 2026 8. Veo 3.1 excels in structured prompting, which provides enterprise users with strict compositional control over camera positioning, element placement, and movement 6. Conversely, OpenAI's Sora 2 remains highly regarded for cinematic visual storytelling but has shifted primarily toward licensed, enterprise-tier applications rather than open consumer access 956.
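Structured prompting can be approximated by keeping each compositional decision in its own field and serializing the fields in a fixed order. The `ShotSpec` class and its field names are hypothetical and are not Google's Veo schema. The resulting string would be passed to whatever generation interface a team actually uses.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ShotSpec:
    """Hypothetical structured prompt for one shot (not Google's Veo schema)."""

    subject: str
    camera: str
    placement: str
    motion: str
    style: List[str] = field(default_factory=list)

    def to_prompt(self) -> str:
        # A fixed field order keeps prompts comparable across variants.
        parts = [
            f"Subject: {self.subject}.",
            f"Camera: {self.camera}.",
            f"Placement: {self.placement}.",
            f"Motion: {self.motion}.",
        ]
        if self.style:
            parts.append("Style: " + ", ".join(self.style) + ".")
        return " ".join(parts)


shot = ShotSpec(
    subject="a red running shoe on wet asphalt",
    camera="low-angle tracking shot",
    placement="shoe centered, logo facing the lens",
    motion="slow push-in as rain falls",
    style=["high contrast", "night"],
)
print(shot.to_prompt())
```

Holding every field fixed except one, such as `camera`, turns prompt variation into a controlled experiment rather than free-form rewording.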

Runway has positioned its Gen-4.5 model specifically for professional post-production and creative editing. Unlike models optimized purely for one-shot text-to-video generation, Gen-4.5 provides timeline-like controls and keyframe functionality, allowing editors to direct camera movements, animate specific regions of an image, and maintain visual consistency across multiple clips 7. While independent benchmark testing occasionally scores Runway lower on raw visual fidelity compared to Kling or Seedance, its deep integration into existing non-linear editing workflows makes it a preferred tool for professional filmmakers and agency editors 67.

Advancements in Image Generation Systems

Image generation models have similarly prioritized workflow integration and output resolution. Midjourney transitioned from its V6 architecture to the V8 series in early 2026, entirely rewriting its codebase to enable faster iteration and a fivefold increase in generation speeds 89. The release of Midjourney V8.1 in April 2026 introduced native 2K high-definition support without the need for post-generation upscaling, alongside a raw rendering mode to increase prompt adherence by removing default aesthetic styling 1510.

These updates reflect a broader industry demand for sharper source images and controlled prompt results necessary for professional concept art and marketing visuals 15. Midjourney also replaced its legacy character reference systems with comprehensive cross-reference capabilities, vastly improving photorealism and character consistency across disparate outputs 11.

Shift Toward Multimodal Inputs and Video-to-Video Transformation

The era of isolated text-to-video generation is being superseded by multimodal inputs. User behavior analysis from platforms hosting hundreds of thousands of users indicates that while text-to-video remains the entry point, over 32% of generations now rely on image-to-video pathways to guarantee visual control 8. Models now simultaneously accept combinations of text, reference images, video clips, and audio tracks to synthesize coherent outputs 7. This allows brands to upload product photos, competitor reference videos, and royalty-free music to generate professional product demonstrations without traditional storyboarding 7.

Furthermore, video-to-video capabilities have become critical for professional workflows. These tools preserve the underlying motion, timing, and structural physics of original footage while applying entirely new visual treatments 12. Models like WAN AI provide professional-grade style transfers that maintain fluid, natural motion, allowing for the cinematic transformation of raw footage 12. This capability is essential for commercial projects where temporal stability and motion logic cannot be compromised 13. The integration of these models into comprehensive enterprise platforms ensures that marketing teams can maintain brand consistency across localized or personalized variations 2021.

| Model Name | Primary Developer | Core Capability Focus | Notable 2026 Technical Features | Benchmark Standing |
|---|---|---|---|---|
| Seedance 2.0 | ByteDance | Integrated commercial video. | Unified multimodal inputs; joint audio-video generation. | Top Elo ratings for text-to-video and image-to-video 26. |
| Kling 3.0 | Kuaishou | Cinematic shorts and motion. | 4K 60fps native; 3D VAE network; multi-shot AI director. | Highest visual fidelity scores in independent testing 137. |
| Veo 3.1 | Google | Structured programmatic video. | Ingredient-based compositional control; high prompt adherence. | 96.4% model share on aggregate generation platforms 68. |
| Runway Gen-4.5 | Runway AI | Post-production integration. | Timeline controls; keyframe animation; partial rendering. | Unmatched precision for traditional video editors 67. |
| Midjourney V8.1 | Midjourney | High-definition static imagery. | Native 2K generation; Raw mode; rewritten codebase. | Industry standard for cinematic styling and photorealism 151011. |
| Sora 2 | OpenAI | Brand IP narrative storytelling. | High-fidelity physics simulation; indemnified generation. | Deep enterprise ecosystem integration 96. |

Advertising Performance Metrics and Creative Velocity

The deployment of generated creative assets in digital marketing has produced a robust dataset of performance benchmarks across major advertising networks. By 2026, sufficient longitudinal data exists to measure the impact of artificial intelligence creative on full-funnel metrics, revealing nuanced strengths and specific limitations regarding consumer intent 14.

Top-of-Funnel Click-Through Rate Enhancements

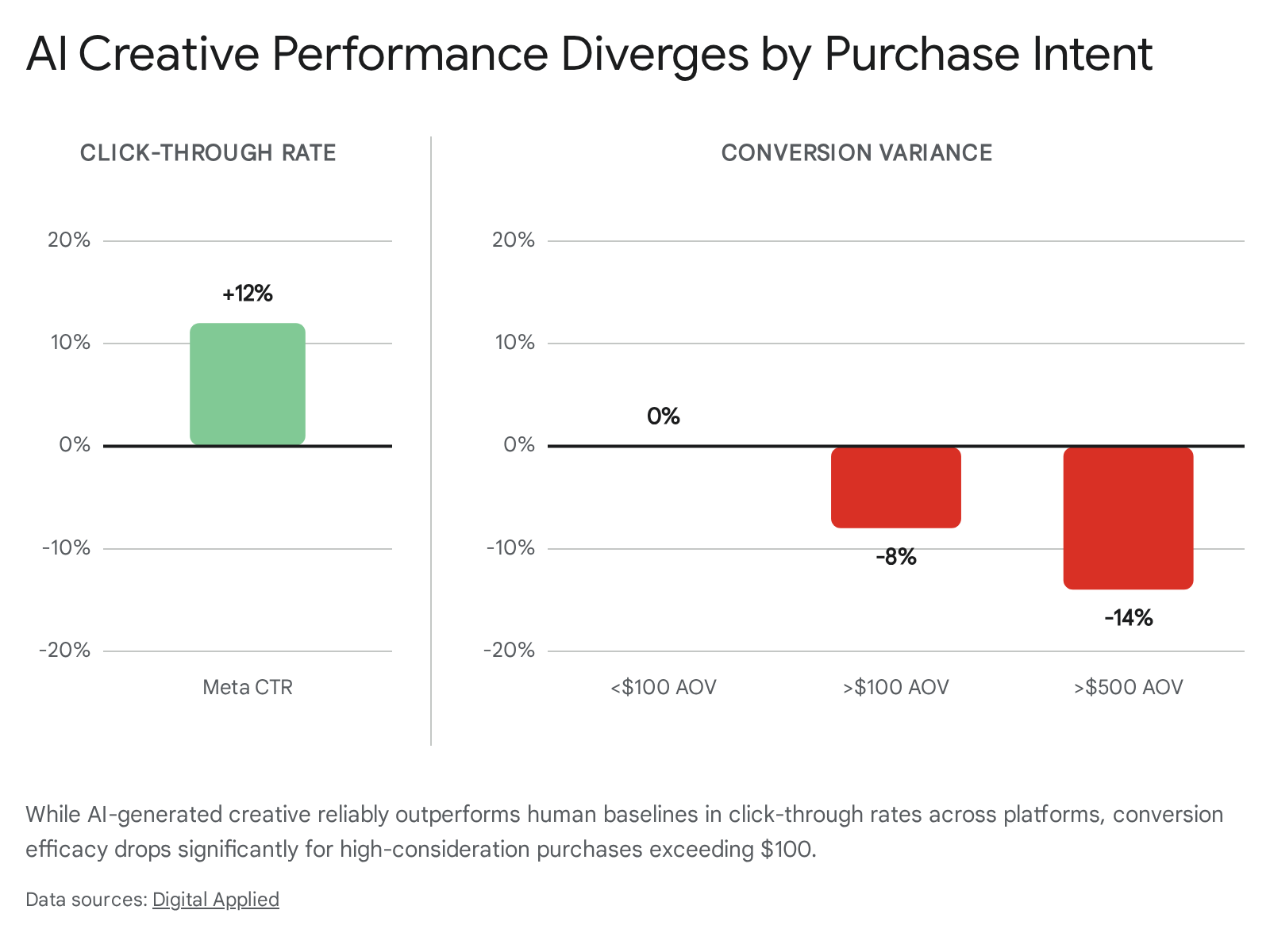

Independent split testing across platforms such as Meta, Google, and TikTok shows that AI-generated video and imagery consistently outperform human-created baselines at the top of the marketing funnel. Across datasets comprising over 50,000 ad variations, generated creative achieved an average 12% higher click-through rate on Meta platforms 14. A study by Columbia University and Taboola analyzed over 500 million impressions. In it, generated advertisements achieved an average click-through rate of 0.76%, compared with 0.65% for human-made advertisements 15. The study also found that the best-performing generated advertisements incorporated human trust cues, such as clear facial representations, without appearing overtly synthetic 15.
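The two results above are reported differently. The Meta figure is a relative lift, while the Columbia/Taboola figures are raw click-through rates. The short sketch below converts the raw rates into a relative lift so the two sit on the same footing. The function name is illustrative.

```python
def relative_lift(treatment_rate: float, control_rate: float) -> float:
    """Relative change of treatment over control (0.10 means +10%)."""
    return (treatment_rate - control_rate) / control_rate


generated_ctr = 0.0076  # 0.76% average CTR for generated ads
human_ctr = 0.0065      # 0.65% average CTR for human-made ads

gap_points = (generated_ctr - human_ctr) * 100
print(f"absolute gap: {gap_points:.2f} percentage points")
print(f"relative lift: {relative_lift(generated_ctr, human_ctr):.1%}")
```

The 0.11 percentage-point gap corresponds to a relative lift of roughly 16.9%. It is not strictly comparable to the 12% Meta average, because the underlying datasets differ.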

This advantage is primarily attributed to the velocity of creative testing. Models allow marketing teams to rapidly generate and test visual "hooks" - the crucial first three seconds of a video advertisement 24. The hook is the highest-leverage variable in user engagement. Testing 20 to 50 variations per campaign cycle, rather than the traditional 3 to 5, therefore substantially raises the probability of discovering a high-performing asset 2425. Vertical video formats (9:16 aspect ratio) now account for over 43% of generated outputs, which aligns closely with algorithm-driven discovery feeds like TikTok and Instagram Reels 825.
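The probability argument above can be sketched with a simple model. Suppose each variant independently has probability p of being a top performer. Then the chance that at least one of n variants is a winner is 1 - (1 - p)^n. The 5% value for p is an assumption chosen for illustration. Real variants are neither independent nor perfectly measured.

```python
def p_at_least_one_winner(n_variants: int, p_winner: float) -> float:
    """P(at least one of n independent variants is a top performer)."""
    return 1.0 - (1.0 - p_winner) ** n_variants


P_WINNER = 0.05  # ASSUMED chance that any single hook is a top performer

for n in (3, 5, 20, 50):
    print(f"{n:>2} variants: {p_at_least_one_winner(n, P_WINNER):.0%}")
```

Under these assumptions, 3 to 5 variants give roughly a 14% to 23% chance of at least one winner, while 20 to 50 variants give roughly 64% to 92%. There is a trade-off: spreading a fixed testing budget over more variants leaves fewer impressions per variant, which raises the risk of crowning a false winner.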

Conversion Metrics and the High-Consideration Gap

While generated creative excels at capturing attention, conversion data reveals limitations for high-consideration purchases. For e-commerce products with an average order value under $100, generated creative has reached full performance parity with human-produced advertisements 14. However, for products with an average order value above $100, generated advertisements convert 8% worse than human creative 14. This conversion gap widens to 14% for products over $500, and reaches an 18% deficit in business-to-business lead generation 14.

The data suggests that while these models excel at intent qualification for impulse buys, users navigating complex, high-value purchasing decisions require deeper trust signals that synthetic content currently struggles to provide 14. Consequently, marketers are deploying generative tools for the 60% to 70% of creative volume that drives direct response at scale, while reserving human creative direction and traditional production for flagship campaigns and high-consideration trust building 1426.

| Marketing Funnel Metric | Performance vs. Human Baseline | Primary Driver of Difference | Optimal Use Case |
|---|---|---|---|
| Click-Through Rate (Meta) | 12% higher | Rapid testing of visual hooks and formatting. | Top-of-funnel awareness; scroll-stopping social media assets 14. |
| Conversion (AOV < $100) | Parity (no difference) | Efficient scaling of direct-response messaging. | Low-consideration consumer goods; impulse e-commerce 14. |
| Conversion (AOV $100 to $500) | 8% lower | Lack of the deep trust signals required for expensive items. | Mid-tier retail; requires human augmentation to close sales 14. |
| Conversion (AOV > $500) | 14% lower | Complex buyer journeys heavily reliant on brand authenticity. | Not recommended for bottom-of-funnel conversion without significant human polish 14. |
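A campaign planner might encode these benchmark tiers as a simple lookup to decide where generated creative is appropriate. The tier values come from the figures cited above, including the 18% deficit reported for business-to-business lead generation. Three things are assumptions: the `-0.05` tolerance, the function names, and the treatment of values exactly at $100 or $500.

```python
def expected_conversion_gap(aov_usd: float, b2b_lead_gen: bool = False) -> float:
    """Relative conversion of generated vs. human creative (-0.08 = 8% worse).

    Tier values follow the benchmarks cited above; bucketing values exactly
    at $100 or $500 into the lower tier is an assumption.
    """
    if b2b_lead_gen:
        return -0.18
    if aov_usd > 500:
        return -0.14
    if aov_usd > 100:
        return -0.08
    return 0.0


def recommend_creative(aov_usd: float, b2b_lead_gen: bool = False,
                       tolerance: float = -0.05) -> str:
    """Hypothetical routing rule: generated creative unless the gap exceeds tolerance."""
    gap = expected_conversion_gap(aov_usd, b2b_lead_gen)
    return "generated" if gap >= tolerance else "human-led"


print(recommend_creative(39))                     # generated
print(recommend_creative(250))                    # human-led
print(recommend_creative(60, b2b_lead_gen=True))  # human-led
```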

Economic Reconfiguration of Creative Agencies

The integration of generative models has catalyzed a structural reorganization of the advertising and creative agency landscape. The historical agency business model, heavily reliant on billing for manual execution and high labor hours, is facing severe margin compression and existential pressure as production cycles shrink from weeks to minutes 2716.

Margin Compression and Changing Pricing Models

The efficiency gains introduced by these technologies have fundamentally altered the cost structure of creative delivery. Surveys conducted by Adobe in 2026 reveal that the average creative professional spends nearly 19 hours per week collaborating with artificial intelligence, resulting in an average time savings of 17 hours per week on production. Over 94% of creatives report generating assets faster, empowering teams to iterate more frequently and respond to viral trends immediately.

Consequently, the cost per deliverable for agencies has dropped by an estimated 40% to 70% 27. Agencies that succeed in this environment are actively repricing their services; by pricing 30% to 50% below their 2023 retainers, they maintain market competitiveness while actually running at higher gross margins due to the drastically reduced delivery costs 27. Agencies attempting to maintain traditional headcount-based retainers are routinely being undercut by automated competitors 27.
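The repricing claim follows from simple margin arithmetic. Let m be the old gross margin, d the fractional cut in delivery cost, and r the fractional price cut. The new margin is then 1 - (1 - m)(1 - d) / (1 - r), which exceeds m exactly when d > r. The 30% starting margin below is a hypothetical baseline, not a figure from the cited sources.

```python
def new_gross_margin(old_margin: float, cost_cut: float, price_cut: float) -> float:
    """Gross margin after cutting delivery cost and price by the given fractions.

    Prices are normalized so the old price is 1.0; delivery cost is then
    (1 - old_margin) of that price before the cut.
    """
    new_cost = (1.0 - old_margin) * (1.0 - cost_cut)
    new_price = 1.0 - price_cut
    return 1.0 - new_cost / new_price


OLD_MARGIN = 0.30  # HYPOTHETICAL 2023 gross margin

# Cost falls 60%, price falls 40%: margin rises to about 53%.
print(f"{new_gross_margin(OLD_MARGIN, cost_cut=0.60, price_cut=0.40):.0%}")
# Cost falls 40%, price falls 50%: margin shrinks to 16%.
print(f"{new_gross_margin(OLD_MARGIN, cost_cut=0.40, price_cut=0.50):.0%}")
```

In other words, the 30% to 50% price cuts described above raise margins only when delivery costs fall by more than the price cut.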

The Rise of Agentic Orchestration

The agency of 2026 is defined less by isolated content generation and more by "agentic orchestration" 3017. Rather than utilizing models merely as brainstorming assistants, high-performing agencies are deploying autonomous agents to manage complex, end-to-end workflows. Gartner research predicts that 40% of enterprise applications will leverage task-specific agents by 2026, compared to less than 5% in 2025 18.

Tools such as Claude Code have emerged to facilitate this transition. They let marketing teams work directly with file systems and application programming interfaces from the command line to orchestrate multi-model workflows 17. This agentic capability enables small teams to match the output capacity of traditionally large agencies. The creative studio shifts from a manual assembly line to an automated pipeline that produces hyper-personalized, platform-specific outputs at scale 2517. Under this model of creative intelligence, content is not just generated but predicted, adapted, and optimized automatically based on performance data 2516.
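A minimal sketch of the fan-out step in such a pipeline works in two stages. It first expands one brief into every combination of hook, locale, and placement. It then keeps the best hooks for the next round based on observed click-through rates. The placement table, `RenderJob`, and the helper names are hypothetical. The model calls an agent would make for each job are deliberately left out, since they depend on the provider.

```python
from dataclasses import dataclass
from typing import Dict, List

# HYPOTHETICAL placement table; aspect ratios are common defaults, not
# official platform requirements.
PLACEMENTS: Dict[str, str] = {
    "tiktok": "9:16",
    "instagram_reels": "9:16",
    "youtube": "16:9",
    "meta_feed": "1:1",
}


@dataclass(frozen=True)
class RenderJob:
    placement: str
    aspect_ratio: str
    hook: str
    locale: str


def fan_out(hooks: List[str], locales: List[str],
            placements: Dict[str, str] = PLACEMENTS) -> List[RenderJob]:
    """Expand one brief into every hook x locale x placement combination."""
    return [
        RenderJob(name, ratio, hook, locale)
        for hook in hooks
        for locale in locales
        for name, ratio in placements.items()
    ]


def top_hooks(ctr_by_hook: Dict[str, float], keep: int = 2) -> List[str]:
    """Keep the hooks with the highest observed CTR for the next round."""
    return sorted(ctr_by_hook, key=ctr_by_hook.get, reverse=True)[:keep]


jobs = fan_out(["hook A", "hook B", "hook C"], ["en-US", "de-DE"])
print(len(jobs))  # 24 render jobs from 3 hooks x 2 locales x 4 placements
# Observed CTRs would come from ad-platform reporting; these are illustrative.
print(top_hooks({"hook A": 0.011, "hook B": 0.007, "hook C": 0.009}))
```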

Consolidation and Specialization Archetypes

In response to these pressures, the agency sector is fracturing into distinct surviving archetypes. Generalist content shops and pure media-buying firms lacking proprietary measurement frameworks are struggling 27. The market is instead favoring two types of firm. The first is "specialist" agencies that possess deep, hard-to-automate institutional knowledge (such as pharmaceutical regulatory advertising). The second is "AI-augmented generalists" that rebuild their delivery models around machine execution while reserving human staff for strategic judgment, relationship management, and creative direction 27.

This bifurcation has heavily influenced merger and acquisition activity. Following the massive Omnicom-IPG merger in late 2025, which created a $25 billion global entity with over 100,000 employees, mid-market acquisition activity paused before accelerating in 2026 19. Acquisitions are now heavily concentrated on acquiring proprietary data architectures, retail media management tools, and identity infrastructure, as agencies seek to prove value beyond automated ad execution 19.

Labor Market Dynamics in the Creative Sector

Despite projections of widespread job displacement, macroeconomic data provides a nuanced view of how these models currently impact creative labor.

Occupational Exposure and Wage Stability

Industry analysts project that applied intelligent automation will yield a long-term reduction in traditional agency headcount, with Forrester estimating a net 15% reduction in the U.S. advertising workforce by the end of 2027 272035. However, a comprehensive study published in the Journal of Cultural Economics, analyzing Gallup Panel workforce data and U.S. Bureau of Labor Statistics records between 2017 and 2024, found no evidence of a broad collapse in artists' earnings following the widespread diffusion of large language and generative models 2122.

The study used an "occupational exposure to generative AI index." On it, professions such as music directors and composers showed high exposure (0.70), while roles requiring physical embodiment, like dancers, showed minimal exposure (0.04) 2123. Despite these high exposure levels for certain artistic roles, earnings trends for highly exposed occupations remained broadly similar to those of less-exposed peers, with wage estimates remaining slightly positive 2123. Employment patterns are more ambiguous. Earnings for currently employed creatives have not collapsed, but firms may be absorbing the efficiency gains through slower hiring and smaller staffing increases rather than expanding headcount 2223.

Upstream Creative Support Versus Final Output

The stability in creative wages can be attributed to how models are integrated into the labor process. The panel evidence suggests that generation platforms are disproportionately used for "upstream creative support" rather than as direct replacements for final creative products 22. Creative professionals report frequent use of generative tools at a higher rate than the broader workforce (25% compared with 20%). They use the tools primarily for idea generation, rapid iteration, prototyping, and the automation of administrative tasks 212223.

This pattern - accelerating early-stage work while leaving the final polish and strategic execution to humans - functions as an augmentation of productivity rather than immediate obsolescence 22. Generative platforms enable creators to scale their output, increasing their individual economic value, even as the broader labor pool requires less total human capital to meet aggregate demand 23.

Macroeconomic Growth and Productivity Projections

The macroeconomic implications of integrating visual generation into digital workflows extend well beyond the creative sector. Researchers at the Penn Wharton Budget Model estimate that generative technology will substantially affect 40% of current U.S. gross domestic product 24. The model projects that this will raise total factor productivity and lift the level of gross domestic product by 1.5% by 2035 and by nearly 3% by 2055, a permanent increase in economic activity 24. The peak annual contribution to productivity growth is expected in the early 2030s, driven by average labor cost savings that scale from 25% to 40% across exposed sectors 24.

Similarly, a computable general equilibrium study by KPMG projects that rapid adoption of agentic and generative systems could add up to $2.84 trillion to the U.S. economy by 2030, and $11.04 trillion globally by 2050 25. The study notes that while productivity gains are massive, a failure to upskill the workforce could result in structural unemployment increasing to 5.55% under rapid adoption scenarios without commensurate retraining efforts 25. The global generative market in creative industries alone is projected to grow from $4.06 billion in 2025 to $14.03 billion by 2030, reflecting a 27.1% compound annual growth rate 26.
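The growth figures can be checked with the standard compound annual growth rate formula, CAGR = (end / start)^(1 / years) - 1. Applied to the $4.06 billion (2025) and $14.03 billion (2030) endpoints, it gives about 28.1% per year. Compounding $4.06 billion at 27.1% for five years gives about $13.47 billion instead. The small difference may reflect a different base period or rounding in the source.

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate between two values."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


def project(start_value: float, rate: float, years: float) -> float:
    """Value after compounding start_value at rate for the given years."""
    return start_value * (1.0 + rate) ** years


print(f"implied CAGR, 2025 to 2030: {cagr(4.06, 14.03, 5):.1%}")        # 28.1%
print(f"$4.06B at 27.1% for 5 years: ${project(4.06, 0.271, 5):.2f}B")  # $13.47B
```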

Intellectual Property, Licensing Agreements, and Regulatory Frameworks

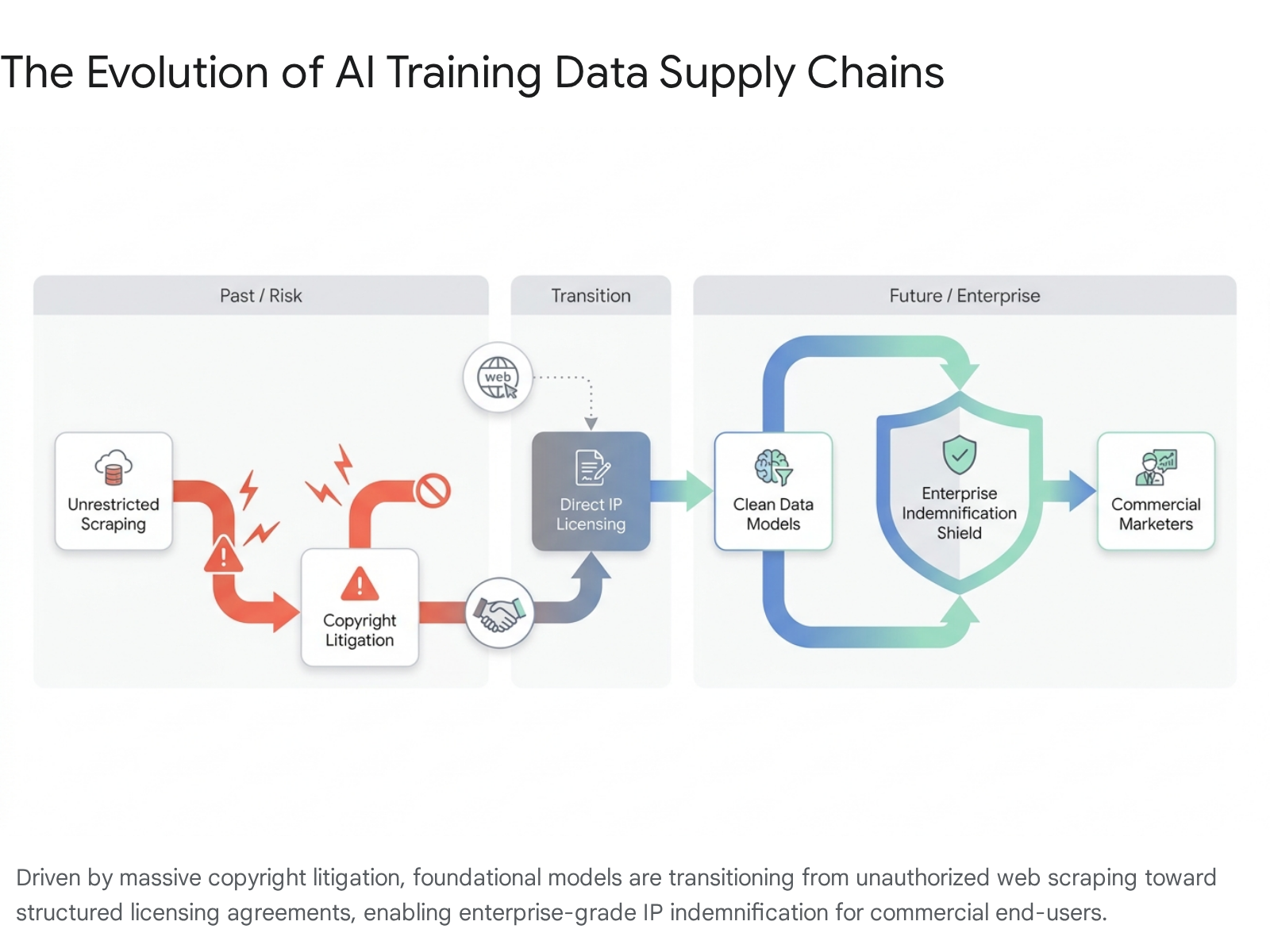

The proliferation of generative models has triggered intense legal and regulatory scrutiny regarding intellectual property and the ingestion of copyrighted training data. The transition from 2025 to 2026 marked a pivotal shift from an era of unrestricted web scraping to one defined by enterprise licensing, strict copyright litigation, and divergent global compliance standards.

The Era of Copyright Litigation

The model training practices of leading developers face severe legal challenges from both major publishers and independent creators. The flagship case of Getty Images v. Stability AI, currently proceeding in the UK High Court, alleges the unauthorized scraping of over 12 million proprietary photographs 42. In a case with massive implications, Meta and CEO Mark Zuckerberg face a class-action lawsuit from five major publishers alleging the illegal use of millions of copyrighted books to train the Llama system, with the plaintiffs claiming Zuckerberg "personally authorized and actively encouraged the infringement" 2728.

Video generation platforms are equally exposed. Runway faces multiple lawsuits under the Digital Millennium Copyright Act. These include claims that the company used automated tools to bypass YouTube's anti-circumvention software and ingest millions of creator videos without authorization or compensation 29. By early 2026, there were 86 separate copyright suits against AI companies in the United States alone. That volume underscores the legal risk of relying solely on "fair use" defenses for scraped training data 30.

The Shift Toward Enterprise Licensing

In response to mounting legal risk and enterprise demands for brand safety, the industry is rapidly establishing direct licensing frameworks. In a watershed moment in December 2025, The Walt Disney Company signed a three-year, $1 billion equity and licensing agreement with OpenAI 3132.

This agreement grants OpenAI's Sora platform the ability to generate short, user-prompted videos utilizing over 200 specific characters from Disney, Marvel, Pixar, and Star Wars 32. Crucially, the agreement expressly excludes talent likenesses and voice cloning to avoid right-of-publicity violations, and strictly prohibits OpenAI from utilizing the licensed intellectual property to train future models 3133. The resulting outputs will be curated for display on Disney+, representing the first major integration of authorized, fan-generated synthetic content into a premium streaming platform 34.

For enterprise customers, intellectual property indemnification has become a critical competitive differentiator. Major providers like OpenAI and Runway now offer negotiated indemnification clauses in their enterprise agreements. These clauses protect commercial users from third-party copyright claims arising from the generated outputs, provided the user did not intentionally prompt infringing material or disable safety filters 93536.
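Coverage under these clauses depends on how an output was produced, so teams may want to record the relevant conditions for each asset at generation time. The record fields and the `likely_covered` check below are hypothetical and simplify the conditions described above. The actual contract language governs what is covered.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class OutputRecord:
    """HYPOTHETICAL per-asset audit record captured at generation time."""

    asset_id: str
    enterprise_terms: bool           # generated under an indemnified enterprise agreement
    flagged_infringing_prompt: bool  # reviewer judged the prompt to target protected material
    safety_filters_disabled: bool


def likely_covered(record: OutputRecord) -> bool:
    """Simplified reading of the conditions above; the contract governs."""
    return (
        record.enterprise_terms
        and not record.flagged_infringing_prompt
        and not record.safety_filters_disabled
    )


def needs_review(records: List[OutputRecord]) -> List[str]:
    """IDs of assets that would likely fall outside indemnification."""
    return [r.asset_id for r in records if not likely_covered(r)]


records = [
    OutputRecord("hero-01", True, False, False),
    OutputRecord("promo-07", True, False, True),
    OutputRecord("test-12", False, False, False),
]
print(needs_review(records))  # ['promo-07', 'test-12']
```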

Divergent Global Regulatory Frameworks

The global regulatory environment governing visual generation has fragmented, forcing agencies and platforms to navigate a complex patchwork of compliance standards across different jurisdictions in 2026.

The European Union: The EU Artificial Intelligence Act entered into force in August 2024 and is being implemented in phases. Prohibitions on unacceptable-risk systems have applied since February 2025. Obligations for general-purpose models, covering transparency and copyright compliance, took effect in August 2025. The European Commission's enforcement powers over those models, along with most remaining provisions, are scheduled to apply from August 2026 3738. The Act requires developers to implement strict risk mitigation measures and respect opt-out protocols for text and data mining 37.

Japan: In contrast to the EU's stringent approach, Japan implemented its Act on the Promotion of Research, Development and Utilization of AI-Related Technologies in September 2025 38. Designed to foster innovation and maintain competitiveness, the Japanese framework notably eschews criminal penalties and administrative fines, relying instead on soft law principles and strategic government involvement to manage risks and promote commercial utilization 38.

The United Kingdom: The UK government engaged in extensive consultation regarding a potential "opt-out" text and data mining exception that would have heavily favored model developers. However, following severe pushback from the creative industries - and a House of Lords report warning that weakening copyright would damage the £124 billion creative sector - the government abandoned the exception in March 2026 3940. The UK is instead focusing on creator control, transparency, and specific protections against digital replicas, prioritizing licensing-based development 3940.

The United States (California): Comprehensive federal legislation remains stalled in the U.S., but state-level regulation has progressed. California enacted a generative AI training data transparency law, effective January 2026. It requires developers to publicly post a high-level summary of their training datasets, including whether those datasets contain copyrighted material 41. Advocacy groups like the Concept Art Association strongly supported the measure, which gives creators the visibility they need to enforce their rights and combat uncompensated displacement 4142.
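For planning purposes, the regimes summarized above can be kept in a small lookup table and merged per campaign, so that a multi-market launch surfaces every applicable obligation at once. The market codes, status labels, and obligation wording are simplifications of the text above. Unknown markets are flagged rather than assumed to be unregulated.

```python
from typing import Dict, List, Tuple

# Simplified summary of the regimes described above (status as of 2026).
REGIMES: Dict[str, dict] = {
    "EU": {
        "status": "binding, phased implementation",
        "obligations": [
            "respect text-and-data-mining opt-outs",
            "implement risk mitigation measures",
            "meet transparency requirements",
        ],
    },
    "JP": {
        "status": "soft law, no fines or criminal penalties",
        "obligations": ["follow government soft-law guidance"],
    },
    "UK": {
        "status": "policy in development",
        "obligations": ["monitor licensing, transparency, and digital-replica rules"],
    },
    "US-CA": {
        "status": "binding state law",
        "obligations": ["developers disclose training-data information"],
    },
}


def checklist(markets: List[str]) -> Tuple[List[str], List[str]]:
    """Merge obligations across target markets; unknown markets are flagged."""
    items, unknown = set(), []
    for market in markets:
        regime = REGIMES.get(market)
        if regime is None:
            unknown.append(market)
            continue
        items.update(regime["obligations"])
    return sorted(items), unknown


items, unknown = checklist(["EU", "US-CA", "BR"])
print(unknown)     # ['BR']
print(len(items))  # 4
```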

Strategic Conclusions

The current state of image and video generation represents a permanent paradigm shift in creative marketing. The technology has matured from generating unreliable, low-fidelity clips to orchestrating highly complex, multi-shot, audio-synchronized assets suitable for immediate commercial deployment. The shift toward multimodal inputs has democratized high-end production capabilities, allowing small teams to produce professional cinematic content at a fraction of traditional costs.

For marketing organizations and creative agencies, the primary differentiator is no longer access to generative tools, which have become ubiquitous. The competitive advantage now lies in creative intelligence: the ability to use models to predict performance, test variations at high velocity, and integrate outputs into automated, agentic pipelines. Algorithmic generation excels at top-of-funnel engagement and rapid iteration. Human judgment, however, remains indispensable for high-consideration strategy, brand trust-building, and navigating an increasingly complex global web of copyright compliance and licensing. Organizations that restructure their cost models around this hybrid human-machine workflow stand to capture significantly higher margins, while those clinging to traditional production cycles risk rapid obsolescence.