Additive combinatorics and arithmetic patterns

Additive combinatorics is a sprawling, interdisciplinary branch of mathematics dedicated to investigating the combinatorial properties of algebraic operations - specifically, addition and multiplication - within sets, integers, and general groups. The discipline explores the profound principle that global arithmetic structure emerges from minimal local constraints. Unlike classical number theory, which often limits its focus to highly structured entities such as prime numbers, perfect squares, or the roots of polynomials, additive combinatorics typically operates under minimal structural assumptions 11. It examines the behavior of arbitrary, unstructured sets provided they satisfy basic density, cardinality, or expansion requirements 12.

Historically rooted in the study of integer sequences, extremal combinatorics, and additive number theory, the discipline has evolved into a sophisticated mathematical crossroads. Modern additive combinatorics relies on an intricate convergence of techniques from discrete Fourier analysis, ergodic theory, graph theory, incidence geometry, algebraic geometry, and, most recently, information theory 354. By understanding the interplay between structural rigidity and statistical pseudorandomness, mathematicians in this field have resolved some of the most persistent conjectures of the 20th century, including Szemerédi's theorem, the Green-Tao theorem, and the Polynomial Freiman-Ruzsa conjecture.

Foundations of Additive Operations and Sumsets

At the core of additive combinatorics is the analysis of sumsets and difference sets. For any two subsets $A$ and $B$ of an abelian group $G$, the sumset is defined algebraically as $A + B = {a + b : a \in A, b \in B}$ 15. Similarly, the difference set is denoted $A - B$. A central premise of the field revolves around direct and inverse problems related to the cardinalities of these sets 3.

Direct problems ask: given explicit structural information about $A$ and $B$, what can be deduced about the size, distribution, and properties of $A + B$? Inverse problems - which form the bulk of the field's deepest research - ask the reverse: if the sumset $A + B$ is anomalously small, what latent algebraic structure must $A$ and $B$ possess? 3. The study of these inverse problems traces its origins to foundational theorems such as the Cauchy-Davenport theorem, which establishes that for subsets $A, B$ of a prime cyclic group $\mathbb{Z}/p\mathbb{Z}$, the sumset satisfies $|A + B| \ge \min(p, |A| + |B| - 1)$ 3. Vosper's theorem later provided an inverse characterization of this bound, proving that equality holds only when $A$ and $B$ are arithmetic progressions sharing the same common difference 3.

The Doubling Constant and Structural Rigidity

The structural rigidity of a set is formally quantified by its doubling constant, denoted as $K = |A+A|/|A|$ 56. If $A$ is a perfectly rigid algebraic structure, such as a finite subgroup, it is closed under addition, meaning $|A+A| = |A|$ and $K = 1$ 57. In contrast, for a completely random set of integers, the sums of distinct pairs will almost never overlap, resulting in a sumset of size roughly $|A|^2/2$, meaning the doubling constant $K$ grows linearly with the size of $A$ 8.

When $K$ is small (bounded by a constant independent of the cardinality of $A$), the set $A$ exhibits profound internal structure. In the context of the integers, the classical Freiman's theorem (later refined by Ruzsa) provides the definitive inverse structural conclusion: if a finite set $A \subset \mathbb{Z}$ has a bounded doubling constant $K$, then $A$ must be a dense subset of a multi-dimensional arithmetic progression, where both the dimension of the progression and its size relative to $A$ are bounded entirely by functions of $K$ 36.

To manipulate sets with small doubling constants, researchers developed "Ruzsa calculus," a suite of inequalities governing sumsets. Among the most critical are the Plünnecke-Ruzsa inequalities, which demonstrate that if a set $A$ has a small doubling constant $K$, then any iterated sumset or difference set $nA - mA$ will also have a cardinality bounded by $K^{n+m}|A|$ 35. This proves that sets with small doubling do not experience explosive combinatorial growth upon repeated addition or subtraction 5.

Additive Energy and the Balog-Szemerédi-Gowers Lemma

A statistical relaxation of the doubling constant is "additive energy." The additive energy of a set $A$, denoted $E(A)$, is defined as the number of quadruples $(a_1, a_2, a_3, a_4) \in A^4$ such that $a_1 + a_2 = a_3 + a_4$ 9. Additive energy measures the frequency of additive collisions within a set. A set with a small doubling constant strictly requires high additive energy; however, the converse is not necessarily true, as a set might consist of a highly structured dense core alongside a vast, unstructured halo 9.

The Balog-Szemerédi-Gowers lemma bridges this gap. It asserts that if a set $A$ possesses anomalously high additive energy (proportional to $|A|^3$), there must exist a large subset $A' \subset A$ such that the doubling constant $|A'+A'|/|A'|$ is strictly bounded 210. This powerful result allows mathematicians to extract rigidly structured algebraic components from sets that only exhibit statistical additive biases, forming a critical step in countless inverse theorems 6.

The Sum-Product Phenomenon

Another foundational pillar of the discipline is the Erdős-Szemerédi sum-product theorem. Formulated in 1983, the theorem quantifies the principle that additive structure and multiplicative structure are fundamentally incompatible within the same set of real or integer numbers 11. The theorem states that for any finite set of integers $A$, either the sumset $A+A$ or the product set $A \cdot A = {a \cdot b : a,b \in A}$ must be significantly larger than $A$ itself 11. Specifically, there exist absolute positive constants $c$ and $\varepsilon$ such that $\max(|A+A|, |A \cdot A|) \geq c|A|^{1+\varepsilon}$ 11.

This implies that the real line does not contain any finite subset that behaves asymptotically like a subring or a subfield 11. Sets that are additively structured (such as standard arithmetic progressions) generate enormous product sets, while sets that are multiplicatively structured (such as geometric progressions) generate enormous sumsets 11. Over the decades, the lower bound on this exponent has been repeatedly refined by researchers such as Elekes, Solymosi, and Konyagin, bridging additive combinatorics with incidence geometry and the Szemerédi-Trotter theorem 1112. Sum-product phenomena have since been generalized to finite fields, directly impacting the construction of cryptographic extractors and pseudorandom number generators 713.

The Structure Versus Randomness Dichotomy

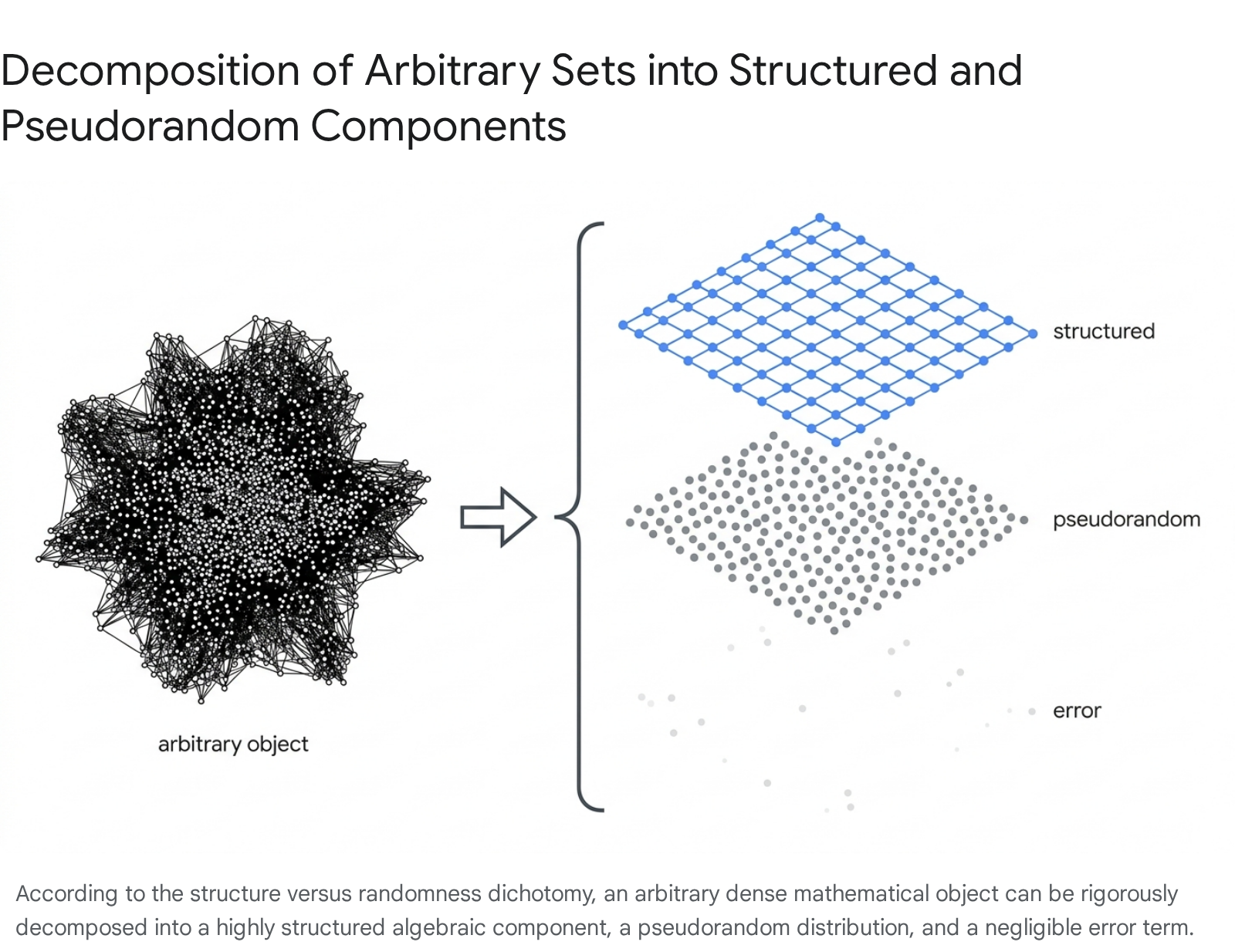

The overarching philosophical framework underlying modern additive combinatorics is the "structure versus randomness" dichotomy, a concept heavily formalized and championed by Terence Tao and Timothy Gowers 101415. This paradigm asserts that any arbitrary, high-dimensional, or complex mathematical object can be rigorously decomposed into distinct components: a highly structured component, a pseudorandom (or noise) component, and a negligible error term 51014.

Structured objects are those of low combinatorial complexity that carry algebraic or periodic regularity, such as small subgroups, multi-dimensional arithmetic progressions, or low-degree polynomials 1016. Pseudorandom objects, conversely, are sets that mimic the statistical properties of random coin flips 10. Their elements do not correlate with structured algebraic phases, meaning they lack unexpected local density concentrations and behave uniformly across the ambient space 10.

In practice, this dichotomy drives "stopping time" or density increment arguments. If a set $A$ is pseudorandom, standard statistical counting lemmas guarantee that it will contain the statistically expected number of arithmetic patterns (such as arithmetic progressions) 10. If $A$ is not pseudorandom, it must exhibit a structural bias - meaning it correlates with a structured object 10. This correlation allows researchers to restrict their attention to a smaller, denser sub-structure, iteratively increasing the set's relative density until it either becomes pseudorandom or the density logically exceeds 100%, yielding a contradiction 1017.

Arithmetic Progressions and Density Theorems

A defining pursuit of additive combinatorics is the search for arithmetic progressions within subsets of the integers. In 1927, Bartel Leendert van der Waerden proved that for any partition of the integers into a finite number of color classes, at least one color class must contain arbitrarily long arithmetic progressions 41819. This qualitative result laid the groundwork for a much more ambitious hypothesis by Paul Erdős and Pál Turán in 1936: they conjectured that merely having a positive upper density in the integers is sufficient to guarantee the existence of arbitrarily long arithmetic progressions 181922.

The upper density of a set $A \subseteq \mathbb{N}$ is defined as the limit supremum of $|A \cap {1, \dots, N}| / N$ as $N \to \infty$ 1719. The Erdős-Turán conjecture posits that if this limit is strictly greater than zero, $A$ contains $k$-term arithmetic progressions for every integer $k \ge 3$ 19. Formulated in its finitary version, the conjecture seeks to bound $r_k(N)$, the maximum size of a subset of ${1, \dots, N}$ that lacks an arithmetic progression of length $k$ 1920. The conjecture demands that $r_k(N) = o(N)$.

Roth's Theorem (Length-3 Progressions)

In 1953, Klaus Roth provided the first major breakthrough by proving the Erdős-Turán conjecture for the case $k=3$ 1920. Roth's theorem states that any subset of the natural numbers with positive upper density contains a 3-term arithmetic progression (a sequence of the form $x, x+d, x+2d$ with $d \neq 0$) 20. Equivalently, he proved that $r_3(N) = o(N)$, explicitly bounding it by $O(N / \log \log N)$ 1921.

Roth achieved this by adapting the Hardy-Littlewood circle method, an analytical technique previously used by Vinogradov to study the distribution of primes 192223. His proof formally established the density increment strategy in additive combinatorics. Roth demonstrated that if a set $A$ lacks 3-term progressions, its discrete Fourier transform must have a large non-trivial coefficient 2224. This large coefficient indicates that the set is not pseudorandom but instead heavily concentrated on a specific arithmetic progression of a smaller modulus 2224. By restricting the focus to this sub-progression, the relative density of $A$ strictly increases 1720. Because density cannot exceed 1, this iterative process must eventually terminate, proving that $A$ cannot avoid 3-term progressions indefinitely 1720.

Szemerédi's Theorem and Graph Regularity

The extension of Roth's theorem to progressions of arbitrary length $k$ proved vastly more difficult because linear Fourier analysis is insufficient to detect structures of length 4 and higher. In 1969, Endre Szemerédi proved the case for $k=4$ using a highly intricate combinatorial argument, and in 1975, he published a complete proof for all arbitrary lengths $k$, fully resolving the Erdős-Turán conjecture 181925.

Szemerédi's theorem was immediately recognized as a masterpiece of mathematical reasoning 1826. To achieve the proof, he developed what is now known as the Szemerédi Regularity Lemma 2627. The Regularity Lemma essentially applies the structure-randomness dichotomy to graph theory. It asserts that the vertices of any arbitrarily large, dense graph can be partitioned into a bounded number of subsets such that the bipartite subgraphs formed between almost all pairs of subsets behave like random graphs 101426.

While the Regularity Lemma successfully proved the existence of $k$-term progressions, the quantitative bounds it provided for $r_k(N)$ were notoriously weak. The bounds derived from the regularity proof decayed at a rate dictated by an inverse tower of exponential functions (the Ackermann function hierarchy), rendering them practically useless for understanding the true asymptotic behavior of progression-free sets 28.

Chronology of Quantitative Bounds

Following the proofs by Roth and Szemerédi, a central objective in additive combinatorics has been optimizing the upper bounds for $r_3(N)$ and $r_k(N)$, driving the threshold closer to the theoretical lower bounds discovered by Felix Behrend in 1946 2129. Behrend's geometric construction demonstrated that there exist subsets of ${1, \dots, N}$ entirely free of 3-term progressions with sizes proportional to $N / \exp(c\sqrt{\log N})$ 1821. This lower bound proved that the density threshold could not be constrained by a simple polynomial power of $\log N$ 1828.

| Year | Milestone | Bound for $r_3(N)$ (Roth's Theorem, $k=3$) | Contributor(s) |

|---|---|---|---|

| 1946 | Lower Bound Generation | $\ge N / \exp(c\sqrt{\log N})$ | Behrend 2128 |

| 1953 | Initial Upper Bound | $\le C \cdot N / \log \log N$ | Roth 2134 |

| 1987 | First Logarithmic Bound | $\le C \cdot N / (\log N)^c$ | Heath-Brown 30 |

| 1999 | Analytical Refinement | $\le C \cdot N \sqrt{\log \log N} / \sqrt{\log N}$ | Bourgain 21 |

| 2008 | Bohr Set Optimization | $\le C \cdot N (\log \log N)^2 / (\log N)^{2/3}$ | Bourgain 21 |

| 2011 | Quasi-Polynomial Advances | $\le C \cdot N (\log \log N)^6 / \log N$ | Sanders 21 |

| 2016 | Bound Improvement | $\le C \cdot N (\log \log N)^4 / \log N$ | Bloom 2134 |

| 2020 | Breaking the Log Barrier | $\le C \cdot N / (\log N)^{1+c}$ | Bloom & Sisask 1829 |

| 2023 | Major Breakthrough | $\le N / \exp(c (\log N)^{1/12})$ | Kelley & Meka 2236 |

| 2023 | Refined Exponent | $\le N / \exp(c (\log N)^{1/9})$ | Bloom & Sisask 1822 |

Analytical Frameworks and Methodologies

The pursuit of these explicit bounds, alongside the effort to decipher the structural laws governing additive sets, has necessitated the development of multiple distinct mathematical frameworks. Additive combinatorics acts as a nexus where discrete combinatorics, continuous functional analysis, ergodic theory, and polynomial algebra cross-pollinate.

Classical Fourier Analysis

Classical Fourier analysis is the traditional tool for counting solutions to linear equations within sets. In the discrete setting of finite abelian groups, such as $\mathbb{Z}/N\mathbb{Z}$, the discrete Fourier transform converts additive convolution operations into point-wise multiplication 243132. Because a 3-term arithmetic progression $x, y, z$ satisfies the linear constraint $x + z = 2y$, the total number of such progressions in a set $A$ can be expressed exactly as an integral (or summation) of the cubed Fourier coefficients of $A$'s indicator function 2432.

If the non-trivial Fourier coefficients are all uniformly small, the set is considered Fourier-pseudorandom, guaranteeing that it possesses the statistically expected number of 3-term progressions 1724. If the set lacks progressions, Parseval's identity dictates that a large Fourier coefficient must exist 1724. This physically means the set correlates strongly with a linear phase function $e(2\pi i n \theta)$, clustering along a structured sequence 2430.

However, linear Fourier analysis encounters a fatal limitation: it is mathematically "blind" to higher-order correlations. For 4-term arithmetic progressions ($x, x+d, x+2d, x+3d$), it is entirely possible to construct specific sets that lack 4-term progressions but nevertheless have perfectly uniform, small linear Fourier coefficients 3334. Quadratic behaviors cause internal phase cancellations that linear transforms cannot detect.

Higher-Order Fourier Analysis and Gowers Norms

To circumvent the failure of classical Fourier analysis for progressions of length $k \ge 4$, Timothy Gowers introduced a groundbreaking generalization in 2001, now known as higher-order Fourier analysis 232533. The centerpiece of this theory is the family of Gowers uniformity norms, denoted $U^k$.

The $U^2$ norm of a bounded function measures classical pseudorandomness and is mathematically equivalent to the $\ell^4$ norm of its linear Fourier coefficients 2431. The $U^3$ norm, however, measures correlations that involve quadratic phase polynomials (e.g., $e^{i\theta n^2}$), which are strictly necessary to detect 4-term arithmetic progressions 62331. In general, the $U^k$ norm controls counts of length-$(k+1)$ progressions.

A defining achievement of this framework is the Inverse Theorem for Gowers Norms. Formulated and proven through the joint efforts of Green, Tao, and Ziegler, the inverse theorem provides a complete structural classification of functions exhibiting large $U^k$ norms 313536. It asserts that if a set has a large $U^k$ norm, it cannot be pseudorandom; it must correlate with a highly structured algebraic object known as a nilsequence 36. A nilsequence is generated by evaluating a Lipschitz function along a polynomial orbit on a nilpotent Lie group quotient (a nilmanifold) 36. This immense analytical machinery not only provided a new, quantitatively superior proof of Szemerédi's theorem, but it also formed the structural backbone of the celebrated Green-Tao theorem (2004), which unconditionally proved that the sequence of prime numbers contains arbitrarily long arithmetic progressions 142327.

Ergodic Theory and Multiple Recurrence

Simultaneously with the analytical advancements in harmonic analysis, a completely different framework emerged from the study of dynamical systems. In 1977, Hillel Furstenberg provided a radically new proof of Szemerédi's theorem using ergodic theory 2537. Furstenberg established a strict "correspondence principle" demonstrating that combinatorial problems concerning the density of integers can be translated identically into topological problems concerning measure-preserving dynamical systems 253738.

In this framework, an integer set $A$ of positive upper density corresponds to a measurable subset $E$ of a probability space $(X, \mathcal{B}, \mu)$, and the combinatorial shift operation $x \mapsto x+1$ corresponds to a measure-preserving transformation $T$ acting on $X$. The existence of a $k$-term arithmetic progression in $A$ is mathematically equivalent to the condition that the measure of the multiple intersection $\mu(E \cap T^{-n}E \cap T^{-2n}E \dots \cap T^{-(k-1)n}E)$ is strictly greater than zero for some integer $n \ge 1$ 2537.

By proving the Multiple Recurrence Theorem for ergodic systems, Furstenberg bypassed discrete combinatorics entirely. While ergodic theory is inherently qualitative and provides zero quantitative bounds for the actual sizes of progression-free sets (as it relies on limits approaching infinity), its extreme flexibility has allowed mathematicians to prove the existence of vastly more complex configurations 33. For example, Bergelson and Leibman utilized ergodic theory to prove the existence of polynomial configurations (e.g., progressions of the form $x, x+d^2, x+d^3$) within dense sets, a feat that discrete Fourier analysis alone could not initially handle 3237.

The Polynomial Method

In the 2010s, additive combinatorics witnessed a revolution through the introduction of the "polynomial method." While Fourier analysis operated on the continuous frequency side and ergodic theory operated on the infinite limit side, the polynomial method operated algebraically within finite vector spaces, leveraging the dimensions of low-degree polynomial rings 313940.

The defining triumph of the polynomial method occurred in 2016 regarding the long-standing "cap set problem." A cap set is defined as a subset of the finite vector space $\mathbb{F}_3^n$ that contains no 3-term arithmetic progressions (i.e., no solutions to the linear equation $x+y+z=0$) 3941. For decades, Fourier-analytic density increment strategies yielded only weak logarithmic bounds, struggling to prove that the maximum size of a cap set was exponentially smaller than $3^n$ 4243. However, researchers Croot, Lev, and Pach, followed immediately by Ellenberg and Gijswijt, decisively proved that the maximum size of a cap set is exponentially bounded by $o(2.756^n)$ 2041.

The method functions by capturing the arbitrary combinatorial set within the zero locus of a polynomial of relatively low degree 1239. By viewing the specific equation $x+y+z=0$ through the lens of slice rank (a generalization of tensor rank) and bounding the size of the cap set against the maximum dimension of the vector space of polynomials of degree $\le 2n/3$, they established an absolute algebraic ceiling on the size of the set 394143. The brevity and sheer power of the proof stunned the mathematical community. It proved that for certain finite-field models, Zariski complexity and polynomial algebra can wholly bypass the massive, intricate analytical machinery of Gowers norms 123141.

| Analytical Framework | Core Mechanism | Primary Applications & Strengths | Known Limitations |

|---|---|---|---|

| Fourier Analysis / Circle Method | Identifying frequency correlations via linear phase functions $e(2\pi i n \theta)$. | Counting 3-term APs (Roth's theorem); sum-free sets; basic density increments. | Blind to higher-order quadratic structures; fails entirely for $k \ge 4$ progressions. |

| Higher-Order Fourier Analysis | Gowers $U^k$ norms and inverse theorems correlating with nilpotent Lie groups. | Proving Szemerédi's theorem with explicit bounds; Green-Tao theorem (primes). | Requires highly complex machinery; produces inverse-tower bounds that remain far from optimal in $\mathbb{Z}$. |

| Ergodic Theory | Furstenberg correspondence principle and multiple measure-preserving recurrence. | Proving the existence of highly complex polynomial and multi-dimensional patterns. | Strictly qualitative; provides absolute zero quantitative bounds on the finite size of sets. |

| The Polynomial Method | Bounding set size via the linear algebra dimension of low-degree polynomial spaces. | Cap set problem; exponential bounds in finite field models ($\mathbb{F}_q^n$); Kakeya conjecture. | Extremely difficult to adapt from finite fields to the integers $\mathbb{Z}$, as it relies heavily on field characteristics. |

Modern Breakthroughs in Arithmetic Patterns

The years 2023 through 2025 have marked a distinct renaissance in additive combinatorics, with several of the field's most impenetrable structural barriers falling to novel, interdisciplinary techniques.

The Kelley-Meka Bounds for Roth's Theorem

For 70 years, the upper bound for Roth's theorem decreased slowly, heavily constrained by the inefficiencies inherent in the Fourier density increment strategy. A major psychological milestone, the "logarithmic barrier," was finally broken in 2020 by Thomas Bloom and Olof Sisask, who proved $r_3(N) \le N / (\log N)^{1+c}$ for a small constant $c > 0$ 182944. However, this bound still remained exponentially far from Behrend's 1946 lower bound 2221.

In February 2023, computer scientists Zander Kelley and Raghu Meka released a sensational proof that shattered the quasi-polynomial barrier, proving that $r_3(N) \le N / \exp(c(\log N)^{1/12})$ 2236. Bloom and Sisask shortly refined this exponent to $1/9$ 18.

The Kelley-Meka breakthrough marked a fundamental departure from traditional Fourier methods. Instead of utilizing the standard frequency-side density increment on arithmetic progressions, they utilized a purely physical-side approach applied to the integers 22. By exploiting the positive-definite nature of the convolution operator $1_A * 1_{-A}$ and relying on almost-periodicity and Bohr sets, they demonstrated that the necessary structural concentration happens far faster and more efficiently than classical Parseval-based Fourier analysis suggested 2236. Their new bounds match the quasi-polynomial shape of Behrend's lower bounds, effectively closing the theoretical gap save for the exact numerical power in the exponent 18.

The Polynomial Freiman-Ruzsa Conjecture

While classical Freiman's theorem established that sets with small doubling constants ($|A+A| \le K|A|$) are contained within multi-dimensional arithmetic progressions, the quantitative dependency on $K$ was exceptionally weak, operating on the order of $O(\exp(K^C))$ 5145. The Hungarian mathematician Katalin Marton posited a radically tighter structural condition: she conjectured that any set $A$ with doubling constant $K$ can be covered by a polynomial number of cosets (specifically bounded by $K^C$) of a subgroup $H$, where the absolute size of $H$ is no larger than $A$ 85145. This became known as the Polynomial Freiman-Ruzsa (PFR) conjecture, widely considered the "holy grail" of additive combinatorics 4546.

In November 2023, a collaboration between Timothy Gowers, Ben Green, Freddie Manners, and Terence Tao successfully proved Marton's conjecture for vector spaces over characteristic 2 ($\mathbb{F}_2^n$) 4554. Remarkably, their proof entirely bypassed both traditional Fourier analysis and the polynomial method. Instead, they utilized tools from information theory, specifically Shannon entropy and the entropic formulation of Ruzsa distance 64756.

The entropic Ruzsa distance between two random variables $X$ and $Y$ taking values in an additive group is defined precisely as $d(X, Y) = H(X-Y) - \frac{1}{2}H(X) - \frac{1}{2}H(Y)$, where $H$ denotes Shannon entropy 5657. By applying a "distance decrement" argument (conceptually analogous to density increment but operating smoothly on entropies rather than physical densities), they proved that a lack of distance decrement geometrically forces the random variables to vanish, proving the conjecture with an explicit polynomial exponent of $C=12$ 656. Jyun-Jie Liao subsequently refined this exponent down to 9 5158. In a landmark for computer-assisted mathematics, Tao and collaborators subsequently formalized the entire proof of the PFR conjecture using the Lean 4 theorem prover 454658.

Following the characteristic-2 proof, the entropic framework was rapidly generalized. In late 2024 and 2025, researchers such as Mohammad Taha Kazemi Moghadam utilized spectral stability dichotomies and Freiman modeling to extend the polynomial bounds to odd characteristics, bounded torsion groups, and the integers $\mathbb{Z}$, effectively settling the PFR conjecture across all relevant mathematical domains 5159.

Extensions to General Groups and Applications

While traditional additive combinatorics focused on abelian groups (where $x+y = y+x$), modern research has heavily expanded into non-abelian groups and multiplicative combinatorics.

Growth in Non-Abelian Groups

In non-abelian groups, the study of small doubling shifts to the study of subset growth under multiplication, $A \cdot A \cdot A$. Foundational work by Harald Helfgott on the group $SL_2(\mathbb{F}_p)$ proved that any generating subset $A$ that is not too large must experience rapid polynomial growth, meaning $|A \cdot A \cdot A| \ge |A|^{1+\delta}$ 4849.

This subset growth phenomenon revealed that non-abelian groups possess an inherent "quasirandomness" 48. Unlike abelian groups, which harbor numerous dense subgroups that trap subsets and prevent growth, non-abelian simple groups lack such intermediate structures. Consequently, any sufficiently large subset of these groups behaves as if it is uniformly distributed 48. This principle has been instrumental in resolving problems in analytic number theory, including the development of the "affine sieve" by Bourgain, Gamburd, and Sarnak, which applies expansion properties in linear groups to find almost-primes in orbits of non-abelian group actions 4849.

Applications to Theoretical Computer Science

The structural definitions forged in additive combinatorics have found profound applications in theoretical computer science, particularly within computational complexity and algorithm design 45. The mechanisms used to detect pseudorandomness in additive sets are directly translatable to the construction of randomness extractors - algorithms that convert weak sources of entropy into perfectly uniform random bits 57.

Furthermore, the higher-order Fourier analysis developed for Gowers norms serves as the mathematical foundation for "property testing." Testing whether a massive Boolean function is a low-degree polynomial using only a negligible number of queries relies entirely on inverse theorems for uniformity norms 550. Similarly, the sum-product theorem in finite fields has been applied to construct highly efficient expander graphs, which are critical for robust network routing and error-correcting codes 749.

The Influence of the Hungarian School

The historical development and eventual maturation of additive combinatorics are inextricably linked to the Hungarian school of mathematics. Rising to prominence in the early 20th century under figures like Lipót Fejér and Frigyes Riesz, the Hungarian tradition was characterized by a unique cultural emphasis on discrete mathematics, competitive problem-solving, and extremal combinatorics 51.

This tradition was crystallized globally by Paul Erdős. Living a famously nomadic lifestyle, Erdős authored over 1,500 papers and unified disparate branches of discrete mathematics through vast international collaboration 5265. Erdős's heuristics, often formulated as monetary prize-carrying open problems (such as the Erdős-Turán conjecture), dictated the thematic trajectory of additive combinatorics for decades 266553. Endre Szemerédi, operating largely out of the Alfréd Rényi Institute of Mathematics in Budapest, provided the combinatorial masterstrokes (such as the Regularity Lemma) that successfully bridged finite graph theory with structural infinity 262754.

Historically, traditional "Hungarian combinatorics" was viewed by some as distinct from "conceptual" or algebraic mathematics - relying on highly convoluted, elementary graph-theoretic arguments rather than abstract structural frameworks 68. However, the modern era of additive combinatorics has fully synthesized the two approaches 68. The elementary, physical-space heuristics posed by the Hungarian school are now resolved using the most advanced conceptual tools in mathematics, from nilpotent Lie groups to Shannon entropy, proving that classical combinatorial boundaries often mask deep, universal analytical truths 2768.